Multi-Modal Data Augmentation for End-to-End ASR

We present a new end-to-end architecture for automatic speech recognition (ASR) that can be trained using \emph{symbolic} input in addition to the traditional acoustic input. This architecture utilizes two separate encoders: one for acoustic input an…

Authors: Adithya Renduchintala, Shuoyang Ding, Matthew Wiesner, Shinji Watanabe

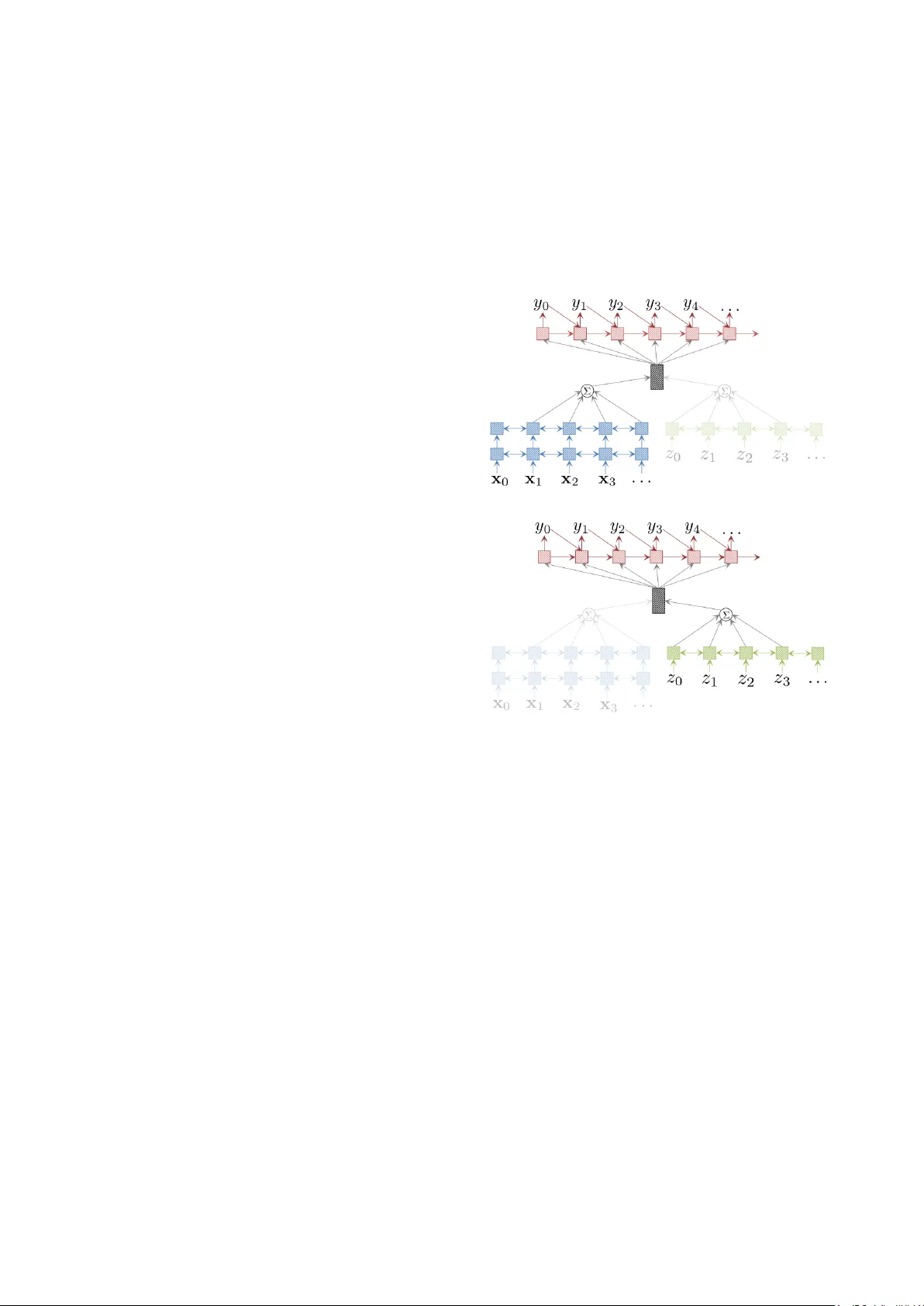

Adithya Renduchintala, Shuoyang Ding, Matthew Wiesner, Shinji Watanabe
Center for Language and Speech Processing, Johns Hopkins University, Baltimore, MD 21218, USA
{adi.r,dings,wiesner,shinjiw}@jhu.edu

Abstract

We present a new end-to-end architecture for automatic speech recognition (ASR) that can be trained using symbolic input in addition to the traditional acoustic input. This architecture utilizes two separate encoders: one for acoustic input and another for symbolic input, both sharing the attention and decoder parameters. We call this architecture a multi-modal data augmentation network (MMDA), as it can support multi-modal (acoustic and symbolic) input and enables seamless mixing of large text datasets with significantly smaller transcribed speech corpora during training. We study different ways of transforming large text corpora into a symbolic form suitable for training our MMDA network. Our best MMDA setup obtains small improvements on character error rate (CER), and as much as 7-10% relative word error rate (WER) improvement over a baseline both with and without an external language model.

1. Introduction

The simplicity of “end-to-end” models and their recent success in neural machine translation (NMT) have prompted considerable research into replacing conventional ASR architectures with a single “end-to-end” model, which trains the acoustic and language models jointly rather than separately. Recently, [1] achieved state-of-the-art results using an attention-based encoder-decoder model trained on over 12K hours of speech data. However, on large publicly available corpora, such as “Librispeech” or “Fisher English”, which are an order of magnitude smaller, performance still lags behind that of conventional systems [2, 3, 4].
Our goal is to leverage much larger text corpora alongside limited amounts of speech data to improve the performance of end-to-end ASR systems.

Various methods of leveraging these text corpora have improved end-to-end ASR performance. [5], for instance, composes RNN-output lattices with a lexicon and word-level language model, while [6] simply re-scores beams with an external language model. [7, 8] incorporate a character-level language model during beam search, possibly disallowing character sequences absent from a dictionary, while [9] includes a full word-level language model in decoding by simultaneously keeping track of word histories and word prefixes. As our approach does not change any aspect of the traditional decoding process in end-to-end ASR, the methods mentioned above can still be used in conjunction with our MMDA network.

An alternative method, proposed for NMT, augments the source (input) with “synthetic” data obtained via back-translation from monolingual target-side data [10]. We draw inspiration from this approach and attempt to augment the ASR input with text-based synthesized input generated from large text corpora.

Figure 1: Overview of our Multi-modal Data Augmentation (MMDA) model. Figure 1a highlights the network engaged when acoustic features are given as input to an acoustic encoder (shaded blue). Alternatively, when synthetic input is supplied, the network (Figure 1b) uses an augmenting encoder (green). In both cases a shared attention mechanism and decoder are used to predict the output sequence. For simplicity we show 2 layers without down-sampling in the acoustic encoder and omit the input embedding layer in the augmenting encoder.

2. Approach

While text-based augmenting input data is a natural fit for NMT, it cannot be directly used in end-to-end ASR systems, which expect acoustic input.
To utilize text-based input, we use two separate encoders in our ASR architecture: one for acoustic input and another for synthetic text-based augmenting input. Figure 1 gives an overview of our proposed architecture.

2.1. MMDA Architecture

Figure 1a shows a sequence of acoustic frames $\{x_0, x_1, \ldots\}$ fed into an acoustic encoder, shown with blue cross hatching. The attention mechanism takes the output of the encoder and generates a context vector (gray cross hatching), which is utilized by the decoder (red cross hatching) to generate each token in the output sequence $\{y_0, y_1, \ldots\}$. In Figure 1b, the network is given a sequence of “synthetic” input tokens, $\{z_0, z_1, \ldots\}$, where $z_i \in \mathcal{Z}$ and the set $\mathcal{Z}$ is the vocabulary of the synthetic input. The size and items in $\mathcal{Z}$ depend on the type of synthetic input scheme used (see Table 1 for examples and Section 5.2 for more details). As the synthetic inputs are categorical, we use an input embedding layer which learns a vector representation of each symbol in $\mathcal{Z}$. The vector representation is then fed into an augmenting encoder (shown in green cross hatching). Following this, the same attention mechanism and decoder are used to generate an output sequence. Note that some details, such as the exact number of layers, down-sampling in the acoustic encoder, and the embedding layer in the augmenting encoder, are omitted in Figure 1 for the sake of clarity.

Table 1: Examples of sequences under different synthetic input generation schemes. The original text for these examples is the phrase JOHN BLARE AND COMPANY.

| Synthetic Input | Example Sequence |
|---|---|
| Charstream | J O H N B L A R E A N D C O M P A N Y |
| Phonestream | JH AA1 N B L EH1 R AE1 N D K AH1 M P AH0 N IY0 |
| Rep-Phonestream | JH JH JH AA1 AA1 AA1 AA1 N B L L L EH1 R AE1 AE1 AE1 N D K K K AH1 AH1 M M P AH0 AH0 AH0 N IY0 IY0 IY0 |

2.2. Synthetic Inputs

A desirable synthetic input should be easy to construct from plain text corpora, and should be as similar as possible to acoustic input. We propose three types of synthetic inputs that can be easily generated from text corpora and that vary in their similarity to acoustic inputs (see Table 1).

1. Charstream: The output character sequence is supplied as synthetic input without word boundaries.
2. Phonestream: We make use of a pronunciation lexicon to expand words into phonemes, where unknown pronunciations are recovered via grapheme-to-phoneme transduction (G2P).
3. Rep-Phonestream: We explicitly model phoneme duration by repeating each phoneme such that the relative durations of phonemes to each other mimic what is observed in data (e.g. vowels last longer than stop consonants).

2.3. Multi-task Training

Let $\mathcal{D}$ be the ASR dataset, with acoustic input and character sequence output pairs $(X_j, y_j)$ where $j \in \{1, \ldots, |\mathcal{D}|\}$. Using a text corpus $\mathcal{S}$ with sentences $s_k$ where $k \in \{1, \ldots, |\mathcal{S}|\}$, we can generate synthetic inputs $z_k = \mathrm{syn}(s_k)$, where $\mathrm{syn}(\cdot)$ is one of the synthetic input creation schemes discussed in the previous section. Under the assumption that both $y_j$ and $s_k$ are sequences with the same character vocabulary and from the same language, our augmenting dataset $\mathcal{A}$ is comprised of training pairs $(z_k, s_k)$, $k \in \{1, \ldots, |\mathcal{S}|\}$. Typically the corpus $\mathcal{S}$ is much larger than the original ASR training set $\mathcal{D}$. During training, we alternate between batches from acoustic training data $\mathcal{D}$ (primary task) and synthesized augmenting data $\mathcal{A}$ (secondary task). In each batch we maximize either the primary objective or the secondary objective. Note that in both cases the attention and decoder parameters (denoted by $\theta_{att}$ and $\theta_{dec}$, see Equation 1) are shared, while the acoustic encoder parameters ($\theta_{enc}$) and augmenting encoder parameters ($\theta_{aug}$) are only updated in their respective training batches.
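As an illustration of the alternating schedule described above, the following minimal Python sketch interleaves acoustic and augmenting batches and records which parameter groups each batch would update. It is not the authors' training code: the 1:1 interleaving follows the ratio mentioned in Section 6.1, while the function names, the choice to cycle the augmenting batches, and the toy batch labels are assumptions made for illustration.

```python
from itertools import cycle

# Parameter groups touched by each task (names follow the paper's theta subscripts).
PRIMARY_PARAMS = ("enc", "att", "dec")    # acoustic batches from D
SECONDARY_PARAMS = ("aug", "att", "dec")  # synthetic batches from A


def alternate_batches(acoustic_batches, augmenting_batches):
    """Yield (task, batch, params) triples, alternating 1:1 between tasks.

    Assumption: one pass is defined by the acoustic data, and the
    augmenting batches are cycled so they never run out first.
    """
    aug_iter = cycle(augmenting_batches)
    for acoustic in acoustic_batches:
        yield "primary", acoustic, PRIMARY_PARAMS
        yield "secondary", next(aug_iter), SECONDARY_PARAMS


def count_updates(schedule):
    """Count how many batches would update each parameter group."""
    counts = {"enc": 0, "aug": 0, "att": 0, "dec": 0}
    for _task, _batch, params in schedule:
        for name in params:
            counts[name] += 1
    return counts


# Toy batch labels stand in for real tensors.
schedule = list(alternate_batches(["X0", "X1", "X2"], ["z0", "z1"]))
updates = count_updates(schedule)
```

In this toy run the shared attention and decoder groups are updated on every batch, whereas each encoder is updated only on batches from its own task.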
The objective maximized in a given batch is:

$$
\mathcal{L}(\theta) =
\begin{cases}
\log P(y \mid X; \theta_{enc}, \theta_{att}, \theta_{dec}) & \text{primary objective} \\
\log P(s \mid z; \theta_{aug}, \theta_{att}, \theta_{dec}) & \text{secondary objective}
\end{cases}
\tag{1}
$$

We evaluate our model on a held-out ASR dataset $\mathcal{D}'$ which only contains acoustic batches, as our ultimate goal is to obtain the best ASR system.

In the remainder of the paper we place our work in the context of other multi-modal, multi-task, and data-augmentation schemes for ASR. We propose a novel architecture to seamlessly train on both text (with synthetic inputs) and speech corpora. We analyze the merit of these approaches on WSJ, and finally report the performance of our best performing architecture on WSJ [11] and the HUB4 Spanish [12] and Voxforge Italian corpora [13].

3. Related Work

Augmenting the ASR source with synthetically generated data is already a widely used technique. Generally, label-preserving perturbations are applied to the ASR source to ensure that the system is robust to variations in source-side data not seen in training. Such perturbations include Vocal Tract Length Perturbations (VTLP) as in [14], which expose the ASR system to a variety of synthetic speaker variations, as well as speed, tempo and volume perturbations [15]. Speech is also commonly corrupted with synthetic noise or reverberation [16, 17].

Importantly, these perturbations are added to help learn more robust acoustic representations, but not to expose the ASR system to new output utterances, nor do they alter the network architecture. By contrast, our proposed method for data augmentation from external text exposes the ASR system to new output utterances, rather than to new acoustic inputs.

Another line of work involves data augmentation for NMT. In [18], improvements in low-resource settings were obtained by simply copying the source-side (input) monolingual data to the target side (output).
Our approach is loosely based on [10], which improves NMT performance by creating pseudo-parallel data using an auxiliary translation model in the reverse direction on target-side text.

Previous work has also tried to incorporate other modalities during both training and testing, but has focused primarily on learning better feature representations via correlative objective functions or on fusing representations across modalities [19, 20]. The fusion methods require both modalities to be present at test time, while the multi-view methods require both views to be conditionally independent given a common source. Our method has no such requirements and only makes use of the alternate modality during training.

Lastly, we note that considerable work has applied multi-task training to “end-to-end” ASR. In [21], the CTC objective is used as an auxiliary task to force the attention to learn monotonic alignments between input and output. In [22], a multi-task framework is used to jointly perform language-id and speech-to-text in a multilingual ASR setting. In this work our use of phoneme-based augmenting data is effectively using G2P (P2G) as an auxiliary task in end-to-end ASR, though only implicitly.

4. Method

Our MMDA architecture is a straightforward extension of the attention-based encoder-decoder network [23], whose components are described below.

4.1. Acoustic Encoder

For a single utterance, the acoustic frames form a matrix $X \in \mathbb{R}^{L_x \times D_x}$, which is encoded by a multi-layer bi-directional LSTM (biLSTM) with hidden dimension $H$ for each direction, where $L_x$ and $D_x$ are the length of the utterance in frames and the number of acoustic features per frame, respectively. After each layer's encoding, the hidden vectors in $\mathbb{R}^{2H}$ are projected back to vectors in $\mathbb{R}^{H}$ using a projection layer and fed as the input into the next layer.
We also use a pyramidal encoder following [24] to down-sample the frame encodings and capture a coarser-grained resolution.

4.2. Augmenting Encoder

The augmenting encoder is a single-layer biLSTM, essentially a “shallow” acoustic encoder. As the synthetic input is symbolic (e.g. phoneme, character), we use an embedding layer which learns a real-valued vector representation for each symbol, thus converting a sequence of symbols $z \in \mathcal{Z}^{L_z \times 1}$ into a matrix $Z \in \mathbb{R}^{L_z \times D_z}$, where $\mathcal{Z}$ is the set of possible augmenting input symbols, $L_z$ is the length of the augmenting input sequence, and $D_z$ is the embedding size. We set $D_x = D_z$ to ensure that the acoustic and augmenting encoders work smoothly with the attention mechanism.

4.3. Decoder

We used a uni-directional LSTM for the decoder [23, 25]:

$$
s_j = \mathrm{LSTM}(y_{j-1}, s_{j-1}, c_j) \tag{2}
$$

where $y_{j-1}$ is the embedding of the last output token, $s_{j-1}$ is the LSTM hidden state in the previous time step, and $c_j$ is the attention-based context vector, which is discussed in the following section. We omit all layer index notation for simplicity. The hidden state of the final LSTM layer is passed through another linear transformation followed by a softmax layer, generating a probability distribution over the outputs.

4.4. Attention Mechanism

We used location-aware attention [26], which extends the content-based attention mechanism [23] by using the attention weights from the previous output time-step $\alpha_{j-1}$ when computing the weights for the current output $\alpha_j$. The previous time-step attention weights $\alpha_{j-1}$ are “smoothed” by a convolution operation and fed into the attention weight computation. Once attention weights are computed, a weighted sum over encoder hidden states generates the context vector $c_j$.

5. Experiments

5.1. Data

We compared the proposed types of synthetic data by evaluating character and word error rates (CER, WER) of ASR systems trained on the Wall Street Journal corpus (LDC93S6B and LDC94S13B), using the standard SI-284 set containing ∼37K utterances or 80 hours of speech. We used the “dev93” set as a development set and as the selection criterion for the best model, which was then evaluated on the “eval92” dataset. We also tested the performance of MMDA using the best performing synthetic input type on the Hub4 Spanish and Italian Voxforge datasets.

The Hub4 Spanish corpus consists of 30 hours of 16kHz speech from three different broadcast news sources [12]. We used the same evaluation set as used in the Kaldi Hub4 Spanish recipe [27], and constructed a development set with the same number of utterances as the evaluation set by randomly selecting from the remaining training data.

For the Voxforge Italian corpus, which consists of 16 hours of broadband speech [13], we created training, development, and evaluation sets by randomly selecting 80%, 10%, and 10% of the data for each set, respectively, ensuring that no sentence was repeated in any of the sets. In all experiments we represented each frame of audio by a vector of 83 dimensions (80 Mel-filter bank coefficients plus 3 pitch features).

5.2. Generating Synthetic Input

The augmenting data used for the WSJ experiments are generated from section (13-32.1 87,88,89) of WSJ, which is typically used for training language models applied during decoding. We made 3 different synthetic inputs for this section of WSJ. For Charstream synthetic input, the target-side character sequence was copied to the input while omitting word boundaries. For Phonestream synthetic input, we constructed phone sequences using CMUDICT, to which 46k words from the WSJ corpus are added [28], as the lexicon as described in section 5.2. We trained the G2P on CMUDICT using the Phonetisaurus toolkit.
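The synthetic input schemes of Section 2.2 can be sketched in a few lines of Python. This is a hedged illustration rather than the pipeline used in the experiments: the toy lexicon copies the pronunciations shown in Table 1, the `g2p` argument is a hypothetical stand-in for a trained G2P model, the duration statistics are invented values (not TIMIT estimates), and rounding the quotient Round(f_p)/4 to an integer is an assumption, since the repetition rule given later in this section leaves that step implicit.

```python
import random

# Toy lexicon; pronunciations copied from Table 1.
LEXICON = {
    "JOHN": ["JH", "AA1", "N"],
    "BLARE": ["B", "L", "EH1", "R"],
    "AND": ["AE1", "N", "D"],
    "COMPANY": ["K", "AH1", "M", "P", "AH0", "N", "IY0"],
}


def charstream(sentence):
    """Characters of the sentence with word boundaries removed."""
    return [ch for ch in sentence if not ch.isspace()]


def phonestream(sentence, lexicon, g2p=None):
    """Expand words to phonemes; unknown words go to g2p, else to <unk>."""
    phones = []
    for word in sentence.split():
        if word in lexicon:
            phones.extend(lexicon[word])
        elif g2p is not None:          # hypothetical G2P callable
            phones.extend(g2p(word))
        else:
            phones.append("<unk>")
    return phones


def rep_phonestream(phones, duration_stats, rng):
    """Repeat each phoneme according to a sampled frame duration."""
    out = []
    for p in phones:
        mean, std = duration_stats[p]
        frames = rng.gauss(mean, std)               # f_p ~ N(mu_p, sigma_p^2)
        repeats = max(1, round(round(frames) / 4))  # assumed integer rounding
        out.extend([p] * repeats)
    return out


text = "JOHN BLARE AND COMPANY"
chars = charstream(text)
phones = phonestream(text, LEXICON)

# Invented (mean, std) durations in frames; std 0 keeps the demo deterministic.
toy_stats = {"JH": (12, 0), "AA1": (16, 0), "N": (4, 0)}
rep = rep_phonestream(["JH", "AA1", "N"], toy_stats, random.Random(0))
```

With the invented statistics above, `rep` repeats JH three times, AA1 four times, and N once, which mirrors the shape of the Rep-Phonestream row in Table 1 for the word JOHN.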
For certain words consisting only of rare graphemes, we were unable to infer pronunciations and simply assigned to these words a single ⟨unk⟩ phoneme. Finally, we filtered out sentences with more than 1 ⟨unk⟩ phoneme symbol, and those above 250 characters in length. The resulting augmenting dataset contained ∼1.5M sentences.

In the Rep-Phonestream scheme, we modified the augmenting input phonemes to further emulate the ASR input by modeling the variable durations of phonemes. We assumed that a phoneme's duration in frames is normally distributed, $\mathcal{N}(\mu_p, \sigma_p^2)$, and we estimated these distributions for each phoneme from frame-level phoneme transcripts in the TIMIT dataset. For example, given a phoneme sequence like JH AA N (for the word “John”), we would sample a sequence of frame durations $f_p \sim \mathcal{N}(\mu_p, \sigma_p^2)$, $p \in \{\mathrm{JH}, \mathrm{AA}, \mathrm{N}\}$, and repeat each phoneme $r$ times, where $r = \max(1, \mathrm{Round}(f_p)/4)$. Dividing by 4 accounts for the down-sampling performed by the pyramidal scheme in the acoustic encoder.

The augmenting data for both Spanish and Italian was generated by using Wikipedia data dumps (https://dumps.wikimedia.org/backup-index.html) and then scraping Wiktionary using wikt2pron (https://github.com/abuccts/wikt2pron) for pronunciations of all words seen in the text. We used the resulting seed pronunciation lexicon for G2P training as before and again filtered out long sentences and those with resulting ⟨unk⟩ words after phonemic expansion of all words in the augmenting data. In order to generate the Rep-Phonestream data we manually mapped TIMIT phonemes to similar Italian and Spanish phonemes and applied the corresponding durations learned on TIMIT.

5.3. Training

We implemented our MMDA model on top of ESPNET using the PyTorch backend [21, 29]. A 4-layer biLSTM with a “pyramidal” structure was used for the acoustic encoder [6]. The biLSTMs in the encoder used 320 hidden units (in each direction) followed by a projection layer. For the augmenting encoder, we used a single-layer biLSTM with the same number of units and projection scheme as the acoustic encoder. No down-sampling was done on the augmenting input. Location-aware attention was used in all our experiments [26]. For WSJ experiments, the decoder was a 2-layer LSTM with 300 hidden units, while a single layer was used for both Spanish and Italian. We used Adadelta to optimize all our models for 15 epochs [30]. The model with the best validation accuracy (at the end of each epoch) was used for evaluation.

For decoding, a beam size of 10 for WSJ and 20 for Spanish and Italian was used. In both cases we restricted the output using a minimum-length and maximum-length threshold. The min and max output lengths were set to $0.3F$ and $0.8F$, where $F$ denotes the length of the down-sampled input. For RNNLM integration, we trained a 2-layer LSTM language model with 650 hidden units. The RNNLM for each experiment was trained on the same sentences used for augmentation.

Table 2: Experiments on WSJ corpus using different augmentation input types (part 1). The best performing augmentation was then applied to Italian (Voxforge) and Spanish (HUB4) datasets (parts 2 and 3 of the table).

| Corpus | Augmentation | CER (eval, dev) | WER (eval, dev) |
|---|---|---|---|
| English (WSJ) | No-Augmentation | 7.0, 9.9 | 19.5, 24.8 |
| | Charstream | 7.5, 10.5 | 20.3, 25.7 |
| | Phonestream | 7.4, 10.1 | 20.4, 25.3 |
| | Rep-Phonestream | 7.1, 9.8 | 17.5, 22.7 |
| | No-Augmentation + LM | 7.0, 9.8 | 17.2, 22.2 |
| | Rep-Phonestream + LM | 6.7, 9.4 | 16.0, 20.8 |
| Italian (Voxforge) | No-Augmentation + LM | 16.4, 15.9 | 47.2, 46.1 |
| | Rep-Phonestream + LM | 14.8, 14.5 | 44.3, 44.0 |
| Spanish (HUB4) | No-Augmentation + LM | 12.6, 12.8 | 31.5, 33.5 |
| | Rep-Phonestream + LM | 12.1, 13.1 | 29.5, 32.6 |

5.4. Results

Table 2 (part 1) shows the ASR results on WSJ. Rep-Phonestream augmentation improved the baseline WER by about 2% absolute (19.5 to 17.5 on eval, 24.8 to 22.7 on dev), while none of the other augmentations helped.
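For reference, the absolute WER changes in Table 2 can be converted to relative reductions. The short Python sketch below does this for the WSJ eval column; the helper name `relative_reduction` is ours, and the numbers are copied from Table 2.

```python
def relative_reduction(baseline, system):
    """Relative error-rate reduction in percent."""
    return 100.0 * (baseline - system) / baseline


# WSJ eval WER values from Table 2.
no_lm = relative_reduction(19.5, 17.5)    # No-Augmentation vs Rep-Phonestream
with_lm = relative_reduction(17.2, 16.0)  # same comparison with the RNNLM

print(f"without LM: {no_lm:.1f}% relative")  # prints "without LM: 10.3% relative"
print(f"with LM:    {with_lm:.1f}% relative")  # prints "with LM:    7.0% relative"
```

Under this calculation the WSJ eval gains are roughly 10% relative without an LM and 7% with one, which appears to be the basis of the 7-10% range quoted in the abstract.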
This corroborates our intuition that data augmentation works better when synthetic inputs are similar to the real training data. Furthermore, we continued to observe gains in WER when an RNNLM was incorporated in the decoding process [8]. This suggests that while MMDA and LM have a similar effect, they can still be used in conjunction to extract further improvement.

The best performing synthetic input scheme was applied to Spanish and Italian, where a similar trend was observed. MMDA consistently achieved better WER and obtained small improvements in CER (see Table 2, parts 2 and 3). The relative gains in English (WSJ) were higher than in Spanish and Italian; we suspect the ad-hoc phone duration mapping we employed for these languages and the mismatch in augmenting text data might have contributed to the lower relative gains.

Table 3: Error type differences between the Rep-Phonestream MMDA trained system and the baseline system on WSJ (dev & test combined). “Nonsense errors” are substitutions or insertions that result in non-legal English words, e.g. CASINO substituted with ACCINO. “Legal errors” are errors that result in legal English words, e.g. BOEING substituted with BOLDING.

| Augmentation | Nonsense errors % | Legal errors % |
|---|---|---|
| No-Augmentation | 32.93 | 67.07 |
| No-Augmentation + LM | 24.34 | 75.66 |
| Rep-Phonestream | 24.99 | 75.11 |
| Rep-Phonestream + LM | 20.25 | 79.75 |

We found that the Rep-Phonestream MMDA system tended to replace entire words when incorrect, while the baseline system incorrectly changed a few characters in a word, even if the resulting word did not exist in English (for WSJ). This behavior tended to improve WER while harming CER. For example, in the WSJ experiments, the baseline substitutes QUOTA with COLOTA, while the Rep-Phonestream MMDA predicts COLORS.
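The QUOTA example can be made concrete with a small edit-distance calculation. The Python sketch below is a generic Levenshtein implementation, not the scoring tool used in the experiments; it compares both hypotheses against the reference at the character level and at the word level.

```python
def edit_distance(ref, hyp):
    """Levenshtein distance between two sequences (row-by-row DP)."""
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        curr = [i]
        for j, h in enumerate(hyp, start=1):
            cost = 0 if r == h else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # substitution or match
        prev = curr
    return prev[-1]


# Character-level cost of each hypothesis for the reference word QUOTA.
baseline_chars = edit_distance("QUOTA", "COLOTA")  # 3
mmda_chars = edit_distance("QUOTA", "COLORS")      # 5

# Word-level cost: each hypothesis is one substituted word.
baseline_words = edit_distance(["QUOTA"], ["COLOTA"])  # 1
mmda_words = edit_distance(["QUOTA"], ["COLORS"])      # 1
```

Both hypotheses count as a single word substitution, but the legal word COLORS is 5 character edits away from QUOTA versus 3 for COLOTA, which illustrates how whole-word replacements can raise CER without costing extra word errors.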
We verified this hypothesis by computing the ratio of substitutions and insertions resulting in nonsense words to the total number of such errors on the WSJ development and evaluation data for the baseline system and MMDA, both with and without RNNLM re-scoring. We see that RNNLM re-scoring actually behaves like MMDA in this regard (see Table 3).

6. Future Work

6.1. Enhancing MMDA

We identify three possible future research directions:

(i) Augmenting encoders: More elaborate designs for the augmenting encoder could be used to generate more “speech-like” encodings from symbolic synthetic inputs.

(ii) Synthetic inputs: Other synthetic inputs should be explored, as our choice was motivated in large part by simplicity and speed of generating synthetic inputs. Using something approaching a text-to-speech system to generate augmenting data may be greatly beneficial.

(iii) Training schedules: Is the 1:1 ratio for augmenting and acoustic training ideal? Using more augmenting data initially may be beneficial, and a systematic study of various training schedules would reveal more insights. Furthermore, automatically adjusting the amount of augmenting data used also seems worthy of inquiry.

6.2. Applications

Our framework is easily expandable to other end-to-end sequence transduction applications, examples of which include domain adaptation and speech translation. To adapt ASR to a new domain (or even a new dialect or language), we can train on additional augmenting data derived from the new domain (or dialect or language). We also believe the MMDA framework may be well suited to speech translation due to its similarity to back-translation in NMT.

7. Conclusion

We proposed the MMDA framework, which exposes our end-to-end ASR system to a much wider range of training data. To the best of our knowledge, this is the first attempt at truly end-to-end multi-modal data augmentation for ASR.
Experiments show promising results for our MMDA architecture, and we highlight possible extensions and future research in this area.

8. References

[1] C.-C. Chiu, T. N. Sainath, Y. Wu, R. Prabhavalkar, P. Nguyen, Z. Chen, A. Kannan, R. J. Weiss, K. Rao, K. Gonina et al., “State-of-the-art speech recognition with sequence-to-sequence models,” arXiv preprint arXiv:1712.01769, 2017.

[2] V. Panayotov, G. Chen, D. Povey, and S. Khudanpur, “Librispeech: an ASR corpus based on public domain audio books,” in Proceedings of the International Conference on Acoustics, Speech and Signal Processing (ICASSP). IEEE, 2015.

[3] C. Cieri, D. Graff, O. Kimball, D. Miller, and K. Walker, “Fisher English training speech part 1 transcripts,” Philadelphia: Linguistic Data Consortium, 2004.

[4] C. Cieri, D. Graff, O. Kimball, D. Miller, and K. Walker, “Fisher English training part 2,” Linguistic Data Consortium, Philadelphia, 2005.

[5] Y. Miao, M. Gowayyed, and F. Metze, “EESEN: End-to-end speech recognition using deep RNN models and WFST-based decoding,” in Automatic Speech Recognition and Understanding (ASRU), 2015 IEEE Workshop on. IEEE, 2015, pp. 167–174.

[6] W. Chan, N. Jaitly, Q. V. Le, and O. Vinyals, “Listen, attend and spell: A neural network for large vocabulary conversational speech recognition,” in IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), 2015.

[7] A. Maas, Z. Xie, D. Jurafsky, and A. Ng, “Lexicon-free conversational speech recognition with neural networks,” in Proceedings of the 2015 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, 2015, pp. 345–354.

[8] T. Hori, S. Watanabe, Y. Zhang, and W. Chan, “Advances in joint CTC-attention based end-to-end speech recognition with a deep CNN encoder and RNN-LM,” in Interspeech, 2017, pp. 949–953.

[9] A. Graves and N. Jaitly, “Towards end-to-end speech recognition with recurrent neural networks,” in International Conference on Machine Learning (ICML), 2014, pp. 1764–1772.

[10] R. Sennrich, B. Haddow, and A. Birch, “Improving Neural Machine Translation Models with Monolingual Data,” in Proceedings of the 54th Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers). Berlin, Germany: Association for Computational Linguistics, August 2016, pp. 86–96. [Online]. Available: http://www.aclweb.org/anthology/P16-1009.pdf

[11] D. B. Paul and J. M. Baker, “The design for the Wall Street Journal-based CSR corpus,” in Proceedings of the Workshop on Speech and Natural Language. Association for Computational Linguistics, 1992, pp. 357–362.

[12] L. D. Consortium, “1997 Spanish broadcast news speech (HUB4-NE) LDC98S74,” Philadelphia, 1998.

[13] Voxforge.org, “Free speech recognition,” http://www.voxforge.org/, accessed 06/25/2014.

[14] A. Ragni, K. M. Knill, S. P. Rath, and M. J. Gales, “Data augmentation for low resource languages,” in Fifteenth Annual Conference of the International Speech Communication Association, 2014.

[15] T. Ko, V. Peddinti, D. Povey, and S. Khudanpur, “Audio augmentation for speech recognition,” in Sixteenth Annual Conference of the International Speech Communication Association, 2015.

[16] A. Hannun, C. Case, J. Casper, B. Catanzaro, G. Diamos, E. Elsen, R. Prenger, S. Satheesh, S. Sengupta, A. Coates et al., “Deep speech: Scaling up end-to-end speech recognition,” arXiv preprint arXiv:1412.5567, 2014.

[17] E. Vincent, S. Watanabe, A. A. Nugraha, J. Barker, and R. Marxer, “An analysis of environment, microphone and data simulation mismatches in robust speech recognition,” Computer Speech & Language, vol. 46, pp. 535–557, 2017.

[18] A. Currey, A. V. M. Barone, and K. Heafield, “Copied monolingual data improves low-resource neural machine translation,” in Proceedings of the Second Conference on Machine Translation, 2017, pp. 148–156.

[19] R. Arora and K. Livescu, “Multi-view CCA-based acoustic features for phonetic recognition across speakers and domains,” in Acoustics, Speech and Signal Processing (ICASSP), 2013 IEEE International Conference on. IEEE, 2013, pp. 7135–7139.

[20] Y. Mroueh, E. Marcheret, and V. Goel, “Deep multimodal learning for audio-visual speech recognition,” in Acoustics, Speech and Signal Processing (ICASSP), 2015 IEEE International Conference on. IEEE, 2015, pp. 2130–2134.

[21] S. Watanabe, T. Hori, S. Kim, J. R. Hershey, and T. Hayashi, “Hybrid CTC/attention architecture for end-to-end speech recognition,” IEEE Journal of Selected Topics in Signal Processing, vol. 11, no. 8, pp. 1240–1253, 2017.

[22] S. Toshniwal, T. N. Sainath, R. J. Weiss, B. Li, P. Moreno, E. Weinstein, and K. Rao, “Multilingual speech recognition with a single end-to-end model,” arXiv preprint arXiv:1711.01694, 2017.

[23] D. Bahdanau, K. Cho, and Y. Bengio, “Neural machine translation by jointly learning to align and translate,” arXiv preprint arXiv:1409.0473, 2014.

[24] W. Chan, N. Jaitly, Q. Le, and O. Vinyals, “Listen, attend and spell: A neural network for large vocabulary conversational speech recognition,” in Acoustics, Speech and Signal Processing (ICASSP), 2016 IEEE International Conference on. IEEE, 2016, pp. 4960–4964.

[25] J. Chorowski, D. Bahdanau, K. Cho, and Y. Bengio, “End-to-end continuous speech recognition using attention-based recurrent NN: First results,” arXiv preprint arXiv:1412.1602, 2014.

[26] J. K. Chorowski, D. Bahdanau, D. Serdyuk, K. Cho, and Y. Bengio, “Attention-based models for speech recognition,” in Advances in Neural Information Processing Systems (NIPS), 2015, pp. 577–585.

[27] D. Povey, A. Ghoshal, G. Boulianne, L. Burget, O. Glembek, N. Goel, M. Hannemann, P. Motlicek, Y. Qian, P. Schwarz et al., “The Kaldi speech recognition toolkit,” in IEEE 2011 Workshop on Automatic Speech Recognition and Understanding, no. EPFL-CONF-192584. IEEE Signal Processing Society, 2011.

[28] J. Kominek and A. W. Black, “The CMU Arctic speech databases,” in Fifth ISCA Workshop on Speech Synthesis, 2004.

[29] S. Kim, T. Hori, and S. Watanabe, “Joint CTC-attention based end-to-end speech recognition using multi-task learning,” in Acoustics, Speech and Signal Processing (ICASSP), 2017 IEEE International Conference on. IEEE, 2017, pp. 4835–4839.

[30] M. D. Zeiler, “Adadelta: an adaptive learning rate method,” arXiv preprint arXiv:1212.5701, 2012.
