DNN Based Speech Enhancement for Unseen Noises Using Monte Carlo Dropout

In this work, we propose the use of dropouts as a Bayesian estimator for increasing the generalizability of a deep neural network (DNN) for speech enhancement. By using Monte Carlo (MC) dropout, we show that the DNN performs better enhancement in uns…

Authors: Nazreen P M, A G Ramakrishnan

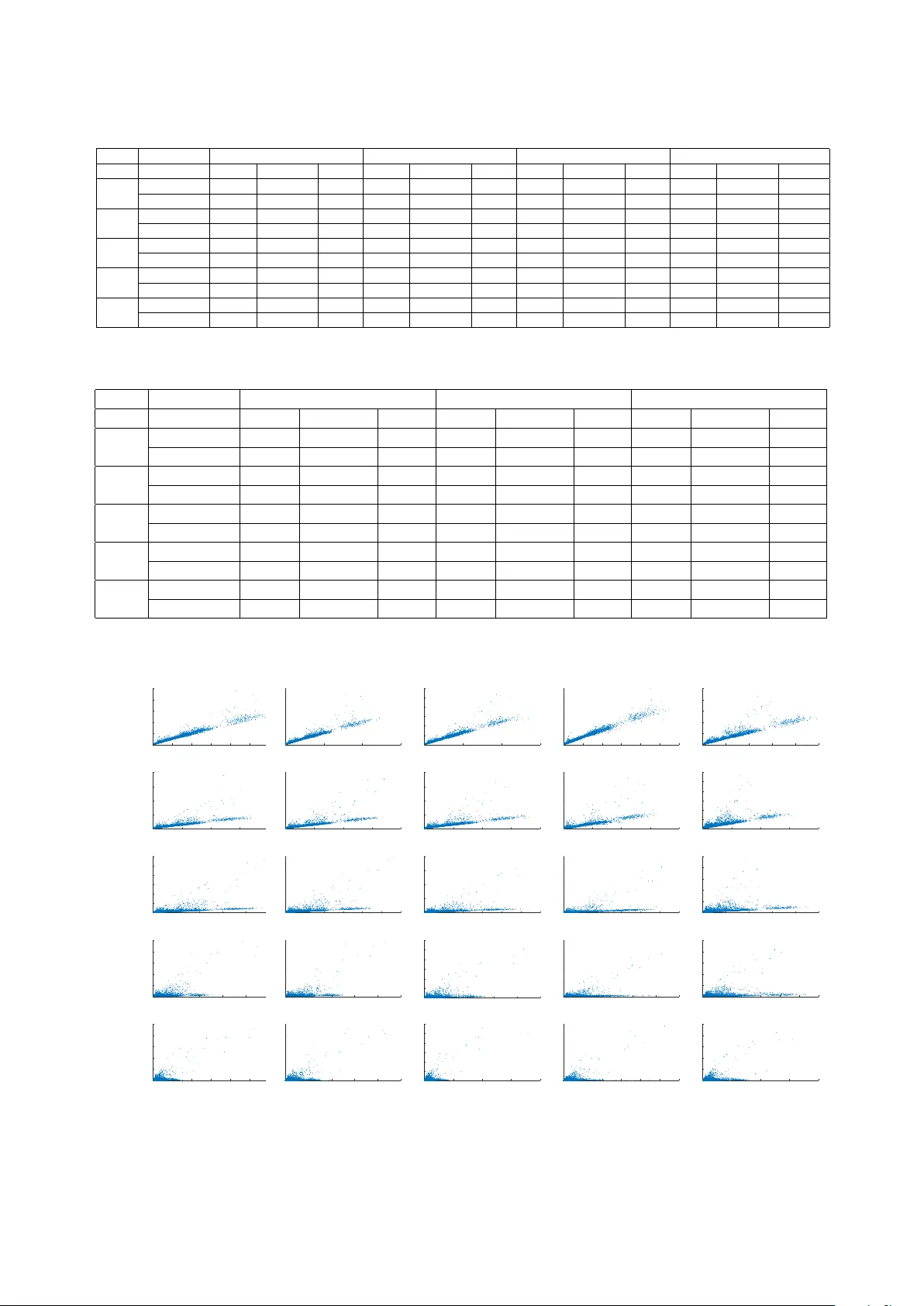

Nazreen P.M., A. G. Ramakrishnan
MILE Lab, Department of Electrical Engineering, Indian Institute of Science, Bangalore 560012
nazreenp@iisc.ac.in, agr@iisc.ac.in

Abstract

In this work, we propose the use of dropouts as a Bayesian estimator for increasing the generalizability of a deep neural network (DNN) for speech enhancement. By using Monte Carlo (MC) dropout, we show that the DNN performs better enhancement in unseen noise and SNR conditions. The DNN is trained on speech corrupted with Factory2, M109, Babble, Leopard and Volvo noises at SNRs of 0, 5 and 10 dB and tested on speech with white, pink and factory1 noises. Speech samples are obtained from the TIMIT database and noises from NOISEX-92. In another experiment, we train five DNN models separately on speech corrupted with Factory2, M109, Babble, Leopard and Volvo noises, at 0, 5 and 10 dB SNRs. The model precision (estimated using MC dropout) is used as a proxy for squared error to dynamically select the best of the DNN models based on their performance on each frame of test data.¹

Index Terms: speech enhancement, deep neural networks, DNN, dropout, unseen noise, Monte Carlo, model uncertainty.

1. Introduction

Single channel speech enhancement has been a challenging problem for decades. Speech enhancement techniques find several applications such as automatic speech recognition, hearing aids and speaker recognition. Methods proposed in the past include unsupervised methods such as spectral subtraction [1, 2], Wiener filtering [3], minimum mean-square error estimators [4], estimators based on Gaussian prior distributions [5, 6] and residual-weighting schemes [7, 8, 9]. Most of these methods may perform poorly when the background noise is non-stationary and in unexpected acoustic conditions.

In supervised learning methods, prior information is fed into the models and hence they are expected to perform better than unsupervised methods [10, 11, 12]. Neural networks have been shown to learn the mapping between noisy and clean speech [13, 14, 15]. However, these models are small networks with a single hidden layer and cannot fully learn the mapping. Deep architectures have come to dominate this area recently, since networks with multiple layers have been shown to better learn the complex mapping between noisy and clean features and hence give good enhancement performance. Hinton et al. proposed a greedy layer-wise unsupervised learning algorithm [16, 17]. Maas et al. [18] use deep recurrent neural networks for feature enhancement for noise robust ASR.

One of the major issues encountered by deep neural network (DNN) based enhancement is the degradation of performance for noises unseen during training. The model learns the mapping between noisy and clean speech well for those noises and signal to noise ratios (SNRs) with which it is trained, but performs poorly on speech corrupted by an unseen noise. In fact, this itself could be treated as a challenging task in speech enhancement. Though it has not been dealt with separately, techniques have been proposed in the past to address this problem. In [19], a regression DNN-based speech enhancement framework is proposed, in which a wide neural network is trained on a large collection of about 100 hours of data covering various noise types. In [20], a DNN-SVM based system is trained on a variety of acoustic data for a very long time. A noise aware training technique is adopted in [21], where a noise estimate is appended to the input feature for training. They use about 2500 hours of data for training the network.

¹ Submitted on 23 March 2018 for Interspeech 2018.

Hinton [22, 23] introduced the concept of dropouts to reduce overfitting during DNN training.
Though dropout omits units during training, it is inactive during the inference stage, whereby all the neurons contribute to the prediction. Gal and Ghahramani [24] proposed using dropouts during testing, by showing a theoretical relationship between dropout and approximate inference in a Gaussian process. In [25], it is shown that by enabling dropouts during testing and averaging the results of multiple stochastic forward passes, the predictions usually become better. This technique is referred to as Monte Carlo (MC) dropout, where the output samples are MC samples from the posterior distribution of models. In [24], it is shown that the model uncertainty can also be estimated from these samples.

In this work, we explore how to use the idea of MC dropout to improve the generalizability of speech models, thereby improving the enhancement performance in a mismatched condition. We show that when the input is noisy speech corrupted with an unseen noise, the use of MC dropout instead of normal dropout can give a better output. Hence the same concept could be applied to any of the above mentioned DNN speech models to further improve the generalizability of the output and obtain better performance in unseen noise scenarios.

We also explore the usage of model uncertainty in problems where multiple noise specific DNN models are used. By using model uncertainty as an estimate of the prediction error for a sample, this technique can enable the selection of the model with the least prediction error on a frame by frame basis. A similar approach of selecting the best model based on an error estimate is proposed in [26] for robust SNR estimation. They trained a separate DNN as a classifier to select a particular regression model for SNR estimation. However, this approach does not ameliorate the original problem of mismatch in training and testing conditions.
In our proposed algorithm, we use the intrinsic uncertainty of a model to estimate the prediction error. Since this method extracts information from the model itself, it has the potential to be a better representative of the prediction error. Our method also circumvents the issue of unseen testing conditions, since according to [24], the model uncertainty itself is an indicator of unseen data.

Our approach to improve the generalization involves two methods. In the first approach, we show that the MC dropout estimate improves the generalization performance of a DNN and apply this to speech enhancement. We train two DNN models, one without MC dropout and the other using MC dropout, with speech corrupted with five different noises at SNRs of 0, 5 and 10 dB. During testing, multiple repetitions are performed by dropping out different units every time and the empirical mean of all the outputs is taken. Our analysis indicates that MC dropout shows promise in improving the enhancement performance for unseen noise and SNR scenarios.

The second approach is an analysis of the use of model uncertainty as an estimate of the prediction error for a sample, where multiple DNN models are used. Five DNN models are trained, each using MC dropout. Each model is trained on noisy speech corrupted with a different noise at SNRs of 0, 5 and 10 dB. In a general scenario, one needs to identify the noise type to choose the right noise model to enhance the input noisy speech. However, in the case of an unseen noise scenario, the selection of the appropriate model becomes tricky. In such cases, we need to ensure that the chosen model is the one that gives the lowest error and hence a better enhancement performance. We use the model uncertainty, estimated from the output samples of each model, as an estimate of the prediction error and choose the model based on it.
Our experiments show that the stronger the correlation between the model uncertainty and the squared error, the better the enhancement performance.

2. DNN based speech enhancement

Under the additive model, the noisy speech can be represented as

$$x_t(m) = s_t(m) + n_t(m) \tag{1}$$

where $x_t(m)$, $s_t(m)$ and $n_t(m)$ are the $m$-th samples of the noisy speech, clean speech and noise signal, respectively. Taking the short time Fourier transform (STFT), we have

$$x(\omega_k) = s(\omega_k) + n(\omega_k) \tag{2}$$

where $\omega_k = 2\pi k / R$, $k = 0, 1, 2, \ldots, R-1$, $k$ is the frequency index and $R$ is the number of frequency bins. Taking the magnitude of the STFT, the noisy speech can be approximated as

$$X \approx S + N \in \mathbb{R}^{R \times 1} \tag{3}$$

where $S$ and $N$ represent the spectra of the clean speech and the noise, respectively.

A DNN based regression model is trained using the magnitude STFT features of clean and noisy speech. The noisy features are then fed to this trained DNN to predict the enhanced features, $\hat{S}$. The enhanced speech signal is obtained by using the inverse Fourier transform of $\hat{S}$ with the phase of the noisy speech signal and the overlap-add method.

2.1. Basic DNN architecture

The proposed baseline system uses a DNN to learn the complex mapping of input noisy speech to clean speech. It consists of 3 fully connected layers of 2048 neurons each and an output layer of 257 neurons. We use the ReLU non-linearity as the activation function in all 3 layers. Our output activation is also ReLU, to account for the nonnegative nature of the STFT magnitude. The backpropagation algorithm is used for training. Stochastic gradient descent is used to minimize the mean square logarithmic error ($E_r$) between the estimated and clean magnitude spectra:

$$E_r = \frac{1}{R} \sum_{k=1}^{R} \left( \log(S(k) + 1) - \log(\hat{S}(k) + 1) \right)^2 \tag{4}$$

where $\hat{S}$ and $S$ denote the estimated and reference spectral features, respectively, at frequency index $k$. This baseline system is only meant to illustrate the usage of our system. Consequently, we do not use the incremental improvements in the literature.

3. Proposed methods for generalized speech models

Gal and Ghahramani [24] have shown a theoretical relationship between dropout [22] and approximate inference in a Gaussian process. The proposed system augments the baseline system with dropout used as a Bayesian approximation. By using this approximation, a distribution over the weights is learnt, thereby giving the uncertainty of the output.

The network output is simulated with input $X$, using the same dropout as that employed during training. During testing, $T$ repetitions are performed, with different random units in the network dropped out every time, obtaining the results $\{\hat{S}_t(X)\}$, $1 \le t \le T$. It is shown in [24] that averaging forward passes through the network is equivalent to Monte Carlo integration over a Gaussian process posterior approximation. Empirical estimators of the predictive mean ($E(S)$) and variance (uncertainty, $V(S)$) from these samples are given as:

$$E(S) \approx \frac{1}{T} \sum_{t=1}^{T} \hat{S}_t(X) \tag{5}$$

$$V(S) \approx \tau^{-1} I_D + \frac{1}{T} \sum_{t=1}^{T} \hat{S}_t(X)^{\top} \hat{S}_t(X) - E(S)^{\top} E(S) \tag{6}$$

where $\tau = l^2 p / (2 N \lambda)$; $l$: defined prior length scale, $p$: probability of the units not being dropped, $N$: total input samples, $\lambda$: regularisation weight decay, which is zero for our experiments.

3.1. Single DNN model using MC dropout

[Figure 1 block diagram: noisy speech → 32 ms frame, Hamming window → |DFT| (phase ∠X kept aside) → DNN model using MC dropout → $\{\hat{S}_t(X)\}$ → compute empirical mean → $\hat{S}(X)$ → IDFT → enhanced speech]

Figure 1: Enhancement using single DNN-MC dropout model.

A single DNN model is trained using MC dropout with speech corrupted with five noises: factory2, m109, leopard, babble and volvo at SNRs of 0, 5 and 10 dB. A baseline model is trained on the same noises and SNRs, without MC dropout. Figure 1 shows the block diagram of the proposed approach.
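
The estimators in eqs. (5) and (6) can be illustrated with a minimal NumPy sketch. This is not the authors' implementation: the helper names `mc_forward` and `mc_dropout_estimate`, the keep probability of 0.8, the inverted-dropout scaling and the small random weights are all assumptions made for illustration. The scalar uncertainty returned here is the trace of eq. (6), which is how the paper later summarises the per-frame covariance.

```python
import numpy as np

def mc_forward(x, weights, biases, keep_prob, rng):
    """One stochastic forward pass with dropout left active (hypothetical helper)."""
    h = x
    for W, b in zip(weights[:-1], biases[:-1]):
        h = np.maximum(h @ W + b, 0.0)            # ReLU hidden layer
        mask = rng.random(h.shape) < keep_prob    # keep each unit with probability keep_prob
        h = h * mask / keep_prob                  # inverted-dropout scaling (assumption)
    return np.maximum(h @ weights[-1] + biases[-1], 0.0)  # ReLU output, as in Sec. 2.1

def mc_dropout_estimate(x, weights, biases, keep_prob=0.8, T=50, inv_tau=0.0, seed=0):
    """Return the predictive mean (eq. 5), a scalar uncertainty, and the T samples."""
    rng = np.random.default_rng(seed)
    samples = np.stack([mc_forward(x, weights, biases, keep_prob, rng)
                        for _ in range(T)])
    mean = samples.mean(axis=0)
    # Diagonal of eq. (6): inv_tau + second moment - squared mean, per output bin.
    var_per_bin = inv_tau + (samples ** 2).mean(axis=0) - mean ** 2
    return mean, var_per_bin.sum(), samples       # the sum is the trace of eq. (6)

# Toy usage: random weights stand in for a trained network (sizes are illustrative,
# 32 hidden units instead of the 2048 used in the paper).
rng = np.random.default_rng(1)
sizes = [257, 32, 32, 32, 257]
weights = [rng.normal(0, 0.1, (m, n)) for m, n in zip(sizes[:-1], sizes[1:])]
biases = [np.zeros(n) for n in sizes[1:]]
x = np.abs(rng.normal(size=257))                  # one noisy magnitude frame
mean, uncertainty, samples = mc_dropout_estimate(x, weights, biases)
print(mean.shape, samples.shape)
```

With `inv_tau = 0`, as in the experiments described later, the uncertainty reduces to the summed per-bin sample variance of the T stochastic outputs.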
During testing of the MC dropout model, given a noisy speech frame $X$, multiple repetitions are performed by dropping out different units each time, giving $T$ different outputs $\{\hat{S}_t(X)\}$, $1 \le t \le T$. The empirical mean of these outputs is used as the estimated output $\hat{S}(X)$, as shown in eqn. 5. Enhanced speech is obtained as the inverse Fourier transform of $\hat{S}(X)$ with the phase of the noisy speech signal and the overlap-add method.

3.2. Multiple DNN models using MC dropout with predictive variance (model uncertainty) as the selection scheme

Model-specific enhancement techniques depend on a model selector [27, 28], which ensures that the model chosen for enhancing each frame entails an overall improved performance. Given multiple noise-specific DNN models for enhancing a frame of noisy speech, one method to select the appropriate model is to first detect the type of noise. However, if speech has been corrupted with an unseen noise, the selection of the appropriate model gets harder, since the noise detector assumes that one of the models is trained with the correct noise.

[Figure 2 block diagram: noisy speech → |DFT| (phase ∠X kept aside) → model 1, model 2, ..., model M, each using MC dropout → $\{\hat{S}^1_t(X)\}, \{\hat{S}^2_t(X)\}, \ldots, \{\hat{S}^M_t(X)\}$ → select output with minimum variance → $\{\hat{S}^{i^*}_t(X)\}$ → compute empirical mean → $\hat{S}(X)$ → IDFT → enhanced speech]

Figure 2: Enhancement using multiple DNN-MC dropout models with predictive variance as the selection criterion.

In this work, we follow [24] and argue that since model uncertainty gives the intrinsic uncertainty of the model for a particular input, we can use it as an estimate of model error. Given that this relation holds, we can build a framework as per Figure 2 to enhance speech. Thus, this approach works only when there is a strong correlation between model uncertainty and output error.

Figure 2 shows the block diagram of the proposed approach.
Five DNN-MC dropout models are trained on speech corrupted with factory2, leopard, m109, babble and volvo noises at SNRs of 0, 5 and 10 dB. The architecture is the same as the one defined in Sec. 2.1. For a given noisy input frame $X$, each of these models generates an output by dropping out random units. $T$ repetitions are performed by each model by dropping different units every time, obtaining results $\{\hat{S}^i_t(X)\}$, $1 \le t \le T$, $1 \le i \le M$, where $i$ is the model index and $M = 5$. The predictive variance (uncertainty) is computed for each of the $M$ different results. The model with the minimum variance is selected as the best one for that frame. The enhanced output $\hat{S}$ is estimated as the empirical mean of the $T$ outputs of the selected model, $\{\hat{S}^{i^*}_t(X)\}$, $1 \le t \le T$. The enhanced speech signal is obtained as the inverse Fourier transform of $\hat{S}$ with the phase of the noisy speech signal and the overlap-add method.

4. Experiments and Results

4.1. Experimental setup

The TIMIT [29] speech corpus is used for the study. The five noises used from the NOISEX-92 [30] database are downsampled to 16 kHz to match the sampling rate of TIMIT, in order to synthesize noisy training and test speech signals. The magnitude STFT is computed on frames of size 32 ms with a 10 ms frame shift, after applying a Hamming window. A 512-point FFT is taken and we use only the first 257 points as input to the DNN, because of the symmetry in the spectrum. A DNN based regression model is trained using the magnitude STFT features of clean and noisy speech. For multi-model MC dropout experiments, each DNN model is trained on one of the following noises: factory2, m109, leopard, babble and volvo, each at SNRs of 0, 5 and 10 dB. For the single model case, the DNN is trained on factory2, m109, leopard, babble and volvo noises at SNRs of 0, 5 and 10 dB for both baseline and MC dropout. During testing, the noisy features are fed to this trained DNN to predict the enhanced features, $\hat{S}$.
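
The feature extraction described above (32 ms frames, 10 ms shift, Hamming window, 512-point FFT, 257 retained bins) and the training loss of eq. (4) can be sketched as follows. The function names `magnitude_stft` and `msle` are hypothetical, the framing drops trailing samples rather than padding (an assumption, since the paper does not say), and white noise stands in for a real utterance.

```python
import numpy as np

def magnitude_stft(signal, fs=16000, frame_ms=32, shift_ms=10, n_fft=512):
    """Magnitude and phase spectra of Hamming-windowed frames (no padding)."""
    frame_len = fs * frame_ms // 1000             # 512 samples at 16 kHz
    hop = fs * shift_ms // 1000                   # 160 samples at 16 kHz
    window = np.hamming(frame_len)
    n_frames = 1 + (len(signal) - frame_len) // hop   # trailing samples are dropped
    frames = np.stack([signal[i * hop:i * hop + frame_len] * window
                       for i in range(n_frames)])
    spec = np.fft.rfft(frames, n=n_fft, axis=1)   # keeps bins 0..n_fft/2, i.e. 257 values
    return np.abs(spec), np.angle(spec)

def msle(clean_mag, est_mag):
    """Mean square logarithmic error of eq. (4), averaged over all bins and frames."""
    return np.mean((np.log1p(clean_mag) - np.log1p(est_mag)) ** 2)

# Toy usage on one second of synthetic noise standing in for a TIMIT utterance.
rng = np.random.default_rng(0)
noisy = rng.normal(size=16000)
mag, phase = magnitude_stft(noisy)
print(mag.shape)        # (frames, 257)
print(msle(mag, mag))   # identical spectra give zero error
```

The phase array is returned alongside the magnitude because the noisy phase is reused when the enhanced magnitude is converted back to a waveform.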
The enhanced speech signal is obtained as the inverse Fourier transform of $\hat{S}$, using the phase of the noisy speech signal and the overlap-add method. The DNN architecture used has been defined in Sec. 2.1. For our experiments, the number of repetitions $T$ is chosen as 50. The Adam optimizer [31] is chosen, whose default regularization weight decay $\lambda$ is zero and thus $\tau^{-1} = 0$ in eqn. 6.

4.2. Results and discussion

Table 1 lists the improvements obtained in terms of sum squared error (SSE) and segmental SNR (SSNR) [32] for the single DNN-MC dropout model over the baseline for unseen noises. We use white, pink and factory1 noises as unseen noises and factory2 as a seen noise. The reported results are the average over 100 files randomly selected from TIMIT [29]. The model achieves superior performance in most of the cases. The improvement is most pronounced for unseen noises such as white noise, especially at the low SNRs of -10 and -5 dB. Interestingly, the margin of improvement narrows at higher SNRs, though the model continues to perform better than the baseline in terms of SSE. Though the proposed method does not result in significant improvement on seen noises, the performance is comparable to the baseline model. Hence, the observations support the proposed method of using MC dropout to improve generalization performance on unseen noises.

Table 2 lists the performance improvements obtained by the multi-model MC dropout DNNs using predictive variance, over the baseline single model, in terms of SSE and SSNR for speech corrupted with the unseen noises white, pink and factory1, averaged over 100 files randomly selected from TIMIT [29]. Again, the proposed method performs well at low SNRs, especially at -10 dB. However, as the SNR improves, the improvement over the baseline drops. This performance drop can be explained by the reduced correlation between the squared error and the model uncertainty that is observed in Fig. 3.

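
The frame-wise selection rule of Sec. 3.2 and the variance versus squared-error correlation discussed here can be sketched together. This is an illustrative sketch only: the function names are hypothetical, the per-frame uncertainty is taken as the summed per-bin sample variance (the trace of eq. 6 with $\tau^{-1} = 0$), and the MC samples are synthetic draws around a made-up clean spectrum rather than outputs of trained models.

```python
import numpy as np

def predictive_variance(samples):
    """samples: (T, F, D) MC outputs of one model -> (F,) trace of the per-frame covariance."""
    return samples.var(axis=0).sum(axis=-1)

def select_and_enhance(all_samples):
    """all_samples: (M, T, F, D). Pick, per frame, the model with the smallest variance."""
    variances = np.stack([predictive_variance(s) for s in all_samples])   # (M, F)
    best = variances.argmin(axis=0)                                       # (F,)
    means = all_samples.mean(axis=1)                                      # (M, F, D)
    enhanced = means[best, np.arange(means.shape[1])]                     # (F, D)
    return enhanced, best, variances

def variance_error_correlation(all_samples, clean):
    """Pearson correlation between predictive variance and squared error, per model."""
    variances = np.stack([predictive_variance(s) for s in all_samples])   # (M, F)
    sq_err = ((all_samples.mean(axis=1) - clean) ** 2).sum(axis=-1)       # (M, F)
    return np.array([np.corrcoef(v, e)[0, 1] for v, e in zip(variances, sq_err)])

# Synthetic stand-in for M models, T passes, F frames, D bins (all values made up).
rng = np.random.default_rng(0)
M, T, F, D = 3, 50, 20, 8
clean = np.abs(rng.normal(size=(F, D)))
model_scale = np.array([1.0, 0.1, 0.5]).reshape(M, 1, 1, 1)   # model 1 is the most certain
frame_scale = rng.uniform(0.5, 1.5, size=(1, 1, F, 1))
all_samples = clean + model_scale * frame_scale * rng.normal(size=(M, T, F, D))
enhanced, best, variances = select_and_enhance(all_samples)
corr = variance_error_correlation(all_samples, clean)
print(best)   # index of the selected model for each frame
print(corr)   # one correlation coefficient per model
```

In this toy setup the selector always picks the model with the smallest spread by construction; on real data the usefulness of the rule depends on how well the variance tracks the true squared error, which is what the correlation is meant to measure.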
Figure 3 plots the correlation between the predictive variance and the squared error (SE) of the estimated output frames for all five MC models, for speech with white noise. The uncertainty is computed by taking the trace of the covariance matrix of each frame [25]. The plots show the weakening of the correlation between the SE and model uncertainty as the SNR improves. The correlation is strong for -10 and -5 dB and is weak for the SNR values (0, 5 and 10 dB) on which the models are trained, even though they are trained with different noises. This matches our results, since we find that there is not much improvement over the baseline model as the SNR increases. However, the values are still comparable to those of the single model scheme.

From the results in Table 2 and Fig. 3, the uncertainty based model selection shows promise, especially in those cases where the correlation between the model uncertainty and the squared error is strong. The interesting pattern in the correlation needs further analysis that explores its varying strength. We would also like to better understand the relationship between correlation and squared error, so that the model can be selected in a risk minimization paradigm. Each model can be trained on a different group of noises and this algorithm still has the potential to be useful.

5. Conclusion and future work

In this work, we propose two novel techniques that use dropouts as a Bayesian estimator to improve the generalizability of DNN based speech enhancement algorithms. The first method uses the empirical mean of multiple stochastic passes through the DNN-MC dropout model to obtain the enhanced output. Our experiments show that this technique results in superior enhancement performance, especially on unseen noise and SNR conditions.
The second method looks at the potential application of the model uncertainty as an estimate of squared error (SE), for frame-wise model selection in speech enhancement, using multiple DNN models. While the experiments on validating this technique give only marginal improvement in some cases, the pattern of correlation between SE and model uncertainty calls for further study. A particularly interesting line of study would include using complex functions that use the model uncertainty to arrive at the optimal model for each frame.

Table 1: Performance evaluation of single DNN model with MC dropout.

| SNR (dB) | Metric | White: Noisy | White: Baseline | White: MC | Pink: Noisy | Pink: Baseline | Pink: MC | Factory1: Noisy | Factory1: Baseline | Factory1: MC | Factory2: Noisy | Factory2: Baseline | Factory2: MC |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| -10 | SSE (×10^4) | 3.64 | 3.36 | 3.14 | 3.96 | 0.874 | 0.848 | 3.69 | 0.720 | 0.70 | 4.13 | 0.0467 | 0.0461 |
| -10 | SSNR | -8.9 | -8.5 | -8.4 | -8.8 | -6.7 | -6.6 | -8.7 | -6.0 | -5.9 | -8.5 | 1.0 | 1.0 |
| -5 | SSE (×10^4) | 1.12 | 0.960 | 0.913 | 1.22 | 0.270 | 0.251 | 1.12 | 0.213 | 0.200 | 1.29 | 0.0198 | 0.0197 |
| -5 | SSNR | -7.2 | -6.6 | -6.5 | -7.1 | -4.3 | -4.2 | -6.9 | -3.51 | -3.50 | -6.7 | 3.05 | 3.08 |
| 0 | SSE (×10^3) | 3.41 | 2.81 | 2.60 | 3.71 | 0.858 | 0.843 | 3.41 | 0.682 | 0.671 | 4.01 | 0.104 | 0.104 |
| 0 | SSNR | -4.6 | -3.9 | -3.8 | -4.5 | -1.5 | -1.4 | -4.4 | -0.73 | -0.73 | -4.1 | 5.1 | 5.1 |
| 5 | SSE (×10^3) | 1.03 | 0.844 | 0.827 | 1.12 | 0.291 | 0.288 | 1.02 | 0.244 | 0.242 | 1.24 | 0.069 | 0.069 |
| 5 | SSNR | -1.6 | -0.7 | -0.7 | -1.4 | 1.7 | 1.7 | -1.3 | 2.2 | 2.2 | -0.9 | 7.1 | 7.1 |
| 10 | SSE (×10^2) | 3.08 | 2.70 | 2.67 | 3.41 | 1.18 | 1.16 | 3.09 | 1.07 | 1.06 | 3.82 | 0.56 | 0.55 |
| 10 | SSNR | 2.0 | 2.7 | 2.7 | 2.2 | 4.7 | 4.7 | 2.3 | 5.0 | 5.0 | 2.6 | 8.9 | 8.9 |

Table 2: Performance of multiple DNN-MC dropout models with predictive variance based selection on unseen noises.

| SNR (dB) | Metric | White: Noisy | White: Baseline | White: MC | Pink: Noisy | Pink: Baseline | Pink: MC | Factory1: Noisy | Factory1: Baseline | Factory1: MC |
|---|---|---|---|---|---|---|---|---|---|---|
| -10 | SSE (×10^4) | 3.64 | 3.36 | 3.2 | 3.96 | 0.874 | 0.708 | 3.69 | 0.720 | 0.677 |
| -10 | SSNR | -8.9 | -8.5 | -8.4 | -8.8 | -6.7 | -5.4 | -8.7 | -6.0 | -5.3 |
| -5 | SSE (×10^4) | 1.12 | 0.960 | 0.936 | 1.22 | 0.270 | 0.261 | 1.12 | 0.213 | 0.20 |
| -5 | SSNR | -7.2 | -6.6 | -6.5 | -7.1 | -4.3 | -3.7 | -6.9 | -3.51 | -3.3 |
| 0 | SSE (×10^3) | 3.41 | 2.81 | 2.70 | 3.71 | 0.858 | 0.943 | 3.41 | 0.682 | 0.771 |
| 0 | SSNR | -4.6 | -3.9 | -3.8 | -4.5 | -1.5 | -1.3 | -4.4 | -0.73 | -0.83 |
| 5 | SSE (×10^3) | 1.03 | 0.844 | 0.857 | 1.12 | 0.291 | 0.391 | 1.02 | 0.244 | 0.285 |
| 5 | SSNR | -1.6 | -0.7 | -0.7 | -1.4 | 1.7 | 1.6 | -1.3 | 2.2 | 2.0 |
| 10 | SSE (×10^2) | 3.08 | 2.70 | 2.73 | 3.41 | 1.18 | 1.40 | 3.09 | 1.07 | 1.24 |
| 10 | SSNR | 2.0 | 2.7 | 2.7 | 2.2 | 4.7 | 4.5 | 2.3 | 5.0 | 4.8 |

[Figure 3 plots: a grid of scatter plots with one column per model (Factory2, Leopard, M109, Babble, Volvo) and one row per SNR (-10, -5, 0, 5 and 10 dB); axes: squared error and variance.]

Figure 3: Correlation plot between the predictive variance and the squared error of the estimated output frames for all the five MC models for the case of speech corrupted with white noise as input.

6. References

[1] S. F. Boll, "Suppression of acoustic noise in speech using spectral subtraction," Acoustics, Speech Signal Proc, IEEE Trans., vol. 27, no. 2, pp. 113–120, 1979.

[2] Y. Lu and P. C. Loizou, "A geometric approach to spectral subtraction," Speech Communication, vol. 50, no. 6, pp. 453–466, 2008.

[3] V. Stahl, A. Fischer, and R. Bippus, "Quantile based noise estimation for spectral subtraction and Wiener filtering," in Acoustics, Speech, and Signal Processing. ICASSP. Proceedings. Int. Conf., vol. 3. IEEE, 2000, pp. 1875–1878.

[4] Y. Ephraim and D. Malah, "Speech enhancement using a minimum-mean square error short-time spectral amplitude estimator," IEEE Transactions on Acoustics, Speech, and Signal Processing, vol. 32, no. 6, pp. 1109–1121, 1984.

[5] R. Martin, "Speech enhancement based on minimum mean-square error estimation and supergaussian priors," IEEE Transactions on Speech and Audio Processing, vol. 13, no. 5, pp. 845–856, 2005.

[6] J. S. Erkelens, R. C. Hendriks, R. Heusdens, and J. Jensen, "Minimum mean-square error estimation of discrete Fourier coefficients with generalized gamma priors," IEEE Transactions on Audio, Speech, and Language Processing, vol. 15, no. 6, pp. 1741–1752, 2007.

[7] B. Yegnanarayana, C. Avendano, H. Hermansky, and P. S. Murthy, "Speech enhancement using linear prediction residual," Speech Communication, vol. 28, no. 1, pp. 25–42, 1999.

[8] W. Jin and M. S. Scordilis, "Speech enhancement by residual domain constrained optimization," Speech Communication, vol. 48, no. 10, pp. 1349–1364, 2006.

[9] P. Krishnamoorthy and S. M. Prasanna, "Enhancement of noisy speech by temporal and spectral processing," Speech Communication, vol. 53, no. 2, pp. 154–174, 2011.

[10] S. Srinivasan, J. Samuelsson, and W. B. Kleijn, "Codebook driven short-term predictor parameter estimation for speech enhancement," IEEE Transactions on Audio, Speech, and Language Processing, vol. 14, no. 1, pp. 163–176, 2006.

[11] Y. Ephraim, "A Bayesian estimation approach for speech enhancement using hidden Markov models," IEEE Transactions on Signal Processing, vol. 40, no. 4, pp. 725–735, 1992.

[12] H. Sameti, H. Sheikhzadeh, L. Deng, and R. L. Brennan, "HMM-based strategies for enhancement of speech signals embedded in nonstationary noise," IEEE Transactions on Speech and Audio Processing, vol. 6, no. 5, pp. 445–455, 1998.

[13] S. Tamura, "An analysis of a noise reduction neural network," in Acoustics, Speech, and Signal Processing, ICASSP-89, International Conference on. IEEE, 1989, pp. 2001–2004.

[14] F. Xie and D. Van Compernolle, "A family of MLP based nonlinear spectral estimators for noise reduction," in Acoustics, Speech, and Signal Processing, ICASSP-94, International Conference on, vol. 2. IEEE, 1994, pp. II–53.

[15] E. A. Wan and A. T. Nelson, "Networks for speech enhancement," Handbook of Neural Networks for Speech Processing. Artech House, Boston, USA, vol. 139, p. 1, 1999.

[16] G. E. Hinton and R. R. Salakhutdinov, "Reducing the dimensionality of data with neural networks," Science, vol. 313, no. 5786, pp. 504–507, 2006.

[17] G. E. Hinton, S. Osindero, and Y.-W. Teh, "A fast learning algorithm for deep belief nets," Neural Computation, vol. 18, no. 7, pp. 1527–1554, 2006.

[18] A. L. Maas, Q. V. Le, T. M. O'Neil, O. Vinyals, P. Nguyen, and A. Y. Ng, "Recurrent neural networks for noise reduction in robust ASR," in 13th Annual Conference of the International Speech Communication Association, 2012.

[19] Y. Xu, J. Du, L.-R. Dai, and C.-H. Lee, "An experimental study on speech enhancement based on deep neural networks," IEEE Signal Processing Letters, vol. 21, no. 1, pp. 65–68, 2014.

[20] Y. Wang and D. Wang, "Towards scaling up classification-based speech separation," IEEE Transactions on Audio, Speech, and Language Processing, vol. 21, no. 7, pp. 1381–1390, 2013.

[21] Y. Xu, J. Du, L.-R. Dai, and C.-H. Lee, "A regression approach to speech enhancement based on deep neural networks," IEEE/ACM Transactions on Audio, Speech and Language Processing (TASLP), vol. 23, no. 1, pp. 7–19, 2015.

[22] G. E. Dahl, T. N. Sainath, and G. E. Hinton, "Improving deep neural networks for LVCSR using rectified linear units and dropout," in Acoustics, Speech and Signal Processing (ICASSP), International Conference on. IEEE, 2013, pp. 8609–8613.

[23] N. Srivastava, G. Hinton, A. Krizhevsky, I. Sutskever, and R. Salakhutdinov, "Dropout: A simple way to prevent neural networks from overfitting," The Journal of Machine Learning Research, vol. 15, no. 1, pp. 1929–1958, 2014.

[24] Y. Gal and Z. Ghahramani, "Dropout as a Bayesian approximation: Representing model uncertainty in deep learning," in International Conference on Machine Learning, 2016, pp. 1050–1059.

[25] A. Kendall and R. Cipolla, "Modelling uncertainty in deep learning for camera relocalization," in Robotics and Automation (ICRA), International Conference on. IEEE, 2016, pp. 4762–4769.

[26] P. Papadopoulos, A. Tsiartas, and S. Narayanan, "Long-term SNR estimation of speech signals in known and unknown channel conditions," IEEE/ACM Transactions on Audio, Speech, and Language Processing, vol. 24, no. 12, pp. 2495–2506, 2016.

[27] Z.-Q. Wang, Y. Zhao, and D. Wang, "Phoneme-specific speech separation," in IEEE International Conference on Acoustics, Speech and Signal Processing, 2016.

[28] P. M. Nazreen, A. G. Ramakrishnan, and P. K. Ghosh, "A class-specific speech enhancement for phoneme recognition: A dictionary learning approach," Proc. Interspeech, pp. 3728–3732, 2016.

[29] J. S. Garofolo, L. F. Lamel, W. M. Fisher, J. G. Fiscus, and D. S. Pallett, "DARPA TIMIT acoustic-phonetic continuous speech corpus CD-ROM. NIST speech disc 1-1.1," NASA STI/Recon Technical Report N, vol. 93, p. 27403, Feb. 1993.

[30] A. Varga and H. J. M. Steeneken, "Assessment for automatic speech recognition II: NOISEX-92: A database and an experiment to study the effect of additive noise on speech recognition systems," Speech Commun., vol. 12, no. 3, pp. 247–251, Jul. 1993.

[31] D. P. Kingma and J. Ba, "Adam: A method for stochastic optimization," arXiv preprint arXiv:1412.6980, 2014.

[32] Y. Hu and P. C. Loizou, "Evaluation of objective quality measures for speech enhancement," IEEE Transactions on Audio, Speech, and Language Processing, vol. 16, no. 1, pp. 229–238, 2008.
