A Comparison of Modeling Units in Sequence-to-Sequence Speech Recognition with the Transformer on Mandarin Chinese

The choice of modeling units is critical to automatic speech recognition (ASR) tasks. Conventional ASR systems typically choose context-dependent states (CD-states) or context-dependent phonemes (CD-phonemes) as their modeling units. However, it has …

Authors: Shiyu Zhou, Linhao Dong, Shuang Xu

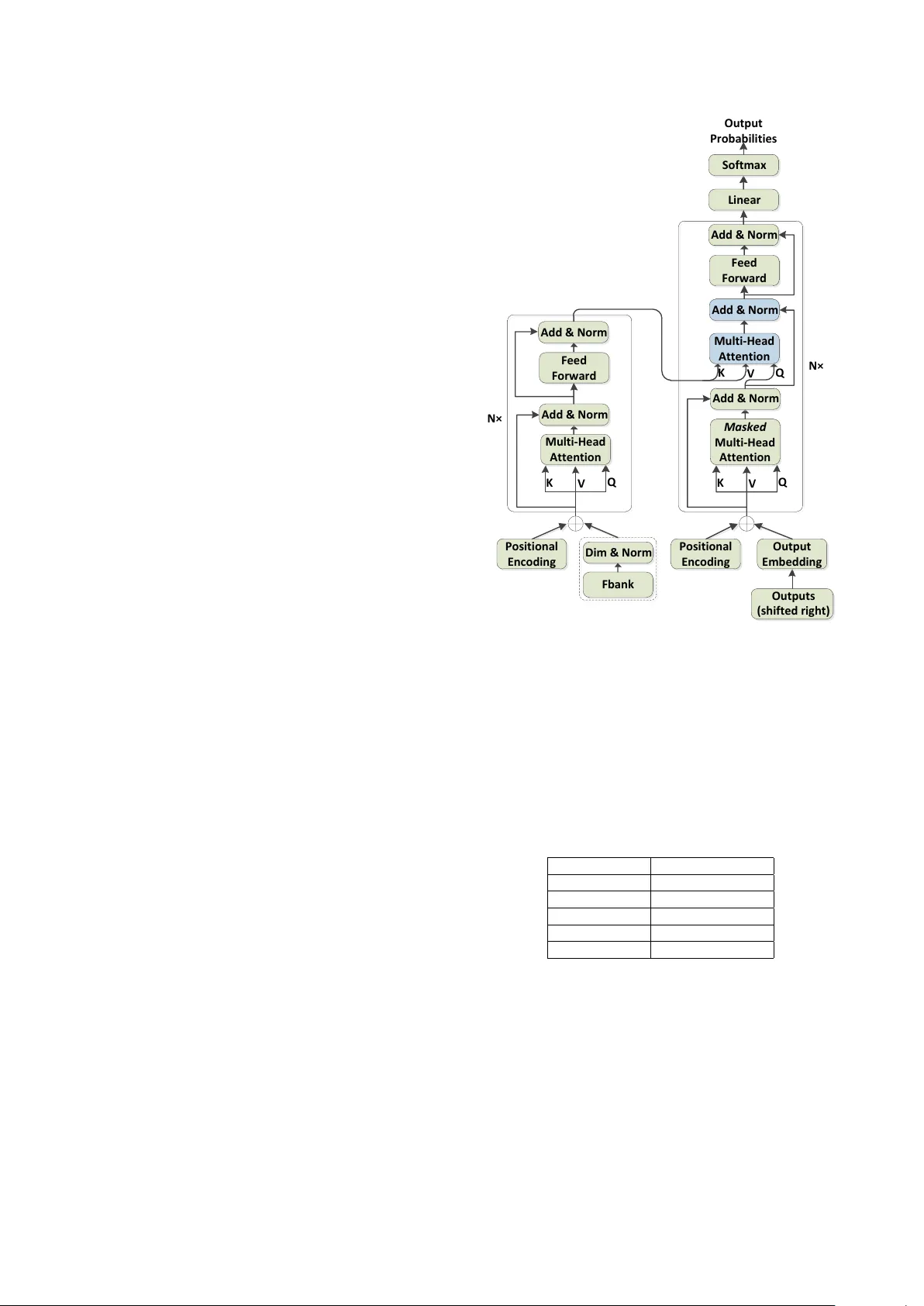

Shiyu Zhou¹ ², Linhao Dong¹ ², Shuang Xu¹, Bo Xu¹
¹ Institute of Automation, Chinese Academy of Sciences
² University of Chinese Academy of Sciences
{zhoushiyu2013, donglinhao2015, shuang.xu, xubo}@ia.ac.cn

Abstract

The choice of modeling units is critical to automatic speech recognition (ASR) tasks. Conventional ASR systems typically choose context-dependent states (CD-states) or context-dependent phonemes (CD-phonemes) as their modeling units. However, this choice has been challenged by sequence-to-sequence attention-based models, which integrate an acoustic, pronunciation and language model into a single neural network. On English ASR tasks, previous attempts have already shown that graphemes can outperform phonemes as the modeling unit of sequence-to-sequence attention-based models.

In this paper, we are concerned with modeling units on Mandarin Chinese ASR tasks using sequence-to-sequence attention-based models with the Transformer. Five modeling units are explored: context-independent phonemes (CI-phonemes), syllables, words, sub-words and characters. Experiments on HKUST datasets demonstrate that the lexicon-free modeling units can outperform lexicon-related modeling units in terms of character error rate (CER). Among the five modeling units, the character-based model performs best and establishes a new state-of-the-art CER of 26.64% on HKUST datasets without a hand-designed lexicon or an extra language model integration, which corresponds to a 4.8% relative improvement over the existing best CER of 28.0% by the joint CTC-attention based encoder-decoder network.

Index Terms: ASR, multi-head attention, modeling units, sequence-to-sequence, Transformer

1. Introduction

Conventional ASR systems consist of three independent components: an acoustic model (AM), a pronunciation model (PM) and a language model (LM), all of which are trained independently. CD-states and CD-phonemes are the dominant modeling units in such systems [1, 2, 3]. However, this design has recently been challenged by sequence-to-sequence attention-based models. These models commonly comprise an encoder, which consists of multiple recurrent neural network (RNN) layers that model the acoustics, and a decoder, which consists of one or more RNN layers that predict the output sub-word sequence. An attention layer acts as the interface between the encoder and the decoder: it selects frames in the encoder representation that the decoder should attend to in order to predict the next sub-word unit [4].

In [5], Sainath et al. experimentally verified that the grapheme-based sequence-to-sequence attention-based model can outperform the corresponding phoneme-based model on English ASR tasks. This result is notable because it suggests that a hand-designed lexicon might be removed from ASR systems. As is well known, generating a pronunciation lexicon is laborious and time-consuming. Without a hand-designed lexicon, the design of ASR systems would be greatly simplified. Furthermore, the latest work shows that an attention-based encoder-decoder architecture achieves a new state-of-the-art WER on a 12500-hour English voice search task using word piece models (WPM), which are sub-word units ranging from graphemes all the way up to entire words [6].

Given the outstanding performance of grapheme-based modeling units on English ASR tasks, we conjecture that a hand-designed lexicon may also be unnecessary on Mandarin Chinese ASR tasks with sequence-to-sequence attention-based models. In Mandarin Chinese, if a hand-designed lexicon is removed, the modeling units can be words, sub-words and characters.
Character-based sequence-to-sequence attention-based models have been investigated on Mandarin Chinese ASR tasks in [7, 8], but the performance of different modeling units has not been compared before. Building on our work [9], which shows that the syllable-based model with the Transformer can perform better than its CI-phoneme-based counterpart, we investigate five modeling units on Mandarin Chinese ASR tasks: CI-phonemes, syllables (pinyins with tones), words, sub-words and characters. The Transformer is chosen as the basic architecture of the sequence-to-sequence attention-based model in this paper [9, 10]. Experiments on HKUST datasets confirm our hypothesis that the lexicon-free modeling units, i.e. words, sub-words and characters, can outperform lexicon-related modeling units, i.e. CI-phonemes and syllables. Among the five modeling units, the character-based model with the Transformer achieves the best result and establishes a new state-of-the-art CER of 26.64% on HKUST datasets without a hand-designed lexicon or an extra language model integration, which is a 4.8% relative reduction in CER compared to the existing best CER of 28.0% by the joint CTC-attention based encoder-decoder network with a separate RNN-LM integration [11].

The rest of the paper is organized as follows. After an overview of the related work in Section 2, Section 3 describes the proposed method in detail. We then show experimental results in Section 4 and conclude this work in Section 5.

2. Related work

Sequence-to-sequence attention-based models have achieved promising results on English ASR tasks, and various modeling units have been studied recently, such as CI-phonemes, CD-phonemes, graphemes and WPM [4, 5, 6, 12]. In [5], Sainath et al. first explored a sequence-to-sequence attention-based model trained with phonemes for ASR tasks and compared the modeling units of graphemes and phonemes.
They experimentally verified that the grapheme-based sequence-to-sequence attention-based model can outperform the corresponding phoneme-based model on English ASR tasks. Furthermore, WPM modeling units, which are sub-word units ranging from graphemes all the way up to entire words, have been explored in [6]. That system achieved a new state-of-the-art WER on a 12500-hour English voice search task.

Although sequence-to-sequence attention-based models perform very well on English ASR tasks, related work on Mandarin Chinese ASR tasks is scarce. Chan et al. [7] first proposed a Character-Pinyin sequence-to-sequence attention-based model on Mandarin Chinese ASR tasks. The Pinyin information was used during training to improve the performance of the character model. Instead of using a joint Character-Pinyin model, [8] directly used Chinese characters as the network output by mapping the one-hot character representation to an embedding vector via a neural network layer. In addition, [13] compared the modeling units of characters and syllables with sequence-to-sequence attention-based models.

Besides characters, this paper also investigates words and sub-words as modeling units on Mandarin Chinese ASR tasks. Sub-word units encoded by byte pair encoding (BPE), which iteratively replaces the most frequent pair of characters with a single, unused symbol, have been explored on neural machine translation (NMT) tasks to address the out-of-vocabulary (OOV) problem in open-vocabulary translation [14]. We extend it to Mandarin Chinese ASR tasks. BPE is capable of encoding an open vocabulary with a compact symbol vocabulary of variable-length sub-word units, which requires no shortlist.

3. System overview

3.1. ASR Transformer model architecture

The Transformer model architecture is the same as other sequence-to-sequence attention-based models, except that it relies entirely on self-attention and position-wise, fully connected layers for both the encoder and decoder [15]. The encoder maps an input sequence of symbol representations $x = (x_1, \ldots, x_n)$ to a sequence of continuous representations $z = (z_1, \ldots, z_n)$. Given $z$, the decoder then generates an output sequence $y = (y_1, \ldots, y_m)$ of symbols one element at a time.

The ASR Transformer architecture used in this work is the same as in our work [9] and is shown in Figure 1. It stacks multi-head attention (MHA) [15] and position-wise, fully connected layers for both the encoder and decoder. The encoder is composed of a stack of $N$ identical layers. Each layer has two sub-layers. The first is an MHA, and the second is a position-wise fully connected feed-forward network. Residual connections are employed around each of the two sub-layers, followed by a layer normalization. The decoder is similar to the encoder, except that it inserts a third sub-layer to perform an MHA over the output of the encoder stack. To prevent leftward information flow and preserve the auto-regressive property in the decoder, the self-attention sub-layers in the decoder mask out all values corresponding to illegal connections. In addition, positional encodings [15] are added to the input at the bottoms of the encoder and decoder stacks, which inject some information about the relative or absolute position of the tokens in the sequence.

The difference between the NMT Transformer [15] and the ASR Transformer is the input of the encoder. We add a linear transformation with a layer normalization to convert the log-Mel filterbank feature to the model dimension $d_{model}$ for dimension matching, which is marked by a dotted line in Figure 1.
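As a minimal sketch of two ingredients of this architecture, the decoder self-attention mask and the positional encodings, the following pure-Python snippet builds both. It assumes the sinusoidal encoding variant of [15]; the text here does not state which variant is used, and the function names are illustrative rather than taken from the authors' implementation.

```python
import math


def sinusoidal_positional_encoding(length, d_model):
    """Return a length x d_model table of sinusoidal encodings (variant from [15]).

    Even feature indices use sine, odd indices use cosine, and each
    sine/cosine pair shares one wavelength.
    """
    table = []
    for pos in range(length):
        row = []
        for i in range(d_model):
            # Features 2k and 2k+1 share the exponent 2k / d_model.
            angle = pos / (10000 ** ((2 * (i // 2)) / d_model))
            row.append(math.sin(angle) if i % 2 == 0 else math.cos(angle))
        table.append(row)
    return table


def causal_mask(length):
    """mask[i][j] is True when output position i may attend to position j.

    Only j <= i is allowed, which blocks the leftward information flow
    that would break the auto-regressive property of the decoder.
    """
    return [[j <= i for j in range(length)] for i in range(length)]


if __name__ == "__main__":
    pe = sinusoidal_positional_encoding(length=4, d_model=8)
    print(len(pe), len(pe[0]))
    for row in causal_mask(3):
        print(row)
```

In a real model the mask would be applied to the attention scores before the softmax, and the encoding table would be added to the projected filterbank features (encoder) or the output embeddings (decoder).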
Figure 1: The architecture of the ASR Transformer.

3.2. Modeling units

Five modeling units are compared on Mandarin Chinese ASR tasks: CI-phonemes, syllables, words, sub-words and characters. Table 1 summarizes the number of output units for each modeling unit investigated in this paper. Table 2 shows an example of the various modeling units.

Table 1: Different modeling units explored in this paper.

| Modeling units | Number of outputs |
|---|---|
| CI-phonemes | 122 |
| Syllables | 1388 |
| Characters | 3900 |
| Sub-words | 11039 |
| Words | 28444 |

3.2.1. CI-phoneme and syllable units

CI-phoneme and syllable units are compared in our work [9], in which 118 CI-phonemes (phonemes with tones, excluding silence) are employed in the CI-phoneme-based experiments and 1384 syllables (pinyins with tones) in the syllable-based experiments. Extra tokens, i.e. an unknown token (<UNK>), a padding token (<PAD>), and sentence start and end tokens (<S>/</S>), are appended to the outputs, making the total number of outputs 122 and 1388 in the CI-phoneme-based model and the syllable-based model respectively. Standard tied-state cross-word triphone GMM-HMMs are first trained with maximum likelihood estimation to generate CI-phoneme alignments on the training set. Syllable alignments are then generated from these CI-phoneme alignments according to the lexicon, which can handle multiple pronunciations of the same word in Mandarin Chinese.
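The output-size bookkeeping for these units can be sketched as follows: the unit inventory collected from the training labels is extended with the four extra tokens. The helper name, the sorting, and the position of the extra tokens in the index are assumptions made for illustration; only the token set and the resulting counts come from the text.

```python
# The four extra tokens named in the text; their order here is an assumption.
EXTRA_TOKENS = ["<UNK>", "<PAD>", "<S>", "</S>"]


def build_output_vocab(units):
    """Map each distinct modeling unit, plus the extra tokens, to an output index."""
    ordered = sorted(set(units)) + EXTRA_TOKENS
    return {token: index for index, token in enumerate(ordered)}


if __name__ == "__main__":
    # Toy syllable labels (pinyin with tones) standing in for training labels.
    vocab = build_output_vocab(["YI1", "ZHONG3", "XIN4", "NIAN4", "YI1"])
    print(len(vocab))  # 4 distinct syllables + 4 extra tokens

    # The same arithmetic reproduces the output sizes reported in the paper.
    print(118 + len(EXTRA_TOKENS), 1384 + len(EXTRA_TOKENS))
```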
The outputs during the decoding stage are CI-phoneme sequences or syllable sequences. In order to convert CI-phoneme sequences or syllable sequences into word sequences, a greedy cascading decoder with the Transformer [9] is used. First, the best CI-phoneme or syllable sequence $s$ is calculated by the ASR Transformer from the observation $X$ with a beam size $\beta$. Then, the best word sequence $W$ is chosen by the NMT Transformer from the best CI-phoneme or syllable sequence $s$ with a beam size $\gamma$. By cascading these two Transformer models, we assume that $Pr(W|X)$ can be approximated. The beam sizes $\beta = 13$ and $\gamma = 6$ are employed in this work.

3.2.2. Sub-word units

The sub-word units used in this paper are generated by BPE¹ [14], which iteratively merges the most frequent pair of characters or character sequences into a single, unused symbol. First, the symbol vocabulary is initialized with the character vocabulary, and each word is represented as a sequence of characters plus a special end-of-word symbol '@@', which allows the original tokenization to be restored. Then, all symbol pairs are counted iteratively and each occurrence of the most frequent pair ('A', 'B') is replaced with a new symbol 'AB'. Each merge operation produces a new symbol which represents a character n-gram. Frequent character n-grams (or whole words) are eventually merged into a single symbol. The final symbol vocabulary size is then equal to the size of the initial vocabulary plus the number of merge operations, which is the hyperparameter of this algorithm [14].

BPE is capable of encoding an open vocabulary with a compact symbol vocabulary of variable-length sub-word units, which requires no shortlist. After BPE encoding, the sub-word units range from characters all the way up to entire words. Thus there are no OOV words with BPE, and high-frequency sub-words can be preserved.
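The merge procedure described above can be sketched in a few lines of Python. This is a toy re-implementation for illustration only, not the subword-nmt tool used in the paper: the end-of-word marker `END`, the function names, and the tie-breaking rule are assumptions.

```python
from collections import Counter

END = "</w>"  # illustrative end-of-word marker (an assumption of this sketch)


def merge_pair(symbols, pair):
    """Replace every adjacent occurrence of `pair` in `symbols` with one merged symbol."""
    out, i = [], 0
    while i < len(symbols):
        if i + 1 < len(symbols) and (symbols[i], symbols[i + 1]) == pair:
            out.append(symbols[i] + symbols[i + 1])
            i += 2
        else:
            out.append(symbols[i])
            i += 1
    return tuple(out)


def learn_bpe(words, num_merges):
    """Learn up to `num_merges` merge operations from a list of words."""
    # Each word starts as a sequence of characters plus the end-of-word marker.
    vocab = Counter(tuple(word) + (END,) for word in words)
    merges = []
    for _ in range(num_merges):
        # Count adjacent symbol pairs, weighted by word frequency.
        pairs = Counter()
        for symbols, freq in vocab.items():
            for a, b in zip(symbols, symbols[1:]):
                pairs[(a, b)] += freq
        if not pairs:
            break
        # Most frequent pair; ties are broken lexicographically for determinism.
        best = max(pairs, key=lambda p: (pairs[p], p))
        merges.append(best)
        new_vocab = Counter()
        for symbols, freq in vocab.items():
            new_vocab[merge_pair(symbols, best)] += freq
        vocab = new_vocab
    return merges


def symbol_vocabulary(words, merges):
    """Initial character symbols plus one new symbol per merge operation."""
    symbols = {ch for word in words for ch in word} | {END}
    symbols |= {a + b for a, b in merges}
    return symbols


if __name__ == "__main__":
    words = ["ab", "ab", "abc"]
    merges = learn_bpe(words, num_merges=2)
    print(merges)
    print(len(symbol_vocabulary(words, merges)))
```

In this toy case the symbol vocabulary grows by exactly one symbol per merge, which is the relationship between vocabulary size and the number of merge operations stated above.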
In our experiments, we set the number of merge operations to 5000, which yields 11035 sub-word units from the training transcripts. With the 4 extra tokens appended, the total number of outputs is 11039.

¹ https://github.com/rsennrich/subword-nmt

3.2.3. Word and character units

For word units, we collect all words from the training transcripts. With the 4 extra tokens appended, the total number of outputs is 28444.

For character units, all Mandarin Chinese characters together with the English words in the training transcripts are collected. With the 4 extra tokens appended, the total number of outputs is 3900².

² We manually delete two tokens, · and +, which are not Mandarin Chinese characters.

4. Experiment

4.1. Data

The HKUST corpus (LDC2005S15, LDC2005T32), a corpus of Mandarin Chinese conversational telephone speech, was collected and transcribed by the Hong Kong University of Science and Technology (HKUST) [16]. It contains 150 hours of speech, with 873 calls in the training set and 24 calls in the test set. All experiments are conducted using 80-dimensional log-Mel filterbank features, computed with a 25ms window and shifted every 10ms. The features are normalized via mean subtraction and variance normalization on a per-speaker basis. Similar to [17, 18], at the current frame $t$, these features are stacked with 3 frames to the left and downsampled to a 30ms frame rate. As in [11], we generate more training data by linearly scaling the audio lengths by factors of 0.9 and 1.1 (speed perturb.), which improves the performance in our experiments.

Table 2: An example of various modeling units in this paper.

| Modeling units | Example |
|---|---|
| CI-phonemes | Y IY1 JH UH3 NG3 X IY4 N4 N IY4 AE4 N4 |
| Syllables | YI1 ZHONG3 XIN4 NIAN4 |
| Characters | 一 种 信 念 |
| Sub-words | 一种 信@@ 念 |
| Words | 一种 信念 |

4.2. Training

We perform our experiments on the base model and the big model of the Transformer from [15] (i.e. D512-H8 and D1024-H16 respectively).
The basic architecture of these two models is the same; only the parameter settings differ. Table 3 lists the experimental parameters of the two models. The Adam algorithm [19] with gradient clipping and warmup is used for optimization. During training, label smoothing of value $ls = 0.1$ is employed [20]. After training, the last 20 checkpoints are averaged to make the performance more stable [15].

Table 3: Experimental parameters configuration.

| model | $N$ | $d_{model}$ | $h$ | $d_k$ | $d_v$ | warmup |
|---|---|---|---|---|---|---|
| D512-H8 | 6 | 512 | 8 | 64 | 64 | 4000 steps |
| D1024-H16 | 6 | 1024 | 16 | 64 | 64 | 12000 steps |

In the CI-phoneme and syllable based models, we cascade an ASR Transformer and an NMT Transformer to generate word sequences from the observation $X$. However, we do not employ an NMT Transformer in the word, sub-word and character based models, since the beam search results from the ASR Transformer are already at the Chinese character level. The total parameters of the different modeling units are listed in Table 4.

Table 4: Total parameters of different modeling units.

| model | D512-H8 (ASR) | D1024-H16 (ASR) | D512-H8 (NMT) |
|---|---|---|---|
| CI-phonemes | 57M | 227M | 71M |
| Syllables | 58M | 228M | 72M |
| Words | 71M | 256M | - |
| Sub-words | 63M | 238M | - |
| Characters | 59M | 231M | - |

4.3. Results

According to the description in Section 3.2, the modeling units of words, sub-words and characters are lexicon free, i.e. they do not need a hand-designed lexicon. In contrast, the modeling units of CI-phonemes and syllables need a hand-designed lexicon.

Our results are summarized in Table 5. The lexicon-free modeling units, i.e. words, sub-words and characters, can outperform the corresponding lexicon-related modeling units, i.e. CI-phonemes and syllables, on HKUST datasets. This confirms our hypothesis that we can remove the need for a hand-designed lexicon on Mandarin Chinese ASR tasks with sequence-to-sequence attention-based models.
Moreover, we note that the sub-word based model performs better than its word based counterpart. This indicates that the modeling unit of sub-words is superior to that of words, since sub-word units encoded by BPE have fewer outputs and no OOV problems. However, the sub-word based model performs worse than the character based model. A possible reason is that the sub-word inventory is larger than the character inventory and is therefore more difficult to train. We will conduct experiments on larger datasets and compare the performance of sub-word and character modeling units in future work. Finally, among the five modeling units, the character based model with the Transformer achieves the best result. This demonstrates that the character is a suitable modeling unit for Mandarin Chinese ASR tasks with sequence-to-sequence attention-based models, which can greatly simplify the design of ASR systems.

Table 5: Comparison of different modeling units with the Transformer on HKUST datasets in CER (%).

| Modeling units | Model | CER |
|---|---|---|
| CI-phonemes [9] | D512-H8 | 32.94 |
| | D1024-H16 | 30.65 |
| | D1024-H16 (speed perturb) | 30.72 |
| Syllables [9] | D512-H8 | 31.80 |
| | D1024-H16 | 29.87 |
| | D1024-H16 (speed perturb) | 28.77 |
| Words | D512-H8 | 31.98 |
| | D1024-H16 | 28.74 |
| | D1024-H16 (speed perturb) | 27.42 |
| Sub-words | D512-H8 | 30.22 |
| | D1024-H16 | 28.28 |
| | D1024-H16 (speed perturb) | 27.26 |
| Characters | D512-H8 | 29.00 |
| | D1024-H16 | 27.70 |
| | D1024-H16 (speed perturb) | 26.64 |

4.4. Comparison with previous works

In Table 6, we compare our experimental results to other model architectures from the literature on HKUST datasets. First, our best results with the different modeling units are comparable or superior to the best result of the deep multidimensional residual learning with 9 LSTM layers [21], which is a hybrid LSTM-HMM system with CD-states as the modeling unit. The best CER of 26.64%, obtained by the character based model with the Transformer on HKUST datasets, is a 13.4% relative reduction compared to the best CER of 30.79% by the deep multidimensional residual learning with 9 LSTM layers. This shows the superiority of the sequence-to-sequence attention-based model over the hybrid LSTM-HMM system.

Moreover, our best results with the modeling units of words, sub-words and characters are superior to the existing best CER of 28.0% by the joint CTC-attention based encoder-decoder network with a separate RNN-LM integration [11], which is the state-of-the-art on HKUST datasets to the best of our knowledge. The character based model with the Transformer establishes a new state-of-the-art CER of 26.64% on HKUST datasets without a hand-designed lexicon or an extra language model integration. This is a 7.8% relative reduction in CER compared to the CER of 28.9% of the joint CTC-attention based encoder-decoder network when no external language model is used, and a 4.8% relative reduction in CER compared to the existing best CER of 28.0% by the joint CTC-attention based encoder-decoder network with a separate RNN-LM [11].

Table 6: CER (%) on HKUST datasets compared to previous works.

| model | CER |
|---|---|
| LSTMP-9×800P512-F444 [21] | 30.79 |
| CTC-attention + joint dec. (speed perturb., one-pass) + VGG net [11] | 28.9 |
| CTC-attention + joint dec. (speed perturb., one-pass) + VGG net + RNN-LM (separate) [11] | 28.0 |
| CI-phonemes-D1024-H16 [9] | 30.65 |
| Syllables-D1024-H16 (speed perturb) [9] | 28.77 |
| Words-D1024-H16 (speed perturb) | 27.42 |
| Sub-words-D1024-H16 (speed perturb) | 27.26 |
| Characters-D1024-H16 (speed perturb) | 26.64 |

5. Conclusions

In this paper we compared five modeling units on Mandarin Chinese ASR tasks using a sequence-to-sequence attention-based model with the Transformer: CI-phonemes, syllables, words, sub-words and characters. We experimentally verified that the lexicon-free modeling units, i.e. words, sub-words and characters, can outperform lexicon-related modeling units, i.e. CI-phonemes and syllables, on HKUST datasets. This suggests that the need for a hand-designed lexicon on Mandarin Chinese ASR tasks may be removed by sequence-to-sequence attention-based models. Among the five modeling units, the character based model achieves the best result and establishes a new state-of-the-art CER of 26.64% on HKUST datasets without a hand-designed lexicon or an extra language model integration, which corresponds to a 4.8% relative improvement over the existing best CER of 28.0% by the joint CTC-attention based encoder-decoder network. Moreover, we find that the sub-word based model with the Transformer, encoded by BPE, achieves a promising result, although it is slightly worse than its character based counterpart.

6. Acknowledgements

The authors would like to thank Chunqi Wang and Feng Wang for insightful discussions.

7. References

[1] G. E. Dahl, D. Yu, L. Deng, and A. Acero, "Context-dependent pre-trained deep neural networks for large-vocabulary speech recognition," IEEE Transactions on Audio, Speech, and Language Processing, vol. 20, no. 1, pp. 30–42, 2012.
[2] H. Sak, A. Senior, and F. Beaufays, "Long short-term memory recurrent neural network architectures for large scale acoustic modeling," in Fifteenth Annual Conference of the International Speech Communication Association, 2014.
[3] A. Senior, H. Sak, and I. Shafran, "Context dependent phone models for LSTM RNN acoustic modelling," in Acoustics, Speech and Signal Processing (ICASSP), 2015 IEEE International Conference on. IEEE, 2015, pp. 4585–4589.
[4] R. Prabhavalkar, T. N. Sainath, B. Li, K. Rao, and N. Jaitly, "An analysis of attention in sequence-to-sequence models," in Proc. of Interspeech, 2017.
[5] T. N. Sainath, R. Prabhavalkar, S. Kumar, S. Lee, A. Kannan, D. Rybach, V. Schogol, P. Nguyen, B. Li, Y. Wu et al., "No need for a lexicon? Evaluating the value of the pronunciation lexica in end-to-end models," arXiv preprint, 2017.
[6] C.-C. Chiu, T. N. Sainath, Y. Wu, R. Prabhavalkar, P. Nguyen, Z. Chen, A. Kannan, R. J. Weiss, K. Rao, K. Gonina et al., "State-of-the-art speech recognition with sequence-to-sequence models," arXiv preprint arXiv:1712.01769, 2017.
[7] W. Chan and I. Lane, "On online attention-based speech recognition and joint Mandarin character-pinyin training," in INTERSPEECH, 2016, pp. 3404–3408.
[8] C. Shan, J. Zhang, Y. Wang, and L. Xie, "Attention-based end-to-end speech recognition on voice search."
[9] S. Zhou, L. Dong, S. Xu, and B. Xu, "Syllable-based sequence-to-sequence speech recognition with the Transformer in Mandarin Chinese," ArXiv e-prints, Apr. 2018.
[10] L. Dong, S. Xu, and B. Xu, "Speech-transformer: A no-recurrence sequence-to-sequence model for speech recognition," in Acoustics, Speech and Signal Processing (ICASSP), 2018 IEEE International Conference on. IEEE, 2018, pp. 5884–5888.
[11] T. Hori, S. Watanabe, Y. Zhang, and W. Chan, "Advances in joint CTC-attention based end-to-end speech recognition with a deep CNN encoder and RNN-LM," arXiv preprint, 2017.
[12] R. Prabhavalkar, K. Rao, T. N. Sainath, B. Li, L. Johnson, and N. Jaitly, "A comparison of sequence-to-sequence models for speech recognition," in Proc. Interspeech, 2017, pp. 939–943.
[13] W. Zou, D. Jiang, S. Zhao, and X. Li, "A comparable study of modeling units for end-to-end Mandarin speech recognition," arXiv preprint arXiv:1805.03832, 2018.
[14] R. Sennrich, B. Haddow, and A. Birch, "Neural machine translation of rare words with subword units," arXiv preprint arXiv:1508.07909, 2015.
[15] A. Vaswani, N. Shazeer, N. Parmar, J. Uszkoreit, L. Jones, A. N. Gomez, Ł. Kaiser, and I. Polosukhin, "Attention is all you need," in Advances in Neural Information Processing Systems, 2017, pp. 6000–6010.
[16] Y. Liu, P. Fung, Y. Yang, C. Cieri, S. Huang, and D. Graff, "HKUST/MTS: A very large scale Mandarin telephone speech corpus," in Chinese Spoken Language Processing. Springer, 2006, pp. 724–735.
[17] H. Sak, A. Senior, K. Rao, and F. Beaufays, "Fast and accurate recurrent neural network acoustic models for speech recognition," arXiv preprint arXiv:1507.06947, 2015.
[18] A. Kannan, Y. Wu, P. Nguyen, T. N. Sainath, Z. Chen, and R. Prabhavalkar, "An analysis of incorporating an external language model into a sequence-to-sequence model," arXiv preprint arXiv:1712.01996, 2017.
[19] D. P. Kingma and J. Ba, "Adam: A method for stochastic optimization," arXiv preprint, 2014.
[20] C. Szegedy, V. Vanhoucke, S. Ioffe, J. Shlens, and Z. Wojna, "Rethinking the inception architecture for computer vision," in Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2016, pp. 2818–2826.
[21] Y. Zhao, S. Xu, and B. Xu, "Multidimensional residual learning based on recurrent neural networks for acoustic modeling," Interspeech 2016, pp. 3419–3423, 2016.
