Design and Analysis of the NIPS 2016 Review Process

Neural Information Processing Systems (NIPS) is a top-tier annual conference in machine learning. The 2016 edition of the conference comprised more than 2,400 paper submissions, 3,000 reviewers, and 8,000 attendees. This represents a growth of nearly 40% in terms of submissions, 96% in terms of reviewers, and over 100% in terms of attendees as compared to the previous year. The massive scale as well as rapid growth of the conference calls for a thorough quality assessment of the peer-review process and novel means of improvement. In this paper, we analyze several aspects of the data collected during the review process, including an experiment investigating the efficacy of collecting ordinal rankings from reviewers. Our goal is to check the soundness of the review process, and provide insights that may be useful in the design of the review process of subsequent conferences.

💡 Research Summary

The paper provides a comprehensive, data-driven evaluation of the peer-review process used for the 2016 Neural Information Processing Systems (NIPS) conference. The conference had 2,425 submissions, 100 area chairs (ACs), and 3,242 active reviewers. Submissions grew by nearly 40% over the previous year, which prompted scrutiny of the scalability, fairness, and reliability of the review workflow.

Reviewer and AC recruitment

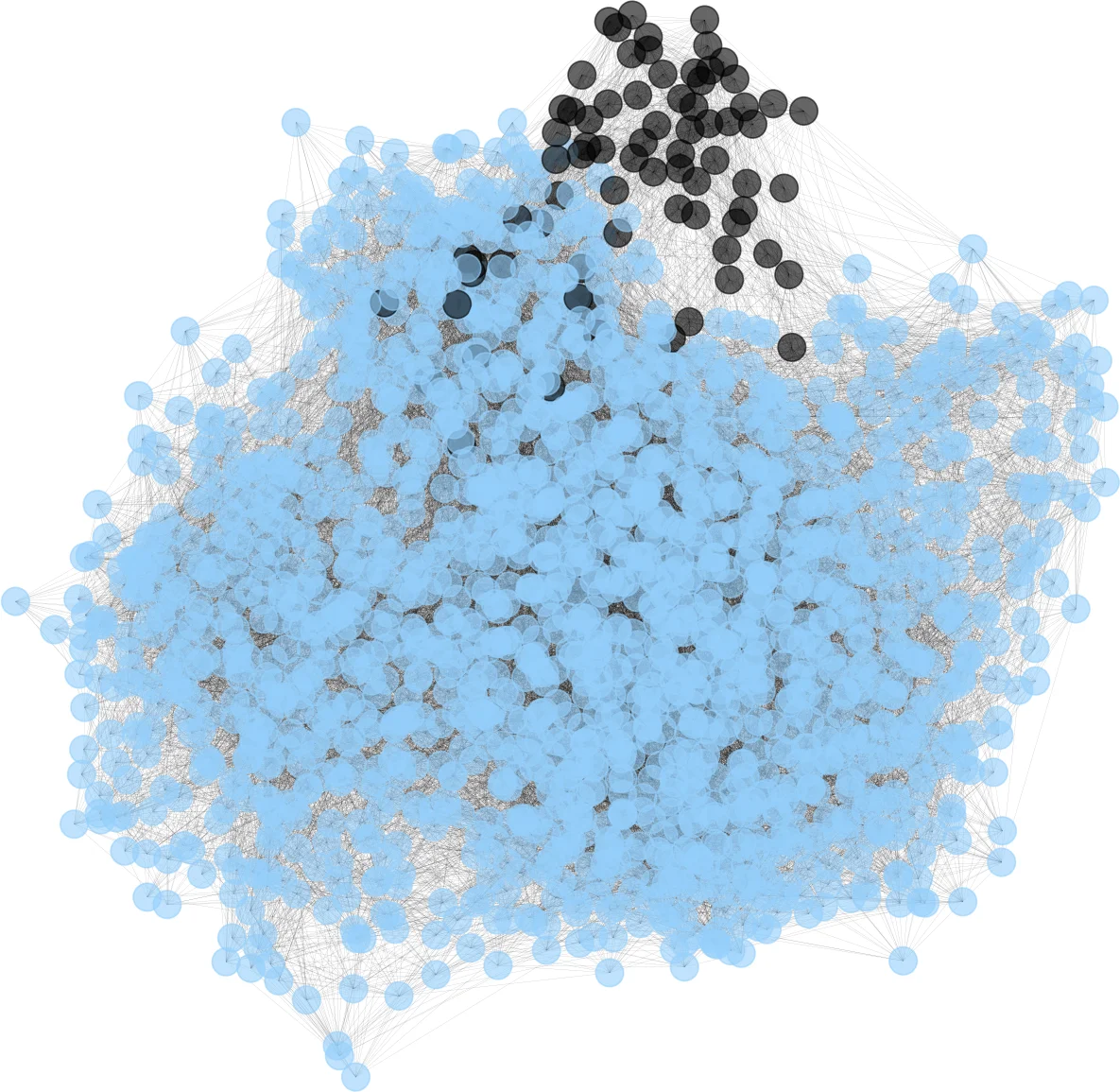

The authors distinguished two reviewer pools: an invited senior pool (Pool 1) and a volunteer author-reviewer pool (Pool 2). Pool 1 comprised 1,236 senior researchers, 566 post-docs, and 255 PhD students; Pool 2 added 143 senior researchers, 206 post-docs, and 827 students. This hybrid model aimed to meet the surge in paper volume while preserving expertise. However, analysis of the bidding data revealed a severe imbalance: a minority of reviewers accounted for most of the bids, while many of the rest placed only negative bids or did not bid at all. Specifically, 27% of reviewers generated 90% of all bids, and 50% generated 90% of the positive bids. Consequently, 278 papers received at most two positive bids and 816 papers received at most five, raising concerns about the adequacy of expertise coverage.
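Concentration figures such as "27% of reviewers generated 90% of all bids" can be computed from per-reviewer bid counts. The following is a minimal sketch, not the authors' code; the function names and the toy data are hypothetical and are not taken from the paper.

```python
def reviewer_share_for_fraction(bid_counts, fraction=0.9):
    """Smallest share of reviewers whose bids cover at least `fraction` of all bids.

    bid_counts: list with one entry per reviewer (number of bids placed).
    """
    total = sum(bid_counts)
    if total == 0:
        return 0.0
    running = 0
    # Walk reviewers from most to least active until the target is reached.
    for k, count in enumerate(sorted(bid_counts, reverse=True), start=1):
        running += count
        if running >= fraction * total:
            return k / len(bid_counts)
    return 1.0


def papers_with_at_most(positive_bids_per_paper, limit):
    """Number of papers that received at most `limit` positive bids."""
    return sum(1 for n in positive_bids_per_paper if n <= limit)


# Hypothetical toy data (illustration only).
toy_bids_per_reviewer = [40, 30, 25, 3, 1, 1, 0, 0, 0, 0]
toy_positive_bids_per_paper = [0, 1, 2, 2, 4, 5, 7, 9]

print(reviewer_share_for_fraction(toy_bids_per_reviewer))
print(papers_with_at_most(toy_positive_bids_per_paper, 2))
print(papers_with_at_most(toy_positive_bids_per_paper, 5))
```

Passing only the positive bid counts to the first function gives the corresponding figure for positive bids.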

Assignment algorithm

To mitigate the bidding shortfall, the conference employed the Toronto Paper Matching System (TPMS) to compute an affinity score from paper content and reviewer profiles, combined with self‑declared subject‑area similarity and a bid multiplier. An overall similarity score guided an automated preliminary assignment, after which ACs could manually decline papers for conflict‑of‑interest reasons. The authors suggest that graph‑theoretic matching techniques can guarantee each paper receives at least three highly competent reviewers, a recommendation that could be adopted by future large‑scale venues.
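The combination of scores and the matching guarantee described above can be sketched as follows. This is an illustration under stated assumptions, not the conference's implementation: the summary does not give the exact formula, so `combined_score` assumes a bid-scaled weighted average, and `assign_reviewers` uses a generic augmenting-path matching restricted to reviewer-paper pairs above a hypothetical competence `threshold`. All names and default values are illustrative.

```python
def combined_score(tpms_affinity, subject_similarity, bid_multiplier, weight=0.5):
    """Assumed form of the overall similarity; the real formula may differ."""
    return bid_multiplier * (weight * tpms_affinity + (1 - weight) * subject_similarity)


def assign_reviewers(scores, per_paper=3, max_load=6, threshold=0.5):
    """Give every paper `per_paper` reviewers scoring at least `threshold`.

    scores: dict paper -> dict reviewer -> overall similarity.
    Returns dict paper -> set of reviewers, or None if no such assignment
    exists under the `max_load` cap on papers per reviewer.
    """
    # Competent reviewers per paper, best score first.
    eligible = {
        paper: [r for r, s in sorted(by_rev.items(), key=lambda kv: -kv[1])
                if s >= threshold]
        for paper, by_rev in scores.items()
    }
    assigned = {paper: set() for paper in scores}  # paper -> reviewers
    load = {}                                      # reviewer -> papers

    def try_place(paper, visited):
        """Find one more reviewer for `paper`, moving other papers if needed."""
        for r in eligible[paper]:
            if r in visited or r in assigned[paper]:
                continue
            visited.add(r)
            papers_of_r = load.setdefault(r, set())
            if len(papers_of_r) < max_load:
                papers_of_r.add(paper)
                assigned[paper].add(r)
                return True
            # Reviewer is full: try to move one of their papers elsewhere.
            for other in list(papers_of_r):
                if try_place(other, visited):
                    papers_of_r.remove(other)
                    assigned[other].remove(r)
                    papers_of_r.add(paper)
                    assigned[paper].add(r)
                    return True
        return False

    for paper in scores:
        for _ in range(per_paper):
            if not try_place(paper, set()):
                return None
    return assigned


# Hypothetical toy instance (illustration only).
toy_scores = {
    "A": {"r1": 0.9, "r2": 0.8},
    "B": {"r1": 0.9, "r2": 0.7, "r3": 0.6},
    "C": {"r2": 0.9, "r3": 0.8},
}
print(assign_reviewers(toy_scores, per_paper=2, max_load=2))
```

The sketch only checks feasibility above the threshold. A production system would more likely maximize total similarity subject to the same constraints, for example with a min-cost flow or linear-programming solver.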

Scoring rubric

The 2016 conference replaced the previous single 1-10 score with four separate 1-5 ratings (technical quality, novelty, impact, clarity) plus a confidence level (expert, confident, less confident). The analysis uncovered substantial miscalibration: reviewers frequently clustered scores at the extremes, producing many tied scores (especially in the 1-2 and 4-5 ranges). This "score tie" phenomenon reduces the discriminative power of the cardinal system. Nevertheless, the study found no statistically significant bias between invited senior reviewers and volunteer reviewers; both groups displayed comparable mean scores and variances. Junior reviewers, however, reported lower confidence and exhibited higher variance, suggesting a need for targeted guidance or calibration for less-experienced reviewers.

Rebuttal phase

Paper authors were allowed to submit rebuttals after the initial reviews. The study measured the change in reviewer scores between the initial and post-rebuttal phases and observed a negligible average shift of 0.07 points. This indicates that, in practice, rebuttals had minimal impact on reviewer judgments at NIPS 2016, a finding that could inform the design of future rebuttal policies (e.g., limiting time or focusing on specific concerns).
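A score shift of this kind can be computed from paired pre- and post-rebuttal scores. The summary does not say whether the 0.07 figure is a signed or an absolute mean, so this minimal sketch reports both, along with the fraction of scores that changed. The function name and the toy data are hypothetical.

```python
from statistics import mean


def rebuttal_shift(initial, final):
    """Summarize score changes between the initial and post-rebuttal phases.

    initial, final: equal-length lists of scores; position i refers to the
    same (reviewer, paper) pair in both lists.
    """
    if not initial or len(initial) != len(final):
        raise ValueError("need two non-empty lists of equal length")
    deltas = [after - before for before, after in zip(initial, final)]
    return {
        "mean_change": mean(deltas),                    # signed average shift
        "mean_abs_change": mean(abs(d) for d in deltas),
        "fraction_changed": sum(d != 0 for d in deltas) / len(deltas),
    }


# Hypothetical toy data (illustration only).
print(rebuttal_shift([3, 4, 2, 5, 3], [3, 4, 3, 5, 3]))
```

A small signed mean can hide larger movements that cancel out, which is why the absolute mean and the fraction changed are worth reporting next to it.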

Bias and disagreement

Statistical tests showed no detectable area‑specific acceptance bias, and the overall disagreement among reviewers decreased compared with NIPS 2015. The reduction in variance may be attributed to the multi‑dimensional scoring scheme and improved reviewer‑paper matching.

Ordinal ranking experiment

After the official review cycle, the organizers asked each reviewer for a total ranking of the papers that reviewer had reviewed (2,189 reviewers participated). This ordinal data showed that many tied cardinal scores could be broken by the reviewer's ranking, providing a richer signal of paper quality. Moreover, the authors demonstrated that inconsistencies (cases where a reviewer's cardinal scores conflicted with their ordinal ranking) could be flagged automatically, offering a potential tool for quality control.
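Both uses of the ordinal data, breaking ties and flagging inconsistencies, reduce to pairwise comparisons within one reviewer's batch. The sketch below is illustrative only. It assumes one aggregate cardinal score per paper (how the four ratings would be combined is not specified here), and the function name and toy data are hypothetical.

```python
from itertools import combinations


def compare_cardinal_ordinal(scores, ranking):
    """Compare one reviewer's cardinal scores with their ordinal ranking.

    scores:  dict paper -> aggregate cardinal score (higher is better).
    ranking: list of the same papers, best first.
    Returns (inconsistent_pairs, ties_broken); each pair is
    (ranked_higher, ranked_lower).
    """
    inconsistent, ties_broken = [], []
    # combinations() keeps list order, so `higher` always precedes `lower`.
    for higher, lower in combinations(ranking, 2):
        if scores[higher] < scores[lower]:
            inconsistent.append((higher, lower))   # ranking contradicts scores
        elif scores[higher] == scores[lower]:
            ties_broken.append((higher, lower))    # ranking resolves a tie
    return inconsistent, ties_broken


# Hypothetical toy data (illustration only).
toy_scores = {"P1": 4.0, "P2": 4.0, "P3": 3.0, "P4": 3.5}
toy_ranking = ["P2", "P1", "P3", "P4"]
print(compare_cardinal_ordinal(toy_scores, toy_ranking))
```

Reviewers with a non-empty list of inconsistent pairs could be asked to revisit either their scores or their ranking.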

Actionable recommendations

The paper concludes with concrete suggestions: (1) enhance the bidding interface and incentivize positive bids; (2) integrate graph‑theoretic matching to guarantee a minimum number of expert reviewers per paper; (3) introduce calibration workshops and benchmark papers to align scoring scales; (4) provide additional training and confidence‑building resources for junior reviewers; (5) re‑evaluate the effectiveness of rebuttals through controlled experiments; (6) institutionalize the collection of ordinal rankings and develop automated inconsistency detection pipelines.

Overall, the study delivers a rare, post‑hoc, large‑scale empirical assessment of a top‑tier machine‑learning conference’s review process. Its findings and recommendations are directly applicable to any rapidly growing scientific venue seeking to preserve review quality, fairness, and transparency while handling thousands of submissions.
