Building state-of-the-art distant speech recognition using the CHiME-4 challenge with a setup of speech enhancement baseline

This paper describes a new baseline system for automatic speech recognition (ASR) in the CHiME-4 challenge to promote the development of noisy ASR in speech processing communities by providing 1) state-of-the-art system with a simplified single syste…

Authors: Szu-Jui Chen, Aswin Shanmugam Subramanian, Hainan Xu, Shinji Watanabe

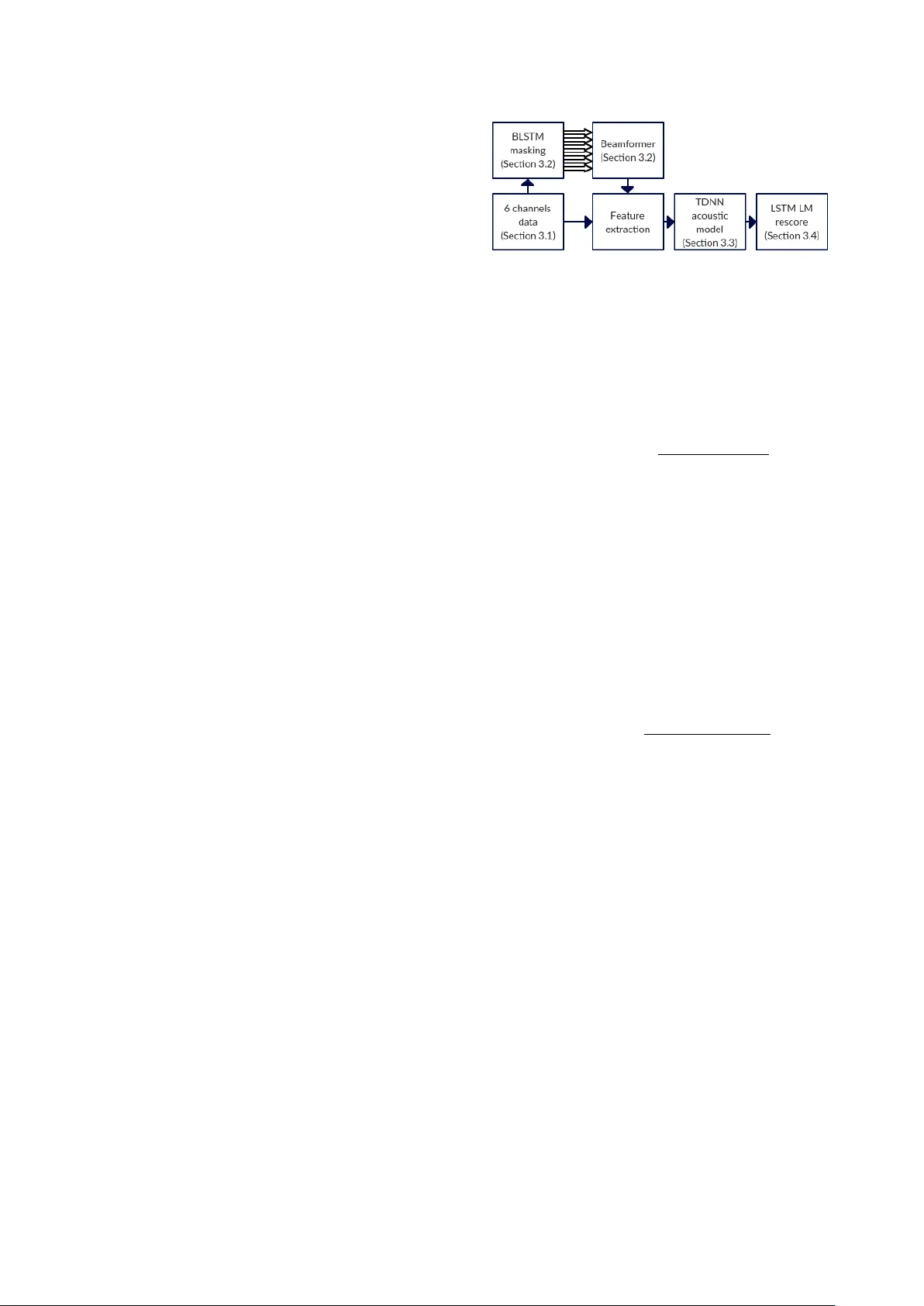

Szu-Jui Chen, Aswin Shanmugam Subramanian, Hainan Xu, Shinji Watanabe
Center for Language and Speech Processing, Johns Hopkins University, Baltimore, MD 21218, USA
{schen146,asubra13,hxu31,shinjiw}@jhu.edu

Abstract

This paper describes a new baseline system for automatic speech recognition (ASR) in the CHiME-4 challenge. It aims to promote the development of noisy ASR in the speech processing communities by providing 1) a state-of-the-art yet simplified single system comparable to the complicated top systems in the challenge, and 2) a publicly available and reproducible recipe in the main repository of the Kaldi speech recognition toolkit. The proposed system adopts generalized eigenvalue beamforming with bidirectional long short-term memory (LSTM) mask estimation. We also propose to use a time delay neural network (TDNN) based on the lattice-free version of the maximum mutual information (LF-MMI) criterion, trained with augmented data from all six microphones plus the enhanced data after beamforming. Finally, we use an LSTM language model for lattice and n-best re-scoring. The final system achieved 2.74% WER on the real test set in the 6-channel track, which corresponds to 2nd place in the challenge. In addition, the proposed baseline recipe includes four speech enhancement measures for the simulation test set: short-time objective intelligibility (STOI), extended STOI (eSTOI), perceptual evaluation of speech quality (PESQ), and speech distortion ratio (SDR). Thus, the recipe also provides an experimental platform for speech enhancement studies with these performance measures.

Index Terms: speech recognition, noise robustness, mask-based beamforming, lattice-free MMI, LSTM language modeling

1. Introduction

In recent years, multi-channel speech recognition has been applied in devices used in daily life, such as Amazon Echo and Google Home. Recognition accuracy improves greatly when microphone arrays are exploited, compared to single-channel microphone devices [1–3]. However, satisfactory performance is still not achieved in noisy everyday environments. Hence, the CHiME-4 challenge was designed to address this scenario by recognizing speech in challenging noisy environments [4]. Through the series of challenge activities, several speech enhancement and recognition techniques have been established as effective methods for this scenario, including mask-based beamforming, multichannel data augmentation, and system combination with various front-end techniques [5–9].

Although many systems submitted to the CHiME-4 challenge have produced valuable outcomes in this multi-channel automatic speech recognition (ASR) scenario [6–8], one drawback is that all top systems are highly complicated because they rely on multiple systems and fusion techniques, so it is not easy for other research groups to follow these outcomes. This paper aims to address this drawback by building a new baseline to promote the development of noisy ASR in the speech enhancement, separation, and recognition communities.

We propose a single ASR system to further push the border of this challenge. Most importantly, our system is reproducible, since it is implemented in the Kaldi ASR toolkit and other open-source toolkits. All the scripts in our experiments can be downloaded from the official GitHub website¹. The original CHiME-4 baseline, described in [4], uses a delay-and-sum beamformer (BeamformIt) [10], a deep neural network with the state-level minimum Bayes risk (DNN+sMBR) criterion [11], and a recurrent neural network-based language model (RNNLM) [12]. In contrast, our proposed system is shown in Figure 1.
We adopt a bidirectional long short-term memory (BLSTM) mask-based beamformer (Section 3.2), which has been shown to be more effective [13, 14] than BeamformIt. For the acoustic model, the DNN used in the baseline is limited in its ability to represent long-term dependencies between acoustic characteristics. Hence, a sub-sampled time delay neural network (TDNN) [15] with the lattice-free version of the maximum mutual information (LF-MMI) criterion is used as our acoustic model [16] (Section 3.3). This paper also shows a large improvement in word error rate (WER) when we combine it with data augmentation in a multichannel scenario, using all six microphones plus the enhanced data after beamforming. We then use an LSTM language model (LSTMLM), which uses a new training criterion and importance sampling and has been shown to be more efficient and to perform better [17], to re-score hypotheses.

We also incorporate the computation of four speech enhancement measures in our recipe: perceptual evaluation of speech quality (PESQ) [18], short-time objective intelligibility (STOI) [19], extended STOI (eSTOI) [20], and speech distortion ratio (SDR) [21]. We include these measurements in the recipe for two reasons. First, ASR performance shows only one aspect of a speech enhancement algorithm. Objective enhancement metrics indicate how well the enhancement performs in other respects (e.g., intelligibility, signal distortion). Second, testing an enhancement algorithm with ASR takes a significant amount of computation time, whereas obtaining these scores is quite fast. Hence, they can give an initial indication of how good the enhancement is.

2. Related work

In [6], a fusion system in the DNN posterior domain is proposed, which obtained the best result in the competition. The systems in [7–9] also use fusion, in the decoding hypothesis domain, with multiple systems that mainly differ in their front-end techniques.
Unlike these highly complicated systems, our proposed system is a single system without any such fusion, yet it achieves performance comparable to these top systems on the challenge task. One of the unique technical aspects of our proposed system is that it fully exploits the effectiveness of TDNN with LF-MMI by combining it with multichannel data augmentation techniques, which yields a significant improvement. Our new LSTMLM also helps boost the final performance.

¹ https://github.com/kaldi-asr/kaldi/pull/2142

3. Proposed system

Our system starts with a BLSTM mask-based beamformer, followed by feature extraction. Phoneme-to-audio alignments are then generated by a GMM acoustic model and fed into the TDNN acoustic model for training. Finally, the lattices from first-pass decoding with the TDNN are re-scored by a 5-gram LM and further re-scored by the LSTMLM.

3.1. Data augmentation

Training with multichannel data has been shown to be effective for ASR systems [1, 8, 22]. This augmentation increases the variety of the training data and helps generalization to the test set. In our work, we not only use data from all 6 channels but also add the enhanced data generated by the beamformer to the training set.

Let $O = (\mathbf{o}(t) \in \mathbb{R}^{D} \mid t = 1, \dots, T)$ be a sequence of $D$-dimensional feature vectors of length $T$, which is the single-channel speech recognition case. In our case, we deal with an $M$-channel input ($M = 6$), represented as $\mathcal{O} = (\mathbf{o}_m(t) \in \mathbb{R}^{D} \mid t = 1, \dots, T,\ m = 1, \dots, M)$. The original training method uses only one particular channel (e.g., the $m$-th input) as training data to obtain the acoustic model parameters $\Theta$:

$$\hat{\Theta} = \arg\max_{\Theta} \mathcal{L}(O_m), \tag{1}$$

where $\mathcal{L}$ is an objective function (log likelihood for the GMM case and negative cross entropy for the DNN case), with reference labels as supervision.
The data augmentation approach instead uses the training data of all channels:

$$\hat{\Theta} = \arg\max_{\Theta} \mathcal{L}\left(\mathcal{O} = \{O_m\}_{m=1}^{M}\right). \tag{2}$$

Further, we extend this to include enhanced data $O_{\text{enh}} = (\mathbf{o}_{\text{enh}}(t) \in \mathbb{R}^{D} \mid t = 1, \dots, T)$ together with the above multichannel data, that is,

$$\hat{\Theta} = \arg\max_{\Theta} \mathcal{L}\left(\{\mathcal{O}, O_{\text{enh}}\}\right), \tag{3}$$

where the enhanced data $O_{\text{enh}}$ is obtained by a single-channel masking or beamforming method, which is described in Section 3.2.

3.2. BLSTM mask based beamformer

We use the BLSTM mask-based generalized eigenvalue (GEV) beamformer described in [14]. The GEV beamforming procedure requires estimates of the cross-power spectral density (PSD) matrices of the noise and of the target speech. The BLSTM model estimates two masks: the first indicates the time-frequency bins that are probably dominated by speech, and the other indicates those dominated by noise. With the speech and noise masks, we can estimate the PSD matrix of the speech components, $\Phi_{\text{speech}}(b) \in \mathbb{C}^{M \times M}$ at frequency bin $b$, and that of the noise components, $\Phi_{\text{noise}}(b) \in \mathbb{C}^{M \times M}$, as follows:

$$\Phi_{v}(b) = \sum_{t=1}^{T} w_{v}(t, b)\, \mathbf{y}(t, b)\, \mathbf{y}(t, b)^{\mathsf{H}}, \quad v \in \{\text{speech}, \text{noise}\}, \tag{4}$$

where $\mathbf{y}(t, b) \in \mathbb{C}^{M}$ is an $M$-dimensional complex spectrum at time frame $t$ in frequency bin $b$, $\mathbf{y}^{\mathsf{H}}$ denotes the conjugate transpose, and $w_{v}(t, b) \in [0, 1]$ is the mask value.

Figure 1: Diagram of speech recognition system.

The goal of the GEV beamformer [23] is to estimate the beamforming filter $\mathbf{f}(b)$ that maximizes the expected SNR for each frequency bin $b$:

$$\mathbf{f}_{\text{GEV}}(b) = \operatorname*{argmax}_{\mathbf{f}(b)} \frac{\mathbf{f}^{\mathsf{H}}(b)\, \Phi_{\text{speech}}(b)\, \mathbf{f}(b)}{\mathbf{f}^{\mathsf{H}}(b)\, \Phi_{\text{noise}}(b)\, \mathbf{f}(b)}. \tag{5}$$

Eq. (5) is equivalent to solving the following eigenvalue problem:

$$\left(\Phi_{\text{noise}}(b)\right)^{-1} \Phi_{\text{speech}}(b)\, \mathbf{f}(b) = \lambda\, \mathbf{f}(b), \tag{6}$$

where $\mathbf{f}(b) \in \mathbb{C}^{M}$ is the $M$-dimensional complex eigenvector at each frequency bin $b$ and $\lambda$ is the eigenvalue.

3.3. Time delayed neural network with lattice-free MMI

For the acoustic model, we use a TDNN with LF-MMI training [16] instead of DNN+sMBR [11]. The architecture is similar to those described in [24]. The LF-MMI objective function is shown below. It differs from usual MMI training [25] in that we use a phoneme sequence $L$ instead of a word sequence, which narrows down the search space in the denominator:

$$\mathcal{L}_{\text{MMI}} = \sum_{n=1}^{N} \log \frac{p(O_n \mid S_n)^{C}\, P(L_n)}{\sum_{L} p(O_n \mid S_L)^{C}\, P(L)}, \tag{7}$$

where $p(O_n \mid S_L)$ is the likelihood of the speech feature sequence $O_n$ given the state sequence $S_L$ for the $n$-th utterance, $P(L)$ is the phoneme language model probability, and $C$ is the probability scale.

Note that when combined with the data augmentation technique (described in Section 3.1), TDNN is more effective than DNN.

3.4. LSTM language modeling

The LSTM-based language model (LSTMLM) has been shown to be effective for language modeling [26]. It is better at capturing longer-range contextual information than a conventional RNN. With this property, the LSTMLM can predict the next word more accurately than an RNNLM. Hence, instead of using a vanilla RNNLM [12], we train an LSTMLM on WSJ data that combines subword features and one-hot encoding. An importance sampling method is used to speed up training.
Most importantly, a new objective function $\mathcal{L}_{\text{LM}}$ is used for LM training. It behaves like the cross-entropy objective but trains the output to auto-normalize, in order to speed up test-time computation:

$$\mathcal{L}_{\text{LM}} = z_j + 1 - \sum_{i} \exp(z_i), \tag{8}$$

where $z$ is the pre-activation vector in the layer of the neural network before the final softmax operation and $j$ is the index of the correct word. More detail can be found in [17].

Table 1: Speech enhancement scores

| Track | Enhancement method | Dev (simu) PESQ | Dev (simu) STOI | Dev (simu) eSTOI | Dev (simu) SDR | Test (simu) PESQ | Test (simu) STOI | Test (simu) eSTOI | Test (simu) SDR |
|---|---|---|---|---|---|---|---|---|---|
| 1ch | No enhancement | 2.01 | 0.82 | 0.61 | 3.92 | 1.98 | 0.81 | 0.60 | 4.95 |
| 1ch | BLSTM mask | 2.52 | 0.88 | 0.73 | 9.26 | 2.46 | 0.87 | 0.71 | 10.76 |
| 2ch | BeamformIt | 2.15 | 0.85 | 0.65 | 4.61 | 2.07 | 0.83 | 0.62 | 5.60 |
| 2ch | BLSTM GEV | 2.13 | 0.87 | 0.69 | 2.86 | 2.12 | 0.87 | 0.69 | 3.10 |
| 6ch | BeamformIt | 2.31 | 0.88 | 0.70 | 5.52 | 2.20 | 0.86 | 0.65 | 6.30 |
| 6ch | BLSTM GEV | 2.45 | 0.88 | 0.75 | 3.57 | 2.46 | 0.87 | 0.73 | 2.92 |

Table 2: Experimental configurations

| Component | Parameter | Value |
|---|---|---|
| BLSTM mask estimation | input layer dimension | 513 |
| BLSTM mask estimation | L1 - BLSTM layer dimension | 256 |
| BLSTM mask estimation | L2 - FF layer 1 (ReLU) dimension | 513 |
| BLSTM mask estimation | L3 - FF layer 2 (clipped ReLU) dimension | 513 |
| BLSTM mask estimation | L4 - FF layer (Sigmoid) dimension | 1026 |
| BLSTM mask estimation | dropout probability for L1, L2 and L3 | 0.5 |
| TDNN acoustic model | input layer dimension | 40 |
| TDNN acoustic model | hidden layer dimension | 750 |
| TDNN acoustic model | output layer dimension | 2800 |
| TDNN acoustic model | l2-regularize | 0.00005 |
| TDNN acoustic model | num-epochs | 6 |
| TDNN acoustic model | initial-effective-lrate | 0.003 |
| TDNN acoustic model | final-effective-lrate | 0.0003 |
| TDNN acoustic model | shrink-value | 1.0 |
| TDNN acoustic model | num-chunk-per-minibatch | 128,64 |
| LSTM language model | layers dimension | 2048 |
| LSTM language model | recurrent-projection-dim | 512 |
| LSTM language model | N-best list size | 100 |
| LSTM language model | RNN re-score weight | 1.0 |

4. Experiments

4.1. Speech Enhancement Experiments

The first experiments evaluate the performance of BLSTM-based speech enhancement. For the single-channel track, we used the BLSTM masking technique [27] trained on the 6-channel data and took only the speech mask after forward propagation.
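Applying a speech mask of this kind is an element-wise (Hadamard) product between the mask and the noisy single-channel spectrogram. The NumPy sketch below illustrates only that step. The function name `apply_speech_mask` is hypothetical, the random arrays are stand-ins for a real STFT and for the BLSTM output, and the choice of 513 frequency bins is an assumption made to match the input dimension in Table 2; it is not the recipe's implementation.

```python
import numpy as np


def apply_speech_mask(noisy_stft, speech_mask):
    """Element-wise (Hadamard) product of a complex STFT with a [0, 1] mask."""
    if noisy_stft.shape != speech_mask.shape:
        raise ValueError("spectrogram and mask must have the same shape")
    # Scaling each time-frequency bin by a real mask keeps the noisy phase.
    return speech_mask * noisy_stft


rng = np.random.default_rng(0)
num_frames, num_bins = 100, 513  # assumed sizes, for illustration only

# Stand-in for the STFT of one noisy channel (frames x frequency bins).
noisy = rng.standard_normal((num_frames, num_bins)) \
    + 1j * rng.standard_normal((num_frames, num_bins))

# Stand-in for the speech mask; in the paper it comes from the BLSTM.
mask = rng.uniform(0.0, 1.0, size=(num_frames, num_bins))

enhanced = apply_speech_mask(noisy, mask)
```

Because the mask values lie in [0, 1], each bin of `enhanced` has a magnitude no larger than the corresponding noisy bin, which is why masking can suppress noise but can also distort the speech.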
We took the Hadamard product of the single-channel spectrogram with the speech mask and used it as the enhanced signal, which we compared with the original signal without any enhancement. For the 2-channel and 6-channel tracks, we used the BLSTM-based GEV beamformer described in Section 3.2 and compared it with BeamformIt. The four scores described in Section 1 (PESQ, STOI, eSTOI, and SDR) are computed. The BLSTM architecture used in the experiments is listed in Table 2.

The enhancement scores are shown in Table 1. The 5th-channel clean signal from the 6ch data, convolved with the room impulse response, was used as the reference signal for computing all four metrics. For the 1-channel track, the BLSTM mask gives significantly better scores in all four metrics compared to the noisy data without any enhancement. However, this is contrary to the ASR results, which will be discussed in the next section. BeamformIt has better SDR scores than BLSTM GEV in both multi-channel tracks. On the other hand, for both multi-channel tracks, eSTOI is slightly better for BLSTM GEV. In the 6ch track experiments, BLSTM GEV has a significantly better PESQ score. Overall, BLSTM-based speech enhancement shows improvement in most conditions, except for the multichannel SDR metric.

4.2. Speech Recognition Experiments

Our system is trained with the Kaldi speech recognition toolkit [28]. For TDNN acoustic model training, the backstitch optimization method [29] is used. Decoding is based on 3-gram language models with explicit pronunciation and silence probability modeling, as described in [30]. The lattices are first re-scored by a 5-gram language model. Then Kaldi-RNNLM [17] is used to train the LSTMLM, and n-best re-scoring is used to improve performance. We obtained our best result in the 6-channel experiments by averaging the forward and backward LSTMLMs.
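The n-best re-scoring step can be illustrated with a small self-contained sketch. It assumes that the forward and backward LSTMLM log-probabilities are averaged and then interpolated with the n-gram score using the re-score weight listed in Table 2. The names `Hypothesis`, `rescore`, and `lm_scale`, the interpolation form, and all scores are hypothetical and chosen for illustration; this is not Kaldi's implementation.

```python
from dataclasses import dataclass


@dataclass
class Hypothesis:
    text: str
    acoustic: float  # acoustic log-score
    ngram: float     # n-gram LM log-probability
    lstm_fwd: float  # forward LSTMLM log-probability
    lstm_bwd: float  # backward LSTMLM log-probability


def rescore(nbest, weight=1.0, lm_scale=1.0):
    """Return the best hypothesis after interpolating LM scores.

    weight = 1.0 uses only the (averaged) neural LM score,
    weight = 0.0 uses only the n-gram LM score.
    """
    def total(h):
        neural = 0.5 * (h.lstm_fwd + h.lstm_bwd)  # forward/backward average
        lm = (1.0 - weight) * h.ngram + weight * neural
        return h.acoustic + lm_scale * lm

    return max(nbest, key=total)


# Toy 2-best list with made-up scores.
nbest = [
    Hypothesis("the cat sat", acoustic=-120.0, ngram=-9.0,
               lstm_fwd=-14.0, lstm_bwd=-16.0),
    Hypothesis("the cat sad", acoustic=-119.5, ngram=-8.0,
               lstm_fwd=-19.0, lstm_bwd=-21.0),
]

best_neural = rescore(nbest, weight=1.0)  # selects "the cat sat"
best_ngram = rescore(nbest, weight=0.0)   # selects "the cat sad"
```

In this toy list the two settings pick different hypotheses, which shows how a weight of 1.0 lets the neural LM fully override the n-gram ranking.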
The RNN re-score weight is set to 1.0, which means that the scores of the 5-gram LM are completely discarded. All results in this section are reported in terms of word error rate (WER). The parameters used in our system are also given in Table 2.

Table 3: WER of adding enhanced data when using TDNN with BeamformIt and RNNLM in the 6 channel track experiment

| Data augmentation | Dev real (%) | Dev simu (%) | Test real (%) | Test simu (%) |
|---|---|---|---|---|
| all 6ch data | 3.97 | 4.33 | 7.04 | 7.39 |
| all 6ch and enhanced data | 3.74 | 4.31 | 6.84 | 7.49 |

Table 3 shows the effectiveness of data augmentation for the system using TDNN with BeamformIt and RNNLM (introduced in Sections 1 and 3.3) in the 6-channel track experiment. Adding the enhanced data improved the WER in all cases except the simulation test data. The same trend is found in the 2-channel experiment when using TDNN (i.e., rows 3 and 4 in Table 5).

Tables 4 and 5 show the WER of the 6-channel and 2-channel experiments. We change our experimental condition incrementally to compare the effectiveness of each method described in Section 3. In most situations, every method improved the WER steadily.

Table 4: WER of 6 channel track experiments

| Data augmentation | Acoustic model | Beamforming | Language model | Dev real (%) | Dev simu (%) | Test real (%) | Test simu (%) |
|---|---|---|---|---|---|---|---|
| only 5th channel | DNN+sMBR | BeamformIt | RNNLM | 5.79 | 6.73 | 11.50 | 10.92 |
| all 6ch data | DNN+sMBR | BeamformIt | RNNLM | 5.05 | 5.82 | 9.50 | 9.24 |
| all 6ch and enhanced data | DNN+sMBR | BeamformIt | RNNLM | 5.62 | 6.46 | 10.27 | 9.41 |
| all 6ch and enhanced data | TDNN with LF-MMI | BeamformIt | RNNLM | 3.74 | 4.31 | 6.84 | 7.49 |
| all 6ch and enhanced data | TDNN with LF-MMI | BLSTM GEV | RNNLM | 2.83 | 2.94 | 4.01 | 3.80 |
| all 6ch and enhanced data | TDNN with LF-MMI | BLSTM GEV | LSTMLM | 1.90 | 2.10 | 2.74 | 2.66 |

Table 5: WER of 2 channel track experiments

| Data augmentation | Acoustic model | Beamforming | Language model | Dev real (%) | Dev simu (%) | Test real (%) | Test simu (%) |
|---|---|---|---|---|---|---|---|
| only 5th channel | DNN+sMBR | BeamformIt | RNNLM | 8.23 | 9.50 | 16.58 | 15.33 |
| all 6ch data | DNN+sMBR | BeamformIt | RNNLM | 6.87 | 8.06 | 13.33 | 12.57 |
| all 6ch data | TDNN with LF-MMI | BeamformIt | RNNLM | 5.57 | 6.08 | 10.53 | 9.90 |
| all 6ch and enhanced data | TDNN with LF-MMI | BeamformIt | RNNLM | 5.03 | 6.02 | 10.20 | 10.35 |
| all 6ch and enhanced data | TDNN with LF-MMI | BLSTM GEV | RNNLM | 3.79 | 5.03 | 6.93 | 6.07 |
| all 6ch and enhanced data | TDNN with LF-MMI | BLSTM GEV | LSTMLM | 2.85 | 3.94 | 5.40 | 5.03 |

Table 6: WER of 1 channel track experiments

| Data augmentation | Acoustic model | Beamforming | Language model | Dev real (%) | Dev simu (%) | Test real (%) | Test simu (%) |
|---|---|---|---|---|---|---|---|
| only 5th channel | DNN+sMBR | - | RNNLM | 11.57 | 12.98 | 23.70 | 20.84 |
| all 6ch data | DNN+sMBR | - | RNNLM | 8.97 | 11.02 | 18.10 | 17.31 |
| all 6ch data | TDNN with LF-MMI | - | RNNLM | 6.64 | 7.78 | 12.92 | 13.54 |
| all 6ch data | TDNN with LF-MMI | - | LSTMLM | 5.58 | 6.81 | 11.42 | 12.15 |
| all 6ch data | TDNN with LF-MMI | BLSTM masking | RNNLM | 13.15 | 15.62 | 22.47 | 21.61 |
| all 6ch and enhanced data | TDNN with LF-MMI | BLSTM masking | LSTMLM | 6.78 | 9.10 | 13.64 | 14.95 |

We observed that the performance degraded when we added the enhanced data to the system using DNN+sMBR (i.e., rows 2 and 3 in Table 4), while TDNN with LF-MMI could make use of the enhanced data, as discussed above.
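This contrast can be quantified as a relative WER change on the real test set, using the values reported in Tables 3 and 4. The helper name `relative_change` is hypothetical and the snippet is only a worked calculation on the published numbers.

```python
def relative_change(before, after):
    """Relative WER change in percent; negative values are improvements."""
    return 100.0 * (after - before) / before


# Real test set WER (%), 6-channel track, BeamformIt + RNNLM,
# before and after adding the enhanced data to the training set.
dnn = relative_change(9.50, 10.27)  # DNN+sMBR, Table 4 rows 2 -> 3
tdnn = relative_change(7.04, 6.84)  # TDNN with LF-MMI, Table 3

print(f"DNN+sMBR: {dnn:+.1f}%  TDNN with LF-MMI: {tdnn:+.1f}%")
# prints "DNN+sMBR: +8.1%  TDNN with LF-MMI: -2.8%"
```

Adding the enhanced data therefore raises the DNN+sMBR error by about 8% relative, while it lowers the TDNN error by about 3% relative.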
In addition, a comparison with the speech enhancement results in Table 1 shows that better speech enhancement scores do not necessarily give lower WER. In particular, there consistently seems to be a negative correlation between ASR performance and the SDR scores.

Table 6 shows the results of the 1-channel track experiment. We found that BLSTM masking was not effective when only one microphone was used, although it scores better on all four speech enhancement metrics in Table 1. Comparing rows 3 and 5 of Table 6, the WER with BLSTM masking roughly doubled relative to the system without BLSTM masking (e.g., from 6.64% to 13.15% on the real development set). However, we also found that after adding the enhanced data to the system with BLSTM masking, the WER became closer to that of the best setup without masking, as can be seen in rows 4 and 6 of Table 6. Thus, adding the enhanced data seems to be a good strategy for mitigating the degradation caused by speech enhancement.

Finally, Table 7 compares our system with the official baseline and the top systems in the CHiME-4 challenge. All of these top systems use a fusion technique to obtain their best WER. In contrast, our proposed single system achieved a 76% relative improvement over the official baseline ((11.51 − 2.74) / 11.51 ≈ 0.76) and the 2nd best performance.

Table 7: Final WER comparison for the real test set.

| System | # systems | WER (%) |
|---|---|---|
| CHiME-4 baseline [4] | 1 | 11.51 |
| Proposed system | 1 | 2.74 |
| USTC-iFlytek [6] | 5 | 2.24 |
| RWTH/UPB/FORTH [7] | 5 | 2.91 |
| MERL [8] | 6 | 2.98 |

5. Conclusion

This paper describes our single ASR system for the CHiME-4 speech separation and recognition challenge. The system consists of a BLSTM mask-based GEV beamformer (Section 3.2), a TDNN with LF-MMI as the acoustic model (Section 3.3), and re-scoring with an LSTMLM (Section 3.4), trained on the data of all 6 channels plus the enhanced data generated by the beamformer (Section 3.1). The system finally achieved 2.74% WER, which outperforms the 2nd place result in the challenge.
The system is publicly available through the Kaldi speech recognition toolkit.

Our future work will explore different architectures for the TDNN and LSTM networks for further improvement. Furthermore, this system can be applied to other multichannel tasks such as AMI [31] and the CHiME-5 challenge [32].

6. References

[1] J. Barker, R. Marxer, E. Vincent, and S. Watanabe, “The third ‘CHiME’ speech separation and recognition challenge: Dataset, task and baselines,” in IEEE Workshop on Automatic Speech Recognition and Understanding (ASRU), 2015, pp. 504–511.

[2] K. Kinoshita, M. Delcroix, S. Gannot, E. A. Habets, R. Haeb-Umbach, W. Kellermann, V. Leutnant, R. Maas, T. Nakatani, B. Raj et al., “A summary of the REVERB challenge: state-of-the-art and remaining challenges in reverberant speech processing research,” EURASIP Journal on Advances in Signal Processing, vol. 2016, no. 1, p. 7, 2016.

[3] B. Li, T. Sainath, A. Narayanan, J. Caroselli, M. Bacchiani, A. Misra, I. Shafran, H. Sak, G. Pundak, K. Chin et al., “Acoustic modeling for Google Home,” INTERSPEECH-2017, pp. 399–403, 2017.

[4] E. Vincent, S. Watanabe, A. A. Nugraha, J. Barker, and R. Marxer, “An analysis of environment, microphone and data simulation mismatches in robust speech recognition,” Computer Speech & Language, vol. 46, pp. 535–557, 2017.

[5] T. Yoshioka, N. Ito, M. Delcroix, A. Ogawa, K. Kinoshita, M. Fujimoto, C. Yu, W. J. Fabian, M. Espi, T. Higuchi et al., “The NTT CHiME-3 system: Advances in speech enhancement and recognition for mobile multi-microphone devices,” in Automatic Speech Recognition and Understanding (ASRU). IEEE, 2015, pp. 436–443.

[6] J. Du, Y.-H. Tu, L. Sun, F. Ma, H.-K. Wang, J. Pan, C. Liu, J.-D. Chen, and C.-H. Lee, “The USTC-iFlytek system for CHiME-4 challenge,” Proc. CHiME, pp. 36–38, 2016.

[7] T. Menne, J. Heymann, A. Alexandridis, K. Irie, A. Zeyer, M. Kitza, P. Golik, I. Kulikov, L. Drude, R. Schlüter et al., “The RWTH/UPB/FORTH system combination for the 4th CHiME challenge evaluation,” in CHiME-4 workshop, 2016.

[8] H. Erdogan, T. Hayashi, J. R. Hershey, T. Hori, C. Hori, W.-N. Hsu, S. Kim, J. Le Roux, Z. Meng, and S. Watanabe, “Multi-channel speech recognition: LSTMs all the way through,” in CHiME-4 workshop, 2016.

[9] Y. Fujita, T. Homma, and M. Togami, “Unsupervised network adaptation and phonetically-oriented system combination for the CHiME-4 challenge,” Proc. CHiME, pp. 49–51, 2016.

[10] X. Anguera, C. Wooters, and J. Hernando, “Acoustic beamforming for speaker diarization of meetings,” IEEE Transactions on Audio, Speech, and Language Processing, vol. 15, no. 7, pp. 2011–2022, 2007.

[11] K. Veselý, A. Ghoshal, L. Burget, and D. Povey, “Sequence-discriminative training of deep neural networks,” in Interspeech, 2013, pp. 2345–2349.

[12] T. Mikolov, M. Karafiát, L. Burget, J. Černocký, and S. Khudanpur, “Recurrent neural network based language model,” in Interspeech, 2010.

[13] H. Erdogan, J. R. Hershey, S. Watanabe, M. I. Mandel, and J. Le Roux, “Improved MVDR beamforming using single-channel mask prediction networks,” in INTERSPEECH, 2016, pp. 1981–1985.

[14] J. Heymann, L. Drude, and R. Haeb-Umbach, “Neural network based spectral mask estimation for acoustic beamforming,” in 2016 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), March 2016, pp. 196–200.

[15] A. Waibel, T. Hanazawa, G. Hinton, K. Shikano, and K. J. Lang, “Phoneme recognition using time-delay neural networks,” in Readings in Speech Recognition. Elsevier, 1990, pp. 393–404.

[16] D. Povey, V. Peddinti, D. Galvez, P. Ghahremani, V. Manohar, X. Na, Y. Wang, and S. Khudanpur, “Purely Sequence-Trained Neural Networks for ASR Based on Lattice-Free MMI,” in Interspeech, 2016, pp. 2751–2755.

[17] H. Xu, K. Li, Y. Wang, J. Wang, S. Kang, X. Chen, D. Povey, and S. Khudanpur, “Neural network language modeling with letter-based features and importance sampling,” 2018.

[18] A. W. Rix, J. G. Beerends, M. P. Hollier, and A. P. Hekstra, “Perceptual Evaluation of Speech Quality (PESQ)-a New Method for Speech Quality Assessment of Telephone Networks and Codecs,” in Proceedings of the Acoustics, Speech, and Signal Processing, 200. On IEEE International Conference - Volume 02, ser. ICASSP ’01. IEEE Computer Society, 2001, pp. 749–752.

[19] C. H. Taal, R. C. Hendriks, R. Heusdens, and J. Jensen, “An algorithm for intelligibility prediction of time-frequency weighted noisy speech,” IEEE Transactions on Audio, Speech, and Language Processing, vol. 19, no. 7, pp. 2125–2136, Sept 2011.

[20] J. Jensen and C. H. Taal, “An algorithm for predicting the intelligibility of speech masked by modulated noise maskers,” IEEE/ACM Transactions on Audio, Speech, and Language Processing, vol. 24, no. 11, pp. 2009–2022, Nov 2016.

[21] E. Vincent, R. Gribonval, and C. Fevotte, “Performance measurement in blind audio source separation,” IEEE Transactions on Audio, Speech, and Language Processing, vol. 14, no. 4, pp. 1462–1469, July 2006.

[22] T. Hori, Z. Chen, H. Erdogan, J. R. Hershey, J. Le Roux, V. Mitra, and S. Watanabe, “Multi-microphone speech recognition integrating beamforming, robust feature extraction, and advanced DNN/RNN back end,” Computer Speech & Language, vol. 46, pp. 401–418, 2017.

[23] E. Warsitz and R. Haeb-Umbach, “Blind acoustic beamforming based on generalized eigenvalue decomposition,” IEEE Transactions on Audio, Speech, and Language Processing, vol. 15, no. 5, pp. 1529–1539, 2007.

[24] V. Peddinti, D. Povey, and S. Khudanpur, “A time delay neural network architecture for efficient modeling of long temporal contexts,” in Interspeech, 2015.

[25] D. Povey, “Discriminative training for large vocabulary speech recognition,” Ph.D. dissertation, University of Cambridge, 2005.

[26] M. Sundermeyer, R. Schlüter, and H. Ney, “LSTM neural networks for language modeling,” in Interspeech, 2012.

[27] F. Weninger, H. Erdogan, S. Watanabe, E. Vincent, J. Le Roux, J. R. Hershey, and B. Schuller, “Speech enhancement with LSTM recurrent neural networks and its application to noise-robust ASR,” in International Conference on Latent Variable Analysis and Signal Separation. Springer, 2015, pp. 91–99.

[28] D. Povey, A. Ghoshal, G. Boulianne, L. Burget, O. Glembek, N. Goel, M. Hannemann, P. Motlicek, Y. Qian, P. Schwarz et al., “The Kaldi speech recognition toolkit,” in IEEE 2011 Workshop on Automatic Speech Recognition and Understanding. IEEE Signal Processing Society, 2011.

[29] Y. Wang, V. Peddinti, H. Xu, X. Zhang, D. Povey, and S. Khudanpur, “Backstitch: Counteracting finite-sample bias via negative steps,” in Interspeech, 2017.

[30] G. Chen, H. Xu, M. Wu, D. Povey, and S. Khudanpur, “Pronunciation and silence probability modeling for ASR,” in Interspeech, 2015.

[31] I. McCowan, J. Carletta, W. Kraaij, S. Ashby, S. Bourban, M. Flynn, M. Guillemot, T. Hain, J. Kadlec, V. Karaiskos et al., “The AMI meeting corpus,” in Proceedings of the 5th International Conference on Methods and Techniques in Behavioral Research, vol. 88, 2005, p. 100.

[32] J. Barker, S. Watanabe, E. Vincent, and J. Trmal, “The fifth ‘CHiME’ speech separation and recognition challenge: Dataset, task and baselines,” in Interspeech, 2018, (submitting).
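
Supplementary sketch for Section 3.2. The mask-weighted PSD estimate of Eq. (4) and the eigenvalue problem of Eq. (6) can be illustrated for a single frequency bin with plain NumPy. Everything below is an assumption made for illustration: the function names `psd_matrix`, `gev_filter`, and `snr` are hypothetical, the data is a toy rank-one source in white noise, and the masks are oracle masks rather than BLSTM outputs. It is not the implementation used in the recipe.

```python
import numpy as np


def psd_matrix(Y, mask):
    """Eq. (4): sum over frames of mask(t) * y(t) y(t)^H.

    Y: (T, M) complex spectra of one frequency bin; mask: (T,) in [0, 1].
    """
    return np.einsum("t,tm,tn->mn", mask, Y, Y.conj())


def gev_filter(phi_speech, phi_noise):
    """Eq. (6): eigenvector of phi_noise^{-1} phi_speech with largest eigenvalue."""
    eigvals, eigvecs = np.linalg.eig(np.linalg.solve(phi_noise, phi_speech))
    return eigvecs[:, np.argmax(eigvals.real)]


def snr(f, phi_speech, phi_noise):
    """Ratio maximized in Eq. (5) for a given filter f."""
    num = np.real(f.conj() @ phi_speech @ f)
    den = np.real(f.conj() @ phi_noise @ f)
    return num / den


rng = np.random.default_rng(0)
T, M = 200, 6  # frames, microphones

# Toy data: a rank-one "speech" source active on even frames, plus noise.
steering = rng.standard_normal(M) + 1j * rng.standard_normal(M)
source = rng.standard_normal(T) + 1j * rng.standard_normal(T)
active = (np.arange(T) % 2 == 0).astype(float)
noise = 0.3 * (rng.standard_normal((T, M)) + 1j * rng.standard_normal((T, M)))
Y = (active * source)[:, None] * steering[None, :] + noise

# Oracle masks stand in for the two BLSTM-estimated masks.
phi_s = psd_matrix(Y, active)
phi_n = psd_matrix(Y, 1.0 - active)

f = gev_filter(phi_s, phi_n)
enhanced = Y @ f.conj()  # f^H y(t) for every frame

reference = np.zeros(M, dtype=complex)
reference[0] = 1.0  # "filter" that simply picks microphone 1
```

Comparing `snr(f, phi_s, phi_n)` with `snr(reference, phi_s, phi_n)` shows the gain over a single microphone; by Eq. (5) the GEV filter can never do worse on this ratio.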
