Student-Teacher Learning for BLSTM Mask-based Speech Enhancement


Authors: Aswin Shanmugam Subramanian, Szu-Jui Chen, Shinji Watanabe

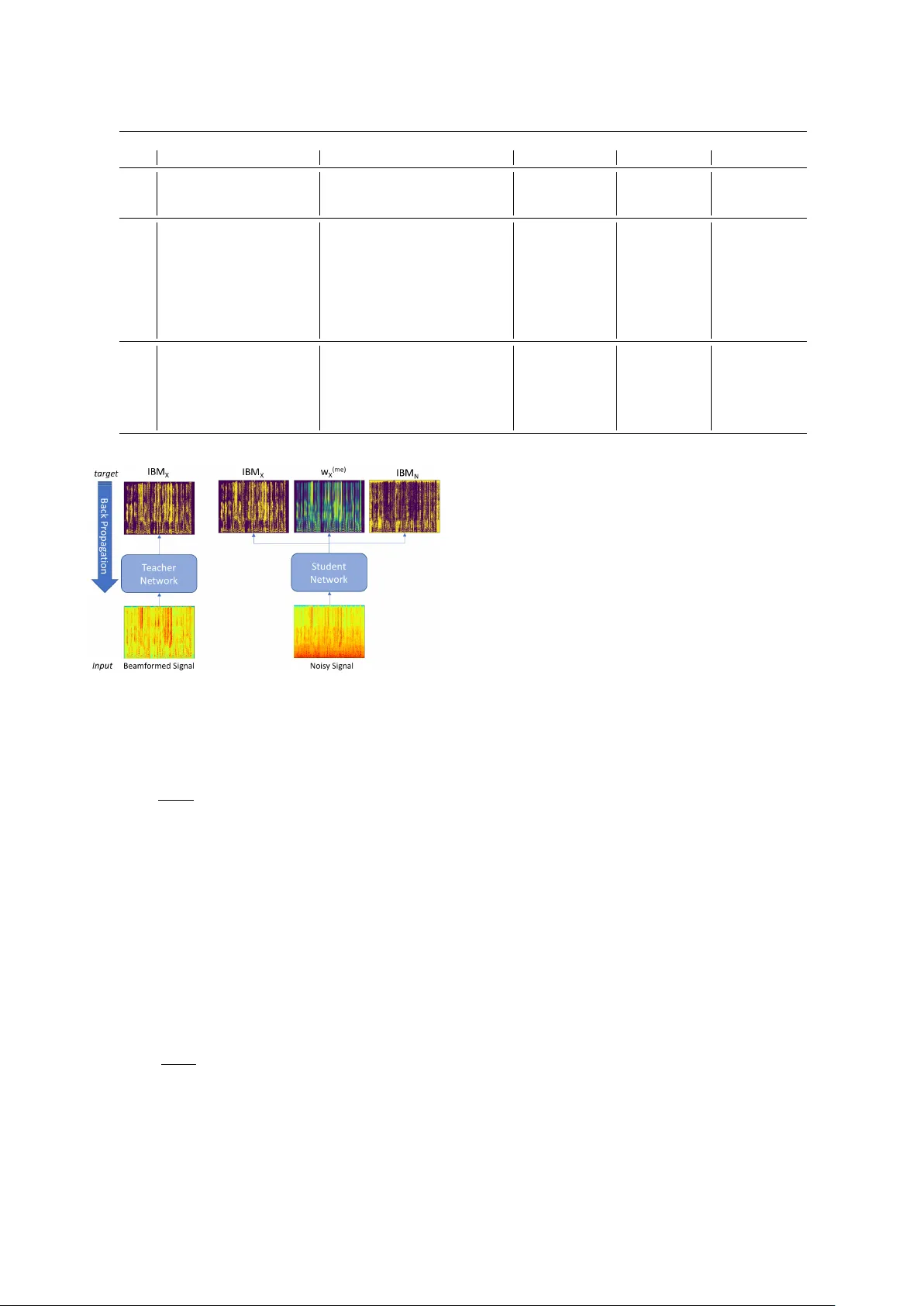

Center for Language and Speech Processing, Johns Hopkins University
{aswin, schen146, shinjiw}@jhu.edu

Abstract

Spectral mask estimation using bidirectional long short-term memory (BLSTM) neural networks has been widely used in various speech enhancement applications, and it has achieved great success when applied to multichannel enhancement with a mask-based beamformer. However, when these masks are used for single-channel speech enhancement, they severely distort the speech signal and make it unsuitable for speech recognition. This paper proposes a student-teacher learning paradigm for single-channel speech enhancement. The beamformed signal from multichannel enhancement is given as input to the teacher network to obtain soft masks. An additional cross-entropy loss term with the soft mask target is combined with the original loss, so that the student network with single-channel input is trained to mimic the soft mask obtained with multichannel input through beamforming. Experiments with the CHiME-4 challenge single-channel track data show improvement in ASR performance.

Index Terms: Speech enhancement, speech recognition, mask estimation, BLSTM, student-teacher learning

1. Introduction

The presence of background noise and reverberation degrades the performance of an automatic speech recognition (ASR) system. The performance of noise-robust ASR has been greatly improved by using multiple microphones instead of a single microphone [1, 2, 3]. In particular, mask-based beamforming techniques have shown outstanding results in this scenario [4, 5, 6], and many of the top systems in the CHiME-4 challenge [7] use these techniques for speech enhancement.
For example, [5, 6] use a BLSTM neural network to accurately predict speech (and noise) masks given the noisy spectrogram. In [5], the speech and noise masks are in turn used to calculate the cross power spectral density (PSD) matrices of the target speech and the noise, which the generalized eigenvalue (GEV) beamformer uses to compute the beamforming filter [8].

However, compared with the success of multichannel speech enhancement, single-channel speech enhancement is not well established, especially when it is combined with other speech processing applications, including ASR and speaker recognition [9]. Single-channel speech enhancement, especially based on deep neural networks (DNNs), has been studied by many research groups [10, 11, 12, 13], with promising improvements in terms of speech enhancement metrics or for hearing purposes. However, we often observe a degradation in performance when single-channel enhancement is used as a preprocessing step for ASR, due to the spectral distortions induced by the enhancement. This paper focuses on single-channel speech enhancement to overcome this issue.

Our idea is to fill the gap between single-channel and multichannel speech enhancement by using a well-known DNN technique called student-teacher learning. We propose to train a teacher network, which takes the beamformed signal obtained from multichannel speech enhancement as input and predicts high-quality speech masks. Then, a student network, which takes the noisy signal as input, is trained to mimic the mask predicted by the teacher network. Conventionally, student-teacher learning [14, 15] is used to reduce the complexity of the network. For example, a less complex student model with fewer parameters tries to mimic the soft targets of a more complex teacher model.
In [16], student-teacher training is used for self-supervised learning of an ASR acoustic model, where the student model tries to mimic the effects of multichannel speech enhancement by using only single-channel inputs. This was achieved by training the student network with noisy training data as input to mimic the soft posteriors of a teacher network trained on the enhanced speech. In addition, [16] shows the benefit of using soft targets rather than hard targets for DNN acoustic models, an approach advocated by [17] as knowledge distillation.

This paper follows the BLSTM mask-based beamforming proposed in [5], which has two cross-entropy loss terms: one for the speech mask target and the other for the noise mask target. The speech mask predicted by the model can also be used for single-channel enhancement, which this paper uses as the baseline. We add a loss term based on the cross entropy with the soft mask from the teacher network as the target. The effectiveness of the proposed method is investigated on the single-channel track of the CHiME-4 dataset [18]. The CHiME-4 dataset consists of both real and simulated data, and it is common to use only the simulated data to prepare the clean and noise targets. Since the proposed method uses the soft mask from the teacher network as the target, which can be obtained even for real data, it can use both real and simulated data in the training stage. This is another unique aspect of the proposed method. Additionally, four speech enhancement metrics, namely perceptual evaluation of speech quality (PESQ) [19], short-time objective intelligibility (STOI) [20], extended STOI (eSTOI) [21], and signal-to-distortion ratio (SDR) [22], are used to discuss the speech enhancement performance in addition to the ASR performance.

2. BLSTM mask-based speech enhancement

This section first describes a mask prediction method using a binary cross-entropy loss, which is a basic component of this paper. It then describes a mask-based beamformer that estimates beamforming filters from the estimated masks.

2.1. Binary cross-entropy loss

This section explains the noise-aware training of the BLSTM mask proposed in [5]. The network estimates two masks: the speech mask, which denotes how dominant the speech component is in each time-frequency bin, and the noise mask, which denotes the same for the noise component [23]. The ideal binary speech mask target $\mathrm{IBM}_X(t,b) \in \{0,1\}$ at frame $t$ in frequency bin $b$ is defined by thresholding the signal-to-noise ratio (SNR):

$$\mathrm{IBM}_X(t,b) = \begin{cases} 1, & \text{if } \dfrac{\|x(t,b)\|}{\|n(t,b)\|} > \text{threshold} \\ 0, & \text{otherwise,} \end{cases} \tag{1}$$

where $\|x(t,b)\| \in \mathbb{R}_{\geq 0}$ is the power spectrum of the clean speech signal and $\|n(t,b)\| \in \mathbb{R}_{\geq 0}$ is the power spectrum of the noise signal at each time-frequency bin $(t,b)$. The ideal binary noise mask $\mathrm{IBM}_N(t,b) \in \{0,1\}$ is calculated in a similar way.

Given a sequence of $T$ noisy speech magnitude spectra $Y = \left(\{\|y(t,b)\|\}_{b=1}^{B} \mid t = 1, \dots, T\right)$, the BLSTM network predicts the speech mask $w_X(t,b) \in [0,1]$ and the noise mask $w_N(t,b) \in [0,1]$ at each time-frequency bin $(t,b)$ as follows:

$$w_v(t,b) = \sigma\big(\mathrm{Lin}_v(\mathrm{BLSTM}(Y))\big), \quad v \in \{X, N\}, \tag{2}$$

where $\sigma(\cdot)$, $\mathrm{Lin}_v(\cdot)$, and $\mathrm{BLSTM}(\cdot)$ are the sigmoid activation, a linear layer, and the output of the masking network given in Table 1, respectively. The binary cross entropy is used as the loss function to train the network.
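To make Eq. (1) concrete, the following NumPy sketch computes ideal binary speech and noise masks from parallel clean-speech and noise power spectra. It is a minimal sketch under stated assumptions, not the authors' implementation: the function name `ideal_binary_masks`, the default thresholds of 1.0 (the paper does not report the threshold it used), and computing $\mathrm{IBM}_N$ from the inverted ratio $\|n\|/\|x\|$ are all hypothetical choices.

```python
import numpy as np


def ideal_binary_masks(speech_power, noise_power, thr_speech=1.0, thr_noise=1.0, eps=1e-12):
    """Ideal binary speech and noise masks in the spirit of Eq. (1).

    speech_power, noise_power: arrays of shape (T, B) with the power spectra
    ||x(t, b)|| and ||n(t, b)|| of the parallel clean speech and noise.
    thr_speech, thr_noise: hypothetical thresholds (the paper does not report its value).
    """
    speech_power = np.asarray(speech_power, dtype=float)
    noise_power = np.asarray(noise_power, dtype=float)
    # Eq. (1): 1 where the speech-to-noise power ratio exceeds the threshold, else 0.
    ibm_x = (speech_power / (noise_power + eps) > thr_speech).astype(np.float32)
    # Assumption: the noise mask uses the inverted (noise-to-speech) ratio.
    ibm_n = (noise_power / (speech_power + eps) > thr_noise).astype(np.float32)
    return ibm_x, ibm_n


# Toy example with 2 frames x 2 frequency bins.
x_pow = np.array([[4.0, 0.1], [0.5, 2.0]])
n_pow = np.array([[1.0, 1.0], [1.0, 0.5]])
ibm_x, ibm_n = ideal_binary_masks(x_pow, n_pow)
print(ibm_x.tolist())  # [[1.0, 0.0], [0.0, 1.0]]
print(ibm_n.tolist())  # [[0.0, 1.0], [1.0, 0.0]]
```

Keeping a separate threshold per mask means that, with thresholds above 1, bins in which neither source clearly dominates get a 0 in both targets.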
The overall loss function is defined by combining the speech and noise binary losses ($\mathrm{loss}_X$ and $\mathrm{loss}_N$) as follows:

$$\mathrm{loss} = \mathrm{loss}_X + \mathrm{loss}_N, \tag{3}$$

$$\mathrm{loss}_X + \mathrm{loss}_N \triangleq \frac{1}{TB} \sum_{t,b} \sum_{v \in \{X,N\}} \mathrm{CE}\big(\mathrm{IBM}_v(t,b),\, w_v(t,b)\big), \tag{4}$$

where $\mathrm{CE}(a, a')$ is the binary cross entropy, defined as follows:

$$\mathrm{CE}(a, a') \triangleq -\big[a \log a' + (1-a)\log(1-a')\big] = \begin{cases} -\log a', & a = 1 \\ -\log(1-a'), & a = 0. \end{cases} \tag{5}$$

Note that since $a \in \{0,1\}$, either the first or the second term on the right-hand side of Eq. (5) becomes zero. This type of target is called a hard label in the context of student-teacher learning [14] or knowledge distillation [17].

By applying the estimated speech mask $w_X(t,b)$ to the original signal $y(t,b)$, we can perform single-channel speech enhancement as follows:

$$x^{(\mathrm{se})}(t,b) = w_X(t,b)\, y(t,b), \tag{6}$$

where $x^{(\mathrm{se})}(t,b)$ is the enhanced signal (the superscript (se) denotes single-channel enhancement). Although single-channel enhancement only requires the prediction of the speech mask, the noise mask is used to obtain the noise PSD matrix in the multichannel scenario, which is discussed in the next section.

Table 1: Masking network architecture

| Layer | Activation | Dimension |
|---|---|---|
| Input | - | 513 |
| BLSTM | Tanh | 256 |
| Feedforward 1 | ReLU | 513 |
| Feedforward 2 | clipped ReLU | 513 |

2.2. Mask-based beamformer

This section extends the method to a multichannel signal with $M$ channels. When the single-channel mask estimation of the previous section is applied to the multichannel signal, the speech mask $w_{m,X}(t,b)$ and noise mask $w_{m,N}(t,b)$ of each channel $m$ are obtained. From these multichannel masks, a single time-frequency mask is obtained by a median operation:

$$\bar{w}_v(t,b) = \mathrm{Median}\left(\{w_{m,v}(t,b)\}_{m=1}^{M}\right), \quad v \in \{X, N\}. \tag{7}$$

With the speech and noise masks $\bar{w}_X(t,b)$ and $\bar{w}_N(t,b)$, the PSD matrices of speech, $\Phi_X(b) \in \mathbb{C}^{M \times M}$, and of noise, $\Phi_N(b) \in \mathbb{C}^{M \times M}$, at frequency bin $b$ can be estimated as follows:

$$\Phi_v(b) = \sum_{t=1}^{T} \bar{w}_v(t,b)\, \mathbf{y}(t,b)\, \mathbf{y}(t,b)^{\mathsf{H}}, \quad v \in \{X, N\}, \tag{8}$$

where $\mathbf{y}(t,b) \in \mathbb{C}^{M}$ is the $M$-dimensional complex spectral value at time frame $t$ and frequency bin $b$. From these PSD matrices, the $M$-dimensional complex beamforming filter $\mathbf{f}(b) \in \mathbb{C}^{M}$ can be estimated. This paper adopts the GEV beamformer, which maximizes the expected SNR with respect to $\mathbf{f}(b)$ for each frequency bin:

$$\mathbf{f}_{\mathrm{GEV}}(b) = \operatorname*{argmax}_{\mathbf{f}(b)} \frac{\mathbf{f}(b)^{\mathsf{H}}\, \Phi_X(b)\, \mathbf{f}(b)}{\mathbf{f}(b)^{\mathsf{H}}\, \Phi_N(b)\, \mathbf{f}(b)}. \tag{9}$$

This optimization is equivalent to solving the following generalized eigenvalue problem:

$$\Phi_N(b)^{-1}\, \Phi_X(b)\, \mathbf{f}(b) = \lambda\, \mathbf{f}(b), \tag{10}$$

where the solution $\mathbf{f}_{\mathrm{GEV}}(b)$ is the eigenvector $\mathbf{f}(b)$ corresponding to the largest eigenvalue $\lambda$.

Once we obtain the beamforming filter $\mathbf{f}_{\mathrm{GEV}}(b)$, multichannel speech enhancement is performed as follows:

$$x^{(\mathrm{me})}(t,b) = \mathbf{f}_{\mathrm{GEV}}(b)^{\mathsf{H}}\, \mathbf{y}(t,b), \tag{11}$$

where $x^{(\mathrm{me})}(t,b)$ is the enhanced signal (the superscript (me) denotes multichannel enhancement). In general, $x^{(\mathrm{me})}(t,b)$ is better denoised than the single-channel enhanced signal $x^{(\mathrm{se})}(t,b)$ introduced in Section 2.1, because it exploits spatial information through the beamforming operation. This paper exploits these properties of single- and multichannel speech enhancement and designs a new objective function for single-channel enhancement that uses the mask obtained from multichannel enhancement as a better soft label.

3. Student-teacher model

This section explains in detail the proposed single-channel enhancement technique based on a student-teacher model.

3.1. Teacher model

First, the beamformed signal $x^{(\mathrm{me})}(t,b)$ is given as input to the teacher model.
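The beamformed input $x^{(\mathrm{me})}(t,b)$ comes from the mask-based GEV beamformer of Section 2.2. A minimal NumPy sketch of Eqs. (6) to (11) follows; it assumes the STFT is stored as a complex array of shape (M, T, B) with per-channel masks of the same shape, and the function names are hypothetical. It solves Eq. (10) literally with `numpy.linalg.eig` and applies no normalization or post-filter to the GEV output, since the text does not specify one.

```python
import numpy as np


def single_channel_enhance(y, speech_mask):
    """Eq. (6): x^(se)(t, b) = w_X(t, b) * y(t, b) for one channel, arrays of shape (T, B)."""
    return speech_mask * y


def gev_beamform(Y, speech_masks, noise_masks):
    """Mask-based GEV beamforming following Eqs. (7)-(11).

    Y: complex STFT, shape (M, T, B) = (channels, frames, frequency bins).
    speech_masks, noise_masks: per-channel masks w_{m,X}, w_{m,N}, shape (M, T, B).
    Returns x^(me) with shape (T, B) and the filters f_GEV(b) with shape (B, M).
    """
    M, _, B = Y.shape
    # Eq. (7): median of the per-channel masks over the channel axis.
    w_x = np.median(speech_masks, axis=0)  # (T, B)
    w_n = np.median(noise_masks, axis=0)   # (T, B)
    filters = np.zeros((B, M), dtype=complex)
    for b in range(B):
        Yb = Y[:, :, b]  # (M, T); column t is the vector y(t, b)
        # Eq. (8): mask-weighted sum of outer products y(t, b) y(t, b)^H.
        phi_x = (w_x[:, b] * Yb) @ Yb.conj().T
        phi_n = (w_n[:, b] * Yb) @ Yb.conj().T
        # Eq. (10): the eigenvector of Phi_N^{-1} Phi_X with the largest
        # eigenvalue maximizes the SNR ratio of Eq. (9).
        eigvals, eigvecs = np.linalg.eig(np.linalg.solve(phi_n, phi_x))
        filters[b] = eigvecs[:, np.argmax(eigvals.real)]
    # Eq. (11): x^(me)(t, b) = f_GEV(b)^H y(t, b) for every frame and bin.
    X_me = np.einsum("bm,mtb->tb", filters.conj(), Y)
    return X_me, filters
```

In practice, a Hermitian generalized eigensolver such as `scipy.linalg.eigh(phi_x, phi_n)` is numerically more robust than forming $\Phi_N^{-1}\Phi_X$ explicitly; the explicit form is kept here only because it mirrors Eq. (10).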
The architecture of the network is the same as the one explained in Section 2.1, except that the output is only the speech mask $w_X^{(\mathrm{me})}(t,b)$ and there is no noise mask. Hence, the binary loss function for the teacher network is

$$\mathrm{loss} = \frac{1}{TB} \sum_{t,b} \mathrm{CE}\big(\mathrm{IBM}_X(t,b),\, w_X^{(\mathrm{me})}(t,b)\big). \tag{12}$$

Since the beamformed signal is already well denoised, this teacher network provides a high-quality speech mask. Alternatively, the original clean signal $x(t,b)$ could be used as input instead of the beamformed signal to train the teacher model. However, estimating a speech mask given a clean signal is too trivial a problem, and the network is not well trained under such a trivial condition.

Table 2: WER of the HMM-GMM ASR system

| # | λ1 | λ2 | λ3 | Epoch | Train data (ASR) | BLSTM mask | Dev real WER (%) | Dev simu WER (%) | Test real WER (%) | Test simu WER (%) |
|---|---|---|---|---|---|---|---|---|---|---|
| 1 | - | - | - | - | all 6ch noisy | - | 21.40 | 23.22 | 35.63 | 31.98 |
| 2 | - | - | - | - | all 6ch noisy | Baseline | 28.99 | 28.05 | 40.98 | 35.50 |
| 3 | - | - | - | 7 | all 6ch noisy | Teacher | 24.91 | 26.00 | 40.26 | 35.73 |
| 4 | 1/3 | 1/3 | 1/3 | 6 | all 6ch noisy | Student | 25.95 | 24.66 | 35.50 | 29.98 |
| 5 | 0.25 | 0.25 | 0.5 | 12 | all 6ch noisy | Student | 26.56 | 26.19 | 36.33 | 31.36 |
| 6 | 0.50 | 0.25 | 0.25 | 5 | all 6ch noisy | Student | 25.82 | 25.17 | 35.86 | 30.17 |
| 7 | 0.45 | 0.05 | 0.50 | 3 | all 6ch noisy | Student | 25.54 | 24.77 | 34.78 | 29.78 |
| 8 | 0.35 | 0.15 | 0.50 | 3 | all 6ch noisy | Student | 23.34 | 23.11 | 33.11 | 28.30 |
| 9 | 0.05 | 0.45 | 0.50 | 3 | all 6ch noisy | Student | 24.07 | 24.01 | 34.88 | 30.00 |
| 10 | 0.15 | 0.35 | 0.50 | 3 | all 6ch noisy | Student | 25.01 | 24.66 | 35.66 | 30.10 |
| 11 | 0.35 | 0.15 | 0.50 | 3 | all 6ch noisy | Student with real | 23.42 | 23.55 | 32.64 | 28.88 |
| 12 | - | - | - | - | all 6ch noisy + 5th ch enhanced data from baseline | Baseline | 22.07 | 23.37 | 34.02 | 30.41 |
| 13 | 0.35 | 0.15 | 0.50 | 3 | all 6ch noisy + 5th ch enhanced data from baseline | Student | 19.78 | 20.76 | 30.66 | 26.60 |
| 14 | 0.35 | 0.15 | 0.50 | 3 | all 6ch noisy + 5th ch enhanced data from baseline | Student with real | 19.79 | 20.85 | 29.80 | 26.66 |

3.2. Student model

With the speech mask $w_X^{(\mathrm{me})}(t,b)$ obtained by the above teacher network, we can additionally consider the following student-teacher loss function:

$$\mathrm{loss}_{\mathrm{st}} = \frac{1}{TB} \sum_{t,b} \mathrm{CE}\big(w_X^{(\mathrm{me})}(t,b),\, w_X^{(\mathrm{se})}(t,b)\big). \tag{13}$$

Compared with the binary cross-entropy case in Eq. (5), the target $w_X^{(\mathrm{me})}(t,b) \in [0,1]$ is a bounded continuous value and provides richer supervision to the student network. The final loss function combines the student-teacher loss with the original losses (Eq. (3)) as:

$$\mathrm{loss} = \lambda_1\, \mathrm{loss}_{\mathrm{st}} + \lambda_2\, \mathrm{loss}_X + \lambda_3\, \mathrm{loss}_N, \tag{14}$$

where $\lambda_1$, $\lambda_2$, and $\lambda_3$ are linear interpolation weights. The student model is trained with this loss function and is expected to learn the multichannel enhancement ability within a single-channel enhancement framework. Figure 1 illustrates the proposed student-teacher training paradigm.

Figure 1: Student-teacher model

3.3. Student model with real data

All the above formulations assume that we have parallel clean speech ($x$) and noise ($n$), which are usually not available for real recordings. However, the soft target for real data can be obtained simply by applying multichannel speech enhancement to the real data. In this scenario, there is only one loss term, the student-teacher loss $\mathrm{loss}_{\mathrm{st}}$ (Eq. (13)), which makes the student directly mimic the teacher network. With this setup, the loss function switches between the student-teacher loss (Eq. (13)) and the combined loss (Eq. (14)) for real and simulated data as follows:

$$\mathrm{loss} = \begin{cases} \mathrm{loss}_{\mathrm{st}} & \text{for real data} \\ \lambda_1\, \mathrm{loss}_{\mathrm{st}} + \lambda_2\, \mathrm{loss}_X + \lambda_3\, \mathrm{loss}_N & \text{for simulated data.} \end{cases} \tag{15}$$

This is our final loss function, which makes full use of the student-teacher learning paradigm for speech enhancement.

4. Experiments

To verify the effectiveness of the proposed single-channel enhancement, the 1-channel track of the CHiME-4 challenge [7] was used.

4.1. Speech recognition

The Kaldi speech recognition toolkit [24] was used for ASR experiments.
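The training objectives of Section 3 (Eqs. (4), (5), and (13) to (15)) can be summarized in a short NumPy sketch. It assumes that the student speech and noise masks, the teacher mask, and (for simulated data) the ideal binary masks are arrays of shape (T, B). The function names are hypothetical, and the default weights are simply the best configuration in Table 2 ($\lambda_1 = 0.35$, $\lambda_2 = 0.15$, $\lambda_3 = 0.5$). One cross-entropy function, written with the leading minus sign so that it is minimized, serves both the hard targets of Eq. (4) and the soft teacher targets of Eq. (13); predictions are clipped away from 0 and 1 to keep the logarithms finite.

```python
import numpy as np


def bce(target, pred, eps=1e-7):
    """Mean binary cross entropy -[a log a' + (1 - a) log(1 - a')] over all bins (t, b).

    Works for hard targets a in {0, 1} (Eq. (4)) and soft targets a in [0, 1] (Eq. (13)).
    """
    pred = np.clip(pred, eps, 1.0 - eps)  # keep log() finite
    return float(-np.mean(target * np.log(pred) + (1.0 - target) * np.log(1.0 - pred)))


def student_loss(w_se_x, w_se_n, w_me_x, ibm_x=None, ibm_n=None,
                 is_real=False, lambdas=(0.35, 0.15, 0.50)):
    """Per-utterance loss of Eq. (15); all mask arrays have shape (T, B).

    w_se_x, w_se_n: student speech/noise masks from the noisy single-channel input.
    w_me_x: teacher speech mask computed from the beamformed signal (soft target).
    ibm_x, ibm_n: ideal binary masks, available only for simulated data.
    """
    loss_st = bce(w_me_x, w_se_x)  # Eq. (13)
    if is_real:
        return loss_st  # real recordings: only the student-teacher term
    if ibm_x is None or ibm_n is None:
        raise ValueError("simulated data needs ideal binary masks")
    l1, l2, l3 = lambdas
    # Eq. (14): interpolate the soft-target loss with the hard-target losses.
    return l1 * loss_st + l2 * bce(ibm_x, w_se_x) + l3 * bce(ibm_n, w_se_n)
```

With `is_real=True`, the student's noise-mask output simply does not enter the loss, matching the first case of Eq. (15).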
The GMM baseline provided by the CHiME-4 challenge [7] was used to compare the different mask models. The mask network was implemented using the Chainer neural network toolkit [25]. During the training of the teacher network, the cross-validation loss was calculated on the development data after every epoch. Training was stopped when the loss had not decreased for 5 epochs (a patience of 5 epochs), and the model with the lowest cross-validation loss was chosen. The 5th-channel data was used to estimate the target masks for the beamformed data. The student network was trained in batch mode, where a minibatch comprised the frames of all 6 channels of one utterance.

Table 3: Speech enhancement scores

| λ1 | λ2 | λ3 | Epoch | BLSTM mask | Dev (simu) PESQ | Dev (simu) STOI | Dev (simu) eSTOI | Dev (simu) SDR (dB) | Test (simu) PESQ | Test (simu) STOI | Test (simu) eSTOI | Test (simu) SDR (dB) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| - | - | - | - | - | 2.01 | 0.82 | 0.61 | 3.92 | 1.98 | 0.81 | 0.60 | 4.95 |
| - | - | - | - | Baseline | 2.52 | 0.88 | 0.73 | 9.26 | 2.46 | 0.87 | 0.71 | 10.76 |
| - | - | - | 7 | Teacher | 2.36 | 0.86 | 0.68 | 8.14 | 2.33 | 0.85 | 0.67 | 9.51 |
| 1/3 | 1/3 | 1/3 | 6 | Student | 2.54 | 0.88 | 0.73 | 9.13 | 2.49 | 0.87 | 0.71 | 10.64 |
| 0.50 | 0.25 | 0.25 | 5 | Student | 2.52 | 0.88 | 0.73 | 9.00 | 2.47 | 0.87 | 0.71 | 10.44 |
| 0.35 | 0.15 | 0.50 | 3 | Student | N/A | 0.88 | 0.72 | 8.93 | N/A | 0.87 | 0.70 | 10.35 |

Figure 2: WER vs. epoch for different parameter combinations

To compare the performance of different masking models, we first prepared an ASR baseline that would be neutral to the enhancement method by using only the noisy data of all 6 channels as training data. We performed enhancement on the development and evaluation sets of the official 1-channel track of the CHiME-4 dataset with the different models, and decoded the enhanced speech with the above ASR system. The results of these ASR experiments are shown in Table 2 (rows 1–3). As expected, the teacher model performed better than the original BLSTM mask, although both degraded the performance relative to the non-enhanced noisy speech.
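The stopping rule used for the teacher network (a patience of 5 epochs, keeping the model with the lowest cross-validation loss) can be sketched framework-independently as below. This is an illustration under assumptions rather than the Chainer code used in the paper: it operates on a precomputed list of per-epoch validation losses, and `early_stopping_epoch` is a hypothetical helper.

```python
def early_stopping_epoch(val_losses, patience=5):
    """Return (best_epoch, stop_epoch) for a patience-based stopping rule.

    val_losses[i] is the cross-validation loss after epoch i + 1. Training stops once
    the loss has not improved on its best value for `patience` consecutive epochs,
    and the epoch with the lowest loss seen so far is the one kept.
    """
    best_epoch, best_loss, waited = 0, float("inf"), 0
    for epoch, loss in enumerate(val_losses, start=1):
        if loss < best_loss:
            best_epoch, best_loss, waited = epoch, loss, 0
        else:
            waited += 1
            if waited >= patience:
                return best_epoch, epoch
    return best_epoch, len(val_losses)


# Hypothetical loss curve: the minimum at epoch 3 is kept and training stops at epoch 8.
losses = [0.9, 0.7, 0.6, 0.65, 0.62, 0.61, 0.64, 0.63, 0.5]
print(early_stopping_epoch(losses))  # (3, 8)
```

The toy curve also shows the trade-off of a fixed patience: the lower loss at epoch 9 is never reached because training has already stopped.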
In the second set of experiments, we focused on the proposed student models (rows 4–11 in Table 2) and found that most configurations of the student model performed better than the teacher model. Note that in this experiment we fixed the number of epochs to 3 for several models. This is because we additionally investigated the dependency between the validation loss and WER, as shown in Figure 2, and found that, for different values of the hyperparameters $\lambda_1$, $\lambda_2$, and $\lambda_3$ introduced in Eq. (14), the epoch with the best validation score did not necessarily give the best WER. We empirically found that 3 epochs gave good performance in most cases. This result indicates that choosing the epoch based on the WER of the development data seems to be a better criterion than the enhancement-driven cross-validation loss (related discussions are given in the next section). With this setup (3 epochs), the parameter choice $\lambda_1 = 0.35$, $\lambda_2 = 0.15$, and $\lambda_3 = 0.5$ gave the best performance among the values we tried. This is an interesting finding, since it indicates that the soft targets obtained by the teacher model may affect the performance more than the supervised targets. We also trained the student model with additional real data, as discussed in Section 3.3 (row 11 in Table 2), which improved the performance on the real test set owing to the inclusion of real training data, a desirable property in real environments.

When the 5th channel of the training data was also enhanced with the baseline BLSTM mask, in the same way as the development and evaluation data, and included in the ASR training data (row 12), the performance was slightly better than with the original noisy data on the evaluation data, but slightly worse on the development data.
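Selecting the configuration by development-set WER, as advocated above, amounts to an arg-min over the candidate settings. The sketch below applies this to the development-set WERs of rows 4–10 in Table 2. Averaging the real and simulated WERs with equal weight is an assumption, and `select_by_dev_wer` is a hypothetical helper; row 8 has the lowest value in both columns, so the weighting does not change the outcome here.

```python
# Development-set WERs (%) of the student models in Table 2, rows 4-10:
# (lambda1, lambda2, lambda3, epoch) -> (dev real, dev simu)
dev_wer = {
    (1 / 3, 1 / 3, 1 / 3, 6): (25.95, 24.66),
    (0.25, 0.25, 0.50, 12): (26.56, 26.19),
    (0.50, 0.25, 0.25, 5): (25.82, 25.17),
    (0.45, 0.05, 0.50, 3): (25.54, 24.77),
    (0.35, 0.15, 0.50, 3): (23.34, 23.11),
    (0.05, 0.45, 0.50, 3): (24.07, 24.01),
    (0.15, 0.35, 0.50, 3): (25.01, 24.66),
}


def select_by_dev_wer(table):
    """Return the configuration with the lowest mean development WER."""
    return min(table, key=lambda cfg: sum(table[cfg]) / len(table[cfg]))


best = select_by_dev_wer(dev_wer)
print(best)  # (0.35, 0.15, 0.5, 3)
```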
When the development and evaluation data were enhanced using our best student models (rows 13 and 14), the performance improved significantly compared with using the original noisy data in all conditions. We again observed a similar improvement on the real test set when the student model was trained with real data.

4.2. Speech enhancement scores

The four scores described in Section 1 (PESQ, STOI, eSTOI, and SDR) were computed for several enhancement methods, as shown in Table 3. The 5th-channel clean signal from the 6-channel track, convolved with the room impulse response, was used as the reference signal. All the masking models gave significantly better scores on all four metrics than the noisy data without any enhancement, although we did not observe any considerable difference in the scores among the models. In addition, comparing Tables 2 and 3, we did not observe a clear correlation between the speech enhancement scores and the WERs, which suggests that the objective function of BLSTM-based enhancement for ASR purposes requires careful investigation.

5. Conclusion

We proposed a new training paradigm for mask estimation using BLSTMs, based on student-teacher training for single-channel speech enhancement. We showed that the proposed student-teacher technique improved the ASR performance over both the original noisy speech and the enhanced speech obtained by conventional BLSTM masking. Our future work is to evaluate our speech enhancement techniques with a strong ASR back end, including a time delay neural network (TDNN) [26, 27] trained with the lattice-free version of the maximum mutual information (LF-MMI) criterion [28].

6. References

[1] J. Barker, R. Marxer, E. Vincent, and S. Watanabe, “The third CHiME speech separation and recognition challenge: Dataset, task and baselines,” in IEEE Workshop on Automatic Speech Recognition and Understanding (ASRU), 2015, pp. 504–511.
[2] K. Kinoshita, M. Delcroix, S. Gannot, E. A. Habets, R. Haeb-Umbach, W. Kellermann, V. Leutnant, R. Maas, T. Nakatani, B. Raj et al., “A summary of the REVERB challenge: state-of-the-art and remaining challenges in reverberant speech processing research,” EURASIP Journal on Advances in Signal Processing, 2016.
[3] B. Li, T. Sainath, A. Narayanan, J. Caroselli, M. Bacchiani, A. Misra, I. Shafran, H. Sak, G. Pundak, K. Chin et al., “Acoustic modeling for Google Home,” in Interspeech, 2017, pp. 399–403.
[4] T. Higuchi, N. Ito, T. Yoshioka, and T. Nakatani, “Robust MVDR beamforming using time-frequency masks for online/offline ASR in noise,” in IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), 2016, pp. 5210–5214.
[5] J. Heymann, L. Drude, and R. Haeb-Umbach, “Neural network based spectral mask estimation for acoustic beamforming,” in IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), 2016, pp. 196–200.
[6] H. Erdogan, J. R. Hershey, S. Watanabe, M. I. Mandel, and J. Le Roux, “Improved MVDR beamforming using single-channel mask prediction networks,” in Interspeech, 2016, pp. 1981–1985.
[7] E. Vincent, S. Watanabe, A. A. Nugraha, J. Barker, and R. Marxer, “An analysis of environment, microphone and data simulation mismatches in robust speech recognition,” Computer Speech & Language, vol. 46, pp. 535–557, 2017.
[8] E. Warsitz and R. Haeb-Umbach, “Blind acoustic beamforming based on generalized eigenvalue decomposition,” IEEE Transactions on Audio, Speech, and Language Processing, vol. 15, no. 5, pp. 1529–1539, 2007.
[9] T. Hori, Z. Chen, H. Erdogan, J. R. Hershey, J. Le Roux, V. Mitra, and S. Watanabe, “Multi-microphone speech recognition integrating beamforming, robust feature extraction, and advanced DNN/RNN back end,” Computer Speech & Language, vol. 46, pp. 401–418, 2017.
[10] X. Lu, Y. Tsao, S. Matsuda, and C. Hori, “Speech enhancement based on deep denoising autoencoder,” in Interspeech, 2013, pp. 436–440.
[11] Y. Xu, J. Du, L.-R. Dai, and C.-H. Lee, “An experimental study on speech enhancement based on deep neural networks,” IEEE Signal Processing Letters, vol. 21, no. 1, pp. 65–68, 2014.
[12] A. Narayanan and D. Wang, “Ideal ratio mask estimation using deep neural networks for robust speech recognition,” in IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), 2013, pp. 7092–7096.
[13] H. Erdogan, J. R. Hershey, S. Watanabe, and J. Le Roux, “Phase-sensitive and recognition-boosted speech separation using deep recurrent neural networks,” in IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), 2015, pp. 708–712.
[14] J. Ba and R. Caruana, “Do deep nets really need to be deep?” in Advances in Neural Information Processing Systems 27 (NIPS), 2014, pp. 2654–2662.
[15] J. Li, R. Zhao, J.-T. Huang, and Y. Gong, “Learning small-size DNN with output-distribution-based criteria,” in Interspeech, 2014.
[16] S. Watanabe, T. Hori, J. Le Roux, and J. Hershey, “Student-teacher network learning with enhanced features,” in IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), 2017, pp. 5275–5279.
[17] G. Hinton, O. Vinyals, and J. Dean, “Distilling the knowledge in a neural network,” arXiv preprint arXiv:1503.02531, 2015.
[18] E. Vincent, S. Watanabe, A. A. Nugraha, J. Barker, and R. Marxer, “An analysis of environment, microphone and data simulation mismatches in robust speech recognition,” Computer Speech & Language, vol. 46, pp. 535–557, 2017.
[19] A. W. Rix, J. G. Beerends, M. P. Hollier, and A. P. Hekstra, “Perceptual evaluation of speech quality (PESQ) - a new method for speech quality assessment of telephone networks and codecs,” in IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), 2001, pp. 749–752.
[20] C. H. Taal, R. C. Hendriks, R. Heusdens, and J. Jensen, “An algorithm for intelligibility prediction of time-frequency weighted noisy speech,” IEEE Transactions on Audio, Speech, and Language Processing, vol. 19, no. 7, pp. 2125–2136, Sept. 2011.
[21] J. Jensen and C. H. Taal, “An algorithm for predicting the intelligibility of speech masked by modulated noise maskers,” IEEE/ACM Transactions on Audio, Speech, and Language Processing, vol. 24, no. 11, pp. 2009–2022, Nov. 2016.
[22] E. Vincent, R. Gribonval, and C. Févotte, “Performance measurement in blind audio source separation,” IEEE Transactions on Audio, Speech, and Language Processing, vol. 14, no. 4, pp. 1462–1469, July 2006.
[23] H. Erdogan, T. Hayashi, J. R. Hershey, T. Hori, C. Hori, W.-N. Hsu, S. Kim, J. Le Roux, Z. Meng, and S. Watanabe, “Multi-channel speech recognition: LSTMs all the way through,” in CHiME-4 Workshop, 2016.
[24] D. Povey, A. Ghoshal, G. Boulianne, L. Burget, O. Glembek, N. Goel, M. Hannemann, P. Motlicek, Y. Qian, P. Schwarz et al., “The Kaldi speech recognition toolkit,” in IEEE Workshop on Automatic Speech Recognition and Understanding (ASRU), 2011.
[25] S. Tokui, K. Oono, S. Hido, and J. Clayton, “Chainer: a next-generation open source framework for deep learning,” in Proceedings of Workshop on Machine Learning Systems (LearningSys) in the Twenty-ninth Annual Conference on Neural Information Processing Systems (NIPS), 2015.
[26] A. Waibel, T. Hanazawa, G. Hinton, K. Shikano, and K. J. Lang, “Phoneme recognition using time-delay neural networks,” in Readings in Speech Recognition. Elsevier, 1990, pp. 393–404.
[27] V. Peddinti, D. Povey, and S. Khudanpur, “A time delay neural network architecture for efficient modeling of long temporal contexts,” in Interspeech, 2015.
[28] D. Povey, V. Peddinti, D. Galvez, P. Ghahremani, V. Manohar, X. Na, Y. Wang, and S. Khudanpur, “Purely sequence-trained neural networks for ASR based on lattice-free MMI,” in Interspeech, 2016, pp. 2751–2755.
