Improving Bitcoin's Resilience to Churn

Efficient and reliable block propagation on the Bitcoin network is vital for ensuring the scalability of this peer-to-peer network. To this end, several schemes have been proposed over the last few years to speed up block propagation, most notably the compact block protocol (BIP 152). Despite this, we show experimental evidence that nodes that have recently joined the network may need about ten days until this protocol becomes 90% effective. This problem is endemic for nodes that do not have persistent network connectivity. We propose to mitigate this ineffectiveness by maintaining mempool synchronization among Bitcoin nodes. For this purpose, we design and implement into Bitcoin a new prioritized data synchronization protocol, called FalafelSync. Our experiments show that FalafelSync helps intermittently connected nodes to maintain better consistency with more stable nodes, thereby showing promise for improving block propagation in the broader network. In the process, we have also developed an effective logging mechanism for Bitcoin nodes, which we release for public use.

💡 Research Summary

The paper addresses a critical performance bottleneck in the Bitcoin network: the degradation of block‑propagation efficiency caused by node churn and the resulting lack of mempool synchronization. The compact block protocol (BIP 152) and the more recent Graphene protocol dramatically reduce the bandwidth needed to transmit newly mined blocks by sending compact encodings of a block's transactions (short transaction IDs in compact blocks, a Bloom filter plus an IBLT in Graphene) instead of the full transactions. Both rely on the assumption that receiving peers already hold the referenced transactions in their mempools. The authors experimentally demonstrate that this assumption often fails in realistic environments. A newly joined node may need about ten days to reach a 90 % success rate with compact blocks, even when it remains continuously online. Moreover, a node that is online only 90 % of the time (e.g., 9 minutes connected, 1 minute disconnected) sees its compact‑block success rate drop to roughly 50 %. In such cases the node must request the missing transactions after receiving a compact block, incurring an additional round trip that slows overall block propagation and increases the risk of temporary chain forks.
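The dependence on mempool contents can be illustrated with a minimal Python sketch of receiver-side reconstruction. It is not Bitcoin Core's implementation: the function names are hypothetical, and the short ID here is a truncated SHA-256 standing in for the keyed SipHash that BIP 152 actually specifies. It only shows that every announced transaction absent from the local mempool becomes an index the node must request in an extra round trip.

```python
import hashlib


def short_id(txid: str, nbytes: int = 6) -> bytes:
    # Assumption: truncated SHA-256 as a stand-in for BIP 152's keyed short IDs.
    return hashlib.sha256(txid.encode()).digest()[:nbytes]


def reconstruct(announced_short_ids, mempool_txids):
    """Match announced short IDs against the local mempool.

    Returns (block, missing): block lists the matched txid per position
    (None where no match was found), and missing lists the positions the
    receiver would have to request from the sender.
    """
    # Index the local mempool by short ID for constant-time lookups.
    index = {short_id(txid): txid for txid in mempool_txids}
    block, missing = [], []
    for position, sid in enumerate(announced_short_ids):
        txid = index.get(sid)
        block.append(txid)
        if txid is None:
            missing.append(position)
    return block, missing


# A block announcing three transactions, received by a node lacking "b".
announced = [short_id(t) for t in ["a", "b", "c"]]
block, missing = reconstruct(announced, {"a", "c"})
```

In this example `missing` contains only position 1, so the compact block counts as a failure for this node even though two of the three transactions were already available locally.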

To mitigate this problem, the authors propose FalafelSync, a peer‑to‑peer prioritized mempool‑synchronization protocol. FalafelSync periodically selects the most “valuable” pending transactions, namely those with the highest ancestor score (a metric that aggregates a transaction's fee with the fees of its unconfirmed ancestors), and advertises their hashes to all peers using an inv message. Specifically, the protocol packages the top 10 % of transactions and the top 1,000 transactions (by ancestor score) into a single inv message, which is sent at regular intervals. Because intermittently connected nodes thereby learn the hashes of the transactions most likely to appear in upcoming blocks, FalafelSync reduces the number of transactions missing from a node’s mempool.
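The selection step might look roughly like the following Python sketch. Several details are assumptions rather than the authors' code: the `MempoolEntry` type and `select_for_sync` function are hypothetical, the ancestor score is simplified to the combined fee of a transaction and its unconfirmed ancestors divided by their combined size, and the 10 % and 1,000 limits are combined by taking the smaller of the two counts, which is only one possible reading of the rule described above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MempoolEntry:
    txid: str
    fee_with_ancestors: int   # satoshis, including unconfirmed ancestors
    size_with_ancestors: int  # bytes, including unconfirmed ancestors (> 0)

    @property
    def ancestor_score(self) -> float:
        # Simplified score: fee rate of the transaction plus its ancestors.
        return self.fee_with_ancestors / self.size_with_ancestors


def select_for_sync(mempool, percent=10, cap=1000):
    """Return txids of the highest-scoring entries to advertise to peers."""
    if not mempool:
        return []
    # Ceiling of percent% of the mempool, in integer arithmetic.
    by_percent = (len(mempool) * percent + 99) // 100
    # Assumption: the two limits are combined by taking the smaller count.
    count = min(cap, by_percent)
    ranked = sorted(mempool, key=lambda e: e.ancestor_score, reverse=True)
    return [entry.txid for entry in ranked[:count]]


# Twenty entries of equal size whose fees grow with the index.
mempool = [MempoolEntry(f"tx{i}", (i + 1) * 100, 100) for i in range(20)]
to_advertise = select_for_sync(mempool)
```

With twenty entries and the default 10 % limit, two txids are selected, highest score first. A real node would send the resulting list in its periodic announcement rather than return it.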

Implementation required deep modifications to Bitcoin Core (v0.15.0). The authors added a new txmempoolsync message, integrated the ancestor‑score ranking, and built a logging subsystem that records detailed events such as compact‑block arrivals, failures, and the specific transaction indexes requested for recovery. This logging framework outputs human‑readable text files and was essential both for debugging FalafelSync and for collecting experimental data.

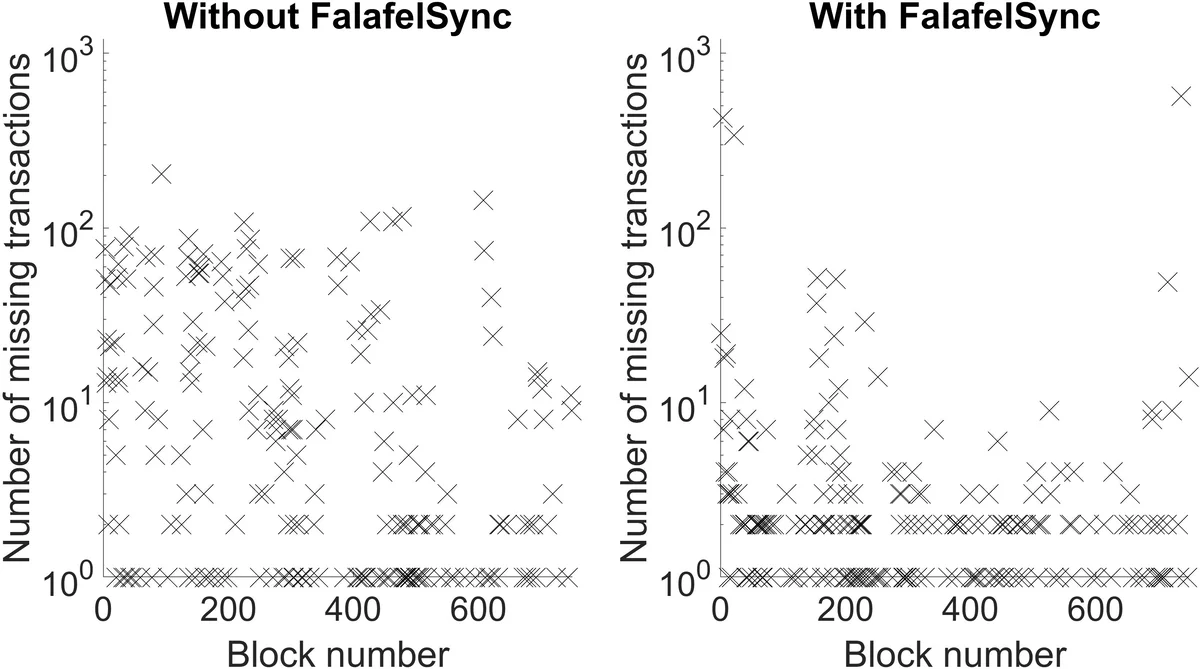

The experimental evaluation uses two nodes: a stable “reference” node and an intermittently connected “test” node. The authors first measure baseline performance without FalafelSync, confirming that under a 90 % uptime pattern the compact‑block success rate hovers around 80 % and Graphene decoding frequently fails, requiring multiple IBLT enlargements and extra transaction requests. After enabling FalafelSync on the test node, the missing‑transaction count drops sharply. Compact‑block success rises to over 95 % even with the same 90 % uptime, and Graphene’s decoding failure rate is similarly reduced. Overall block‑propagation latency improves by roughly 15 % on average, demonstrating that keeping mempools synchronized mitigates the churn‑induced inefficiencies of both protocols.

The paper also discusses limitations. The testbed consists of a small number of nodes and a specific traffic pattern, so results may not directly extrapolate to the global Bitcoin network with its heterogeneous topology and varying latency conditions. The additional bandwidth consumed by periodic inv messages is not quantitatively analyzed, leaving open the question of net bandwidth trade‑offs. Finally, the implementation targets an older Bitcoin Core version; compatibility with current releases and security implications of the new message types require further investigation.

In conclusion, the authors identify mempool synchronization as a hidden but decisive factor for the effectiveness of modern block‑propagation protocols. FalafelSync provides a practical, backward‑compatible mechanism to keep intermittently connected nodes up‑to‑date with high‑value transactions, thereby restoring the intended bandwidth savings of compact blocks and Graphene even under high churn. The publicly released logging tool and source modifications further enable the research community to replicate the study, explore additional optimizations, and potentially adopt similar synchronization strategies in other peer‑to‑peer blockchain systems.
