An Event-based Diffusion LMS Strategy

We consider a wireless sensor network consisting of cooperative nodes, each of which keeps adapting to streaming data to perform least-mean-squares estimation and also maintains information exchange with neighboring nodes in order to improve performan…

Authors: Yuan Wang, Wee Peng Tay, Wuhua Hu

Abstract

We consider a wireless sensor network consisting of cooperative nodes, each of which keeps adapting to streaming data to perform least-mean-squares estimation and also maintains information exchange with neighboring nodes in order to improve performance. To reduce communication overhead and prolong battery life while preserving the benefits of diffusion cooperation, we propose an energy-efficient diffusion strategy that adopts an event-based communication mechanism, which allows nodes to cooperate with neighbors only when necessary. We also study the performance of the proposed algorithm, and show that its network mean error and mean-square deviation (MSD) are bounded in steady state. Numerical results demonstrate that the proposed method can effectively reduce the network energy consumption without significantly sacrificing steady-state network MSD performance.

I. INTRODUCTION

In the era of big data and the Internet of Things (IoT), ubiquitous smart devices continuously sense the environment and rapidly generate large amounts of data. To better address the real-time challenges arising from online inference, optimization and learning, distributed adaptation algorithms have become especially promising and popular compared with traditional centralized solutions. As computation and data storage resources are distributed to every sensor node in the network, information can be processed and fused through local cooperation among neighboring nodes, thus reducing system latency and improving robustness and scalability. Among various implementations of distributed adaptation solutions [1]–[6], diffusion strategies are particularly advantageous for continuous adaptation using constant step-sizes, thanks to their low complexity, better mean-square deviation (MSD) performance and stability [7]–[12].
Therefore, diffusion strategies have attracted a lot of research interest in recent years, both for single-task scenarios where nodes share a common parameter of interest [13]–[19], and for multi-task networks where parameters of interest differ among nodes or groups of nodes [20]–[24].

Y. Wang and W. P. Tay are with the School of Electrical and Electronic Engineering, Nanyang Technological University, Singapore. Emails: ywang037@e.ntu.edu.sg, wptay@ntu.edu.sg. W. Hu was with the School of Electrical and Electronic Engineering, Nanyang Technological University, Singapore, and is now with the Department of Artificial Intelligence for Applications, SF Technology Co. Ltd, Shenzhen, China. E-mail: wuhuahu@sf-express.com.

In diffusion strategies, each sensor communicates local information to its neighboring sensors in each iteration. However, in IoT networks, devices or nodes usually have a limited energy budget and limited communication bandwidth, which prevent them from frequently exchanging information with neighboring sensors. Several methods to improve energy efficiency in diffusion have been proposed in the literature, and these can be divided into two main categories: reducing the number of neighbors to cooperate with [25]–[27]; and reducing the dimension of the local information to be transmitted [28]–[30]. These methods either rely on additional optimization procedures, or use auxiliary selection or projection matrices, which require more computation resources to implement.

Unlike time-driven communication, where nodes exchange information at every iteration, event-based communication mechanisms allow nodes to trigger communication with neighbors only upon the occurrence of certain meaningful events. This can significantly reduce energy consumption by avoiding unnecessary information exchange, especially when the system has reached steady state.
It also allows every node in the network to share the limited bandwidth resource so that channel efficiency is improved. Such mechanisms have been developed for state estimation, filtering, and distributed control over wireless sensor networks [31]–[38], but have not been fully investigated in the context of diffusion adaptation. In [39], the author proposes a diffusion strategy in which every entry of the local intermediate estimates is quantized into one of multiple levels before being transmitted to neighbors, and communication is triggered once the quantized local information crosses a quantization level. The performance of this method relies largely on the precision of the selected quantization scheme. However, choosing a suitable quantization scheme with the desired precision, and requiring every node to be aware of the same quantization scheme, is practically difficult for online adaptation, where the parameter of interest and the environment may change over time.

In this paper, we propose an event-based diffusion strategy to reduce communication among neighboring nodes while preserving the advantages of diffusion strategies. Specifically, each node monitors the difference between the full vector of its current local update and the most recent intermediate estimate transmitted to its neighbors. A communication is triggered only if this difference is sufficiently large. We provide a sufficient condition for the mean error stability of our proposed strategy, and an upper bound on its steady-state network mean-square deviation (MSD). Simulations demonstrate that our event-based strategy achieves a steady-state network MSD similar to that of the popular adapt-then-combine (ATC) diffusion strategy, but at a significantly lower communication rate.

The rest of this paper is organized as follows. In Section II, we introduce the network model and problem formulation, and discuss prior works.
In Section III, we describe our proposed event-based diffusion LMS strategy, and in Section IV we analyze its performance. Simulation results are presented in Section V, followed by concluding remarks in Section VI.

Notations. Throughout this paper, we use boldface characters for random variables, and plain characters for realizations of the corresponding random variables as well as deterministic quantities. In addition, we use upper-case characters for matrices and lower-case ones for vectors and scalars. The notation $I_N$ is an $N \times N$ identity matrix. The matrix $A^T$ is the transpose of the matrix $A$, while $\lambda_n(A)$ and $\lambda_{\min}(A)$ are the $n$-th eigenvalue and the smallest eigenvalue of $A$, respectively. Besides, $\rho(A)$ is the spectral radius of $A$. The operation $A \otimes B$ denotes the Kronecker product of the two matrices $A$ and $B$. The notation $\|\cdot\|$ is the Euclidean norm, $\|\cdot\|_{b,\infty}$ denotes the block maximum norm [11], while $\|A\|_{\Sigma}^2 \triangleq A^{*} \Sigma A$. We use $\mathrm{diag}\{\cdot\}$ to denote a matrix whose main diagonal is given by its arguments, and $\mathrm{col}\{\cdot\}$ to denote a column vector formed by its arguments. The notation $\mathrm{vec}(\cdot)$ represents a column vector consisting of the columns of its matrix argument stacked on top of each other. If $\sigma = \mathrm{vec}(\Sigma)$, we let $\|\cdot\|_{\sigma} = \|\cdot\|_{\Sigma}$, and use the two notations interchangeably.

II. DATA MODELS AND PRELIMINARIES

In this section, we first present our network and data model assumptions. We then give a brief description of the ATC diffusion strategy.

A. Network and Data Model

Consider a network represented by an undirected graph $G = (V, E)$, where $V = \{1, 2, \cdots, N\}$ denotes the set of nodes, and $E$ is the set of edges. Any two nodes are said to be connected if there is an edge between them. The neighborhood of each node $k$ is denoted by $\mathcal{N}_k$, which consists of node $k$ and all the nodes connected with node $k$.
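As an illustration of the neighborhood convention above, the following minimal Python sketch builds each $\mathcal{N}_k$ (which includes node $k$ itself) from an undirected edge list and checks connectivity with a breadth-first search. The function names `neighborhoods` and `is_connected` and the edge-list input format are assumptions made here for illustration; they do not come from the paper.

```python
from collections import deque


def neighborhoods(num_nodes, edges):
    """Return {k: N_k} for nodes 1..num_nodes; every N_k contains k itself."""
    nbrs = {k: {k} for k in range(1, num_nodes + 1)}
    for k, l in edges:
        # Undirected graph: an edge makes each endpoint a neighbor of the other.
        nbrs[k].add(l)
        nbrs[l].add(k)
    return nbrs


def is_connected(nbrs):
    """Breadth-first search from an arbitrary node; True if all nodes are reached."""
    start = next(iter(nbrs))
    seen = {start}
    queue = deque([start])
    while queue:
        k = queue.popleft()
        for l in nbrs[k]:
            if l not in seen:
                seen.add(l)
                queue.append(l)
    return len(seen) == len(nbrs)
```

For instance, with three nodes on a path, `neighborhoods(3, [(1, 2), (2, 3)])` gives node 2 the neighborhood `{1, 2, 3}`, and the symmetry of the construction mirrors the undirected-graph assumption.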
Since the network is assumed to be undirected, if node $k$ is a neighbor of node $\ell$, then node $\ell$ is also a neighbor of node $k$. Without loss of generality, we assume that the network is connected.

Every node in the network aims to estimate an unknown parameter vector $w^{\circ} \in \mathbb{R}^{M \times 1}$. At each time instant $i \ge 0$, each node $k$ observes data $d_k(i) \in \mathbb{R}$ and $u_k(i) \in \mathbb{R}^{M \times 1}$, which are related through the following linear regression model:
$$d_k(i) = u_k^T(i)\, w^{\circ} + v_k(i), \tag{1}$$
where $v_k(i)$ is an additive observation noise. We make the following assumptions.

Assumption 1. The regression process $\{u_k(i)\}$ is zero-mean, spatially independent and temporally white. The regressor $u_k(i)$ has positive definite covariance matrix $R_{u,k} = \mathbb{E}\left[u_k(i)\, u_k^T(i)\right]$.

Assumption 2. The noise process $\{v_k(i)\}$ is spatially independent and temporally white. The noise $v_k(i)$ has variance $\sigma_{v,k}^2$, and is assumed to be independent of the regressors $u_\ell(j)$ for all $\{k, \ell, i, j\}$.

B. ATC Diffusion Strategy

To estimate the parameter $w^{\circ}$, the network solves the following least mean-squares (LMS) problem:
$$\min_{w} \sum_{k=1}^{N} J_k(w), \tag{2}$$
where for each $k \in V$,
$$J_k(w) = \sum_{\ell \in \mathcal{N}_k} \mathbb{E}\left| d_\ell(i) - u_\ell^T(i)\, w \right|^2. \tag{3}$$
The ATC diffusion strategy [7], [11] is a distributed optimization procedure that attempts to solve (2) iteratively by performing the following local updates at each node $k$ at each time instant $i$:
$$\psi_k(i) = w_k(i-1) + \mu_k u_k(i) \left[ d_k(i) - u_k^T(i)\, w_k(i-1) \right], \tag{4}$$
$$w_k(i) = \sum_{\ell \in \mathcal{N}_k} a_{\ell k}\, \psi_\ell(i), \tag{5}$$
where $\mu_k > 0$ is a chosen step size. The procedure in (4) is referred to as the adaptation step and (5) is the combination step. The combination weights $\{a_{\ell k}\}$ are non-negative scalars and satisfy:
$$a_{\ell k} \ge 0, \quad \sum_{\ell=1}^{N} a_{\ell k} = 1, \quad a_{\ell k} = 0 \ \text{ if } \ell \notin \mathcal{N}_k.$$
(6)
The local estimates $w_k(i)$ in the ATC strategy are shown to converge in mean to the true parameter $w^{\circ}$ if the step sizes $\mu_k$ are chosen to be below a particular threshold [7], [11].

III. EVENT-BASED DIFFUSION

We consider a modification of the ATC strategy so that the local intermediate estimate $\psi_k(i)$ of each node $k$ is communicated to its neighbors only at certain trigger time instants $s_k^n$, $n = 1, 2, \ldots$. Let $\bar{\psi}_k(i)$ be the last local intermediate estimate that node $k$ has transmitted to its neighbors up to time instant $i$, i.e.,
$$\bar{\psi}_k(j) = \psi_k(s_k^n), \quad \text{for } j \in \left[ s_k^n, s_k^{n+1} \right). \tag{7}$$
Let $\epsilon_k^{-}(i)$ be the a priori gap defined as
$$\epsilon_k^{-}(i) = \psi_k(i) - \bar{\psi}_k(i-1). \tag{8}$$
Let $f(\epsilon_k^{-}(i)) = \left\| \epsilon_k^{-}(i) \right\|_{Y_k}^2$, where $Y_k$ is a positive semi-definite weighting matrix. For each node $k$, transmission of its local intermediate estimate $\psi_k(i)$ is triggered whenever
$$f(\epsilon_k^{-}(i)) > \delta_k(i) > 0, \tag{9}$$
where $\delta_k(i)$ is the threshold adopted by node $k$ at time $i$. In this paper, we allow the thresholds to be time-varying. We further assume that the thresholds $\{\delta_k(i)\}$ of each node $k$ are upper bounded, and let
$$\delta_k = \sup \{ \delta_k(i) \mid i > 0 \}. \tag{10}$$
In addition, we define binary variables $\{\gamma_k(i)\}$ such that $\gamma_k(i) = 1$ if node $k$ transmits at time instant $i$, and $0$ otherwise. The sequence of triggering time instants $0 \le s_k^1 \le s_k^2 \le \ldots$ can then be defined recursively as
$$s_k^{n+1} = \min \{ i \in \mathbb{N} \mid i > s_k^n, \ \gamma_k(i) = 1 \}. \tag{11}$$
For every node in the network, we apply the event-based adapt-then-combine (EB-ATC) strategy detailed in Algorithm 1. Note that every node always combines its own intermediate estimate regardless of the triggering status. A succinct form of EB-ATC is given by the following equations:
$$\psi_k(i) = w_k(i-1) + \mu_k u_k(i) \left[ d_k(i) - u_k^T(i)\, w_k(i-1) \right], \tag{12}$$
$$w_k(i) = a_{kk}\, \psi_k(i) + \sum_{\ell \in \mathcal{N}_k \setminus k} a_{\ell k}\, \bar{\psi}_\ell(i). \tag{13}$$
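The adaptation, triggering and combination steps described above can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not the authors' implementation: it takes $Y_k = I_M$, a constant threshold `delta`, unit-covariance Gaussian regressors, a common noise standard deviation `noise_std`, zero initial estimates, and Metropolis combination weights (the rule named in the simulation section). The function names `metropolis_weights` and `eb_atc` and all default values are hypothetical.

```python
import numpy as np


def metropolis_weights(adj):
    """Combination matrix A with A[l, k] = a_{lk}, built with the Metropolis rule.

    adj is a symmetric 0/1 adjacency matrix without self-loops.
    """
    n = adj.shape[0]
    size = adj.sum(axis=1) + 1  # |N_k|, counting node k itself
    A = np.zeros((n, n))
    for k in range(n):
        for l in range(n):
            if l != k and adj[l, k]:
                A[l, k] = 1.0 / max(size[l], size[k])
        A[k, k] = 1.0 - A[:, k].sum()  # each column sums to one
    return A


def eb_atc(adj, w_true, mu, delta, n_iter, noise_std=0.1, seed=0):
    """Run EB-ATC with Y_k = I and a constant threshold (illustrative assumptions).

    Returns (msd, entr): the network MSD and the fraction of nodes that
    triggered a transmission, both per iteration.
    """
    rng = np.random.default_rng(seed)
    n, m = adj.shape[0], w_true.size
    A = metropolis_weights(adj)
    a_self = np.diag(A)             # a_kk
    A_off = A - np.diag(a_self)     # a_{lk} for l != k
    w = np.zeros((n, m))            # w_k(i-1), one row per node
    psi_bar = np.zeros((n, m))      # last transmitted intermediate estimates
    msd = np.empty(n_iter)
    entr = np.empty(n_iter)
    for i in range(n_iter):
        U = rng.standard_normal((n, m))                      # regressors u_k(i)
        d = U @ w_true + noise_std * rng.standard_normal(n)  # linear data model
        err = d - np.sum(U * w, axis=1)
        psi = w + mu * err[:, None] * U                      # adaptation step
        gap = psi - psi_bar                                  # a priori gap
        fire = np.sum(gap ** 2, axis=1) > delta              # event trigger
        psi_bar[fire] = psi[fire]                            # silent nodes keep the old value
        w = a_self[:, None] * psi + A_off.T @ psi_bar        # combination step
        msd[i] = np.mean(np.sum((w_true - w) ** 2, axis=1))
        entr[i] = fire.mean()
    return msd, entr
```

With a threshold close to zero, essentially every node transmits at every iteration and the sketch behaves like plain ATC; a larger `delta` trades steady-state accuracy for fewer transmissions.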
IV. PERFORMANCE ANALYSIS

In this section, we study the mean and mean-square error behavior of the EB-ATC diffusion strategy.

A. Network Error Recursion Model

In order to facilitate the analysis of the error behavior, we first define some necessary symbols and derive the recursive equations of the errors across the network. To begin with, the error vectors of each node $k$ at time instant $i$ are given by
$$\tilde{\psi}_k(i) = w^{\circ} - \psi_k(i), \tag{14}$$
$$\tilde{w}_k(i) = w^{\circ} - w_k(i). \tag{15}$$

Algorithm 1 Event-based ATC Diffusion Strategy (EB-ATC)
for every node $k$ at each time instant $i$ do
  Local Update:
    Obtain the intermediate estimate $\psi_k(i)$ using (4).
  Event-based Triggering:
    Compute $\epsilon_k^{-}(i)$ and $f(\epsilon_k^{-}(i))$.
    if $f(\epsilon_k^{-}(i)) > \delta_k(i)$ then
      (i) Trigger the communication, and broadcast the local update $\psi_k(i)$ to every neighbor $\ell \in \mathcal{N}_k$.
      (ii) Mark $\gamma_k(i) = 1$, and update $\bar{\psi}_k(i) = \psi_k(i)$.
    else if $f(\epsilon_k^{-}(i)) \le \delta_k(i)$ then
      (i) Keep silent.
      (ii) Mark $\gamma_k(i) = 0$, and update $\bar{\psi}_k(i) = \bar{\psi}_k(i-1)$.
    end if
  Diffusion Combination:
    $w_k(i) = a_{kk}\, \psi_k(i) + \sum_{\ell \in \mathcal{N}_k \setminus k} a_{\ell k}\, \bar{\psi}_\ell(i)$
end for

Recall that under EB-ATC each node only combines the local updates $\{\bar{\psi}_\ell(i) \mid \ell \in \mathcal{N}_k\}$ that were previously received from its neighbors. Therefore, we also introduce the a posteriori gap $\epsilon_k(i)$ defined as
$$\epsilon_k(i) = \psi_k(i) - \bar{\psi}_k(i), \tag{16}$$
to capture the discrepancy between the local intermediate estimate $\psi_k(i)$ and the estimate $\bar{\psi}_k(i)$ that is available at neighboring nodes. We have
$$\epsilon_k(i) = \begin{cases} 0, & \text{if } \left\| \epsilon_k^{-}(i) \right\|_{Y_k}^2 > \delta_k(i), \\ \epsilon_k^{-}(i), & \text{otherwise}. \end{cases} \tag{17}$$
From (17), we have the following result.

Lemma 1. The a posteriori gap $\epsilon_k(i)$ is bounded, and $\left\| \epsilon_k(i) \right\| \le \left( \dfrac{\delta_k}{\lambda_{\min}(Y_k)} \right)^{1/2}$.

Proof.
See Appendix A.

Collecting the iterates $\tilde{\psi}_k(i)$, $\tilde{w}_k(i)$, and $\epsilon_k(i)$ across all nodes, we have
$$\tilde{\psi}(i) = \mathrm{col}\left\{ \left( \tilde{\psi}_k(i) \right)_{k=1}^{N} \right\}, \tag{18}$$
$$\tilde{w}(i) = \mathrm{col}\left\{ \left( \tilde{w}_k(i) \right)_{k=1}^{N} \right\}, \tag{19}$$
$$\epsilon(i) = \mathrm{col}\left\{ \left( \epsilon_k(i) \right)_{k=1}^{N} \right\}. \tag{20}$$
Subtracting both sides of (12) from $w^{\circ}$, and applying the data model (1), we obtain the following error recursion for each node $k$:
$$\tilde{\psi}_k(i) = \left( I_M - \mu_k u_k(i)\, u_k^T(i) \right) \tilde{w}_k(i-1) - \mu_k u_k(i)\, v_k(i). \tag{21}$$
Note that by resorting to (16), the local combination step (13) can be expressed as
$$w_k(i) = a_{kk}\, \psi_k(i) + \sum_{\ell \in \mathcal{N}_k \setminus k} a_{\ell k} \left( \psi_\ell(i) - \epsilon_\ell(i) \right). \tag{22}$$
Subtracting both sides of the above equation from $w^{\circ}$, we obtain
$$\tilde{w}_k(i) = \sum_{\ell \in \mathcal{N}_k} a_{\ell k}\, \tilde{\psi}_\ell(i) + \sum_{\ell \in \mathcal{N}_k \setminus k} a_{\ell k}\, \epsilon_\ell(i). \tag{23}$$
Let $A$ be the matrix whose $(\ell, k)$-th entry is the weight $a_{\ell k}$, and introduce the matrix $C = A - \mathrm{diag}\left\{ (a_{kk})_{k=1}^{N} \right\}$. Then relating (19), (20), (21), and (23) yields the following recursion:
$$\tilde{w}(i) = \mathcal{B}(i)\, \tilde{w}(i-1) - \mathcal{A}^T \mathcal{M} s(i) + \mathcal{C}^T \epsilon(i), \tag{24}$$
where
$$\mathcal{A} = A \otimes I_M, \quad \mathcal{C} = C \otimes I_M, \tag{25}$$
$$\mathcal{B}(i) = \mathcal{A}^T \left( I_{MN} - \mathcal{M} \mathcal{R}_u(i) \right), \tag{26}$$
$$\mathcal{R}_u(i) = \mathrm{diag}\left\{ \left( u_k(i)\, u_k^T(i) \right)_{k=1}^{N} \right\}, \tag{27}$$
$$\mathcal{M} = \mathrm{diag}\left\{ \left( \mu_k I_M \right)_{k=1}^{N} \right\}, \tag{28}$$
$$s(i) = \mathrm{col}\left\{ \left( u_k(i)\, v_k(i) \right)_{k=1}^{N} \right\}. \tag{29}$$

B. Mean Error Analysis

Suppose Assumption 1 and Assumption 2 hold. Then, by taking expectation on both sides of (24), we have the following recursion model for the network mean error:
$$\mathbb{E}[\tilde{w}(i)] = \mathcal{B}\, \mathbb{E}[\tilde{w}(i-1)] + \mathcal{C}^T \mathbb{E}[\epsilon(i)], \tag{30}$$
where
$$\mathcal{B} = \mathbb{E}[\mathcal{B}(i)] = \mathcal{A}^T \left( I_{MN} - \mathcal{M} \mathcal{R}_u \right), \tag{31}$$
$$\mathcal{R}_u = \mathbb{E}[\mathcal{R}_u(i)] = \mathrm{diag}\left\{ \left( R_{u,k} \right)_{k=1}^{N} \right\}. \tag{32}$$
We have the following result on the asymptotic behavior of the mean error.

Theorem 1. (Mean Error Stability) Suppose that Assumption 1 and Assumption 2 hold. Then, the network mean error vector of EB-ATC, i.e., $\mathbb{E}[\tilde{w}(i)]$, is bounded-input bounded-output (BIBO) stable in steady state if the step size $\mu_k$ is chosen such that
$$\mu_k < \frac{2}{\lambda_{\max}(R_{u,k})}.$$
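(33)
In addition, the block maximum norm of the network mean error is upper-bounded by
$$\frac{\alpha}{1 - \beta} \cdot \max_{1 \le k \le N} \left( \frac{\delta_k}{\lambda_{\min}(Y_k)} \right)^{1/2}, \tag{34}$$
where
$$\alpha = \max_{1 \le k \le N} (1 - a_{kk}), \quad \beta = \left\| I_{MN} - \mathcal{M} \mathcal{R}_u \right\|_{b,\infty}. \tag{35}$$

Proof. See Appendix B.

C. Mean-square Error Analysis

Due to the triggering mechanism and the resulting a posteriori gap, (20) is correlated with the error vectors (18) and (19), and explicitly characterizing the exact network MSD of EB-ATC is technically difficult. Instead, we study an upper bound of the network MSD. First, we derive the MSD recursions as follows. From the recursion (24), we have the following for any compatible non-negative definite matrix $\Sigma$:
$$\begin{aligned} \left\| \tilde{w}(i) \right\|_{\Sigma}^2 ={}& \tilde{w}(i-1)^T \mathcal{B}(i)^T \Sigma\, \mathcal{B}(i)\, \tilde{w}(i-1) + s(i)^T \mathcal{M}^T \mathcal{A} \Sigma \mathcal{A}^T \mathcal{M} s(i) + \epsilon(i)^T \mathcal{C} \Sigma\, \mathcal{C}^T \epsilon(i) \\ &+ 2\, \tilde{w}(i-1)^T \mathcal{B}(i)^T \Sigma\, \mathcal{C}^T \epsilon(i) - 2\, s(i)^T \mathcal{M}^T \mathcal{A} \Sigma\, \mathcal{C}^T \epsilon(i) - 2\, \tilde{w}(i-1)^T \mathcal{B}(i)^T \Sigma \mathcal{A}^T \mathcal{M} s(i). \end{aligned} \tag{36}$$
Taking expectation on both sides of the above expression, the last term evaluates to zero under Assumptions 1-2, and we have
$$\mathbb{E} \left\| \tilde{w}(i) \right\|_{\Sigma}^2 = \mathbb{E} \left\| \tilde{w}(i-1) \right\|_{\Sigma'}^2 + t_2 + t_3 + 2 t_4 - 2 t_5, \tag{37}$$
where the weighting matrix $\Sigma'$ is
$$\Sigma' = \mathbb{E}\left[ \mathcal{B}(i)^T \Sigma\, \mathcal{B}(i) \right], \tag{38}$$
and the last four terms in (37) are given as follows:
$$t_2 = \mathbb{E}\left[ s(i)^T \mathcal{M} \mathcal{A} \Sigma \mathcal{A}^T \mathcal{M} s(i) \right], \tag{39}$$
$$t_3 = \mathbb{E}\left[ \epsilon(i)^T \mathcal{C} \Sigma\, \mathcal{C}^T \epsilon(i) \right], \tag{40}$$
$$t_4 = \mathbb{E}\left[ \tilde{w}(i-1)^T \mathcal{B}(i)^T \Sigma\, \mathcal{C}^T \epsilon(i) \right], \tag{41}$$
$$t_5 = \mathbb{E}\left[ s(i)^T \mathcal{M}^T \mathcal{A} \Sigma\, \mathcal{C}^T \epsilon(i) \right]. \tag{42}$$
Further, we let $\sigma = \mathrm{vec}(\Sigma)$ and $\sigma' = \mathrm{vec}(\Sigma')$. We then have $\sigma' = \mathcal{E} \sigma$, where
$$\mathcal{E} = \mathbb{E}\left[ \mathcal{B}(i)^T \otimes \mathcal{B}(i)^T \right] = \left[ I_{M^2 N^2} - I_{MN} \otimes \mathcal{M} \mathcal{R}_u - \mathcal{M} \mathcal{R}_u \otimes I_{MN} + (\mathcal{M} \otimes \mathcal{M})\, \mathbb{E}\left( \mathcal{R}_u(i) \otimes \mathcal{R}_u(i) \right) \right] (\mathcal{A} \otimes \mathcal{A}). \tag{43}$$
Hence (37) can be rewritten as
$$\mathbb{E} \left\| \tilde{w}(i) \right\|_{\sigma}^2 = \mathbb{E} \left\| \tilde{w}(i-1) \right\|_{\mathcal{E} \sigma}^2 + t_2 + t_3 + 2 t_4 - 2 t_5.$$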
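(44)
Next, we derive expressions and bounds for the terms $t_2$ through $t_5$.

1) Term $t_2$: For the term $t_2$, we have
$$t_2 = \mathbb{E}\left[ \mathrm{Tr}\left( \mathcal{A}^T \mathcal{M} s(i) s(i)^T \mathcal{M} \mathcal{A} \Sigma \right) \right] = \mathrm{Tr}\left[ \mathcal{A}^T \mathcal{M}\, \mathbb{E}\left( s(i) s(i)^T \right) \mathcal{M} \mathcal{A} \Sigma \right] = \mathrm{Tr}\left( \mathcal{A}^T \mathcal{M} \mathcal{S} \mathcal{M} \mathcal{A} \Sigma \right) = \left[ \mathrm{vec}\left( \mathcal{A}^T \mathcal{M} \mathcal{S} \mathcal{M} \mathcal{A} \right) \right]^T \sigma, \tag{45}$$
where the last equality in (45) follows from the identity $\mathrm{Tr}(AB) = \mathrm{vec}(A^T)^T \mathrm{vec}(B)$, and
$$\mathcal{S} = \mathrm{diag}\left\{ \left( \sigma_{v,k}^2 R_{u,k} \right)_{k=1}^{N} \right\}. \tag{46}$$

2) Term $t_3$: Similarly, we have the following for the term $t_3$:
$$t_3 = \mathrm{Tr}\left[ \mathcal{C}^T \mathbb{E}\left( \epsilon(i) \epsilon(i)^T \right) \mathcal{C} \Sigma \right] = \mathrm{vec}(\mathcal{C})^T \left[ \Sigma \otimes \mathbb{E}\left( \epsilon(i) \epsilon(i)^T \right) \right] \mathrm{vec}(\mathcal{C}). \tag{47}$$
Moreover, it can be verified that the relationship $y y^T \le y^T y\, I_N$ holds for any vector $y \in \mathbb{R}^N$, and thus $\epsilon(i) \epsilon(i)^T \le \epsilon(i)^T \epsilon(i)\, I_{MN}$ follows immediately. Combining this with Lemma 1, which gives $\left\| \epsilon_k(i) \right\|^2 \le \delta_k / \lambda_{\min}(Y_k)$, we have
$$\mathbb{E}\left[ \epsilon(i) \epsilon(i)^T \right] \le \mathbb{E}\left[ \epsilon(i)^T \epsilon(i) \right] I_{MN} = \sum_{k=1}^{N} \mathbb{E} \left\| \epsilon_k(i) \right\|^2 I_{MN} \le \sum_{k=1}^{N} \frac{\delta_k}{\lambda_{\min}(Y_k)}\, I_{MN}. \tag{48}$$
Now, letting
$$\Delta = \sum_{k=1}^{N} \frac{\delta_k}{\lambda_{\min}(Y_k)}, \tag{49}$$
and using $\Sigma \ge 0$, the following result follows:
$$\Sigma \otimes \left[ \mathbb{E}\left( \epsilon(i) \epsilon(i)^T \right) - \Delta I_{MN} \right] \le 0, \tag{50}$$
and therefore,
$$\mathrm{vec}(\mathcal{C})^T \left\{ \Sigma \otimes \left[ \mathbb{E}\left( \epsilon(i) \epsilon(i)^T \right) - \Delta I_{MN} \right] \right\} \mathrm{vec}(\mathcal{C}) \le 0, \tag{51}$$
or equivalently,
$$\mathrm{vec}(\mathcal{C})^T \left[ \Sigma \otimes \mathbb{E}\left( \epsilon(i) \epsilon(i)^T \right) \right] \mathrm{vec}(\mathcal{C}) \le \Delta \cdot \mathrm{vec}(\mathcal{C})^T \left( \Sigma \otimes I_{MN} \right) \mathrm{vec}(\mathcal{C}) = \Delta \cdot \mathrm{Tr}\left( \mathcal{C}^T \mathcal{C} \Sigma \right), \tag{52}$$
which further implies that
$$t_3 \le \Delta \cdot \left[ \mathrm{vec}\left( \mathcal{C}^T \mathcal{C} \right) \right]^T \sigma. \tag{53}$$

3) Term $t_4$: Since the matrix $\Sigma$ is positive semi-definite, we can write $\Sigma = \Theta \Theta^T$. Then, let
$$P = \tilde{w}(i-1)^T \mathcal{B}(i)^T \Theta, \quad Q = \epsilon(i)^T \mathcal{C}\, \Theta. \tag{54}$$
From the fact that $(P - Q)(P - Q)^T \ge 0$, we have
$$P Q^T + Q P^T \le P P^T + Q Q^T. \tag{55}$$
Substituting (54) into the above inequality and taking expectation on both sides gives
$$2 t_4 \le \mathbb{E}\left[ \tilde{w}(i-1)^T \mathcal{B}(i)^T \Sigma\, \mathcal{B}(i)\, \tilde{w}(i-1) \right] + \mathbb{E}\left[ \epsilon(i)^T \mathcal{C} \Sigma\, \mathcal{C}^T \epsilon(i) \right] = \mathbb{E} \left\| \tilde{w}(i-1) \right\|_{\Sigma'}^2 + t_3. \tag{56}$$

4) Term $t_5$: Applying manipulations similar to those used for $t_3$ to $t_5$, and using the symmetry of $\Sigma$, we have
$$t_5 = \mathrm{Tr}\left[ \mathcal{C}^T \mathbb{E}\left( \epsilon(i) s(i)^T \right) \mathcal{M} \mathcal{A} \Sigma \right] = \left[ \mathrm{vec}\left( \mathcal{C}^T \mathbb{E}\left( \epsilon(i) s(i)^T \right) \mathcal{M} \mathcal{A} \right) \right]^T \sigma.$$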
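(57)
To facilitate the evaluation of the covariance matrix $\mathbb{E}\left[ \epsilon(i) s(i)^T \right]$, we derive its $(k, \ell)$-th block entry, i.e., $\mathbb{E}\left[ \epsilon_k(i)\, u_\ell^T(i)\, v_\ell(i) \right]$. To this end, substituting (1) into (12), we can express $\psi_k(i)$ as follows:
$$\psi_k(i) = w_k(i-1) + \mu_k u_k(i)\, u_k^T(i)\, \tilde{w}_k(i-1) + \mu_k u_k(i)\, v_k(i), \tag{58}$$
so that we have
$$\mathbb{E}\left[ \psi_k(i)\, u_\ell^T(i)\, v_\ell(i) \right] = \mathbb{E}\left[ w_k(i-1)\, u_\ell^T(i)\, v_\ell(i) \right] + \mu_k \mathbb{E}\left[ u_k(i)\, u_k^T(i)\, \tilde{w}_k(i-1)\, u_\ell^T(i)\, v_\ell(i) \right] + \mu_k \mathbb{E}\left[ u_k(i)\, v_k(i)\, u_\ell^T(i)\, v_\ell(i) \right]. \tag{59}$$
Note that (59) evaluates to zero if $\ell \ne k$. When $\ell = k$, the first two terms in (59) evaluate to zero, and the last term equals $\mu_k \sigma_{v,k}^2 R_{u,k}$. In addition, $\mathbb{E}\left[ \bar{\psi}_k(i-1)\, u_\ell^T(i)\, v_\ell(i) \right] = 0$ for all $k, \ell \in V$. Therefore, at a particular time instant $i$, by conditioning on the realization of $\gamma_k(i)$ for all $k$, from (8) and (17) we conclude that
$$\mathbb{E}\left[ \epsilon_k(i)\, u_\ell^T(i)\, v_\ell(i) \right] = \begin{cases} 0, & \text{if } \ell \ne k, \\ \mu_k \sigma_{v,k}^2 R_{u,k}, & \text{if } \ell = k \text{ and } \gamma_k(i) = 0, \end{cases}$$
while for $\ell = k$ and $\gamma_k(i) = 1$ the expectation vanishes because $\epsilon_k(i) = 0$ by (17). The term $t_5$ can therefore be expressed as
$$t_5 = -\left[ \mathrm{vec}\left( \mathcal{C}^T \mathcal{G}(i) \mathcal{M} \mathcal{S} \mathcal{M} \mathcal{A} \right) \right]^T \sigma, \tag{60}$$
where the matrix $\mathcal{S}$ is given in (46) and
$$\mathcal{G}(i) = \mathbb{E}\left[ \mathrm{diag}\left\{ \left( \gamma_k(i) I_M \right)_{k=1}^{N} \right\} \right] - I_{MN}. \tag{61}$$
Therefore, substituting (45), (53), (56), and (60) into (44), we have the following bound for the network MSD at time instant $i$:
$$\mathbb{E} \left\| \tilde{w}(i) \right\|_{\sigma}^2 \le \mathbb{E} \left\| \tilde{w}(i-1) \right\|_{\mathcal{D} \sigma}^2 + \left[ f_1 + f_2 + f_3(i) \right]^T \sigma, \tag{62}$$
where $\mathcal{D} = 2 \mathcal{E}$, the matrix $\mathcal{E}$ is given in (43), and
$$f_1 = \mathrm{vec}\left( \mathcal{A}^T \mathcal{M} \mathcal{S} \mathcal{M} \mathcal{A} \right), \quad f_2 = 2 \Delta \cdot \mathrm{vec}\left( \mathcal{C}^T \mathcal{C} \right), \quad f_3(i) = 2\, \mathrm{vec}\left( \mathcal{C}^T \mathcal{G}(i) \mathcal{M} \mathcal{S} \mathcal{M} \mathcal{A} \right). \tag{63}$$

Assumption 3. Each node $k$ adopts a regressor covariance matrix $R_{u,k}$ whose eigenvalues satisfy
$$\lambda_{\max}(R_{u,k}) < \frac{2 + \sqrt{2}}{2 - \sqrt{2}}\, \lambda_{\min}(R_{u,k}). \tag{64}$$

Theorem 2. (Mean-square Error Behavior) Suppose that Assumptions 1-2 hold. Then, as $i \to \infty$, the network MSD of EB-ATC, i.e., $\mathbb{E} \left\| \tilde{w}(i) \right\|^2 / N$, has a finite constant upper bound if the step sizes $\{\mu_k\}$ are chosen such that $\rho(\mathcal{D}) < 1$ is satisfied.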
In addition, it follows that the matrix $\mathcal{D}$ can be approximated by $\mathcal{D} \approx \mathcal{F}$, with $\mathcal{D} = \mathcal{F} + O(\mathcal{M}^2)$, where
$$\mathcal{F} = 2\, \mathcal{B}^T \otimes \mathcal{B}^T, \tag{65}$$
so that if Assumption 3 also holds and $\{\mu_k\}$ also satisfy
$$\frac{1 - \frac{\sqrt{2}}{2}}{\lambda_{\min}(R_{u,k})} < \mu_k < \frac{1 + \frac{\sqrt{2}}{2}}{\lambda_{\max}(R_{u,k})}, \tag{66}$$
an upper bound of the network MSD in steady state is given by
$$\frac{1}{N} \left[ (f_1 + f_2)^T \left( I_{M^2 N^2} - \mathcal{F} \right)^{-1} + f_{3,\infty} \right] \mathrm{vec}(I_{MN}) + O(\mu_{\max}^2), \tag{67}$$
where
$$\mu_{\max} = \max_{1 \le k \le N} \{ \mu_k \}, \tag{68}$$
$$f_{3,\infty} = \lim_{i \to \infty} \sum_{j=0}^{i-1} f_3(i-j)^T \mathcal{F}^j. \tag{69}$$

Proof. See Appendix C.

Remark 1. Assumption 3 is additionally needed to ensure that the set of $\mu_k$ in (66) is non-empty. Note that if $R_{u,k}$ is chosen to be $R_{u,k} = \sigma_{u,k}^2 I_M$, the assumption (64) is automatically met, and condition (66) becomes
$$\frac{2 - \sqrt{2}}{2 \sigma_{u,k}^2} < \mu_k < \frac{2 + \sqrt{2}}{2 \sigma_{u,k}^2}. \tag{70}$$
Besides, although diffusion adaptation strategies [7]–[12] usually do not impose lower bounds on the step sizes for the stability of the network MSD, the condition (66) is a sufficient condition to ensure that the upper bound of the network MSD in (62) converges at steady state, so that (66) is only sufficient (but not necessary) for the stability of the exact network MSD in steady state. Indeed, numerical studies also suggest that dropping Assumption 3 and choosing a step size even smaller than the lower bound in (66) does not cause the network MSD to diverge in steady state.

Fig. 1: Simulation results for the network. (a) Network topology. (b) MSD performance. (c) Average ENTR.

V. SIMULATION RESULTS

In this section, numerical examples are provided to illustrate the MSD performance and energy efficiency of the proposed EB-ATC, and to compare it against ATC and the non-cooperative LMS algorithm. We performed simulations on a network with $N = 60$ nodes as depicted in Fig. 1(a). The measurement noise powers $\{\sigma_{v,k}^2\}$ are generated from a uniform distribution over $[-25, -10]$ dB.
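One possible reading of the noise-power setup above is that the powers are drawn uniformly on the dB scale and then converted to linear variances. The short sketch below follows that reading; the interpretation, the function name `draw_noise_variances`, and the fixed seed are assumptions made for illustration only.

```python
import numpy as np


def draw_noise_variances(num_nodes, low_db=-25.0, high_db=-10.0, seed=0):
    """Draw noise powers uniformly in dB and convert them to linear variances."""
    rng = np.random.default_rng(seed)
    power_db = rng.uniform(low_db, high_db, size=num_nodes)
    # A power of x dB corresponds to a linear value of 10^(x / 10).
    return 10.0 ** (power_db / 10.0)
```

Calling `draw_noise_variances(60)` then yields one variance per node, each between $10^{-2.5}$ and $10^{-1}$.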
We consider a parameter of interest $w^{\circ}$ with dimension $M = 10$, and suppose that the zero-mean regressor $u_k(i)$ has covariance $R_{u,k} = \sigma_{u,k}^2 I_M$, where the coefficients $\{\sigma_{u,k}^2\}$ are drawn uniformly from the interval $[1, 2]$. For ease of implementation, we adopt constant and uniform triggering thresholds $\delta_k(i) = \delta$, and the identity weighting matrix $Y_k = I_M$ for the event triggering function of every node. Moreover, we use the Metropolis rule [11] for the diffusion combination (13). All the simulation results are averaged over 200 Monte Carlo runs.

From Fig. 1(b), it can be observed that, compared with the ATC strategy, the steady-state MSDs of the proposed EB-ATC are higher by a few dB, but still much lower than that of the non-cooperative LMS algorithm, which demonstrates the capability of EB-ATC to preserve the benefits of diffusion cooperation. On the other hand, the convergence of EB-ATC is relatively slower. This is because in the transient phase, the event-based communication mechanism of EB-ATC restricts the frequency of exchanging the newest local intermediate estimates $\{\psi_k(i)\}$, for the purpose of energy saving. This leads to inferior transient performance compared to ATC.

In return, EB-ATC achieves significant communication overhead savings compared to ATC. To visualize this, we define the expected network triggering rate (ENTR) as follows:
$$\mathrm{ENTR}(i) = \frac{1}{N} \sum_{k=1}^{N} \mathbb{E}\left[ \gamma_k(i) \right]. \tag{71}$$
The ENTR at time instant $i$ captures how frequently communication is triggered by each node at that time instant, on average. ENTR is directly proportional to the average communication overhead incurred by the nodes in the network at each time instant. From (71), it is clear that $0 \le \mathrm{ENTR}(i) \le 1$, so a smaller value of $\mathrm{ENTR}(i)$ implies a lower energy consumption. Note that ATC has $\mathrm{ENTR}(i) = 1$ for all time instants $i$. From Fig.
1(c), we observe that the ENTR for EB-ATC decays rapidly over time during the transient phase, and for all the different triggering thresholds we tested, EB-ATC uses less than 30% of the communication overhead of ATC after the time instant $i \approx 200$, which is the average time at which the MSD of ATC comes within 90% of its steady-state value. This demonstrates that even before EB-ATC reaches steady state (at $i \approx 600$), communication between nodes is not triggered very frequently, as the intermediate estimates do not change significantly after this time instant. Furthermore, in steady state, although each node maintains estimates that are close to the true parameter value, communication triggering does not completely stop. This is due to occasional abrupt changes in the random noise and regressors, which can make the local estimate update deviate significantly. This is also the reason why the MSD does not converge to zero.

It is also worth mentioning that, although in theory the methods in the literature [28]–[30] can save more energy by transmitting only a few entries or compressed values, for real-time applications they may not be as reliable as EB-ATC under the same channel conditions, especially when the SNR is poor. To guarantee successful diffusion cooperation within a neighborhood, a higher channel SNR or a more robust encoding scheme is required for [28]–[30], whereas EB-ATC is simpler yet effective.

VI. CONCLUSION

We have proposed an event-based diffusion ATC strategy where communication among neighboring nodes is triggered only when significant changes occur in the local updates. The proposed algorithm is able to significantly reduce communication overhead while still maintaining good steady-state MSD performance compared with the conventional diffusion ATC strategy.
Future research includes analyzing the expected triggering rate theoretically, characterizing the rate of convergence, and establishing their relationship with the triggering threshold, so that the thresholds can be selected to optimize performance.

APPENDIX A
PROOF OF LEMMA 1

Since $Y_k$ is positive semi-definite, and therefore real symmetric, there exists a unitary matrix $U$ such that
$$Y_k = U\, \mathrm{diag}\left\{ \left( \lambda_m(Y_k) \right)_{m=1}^{M} \right\} U^T. \tag{72}$$
Let $\phi_m$, $m = 1, 2, \ldots, M$, be the eigenvectors of $Y_k$, so that we have
$$U = [\phi_1, \phi_2, \cdots, \phi_M]. \tag{73}$$
Recall that any vector $x \in \mathbb{R}^M$ can be expressed as
$$x = \sum_{m} (\phi_m^T x)\, \phi_m, \tag{74}$$
therefore it is easy to verify that, for any nonzero $x$,
$$\frac{\left\| x \right\|_{Y_k}^2}{\left\| x \right\|^2} = \frac{x^T Y_k x}{x^T x} = \frac{\sum_m \lambda_m(Y_k) (\phi_m^T x)^2}{\sum_m (\phi_m^T x)^2} \ge \lambda_{\min}(Y_k), \tag{75}$$
which implies
$$\lambda_{\min}(Y_k) \cdot \left\| \epsilon_k(i) \right\|^2 \le \left\| \epsilon_k(i) \right\|_{Y_k}^2. \tag{76}$$
Besides, from (17), $\epsilon_k(i)$ is either zero or equal to $\epsilon_k^{-}(i)$ with $\left\| \epsilon_k^{-}(i) \right\|_{Y_k}^2 \le \delta_k(i)$, so we can conclude that
$$\left\| \epsilon_k(i) \right\|_{Y_k}^2 \le \delta_k(i). \tag{77}$$
Therefore, we have
$$\lambda_{\min}(Y_k) \cdot \left\| \epsilon_k(i) \right\|^2 \le \delta_k(i) \le \delta_k, \tag{78}$$
which gives
$$\left\| \epsilon_k(i) \right\| \le \left( \frac{\delta_k}{\lambda_{\min}(Y_k)} \right)^{1/2}. \tag{79}$$
The proof is complete.

APPENDIX B
PROOF OF THEOREM 1

Applying the block maximum norm $\|\cdot\|_{b,\infty}$ to $\mathbb{E}[\epsilon(i)]$, and noting that every norm is a convex function of its argument, by Jensen's inequality and Lemma 1 we have
$$\left\| \mathbb{E}[\epsilon(i)] \right\|_{b,\infty} \le \mathbb{E}\left[ \left\| \epsilon(i) \right\|_{b,\infty} \right] \tag{80}$$
$$= \mathbb{E}\left[ \max_{1 \le k \le N} \left\| \epsilon_k(i) \right\| \right] \tag{81}$$
$$\le \max_{1 \le k \le N} \left( \frac{\delta_k}{\lambda_{\min}(Y_k)} \right)^{1/2}, \tag{82}$$
where we have used the definition of the block maximum norm in [11] for the equality (81), and (82) follows from Lemma 1. The right-hand side (R.H.S.) of (82) is a finite constant scalar, which implies that the input signal to the recursion (30), i.e., $\mathbb{E}[\epsilon(i)]$, is bounded. Therefore, the recursion (30) is BIBO stable if $\rho(\mathcal{B}) < 1$. In addition, since the matrix $A$ is left-stochastic, by applying Lemma D5 and Lemma
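D6 in [11], we have the following from (31):
$$\rho(\mathcal{B}) = \rho\left( \mathcal{A}^T \left( I_{MN} - \mathcal{M} \mathcal{R}_u \right) \right) \tag{83}$$
$$\le \rho\left( I_{MN} - \mathcal{M} \mathcal{R}_u \right) \tag{84}$$
$$= \left\| I_{MN} - \mathcal{M} \mathcal{R}_u \right\|_{b,\infty}. \tag{85}$$
Therefore, we conclude that the network mean error is BIBO stable if
$$\left\| I_{MN} - \mathcal{M} \mathcal{R}_u \right\|_{b,\infty} < 1, \tag{86}$$
which further yields the condition (33). To establish the upper bound (34), we iterate (30) from $i = 0$, which gives
$$\mathbb{E}[\tilde{w}(i)] = \mathcal{B}^i\, \mathbb{E}[\tilde{w}(0)] + \sum_{j=0}^{i-1} \mathcal{B}^j \mathcal{C}^T \mathbb{E}[\epsilon(i-j)]. \tag{87}$$
Then, applying the block maximum norm $\|\cdot\|_{b,\infty}$ on both sides of the above equation, by the properties of vector norms and induced matrix norms, it can be obtained that
$$\left\| \mathbb{E}[\tilde{w}(i)] \right\|_{b,\infty} \le \left\| \mathcal{B}^i \right\|_{b,\infty} \cdot \left\| \mathbb{E}[\tilde{w}(0)] \right\|_{b,\infty} + \sum_{j=0}^{i-1} \left\| \mathcal{B}^j \right\|_{b,\infty} \cdot \left\| \mathcal{C}^T \mathbb{E}[\epsilon(i-j)] \right\|_{b,\infty} \tag{88}$$
$$\le \left\| \mathcal{A}^T \right\|_{b,\infty}^{i} \cdot \left\| I_{MN} - \mathcal{M} \mathcal{R}_u \right\|_{b,\infty}^{i} \cdot \left\| \mathbb{E}[\tilde{w}(0)] \right\|_{b,\infty} \tag{89}$$
$$\quad + \sum_{j=0}^{i-1} \left\| \mathcal{A}^T \right\|_{b,\infty}^{j} \cdot \left\| I_{MN} - \mathcal{M} \mathcal{R}_u \right\|_{b,\infty}^{j} \cdot \left\| \mathcal{C}^T \right\|_{b,\infty} \cdot \left\| \mathbb{E}[\epsilon(i-j)] \right\|_{b,\infty}. \tag{90}$$
Let $\alpha = \left\| \mathcal{C}^T \right\|_{b,\infty}$. From Lemma D3 of [11] we have
$$\alpha = \left\| C^T \right\|_{\infty} = \max_{1 \le k \le N} (1 - a_{kk}). \tag{91}$$
Moreover, since the matrix $A$ is left-stochastic, we have $\left\| \mathcal{A}^T \right\|_{b,\infty} = 1$ by Lemma D4 of [11]. Let $\beta = \left\| I_{MN} - \mathcal{M} \mathcal{R}_u \right\|_{b,\infty}$. Substituting (82) into (90), we obtain
$$\left\| \mathbb{E}[\tilde{w}(i)] \right\|_{b,\infty} \le \left\| \mathbb{E}[\tilde{w}(0)] \right\|_{b,\infty} \cdot \beta^i + \alpha \cdot \max_{1 \le k \le N} \left( \frac{\delta_k}{\lambda_{\min}(Y_k)} \right)^{1/2} \cdot \sum_{j=0}^{i-1} \beta^j. \tag{92}$$
If the step size $\mu_k$ is chosen to satisfy $0 \le \beta < 1$, then letting $i \to \infty$ on both sides of (92) we arrive at the following inequality:
$$\lim_{i \to \infty} \left\| \mathbb{E}[\tilde{w}(i)] \right\|_{b,\infty} \le \frac{\alpha}{1 - \beta} \cdot \max_{1 \le k \le N} \left( \frac{\delta_k}{\lambda_{\min}(Y_k)} \right)^{1/2}, \tag{93}$$
and the proof is complete.

APPENDIX C
PROOF OF THEOREM 2

To obtain the upper bound of the network MSD at steady state, iterating (62) from $i = 1$, we have
$$\mathbb{E} \left\| \tilde{w}(i) \right\|_{\sigma}^2 \le \mathbb{E} \left\| \tilde{w}(0) \right\|_{\mathcal{D}^i \sigma}^2 + (f_1 + f_2)^T \sum_{j=0}^{i-1} \mathcal{D}^j \sigma + \sum_{j=0}^{i-1} f_3(i-j)^T \mathcal{D}^j \sigma, \tag{94}$$
where the vectors $f_1$, $f_2$, and $f_3(i)$ are given in (63). Letting $i \to \infty$, the first term on the R.H.S.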
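of the above inequality converges to zero, and the second term converges to the finite value $(f_1 + f_2)^T \left( I_{M^2 N^2} - \mathcal{D} \right)^{-1} \sigma$, if and only if $\mathcal{D}^i \to 0$ as $i \to \infty$, i.e., $\rho(\mathcal{D}) < 1$. From (61) and (63), $f_3(i)$ is bounded because every entry of the matrix $\mathcal{G}(i)$ is bounded. Moreover, if $\rho(\mathcal{D}) < 1$, there exists a norm $\|\cdot\|_{\zeta}$ such that $\|\mathcal{D}\|_{\zeta} < 1$, and therefore we have
$$\left| f_3(i-j)^T \mathcal{D}^j \sigma \right| \le a \cdot \|\mathcal{D}\|_{\zeta}^{j}, \tag{95}$$
for some positive constant $a$. Since $\|\mathcal{D}\|_{\zeta}^{j}$ decays geometrically to zero as $j \to \infty$, the series
$$\sum_{j=0}^{i-1} f_3(i-j)^T \mathcal{D}^j \sigma \tag{96}$$
converges as $i \to \infty$, which implies the absolute convergence of the third term on the R.H.S. of (94).

Besides, note that the matrix $\mathcal{F}$ given in (65) can be explicitly expressed as
$$\mathcal{F} = 2\, \mathcal{B}^T \otimes \mathcal{B}^T = 2 \left[ I_{M^2 N^2} - I_{MN} \otimes \mathcal{M} \mathcal{R}_u - \mathcal{M} \mathcal{R}_u \otimes I_{MN} + (\mathcal{M} \otimes \mathcal{M}) (\mathcal{R}_u \otimes \mathcal{R}_u) \right] (\mathcal{A} \otimes \mathcal{A}). \tag{97}$$
Substituting (43) into $\mathcal{D} = 2 \mathcal{E}$ and comparing with (97), we have
$$\mathcal{D} = \mathcal{F} + O(\mathcal{M}^2), \tag{98}$$
where
$$O(\mathcal{M}^2) = 2 (\mathcal{M} \otimes \mathcal{M}) \left\{ \mathbb{E}\left[ \mathcal{R}_u(i) \otimes \mathcal{R}_u(i) \right] - \mathcal{R}_u \otimes \mathcal{R}_u \right\} (\mathcal{A} \otimes \mathcal{A}), \tag{99}$$
so that substituting (98) into the R.H.S. of (94) gives
$$\mathbb{E} \left\| \tilde{w}(i) \right\|_{\sigma}^2 \le \mathbb{E} \left\| \tilde{w}(0) \right\|_{\mathcal{F}^i \sigma}^2 + (f_1 + f_2)^T \sum_{j=0}^{i-1} \mathcal{F}^j \sigma + \sum_{j=0}^{i-1} f_3(i-j)^T \mathcal{F}^j \sigma + \mathbb{E} \left\| \tilde{w}(i-1) \right\|_{O(\mathcal{M}^2) \sigma}^2 + g(i)^T O(\mathcal{M}^2)\, \sigma, \tag{100}$$
where
$$g(i) = f_1 + f_2 + \sum_{j}^{i-1} f_3(j). \tag{101}$$
Since the vector $g(i)$ is bounded, if $\rho(\mathcal{D}) < 1$ so that $\mathbb{E} \left\| \tilde{w}(i-1) \right\|_{\sigma}^2$ is bounded, then the last two terms on the R.H.S. of (100) are negligible for sufficiently small step sizes $\{\mu_k\}$. This means that the matrix $\mathcal{D}$ can be approximated by $\mathcal{D} \approx \mathcal{F}$ if $\{\mu_k\}$ are sufficiently small and also satisfy $\rho(\mathcal{D}) < 1$. Therefore (100) can be further expressed as
$$\mathbb{E} \left\| \tilde{w}(i) \right\|_{\sigma}^2 \le \mathbb{E} \left\| \tilde{w}(0) \right\|_{\mathcal{F}^i \sigma}^2 + (f_1 + f_2)^T \sum_{j=0}^{i-1} \mathcal{F}^j \sigma + \sum_{j=0}^{i-1} f_3(i-j)^T \mathcal{F}^j \sigma + O(\mu_{\max}^2).$$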
(102) Choosing σ = vec( I M N ) N and using arguments similar for (94), as i → ∞ the first term on the R.H.S of (102) con verges to 1 N [( f 1 + f 2 ) ( I M 2 N 2 − F ) − 1 + g ∞ ] v ec( I M N ) , (103) if and only if F is stable, i.e., ρ ( F ) < 1 , where f 3 , ∞ is giv en in (69). Since ρ ( F ) = ρ (2 B T ⊗ B T ) = 2 ρ ( B ) 2 , (104) so that a suf ficient condition to guarantee ρ ( F ) < 1 is ρ ( B ) < √ 2 2 . By the Lemma D.5 in [11], we hav e ρ ( B ) ≤ ρ ( I M N − MR u ) = max 1 ≤ k ≤ N ρ ( I M − µ k R u,k ) . (105) Thus, to hav e ρ ( B ) < √ 2 2 , we need max 1 ≤ k ≤ N ρ ( I M − µ k R u,k ) < √ 2 2 , (106) 19 which is requiring each node k to satisfy max 1 ≤ m ≤ M | 1 − µ k λ m ( R u,k ) | < √ 2 2 , (107) and this is equiv alent to require that | 1 − µ k λ m ( R u,k ) | < √ 2 2 (108) holds for each eigen value of R u,k , i.e., λ m ( R u,k ) . From (108), we obtain that µ k needs to satisfy 1 − √ 2 2 λ m ( R u,k ) < µ k < 1 + √ 2 2 λ m ( R u,k ) (109) for each of { λ m ( R u,k ) | 1 ≤ m ≤ M } . In addition, suppose for each R u,k we hav e λ max ( R u,k ) < 2 + √ 2 2 − √ 2 λ min ( R u,k ) , (110) then requiring µ k to satisfy (109) for ev ery λ m ( R u,k ) yields 1 − √ 2 2 λ min ( R u,k ) < µ k < 1 + √ 2 2 λ max ( R u,k ) , which is the condition (66). R E F E R E N C E S [1] D. Bertsekas, “ A new class of incremental gradient methods for least squares problems, ” SIAM J. Optim. , vol. 7, no. 4, pp. 913–926, 1997. [2] M. G. Rabbat and R. D. No wak, “Quantized incremental algorithms for distributed optimization, ” IEEE J. Sel. Areas Commun. , vol. 23, no. 4, pp. 798–808, April 2005. [3] N. Bogdanovi ´ c, J. Plata-Chav es, and K. Berberidis, “Distrib uted incremental-based LMS for node-specific adaptive parameter estimation, ” IEEE T rans. Signal Process. , vol. 62, no. 20, pp. 5382–5397, Oct 2014. [4] L. Xiao, S. Boyd, and S. Lall, “ A space-time diffusion scheme for peer-to-peer least-squares estimation, ” in Pr oc. Int. Conf. on Info. Pr ocess. 
in Sensor Networks, 2006, pp. 168–176.
[5] A. Nedic, A. Ozdaglar, and P. A. Parrilo, “Constrained consensus and optimization in multi-agent networks,” IEEE Trans. Autom. Control, vol. 55, no. 4, pp. 922–938, April 2010.
[6] K. Srivastava and A. Nedic, “Distributed asynchronous constrained stochastic optimization,” IEEE J. Sel. Topics Signal Process., vol. 5, no. 4, pp. 772–790, Aug 2011.
[7] F. S. Cattivelli and A. H. Sayed, “Diffusion LMS strategies for distributed estimation,” IEEE Trans. Signal Process., vol. 58, no. 3, pp. 1035–1048, March 2010.
[8] X. Zhao and A. H. Sayed, “Performance limits for distributed estimation over LMS adaptive networks,” IEEE Trans. Signal Process., vol. 60, no. 10, pp. 5107–5124, Oct 2012.
[9] S. Y. Tu and A. H. Sayed, “Diffusion strategies outperform consensus strategies for distributed estimation over adaptive networks,” IEEE Trans. Signal Process., vol. 60, no. 12, pp. 6217–6234, Dec 2012.
[10] A. H. Sayed, S. Y. Tu, J. Chen, X. Zhao, and Z. J. Towfic, “Diffusion strategies for adaptation and learning over networks: an examination of distributed strategies and network behavior,” IEEE Signal Process. Mag., vol. 30, no. 3, pp. 155–171, May 2013.
[11] A. H. Sayed, “Diffusion adaptation over networks,” in Academic Press Library in Signal Processing. Elsevier, 2014, vol. 3, pp. 323–453.
[12] A. H. Sayed, “Adaptive networks,” Proc. IEEE, vol. 102, no. 4, pp. 460–497, April 2014.
[13] W. Hu and W. P. Tay, “Multi-hop diffusion LMS for energy-constrained distributed estimation,” IEEE Trans. Signal Process., vol. 63, no. 15, pp. 4022–4036, Aug 2015.
[14] Y. Zhang, C. Wang, L. Zhao, and J. A. Chambers, “A spatial diffusion strategy for tap-length estimation over adaptive networks,” IEEE Trans. Signal Process., vol. 63, no. 17, pp. 4487–4501, Sept 2015.
[15] R. Abdolee and B. Champagne, “Diffusion LMS strategies in sensor networks with noisy input data,” IEEE/ACM Trans. Netw., vol. 24, no. 1, pp. 3–14, Feb 2016.
[16] S. Ghazanfari-Rad and F. Labeau, “Formulation and analysis of LMS adaptive networks for distributed estimation in the presence of transmission errors,” IEEE Internet Things J., vol. 3, no. 2, pp. 146–160, April 2016.
[17] M. J. Piggott and V. Solo, “Diffusion LMS with correlated regressors I: Realization-wise stability,” IEEE Trans. Signal Process., vol. 64, no. 21, pp. 5473–5484, Nov 2016.
[18] K. Ntemos, J. Plata-Chaves, N. Kolokotronis, N. Kalouptsidis, and M. Moonen, “Secure information sharing in adversarial adaptive diffusion networks,” IEEE Trans. Signal Inf. Process. Netw., vol. PP, no. 99, pp. 1–1, 2017.
[19] C. Wang, Y. Zhang, B. Ying, and A. H. Sayed, “Coordinate-descent diffusion learning by networked agents,” IEEE Trans. Signal Process., vol. 66, no. 2, pp. 352–367, Jan 2018.
[20] J. Plata-Chaves, N. Bogdanović, and K. Berberidis, “Distributed diffusion-based LMS for node-specific adaptive parameter estimation,” IEEE Trans. Signal Process., vol. 63, no. 13, pp. 3448–3460, July 2015.
[21] R. Nassif, C. Richard, A. Ferrari, and A. H. Sayed, “Multitask diffusion adaptation over asynchronous networks,” IEEE Trans. Signal Process., vol. 64, no. 11, pp. 2835–2850, June 2016.
[22] J. Chen, C. Richard, and A. H. Sayed, “Multitask diffusion adaptation over networks with common latent representations,” IEEE J. Sel. Topics Signal Process., vol. 11, no. 3, pp. 563–579, April 2017.
[23] Y. Wang, W. P. Tay, and W. Hu, “A multitask diffusion strategy with optimized inter-cluster cooperation,” IEEE J. Sel. Topics Signal Process., vol. 11, no. 3, pp. 504–517, April 2017.
[24] J. Fernandez-Bes, J. Arenas-García, M. T. M. Silva, and L. A. Azpicueta-Ruiz, “Adaptive diffusion schemes for heterogeneous networks,” IEEE Trans. Signal Process., vol. 65, no. 21, pp. 5661–5674, Nov 2017.
[25] X. Zhao and A. H. Sayed, “Single-link diffusion strategies over adaptive networks,” in Proc. IEEE Int. Conf. on Acoustics, Speech and Signal Process., March 2012, pp. 3749–3752.
[26] R. Arablouei, S. Werner, K. Doğançay, and Y.-F. Huang, “Analysis of a reduced-communication diffusion LMS algorithm,” Signal Processing, vol. 117, pp. 355–361, 2015.
[27] W. Huang, X. Yang, and G. Shen, “Communication-reducing diffusion LMS algorithm over multitask networks,” Information Sciences, vol. 382, pp. 115–134, 2017.
[28] R. Arablouei, S. Werner, Y. F. Huang, and K. Doğançay, “Distributed least mean-square estimation with partial diffusion,” IEEE Trans. Signal Process., vol. 62, no. 2, pp. 472–484, Jan 2014.
[29] M. O. Sayin and S. S. Kozat, “Compressive diffusion strategies over distributed networks for reduced communication load,” IEEE Trans. Signal Process., vol. 62, no. 20, pp. 5308–5323, Oct 2014.
[30] I. E. K. Harrane, R. Flamary, and C. Richard, “Doubly compressed diffusion LMS over adaptive networks,” in Proc. 50-th Asilomar Conf. on Signals, Sys. and Comp., Nov 2016, pp. 987–991.
[31] J. Wu, Q. S. Jia, K. H. Johansson, and L. Shi, “Event-based sensor data scheduling: Trade-off between communication rate and estimation quality,” IEEE Trans. Autom. Control, vol. 58, no. 4, pp. 1041–1046, April 2013.
[32] D. Han, Y. Mo, J. Wu, S. Weerakkody, B. Sinopoli, and L. Shi, “Stochastic event-triggered sensor schedule for remote state estimation,” IEEE Trans. Autom. Control, vol. 60, no. 10, pp. 2661–2675, Oct 2015.
[33] Q. Liu, Z. Wang, X. He, and D. H. Zhou, “Event-based recursive distributed filtering over wireless sensor networks,” IEEE Trans. Autom. Control, vol. 60, no. 9, pp. 2470–2475, Sept 2015.
[34] A. Mohammadi and K. N. Plataniotis, “Event-based estimation with information-based triggering and adaptive update,” IEEE Trans. Signal Process., vol. 65, no. 18, pp. 4924–4939, Sept 2017.
[35] G. S. Seyboth, D. V. Dimarogonas, and K. H. Johansson, “Event-based broadcasting for multi-agent average consensus,” Automatica, vol. 49, no. 1, pp. 245–252, 2013.
[36] E. Garcia, Y. Cao, and D. W. Casbeer, “Decentralized event-triggered consensus with general linear dynamics,” Automatica, vol. 50, no. 10, pp. 2633–2640, 2014.
[37] W. Hu, L. Liu, and G. Feng, “Consensus of linear multi-agent systems by distributed event-triggered strategy,” IEEE Trans. Cybern., vol. 46, no. 1, pp. 148–157, Jan 2016.
[38] L. Xing, C. Wen, F. Guo, Z. Liu, and H. Su, “Event-based consensus for linear multiagent systems without continuous communication,” IEEE Trans. Cybern., vol. 47, no. 8, pp. 2132–2142, Aug 2017.
[39] I. Utlu, O. F. Kilic, and S. S. Kozat, “Resource-aware event triggered distributed estimation over adaptive networks,” Digital Signal Processing, vol. 68, pp. 127–137, 2017.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

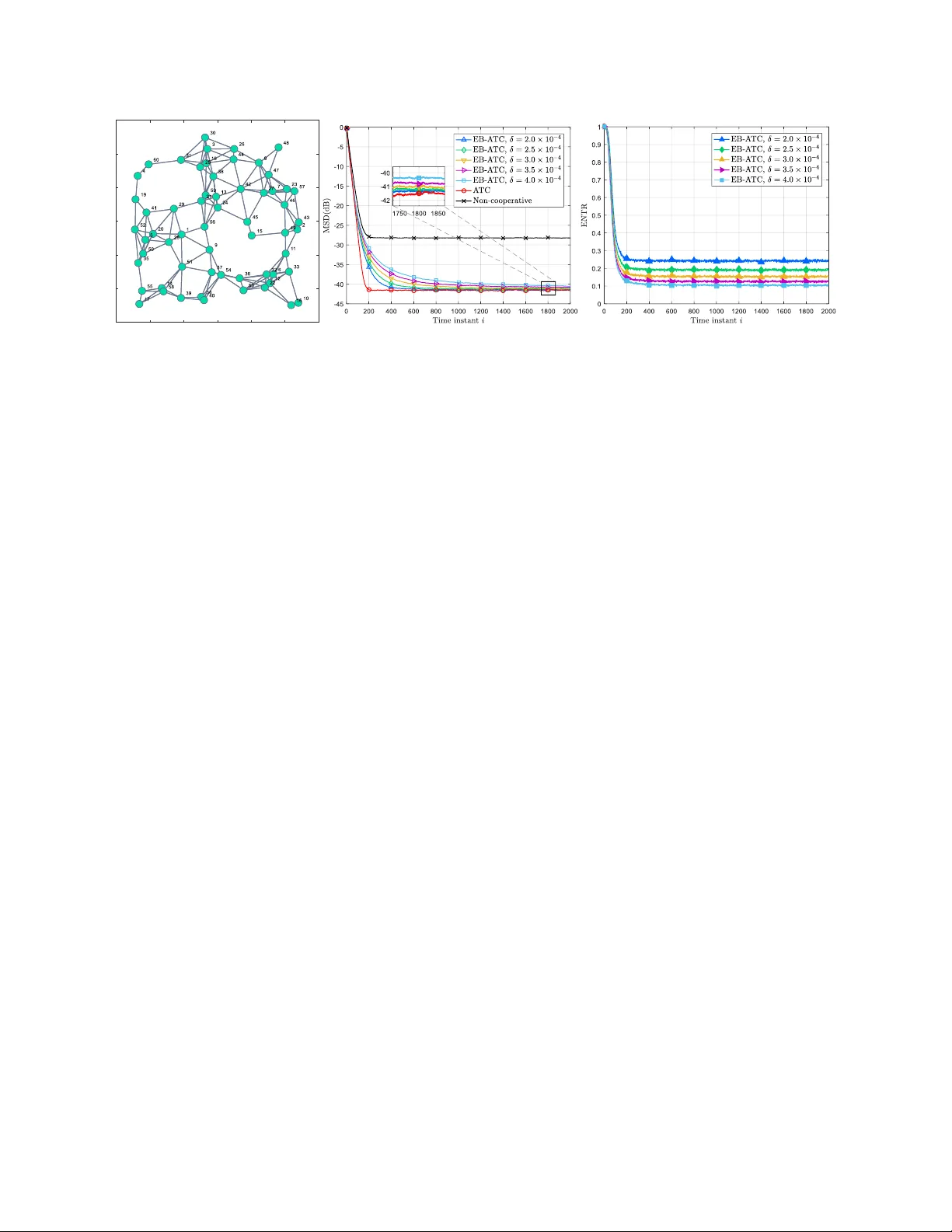

Leave a Comment