The ESO Survey of Non-Publishing Programmes

One of the classic ways to measure the success of a scientific facility is the publication return, which is defined as the number of refereed papers produced per unit of allocated resources (for example, telescope time or proposals). The recent studies by Sterzik et al. (2015, 2016) have shown that 30-50 % of the programmes allocated time at ESO do not produce a refereed publication. While this may be inherent to the scientific process, this finding prompted further investigation. For this purpose, ESO conducted a Survey of Non-Publishing Programmes (SNPP) within the activities of the Time Allocation Working Group, similar to the monitoring campaign that was recently implemented at ALMA (Stoehr et al. 2016). The SNPP targeted 1278 programmes scheduled between ESO Periods 78 and 90 (October 2006 to March 2013) that had not published a refereed paper as of April 2016. The poll was launched on 6 May 2016, remained open for four weeks, and returned 965 valid responses. This article summarises and discusses the results of this survey, the first of its kind at ESO.

💡 Research Summary

The paper presents the results of the ESO Survey of Non‑Publishing Programmes (SNPP), the first systematic investigation of why a substantial fraction of allocated observing time does not translate into refereed publications. The authors selected all Normal, Guaranteed Time (GTO) and Target of Opportunity (TOO) programmes scheduled between October 2006 and March 2013 that had not produced a refereed paper by 16 April 2016. This yielded 1 278 “non‑publishing” programmes, representing 47 % of the 2 716 programmes that met the selection criteria (including a minimum data acquisition threshold of one science frame per allocated hour). The overall publication return for the full sample was therefore 52.9 %, with Normal, GTO and TOO programmes showing similar rates (≈52–60 %).
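The percentages in this paragraph follow directly from the two programme counts. The snippet below is a minimal sketch of that arithmetic, assuming "publication return" here means the fraction of programmes with at least one refereed paper; the function and constant names are illustrative and do not come from the paper.

```python
# Counts quoted in the summary above.
TOTAL_PROGRAMMES = 2716   # programmes meeting the selection criteria
NON_PUBLISHING = 1278     # programmes with no refereed paper by April 2016


def publication_return(total: int, non_publishing: int) -> float:
    """Fraction of programmes with at least one refereed paper (assumed definition)."""
    if total <= 0 or not 0 <= non_publishing <= total:
        raise ValueError("counts must satisfy 0 <= non_publishing <= total")
    return (total - non_publishing) / total


non_publishing_fraction = NON_PUBLISHING / TOTAL_PROGRAMMES
overall_return = publication_return(TOTAL_PROGRAMMES, NON_PUBLISHING)

print(f"non-publishing fraction: {non_publishing_fraction:.1%}")  # 47.1%
print(f"publication return:      {overall_return:.1%}")           # 52.9%
```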

A web‑based questionnaire was sent to the principal investigators (PIs) of the 1 278 programmes on 6 May 2016 and remained open for four weeks. A total of 965 valid responses were received (75.5 % of the target list, or an effective 80 % response rate after accounting for outdated user profiles). The response rate was higher for more recent allocations (85 % for the last semester versus 70 % for the earliest). Respondents could select multiple reasons from a list of ten options, with the most frequently chosen being “I am still working on the data” (option 8), followed by “I did publish a refereed paper” (option 1).

The authors analyse the responses both in raw frequencies and in a weighted scheme that gives equal weight to each option within a multi‑choice response. In weighted terms, the distribution of reasons is: 12.8 % published (option 1), 13.3 % insufficient data quality (2), 9.9 % insufficient data quantity (3), 2.6 % inadequate ESO tools (4), 12.2 % null or inconclusive results (5), 9.7 % lack of PI resources (6), 2.3 % science case no longer interesting (7), 23.7 % still working (8), 3.4 % published non‑refereed (9), and 12.2 % other (10).
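One plausible reading of the weighted scheme is that a response selecting k options contributes 1/k to each of them, so every respondent carries the same total weight. The sketch below implements that reading on a small made-up set of responses; the 1/k rule, the function name, and the toy data are assumptions for illustration, not the authors' code or data.

```python
from collections import Counter


def weighted_option_shares(responses):
    """Share of each option when a response with k options gives 1/k to each.

    `responses` is an iterable of sets of option numbers (1 to 10).
    Empty responses are skipped.
    """
    totals = Counter()
    for selected in responses:
        if not selected:
            continue
        weight = 1.0 / len(selected)
        for option in selected:
            totals[option] += weight
    grand_total = sum(totals.values())
    return {option: totals[option] / grand_total for option in sorted(totals)}


# Hypothetical toy responses, NOT survey data.
toy_responses = [{8}, {8, 6}, {2, 3}, {1}]
shares = weighted_option_shares(toy_responses)

for option, share in shares.items():
    print(f"option {option}: {share:.1%}")
```

With this weighting the shares always sum to 100 %, which makes it easy to sanity-check a published breakdown against rounding.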

A detailed breakdown shows instrument‑dependent variations. HARPS programmes have the highest publication fraction (≈78 %), while VIMOS and CRIRES are at the low end (≈39 %). Approximately 80 % of all programmes have a publication rate below 60 %, regardless of instrument. The survey also reveals that “insufficient data quality” is reported far more often for Service Mode (SM) programmes than for Visitor Mode (VM) programmes (68 % versus 32 % of the responses citing this reason), reflecting the greater impact of weather and seeing constraints on SM observations. Specific telescope and instrument combinations (e.g., VLTI with AMBER, UT1 with early CRIRES) are identified as sources of higher failure rates, often linked to early‑stage instrument commissioning or technical issues such as degraded coatings.

Option 1 (“I did publish”) uncovered a false‑negative rate of 11.4 % in the original telbib database, leading to a completeness‑corrected overall publication rate of 58.9 % for the full 2 716‑programme sample. The survey prompted updates to 64 telbib records, confirming that the database is >96 % complete.
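The completeness correction can be checked with a short calculation: the programmes wrongly counted as non-publishing are added back to the published fraction. The sketch below tries both the raw option‑1 fraction (110 of 965 responses) and the weighted share (12.8 %); which of the two the authors actually applied is an inference from the numbers in this summary, and the variable names are illustrative.

```python
TOTAL_PROGRAMMES = 2716
NON_PUBLISHING = 1278

base_return = (TOTAL_PROGRAMMES - NON_PUBLISHING) / TOTAL_PROGRAMMES
non_publishing_fraction = NON_PUBLISHING / TOTAL_PROGRAMMES


def corrected_return(false_negative_share: float) -> float:
    """Add back the share of 'non-publishing' programmes that did publish."""
    return base_return + false_negative_share * non_publishing_fraction


raw_share = 110 / 965     # option-1 responses over all valid responses
weighted_share = 0.128    # weighted option-1 share quoted in the summary

corrected_raw = corrected_return(raw_share)
corrected_weighted = corrected_return(weighted_share)

print(f"raw option-1 share:        {raw_share:.1%}")
print(f"corrected (raw share):     {corrected_raw:.1%}")
print(f"corrected (weighted share): {corrected_weighted:.1%}")
```

Under these assumptions the weighted share lands within about 0.1 percentage point of the quoted 58.9 %, while the raw share gives a value closer to 58.3 %.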

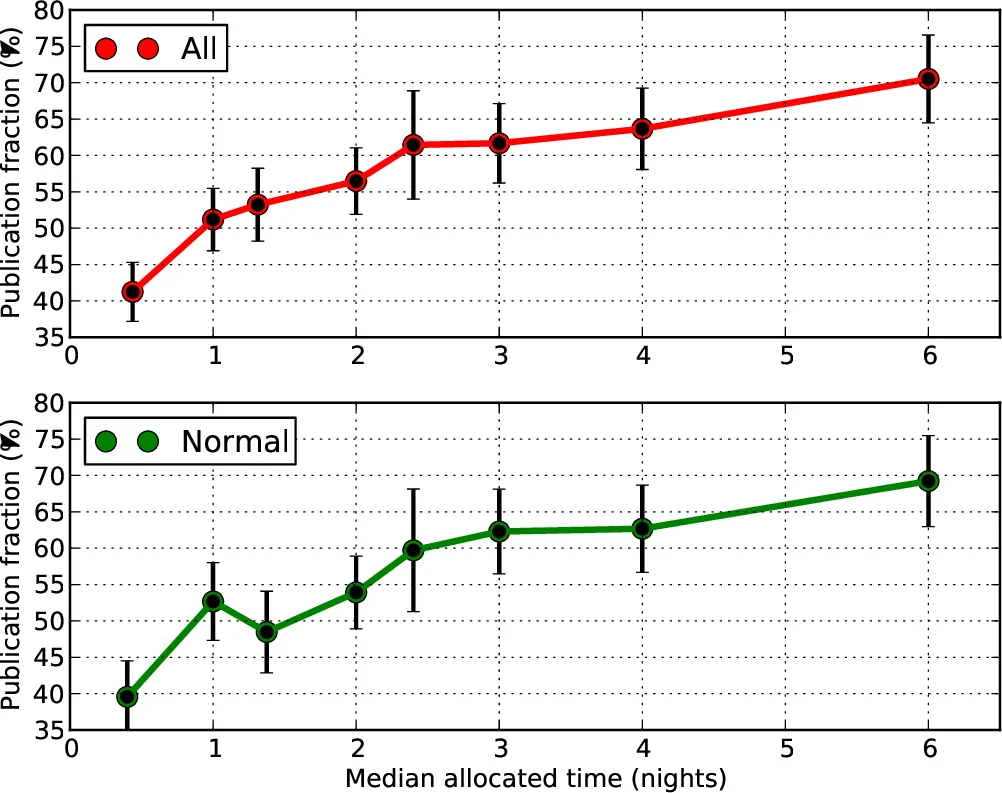

The most striking finding is that a substantial proportion of non‑publishing programmes are still “in progress”. After excluding the 110 responses that actually correspond to published papers, 339 of the remaining 855 responses (≈40 % of the corrected sample) indicated ongoing work. The authors define a ratio R = N(still working)/N(non‑publishing) and find R ≈ 0.40 overall, with a clear decline for older programmes. To interpret this trend, they construct the Publication Delay Time Distribution (PDTD) using 1 303 refereed papers linked to programmes allocated between 2008 and 2015. The cumulative distribution shows that 50 % of papers appear within 7 semesters (≈3.5 years, at six months per semester) after allocation, and 95 % within 20 semesters (≈10 years). Consequently, even programmes allocated more than a decade ago retain a ~12 % probability of still being “in progress”, reflecting the long tail of the PDTD.
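The ratio R and the quantiles of a delay distribution are both simple to compute. The sketch below evaluates R from the counts quoted above and shows how the 50 % and 95 % points of an empirical cumulative distribution could be read off; the delay list is a hypothetical toy sample, not the 1 303 papers used by the authors, and the function names are illustrative.

```python
import math


def still_working_ratio(n_still_working: int, n_responses: int, n_published: int) -> float:
    """R = N(still working) / N(non-publishing), after removing published responses."""
    return n_still_working / (n_responses - n_published)


def delay_quantile(delays_in_semesters, fraction: float) -> int:
    """Smallest delay d such that at least `fraction` of the papers have delay <= d."""
    if not 0 < fraction <= 1:
        raise ValueError("fraction must be in (0, 1]")
    ordered = sorted(delays_in_semesters)
    index = math.ceil(fraction * len(ordered)) - 1
    return ordered[max(index, 0)]


R = still_working_ratio(n_still_working=339, n_responses=965, n_published=110)
print(f"R = {R:.3f}")

# Hypothetical publication delays in semesters, NOT the paper's data.
toy_delays = [3, 4, 5, 6, 7, 8, 9, 11, 14, 20]
median_delay = delay_quantile(toy_delays, 0.50)
p95_delay = delay_quantile(toy_delays, 0.95)
print(f"50 % of toy papers within {median_delay} semesters")
print(f"95 % of toy papers within {p95_delay} semesters")
```

Applied per allocation period instead of to the whole sample, the same ratio would expose the decline of R with programme age described above.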

The authors discuss the implications for ESO’s operational model. Data quality and quantity issues, especially for SM programmes, point to the need for tighter weather and seeing constraints, improved real‑time QC, and perhaps more conservative allocation strategies for high‑risk, newly commissioned instruments. The significant “lack of resources” response underscores the growing mismatch between data production rates and the community’s capacity to analyse and publish results, suggesting that ESO might consider supporting data‑reduction pipelines, training, or collaborative networks to alleviate this bottleneck.

In summary, the SNPP provides a quantitative baseline for ESO’s publication efficiency, identifies the principal causes of non‑publication, and offers evidence‑based recommendations: (1) enhance pre‑observation planning and QC to reduce data‑quality failures; (2) invest in user support and analysis tools to mitigate resource constraints; (3) recognise the intrinsic delay in scientific output and maintain long‑term tracking of “still working” programmes; and (4) refine instrument commissioning procedures to minimise early‑stage failures. Implementing these measures could raise ESO’s effective publication return from the current ~59 % toward the 70 % target often cited for world‑class observatories.
