Deep Complex Networks

At present, the vast majority of building blocks, techniques, and architectures for deep learning are based on real-valued operations and representations. However, recent work on recurrent neural networks and older fundamental theoretical analysis suggests that complex numbers could have a richer representational capacity and could also facilitate noise-robust memory retrieval mechanisms. Despite their attractive properties and potential for opening up entirely new neural architectures, complex-valued deep neural networks have been marginalized due to the absence of the building blocks required to design such models. In this work, we provide the key atomic components for complex-valued deep neural networks and apply them to convolutional feed-forward networks and convolutional LSTMs. More precisely, we rely on complex convolutions and present algorithms for complex batch-normalization, complex weight initialization strategies for complex-valued neural nets and we use them in experiments with end-to-end training schemes. We demonstrate that such complex-valued models are competitive with their real-valued counterparts. We test deep complex models on several computer vision tasks, on music transcription using the MusicNet dataset and on Speech Spectrum Prediction using the TIMIT dataset. We achieve state-of-the-art performance on these audio-related tasks.

💡 Research Summary

The paper “Deep Complex Networks” addresses a long‑standing gap in deep learning: the lack of practical building blocks for complex‑valued neural networks. While theoretical work has suggested that complex numbers can provide richer representations, better gradient flow, and noise‑robust memory mechanisms, most modern architectures remain confined to real‑valued operations. The authors fill this gap by defining a complete toolbox for constructing deep complex‑valued models and demonstrate its effectiveness on both vision and audio tasks.

Core Contributions

Complex Representation and Convolution – A complex number z = a + ib is stored as two real tensors (real part a and imaginary part b). Complex convolution of a filter W = A + iB with an input h = x + iy is implemented by splitting both into real and imaginary components and applying the distributive property:

W∗h = (A∗x − B∗y) + i(B∗x + A∗y),

where A and B are the real and imaginary parts of the filter and x and y are the real and imaginary parts of the input. This allows reuse of existing real‑valued convolution kernels while preserving the phase‑amplitude interaction intrinsic to complex arithmetic.
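The decomposition can be checked numerically. The following is a minimal sketch, not the authors' implementation: `real_conv1d` is a hypothetical stand-in for any real-valued convolution routine (here a 1-D sliding product without kernel flip, as is common in deep learning libraries), and the toy input and filter values are made up for illustration.

```python
def real_conv1d(signal, kernel):
    """Valid-mode 1-D sliding product on real-valued lists (stand-in for a real conv op)."""
    n, k = len(signal), len(kernel)
    return [sum(signal[i + j] * kernel[j] for j in range(k)) for i in range(n - k + 1)]


def complex_conv1d(x, y, A, B):
    """Convolve h = x + iy with W = A + iB using four real convolutions.

    Returns the real and imaginary parts of W*h as two real-valued lists.
    """
    Ax, By = real_conv1d(x, A), real_conv1d(y, B)
    Bx, Ay = real_conv1d(x, B), real_conv1d(y, A)
    real = [p - q for p, q in zip(Ax, By)]  # A*x - B*y
    imag = [p + q for p, q in zip(Bx, Ay)]  # B*x + A*y
    return real, imag


# Hypothetical toy input (x + iy) and filter (A + iB).
x, y = [1.0, 2.0, 3.0, 4.0], [0.5, -1.0, 0.0, 2.0]
A, B = [1.0, -1.0], [2.0, 0.5]
real, imag = complex_conv1d(x, y, A, B)

# Reference: the same sliding product computed directly with Python complex numbers.
h = [complex(a, b) for a, b in zip(x, y)]
W = [complex(a, b) for a, b in zip(A, B)]
reference = [
    sum(h[i + j] * W[j] for j in range(len(W)))
    for i in range(len(h) - len(W) + 1)
]
```

The four real convolutions reproduce the direct complex computation, which is why a complex layer can be built on top of an existing real-valued convolution primitive.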

Differentiability and Activation Functions – Back‑propagation requires the loss to be differentiable with respect to both real and imaginary parts. The paper relaxes the strict holomorphic requirement and adopts three practical activation functions:

- modReLU: Applies ReLU to |z| + b, where b is a learnable real bias, and leaves the phase θ unchanged: modReLU(z) = ReLU(|z| + b)·e^{iθ}.

- CReLU (Complex ReLU): Applies separate ReLUs to the real and imaginary components.

- zReLU: Passes the complex value unchanged only when its phase lies in [0, π/2] (the first quadrant); otherwise it outputs zero.

These functions trade off strict Cauchy‑Riemann compliance for empirical performance and ease of implementation.
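The three activations are simple enough to write out for a single complex scalar. This is an illustrative sketch using Python's built-in complex type; the function names are chosen here, and the handling of z = 0 in `mod_relu` (where the phase is undefined) is an assumption of the sketch rather than something specified in the summary.

```python
import cmath


def mod_relu(z, b):
    """modReLU: ReLU(|z| + b) * e^{i*theta}. The phase is kept; b is a real bias."""
    r = abs(z)
    if r == 0.0:
        return 0j  # assumption of this sketch: phase is undefined at the origin
    return max(r + b, 0.0) * (z / r)


def c_relu(z):
    """CReLU: independent ReLUs on the real and imaginary parts."""
    return complex(max(z.real, 0.0), max(z.imag, 0.0))


def z_relu(z):
    """zReLU: pass z through only if its phase lies in [0, pi/2], else output 0."""
    return z if (z.real >= 0.0 and z.imag >= 0.0) else 0j


z = 3 + 4j
out = mod_relu(z, b=-1.0)  # magnitude shrinks from 5 to 4, phase is unchanged
phase_shift = cmath.phase(out) - cmath.phase(z)
```

With a negative bias, `mod_relu` creates a dead zone of radius |b| around the origin, while `c_relu` and `z_relu` decide based on the signs of the two components.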

Complex Batch Normalization – Standardizing a complex activation to zero mean and unit variance is insufficient because it does not guarantee equal variance in the real and imaginary parts, so the resulting distribution can remain elliptical (non‑circular). The authors treat each complex activation as a 2‑D vector and whiten it: they compute the 2×2 covariance matrix of the real‑imaginary pair, center the data, and multiply it by the inverse square root of that matrix, which rescales the data along its principal axes. This yields a zero‑mean distribution with unit variance in each component and no correlation between the real and imaginary parts, which stabilizes training.
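The whitening step can be sketched in a few lines. This is a simplified illustration under stated assumptions, not the paper's layer: it handles a single batch of complex scalars, uses the closed-form inverse square root of a symmetric positive-definite 2×2 matrix, adds a small `eps` to the diagonal for numerical stability, and omits any learnable scale and shift as well as running statistics. The function names and test data are hypothetical.

```python
import math
import random


def covariance_2x2(zs):
    """Covariance entries (Vrr, Vri, Vii) of zero-mean complex samples."""
    n = len(zs)
    vrr = sum(z.real * z.real for z in zs) / n
    vri = sum(z.real * z.imag for z in zs) / n
    vii = sum(z.imag * z.imag for z in zs) / n
    return vrr, vri, vii


def complex_batch_whiten(zs, eps=1e-8):
    """Center the batch, then multiply by the inverse square root of its covariance."""
    mean = sum(zs) / len(zs)
    centered = [z - mean for z in zs]
    vrr, vri, vii = covariance_2x2(centered)
    vrr, vii = vrr + eps, vii + eps
    # Closed form for a symmetric positive-definite 2x2 matrix V:
    # V^(-1/2) = [[Vii + s, -Vri], [-Vri, Vrr + s]] / (s * t)
    s = math.sqrt(vrr * vii - vri * vri)   # sqrt(det V)
    t = math.sqrt(vrr + vii + 2.0 * s)     # sqrt(trace V + 2 s)
    wrr, wri, wii = (vii + s) / (s * t), -vri / (s * t), (vrr + s) / (s * t)
    return [
        complex(wrr * z.real + wri * z.imag, wri * z.real + wii * z.imag)
        for z in centered
    ]


# Hypothetical batch with a non-zero mean and correlated real/imaginary parts.
rng = random.Random(0)
batch = []
for _ in range(1000):
    re = 2.0 * rng.gauss(0.0, 1.0) + 1.0
    im = 0.8 * re + 0.5 * rng.gauss(0.0, 1.0)
    batch.append(complex(re, im))

whitened = complex_batch_whiten(batch)
out_vrr, out_vri, out_vii = covariance_2x2(whitened)
```

After whitening, the covariance of the batch is close to the identity matrix: both components have unit variance and their correlation vanishes.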

Weight Initialization – The authors extend Xavier (Glorot) and He initialization to the complex domain: the real and imaginary parts of each weight are drawn independently from zero‑mean Gaussian distributions whose variance is scaled according to the fan‑in and fan‑out of the layer. This preserves the expected magnitude of activations across layers and mitigates exploding or vanishing gradients.
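As a rough sketch of the Glorot-style variant: if the real and imaginary parts each have standard deviation σ, the complex weight has variance E|W|² = 2σ². Choosing σ = 1/√(fan_in + fan_out) then gives the usual Glorot target of 2/(fan_in + fan_out). That choice of σ, the function name, and the layer sizes below are assumptions made for illustration.

```python
import math
import random


def complex_glorot_init(fan_in, fan_out, count, seed=0):
    """Draw `count` complex weights with i.i.d. Gaussian real and imaginary parts.

    Assumes sigma = 1 / sqrt(fan_in + fan_out), so that E|W|^2 = 2 / (fan_in + fan_out).
    """
    rng = random.Random(seed)
    sigma = 1.0 / math.sqrt(fan_in + fan_out)
    return [
        complex(rng.gauss(0.0, sigma), rng.gauss(0.0, sigma))
        for _ in range(count)
    ]


# Hypothetical layer sizes; check the empirical second moment against the target.
weights = complex_glorot_init(fan_in=64, fan_out=64, count=20000)
mean_sq_mag = sum(abs(w) ** 2 for w in weights) / len(weights)
target = 2.0 / (64 + 64)
```

Drawing independent Gaussian real and imaginary parts is equivalent to drawing a Rayleigh-distributed magnitude and a uniformly distributed phase, so the same scaling can be expressed in polar form.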

Experimental Evaluation

The authors instantiate identical network topologies (ResNet‑style blocks) in both real and complex forms and evaluate them on several benchmarks:

-

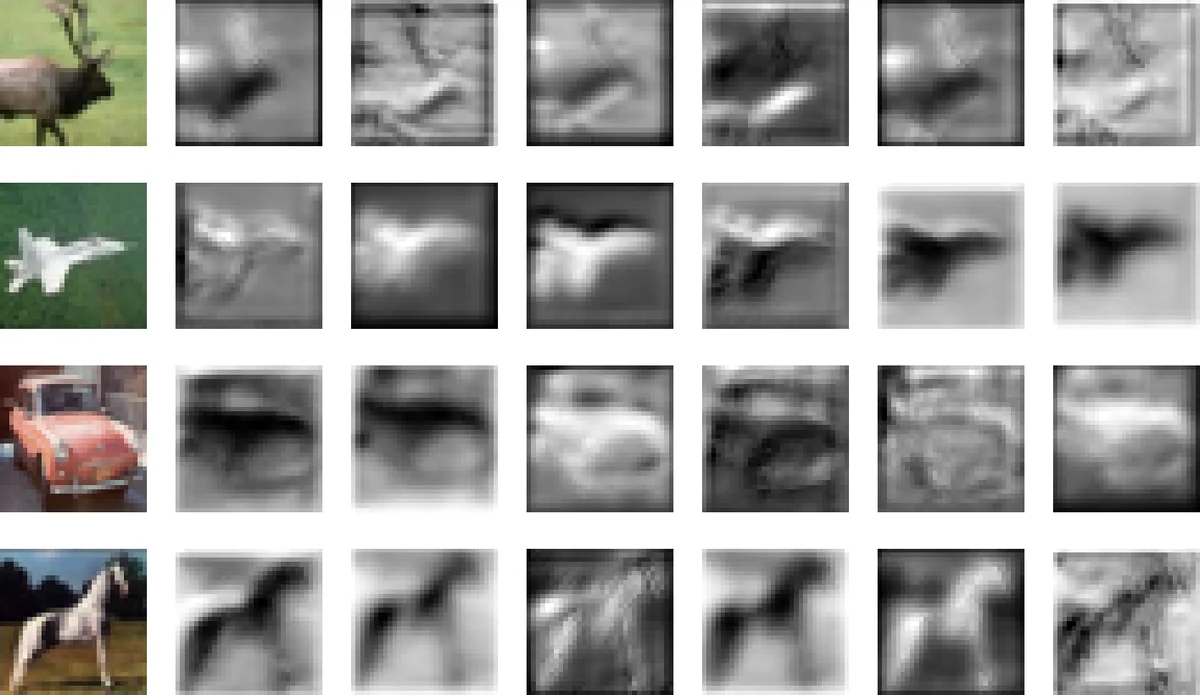

Vision: CIFAR‑10, CIFAR‑100, and a reduced‑training‑set version of SVHN (SVHN*). Complex models achieve marginal but consistent accuracy gains (≈0.3‑0.5 %) over their real counterparts, with comparable parameter counts and FLOPs.

Music Transcription: Using the MusicNet dataset (multi‑instrument, polyphonic music), the complex ConvNet attains a higher F1‑score than the previous state‑of‑the‑art real‑valued models, especially improving detection of high‑frequency notes and overlapping instruments. The phase information appears to help disambiguate overlapping harmonic structures.

Speech Spectrum Prediction: On TIMIT, the task is to predict future short‑time Fourier transform (STFT) magnitude frames. The complex model reduces the mean log‑spectral loss relative to the best real‑valued baseline, indicating better modeling of the underlying spectral dynamics.
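For readers unfamiliar with the representation, the sketch below shows how an STFT turns a signal into frames of complex coefficients, each carrying a magnitude and a phase. It is only an illustration, not the paper's preprocessing pipeline: it uses a naive DFT with a rectangular window, and the frame length, hop size, and test tone are hypothetical.

```python
import cmath
import math


def dft(frame):
    """Naive discrete Fourier transform of one real-valued frame."""
    n = len(frame)
    return [
        sum(frame[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n))
        for k in range(n)
    ]


def stft(signal, frame_len, hop):
    """Split the signal into overlapping frames (rectangular window) and transform each."""
    return [
        dft(signal[start:start + frame_len])
        for start in range(0, len(signal) - frame_len + 1, hop)
    ]


# Hypothetical test tone: exactly 4 cycles per 32-sample frame.
signal = [math.cos(2 * math.pi * 4 * t / 32) for t in range(128)]
frames = stft(signal, frame_len=32, hop=16)

magnitudes = [[abs(c) for c in frame] for frame in frames]
phases = [[cmath.phase(c) for c in frame] for frame in frames]

# Strongest bin among the non-negative frequencies of the first frame.
peak_bin = max(range(17), key=lambda k: magnitudes[0][k])
```

A real-valued model would typically see only `magnitudes`, whereas a complex-valued model can consume the coefficients directly and therefore also has access to `phases`.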

Ablation studies reveal that modReLU generally yields the best performance, but CReLU and zReLU are competitive in scenarios where explicit phase manipulation is beneficial. Removing complex batch normalization or using naïve weight initialization leads to unstable training, confirming the necessity of the proposed components.

Implications and Future Directions

The work demonstrates that complex‑valued deep networks are not merely a theoretical curiosity; with proper normalization, initialization, and activation design, they can match or surpass real‑valued models on real‑world tasks. The ability to process amplitude and phase jointly is especially advantageous for audio and signal‑processing domains, where phase carries perceptual information. Moreover, the authors suggest that residual connections can be interpreted as associative memory updates, and embedding complex weights into these pathways may enable more efficient information retrieval and insertion.

Future research avenues include extending the toolbox to recurrent architectures beyond the convolutional LSTM used in this work (e.g., complex GRUs), exploring unitary/orthogonal constraints for long‑term stability, integrating complex attention mechanisms (complex Transformers), and developing hardware accelerators that exploit the inherent FFT‑friendly nature of complex convolutions.

Conclusion

“Deep Complex Networks” provides a comprehensive, practical framework for building and training deep neural networks with complex‑valued parameters. By delivering concrete implementations of complex convolution, batch normalization, weight initialization, and activation functions, and by validating them across vision and audio benchmarks, the paper establishes complex-valued deep learning as a viable and potentially superior alternative to traditional real‑valued approaches.
