Evaluation of Interactive Machine Learning Systems

The evaluation of interactive machine learning systems remains a difficult task. These systems learn from and adapt to the human, but at the same time, the human receives feedback and adapts to the system. Getting a clear understanding of these subtle mechanisms of co-operation and co-adaptation is challenging. In this chapter, we report on our experience in designing and evaluating various interactive machine learning applications from different domains. We argue for coupling two types of validation: algorithm-centered analysis, to study the computational behaviour of the system; and human-centered evaluation, to observe the utility and effectiveness of the application for end-users. We use a visual analytics application for guided search, built using an interactive evolutionary approach, as an exemplar of our work. Our observation is that human-centered design and evaluation complement algorithmic analysis, and can play an important role in addressing the “black-box” effect of machine learning. Finally, we discuss research opportunities that require human-computer interaction methodologies, in order to support both the visible and hidden roles that humans play in interactive machine learning.

💡 Research Summary

The chapter tackles the problem of evaluating interactive machine learning (iML) systems, in which a human and a learning algorithm continuously influence each other. The authors argue that a single evaluation perspective is insufficient. Instead, they propose a dual‑track approach that couples algorithm‑centered evaluation with human‑centered evaluation. The former focuses on computational behavior (convergence speed, accuracy, exploration efficiency), while the latter assesses utility, usability, cognitive load, and insight generation for end‑users.

To ground this discussion, the authors performed a systematic literature review of 19 iML papers published between 2012 and 2017 in venues such as IEEE VIS, ACM CHI, and EuroVis. They classified each system according to the type of human feedback (implicit, explicit, or mixed), the nature of system feedback (visual, uncertainty indication, progressive suggestions), and the evaluation methodology employed (case study, user study, observational study, surveys). The review reveals that most works combine algorithmic metrics (e.g., time, precision/recall, convergence) with subjective measures (questionnaires, interviews, insight counts), but the balance between the two varies widely.
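The coding scheme described above can be pictured as a small data structure. The following is a minimal sketch only: the category names come from the summary, but the class names, the `tally_evaluation_methods` helper, and the two placeholder entries are hypothetical and do not correspond to any of the 19 reviewed papers.

```python
from collections import Counter
from dataclasses import dataclass
from enum import Enum


class HumanFeedback(Enum):
    IMPLICIT = "implicit"
    EXPLICIT = "explicit"
    MIXED = "mixed"


class SystemFeedback(Enum):
    VISUAL = "visual"
    UNCERTAINTY = "uncertainty indication"
    PROGRESSIVE = "progressive suggestions"


class EvaluationMethod(Enum):
    CASE_STUDY = "case study"
    USER_STUDY = "user study"
    OBSERVATIONAL = "observational study"
    SURVEY = "survey"


@dataclass(frozen=True)
class ReviewedSystem:
    """One coded entry of the review (hypothetical structure)."""
    name: str
    human_feedback: HumanFeedback
    system_feedback: frozenset  # set of SystemFeedback values
    evaluation: frozenset       # set of EvaluationMethod values


def tally_evaluation_methods(systems):
    """Count how many coded systems used each evaluation method."""
    counts = Counter()
    for system in systems:
        counts.update(system.evaluation)
    return counts


# Placeholder entries for illustration only; NOT data from the chapter.
placeholder_systems = [
    ReviewedSystem(
        "placeholder-A",
        HumanFeedback.EXPLICIT,
        frozenset({SystemFeedback.VISUAL}),
        frozenset({EvaluationMethod.USER_STUDY, EvaluationMethod.SURVEY}),
    ),
    ReviewedSystem(
        "placeholder-B",
        HumanFeedback.MIXED,
        frozenset({SystemFeedback.VISUAL, SystemFeedback.UNCERTAINTY}),
        frozenset({EvaluationMethod.CASE_STUDY, EvaluationMethod.USER_STUDY}),
    ),
]

method_counts = tally_evaluation_methods(placeholder_systems)
```

Storing system feedback and evaluation methods as sets reflects the observation that most works combine several measures rather than relying on one.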

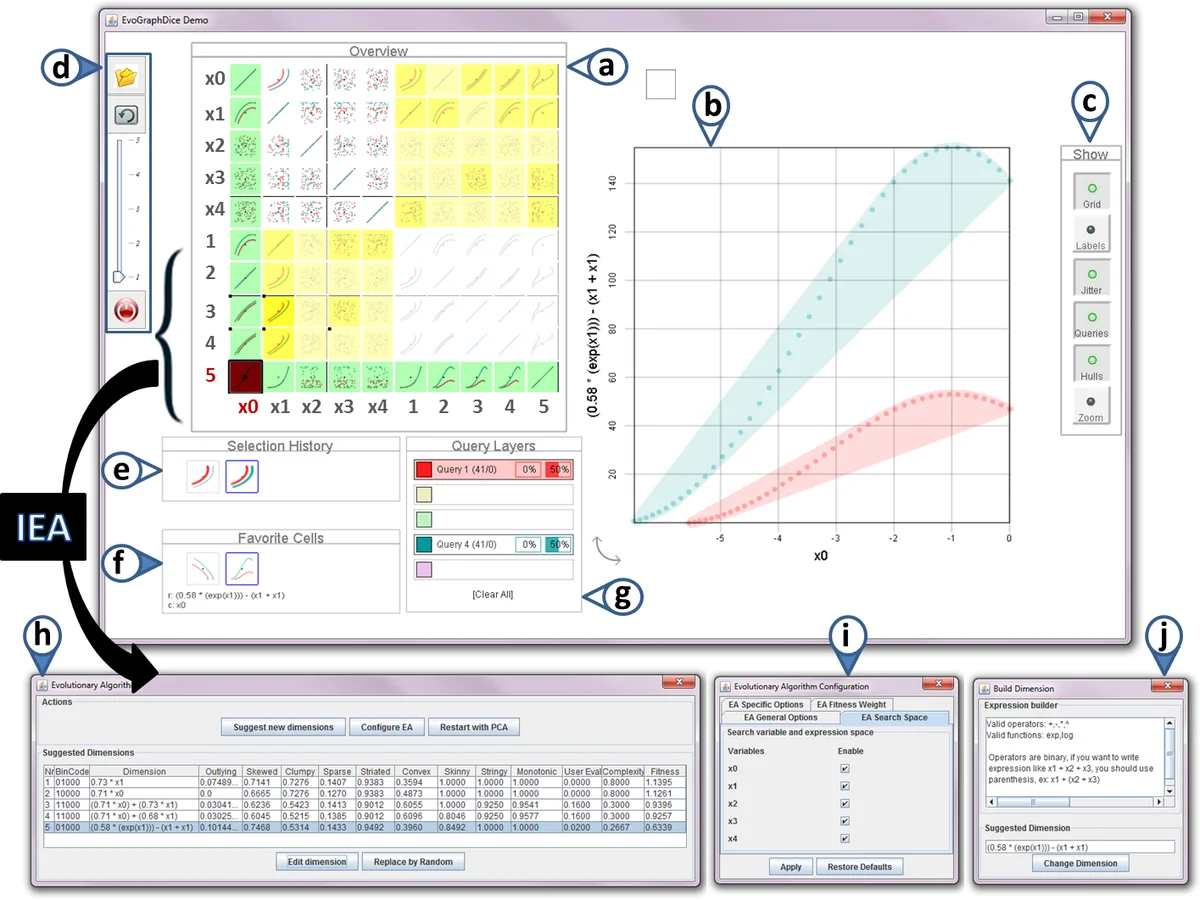

A central case study is presented: a visual‑analytics application for guided search built on an interactive evolutionary algorithm. The authors evaluated the prototype in two ways: algorithmically, by measuring the convergence rate and the diversity of the generated visualizations, and through a user study that recorded task completion time, the number of insights discovered, and participants’ perceived workload. Results demonstrate that human feedback dramatically improves search efficiency and that system‑provided visual feedback reduces the “black‑box” effect, making the learning process more transparent.
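To make the algorithm‑centered half of such an evaluation concrete, the sketch below runs a toy interactive evolutionary loop and logs convergence and diversity per generation. It is an illustration under stated assumptions, not the authors' system: the human is replaced by a hypothetical `simulated_user_score` function, candidates are plain numeric vectors rather than visualizations, and all names and parameter values are invented for this example.

```python
import random
from itertools import combinations
from math import dist


def simulated_user_score(candidate, target):
    """Hypothetical stand-in for human feedback: closer to target is better."""
    return -dist(candidate, target)


def diversity(population):
    """Mean pairwise Euclidean distance between candidates."""
    pairs = list(combinations(population, 2))
    return sum(dist(a, b) for a, b in pairs) / len(pairs)


def run_interactive_evolution(target, pop_size=20, generations=30,
                              keep=5, sigma=0.1, seed=0):
    """Evolve candidates toward `target`, logging per-generation metrics."""
    rng = random.Random(seed)
    dim = len(target)
    population = [tuple(rng.uniform(0, 1) for _ in range(dim))
                  for _ in range(pop_size)]
    history = []
    for generation in range(generations):
        # "User" ranks the candidates currently on display.
        ranked = sorted(population,
                        key=lambda c: simulated_user_score(c, target),
                        reverse=True)
        history.append({
            "generation": generation,
            "best_score": simulated_user_score(ranked[0], target),
            "diversity": diversity(population),
        })
        # Elitism: keep the preferred candidates, refill with mutated copies.
        parents = ranked[:keep]
        children = []
        while len(parents) + len(children) < pop_size:
            parent = rng.choice(parents)
            children.append(tuple(x + rng.gauss(0, sigma) for x in parent))
        population = parents + children
    return history


history = run_interactive_evolution(target=(0.3, 0.7))
```

Because the preferred candidates are carried over unchanged, `best_score` never decreases across generations, so the logged series can be read directly as a convergence curve. The human‑centered measures (completion time, insights, perceived workload) would have to come from a real user study and cannot be simulated this way.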

The chapter concludes by emphasizing the need for human‑computer interaction (HCI) methodologies in iML research. Designing appropriate feedback channels, modeling implicit user behavior, and establishing reproducible user studies are identified as critical open challenges. Future research directions include quantifying the reliability of mixed feedback, optimizing feedback integration strategies, and extending iML evaluation to multi‑user collaborative settings. Overall, the work provides a comprehensive roadmap for rigorously assessing iML systems, highlighting that algorithmic analysis and human‑centered design are mutually reinforcing pillars for advancing the field.
