Incremental Method for Spectral Clustering of Increasing Orders

The smallest eigenvalues and the associated eigenvectors (i.e., eigenpairs) of a graph Laplacian matrix have been widely used for spectral clustering and community detection. However, in real-life applications the number of clusters or communities (s…

Authors: Pin-Yu Chen, Baichuan Zhang, Mohammad Al Hasan

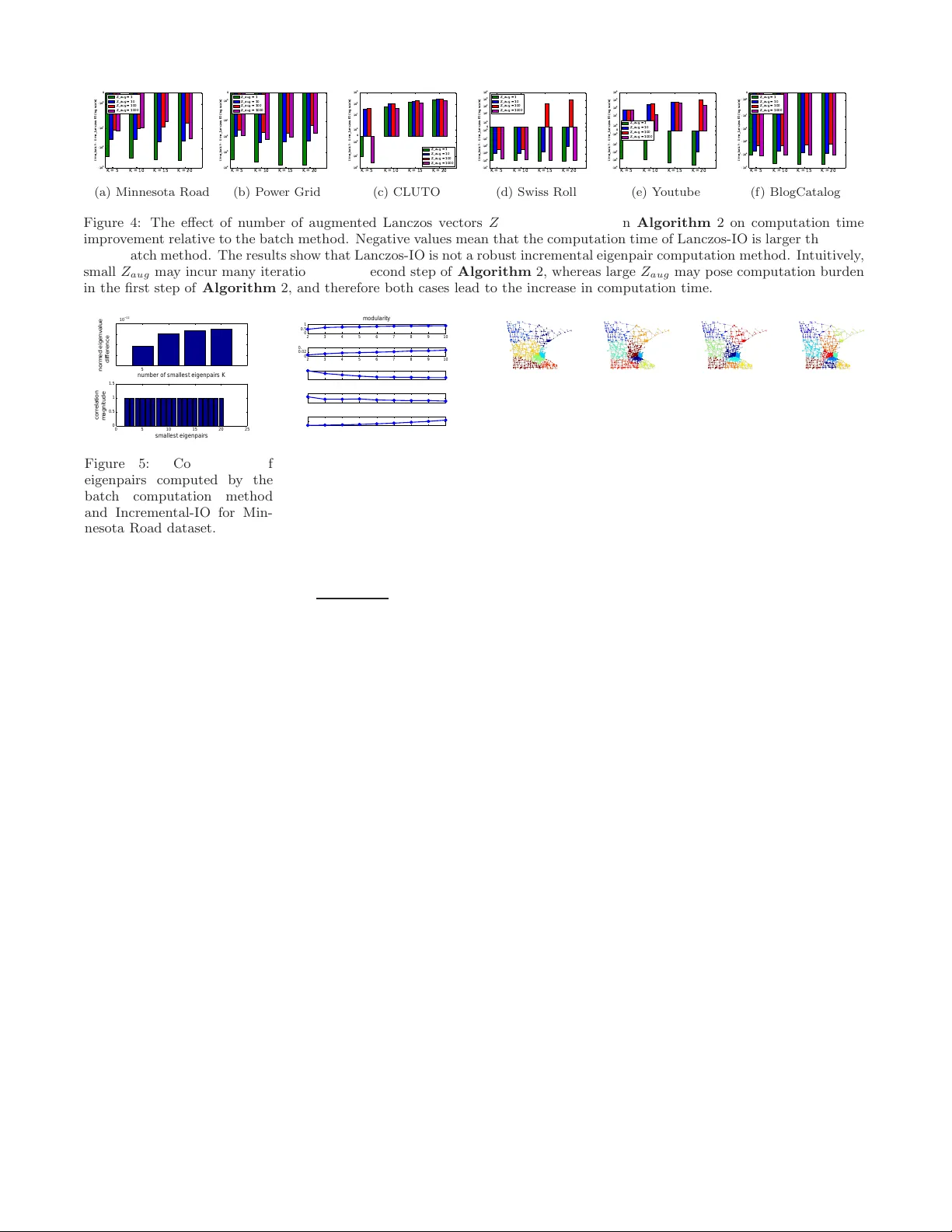

Incremental Method f or Spectral Clustering of Increasing Or der s Pin-Y u Chen Unive rsity of Michigan pinyu @umich.edu Baichuan Zhang Indiana Univ ersity - Purdue Unive rsity Indianapolis bz3@umail.iu.ed u Mohammad Al Hasan Indiana Univ ersity - Purdue Unive rsity Indianapolis alhasan@cs .iupui.edu Alfred Hero Unive rsity of Michigan hero@umich .edu ABSTRA CT The smallest eigen v alues and the as so ciated eigenv ectors (i.e., eigenpairs) of a gra ph Laplacian matrix ha ve b een widely used for spectral clustering and comm unity detection. How- ever, in real-life app lications the num b er of clusters or com- munities (say , K ) is generally unkn own a-priori. Conse- quently , t h e ma jorit y of th e existing meth o ds either choose K heuristically or they repeat the clustering meth o d with different choic es of K and accept the b est clustering result. The first option, more often, yields sub optimal result, while the second option is computationally exp ensiv e. In this work, w e prop ose an incremen tal metho d for constructing the ei gen- sp ectru m of the graph Laplacian matrix. This metho d lever- ages the eigenstructure of graph Laplacian matrix to obtain the K -th eigenpairs of the Laplacian matrix given a collection of all the K − 1 smallest eigenpairs. Our prop osed metho d adapts th e Laplacian matrix such that t he batch eigen v alue decomp osition problem transforms in to an efficien t sequential leading eigenpair compu tation problem. As a practical appli- cation, w e consider user-guided sp ectral clustering. Sp ecif- ically , we demonstrate that u sers can utilize the p rop osed incremental meth od for effective eigenpair computation and determining the d esired num ber of clusters b ased on m ultiple clustering metrics. 1. INTR ODUCTION Over the past tw o decades, the graph Laplacian matrix and its v arian ts ha ve b een widely adopted for solving v ar- ious researc h tasks, including graph p artitioning [23], data clustering [14], communit y detection [5, 28], consensus in net- w orks [19], dimensionality reduction [2], entit y disambigua- tion [33], link prediction [32], graph signal pro cessing [27], central ity measures for graph connectivity [4], in terconnected physical systems [24], netw ork v ulnerabilit y assessmen t [7], image segmen tation [26], among others. The fundamental task is to represent the data of interest as a graph for analysis, where a no de represents an entit y (e.g., a pixel in an image or a user in an online social n etw ork) and an ed ge represen ts similarit y b et we en t wo multiv ariate d ata samples or actual relation (e.g., friendship) b etw een nod es [14]. More often th e K eigenv ectors associated with the K smallest eigen v alues of the graph Laplacian matrix are used to cluster th e entities into K clusters of high similarity . F or brevity , throughout this pap er w e will call these eigenv ectors as the K smallest eigen vectors. The su ccess of graph Laplacian matrix based metho ds for graph partitioning and sp ectral clustering can b e explained by the fact that acquiring K smallest eigenv ectors is eq u iv- alen t to solving a relaxed graph cut minimization problem, whic h partitions a graph in to K clusters by minimizing var- ious ob jectiv e functions includ ing min cut, ratio cut or n or- malized cut [14]. Generally , in clustering K is selected to b e muc h smaller than n (t he number of data points), making full eigen decomp osition (suc h as QR decomposition) un nec- essary . An efficient alternative is t o use metho ds that are based on p ow er iteration, such as Arnoldi meth od or Lanc- zos metho d, which compu tes the leading eigenpairs through rep eated matrix vector m ultiplication. A RP ACK [13] library is a p opular p arallel implementation of d ifferent v arian ts of Arnoldi and Lanczos method , which is used by many com- mercial softw are including Matlab. How ever, in most si tuations the b est v alue of K is un- known and a heuristic is used by clustering algori thms to determine the number of clusters, e.g., fixing a maxim um num b er of clusters K max and detecting a large gap in the v alues of t he K max largest sorted eigenv alues or normalized cut score [16, 21 ]. Alternativel y , this v alue of K can b e deter- mined b ased on domain knowledge [1]. F or example, a user ma y require that the largest cluster size be no more than 10% of the total num b er of no des or that the t otal inter-cluster edge weigh t b e no greater th an a certain amount. In these cases, th e desired c hoice of K cannot b e determined a priori . Over-estimatio n of the upp er b oun d K max on the num b er of clusters is exp ensive as the cost of finding K eigenpairs u sing the p ow er iteration metho d grows rapidly with K . On the other hand , choosing an insufficiently large v alue for K max runs the risk of severe bias. Setting K max to the number of data p oints n is generally computationally infeasible, even for a mo derate-sized graph. Therefore, an incremen tal eigen- pair computation metho d that effectively computes the K -th smallest eigenpair of graph Laplacian matrix by utilizing t he previously computed K − 1 smallest eigenpairs is n eeded. Such an iterative metho d obviates the need to set an u pp er b ound K max on K , and its efficiency can b e explained by the adaptivity to increments in K . By exploiting the sp ecial matrix p rop erties and graph char- acteristics of a graph Lap lacian matrix, we prop ose an effi- cien t metho d for computing the ( K + 1)-th eigenpair giv en all of the K smallest eigenpairs, whic h w e call the Incremen- tal metho d of Increasing Orders (Incremental-IO). F or each increment, given the previously computed s mallest eigen- pairs, we show t h at computin g the next smallest eigenpair is equ iv alen t to computing a leading eigenpair of a particular matrix, which t ransforms p otentially tedious numerical com- putation (such as the iterative tridiagonalizatio n and eigen- decomp osition steps in th e Lanczos algorithm [11]) to simple matrix pow er iterations of k now n computational efficiency [10]. W e th en compare the p erformance of Incremental-IO with a batch computation met h od which computes all of the K small est eigenpairs in a single batch, and an incremen- tal metho d adapted from th e Lanczos algorithm, whic h we call the Lanczos metho d of Increasing Ord ers (Lanczos-IO). F or a given num b er of eigenpairs K iterative matrix- vector multipli cation of Lanczos p rocedu re yields a set of Lanczos vectors ( Q ℓ ), and a tridiagonal matrix ( T ℓ ), follo w ed by a full eigen-decomp osition of T ℓ ( ℓ is a v alue muc h smaller than t h e matrix size). Lanczos-IO sav es the Lanczos v ec- tors that were obtained while K eigenpairs were computed and use those to generate new Lanczos vectors for computing ( K + 1)-th eigenpair. Comparing to the batch metho d, our exp erimental results sho w that for a giv en order K , I ncremental-IO provides a significan t red uction in computation time. Also, as K in- creases, t h e gap b etw een Incremental-IO and the b atch ap- proac h widens, providing an order of magnitude sp eed-up . Exp eriments on real-life datasets show that t h e performance of Lan czos-I O is ov erly sensitiv e to t h e selection of augmented Lanczos vectors, a para meter that cann ot be optimized a priori —for some of our exp erimental datasets, Lanczos-IO p erforms even w orse than the batch method (see Sec. 6 ). Moreo ver, Lanczos-IO consumes significant amount of mem- ory as it has to sa ve the Lanczos vectors ( Q ℓ ) for making the incremental approach realizable. In summary , Lanczos-IO, although an incremen tal eigenpair compu tation alg orithm, falls short in terms of robustn ess. T o illustrate the real-life utilit y of incremental eigenpair computation metho ds, we d esign a user-guided sp ectral clus- tering algorithm which uses Incremental-IO. The algorithm provides clustering solution for a sequence of K v alues effi- cien tly , and thus enable a user to compare these clustering solutions for facilitating the selection of the most appropriate clustering. The contributions of this pap er are summarized as follo ws. 1. W e propose an incremen tal eigenpair comput ation metho d (Incremental-IO) for both unnormalized and normal- ized graph Laplacian matrices, by transforming the orig- inal eigenv alue decomp osition problem into an efficien t sequential leading eigenpair computation problem. Sim- ulation results show that Incremental-IO generates the desired eige npair accurately and has sup erior p erfor- mance ov er the b atch computation metho d in terms of computation time. 2. W e sho w that Incremental-IO is robust in compari- son t o Lanczos-IO, whic h is an incremental eigenpair metho d th at we design by adapting th e Lanczos metho d. 3. W e use several real-life datasets to demonstrate the utilit y of Incremental-IO. Specifically , we sho w that Incremental-IO is suitable for user-guided sp ectral clus- tering which provides a sequence of clustering results for un it increment of the num b er K of clusters, an d up dates the associated cluster eva luation metrics for helping a user in decision making. 2. RELA TED WORKS 2.1 Incr emental eigen value dec omposition The p rop osed meth od (Incremental-IO) aims to incremen- tally compute th e smallest eigenpair of a given graph Lapla- cian matrix. There are several works that are named as in- crementa l eigenv alue decomp osition metho ds [8, 9, 17, 18, 25]. How ever, these works focu s on up dating the eigenstructure of graph Laplacian matrix of dynamic graphs when nodes (d ata samples) or edges are inserted or deleted from th e graph, whic h are d ifferent from incremen tal computation of eigen- pairs of increasing orders. 2.2 Cluster Count Selection f or Spectral Clus- tering Man y sp ectral clustering algorithms utilize t he eigenstruc- ture of graph Laplacian matrix for selecting num b er of clus- ters. In [21], a v alue K that maximizes the gap b etw een the K - th and the ( K + 1)-th smallest eigenv alue is selected as th e num b er of clusters. In [16], a val ue K that minimizes the sum of cluster-wise Euclidean distance betw een each d ata p oint and t h e centroid obtained from K- means clustering on K smallest eigenv ectors is selected as the number of clus- ters. In [31], the smallest eigen vectors of normalized graph Laplacian matrix are rotated to fin d the b est alignmen t that reflects the true clusters. A mo del based method for deter- mining the number of clusters is prop osed in [22]. N ote that aforemen tioned meth ods use only one single clustering met- ric to determine the num b er of clusters and often implicitly assume an up p er boun d on K (namely K max ). 3. INCREMENT AL EIGENP AIR COMPUT A- TION FOR GRAP H LAPLA CIAN MA TRI- CES 3.1 Backgr ound Throughout this pap er b old upp ercase letters (e.g., X ) de- note matrices and X ij (or [ X ] ij ) denotes th e en try in i -t h ro w and j -th column of X , bold lo w ercase letters ( e.g., x or x i ) denote column vectors, ( · ) T denotes matrix or vector transp ose, italic letters ( e.g., x or x i ) denote scalars, and cal- ligraphic upp ercase letters (e.g., X or X i ) denote sets. The n × 1 vector of ones (zeros) is d enoted by 1 n ( 0 n ). The ma- trix I denotes an identit y matrix and the matrix O denotes the matrix of zeros. W e use tw o n × n symmetric matrices, A and W , to denote the adjacency and weigh t matrices of an undirected wei ghted simple graph G with n no des and m edges. A ij = 1 if there is an edge b etw een no des i and j , and A ij = 0 otherwise. W is a non n egativ e symmetric matrix such t hat W ij ≥ 0 if A ij = 1 and W ij = 0 if A ij = 0. Let s i = P n j =1 W ij denote the strength of no de i . Note that when W = A , the strength of a nod e is equiva lent to its degree. S = diag( s 1 , s 2 , . . . , s n ) is a diagonal matrix with nod al strength on its m ain d iagonal and th e off-diagonal entries b eing zero. The ( unnormalized) graph Laplacian matrix is defined as L = S − W . (1) One p op u lar vari ant of the graph Laplacian matrix is t h e normalized graph Laplacian matrix defined as L N = S − 1 2 LS − 1 2 = I − S − 1 2 WS − 1 2 , (2) T able 1: Utilit y of the lemmas, corollaries, and theorems. Graph Type / Laplacian Matrix Unnormaliz ed Normalized Connected Graphs Lemma 1 Theorem 1 Corollary 1 Corollary 3 Disconnec ted Graphs Lemma 2 Theorem 2 Corollary 2 Corollary 4 where S − 1 2 = diag( 1 √ s 1 , 1 √ s 2 , . . . , 1 √ s n ). The i -th smallest eigen v alue and its associated unit- norm eigen vector of L are denoted by λ i ( L ) and v i ( L ), respectively . That is, the eigen- pair ( λ i , v i ) of L has the relation Lv i = λ i v i , and λ 1 ( L ) ≤ λ 2 ( L ) ≤ . . . ≤ λ n ( L ). The eigen vectors hav e un it Euclidean norm and they are orthogonal to each other such that v T i v j = 1 if i = j and v T i v j = 0 if i 6 = j . The eigen v alues of L are said to b e d istinct if λ 1 ( L ) < λ 2 ( L ) < . . . < λ n ( L ). Similar notations are used for L N . 3.2 Theor etical f oundations of the pr oposed method (Incr emental-IO) The follo wing lemmas and corollarie s pro vide th e corner- stone for establishing the prop osed incremental eigenpair com- putation metho d (Incremental -IO). The main idea is that we utilize the eigenspace structure of graph Laplacian matrix to inflate sp ecific eigenpairs via a particular p erturbation ma- trix, without affecting other eigenpairs. Incremental-IO can b e viewed as a sp ecialized Hotelling’s deflation metho d [20] designed for graph Laplacian matrices by exploiting their sp ectral prop erties and associated graph characteristics. It w orks for b oth connected, and d isconnected graphs using b oth n ormalized and u nnormalized graph Laplacian matri- ces. F or illustration pu rp oses, in T able 1 we group the es- tablished lemmas, corollaries, and th eorems u nder different graph types and d ifferent graph Laplacian matrices. Because of the page limit, the pro ofs of the established lemmas, the- orems and corollaries are giv en in the extended vers ion 1 . Lemma 1. Assume that G i s a c onne cte d gr aph and L i s the gr aph L aplacian with s i denoting the sum of the entries in the i -th r ow of the w ei ght matrix W . L et s = P n i =1 s i and define e L = L + s n 1 n 1 T n . Then the eigenp airs of e L satisfy ( λ i ( e L ) , v i ( e L )) = ( λ i +1 ( L ) , v i +1 ( L )) for 1 ≤ i ≤ n − 1 and ( λ n ( e L ) , v n ( e L )) = ( s, 1 n √ n ) . Cor ollar y 1. F or a normali ze d gr aph L aplacian matrix L N , as sume G is a c onne cte d gr aph and let e L N = L N + 2 s S 1 2 1 n 1 T n S 1 2 . Then ( λ i ( e L N ) , v i ( e L N )) = ( λ i +1 ( L N ) , v i +1 ( L N )) for 1 ≤ i ≤ n − 1 and ( λ n ( e L N ) , v n ( e L N )) = (2 , S 1 2 1 n √ s ) . Lemma 2. Assume t hat G is a disc onne cte d gr aph with δ ≥ 2 c onne cte d c omp onents, and let s = P n i =1 s i , l et V = [ v 1 ( L ) , v 2 ( L ) , . . . , v δ ( L )] , and let e L = L + s V V T . Then ( λ i ( e L ) , v i ( e L )) = ( λ i + δ ( L ) , v i + δ ( L )) for 1 ≤ i ≤ n − δ , λ i ( e L ) = s f or n − δ + 1 ≤ i ≤ n , and [ v n − δ +1 ( e L ) , v n − δ +2 , ( e L ) , . . . , v n ( e L )] = V . Cor ollar y 2. F or a normali ze d gr aph L aplacian matrix L N , assume G is a disc onne cte d gr aph with δ ≥ 2 c onne cte d c omp onents. L et V δ = [ v 1 ( L N ) , v 2 ( L N ) , . . . , v δ ( L N )] , and let e L N = L N +2 V δ V T δ . Then ( λ i ( e L N ) , v i ( e L N )) = ( λ i + δ ( L N ) 1 http://a rxiv.org/abs/1512.0 7349 , v i + δ ( L N )) for 1 ≤ i ≤ n − δ , λ i ( e L N ) = 2 for n − δ + 1 ≤ i ≤ n , and [ v n − δ +1 ( e L N ) , v n − δ +2 , ( e L N ) , . . . , v n ( e L N )] = V δ . Remark 1. note that the c olum ns of any matrix V ′ = VR with an orthono rmal tr ansformation m atrix R (i.e., R T R = I ) ar e also the lar gest δ eigenve ctors of e L and e L N in L em ma 2 and C or ol lary 2. Without loss of gener ality we c onsider the c ase R = I . 3.3 Incr emental method of incr easing o rders Give n the K smalle st eigenpairs of a graph Laplacian ma- trix, w e prov e that compu ting the ( K + 1)-t h smallest eigen- pair is equival ent to computing th e leading eigenpair (the eigenpair with the largest eigen v alue in absolute v alue) of a certain p erturb ed matrix. The ad van tage of this transforma- tion is that the leading eigenpair can b e efficien tly computed via matrix p ow er iteration metho ds [11, 13]. Let V K = [ v 1 ( L ) , v 2 ( L ) , . . . , v K ( L )] be the matrix with columns b eing t he K smallest eigen vectors of L and let Λ K = diag( s − λ 1 ( L ) , s − λ 2 ( L ) , . . . , s − λ K ( L )) be the d iagonal matrix with { s − λ i ( L ) } K i =1 b eing its main diagonal. The follo wing theorems show that giv en the K smallest eigenpairs of L , the ( K + 1)-th smallest eigenpair of L is the leading eigen vector of the original graph Laplacian matrix p erturb ed by a certain matrix. Theorem 1. (connected graph s) Given V K and Λ K , as- sume that G is a c onne cte d gr aph. Then the eigenp air ( λ K +1 ( L ) , v K +1 ( L )) is a l e ading eigenp air of the mat rix e L = L + V K Λ K V T K + s n 1 n 1 T n − s I . In p articular, if L has di stinct eigenvalues, then ( λ K +1 ( L ) , v K +1 ( L )) = ( λ 1 ( e L ) + s, v 1 ( e L )) . The next t heorem describ es an incremen tal eigenpair com- putation metho d when the graph G is a disconnected graph with δ connected components. The columns of the matrix V δ are t h e δ smallest eigenve ctors of L . Note that V δ has a canonical represen tation that the n on zero entri es of eac h column are a constan t and their indices ind icate the no des in eac h conn ected comp onent [6 , 14], and the column s of V δ are the δ smallest eigenv ectors of L with eigenv alue 0 [6]. Sin ce the δ smallest eigenpairs with the canonical representation are trivial by identifying t h e connected components in the graph, we only focus on compu ting the ( K + 1)-th small- est eigenpair given K smallest eigenpairs, where K ≥ δ . The columns of the matrix V K,δ = [ v δ + 1 ( L ) , v δ + 2 ( L ) , . . . , v K ( L )] are the ( δ + 1)-th to the K -th smallest eigen vectors of L and the matrix Λ K,δ = diag( s − λ δ + 1 ( L ) , s − λ δ + 2 ( L ) , . . . , s − λ K ( L )) is the d iagonal matrix with { s − λ i ( L ) } K i = δ + 1 b eing its main diagonal. If K = δ , V K,δ and Λ K,δ are defined as the matrix with all entries b eing zero, i.e., O . Theorem 2. (disconnected graphs) Assume that G is a disc onne cte d gr aph with δ ≥ 2 c onne cte d c omp onents, given V K,δ , Λ K,δ and K ≥ δ , the eigenp ai r ( λ K +1 ( L ) , v K +1 ( L )) is a le ading eigenp air of the matrix e L = L + V K,δ Λ K,δ V T K,δ + s V δ V T δ − s I . In p articular, if L has distinct nonzer o eigen- values, then ( λ K +1 ( L ) , v K +1 ( L )) = ( λ 1 ( e L ) + s, v 1 ( e L )) . F ollo wing th e same metho dology for proving Theorem 1 and using Corollary 1, for normalized graph Laplacian ma- trices, let V K = [ v 1 ( L N ) , v 2 ( L N ) , . . . , v K ( L N )] b e t he ma- trix with columns b eing th e K smallest eigenv ectors of L N and let Λ K = diag(2 − λ 1 ( L N ) , 2 − λ 2 ( L N ) , . . . , 2 − λ K ( L N )). The follo wing coroll ary pro vides the basis for incremen tal eigenpair comput ation for normalized graph Laplacian ma- trix of connected graphs. Cor ollar y 3. F or the normalize d gr aph L aplacian m a- trix L N of a c onne cte d gr aph G , given V K and Λ K , the eigenp air ( λ K +1 ( L N ) , v K +1 ( L N )) is a le ading eigenp air of the matrix e L N = L N + V K Λ K V T K + 2 s S 1 2 1 n 1 T n S 1 2 − 2 I . In p articular, if L N has distinct ei genvalues, then ( λ K +1 ( L N ) , v K +1 ( L N )) = ( λ 1 ( e L N ) + 2 , v 1 ( e L N )) . F or d isconnected graphs with δ connected comp onents, let V K,δ = [ v δ + 1 ( L N ) , v δ + 2 ( L N ) , . . . , v K ( L N )] with col umns b eing the ( δ + 1)-th to th e K -th smallest eigen vectors of L N and let Λ K,δ = diag(2 − λ δ + 1 ( L N ) , 2 − λ δ + 2 ( L N ) , . . . , 2 − λ K ( L N )). Based on Corollary 2, the follo wing corollary provides an incrementa l eigenpair computation meth od for normalized graph Laplacian matrix of disconnected graphs. Cor ollar y 4. F or the normalize d gr aph L aplacian m a- trix L N of a disc onne cte d gr aph G with δ ≥ 2 c onne cte d c omp onents, given V K,δ , Λ K,δ and K ≥ δ , the eigenp air ( λ K +1 ( L N ) , v K +1 ( L N )) i s a le ading eigenp ai r of the matrix e L N = L N + V K,δ Λ K,δ V T K,δ + 2 s S 1 2 1 n 1 T n S 1 2 − 2 I . In p articu- lar, if L N has distinct eigenvalues, then ( λ K +1 ( L N ) , v K +1 ( L N )) = ( λ 1 ( e L N ) + 2 , v 1 ( e L N )) . 3.4 Computational complexity analysis Here we analyze the computational complexit y of Incremental- IO and compare it with th e b atch computation metho d. Incremental- IO u tilizes the existing K smallest eigenpairs to compute th e ( K + 1)-th smallest eigenpair as describ ed in Sec. 3.3, whereas the batc h computation method recomputes all eigenpairs for eac h v alue of K . Both metho d s can b e easily implemented via well-dev eloped numerical compu tation pack ages such as ARP ACK [13]. F ollo wing the analysis in [10], the avera ge relative error of the leading eigenv alue from the Lanczos algorithm [11] has an upp er b ound of the order O (ln n ) 2 t 2 , where n is the num b er of no des in the graph and t is the number of iter- ations for Lanczos algorithm. Therefo re, when one sequen- tially computes from k = 2 to k = K smallest eigenpairs, for Incremen tal-IO the upp er bound on the av erage rel a- tive error of K smallest eigenpairs is O K (ln n ) 2 t 2 since in eac h increment compu ting the correspondin g eigenpair can b e transformed to a leading eigenpair computation process as d escribed in S ec. 3.3. On th e other hand, for the batch computation metho d, th e up p er b ound on the a verage rel- ative error of K smallest eigenpairs is O K 2 (ln n ) 2 t 2 since for the k -th incremen t ( k ≤ K ) it needs to compute all k smallest eigenpairs fro m scratc h. These results also imply that to reach the same av erage relativ e error ǫ for sequen - tial computation of K smallest eigenpairs, In cremental -IO requires Ω q K ǫ ln n iterations, whereas the batch metho d requires Ω K ln n √ ǫ iterations. It is difficult to analyze the computational complexity of Lanczos-IO, as its conver gence results h eavily dep end on th e quality of previously generated Lanczos vectors. Algorithm 1 In cremental algorithm for user-guided spectral clustering u sing Incremental-IO (steps 1- 3) Input: connected un directed weigh ted graph W Output: K clusters { b G k } K k =1 Initialization: K = 2. V 1 = Λ 1 = O . Flag = 1. S = diag( W1 n ). W N = S − 1 2 WS − 1 2 . L = diag( W N 1 n ) − W N . s = 1 T n W N 1 n . while Flag= 1 do 1. e L = L + V K − 1 Λ K − 1 V T K − 1 + s n 1 n 1 T n − s I . 2. Compute t h e leading eigenpair ( λ 1 ( e L ) , v 1 ( e L )) and set ( λ K ( L ) , v K ( L )) = ( λ 1 ( e L ) + s, v 1 ( e L )). 3. Up date K smallest eigenpairs of L by V K = [ V K − 1 v K ] and [ Λ K ] K K = s − λ K ( L ). 4. Perf orm K-means clustering on the rows of V K to ob t ain K clusters { b G k } K k =1 . 5. Compute u ser-sp ecified clustering metrics. if user d ecides to stop then Flag= 0 Output K clusters { b G k } K k =1 else Go back to step 1 with K = K + 1. end if end whil e 4. APPLICA TION: USER-GUIDED SPECTRAL CLUSTERING WITH INCREMENT AL-IO Based on the developed incremen tal eigenpair comput ation metho d (In cremental -IO) in Sec. 3, we p rop ose an incre- mental algorithm for user-guided sp ectral clustering as sum- marized in A lgorithm 1. This algorithm sequentially com- putes the smallest eigenpairs via Incremental-IO (steps 1-3) for sp ectral clustering and provides a sequence of clusters with t he v alues of user-sp ecified clustering metrics. The input graph is a connected u n directed wei ghted graph W and we conv ert it t o th e reduced weigh ted graph W N = S − 1 2 WS − 1 2 to alleviate th e effect of imbala nced edge weigh ts. The entri es of W N are p roperly normalized by the nodal strength such that [ W N ] ij = [ W ] ij √ s i · s j . W e then obtain the graph Laplacian matrix L for W N and in crementally com- pute the eigenpairs of L via In cremental -IO (steps 1-3) until the user decides to stop further computation. Starting from K = 2 clusters, the algorithm incremen tally computes th e K -th smallest eigenpair ( λ K ( L ) , v K ( L )) of L with the kn o wledge of the previous K − 1 smallest eigen- pairs via Theorem 1 an d ob t ains matrix V K conta ining K smallest eigenv ectors. By viewing each row in V K as a K - dimensional vector, K - means clustering is implemented to separate th e ro ws in V K into K clusters. F or eac h incremen t, the identified K clusters are denoted by { b G k } K k =1 , where b G k is a graph partition with b n k nod es and b m k edges. In addition to incremental computation of smallest eigen- pairs, for eac h increment the algorithm can also b e used to up date clustering metrics such as normalized cu t, mo d ular- it y , and cluster size distribution, in order to p ro vide users with clustering information to stop the incremental compu - tation pro cess. The incremental compu tation algorithm al- lo ws u sers to efficiently track t he changes in clusters as the num b er K of hypothesized clusters increases. Note that Al gorithm 1 is prop osed for conn ected graphs and t heir correspond ing unnormalized graph Laplacian ma- Algorithm 2 Lanczos metho d of Increasing Ord ers (Lanczos-IO) Input: real symmetric matrix M , # of initial Lanczos vectors Z ini , # of augmented Lanczos vectors Z aug Output: K leading eigenpairs { λ i , v i } K i =1 of M Initialization: Compute Z ini Lanczos ve ctors as columns of Q and the corresp onding tridiagonal matrix T of M . Flag = 1. K = 1. Z = Z ini . while Flag= 1 do 1. Obtain the K leading eigenpairs { t i , u i } K i =1 of T . U = [ u 1 , . . . , u K ]. 2. Residual error = | T ( Z − 1 , Z ) · U ( Z , K ) | while R esidual error > T olerence do 2-1. Z = Z + Z aug 2-2. Based on Q and T , comput e the next Z aug Lanczos vectors as columns of Q aug and the augmented tridiagonal matrix T aug 2-3. Q ← [ Q Q aug ] and T ← T O O T aug 2-4. Go bac k to step 1 end whil e 3. { λ i } K i =1 = { t i } K i =1 . [ v 1 , . . . , v K ] = QU . if user d ecides to stop then Flag= 0 Output K leading eigenpairs { λ i , v i } K i =1 else Go back to step 1 with K = K + 1. end if end whil e trices. The algorithm can b e easily ad ap t ed to disconnected graphs or normalized graph Laplacian matrices by modify- ing step s 1-3 based on the developed results in Theorem 2, Corollary 3 and Coroll ary 4. 5. IMPLEMENT A TION W e implemen t the prop osed incrementa l eigenpair com- putation metho d (Incremental-IO) using Matlab R201 5a’s “eigs” function, whic h is based on ARP ACK pack age [13]. Note that this function takes a parameter K an d retu rns K leading eigenpairs of the given matrix. The eig s function is implemented in Matlab with a Lanczos algorithm that com- putes th e leading eigenpairs (the imp licitly-restarted Lanc- zos meth od [3 ]). This Matlab funct ion iteratively generates Lanczos vectors starting from an initial vector (th e default setting is a random vector) with restart. F ollow ing Theo- rem 1, Incremental-IO works b y sequentially p erturbing the graph Laplacian matrix L with a p articular matrix and com- puting the leading eigenpair of the p ertu rb ed matrix e L (see Algorithm 1) by calling eig s ( e L , 1). F or th e batch compu- tation meth od , we use eig s ( L , K ) t o compute the desired K eigenpairs from scratch as K increases. F or implementing Lanczos-IO, we extend th e Lanczos al- gorithm of fixed order ( K is fixed) u sing th e PROP ACK pack age [12]. As we have stated earlier, Lanczos-IO w orks by storing all prev iously generated Lanczos vectors and u s- ing them to compute new L an czos v ectors for each incre- ment in K . The general pro cedure of computing K leading eigenpairs of a real symmetric matrix M u sing Lanczos-IO is d escribed in Algorithm 2. The op eration of Lanczos-IO is similar to the ex plicitly-restarted Lanczos algorithm [29], whic h restarts the computation of Lanczos vectors with a subset of p reviously comput ed Lanczos vectors. Note that the Lanczos-IO consumes additional memory for storing all previously computed Lanczos vectors when compared with the prop osed incremen tal metho d in Algorithm 1, since the eig s function uses the implicitly-restarted Lanczos meth od that spares the need of storing Lanczos vectors for restart. T o apply Lanczos-IO to sp ectral clustering of increasing orders, w e can set M = L + s n 1 n 1 T n − s I to obtain the smallest eigen vectors of L . Throughout the exp eriments the parameters in Al gorithm 2 are set t o b e Z ini = 2 0 and T olerence = ǫ · k M k , where ǫ is the machine precision, k M k is th e op erator norm of M , and these settings are consis- tent with the settings used in eig s function [13]. The num- b er of augmented Lanczos vectors Z aug is set to b e 10, and the effect of Z aug on the compu tation time is discussed in Sec. 6.2. The Matlab implementation of t he aforemen tioned batch meth od , Lanczos-IO, and Incremental-IO are a v ailable at https://sites.google.com/s ite/pinyuc henpage/cod es. 6. EXPERIMENT AL RESUL TS W e p erform severa l ex p eriments: first, compare the com- putation time b etw een Incremental-IO, Lanczos-IO, and the batch method; second, numerically verif y the accuracy of Incremental-IO; third, demonstrate the u sages of Incremental- IO for user-guided sp ectral clu stering. F or the first ex p eri- ment, we generate synthetic Erdos-Renyi random graphs of v arious sizes. F or th e second exp eriment, w e compare the consistency of eigenpairs obt ained from Incremental-IO and the batch metho d. F or the t hird exp erimen t, we use six p op- ular graph datasets as summarized in T able 2. 6.1 Comparison of computation time on simu- lated graphs T o illustrate th e adv an tage of In crementa l-IO, we compare its computation time with the other tw o metho ds, the batc h metho d and Lanczos-IO, for v arying order K and v arying graph size n . The Erdos-Renyi random graphs th at w e bu ild are used for this compariso n. Fig. 1 (a) sho ws the com- putation time of In cremental -IO, Lanczos-IO, and the batch computation metho d for sequenti ally comput ing from K = 2 to K = 10 smallest eigenpairs. It is observed that the compu- tation t ime of Incremental-IO and Lanczos-IO gro ws linearly as K increase s, whereas t h e computation time of the b atch metho d grows sup erlinearly with K . Fig. 1 (b) shows the computation time of all three meth- ods with resp ect to different graph size n . It is observed that the difference in computation time b etw een the batch metho d and the t w o incremen tal metho ds gro w exp onential ly as n in- creases, which suggests that in this exp eriment Incremental- IO and Lanczos-IO are more efficient than the b atc h compu- tation metho d, esp ecially for large graphs. It is worth noting that although Lanczos-IO has similar p erformance in compu- tation time as Incremental-IO, it req u ires additional memory allocation for storing all previously computed Lanczos vec- tors. 2 http://www.cs.purdue.edu /homes/dgleich/nmcomp/matlab/minnesota 3 http://www- p ersonal.u mich.edu/ ∼ me jn/netdata 4 http://glaros.dtc.umn.e du/gkhome/views/cluto 5 http://isomap.stanford.edu /datasets.html 6 http://socialc omputing.asu. edu/datasets/Y ouT ube 7 http://socialc omputing.asu. edu/datasets/BlogCatalog T able 2: Statistics of datasets Dataset Nod es Edges Density Minnesota Road 2 2640 intersections 3302 roads 0.095% Po w er Grid 3 4941 p ow e r stations 6594 p ow e r lin es 0.054% CLUTO 4 7674 data p oints 748718 kNN ed ges 2.54% Swiss Roll 5 20000 data p oints 81668 kNN ed ges 0.041% Y outub e 6 13679 users 76741 interactions 0.082% BlogCatalog 7 10312 bloggers 333983 interactions 0.63% 0 2 4 6 8 10 12 −50 0 50 100 150 200 250 300 350 400 450 number of eigenpairs K computation time (seconds) n=10000 batch method Lanczos−IO Incremental−IO (a) 0 2000 4000 6000 8000 10000 12000 10 0 10 1 10 2 10 3 number of nodes n computation time in log scale (seconds) K=10 batch method Lanczos−IO Incremental−IO (b) Figure 1: Sequential eigenpair computation time on Erdos- Renyi random graphs with ed ge connection p robabilit y p = 0 . 1. The marker represen ts ave raged comput ation time of 50 trials and the error b ar represents standard deviation. (a) Computation time with n = 10000 and different num ber of eigenpairs K . (b) Computation time with K = 10 and different num ber of n o des n . 6.2 Comparison of computation time on real- life datasets Fig. 2 shows the time improv ement of I ncremental-IO rel- ative t o the batch meth od for the real-life datasets listed in T able 2, where the difference in computation t ime is display ed in log scale to accommodate large v ariance of time impro ve- ment across datasets that are of widely va rying size. It is ob- serve d that the gain in compu tational time via I n cremental - IO is m ore pronounced as cluster count K increases , which demonstrates the merit of th e prop osed incremental metho d . On the other h and, although Lanczos-IO is also an incre- mental metho d, in addition to the we ll-known issue of requir- ing m emory allocation for storing all Lanczos vectors, the exp erimental results sho w th at it does n ot provide p erfor- mance rob u stness as I ncremental-IO does, as it can p erform even w orse th an the batch metho d for some cases. Fig. 3 sho ws that Lanczos-IO actually results in excessive compu- tation t ime compared with the b atc h metho d for four out of the six d atasets, whereas in Fig. 2 Incremental-IO is sup erior than the batch metho d for all these d atasets, whic h demon- strates the robustn ess of Incremental-IO o ver Lanczos-IO. The reason of lacking robu stness for Lanczos-IO can b e ex - plained b y the fact the previously computed Lanczos vectors ma y not b e effective in m in imizing the Ritz app ro ximation error of the desired eigenpairs. In contras t, Incremental-IO and the batch metho d adopt the imp licitly-restarted Lanc- zos metho d, which restarts the Lanczos algorithm when the generated Lanczos vectors fail to m eet the Ritz ap p ro xima- tion criterion, and may eventuall y lead to faster conv ergence. F urthermore, Fig. 4 shows that Lanczos-IO is ov erly sensitiv e to the num b er of augmented Lanczos vectors Z aug , whic h is Min ne so ta R oa d P owe r Grid CL UTO Swiss R oll Y o ut u be Blo gC a ta log 0 1 0 0 1 0 1 1 0 2 1 0 3 ti m e _ba tch - ti m e _in cr em e ntal (log sc ale ) C l u s ter C o u n t = 5 C l u s ter C o u n t = 1 0 C l u s ter C o u n t = 1 5 C l u s ter C o u n t = 2 0 Figure 2: Computation time impro vemen t of Incremental- IO relativ e to the batch metho d . Incremental-IO outp erforms the batch metho d for all cases, and h as impro vemen t w.r.t. K . M i n ne sota R o a d P o we r Gr i d C L UTO S wi ss R oll Y outu b e Blo gC a talo g -10 4 -10 3 -10 2 -10 1 -10 0 0 10 0 10 1 10 2 10 3 ti me _ba tch - ti me _Lanc zos-IO (log sca le ) Cl uste r Count = 5 Cl uste r Count = 1 0 Cl uste r Count = 1 5 Cl uste r Count = 2 0 Figure 3: Computation time impro vemen t of Lanczos-IO relativ e to the batch meth od . Negative v alues mean that Lanczos-IO requ ires more computation time than the batch method . a p arameter that cannot b e optimized a priori . Theorem 1 establishes that the prop osed incremental metho d exactly compu tes the K -th eigenpair using 1 to ( K − 1)- th eigenpairs, yet for th e sak e of exp eriments with real datasets, w e hav e computed the n ormed eigenv alue difference and eigen- vector correlation of the K smallest eigenpairs using the batch method and I ncremental-IO as display ed in Fig. 5. The K small est eigenpairs are identical as ex p ected; to b e sp ecific, using Matlab library , on the Minnesota road dataset for K = 20, the normed eigen v alue difference is 7 × 10 − 12 and the associated eigenv ectors are id entical up to d ifferences in sign. Results for the other datasets are rep orted in the ex- tended vers ion 1 . 6.3 Clustering metrics for user -guided spectral clustering In real-life, an analyst can use Incremental-IO for cluster- ing along with a mec hanism for selecting the b est choice of K starting from K = 2. T o demonstrate this, in the ex p eriment w e use five clustering metrics th at can be used for online de- cision making regarding the value of K . These m etrics are commonly u sed in clustering unw eigh ted an d wei ghted graphs and th ey are summarized as follo ws. 1. Modularity: mo dularity is defined as Mod = K X i =1 W ( C i , C i ) W ( V , V ) − W ( C i , V ) W ( V , V ) 2 , (3) where V is the set of all no des in the graph, C i is th e i -th cluster, W ( C i , C i ) ( W ( C i , C i )) d enotes the sum of wei ghts of all internal (ex ternal) edges of th e i -th cluster, W ( C i , V ) = W ( C i , C i ) + W ( C i , C i ), and W ( V , V ) = P n j =1 s j = s d enotes the total no dal strength. 2. Sc aled normalized cut (SNC): NC is defi ned as [30] NC = K X i =1 W ( C i , C i ) W ( C i , V ) . (4) SNC is NC divided by th e number of clusters, i.e., NC/ K . 3. Scaled median (or maxi mum) cluster size: Scaled medium ( maximum) cluster size is th e medium (maximum) cluster size of K clusters div ided by the total num b er of no des n of a graph. 4. Scaled sp ectrum energy: scaled sp ectrum energy is the sum of the K smallest eigen v alues of the graph Laplacian matrix L d ivided by the sum of all eigen v alues of L , whic h K = 5 K = 1 0 K = 1 5 K = 2 0 -10 3 -10 2 -10 1 -10 0 0 time_b atch - tim e_Lanczos-IO (log sca le) Z _ a u g = 1 Z _ a u g = 10 Z _ a u g = 10 0 Z _ a u g = 10 00 (a) Minnesota Road K = 5 K = 1 0 K = 1 5 K = 2 0 -10 4 -10 3 -10 2 -10 1 -10 0 0 time_b atch - tim e_Lanczos-IO (log sca le) Z _ a u g = 1 Z _ a u g = 10 Z _ a u g = 10 0 Z _ a u g = 10 00 (b) Po wer Grid K = 5 K = 1 0 K = 1 5 K = 2 0 -10 2 -10 1 -10 0 0 10 0 10 1 10 2 10 3 time_b atch - tim e_Lanczos-IO (log sca le) Z _ a u g = 1 Z _ a u g = 10 Z _ a u g = 10 0 Z _ a u g = 10 00 (c) CLU TO K = 5 K = 1 0 K = 1 5 K = 2 0 -10 5 -10 4 -10 3 -10 2 -10 1 -10 0 0 10 0 10 1 10 2 10 3 10 4 time_b atch - tim e_Lanczos-IO (log sca le) Z _ a u g = 1 Z _ a u g = 10 Z _ a u g = 10 0 Z _ a u g = 10 00 (d) Swiss Rol l K = 5 K = 1 0 K = 1 5 K = 2 0 -10 4 -10 3 -10 2 -10 1 -10 0 0 10 0 10 1 10 2 10 3 10 4 time_b atch - tim e_Lanczos-IO (log sca le) Z _ a u g = 1 Z _ a u g = 10 Z _ a u g = 10 0 Z _ a u g = 10 00 (e) Y outube K = 5 K = 1 0 K = 1 5 K = 2 0 -10 5 -10 4 -10 3 -10 2 -10 1 -10 0 0 time_b atch - tim e_Lanczos-IO (log sca le) Z _ a u g = 1 Z _ a u g = 10 Z _ a u g = 10 0 Z _ a u g = 10 00 (f ) BlogCatalog Figure 4: The effect of number of augmented Lan czos vectors Z aug of Lanczos-IO in Algorithm 2 on computation t ime impro vemen t relative to the batch metho d. Negative val ues mean t hat the computation time of Lanczos-IO is larger than th at of the batch method . The results show that Lanczos-IO is not a robust incremental eigenpair computation meth od. I ntuitiv ely , small Z aug ma y incur many iterations in the second step of Algorithm 2, whereas large Z aug ma y p ose computation bu rd en in the first step of Algorithm 2, and therefore b oth cases lead to the increase in computation time. 5 10 15 20 0 2 4 6 8 x 10 −12 number of smallest eigenpairs K normed eigenvalue difference 0 5 10 15 20 25 0 0.5 1 1.5 smallest eigenpairs correlation magnitude Figure 5: Consistency of eigenpairs computed b y the batch computation meth od and Incremental-IO fo r Min- nesota Road dataset. 2 3 4 5 6 7 8 9 10 0 0.5 1 modularity 2 3 4 5 6 7 8 9 10 0 0.02 0.04 NC/K 2 3 4 5 6 7 8 9 10 0 0.5 median cluster size/n 2 3 4 5 6 7 8 9 10 0 0.5 1 maximum cluster size/n 2 3 4 5 6 7 8 9 10 0 0.5 1 x 10 −5 scaled spectrum energy K Figure 6: Five clustering metrics compu ted incre- mental ly via Algorithm 1 for Minnesota Road. can be computed by scaled sp ectrum energy = P K i =1 λ i ( L ) P n j =1 L j j , (5) where λ i ( L ) is the i -th smallest eigenv alue of L and P n j =1 L j j = P n i =1 λ i ( L ) is the sum of diagonal elemen ts of L . These metrics provide alternativ es for gauging th e qu alit y of the clustering metho d . F or example, Mo d and NC reflect the trade-off b etw een intracluster similarity and intercluster separation. Therefore, the larger the v alue of Mod, the b et- ter th e clustering quality , and th e smaller the v alue of NC, the b etter the clustering qu alit y . Scaled sp ectrum energy is a typical measure of cluster qualit y for sp ectral cluster- ing [16, 21, 31], and smaller spectrum energy means b etter separabilit y of clusters. F or Mo d and scaled NC, a user might look for a cluster count K suc h that the increment in the clus- tering metric is not significant, i.e., th e clustering metric is saturated beyond such a K . F or scaled median and maxi- mum clu ster size, a user might require th e cluster count K to b e such that the clustering metric is b elo w a desired v alue. F or scaled sp ectrum energy , a user might lo ok for a noticeable increase in the clustering metric b etw een consecutive v alues of K . 6.4 Demonstration Here we use Minnesota Road data to demonstrate ho w users can u tilize th e clustering metrics in Sec. 6.3 to deter- mine th e num b er of clusters. The five metrics ev aluated for Minnesota Road clustering with resp ect to different cluster counts K are display ed in Fig. 6 . Starting from K = 2 clusters, these metrics are up dated by the incremental user- guided spectral clustering algorithm ( Algorithm 1) as K increases. If the user imp oses th at t he maxim um cluster size should b e less than 30% of the total number of no des, then (a) K = 7 (b) K = 8 (c) K = 9 (d) K = 10 Figure 7: Visualization of user-guided spectral clustering on Minnesota R oad with resp ect to selected cluster coun t K . Colors represent d ifferent clusters. the algorithm returns clustering results w ith a n umber of clusters of K = 6 or greater. I nsp ecting the mo dularity one sees it saturates at K = 7, and th e user also observes a no- ticeable increase in scaled spectrum energy when K = 7. Therefore, the algorithm can b e used to incrementally gen- erate four clustering results for K = 7 , 8 , 9, and 10. The selected clustering results in Fig. 7 are show n to b e consis- tent with geographic separations of different granularit y . W e also apply t he prop osed incremental user-guided sp ec- tral clustering algorithm ( Algorithm 1) to Po w er Grid, CLUTO, Swiss Roll, Y outub e, and BlogCatalog. In Fig.8, w e sho w how the v alue of clustering metrics changes with K , for eac h dataset. The in crementa l meth od enables us to efficien tly generate all clustering results with K = 2 , 3 , 4 . . . and so on. Due to space limitation, for eac h dataset we only sho w the trend of the three clustering m et rics th at ex hibit th e highest v ariation for d ifferent K ; thus, the chosen clustering metrics can b e different for differen t datasets. This suggests that se- lecting t he correct number of clusters is a difficult task and a user might need to use differen t clustering metrics for a range of K v alues, and Incremental-IO is an effective to ol to supp ort such an endeav or. 7. CONCLUSION In this pap er w e present Incremental -IO, an efficient incre- mental eigenpair comput ation meth od for graph Laplacia n matrices which w orks by transforming a batch eigen v alue de- composition problem in to a sequential leading eigenpair com- putation problem. The metho d is elegan t, robust and easy to implement using a scientific programming language, such as Matlab. W e provide analytical pro of of its correctness. W e also demonstrate that it achiev es significant reduction in computation time when compared with the b atc h computa- tion metho d. Pa rticularly , it is observed that the difference in computation time of these tw o metho ds grows exp onentiall y as th e graph size in creases. T o demonstrate the effectiv eness of Incremental-IO, we also sh ow exp erimental eviden ces that obtaining such an in- crementa l method by adapting the existing leading eigenpair 2 4 6 8 10 12 14 16 18 20 0 0.5 1 modularity 2 4 6 8 10 12 14 16 18 20 0 0.5 median cluster size/n 2 4 6 8 10 12 14 16 18 20 0 0.5 1 x 10 −5 scaled spectrum energy K (a) Po wer Gr id 2 4 6 8 10 12 14 16 18 20 0 0.5 1 modularity 2 4 6 8 10 12 14 16 18 20 0 0.5 1 maximum cluster size/n 2 4 6 8 10 12 14 16 18 20 0 1 2 x 10 −4 scaled spectrum energy K (b) CLUTO 2 4 6 8 10 12 14 16 18 20 0 0.5 1 modularity 2 4 6 8 10 12 14 16 18 20 0 0.5 median cluster size/n 2 4 6 8 10 12 14 16 18 20 0 1 2 x 10 −6 scaled spectrum energy K (c) Swiss Roll 2 4 6 8 10 12 14 16 18 20 0 0.5 modularity 2 4 6 8 10 12 14 16 18 20 0 0.1 0.2 NC/K 2 4 6 8 10 12 14 16 18 20 0.6 0.8 1 maximum cluster size/n K (d) Y outube 2 4 6 8 10 12 14 16 18 20 −0.2 0 0.2 modularity 2 4 6 8 10 12 14 16 18 20 0.6 0.8 1 NC/K 2 4 6 8 10 12 14 16 18 20 0 0.5 1 maximum cluster size/n K (e) Bl ogCatalog Figure 8: Three selected clustering metrics of each d ataset. The complete clustering metrics can b e found in the exten ded versi on (av ailable at http://arxiv.org/abs/ 1512.0734 9 ). solv ers (such as, the Lanczos algorithm) is non-obvious and such efforts generally do not lead to a robu st solution. Finally , we demonstrate that the proposed incremen tal eigenpair compu tation metho d (In cremental -IO) is an effec- tive tool f or a user-guided sp ectral clustering task, whic h effectivel y up dates clustering results and metrics for each in- crement of th e cluster count. Acknowledgmen ts This work is partial ly supp orte d by the Consortium for V erification T echnology under Department of Energy Nation al Nucle ar Secu r ity Administration aw ard number de-na0002534, by ARO grant W911NF- 15-1-0479, by ARO grant W911NF-15-1-0241, and by Mohamm ad Hasan’s NSF CAREER Aw ard (I IS-1149851). 8. REFERENCES [1] S. Basu, A. Banerjee, and R. J. Mo one y . Active semi -sup ervision for pai r wise constrai n ed clusterin g. In SDM , v olume 4, pages 333–344, 2004. [2] M. Bel kin and P . Niyogi. Laplac ian eige nmaps for dimension ality redu ction and data representation. Neur al c omputation , 15(6):1373–1396, 2003. [3] D. Calvetti, L. Reichel, and D. C. Soren sen. An im plicitl y restarted lan c zos meth od for large sym metric eige nv alue problems. Ele ctr onic T r ansactions on Numerical Analysis , 2(1), 1994. [4] P .-Y. Chen and A. Hero. Deep community d etection . IEEE T ran s. Signal Pr o c ess. , 63(21):5706–5719, Nov. 2015. [5] P .-Y. Chen and A. Hero. Phase transiti ons in sp e ctral community d etection . IEEE T r ans. Signal Pro cess. , 63(16):4339–4347, Au g 2015. [6] P .-Y. Chen and A. O. Hero. No de remov al vuln erability of the largest c omp onent of a ne tw ork. In G lob alSIP , pages 587–590, 2013. [7] P .-Y. Chen and A. O. Hero. Assessing and safeguard i ng netw ork resilienc e to no dal attacks. IEEE Commun. Mag. , 52(11):138–143, Nov. 2014. [8] C. Dhanjal, R. Gaudel, and S. Cl´ emen¸ con. Efficient eigen-up d ating f or sp ect ral graph clu stering. Neur o co mputing , 131:440–452, 2014. [9] P . Jia, J. Yin, X. Huang, and D. Hu. Increme ntal laplacian eigenm ap s by preserving adjace nt inform ation b etw een data p oints. Pattern Re co g nition Letters , 30(16):1457–1463, 2009. [10] J. Ku czynski and H. W ozniako wski. Estimating the l argest eigenv alu e by the p ow er an d lan czos algorithm s with a rand om start. SIAM journal on m atrix analysis and application s , 13(4):1094–1122, 1992. [11] C. Lanczos. An iteration meth od for the solution of the eigenv alu e proble m of lin e ar differential and integral op erators. Journal of Rese ar ch of the National Bure au of Standar ds , 45(4), 1950. [12] R. M. Larsen. Com p uting the svd for large and sparse matric e s. SCCM, Stanfor d University, June , 16, 2000. [13] R. B. Lehouc q , D. C. Sorensen, and C. Y an g. ARP ACK users’ guide: solution of lar ge-scale eigenvalue problems with implicitly restarte d Arnoldi methods , volume 6. Siam, 1998. [14] U. Luxb urg. A tutorial on spe ctral clusterin g. Statisti cs and Computing , 17(4):395–416, Dec. 2007. [15] R. Merris. Laplacian matrices of graph s: a survey . Line ar A lgebra and its Applicati ons , 197-198:143–176, 1994. [16] A. Y. Ng, M. I. Jordan , and Y. W e iss. On spe ctral clusterin g: Analysis and an algori thm. In NIPS , pages 849–856, 2002. [17] H. Ning, W. Xu , Y. Chi, Y. Gong, and T. S. Huang. Incre mental sp e ctral cl u stering with ap plication to monitoring of evolving blog c ommunities. In SDM , pages 261–272, 2007. [18] H. Ning, W. Xu , Y. Chi, Y. Gong, and T. S. Huang. Incre mental sp e ctral cl u stering by efficiently up dating the eigen-system. Pattern Re co gnition , 43(1):113–127, 2010. [19] R. Olfati -Sab er, J. F ax, and R. Murr ay . Consen sus and co op eration in netw ork ed multi-agent systems. Pr o c. IEEE , 95(1):215–233, 2007. [20] B. N. Parlett. The symmetric e igenvalue pr oblem , volume 7. SIAM, 1980. [21] M. Polito and P . Perona. Grouping and dim ensionality reducti on by loc ally line ar embed ding. In NIPS , 2001. [22] L. K. M. Po on , A. H. Liu, T. Liu, and N. L. Zhang. A mo del-based approach to r ou nding in sp ectral cluste ring. In UAI , page s 68–694, 2012. [23] A. Pothen, H. D. Sim on, and K. -P . Liou. Partitioning sparse matrices with eigenvectors of graphs. SIAM journal on m atrix analysis and applic ations , 11(3):430–452, 1990. [24] F. Radic chi and A. Are nas. Ab r u pt transition i n the structural formation of interconnecte d netw orks. Nature Physics , 9(11):717–720, N ov. 2013. [25] G. Ranjan, Z.-L. Zhang, and D. Boley . Incremental c omputation of pse udo-inv erse of lapl acian. In C ombinatorial Optimization and Applic ations , pages 729–749. S pringer, 2014. [26] J. Sh i and J. Malik. Normalized cuts and image segmentation. IEEE T r ans. Pattern An al. Mach. Intel l. , 22(8):888–905, 2000. [27] D. Shuman, S . Narang, P . F r ossard, A. Orte ga, and P . V ande rgheynst. The em erging field of signal pro ce ssing on graphs: Exte nding high-dim ensional data analysis to netw orks and oth er irregul ar domains. IEEE Signal Pr o cess. Mag. , 30(3):83–98, 2013. [28] S. Wh ite and P . S myth. A sp ectral clu ste ring approach to finding c ommunities i n graph. In SDM , volume 5, pages 76–84, 2005. [29] K. W u and H. Simon. Thick-restart lan czos meth o d for large symmetric ei genv alue probl ems. SIAM Jou rnal on Matrix A nalysis and Applic ations , 22(2):602–616, 2000. [30] M. J. Zaki and W. M . Jr. Data Mini ng and A nalysis: F undamental C onc epts and Algorithms . Cambridge University Press, 2014. [31] L. Zeln ik-Manor and P . Perona. Se lf-tuning sp ectr al clustering. In NIPS , pages 1601–1608, 2004. [32] B. Zhang, S. Choud hury , M. A. Hasan, X. Ning, K. Agarw al, and S . P . andy Paola Pesan tez Cabre ra. T rust from th e past: Ba yesian p e rsonalized ranking based link pr e diction in knowledge graphs. In SDM MNG Workshop , 2016. [33] B. Zhang, T. K . Saha, and M. A. Hasan. Name disambiguation from l ink data in a collab oration graph. In ASONAM , pages 81–84, 2014. 9. SUPPLEMENT ARY FILE 9.1 Pr oof of Lemma 1 Since L is a p ositive semidefin ite (PSD) matrix, λ i ( L ) ≥ 0 for all i . S ince G is a connected graph, by (1) L1 n = ( S − W ) 1 n = 0 n . Theref ore, by the PSD p rop erty we ha ve ( λ 1 ( L ) , v 1 ( L )) = (0 , 1 n √ n ). More ov er, since L is a symmetric real-v alued square matrix, from (1) we hav e trace( L ) = n X i =1 L ii = n X i =1 λ i ( L ) = n X i =1 s i = s. (6) By the PSD property of L , w e ha ve λ n ( L ) < s since λ 2 ( L ) > 0 for any connected graph. Therefore, by the or- thogonalit y of eigenv ectors of L (i.e., 1 T n v i ( L ) = 0 for all i ≥ 2) the eigenv alue decomp osition of e L can b e represented as e L = n X i =2 λ i ( L ) v i ( L ) v T i ( L ) + s n 1 n 1 T n = n X i =1 λ i ( e L ) v i ( e L ) v T i ( e L ) , (7) where ( λ n ( e L ) , v n ( e L )) = ( s, 1 n √ n ) and ( λ i ( e L ) , v i ( e L )) = ( λ i +1 ( L ) , v i +1 ( L )) for 1 ≤ i ≤ n − 1. 9.2 Pr oof of Lemma 2 The graph Laplacian matrix of a disconnected graph con- sisting of δ connected comp onents can be represen ted as a matrix with diagonal blo ck structu re, where eac h blo ck in the diagonal corresp onds to one connected comp onent in G [6], i.e., L = L 1 O O O O L 2 O O O O . . . O O O O L δ , (8) where L k is th e graph Laplacian matrix of k -t h connected compon ent in G . F rom the p roof of Lemma 1 eac h con- nected component contributes to exactly one zero eigenv alue for L , and λ n ( L ) < δ X k =1 X i ∈ comp onent k λ i ( L k ) = δ X k =1 X i ∈ comp onent k s i = s. (9) Therefore, w e hav e the results in Lemma 2. 9.3 Pr oof of Corollary 1 Recall from (2 ) that L N = S − 1 2 LS − 1 2 , and also we h a ve L N S 1 2 1 n = S − 1 2 L1 n = 0 n . Mo reov er, it can b e shown that 0 ≤ λ 1 ( L N ) ≤ λ 2 ( L N ) ≤ . . . ≤ λ n ( L N ) ≤ 2 [15], and λ 2 ( L N ) > 0 if G is connected. F ollo wing the same deriv a- tion for Lemma 1 we obtain the corolla ry . N ote t hat S 1 2 = diag( √ s 1 , √ s 2 , . . . , √ s n ) and ( S 1 2 1 n ) T S 1 2 1 n = 1 T n S1 n = s . 9.4 Pr oof of Corollary 2 The results can b e obtained by follow ing t he same d eriv a- tion pro ced ure in Sec. 9.2 and the fact that λ n ( L N ) ≤ 2 [15]. 9.5 Pr oof of Theor em 1 F rom Le mma 1, L + s n 1 n 1 T n + V K Λ K V T K = n X i = K +1 λ i ( L ) v i ( L ) v T i ( L ) + K X i =2 s · v i ( L ) v T i ( L ) + s n 1 n 1 T n , (10) whic h is a v alid eigenpair decomp osition that can b e seen b y inflating the K smallest eigenv alues of L to s with the orig- inally paired eigenv ectors. Using (10) we obtain th e eigen- v alue decomp osition of e L as e L = L + V K Λ K V T K + s n 1 n 1 T n − s I = n X i = K +1 ( λ i ( L ) − s ) v i ( L ) v T i ( L ) . (11) Since 0 ≤ λ K +1 ( L ) ≤ λ K +2 ( L ) ≤ . . . ≤ λ n ( L ), we ha ve | λ K +1 ( L ) − s | ≥ | λ K +2 ( L ) − s | ≥ . . . ≥ | λ n ( L ) − s | . There- fore, the eigenpair ( λ K +1 ( L ) , v K +1 ( L )) can b e obtained by computing the leading eigenpair of e L . In particular, if L has distinct eigenv alues, th en the leading eigenpair of e L is uniqu e. Therefore, by (11) we hav e the relation ( λ K +1 ( L ) , v K +1 ( L )) = ( λ 1 ( e L ) + s, v 1 ( e L )) . (12) 9.6 Pr oof of Theor em 2 First observe from (8) th at L has δ zero eigen v alues since eac h connected comp onent contributes to exactly one zero eigen v alue for L . F ollowi ng the same deriv ation pro cedure in the pro of of T heorem 1 and using Lemma 2, w e hav e e L = L + V K,δ Λ K,δ V T K,δ + s V δ V T δ − s I = n X i = K +1 ,K ≥ δ ( λ i ( L ) − s ) v i ( L ) v T i ( L ) . (13) Therefore, th e eigenpair ( λ K +1 ( L ) , v K +1 ( L )) can b e obtained by computing the leading eigenpair of e L . If L has distinct nonzero eigen v alues (i.e, λ δ + 1 ( L ) < λ δ + 2 ( L ) < . . . < λ n ( L )), w e obtain the relation ( λ K +1 ( L ) , v K +1 ( L )) = ( λ 1 ( e L )+ s, v 1 ( e L )). 9.7 Complete clustering metrics for user -guided spectral clustering Fig. 7 displays th e five clustering metrics introd uced in Sec. 6. 3 on Minn esota Road, Po wer Grid, CLUTO, Swiss Roll, Y outube, a nd BlogCatal og. T hese metrics are com- puted incrementall y via Al gorithm 1 and they provide mul- tiple criterions for users to decide the final cluster count. 9.8 Demonstration of e quivalence between the pr oposed incr emental computation method and the batch computation method Here we use the K small est eigenpairs of the d atasets in T able 2 computed by the batc h method and Incremental- IO to verify T heorem 1 , which prov es that Incremental-IO exactly compu tes the K -th eigenpair using 1 to ( K − 1)-th eigenpairs. Fig. 8 shows th e normed eigenv alue differences (in terms of ro ot mean squared error) and the correlations of the associated eigen vectors obtained from the tw o metho ds. It can b e observed that th e normed eigenv alue difference is negligible and the associated eigenv ectors are identical up to the difference in sign, i.e., the eigenv ector correlatio n in mag- nitude equals to 1 for every pair of corresp onding eigen vectors of the tw o meth od s. 2 3 4 5 6 7 8 9 10 0 0.5 1 modularity 2 3 4 5 6 7 8 9 10 0 0.02 0.04 NC/K 2 3 4 5 6 7 8 9 10 0 0.5 median cluster size/n 2 3 4 5 6 7 8 9 10 0 0.5 1 maximum cluster size/n 2 3 4 5 6 7 8 9 10 0 0.5 1 x 10 −5 scaled spectrum energy K (a) Minnesota Road 2 4 6 8 10 12 14 16 18 20 0 0.5 1 modularity 2 4 6 8 10 12 14 16 18 20 0 0.02 0.04 NC/K 2 4 6 8 10 12 14 16 18 20 0 0.5 median cluster size/n 2 4 6 8 10 12 14 16 18 20 0 0.5 1 maximum cluster size/n 2 4 6 8 10 12 14 16 18 20 0 0.5 1 x 10 −5 spectrum energy K (b) P o wer Gr id 2 4 6 8 10 12 14 16 18 20 0 0.5 1 modularity 2 4 6 8 10 12 14 16 18 20 0 0.02 0.04 NC/K 2 4 6 8 10 12 14 16 18 20 0 0.5 median cluster size/n 2 4 6 8 10 12 14 16 18 20 0 0.5 1 maximum cluster size/n 2 4 6 8 10 12 14 16 18 20 0 1 2 x 10 −6 spectrum energy K (a) Swiss Roll 2 4 6 8 10 12 14 16 18 20 0 0.5 1 modularity 2 4 6 8 10 12 14 16 18 20 0 0.05 0.1 NC/K 2 4 6 8 10 12 14 16 18 20 0 0.5 median cluster size/n 2 4 6 8 10 12 14 16 18 20 0 0.5 1 maximum cluster size/n 2 4 6 8 10 12 14 16 18 20 0 1 2 x 10 −4 spectrum energy K (b) CLUTO 2 4 6 8 10 12 14 16 18 20 0 0.5 modularity 2 4 6 8 10 12 14 16 18 20 0 0.1 0.2 NC/K 2 4 6 8 10 12 14 16 18 20 0 0.5 median cluster size/n 2 4 6 8 10 12 14 16 18 20 0.6 0.8 1 maximum cluster size/n 2 4 6 8 10 12 14 16 18 20 0 5 x 10 −5 spectrum energy K (a) Y outube 2 4 6 8 10 12 14 16 18 20 −0.2 0 0.2 modularity 2 4 6 8 10 12 14 16 18 20 0.6 0.8 1 NC/K 2 4 6 8 10 12 14 16 18 20 0 0.5 median cluster size/n 2 4 6 8 10 12 14 16 18 20 0 0.5 1 maximum cluster size/n 2 4 6 8 10 12 14 16 18 20 0 0.5 1 x 10 −4 spectrum energy K (b) BlogCatalog Figure 7: Multiple clustering metrics of d ifferent datasets listed in T able 2. The metrics are mo dularity , scaled normalized cut (N C/ K ), scaled median and maximum cluster size, and scaled sp ectrum en ergy . 5 10 15 20 0 2 4 6 8 x 10 −12 number of smallest eigenpairs K normed eigenvalue difference 0 5 10 15 20 25 0 0.5 1 1.5 smallest eigenpairs correlation magnitude (a) Minnesota Road 5 10 15 20 0 2 4 6 8 x 10 −11 number of smallest eigenpairs K normed eigenvalue difference 0 5 10 15 20 25 0 0.5 1 1.5 smallest eigenpairs correlation magnitude (b) P o wer Gr id 5 10 15 20 0 1 2 3 4 x 10 −11 number of smallest eigenpairs K normed eigenvalue difference 0 5 10 15 20 25 0 0.5 1 1.5 smallest eigenpairs correlation magnitude (c) CLUTO 5 10 15 20 0 2 4 6 8 x 10 −11 number of smallest eigenpairs K normed eigenvalue difference 0 5 10 15 20 25 0 0.5 1 1.5 smallest eigenpairs correlation magnitude (d) Swiss Rol l 5 10 15 20 0 1 2 3 4 x 10 −10 number of smallest eigenpairs K normed eigenvalue difference 0 5 10 15 20 25 0 0.5 1 1.5 smallest eigenpairs correlation magnitude (e) Y outube 5 10 15 20 0 0.5 1 x 10 −11 number of smallest eigenpairs K normed eigenvalue difference 0 5 10 15 20 25 0 0.5 1 1.5 smallest eigenpairs correlation magnitude (f ) BlogCatalog Figure 8: Comparisons of the smallest eigenpairs by the batch metho d and In cremental -IO for datasets listed in T able 2.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment