Bilinear residual Neural Network for the identification and forecasting of dynamical systems

Due to the increasing availability of large-scale observation and simulation datasets, data-driven representations arise as efficient and relevant computation representations of dynamical systems for a wide range of applications, where model-driven m…

Authors: Ronan Fablet, Said Ouala, Cedric Herzet

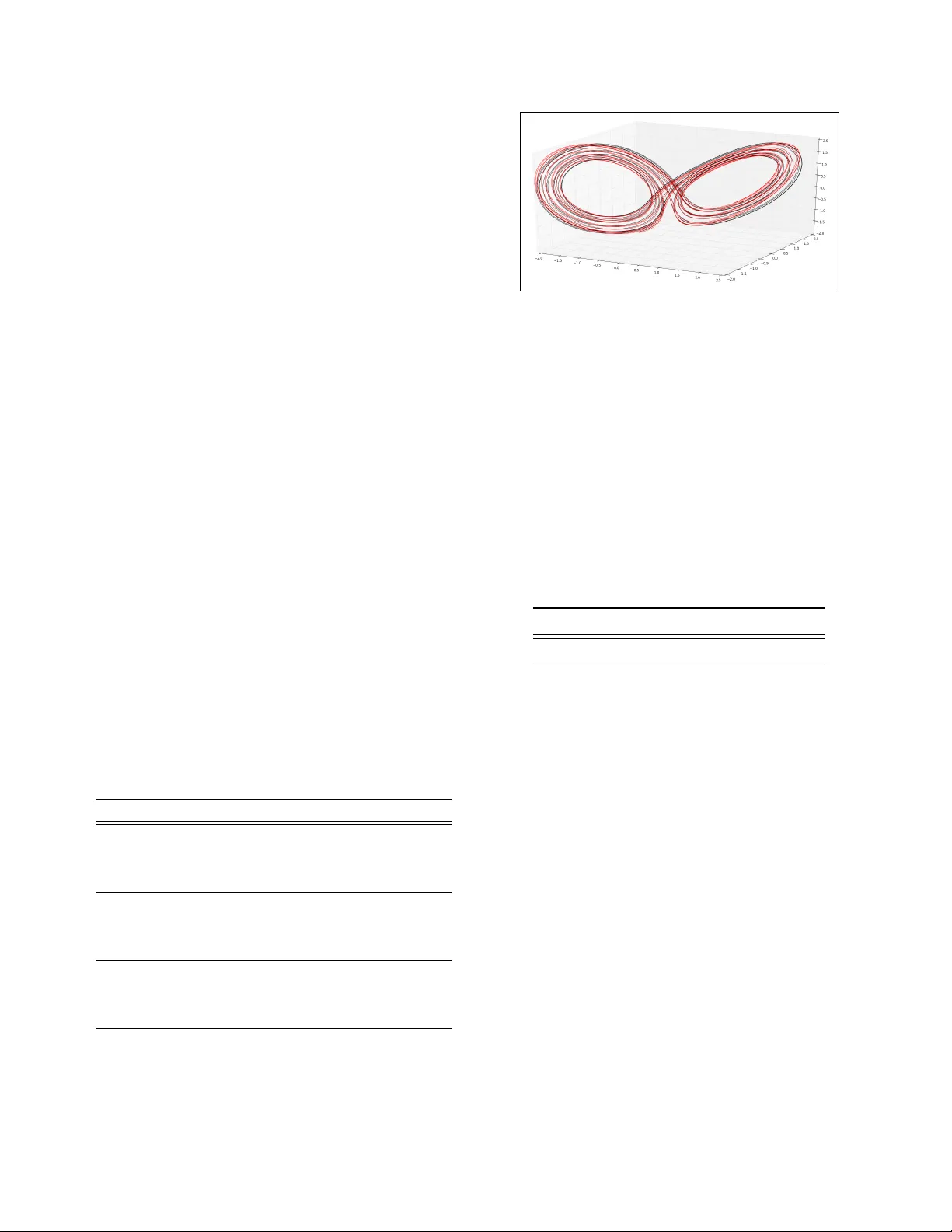

BILINEAR RESIDU AL NEURAL NETWORK FOR THE IDENTIFICA TION AND FORECASTING OF D YNAMICAL SYSTEMS Ronan F ablet 1 , Said Ouala 1 , Cédric Herzet 1 , 2 (1) IMT Atlantique; Lab-STICC, Brest, France (2) INRIA Bretagne-Atlantique, Fluminance, Rennes, France ABSTRA CT Due to the increasing av ailability of large-scale observ ation and simulation datasets, data-dri ven representations arise as efficient and rele vant computation representations of dynami- cal systems for a wide range of applications, where model- driv en models based on ordinary differential equation remain the state-of-the-art approaches. In this work, we in vestigate neural networks (NN) as physically-sound data-driv en repre- sentations of such systems. Reinterpreting Runge-K utta me- thods as graphical models, we consider a residual NN archi- tecture and introduce bilinear layers to embed non-linearities which are intrinsic features of dynamical systems. From nu- merical experiments for classic dynamical systems, we de- monstrate the rele vance of the proposed NN-based architec- ture both in terms of forecasting performance and model iden- tification. Index T erms — Dynamical systems, neural networks, Bi- linear layer , Forecasting, ODE, Runge-K utta methods 1. PR OBLEM ST A TEMENT AND RELA TED WORK Model-driv en strategies have long been the classic frame- work to address forecasting and reconstruction of physical systems. T ypical examples may be taken from geosciences [1]. The ev er increasing a vailability of large-scale observ ation and simulation datasets make more and more appealing the dev elopment of data-driv en strategies especially when dea- ling with computationally-demanding models or high mode- ling uncertainties [1]. In this conte xt, data-dri ven schemes typically aim to iden- tify computational representations of the dynamics of a giv en state from data, i.e. the time evolution of the variable of in- terest. Physical models usually describe this time de volution through an ordinary differential equation (ODE). One may distinguish two main families of data-driv en approaches. A first category inv olves global parametric representations deri- ved from physical principles [2]. Polynomial representations are typical examples [3]. The combination of such represen- tations with sparse regression was recently shown to signifi- This work was supported by Labex Cominlabs (grant SEACS) and CNES (grant OSTST -MAN A TEE). cantly open ne w research a venues. A second cate gory of ap- proach adopts a machine learning point of view and states the considered issue as a regression issue for a predefined time step dt , i.e. the regression of the state at time t + dt given the state at time t . A v ariety of machine learning regression models hav e been inv estigated, among which neural networks and nearest-neighbor models (often referred to as analog fo- recasting models in geoscience) are the most popular ones [4, 5]. Such approaches offer more modeling flexibility to op- timize forecasting performance, at the expense howe ver in ge- neral of a lack of interpretability of the learnt representation. In this work, we in vestigate neural network (NN) repre- sentations for dynamical systems governed by some under- lying b ut unkno wn ODEs. W e aim to deriv e computationally- efficient and physically-sound representations. Our contri- bution is three-fold : i) we make explicit the interpretation of Runge-Kutta inte gration schemes as graphical models to introduce a residual NN architecture, ii) we introduce a NN architecture with bilinear layers to embed intrinsic non-linearities depicted by the dynamical systems, iii) we demonstrate the relev ance of the proposed NN architecture with respect to state-of-the-art models both for model identi- fication and forecasting for dif ferent classic systems, namely Lorenz-63 and Lorenz-96 dynamics [6], which are represen- tativ e of ocean-atmosphere dynamics, and Oregenator system [7], which relates to oscillatory chemical dynamics. This paper is organized as follows. Section 2 describes the proposed NN-based architecture for dynamical systems. Section 3 presents numerical e xperiments. W e further discuss our contributions in Section 4. 2. NEURAL NET ARCHITECTURES FOR D YNAMICAL SYSTEMS W e present in this section the proposed NN architectures to represent and forecast a dynamical system governed by an unknown ODE. W e first point out the graphical representa- tion of Runge-K utta methods as residual neural nets. Based on this graphical representation, we introduce the proposed bilinear NN. W e then discuss training issues and applications to forecasting and reconstruction problems. 2.1. Runge-Kutta methods as r esidual neural nets Let us consider a dynamical system, whose time-v arying state X is go verned by an ordinary differential equation (ODE) : dX t dt = M ( X t , θ ) (1) where θ is some parameters. The fourth-order Runge-Kutta integration scheme is among the most classical ones for si- mulation state dynamics from a gi ven initial condition X ( t 0 ) . It relies on the following sequential update for a predefined integration time step dt : X t 0 +( n +1) dt = X t 0 + n · dt + 4 X i =1 α i k i (2) { k i } are defined as follo ws : k i = M X t 0 + β i k i − 1 dt , θ with k 0 = 0 , α 1 = α 4 = 1 / 6 , α 2 = α 3 = 2 / 6 , β 1 = β 4 = 1 and β 2 = β 3 = 1 / 2 . Runge-Kutta integration scheme (2) may be restated using a graphical model as illustrated in the bottom panel of Fig.1. Assuming we know operator F , the fourth-order runge-Kutta scheme can be reg arded as a recurrent network with a four- layer residual net [8], each layer sharing the same operator F . In this architecture, coef ficients { α i } i refers to the relati ve weights given to the ouputs of the four repeated blocks F . The same holds for coefficient β i which refer to the weight given to the output from block i − 1 when added to input X t when feeded to block i . Based on this representation of numerical integration (2) as a residual net, we may state the identification of dynamical operator M in (1) as the learning of the parameters of block F for a recurrent residual NN stated as in Fig. 1. The other parameters, namely coefficients { α i } i and { β i } i , may be set to the values used in the fourth-order Runge-Kutta scheme or learnt from data. Overall, the key aspect of the consider residual recurrent net is the architecture and parameterization chosen for the shared block F . W e may also stress that the fourth-order architecture sketched in Fig.1 may be extended to any lower - or higher -order scheme. As a special case, the explicit Euler scheme leads to a one-block architecture. Such NN representation of numerical schemes have been in vestigated in previous works [9, 10] to estimate coef ficients { α i } i and { β i } i for a giv en ODE. The objectiv e is here to learn all the parameters of the NN representation of the un- ko wn ODE governing observ ed time series. 2.2. Proposed bilinear neural net ar chitecture Neural net architectures classically e xploit conv olutional, fully-connected and non-linear activ ation layer [8]. F ollowing this classic frame work, operator F may be approximated as a combination of such elementary layers. It may be noted that dynamical systems, as illustrated for instance by Lorenz dy- namics (3) and (5), in volv e non-linearities, which might not Fig. 1 . Proposed bilinear residual arc hitecture f or the repre- sentation of a dynamical system represented by (1). W e illus- trate an architecture associated with a 4 th -order Runge-Kutta- like numerical integration for an elementary time-step dt = 1 . It in volves a four -layer residual neural net with an elementary network F repeated four times. The output of this elemen- tary network in volves a fully-connected layer F C 4 whose in- puts are the concatenation of the output of the fully-connected layer F C 1 and the element-wise product between the outputs of fully-connected layers F C 2 and F C 3 . be well-approximated by the combination of a linear trans- form of the inputs and of a non-linear activ ation layer . Espe- cially physical dynamical systems often inv olve bilinear non- linearities, which express some multiplicativ e interaction bet- ween two physical v ariables [2, 11]. Among classic physical models, one may cite for instance advection-dif fusion dyna- mics or shallo w water equations. Polynomial decompositions then appear as natural representation of dynamical systems for instance for model reduction issues [3]. These considerations motiv ate the introduction of a bili- near neural net architecture. As illustrated in Fig.1, we can combine fully-connected layers and an element-wise product operator to embed a second-order polynomial representation for operator F in the proposed architecture. High-order poly- nomial representation might be embedded similarly . In Fig.1, we illustrate an architecture where operator F can be repre- sented as the linear combination of three linear terms (i.e., linear combination of the input variables) and three bilinear terms (i.e., products between two linear combination of the input variables). In this architecture, the parameterization of the architecture initially relies on the definition of the number of linear and non-linear terms, which relate to the number of hidden nodes in the fully-connected layers, respectiv ely F C 1 and F C 2 , 3 . The calibration of the proposed architecture then comes to learning the weights of the different fully-connected layers. It may be noted that bilinear NN architectures hav e also been proposed in other context [12, 13]. 2.3. T raining issues Giv en the proposed architecture and a selected paramete- rization, i.e. the number of nodes of the fully-connected layers F C 1 , 2 , 3 the number of F blocks, the learning of the model aims primarily to learn the weights of the fully-connected layers associated with block F . As stated previously , coef fi- cients { α i } i and { β i } i from (2) may be set a priori or learned from the data. Gi ven a dataset { X t n , X t n + dt } n , correspon- ding to state time series for a given time resolution dt , the loss function used for training is the root mean square error of the forecasting at one time step dt . Gi ven the relationship between the number of elementary blocks F in the conside- red architecture and the order of the underlying integration scheme, one may consider an incremental strate gy , where we initially consider a one-block architecture, i.e . an explicit Eu- ler inte gration scheme prior to increasing the number of F - blocks for a higher-order numerical scheme. Regarding initialization aspects, the weights of the fully- connected layers F C 1 , 2 , 3 , 4 are set randomly and coef ficients { α i } i and { β i } i are set to those of the associated Runge-Kutta scheme. W e use K eras frame work with T ensorflow back end to implement the proposed architecture. During the learning step, we impose a hard constraint that the dif ferent F -blocks share the same parameters after each training epoch. 2.4. Application to for ecasting and latent dynamics iden- tification In this study , we first consider forecasting of the ev olution of state X from a giv en initial condition X t 0 . For a trained NN architecture, two strategies may be considered : i) the use of the trained architecture as a recurrent neural net architec- ture to forecast a time series for a number of predefined time steps dt , ii) the plug-an-play use of the trained operator F in a classic ordinary differential equation solver . It may be no- ted that, for a trained operator F , the fourth-order architecture sketched in Fig. 1 is numerically equiv alent to a fourth-order Runge-Kutta solv er . W e also explore the potential of the proposed NN repre- sentation for the identification of latent dynamics. More spe- cifically , we assume that we are pro vided with a time series of states { Y t + kdt } k , which is dri ven by the dynamics of a latent lower -dimensional state X t according to a linear mapping, i.e. Y t + kdt = H X t + kdt with H a linear mapping from the low- dimensional space to the hig-dimensional one. T o address the identification of the dynamics of latent state X t from time se- ries { Y t + kdt } k , we consider the proposed NN representation in which F C 1 layer serves as a mapping layer from the high- dimensional space to the low-dimensional one and F C 4 layer becomes mapping block from the low-dimensional block to the high-dimensional one. 3. NUMERICAL EXPERIMENTS This section presents the numerical experiments we per- form to demonstrate the relev ance of the proposed bilinear NN architecture. W e introduce the considered case-studies and our experimental setup, and report results including the benchmarking with respect to state-of-the-art schemes. 3.1. Considered case-studies and experimental setup W e consider three reference dynamical systems to per- form a qualitati ve and quantitative ev aluation of the proposed architecture : namely , Lorenz-63, Oregonator and Lorenz-96 dynamics. For all models, we generate time series exemplars from the numerical integration of the ODE which governs each system. W e use the Shampine and Gordon solver [14]. The Lorenz-63 system is a 3-dimensional system gov er- ned by the following ODE : dX t, 1 dt = σ ( X t, 2 − X t, 2 ) dX t, 2 dt = ρX t, 1 − X t, 2 − X t, 1 X t, 3 dX t, 3 dt = X t, 1 X t, 2 − β X t, 3 (3) Under parameterization σ = 10 , ρ = 28 and β = 8 / 3 , Lorenz-63 system in volv es chaotic dynamics with two attrac- tors. The integration time step dt is set to 0.01. Oregonator system is also a 3-dimensional system. This stiff dynamical system is go verned by : dX t, 1 dt = α ( X t, 2 + X t, 1 (1 − β X t, 1 − X t, 2 )) dX t, 2 dt = 1 α ( X t, 3 − (1 + X t, 1 ) X t, 2 ) dX t, 3 dt = σ ( X t, 1 − X t, 3 ) (4) Here, we consider α = 77 . 27 , β = 8 . 375 . 10 − 6 and σ = 0 . 161 . The integration time step h is set to 0.1. Lorenz-96 system is a 40-dimensional system. It in volves propagation-like dynamics go verned by : dX t,i dt = ( X t,i +1 − X t,i − 2 ) X t,i − 1 + A (5) with periodic boundary conditions ( i.e. X t, − 1 = X t, 40 and X t, 41 = X t, 1 ). T ime step h is set to 0.05 and A = 9 . For each system, we generate a time series of 50000 time steps to create our training dataset and a time series of 1000 time steps for the test dataset. For a giv en data-driv en re- presentation, we ev aluate the forecasting performance as the Root Mean Square error (RMSE) for an integration time step of h , 4 h and 8 h , where h is the integration time step of the si- mulated time series. The RMSE is a veraged over all the initial conditions taken from the test time series. For benchmarking purposes, we compare the proposed bilinear residual NN re- presentation to the following data-dri ven representation : — a sparse regression model [2] referred to as SR. It combines an augmented bilinear state as regression variable and a sparsity-based re gression ; — an analog forecasting operator [5] referred to as AF . It applies locally-linear operators estimated from nearest neighbors, retrie ved according to a Gaussian kernel as in [5] ; Sev eral NN representations are ev aluated : — the proposed bilinear residual architecture using a one-block version (Euler-like setting), referred to as Bi-NN(1), and a four-block version (Runge-Kutta-like setting) with share layers, referred to as Bi-res-NN- SL(4). W e use 3-dimensional (resp. 40-dimensional) fully-connected layers for the linear and bilinear layers F C 1 , 2 , 3 for Lorenz-63 and Oregonator sys- tems (resp. Lorenz-96 system). — a neural network architecture similar to the above four-block one b ut replacing the proposed bilinear block by a classic MLP . From cross-validation experi- ments, we consider a MLP with 5 hidden layers (resp. 11 hidden layers) and 6 nodes in each layer (resp. 80 nods in each layer) for both Lorenz-63 and Oregona- tor models (resp. Lorenz-96 model). This archi tecture is referred to as MLP-SL(4) ; — a MLP architecture trained to predict directly state at time t + h from the state at time t . From cross- validation experiments, we consider a MLP with 5 hidden layers (resp. 10 hidden layers) and 6 nodes in each layer (resp. 80 nodes) for the Lorenz-63 and the Oregonator models (resp. the Lorenz-96 model). This architecture is referred to as MLP . 3.2. Results Learning from noise-free training data : in this experiment, we compare the quality of the forecasted state trajectories ge- nerated using the models described above. The learning of the data-driven models is carried using noise-free time series computed using the analytical dynamical models. T able 1 . F orecasting performance of data-driven models for Lorenz-63, Oregonator and Lorenz-96 dynamics : mean RMSE for different forecasting time steps for the floowing models, AF (A), SR (B), MLP (C), MLP-SL(4) (D), Bi-NN(1) (E), Bi-NN-SL(4) (F). See the main text for details. A B C D E F Lorenz-63 t 0 + h 0 . 001 0 . 002 0 . 114 0 . 009 0 . 002 1.37E-5 t 0 + 4 h 0 . 004 0 . 008 0 . 172 0 . 035 0 . 006 4.79E-5 t 0 + 8 h 0 . 007 0 . 014 0 . 197 0 . 071 0 . 013 8.17E-5 Oregonator t 0 + h > 10 2 6 . 921 3 . 159 4 . 503 0.035 0.038 t 0 + 4 h > 10 2 7 . 558 4 . 660 4 . 961 4 . 458 4.296 t 0 + 8 h > 10 2 8 . 275 4 . 268 4 . 249 3 . 524 3.448 Lorenz-96 t 0 + h 0 . 242 0 . 031 0 . 827 0 . 731 0 . 049 0.012 t 0 + 4 h 0 . 580 0 . 086 1 . 623 1 . 870 0 . 140 0.035 t 0 + 8 h 0 . 988 0 . 147 2 . 215 2 . 752 0 . 246 0.064 Model identification : W e in vestigate model identification performance for Lorenz-63 dynamics in T ab .2. W e report the performance in terms of model parameter estimation for the Fig. 2 . Identification of the latent Lorenz-63 dynamics of an observed 5-dimensional dynamical system : we illustrate the reconstructed 3-dimensional latent dynamics (black,-) w .r .t. the true one (red,-) using the proposed Bi-NN(1) model. See the main text for details. three data-driv en schemes whose parameterization e xplicitly relates to the true physical equations, namely SR, Ni-NN(1) and Bi-NN(4)-SL. Bi-NN(4)-SL leads to a better estimation of model parameters, which explains the better forecasting performance. T able 2 . MSE in the estimation of Lorenz-63 parameters for SR, Bi-NN(1) and Bi-NN(4)-SL models. See the main manuscript for details. SR Bi-NN(1) Bi-NN(4)-SL MSE 0.0387 0.2570 0.0239 Identification of latent Lorenz-63 dynamics : W e further illustrate the potential of the proposed bilinear NN represen- tation for the identification of latent lo wer-dimensional dyna- mics for an observed dynamical system. In Fig.2, we consi- der a 5-dimensional system, dri ven by underlying Lorenz- 63 dynamics according to a linear mapping. Using solely a time series of observations of the 5-dimensional system with dt = 0 . 01 , we successfully identify the underlying low-dimensional chaotic behavior . It may be noted that the identification issue is achiev ed up to a 3 × 3 rotation matrix. 4. CONCLUSION In this w ork, we demonstrated the rele vance of a residual bilinear neural net representation for the modeling and identi- fication of dynamical systems. Our NN-based representation relies on the representation of classic numerical scheme as a multi-layer network. This NN representation opens new research av enues for the e xploitation of machine-learning- based and physically-sound strategies for the modeling, iden- tification and reconstruction of dynamical systems. 5. REFERENCES [1] G. Evensen, Data Assimilation , Springer Berlin Heidel- berg, Berlin, Heidelber g, 2009. [2] S. L. Brunton, J. L. Proctor , and J. N. Kutz, “Discov e- ring go verning equations from data by sparse identifica- tion of nonlinear dynamical systems, ” Pr oceedings of the National Academy of Sciences , v ol. 113, no. 15, pp. 3932–3937, Apr . 2016. [3] J. Paduart, L. Lauwers, J. Swe vers, K. Smolders, J. Schoukens, and R. Pintelon, “Identification of non- linear systems using Polynomial Nonlinear State Space models, ” A utomatica , vol. 46, no. 4, pp. 647–656, Apr . 2010. [4] Z. Zhao and D. Giannakis, “ Analog Forecasting with Dynamics-Adapted Kernels, ” arXiv :1412.3831 [phy- sics] , Dec. 2014, arXiv : 1412.3831. [5] R. Lguensat, P . T andeo, P . Aillot, and R. Fablet, “The Analog Data Assimilation, ” Monthly W eather Review , 2017. [6] Edward N. Lorenz, “Deterministic Nonperiodic Flo w, ” Journal of the Atmospheric Sciences , v ol. 20, no. 2, pp. 130–141, Mar . 1963. [7] R.J. Field and M.J. Schneider, “Oscillating chemical reactions and nonlinear dynamics, ” J ournal of Chemical Education , vol. 66, no. 3, 1989. [8] Y . LeCun, Y . Bengio, and G. Hinton, “Deep learning, ” Natur e , vol. 521, no. 7553, pp. 436–444, May 2015. [9] A. A. Anastassi, “Constructing Runge–Kutta methods with the use of artificial neural networks, ” Neural Com- puting and Applications , vol. 25, no. 1, pp. 229–236, July 2014. [10] C. Tsitouras, “Neural networks with multidimensional transfer functions, ” IEEE T ransactions on Neural Net- works , vol. 13, no. 1, pp. 222–228, Jan. 2002. [11] N. M. Mangan, J. N. K utz, S. L. Brunton, and J. L. Proc- tor , “Model selection for dynamical systems via sparse regression and information criteria, ” Pr oc. R. Soc. A , vol. 473, no. 2204, pp. 20170009, Aug. 2017. [12] T . Y . Lin, A. RoyChowdhury , and S. Maji, “Bilinear CNN Models for Fine-Grained V isual Recognition, ” in 2015 IEEE International Conference on Computer V i- sion (ICCV) , Dec. 2015, pp. 1449–1457. [13] D.C. Park and T .-K. Jeong, “Complex-bilinear recur- rent neural network for equalization of a digital satell ite channel, ” IEEE T ransactions on Neural Networks , v ol. 13, no. 3, pp. 711–725, May 2002. [14] R. Ashino, M. Nagase, and R. V aillancourt, “Behind and be yond the Matlab ODE suite, ” Computers & Ma- thematics with Applications , vol. 40, no. 4, pp. 491–512, Aug. 2000.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment