TorPolice: Towards Enforcing Service-Defined Access Policies in Anonymous Systems

Tor is the most widely used anonymity network, currently serving millions of users each day. However, there is no access control in place for all these users, leaving the network vulnerable to botnet abuse and attacks. For example, criminals frequently use exit relays as stepping stones for attacks, causing service providers to serve CAPTCHAs to exit relay IP addresses or to blacklist them altogether, which leads to severe usability issues for legitimate Tor users. To address this problem, we propose TorPolice, the first privacy-preserving access control framework for Tor. TorPolice enables abuse-plagued service providers such as Yelp to enforce access rules to police and throttle malicious requests coming from Tor while still providing service to legitimate Tor users. Further, TorPolice equips Tor with global access control for relays, enhancing Tor’s resilience to botnet abuse. We show that TorPolice preserves the privacy of Tor users, implement a prototype of TorPolice, and perform extensive evaluations to validate our design goals.

💡 Research Summary

TorPolice introduces the first privacy‑preserving access‑control framework for the Tor anonymity network, addressing the long‑standing problem that Tor lacks any mechanism to limit how its users can interact with external services. Because anyone can launch a Tor client without restriction, botnets routinely exploit Tor exit relays to conduct spam, web‑scraping, vulnerability scanning, and command‑and‑control operations. Service providers consequently treat Tor users as “second‑class” citizens, serving them CAPTCHAs or blacklisting exit IPs outright, which harms legitimate users. TorPolice mitigates this tension by allowing service operators and the Tor network itself to enforce fine‑grained, service‑defined access policies while preserving Tor’s core anonymity guarantees.

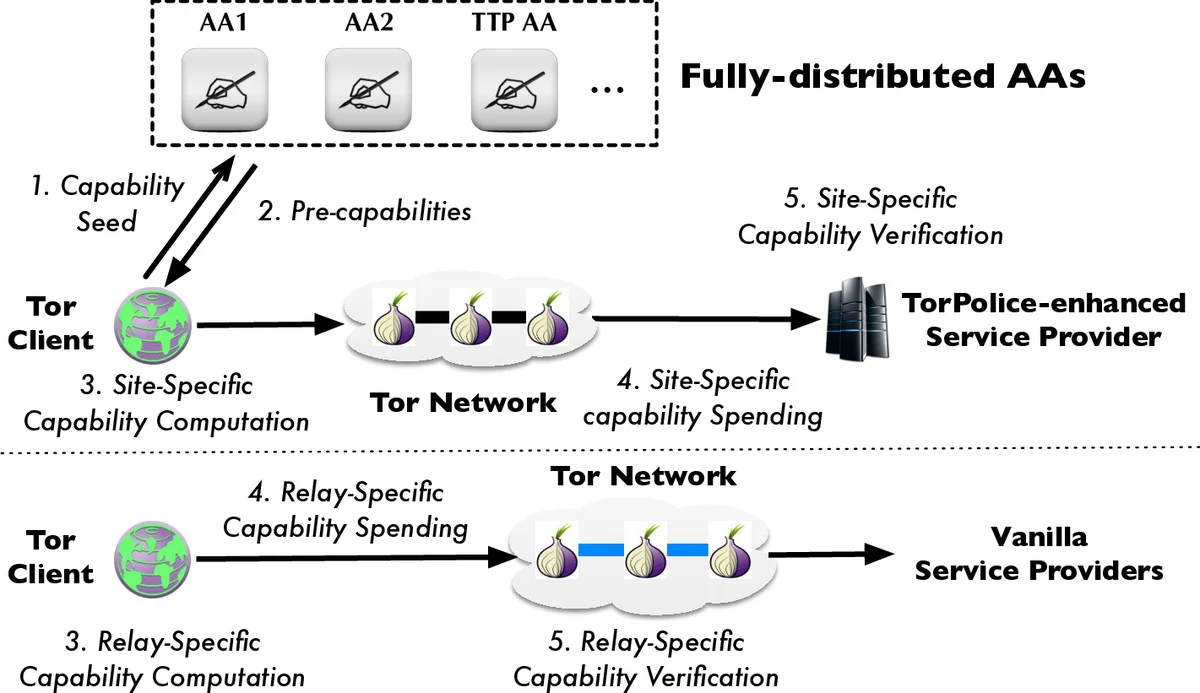

The system is built around capability tokens issued by a set of fully distributed, partially‑trusted Access Authorities (AAs). A client first obtains a “capability seed” – a resource that is easy to acquire but costly to scale, such as a solved CAPTCHA or a computational puzzle. The seed is submitted anonymously to an AA, which issues a pre‑capability using a blind‑signature protocol. Blind signatures break the link between the request and the later use of the token, ensuring that the AA cannot correlate a client’s identity with its subsequent activity. Each AA maintains two distinct key pairs and two rate‑limiters: one for service‑access capabilities and another for relay‑access capabilities. The public keys are published in the Tor consensus so that any client, relay, or service can verify signatures.
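
The issuance step can be illustrated with a textbook (Chaum‑style) RSA blind signature. This is a minimal sketch under stated assumptions: the summary does not say which blind‑signature scheme TorPolice uses, the key is a toy 12‑bit modulus, and `hash_to_int`, `blind`, `sign_blinded`, `unblind`, and `verify` are hypothetical names. It shows why the AA learns nothing linkable: it only ever sees `m * r^e mod n`, yet the client ends up holding an ordinary signature on `m` that anyone can check with the published key. It needs Python 3.8+ for `pow(r, -1, n)`.

```python
import hashlib
from math import gcd

# Toy AA key pair for service-access capabilities (12-bit modulus: illustration only).
P, Q = 61, 53
N = P * Q                           # public modulus, 3233
E = 17                              # public exponent
D = pow(E, -1, (P - 1) * (Q - 1))   # private exponent (2753), held only by the AA


def hash_to_int(msg: bytes, n: int) -> int:
    """Map token bytes to an integer mod n (unpadded stand-in for a full-domain hash)."""
    return int.from_bytes(hashlib.sha256(msg).digest(), "big") % n


def blind(m: int, r: int, e: int, n: int) -> int:
    """Client: hide m behind the blinding factor r before contacting the AA."""
    if gcd(r, n) != 1:
        raise ValueError("blinding factor must be invertible mod n")
    return (m * pow(r, e, n)) % n


def sign_blinded(m_blinded: int, d: int, n: int) -> int:
    """AA: sign the blinded value (seed checking omitted here); it never sees m."""
    return pow(m_blinded, d, n)


def unblind(s_blinded: int, r: int, n: int) -> int:
    """Client: remove r, leaving an ordinary signature m^d mod n."""
    return (s_blinded * pow(r, -1, n)) % n


def verify(m: int, s: int, e: int, n: int) -> bool:
    """Client, relay, or service: check the signature with the AA's public key."""
    return pow(s, e, n) == m


# Client side: the token contents and the fixed r are hypothetical demo values;
# a real client would draw r at random (e.g. with the secrets module).
m = hash_to_int(b"pre-capability nonce 42", N)
r = 7
pre_capability_sig = unblind(sign_blinded(blind(m, r, E, N), D, N), r, N)
print(verify(m, pre_capability_sig, E, N))  # True
```

A real deployment would use a padded construction, such as the RSA blind signatures standardized in RFC 9474, with realistic key sizes and a cryptographically random blinding factor.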

From a pre‑capability, the client locally derives either a site‑specific capability (to access a particular TorPolice‑enhanced service) or a relay‑specific capability (to build a Tor circuit through a particular relay). The client presents the appropriate capability when contacting the service or when establishing a circuit; the recipient verifies the AA’s signature and checks that the token complies with its locally configured policy (e.g., maximum requests per minute, required puzzle‑solution difficulty, etc.). Because the AA’s rate‑limiters are per‑seed, a malicious botnet can only obtain a bounded number of tokens proportional to the number of seeds it can solve, preventing unlimited token generation. Moreover, the system allows each service to define its own policy parameters, such as throttling the number of reviews per capability for Yelp or requiring higher‑difficulty puzzles for high‑risk endpoints.
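
On the service side, the policy check reduces to a per‑capability rate limiter plus a minimum seed difficulty. The sketch below assumes the AA signature has already been verified and that the token conveys the difficulty of the seed behind it. `derive_site_capability` (a SHA‑256 binding of a pre‑capability to a site name) and `ServicePolicy` are hypothetical illustrations, not the construction from the paper.

```python
import hashlib
from dataclasses import dataclass, field
from typing import Dict, Tuple


def derive_site_capability(pre_capability: bytes, site: str) -> str:
    """Hypothetical: bind a pre-capability to one site by hashing the pair."""
    return hashlib.sha256(site.encode() + b"|" + pre_capability).hexdigest()


@dataclass
class ServicePolicy:
    max_requests: int        # e.g. reviews allowed per capability per window
    window_seconds: float    # length of each fixed rate-limiting window
    min_difficulty: int      # weakest seed (puzzle difficulty) the site accepts
    _windows: Dict[str, Tuple[float, int]] = field(default_factory=dict, repr=False)

    def admit(self, capability: str, seed_difficulty: int, now: float) -> bool:
        """Apply the site's policy to a capability whose AA signature already verified."""
        if seed_difficulty < self.min_difficulty:
            return False
        start, count = self._windows.get(capability, (now, 0))
        if now - start >= self.window_seconds:
            start, count = now, 0  # previous window expired: start a fresh one
        if count >= self.max_requests:
            return False
        self._windows[capability] = (start, count + 1)
        return True


# A Yelp-like site allowing three reviews per capability per minute.
yelp = ServicePolicy(max_requests=3, window_seconds=60.0, min_difficulty=2)
cap = derive_site_capability(b"signed-pre-capability", "yelp.com")
print([yelp.admit(cap, seed_difficulty=2, now=t) for t in (0, 1, 2, 3)])
# → [True, True, True, False]
```

The fourth request is refused until the window expires at t = 60. Because the counter is keyed by the capability rather than by the exit IP, a legitimate user sharing an exit relay with a bot is not throttled by the bot's traffic.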

On the network side, relay‑specific capabilities enable global rate‑limiting of circuit creation. Instead of each relay applying its own local limits (which bots can bypass by rotating relays), a relay will only accept a circuit request that carries a valid capability signed by an AA. This creates a network‑wide “token bucket” that caps the total number of circuits a client can open, dramatically reducing the load that bot‑driven circuit storms place on the Tor infrastructure.
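
The network‑wide cap can be sketched as a token bucket held per seed at the point of issuance rather than per relay. Everything here is hypothetical: `RelayCapabilityIssuer`, `relay_accepts`, the bucket parameters, and the assumption that each capability is spent on a single circuit. In this single‑process sketch, the `valid` and `spent` sets stand in for AA signature verification and per‑relay double‑spend tracking.

```python
from dataclasses import dataclass
from typing import Dict, Optional, Set


@dataclass
class TokenBucket:
    capacity: float        # burst: most relay capabilities one seed can hold at once
    refill_per_sec: float  # sustained issuance rate per seed
    tokens: float
    last: float

    def take(self, now: float) -> bool:
        # Refill in proportion to elapsed time, never above capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.refill_per_sec)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


class RelayCapabilityIssuer:
    """Hypothetical AA-side limiter for relay-access capabilities, keyed by seed."""

    def __init__(self, capacity: float, refill_per_sec: float) -> None:
        self.capacity = capacity
        self.refill_per_sec = refill_per_sec
        self.buckets: Dict[str, TokenBucket] = {}
        self.valid: Set[str] = set()  # stand-in for "carries a valid AA signature"
        self.spent: Set[str] = set()  # assumption: one capability per circuit
        self._next_id = 0

    def issue(self, seed: str, now: float) -> Optional[str]:
        if seed not in self.buckets:
            self.buckets[seed] = TokenBucket(self.capacity, self.refill_per_sec, self.capacity, now)
        if not self.buckets[seed].take(now):
            return None  # this seed's budget is exhausted, whichever relay it targets
        self._next_id += 1
        cap = f"relay-cap-{self._next_id}"
        self.valid.add(cap)
        return cap


def relay_accepts(issuer: RelayCapabilityIssuer, cap: Optional[str]) -> bool:
    """Any relay: build the circuit only for a valid, unspent capability."""
    if cap is None or cap not in issuer.valid or cap in issuer.spent:
        return False
    issuer.spent.add(cap)
    return True


# One bot seed asks for 10 circuits at t=0.
issuer = RelayCapabilityIssuer(capacity=3, refill_per_sec=0.5)
caps = [issuer.issue("seed-A", now=0.0) for _ in range(10)]
print(sum(relay_accepts(issuer, c) for c in caps))  # 3
```

Because the budget is charged when capabilities are issued, spreading the ten circuit requests across different relays would not raise the count above three; new capacity appears only as the seed's bucket refills.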

The security analysis assumes AAs are honest‑but‑curious: they follow the protocol but may try to infer additional information. The use of blind signatures, per‑seed rate limiting, and the restriction to a single key pair per capability type prevent an AA from partitioning the anonymity set or linking multiple requests to the same client. Even if an AA is compromised, the distributed nature of the authority set and the ability of services to blacklist misbehaving AAs limit the impact. The authors also discuss DDoS resilience, recommending that AAs be hosted behind commercial DDoS‑mitigation services and that the Tor directory authorities continue to provide redundancy.
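
From a service's perspective, blacklisting a misbehaving AA amounts to changing the set of public keys it accepts. The sketch assumes textbook RSA with toy keys and hypothetical names (`CapabilityVerifier`, `blacklist_aa`, AA identifiers `aa1` and `aa2`), and it models only each AA's service‑access key. Every client's capability from a given AA verifies under the same published key, so the key used reveals nothing about which client presented it. This is the intuition behind the single‑key‑pair restriction.

```python
import hashlib
from typing import Dict, Set, Tuple

PublicKey = Tuple[int, int]  # (e, n) for textbook RSA; toy sizes only


def hash_to_int(msg: bytes, n: int) -> int:
    """Map token bytes to an integer mod n (unpadded, illustration only)."""
    return int.from_bytes(hashlib.sha256(msg).digest(), "big") % n


class CapabilityVerifier:
    """Hypothetical service-side check: accept capabilities signed by any AA whose
    service-access key is in the consensus, unless that AA is locally blacklisted."""

    def __init__(self, consensus_keys: Dict[str, PublicKey]) -> None:
        self.keys = dict(consensus_keys)
        self.blacklist: Set[str] = set()

    def blacklist_aa(self, aa_id: str) -> None:
        self.blacklist.add(aa_id)

    def verify(self, aa_id: str, token: bytes, signature: int) -> bool:
        if aa_id in self.blacklist or aa_id not in self.keys:
            return False
        e, n = self.keys[aa_id]
        return pow(signature, e, n) == hash_to_int(token, n)


# Two toy AAs: aa1 = (17, 3233) with private exponent 2753, aa2 = (3, 253) with 147.
verifier = CapabilityVerifier({"aa1": (17, 3233), "aa2": (3, 253)})
token = b"service-capability"                      # hypothetical token bytes
sig = pow(hash_to_int(token, 3233), 2753, 3233)    # what aa1 would issue
print(verifier.verify("aa1", token, sig))  # True
verifier.blacklist_aa("aa1")
print(verifier.verify("aa1", token, sig))  # False
```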

A prototype was implemented by modifying the Tor client and relay code and adding a lightweight capability‑verification module to a sample service. Evaluation was performed both in the Shadow network simulator and on the live Tor network. Results show that capability issuance and verification add less than 1 % CPU overhead, and the additional latency for circuit establishment is on the order of tens of milliseconds, well within typical Tor latency variance. In service‑level experiments, TorPolice reduced malicious request rates by over 80 % while keeping false‑positive denial of legitimate traffic below 2 %.

In summary, TorPolice achieves four major contributions: (1) it introduces a practical, privacy‑preserving access‑control layer for Tor; (2) it enables both service providers and the Tor network to enforce independent, fine‑grained policies; (3) it does so without centralizing trust, using a set of distributed AAs and blind signatures; and (4) it is incrementally deployable, allowing upgraded clients, relays, and services to interoperate with legacy components. Future work includes exploring additional seed types (e.g., anonymous cryptocurrency proofs), dynamic policy adaptation based on real‑time abuse metrics, and large‑scale longitudinal studies of TorPolice’s impact on both network health and user experience.
