A Novel Approach for Effective Learning in Low Resourced Scenarios

Deep learning based discriminative methods, being the state-of-the-art machine learning techniques, are ill-suited for learning from lower amounts of data. In this paper, we propose a novel framework, called simultaneous two sample learning (s2sL), t…

Authors: Sri Harsha Dumpala, Rupayan Chakraborty, Sunil Kumar Kopparapu

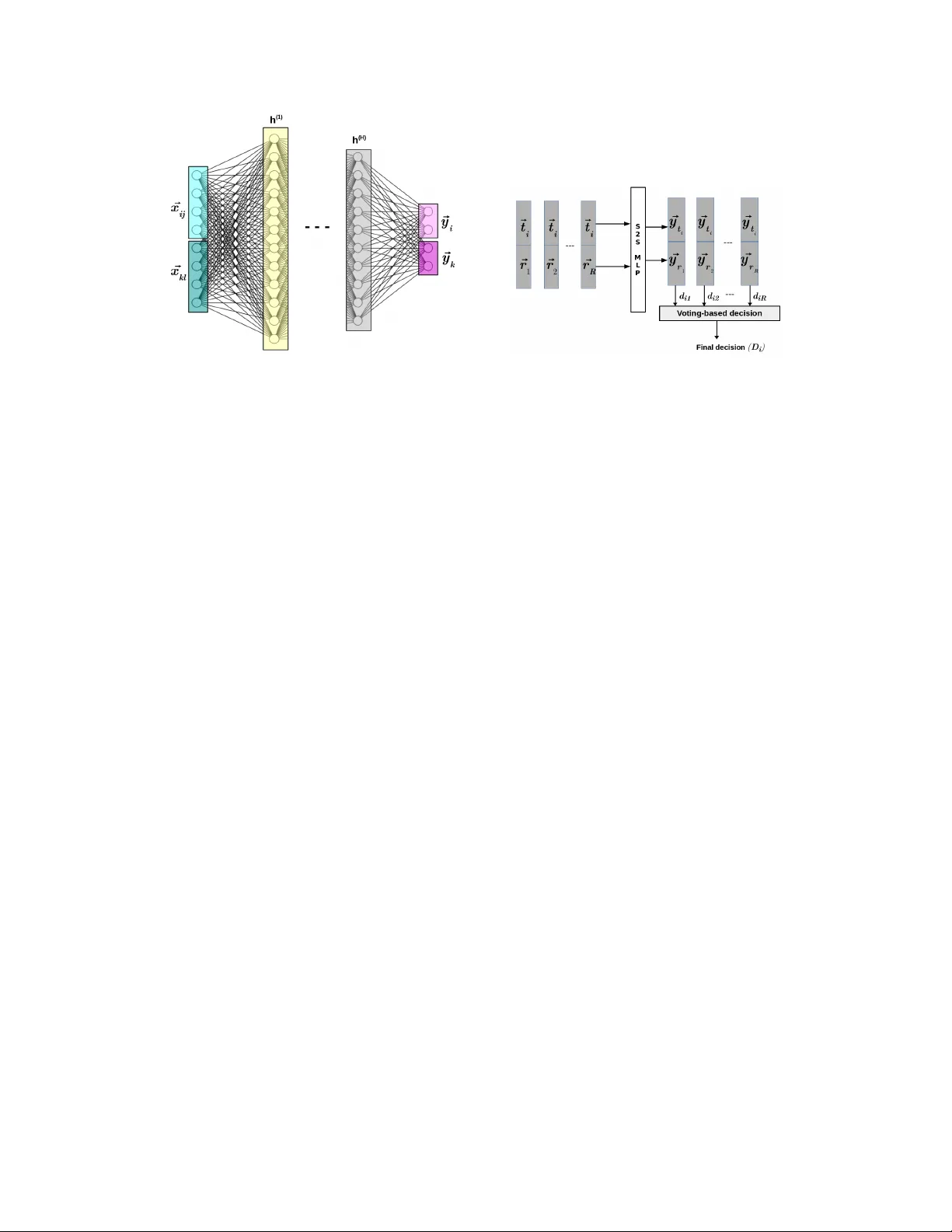

Sri Harsha Dumpala, TCS Research and Innovation-Mumbai, d.harsha@tcs.com
Rupayan Chakraborty, TCS Research and Innovation-Mumbai, rupayan.chakraborty@tcs.com
Sunil Kumar Kopparapu, TCS Research and Innovation-Mumbai, sunilkumar.kopparapu@tcs.com

Abstract

Deep learning based discriminative methods, being the state-of-the-art machine learning techniques, are ill-suited for learning from lower amounts of data. In this paper, we propose a novel framework, called simultaneous two sample learning (s2sL), to effectively learn the class discriminative characteristics, even from a very low amount of data. In s2sL, more than one sample (here, two samples) is simultaneously considered to both train and test the classifier. We demonstrate our approach for speech/music discrimination and emotion classification through experiments. Further, we also show the effectiveness of the s2sL approach for classification in low-resource scenarios and for imbalanced data.

1 Introduction

Deep neural networks (DNNs), in particular convolutional and recurrent neural networks with huge architectures, have proven successful in a wide range of tasks, including audio processing tasks such as speech-to-text [1-4], emotion recognition [5-8], and speech/non-speech classification (where non-speech includes noise, music, etc.) [9-12]. Training these deep architectures requires a large amount of annotated data; as a result, they cannot be used in low-data-resource scenarios, which are common in speech-based applications [13-15]. Apart from the cost of collecting a large data corpus, annotating the data is also very difficult and requires manual supervision and effort. In particular, annotation of speech for tasks like emotion recognition also suffers from a lack of agreement among the annotators [16]. Hence, there is a need to build reliable systems that can work in low-resource scenarios.

In this work, we propose a novel approach to address the task of classification in low-data-resource scenarios. Our approach involves simultaneously considering more than one sample (in this work, two samples) to train the classifier. We call this approach simultaneous two sample learning (s2sL). The proposed approach is also applicable to low-resource data suffering from data imbalance. The contributions of this paper are:

• A representation of the training data in which the feature vectors of two different samples are simultaneously considered to train the classifier.
• Modifications to the multi-layer perceptron (MLP) architecture so that it can be trained using our proposed data representation.
• A new decision mechanism to classify the test samples.

Presented at the NIPS 2017 Machine Learning for Audio Signal Processing (ML4Audio) Workshop, Dec. 2017.

Figure 1: Training of s2s-MLP.

Figure 2: Testing of s2s-MLP.

2 Proposed approach

The s2sL approach proposed to address the low-data-resource problem is explained in this section. In this work, we use an MLP (modified to handle our data representation) as the base classifier. Here, we explain the s2sL approach by considering a two-class classification task.

2.1 Data representation

Consider a two-class classification task with $\mathcal{C} = \{C_1, C_2\}$ denoting the set of class labels, and let $N_1$ and $N_2$ be the number of samples corresponding to $C_1$ and $C_2$, respectively. In general, to train a classifier, the samples in the train set are provided as input-output pairs as follows:

$$(\vec{x}_{ij}, C_i), \quad i = 1, 2; \; j = 1, 2, \cdots, N_i, \tag{1}$$

where $\vec{x}_{ij} \in \mathbb{R}^{d \times 1}$ is the $d$-dimensional feature vector representing the $j$th sample of the $i$th class, and $C_i \in \mathcal{C}$ is the output label of the $i$th class.

In the proposed data representation format, called the simultaneous two sample (s2s) representation, we simultaneously consider two samples as follows:

$$([\vec{x}_{ij}, \vec{x}_{kl}], [C_i, C_k]), \quad \forall\, i, k = 1, 2; \; j = 1, 2, \cdots, N_i; \; l = 1, 2, \cdots, N_k, \tag{2}$$

where $\vec{x}_{ij}, \vec{x}_{kl} \in \mathbb{R}^{d \times 1}$ are the $d$-dimensional feature vectors representing the $j$th sample in the $i$th class and the $l$th sample in the $k$th class, respectively, and $C_i, C_k \in \mathcal{C}$ are the output labels of the $i$th and $k$th class, respectively. Hence, in the s2s data representation, we have an input feature vector of length $2d$, i.e., $[\vec{x}_{ij}, \vec{x}_{kl}] \in \mathbb{R}^{2d \times 1}$, and output class labels that are one of $[C_1, C_1]$, $[C_1, C_2]$, $[C_2, C_1]$ or $[C_2, C_2]$. Each sample can be combined with all the samples (i.e., with all $N_1 + N_2$ samples) in the dataset. Therefore, by representing the data in the s2s format, the number of samples in the train set increases from $(N_1 + N_2)$ to $(N_1 + N_2)^2$. We hypothesize that the s2s format helps the network learn not only the characteristics of the two classes separately, but also the differences and similarities between the characteristics of the two classes.

2.2 Classifier training

The MLP, the most commonly used feed-forward neural network, is considered as the base classifier to validate our proposed s2s framework. Generally, MLPs are trained using the data format given by eq. (1). To train the MLP on our s2s data representation (as in eq. (2)), the following modifications are made to the MLP architecture (refer to Figure 1).

• The input layer has $2 \times d$ units (instead of $d$ units) to accept the two samples, i.e., $\vec{x}_{ij}$ and $\vec{x}_{kl}$, simultaneously.
• The structure of the hidden layer is similar to that of a regular MLP. The number of hidden layers and hidden units can be varied depending on the complexity of the problem. The number of units in the hidden layer is selected empirically by varying it from 2 to twice the length of the input layer (i.e., from 2 to $4 \times d$) and choosing the value at which the highest performance is obtained. In this paper, we considered only a single hidden layer. Rectified linear units (ReLU) are used for the hidden layer.
• The output layer consists of twice as many units as there are classes in the classification task, i.e., four units for a two-class task. Unlike a regular MLP, we use sigmoid activation units (not softmax) in the output layer, with binary cross-entropy as the cost function. This is because the output labels in the proposed s2s data representation have more than one unit active at a time (they are not one-hot encoded), a condition that cannot be handled using the softmax function.

As can be seen from Figure 1, the output layer in our proposed method has outputs $\vec{y}_i$ and $\vec{y}_k$, which correspond to the outputs associated with the input feature vectors $\vec{x}_{ij}$ and $\vec{x}_{kl}$, respectively. For a two-class classification problem, there are four units in the output layer, and the possible output labels are $[0, 1, 0, 1]$, $[0, 1, 1, 0]$, $[1, 0, 0, 1]$ and $[1, 0, 1, 0]$, corresponding to the class labels $[C_1, C_1]$, $[C_1, C_2]$, $[C_2, C_1]$ and $[C_2, C_2]$, respectively. This architecture is referred to as s2s-MLP. In s2sL, the s2s-MLP is trained using the s2s data representation format. Further, the s2s-MLP is trained using the Adam optimizer.

2.3 Classifier testing

Generally, the feature vector corresponding to the test sample is provided as input to the trained MLP in the testing phase, and the class label is decided based on the obtained output.
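
As a point of reference for the training side (Sections 2.1 and 2.2), the following minimal sketch builds the s2s pairs of eq. (2) with their four-unit targets and runs one forward pass through a single-hidden-layer network with ReLU hidden units, sigmoid outputs and a binary cross-entropy cost. It is an illustration under stated assumptions, not the authors' implementation: the function names (`make_s2s_pairs`, `init_s2s_mlp`, `forward`, `binary_cross_entropy`), the weight initialisation and the toy data are hypothetical, and the Adam update step is omitted.

```python
import numpy as np

# Label encoding taken from Section 2.2: C1 -> [0, 1], C2 -> [1, 0].
CLASS_CODE = {1: (0.0, 1.0), 2: (1.0, 0.0)}


def make_s2s_pairs(X, y):
    """Build the s2s representation of eq. (2).

    X: (N, d) feature matrix, y: (N,) labels in {1, 2}.
    Returns inputs of shape (N*N, 2d) and targets of shape (N*N, 4).
    Every sample is paired with every sample (itself included),
    which gives the (N1 + N2)^2 training pairs stated in the text.
    """
    X = np.asarray(X, dtype=float)
    y = np.asarray(y)
    n = X.shape[0]
    first = np.repeat(np.arange(n), n)   # index of the first sample of each pair
    second = np.tile(np.arange(n), n)    # index of the second sample of each pair
    inputs = np.hstack([X[first], X[second]])
    codes = np.array([CLASS_CODE[int(c)] for c in y], dtype=float)
    targets = np.hstack([codes[first], codes[second]])
    return inputs, targets


def init_s2s_mlp(d, hidden, n_classes=2, seed=0):
    """Hypothetical initialisation: 2d inputs, one hidden layer, 2*n_classes outputs."""
    rng = np.random.default_rng(seed)
    return {
        "W1": rng.normal(0.0, 0.1, size=(2 * d, hidden)),
        "b1": np.zeros(hidden),
        "W2": rng.normal(0.0, 0.1, size=(hidden, 2 * n_classes)),
        "b2": np.zeros(2 * n_classes),
    }


def forward(params, inputs):
    """ReLU hidden layer followed by independent sigmoid output units."""
    h = np.maximum(0.0, inputs @ params["W1"] + params["b1"])
    logits = h @ params["W2"] + params["b2"]
    return 1.0 / (1.0 + np.exp(-logits))


def binary_cross_entropy(pred, target, eps=1e-12):
    """Mean binary cross-entropy over all output units."""
    pred = np.clip(pred, eps, 1.0 - eps)
    return float(-np.mean(target * np.log(pred) + (1.0 - target) * np.log(1.0 - pred)))


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    X = rng.normal(size=(5, 13))           # toy data: 5 samples, d = 13
    y = np.array([1, 1, 1, 2, 2])
    inputs, targets = make_s2s_pairs(X, y)  # 5 samples -> 25 pairs
    params = init_s2s_mlp(d=13, hidden=26)
    print(inputs.shape, targets.shape, binary_cross_entropy(forward(params, inputs), targets))
```

Because two of the four target units are active for every pair, a softmax output (which forces the units to sum to one) would not fit these targets, which is why the sketch uses one sigmoid per unit.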

In the s2sL method, however, the feature vector corresponding to the test sample must also be converted to the s2s data representation format in order to test the trained s2s-MLP. We propose a testing approach in which the given test sample is combined with a set of preselected reference samples, whose class labels are known a priori, to generate multiple instances of the same test sample as follows:

$$[\vec{t}, \vec{r}_j], \quad j = 1, 2, \cdots, R, \tag{3}$$

where $\vec{t} \in \mathbb{R}^{d \times 1}$ and $\vec{r}_j \in \mathbb{R}^{d \times 1}$ are the $d$-dimensional feature vectors corresponding to the test sample and the $j$th reference sample, respectively, and $R$ is the number of reference samples considered. These reference samples can be selected from either of the two classes.

For testing the s2s-MLP (as shown in Figure 2), each test sample $\vec{t}_i$ (the same as $\vec{t}$ in eq. (3)) is combined with all $R$ reference samples $(\vec{r}_1, \vec{r}_2, \cdots, \vec{r}_R)$ to form $R$ different instances of the same test sample $\vec{t}_i$. The corresponding outputs $(d_{i1}, d_{i2}, \cdots, d_{iR})$ obtained from the s2s-MLP for the $R$ generated instances of $\vec{t}_i$ are combined by a voting-based decision approach to obtain the final decision $D_i$. The class label that gets the maximum number of votes is taken as the predicted output label.

3 Experiments

We validate the performance of the proposed s2sL by providing preliminary results obtained on two different tasks, namely speech/music discrimination and emotion classification. For the task of classifying speech and music, we considered the GTZAN Music-Speech dataset [17], consisting of 120 audio files (60 speech and 60 music). Each audio file (of 2 seconds duration) is represented using a 13-dimensional mel-frequency cepstral coefficient (MFCC) vector, where each MFCC vector is the average of all the frame-level MFCC vectors (frame size of 30 msec and an overlap of 10 msec).
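
With each sample reduced to a fixed-length feature vector, the voting-based test procedure of Section 2.3 can be sketched as follows. This is a hedged illustration only: `s2s_predict`, `decode_first_sample` and `toy_predict_pair` are hypothetical names, the toy scorer merely stands in for a trained s2s-MLP, and two details are assumptions that the text does not spell out, namely that each instance's vote is read from the first two output units (those associated with the test sample placed first in the pair) and that ties are broken in favour of $C_1$.

```python
import numpy as np


def decode_first_sample(output4):
    """Map a 4-unit output to a vote for the sample in the FIRST input slot.

    Assumption: units 0-1 belong to the first sample, with the encoding
    C1 -> [0, 1] and C2 -> [1, 0] from Section 2.2.
    Returns 1 for C1 and 2 for C2.
    """
    return 1 if output4[1] >= output4[0] else 2


def s2s_predict(t, references, predict_pair):
    """Pair test vector t with each reference (eq. (3)) and take a majority vote.

    predict_pair: any callable mapping a length-2d vector to 4 outputs,
    e.g. a trained s2s-MLP; here it is supplied by the caller.
    """
    votes = []
    for r in references:
        pair = np.concatenate([t, r])          # one instance [t, r_j]
        votes.append(decode_first_sample(predict_pair(pair)))
    counts = np.bincount(votes, minlength=3)   # counts[1] -> C1, counts[2] -> C2
    # np.argmax returns the first maximum, so a tie goes to C1 (an assumption);
    # an odd number of references avoids ties in the two-class case.
    decision = int(np.argmax(counts[1:]) + 1)
    return decision, votes


def toy_predict_pair(pair):
    """Stand-in scorer (NOT a trained network): C2 if the mean feature is positive."""
    d = pair.shape[0] // 2

    def c2_score(v):
        return 1.0 / (1.0 + np.exp(-v.mean()))

    p_first, p_second = c2_score(pair[:d]), c2_score(pair[d:])
    return np.array([p_first, 1.0 - p_first, p_second, 1.0 - p_second])


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    references = rng.normal(size=(5, 13))      # R = 5 reference vectors, d = 13
    test_vector = np.full(13, -1.0)
    print(s2s_predict(test_vector, references, toy_predict_pair))
```

Passing the scorer in as a callable keeps the voting logic independent of how the s2s-MLP itself is implemented.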

Note that our main intention for this task is not better feature selection, but to demonstrate the effectiveness of our approach, in particular for low-data scenarios.

The standard Berlin speech emotion database (EMO-DB) [18], consisting of 535 utterances corresponding to 7 different emotions, is considered for the task of emotion classification. Each utterance is represented by a 19-dimensional feature vector obtained by applying a feature selection algorithm from the WEKA toolkit [19] to the 384-dimensional utterance-level feature vector extracted using the openSMILE toolkit [20]. For two-class classification, we considered the two most confusing emotion pairs, i.e., (Neutral, Sad) and (Anger, Happy). The data for Speech/Music classification (60 speech and 60 music samples) is balanced and the data for Neutral/Sad classification (79 neutral and 62 sad utterances) is nearly balanced, whereas the Anger/Happy classification task has data imbalance, with anger forming the majority class (127 samples) and happy forming the minority class (71 samples). Therefore, we show the performance of s2sL on both balanced and imbalanced datasets.

Table 1: Accuracies (in %) for balanced data classification, by proportion of training data used.

| Task | Method | 1/4 | 2/4 | 3/4 | 4/4 |
|---|---|---|---|---|---|
| Speech/Music | MLP | 70.8 | 74.6 | 80.1 | 81.2 |
| Speech/Music | s2sL | 75.2 | 79.3 | 82.7 | 85.1 |
| Neutral/Sad | MLP | 86.3 | 88.0 | 90.5 | 91.1 |
| Neutral/Sad | s2sL | 90.4 | 91.2 | 92.1 | 92.9 |

Table 2: F1 for imbalanced data classification, by proportion of training data used. Note: EB is Eusboost and MM is MWMOTE.

| Task | Method | 1/4 | 2/4 | 3/4 | 4/4 |
|---|---|---|---|---|---|
| Anger/Happy | MLP | .41 | .49 | .53 | .56 |
| Anger/Happy | EB | .47 | .54 | .59 | .64 |
| Anger/Happy | MM | .48 | .55 | .61 | .66 |
| Anger/Happy | s2sL | .54 | .60 | .64 | .69 |

All experimental results are validated using 5-fold cross validation (80% of the data for training and 20% for testing in each fold). Further, to analyze the effectiveness of s2sL in low-resource scenarios, different proportions of the training data, within each fold, are considered to train the system.
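
The evaluation protocol just described (5-fold cross validation, with only a fraction of each fold's training portion used for training) can be sketched as below. This is a minimal sketch under assumptions: the generator name `five_fold_with_proportions` is hypothetical, the split is a plain random shuffle without stratification, and the reduced training set is taken as a prefix of the shuffled training indices; the text does not say how the subsets were actually drawn.

```python
import numpy as np


def five_fold_with_proportions(n_samples, proportions=(0.25, 0.5, 0.75, 1.0), seed=0):
    """Yield (fold, proportion, train_idx, test_idx) for every fold and proportion.

    Each fold holds out 20% of the data for testing; the remaining 80% is the
    full training portion, of which only the given proportion is kept.
    """
    rng = np.random.default_rng(seed)
    order = rng.permutation(n_samples)
    folds = np.array_split(order, 5)
    for k in range(5):
        test_idx = folds[k]
        train_idx = np.concatenate([folds[m] for m in range(5) if m != k])
        for p in proportions:
            # Assumption: keep the first p-fraction of the shuffled training indices.
            n_keep = int(round(p * len(train_idx)))
            yield k, p, train_idx[:n_keep], test_idx


if __name__ == "__main__":
    # 120 samples, as in the Speech/Music task.
    for k, p, train_idx, test_idx in five_fold_with_proportions(120):
        print(k, p, len(train_idx), len(test_idx))
```

The test portion of each fold is the same for all proportions, so results at different data proportions are compared on identical held-out samples.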

For this analysis, we considered 4 different proportions, i.e., (1/4)th, (2/4)th, (3/4)th and (4/4)th of the training data, to train the classifier. For instance, (2/4)th means considering only half of the original training data to train the classifier, and (4/4)th means considering the complete training data. 5-fold cross validation is used for all data proportions. Accuracy (in %) is used as the performance measure for the balanced data classification tasks (i.e., Speech/Music classification and Neutral/Sad emotion classification), whereas the F1 measure [21], which is preferred for imbalanced data, is used for the imbalanced data classification task (i.e., Anger/Happy emotion classification).

Table 1 shows the results obtained for the proposed s2sL approach in comparison to those of the MLP for the tasks of Speech/Music and Neutral/Sad classification, for different proportions of training data. The values in Table 1 are mean accuracies (in %) obtained by 5-fold cross validation. It can be observed from Table 1 that for both tasks, the s2sL method outperforms the MLP, especially in low-resource conditions. s2sL shows an absolute improvement in accuracy of 4.4% and 4.1% over the MLP for the Speech/Music and Neutral/Sad classification tasks, respectively, when (1/4)th of the original training data is used.

Table 2 shows the results (in terms of F1 values) obtained for the proposed s2sL approach in comparison to those of the MLP for Anger/Happy classification (the data imbalance problem). Here, the state-of-the-art methods Eusboost [22] and MWMOTE [23] are also considered for comparison. It can be observed from Table 2 that the s2sL method outperforms the MLP and also performs better than the Eusboost and MWMOTE techniques on imbalanced data (an absolute improvement of 0.03 in F1 value for s2sL compared to MWMOTE, when (4/4)th of the training data is considered).
In particular, at lower amounts of training data, s2sL outperforms all the other methods, illustrating its effectiveness even for low-resource data imbalance problems. The s2sL method shows an absolute improvement of 0.06 (0.54 − 0.48) in F1 value over the second best (0.48 for MWMOTE) when only (1/4)th of the training data is used.

4 Conclusions

In this paper, we introduced a novel s2s framework to effectively learn the class discriminative characteristics, even from low data resources. In this framework, more than one sample (here, two samples) is simultaneously considered to train the classifier. Further, this framework allows multiple instances of the same test sample to be generated, by considering preselected reference samples, to reach a more reliable decision. We illustrated the significance of our approach by providing experimental results for two different tasks, namely speech/music discrimination and emotion classification. Further, we showed that the s2s framework can also handle the low-resource data imbalance problem.

References

[1] Hinton, G., Deng, L., Yu, D., Dahl, G. E., Mohamed, A. R., Jaitly, N., Senior, A., Vanhoucke, V., Nguyen, P., Sainath, T. N. & Kingsbury, B. (2012) Deep neural networks for acoustic modeling in speech recognition: The shared views of four research groups. IEEE Signal Processing Magazine, pp. 82–97.

[2] Vinyals, O., Ravuri, S. V. & Povey, D. (2012) Revisiting recurrent neural networks for robust ASR. In Proc. IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), pp. 4085–4088.

[3] Lu, L., Zhang, X., Cho, K. & Renals, S. (2015) A study of the recurrent neural network encoder-decoder for large vocabulary speech recognition. In Proc. INTERSPEECH, pp. 3249–3253.

[4] Zhang, Y., Pezeshki, M., Brakel, P., Zhang, S., Laurent, C., Bengio, Y. & Courville, A. (2017) Towards end-to-end speech recognition with deep convolutional neural networks. arXiv preprint, arXiv:1701.02720.

[5] Han, K., Yu, D. & Tashev, I. (2014) Speech emotion recognition using deep neural network and extreme learning machine. In Proc. INTERSPEECH, pp. 223–227.

[6] Trigeorgis, G., Ringeval, F., Brueckner, R., Marchi, E., Nicolaou, M. A., Schuller, B. & Zafeiriou, S. (2016) Adieu features? End-to-end speech emotion recognition using a deep convolutional recurrent network. In Proc. ICASSP, pp. 5200–5204.

[7] Huang, C.-W. & Narayanan, S. S. (2016) Attention assisted discovery of sub-utterance structure in speech emotion recognition. In Proc. INTERSPEECH, pp. 1387–1391.

[8] Zhang, Z., Ringeval, F., Han, J., Deng, J., Marchi, E. & Schuller, B. (2016) Facing realism in spontaneous emotion recognition from speech: Feature enhancement by autoencoder with LSTM neural networks. In Proc. INTERSPEECH, pp. 3593–3597.

[9] Scheirer, E. D. & Slaney, M. (2003) Multi-feature speech/music discrimination system. U.S. Patent 6,570,991.

[10] Pikrakis, A. & Theodoridis, S. (2014) Speech-music discrimination: A deep learning perspective. In Proc. European Signal Processing Conference (EUSIPCO), pp. 616–620.

[11] Choi, K., Fazekas, G. & Sandler, M. (2016) Automatic tagging using deep convolutional neural networks. arXiv preprint, arXiv:1606.00298.

[12] Zazo Candil, R., Sainath, T. N., Simko, G. & Parada, C. (2016) Feature learning with raw-waveform CLDNNs for Voice Activity Detection. In Proc. INTERSPEECH, pp. 3668–3672.

[13] Tian, L., Moore, J. D. & Lai, C. (2015) Emotion recognition in spontaneous and acted dialogues. In Proc. International Conference on Affective Computing and Intelligent Interaction (ACII), pp. 698–704.

[14] Dumpala, S. H., Chakraborty, R. & Kopparapu, S. K. (2017) k-FFNN: A priori knowledge infused feed-forward neural networks. arXiv preprint, arXiv:1704.07055.

[15] Dumpala, S. H., Chakraborty, R. & Kopparapu, S. K. (2018) Knowledge-driven feed-forward neural network for audio affective content analysis. To appear in AAAI-2018 Workshop on Affective Content Analysis.

[16] Deng, J., Zhang, Z., Marchi, E. & Schuller, B. (2013) Sparse autoencoder-based feature transfer learning for speech emotion recognition. In Proc. Humaine Association Conference on Affective Computing and Intelligent Interaction (ACII), pp. 511–516.

[17] Tzanetakis, G. GTZAN Music-Speech. Available online at http://marsyas.info/download/datasets.

[18] Burkhardt, F., Paeschke, A., Rolfes, M., Sendlmeier, W. F. & Weiss, B. (2005) A database of German emotional speech. In Proc. INTERSPEECH, pp. 1517–1520.

[19] Hall, M., Frank, E., Holmes, G., Pfahringer, B., Reutemann, P. & Witten, I. H. (2009) The WEKA data mining software: an update. ACM SIGKDD Explorations Newsletter, 11(1), pp. 10–18.

[20] Eyben, F., Weninger, F., Gross, F. & Schuller, B. (2013) Recent developments in openSMILE, the Munich open-source multimedia feature extractor. In Proc. ACM International Conference on Multimedia, pp. 835–838.

[21] Maratea, A., Petrosino, A. & Manzo, M. (2014) Adjusted F-measure and kernel scaling for imbalanced data learning. Information Sciences, 257:331–341.

[22] Galar, M., Fernandez, A., Barrenechea, E. & Herrera, F. (2013) EUSBoost: Enhancing ensembles for highly imbalanced data-sets by evolutionary undersampling. Pattern Recognition, 46(12):3460–3471.

[23] Barua, S., Islam, M. M., Yao, X. & Murase, K. (2014) MWMOTE–majority weighted minority oversampling technique for imbalanced data set learning. IEEE Transactions on Knowledge and Data Engineering, 26(2):405–425.
