Multi-Dialect Speech Recognition With A Single Sequence-To-Sequence Model

Sequence-to-sequence models provide a simple and elegant solution for building speech recognition systems by folding separate components of a typical system, namely acoustic (AM), pronunciation (PM) and language (LM) models into a single neural netwo…

Authors: Bo Li, Tara N. Sainath, Khe Chai Sim

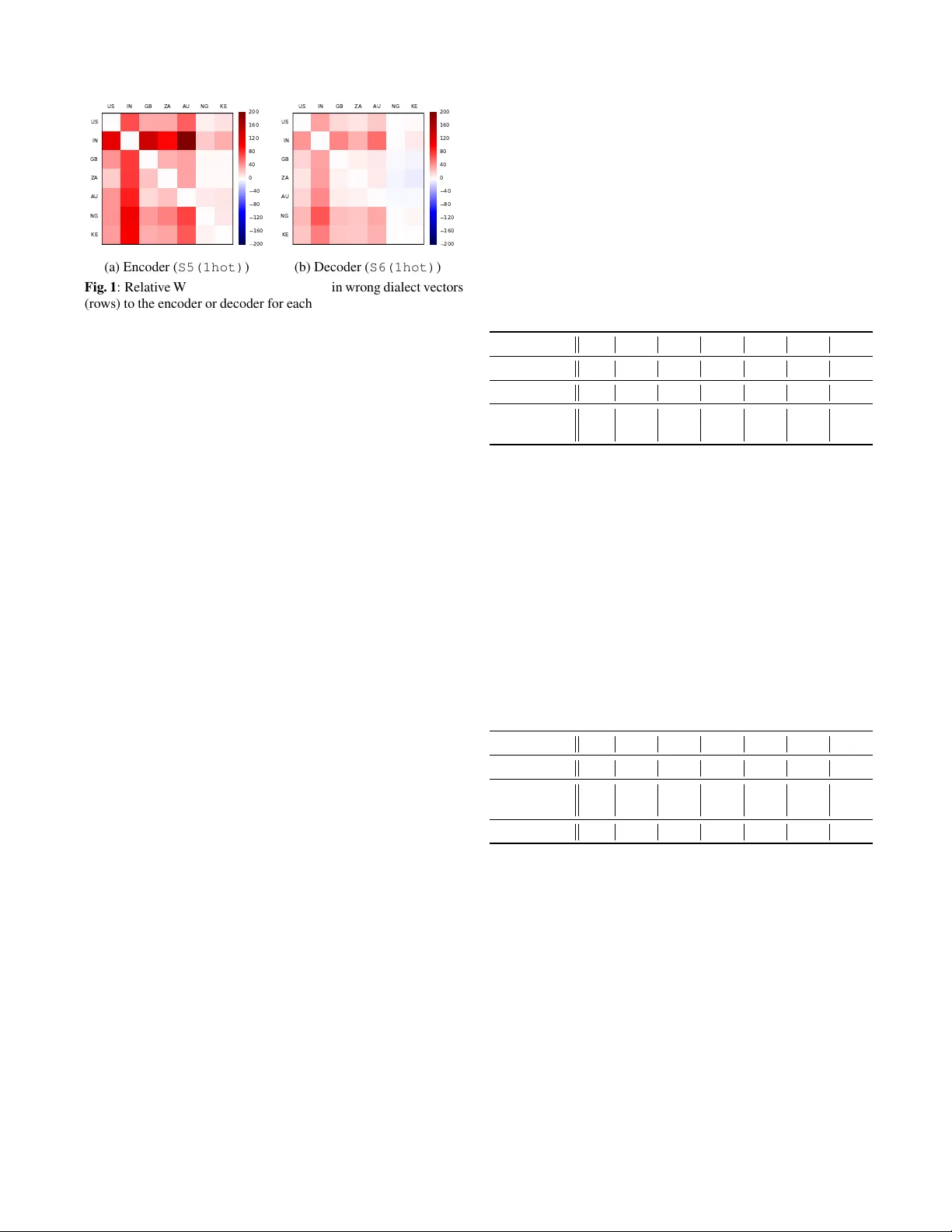

MUL TI-DIALECT SPEECH RECOGNITION WITH A SINGLE SEQUENCE-T O-SEQUENCE MODEL Bo Li, T ara N. Sainath, Khe Chai Sim, Mic hiel Bacchiani, Eugene W einstein, P atrick Nguyen, Zhifeng Chen, Y onghui W u, Kanishka Rao Google Inc., USA { boboli,tsainath,khechai,michiel,weinstein,drpng,zhifengc,yonghui,kanishkarao } @google.com ABSTRA CT Sequence-to-sequence models pro vide a simple and eleg ant solution for building speech recognition systems by folding separate com- ponents of a typical system, namely acoustic (AM), pronunciation (PM) and language (LM) models into a single neural network. In this w ork, we look at one such sequence-to-sequence model, namely listen, attend and spell (LAS) [1], and explore the possibility of train- ing a single model to serve different English dialects, which simpli- fies the process of training multi-dialect systems without the need for separate AM, PM and LMs for each dialect. W e show that simply pooling the data from all dialects into one LAS model falls behind the performance of a model fine-tuned on each dialect. W e then look at incorporating dialect-specific information into the model, both by modifying the training targets by inserting the dialect symbol at the end of the original grapheme sequence and also feeding a 1-hot rep- resentation of the dialect information into all layers of the model. Experimental results on seven English dialects show that our pro- posed system is ef fecti ve in modeling dialect v ariations within a sin- gle LAS model, outperforming a LAS model trained indi vidually on each of the sev en dialects by 3.1~16.5% relativ e. Index T erms — multi-dialect, sequence to sequence, adaptation 1. INTR ODUCTION Dialects are defined as variations of the same language, specific to geographical regions or social groups. Although they share many similarities, there are usually large differences at sev eral linguis- tic levels; amongst others: phonological, grammatical, orthographic (e.g., “color” vs. “colour”) and very often dif ferent vocab ularies. As a result, automatic speech recognition (ASR) systems trained or tuned for one specific dialect perform poorly when tested on another dialect of the same language [2]. In addition, systems simultane- ously trained on many dialects fail to generalize well for each indi- vidual dialect [3]. Ine vitably , multi-dialect languages pose a chal- lenge to ASR systems. If enough data exists for each dialect, a com- mon practice is to treat each dialect independently [2, 4, 5]. Alter - nativ ely , in cases where dialects are resource-scarce, these models are boosted with data from other dialects [3, 6]. In the past, there hav e been many attempts to build multi-dialect/language systems. The usual approach has been to define a common set of uni versal phone models [7, 8, 9] with appropriate parameter sharing [6] and train it on data from many languages with ev entual adaptation on the data from the language of interest [10, 11, 12, 13]. [11, 14] dev eloped similar neural network models with language indepen- dent feature extraction and language dependent phonetic classifiers. [12] further in vestigated a grapheme-based multi-dialect model with dialect-dependent phoneme recognition as secondary tasks. Adap- tation techniques such as MLLR and MAP are commonly used for Gaussian mixture model based systems [13]; but for neural network based models, adaptation by continued training on dialect-specific data works well [12]. W e believe it has been challenging to build a universal multi- dialect model for conv entional ASR systems because these models still require a separate pronunciation and language model (PM and LM) per dialect, which are trained independently from the multi- dialect acoustic model (AM). Therefore, if the AM predicts an in- correct set of sub-word units from the wrong dialect, errors get prop- agated to the PM and LM. Sequence-to-sequence models provide a simple and elegant so- lution to the ASR problem by learning and optimizing a single neural network for the AM, PM and LM [15, 16, 17, 18, 19, 20]. W e be- liev e such a model is very attractiv e to look at for building a single multi-dialect system specifically for this reason. T raining a multi- dialect sequence-to-sequence model is simple, as it requires simply pooling all the grapheme symbols together . In addition, the AM, PM and LM variations are jointly modeled across dialects [21]. The sim- plicity and joint optimization make it a good candidate for training multi-dialect systems. In this work, we adopt the attention-based sequence-to-sequence model, namely listen, attend and spell (LAS) [1], for multi-dialect modeling. It has shown good performance compared to other sequence-to-sequence models for single dialect tasks [20]. Our goal here is to build a single LAS model for sev en English dialects. W e start by simply pooling all the data together . F or English the grapheme set is shared across dialects, nothing needs to be modified for the output. Although this model giv es acceptable performance on each dialect, it falls behind the ones independently fine-tuned on each dialect. In the literature, adaptation methods [22, 23] are typically used to inte grate dialect-specific information into the system. W e hypoth- esize that by explicitly providing dialect information to the LAS model we should be able to bridge the gap between the dialect- independent and dialect-dependent models. Firstly , we use dialect information in the output by introducing an artificial token into the grapheme sequence [24]. The LAS model needs to learn both grapheme prediction and dialect classification. Secondly , we feed the dialect information as input vectors to the system. It can be either used as an extra information vector appended to the inputs of each layer or as weight coefficients for cluster adaptiv e training (CA T) [25]. Our experimental results show that using dialect information can ele vate the performance of multi-dialect LAS system to out- perform dialect-dependent ones. The proposed system has several attractiv e benefits: 1) simplicity: no changes are required for the model and scaling to more dialects is trivial - by simply adding more data; 2) improvement for low-resource dialects: in the multi-dialect system, the majority of the parameters are implicitly shared by all the dialects, which forces the model to generalize across dialects during training. It is observed that the recognition quality on the low resource dialect is significantly improv ed. 2. MUL TI-DIALECT LAS MODEL The LAS [1] model consists of an encoder (akin to an acoustic model), a decoder (like a language model) and an attention model which learns an alignment between the encoder and decoder outputs. The encoder is normally a stack of recurrent layers; in this study we use 5 layers of unidirectional long short term memory (LSTM) [26]. The decoder is a neural language model, consisting of 2 LSTM lay- ers. The attention module takes in the decoder’ s lowest layer’ s state vector from the previous time step and estimates attention weights for each frame in the encoder output in order to compute a single context vector . The context vector is then input into the decoder network, along with the pre viously predicted label from the decoder to generate logits from the final layer in the decoder . Finally , these logits are input into a softmax layer , which outputs a probability distribution over the label in ventory (i.e., graphemes), conditioned on all previous predictions. In con ventional LAS models, the label in ventory is augmented with two special symbols, , which is input to the decoder at the first time-step, and , which indi- cates the end of a sentence. During inference, the label prediction process terminates when the label is generated. The baseline multi-dialect LAS system is built by simply pool- ing all the data together . The output targets consist of 75 graphemes for English, which are shared across dialects. In the following sec- tion, we describe how we can improve the baseline multi-dialect LAS model by providing dialect information. W e assume this in- formation is kno wn in adv ance or can be easily obtained [5]. W e believ e explicitly pro viding such dialect information would be help- ful to improve the performance of the multi-dialect LAS model. W e discuss three ways of passing the dialect information into the LAS model, namely feeding it as output targets, as input vectors, or di- rectly factoring the encoder layers based on the dialect. 2.1. Dialect Information as Output T argets A common way to make the LAS model aw are of the dialect is through multi-task learning [27]. W e can add an extra dialect clas- sification cost to the training and regularize the model to learn hid- den representations that are effecti ve for both dialect classification and grapheme prediction. Ho wev er , this requires having two sepa- rate objectiv e functions that are weighted, and deciding the optimal weight for each task is a parameter that needs to be swept [27]. A simpler approach, similar to [24], is to expand the label in- ventory of our LAS model to include a list of special symbols, each corresponding to a dialect. For example, when including the British English, we add the symbol into the label in ventory . In [24], the special symbol is added to the beginning of the target la- bel sequence. For example, for a British accented speech utterance of “hello world”, the con ventional LAS model uses “ h e l l o w o r l d ” as the output targets; in the new setup the output target is “ h e l l o w o r l d ”. The model needs to figure out which dialect the input speech is first before making any grapheme prediction. In LAS, each label prediction is dependent on the history . Adding the dialect symbol at the beginning, we implicitly incur the dependency of the grapheme prediction on the dialect classification. When the model makes errors in dialect classification, it may hurt the grapheme recognition performance. In this study , we assume the correct dialect information is always av ailable. Hence, we explore inserting the dialect symbol at the end of the label sequence as well. For the e xample utterance, the target sequence no w become “ h e l l o w o r l d ”. By inserting the dialect symbol at the end, the model still needs to learn a shared representation but avoids the unnecessary dependency and is less sensitiv e to dialect classification errors. 2.2. Dialect Information as Input V ectors Another way of providing dialect information is to pass this informa- tion as an additional feature [28]. T o conv ert the categorical dialect information into a real-valued feature vector , we in vestigate the use of 1-hot vectors, whose v alues are all ‘0’ e xcept for one ‘1’ at the in- dex corresponding to the giv en dialect, and data-driven embedding vectors whose v alues are learned during training. These dialect vec- tors can be appended to different layers in the LAS model. At each layer the dialect vectors are linearly transformed by the weight ma- trices and added to the original hidden activ ations before the nonlin- earity . This effecti vely enables the model to learn dialect-dependent biases. W e are mainly interested in two configurations: 1) adding it to the encoder layers, which ef fecti vely pro vides dialect information to help model the acoustic variations across dialects; and 2) append- ing it to the decoder layers, which models dialect-specific language model variations. Ultimately , we can also combine the two by feed- ing dialect vectors into both the encoder and the decoder . 2.3. Dialect Information as Cluster Coefficients Another approach to modeling variations in the speech signal (for example dialects) which has been explored for con ventional models is cluster adaptive training (CA T) [25]. W e can treat each dialect as a separate cluster and use 1-hot dialect vectors to switch clusters; alternativ ely , we can use data-dri ven dialect embedding vectors as weights to combine clusters. A drawback of the CA T approach is that it adds extra network layers, which typically adds more param- eters to the LAS model. In this study , our goal is to maintain sim- plicity of the LAS model and limit the increase in model parameters. Thus, we only tested a simple CA T setup for the encoder of the LAS model to compare with the input vector approaches discussed in the previous sections. Specifically , we use a few clusters to compensate activ ation offsets of the 4th LSTM layer based on the shared repre- sentation learned by the 1st LSTM layer, to account for the dialect differences. For each cluster , a single layer 128D LSTM is used with output projection to match the dimension of the 4th LSTM layer . The weighted sum of all the CA T bases using dialect vectors as interpo- lation weights is added back to the 4th LSTM layer’ s outputs, which are then fed to the last encoder layer . 3. EXPERIMENT AL DET AILS Our experiments are conducted on about 40K hours of noisy train- ing data consisting of 35M English utterances. The training utter- ances are anonymized and hand-transcribed, and are representative of Google’ s voice search traffic. It includes speech from 7 dif fer- ent dialects, namely America (US), India (IN), Britain (GB), South Africa (ZA), Australia (A U), Nigeria & Ghana (NG) and Ken ya (KE). The amount of dialect-specific data can be found in T able 1. The training data is created by artificially corrupting clean utterances using a room simulator, adding varying degrees of noise and rever - beration such that the overall SNR is between 0 and 20dB [29]. The noise sources are from Y ouTube and daily life noisy environmen- tal recordings. W e report results on dialect-specific test sets, each contains roughly 10K anonymized, hand-transcribed utterances from Google’ s voice search traffic without ov erlapping with the training data. This amounts to roughly 11 hours of test data per dialect. All experiments use 80-dimensional log-mel features, computed with a 25ms window and shifted ev ery 10ms. Similar to [30, 31], at the current frame, t , these features are stacked with 3 frames to the left and do wnsampled to a 30ms frame rate. In the baseline LAS model, the encoder network architecture consists of 5 unidirectional 1024D LSTM [26] layers. Additiv e attention [1] is used for all experiments. The decoder network is a 2-layer 1024D unidirectional LSTM. All networks are trained to predict graphemes, which have 75 symbols in total. The model has a total number of 60.6M parameters. All networks are trained with the cross-entropy criterion, using asyn- chronous stochastic gradient descent (ASGD) optimization [32], in T ensorFlow [33]. The training terminates when the change of WERs on a de v set is less than a gi ven threshold for certain number of steps. T able 1 : Number of utterances per dialect for training (M for mil- lion) and testing (K for thousand). Dialect US IN GB ZA A U NG KE T rain(M) 13.7 8.6 4.8 2.4 2.4 2.1 1.4 T est(K) 12.9 14.5 11.1 11.7 11.7 9.8 9.2 4. RESUL TS 4.1. Pooling All Data Firstly , we build a single grapheme LAS model on all the data to- gether ( S1 in T able 2). For comparison, we also b uild a set of dialect- dependent models. Due to the large v ariations in the amount of data we have for each dialect, a lot of tuning is required to find the best model setup from scratch for each dialect. For the sake of simplicity , we take the joint model as the starting point and retraining the same architecture for each dialect independently ( S2 in T able 2). Instead of updating only the output layers [11, 14], we find reestimating all the parameters work better . T o compensate for the extra training time the fine-tuning brings in, we also keep the baseline model train- ing for similar extra number of steps; we do not find to improve the WER. Comparing the dialect-independent model ( S1 ) with the dialect-dependent ones ( S2 ), simply pooling the data together gives acceptable recognition performance, but having a language-specific model by fine-tuning still achiev es better performance. T able 2 : WER (%) of dialect-independent ( S1 ) and dialect- dependent ( S2 ) LAS models. Dialect US IN GB ZA A U NG KE S1 10.6 18.3 12.9 12.7 12.8 33.4 19.2 S2 9.7 16.2 12.7 11.0 12.1 33.4 19.0 4.2. Using Dialect-Specific Information Our next set of experiments look at using dialect information to see if we can hav e a joint multi-dialect model improv e performance ov er the dialect-specific models ( S2 ) in T able 2. 4.2.1. Results using Dialect Information as Output T ar gets W e first add the dialect information into the target sequence. T wo se- tups are explored, namely adding at the beginning ( S3 ) and adding at the end ( S4 ). The results are presented in T able 3. Inserting the dialect symbol at the end of the label sequence is much better than at the beginning, which eliminates the dependenc y of grapheme pre- diction on the erroneous dialect classification. S4 is more preferable and outperforms the dialect-dependent model ( S2 ) on all the dialects except for IN and ZA. T able 3 : WER (%) of inserting dialect information at the beginning ( S3 ) or at the end ( S4 ) of the grapheme sequence. Dialect US IN GB ZA AU NG KE S2 9.7 16.2 12.7 11.0 12.1 33.4 19.0 S3 9.9 16.6 12.3 11.6 12.2 33.6 18.7 S4 9.4 16.5 11.6 11.0 11.9 32.0 17.9 4.2.2. Results using Dialect Information as Input V ectors T able 4 : WER (%) of feeding the dialect information into the LAS model’ s encoder ( S5 ), decoder ( S6 ) and both ( S7 ). The dialect in- formation is con verted into an 8D vector using either 1-hot represen- tation ( 1hot ) or learned embedding ( emb ). Dialect US IN GB ZA A U NG KE S2 9.7 16.2 12.7 11.0 12.1 33.4 19.0 S5(1hot) 9.6 16.4 11.8 10.6 10.7 31.6 18.1 S5(emb) 9.6 16.7 12.0 10.6 10.8 32.5 18.5 S6(1hot) 9.4 16.2 11.3 10.8 10.9 32.8 18.0 S6(emb) 9.4 16.2 11.2 10.6 11.1 32.9 18.0 S7(1hot) 9.1 15.7 11.5 10.0 10.1 31.3 17.4 Next we experiment with directly feeding the dialect informa- tion into different layers of the LAS model. The dialect information is con verted into an 8D vector using either 1-hot representation or an embedding v ector learned during training. This vector is then ap- pended to both the inputs and hidden activations. W e compare the usefulness of this dialect vector to the LAS encoder and decoder . From T able 4, feeding it to encoder ( S5 ) gi ves gains on dialects with less data (namely GB, ZA, A U, NG and KE) and has comparable performance on US, but is still a bit worse on IN compared to the fine-tuned dialect-dependent models ( S2 ). Similarly , we pass the dialect vector (using both 1-hot and learned embedding) to the de- coder of LAS ( S6 ). T able 4 shows that in this way the single multi- dialect LAS model outperforms the individually fine-tuned dialect- dependent models on all dialects except for IN, for which it obtains the same performance. Comparing the use of 1-hot representation and learned embed- ding, we do not observe big differences for both the encoder and decoder . It is most likely the small dimensionality of the vectors used ( i.e. 8D) that is insufficient to suggest any preference between the 1-hot representation and the learned embedding. In future, when scaling up to more dialects/languages, using embedding vectors in- stead of 1-hot to represent a lar ger set of dialects/languages could be a more economical way . Feeding dialect vectors into different layers effecti vely enables the model to explicitly learn dialect-dependent biases. For the en- US IN GB ZA AU NG KE US IN GB ZA AU NG KE 200 160 120 80 40 0 40 80 120 160 200 (a) Encoder ( S5(1hot) ) US IN GB ZA AU NG KE US IN GB ZA AU NG KE 200 160 120 80 40 0 40 80 120 160 200 (b) Decoder ( S6(1hot) ) Fig. 1 : Relativ e WER changes when feeding in wrong dialect vectors (rows) to the encoder or decoder for each test set (columns). The red and blue colors indicate the relativ e increase and decrease of WERs respectiv ely and the white color means no change in WERs. coder , these biases would help capture dialect-specific acoustic vari- ations; while in the decoder , they can potentially address the lan- guage model v ariations. Experimental results suggest that these sim- ple biases indeed help the multi-dialect LAS model. T o understand their effects, we test system S5(1hot) and S6(1hot) with mis- matched dialect vector on each test set. The relativ e WER changes are depicted in Figure 1. Each row represents the dialect vector fed into the model and each column corresponds to a dialect-specific test set. The white diagonal blocks are the “correct” setups, where we feed in the correct dialect vector on each test set. The red and blue colors represent the relative increase and decrease of WERs respec- tiv ely . The darker the color is, the larger the change is. Comparing the effect on encoder and decoder, wrong dialect vectors degrades more on encoders, suggesting more acoustic v ariations across di- alects than language model dif ferences. Across dif ferent dialects, IN seems to have the most distinguishable characteristics. NG and KE, the two smallest dialects in this study , benefit more from the sharing of parameters as the performance varies little with different dialect vectors. This suggests the proposed model is capable of handling the unbalanced dialect data properly , learning strong dialect-dependent biases when there’ s enough data and sticking to the shared model otherwise. Another interesting observation is that, for these two di- alects, feeding dialect vectors from ZA is slightly better than using their own. This suggests in future pooling similar dialects with less data may giv e better performance. One evidence that the model successfully learns dialect-specific lexicons is “color” in US vs. “colour” in GB. On the GB test set, the system without any explicit dialect information ( S1 ) and the one feeding it only to encoder layers ( S5 ) generate recognition hypothe- ses with both “color” and “colour” although “color” appears much less frequently . Howe ver , for the model S6 , where the dialect in- formation is directly fed into decoder layers, only “colour” appears; moreov er , if we feed in the dialect vector for US to S6 on the GB test set, the model successfully switches all the “colour” predictions to “color”. Similar observations are found for “labor” vs. “labour”, “center” vs. “centre” etc. Next, we feed the 1-hot dialect vector into all the layers of the LAS model ( S7 ). Experimental results (T able 4) show that this sys- tem outperforms the dialect-dependent models on all the test sets, with the largest gains on A U (16.5% relativ e WER reduction). 4.2.3. Results using Information as Cluster Coefficients In the literature, instead of directly feeding the dialect vector as in- puts to learn a simple bias, it can also be used as a cluster coeffi- cient vector to combine multiple clusters and learn more complex mapping functions. For comparisons, we implement a simple CA T system ( S8 ) only for the encoder . Experimental results in T able 5 show that unlike directly feeding dialect vectors as inputs, CA T fa- vors more learned embeddings ( S8(emb) ), which encourages more parameter sharing across dialects. In addition, comparing this to directly using dialect vectors ( S5(1hot) ) for the encoder, CA T ( S8(emb) ) is more effecti ve on US and IN and similar on other dialects. Howev er , in terms of model size, comparing to the baseline model ( S1 ), S5(1hot) only increases by 160K parameters, while S8(emb) adds around 3M extra. W e will lea ve a more thorough study of CA T for future work. T able 5 : WER (%) of a CA T encoder LAS system ( S8 ) with 1-hot ( 1hot ) and learned embedding ( emb ) dialect vector . Dialect US IN GB ZA A U NG KE S2 9.7 16.2 12.7 11.0 12.1 33.4 19.0 S5(1hot) 9.6 16.4 11.8 10.6 10.7 31.6 18.1 S8(1hot) 9.9 17.0 12.1 11.0 11.6 32.5 18.3 S8(emb) 9.4 16.1 11.7 10.6 10.6 32.9 18.1 4.3. Combining Adaptation Strategies Lastly , we integrate the joint dialect identification ( S4 ) and the use of dialect vectors ( S7(1hot) ) into a single system ( S9 ). The per- formance of this combined multi-dialect LAS system is presented in T able 6. It works much better than doing joint dialect identifi- cation ( S4 ) alone, but has similar performance to the one uses di- alect vectors ( S7(1hot) ). This is because when feeding in dialect vectors into the LAS model, especially in the decoder layers, the model is already doing a very good job in predicting the dialect. Specifically , the dialect prediction error for S9 on the dev set dur- ing training is less than 0.001% compared to S4 ’ s 5%. Overall, our best multi-dialect system ( S7(1hot) ) outperforms dialect-specific models and achiev es 3.1~16.5% WER reductions across dialects. T able 6 : WER (%) of the combined multi-dialect LAS system ( S9 ). Dialect US IN GB ZA A U NG KE S2 9.7 16.2 12.7 11.0 12.1 33.4 19.0 S4 9.4 16.5 11.6 11.0 11.9 32.0 17.9 S7(1hot) 9.1 15.7 11.5 10.0 10.1 31.3 17.4 S9 9.1 16.0 11.4 9.9 10.3 31.4 17.5 5. CONCLUSIONS In this study , we explored a multi-dialect end-to-end LAS system trained on 7 English dialects. The model utilizes a 1-hot dialect vec- tor at each layer of the LAS encoder and decoder to learn dialect- specific biases. It is optimized to predict the grapheme sequence appended with the dialect name as the last symbol, which effectiv ely forces the model to learn shared hidden representations that are suit- able for both grapheme prediction and dialect classification. Experi- mental results show that feeding a 1-hot dialect vector is very ef fec- tiv e in boosting the performance of a multi-dialect LAS system, and allows it to outperform a LAS model trained on each individual lan- guage. Furthermore, we also find that using CA T could potentially be more powerful in modeling dialect variations though at a cost of increased parameters, which will be addressed in future work. 6. REFERENCES [1] W . Chan, N. Jaitly , Q. V . Le, and O. V inyals, “Listen, Attend and Spell, ” CoRR , v ol. abs/1508.01211, 2015. [2] F adi Biadsy , Pedro J Moreno, and Martin Jansche, “Google’ s cross-dialect Arabic v oice search, ” in Pr oc. ICASSP . IEEE, 2012. [3] Mohamed Elfeky , Meysam Bastani, Xavier V elez, Pedro Moreno, and Austin W aters, “T o wards acoustic model unifi- cation across dialects, ” in Pr oc. SLT . IEEE, 2016. [4] Dirk V an Compernolle, “Recognizing speech of goats, w olves, sheep and non-natives, ” Speech Communication , vol. 35, no. 1, pp. 71–79, 2001. [5] Mohamed Elfeky , Pedro Moreno, and V ictor Soto, “Multi- dialectical languages effect on speech recognition: T oo much choice can hurt, ” in Pr oc. ICNLSP , 2015. [6] Hui Lin, Li Deng, Dong Y u, Y i-fan Gong, Alex Acero, and Chin-Hui Lee, “A study on multilingual acoustic modeling for large v ocabulary ASR, ” in Pr oc. ICASSP . IEEE, 2009. [7] Zhirong W ang, Umut T opkara, T anja Schultz, and Alex W aibel, “T owards universal speech recognition, ” in Proc. ICMI . IEEE, 2002. [8] Hui Lin, Li Deng, Jasha Droppo, Dong Y u, and Alex Acero, “Learning methods in multilingual speech recognition, ” Pr oc. NIPS , 2008. [9] Ngoc Thang V u, David Imseng, Daniel Pove y , Petr Motlicek, T anja Schultz, and Herv ´ e Bourlard, “Multilingual deep neural network based acoustic modeling for rapid language adapta- tion, ” in Pr oc. ICASSP . IEEE, 2014. [10] Samuel Thomas, Sriram Ganapathy , and Hynek Hermansky , “Cross-lingual and multi-stream posterior features for low re- source L VCSR systems, ” in Pr oc. INTERSPEECH , 2010. [11] Geor g Heigold, V incent V anhoucke, Alan Senior , Patrick Nguyen, M Ranzato, Matthieu De vin, and Jeffre y Dean, “Mul- tilingual acoustic models using distributed deep neural net- works, ” in Proc. ICASSP . IEEE, 2013. [12] Kanishka Rao and Has ¸ im Sak, “Multi-accent speech recog- nition with hierarchical grapheme based models, ” in Pr oc. ICASSP . IEEE, 2017. [13] W illiam Byrne, Peter Beyerlein, Juan M Huerta, Sanjee v Khu- danpur , Bhaskara Marthi, John Morgan, Nino Peterek, Joe Pi- cone, Dimitra V ergyri, and T W ang, “T owards language inde- pendent acoustic modeling, ” in Pr oc. ICASSP . IEEE, 2000. [14] Arnab Ghoshal, Pawel Swietojanski, and Stev e Renals, “Mul- tilingual training of deep neural networks, ” in Proc. ICASSP . IEEE, 2013. [15] Ale x Graves, “Sequence transduction with recurrent neural networks, ” , 2012. [16] Ale x Graves and Navdeep Jaitly , “T o wards end-to-end speech recognition with recurrent neural networks, ” in Pr oc. ICML , 2014. [17] Jan K Chorowski, Dzmitry Bahdanau, Dmitriy Serdyuk, Kyungh yun Cho, and Y oshua Bengio, “Attention-based mod- els for speech recognition, ” in Pr oc. NIPS , 2015. [18] Y u Zhang, W illiam Chan, and Navdeep Jaitly , “V ery deep con volutional networks for end-to-end speech recognition, ” in Pr oc. ICASSP . IEEE, 2017. [19] Liang Lu, Xingxing Zhang, and Ste ve Renais, “On training the recurrent neural network encoder -decoder for lar ge vocab ulary end-to-end speech recognition, ” in Pr oc. ICASSP . IEEE, 2016. [20] Rohit Prabhavalkar , Kanishka Rao, T ara N Sainath, Bo Li, Leif Johnson, and Navdeep Jaitly , “A Comparison of Sequence- to-Sequence Models for Speech Recognition, ” in Proc. Inter- speech , 2017. [21] Stephan Kanthak and Hermann Ney , “Multilingual acoustic modeling using graphemes, ” in Pr oc. INTERSPEECH . ISCA, 2003. [22] V assilios Diakoloukas, V assilios Digalakis, Leonardo Neumeyer , and Jaan Kaja, “Development of dialect-specific speech recognizers using adaptation methods, ” in Pr oc. ICASSP . IEEE, 1997. [23] Y an Huang, Dong Y u, Chaojun Liu, and Y ifan Gong, “Multi- accent deep neural network acoustic model with accent- specific top layer using the KLD-regularized model adapta- tion, ” in Pr oc. INTERSPEECH , 2014. [24] Melvin Johnson, Mike Schuster, Quoc V Le, Maxim Krikun, Y onghui W u, Zhifeng Chen, Nikhil Thorat, Fernanda V i ´ egas, Martin W attenberg, Greg Corrado, et al., “Google’ s multi- lingual neural machine translation system: enabling zero-shot translation, ” , 2016. [25] T ian T an, Y anmin Qian, and Kai Y u, “Cluster adapti v e training for deep neural network based acoustic model, ” IEEE/ACM T ransactions on Audio, Speech, and Language Processing , vol. 24, no. 3, pp. 459–468, 2016. [26] Sepp Hochreiter and J ¨ urgen Schmidhuber , “Long short-term memory, ” Neur al computation , vol. 9, no. 8, pp. 1735–1780, 1997. [27] Michael L Seltzer and Jasha Droppo, “Multi-task learning in deep neural networks for improved phoneme recognition, ” in Pr oc. ICASSP . IEEE, 2013. [28] Ossama Abdel-Hamid and Hui Jiang, “Fast speaker adaptation of hybrid NN/HMM model for speech recognition based on discriminativ e learning of speaker code, ” in Proc. ICASSP . IEEE, 2013. [29] Chanw oo Kim, Anan ya Misra, Kean Chin, Thad Hughes, Arun Narayanan, T ara Sainath, and Michiel Bacchiani, “Gener - ation of large-scale simulated utterances in virtual rooms to train deep-neural networks for far -field speech recognition in Google Home, ” in Pr oc. INTERSPEECH . ISCA, 2017. [30] Has ¸ im Sak, Andrew Senior, Kanishka Rao, and Franc ¸ oise Bea- ufays, “Fast and accurate recurrent neural network acoustic models for speech recognition, ” , 2015. [31] Golan Pundak and T ara N Sainath, “Lower Frame Rate Neural Network Acoustic Models, ” in Proc. INTERSPEECH , 2016. [32] Jef frey Dean, Greg Corrado, Rajat Monga, Kai Chen, Matthieu Devin, Mark Mao, Andre w Senior , Paul Tuck er , K e Y ang, Quoc V Le, et al., “Large scale distrib uted deep networks, ” in Pr oc. NIPS , 2012. [33] Mart ´ ın Abadi, Ashish Agarwal, P aul Barham, Eugene Brevdo, Zhifeng Chen, Craig Citro, Greg S Corrado, Andy Da vis, Jeffre y Dean, Matthieu Devin, et al., “T ensorflo w: Large- scale machine learning on heterogeneous distrib uted systems, ” arXiv:1603.04467 , 2016.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment