Precision Scaling of Neural Networks for Efficient Audio Processing

While deep neural networks have shown powerful performance in many audio applications, their large computation and memory demand has been a challenge for real-time processing. In this paper, we study the impact of scaling the precision of neural netw…

Authors: Jong Hwan Ko, Josh Fromm, Matthai Philipose

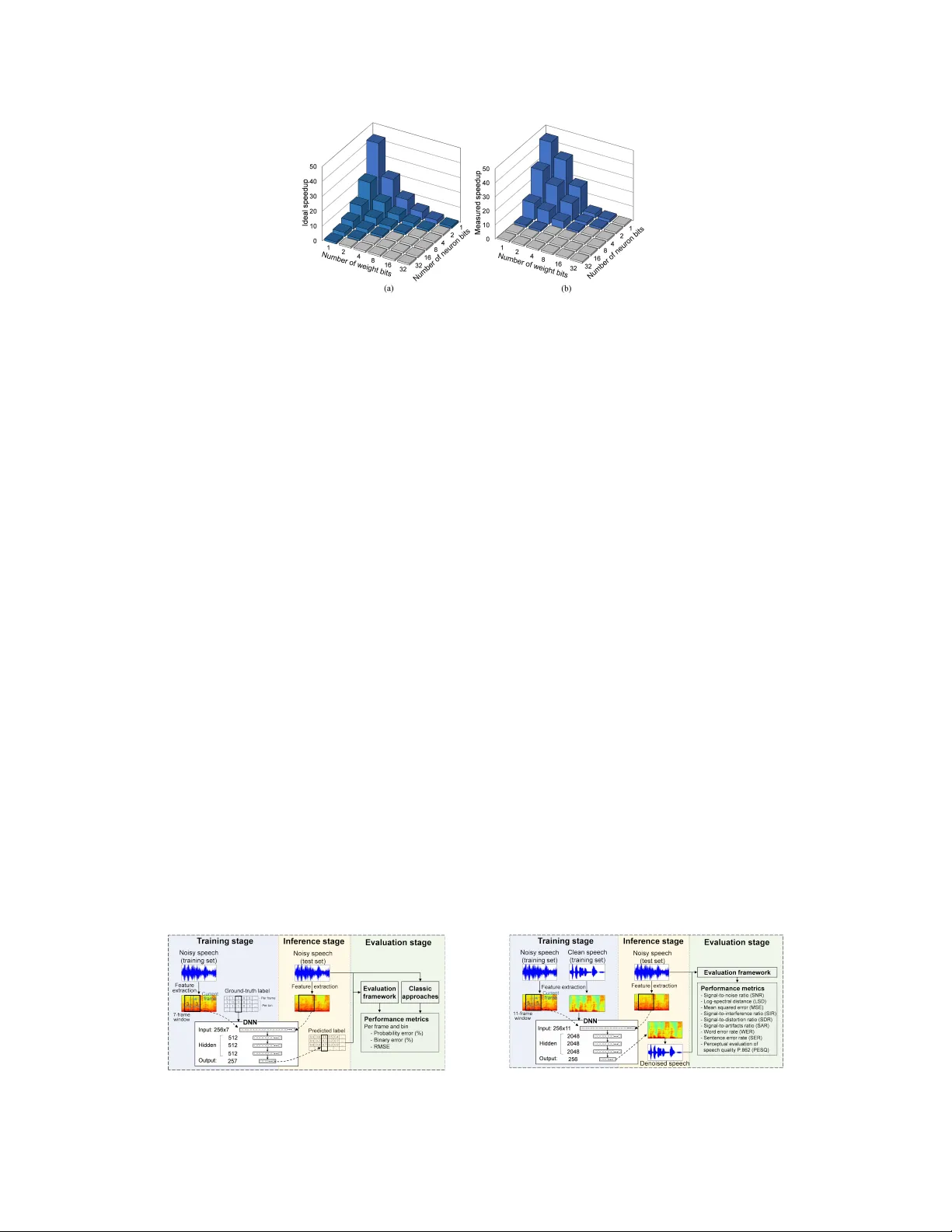

Pr ecision Scaling of Neural Networks f or Efficient A udio Pr ocessing Jong Hwan K o School of Electrical and Computer Engineering Georgia Institue of T echnology jonghwan.ko@gatech.edu Josh Fr omm Department of Electrical Engineering Univ ersity of W ashington jwfromm@uw.edu Matthai Philipose, Ivan T ashe v , and Shuayb Zarar Microsoft AI and Research {matthaip, ivantash, shuayb}@microsoft.com Abstract While deep neural networks ha ve sho wn powerful performance in many audio applications, their large computation and memory demand has been a challenge for real-time processing. In this paper, we study the impact of scaling the precision of neural networks on the performance of two common audio processing tasks, namely , voice-acti vity detection and single-channel speech enhancement. W e determine the optimal pair of weight/neuron bit precision by exploring its impact on both the performance and processing time. Through experiments conducted with real user data, we demonstrate that deep neural networks that use lower bit precision significantly reduce the processing time (up to 30x). Howe ver , their performance impact is lo w (< 3.14%) only in the case of classification tasks such as those present in voice acti vity detection. 1 Introduction V oice acti vity detection (V AD) and speech enhancement are critical front-end components of audio processing systems, as the y enable the rest of the system to process only the speech se gments of input audio samples with improved quality [ 1 ]. W ith the rapid de velopment of deep-learning technologies, V AD and speech enhancement approaches based on deep neural networks (DNNs) hav e sho wn powerful performance highly competiti ve to con ventional methods [ 2 , 3 , 4 ]. Howe ver , DNNs are inherently complex with high computation and memory demand [ 5 ], which is a critical challenge in real-time speech applications. For example, e ven a simple 3-layer DNN for speech enhancement requires 28 MOPs/frame and 56 MB of memory , as shown in column 3 of T able 1. T able 1: Computation/memory demand and performance of DNNs with baseline/reduced bit width. The processing time was measured on a CNTK frame work [6] with an Intel CPU. T as k W eigh t/neuron bit width MOPs/frame Memory (MB) Processing time /frame (ms ) Performance V oice activity detection 32/32 [2] 3 6.0 138 8.20% * 1/2 [This wo rk] 0.14 (21 × ↓) 0.19 (32 × ↓) 4.6 (30 × ↓) 1 1.34% * Speech enhancement 32/32 [3] 28 56 1,288 24.04 † 1/2 [This wo rk] 1.3 (21 × ↓) 1.75 (32 × ↓) 43.9 (30 × ↓) 10.33 † * V AD error , † SNR improvement 31st Conference on Neural Information Processing Systems (NIPS 2017), Long Beach, CA, USA. A recently proposed method for reducing the computation and memory demand is a precision scaling technique that represents the weights and/or neurons of the network with reduced number of bits [ 7 ]. While several studies ha ve sho wn effecti ve application of binarized (1-bit) networks in image classification tasks [ 8 , 9 ], to the best of our kno wledge, no work has been done to analyze the ef fect of v arious bit-width pairs of weights and neurons on the processing time and the performance of audio processing tasks like V AD and single-channel speech enhancement. In this paper , we present the design of efficient deep neural networks for V AD and speech enhancement that scales the precision of data representation within the neural network. T o minimize the bit- quantization error, we use a bit-allocation scheme based on the global distribution of the weight/neuron values. The optimal pair of weight/neuron bit precision is determined by exploring the impact of bit widths on both the performance and the processing time. Our best results show that the DNN for V AD with 1-bit weights and 2-bit neurons (W1/N2) reduces the processing time by 30 × , providing 3 . 7 × lower processing time and 9.54% lower error rate than a state-of-the-art W ebR TC V AD [ 10 ]. For speech enhancement, the DNN with W1/N2 bit precision enhances SNR (signal-to-noise ratio) by 10.33 with 30 × smaller processing time. 2 Precision Scaling of Deep Neural Netw orks While the rounding scheme is commonly used for precision scaling [ 11 ], it can result in lar ge quantization error as it does not consider global distribution of the values. In this work, we use a precision scaling method based on residual error mean binarization [ 12 ], in which each bit assignment is associated with the corresponding approximate v alue determined by the distrib ution of the original values. As illustrated in Figure 1(a), the first representation bit is assigned deterministically based on their sign, and the approximate value for each bit assignment is computed by adding/subtracting the av erage distance from the reference value (0 in the first bit assignment). Each approximate value becomes the reference of each bit se gment in the next bit assignment step. This approach allocates the same number of values in each bit assignment bin to minimize the quantization error . W e estimate the ideal inference speedup due to the reduced bit precision by counting the number of operations in each bit-precision case [see Figure 1(b)]. In the regular 32-bit netw ork, we need two operations (32-bit multiplication and accumulation) per one pair of input feature and weight element. When the network has 1-bit neurons and weights, multiplication can be replaced with XNOR and bit count operations, which can be performed with 64 elements per c ycle. When the network has 2 or more bit neurons and weights, we need to perform the three operations for all combinations of the bits. Therefore, the ideal speedup is computed as S peedup = max 1 , 128 3 × weig ht bit w idth × neur on bit w idth ! . W e hav e implemented our precision scaling methodology within the CNTK frame work [ 6 ], which provides optimized CPU-implementations for variable bit precision DNN layers. Figure 2 shows the ideal speedup and the actual speedup measured on an Intel processor . The measured speedup is similar to or e ven higher than the ideal v alues because of the benefits of loading the lo w-precision (a) Example bit allocation (b) ( T op ) 32-bit, ( Bottom ) 1-bit network. Figure 1: The approach of extreme precision scaling or binarization of neural networks that is distribution sensiti ve. 2 Figure 2: Speedup due to reduced bit precision of neurons and weights: (a) Ideal and (b) measured speedup. Blue bars indicate speedup > 1 and gray bars indicate speedup = 1. weights, as the bottleneck of the CNTK matrix multiplication is memory access. The figure also indicates that reducing weight bits leads to higher speedup than reducing neuron bits since the weights can be pre-quantized, making their memory loads very ef ficient. 3 Experimental Framework Dataset: W e created 750/150/150 files of training/v alidation/test datasets by con volving clean speech with room impulse responses and adding pre-recorded noise at dif ferent SNRs and distances from the microphone. Each clean speech file included 10 sample utterances that were collected from voice queries to the Microsoft Cortana V oice Assistant. Further, our noise files contained 25 types of recordings in the real world. V AD: As sho wn in Figure 3(a), we utilized noisy speech spectrogram windo ws of 16 ms and 50% ov erlap with a Hann smoothing filter , along with the corresponding ground-truth labels for DNN training and inference. Our baseline DNN had three 512-neuron hidden layers with 7-frame windo ws as in [ 2 ]. The network was trained to minimize the squared error between the ground-truth and predicted labels. Then the noisy spectrogram from the test dataset was used to generate the predicted labels, which were compared with the ground-truth labels to compute performance metrics. Speech enhancement: The framew ork we used in this case was similar to the one for V AD, e xcept for the use of clean speech spectrogram for training instead of the ground-truth acti vity label. W e utilized the baseline DNN model with three hidden layers presented in [ 3 ]. After performing the inference, the denoised speech from the output layer was used to compute the list of performance metrics sho wn in Figure 3(b). Due to space limitations, and since they are good proxies for speech quality , in this paper we only discuss the SNR and PESQ [13] metrics. 4 Experimental Results V AD: Figure 4(a) indicates that the detection accurac y of the DNN is more sensiti ve to neuron bit reduction than weight bit reduction. Note that ev en the DNN with 1-bit weights and neurons provides (a) V AD (b) Speech enhancement Figure 3: Experimental frame work. 3 V o i c e A c t i v i t y D e t e c t i o n T e s t s e t w i t h U n s e e n N o i s e 0 5 10 15 20 V AD frame error (%) Normalized speedup /normalized V AD fram e error 1 2 4 8 1 2 4 8 1 2 4 8 1 2 4 8 (a) (b) 0 1 2 3 W1/N1: 17.76% WebR TC: 20.88% Classic: 18.08% W32/N32: 8.20% W8/N8: 8.65% Figure 4: V AD performance of DNN with different pairs of weight/neuron bit precision. (a) Frame- lev el binary detection error and (b) normalized speedup/normalized V AD frame error . A red bar indicates the optimal pair of bit precision (1-bit weights/2-bit neurons). lower detection error than non-DNN based methods such as classic V AD [ 14 ] and W ebR TC V AD [ 10 ]. T o choose the optimal pair of weight/neuron bit precision in terms of detection accurac y and processing time, we introduce a new metric computed by multiplying normalized speedup and V AD error . Figure 4(b) sho ws that the optimal bit precision pair is determined as 1-bit weights and 2-bit neurons (W1/N2). As we reduce the bit width to W1/N2, the per-sample processing time reduces from 138 ms to 4.6 ms ( 30 × reduction), with a slight increase in the error rate (8.20% to 11.34%). The DNN with W1/N2 outperforms the W ebR TC V AD with 3 . 7 × lower process ing time and 9.54% lower error rate. Speech enhancement: As Figure 5(a) sho ws, SNR is improved for all bit-width pairs, except for 1-bit neurons. The optimal bit precision pair considering inference speedup and SNR improv ement is W1/N2. Howe ver , Figure 5(b) shows that the PESQ improv ement is not achie ved by DNNs with low bit precision; the most ef ficient model that enhances PESQ is W2/N4 with 9 × speedup. This is mainly because of the limited capability of the baseline DNN model, which improves PESQ by 0.38. The result also indicates that the lower -precision values (especially in the neural bit) are not suitable for an estimation or regression task (such as speech enhancement). 5 Conclusions In this paper , we presented a methodology for efficiently scaling the precision of neural networks for two common audio processing tasks. Through a careful design-space exploration, we demonstrated that a DNN model with optimal bit-precision v alues reduces the processing time by 30 × with only a slight increase in the error rate. Even at these modest precision scaling lev els, it outperforms a state-of-the-art W ebR TC V AD with 3 . 7 × lower processing time and 9.54% lower error rate. The low bit precision DNN also enhances the quality of noisy speech, but the precision could not be reduced much for speech enhancement. Our results indicate that the precision scaling of DNNs may be better suited for classification or detection tasks such as V AD rather than estimation or regression tasks such as speech enhancement. T o validate this hypothesis, we intend to further e xplore the scaling of neural-network bit precisions for other classification tasks such as source separation and microphone beam forming and estimation tasks such as acoustic echo cancellation. N u m b e r o f we i g h t b i ts N u m b e r o f w e i g h t b i t s Clean: 57.37 Noisy: 15.18 Clean: 4.48 Noisy: 2.26 SNR (dB) PESQ W32/N32: 39.22 W32/N32: 2.64 Most efficient model with PESQ impr ovement: W2/N4 W1/N2: 25.51 (a) (b) Figure 5: Speech enhancement performance of DNN with dif ferent precision. (a) SNR and (b) PESQ. 4 References [1] Xiao-lei Zhang and Deliang W ang. Boosting Contextual Information for Deep Neural Network Based V oice Activity Detection. IEEE/ACM T ransactions on A udio, Speech, and Langua ge Pr ocessing , 24(2):252–264, 2016. [2] Ivan T ashev and Seyedmahdad Mirsamadi. DNN-based Causal V oice Activity Detector. In Information Theory and Applications W orkshop , 2016. [3] Y ong Xu, Jun Du, Li-Rong Dai, and Chin-Hui Lee. An Experimental Study on Speech Enhancement Based on Deep Neural Networks. IEEE Signal Pr ocessing Letters , 21(1):65–68, 2014. [4] Xiao-lei Zhang and Ji W u. Deep Belief Networks Based V oice Activity Detection. IEEE T ransactions on Audio, Speec h, and Language Pr ocessing , 21(4):697–710, 2013. [5] J. H. Ko, D. Kim, T . Na, J. Kung, and S. Mukhopadhyay . Adaptiv e weight compression for memory-ef ficient neural networks. Design, Automation T est in Eur ope Confer ence Exhibition (D A TE), 2017 , pages 199–204, March 2017. [6] A. Agrawal et al. An introduction to computational networks and the computational network toolkit. Micr osoft T echnical Report MSR-TR-2014-112 , 2014. [7] Itay Hubara, Matthieu Courbariaux, Daniel Soudry , Ran El-Y ani v , and Y oshua Bengio. Quantized Neural Networks: T raining Neural Networks with Lo w Precision W eights and Activ ations. Journal of Machine Learning Resear ch , 1:1–48, 2000. [8] Jiaxiang W u, Cong Leng, Y uhang W ang, Qinghao Hu, and Jian Cheng. Quantized Con volutional Neural Networks for Mobile De vices. Arxiv 2016 , page 11, 2016. [9] T . Na and S. Mukhopadhyay . Speeding Up Con volutional Neural Network T raining with Dynamic Precision Scaling and Flexible Multiplier -Accumulator. ISLPED 2016 . [10] https://webrtc.org/. [11] Suyog Gupta, Ankur Agrawal, Kailash Gopalakrishnan, and Pritish Narayanan. Deep Learning with Limited Numerical Precision. In International Confer ence on International Confer ence on Machine Learning , 2015. [12] W ei T ang, Gang Hua, and Liang W ang. How to train a compact binary neural network with high accuracy? AAAI , pages 2625–2631, 2017. [13] ITU-T , recommendation p.862, perceptual ev aluation of speech quality (PESQ): An objectiv e method for end-to-end speech quality assessment of narrow-band telephone networks and speech codecs. International T elecommunication Union- T elecommunication Standar disation Sector , 2001. [14] Ivan T ashev , Andre w Lo vitt, and Alex Acero. Unified Frame work for Single Channel Speech Enhancement. Pr oceedings of the 2009 IEEE P acific Rim Confer ence on Communications, Computers and Signal Pr ocessing (P ACRIM ’09) , (September):883–888, 2009. 5

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment