Deep Cross-Modal Correlation Learning for Audio and Lyrics in Music Retrieval

Little research focuses on cross-modal correlation learning where temporal structures of different data modalities such as audio and lyrics are taken into account. Stemming from the characteristic of temporal structures of music in nature, we are mot…

Authors: Yi Yu, Suhua Tang, Francisco Raposo

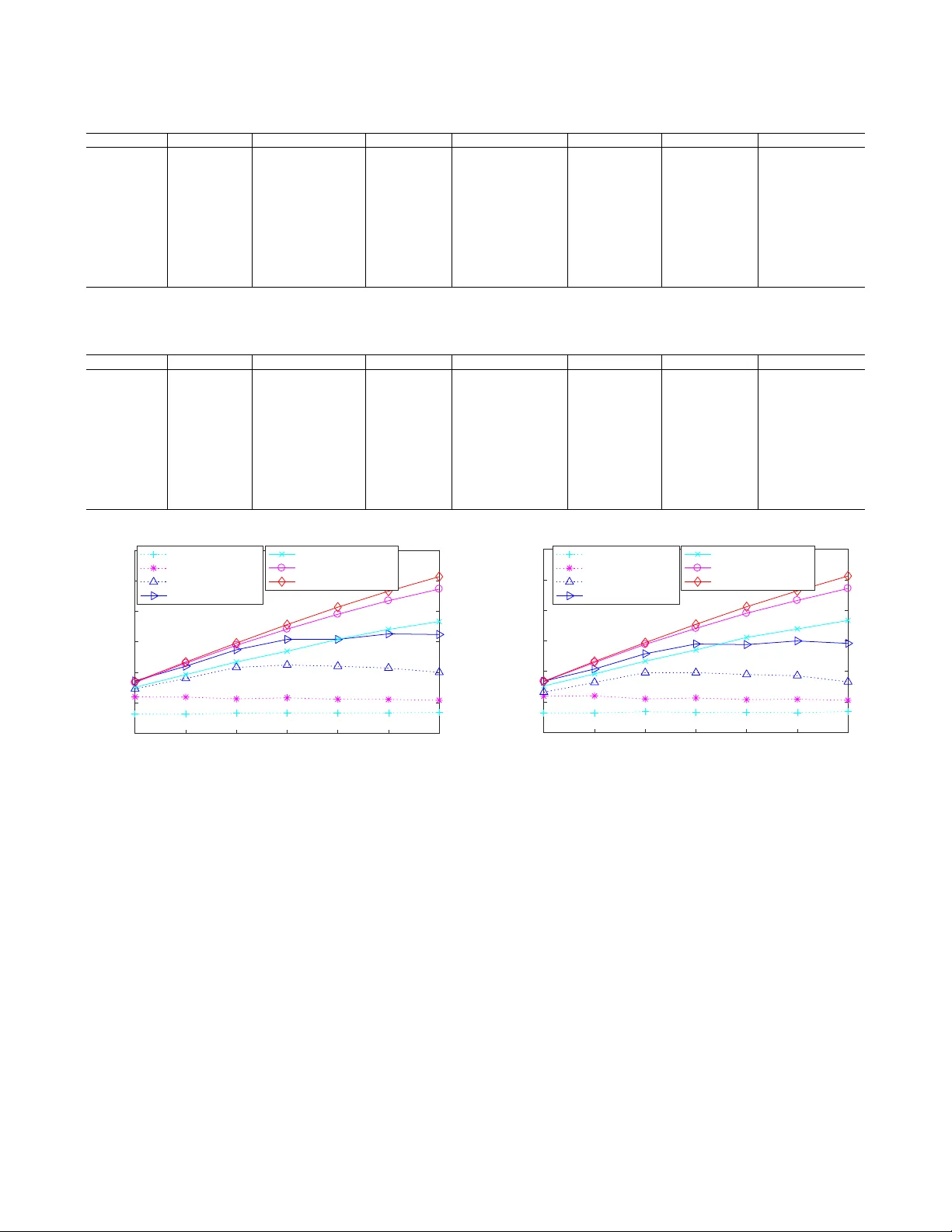

Yi Yu¹, Suhua Tang², Francisco Raposo³, Lei Chen⁴
¹ Digital Content and Media Sciences Research Division, National Institute of Informatics, Tokyo
² Dept. of Communication Engineering and Informatics, The University of Electro-Communications, Tokyo
³ Instituto Superior Técnico, Universidade de Lisboa, Lisbon
⁴ Department of Computer Science and Engineering, Hong Kong University of Science and Technology

Abstract: Deep cross-modal learning has demonstrated excellent performance in cross-modal multimedia retrieval, with the aim of learning joint representations between different data modalities. Unfortunately, little research focuses on cross-modal correlation learning where temporal structures of different data modalities such as audio and lyrics are taken into account. Motivated by the inherently temporal structure of music, we learn the deep sequential correlation between audio and lyrics. In this work, we propose a deep cross-modal correlation learning architecture involving two-branch deep neural networks for the audio modality and the text modality (lyrics). Data from the different modalities are converted to the same canonical space, where inter-modal canonical correlation analysis is used as an objective function to calculate the similarity of temporal structures. This is the first study on understanding the correlation between language and music audio through deep architectures that learn the paired temporal correlation of audio and lyrics. A pre-trained Doc2vec model followed by fully-connected layers (a fully-connected deep neural network) is used to represent lyrics. Two significant contributions are made in the audio branch, as follows: i) A pre-trained CNN followed by fully-connected layers is investigated for representing music audio.
ii) We further suggest an end-to-end architecture that simultaneously trains convolutional layers and fully-connected layers to better learn temporal structures of music audio. In particular, our end-to-end deep architecture has two properties: it simultaneously implements feature learning and cross-modal correlation learning, and it learns a joint representation by considering temporal structures. Experimental results, using audio to retrieve lyrics or using lyrics to retrieve audio, verify the effectiveness of the proposed deep correlation learning architectures in cross-modal music retrieval.

Index Terms: Convolutional neural networks, deep cross-modal models, correlation learning between audio and lyrics, cross-modal music retrieval, music knowledge discovery

I. INTRODUCTION

Music audio and lyrics provide complementary information for understanding the richness of human cultures and activities [1]. Music¹ is an art form whose medium is sound organized in time. Lyrics², as natural language, represent the theme and story of the music and are a very important element for creating a meaningful impression of it. Since late 2014, Google has provided music search results containing song lyrics, as shown in Fig. 1, when given a specific song title. However, searching lyrics in this way is insufficient because people sometimes lack the exact song title but know a segment of the music audio instead, or want to search for an audio track with part of the lyrics. A natural question then arises: how can lyrics be retrieved by a segment of music audio, and vice versa?

Fig. 1. Google lyrics for song title “another brick in the wall (part II)”.

Francisco was involved in this work during his internship at the National Institute of Informatics (NII), Tokyo.
¹ https://en.wikipedia.org/wiki/Music
² https://en.wikipedia.org/wiki/Lyrics
Searching lyrics by audio was almost impossible years ago because large volumes of music audio and lyrics were not available. The profusion of online music audio and lyrics from music sharing websites such as YouTube, MetroLyrics, Azlyrics, and Genius offers an opportunity to understand musical knowledge from content-based audio and lyrics by leveraging the large volumes of cross-modal music data aggregated on the Internet.

Motivated by the fact that audio content and lyrics are fundamental to understanding what kind of cultures and activities a song wants to convey, this research focuses on deep correlation learning between audio and lyrics for cross-modal music retrieval and considers two real-world tasks: using audio to retrieve lyrics and using lyrics to retrieve audio. Several contributions are made in this paper, as follows:

i) To the best of our knowledge, this work is the first in which a deep correlation learning architecture with two-branch neural networks and a correlation learning model is studied for cross-modal music retrieval using either audio or lyrics as the query modality.

ii) Data from the different music modalities are projected to a shared space where inter-modal canonical correlation analysis is exploited as an objective function to calculate the similarity of temporal structures. Fully-connected deep neural networks (DNNs) and an end-to-end DNN are proposed to learn the audio representation, while the pre-trained Doc2vec model followed by fully-connected layers is employed to extract lyrics features.

iii) Extensive experiments confirm the effectiveness of our deep correlation learning architecture for audio-lyrics music retrieval. We hope these results attract more effort to mining music knowledge structure and the correlation between data of different modalities.

The rest of this paper is structured as follows. Research motivation and background are introduced in Sec. II.
Sec. III gives the preliminaries of Convolutional Neural Networks (CNNs) and Deep Canonical Correlation Analysis (DCCA). Sec. IV then presents why and how we exploit CNNs and DCCA to build a deep correlation learning architecture for audio-lyrics music retrieval. The task of cross-modal music retrieval in our work is described in Sec. V. Experimental evaluation results are shown in Sec. VI. Finally, conclusions are drawn in Sec. VII.

II. MOTIVATION AND BACKGROUND

Music has permeated our daily life and, in real-world scenarios, involves different modalities such as the temporal audio signal, lyrics with meaningful sentences, high-level semantic tags, and temporal visual content. However, correlation learning between lyrics and audio for cross-modal music retrieval has not been sufficiently studied. Previous works [2], [3], [4] mainly focused on content-based music retrieval with a single modality. The widespread availability of large-scale multimodal music data creates an opportunity to tackle cross-modal music retrieval.

A. Lyrics and Audio in Music

Recent research has shown that lyrics, audio, or their combination are mainly applied to semantic classification of music, such as emotion or genre classification. For example, the authors of [5] proposed an unsupervised learning method for mood recognition in which Canonical Correlation Analysis (CCA) was applied to identify correlations between lyrics and audio, and mood classification was evaluated in the valence-arousal space. An interesting corpus with each song in MIDI format and with emotion annotations is introduced in [6]. Coarse-grained classification into six emotions is learned by support vector machines (SVMs). This work showed that either textual or audio features can be used for emotion classification, and that their joint use leads to a significant improvement.
Emotion lyrics datasets in English [7] are annotated with continuous arousal and valence values. Specific text emotion attributes are considered to complement music emotion recognition. Experiments on the regression and classification of music lyrics by quadrant, arousal, and valence categories are performed. A hierarchical attention network is applied in [8] to genre classification of intact lyrics. This network is able to pay attention to words, lines, and segments of the song lyrics, learning the importance of each within the layer structure.

Distinct from the intensive research on music classification using lyrics and audio, our work focuses on audio-lyrics cross-modal music retrieval: using audio to retrieve lyrics or vice versa. This is a very natural way to retrieve lyrics or audio on the Internet. However, not much research has investigated this task.

B. Cross-modal Music Retrieval

Existing research on cross-modal music retrieval focuses intensively on music and visual modalities [9], [10], [11], [12], [13], [14], [15], [16]. In [11], the similarity between audio features extracted from music and image features extracted from album covers is trained with a Java SOMToolbox framework. Based on this similarity, people can organize a music collection and use album covers as visual content to retrieve a song from multimodal music data. A statistical method based on multi-modal mixture models that jointly models music, images, and text [12] is used to support retrieval over a multimodal dataset. To generate a soundtrack for an outdoor video, an effective heuristic ranking method based on heterogeneous late fusion is suggested in [13], which jointly considers venue categories, visual scene, and user listening history.
Confidence scores, produced by SVM-hmm models constructed from geographic, visual, and audio features, are combined to obtain different types of video characteristics. To learn the semantic correlation between music and video, a novel approach to selecting features and statistical novelty based on kernel methods [14] is proposed for music segmentation. Co-occurring changes in the audio and video content of music videos can be detected, and the resulting correlations can be used in cross-modal audio-visual music retrieval. Lyrics-based music attributes are utilized for image representation in [16]. Cross-modal ranking analysis is suggested to learn the semantic similarity between music and image, with the aim of obtaining the optimal embedding spaces for music and image. Distinct from the intensive research that considers the use of metadata for different music modalities in cross-modal music retrieval, our work focuses on a deep architecture based on correlation learning between audio and lyrics for content-based cross-modal music retrieval.

C. Deep Cross-modal Learning

Several efforts have been devoted to cross-modal learning between different modalities, such as [17], [18], [19], [20], [21], [22], to facilitate cross-modal matching and retrieval. Most notably, the latest studies pay extensive attention to deep cross-modal learning between images and textual descriptions, such as [17], [19], [22], [23]. Most existing deep models with two-branch sub-networks use a pre-trained convolutional neural network (CNN) [24] as the image branch [19] and a pre-trained document-level embedding model [25] or hand-crafted feature extraction such as bag of words [17] as the text branch. Image and text modalities are converted to a joint embedding space in a feed-forward way, where a single ranking loss function is calculated. Image-text benchmarks such as [26], [27] are applied to evaluate the performance of cross-modal matching and retrieval.
There are two features of existing deep cross-modal retrieval: i) the cross-modal correlation between image and text is learned without considering temporal sequences; ii) pre-trained models are directly applied to represent image or text. Distinct from existing deep cross-modal retrieval architectures, this work takes temporal sequences into account to learn the correlation between audio and lyrics for audio-lyrics cross-modal music retrieval, where sequential audio and lyrics are converted to the canonical space. A neural network with two-branch sequential structures for audio and lyrics is trained.

III. PRELIMINARIES

We focus on developing a two-branch deep architecture for learning the correlation between audio and lyrics in cross-modal music retrieval, where several variants of deep learning models are investigated for processing the audio sequence, while pre-trained Doc2vec [25] is used for processing lyrics. A brief review of the CNNs and DCCA exploited in this work follows.

A. Convolutional Neural Networks (CNNs)

CNNs have been exploited to handle not only various tasks in computer vision and multimedia [28], [29], but also music information retrieval tasks such as genre classification [30], acoustic event detection [31], and automatic music tagging [32]. Generally speaking, when computational power and large annotated datasets are lacking, it is preferable to directly use pre-trained CNNs such as VGG16 [28] to extract features [20], [31], or to further combine them with fully-connected layers to extract semantic features [19], [23], [31]. Different from a plain spatial convolutional operation, a CNN uses different kernels (filters) to capture different local patterns, which generates multiple intermediate feature maps (called channels).
Specifically, the convolutional operation in one convolutional layer is defined as

$$x^{j} = f\Big(\sum_{k=0}^{K-1} H^{jk} \otimes s^{k} + a^{j}\Big), \tag{1}$$

where the superscripts $j$, $k$ are channel indices, $s^{k}$ is the $k$-th channel input, $x^{j}$ is the $j$-th channel output, $\otimes$ is the convolutional operation, $H^{jk}$ is the convolutional kernel (or the filter) that associates the $k$-th input channel with the $j$-th output channel, $a^{j}$ is the bias for the $j$-th channel, and $f(\cdot)$ is a non-linear activation function. All weights that define a convolutional layer are represented as a 4-dimensional array with a shape of $(h, l, K, J)$, where $h$ and $l$ determine the kernel size, and $K$ and $J$ are the number of input and output channels, respectively.

When a mel-spectrogram is used as the input of the first convolutional layer, it has only one channel. A 2D convolutional kernel $H^{jk}$, as a common filter, is applied to the whole input channel. This kernel is shifted along both (frequency and time) axes and a local correlation is computed between the kernel and the input. The kernels are trained to find local salient patterns that maximize the overall objective. As a kernel sweeps the input, it generates a new output in order, which preserves the spatiality of the input, i.e., the frequency and time constraint of the spectrogram.

Convolutional layers are often followed by pooling layers, which reduce the size of the feature maps by down-sampling them. The max function is a typical pooling operation. It selects the maximal value from a pooling region, instead of keeping all information in the region. This pooling operation also enables distortion and translation invariance by discarding the original location of the selected value, and the capability of such invariance within each pooling layer is determined by the pooling size.
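The convolution of Eq. (1) and the max pooling described above can be sketched in NumPy as follows. This is only an illustrative sketch, not the paper's implementation: the function names `conv2d_layer` and `max_pool` are hypothetical, the operator $\otimes$ is realized as a "valid" cross-correlation (as most deep learning frameworks do), and ReLU is assumed for $f(\cdot)$.

```python
import numpy as np

def conv2d_layer(s, H, a, f=lambda z: np.maximum(z, 0.0)):
    """Eq. (1): x^j = f(sum_k H^{jk} (x) s^k + a^j).

    s: input of shape (K, F, T)   (K channels, F frequency bins, T frames)
    H: kernels of shape (h, l, K, J), the layout used in the text
    a: biases of shape (J,)
    Assumption: 'valid' cross-correlation, so the output is (J, F-h+1, T-l+1).
    """
    h, l, K, J = H.shape
    _, F, T = s.shape
    out = np.zeros((J, F - h + 1, T - l + 1))
    for j in range(J):                      # output channel
        for k in range(K):                  # input channel
            for u in range(h):              # kernel offset along frequency
                for v in range(l):          # kernel offset along time
                    out[j] += H[u, v, k, j] * s[k, u:u + F - h + 1, v:v + T - l + 1]
        out[j] += a[j]
    return f(out)

def max_pool(x, p, q):
    """Non-overlapping (p, q) max pooling; any trailing remainder is dropped."""
    J, F, T = x.shape
    F2, T2 = F // p, T // q
    blocks = x[:, :F2 * p, :T2 * q].reshape(J, F2, p, T2, q)
    return blocks.max(axis=(2, 4))

# Example: a one-channel 20x161 input, 48 kernels of size 3x3, then (2, 2) pooling.
rng = np.random.default_rng(0)
s = rng.standard_normal((1, 20, 161))
H = rng.standard_normal((3, 3, 1, 48))
x = max_pool(conv2d_layer(s, H, np.zeros(48)), 2, 2)
print(x.shape)  # (48, 9, 79) with 'valid' convolution
```

Because only the maximum of each pooling region is kept, shifting a salient pattern by less than the pooling size often leaves the pooled output unchanged, which is the invariance referred to above.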
With a small pooling size, the network does not have enough distortion invariance, while too large a pooling size may completely lose the location of a salient feature. Instead of using a large pooling size in one layer, using multiple small pooling sizes at different pooling layers enables the system to gradually abstract the features into a more compact and more semantic form.

B. Deep Canonical Correlation Analysis (DCCA)

CCA has been a very popular method for embedding multimodal data in a shared space. Before presenting our deep multimodal correlation learning between audio and lyrics, we first give an overview of CCA and DCCA.

Let $x \in \mathbb{R}^{m}$ (e.g., audio feature) and $y \in \mathbb{R}^{n}$ (e.g., textual feature) be zero-mean random (column) vectors with covariances $C_{xx}$, $C_{yy}$ and cross-covariance $C_{xy}$. When a linear projection is performed, CCA [33] tries to find two canonical weights $w_x$ and $w_y$, so that the correlation between the linear projections $u = w_x^{T} x$ and $v = w_y^{T} y$ is maximized:

$$(w_x, w_y) = \operatorname*{argmax}_{(w_x, w_y)} \operatorname{corr}(w_x^{T} x, w_y^{T} y) = \operatorname*{argmax}_{(w_x, w_y)} \frac{w_x^{T} C_{xy} w_y}{\sqrt{w_x^{T} C_{xx} w_x \cdot w_y^{T} C_{yy} w_y}}. \tag{2}$$

One known shortcoming of CCA is that its linear projection may not model the nonlinear relation between different modalities well.

DCCA [34] tries to calculate non-linear correlations between different modalities by a combination of DNNs (deep neural networks) and CCA. Different from kernel CCA (KCCA), which relies on kernel functions (corresponding to a logical high-dimensional (sparse) space), a DNN has the extra capability of compressing features to a low-dimensional (dense) space, and CCA is then implemented in the objective function. The DNNs, which realize the non-linear mappings ($\phi_x(\cdot)$ and $\phi_y(\cdot)$), and the canonical weights ($w_x$ and $w_y$, which model the CCA between $\phi_x(x)$ and $\phi_y(y)$) are trained simultaneously to maximize the correlation after the non-linear mapping, as follows.
$$(w_x, w_y, \phi_x, \phi_y) = \operatorname*{argmax}_{(w_x, w_y, \phi_x, \phi_y)} \operatorname{corr}\big(w_x^{T} \phi_x(x), w_y^{T} \phi_y(y)\big). \tag{3}$$

IV. DEEP AUDIO-LYRICS CORRELATION LEARNING

We develop a deep cross-modal correlation learning architecture that predicts the latent alignment between audio and lyrics, which enables audio-to-lyrics or lyrics-to-audio music retrieval. In this section, we explain how our deep architecture is learned. Specifically, we investigate different deep network models for correlation analysis and different deep learning methods for audio feature extraction.

A. Learning Strategy

On the one hand, lyrics as natural language express the semantic theme and story of the music; on the other hand, music audio has properties such as tonality and temporal structure over time and frequency. They are correlated in the semantic sense. However, audio and lyrics belong to different modalities and cannot be compared directly. Therefore, we extract their features separately, and then map them to the same semantic space for a similarity comparison. Because the linear mapping in CCA does not work well, we design deep networks to realize a non-linear mapping before CCA. Consequently, deep correlation models for learning temporal structures are considered for representing the lyrics branch and the audio branch.

We investigate two deep network architectures. i) Separate feature extraction, completely independent of the following DCCA analysis. The text branch follows this architecture, where the pre-trained Doc2vec [25] model is used to compute a compact textual feature vector. As for audio, directly using the pre-trained CNN model [32] belongs to this architecture as well. ii) Joint training of audio feature extraction and DCCA analysis between audio and lyrics. In this way, feature extraction is also correlated with the subsequent DCCA.
Here, for the audio branch, a CNN model is trained from scratch together with the following fully-connected layers, based on an end-to-end learning procedure. It is expected that this CNN adapts to the DNN so as to extract more meaningful audio features.

B. Network Architecture

Figure 2 shows an end-to-end deep convolutional DCCA network, which aims at simultaneously learning the feature extraction and the deep correlation between audio and lyrics. This model degenerates to a simple DCCA network when the CNN model marked with the pink dashed line is replaced by a pre-trained model. From the sequence of words in the lyrics, a textual feature is computed by a pre-trained Doc2vec model. The music audio signal is represented as a 2D spectrogram, which preserves both its spectral and temporal properties. However, it is difficult to use the spectrogram directly for the DCCA analysis because of its high dimension. Therefore, we investigate two variants for dimension reduction. (i) The audio feature is extracted by a pre-trained convolutional model, and we study the pure effect of DCCA in analyzing the correlation, i.e., sub-DNNs with fully connected layers are trained to maximize the correlation between audio and textual features. (ii) An end-to-end deep network for the audio branch, which integrates convolutional layers for feature extraction with non-linear mapping for correlation learning, is trained. In future work, we will also consider integrating Doc2Vec with its subsequent DNN.

Fig. 2. Deep correlation learning between audio and lyrics. (Blocks: MFCC feature sequence, convolutional layers, Doc2vec, fully-connected layers, CCA, embedding.)

1) Audio feature extraction: The audio signal is represented as a spectrogram. We mainly focus on mel-frequency cepstral coefficients (MFCCs), because MFCCs are very efficient features for semantic genre classification [35] and music audio similarity comparison [36].
We will also compare MFCC with Mel-spectrum, which contains more detailed information. To compute a single feature vector for correlation analysis, we successively apply convolutional layers with different kernels to capture local salient features, and use pooling layers to reduce the dimension.

By inserting a pooling layer between adjacent convolutional layers, a kernel in a later layer corresponds to a larger kernel in the previous layer and has more capacity to represent semantic information. Thus, using small kernels in different convolutional layers can achieve the function of a large kernel in one convolutional layer, but is more robust to scale variance. In this sense, a combination of successive convolutional layers and pooling layers can capture features at different scales, and the kernels can learn to represent complex patterns.

For each audio signal, a slice of 30 s is resampled to 22,050 Hz with a single channel. With frame length 2048 and step 1024, there are 646 frames. For the end-to-end learning, a sequence of MFCCs (20x646) is computed. In initial experiments we found that our approach is not very sensitive to the time resolution. Therefore, we decimate the spectrogram into 4 sub-sequences, each with 161 frames and associated with the same lyrics.

For the end-to-end deep learning, the configuration of the CNN used for the audio branch is shown in Table I. It consists of 3 convolutional layers and 3 max pooling layers, and outputs a feature vector of size 1536. We tried adding more convolutional layers but saw no significant difference. The rectified linear unit (ReLU) is used as the activation function in each convolutional layer except the last one.
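The frame count, the sub-sequence length, and the layer output sizes listed in Table I can be checked with a short sketch. Several points are assumptions rather than statements from the paper: frames are assumed to be centre-padded (so the count is 1 + floor(samples / step)), the convolutions are assumed to use "same" padding, pooling is assumed to round down, and taking every fourth frame is only one possible reading of "decimate". The helper names are hypothetical.

```python
import numpy as np

def num_frames(duration_s, sample_rate, hop):
    """Frame count under centre-padded framing: 1 + floor(n_samples / hop)."""
    n_samples = int(duration_s * sample_rate)
    return 1 + n_samples // hop

def cnn_output_shapes(height, width, layers):
    """Propagate (height, width, channels) through conv + max-pool stages.

    layers: list of (out_channels, (pool_h, pool_w)).
    Assumes 'same'-padded convolutions (size unchanged) and floor-mode pooling.
    """
    shapes = []
    for channels, (ph, pw) in layers:
        height, width = height // ph, width // pw
        shapes.append((height, width, channels))
    return shapes

frames = num_frames(30, 22050, 1024)   # 646
sub_len = frames // 4                  # 161

# One possible decimation: every 4th frame at offsets 0..3, truncated to 161 frames.
mfcc = np.zeros((20, frames))          # placeholder for a 20x646 MFCC sequence
subs = [mfcc[:, k::4][:, :sub_len] for k in range(4)]

shapes = cnn_output_shapes(20, sub_len, [(48, (2, 2)), (96, (3, 3)), (192, (3, 3))])
h, w, c = shapes[-1]
print(frames, sub_len, shapes, h * w * c)
# 646 161 [(10, 80, 48), (3, 26, 96), (1, 8, 192)] 1536
```

Under these assumptions the intermediate sizes 10x80x48 and 3x26x96 and the final 1536-dimensional vector agree with Table I.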
Batch normalization is used before activation. Convolutional kernels (3x3) are used in every convolutional layer. These kernels help to learn local spectral-temporal structures. In this way, the CNN converts an audio feature sequence (a 2D matrix) to a high-dimensional vector and retains useful properties such as tempo invariance, which can be very helpful for learning musical features in semantic correlation learning between lyrics and audio. With the input spectrogram $s$, the feature output by the convolutional layers is

$$x = f_3\big(H_3 \otimes f_2\big(H_2 \otimes f_1(H_1 \otimes s + a_1) + a_2\big) + a_3\big),$$

where $H_i$, $a_i$ and $f_i$ are the convolutional kernel, bias, and activation function in the $i$-th layer.

TABLE I
CONFIGURATION OF CNNS FOR AUDIO BRANCH

| Layer | Configuration |
|---|---|
| Input | MFCC: 20x646/4 |
| Convolution | 3x3x48 |
| Max-pooling | (2,2), output 10x80x48 |
| Convolution | 3x3x96 |
| Max-pooling | (3,3), output 3x26x96 |
| Convolution | 3x3x192 |
| Max-pooling | (3,3), output 1536 |

TABLE II
STRUCTURE OF SUB-DNNS

| Layer | Sub-DNN1 (Audio) | Sub-DNN2 (Text) |
|---|---|---|
| 1st layer | 1024, sigmoid | 1024, sigmoid |
| 2nd layer | 1024, sigmoid | 1024, sigmoid |
| 3rd layer (output) | $D$, linear | $D$, linear |

As for the pre-trained model, we apply the pre-trained CNN model in [32], which has 5 convolutional layers, each with either average pooling or standard deviation pooling, generating a 30-dimension vector per layer. Concatenating all of them together generates a feature vector of 320 dimension.

2) Textual feature extraction: The lyrics text of each song is tokenized using coreNLP [37] and passed to the infer_vector module of the Doc2Vec model [25], generating a 300-dimensional feature for each song. We use the pretrained apnews_dbow weights³ in the experiment.

3) Non-linear mapping of features: Audio features and textual features are further converted into low-dimensional features in a shared $D$-dimensional semantic space by using different sub-DNNs composed of fully connected layers.
The details of the sub-DNNs are shown in Table II. These two sub-DNNs (each with 3 fully connected layers) implement the non-linear mapping of DCCA. The audio feature generated by the feature extraction part is denoted as $x \in \mathbb{R}^{m}$ ($m$ varies with each method) and the deep textual feature is denoted as $y \in \mathbb{R}^{300}$. The overall function of the audio sub-DNN is denoted as

$$\phi_x(x) = g_3\big(\Psi_3 \cdot g_2\big(\Psi_2 \cdot g_1(\Psi_1 x + b_1) + b_2\big) + b_3\big),$$

where $\Psi_i$ and $b_i$ are the weight matrix and bias for the $i$-th layer and $g_i(\cdot)$ is the activation function. $\phi_y(y)$ is computed in a similar way. Then, $\phi_x(x)$ is the overall result of the convolutional layers and their subsequent DNN, given the input spectrogram $s$.

³ https://ibm.ent.box.com/s/9ebs3c759qqo1d8i7ed323i6shv2js7e

4) Objective function of CCA: Assume the batch size in training is $N$, and $X \in \mathbb{R}^{D \times N}$ and $Y \in \mathbb{R}^{D \times N}$ are the outputs of the two sub-DNNs for a batch, corresponding to audio ($\phi_x(x)$) and lyrics ($\phi_y(y)$), respectively. Let the covariances of $\phi_x(x)$ and $\phi_y(y)$ be $C_{XX}$, $C_{YY}$ and their cross-covariance be $C_{XY}$. With the linear projection matrices $W_X$ and $W_Y$, the correlation between the canonical components ($W_X^{T} X$ and $W_Y^{T} Y$) can be computed. This correlation indicates the association between the two modalities and is used as the overall objective function, which is maximized to find all parameters (convolutional kernels $H(\cdot)$, non-linear projections $\phi_x(\cdot)$ and $\phi_y(\cdot)$, and linear projection matrices $W_X$ and $W_Y$):

$$(H, W_X, W_Y, \phi_x, \phi_y) = \operatorname*{argmax}_{(H, W_X, W_Y, \phi_x, \phi_y)} \operatorname{corr}(W_X^{T} X, W_Y^{T} Y).$$

At first, with $H$, $\phi_x$, $\phi_y$ being fixed, $W_X$ and $W_Y$ are computed by

$$(W_X, W_Y) = \operatorname*{argmax}_{(W_X, W_Y)} \frac{W_X^{T} C_{XY} W_Y}{\sqrt{W_X^{T} C_{XX} W_X \cdot W_Y^{T} C_{YY} W_Y}}.$$

This can be rewritten in the trace form

$$(W_X, W_Y) = \operatorname*{argmax}_{(W_X, W_Y)} \operatorname{tr}(W_X^{T} C_{XY} W_Y), \tag{4}$$

$$\text{subject to: } W_X^{T} C_{XX} W_X = W_Y^{T} C_{YY} W_Y = I.$$
Here, the covariances $C_{XX}$, $C_{YY}$ and the cross-covariance $C_{XY}$ are computed as follows:

$$C_{XX} = \frac{1}{N-1} \hat{X} \hat{X}^{T} + rI, \tag{5}$$

$$C_{YY} = \frac{1}{N-1} \hat{Y} \hat{Y}^{T} + rI, \tag{6}$$

$$C_{XY} = \frac{1}{N-1} \hat{X} \hat{Y}^{T}, \tag{7}$$

$$\hat{X} = X - \bar{X}, \quad \hat{Y} = Y - \bar{Y},$$

where $\bar{X}$ and $\bar{Y}$ are the averages of $\phi_x(x)$ and $\phi_y(y)$ within the batch, and $r$ is a small positive constant used to ensure the positive definiteness of $C_{XX}$ and $C_{YY}$.

By defining $T \triangleq C_{XX}^{-1/2} C_{XY} C_{YY}^{-1/2}$ and performing singular value decomposition on $T$ as $T = U D V^{T}$, $W_X$ and $W_Y$ can be computed by [34]

$$W_X = C_{XX}^{-1/2} U, \quad W_Y = C_{YY}^{-1/2} V. \tag{8}$$

Then, Eq. (4) can be rewritten as

$$\operatorname{tr}\big((W_X^{T} C_{XY} W_Y)^{T} \cdot W_X^{T} C_{XY} W_Y\big) = \operatorname{tr}(T^{T} T). \tag{9}$$

Accordingly, the gradient of the correlation with respect to $X$ is given by

$$\frac{1}{N-1}\big(2 \nabla_{XX} \hat{X} + \nabla_{XY} \hat{Y}\big), \tag{10}$$

$$\nabla_{XX} = -\frac{1}{2} C_{XX}^{-1/2} U D U^{T} C_{XX}^{-1/2},$$

$$\nabla_{XY} = C_{XX}^{-1/2} U V^{T} C_{YY}^{-1/2}.$$

The gradient of the correlation with respect to $Y$ can be computed in a similar way. Then, the gradients are back-propagated, first in the sub-DNNs, where $\phi_x(x)$ and $\phi_y(y)$ are updated. As for the audio branch, the gradients are further back-propagated to the convolutional layers, and the kernel filters $H$ are updated. The whole procedure is shown in Algorithm 1.
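The batch-level CCA step of Eqs. (5) to (8) can be sketched in NumPy as follows. This is a minimal sketch under stated assumptions, not the authors' code: the function names `inv_sqrt` and `cca_on_batch` and the default value of `r` are hypothetical, and the inverse square root is computed by an eigendecomposition, which is one of several valid choices.

```python
import numpy as np

def inv_sqrt(C):
    """Inverse square root of a symmetric positive definite matrix."""
    vals, vecs = np.linalg.eigh(C)
    return vecs @ np.diag(vals ** -0.5) @ vecs.T

def cca_on_batch(X, Y, r=1e-4):
    """X, Y: arrays of shape (D, N), the sub-DNN outputs for one batch.

    Returns W_X, W_Y of Eq. (8) and the singular values of T
    (the canonical correlations, sorted in decreasing order).
    """
    D, N = X.shape
    Xh = X - X.mean(axis=1, keepdims=True)          # centred batch
    Yh = Y - Y.mean(axis=1, keepdims=True)
    Cxx = Xh @ Xh.T / (N - 1) + r * np.eye(D)       # Eq. (5)
    Cyy = Yh @ Yh.T / (N - 1) + r * np.eye(D)       # Eq. (6)
    Cxy = Xh @ Yh.T / (N - 1)                       # Eq. (7)
    Cxx_is, Cyy_is = inv_sqrt(Cxx), inv_sqrt(Cyy)
    T = Cxx_is @ Cxy @ Cyy_is
    U, d, Vt = np.linalg.svd(T)
    Wx, Wy = Cxx_is @ U, Cyy_is @ Vt.T              # Eq. (8)
    return Wx, Wy, d

# Toy batch: Y is a noisy linear function of X, so the correlations are high.
rng = np.random.default_rng(0)
X = rng.standard_normal((5, 200))
Y = 0.5 * X + 0.1 * rng.standard_normal((5, 200))
Wx, Wy, d = cca_on_batch(X, Y)
print(np.round(d, 3))
```

With these projections the constraint of Eq. (4) holds by construction, since $W_X^{T} C_{XX} W_X = U^{T} U = I$, and $W_X^{T} C_{XY} W_Y = U^{T} T V$ is the diagonal matrix of singular values.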
Algorithm 1 Joint training of CNN and DCCA
1: procedure JOINTTRAIN($A$, $L$)    ($A$: audio, $L$: lyrics)
2:   Initialize convolutional net, sub-networks for mapping
3:   Compute MFCC spectrogram from audio $A$, → $\Omega_A$
4:   Compute textual feature from lyrics $L$, → $\Omega_L$
5:   for each epoch do
6:     Randomly divide $\Omega_A$, $\Omega_L$ into batches
7:     for each batch ($\omega_A$, $\omega_L$) of audio and lyrics do
8:       for each pair ($s$, $l$) ∈ ($\omega_A$, $\omega_L$) do
9:         $s$ → $x$ by convolutions
10:        $l$ → $y$ by pretrained Doc2Vec model
11:        $x$ → $\phi_x(x)$ by non-linear mapping
12:        $y$ → $\phi_y(y)$ by non-linear mapping
13:      end for
14:      Get converted batch ($X$, $Y$)
15:      Apply CCA on ($X$, $Y$) to compute $W_X$, $W_Y$
16:      Compute the gradient with respect to $X$, $Y$
17:      Back-propagate to the sub-networks
18:      Back-propagate to the convolutional network
19:    end for
20:  end for
21: end procedure

V. MUSIC CROSS-MODAL RETRIEVAL TASKS

Two kinds of retrieval tasks are defined to evaluate the effectiveness of our algorithms: instance-level and category-level. Instance-level cross-modal music retrieval retrieves lyrics when given music audio as input, or vice versa. Category-level cross-modal music retrieval retrieves lyrics or audio by searching for the most similar audio or lyrics with the same mood category. For a given input (either an audio slice or lyrics), its canonical component is computed, its similarity with the canonical components of the other modality in the database is computed using the cosine similarity metric, and the results are ranked in decreasing order of the similarity score.

VI. EXPERIMENTS

The performance of the proposed DCCA variants is evaluated and compared with baselines such as variants of CCA and a deep multi-view embedding approach [38].

A. Experiment Setting

Proposed methods. As discussed in Sec.
IV, two variants of DCCA in combination with CNN are investigated: 1) PretrainCNN-DCCA (the application of DCCA on the pretrained CNN model [32]), and 2) JointTrain-DCCA (the joint training of CNN and DCCA).

Baseline methods include shallow correlation learning methods (without fully connected layers between feature extraction and CCA), such as 3) Spotify-CCA (which applies CCA on the 65-dimensional audio features provided by Spotify⁴) and 4) PretrainCNN-CCA (which applies CCA on the features extracted by the pretrained CNN model), and multi-view methods such as 5) Spotify-MVE (Spotify features with a deep multi-view embedding method similar to [38], where arbitrary mappings of two different views are embedded in the joint space such that matched pairs have minimal distance and mismatched pairs have maximal distance) and 6) PretrainCNN-MVE. We also evaluated 7) Spotify-DCCA. In all these methods, the lyrics branch uses the features extracted by the pretrained Doc2vec model.

Besides MFCC, we also evaluate the Mel-spectrum feature. The dimension of the Mel-spectrum is 96 per frame, and there are four convolutional layers, where each of the first three is followed by a max pooling layer, and the final output has 3072 dimensions.

As for the MVE methods, both branches share the same parameters (activation function, number of neurons, and so on) and both have 3 fully connected layers (with 512, 256, and 128 neurons, respectively). Batch normalization is used before each layer and the tanh activation function is applied after each layer.

Audio-lyrics dataset. Currently, there is no large audio/lyrics dataset publicly available for cross-modal music retrieval. Therefore, we build a new audio-lyrics dataset. Spotify is an on-demand music streaming service that provides access to over 30 million songs, where songs can be searched by various parameters such as artist, playlist, and genre. Users can create, edit, and share playlists on Spotify.
Initially, we take the 20 most frequent mood categories (aggressive, angry, bittersweet, calm, depressing, dreamy, fun, gay, happy, heavy, intense, melancholy, playful, quiet, quirky, sad, sentimental, sleepy, soothing, sweet) [9] as playlist seeds to invoke the Spotify API. For each mood category, we find the top 500 popular English songs according to the popularity provided by Spotify, and further crawl 30s audio slices of these songs from YouTube, while lyrics are collected from Musixmatch. Altogether there are 10,000 pairs of audio and lyrics.

Footnote 4: https://developer.spotify.com/web-api/get-audio-features/

Evaluation metric. In the retrieval evaluation, we use mean reciprocal rank 1 (MRR1) and recall@N as the metrics. Because there is only one relevant audio or lyrics item for each query, MRR1 is able to show the rank of the result. MRR1 is defined by

$$\mathrm{MRR1} = \frac{1}{N_q} \sum_{i=1}^{N_q} \frac{1}{rank_i(1)}, \tag{11}$$

where $N_q$ is the number of queries and $rank_i(1)$ corresponds to the rank of the relevant item in the $i$-th query. We also evaluate recall@N to see how often the relevant item is included in the top of the ranked list. Assume $S_q$ is the set of relevant items ($|S_q| = 1$) in the database for a given query and the system outputs a ranked list $K_q$ ($|K_q| = N$). Then, recall is computed by

$$recall = \frac{|S_q \cap K_q|}{|S_q|} \tag{12}$$

and is averaged over all queries.

[Fig. 3. MRR1 with respect to the number of epochs (using audio as query to search lyrics, #CCA-component=30). Curves: Spotify, PretrainCNN, JointTrain(MFCC), JointTrain(Melspec). x-axis: number of epochs; y-axis: MRR1.]

We use 8,000 pairs of audio and lyrics as the training dataset, and the remaining 2,000 pairs for retrieval testing. Because we generate 4 sub-sequences from each original MFCC sequence, there are 32,000 audio/lyrics pairs in JointTrain. In each run, the split of audio-lyrics pairs into training/testing is random, and a new model is trained.
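The cosine-similarity ranking of Section V and the metrics of Eqs. (11) and (12) can be illustrated with a minimal NumPy sketch. It is not the authors' evaluation code: the function names are hypothetical, each query is assumed to have exactly one relevant database item, and ties are resolved optimistically (only strictly higher scores push the relevant item down), which is an assumption the paper does not state.

```python
import numpy as np

def cosine_similarity_matrix(queries, database):
    """Cosine similarity between every query row and every database row."""
    q = queries / np.linalg.norm(queries, axis=1, keepdims=True)
    d = database / np.linalg.norm(database, axis=1, keepdims=True)
    return q @ d.T

def rank_of_relevant(sim, relevant_idx):
    """1-based rank of the single relevant item for each query.

    sim[i, j] is the similarity of query i to database item j, and
    relevant_idx[i] is the column of the item paired with query i.
    """
    relevant_scores = sim[np.arange(sim.shape[0]), relevant_idx]
    # Items scoring strictly higher than the relevant one are ranked ahead of it.
    return 1 + np.sum(sim > relevant_scores[:, None], axis=1)

def mrr1(ranks):
    """Eq. (11): mean of the reciprocal ranks over all queries."""
    return float(np.mean(1.0 / ranks))

def recall_at_n(ranks, n):
    """Eq. (12) with |S_q| = 1: fraction of queries whose relevant item is in the top n."""
    return float(np.mean(ranks <= n))
```

With the canonical components of the test audio as `queries` and those of the test lyrics as `database`, `relevant_idx` would simply be `np.arange(len(queries))` for instance-level retrieval; swapping the two arguments gives the lyrics-to-audio direction.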
All results are averaged over 5 runs (cross-validations). In the batch-based training, the batch size is unified to 1000 samples in all methods, and the training takes 200 epochs for JointTrain and 400 epochs for the other DCCA methods. Furthermore, training MVE requires the presence of non-paired instances. To this end, we randomly selected 1 non-paired instance for each song in the dataset. The margin hyper-parameter was set to 0.3, according to our preliminary experiments. Then, we trained MVE for 1280 epochs.

Experiment environment. The evaluations are performed on a CentOS 7.2 server, which is configured with two E5-2620v4 CPUs (2.1GHz), three GTX 1080 GPUs (11GB), and DDR4-2400 memory (128GB). Moreover, it contains CUDA 8.0, Conda3-4.3 (Python 3.5), TensorFlow 1.3.0, and Keras 2.0.5.

B. Performance under Different Numbers of Epochs

Fig. 3 shows the MRR1 results of Spotify-DCCA, PretrainCNN-DCCA, JointTrain-DCCA with MFCC, and JointTrain-DCCA with Mel-spectrum, under different numbers of epochs. In all methods, MRR1 increases with the number of epochs, but with different trends. It is clear that JointTrain-DCCA with MFCC performs similarly to JointTrain-DCCA with Mel-spectrum, and both converge much faster than the other two methods and achieve higher MRR1. Hereafter, we only use MFCC as the raw feature for JointTrain.

C. Impact of the Numbers of CCA Components

Here, we evaluate the impact of the number of CCA/MVE components, which affects the performance of both the baseline methods and the proposed methods. The number of CCA/MVE components is adjusted from 10 to 100. The MRR1 and recall results of Spotify-CCA are marked as N/A when the number of CCA components is greater than 65, the dimension of the Spotify feature.

The MRR1 results, with audio features as query to search lyrics, are shown in Table III. Clearly, with linear CCA, Spotify-CCA and PretrainCNN-CCA have poor performance, although the performance increases with the number of CCA components.
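The N/A entries for Spotify-CCA reflect a general property of linear CCA: it yields at most min(d_x, d_y) canonical components, so a 65-dimensional audio feature cannot supply more than 65. The sketch below shows one standard way to compute linear CCA (whitening followed by an SVD). It is an illustration under stated assumptions, not the implementation used in the experiments: the function name `linear_cca` and the ridge term `reg` are hypothetical choices made here for numerical stability.

```python
import numpy as np

def linear_cca(X, Y, k, reg=1e-4):
    """Return projections (W_x, W_y) and the top-k canonical correlations.

    X is (n, d_x) and Y is (n, d_y), with rows being paired samples.
    reg is a small ridge term (an assumption of this sketch) that keeps
    the covariance matrices invertible.
    """
    n, dx = X.shape
    dy = Y.shape[1]
    if k > min(dx, dy):
        raise ValueError("k cannot exceed the smaller feature dimension")

    # Center both views and estimate the (regularized) covariances.
    Xc = X - X.mean(axis=0)
    Yc = Y - Y.mean(axis=0)
    Sxx = Xc.T @ Xc / (n - 1) + reg * np.eye(dx)
    Syy = Yc.T @ Yc / (n - 1) + reg * np.eye(dy)
    Sxy = Xc.T @ Yc / (n - 1)

    def inv_sqrt(S):
        # Inverse matrix square root of a symmetric positive definite matrix.
        w, V = np.linalg.eigh(S)
        return V @ np.diag(w ** -0.5) @ V.T

    Sxx_is, Syy_is = inv_sqrt(Sxx), inv_sqrt(Syy)
    # Singular values of the whitened cross-covariance are the canonical correlations.
    T = Sxx_is @ Sxy @ Syy_is
    U, s, Vt = np.linalg.svd(T, full_matrices=False)
    W_x = Sxx_is @ U[:, :k]
    W_y = Syy_is @ Vt.T[:, :k]
    return W_x, W_y, s[:k]
```

Calling `linear_cca(audio_features, lyrics_features, k=30)` on two paired feature matrices would give the projections used to map each modality into the shared space; requesting more components than the smaller feature dimension raises an error, which corresponds to the N/A cells.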
In comparison, with DCCA, the MRR1 results are much improved in Spotify-DCCA and PretrainCNN-DCCA. The MRR1 performance increases with the number of CCA components, and approaches a constant value in PretrainCNN-DCCA. MRR1 decreases a little in Spotify-DCCA when the number of CCA components gets greater than 65, the dimension of the Spotify feature. Using MVE, the peak performance of Spotify-MVE and PretrainCNN-MVE lies between that of CCA and DCCA. With end-to-end training, the MRR1 performance is further improved in JointTrain-DCCA, and is almost insensitive to the number of CCA components. However, a further increase in the number of CCA components leads to SVD failure in CCA.

Table IV shows the MRR1 results achieved using lyrics as query to search audio in the database, which have a similar trend to those in Table III. Generally, when audio and lyrics are converted to the same semantic space, they share the same statistics, and can be retrieved mutually.

Table V and Table VI show the results of recall@1 and recall@5. Recall@N in these tables is only a little greater than MRR1 in Table III and Table IV, which indicates that for most queries, the relevant item either appears in the first place or does not appear in the top-N list at all. This implies that for some songs, lyrics and audio, even after being mapped to the same semantic space, are not similar enough.

Table VII and Table VIII show the MRR1 results per category, where the first item with the same mood category as the query is regarded as relevant. Compared with the instance-level retrieval, the MRR1 result per category is about 0.12 (12 percentage points) larger in all methods, but cannot be improved further by increasing the number of CCA/MVE components. Because there are 20 mood categories, and some mood categories have similar meanings, it is difficult to distinguish songs at the category level.

TABLE III
INSTANCE-LEVEL MRR1 WITH RESPECT TO DIFFERENT NUMBERS OF CCA/MVE COMPONENTS (USING AUDIO AS QUERY)

| #CCA/MVE | Spotify-CCA | PretrainCNN-CCA | Spotify-MVE | PretrainCNN-MVE | Spotify-DCCA | PretrainCNN-DCCA | JointTrain-DCCA |
|---|---|---|---|---|---|---|---|
| 10 | 0.023 | 0.022 | 0.121 | 0.166 | 0.125 | 0.189 | 0.247 |
| 20 | 0.029 | 0.040 | 0.134 | 0.187 | 0.168 | 0.225 | 0.254 |
| 30 | 0.034 | 0.054 | 0.095 | 0.158 | 0.183 | 0.236 | 0.256 |
| 40 | 0.039 | 0.069 | 0.084 | 0.115 | 0.183 | 0.239 | 0.256 |
| 50 | 0.039 | 0.078 | 0.067 | 0.107 | 0.178 | 0.237 | 0.256 |
| 60 | 0.040 | 0.085 | 0.065 | 0.094 | 0.177 | 0.240 | 0.257 |
| 70 | N/A | 0.090 | 0.061 | 0.085 | 0.174 | 0.239 | 0.256 |
| 80 | N/A | 0.094 | 0.056 | 0.080 | 0.171 | 0.237 | 0.257 |
| 90 | N/A | 0.098 | 0.054 | 0.063 | 0.164 | 0.238 | 0.257 |
| 100 | N/A | 0.099 | 0.043 | 0.072 | 0.154 | 0.237 | 0.257 |

TABLE IV
INSTANCE-LEVEL MRR1 WITH RESPECT TO DIFFERENT NUMBERS OF CCA/MVE COMPONENTS (USING LYRICS AS QUERY)

| #CCA/MVE | Spotify-CCA | PretrainCNN-CCA | Spotify-MVE | PretrainCNN-MVE | Spotify-DCCA | PretrainCNN-DCCA | JointTrain-DCCA |
|---|---|---|---|---|---|---|---|
| 10 | 0.022 | 0.022 | 0.114 | 0.157 | 0.124 | 0.190 | 0.248 |
| 20 | 0.029 | 0.038 | 0.119 | 0.179 | 0.168 | 0.225 | 0.254 |
| 30 | 0.034 | 0.053 | 0.083 | 0.147 | 0.184 | 0.236 | 0.256 |
| 40 | 0.038 | 0.065 | 0.067 | 0.100 | 0.183 | 0.240 | 0.254 |
| 50 | 0.041 | 0.076 | 0.056 | 0.097 | 0.180 | 0.236 | 0.256 |
| 60 | 0.041 | 0.083 | 0.053 | 0.082 | 0.176 | 0.241 | 0.257 |
| 70 | N/A | 0.089 | 0.049 | 0.074 | 0.174 | 0.240 | 0.256 |
| 80 | N/A | 0.094 | 0.048 | 0.068 | 0.170 | 0.237 | 0.257 |
| 90 | N/A | 0.099 | 0.044 | 0.053 | 0.163 | 0.239 | 0.256 |
| 100 | N/A | 0.102 | 0.035 | 0.062 | 0.152 | 0.237 | 0.256 |

TABLE V
INSTANCE-LEVEL RECALL@N WITH RESPECT TO DIFFERENT NUMBERS OF CCA COMPONENTS (USING AUDIO AS QUERY)

| #CCA | Spotify-CCA @1 | Spotify-DCCA @1 | PretrainCNN-CCA @1 | PretrainCNN-DCCA @1 | JointTrain-DCCA @1 | Spotify-CCA @5 | Spotify-DCCA @5 | PretrainCNN-CCA @5 | PretrainCNN-DCCA @5 | JointTrain-DCCA @5 |
|---|---|---|---|---|---|---|---|---|---|---|
| 10 | 0.006 | 0.094 | 0.007 | 0.160 | 0.233 | 0.025 | 0.150 | 0.025 | 0.217 | 0.257 |
| 20 | 0.010 | 0.138 | 0.020 | 0.204 | 0.243 | 0.034 | 0.193 | 0.047 | 0.243 | 0.262 |
| 30 | 0.014 | 0.155 | 0.031 | 0.217 | 0.245 | 0.043 | 0.205 | 0.068 | 0.252 | 0.263 |
| 40 | 0.019 | 0.155 | 0.045 | 0.221 | 0.245 | 0.047 | 0.205 | 0.085 | 0.255 | 0.262 |
| 50 | 0.020 | 0.150 | 0.053 | 0.220 | 0.246 | 0.049 | 0.200 | 0.095 | 0.250 | 0.262 |
| 60 | 0.020 | 0.151 | 0.060 | 0.222 | 0.246 | 0.051 | 0.197 | 0.102 | 0.254 | 0.263 |
| 70 | N/A | 0.147 | 0.065 | 0.222 | 0.246 | N/A | 0.197 | 0.107 | 0.253 | 0.263 |
| 80 | N/A | 0.144 | 0.068 | 0.220 | 0.246 | N/A | 0.191 | 0.112 | 0.250 | 0.264 |
| 90 | N/A | 0.137 | 0.071 | 0.220 | 0.247 | N/A | 0.186 | 0.120 | 0.253 | 0.263 |
| 100 | N/A | 0.129 | 0.073 | 0.220 | 0.246 | N/A | 0.175 | 0.121 | 0.251 | 0.263 |

TABLE VI
INSTANCE-LEVEL RECALL@N WITH RESPECT TO DIFFERENT NUMBERS OF CCA COMPONENTS (USING LYRICS AS QUERY)

| #CCA | Spotify-CCA @1 | Spotify-DCCA @1 | PretrainCNN-CCA @1 | PretrainCNN-DCCA @1 | JointTrain-DCCA @1 | Spotify-CCA @5 | Spotify-DCCA @5 | PretrainCNN-CCA @5 | PretrainCNN-DCCA @5 | JointTrain-DCCA @5 |
|---|---|---|---|---|---|---|---|---|---|---|
| 10 | 0.005 | 0.090 | 0.007 | 0.160 | 0.235 | 0.024 | 0.151 | 0.022 | 0.219 | 0.257 |
| 20 | 0.009 | 0.138 | 0.019 | 0.204 | 0.242 | 0.034 | 0.193 | 0.048 | 0.242 | 0.261 |
| 30 | 0.014 | 0.157 | 0.031 | 0.219 | 0.245 | 0.042 | 0.205 | 0.064 | 0.250 | 0.263 |
| 40 | 0.018 | 0.155 | 0.040 | 0.223 | 0.244 | 0.048 | 0.205 | 0.081 | 0.252 | 0.261 |
| 50 | 0.021 | 0.154 | 0.050 | 0.218 | 0.246 | 0.051 | 0.199 | 0.092 | 0.250 | 0.262 |
| 60 | 0.021 | 0.150 | 0.057 | 0.224 | 0.247 | 0.051 | 0.197 | 0.101 | 0.254 | 0.263 |
| 70 | N/A | 0.147 | 0.064 | 0.224 | 0.245 | N/A | 0.196 | 0.108 | 0.252 | 0.263 |
| 80 | N/A | 0.144 | 0.069 | 0.221 | 0.247 | N/A | 0.190 | 0.113 | 0.250 | 0.264 |
| 90 | N/A | 0.137 | 0.072 | 0.222 | 0.246 | N/A | 0.186 | 0.119 | 0.253 | 0.263 |
| 100 | N/A | 0.126 | 0.077 | 0.221 | 0.247 | N/A | 0.172 | 0.121 | 0.249 | 0.262 |

D. Impact of the Number of Training Samples

Here we investigate the impact of the number of training samples, by adjusting the percentage of samples for training from 20% to 80%. The percentage of samples for the retrieval test remains 20%, and the training samples are chosen in such a way that there is the same number of songs per mood category.

Fig. 4 and Fig. 5 show the MRR1 results in the instance-level retrieval. Spotify-CCA and PretrainCNN-CCA do not benefit from the increase in training samples. Spotify-MVE and PretrainCNN-MVE benefit a little.
In comparison, when DCCA is used, the increase in training samples enables the system to learn more diverse aspects of audio/lyrics features, and the MRR1 performance increases almost linearly. In the future, we will try to crawl more data for training a better model to improve the retrieval performance. The MRR1 result with lyrics as query to search audio, shown in Fig. 5, has a similar trend to that in Fig. 4.

Fig. 6 and Fig. 7 show the MRR1 results when the retrieval is performed at the category level. These have a similar trend to the results of instance-level retrieval.

TABLE VII
CATEGORY-LEVEL MRR1 WITH RESPECT TO DIFFERENT NUMBERS OF CCA/MVE COMPONENTS (USING AUDIO AS QUERY)

| #CCA/MVE | Spotify-CCA | PretrainCNN-CCA | Spotify-MVE | PretrainCNN-MVE | Spotify-DCCA | PretrainCNN-DCCA | JointTrain-DCCA |
|---|---|---|---|---|---|---|---|
| 10 | 0.177 | 0.172 | 0.249 | 0.286 | 0.260 | 0.313 | 0.364 |
| 20 | 0.180 | 0.187 | 0.265 | 0.313 | 0.296 | 0.344 | 0.367 |
| 30 | 0.182 | 0.199 | 0.230 | 0.284 | 0.307 | 0.349 | 0.372 |
| 40 | 0.187 | 0.212 | 0.222 | 0.246 | 0.307 | 0.356 | 0.368 |
| 50 | 0.189 | 0.218 | 0.211 | 0.237 | 0.304 | 0.358 | 0.370 |
| 60 | 0.188 | 0.225 | 0.206 | 0.230 | 0.302 | 0.355 | 0.373 |
| 70 | N/A | 0.230 | 0.203 | 0.221 | 0.298 | 0.358 | 0.370 |
| 80 | N/A | 0.234 | 0.196 | 0.215 | 0.294 | 0.352 | 0.370 |
| 90 | N/A | 0.235 | 0.192 | 0.203 | 0.294 | 0.356 | 0.370 |
| 100 | N/A | 0.233 | 0.188 | 0.208 | 0.282 | 0.354 | 0.374 |

TABLE VIII
CATEGORY-LEVEL MRR1 WITH RESPECT TO DIFFERENT NUMBERS OF CCA/MVE COMPONENTS (USING LYRICS AS QUERY)

| #CCA/MVE | Spotify-CCA | PretrainCNN-CCA | Spotify-MVE | PretrainCNN-MVE | Spotify-DCCA | PretrainCNN-DCCA | JointTrain-DCCA |
|---|---|---|---|---|---|---|---|
| 10 | 0.178 | 0.170 | 0.246 | 0.277 | 0.256 | 0.314 | 0.366 |
| 20 | 0.176 | 0.188 | 0.249 | 0.304 | 0.294 | 0.344 | 0.368 |
| 30 | 0.179 | 0.198 | 0.222 | 0.273 | 0.305 | 0.351 | 0.372 |
| 40 | 0.185 | 0.208 | 0.204 | 0.235 | 0.307 | 0.358 | 0.365 |
| 50 | 0.191 | 0.220 | 0.199 | 0.228 | 0.306 | 0.355 | 0.373 |
| 60 | 0.190 | 0.223 | 0.195 | 0.221 | 0.302 | 0.356 | 0.374 |
| 70 | N/A | 0.231 | 0.190 | 0.208 | 0.298 | 0.360 | 0.371 |
| 80 | N/A | 0.236 | 0.191 | 0.205 | 0.290 | 0.354 | 0.370 |
| 90 | N/A | 0.237 | 0.186 | 0.194 | 0.288 | 0.356 | 0.369 |
| 100 | N/A | 0.238 | 0.180 | 0.203 | 0.280 | 0.355 | 0.375 |

[Fig. 4. Instance-level MRR1 under different percentages of training samples (using audio as query to search text lyrics, #CCA-component=30, 20% for testing). Curves: Spotify-CCA, PretrainCNN-CCA, Spotify-MVE, PretrainCNN-MVE, Spotify-DCCA, PretrainCNN-DCCA, JointTrain-DCCA. x-axis: percentage of samples for training; y-axis: MRR1.]

[Fig. 5. Instance-level MRR1 under different percentages of training samples (using text lyrics as query to search audio signal, #CCA-component=30, 20% for testing). Same curves and axes as Fig. 4.]

VII. CONCLUSION

Understanding the correlation between different music modalities is very useful for content-based cross-modal music retrieval and recommendation. Audio and lyrics are the most interesting aspects for conveying the theme and events of music through storytelling. In this paper, deep correlation learning between audio and lyrics is proposed to understand music audio and lyrics. This is the first research on deep cross-modal correlation learning between audio and lyrics. Our main efforts are as follows: i) Deep models for processing the audio branch are investigated, such as a pre-trained CNN with or without being followed by fully-connected layers. ii) An end-to-end convolutional DCCA is further proposed to learn the correlation between audio and lyrics, where feature extraction and correlation learning are performed simultaneously and the joint representation is learned by considering temporal structures. iii) Extensive evaluations show the effectiveness of the proposed deep correlation learning architecture, where convolutional DCCA performs best when considering retrieval accuracy and converging time.
More importantly, we apply our architecture to the bidirectional retrieval between audio and lyrics, e.g., searching lyrics with audio and vice versa. Cross-modal retrieval performance is reported at the instance level and the mood category level.

This work mainly pays attention to studying deep models for processing music audio while keeping pre-trained Doc2vec for processing lyrics in correlation learning. We are collecting more audio-lyrics pairs to further improve the retrieval performance, and will integrate different music modality data to implement personalized music recommendation. In future work, we will investigate deep models for processing the lyrics branch. Lyrics have a hierarchical composition, such as verse, chorus, and bridge. We will extend our deep architecture to complement musical composition (given music audio), where Long Short-Term Memory (LSTM) will be applied for learning lyrics dependencies.

[Fig. 6. Category-level MRR1 under different percentages of training samples (using audio signal as query to search text lyrics, #CCA-component=30, 20% for testing). Curves: Spotify-CCA, PretrainCNN-CCA, Spotify-MVE, PretrainCNN-MVE, Spotify-DCCA, PretrainCNN-DCCA, JointTrain-DCCA. x-axis: percentage of samples for training; y-axis: MRR1.]

[Fig. 7. Category-level MRR1 under different percentages of training samples (using text lyrics as query to search audio signal, #CCA-component=30, 20% for testing). Same curves and axes as Fig. 6.]

REFERENCES

[1] B. Nettl, "An ethnomusicologist contemplates universals in musical sound and musical culture," in N. Wallin, B. Merker, and S. Brown, editors, The Origins of Music, MIT Press, Cambridge, MA, pp. 463–472, 2000.
[2] Y. Yu, M. Crucianu, V. Oria, and E. Damiani, "Combining multi-probe histogram and order-statistics based lsh for scalable audio content retrieval," in Proceedings of the 18th ACM International Conference on Multimedia, ser. MM '10, 2010, pp. 381–390.
[3] Y. Yu, R. Zimmermann, Y. Wang, and V. Oria, "Scalable content-based music retrieval using chord progression histogram and tree-structure lsh," IEEE Transactions on Multimedia, vol. 15, no. 8, pp. 1969–1981, 2013.
[4] Y. Yu, M. Crucianu, V. Oria, and L. Chen, "Local summarization and multi-level lsh for retrieving multi-variant audio tracks," in Proceedings of the 17th ACM International Conference on Multimedia, ser. MM '09, 2009, pp. 341–350.
[5] M. McVicar, T. Freeman, and T. De Bie, "Mining the correlation between lyrical and audio features and the emergence of mood," in 12th International Society for Music Information Retrieval Conference, Proceedings, ser. ISMIR '11, 2011, pp. 783–788.
[6] R. Mihalcea and C. Strapparava, "Lyrics, music, and emotions," in Proceedings of the 2012 Joint Conference on Empirical Methods in Natural Language Processing and Computational Natural Language Learning, ser. EMNLP-CoNLL '12, 2012, pp. 590–599.
[7] R. Malheiro, R. Panda, P. Gomes, and R. P. Paiva, "Emotionally-relevant features for classification and regression of music lyrics," IEEE Transactions on Affective Computing, vol. PP, no. 99, pp. 1–1, 2016.
[8] A. Tsaptsinos, "Lyrics-based music genre classification using a hierarchical attention network," CoRR, vol. abs/1707.04678, 2017.
[9] Y. Yu, Z. Shen, and R. Zimmermann, "Automatic music soundtrack generation for outdoor videos from contextual sensor information," in Proceedings of the 20th ACM International Conference on Multimedia, ser. MM '12, 2012, pp. 1377–1378.
[10] E. Acar, F. Hopfgartner, and S. Albayrak, "Understanding affective content of music videos through learned representations," in Proceedings of the 20th Anniversary International Conference on MultiMedia Modeling - Volume 8325, ser. MMM 2014, 2014, pp. 303–314.
[11] R. Mayer, "Analysing the similarity of album art with self-organising maps," in Advances in Self-Organizing Maps - 8th International Workshop, WSOM 2011, Espoo, Finland, June 13-15, 2011. Proceedings, 2011, pp. 357–366.
[12] E. Brochu, N. de Freitas, and K. Bao, "The sound of an album cover: Probabilistic multimedia and information retrieval," in Artificial Intelligence and Statistics (AISTATS), 2003.
[13] R. R. Shah, Y. Yu, and R. Zimmermann, "Advisor: Personalized video soundtrack recommendation by late fusion with heuristic rankings," in Proceedings of the 22nd ACM International Conference on Multimedia, ser. MM '14, 2014, pp. 607–616.
[14] O. Gillet, S. Essid, and G. Richard, "On the correlation of automatic audio and visual segmentations of music videos," IEEE Transactions on Circuits and Systems for Video Technology, vol. 17, no. 3, 2007.
[15] L. Nanni, Y. M. Costa, A. Lumini, M. Y. Kim, and S. R. Baek, "Combining visual and acoustic features for music genre classification," Expert Syst. Appl., vol. 45, no. C, pp. 108–117, 2016.
[16] X. Wu, Y. Qiao, X. Wang, and X. Tang, "Bridging music and image via cross-modal ranking analysis," IEEE Transactions on Multimedia, vol. 18, no. 7, pp. 1305–1318, 2016.
[17] Q. Jiang and W. Li, "Deep cross-modal hashing," CoRR, vol. abs/1602.02255, 2016.
[18] Y. Cao, M. Long, J. Wang, and S. Liu, "Collective deep quantization for efficient cross-modal retrieval," in Proceedings of the Thirty-First AAAI Conference on Artificial Intelligence, February 4-9, 2017, San Francisco, California, USA, 2017, pp. 3974–3980.
[19] Y. Yu, S. Tang, K. Aizawa, and A. Aizawa, "Venuenet: Fine-grained venue discovery by deep correlation learning," in Proceedings of the 19th IEEE International Symposium on Multimedia, ser. ISM '17, 2017, pp. –.
[20] C. Zhong, Y. Yu, S. Tang, S. Satoh, and K. Xing, Deep Multi-label Hashing for Large-Scale Visual Search Based on Semantic Graph. Springer International Publishing, 2017, pp. 169–184.
[21] Y. Huang, W. Wang, and L. Wang, "Instance-aware image and sentence matching with selective multimodal LSTM," CoRR, vol. abs/1611.05588, 2016.
[22] Y. Yu, H. Ko, J. Choi, and G. Kim, "Video captioning and retrieval models with semantic attention," CoRR, vol. abs/1610.02947, 2016.
[23] F. Yan and K. Mikolajczyk, "Deep correlation for matching images and text," in IEEE Conference on Computer Vision and Pattern Recognition, CVPR 2015, 2015, pp. 3441–3450.
[24] K. Simonyan and A. Zisserman, "Very deep convolutional networks for large-scale image recognition," CoRR, vol. abs/1409.1556, 2014.
[25] J. H. Lau and T. Baldwin, "An empirical evaluation of doc2vec with practical insights into document embedding generation," CoRR, vol. abs/1607.05368, 2016.
[26] T. Lin, M. Maire, S. J. Belongie, L. D. Bourdev, R. B. Girshick, J. Hays, P. Perona, D. Ramanan, P. Dollár, and C. L. Zitnick, "Microsoft COCO: common objects in context," CoRR, vol. abs/1405.0312, 2014.
[27] N. Rasiwasia, J. Costa Pereira, E. Coviello, G. Doyle, G. R. Lanckriet, R. Levy, and N. Vasconcelos, "A new approach to cross-modal multimedia retrieval," in ACM MM '10, 2010, pp. 251–260.
[28] K. Simonyan and A. Zisserman, "Very deep convolutional networks for large-scale image recognition," CoRR, vol. abs/1409.1556, 2014.
[29] A. Krizhevsky, I. Sutskever, and G. E. Hinton, "Imagenet classification with deep convolutional neural networks," Commun. ACM, vol. 60, no. 6, pp. 84–90, 2017.
[30] Y. M. Costa, L. S. Oliveira, and C. N. Silla, "An evaluation of convolutional neural networks for music classification using spectrograms," Appl. Soft Comput., vol. 52, no. C, pp. 28–38, 2017.
[31] S. Hershey, S. Chaudhuri, D. P. W. Ellis, J. F. Gemmeke, A. Jansen, R. C. Moore, M. Plakal, D. Platt, R. A. Saurous, B. Seybold, M. Slaney, R. J. Weiss, and K. Wilson, "Cnn architectures for large-scale audio classification," in 2017 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), 2017, pp. 131–135.
[32] K. Choi, G. Fazekas, and M. B. Sandler, "Automatic tagging using deep convolutional neural networks," CoRR, vol. abs/1606.00298, 2016.
[33] H. Hotelling, "Relations between two sets of variates," Biometrika, vol. 28, no. 3/4, pp. 321–377, 1936.
[34] G. Andrew, R. Arora, J. Bilmes, and K. Livescu, "Deep canonical correlation analysis," in Proceedings of the 30th International Conference on International Conference on Machine Learning - Volume 28, ser. ICML '13, 2013, pp. III–1247–III–1255.
[35] S. Sigtia and S. Dixon, "Improved music feature learning with deep neural networks," in 2014 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), 2014, pp. 6959–6963.
[36] P. Hamel, M. E. P. Davies, K. Yoshii, and M. Goto, "Transfer learning in mir: Sharing learned latent representations for music audio classification and similarity," in ISMIR, 2013.
[37] C. D. Manning, M. Surdeanu, J. Bauer, J. R. Finkel, S. Bethard, and D. McClosky, "The stanford corenlp natural language processing toolkit," in Proceedings of the 52nd Annual Meeting of the Association for Computational Linguistics, ACL 2014, 2014, pp. 55–60.
[38] W. He, W. Wang, and K. Livescu, "Multi-view recurrent neural acoustic word embeddings," CoRR, vol. abs/1611.04496, 2016. [Online]. Available: http://arxiv.org/abs/1611.04496
