Realistic multi-microphone data simulation for distant speech recognition

The availability of realistic simulated corpora is of key importance for the future progress of distant speech recognition technology. The reliability, flexibility and low computational cost of a data simulation process may ultimately allow researche…

Authors: Mirco Ravanelli, Piergiorgio Svaizer, Maurizio Omologo

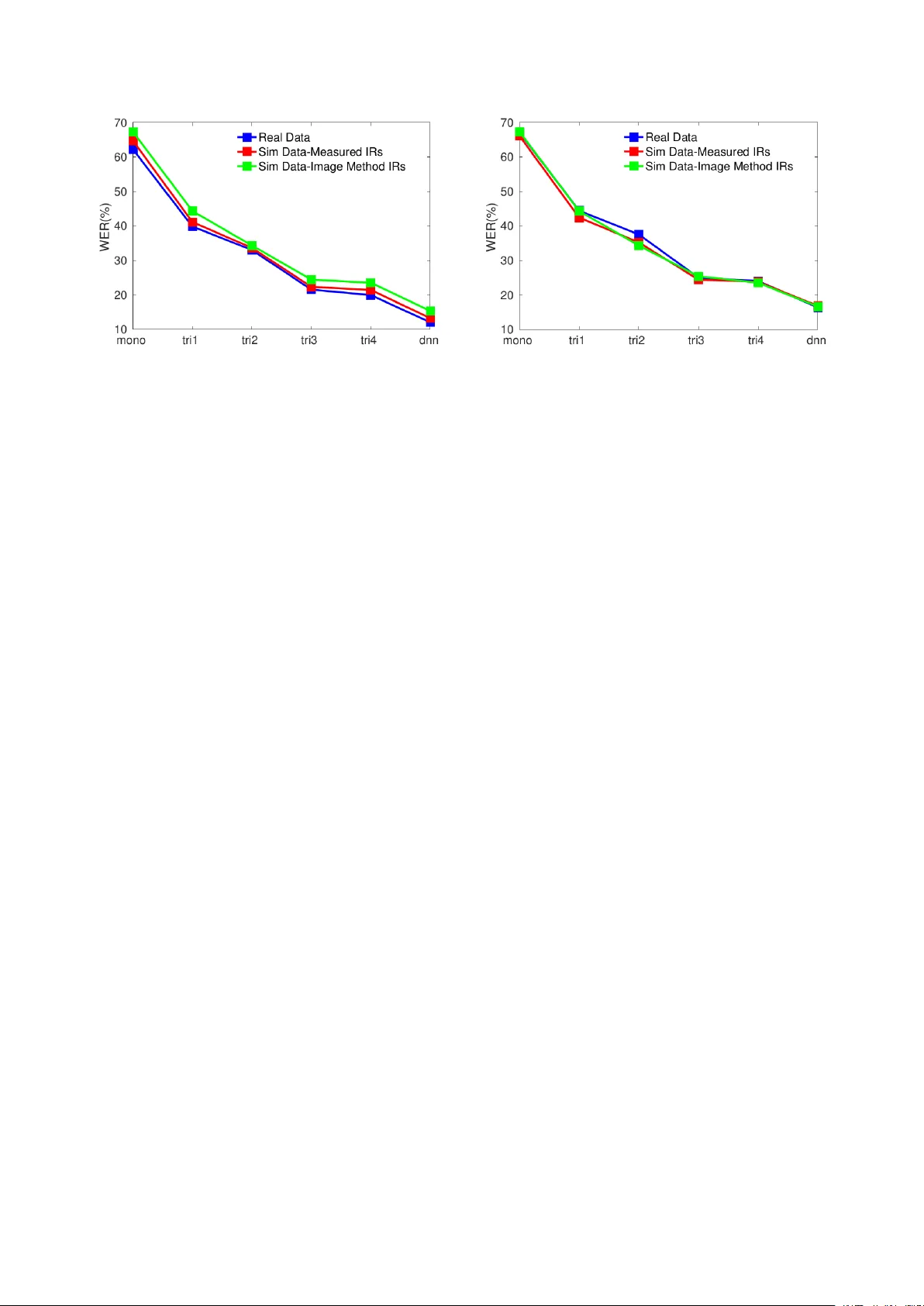

Realistic multi-micr ophone data simulation f or distant speech r ecognition Mir co Ravanelli, Pier gior gio Svaizer , Maurizio Omologo Fondazione Bruno K essler (FBK), T rento, IT AL Y mravanelli@fbk.eu, svaizer@fbk.eu, omologo@fbk.eu Abstract The a vailability of realistic simulated corpora is of key impor- tance for the future progress of distant speech recognition tech- nology . The reliability , flexibility and low computational cost of a data simulation process may ultimately allow researchers to train, tune and test different techniques in a v ariety of acous- tic scenarios, av oiding the laborious ef fort of directly recording real data from the targeted en vironment. In the last decade, sev eral simulated corpora hav e been re- leased to the research community , including the data-sets dis- tributed in the context of projects and international challenges, such as CHiME and REVERB. These efforts were extremely useful to derive baselines and common ev aluation frameworks for comparison purposes. At the same time, in many cases they highlighted the need of a better coherence between real and sim- ulated conditions. In this paper, we examine this issue and we describe our approach to the generation of realistic corpora in a domes- tic context. Experimental v alidation, conducted in a multi- microphone scenario, shows that a comparable performance trend can be observed with both real and simulated data across different recognition frameworks, acoustic models, as well as multi-microphone processing techniques. Index T erms : distant speech recognition, simulated data, real data, multi-microphone speech corpora. 1. Introduction Distant Speech Recognition (DSR) represents a fundamental technology tow ards natural human-machine interfaces. Despite the recent substantial progress in various related fields, includ- ing spatial filtering [1, 2], microphone selection [3], source separation [4], speech dereverberation [5], speaker localization [6], acoustic event detection [7] as well as acoustic modeling [8, 9, 10, 11, 12], DSR still exhibits a lack of robustness, es- pecially when adv erse acoustic conditions originated by non- stationary noises and acoustic rev erberation are met [13]. The av ailability of realistic simulated corpora and, more im- portantly , the definition of common methodologies, algorithms and good practices to generate simulated data plays a crucial role for fostering future research in this field and will ev entu- ally help researchers to better migrate laboratory results into real application scenarios. Approaches as contaminated speech training [14, 15, 16], multi-style training [17, 18, 19] and data augmentation [20, 21, 22, 23] hav e, in fact, been shown very effecti ve in improving the DSR system performance. During the last decade, some simulated corpora have been made av ailable to the research community under projects or in- ternational challenges. V aluable examples are the corpora re- leased under the ChiME [24, 25] and REVERB [26] challenges, which have contributed to define common tasks, baselines and ev aluation framew orks across researchers. Other simulated cor- pora hav e been released under the CHIL project [27] and, more recently , under the EU DIRHA project [28, 29, 30, 31, 32]. These ef forts were extremely important to stimulate research in the DSR field, but in several cases they also pointed out the need of a better coherence between real and simulated data performance. In [25], for instance, the authors state that “ The [CHiME3] c hallenge has drawn attention to the value of simu- lated training data, but highlighted the need for better simula- tion algorithm. It has also demonstrated that caution is needed when interpreting results of challenges that use simulated data evaluation. ”. W e fully agree with this statement, as our past experience confirms that prudence is needed when using simu- lated data. This caution is often to be attributed to very subtle differences that may characterize the process of simulation as, for instance, the accuracy and the realism of impulse responses. The main purpose of this paper is to inv estigate on the le vel of agreement in performance trend, that can be obtained with real and simulated signals. A major focus of our work is on rev erberation, rather than background noise. Simulations are based on the contamination method described in [15]. Each impulse response (IR) is measured according to the procedure described in [33], while simulated IRs are deri ved by a modified version of the image method [34], which was experimented in our past works. This modified version dif fers from the original [34] just for simulating also the directivity pattern of the source, besides sound propagation effects. The experiments, conducted in a new multi-microphone domestic scenario that was devel- oped under DIRHA, demonstrate a good lev el of agreement in performance, evident with all the inv estigated acoustic models and processing. W e also sho w the improv ement that can be obtained when measured IRs, instead of image-method based ones, are used to train acoustic models. The paper is organized as follows: Sec. 2 outlines the data simulation approach; Sec. 3 describes the adopted experimental setup, while Sec. 4 reports on the experimental validation of the methodology . Finally , Sec. 5 will draw some conclusions and provide an outlook on future work. 2. Data Simulation In this work, the data simulation process is achie ved according to the following equation 1 : y ( t ) = x ( t ) ∗ h ( t ) + n ( t ) (1) where y ( t ) is the simulated distant-talking signal, x ( t ) is the close-talking speech, h ( t ) is the impulse response of the acous- tic en vironment for a giv en source and microphone position, ∗ 1 https://github.com/mravanelli/pySpeechRev is the con v olution operator , and n ( t ) is an additive background noise. Sev eral important aspects must be considered for an ef- fectiv e simulation, as discussed in the follo wing. 2.1. Close-T alking Recordings Our experience in data simulation suggests that the availabil- ity of a high-quality close-talking data set is crucial for gen- erating realistic distant-talking simulated data. Particular atten- tion should be directed to ensuring dry and noiseless recordings, since the possible presence of noise sources, saturation, rev er- beration ef fects due to the room acoustic as well as distance be- tween speak er and microphone can produce artifacts in the later simulation process. The quality and the characteristics of the microphone can also influence the realism of the simulations. In the context of the DIRHA project, high quality close- talking speech signals hav e been acquired under extremely quiet conditions (with a SNR of at least 50-60 dB for each sentence), in an acoustically treated recording room, using a high-quality microphone (Neumann TLM 103) and a professional audio card (RME Octamic II). 2.2. Impulse Response The impulse response is the most representative feature char- acterizing an acoustic space. In the assumption of linear time- in v ariant reverberant rooms, IRs provide a complete description of the changes a sound signal undergoes when trav eling from one point in space to another [35]. The impulse response can be either measured in the targeted en vironment or geometrically inferred by simulations. Sev eral techniques have been proposed in the last decade for measuring the IR of an acoustic enclosure, including solu- tions based on Maximum Length Sequence (MLS) [36], Linear Chirps [37], or Exponential Sine Sweeps (ESS) [37]. In [33], a comparison between these different methods has been proposed for distant speech recognition purposes, showing that ESS out- performs the other methods, especially when long (1 minute) and high dynamic excitation signals can be emitted in the acous- tic environment. This result is due to a better management of the harmonic distortions introduced by the loudspeaker as well as to a more fav orable SNR. That study also rev ealed that using a professional loudspeaker for exciting the acoustic en vironment (e.g., a professional Genelec 8030) leads to a much more real- istic impulse response measurement, if compared to what ob- tained with a cheaper loudspeaker . Follo wing these guidelines, an IR measurement campaign has been conducted in the context of the DIRHA project to acoustically characterize a real apart- ment equipped with a network of microphones. As discussed in [28], about 9000 IRs were estimated. Synthetic room impulse responses can be generated by the well-known Image-source Method (IM) [34], based on a geo- metric model accounting for room size, source and microphone positions, and ideal propagation/reflection paths within the en- closure. The baseline method only considers attenuation and (approximated) time instants of arriv al of reflections, which re- sults in quite unrealistic IRs. Sev eral improvements have been proposed in order to achiev e IRs with characteristics that bet- ter match with those measured in real en vironments [38, 39]. In this work, for instance, a modified version of the standard algorithm allo wing us to simulate directive sources is consid- ered. This version has sho wn to be ef fecti ve to generate IRs better reflecting real-world conditions. Howev er , such simpli- fied propagation models, assuming an empty shoebox geometry , cannot reproduce the complex patterns of sound propagation in 453 cm 485 cm L1C L4L L2R LA6 L3L Ceiling Array Harmonic Arrays Kitchen Living-room Figure 1: An outline of two rooms of the DIRHA apartment considered for this study . Small blue dots represent digital MEMS microphones, red ones refer to the channels considered for the following experimental acti vity , while black ones repre- sent the other av ailable microphones. The right pictures show the ceiling array and the two linear harmonic arrays installed in the living-room. real rooms, as will be shown in Sec.4.5. 3. Experimental Setup This section describes the microphone setup, the task, the cor- pora as well as the speech recognition frame work considered in this work. 3.1. Multi-microphone Setup The apartment used in the DIRHA project is equipped with high-quality omnidirectional microphones (Shure MX391/O), connected to multichannel clocked pre-amp and A/D boards (RME Octamic II), which provide a synchronous sampling at 48 kHz, with 24 bit resolution. The living-room and the kitchen comprise the largest concentration of sensors and de vices. As shown in Fig. 1, the living-room includes three microphone pairs, a microphone triplet, two 6-microphone ceiling arrays (one consisting of MEMS digital microphones), two harmonic arrays (consisting of 15 electret microphones and 15 MEMS digital microphones, respectiv ely). The experiments in this work refer to the use of the fi ve microphones depicted in red in Fig.1. The reverberation time T 60 of the considered room is about 0.75 seconds, which indicates that the acoustic character - istics are quite challenging for DSR studies. 3.2. T ask and corpora The task considered in this work is the W all Street Journal (WSJ-5k), in agreement with the task addressed in the CHiME 3 challenge. While CHiME 3 was pretty focused on robust- ness against noise, in this work the main source of disturbance is rev erberation. For testing purposes we employed both real X X X X X X X X X A.M. Data T ype Single Distant Microphone Delay-and-Sum Beamforming Oracle Microphone Selection Real Data Sim Data Real Data Sim Data Real Data Sim Data Mono 62.2 64.7 56.8 58.8 49.6 51.9 T ri1 39.8 41.1 33.9 34.9 28.0 29.2 T ri2 33.0 33.6 28.4 29.1 22.6 23.2 T ri3 21.5 22.3 18.0 19.1 13.6 14.9 T ri4 19.9 21.4 17.5 17.4 12.6 13.8 DNN 12.0 13.2 10.7 11.6 7.2 7.6 T able 1: WER(%) obtained in a distant-talking scenario with real and simulated data across different acoustic models and microphone processing. and simulated data, which are deriv ed from recordings in the DIRHA apartment. Real data were collected from four nativ e US English speakers (two females and tw o males) uttering a to- tal of 272 WSJ sentences in different positions of the apartment. In particular , each subject was positioned in the living-room and read the material from a tablet, standing still or sitting on a chair, in a given position. After a set of 10-12 sentences, she/he was asked to move to a dif ferent position and take a different ori- entation. In order to allow a fair comparison between real and simulated data, we asked the same speakers to utter the same sentences in our recording studio, using the acquisition set-up described in Sec.2.1. Moreover , for each position/orientation of the speaker in the real recording, a corresponding IR was mea- sured, allowing us to deriv e a simulated corpus well-matching with the speaker positions used for the real data. The train- ing phase is based on the WSJ0 database (LDC catalog num- ber LDC93S6A), which was contaminated with an impulse re- sponse measured in a position different from those used for test- ing purposes. 3.3. ASR framework The experimental part of this work is based on the Kaldi toolkit [40]. The recipe considered for training and testing the DSR system is similar to the s5 recipe proposed in the Kaldi re- lease for WSJ data. In short, the speech recognizer is based on standard MFCCs and acoustic models of increasing com- plexity . “ Mono ” is the simplest system based on 48 context- independent phones of the English language, each modeled by a three state left-to-right HMM (overall using 1000 gaussians). A set of context-dependent models are then derived. In “ tri1 ” 2.5k tied states with 15k gaussians are trained by exploiting a binary regression tree.“ T ri2 ”is an e v olution of the standard context-dependent model in which a Linear Discriminant Anal- ysis (LD A) is applied. In both ‘ ‘tri3 ” and “ tri4 ” models Speak er Adaptiv e T raining (SA T) is also performed. The difference is that “ tri4 ” is bootstrapped by the pre viously computed ‘ ‘tri3 ” model. The considered “ DNN ”, based on the Karel’ s recipe, is composed of 6 hidden layers of 2048 neurons, with a context window of 19 consecutiv e frames (9 before and 9 after the cur- rent frame) and an initial learning rate of 0.008. The weights are initialized via RBM pre-training, while the fine tuning is performed with stochastic gradient descent optimizing cross- entropy loss function. 4. Results This section provides some speech recognition results, with the purpose of v alidating the proposed data simulation approach. In the following sub-section, a close-talking baseline is provided, while in subsections 4.2, 4.3 and 4.4 distant-talking experiments mono tri1 tri2 tri3 tri4 dnn WER(%) 0 10 20 30 40 50 60 70 Single-Mic-Real Single-Mic-Sim Beam-Real Beam-Sim Oracle-Real Oracle-Sim Figure 2: Graphical representation of the performance trends reported in T able 1. with single microphone, beamforming on the ceiling array , and oracle microphone selection are respectiv ely presented. 4.1. Close-talking baseline The W ord Error Rate (WER%) obtained by decoding the close- talking WSJ sentences recorded in the recording studio is 3 . 7% (using DNN models trained with the original clean WSJ data set). It is worth nothing that, under such fav orable acoustic conditions, the DNN model leads to a very accurate sentence transcription. For reference purposes, the average WER with close-talking signals recorded in the DIRHA apartment is about 5% . 4.2. Single distant-microphone perf ormance The results reported in the first column of T able 1 sho w the per - formance obtained when a single distant microphone (i.e., the “ LA6 ” ceiling microphone depicted in Fig. 1) is considered. Results clearly highlight that in the case of distant-speech input the ASR performance is dramatically reduced, if compared to a close-talking case. The use of robust DNN models trained with contaminated speech material leads, as expected, to a substan- tial improvement of the WER when compared to other GMM- based systems. The most interesting result, ho we ver , is that a similar performance trend is obtained for both real and simu- lated data over different acoustic models. This trend can also be appreciated by comparing the continuous (real data) and dashed (sim data) blue lines of Fig. 2. In particular , the av erage rela- tiv e WER distance between such data-sets computed over the considered acoustic models is about 6%. W e believe that this is a significant result, especially if one considers that part of this Figure 3: Comparison between real and simulated data with contaminated training performed with a measured IR. Figure 4: Comparison between real and simulated data with contaminated training performed with an image method IR. variability can be attributed, despite our best efforts for align- ing simulated and real data, to the f act that in the tw o recording sessions speakers inevitably uttered the same sentence in a dif- ferent way . 4.3. Delay-and-sum beamforming performance The simulation methodology described in Sec. 2, can be e x- tended in a very straightforward way to a multi-microphone scenario. It would be thus of crucial importance to ensure that the similar trend between real and simulated data achie ved with a single microphone is preserved e ven when multi-microphone processing is applied to the data. Here a standard delay-and- sum beamforming, based on source-microphone delays com- puted with the GCC-PHA T algorithm [41], is applied to the six microphones of the ceiling array of Fig. 1. T able 1 and Fig. 2 show that beamforming is helpful in improving the system performance. One can also note that, as hoped, a similar per- formance trend between the data-sets is reached when applying beamforming. For instance, in the case of real data coupled with DNN acoustic models, delay-and-sum beamforming leads to a relativ e improv ement of about 12% ov er the single microphone case, which is similar to the improvement of 13% obtained with the simulated data. 4.4. Oracle microphone selection perf ormance T o further confirm the result achiev ed in the previous sections, an oracle microphone selection is applied to both real and simu- lated data. An oracle microphone selection is performed by se- lecting, for each sentence uttered by the speaker , the best WER from the five signals acquired by the red microphones in Fig. 1. T able 1 and Fig. 2, confirm that the consistency between real and simulated data is largely preserved. The experimental results also show that an optimal microphone selection would be particularly helpful for improving the DSR performance. A proper channel selection has a great potential ev en when com- pared with a microphone combination based on delay-and-sum beamforming. For instance, in the case of real data with DNN acoustic models, a WER of 7.2 % is obtained with an oracle channel selection against a WER of 10.7% achie v ed with beam- forming. 4.5. Measured vs Geometric Modeling of IRs In this section we compare the simulations based on mea- sured IRs, so far considered, with simulations deri ved by im- age method-based IRs. For the latter case, the geometry of the targeted living room, the spatial coordinates of microphones and speakers, as well as the re verberation time T 60 of 0.75s are imposed to the IM algorithm. As outlined in Sec. 2.2, a cer- tain source spatial directivity similar to that exhibited by a real speaker , is considered. Fig. 3 and Fig. 4 sho w the perfor- mance observed using two different training strategies. In par- ticular , Fig. 3 reports the trend obtained when the training set is contaminated with an impulse response measured in the target en vironment, while Fig. 4 presents the results obtained when using an image method-based IR. Results confirm that, in both matching and mismatching conditions, simulated data obtained with measured IRs exhibit a trend very similar to that observed with real data. For instance, in the case of DNN, performance with Real, Sim-Measured IRs, and Image Method, are 12.0%, 13.2%, and 15.3%, respectiv ely . On the other hand, despite our best ef forts for increasing the realism of image method-based IRs, the performance with such simulation approach is still un- satisfactory . In particular , in the case of DNN the relativ e per- formance loss using image-method based IRs, instead of mea- sured IRs, for contaminated training is 36% (i.e., from 12% to 16.3%). 5. Conclusion In this paper we discussed our best practices to generate re- alistic multi-microphone data for training and testing distant- speech recognition systems. Our approach has been validated by comparing real data with simulated data obtained by con- volving close-talking dry speech sequences with impulse re- sponses measured in a domestic environment. The experimen- tal results show that a very similar performance trend can be obtained between real and simulated data over different ex- perimental conditions, in volving different acoustic models and multi-microphone processing techniques. This study also re- vealed that data simulation based on IRs measured in the tar- geted en vironment ensures much better results than those ob- tained with an IR simulator based on Image method. Howe ver , in the perspecti ve of a real application, measuring every time the IRs can be unpractical. The results reported in this paper are thus just a starting point towards a future w ork, which will study more in depth ho w the gap between measured and synthetic IRs can be reduced. An ideal solution would be to automatically analyze the recorded speech and to drive an unsupervised adap- tation of initial IRs possibly generated by simulation. 6. References [1] M. Brandstein and D. W ard, Micr ophone arrays . Springer , Berlin, 2000. [2] W . Kellermann, Beamforming for Speech and Audio Signals . in HandBook of Signal Processing in Acoustics, Springer , 2008. [3] M. W olf and C. Nadeu, “Channel selection measures for multi- microphone speech recognition, ” Speech Communication , v ol. 57, pp. 170–180, Feb . 2014. [4] S. Makino, T . Lee, and H. Sawada, Blind Speech Separation . Springer , 2010. [5] P . A. Naylor and N. D. Gaubitch, Speech Der everberation. Springer , 2010. [6] R. DeMori, Spoken Dialogues with Computers . London: Aca- demic Press, 1998, chapter 2. [7] A. T emko, C. Nadeu, D. Macho, R. Malkin, C. Zieger , and M. Omologo, “ Acoustic ev ent detection and classification, ” in Computers in the Human Interaction Loop . Springer London, 2009, pp. 61–73. [8] P . Swietojanski, A. Ghoshal, and S. Renals, “Hybrid acous- tic models for distant and multichannel large vocabulary speech recognition, ” in Pr oc. of ASR U 2013 , pp. 285–290. [9] Y . Liu, P . Zhang, and T . Hain, “Using neural network front-ends on far field multiple microphones based speech recognition, ” in Pr oc. of ICASSP 2014 , pp. 5542–5546. [10] F . W eninger , S. W atanabe, J. Le Roux, J. Hershey , Y . T achioka, J. Geiger , B. Schuller , and G. Rigoll, “The MERL/MELCO/TUM System for the REVERB Challenge Using Deep Recurrent Neu- ral Network Feature Enhancement, ” in IEEE REVERB W orkshop , 2014. [11] S. S. Masato Mimura and T . Kawahara, “Rev erberant speech recognition combining deep neural networks and deep autoen- coders, ” in IEEE REVERB W orkshop , 2014. [12] A. Schwarz, C. Huemmer, R. Maas, and W . Kellermann, “Spa- tial Diffuseness Features for DNN-Based Speech Recognition in Noisy and Rev erberant En vironments, ” in Pr oc. of ICASSP 2015 . [13] E. H ¨ ansler and G. Schmidt, Speech and Audio Pr ocessing in Ad- verse Envir onments . Springer , 2008. [14] M. Ravanelli and M. Omologo, “Contaminated speech training methods for robust DNN-HMM distant speech recognition, ” in Pr oc. of INTERSPEECH 2015 , pp. 756–760. [15] M. Matassoni, M. Omologo, D. Giuliani, and P . Svaizer , “Hid- den Markov model training with contaminated speech material for distant-talking speech recognition.” Computer Speec h & Lan- guage , v ol. 16, no. 2, pp. 205–223, 2002. [16] M. Rav anelli and M. Omologo, “On the selection of the impulse responses for distant-speech recognition based on contaminated speech training, ” in Pr oc. of INTERSPEECH 2014 , pp. 1028– 1032. [17] A. Sehr , C. Hofmann, R. Maas, and W . Kellermann, “Multi- style training of HMMS with stereo data for reverberation-rob ust speech recognition, ” in Pr oc. of HSCMA 2011 , pp. 196–200. [18] L. Couvreur , C. Couvreur, and C. Ris, “A corpus-based approach for robust ASR in reverberant en vironments.” in Proc. of INTER- SPEECH 2000 , pp. 397–400. [19] T . Haderlein, E. N ¨ oth, W . Herbordt, W . Kellermann, and H. Nie- mann, “Using Artificially Reverberated T raining Data in Distant- T alking ASR.” ser . Lecture Notes in Computer Science, v ol. 3658. Springer , 2005, pp. 226–233. [20] X. Cui, V . Goel, and B. Kingsbury , “Data augmentation for deep neural network acoustic modeling, ” in Proc. of ICASSP 2014 , pp. 5582–5586. [21] T . Y oshioka, N. Ito, M. Delcroix, A. Ogawa, K. Kinoshita, M. Fu- jimoto, C. Y u, W . J. Fabian, M. Espi, T . Higuchi, S. Araki, and T . Nakatani, “The NTT CHiME-3 system: Advances in speech enhancement and recognition for mobile multi-microphone de- vices, ” in Pr oc. ASRU 2015 , pp. 436–443. [22] S. R. A. Ragni, K. Knill and M. Gales, “Data augmentation for low resource languages, ” in Pr oc. of INTERSPEECH 2014 , pp. 5582–5586. [23] T . Ko, V . Peddinti, D. Pove y , and S. Khudanpur, “ Audio augmen- tation for speech recognition, ” in Pr oc. of INTERSPEECH 2015 , pp. 3586–3589. [24] J. Barker , E. V incent, N. Ma, H. Christensen, and P . Green, “The P ASCAL CHiME speech separation and recognition challenge.” Computer Speech and Languag e , vol. 27, no. 3, pp. 621–633, 2013. [25] J. Barker, R. Marxer , E. V incent, and S. W atanabe, “The third CHiME Speech Separation and Recognition Challenge: Dataset, task and baselines, ” in Pr oc. of ASR U 2015 . [26] K. Kinoshita, M. Delcroix, T . Y oshioka, T . Nakatani, E. Habets, R. Haeb-Umbach, V . Leutnant, A. Sehr, W . K ellermann, R. Maas, S. Gannot, and B. Raj, “The reverb challenge: A Common Eval- uation Frame work for Derev erberation and Recognition of Rev er- berant Speech, ” in Pr oc. of W ASP AA 2013 , pp. 1–4. [27] D. Mostefa, N. Moreau, K. Choukri, G. Potamianos, S. Chu, A. T yagi, J. Casas, J. Turmo, L. Cristoforetti, F . T obia, A. Pnev- matikakis, V . Mylonakis, F . T alantzis, S. Burger , R. Stiefelhagen, K. Bernardin, and C. Rochet, “The CHIL Audiovisual Corpus for Lecture and Meeting Analysis inside Smart Rooms, ” Language r esour ces and evaluation , vol. 41, no. 3, pp. 389–407, 01/2008 2007. [28] L. Cristoforetti, M. Rav anelli, M. Omologo, A. Sosi, A. Abad, M. Hagmueller , and P . Maragos, “The DIRHA simulated corpus, ” in Pr oc. of LREC 2014 , pp. 2629–2634. [29] M. Matassoni, R. Astudillo, A. Katsamanis, and M. Ravanelli, “The DIRHA-GRID corpus: baseline and tools for multi-room distant speech recognition using distributed microphones, ” in Pr oc. of INTERSPEECH 2014 , pp. 1616–1617. [30] A. Brutti, M. Rav anelli, P . Svaizer , and M. Omologo, “ A speech ev ent detection/localization task for multiroom environments, ” in Pr oc. of HSCMA 2014 , pp. 157–161. [31] E. Zwyssig, M. Rav anelli, P . Svaizer , and M. Omologo, “ A multi-channel corpus for distant-speech interaction in presence of known interferences, ” in Proc. of ICASSP 2015 , pp. 4480–4484. [32] M. Ravanelli, L. Cristoforetti, R. Gretter, M. Pellin, A. Sosi, and M. Omologo, “The DIRHA-ENGLISH corpus and related tasks for distant-speech recognition in domestic environments, ” in Proc. of ASRU 2015 , pp. 275–282. [33] M. Ravanelli, A. Sosi, P . Svaizer , and M. Omologo, “Impulse re- sponse estimation for robust speech recognition in a reverberant en vironment, ” in Pr oc. of EUSIPCO 2012 . [34] J. Allen and D. Berkley , “Image method for efficiently simulat- ing smallroom acoustics, ” in J. Acoust. Soc. Am , 1979, pp. 2425– 2428. [35] H. Kuttruff, Room acoustic , 5th ed. Spon Press, 2009. [36] M. Schroeder , “Diffuse sound reflection by maximum-length se- quences, ” in J. Acoust. Soc. Am , vol. 57(1), 1975, pp. 149–150. [37] A. Farina, “Simultaneous measurement of impulse response and distortion with a swept-sine technique, ” in Pr oc. of the 108th AES Con vention , 2000, pp. 18–22. [38] P . Peterson, “Simulating the response of multiple microphones to a single acoustic source in a reverberant room, ” in J. Acoust. Soc. Am , vol. 80(5), 1986, pp. 1527–1529. [39] E. Lehmann and A. Johansson, “Prediction of energy decay in room impulse responses simulated with an image-source model, ” in J. Acoust. Soc. Am , vol. 124(1), 2008, pp. 269–277. [40] D. Pove y at all, “The Kaldi Speech Recognition T oolkit, ” in Pr oc. of ASRU 2011 . [41] C. H. Knapp and G. C. Carter , “The generalized correlation method for estimation of time delay , ” vol. 24, no. 4, pp. 320–327, 1976.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment