Experiential, Distributional and Dependency-based Word Embeddings have Complementary Roles in Decoding Brain Activity

We evaluate 8 different word embedding models on their usefulness for predicting the neural activation patterns associated with concrete nouns. The models we consider include an experiential model, based on crowd-sourced association data, several popular neural and distributional models, and a model that reflects the syntactic context of words (based on dependency parses). Our goal is to assess the cognitive plausibility of these various embedding models, and understand how we can further improve our methods for interpreting brain imaging data. We show that neural word embedding models exhibit superior performance on the tasks we consider, beating experiential word representation model. The syntactically informed model gives the overall best performance when predicting brain activation patterns from word embeddings; whereas the GloVe distributional method gives the overall best performance when predicting in the reverse direction (words vectors from brain images). Interestingly, however, the error patterns of these different models are markedly different. This may support the idea that the brain uses different systems for processing different kinds of words. Moreover, we suggest that taking the relative strengths of different embedding models into account will lead to better models of the brain activity associated with words.

💡 Research Summary

The paper investigates how different word‑embedding representations correspond to neural activation patterns elicited by concrete nouns, using functional magnetic resonance imaging (fMRI) data. Eight embedding models are evaluated: (1) an experiential model derived from crowd‑sourced attribute ratings, (2) several neural network‑based distributional models (Word2Vec, FastText, GloVe, LexVec, and a standard Word2Vec skip‑gram), and (3) a syntactic, dependency‑based model (Levy & Goldberg, 2014). The authors adopt a bidirectional decoding framework. In the forward direction, embeddings are used as predictors in a ridge‑regression model to estimate voxel‑wise fMRI responses. In the reverse direction, the same regression architecture predicts embedding vectors from the measured brain activity. Five‑fold cross‑validation and permutation testing are employed to guard against over‑fitting and to assess statistical significance.

The dataset originates from Mitchell et al. (2008): nine participants viewed 60 concrete nouns, each presented six times, yielding 360 stimulus‑locked fMRI volumes. Each stimulus is represented by a 25‑dimensional experiential feature vector and by the various embedding vectors (all 300‑dimensional, L2‑normalized). The regression models use a regularization parameter λ = 0.01 and are evaluated with Pearson correlation (r) and mean‑squared error (MSE) between predicted and actual brain patterns (or vice‑versa).

Results show that neural‑network embeddings outperform the experiential model in both directions. The dependency‑based embedding achieves the highest forward‑prediction performance (average r ≈ 0.45, MSE ≈ 0.12), indicating that syntactic context captures aspects of meaning that align closely with the brain’s representation of concrete nouns. For the reverse mapping (brain → embedding), GloVe attains the best performance (r ≈ 0.42, MSE ≈ 0.15), suggesting that global co‑occurrence statistics are particularly recoverable from the measured neural signals. FastText, LexVec, and standard Word2Vec perform moderately well, while the experiential model lags behind (r ≈ 0.28 forward, r ≈ 0.30 reverse).

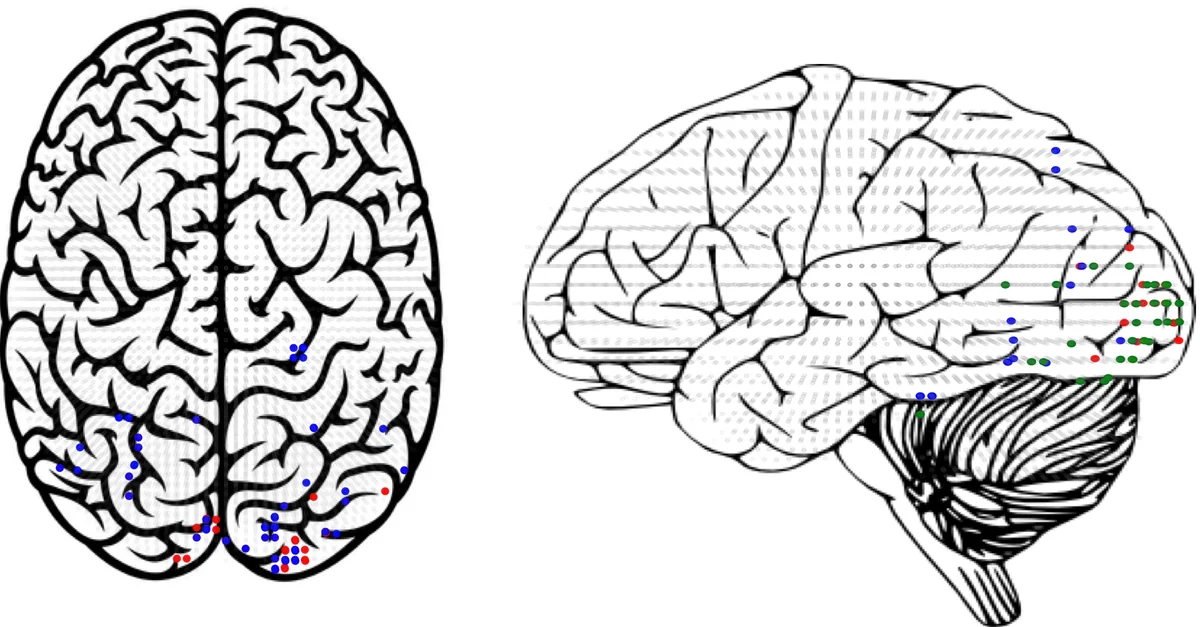

Error‑pattern analyses reveal systematic differences across models. The dependency‑based model shows lower errors in visual and motor cortices, whereas GloVe’s errors are smallest in prefrontal and temporal regions. The experiential model exhibits higher errors in sensory‑motor areas. These divergent spatial error profiles support the hypothesis that the brain utilizes multiple, partially independent systems for processing different semantic dimensions—syntactic, statistical, and sensorimotor.

The authors argue that integrating complementary strengths of the various embeddings could improve decoding accuracy. Simple ensemble methods (e.g., weighted averaging of model predictions) or multi‑task learning frameworks could exploit the distinct information each embedding captures. They acknowledge limitations: the study is restricted to nouns, the participant pool is modest, and fMRI’s temporal resolution is low. Future work should expand to verbs and adjectives, incorporate higher‑temporal‑resolution modalities such as MEG/EEG, and explore subject‑specific embedding fine‑tuning.

In conclusion, the study demonstrates that (i) syntactic, dependency‑based embeddings best predict brain activation from word vectors, (ii) count‑based GloVe embeddings best reconstruct word vectors from brain activity, and (iii) the distinct error topographies of these models provide neurobiological evidence for multiple, complementary semantic processing streams. Leveraging these complementary representations promises more accurate brain‑language models and advances our understanding of the neural basis of meaning.

Comments & Academic Discussion

Loading comments...

Leave a Comment