Robust massive MIMO Equalization for mmWave systems with low resolution ADCs

Leveraging the available millimeter wave spectrum will be important for 5G. In this work, we investigate the performance of digital beamforming with low resolution ADCs based on link-level simulations including channel estimation, MIMO equalization and channel decoding. We consider the recently agreed 3GPP NR type 1 OFDM reference signals. The comparison shows that sequential DCD outperforms MMSE-based MIMO equalization in terms of both detection performance and complexity. We also show that the DCD-based algorithm is more robust to channel estimation errors. Contrary to common belief, we also show that the complexity of MMSE equalization for a massive MIMO system is dominated not by the matrix inversion but by the computation of the Gram matrix.

💡 Research Summary

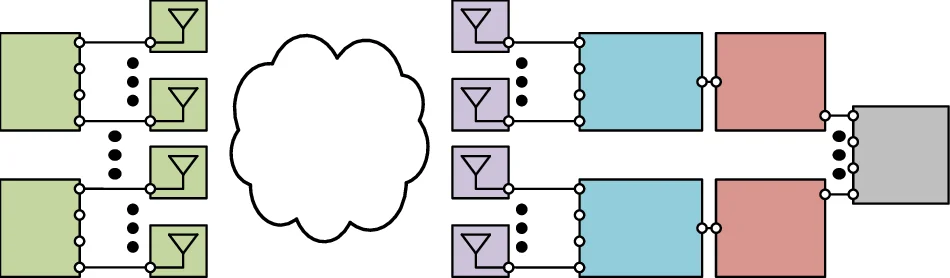

The paper investigates the feasibility of using low‑resolution analog‑to‑digital converters (ADCs) in massive multiple‑input multiple‑output (MIMO) systems operating at millimeter‑wave (mmWave) frequencies, a scenario that is highly relevant for 5G and upcoming 6G deployments. High‑resolution ADCs, while providing accurate digitisation of the wide mmWave bandwidth, consume a prohibitive amount of power, especially when hundreds of antenna elements are present. The authors therefore focus on a fully digital beam‑forming architecture that employs 2‑ to 4‑bit ADCs, arguing that this regime offers a better trade‑off between energy consumption and achievable performance than the extreme 1‑bit case often studied in the literature.

The system model follows the 3GPP New Radio (NR) specifications, in particular the Type‑1 demodulation reference signals (DMRS) that have been standardized for channel estimation. The DMRS are generated from a pseudo‑noise sequence and are orthogonalised across users by a combination of time‑domain cyclic shifts, frequency‑division multiplexing, and code‑division multiplexing. The authors provide the exact mathematical description of the reference‑signal generation (equations (1) and (2)) and illustrate how the cyclic shift of half an OFDM symbol translates into a sign‑alternating sequence in the frequency domain.
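The link between a half-symbol cyclic shift and a sign-alternating frequency-domain sequence follows from the DFT shift property. The sketch below checks it numerically on a generic QPSK sequence. The sequence, its length `n_sc` and the helper name `half_symbol_shift` are illustrative assumptions; the sketch does not reproduce the actual NR DMRS sequence generation or comb mapping.

```python
import numpy as np

def half_symbol_shift(freq_seq):
    """Cyclically shift the time-domain symbol by N/2 and return its spectrum."""
    n = len(freq_seq)
    if n % 2:
        raise ValueError("sequence length must be even")
    time_seq = np.fft.ifft(freq_seq)        # frequency domain -> time domain
    shifted = np.roll(time_seq, n // 2)     # cyclic shift by half a symbol
    return np.fft.fft(shifted)              # back to the frequency domain

# Hypothetical reference sequence: random QPSK on 16 sub-carriers.
rng = np.random.default_rng(0)
n_sc = 16
qpsk = (rng.choice([-1.0, 1.0], n_sc) + 1j * rng.choice([-1.0, 1.0], n_sc)) / np.sqrt(2)

# The shifted spectrum equals the original multiplied by +1, -1, +1, -1, ...
signs = (-1.0) ** np.arange(n_sc)
print(np.allclose(half_symbol_shift(qpsk), signs * qpsk))
```

A shift by N/2 samples multiplies sub-carrier k by exp(-jπk) = (-1)^k, which is why two users separated by this shift stay orthogonal over pairs of adjacent reference sub-carriers.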

Channel estimation is performed by first extracting the raw estimates at the DMRS positions (equation (4)) and then applying a two‑dimensional interpolation filter derived from a minimum‑mean‑square‑error (MMSE) criterion. The interpolation matrices for time (A_t) and frequency (A_f) are constructed separately to keep the computational load manageable. While the optimal MMSE filter would require knowledge of the full channel covariance, the authors adopt a model‑based approach using an exponential power delay profile, which yields closed‑form expressions for the cross‑correlation between sub‑carriers (equation (8)). To limit the size of the matrices, only a subset of K_C reference symbols is used, which introduces only a negligible performance loss because distant sub‑carriers are weakly correlated.
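A minimal sketch of a model-based frequency-domain MMSE (Wiener) interpolator is given below. It assumes the textbook frequency correlation of an exponential power delay profile, r(Δf) = 1 / (1 + j2π·τ_rms·Δf), with unit-power pilots and white noise; whether this matches the paper's equation (8) term by term is not confirmed here. All function names and parameter values (`scs_hz`, `tau_rms_s`, `noise_var`) are hypothetical.

```python
import numpy as np

def exp_pdp_correlation(delta_f_hz, tau_rms_s):
    """Frequency correlation of an exponential power delay profile (assumed model)."""
    return 1.0 / (1.0 + 2j * np.pi * tau_rms_s * delta_f_hz)

def mmse_freq_interpolator(pilot_idx, data_idx, scs_hz, tau_rms_s, noise_var):
    """Return A_f such that h_hat[data_idx] = A_f @ h_raw[pilot_idx]."""
    f_p = np.asarray(pilot_idx) * scs_hz    # pilot sub-carrier frequencies
    f_d = np.asarray(data_idx) * scs_hz     # sub-carriers to be estimated
    R_pp = exp_pdp_correlation(f_p[:, None] - f_p[None, :], tau_rms_s)
    R_dp = exp_pdp_correlation(f_d[:, None] - f_p[None, :], tau_rms_s)
    M = R_pp + noise_var * np.eye(len(f_p))
    # A_f = R_dp @ inv(M), computed with a linear solve instead of an explicit inverse.
    return np.linalg.solve(M.conj().T, R_dp.conj().T).conj().T

# Hypothetical setup: pilots on every second sub-carrier of two resource blocks.
pilot_idx = np.arange(0, 24, 2)
data_idx = np.arange(24)
A_f = mmse_freq_interpolator(pilot_idx, data_idx, scs_hz=120e3,
                             tau_rms_s=50e-9, noise_var=0.01)
print(A_f.shape)  # (24, 12)
```

Passing only the pilots nearest to the sub-carriers of interest as `pilot_idx` mimics the restriction to K_C reference symbols and keeps the matrix to be solved small.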

The core contribution of the paper lies in the comparison between two equalisation strategies: the conventional linear minimum‑mean‑square‑error (MMSE) equaliser and a sequential Dichotomous Coordinate Descent (DCD) algorithm. The MMSE solution requires solving x̂ = (HᴴH + σ²I)⁻¹Hᴴy, where H is the estimated channel matrix and σ² is the noise variance (for unit‑power transmit symbols). In massive MIMO the dominant computational burden is not the matrix inversion itself but the formation of the Gram matrix HᴴH: for M receive antennas and K spatial streams its cost grows as O(MK²), whereas inverting the resulting K×K matrix costs O(K³), and M is much larger than K. The authors quantify this by measuring the number of floating‑point operations (FLOPs) required for both steps and demonstrate that the Gram matrix accounts for roughly 70 % of the total MMSE cost.
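The two MMSE steps can be sketched as follows, using the regularised form x̂ = (HᴴH + σ²I)⁻¹Hᴴy with an assumed noise variance σ² and unit-power symbols. The operation counts are leading-order estimates for M receive antennas and K streams. They are not the paper's FLOP accounting and are not meant to reproduce its reported share. Function names and dimensions are illustrative assumptions.

```python
import numpy as np

def mmse_equalize(H, y, noise_var):
    """Linear MMSE estimate of x from y = H x + n (unit-power symbols assumed)."""
    K = H.shape[1]
    gram = H.conj().T @ H               # K x K Gram matrix, about M*K^2 multiplications
    y_mf = H.conj().T @ y               # matched-filter output, about M*K multiplications
    # Solve the K x K system, which costs on the order of K^3 operations.
    return np.linalg.solve(gram + noise_var * np.eye(K), y_mf)

def leading_order_mults(M, K):
    """Rough multiplication counts: (Gram matrix, K x K inversion)."""
    return M * K * K, K ** 3

# Hypothetical dimensions: 64 receive antennas, 8 single-antenna users.
rng = np.random.default_rng(1)
M, K = 64, 8
H = (rng.standard_normal((M, K)) + 1j * rng.standard_normal((M, K))) / np.sqrt(2)
x = (rng.choice([-1.0, 1.0], K) + 1j * rng.choice([-1.0, 1.0], K)) / np.sqrt(2)
noise_var = 0.01
noise = np.sqrt(noise_var / 2) * (rng.standard_normal(M) + 1j * rng.standard_normal(M))
x_hat = mmse_equalize(H, H @ x + noise, noise_var)

gram_cost, inv_cost = leading_order_mults(M, K)
print(gram_cost, inv_cost)  # 4096 512
```

Because the Gram cost grows with M while the inversion cost depends only on K, adding receive antennas shifts the balance further towards the Gram matrix.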

The DCD algorithm, originally proposed for CDMA multi‑user detection, is adapted to the massive MIMO context. Starting from the relaxed optimisation problem min_{x∈
