Efficient Use of Limited-Memory Accelerators for Linear Learning on Heterogeneous Systems

We propose a generic algorithmic building block to accelerate training of machine learning models on heterogeneous compute systems. Our scheme allows to efficiently employ compute accelerators such as GPUs and FPGAs for the training of large-scale machine learning models, when the training data exceeds their memory capacity. Also, it provides adaptivity to any system’s memory hierarchy in terms of size and processing speed. Our technique is built upon novel theoretical insights regarding primal-dual coordinate methods, and uses duality gap information to dynamically decide which part of the data should be made available for fast processing. To illustrate the power of our approach we demonstrate its performance for training of generalized linear models on a large-scale dataset exceeding the memory size of a modern GPU, showing an order-of-magnitude speedup over existing approaches.

💡 Research Summary

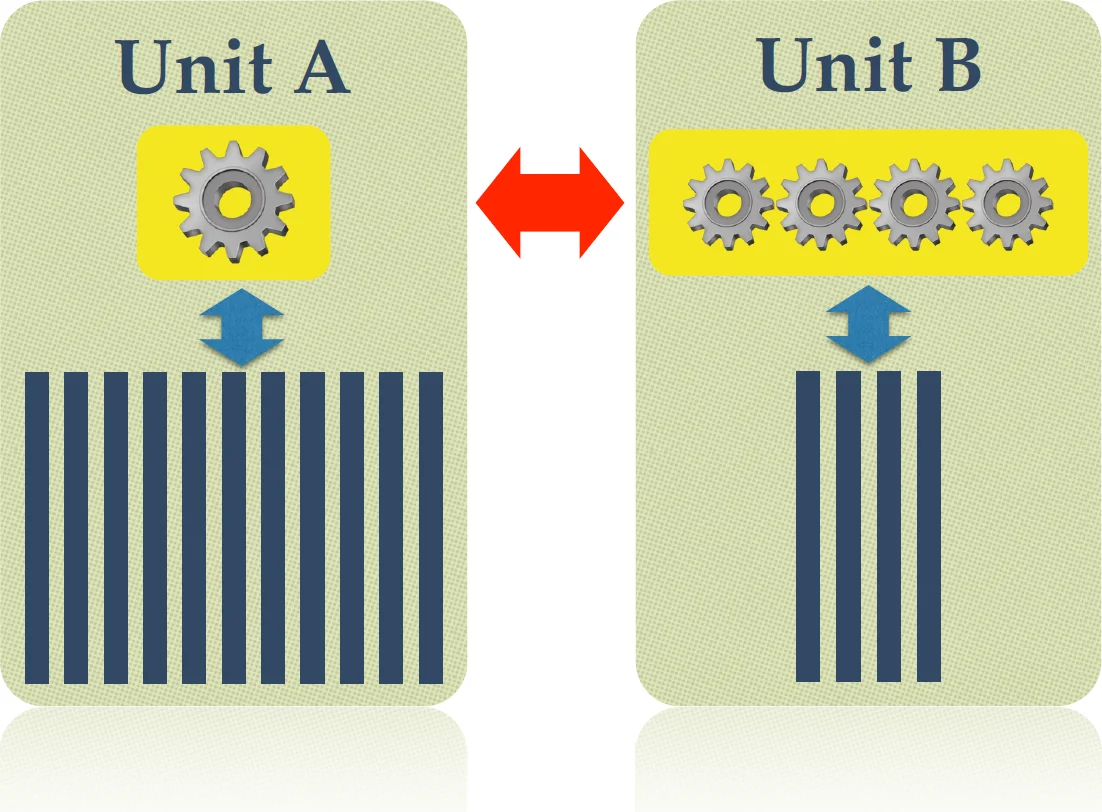

The paper addresses the challenge of training large‑scale linear models on heterogeneous compute platforms where a fast accelerator (GPU or FPGA) has limited memory while a slower host (CPU) can store the full dataset. The authors propose a duality‑gap‑based heterogeneous learning scheme (DUHL) that dynamically selects the most “important” subset of coordinates (features) to be processed on the accelerator, thereby maximizing the use of its computational power while respecting its memory constraints.

The theoretical foundation starts from the primal‑dual formulation of generalized linear models minα f(Aα)+g(α). Using Fenchel‑Rockafellar duality, a per‑coordinate duality gap gap_i(α_i) is defined, which quantifies how much each coordinate contributes to the overall sub‑optimality. The authors extend existing coordinate‑descent convergence analyses to (i) block updates of arbitrary size m, (ii) approximate updates characterized by a factor θ∈

Comments & Academic Discussion

Loading comments...

Leave a Comment