A Community's Perspective on the Status and Future of Peer Review in Software Engineering

Context: Pre-publication peer review of scientific articles is considered a key element of the research process in software engineering, yet it is often perceived as not working fully well. Objective: We aim to understand the perceptions of and attitudes towards peer review of authors and reviewers at one of software engineering’s most prestigious venues, the International Conference on Software Engineering (ICSE). Method: We invited 932 ICSE 2014/15/16 authors and reviewers to participate in a survey with 10 closed and 9 open questions. Results: We present a multitude of results, such as: Respondents perceive only one third of all reviews to be good, yet one third as useless or misleading; they propose double-blind or zero-blind reviewing regimes for improvement; they would like to see showable proofs of (good) reviewing work be introduced; attitude change trends are weak. Conclusion: The perception of the current state of software engineering peer review is fairly negative. Also, we found hardly any trend that suggests reviewing will improve by itself over time; the community will have to make explicit efforts. Fortunately, our (mostly senior) respondents appear more open to trying different peer reviewing regimes than we had expected.

💡 Research Summary

This paper investigates the current state and future prospects of peer review in software engineering by surveying authors and reviewers associated with the International Conference on Software Engineering (ICSE) from 2014 to 2016. The authors identified 932 potential participants (authors and reviewers) and received 241 completed responses, which corresponds to a response rate of roughly 26 % (241 of 932). The questionnaire comprised 19 items, including ten closed‑ended Likert‑scale questions and nine open‑ended questions, allowing both quantitative analysis and qualitative coding of free‑text responses.

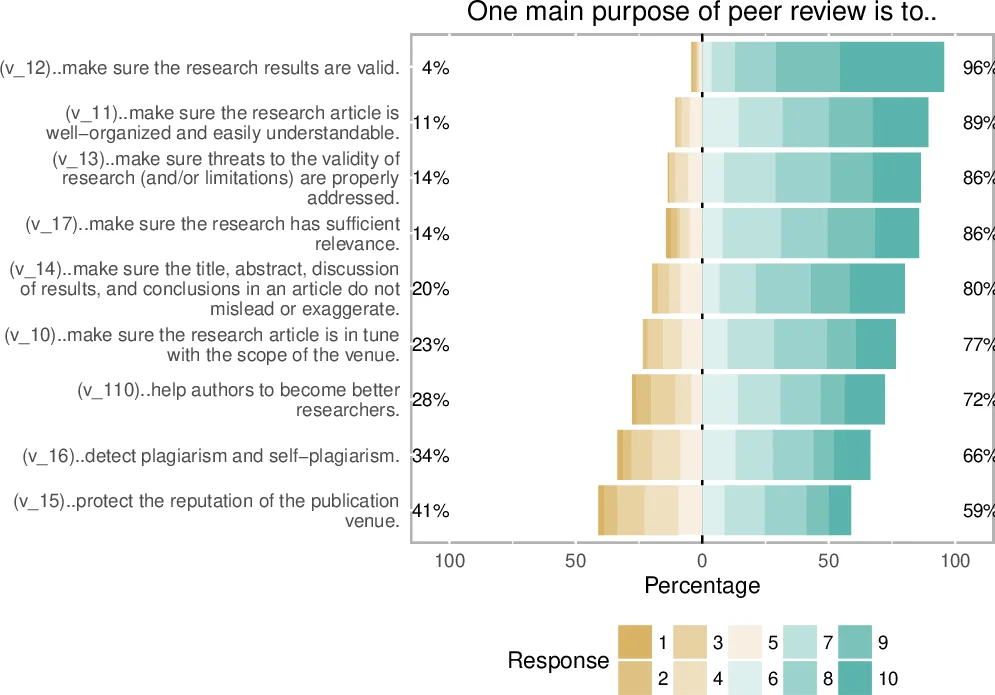

The quantitative findings reveal a largely negative perception of review quality. Respondents judged only about one third of all reviews to be “good,” and about another third to be “useless” or “misleading.” When asked about the purpose of peer review, participants emphasized quality improvement and originality assessment over simple acceptance/rejection decisions.

Regarding anonymity, respondents proposed moving away from the current regime in one of two opposite directions. Some favored a double‑blind system, in which authors and reviewers are unknown to each other. Others favored a “zero‑blind” approach, in which reviewers disclose their identities, believing that name disclosure would increase accountability and review quality. Opinions on fully open review (publishing reviews alongside papers) were mixed but leaned positive, especially among those who value transparency and reproducibility.

Compensation emerged as a nuanced issue. While direct monetary rewards were not the primary motivator, participants strongly supported mechanisms that make reviewing work visible and citable, such as platforms like Publons or institutional recognition of review contributions. The average agreement with statements linking formal acknowledgment to increased reviewing effort was around 4 on a 5‑point scale.

To explore how attitudes might evolve, the authors applied linear regression models using demographic variables (age, seniority, year of participation). Attitude change trends were weak: the models showed hardly any trend suggesting that reviewing will improve by itself over time, so the “status‑quo will improve on its own” hypothesis was not supported. However, the (mostly senior) respondents appeared more open to trying different review regimes (e.g., double‑blind, open review) than the authors had expected.
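The kind of trend check described above can be sketched as a simple least-squares fit of an attitude score on a demographic variable. This is only an illustration, not the authors' actual analysis: the function name `ols_fit`, the variable names, and the numbers are all hypothetical and were made up for this example; none of them come from the survey data.

```python
from math import sqrt


def ols_fit(xs, ys):
    """Fit ys = intercept + slope * xs by ordinary least squares.

    Returns (slope, intercept, r), where r is the Pearson correlation.
    """
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two equally long samples with at least 2 points")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    if sxx == 0:
        raise ValueError("predictor is constant, slope is undefined")
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    # A constant response has no variance, so report zero correlation.
    r = sxy / sqrt(sxx * syy) if syy > 0 else 0.0
    return slope, intercept, r


# Made-up illustration data (NOT from the survey): years of research
# experience versus agreement with "I would try an open review regime"
# on a 5-point scale.
years_experience = [2, 5, 8, 12, 15, 20, 25, 30]
openness_score = [3, 4, 3, 4, 3, 4, 3, 4]

slope, intercept, r = ols_fit(years_experience, openness_score)
print(f"slope per year: {slope:.3f}, intercept: {intercept:.2f}, r: {r:.2f}")
```

A slope close to zero together with a small correlation is what a "weak trend" would look like in such a fit. A real analysis would also need a significance test and would have to treat Likert responses as ordinal data, which this sketch ignores.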

Qualitative analysis of open‑ended responses identified recurring themes: (1) concerns about reviewer expertise and consistency, (2) perceived bias against certain methods or topics, (3) desire for clearer reviewer guidelines and training, (4) interest in public acknowledgment of good reviews, and (5) suggestions for systematic changes such as mandatory reviewer training, standardized review forms, and decoupling review from venue prestige.

The authors discuss several implications. First, the prevailing dissatisfaction suggests that the software engineering community cannot rely on passive evolution; deliberate policy changes are required. Second, a hybrid model combining double‑blind anonymity for authors, optional reviewer identity disclosure, and optional open publication of review reports could balance accountability with protection against retaliation. Third, establishing a transparent credit system for reviewers could mitigate reviewer fatigue and improve review thoroughness.

Limitations are acknowledged. The sample is confined to a single high‑profile conference, which may not represent the broader spectrum of software engineering venues (journals, workshops, regional conferences). Moreover, participation bias is possible because individuals with strong opinions about peer review may be more likely to respond. Consequently, the findings may not be fully generalizable across the entire discipline.

In conclusion, the study provides empirical evidence that software engineering peer review is viewed as suboptimal, that there is modest but notable support for stronger blinding, open review, and reviewer recognition, and that no spontaneous improvement trend is observable. The authors recommend that conference organizers, journal editors, and research institutions experiment with mixed‑model review processes, implement formal reviewer credit mechanisms, and conduct longitudinal studies across diverse venues to monitor the impact of such reforms.
