Identifying civilians killed by police with distantly supervised entity-event extraction

We propose a new, socially-impactful task for natural language processing: from a news corpus, extract names of persons who have been killed by police. We present a newly collected police fatality corpus, which we release publicly, and present a model to solve this problem that uses EM-based distant supervision with logistic regression and convolutional neural network classifiers. Our model outperforms two off-the-shelf event extractor systems, and it can suggest candidate victim names in some cases faster than one of the major manually-collected police fatality databases.

💡 Research Summary

The paper introduces a socially important natural‑language‑processing task: automatically extracting the names of civilians who have been killed by police from a large corpus of news articles. The authors note that the United States government does not maintain systematic records of police‑related fatalities, and existing official statistics miss thousands of deaths. Non‑profit organizations and news outlets have therefore built their own databases by manually reading millions of articles, a labor‑intensive process that limits scalability. To address this gap, the authors (1) collect a new dataset of U.S. web news articles from 2016 using a broad set of police‑related and fatality‑related keywords, and (2) release a publicly available “Fatal Encounters” (FE) database containing roughly 18 000 verified police‑kill incidents.

The task is framed as cross‑document entity‑event extraction. Given a set of documents D, the system must output a list of person entities E and, for each entity e, a probability that the person was killed by police, P(y_e = 1 | x_M(e)), where x_M(e) denotes all sentences (mentions) that contain that person’s name. Each mention i is a token span identified by a named‑entity recognizer (spaCy) and must contain a first‑name/last‑name pair. The authors replace the target name in each mention with a special “TARGET” token and replace other person names with a generic “PERSON” token to focus the model on the context surrounding the target. They further filter sentences to retain only those containing at least one police keyword and one fatality keyword, which removes 99 % of mentions while preserving a reasonable recall (≈0.57) of true victims.

Modeling proceeds in two stages. First, a mention‑level binary classifier predicts whether a given sentence asserts that the target was killed by police. Two classifiers are explored: (a) logistic regression (LR) with hand‑crafted features derived from dependency paths, part‑of‑speech tags, and word n‑grams, and (b) a convolutional neural network (CNN) that learns representations from word embeddings. Both output a sigmoid probability P(z_i = 1 | x_i) = σ(β^T f_γ(x_i)).

Second, the mention‑level predictions are aggregated to an entity‑level probability using a logical disjunction (OR) model, also known as the noisy‑or formulation. The probability that an entity is a victim is computed as

P(y_e = 1 | x_M(e)) = 1 − ∏_{i∈M(e)} (1 − P(z_i = 1 | x_i)).

This captures the intuition that a single positive sentence is sufficient to label the person as a victim, while multiple weakly indicative sentences can collectively raise the confidence.

Because gold‑standard mention labels are unavailable, the authors employ distant supervision using the FE database. Two labeling strategies are defined. “Hard” labeling assigns a positive label to any mention whose name matches a name in the FE database (or, for higher precision, also requires the location mentioned in the sentence to match the database). This approach is noisy: manual inspection shows that about 36 % of name‑only matches do not actually describe a police killing. To mitigate this, the authors introduce a “soft” EM‑based training regime. They first train the mention classifier on the hard labels, then iteratively (E‑step) compute posterior probabilities q(z_i) = P(z_i | x_M(e_i), y_{e_i}) using the current model and the noisy‑or constraint, and (M‑step) re‑estimate the classifier parameters by maximizing the expected log‑likelihood weighted by q(z_i). This is essentially a multiple‑instance learning framework where at least one mention per positive entity must be true, but the exact mention is latent.

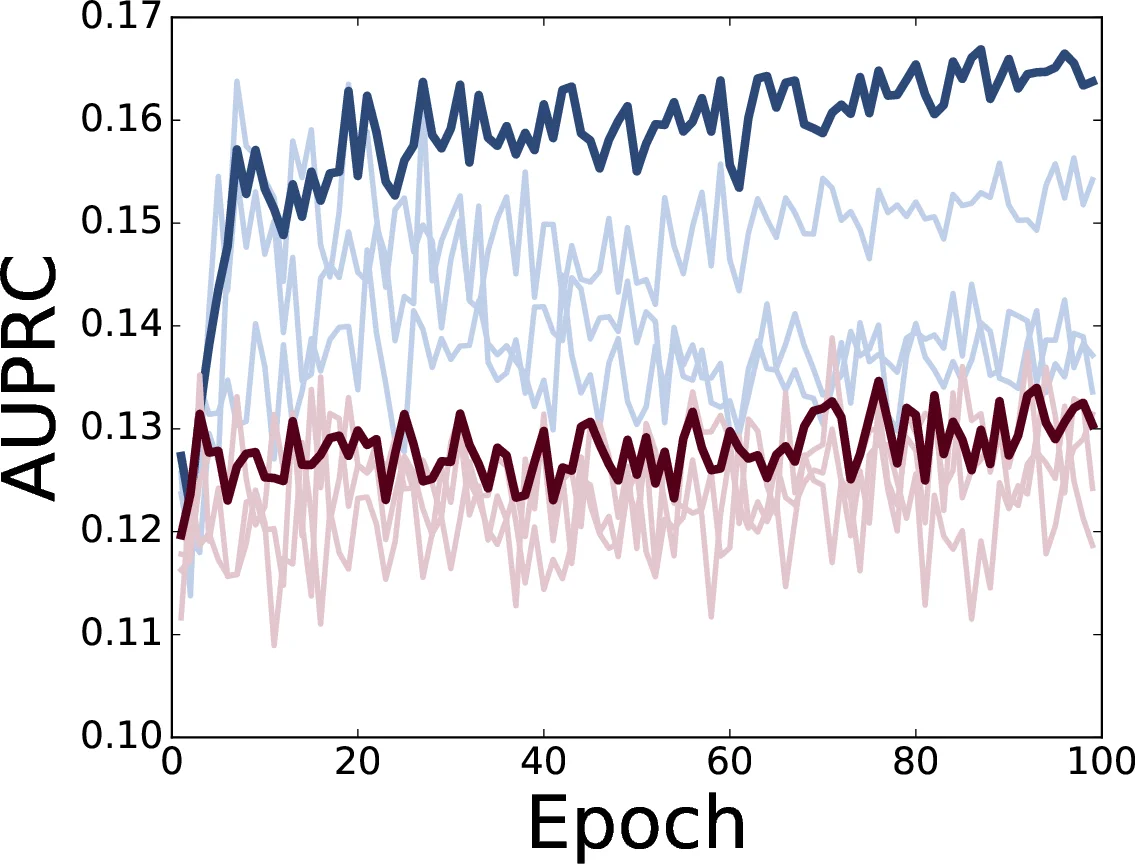

Experiments are conducted on a temporal split: articles from Jan–Aug 2016 form the training set, and Sep–Dec 2016 form the test set. After processing, the training set contains 132 833 mentions (≈1.3 k positive entities), and the test set contains 68 925 mentions (≈258 positive entities). Evaluation uses the area under the precision‑recall curve (AUPRC). The soft‑EM logistic regression model with inverse regularization C = 0.1 achieves an AUPRC of 0.18, outperforming two off‑the‑shelf event extraction systems (OpenIE and Stanford Event Extractor) that were trained on generic event ontologies. The CNN variants perform comparably but do not surpass the LR‑EM model.

Beyond quantitative metrics, the system demonstrates practical utility: it identified 39 victims not present in The Guardian’s “The Counted” database as of January 1 2017, suggesting that an automated pipeline can surface new cases faster than manual curation.

The paper also discusses limitations. The reliance on exact first‑last name matching for coreference leads to occasional false merges for common names, and the dataset is U.S.‑centric, limiting generalizability to other countries or languages. The distant‑supervision labels remain noisy despite the EM refinement, and the keyword filtering, while improving precision, reduces recall.

Future work is outlined: (1) more sophisticated entity resolution (e.g., using contextual embeddings or external knowledge bases), (2) expanding to multilingual news sources, (3) extending the model to multi‑label event types (e.g., distinguishing “shot by police” from “killed in police‑initiated chase”), and (4) integrating human‑in‑the‑loop verification to improve label quality. The authors release the corpus, code, and trained models, encouraging the community to build upon this socially impactful task.

Comments & Academic Discussion

Loading comments...

Leave a Comment