Correntropy Maximization via ADMM - Application to Robust Hyperspectral Unmixing

In hyperspectral images, some spectral bands suffer from low signal-to-noise ratio due to noisy acquisition and atmospheric effects, thus requiring robust techniques for the unmixing problem. This paper presents a robust supervised spectral unmixing …

Authors: Fei Zhu, Abderrahim Halimi, Paul Honeine

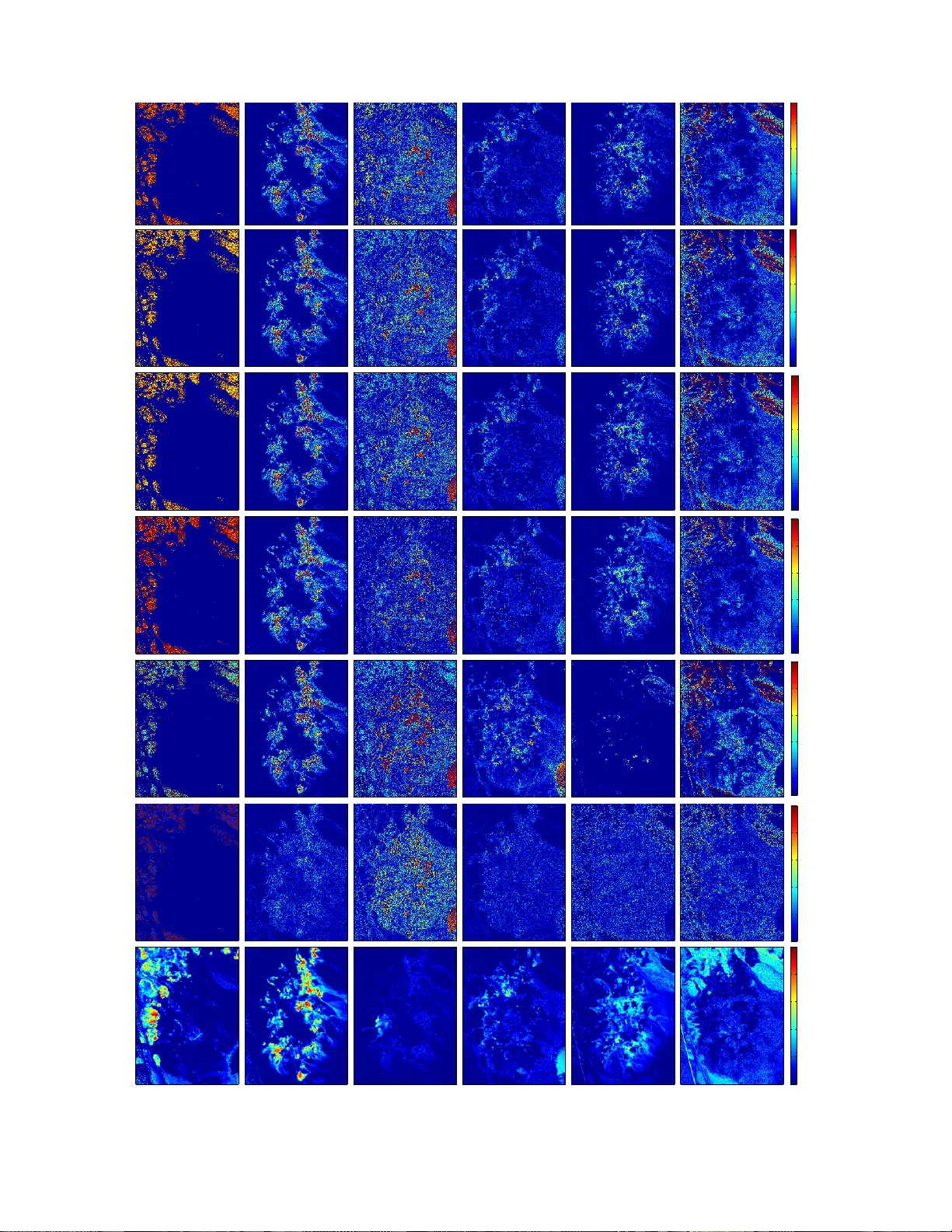

Abstract

In hyperspectral images, some spectral bands suffer from low signal-to-noise ratio due to noisy acquisition and atmospheric effects, thus requiring robust techniques for the unmixing problem. This paper presents a robust supervised spectral unmixing approach for hyperspectral images. The robustness is achieved by writing the unmixing problem as the maximization of the correntropy criterion subject to the most commonly used constraints. Two unmixing problems are derived: the first problem considers the fully-constrained unmixing, with both the non-negativity and sum-to-one constraints, while the second one deals with the non-negativity and the sparsity-promoting of the abundances. The corresponding optimization problems are solved efficiently using an alternating direction method of multipliers (ADMM) approach. Experiments on synthetic and real hyperspectral images validate the performance of the proposed algorithms for different scenarios, demonstrating that the correntropy-based unmixing is robust to outlier bands.

Index Terms: Correntropy, maximum correntropy estimation, alternating direction method of multipliers, hyperspectral image, unmixing problem.

F. Zhu is with the Institut Charles Delaunay (CNRS), Université de Technologie de Troyes, France (fei.zhu@utt.fr). A. Halimi is with the School of Engineering and Physical Sciences, Heriot-Watt University, U.K. (a.halimi@hw.ac.uk). P. Honeine is with the LITIS lab, Université de Rouen, France (paul.honeine@univ-rouen.fr). Badong Chen and Nanning Zheng are with the Institute of Artificial Intelligence and Robotics, Xi'an Jiaotong University, Xi'an, China (chenbd; nnzheng@mail.xjtu.edu.cn).

I. INTRODUCTION

Spectral unmixing is an essential issue in many disciplines, including signal and image processing, with a wide range of applications, such as classification, segmentation, material identification and target detection. Typically, a hyperspectral image corresponds to a scene taken at many continuous and narrow bands across a certain wavelength range; namely, each pixel is a spectrum. Assuming that each spectrum is a mixture of several pure materials, the unmixing problem consists of two tasks: (i) identifying these pure materials (the so-called endmembers); (ii) estimating their proportions (the so-called abundances) at each pixel [1]. In practice, these two steps can be performed either sequentially or simultaneously [2]. Well-known endmember extraction algorithms include the pure-pixel-based ones, e.g., the vertex component analysis (VCA) [3] and the N-FINDR [4], as well as the minimum-volume-based ones, e.g., the minimum simplex analysis [5] and the minimum volume constrained nonnegative matrix factorization [6]. While the endmember extraction is relatively easy from geometry, the abundance estimation remains an open problem. Indeed, the abundances can be estimated using least-squares methods, geometric approaches [2], or by tackling recently-raised issues such as nonlinearity [7], [8]. In this paper, we consider the abundance estimation problem.

The linear mixture model (LMM) has been the most investigated model over the past decades [6], [9], [10]. Its underlying premise is that each pixel/spectrum is a linear combination of the endmembers. To be physically interpretable, two constraints are often enforced in the estimation problem: the abundance non-negativity constraint (ANC) and the abundance sum-to-one constraint (ASC) for each pixel [11]. Considering both constraints, the fully-constrained least-squares method (FCLS) was presented in [9].
A more recently proposed unmixing algorithm is the so-called SUnSAL, for Sparse Unmixing by variable Splitting and Augmented Lagrangian [12]. It addresses the same optimization problem by taking advantage of the alternating direction method of multipliers (ADMM) [13]. A constrained version of SUnSAL was also proposed to solve the constrained sparse regression problem, where the sum-to-one constraint (ASC) is relaxed and the $\ell_1$-norm regularizer is added.

All these unmixing algorithms suffer greatly from noisy data and outliers within bands. Indeed, in real hyperspectral images for remote sensing, a considerable proportion (about 20%) of the spectral bands are noisy with low SNR, due to atmospheric effects such as water absorption [14]. These bands need to be removed prior to applying any existing unmixing method; otherwise, the unmixing quality drastically decreases. Such sensitivity to outliers is due to the use of the $\ell_2$-norm as a cost function in the FCLS and SUnSAL algorithms, as well as in all unmixing algorithms that explore least-squares solutions. It is worth noting that nonlinear unmixing algorithms also suffer from this drawback, including the kernel-based fully-constrained least-squares (KFCLS) [15], nonlinear fluctuation methods [7] and post-nonlinear methods [16].

Information theoretic learning provides an elegant alternative to the conventional minimization of the $\ell_2$-norm in least-squares problems, by considering the maximization of the so-called correntropy [17], [18]. Owing to its stability and robustness to noise and outliers, the correntropy maximization, which rests on theoretical foundations, has been successfully applied to a wide class of applications, including cancer clustering [19], face recognition [20], and recently hyperspectral unmixing [21], to name a few.
In these works, the resulting problem is optimized by the half-quadratic technique [22], either in a supervised manner [20] or as an unsupervised nonnegative matrix factorization [19], [21].

In this paper, we consider the hyperspectral unmixing problem by defining an appropriate correntropy-based criterion, thus taking advantage of its robustness to large outliers, as opposed to the conventional $\ell_2$-norm criteria. By including constraints commonly used for physical interpretation, we propose to solve the resulting constrained optimization problems with alternating direction method of multipliers (ADMM) algorithms. Indeed, the ADMM approach splits a hard problem into a sequence of small and manageable ones [13]. Its relevance to solve nonconvex problems was studied in [13, Section 9]. We show that ADMM provides a relevant framework for incorporating different constraints raised in the unmixing problem. We present the so-called CUSAL (for Correntropy-based Unmixing by variable Splitting and Augmented Lagrangian), and study in particular two algorithms: CUSAL-FC to solve the fully-constrained (ANC and ASC) correntropy-based unmixing problem, and CUSAL-SP to solve the sparsity-promoting correntropy-based unmixing problem.

The rest of the paper is organized as follows. We first provide a succinct survey of the classical unmixing problems in Section II. In Section III, we propose the correntropy-based unmixing problems subject to the aforementioned constraints, and study the robustness. The resulting optimization problems are solved by the ADMM algorithms described in Section IV. Experiments on synthetic and real hyperspectral images are presented in Sections V and VI, respectively. Finally, Section VII provides some conclusions and future work.

II. CLASSICAL UNMIXING PROBLEMS

The linear mixture model (LMM) assumes that each spectrum can be expressed as a linear combination of a set of pure material spectra, termed endmembers [1]. Consider a hyperspectral image and let $Y \in \mathbb{R}^{L \times T}$ denote the matrix of the $T$ pixels/spectra of $L$ spectral bands. Let $y_{*t}$ be its $t$-th column and $y_{l*}$ its $l$-th row, representing the $l$-th band of all pixels. For notation simplicity, we denote $y_t = y_{*t}$, for $t = 1, \ldots, T$. The LMM can be written as

$$y_t = \sum_{r=1}^{R} x_{rt}\, m_r + n_t = M x_t + n_t, \tag{1}$$

where $M = [m_1 \cdots m_R] \in \mathbb{R}^{L \times R}$ is the matrix composed of the $R$ endmembers with $m_r = [m_{1r} \cdots m_{Lr}]^\top$, $x_t = [x_{1t} \cdots x_{Rt}]^\top$ is the abundance vector associated with the $t$-th pixel, and $n_t \in \mathbb{R}^{L}$ is the additive noise. In matrix form for all pixels, we have $Y = MX + N$, where $X = [x_1 \cdots x_T] \in \mathbb{R}^{R \times T}$ and $N$ is the noise matrix.

In the following, the endmembers are assumed known, either from ground-truth information or by using any endmember extraction technique. The spectral unmixing problem consists in estimating the abundances for each pixel, often by solving the least-squares optimization problem

$$\min_{x_t} \|y_t - M x_t\|_2^2, \tag{2}$$

for each $t = 1, \ldots, T$, where $\|\cdot\|_2$ denotes the conventional $\ell_2$-norm. The solution to this conventional least-squares problem is given by the pseudo-inverse of the (tall) endmember matrix, with $x_t = (M^\top M)^{-1} M^\top y_t$. The least-squares optimization problems (2), for all $t = 1, \ldots, T$, are often written in a single optimization problem using the following matrix formulation

$$\min_{X} \|Y - MX\|_F^2, \tag{3}$$

where $\|\cdot\|_F$ denotes the Frobenius norm. Its solution is

$$X_{LS} = (M^\top M)^{-1} M^\top Y. \tag{4}$$

Finally, this optimization problem can also be tackled by considering all the image pixels at each spectral band, which yields the following least-squares optimization problem

$$\min_{X} \sum_{l=1}^{L} \|y_{l*} - (MX)_{l*}\|_2^2,$$

where $(\cdot)_{l*}$ denotes the $l$-th row of its argument.

While all these problem formulations have a closed-form solution, they suffer from two major drawbacks. The first one is that several constraints need to be imposed in order to give the results a physical meaning. The second drawback is the sensitivity to noise and outliers, due to the use of the $\ell_2$-norm as a fitness measure. These two drawbacks are detailed in the following.

To be physically interpretable, the abundances should be nonnegative (ANC) and satisfy the sum-to-one constraint (ASC). Considering both constraints, the fully-constrained least-squares problem is formulated as, for each $t = 1, \ldots, T$,

$$\min_{x_t} \|y_t - M x_t\|_2^2, \quad \text{subject to } x_t \succeq 0 \text{ and } \mathbf{1}^\top x_t = 1,$$

where $\mathbf{1} \in \mathbb{R}^{R \times 1}$ denotes the column vector of ones and $\succeq 0$ is the non-negativity applied element-wise. In matrix form:

$$\min_{X} \|Y - MX\|_F^2, \quad \text{subject to } X \succeq 0 \text{ and } \mathbf{1}^\top x_t = 1, \text{ for } t = 1, \ldots, T.$$

Since there is no closed-form solution when dealing with the non-negativity constraint, several iterative techniques have been proposed, such as the active set scheme with the Lawson and Hanson's algorithm [23], the multiplicative iterative strategies [24], and the fully-constrained least-squares (FCLS) technique [9]. More recently, the alternating direction method of multipliers (ADMM) was applied with success to the hyperspectral unmixing problem, with the SUnSAL algorithm [12].

Recent works in hyperspectral unmixing have advocated the sparsity of the abundance vectors [12], [25], [26].
In this case, each spectrum is fitted by a sparse linear mixture of endmembers, namely only the abundances with respect to a small number of endmembers are nonzero. To this end, the sparsity-promoting regularization with the $\ell_1$-norm is included in the cost function, yielding the following constrained sparse regression problem [12], for each $t = 1, \ldots, T$,

$$\min_{x_t} \|y_t - M x_t\|_2^2 + \lambda \|x_t\|_1, \quad \text{subject to } x_t \succeq 0,$$

where the parameter $\lambda$ balances the fitness of the least-squares solution and the sparsity level. It is worth noting that the ASC is relaxed when the $\ell_1$-norm is included. This problem is often considered by using the following matrix formulation

$$\min_{X} \|Y - MX\|_F^2 + \lambda \sum_{t=1}^{T} \|x_t\|_1, \quad \text{subject to } X \succeq 0.$$

Sensitivity to outliers

All the aforementioned algorithms rely on solving a (constrained) least-squares optimization problem, thus inheriting the drawbacks of using the $\ell_2$-norm as the fitness measure. A major drawback is its sensitivity to outliers, where outliers are some spectral bands that largely deviate from the rest of the bands. Indeed, considering all the image pixels, the least-squares optimization problems take the form

$$\min_{X} \sum_{l=1}^{L} \|y_{l*} - (MX)_{l*}\|_2^2, \tag{5}$$

subject to any of the aforementioned constraints. From this formulation, it is easy to see how the squared $\ell_2$-norm gives more weight to large residuals, namely to outliers in which predicted values $(MX)_{l*}$ are far from actual observations $y_{l*}$. Moreover, it is common for hyperspectral images to present up to 20% of unusable spectral bands due to low signal-to-noise ratio, essentially from atmospheric effects such as water absorption. In the following section, we overcome this difficulty by considering the correntropy maximization principle from information theoretic learning, which yields an optimization problem that is robust to outliers.

III. CORRENTROPY-BASED UNMIXING PROBLEMS

In this section, we examine the correntropy and write the unmixing problems as correntropy maximization ones. Algorithms for solving these problems are derived in Section IV.

A. Correntropy

The correntropy, studied in [17], [18], is a nonlinear local similarity measure. For two random variables, $Y$ and its estimation $\widehat{Y}$ using some model/algorithm, it is defined by

$$\mathbb{E}[\kappa(Y, \widehat{Y})], \tag{6}$$

where $\mathbb{E}[\cdot]$ is the expectation operator, and $\kappa(\cdot, \cdot)$ is a shift-invariant kernel satisfying the Mercer theorem [27]. In practice, since the joint distribution function of $Y$ and $\widehat{Y}$ is unavailable, the sample estimator of correntropy is adopted instead. Employing a finite number of data $\{(y_{l*}, \widehat{y}_{l*})\}_{l=1}^{L}$, it is estimated by

$$\frac{1}{L} \sum_{l=1}^{L} \kappa(y_{l*}, \widehat{y}_{l*}), \tag{7}$$

up to a normalization factor. The Gaussian kernel is the most commonly-used kernel for correntropy [17], [20], [28]. This leads to the following expression for the correntropy

$$\frac{1}{L} \sum_{l=1}^{L} \exp\left( -\frac{1}{2\sigma^2} \|y_{l*} - \widehat{y}_{l*}\|_2^2 \right), \tag{8}$$

where $\sigma$ denotes the bandwidth of the Gaussian kernel. The maximization of the correntropy, given by

$$\max_{\widehat{y}_{1*}, \ldots, \widehat{y}_{L*}} \frac{1}{L} \sum_{l=1}^{L} \kappa(y_{l*}, \widehat{y}_{l*}),$$

is termed the maximum correntropy criterion [17]. It is noteworthy that well-known second-order statistics, such as the mean square error (MSE), depend heavily on the Gaussian and linear assumptions [17]. However, in presence of non-Gaussian noise and in particular large outliers, i.e., observations greatly deviated from the data bulk, the effectiveness of the MSE-based algorithms will significantly deteriorate [29]. By contrast, the maximization of the correntropy criterion is appropriate for non-Gaussian signal processing, and is robust in particular against large outliers, as shown next.

B. The underlying robustness of the correntropy criterion

In this section, we study the sensitivity to outliers of the correntropy maximization principle, by showing the robustness of the underlying mechanism. To this end, we examine the behavior of the correntropy in terms of the residual error defined by $\epsilon_l = \|y_{l*} - \widehat{y}_{l*}\|_2$. Thus, the correntropy (8) becomes

$$\frac{1}{L} \sum_{l=1}^{L} \exp\left( -\frac{1}{2\sigma^2} \epsilon_l^2 \right).$$

Compared with second-order statistics, e.g., MSE, the correntropy is more robust with respect to the outliers, as shown in Fig. 1 illustrating the second-order and the correntropy objective functions in terms of the residual error. As the residual error increases, the second-order function keeps increasing dramatically. On the contrary, the correntropy is only sensitive within a region of small residual errors, this region being controlled by the kernel bandwidth. For large magnitudes of residual error, the correntropy falls to zero. Consequently, the correntropy criterion is robust to large outliers.

Fig. 1. Illustration of the second-order objective function (solid line) and the correntropy objective function (dashed lines) with different values of the kernel bandwidth ($\sigma = 0.5$, $\sigma = 2$ and $\sigma = 5$), as functions of the residual error.

C. Correntropy-based unmixing problems

The correntropy-based unmixing problem consists in estimating the unknown abundance matrix $X$, by minimizing the objective function $C$ (the negative of correntropy), given by

$$C(X) = -\sum_{l=1}^{L} \exp\left( -\frac{1}{2\sigma^2} \|y_{l*} - (MX)_{l*}\|_2^2 \right), \tag{9}$$

where the Gaussian kernel was considered, or equivalently

$$C(X) = -\sum_{l=1}^{L} \exp\left( -\frac{1}{2\sigma^2} \sum_{t=1}^{T} \Big( y_{lt} - \sum_{r=1}^{R} x_{rt}\, m_{lr} \Big)^2 \right). \tag{10}$$

Considering both the ANC and ASC constraints, the fully-constrained correntropy unmixing problem becomes

$$\min_{X} C(X), \quad \text{subject to } X \succeq 0 \text{ and } \mathbf{1}^\top x_t = 1, \text{ for } t = 1, \ldots, T. \tag{11}$$

For the sake of promoting sparse representations, the objective function (9)-(10) can be augmented by the $\ell_1$-norm penalty on the abundance matrix $X$, leading to the following problem

$$\min_{X} C(X) + \lambda \sum_{t=1}^{T} \|x_t\|_1, \quad \text{subject to } X \succeq 0. \tag{12}$$

IV. ADMM FOR SOLVING THE CORRENTROPY-BASED UNMIXING PROBLEMS

We first briefly review the alternating direction method of multipliers (ADMM), following the expressions in [13, Chap. 3]. Consider an optimization problem of the form

$$\min_{x} f(x) + g(x),$$

where the functions $f$ and $g$ are closed, proper and convex. The ADMM solves the equivalent constrained problem

$$\min_{x, z} f(x) + g(z) \quad \text{subject to } Ax + Bz = c, \tag{13}$$

for instance with the particular constraint $x = z$. While this formulation may seem trivial, the optimization problem can now be tackled using the augmented Lagrangian method where the objective function is separable in $x$ and $z$. The ADMM alternates a minimization over each variable separately with a direct update of the dual variable. In its scaled form, the ADMM algorithm is summarized in Algorithm 1.

Algorithm 1 The ADMM algorithm [13]
Input: functions $f$ and $g$, matrices $A$ and $B$, vector $c$, parameter $\rho$
1: Initialize $k = 0$, $x_0$, $z_0$ and $u_0$
2: repeat
3:   $x_{k+1} = \arg\min_{x} f(x) + \frac{\rho}{2} \|Ax + Bz_k - c + u_k\|_2^2$;
4:   $z_{k+1} = \arg\min_{z} g(z) + \frac{\rho}{2} \|Ax_{k+1} + Bz - c + u_k\|_2^2$;
5:   $u_{k+1} = u_k + Ax_{k+1} + Bz_{k+1} - c$;
6:   $k = k + 1$;
7: until stopping criterion

A. Correntropy-based unmixing with full-constraints

In the following, we apply the ADMM algorithm to solve the correntropy-based unmixing problem in the fully-constrained case, presented in (11).
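To make the objective in (11) concrete, the following minimal sketch evaluates $C(X)$ of (9) next to the least-squares cost (3) on toy data with one corrupted band. The function names, the random endmember matrix and the chosen sizes are illustrative assumptions, not part of the original work.

```python
import numpy as np

def correntropy_cost(Y, M, X, sigma):
    """Negative correntropy C(X) of (9): one Gaussian kernel term per band (row)."""
    residual = Y - M @ X                    # L x T residual matrix
    eps2 = np.sum(residual ** 2, axis=1)    # squared l2-norm of each row
    return -np.sum(np.exp(-eps2 / (2.0 * sigma ** 2)))

def least_squares_cost(Y, M, X):
    """Squared Frobenius norm of the residual, as in (3)."""
    return np.sum((Y - M @ X) ** 2)

# Toy data (assumption): random endmembers, abundances on the simplex.
rng = np.random.default_rng(0)
L, R, T = 50, 3, 20
M = rng.uniform(0.0, 1.0, size=(L, R))
X = rng.dirichlet(np.ones(R), size=T).T     # R x T, columns satisfy ANC and ASC
Y = M @ X                                   # noise-free observations

Y_out = Y.copy()
Y_out[0, :] += 100.0                        # a single, heavily corrupted band

sigma = 1.0
c_clean = correntropy_cost(Y, M, X, sigma)
c_out = correntropy_cost(Y_out, M, X, sigma)
ls_clean = least_squares_cost(Y, M, X)
ls_out = least_squares_cost(Y_out, M, X)
print(c_clean, c_out, ls_clean, ls_out)
```

Each band contributes at most one unit to $C(X)$, so a corrupted band can change the correntropy cost by at most 1, whereas its contribution to the least-squares cost grows with the square of the corruption.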
The main steps are summarized in Algorithm 2. Rewrite the variables to be optimized in a vector $x \in \mathbb{R}^{RT \times 1}$, which is stacked by the columns of the matrix $X$, namely $x = [x_1^\top \cdots x_T^\top]^\top$. Rewrite also the following vectors in $\mathbb{R}^{RT \times 1}$: $z = [z_1^\top \cdots z_T^\top]^\top$ and $u = [u_1^\top \cdots u_T^\top]^\top$, where, for $t = 1, \ldots, T$, $z_t = [z_{1t} \cdots z_{Rt}]^\top$ and $u_t = [u_{1t} \cdots u_{Rt}]^\top$.

By following the formulation of the ADMM in Algorithm 1, we set

$$f(x) = C(x) + \sum_{t=1}^{T} \iota_{\{1\}}(\mathbf{1}^\top x_t) \tag{14}$$
$$g(z) = \iota_{\mathbb{R}_+^{RT}}(z)$$
$$A = -I, \quad B = I \quad \text{and} \quad c = 0,$$

where $I$ is the identity matrix, $0 \in \mathbb{R}^{RT \times 1}$ is the zero vector and $\iota_S(u)$ is the indicator function of the set $S$ defined by

$$\iota_S(u) = \begin{cases} 0 & \text{if } u \in S; \\ \infty & \text{otherwise.} \end{cases}$$

In this case, the subproblem of the $x$-update (in line 3 of Algorithm 1) addresses a nonconvex problem without any closed-form solution. To overcome this difficulty, we apply an inexact ADMM variant in lines 3-5 of Algorithm 2, which solves the subproblem iteratively using the gradient descent method, instead of solving it exactly and explicitly. Before that, we eliminate the $T$ equality constraints, i.e., the sum-to-one constraints, by replacing $x_{Rt}$ with

$$x_{Rt} = 1 - \sum_{r=1}^{R-1} x_{rt},$$

for $t = 1, \ldots, T$. Let $\bar{x} \in \mathbb{R}^{(R-1)T \times 1}$ be the reduced vector of the $(R-1)$ unknowns per pixel to be estimated, stacked by $\bar{x}_t = [x_{1t} \cdots x_{(R-1)t}]^\top$, for $t = 1, \ldots, T$. By this means, the objective function in (14) is transformed from (10) into the reduced form

$$f_1(\bar{x}) = -\sum_{l=1}^{L} \exp\left( -\frac{1}{2\sigma^2} \sum_{t=1}^{T} \epsilon_l(\bar{x}_t)^2 \right), \tag{15}$$

where $\epsilon_l(\bar{x}_t) = y_{lt} - m_{lR} - \sum_{p=1}^{R-1} (m_{lp} - m_{lR})\, x_{pt}$, for $l = 1, \ldots, L$.
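The elimination of the sum-to-one constraint can be checked numerically. The sketch below (function names and toy sizes are assumptions made for illustration) evaluates the reduced objective (15) directly from the $R-1$ free abundances and compares it with the full objective (10) after rebuilding $x_{Rt} = 1 - \sum_{r} x_{rt}$.

```python
import numpy as np

def full_cost(Y, M, X, sigma):
    """C(X) of (10), evaluated on the full R x T abundance matrix."""
    eps2 = np.sum((Y - M @ X) ** 2, axis=1)
    return -np.sum(np.exp(-eps2 / (2.0 * sigma ** 2)))

def expand_abundances(X_red):
    """Rebuild the R x T matrix from the (R-1) x T reduced one using the ASC."""
    last = 1.0 - np.sum(X_red, axis=0, keepdims=True)
    return np.vstack([X_red, last])

def reduced_cost(Y, M, X_red, sigma):
    """f_1 of (15), written only in terms of the reduced unknowns."""
    m_R = M[:, -1:]                    # L x 1, last endmember
    D = M[:, :-1] - m_R                # L x (R-1), entries m_lp - m_lR
    eps = Y - m_R - D @ X_red          # L x T, entries eps_l(x_t)
    return -np.sum(np.exp(-np.sum(eps ** 2, axis=1) / (2.0 * sigma ** 2)))

# Toy check (assumption: random data standing in for a real image).
rng = np.random.default_rng(1)
L, R, T = 30, 4, 10
M = rng.uniform(0.0, 1.0, size=(L, R))
X_true = rng.dirichlet(np.ones(R), size=T).T
Y = M @ X_true + 0.01 * rng.standard_normal((L, T))

X_red = X_true[:-1, :] + 0.02 * rng.standard_normal((R - 1, T))
X_full = expand_abundances(X_red)
f1_value = reduced_cost(Y, M, X_red, sigma=2.0)
c_value = full_cost(Y, M, X_full, sigma=2.0)
print(f1_value, c_value)
```

Both values agree up to rounding, which confirms that (15) is the objective (10) restricted to the set where every column of $X$ sums to one.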
The gradient of (15) with respect to $\bar{x}$ is stacked as

$$\frac{\partial f_1}{\partial \bar{x}} = \left[ \frac{\partial f_1}{\partial \bar{x}_1}^\top \cdots \frac{\partial f_1}{\partial \bar{x}_T}^\top \right]^\top \in \mathbb{R}^{(R-1)T \times 1},$$

where $\frac{\partial f_1}{\partial \bar{x}_t} = \left[ \frac{\partial f_1}{\partial x_{1t}} \cdots \frac{\partial f_1}{\partial x_{(R-1)t}} \right]^\top$, with the entries given by

$$\frac{\partial f_1(\bar{x})}{\partial x_{rt}} = \frac{1}{\sigma^2} \sum_{l=1}^{L} (m_{lR} - m_{lr}) \exp\left( -\frac{1}{2\sigma^2} \sum_{s=1}^{T} \epsilon_l(\bar{x}_s)^2 \right) \epsilon_l(\bar{x}_t),$$

for all $r = 1, \ldots, (R-1)$ and $t = 1, \ldots, T$. Similarly, the function $\frac{\rho}{2} \|x - z_k - u_k\|_2^2$ is expressed with respect to $\bar{x}$ as

$$\phi(\bar{x}) = \frac{\rho}{2} \sum_{t=1}^{T} \left[ \Big( 1 - \sum_{p=1}^{R-1} x_{pt} - z_{Rt,k} - u_{Rt,k} \Big)^2 + \sum_{p=1}^{R-1} ( x_{pt} - z_{pt,k} - u_{pt,k} )^2 \right],$$

with the entries in its gradient $\frac{\partial \phi}{\partial \bar{x}}$ given by

$$\frac{\partial \phi(\bar{x})}{\partial x_{rt}} = \rho \Big( x_{rt} + \sum_{p=1}^{R-1} x_{pt} - 1 + z_{Rt,k} - z_{rt,k} + u_{Rt,k} - u_{rt,k} \Big), \tag{16}$$

for all $r = 1, \ldots, R-1$ and $t = 1, \ldots, T$. The solution of the $z$-update in line 4 of Algorithm 1 becomes the projection of $x_{k+1} - u_k$ onto the first orthant, as shown in line 7 of Algorithm 2.

Algorithm 2 Correntropy-based unmixing with full-constraints (CUSAL-FC)
1: Initialize $k = 0$, $\rho > 0$, $\eta > 0$, $\sigma > 0$; $x_0$, $z_0$ and $u_0$;
2: repeat
3:   repeat
4:     $\bar{x}_{k+1} = \bar{x}_{k+1} - \eta \left( \frac{\partial f_1}{\partial \bar{x}_{k+1}} + \frac{\partial \phi}{\partial \bar{x}_{k+1}} \right)$;
5:   until convergence
6:   reform $x_{k+1}$ using $\bar{x}_{k+1}$;
7:   $z_{k+1} = \max(0, x_{k+1} - u_k)$;
8:   $u_{k+1} = u_k - (x_{k+1} - z_{k+1})$;
9:   $k = k + 1$;
10: until stopping criterion

B. Sparsity-promoting unmixing algorithm

In order to apply the ADMM algorithm, we express the constrained optimization problem (12) as follows

$$f(x) = C(x) \tag{17}$$
$$g(z) = \iota_{\mathbb{R}_+^{RT}}(z) + \lambda \|z\|_1$$
$$A = -I, \quad B = I \quad \text{and} \quad c = 0.$$

By analogy with the previous case, the $x$-update in line 3 of Algorithm 1 is solved iteratively with the gradient descent method and is given in lines 3-5 of Algorithm 3. The gradient of (17) with respect to $x$ is stacked by $\frac{\partial f}{\partial x_t}$, where

$$\frac{\partial f}{\partial x_t} = -\frac{1}{\sigma^2} \sum_{l=1}^{L} \epsilon_l(x_t) \exp\left( -\frac{1}{2\sigma^2} \sum_{s=1}^{T} \big( \epsilon_l(x_s) \big)^2 \right) m_{l*}^\top,$$

for $t = 1, \ldots, T$, where $\epsilon_l(x_t) = y_{lt} - \sum_{r=1}^{R} x_{rt}\, m_{lr}$ and $m_{l*}$ denotes the $l$-th row of $M$. The $z$-update in line 4 of Algorithm 1 involves solving

$$z_{k+1} = \arg\min_{z}\ \iota_{\mathbb{R}_+^{RT}}(z) + (\lambda/\rho) \|z\|_1 + \frac{1}{2} \|z - x_{k+1} + u_k\|_2^2. \tag{18}$$

In [13], the ADMM has been applied to solve various $\ell_1$-norm problems, including the well-known LASSO [30]. The only difference between (18) and the $z$-update in LASSO is that in the latter, no non-negativity term $\iota_{\mathbb{R}_+^{RT}}(z)$ is enforced. In this case, the $z$-update in LASSO is the element-wise soft thresholding operation

$$z_{k+1} = S_{\lambda/\rho}(x_{k+1} - u_k),$$

where the soft thresholding operator [13] is defined by

$$S_b(\zeta) = \begin{cases} \zeta - b & \text{if } \zeta > b; \\ 0 & \text{if } |\zeta| \le b; \\ \zeta + b & \text{if } \zeta < -b. \end{cases}$$

Following [12], it is straightforward to project the result onto the nonnegative orthant in order to include the non-negativity constraint, thus yielding

$$z_{k+1} = \max(0, S_{\lambda/\rho}(x_{k+1} - u_k)),$$

where the maximum function is element-wise. All these results lead to the correntropy-based unmixing algorithm with sparsity-promoting, as summarized in Algorithm 3.

Algorithm 3 Correntropy-based unmixing with sparsity-promoting (CUSAL-SP)
1: Initialize $k = 0$, $\rho > 0$, $\sigma > 0$, $\eta > 0$, $\lambda > 0$; $x_0$, $z_0$ and $u_0$;
2: repeat
3:   repeat
4:     $x_{k+1} = x_{k+1} - \eta \left( \frac{\partial f}{\partial x_{k+1}} + \rho (x_{k+1} - z_k - u_k) \right)$;
5:   until convergence
6:   $z_{k+1} = \max(0, S_{\lambda/\rho}(x_{k+1} - u_k))$;
7:   $u_{k+1} = u_k - (x_{k+1} - z_{k+1})$;
8:   $k = k + 1$;
9: until stopping criterion

C. On the initialisation and the bandwidth determination

We apply a three-fold stopping criterion for Algorithms 2 and 3, according to [13], [12]: (i) the primal and dual residuals are small enough, namely $\|x_{k+1} - z_{k+1}\|_2 \le \epsilon_1$ and $\rho \|z_{k+1} - z_k\|_2 \le \epsilon_2$, where $\epsilon_1 = \epsilon_2 = \sqrt{RT} \times 10^{-5}$ as in [12], (ii) the primal residual starts to increase, i.e., $\|x_{k+1} - z_{k+1}\|_2 > \|x_k - z_k\|_2$, or (iii) the maximum iteration number is attained.
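The iterations of Algorithm 3 and the stopping test above can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: the inner loop runs a fixed number of gradient steps instead of testing convergence, the iterates are initialized from the least-squares solution (4), and all function names and default values (`rho`, `eta`, `n_outer`, `n_inner`) are hypothetical.

```python
import numpy as np

def correntropy_cost(Y, M, X, sigma):
    """C(X) of (9)."""
    eps2 = np.sum((Y - M @ X) ** 2, axis=1)
    return -np.sum(np.exp(-eps2 / (2.0 * sigma ** 2)))

def grad_correntropy(Y, M, X, sigma):
    """Gradient of C(X) with respect to X, one column per pixel."""
    E = Y - M @ X                                              # entries eps_l(x_t)
    w = np.exp(-np.sum(E ** 2, axis=1) / (2.0 * sigma ** 2))   # one weight per band
    return -(M.T @ (w[:, None] * E)) / sigma ** 2

def soft_threshold(v, b):
    """Element-wise soft thresholding operator S_b."""
    return np.sign(v) * np.maximum(np.abs(v) - b, 0.0)

def cusal_sp(Y, M, sigma, lam, rho=1.0, eta=1e-2, n_outer=200, n_inner=20):
    """Sketch of Algorithm 3 with a fixed number of inner gradient steps."""
    R, T = M.shape[1], Y.shape[1]
    X = np.linalg.lstsq(M, Y, rcond=None)[0]   # least-squares start (assumption)
    Z = np.maximum(X, 0.0)
    U = np.zeros_like(X)
    tol = np.sqrt(R * T) * 1e-5                # eps_1 = eps_2
    prev_primal = np.inf
    for _ in range(n_outer):
        for _ in range(n_inner):               # inexact x-update (line 4)
            X = X - eta * (grad_correntropy(Y, M, X, sigma) + rho * (X - Z - U))
        Z_old = Z
        Z = np.maximum(0.0, soft_threshold(X - U, lam / rho))   # line 6
        U = U - (X - Z)                                          # line 7
        primal = np.linalg.norm(X - Z)
        dual = rho * np.linalg.norm(Z - Z_old)
        if (primal <= tol and dual <= tol) or primal > prev_primal:
            break                              # criteria (i) and (ii)
        prev_primal = primal
    return Z

# Toy run (assumption: random endmembers, noise-free sparse abundances).
rng = np.random.default_rng(2)
L, R, T = 30, 5, 8
M = rng.uniform(0.0, 1.0, size=(L, R))
X_true = np.zeros((R, T))
X_true[:2, :] = rng.dirichlet(np.ones(2), size=T).T
Y = M @ X_true
Z_hat = cusal_sp(Y, M, sigma=1.0, lam=1e-3)
```

Criterion (iii) is covered by the `n_outer` bound. In practice the step size `eta` and the bandwidth `sigma` would need tuning, as discussed next in the text.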
The bandwidth $\sigma$ in the Gaussian kernel should be well-tuned. Note that a small value for this parameter penalizes the outlier bands more heavily, thus increasing the robustness of the algorithm to outliers [20]. Note also that, in this study, the ADMM is applied to address a nonconvex objective function, thus no convergence is guaranteed theoretically, according to [13]. Considering these issues, we propose to fix the bandwidth empirically as summarized in Algorithm 4 and described next.

Following [20], [21], we first initialize the bandwidth parameter as a function of the reconstruction error, given by

$$\sigma_0^2 = \frac{R}{8L} \|Y - M X_{LS}\|_F^2, \tag{19}$$

where $X_{LS}$ is the least-squares solution (4). In the case of a result too far from that of the least-squares solution, the parameter is augmented by $\sigma = 1.2\sigma$, until the condition

$$\frac{\|Y - MX\|_F}{\|Y - M X_{LS}\|_F} < 2$$

is satisfied. The algorithm divergence occurs if the stopping criterion (ii) is satisfied, namely the primal residual increases over iterations. In this case, either the parameter is too large due to an overestimated initialization, or it is too small. Accordingly, we either decrease it by $\sigma = \sigma_0 / p$, or increase it by $\sigma = 1.2\sigma$, until the ADMM converges.

Algorithm 4 Tuning the bandwidth parameter $\sigma$
1: Initialize $\sigma = \sigma_0$ using (19); $p = 1$;
2: Do CUSAL with Algorithm 2 or Algorithm 3;
3: if stopping criterion (i) or (iii) is satisfied then
4:   if condition $\frac{\|Y - MX\|_F}{\|Y - M X_{LS}\|_F} < 2$ is satisfied, then
5:     $\sigma^* = \sigma$ (optimal value)
6:   else
7:     increase $\sigma = 1.2\sigma$, and go to line 2
8:   end if
9: else
10:   if $\sigma > 1000\sigma_0$ (due to the overestimated $\sigma_0$) then
11:     $p = p + 1$;
12:     decrease $\sigma = \sigma_0 / p$, and go to line 2
13:   else
14:     increase $\sigma = 1.2\sigma$, and go to line 2
15:   end if
16: end if

V. EXPERIMENTS WITH SYNTHETIC DATA

In this section, the performance of the proposed fully-constrained (CUSAL-FC) and sparsity-promoting (CUSAL-SP) algorithms is evaluated on synthetic data. A comparative study is performed considering six state-of-the-art methods proposed for linear and nonlinear unmixing models.

• Fully-Constrained Least-Squares (FCLS) [9]: The FCLS is developed for the linear model. Enforcing both ANC and ASC constraints, this technique yields the optimal abundance matrix in the least-squares sense.

• Sparse Unmixing by variable Splitting and Augmented Lagrangian (SUnSAL) [12]: This method is based on the ADMM. Several variants are developed by including different constraints, with the fully-constrained SUnSAL-FCLS and the sparsity-promoting SUnSAL-sparse.

• The Bayesian algorithm for Generalized Bilinear Model (BayGBM) [31], [32]: This method estimates the abundances with the generalized bilinear model (GBM), which adds second-order interactions between endmembers to the linear model, yielding the model
$$y_t = M x_t + \sum_{i=1}^{R-1} \sum_{j=i+1}^{R} \gamma_{ij,t}\, x_{it}\, x_{jt}\, (m_i \odot m_j) + n_t,$$
where $0 \le \gamma_{ij,t} \le 1$ controls the interactions between endmembers $m_i$ and $m_j$, and $\odot$ is the element-wise product. The BayGBM considers both ANC and ASC.

• The Bayesian algorithm for Polynomial Post-Nonlinear Mixing Model (BayPPNMM) [33]: This algorithm estimates the parameters by assuming that the pixel reflectances are nonlinear functions of endmembers using
$$y_t = M x_t + b_t\, (M x_t) \odot (M x_t) + n_t, \tag{20}$$
where the nonlinear terms are characterized by $b_t \in \mathbb{R}$, and both ANC and ASC are required.

• Kernel Fully-Constrained Least-Squares (KFCLS) [15]: This method generalizes FCLS, by replacing the inner product with a kernel function. In the following, the Gaussian kernel is applied for simulation.

• Robust nonnegative matrix factorization (rNMF) [34]: To capture the nonlinear effect (outliers), this NMF-based method introduces a group-sparse regularization term into the linear model. Accounting for both constraints, the problem is optimized by a block-coordinate descent strategy. For fair comparison in this paper, the endmembers are fixed with the real ones. The regularization parameter is set with the degree of sparsity as suggested in [33].

We first compare the fully-constrained CUSAL-FC, presented in Section IV-A, with the state-of-the-art methods. A series of experiments are performed, mainly considering the influences of four aspects: (i) mixture model, (ii) noise level, (iii) number of corrupted bands and (iv) number of endmembers.

Fig. 2. The $R = 3$ (left) and $R = 6$ (right) USGS signatures chosen for simulation (reflectance versus band index).

Each image, of $50 \times 50$ pixels, is generated using either the linear mixing model (1) or the polynomial post-nonlinear mixing model (PPNMM) (20), where $n_t$ is a Gaussian noise of SNR $\in \{15, 35\}$ dB. The $R \in \{3, 6\}$ endmembers, as shown in Fig. 2, are drawn from the USGS digital spectral library [35]. These endmembers are defined over $L = 244$ continuous bands with the wavelength ranging from 0.2 µm to 3.0 µm. The abundance vectors $x_t$ are uniformly generated using a Dirichlet distribution as in [35], [36]. For PPNMM, the values of $b_t$ are generated uniformly in the interval $(-3, 3)$ according to [33]. To imitate the noisy bands in the real hyperspectral images, several bands in the generated data are corrupted by replacing the corresponding rows of $Y$ with random values within $[0, 1]$. The number of corrupted bands varies in the set $\{0, 20, 40, 60\}$.
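The data generation described above can be sketched as follows for the LMM case. This is an illustration under stated assumptions: a random matrix stands in for the USGS signatures, the SNR is taken as the ratio of mean signal power to noise variance, and the function name `make_lmm_image` is hypothetical.

```python
import numpy as np

def make_lmm_image(M, T, snr_db, n_corrupted, rng):
    """Generate Y = M X + N with Dirichlet abundances and corrupted bands."""
    L, R = M.shape
    # Uniform sampling on the simplex: columns satisfy ANC and ASC.
    X = rng.dirichlet(np.ones(R), size=T).T               # R x T
    clean = M @ X
    # Noise variance from the target SNR (assumed definition, see lead-in).
    noise_var = np.mean(clean ** 2) / 10.0 ** (snr_db / 10.0)
    Y = clean + rng.normal(0.0, np.sqrt(noise_var), size=clean.shape)
    # Replace randomly chosen rows (bands) by random values within [0, 1].
    bands = rng.choice(L, size=n_corrupted, replace=False)
    Y[bands, :] = rng.uniform(0.0, 1.0, size=(n_corrupted, T))
    return Y, X, bands

rng = np.random.default_rng(3)
M = rng.uniform(0.0, 1.0, size=(244, 3))   # stand-in for USGS signatures
Y, X, bands = make_lmm_image(M, T=50 * 50, snr_db=35, n_corrupted=20, rng=rng)
print(Y.shape, X.shape, np.sort(bands)[:5])
```

Replacing whole rows of $Y$ matches the outlier model used throughout the text, where an outlier is a spectral band rather than an individual pixel.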
The unmixing performance is evaluated using the abundance root mean square error (RMSE) [31], [37], defined by

$$\mathrm{RMSE} = \sqrt{\frac{1}{RT} \sum_{t=1}^{T} \| x_t - \hat{x}_t \|^2},$$

where $\hat{x}_t$ is the estimated abundance vector. Figs. 3 and 4 illustrate the average RMSE over 10 Monte-Carlo realizations, on the LMM and PPNMM data respectively. In the presence of outlier bands, the proposed CUSAL-FC algorithm outperforms all the compared methods in terms of RMSE, for different mixture models, noise levels and numbers of endmembers. The performance of the proposed algorithm also improves when the SNR increases.

The performance of the proposed sparsity-promoting CUSAL-SP, presented in Section IV-B, is compared with the sparsity-promoting SUnSAL-sparse, as well as with FCLS, on a series of data with sparse abundance matrices. The influences of (i) the number of corrupted bands and (ii) the sparsity level of the abundances are studied. Each image, of 15 × 15 pixels, is generated by the linear mixture model. The endmember matrix is composed of R = 62 USGS signatures, where the angle between any two different endmembers is larger than 10° [25]. The K nonzero entries in each abundance vector $x_t$ are generated by a Dirichlet distribution. The value of K (i.e., the indicator of the sparsity level) ranges from 4 to 20, while the number of corrupted bands varies in {0, 20, 40, 60}. We set the Gaussian noise to SNR = 30 dB, a level that is commonly present in real hyperspectral images according to [25]. For both sparsity-promoting algorithms, the regularization parameter λ is adjusted using the set {10^{-5}, 5·10^{-5}, 10^{-4}, 5·10^{-4}, 10^{-3}, 10^{-2}, 10^{-1}}.

Fig. 3. LMM data: The root mean square error (RMSE) with respect to the number of corrupted bands, averaged over ten Monte-Carlo realizations, for different numbers of endmembers and SNR. Panels: (a) SNR = 15, R = 3; (b) SNR = 15, R = 6; (c) SNR = 35, R = 3; (d) SNR = 35, R = 6. Compared methods: CUSAL-FC, SUnSAL-FCLS, FCLS, BayGBM, BayPPNMM, KFCLS, rNMF.

The unmixing performance with the sparsity-promoting algorithms is evaluated using the signal-to-reconstruction error (SRE), measured in decibels, according to [12], [25]. It is defined by

$$\mathrm{SRE} = 10 \log_{10} \left( \frac{\sum_{t=1}^{T} \| x_t \|_2^2}{\sum_{t=1}^{T} \| x_t - \hat{x}_t \|_2^2} \right).$$

The results, averaged over ten Monte-Carlo realizations, are illustrated in Fig. 5. Considering that the abundance matrix under estimation is sparse at different levels, we conclude the following. In the case without outlier bands, CUSAL-SP outperforms SUnSAL-sparse for K > 8 and FCLS for K > 12. When the number of outlier bands increases, the proposed CUSAL-SP algorithm generally provides the best unmixing quality with the highest SRE value, especially for K > 6.

Fig. 4. PPNMM data: The root mean square error (RMSE) with respect to the number of corrupted bands, averaged over ten Monte-Carlo realizations, for different numbers of endmembers and SNR. Panels: (a) SNR = 15, R = 3; (b) SNR = 15, R = 6; (c) SNR = 35, R = 3; (d) SNR = 35, R = 6. Compared methods: CUSAL-FC, SUnSAL-FCLS, FCLS, BayGBM, BayPPNMM, KFCLS, rNMF.

VI. EXPERIMENTS WITH REAL DATA

This section presents the performance of the proposed algorithms on a real hyperspectral image. We consider a 250 × 190 sub-image taken from the Cuprite mining image, acquired by the AVIRIS sensor when flying over Las Vegas, Nevada, USA. The image has been widely investigated in the literature [7], [25]. The raw data contains L = 224 bands, covering a wavelength range of 0.4–2.5 µm. Among them, 37 are relatively noisy with low SNR, namely bands 1–3, 105–115, 150–170, and 223–224. The geographic composition of this area is estimated to include up to 14 minerals [3]. Neglecting similar signatures, we consider 12 endmembers, as often investigated in the literature [7], [38]. The VCA technique is first applied to extract these endmembers on the clean image with L = 187 bands. Starting from these L = 187 bands, the noisy bands, randomly chosen from bands 1–3, 105–115, 150–170, and 223–224, are gradually included to form a series of input data. The experiments are therefore conducted with L = 187, 193, 199, 205, 211, 217, 223 and 224 bands.

Fig. 5. LMM data: The signal-to-reconstruction error (SRE) with respect to the sparsity level K, averaged over ten Monte-Carlo realizations. Comparison for various numbers of corrupted bands at SNR = 30 dB. Panels: (a) 0 corrupted bands; (b) 20 corrupted bands; (c) 40 corrupted bands; (d) 60 corrupted bands. Compared methods: CUSAL-SP, SUnSAL-sparse, FCLS.

Since ground-truth abundances are unknown, the performance is measured with the averaged spectral angle distance (SAD) between the input spectra $y_t$ and the reconstructed ones $\hat{y}_t$, as illustrated in Fig. 6, where the SAD is defined by

$$\mathrm{SAD} = \frac{1}{T} \sum_{t=1}^{T} \arccos \left( \frac{y_t^\top \hat{y}_t}{\| y_t \| \, \| \hat{y}_t \|} \right).$$

The estimated abundance maps using 187, 205 and 224 bands are given in Fig. 7, Fig. 8, and Fig. 9, respectively. In the absence of noisy bands (i.e., L = 187 bands), all the considered methods lead to satisfactory abundance maps, with BayPPNMM providing the smallest SAD. As the number of noisy bands increases, especially from L = 199 to L = 224, the unmixing performance of the state-of-the-art methods deteriorates drastically, while the proposed CUSAL yields a stable SAD. The obtained results confirm the good behavior of the proposed CUSAL algorithms and their robustness in the presence of corrupted spectral bands.

Fig. 6. Cuprite image: The averaged spectral angle distance (SAD) using different numbers of bands, computed without the noisy bands 1–3, 105–115, 150–170, and 223–224. Compared methods: SUnSAL-FCLS, FCLS, BayGBM, BayPPNMM, KFCLS, rNMF, CUSAL-FC.

VII. CONCLUSION

This paper presented a supervised unmixing algorithm based on the correntropy maximization principle. Two correntropy-based unmixing problems were addressed, the first with the non-negativity and sum-to-one constraints, and the second with the non-negativity constraint and a sparsity-promoting term. The alternating direction method of multipliers (ADMM) was investigated in order to solve the correntropy-based unmixing problems. The effectiveness and robustness of the proposed unmixing method were validated on synthetic and real hyperspectral images. Future work includes the generalization of the correntropy criterion to account for the multiple reflection phenomenon [31], [39], as well as incorporating nonlinear models [40].

ACKNOWLEDGMENT

This work was supported by the French ANR, grant HYPANEMA: ANR-12BS03-0033.
Fig. 7. Cuprite image: Estimated abundance maps using 187 clean bands. Left to right: sphene, alunite, buddingtonite, kaolinite, chalcedony, highway. Top to bottom: SUnSAL-FCLS, FCLS, BayGBM, BayPPNMM, KFCLS, rNMF, CUSAL-FC.

Fig. 8. Cuprite image: Estimated abundance maps using 205 bands, with 187 clean bands. Same legend as Fig. 7.

Fig. 9. Cuprite image: Estimated abundance maps using all the 224 bands, with 187 clean bands. Same legend as Fig. 7.

REFERENCES

[1] N. Keshava and J. F. Mustard, "Spectral unmixing," IEEE Signal Processing Magazine, vol. 19, no. 1, pp. 44–57, Jan. 2002.
[2] P. Honeine and C. Richard, "Geometric unmixing of large hyperspectral images: a barycentric coordinate approach," IEEE Transactions on Geoscience and Remote Sensing, vol. 50, no. 6, pp. 2185–2195, Jun. 2012.
[3] J. Nascimento and J. M. Bioucas-Dias, "Vertex component analysis: a fast algorithm to unmix hyperspectral data," IEEE Transactions on Geoscience and Remote Sensing, vol. 43, no. 4, pp. 898–910, Apr. 2005.
[4] M. Winter, "N-FINDR: an algorithm for fast autonomous spectral end-member determination in hyperspectral data," Proc. of SPIE: Imaging Spectrometry V, vol. 3753, no. 10, 1999.
[5] J. Li, A. Agathos, D. Zaharie, J. Bioucas-Dias, A. Plaza, and X. Li, "Minimum volume simplex analysis: A fast algorithm for linear hyperspectral unmixing," IEEE Transactions on Geoscience and Remote Sensing, vol. 53, no. 9, pp. 5067–5082, Sept. 2015.
[6] L. Miao and H. Qi, "Endmember extraction from highly mixed data using minimum volume constrained nonnegative matrix factorization," IEEE Transactions on Geoscience and Remote Sensing, vol. 45, no. 3, pp. 765–777, Mar. 2007.
[7] J. Chen, C. Richard, and P. Honeine, "Nonlinear unmixing of hyperspectral data based on a linear-mixture/nonlinear-fluctuation model," IEEE Transactions on Signal Processing, vol. 61, no. 2, pp. 480–492, Jan. 2013.
[8] J. Chen, C. Richard, and P. Honeine, "Nonlinear estimation of material abundances of hyperspectral images with ℓ1-norm spatial regularization," IEEE Transactions on Geoscience and Remote Sensing, vol. 52, no. 5, pp. 2654–2665, May 2014.
[9] D. Heinz and C. Chang, "Fully constrained least squares linear spectral mixture analysis method for material quantification in hyperspectral imagery," IEEE Transactions on Geoscience and Remote Sensing, vol. 39, no. 3, pp. 529–545, Mar. 2001.
[10] A. Huck, M. Guillaume, and J. Blanc-Talon, "Minimum dispersion constrained nonnegative matrix factorization to unmix hyperspectral data," IEEE Transactions on Geoscience and Remote Sensing, vol. 48, no. 6, pp. 2590–2602, Jun. 2010.
[11] J. M. Bioucas-Dias, A. Plaza, N. Dobigeon, M. Parente, Q. Du, P. Gader, and J. Chanussot, "Hyperspectral unmixing overview: Geometrical, statistical, and sparse regression-based approaches," IEEE Journal of Selected Topics in Applied Earth Observations and Remote Sensing, vol. 5, no. 2, pp. 354–379, 2012.
[12] J. M. Bioucas-Dias and M. A. Figueiredo, "Alternating direction algorithms for constrained sparse regression: Application to hyperspectral unmixing," in IEEE Workshop on Hyperspectral Image and Signal Processing: Evolution in Remote Sensing (WHISPERS), 2010, pp. 1–4.
[13] S. Boyd, N. Parikh, E. Chu, B. Peleato, and J. Eckstein, "Distributed optimization and statistical learning via the alternating direction method of multipliers," Foundations and Trends® in Machine Learning, vol. 3, no. 1, pp. 1–122, 2011.
[14] A. Zelinski and V. Goyal, "Denoising hyperspectral imagery and recovering junk bands using wavelets and sparse approximation," in IEEE International Conference on Geoscience and Remote Sensing Symposium (IGARSS), July 2006, pp. 387–390.
[15] J. Broadwater, R. Chellappa, A. Banerjee, and P. Burlina, "Kernel fully constrained least squares abundance estimates," in IEEE International Geoscience and Remote Sensing Symposium (IGARSS), 2007, pp. 4041–4044.
[16] J. Chen, C. Richard, and P. Honeine, "Estimating abundance fractions of materials in hyperspectral images by fitting a post-nonlinear mixing model," in Proc. IEEE Workshop on Hyperspectral Image and Signal Processing: Evolution in Remote Sensing, Jun. 2013.
[17] W. Liu, P. Pokharel, and J. C. Príncipe, "Correntropy: properties and applications in non-gaussian signal processing," IEEE Transactions on Signal Processing, vol. 55, no. 11, pp. 5286–5298, 2007.
[18] J. C. Principe, Information theoretic learning: Renyi's entropy and kernel perspectives. Springer Science & Business Media, 2010.
[19] J. J. Wang, X. Wang, and X. Gao, "Non-negative matrix factorization by maximizing correntropy for cancer clustering," BMC Bioinformatics, vol. 14, no. 1, p. 107, 2013.
[20] R. He, W. Zheng, and B. Hu, "Maximum correntropy criterion for robust face recognition," IEEE Transactions on Pattern Analysis and Machine Intelligence, vol. 33, no. 8, pp. 1561–1576, 2011.
[21] Y. Wang, C. Pan, S. Xiang, and F. Zhu, "Robust hyperspectral unmixing with correntropy-based metric," IEEE Transactions on Image Processing, vol. 24, no. 11, pp. 4027–4040, Nov. 2015.
[22] M. Nikolova and M. Ng, "Analysis of half-quadratic minimization methods for signal and image recovery," SIAM Journal on Scientific Computing, vol. 27, no. 3, pp. 937–966, 2005.
[23] C. L. Lawson and R. J. Hanson, Solving Least Squares Problems (Classics in Applied Mathematics). Society for Industrial Mathematics, 1987.
[24] H. Lantéri, M. Roche, O. Cuevas, and C. Aime, "A general method to devise maximum-likelihood signal restoration multiplicative algorithms with non-negativity constraints," Signal Processing, vol. 81, pp. 945–974, May 2001.
[25] M. D. Iordache, J. M. Bioucas-Dias, and A. Plaza, "Sparse unmixing of hyperspectral data," IEEE Transactions on Geoscience and Remote Sensing, vol. 49, no. 6, pp. 2014–2039, June 2011.
[26] M. D. Iordache, J. M. Bioucas-Dias, and A. Plaza, "Total variation spatial regularization for sparse hyperspectral unmixing," IEEE Transactions on Geoscience and Remote Sensing, vol. 50, no. 11, pp. 4484–4502, Nov. 2012.
[27] V. Vapnik, The Nature of Statistical Learning Theory. New York, NY, USA: Springer-Verlag, 1995.
[28] B. Chen and J. C. Príncipe, "Maximum correntropy estimation is a smoothed map estimation," IEEE Signal Processing Letters, vol. 19, no. 8, pp. 491–494, 2012.
[29] Z. Wu, S. Peng, B. Chen, and H. Zhao, "Robust hammerstein adaptive filtering under maximum correntropy criterion," Entropy, vol. 17, no. 10, p. 7149, 2015.
[30] R. Tibshirani, "Regression shrinkage and selection via the lasso," Journal of the Royal Statistical Society. Series B (Methodological), pp. 267–288, 1996.
[31] A. Halimi, Y. Altmann, N. Dobigeon, and J. Y. Tourneret, "Nonlinear unmixing of hyperspectral images using a generalized bilinear model," IEEE Transactions on Geoscience and Remote Sensing, vol. 49, no. 11, pp. 4153–4162, Nov. 2011.
[32] A. Halimi, Y. Altmann, N. Dobigeon, and J. Y. Tourneret, "Unmixing hyperspectral images using the generalized bilinear model," in IEEE International Conference on Geoscience and Remote Sensing Symposium (IGARSS), 2011, pp. 1886–1889.
[33] Y. Altmann, A. Halimi, N. Dobigeon, and J. Y. Tourneret, "Supervised nonlinear spectral unmixing using a postnonlinear mixing model for hyperspectral imagery," IEEE Transactions on Image Processing, vol. 21, no. 6, pp. 3017–3025, 2012.
[34] C. Févotte and N. Dobigeon, "Nonlinear hyperspectral unmixing with robust nonnegative matrix factorization," IEEE Transactions on Image Processing, vol. 24, no. 12, pp. 4810–4819, Dec. 2015.
[35] J. M. Bioucas-Dias and J. M. P. Nascimento, "Hyperspectral subspace identification," IEEE Transactions on Geoscience and Remote Sensing, vol. 46, no. 8, pp. 2435–2445, Aug. 2008.
[36] A. Halimi, N. Dobigeon, and J.-Y. Tourneret, "Unsupervised unmixing of hyperspectral images accounting for endmember variability," IEEE Transactions on Image Processing, vol. 24, no. 12, pp. 4904–4917, Dec. 2015.
[37] N. Yokoya, J. Chanussot, and A. Iwasaki, "Nonlinear unmixing of hyperspectral data using semi-nonnegative matrix factorization," IEEE Transactions on Geoscience and Remote Sensing, vol. 52, no. 2, pp. 1430–1437, Feb. 2014.
[38] X. Lu, H. Wu, Y. Yuan, P. Yan, and X. Li, "Manifold regularized sparse nmf for hyperspectral unmixing," IEEE Transactions on Geoscience and Remote Sensing, vol. 51, no. 5, pp. 2815–2826, 2013.
[39] W. Fan, B. Hu, J. Miller, and M. Li, "Comparative study between a new nonlinear model and common linear model for analysing laboratory simulated-forest hyperspectral data," International Journal of Remote Sensing, vol. 30, no. 11, pp. 2951–2962, 2009.
[40] A. Halimi, P. Honeine, and J. Bioucas-Dias, "Hyperspectral unmixing in presence of endmember variability, nonlinearity or mismodelling effects," http://arxiv.org/abs/1511.05698, pp. 1–32, Nov. 2015.
