The DIRHA-English corpus and related tasks for distant-speech recognition in domestic environments


Authors: Mirco Ravanelli, Maurizio Omologo

THE DIRHA-ENGLISH CORPUS AND RELATED TASKS FOR DISTANT-SPEECH RECOGNITION IN DOMESTIC ENVIRONMENTS

Mirco Ravanelli, Luca Cristoforetti, Roberto Gretter, Marco Pellin, Alessandro Sosi, Maurizio Omologo
Fondazione Bruno Kessler (FBK), 38123 Povo, Trento, Italy
(mravanelli,cristofo,gretter,pellin,alesosi,omologo)@fbk.eu

ABSTRACT

This paper introduces the contents and the possible usage of the DIRHA-ENGLISH multi-microphone corpus, recently realized under the EC DIRHA project. The reference scenario is a domestic environment equipped with a large number of microphones and microphone arrays distributed in space. The corpus is composed of both real and simulated material, and it includes 12 US and 12 UK English native speakers. Each speaker uttered different sets of phonetically-rich sentences, newspaper articles, conversational speech, keywords, and commands. From this material, a large set of 1-minute sequences was generated, which also includes typical domestic background noise as well as inter/intra-room reverberation effects. Dev and test sets were derived, which represent very valuable material for different studies on multi-microphone speech processing and distant-speech recognition. Various tasks and corresponding Kaldi recipes have already been developed. The paper reports a first set of baseline results obtained using different techniques, including Deep Neural Networks (DNN), aligned with the international state of the art.

Index Terms: distant speech recognition, microphone arrays, corpora, Kaldi, DNN

1. INTRODUCTION

During the last decade, much research has been devoted to improving Automatic Speech Recognition (ASR) performance [1]. As a result, ASR has recently been applied in several fields, such as web search, car control, automated voice answering, and radiological reporting, and it is currently used by millions of users worldwide.
Nevertheless, most state-of-the-art systems are still based on close-talking solutions, forcing the user to speak very close to a microphone-equipped device. Although this approach usually leads to better performance, it is easy to predict that, in the future, users will prefer to be freed from handling or wearing any device to access speech recognition services. There are indeed various real-life situations where a distant-talking (far-field)¹ interaction is more natural, convenient, and attractive [2]. In particular, amongst all the possible applications, an emerging field is speech-based domestic control, where users might prefer to interact freely with their home appliances without wearing or even handling any microphone-equipped device. This scenario was addressed under the EC DIRHA (Distant-speech Interaction for Robust Home Applications) project², which had the ultimate goal of developing voice-enabled automated home services based on Distant-Speech Recognition (DSR) in different languages.

¹ In the following, the same concept is referred to as "distant-speech".

Despite the growing interest in DSR, current technologies still exhibit a significant lack of robustness and flexibility, since the adverse acoustic conditions caused by non-stationary noise and acoustic reverberation make speech recognition significantly more challenging [3]. Although considerable progress has been made in multi-microphone front-end processing to feed ASR with an enhanced speech input [4, 5, 6, 7, 8], the performance loss observed from close-talking to distant-speech remains quite critical, even when the most advanced DNN-based back-end frameworks are adopted [9, 10, 11, 12, 13, 14].

To make further progress, a crucial step is the selection of data suitable to train and test the various speech processing, enhancement, and recognition algorithms.
Collecting and transcribing sufficiently large data sets to cover any possible application scenario is a prohibitive, time-consuming, and expensive task. In a domestic context, in particular, this issue becomes even more challenging than in other traditional ASR applications, due to the large variability that can be introduced when deploying such systems in different houses. In this context, an ideal system should be flexible enough in terms of microphone distribution in space, and in terms of other possible profiling actions. Moreover, it must be able to provide satisfactory behaviour immediately after its installation, and to improve its performance by learning from the environment and from the users.

² The research presented here has been partially funded by the European Union's 7th Framework Programme (FP7/2007-2013) under grant agreement no. 288121 DIRHA (for more details, please see http://dirha.fbk.eu).

In order to develop such solutions, the availability of high-quality, realistic, multi-microphone corpora represents one of the fundamental steps towards reducing the performance gap between close-talking and distant-speech interaction. Along this direction, strong efforts have recently been made by the international scientific community through the development of corpora and challenges, such as REVERB [15], CHiME [16, 17], and ASpIRE. Nevertheless, we feel that other complementary corpora and tasks are necessary for the research community to further boost technological advances in this field, for instance by providing a large number of "observations" of the same acoustic scene. The DIRHA-ENGLISH corpus complements the set of corpora previously collected under the DIRHA project in four other languages (i.e., Austrian German, Greek, Italian, Portuguese) [18].
It gives the chance of working on English, the most commonly used language in the ASR research community, with a very large number of microphone channels, a multi-room setting, and microphone arrays having different characteristics. Half of the material is based on simulations and half on real recordings, which allows one to assess recognition performance in real-world conditions. It is also worth mentioning that some portions of the corpus will be made publicly available, with free access, in the short term (as done with other data produced by the DIRHA consortium).

The purpose of this paper is to introduce the DIRHA-ENGLISH corpus as well as to provide some baseline results on phonetically-rich sentences, obtained using the Kaldi framework [19]. The resulting TIMIT-like phone recognition task can be seen as complementary to the WSJ-like and conversational speech tasks, which are also available for a future distribution.

The remainder of the paper is organized as follows. Section 2 provides a brief description of the DIRHA project, while Section 3 focuses on the contents and characteristics of the DIRHA-ENGLISH corpus. Section 4 describes the experimental tasks defined so far and the corresponding baseline results. Section 5 draws some conclusions.

2. THE DIRHA PROJECT

The EC DIRHA project, which started in January 2012 and lasted three years, had the goal of addressing acoustic scene analysis and distant-speech interaction in a home environment. In the following, some information is reported about the project goals, tasks, and corpora.

2.1. Goals and tasks

The application scenario targeted by the project is characterized by a quite flexible voice interactive system that users can talk to in any room and from any position in space. Exploiting a microphone network distributed in the different rooms of the apartment, the DIRHA system reacts properly when a command is given by the user.
The system is always listening, waiting for a specific keyword to "capture" in order to begin a dialogue. The dialogue triggered in this way gives the end user access to devices and services, e.g., opening/closing doors and windows, switching lights on/off, controlling the temperature, playing music, etc. Barge-in (to interact while music/speech prompts are played), speaker verification, and concurrent dialogue management (to support simultaneous dialogues with different users) are some of the advanced features characterizing the system. Finally, a very important aspect is the need to limit the rate of false alarms caused by possible misinterpretation of normal conversations, or of other sounds captured by the microphones, that do not carry any relevant message for the system.

Starting from these targeted functionalities, several experimental tasks were defined concerning the combination of front-end processing algorithms (e.g., speaker localization, acoustic echo cancellation, speech enhancement) and an ASR back end, in each language. Most of these tasks referred to voice interaction in the ITEA apartment³, situated in Trento (Italy), which was the main site for acoustic and speech data collection.

2.2. DIRHA corpora

The DIRHA corpora were designed to provide multi-microphone data sets that can be used to investigate a wide range of tasks, such as those mentioned above.

Some data sets were based on simulations, realized by applying a contamination method [20, 21, 22] that combines clean-speech signals, estimated Impulse Responses (IRs), and real multichannel background noise sequences, as described in [18]. Other corpora were recorded under real-world conditions.
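The contamination principle described above (clean speech convolved with a measured IR, plus real background noise) can be illustrated with a minimal single-channel sketch in pure Python. The function names and the optional rescaling of the noise to a target SNR are assumptions made for this illustration; the actual DIRHA procedure [18, 20] operates on multichannel IRs and noise sequences and is not reproduced here.

```python
import math
import random


def convolve(x, h):
    """Full linear convolution y = x * h (length len(x) + len(h) - 1)."""
    y = [0.0] * (len(x) + len(h) - 1)
    for i, xi in enumerate(x):
        for j, hj in enumerate(h):
            y[i + j] += xi * hj
    return y


def power(x):
    """Mean power of a signal."""
    return sum(v * v for v in x) / len(x)


def contaminate(clean, ir, noise, snr_db=None):
    """Hypothetical contamination of one microphone channel.

    clean:  close-talking speech samples
    ir:     impulse response from the talker position to this microphone
    noise:  background noise captured by the same microphone
    snr_db: if given, rescale the noise to this SNR (an assumption of this
            sketch; otherwise the noise is added as-is)
    """
    reverberated = convolve(clean, ir)
    if len(noise) < len(reverberated):
        raise ValueError("noise must be at least as long as the reverberated speech")
    noise = noise[: len(reverberated)]
    gain = 1.0
    if snr_db is not None:
        gain = math.sqrt(power(reverberated) / (power(noise) * 10 ** (snr_db / 10)))
    return [s + gain * n for s, n in zip(reverberated, noise)]


# Usage: a short random "speech" signal, a toy 4-tap IR and Gaussian noise.
rng = random.Random(0)
clean = [rng.uniform(-1.0, 1.0) for _ in range(400)]
ir = [1.0, 0.0, 0.5, 0.25]
noise = [rng.gauss(0.0, 1.0) for _ in range(500)]
noisy = contaminate(clean, ir, noise, snr_db=10.0)
```

In a multi-microphone setting, the same function would simply be applied once per channel, each with its own IR and its own noise track.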
Besides the DIRHA-ENGLISH corpus described in the next section, other corpora developed in the project are:
• The DIRHA Sim corpus described in [18] (30 speakers x 4 languages), which consists of 1-minute multichannel sequences including different acoustic events and speech utterances;
• A Wizard-of-Oz data set proposed in [23] to evaluate the performance of speech activity detection and speaker localization components;
• The DIRHA AEC corpus [24], which includes data specifically created for studies on Acoustic Echo Cancellation (AEC), to suppress known interferences diffused in the environment (e.g., played music);
• The DIRHA-GRID corpus [25], a multi-microphone, multi-room simulated data set derived by contaminating the GRID corpus [26] of short commands in the English language.

³ We would like to thank ITEA S.p.A. (Istituto Trentino per l'Edilizia Abitativa) for making available the apartment used for this research.

3. THE DIRHA-ENGLISH CORPUS

As done for the other four languages, the DIRHA-ENGLISH corpus also consists of a real and a simulated data set, the latter deriving from the contamination of the clean speech data set described next.

3.1. Clean speech material

The clean speech data set was realized in a recording studio of FBK, using professional equipment (e.g., a Neumann TLM 103 microphone) to obtain high-quality 96 kHz, 24-bit material. 12 UK and 12 US speakers were recorded (6 males and 6 females for each language). For each of them, the recorded material includes:
• 15 read commands;
• 15 spontaneous commands;
• 13 keywords;
• 48 phonetically-rich sentences (from the Harvard corpus);
• 66 or 67 sentences from WSJ-5k;
• 66 or 67 sentences from WSJ-20k;
• about 10 minutes of conversational speech (e.g., the subject was asked to talk about a recently seen movie).

The total duration is about 11 hours. All the utterances were manually annotated by an expert.
For the phonetically-rich sentences, an automatic phone segmentation procedure was applied, as done in [27]. An expert then manually checked the resulting phone transcriptions and time-aligned boundaries to confirm their reliability.

For both US and UK English, 6 speakers were assigned to the development set, while the other 6 speakers were assigned to the test set. These assignments were made so as to distribute the WSJ sentences as in the original task [28]. The data set contents are compliant with TIMIT specifications (e.g., file format).

3.2. The microphone network

The ITEA apartment is the reference home environment that was available during the project for data collection as well as for the development of prototypes and showcases. The flat comprises five rooms, which are equipped with a network of several microphones. Most of them are high-quality omnidirectional microphones (Shure MX391/O), connected to multichannel clocked pre-amp and A/D boards (RME Octamic II), which allowed perfectly synchronous sampling at 48 kHz with 24-bit resolution. The bathroom and two other rooms were equipped with a limited number of microphone pairs and triplets (i.e., 12 microphones overall), while the living-room and the kitchen comprise the largest concentration of sensors and devices.

Fig. 1. An outline of the microphone set-up adopted for the DIRHA-ENGLISH corpus. Blue small dots represent digital MEMS microphones, red ones refer to the channels considered in the following experimental activity, while black ones represent the other available microphones. The pictures on the right show the ceiling array and the two linear harmonic arrays installed in the living-room. [figure: floor plan of the living-room and kitchen (dimension labels 453 cm and 485 cm) with microphones LD07, L1C, L4L, L2R, LA6, L3L, KA6, the ceiling array and the harmonic arrays]
As shown in Figure 1, the living-room includes three microphone pairs, a microphone triplet, two 6-microphone ceiling arrays (one consisting of MEMS digital microphones), and two harmonic arrays (consisting of 15 electret microphones and 15 MEMS digital microphones, respectively). More details about this facility can be found in [18].

For this microphone network, a strong effort was devoted to characterizing the environment at the acoustic level through different IR estimation campaigns, leading to more than 10,000 IRs that describe sound propagation from different positions in space (and different possible orientations of the sound source) to any of the available microphones. The method adopted to estimate an IR consists of diffusing a known Exponential Sine Sweep (ESS) signal in the target environment and recording it with the available microphones [29]. The accuracy of the resulting IR estimation has a remarkable impact on speech recognition performance, as shown in [30]. For more details on the creation of the ITEA IR database, please refer to [30, 18]. Note that the microphone network considered for the DIRHA-ENGLISH data set (shown in Fig. 1) is limited to the living-room and the kitchen of the ITEA apartment, but it also includes harmonic arrays and MEMS microphones, which were unavailable in the other DIRHA corpora.

3.3. Simulated data set

The DIRHA-ENGLISH simulated data sets derive from the clean speech described in Section 3.1 and from the application of the contamination method discussed in [20, 30].

The resulting corpus consists of a large number of 1-minute sequences, each including a variable number of sentences uttered in the living-room under different noisy conditions. Four types of sequences have been created, corresponding to the following tasks:
1) Phonetically-rich sentences⁴;
2) WSJ 5k utterances;
3) WSJ 20k utterances;
4) Conversational speech (also including keywords and commands).
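The ESS measurement referenced in Section 3.2 [29] can be sketched as follows: an exponential sweep is generated, the "recorded" signal is convolved with an amplitude-compensated, time-reversed copy of the sweep, and the IR emerges as a peak delayed by the sweep length. This is a minimal pure-Python illustration of the general swept-sine technique under assumed parameters (sample rate, sweep band, and duration are arbitrary, and the helper names are hypothetical); it is not the FBK measurement pipeline, which also has to deal with the playback/recording chain and harmonic distortion products.

```python
import math


def ess(f1, f2, duration, fs):
    """Exponential sine sweep from f1 to f2 Hz lasting `duration` seconds."""
    n = int(round(duration * fs))
    r = math.log(f2 / f1)
    k = 2.0 * math.pi * f1 * duration / r
    return [math.sin(k * (math.exp(i / fs * r / duration) - 1.0)) for i in range(n)]


def inverse_filter(sweep, f1, f2, duration, fs):
    """Time-reversed sweep with an exponentially decaying envelope, which
    compensates the sweep's larger energy at low frequencies (about 6 dB/octave)."""
    r = math.log(f2 / f1)
    return [v * math.exp(-(i / fs) * r / duration) for i, v in enumerate(reversed(sweep))]


def convolve(x, h):
    """Full linear convolution."""
    y = [0.0] * (len(x) + len(h) - 1)
    for i, xi in enumerate(x):
        for j, hj in enumerate(h):
            y[i + j] += xi * hj
    return y


def estimate_ir(recorded, inv):
    """Deconvolve the recorded sweep; the linear IR starts at index len(inv) - 1
    (earlier samples would hold harmonic distortion products in a real room)."""
    return convolve(recorded, inv)[len(inv) - 1:]


# Usage: a toy "room" that only delays the sweep by 37 samples and halves it.
fs, duration, f1, f2 = 4000, 0.25, 50.0, 1500.0
sweep = ess(f1, f2, duration, fs)
inv = inverse_filter(sweep, f1, f2, duration, fs)
recorded = [0.0] * 37 + [0.5 * v for v in sweep]
ir = estimate_ir(recorded, inv)
peak = max(range(len(ir)), key=lambda i: abs(ir[i]))  # 37: the simulated delay
```

With a real recording, `ir` would contain the direct-path peak followed by the reflections of the room, which is the quantity later used for contamination.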
For each sequence, 62 microphone channels are available, as outlined in Section 3.2.

⁴ While noisy conditions are quite challenging for the WSJ and conversational parts of the DIRHA-English corpus, the phonetically-rich sequences are characterized by more favorable conditions, in order to make this material more suitable for studies on reverberation effects only.

3.4. Real data set

For the real recordings, each subject was positioned in the living-room and read the material from a tablet, standing still or sitting on a chair, in a given position. After each set, she/he was asked to move to a different position and take a different orientation. For each speaker, the recorded material corresponds to the same list of contents reported in Section 3.1 for the clean speech data set.

Note also that all the channels recorded through MEMS digital microphones were time-aligned with the others during a post-processing step, since using the same clock for all the platforms was not feasible due to the different settings and sampling frequency of the MEMS devices.

Once the whole real material was collected, 1-minute sequences were derived from it, in order to ensure a consistent sequence structure between the simulated and real data sets.

4. EXPERIMENTS AND RESULTS

This section describes the proposed task and the related baseline experiments concerning the US phonetically-rich portion of the DIRHA-English corpus.

4.1. ASR framework

4.1.1. Training and testing corpora

In this work, the training phase is accomplished with the training part of the TIMIT corpus [31]. For the DSR experiments, the original TIMIT dataset is reverberated using three impulse responses measured in the living-room. Moreover, some multichannel background noise sequences are added to the reverberated signals, in order to better match real-world conditions.
Both the impulse responses and the noise sequences are different from those used to generate the DIRHA-ENGLISH simulated data set.

The test phase is conducted using the real and the simulated phonetically-rich sentences of the DIRHA-English data set. In both cases, an oracle voice activity detector (VAD) is applied to each 1-minute sequence in the following experiments⁵, in order to avoid any possible bias due to inconsistent sentence boundaries. Finally, the speech sequences are down-sampled from 48 to 16 kHz.

4.1.2. Feature extraction

A standard feature extraction based on MFCCs is applied to the speech sentences. In particular, the signal is blocked into frames of 25 ms with a 10 ms frame shift and, for each frame, 13 MFCCs are extracted. The resulting features, together with their first- and second-order derivatives, are then arranged into a single observation vector of 39 components.

4.1.3. Acoustic model training

In the following experiments, three different acoustic models of increasing complexity are considered. The procedure adopted for training such models is the same as that used in the original s5 TIMIT Kaldi recipe [19]. The first baseline (mono) refers to a simple system characterized by 48 context-independent phones of the English language, each modeled by a three-state left-to-right HMM (using 1000 Gaussians overall). The second baseline (tri) is based on context-dependent phone modeling and on speaker adaptive training (SAT). Overall, 2.5k tied states with 15k Gaussians are employed. Finally, the DNN baseline (DNN), trained with Karel's recipe [32], is composed of 6 hidden layers of 1024 neurons, with a context window of 11 consecutive frames (5 before and 5 after the current frame) and an initial learning rate of 0.008.

4.1.4. Proposed task and evaluation

The original Kaldi recipe is based on a bigram language model estimated from the phone transcriptions available in the training set.
Conversely, we propose the adoption of a pure phone-loop (i.e., zero-gram) task, in order to avoid any non-linear influence and artifacts possibly originated by a language model. Our past experience [30, 33, 14] indeed suggests that, even though language models are certainly helpful in increasing recognition performance, a simple phone-loop task is more suitable for experiments focusing purely on the acoustic information.

⁵ Alternative tasks (not presented here) have been defined as well with the same material, in order to investigate VAD and ASR components together.

Fig. 2. The phrase "a chicken leg" uttered in close-talking and distant-talking scenarios, respectively. The closures (in red) are dimmed by the reverberation tail in the distant speech. [figure: close-talking and distant-talking panels, both annotated with the phone labels cl vcl g sil ax ch ih cl k ih n l eh]

Another difference from the original Kaldi recipe concerns the evaluation of silences and closures. In the evaluation phase, the standard Kaldi recipe (based on Sclite) maps the original 48 English phones into a reduced set of 39 units, as originally done in [34]. In particular, the six closures (bcl, dcl, gcl, kcl, pcl, tcl) are mapped to "optional silences", and possible deletions of such units are not scored as errors. These phones are thus likely to be counted as correct, since deletions of short closures occur very frequently. We believe that this aspect might introduce a bias in the evaluation metrics, especially for DSR tasks, where the reverberation tail makes the recognition of the closures nearly infeasible, as highlighted in Figure 2. For this reason, we propose to simply filter out all the silences and closures from both the reference and the hypothesized phone sequences. This leads to a performance reduction, since all the favorable optional silences added in the original recipe are avoided.
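A minimal sketch of the proposed scoring is given below: silences and closures are removed from both the reference and the hypothesis before computing the Levenshtein distance, and PER is the total number of edits divided by the number of remaining reference phones. The label set `FILTERED` is an assumption (it covers both the 48-phone labels `sil`, `cl`, `vcl` visible in Fig. 2 and the six TIMIT closure labels), and the phone sequences are hypothetical; this is an illustration, not the scoring script used for the reported results.

```python
# Hypothetical set of labels excluded from scoring: silence plus closures,
# written both with 48-phone labels (cl, vcl) and with TIMIT closure labels.
FILTERED = {"sil", "cl", "vcl", "bcl", "dcl", "gcl", "kcl", "pcl", "tcl"}


def edit_distance(ref, hyp):
    """Levenshtein distance (substitutions, deletions, insertions all cost 1)."""
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        cur = [i] + [0] * len(hyp)
        for j, h in enumerate(hyp, start=1):
            cur[j] = min(prev[j] + 1,             # deletion of r
                         cur[j - 1] + 1,          # insertion of h
                         prev[j - 1] + (r != h))  # substitution or match
        prev = cur
    return prev[-1]


def filtered_per(refs, hyps):
    """PER (%) after removing FILTERED labels from references and hypotheses."""
    errors = total = 0
    for ref, hyp in zip(refs, hyps):
        r = [p for p in ref if p not in FILTERED]
        h = [p for p in hyp if p not in FILTERED]
        errors += edit_distance(r, h)
        total += len(r)
    return 100.0 * errors / total


# Hypothetical example: the recognizer misses both closures and substitutes one vowel.
ref = "sil ax ch ih cl k ih n l eh vcl g sil".split()
hyp = "sil ax ch ih k ih n l ae g sil".split()
raw_errors = edit_distance(ref, hyp)  # 3: two closure deletions + one substitution
per = filtered_per([ref], [hyp])      # 1 substitution over 9 scored phones
```

Because filtering also removes silences and closures from the denominator, these units can be counted neither as errors nor as correctly recognized phones, which is why this scoring yields higher PER values than the optional-silence mapping.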
Despite this reduction, a more coherent estimation of the recognition rates for phones such as occlusives and vowels is reached.

4.2. Baseline results

This section provides some baseline results⁶, which might be useful to other researchers as a future reference. In the following sections, results based on close-talking and distant-speech input are presented.

⁶ Part of the experiments were conducted with a Tesla K40 donated by the NVIDIA Corporation.

4.2.1. Close-talking performance

Table 1 reports the performance obtained by decoding the clean sentences recorded in the FBK recording studio with either a phone bigram language model or a simple phone-loop. Results are provided using both the standard Kaldi s5 recipe and the proposed one, in order to highlight the discrepancies in performance that can be observed across these experimental settings.

| Recipe | LM type | Mono | Tri | DNN |
|---|---|---|---|---|
| Standard Kaldi s5 | Bigram LM | 36.4 | 23.2 | 20.1 |
| Standard Kaldi s5 | Phone-loop | 39.4 | 26.3 | 22.4 |
| Proposed evaluation | Bigram LM | 42.7 | 28.6 | 24.6 |
| Proposed evaluation | Phone-loop | 46.7 | 32.5 | 27.5 |

Table 1. Phone Error Rate (PER%) obtained applying different Kaldi recipes to the phonetically-rich sentences (dev set) acquired in the FBK recording studio.

As expected, these results highlight that system performance improves significantly when passing from a simple monophone-based model to a more competitive DNN baseline. Moreover, as outlined in Sec. 4.1.4, applying the original Kaldi evaluation produces an apparent relative error reduction of about 20%, which does not correspond to any real system improvement. The next experiments are based on the pure phone-loop grammar scored with the proposed evaluation method.

4.2.2. Single distant-microphone performance

In this section, the results obtained with a single distant microphone are discussed. Table 2 shows the performance achieved with some of the microphones highlighted in Fig. 1.
| Mic. ID | Sim. Mono | Sim. Tri | Sim. DNN | Real Mono | Real Tri | Real DNN |
|---|---|---|---|---|---|---|
| LA6 | 68.8 | 57.7 | 51.6 | 70.5 | 60.9 | 55.1 |
| L1C | 67.4 | 58.5 | 52.4 | 70.3 | 61.7 | 55.6 |
| LD07 | 67.5 | 58.1 | 53.2 | 71.5 | 62.6 | 57.3 |
| KA6 | 76.7 | 67.3 | 64.0 | 80.5 | 73.6 | 70.5 |

Table 2. PER(%) obtained with single distant microphones for both the simulated and the real data sets of phonetically-rich sentences.

The results clearly highlight that, with distant-speech input, ASR performance is dramatically reduced compared to the close-talking case. As already observed with close-talking results, the DNN significantly outperforms the other acoustic modeling approaches. This is consistent for all the considered channels, with both simulated and real data sets. The performance on real data is slightly worse than that achieved on simulated data, due to the lower SNR characterizing the real recording sessions.

It is also worth noting that almost all the channels provide similar performance and a comparable trend over the considered acoustic models. Only the kitchen microphone (KA6) corresponds to a more challenging situation, since all the utterances of both real and simulated data sets were pronounced in the living-room.

4.2.3. Delay-and-sum beamforming performance

This section reports the results obtained with standard delay-and-sum beamforming [4] applied to both the ceiling and the harmonic arrays of the living-room.

| Array ID | Sim. Mono | Sim. Tri | Sim. DNN | Real Mono | Real Tri | Real DNN |
|---|---|---|---|---|---|---|
| Ceiling arr. | 66.2 | 55.9 | 50.4 | 65.9 | 55.9 | 50.6 |
| Harmonic arr. | 66.2 | 56.0 | 51.8 | 66.2 | 56.2 | 51.5 |

Table 3. PER(%) obtained with delay-and-sum beamforming applied to both the ceiling and the linear harmonic array.

Table 3 shows that beamforming helps improve system performance. For instance, with real data the PER drops from 55.1% with the single microphone LA6 to 50.6% when delay-and-sum beamforming is applied to the ceiling array signals.
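The delay-and-sum principle [4] can be sketched as follows: each channel is advanced by its propagation delay relative to the closest microphone, so that the direct-path components line up, and the aligned channels are then averaged. This is a simplified time-domain sketch under assumed conditions (known source position, free-field propagation at c = 343 m/s, delays rounded to whole samples), with hypothetical function names; practical implementations typically estimate the delays from the signals themselves and apply fractional delays.

```python
import math


def steering_delays(mic_positions, source, fs, c=343.0):
    """Integer arrival delays (samples) of each mic relative to the closest one."""
    dists = [math.dist(m, source) for m in mic_positions]
    d_min = min(dists)
    return [round((d - d_min) / c * fs) for d in dists]


def delay_and_sum(channels, delays):
    """Advance each channel by its delay and average the aligned samples."""
    n = min(len(ch) - d for ch, d in zip(channels, delays))
    return [sum(ch[d + i] for ch, d in zip(channels, delays)) / len(channels)
            for i in range(n)]


# Usage: three mics on a line, source 10 m away along the array axis.
mics = [(0.0, 0.0, 0.0), (0.343, 0.0, 0.0), (0.686, 0.0, 0.0)]
delays = steering_delays(mics, (10.0, 0.0, 0.0), fs=16000)  # [32, 16, 0]
signal = [math.sin(2 * math.pi * 440 * i / 16000) for i in range(200)]
channels = [[0.0] * d + signal + [0.0] * (max(delays) - d) for d in delays]
enhanced = delay_and_sum(channels, delays)  # matches `signal` up to rounding here
```

In this noise-free toy case the output simply recovers the source; with real recordings, averaging N aligned channels attenuates components that are uncorrelated across microphones (roughly by a factor N in power), while reflections arriving from other directions are only partially suppressed.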
Even though the ceiling array is composed of only six microphones, it ensures slightly better performance than the less compact 13-element harmonic array. This result might be due both to the better position of the ceiling array, which often ensures a direct path stronger than the reflections, and to the adoption of higher-quality microphones. The performance improvement introduced by delay-and-sum beamforming is larger with real data, confirming that spatial filtering techniques are particularly helpful when the acoustic conditions are less stationary and predictable.

4.2.4. Microphone selection performance

The DIRHA-English corpus can also be used for microphone selection experiments. It is thus of interest to provide lower- and upper-bound performance for a microphone selection technique applied to this data set. Table 4 compares the results achieved with random and with oracle selection of the microphone for each phonetically-rich sentence. For this selection, we considered the six microphones of the living-room, which are depicted as red dots in Figure 1.

| Mic. sel. | Sim. Mono | Sim. Tri | Sim. DNN | Real Mono | Real Tri | Real DNN |
|---|---|---|---|---|---|---|
| Random | 67.6 | 57.7 | 52.4 | 70.3 | 61.0 | 55.4 |
| Oracle | 56.6 | 47.1 | 42.0 | 60.3 | 49.6 | 44.0 |

Table 4. PER(%) obtained with random and oracle microphone selection.

Results show that a proper microphone selection is crucial for improving the performance of a DSR system. The gap between the upper bound, based on an oracle channel selection, and the lower bound, based on a random selection of the microphone, is particularly large. This confirms the importance of suitable microphone selection criteria. A proper channel selection has great potential even when compared with a microphone combination based on delay-and-sum beamforming: for instance, a PER of 44.0% is obtained with an oracle channel selection, against a PER of 50.6% achieved with the ceiling array.
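The two bounds in Table 4 can be illustrated with the sketch below: given, for every sentence, the number of phone errors obtained by decoding each candidate microphone, the oracle picks the best channel per sentence (which requires the reference transcription, hence it is only an upper bound), while the random strategy draws one channel uniformly. The error matrix is hypothetical and the function names are illustrative only.

```python
import random


def oracle_choice(errors):
    """Index of the microphone with the fewest phone errors, per sentence."""
    return [min(range(len(row)), key=row.__getitem__) for row in errors]


def random_choice(errors, rng):
    """One uniformly drawn microphone per sentence."""
    return [rng.randrange(len(row)) for row in errors]


def per_for_choice(errors, ref_lengths, choice):
    """Corpus-level PER (%) when sentence s is decoded from microphone choice[s]."""
    total_errors = sum(row[m] for row, m in zip(errors, choice))
    return 100.0 * total_errors / sum(ref_lengths)


# Hypothetical phone-error counts: 3 sentences x 3 microphones, 20 phones each.
errors = [[5, 3, 4],
          [2, 6, 1],
          [7, 7, 2]]
ref_lengths = [20, 20, 20]
oracle = oracle_choice(errors)                            # [1, 2, 2]
oracle_per = per_for_choice(errors, ref_lengths, oracle)  # 6 errors / 60 phones = 10.0
random_per = per_for_choice(errors, ref_lengths, random_choice(errors, random.Random(0)))
```

A practical selection criterion (e.g., one of the measures studied in [6]) must pick a channel without access to the errors, so its PER is expected to fall between these two bounds.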
5. CONCLUSIONS AND FUTURE WORK

This paper described the DIRHA-ENGLISH multi-microphone corpus and the first baseline results concerning the use of the phonetically-rich sentence data sets. Overall, the experimental results show the expected performance trend, well aligned with past works in this field.

In research studies on DSR, there are many advantages in using phonetically-rich material with such a large number of microphone channels. For instance, it becomes possible to focus more closely on the impact of specific front-end processing techniques on particular phone categories.

The corpus also includes WSJ and conversational speech data sets that may be publicly distributed⁷ and used in future DSR challenges. These data sets can be very helpful to investigate other key topics such as multi-microphone hypothesis combination based on confusion networks, multiple lattices, and rescoring. Forthcoming works include the development of baselines and related recipes for MEMS microphones, for WSJ and conversational sequences, as well as for UK English.

6. CORPUS RELEASE

Some 1-minute sequences can be found at http://dirha.fbk.eu/DIRHA_English. Access to the data used in this work, and to related documents, will soon be possible through an FBK server, with modalities that will be reported at http://dirha.fbk.eu. In the future, other data sets will be made publicly available, together with corresponding documentation and recipes, and with instructions to allow comparison of systems and maximize scientific insights.

⁷ The distribution of the WSJ data set is under discussion with LDC.

7. REFERENCES

[1] Dong Yu and Li Deng, Automatic Speech Recognition: A Deep Learning Approach, Springer, 2015.
[2] M. Wölfel and J. McDonough, Distant Speech Recognition, Wiley, 2009.
[3] E. Hänsler and G. Schmidt, Speech and Audio Processing in Adverse Environments, Springer, 2008.
[4] M. Brandstein and D. Ward, Microphone Arrays, Springer, Berlin, 2000.
[5] W. Kellermann, "Beamforming for Speech and Audio Signals," in Handbook of Signal Processing in Acoustics, Springer, 2008.
[6] M. Wolf and C. Nadeu, "Channel selection measures for multi-microphone speech recognition," Speech Communication, vol. 57, pp. 170–180, Feb. 2014.
[7] S. Makino, T. Lee, and H. Sawada, Blind Speech Separation, Springer, 2010.
[8] P. A. Naylor and N. D. Gaubitch, Speech Dereverberation, Springer, 2010.
[9] P. Swietojanski, A. Ghoshal, and S. Renals, "Hybrid acoustic models for distant and multichannel large vocabulary speech recognition," in Proc. of ASRU 2013, 2013, pp. 285–290.
[10] Y. Liu, P. Zhang, and T. Hain, "Using neural network front-ends on far field multiple microphones based speech recognition," in Proc. of ICASSP 2014, 2014, pp. 5542–5546.
[11] F. Weninger, S. Watanabe, J. Le Roux, J. R. Hershey, Y. Tachioka, J. Geiger, B. Schuller, and G. Rigoll, "The MERL/MELCO/TUM System for the REVERB Challenge Using Deep Recurrent Neural Network Feature Enhancement," in Proc. of the IEEE REVERB Workshop, 2014.
[12] M. Mimura, S. Sakai, and T. Kawahara, "Reverberant speech recognition combining deep neural networks and deep autoencoders," in Proc. of the IEEE REVERB Workshop, 2014.
[13] A. Schwarz, C. Huemmer, R. Maas, and W. Kellermann, "Spatial diffuseness features for DNN-based speech recognition in noisy and reverberant environments," in Proc. of ICASSP 2015, 2015.
[14] M. Ravanelli and M. Omologo, "Contaminated speech training methods for robust DNN-HMM distant speech recognition," in Proc. of INTERSPEECH 2015, 2015.
[15] K. Kinoshita, M. Delcroix, T. Yoshioka, T. Nakatani, E. Habets, R. Haeb-Umbach, V. Leutnant, A. Sehr, W. Kellermann, R. Maas, S. Gannot, and B. Raj, "The REVERB challenge: A common evaluation framework for dereverberation and recognition of reverberant speech," in Proc. of WASPAA 2013, 2013, pp. 1–4.
[16] J. Barker, E. Vincent, N. Ma, H. Christensen, and P. Green, "The PASCAL CHiME speech separation and recognition challenge," Computer Speech and Language, vol. 27, no. 3, pp. 621–633, 2013.
[17] J. Barker, R. Marxer, E. Vincent, and S. Watanabe, "The third CHiME Speech Separation and Recognition Challenge: Dataset, task and baselines," in Proc. of ASRU 2015, 2015.
[18] L. Cristoforetti, M. Ravanelli, M. Omologo, A. Sosi, A. Abad, M. Hagmueller, and P. Maragos, "The DIRHA simulated corpus," in Proc. of LREC 2014, 2014, pp. 2629–2634.
[19] D. Povey, A. Ghoshal, G. Boulianne, L. Burget, O. Glembek, N. Goel, M. Hannemann, P. Motlicek, Y. Qian, P. Schwarz, J. Silovsky, G. Stemmer, and K. Vesely, "The Kaldi Speech Recognition Toolkit," in Proc. of ASRU 2011, 2011.
[20] M. Matassoni, M. Omologo, D. Giuliani, and P. Svaizer, "Hidden Markov model training with contaminated speech material for distant-talking speech recognition," Computer Speech & Language, vol. 16, no. 2, pp. 205–223, 2002.
[21] L. Couvreur, C. Couvreur, and C. Ris, "A corpus-based approach for robust ASR in reverberant environments," in Proc. of INTERSPEECH 2000, 2000, pp. 397–400.
[22] T. Haderlein, E. Nöth, W. Herbordt, W. Kellermann, and H. Niemann, "Using Artificially Reverberated Training Data in Distant-Talking ASR," 2005, vol. 3658 of Lecture Notes in Computer Science, pp. 226–233, Springer.
[23] A. Brutti, M. Ravanelli, P. Svaizer, and M. Omologo, "A speech event detection/localization task for multiroom environments," in Proc. of HSCMA 2014, 2014, pp. 157–161.
[24] E. Zwyssig, M. Ravanelli, P. Svaizer, and M. Omologo, "A multi-channel corpus for distant-speech interaction in presence of known interferences," in Proc. of ICASSP 2015, 2015, pp. 4480–4485.
[25] M. Matassoni, R. Astudillo, A. Katsamanis, and M. Ravanelli, "The DIRHA-GRID corpus: baseline and tools for multi-room distant speech recognition using distributed microphones," in Proc. of INTERSPEECH 2014, 2014, pp. 1616–1617.
[26] M. Cooke, J. Barker, S. Cunningham, and X. Shao, "An audio-visual corpus for speech perception and automatic speech recognition," Journal of the Acoustical Society of America, vol. 120, no. 5, pp. 2421–2424, November 2006.
[27] F. Brugnara, D. Falavigna, and M. Omologo, "Automatic segmentation and labeling of speech based on hidden Markov models," Speech Communication, vol. 12, no. 4, pp. 357–370, 1993.
[28] Douglas B. Paul and Janet M. Baker, "The design for the Wall Street Journal-based CSR corpus," in Proc. of the Workshop on Speech and Natural Language, 1992, pp. 357–362.
[29] A. Farina, "Simultaneous measurement of impulse response and distortion with a swept-sine technique," in Proc. of the 108th AES Convention, 2000, pp. 18–22.
[30] M. Ravanelli, A. Sosi, P. Svaizer, and M. Omologo, "Impulse response estimation for robust speech recognition in a reverberant environment," in Proc. of EUSIPCO 2012, 2012, pp. 1668–1672.
[31] J. S. Garofolo, L. F. Lamel, W. M. Fisher, J. G. Fiscus, D. S. Pallett, and N. L. Dahlgren, "DARPA TIMIT Acoustic Phonetic Continuous Speech Corpus CDROM," 1993.
[32] A. Ghoshal and D. Povey, "Sequence discriminative training of deep neural networks," in Proc. of INTERSPEECH 2013, 2013.
[33] M. Ravanelli and M. Omologo, "On the selection of the impulse responses for distant-speech recognition based on contaminated speech training," in Proc. of INTERSPEECH 2014, 2014, pp. 1028–1032.
[34] K.-F. Lee and H.-W. Hon, "Speaker-independent phone recognition using hidden Markov models," IEEE Transactions on Acoustics, Speech and Signal Processing, vol. 37, no. 11, pp. 1641–1648, Nov. 1989.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

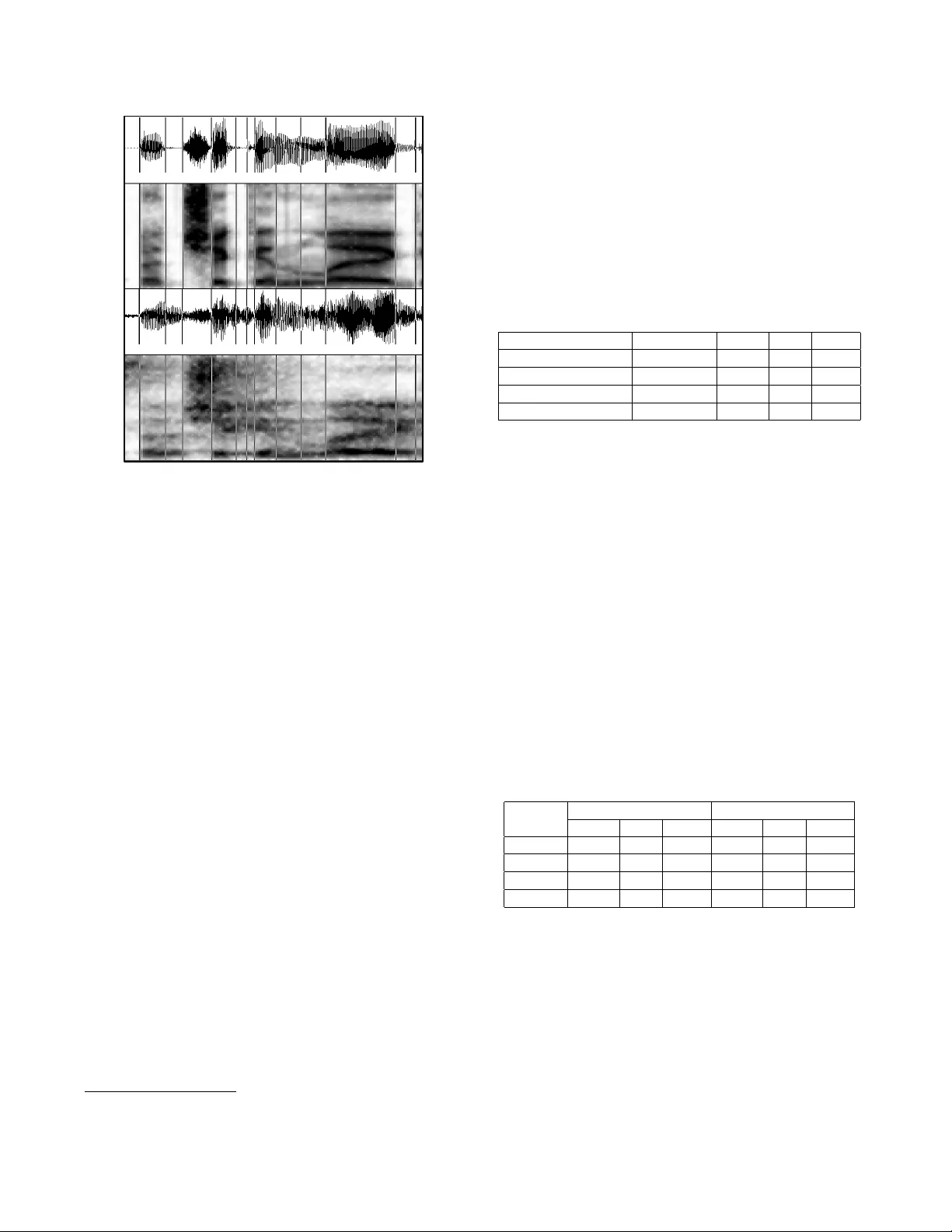

Leave a Comment