Generating Nontrivial Melodies for Music as a Service

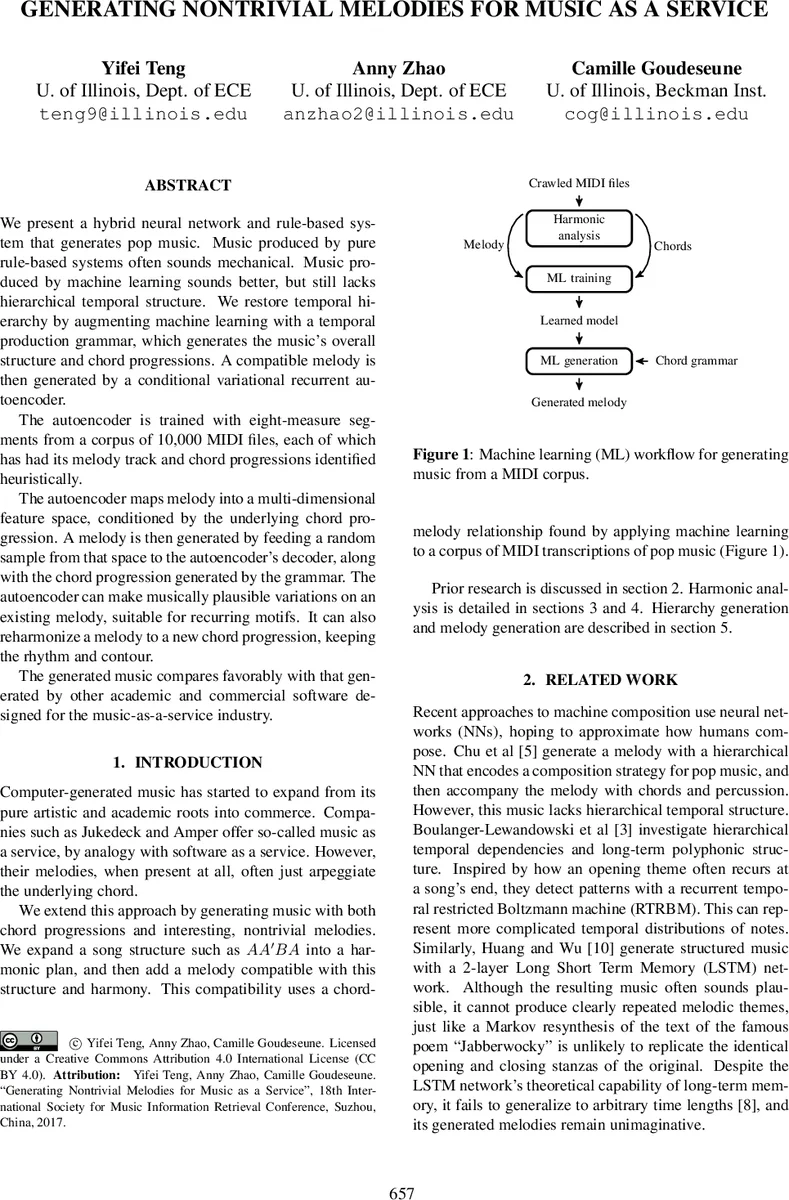

We present a hybrid neural network and rule-based system that generates pop music. Music produced by pure rule-based systems often sounds mechanical. Music produced by machine learning sounds better, but still lacks hierarchical temporal structure. We restore temporal hierarchy by augmenting machine learning with a temporal production grammar, which generates the music’s overall structure and chord progressions. A compatible melody is then generated by a conditional variational recurrent autoencoder. The autoencoder is trained with eight-measure segments from a corpus of 10,000 MIDI files, each of which has had its melody track and chord progressions identified heuristically. The autoencoder maps melody into a multi-dimensional feature space, conditioned by the underlying chord progression. A melody is then generated by feeding a random sample from that space to the autoencoder’s decoder, along with the chord progression generated by the grammar. The autoencoder can make musically plausible variations on an existing melody, suitable for recurring motifs. It can also reharmonize a melody to a new chord progression, keeping the rhythm and contour. The generated music compares favorably with that generated by other academic and commercial software designed for the music-as-a-service industry.

💡 Research Summary

The paper presents a hybrid architecture for generating pop‑style music that combines a rule‑based temporal generative grammar with a conditional variational recurrent autoencoder (CVRAE). The authors argue that purely rule‑based systems produce structurally coherent pieces but suffer from mechanical‑sounding melodies, while pure machine‑learning approaches capture local musicality but lack long‑range hierarchical organization. To address both shortcomings, the system first creates a high‑level harmonic scaffold using a temporal generative grammar. This grammar encodes common pop forms (e.g., A A′ B A), supports symbol binding for repeated sections, and introduces controlled Gaussian perturbations to generate variations of a motif while preserving the underlying chord progression.

For the melodic component, the authors build a CVRAE trained on eight‑measure excerpts extracted from a corpus of 10,000 MIDI files (approximately 1.9 million measures). Melody tracks are identified automatically by a two‑stage scoring system that combines a rubric (instrument type, note density, pitch range) with an entropy measure of pitch distribution, achieving a 15 % error rate against manually labeled ground truth. Each melody is quantized to sixteenth‑note resolution and encoded as a one‑hot vector of pitch offsets relative to the tonic (−16 to +16 semitones) plus a binary “attack” flag. Chord information is encoded per measure using one‑hot scale‑degree vectors and Boolean channels for chord quality, supporting up to two chords per measure.

The CVRAE architecture consists of 12 bidirectional GRU layers for the encoder and 12 for the decoder (24 recurrent layers total), with residual connections every third layer to improve gradient flow. The latent space is modeled as a multivariate Gaussian; during training a KL‑divergence term encourages the posterior to match a standard normal distribution. The authors employ a sigmoid‑shaped KL warm‑up schedule, which they found reduces reconstruction loss more effectively than linear scaling. The network is implemented in TensorFlow and trained for four days on an NVIDIA Tesla K80 GPU, using ELU activations and gated recurrent units for computational efficiency.

During generation, a chord progression produced by the grammar is supplied as a conditioning vector at every recurrent layer. A random sample drawn from the learned Gaussian latent space is then fed to the decoder together with the chord conditioning, yielding a novel melody that respects the given harmony. Because the latent representation can be perturbed independently of the chord sequence, the system can “reharmonize” an existing melody—changing the chord progression while preserving rhythmic contour and melodic shape. Moreover, by reusing the same symbolic section identifier with different Gaussian perturbations, the model produces coherent variations of a motif across repeated sections, addressing the lack of long‑term thematic development in many prior neural music generators.

The authors evaluate the system both quantitatively (reconstruction loss, KL divergence) and qualitatively through listening tests. Compared against commercial music‑as‑a‑service platforms such as Jukedeck and Amper, as well as academic baselines like hierarchical LSTMs and recurrent temporal RBMs, the proposed method scores higher on perceived musicality, structural coherence, and melodic interest. Human listeners particularly note the presence of recognizable motifs and sensible variations, which are absent in many baseline outputs.

In summary, the paper’s contributions are threefold: (1) a temporal generative grammar that explicitly models high‑level song form and enables controlled motif repetition; (2) a conditional variational recurrent autoencoder that learns a probabilistic latent space for melodies conditioned on chord progressions; and (3) a demonstration that combining these components yields pop music with both realistic harmonic structure and non‑trivial, thematically coherent melodies, making it a promising approach for scalable music‑as‑a‑service applications.

Comments & Academic Discussion

Loading comments...

Leave a Comment