A Quantum Approach to Subset-Sum and Similar Problems

In this paper, we study the subset-sum problem by using a quantum heuristic approach similar to the verification circuit of quantum Arthur-Merlin games. Under certain stated assumptions, we show that the exact solution of the subset-sum problem may be obtained in polynomial time and that an exponential speed-up over classical algorithms may be possible. We give a numerical example and discuss the complexity of the approach and its further application to the knapsack problem.

💡 Research Summary

The paper proposes a quantum‑heuristic algorithm for the Subset‑Sum problem, inspired by the verification circuits used in quantum Arthur‑Merlin (AM) games. The authors claim that, under certain assumptions, the exact solution can be obtained in polynomial time, potentially offering an exponential speed‑up over classical methods. The algorithm proceeds in five main steps:

- Encoding as Phases – Each integer v_j from the input set V is encoded into a single‑qubit rotation gate R_j = diag(1, e^{i2πv_j}), with the v_j presumably rescaled so that every subset sum lies in [0, 1) (for unscaled integers the factor e^{i2πv_j} would be trivially 1). The tensor product of all R_j yields a diagonal unitary U whose eigenvalues are e^{iφ_j}, where φ_j encodes the sum of a particular subset of V. Thus, every possible subset sum appears as an eigenphase of U.

-

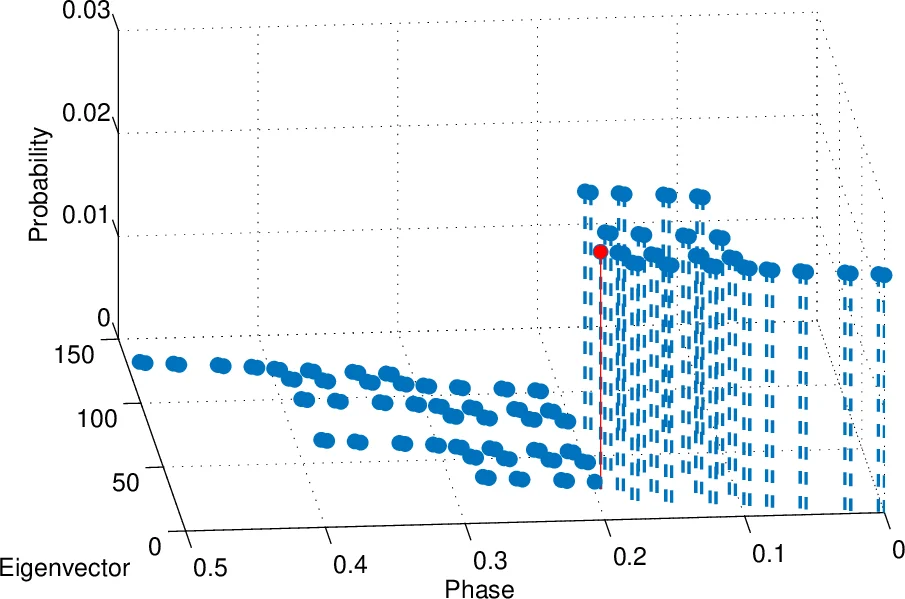

Phase Estimation – Starting from a uniform superposition over all 2^n subsets, the quantum phase estimation (PEA) algorithm is applied to U. The result is a joint state |φ_j⟩⊗|j⟩, i.e., the phase (the subset sum) stored in a “phase register” and the corresponding subset index stored in a “label register”.

- Amplitude Amplification (AA) for Feasibility – The algorithm defines a “good” set L = {j | φ_j < W} and a “bad” set L′ = {j | φ_j ≥ W}. An oracle F_φ flips the sign of states belonging to L, and a diffusion operator S amplifies them. Repeated application of G = S F_φ increases the probability of measuring a state from L. The authors assume that the ratio |L′|/|L| is polynomial in n (Assumption 1), which would keep the number of AA iterations polynomial.

- Finding the Maximum Feasible Sum – After AA, the state is approximately a uniform superposition over all feasible subsets. To extract the maximum φ_j (i.e., the largest subset sum ≤ W), the authors propose a conditional AA scheme that measures the most significant bits of the phase register one by one. If a measured bit is 0, they apply AA to amplify the sub‑space where that bit is 1; if the bit remains 0 after a few iterations, they fix it to 0 and move to the next bit. This process relies on Assumption 2, which states that each bit of the optimal phase φ_max has a non‑negligible (≥ 1/poly(n)) probability of being 1 in the post‑AA distribution.

- Output – Once the binary representation of φ_max is reconstructed, the corresponding subset index j is read from the label register, yielding the exact solution to the Subset‑Sum instance.
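The phase-encoding step can be checked classically on a small instance. The following is a minimal sketch, not the authors' implementation: the instance `values`, the target `W`, and the normalization `scale` (a power of two larger than the total sum, so that all phases fall in [0, 1)) are assumptions introduced here for illustration. It builds the diagonal of U with Kronecker products, reads the subset sums back from the eigenphases, and then picks the largest feasible sum by brute force, which is the answer the quantum procedure is meant to return.

```python
import itertools
import numpy as np


def phase_unitary_diagonal(values, scale):
    """Diagonal of U = R_1 (x) ... (x) R_n with R_j = diag(1, exp(2*pi*i*v_j/scale))."""
    diag = np.array([1.0 + 0.0j])
    for v in values:
        r_j = np.array([1.0, np.exp(2j * np.pi * v / scale)])
        diag = np.kron(diag, r_j)  # first value ends up as the most significant bit
    return diag


def subset_sums(values):
    """Subset sums in basis-state order (first value = most significant bit)."""
    return np.array([
        sum(v for v, bit in zip(values, bits) if bit)
        for bits in itertools.product((0, 1), repeat=len(values))
    ])


values = [3, 5, 6, 7]   # hypothetical instance, not the paper's example
scale = 32              # assumed normalization: a power of two above sum(values)
W = 16                  # hypothetical target

diag = phase_unitary_diagonal(values, scale)
phases = (np.angle(diag) / (2 * np.pi)) % 1.0           # eigenphases in [0, 1)
recovered = np.rint(phases * scale).astype(int) % scale  # phases back to integer sums

feasible = recovered < W                                 # the "good" set L
best_label = int(np.argmax(np.where(feasible, recovered, -1)))
best_sum = int(recovered[best_label])
print(f"label {best_label:04b} -> sum {best_sum}")
```

The brute-force `argmax` stands in for steps 3 to 5; it scans all 2^n labels and is only meant to make the encoding concrete.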

The paper includes a brief numerical example with a tiny instance and mentions that the same technique can be adapted to the knapsack problem.
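To make the amplitude-amplification step concrete, here is a small state-vector simulation on a toy instance. It is a sketch under stated assumptions: the instance and target are hypothetical (they are not the paper's numerical example), the phase register is idealized so that each label's subset sum is known exactly, and the helper names `amplify` and `optimal_iterations` are introduced here. The oracle flips the sign of the good states and the diffusion operator reflects about the uniform state, as in G = S F_φ.

```python
import itertools
import numpy as np


def amplify(good_mask, iterations):
    """Apply G = S F `iterations` times to the uniform state; return amplitudes."""
    n_states = good_mask.size
    psi = np.full(n_states, 1.0 / np.sqrt(n_states))
    state = psi.copy()
    for _ in range(iterations):
        state = np.where(good_mask, -state, state)   # F: flip sign of good states
        state = 2.0 * psi * (psi @ state) - state    # S = 2|psi><psi| - I
    return state


def optimal_iterations(n_good, n_states):
    """Standard iteration count floor(pi / (4*theta)); requires n_good > 0."""
    theta = np.arcsin(np.sqrt(n_good / n_states))
    return int(np.floor(np.pi / (4.0 * theta)))


values = [3, 5, 6, 7]   # hypothetical instance
W = 4                   # hypothetical target: only the sums 0 and 3 are feasible

sums = np.array([
    sum(v for v, bit in zip(values, bits) if bit)
    for bits in itertools.product((0, 1), repeat=len(values))
])
good = sums < W

k = optimal_iterations(int(good.sum()), good.size)
final = amplify(good, k)
p_before = float(good.mean())                 # success probability without AA
p_after = float(np.sum(final[good] ** 2))     # success probability after k rounds
print(k, p_before, p_after)
```

With 2 good states out of 16, two rounds are enough here; the count grows as the square root of 2^n/|L|, which is why the size of L matters for the complexity claim.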

Critical Evaluation

Assumptions: The algorithm’s polynomial‑time claim hinges on two strong assumptions. Assumption 1 (|L′|/|L| = poly(n)) essentially requires that at least a 1/poly(n) fraction of all 2^n subsets have sums below the target W. In the worst case (e.g., when W is small compared with the typical element of V, so that only a few small subsets are feasible) this is false; the fraction of feasible subsets can be exponentially small, causing the AA step to need O(√(2^n/|L|)) iterations, i.e., exponential time. Assumption 2 demands that each bit of the optimal sum appears with probability at least 1/poly(n) after AA, which can be expected to hold only for specially structured or uniformly random inputs, not for arbitrary instances of the NP‑complete problem.
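The dependence of the AA cost on |L| can be estimated numerically. The sketch below is illustrative only: the instance (30 values from 100 to 129) and the target are assumptions chosen here so that few subsets are feasible, and `count_feasible` and `aa_iterations` are hypothetical helper names. It counts the subsets with sum below W using a standard counting dynamic program and then evaluates the usual estimate (π/4)·√(2^n/|L|) for the number of AA rounds.

```python
import math


def count_feasible(values, w):
    """Number of subsets of positive integers `values` with sum strictly below w."""
    if w <= 0:
        return 0
    counts = [0] * w          # counts[s] = number of subsets with sum exactly s
    counts[0] = 1             # the empty subset
    for v in values:
        # Descending order so that each value is used at most once.
        for s in range(w - 1, v - 1, -1):
            counts[s] += counts[s - v]
    return sum(counts)


def aa_iterations(n, n_good):
    """Estimate of AA rounds, (pi/4) * sqrt(2^n / |L|), rounded up."""
    return math.ceil(math.pi / 4 * math.sqrt(2 ** n / n_good))


values = list(range(100, 130))   # hypothetical instance with n = 30
W = 250                          # hypothetical small target

n_good = count_feasible(values, W)
rounds = aa_iterations(len(values), n_good)
print(f"|L| = {n_good} of 2^{len(values)} subsets, about {rounds} AA rounds")
```

In this instance only subsets of at most two elements are feasible, so |L| grows polynomially in n while 2^n grows exponentially, and the estimated number of rounds grows roughly like 2^(n/2).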

Implementation Complexity: Phase estimation requires controlled‑U^{2^k} operations, one for each qubit k of the phase register. Since U encodes the sums as phases proportional to v_j, these powers demand rotations with angles proportional to v_j·2^k, and resolving every subset sum exactly requires the smallest rotation angles to be exponentially fine. Realizing such high‑precision rotation gates may require a circuit depth that grows exponentially with n, which would negate the claimed speed‑up. Moreover, the oracle F_φ that distinguishes φ_j < W from φ_j ≥ W must compare a quantum‑encoded real number with a classical threshold, a non‑trivial operation that again incurs substantial overhead.
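The precision requirement can be written out explicitly. The following is a short derivation under an assumed normalization that is not taken from the paper: the phases are v_j/s with s = 2^m chosen larger than the total sum, and m denotes the number of phase-register qubits.

```latex
% Assumed normalization: s = 2^m > \sum_j v_j, with m phase-register qubits.
U^{2^k}
  = \bigotimes_{j=1}^{n}
    \begin{pmatrix} 1 & 0 \\ 0 & e^{2\pi i \, 2^k v_j / 2^m} \end{pmatrix},
  \qquad k = 0, 1, \dots, m-1 .

% Each rotation angle is an integer multiple of the finest angle
\theta_{\min} = \frac{2\pi}{2^m},
  \qquad
  m = \Big\lceil \log_2 \Big( 1 + \sum_{j=1}^{n} v_j \Big) \Big\rceil .
```

Under this assumption the finest angle shrinks exponentially in m, that is, inversely with the total sum of the inputs, so the cost of synthesizing these rotations to the required accuracy has to be included in any gate-count estimate.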

Complexity Analysis: The authors’ analysis treats the AA iteration count as O(poly(n)) under Assumption 1, but they provide a rigorous bound neither on the number of PEA qubits (m = O(log W)) nor on the gate count for each controlled power of U. Consequently, the overall gate complexity is not shown to be polynomial in the worst case. The paper also overlooks error accumulation from finite‑precision phase estimation and decoherence, which are critical for any near‑term quantum implementation.

Comparison to Existing Work: The approach resembles known quantum algorithms that use Grover search to find a subset with a given sum, or quantum walks for subset‑sum, which achieve at most a quadratic speed‑up. The claim of exponential speed‑up is unsupported because the algorithm starts from a superposition over all 2^n subsets and relies on manipulating phases with exponential precision, a capability that is not available in realistic quantum hardware.

Experimental Validation: Only a toy example with a handful of qubits is presented; there is no empirical evidence that the method scales, nor any discussion of resource estimates for realistic problem sizes (e.g., n ≈ 50–100). The lack of simulation or hardware results makes it difficult to assess practical feasibility.

Conclusion

The paper introduces an elegant conceptual framework that combines phase encoding, quantum phase estimation, and amplitude amplification to tackle Subset‑Sum. While the construction is theoretically interesting, its reliance on unrealistic distributional assumptions and on operations that demand exponential precision undermines the claim of polynomial‑time quantum advantage. In its current form, the algorithm does not provide a provable exponential speed‑up for general NP‑complete instances, and substantial further work would be needed to address the highlighted gaps before the approach could be considered a breakthrough in quantum algorithms for Subset‑Sum or knapsack problems.
