ChimpCheck: Property-Based Randomized Test Generation for Interactive Apps

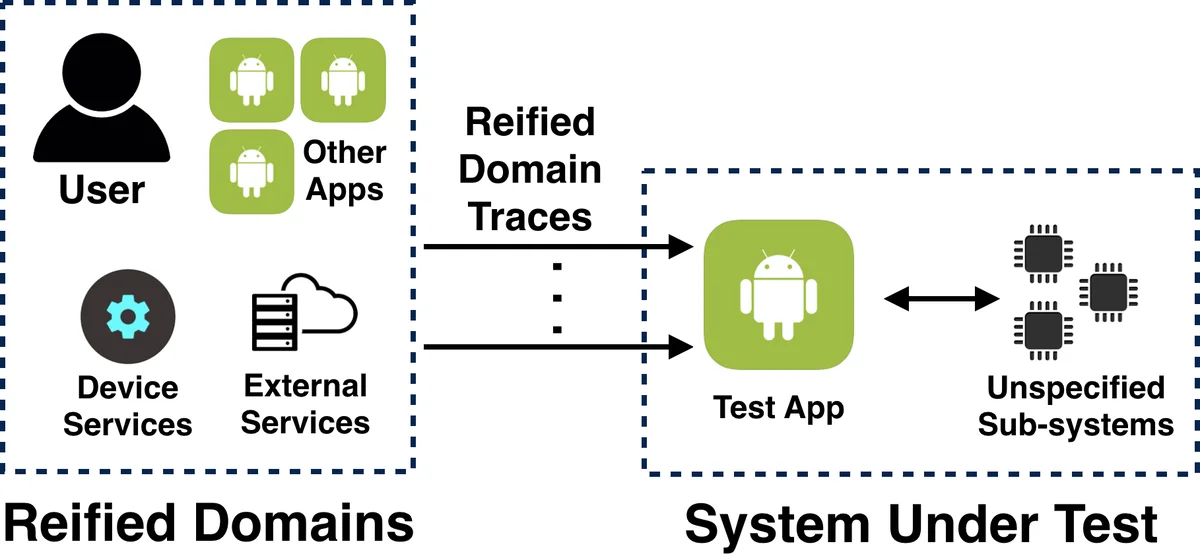

We consider the problem of generating relevant execution traces to test rich interactive applications. Rich interactive applications, such as apps on mobile platforms, are complex, stateful, and often distributed systems where sufficiently exercising the app with user-interaction (UI) event sequences to expose defects is both hard and time-consuming. In particular, there is a fundamental tension between brute-force random UI exercising tools, which are fully automated but offer low relevance, and UI test scripts, which are manual but offer high relevance. In this paper, we consider a middle way: enabling a seamless fusion of scripted and randomized UI testing. This fusion is prototyped in a testing tool called ChimpCheck for programming, generating, and executing property-based randomized test cases for Android apps. Our approach realizes this fusion by offering a high-level, embedded domain-specific language for defining custom generators of simulated user-interaction event sequences. What follows is a combinator library built on industrial-strength frameworks for property-based testing (ScalaCheck) and Android testing (Android JUnit and Espresso) to implement property-based randomized testing for Android development. Driven by real, reported issues in open-source Android apps, we show, through case studies, how ChimpCheck enables expressing effective testing patterns in a compact manner.

💡 Research Summary

The paper tackles a fundamental challenge in testing rich interactive applications—especially Android apps—by reconciling the low relevance of fully automated random UI exercisers with the high manual effort required for scripted UI tests. The authors propose a “fused” approach that combines human‑written domain knowledge with property‑based random generation, and they materialize this idea in a prototype tool called ChimpCheck.

ChimpCheck’s core contribution is a domain‑specific language (DSL) that treats user‑interaction event sequences as first‑class UI traces. The language defines primitive events (Click, Type, Rotate, Sleep, etc.) and combinators for sequencing (:>>), non‑deterministic choice (<+>), and interruptible sequencing (*>>). On top of this formal trace language, the tool builds trace generators—ScalaCheck generators that can produce potentially infinite families of UI traces. By writing a generator, a developer can describe a whole class of test scenarios (e.g., valid and invalid login flows) rather than a single concrete script.
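To make the idea of a trace language concrete, here is a minimal Python model of events, sequencing, and choice, plus a sampler that turns one trace description into concrete event sequences. It is only a sketch of the concept: the class names (`Seq`, `Choice`), the `sample` function, and the widget names are illustrative assumptions, not ChimpCheck's actual Scala API.

```python
import random
from dataclasses import dataclass
from typing import Tuple, Union


@dataclass(frozen=True)
class Click:
    target: str


@dataclass(frozen=True)
class Type:
    target: str
    text: str


@dataclass(frozen=True)
class Rotate:
    pass


@dataclass(frozen=True)
class Seq:
    """Hypothetical stand-in for sequencing (t1 :>> t2)."""
    first: "Trace"
    second: "Trace"


@dataclass(frozen=True)
class Choice:
    """Hypothetical stand-in for non-deterministic choice (t1 <+> t2)."""
    left: "Trace"
    right: "Trace"


Event = Union[Click, Type, Rotate]
Trace = Union[Click, Type, Rotate, Seq, Choice]


def sample(trace: Trace, rng: random.Random) -> Tuple[Event, ...]:
    """Resolve every choice at random and flatten to a concrete event sequence."""
    if isinstance(trace, Seq):
        return sample(trace.first, rng) + sample(trace.second, rng)
    if isinstance(trace, Choice):
        return sample(rng.choice([trace.left, trace.right]), rng)
    return (trace,)  # a primitive event


# One description covers both the valid and the invalid login attempt.
login = Seq(
    Seq(
        Type("username", "alice"),
        Choice(Type("password", "correct-pw"), Type("password", "wrong-pw")),
    ),
    Click("login"),
)

rng = random.Random(0)
for _ in range(3):
    print(sample(login, rng))
```

A single `login` value stands for a set of concrete scripts; each call to `sample` picks one member of that set.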

The integration with ScalaCheck provides the forAll combinator, which samples traces from a generator and executes each sample on an Android device or emulator via a driver built on Android JUnit and Espresso (the “ChimpDriver”). Execution failures are reported not only with traditional stack traces but also with the exact UI trace that led to the failure, giving developers a concise, reproducible description of the bug.
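The sample-execute-report loop described above can be sketched in a few lines of Python. Everything here is an assumption made for illustration: `FakeDriver` stands in for a real device driver, the toy app's crash condition is invented, and `for_all` only mimics the shape of a property-based `forAll`, not ScalaCheck's implementation.

```python
import random


def gen_trace(rng):
    """Generator: a random-length sequence of events from a small alphabet."""
    alphabet = ["click:login", "type:username", "rotate", "sleep"]
    return [rng.choice(alphabet) for _ in range(rng.randint(1, 6))]


class FakeDriver:
    """Stand-in for a device driver; the toy app crashes on rotate right after typing."""

    def run(self, trace):
        previous = None
        for event in trace:
            if event == "rotate" and previous == "type:username":
                raise RuntimeError("activity recreated while input in flight")
            previous = event


def for_all(gen, driver, samples=100, seed=0):
    """Sample traces, run each one, and return (trace, error) for the first failure."""
    rng = random.Random(seed)
    for _ in range(samples):
        trace = gen(rng)
        try:
            driver.run(trace)
        except Exception as err:
            return trace, err
    return None


failure = for_all(gen_trace, FakeDriver())
if failure:
    trace, err = failure
    # Report the UI trace alongside the error, so the failure can be replayed.
    print("failing trace:", " :>> ".join(trace))
    print("error:", err)
```

Returning the trace together with the exception is the point of the design: the trace is a compact reproduction recipe, whereas a stack trace alone says where the app failed but not how the user got there.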

Beyond generating traces, ChimpCheck supports property‑based assertions. Within a chimpCheck { … } block, developers can write predicates on UI state (e.g., isDisplayed, isClickable) and on internal application state (e.g., mediaPlayerIsPlaying). The framework evaluates these predicates at the appropriate points in the trace, allowing detection of subtle logical errors that do not manifest as crashes.
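As a rough illustration of checking a property that relates UI state to internal state, the sketch below evaluates a predicate after every event of a trace. The toy app, its seeded rotation bug, and the names `ui_matches_player` and `check` are all hypothetical; they model the idea rather than ChimpCheck's predicate API.

```python
import random


class FakePlayerApp:
    """Toy app: a play/stop button backed by a player. Rotation resets only the button."""

    def __init__(self):
        self.button_shows_stop = False  # UI state
        self.player_is_playing = False  # internal state

    def apply(self, event):
        if event == "click_toggle":
            self.player_is_playing = not self.player_is_playing
            self.button_shows_stop = self.player_is_playing
        elif event == "rotate":
            # Seeded bug: the view is rebuilt with its default label.
            self.button_shows_stop = False


def ui_matches_player(app):
    """Property: the button shows 'stop' exactly when the player is playing."""
    return app.button_shows_stop == app.player_is_playing


def check(trace, prop):
    """Run a trace, evaluating prop after every event; return the failing prefix or None."""
    app = FakePlayerApp()
    for i, event in enumerate(trace):
        app.apply(event)
        if not prop(app):
            return trace[: i + 1]
    return None


rng = random.Random(0)
for _ in range(50):
    trace = [rng.choice(["click_toggle", "rotate"]) for _ in range(5)]
    bad = check(trace, ui_matches_player)
    if bad:
        print("property violated after:", bad)
        break
```

The bug in this toy app never raises an exception, so a crash-only random tester would not notice it; the property check does.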

The paper validates the approach with two case studies drawn from real open‑source Android applications. The first study examines a music‑streaming app’s login flow: the generator inserts screen rotations, app suspensions, and resumptions at random positions, exposing crashes and UI inconsistencies that would be missed by pure random testing or by manually scripted tests. The second study examines the play/stop toggle: a generator drives the app to a logged‑in state and then repeatedly toggles playback while asserting that the UI toggle state matches the underlying MediaPlayer state. Together, the studies illustrate that a few lines of DSL code can express complex, relevant test families and can expose faults that purely random or purely scripted testing would likely miss.
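The first case study's pattern, a fixed script with interrupts injected at random positions, can be approximated as follows. This is a simplified sketch: the `interruptible` helper, the event names, and the rule of at most one interrupt before each step are assumptions for illustration, and the precise semantics of ChimpCheck's `*>>` combinator may differ.

```python
import random

# Hypothetical interrupt events that may be interleaved with the script.
INTERRUPTS = ["rotate", "suspend_resume"]


def interruptible(steps, rng, p=0.3):
    """Keep the scripted steps in order; before each step, maybe insert one interrupt."""
    trace = []
    for step in steps:
        if rng.random() < p:
            trace.append(rng.choice(INTERRUPTS))
        trace.append(step)
    return trace


login_script = ["type:username", "type:password", "click:login"]

rng = random.Random(0)
for _ in range(3):
    print(interruptible(login_script, rng))
```

The scripted steps keep the test relevant (it always reaches the login button), while the random interrupts probe lifecycle handling at points a hand-written script would rarely cover.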

Key contributions listed by the authors are: (1) a formal core language for UI traces, (2) the notion of trace generators that lift scripting to sets of sequences (including infinite sets), (3) an implementation that reuses ScalaCheck’s sampling machinery and Espresso’s execution capabilities, (4) empirical evidence that the fused approach yields compact yet powerful test specifications, and (5) a vision that the same ideas can be ported to other interactive platforms such as iOS or web applications.

In the discussion, the authors outline future directions: automated synthesis or learning of generators, richer models of interrupt events, integration with large‑scale distributed testing infrastructures, and tighter coupling with static analysis to guide generator design. Overall, the paper presents a novel, practical framework that bridges the gap between fully automated random testing and manual UI scripting, offering developers a scalable way to inject domain knowledge into property‑based random testing of interactive applications.
