Learning to Synthesize a 4D RGBD Light Field from a Single Image

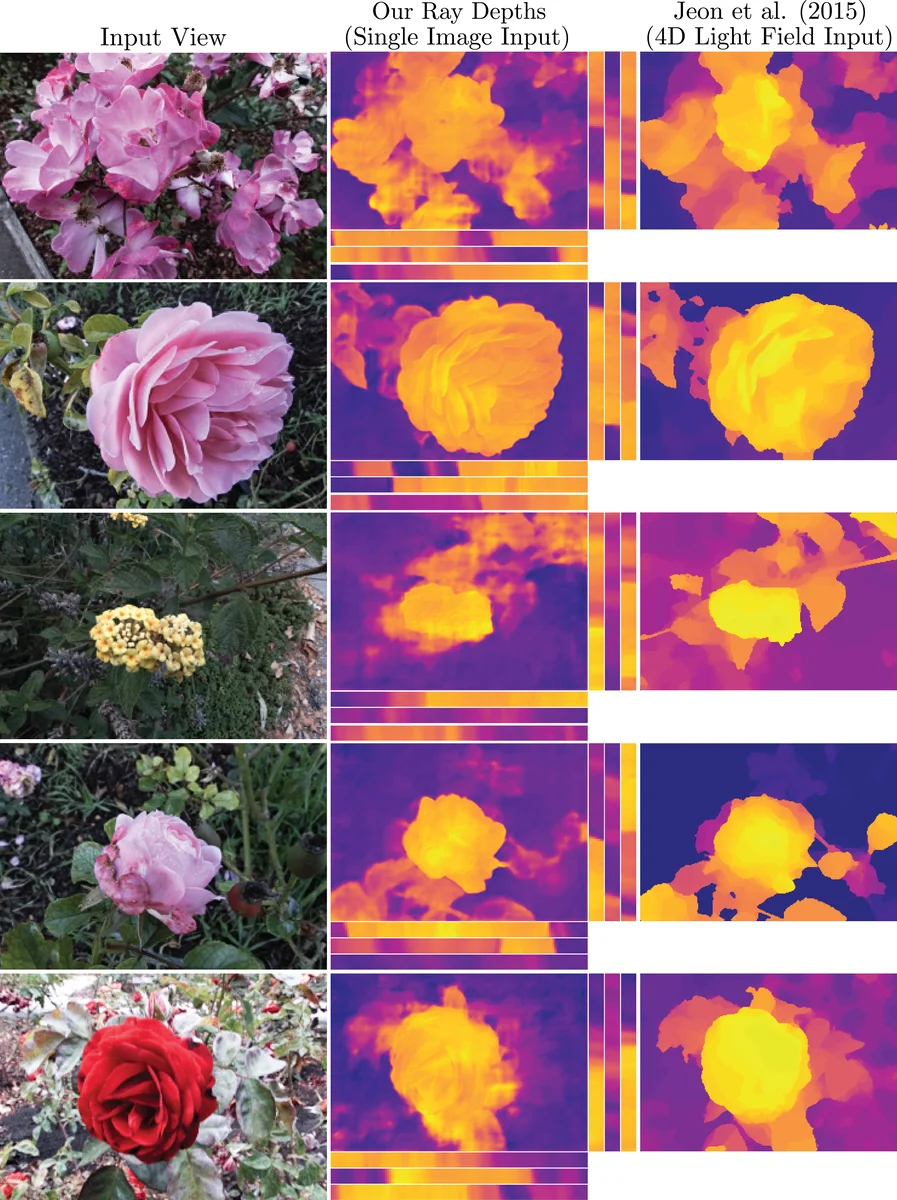

We present a machine learning algorithm that takes as input a 2D RGB image and synthesizes a 4D RGBD light field (color and depth of the scene in each ray direction). For training, we introduce the largest public light field dataset, consisting of over 3300 plenoptic camera light fields of scenes containing flowers and plants. Our synthesis pipeline consists of a convolutional neural network (CNN) that estimates scene geometry, a stage that renders a Lambertian light field using that geometry, and a second CNN that predicts occluded rays and non-Lambertian effects. Our algorithm builds on recent view synthesis methods, but is unique in predicting RGBD for each light field ray and improving unsupervised single image depth estimation by enforcing consistency of ray depths that should intersect the same scene point. Please see our supplementary video at https://youtu.be/yLCvWoQLnms

💡 Research Summary

The paper introduces a novel approach for “local light‑field synthesis,” where a single 2D RGB photograph is expanded into a full 4‑dimensional RGB‑D light field (color and depth for every ray). To train such a system the authors first release the largest public light‑field dataset to date: 3,343 plenoptic captures of flowers and plants taken with a Lytro Illum camera. Each capture contains 376 × 541 spatial samples and 14 × 14 angular samples; for experiments an 8 × 8 angular grid fully inside the aperture is used. The dataset is diverse in species, occlusion complexity, and natural illumination, providing a realistic benchmark for view synthesis and unsupervised geometry learning.
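The reduction from the 14 × 14 captured views to an 8 × 8 grid can be sketched as a simple array crop. This is only an illustration: the `(U, V, H, W, C)` array layout, the function name `crop_angular`, and the choice of a centered crop are assumptions, since the summary says only that the grid lies fully inside the aperture.

```python
import numpy as np

def crop_angular(lf, size=8):
    """Keep the central size x size views of a light field.

    lf is assumed to be laid out as (U, V, H, W, C): two angular axes,
    two spatial axes, then color channels (hypothetical layout).
    """
    u0 = (lf.shape[0] - size) // 2   # first angular row to keep
    v0 = (lf.shape[1] - size) // 2   # first angular column to keep
    return lf[u0:u0 + size, v0:v0 + size]
```

For a 14 × 14 angular grid this keeps angular indices 3 through 10 on each axis, discarding the outer views that are most likely to be vignetted by the aperture.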

The synthesis pipeline is factorized into three stages, each implemented as a convolutional neural network (CNN) or a differentiable renderer:

- Depth Estimation Network (d) – Given the central view L(x, 0), the network predicts a depth map for every angular coordinate u (i.e., D(x, u) for each of the 8 × 8 viewpoints). This “per‑ray depth” representation is more expressive than a single depth map and enables consistency constraints across views.

- Lambertian Rendering (r) – Using the predicted depths and the input image, a physically‑based renderer synthesizes a Lambertian approximation of the light field L_r(x, u). Because Lambertian surfaces preserve color along epipolar lines, this step provides a clean baseline that isolates geometry‑induced warping errors.

- Occlusion & Non‑Lambertian Refinement (o) – A second CNN takes L_r and D as input and learns to correct rays that are occluded in the reference view or exhibit non‑Lambertian effects such as specularities, translucency, and shadows. The output is the final RGB‑D light field \(\hat{L}(x, u)\).
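The Lambertian rendering stage can be illustrated with a minimal sketch in a "flatland" setting (one spatial axis, one angular axis, grayscale). It assumes the warp takes the form L_r(x, u) = L(x + u·D(x, u), 0), with D expressed as disparity in pixels per unit of u; this form is consistent with the sheared-line geometry described in this summary but is not stated explicitly here. The function name `render_lambertian` and the use of linear interpolation are illustrative choices.

```python
import numpy as np

def render_lambertian(central, depth, us):
    """Warp a central view into each angular position (flatland sketch).

    central: (X,) intensities of the central view L(x, 0)
    depth:   (U, X) per-ray disparities D(x, u), pixels per unit of u
    us:      (U,) angular coordinates, with 0 marking the central view
    """
    central = np.asarray(central, dtype=float)
    depth = np.asarray(depth, dtype=float)
    xs = np.arange(central.shape[0], dtype=float)
    out = np.empty((len(us), central.shape[0]))
    for i, u in enumerate(us):
        # Assumed warp: the ray (x, u) sees the central-view sample at x + u * D(x, u).
        src = xs + u * depth[i]
        # Linear interpolation; np.interp clamps samples that fall outside the image.
        out[i] = np.interp(src, xs, central)
    return out
```

With u = 0 the warp is the identity, so the central row of the output reproduces the input image. Because every ray is filled by sampling the single input view, rays that should see content hidden in that view receive wrong colors, which is the gap the refinement network is meant to close.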

A key technical contribution is the depth‑consistency regularization ψ_c. In a light field, rays that intersect the same 3D point lie on lines of constant slope in the (x, u) domain. The authors enforce that depths along these sheared lines be equal, implemented as an L1 loss between D(x, u) and D(x + D(x, u), u − 1). This regularizer addresses two common failure modes of unsupervised depth learning: (i) texture‑less regions where photometric loss provides no gradient, and (ii) occluded points where the correct depth would cause sampling of the occluder and thus increase reconstruction error. By penalizing depth discontinuities along the physically correct epipolar geometry, the network learns meaningful depth even without ground‑truth supervision. A total‑variation term ψ_tv further smooths depth maps.
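As a rough illustration of ψ_c, the sketch below evaluates the L1 difference between D(x, u) and D(x + D(x, u), u − 1) in the same flatland setting (one spatial axis, one angular axis). The function name `depth_consistency_l1`, the `(U, X)` array layout, the linear interpolation, and the averaging over rays are assumptions made for the example; in training, the same computation would have to be written with differentiable operations.

```python
import numpy as np

def depth_consistency_l1(depth):
    """Mean L1 gap between D(x, u) and D(x + D(x, u), u - 1).

    depth: (U, X) array with U >= 2; row i holds D(x, u_i) for
    consecutive angular coordinates spaced one unit apart.
    """
    depth = np.asarray(depth, dtype=float)
    xs = np.arange(depth.shape[1], dtype=float)
    total = 0.0
    for i in range(1, depth.shape[0]):
        # Follow the sheared line: sample the neighboring view at x + D(x, u).
        neighbour = np.interp(xs + depth[i], xs, depth[i - 1])
        total += np.abs(depth[i] - neighbour).sum()
    return total / ((depth.shape[0] - 1) * depth.shape[1])
```

A fronto-parallel plane (constant D everywhere) gives a loss of zero, while depth maps that disagree between neighboring views are penalized in proportion to the disagreement.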

The overall training objective combines three terms: (1) L1 error between the Lambertian render L_r and the ground‑truth light field L, (2) L1 error between the final prediction \(\hat{L}\) and L, and (3) the depth regularizers weighted by λ_c and λ_tv. Including both reconstruction losses prevents the refinement network from taking over the entire synthesis task, thereby preserving the learning signal for the depth estimator.
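Written out, the objective described above might take the following form. The parameter symbols θ_d and θ_o (for the depth and refinement networks), the sum over a training set S, and the equal weighting of the two reconstruction terms are assumptions; the summary specifies only the three groups of terms and the weights λ_c and λ_tv.

```latex
\min_{\theta_d,\,\theta_o} \sum_{S}
  \Big[ \lVert L_r - L \rVert_1
      + \lVert \hat{L} - L \rVert_1
      + \lambda_c\, \psi_c(D)
      + \lambda_{tv}\, \psi_{tv}(D) \Big],
\qquad
\psi_c\big(D(x, u)\big) = \big\lVert D(x, u) - D\big(x + D(x, u),\, u - 1\big) \big\rVert_1
```

The first term depends only on the depth network through the Lambertian render, so it keeps supplying gradients for geometry even when the refinement network could reduce the second term on its own.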

Experimental results show that the proposed method outperforms prior single‑image depth estimation techniques and view‑synthesis baselines on the new dataset. Quantitatively, the depth‑consistency regularizer reduces mean absolute depth error, especially in texture‑poor and occluded regions. Qualitatively, the refined light fields exhibit realistic refocusing, correct handling of occlusions, and faithful reproduction of specular highlights and translucency—effects that the Lambertian stage alone cannot capture.

Limitations are acknowledged: the model is trained on camera‑scale baselines (≈1 mm), so extending to wide‑baseline VR/AR scenarios would require additional data and possibly architectural changes. The linear shearing used in ψ_c may be less effective for scenes with abrupt depth jumps, leaving residual artifacts.

In summary, the paper makes three major contributions: (1) a large, publicly available real‑world light‑field dataset, (2) a novel depth‑consistency regularizer that enables unsupervised per‑ray depth learning from a single image, and (3) a two‑stage CNN pipeline that first renders a Lambertian light field and then refines it to recover occlusions and non‑Lambertian effects. This work establishes a practical pathway for generating full 4‑D RGB‑D light fields from a single photograph, opening new possibilities for computational photography, AR/VR content creation, and self‑supervised 3‑D representation learning.
