Interaction Methods for Smart Glasses

Since the launch of Google Glass in 2014, smart glasses have mainly been designed to support micro-interactions. The ultimate goal for them to become an augmented reality interface has not yet been attained due to an encumbrance of controls. Augmented reality involves superimposing interactive computer graphics images onto physical objects in the real world. This survey reviews current research issues in the area of human computer interaction for smart glasses. The survey first studies the smart glasses available in the market and afterwards investigates the interaction methods proposed in the wide body of literature. The interaction methods can be classified into hand-held, touch, and touchless input. This paper mainly focuses on the touch and touchless input. Touch input can be further divided into on-device and on-body, while touchless input can be classified into hands-free and freehand. Next, we summarize the existing research efforts and trends, in which touch and touchless input are evaluated by a total of eight interaction goals. Finally, we discuss several key design challenges and the possibility of multi-modal input for smart glasses.

💡 Research Summary

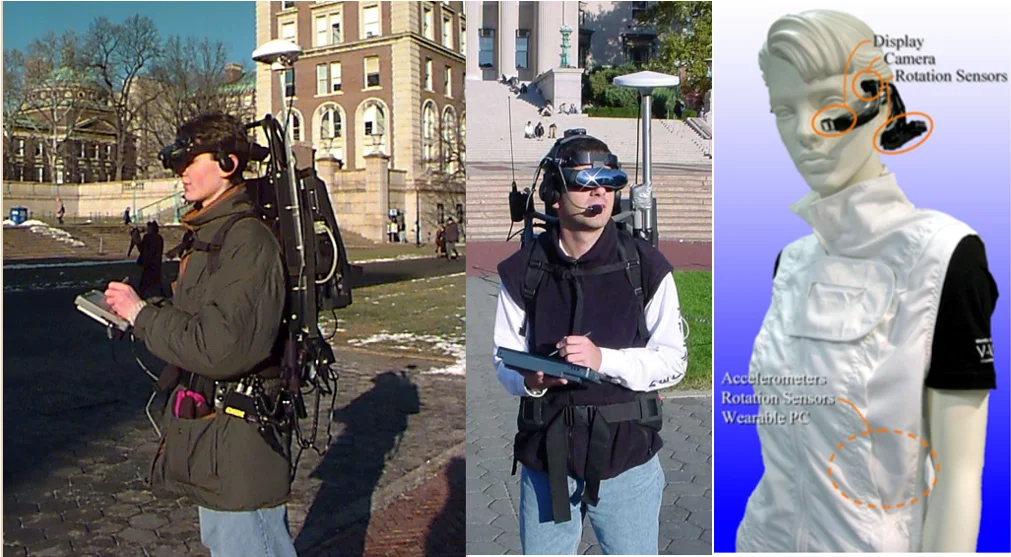

The paper provides a comprehensive survey of interaction methods for smart glasses, tracing the evolution of wearable head‑mounted displays from early prototypes such as the Touring Machine to contemporary commercial products like Google Glass, Sony SmartEyeGlass, and Microsoft HoloLens. After outlining the market landscape and the sensor suites typically embedded in these devices (RGB cameras, depth/infrared sensors, microphones, inertial measurement units, etc.), the authors classify user input techniques into two primary families: touch‑based and touchless.

Touch‑based interaction is further divided into on‑device (touchpads, buttons, or capacitive surfaces built into the glasses) and on‑body (wearable peripherals such as wristbands, smart watches, or rings that capture gestures). On‑device methods are simple, low‑latency, and require no extra hardware, but they suffer from the limited surface area of the glasses and consequently support only micro‑interactions. On‑body approaches expand the interaction space by leveraging additional sensors on auxiliary wearables, enabling richer gestures while introducing extra cost, power consumption, and potential ergonomics issues.

Touchless interaction is split into hands‑free and free‑hand categories. Hands‑free techniques rely on non‑contact signals—head orientation, eye‑gaze, voice commands, or even brain‑computer interfaces—to trigger actions. These are valuable in contexts where the user’s hands are occupied (e.g., driving, industrial tasks) but often exhibit higher false‑positive rates, increased cognitive load, and social acceptability concerns (especially for voice in public). Free‑hand methods employ cameras and depth sensors to recognize hand gestures directly in the field of view. They enable intuitive, natural commands and support 3‑D manipulation, yet they are highly sensitive to lighting conditions, background clutter, and impose significant computational and energy demands on the glasses.

To evaluate the suitability of each technique, the authors define eight interaction goals: (1) speed/latency, (2) accuracy/error rate, (3) energy efficiency, (4) cognitive load, (5) social acceptability & privacy, (6) security & data protection, (7) extensibility across scenarios, and (8) cost & manufacturability. Each input class is mapped against these goals, revealing trade‑offs: for instance, free‑hand gestures score high on speed and accuracy but low on cognitive load and privacy; on‑device touch scores well on energy and cost but poorly on extensibility.

The survey highlights recent research trends that move beyond single‑modality solutions toward multimodal, context‑aware interfaces. Hybrid systems that fuse voice, gaze, and gesture are emerging, often powered by lightweight machine‑learning models and edge‑computing techniques to mitigate latency and battery drain. Adaptive frameworks that automatically switch the dominant modality based on environmental cues (e.g., lighting, user activity) are also discussed.

Finally, the paper identifies four overarching design challenges for future smart‑glass interaction: (1) real‑time processing of sensor data within the constraints of small displays and limited compute resources; (2) ergonomic mitigation of fatigue and musculoskeletal strain during prolonged use; (3) robust privacy and security mechanisms for continuously captured visual and audio streams; and (4) the development of a unified multimodal architecture that can seamlessly integrate diverse input channels while remaining cost‑effective. By systematically categorizing existing methods, evaluating them against concrete interaction goals, and outlining open research problems, the authors provide a clear roadmap for advancing smart glasses from micro‑interaction devices toward fully fledged augmented‑reality platforms.

Comments & Academic Discussion

Loading comments...

Leave a Comment