Toward Deeper Understanding of Neural Networks: The Power of Initialization and a Dual View on Expressivity

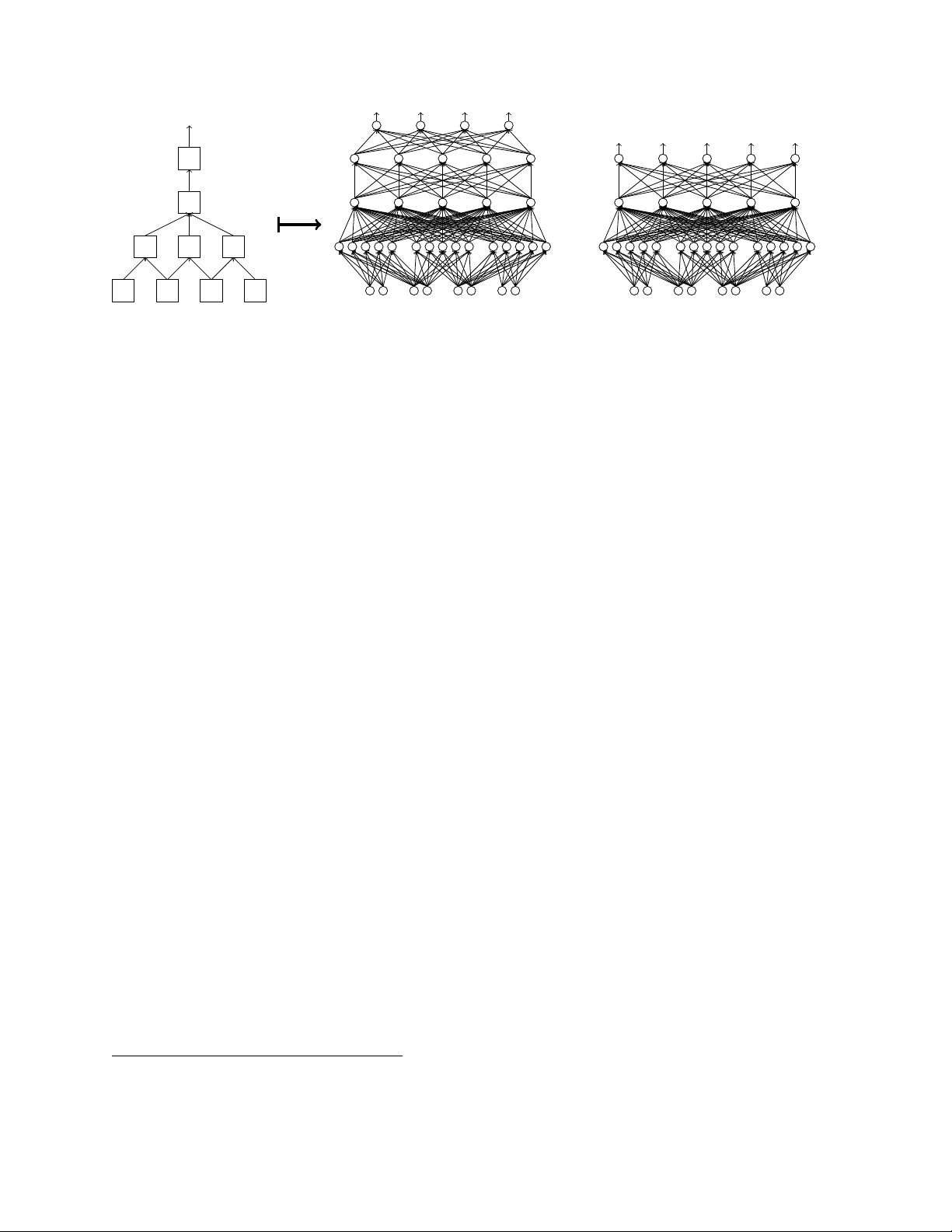

We develop a general duality between neural networks and compositional kernels, striving towards a better understanding of deep learning. We show that initial representations generated by common random initializations are sufficiently rich to express…

Authors: Amit Daniely, Roy Frostig, Yoram Singer
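
As a rough illustration of the network/kernel duality the abstract describes, the sketch below compares the inner product of a randomly initialized hidden layer with a closed-form kernel. It is not taken from the paper's text shown here: the choice of a single hidden layer, the normalized ReLU activation `sqrt(2) * max(0, t)`, standard Gaussian weights, and the helper names `relu_dual` and `empirical_kernel` are all assumptions made for the example. For unit-norm inputs with inner product `rho`, the expected product of two such random ReLU features is the degree-1 arc-cosine kernel, which is what `relu_dual` computes.

```python
import math
import random


def relu_dual(rho):
    """Closed-form kernel for the normalized ReLU sqrt(2) * max(0, t).

    Assumes the two inputs have unit norm and inner product rho.
    """
    rho = max(-1.0, min(1.0, rho))  # guard against rounding outside [-1, 1]
    return (math.sqrt(1.0 - rho * rho) + (math.pi - math.acos(rho)) * rho) / math.pi


def empirical_kernel(x, y, width, rng):
    """Average of sigma(<w, x>) * sigma(<w, y>) over `width` random neurons.

    Each neuron draws its weights i.i.d. from a standard Gaussian
    (an assumed initialization, chosen for illustration).
    """
    scale = math.sqrt(2.0)
    total = 0.0
    for _ in range(width):
        w = [rng.gauss(0.0, 1.0) for _ in x]
        hx = scale * max(0.0, sum(wi * xi for wi, xi in zip(w, x)))
        hy = scale * max(0.0, sum(wi * yi for wi, yi in zip(w, y)))
        total += hx * hy
    return total / width


rng = random.Random(0)          # fixed seed so the run is reproducible
x = [1.0, 0.0, 0.0]             # unit vector
y = [0.6, 0.8, 0.0]             # unit vector, inner product with x is 0.6
rho = sum(a * b for a, b in zip(x, y))

estimate = empirical_kernel(x, y, 20000, rng)
exact = relu_dual(rho)
print(f"random-feature estimate: {estimate:.3f}, closed-form kernel: {exact:.3f}")
```

With a wide enough layer the two printed numbers should be close, which is the single-layer version of the idea that a random initialization already yields a representation whose geometry matches a kernel. Deeper, compositional kernels would correspond to applying the same construction layer by layer; that extension is not shown here.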