Range-Clustering Queries

In a geometric $k$-clustering problem the goal is to partition a set of points in $\mathbb{R}^d$ into $k$ subsets such that a certain cost function of the clustering is minimized. We present data structures for orthogonal range-clustering queries on a point set $S$: given a query box $Q$ and an integer $k\geq 2$, compute an optimal $k$-clustering for $S\cap Q$. We obtain the following results. We present a general method to compute a $(1+\epsilon)$-approximation to a range-clustering query, where $\epsilon>0$ is a parameter that can be specified as part of the query. Our method applies to a large class of clustering problems, including $k$-center clustering in any $L_p$-metric and a variant of $k$-center clustering where the goal is to minimize the sum (instead of maximum) of the cluster sizes. We extend our method to deal with capacitated $k$-clustering problems, where each of the clusters should not contain more than a given number of points. For the special cases of rectilinear $k$-center clustering in $\mathbb{R}^1$, and in $\mathbb{R}^2$ for $k=2$ or 3, we present data structures that answer range-clustering queries exactly.

💡 Research Summary

The paper introduces the problem of range‑clustering queries: given a static set S of points in ℝᵈ, an orthogonal query box Q, and an integer k ≥ 2, compute a k‑clustering of the points inside the box, S ∩ Q, that minimizes a prescribed cost function Φ. The naive approach first reports all points in Q with a range‑reporting query and then runs a separate clustering algorithm, which is prohibitively expensive when |S ∩ Q| is large. The authors therefore design data structures that answer the clustering query directly, without materializing the subset.

Main Contributions

General (1 + ε) Approximation Framework

- They define a class of (c, f(k))‑regular cost functions. Regularity comprises two properties:

  - Diameter‑sensitivity: Φ(𝒞) ≥ c·max_{C ∈ 𝒞} diam(C) for every clustering 𝒞.

  - Expansion: for any weak r‑packing R of a point set S₀, the optimal k‑clustering cost on R approximates that on S₀ within an additive term r·f(k), and any clustering of R can be expanded to a clustering of S₀ at an additional cost of at most r·f(k).

- Many classic clustering objectives satisfy this definition, including k‑center (any Lₚ metric), sum‑of‑radii variants, and 2‑D minimum‑perimeter‑sum clustering.
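
As a worked instance of the definition (our own check, not a derivation quoted from the paper), take k‑center with cost Φ(𝒞) = max over clusters of rad(C), where each cluster C lies in a ball of radius rad(C) centered at x_C, and write π(p) ∈ R for the representative of p in a weak r‑packing (π is our notation). Both properties then follow from the triangle inequality:

```latex
\begin{align*}
p, q \in C \;&\Longrightarrow\; d(p,q) \le d(p,x_C) + d(x_C,q) \le 2\,\mathrm{rad}(C)
  \;\Longrightarrow\; \Phi(\mathcal{C}) \ge \tfrac{1}{2}\max_{C \in \mathcal{C}} \mathrm{diam}(C),\\
d(p,\pi(p)) \le r,\; d(\pi(p),x_C) \le \mathrm{rad}(C) \;&\Longrightarrow\; d(p,x_C) \le \mathrm{rad}(C) + r
  \;\Longrightarrow\; \Phi(\mathcal{C}^*) \le \Phi(\mathcal{C}) + r.
\end{align*}
```

So the max‑radius objective fits the definition with c = 1/2 and f(k) = 1. For the sum of the k radii, inflating every ball by r adds k·r, which suggests f(k) = k, while c = 1/2 still holds because the sum is at least the maximum.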

Query Algorithm

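- Compute a lower bound lb on Optₖ(S ∩ Q) from a cube cover of S ∩ Q: when the cover contains more than k·2ᵈ cubes, the lower‑bound lemma gives lb = c·size_min, where size_min is the size of the smallest cube in the cover.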

- Set r = ε·lb / f(k) and construct a weak r‑packing R by covering S ∩ Q with interior‑disjoint axis‑aligned cubes of side ≤ r/√d and picking one representative point per cube.

- Run a single‑shot clustering routine on R (e.g., exact k‑center or a (1 + ε) approximation) to obtain a clustering C.

- Expand C to a clustering C* of the original points using the expansion property; the resulting cost satisfies Φ(C*) ≤ (1 + ε)·Optₖ(S ∩ Q).
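
The guarantee in the last step follows from a short chain of inequalities. This sketch assumes that the routine on R is exact (Φ(C) = Optₖ(R)), that Optₖ(R) ≤ Optₖ(S ∩ Q) (true for k‑center, since R ⊆ S ∩ Q), and that lb ≤ Optₖ(S ∩ Q):

```latex
\begin{align*}
\Phi(C^*) &\le \Phi(C) + r\,f(k) && \text{(expansion property)}\\
  &= \mathrm{Opt}_k(R) + \varepsilon\cdot \mathit{lb} && (r = \varepsilon\cdot \mathit{lb}/f(k))\\
  &\le \mathrm{Opt}_k(S\cap Q) + \varepsilon\cdot \mathrm{Opt}_k(S\cap Q) = (1+\varepsilon)\,\mathrm{Opt}_k(S\cap Q).
\end{align*}
```

If the routine on R is only (1 + ε)‑approximate, the same chain gives (1 + 2ε)·Optₖ(S ∩ Q), so running both the routine and the choice of r with ε/2 restores the stated bound.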

The crucial observation is that the size of R depends only on k, ε, and the dimension d, not on the number of points inside Q. For fixed d this yields query times of the form O((k/ε)·log n + poly(k, 1/ε)) with linear‑size storage.
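
The packing step is easy to prototype without the octree. The sketch below is a brute‑force stand‑in, assuming the points of S ∩ Q are already available as a list; it snaps them to a uniform grid of side r/√d instead of using the octree's canonical cubes, and the name weak_r_packing is ours. A cube of side r/√d has Euclidean diameter r, so every point ends up within distance r of its cell's representative:

```python
import math
from typing import Dict, List, Sequence, Tuple

Point = Tuple[float, ...]


def weak_r_packing(points: Sequence[Point], r: float) -> Tuple[List[Point], Dict[Point, Point]]:
    """Pick one representative per grid cell of side r / sqrt(d).

    Returns the representatives R and a map from every input point to its
    representative. A cell of side s has diameter s * sqrt(d), so with
    s = r / sqrt(d) each point is within distance r of its representative.
    """
    if not points:
        return [], {}
    d = len(points[0])
    side = r / math.sqrt(d)
    reps: Dict[Tuple[int, ...], Point] = {}  # grid cell -> representative
    rep_of: Dict[Point, Point] = {}
    for p in points:
        cell = tuple(math.floor(x / side) for x in p)
        # The first point seen in a cell becomes that cell's representative.
        rep_of[p] = reps.setdefault(cell, p)
    return list(reps.values()), rep_of
```

In the data structure itself, the cells would come from nodes of the compressed octree rather than from a uniform grid, so that only cells near the cube cover are touched.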

Data‑Structure Foundations

- A compressed octree (the d‑dimensional generalization of a quadtree) is built on S. Each node corresponds to a canonical cube, that is, a cell of a dyadic grid. Cubes are subdivided recursively until each leaf contains at most one point; a node whose points all lie in a single child is contracted away, so every internal node has at least two non‑empty children and the tree has O(n) nodes.

- Using the octree, a suitable cube cover can be retrieved in O(log n) time, and the weak r‑packing is formed by descending the tree and selecting one representative point per node whose cube satisfies the size constraint (side length at most r/√d).
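
Canonical cubes reduce the octree's geometric tests to integer bit operations. A minimal sketch, assuming the points have non‑negative integer coordinates (for example, after scaling into a grid [0, 2^L)ᵈ); both helper names are hypothetical. The smallest canonical cube containing two points is the cube stored at the lowest common ancestor of their leaves in the compressed octree:

```python
from typing import Tuple

# (level, integer coordinates of the cell); a cell at level l has side 2**l.
Cell = Tuple[int, Tuple[int, ...]]


def canonical_cell(p: Tuple[int, ...], level: int) -> Cell:
    """Dyadic cell of side 2**level that contains the integer point p."""
    return level, tuple(x >> level for x in p)


def smallest_common_cell(p: Tuple[int, ...], q: Tuple[int, ...]) -> Cell:
    """Smallest canonical cube containing both integer points p and q.

    Coordinates a and b share the cell of side 2**l iff a >> l == b >> l,
    i.e. iff (a ^ b).bit_length() <= l; the smallest such l over all axes
    is the largest bit length of the coordinate-wise XOR.
    """
    level = max((a ^ b).bit_length() for a, b in zip(p, q))
    return canonical_cell(p, level)
```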

Capacitated Clustering

- The same framework extends to capacitated k‑clustering, where each cluster may contain at most α·|S ∩ Q|/k points (α > 1). The weak r‑packing is built as before, and during expansion the algorithm respects the capacity bound, preserving the (1 + ε) approximation guarantee.
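
One generic way to respect a capacity bound when assigning the original points to k already‑chosen centers is a bipartite flow computation. The sketch below is a swapped‑in technique, not necessarily the paper's expansion procedure: given hypothetical centers, a distance bound and an integer capacity (for instance ⌊α·|S ∩ Q|/k⌋), it finds an assignment in which every point is within the bound of its center and no center exceeds the capacity, or reports that none exists. It uses augmenting paths (Kuhn's matching algorithm generalized to capacities):

```python
import math
from typing import List, Optional, Sequence, Set, Tuple

Point = Tuple[float, ...]


def capacitated_assign(points: Sequence[Point], centers: Sequence[Point],
                       radius: float, capacity: int) -> Optional[List[int]]:
    """Return a center index per point, or None if no capacity-respecting assignment exists."""
    # allowed[i]: centers within `radius` of point i (edges of the bipartite graph).
    allowed = [[j for j, c in enumerate(centers) if math.dist(p, c) <= radius] for p in points]
    members: List[List[int]] = [[] for _ in centers]  # points currently assigned to each center
    assign = [-1] * len(points)

    def augment(i: int, seen: Set[int]) -> bool:
        # Depth-first search for an augmenting path that starts at point i.
        for j in allowed[i]:
            if j in seen:
                continue
            seen.add(j)
            if len(members[j]) < capacity:  # center j still has a free slot
                members[j].append(i)
                assign[i] = j
                return True
            for q in list(members[j]):  # try to move a current member of j elsewhere
                if augment(q, seen):
                    members[j].remove(q)  # q now sits at another center
                    members[j].append(i)
                    assign[i] = j
                    return True
        return False

    for i in range(len(points)):
        if not augment(i, set()):
            return None
    return assign
```

As in ordinary bipartite matching, a point that cannot be augmented when it is processed can never be placed later, so returning None at the first failure is safe.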

Exact Solutions for Special Cases

- 1‑D rectilinear k‑center: Two data structures, both using O(n) space, are presented. One answers queries in O(k²·log² n) time, the other in O(3ᵏ·log n) time.

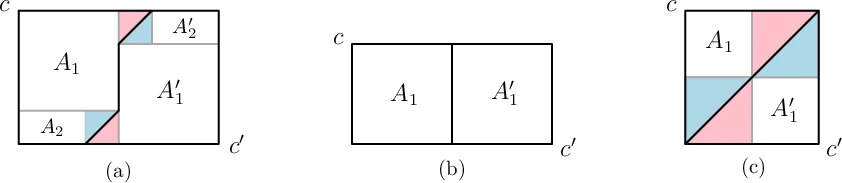

- 2‑D rectilinear k‑center: For k = 2, a data structure answers queries in O(log n) time; for k = 3, queries are answered in O(log² n) time. Both use O(n·log^ε n) space, where ε > 0 is a fixed, arbitrarily small constant (not the approximation parameter ε). The structures rely on pre‑computed arrangements of axis‑aligned squares and hierarchical decomposition of the plane.
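
Brute‑force baselines for the static versions of these two problems are handy for testing a query structure. The sketch below is not the paper's data structures: in 1‑D it binary‑searches the O(n²) candidate cluster lengths with a greedy feasibility check, and in 2‑D (k = 2) it relies on the standard observation that two optimal axis‑parallel squares can be anchored at opposite corners of the bounding box. Both functions (names are ours) return the L∞ radius, i.e. half the side of a covering interval or square:

```python
import math
from typing import Sequence, Tuple


def k_center_1d(xs: Sequence[float], k: int) -> float:
    """Exact k-center radius of a non-empty 1-D point set (O(n^2 log n) brute force)."""
    xs = sorted(xs)

    def groups_needed(length: float) -> int:
        # Greedy: open an interval at the leftmost uncovered point and extend it by `length`.
        count, i = 0, 0
        while i < len(xs):
            start = xs[i]
            count += 1
            while i < len(xs) and xs[i] - start <= length:
                i += 1
        return count

    # An optimal cluster spans [xs[a], xs[b]], so the optimal length is a pairwise difference.
    cands = sorted({xs[b] - xs[a] for a in range(len(xs)) for b in range(a, len(xs))})
    lo, hi = 0, len(cands) - 1
    while lo < hi:  # find the smallest feasible candidate length
        mid = (lo + hi) // 2
        if groups_needed(cands[mid]) <= k:
            hi = mid
        else:
            lo = mid + 1
    return cands[lo] / 2


def rect_2_center_2d(points: Sequence[Tuple[float, float]]) -> float:
    """Exact rectilinear (L-infinity) 2-center radius of a non-empty planar point set."""
    x0 = min(x for x, _ in points)
    x1 = max(x for x, _ in points)
    y0 = min(y for _, y in points)
    y1 = max(y for _, y in points)
    best = math.inf
    # False: bottom-left / top-right corners; True: top-left / bottom-right corners.
    for flip in (False, True):
        side = 0.0
        for x, y in points:
            near_y, far_y = (y1 - y, y - y0) if flip else (y - y0, y1 - y)
            a = max(x - x0, near_y)  # side needed if the point joins the left-corner square
            b = max(x1 - x, far_y)   # side needed if it joins the opposite-corner square
            side = max(side, min(a, b))
        best = min(best, side)
    return best / 2
```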

Technical Highlights

- Weak r‑Packing vs. Coreset: The weak packing is similar to a coreset but only guarantees that every point lies within distance r of a sampled point; distinct sampled points may be closer than r to each other. This relaxed condition is sufficient because the regularity of Φ bounds the error incurred by expanding clusters.

- Lower‑Bound Lemma: Lemma 3 shows that if a cube‑cover contains more than k·2ᵈ cubes, then the optimal cost must exceed c·(minimum cube size). This provides a cheap, query‑time computable lower bound.

- Expansion Procedure: For k‑center, expansion simply inflates each ball by r; for sum‑of‑radii, the same additive increase works; for perimeter‑sum clustering, each polygon is Minkowski‑summed with a disk of radius r, increasing perimeter by 2πr. The time to perform expansion is O(n log n) in the worst case but can be made linear for many objectives.
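
The k‑center expansion step is just the triangle inequality: if p lies within r of its representative and the representative lies within ρ of its center, then p lies within ρ + r of that center. A minimal sketch, assuming a map rep_of from each point to its representative (as a weak r‑packing would provide) and centers computed on R by any routine; expand_k_center is a hypothetical name:

```python
import math
from typing import Dict, List, Sequence, Tuple

Point = Tuple[float, ...]


def expand_k_center(points: Sequence[Point], rep_of: Dict[Point, Point],
                    centers: Sequence[Point], r: float) -> Tuple[List[int], float]:
    """Expand a k-center clustering of the representatives to all points.

    Each point joins the cluster of the center nearest to its representative;
    the returned radius is the clustering radius on R inflated by r.
    """
    labels: List[int] = []
    for p in points:
        q = rep_of[p]
        labels.append(min(range(len(centers)), key=lambda j: math.dist(q, centers[j])))
    radius_on_R = max(math.dist(rep_of[p], centers[j]) for p, j in zip(points, labels))
    return labels, radius_on_R + r
```

For the sum‑of‑radii objective, the same inflation is applied to each of the k balls, so the cost grows by k·r rather than r.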

Significance

The work bridges a gap between range searching and geometric clustering, offering the first data structures that answer clustering queries directly on a subrange of the data. By abstracting the cost function into a regularity condition, the authors provide a unified treatment that covers a broad spectrum of clustering objectives. The combination of weak packings, cube covers, and compressed octrees yields query times that, for fixed dimension d, are polylogarithmic in n and polynomial in k and 1/ε, making the approach practical for moderate k and low‑dimensional data sets (the size of the packing grows exponentially with d). The exact structures for 1‑D and 2‑D rectilinear k‑center further demonstrate that, in low dimensions, optimal answers can be obtained with near‑linear space and polylogarithmic query time.

Overall, the paper establishes a solid theoretical foundation for range‑clustering queries, opens avenues for further research on other clustering objectives (e.g., k‑means, k‑median), and suggests practical implementations for interactive data analysis systems where users repeatedly query clusters of spatial subsets.