A Network-based End-to-End Trainable Task-oriented Dialogue System


Authors: Tsung-Hsien Wen, David Vandyke

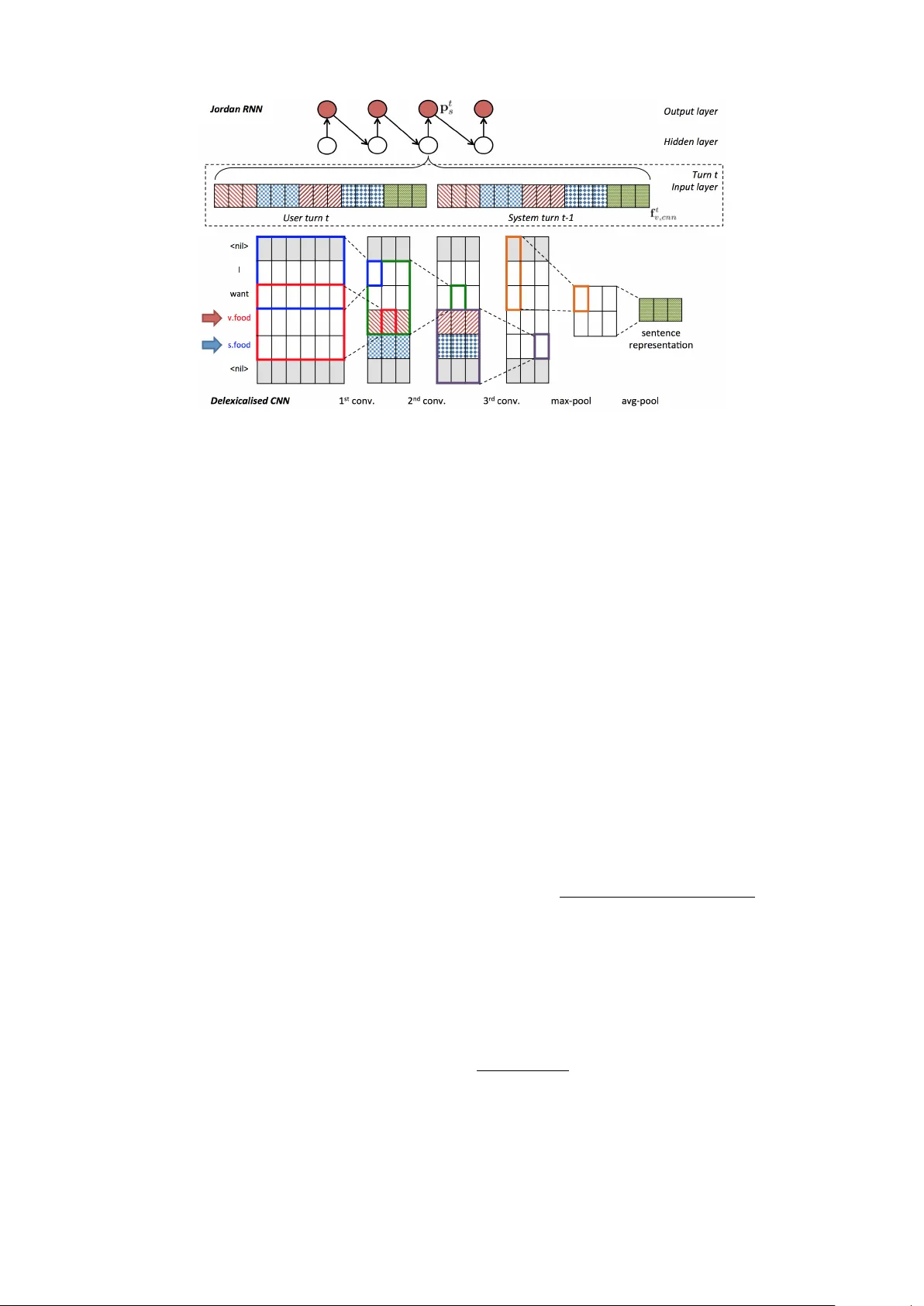

Tsung-Hsien Wen, David Vandyke, Nikola Mrkšić, Milica Gašić, Lina M. Rojas-Barahona, Pei-Hao Su, Stefan Ultes, and Steve Young
Cambridge University Engineering Department, Trumpington Street, Cambridge, CB2 1PZ, UK
{thw28,djv27,nm480,mg436,lmr46,phs26,su259,sjy11}@cam.ac.uk

Abstract

Teaching machines to accomplish tasks by conversing naturally with humans is challenging. Currently, developing task-oriented dialogue systems requires creating multiple components, and this typically involves either a large amount of handcrafting or acquiring costly labelled datasets to solve a statistical learning problem for each component. In this work we introduce a neural network-based, text-in, text-out, end-to-end trainable goal-oriented dialogue system, along with a new way of collecting dialogue data based on a novel pipelined Wizard-of-Oz framework. This approach allows us to develop dialogue systems easily and without making too many assumptions about the task at hand. The results show that the model can converse with human subjects naturally whilst helping them to accomplish tasks in a restaurant search domain.

1 Introduction

Building a task-oriented dialogue system such as a hotel booking or a technical support service is difficult because it is application-specific and there is usually limited availability of training data. To mitigate this problem, recent machine learning approaches to task-oriented dialogue system design have cast the problem as a partially observable Markov Decision Process (POMDP) (Young et al., 2013) with the aim of using reinforcement learning (RL) to train dialogue policies online through interactions with real users (Gašić et al., 2013).
However, the language understanding (Henderson et al., 2014; Yao et al., 2014) and language generation (Wen et al., 2015b; Wen et al., 2016) modules still rely on supervised learning and therefore need corpora to train on. Furthermore, to make RL tractable, the state and action space must be carefully designed (Young et al., 2013; Young et al., 2010), which may restrict the expressive power and learnability of the model. Also, the reward functions needed to train such models are difficult to design and hard to measure at run-time (Su et al., 2015; Su et al., 2016).

At the other end of the spectrum, sequence-to-sequence learning (Sutskever et al., 2014) has inspired several efforts to build end-to-end trainable, non-task-oriented conversational systems (Vinyals and Le, 2015; Shang et al., 2015; Serban et al., 2015b). This family of approaches treats dialogue as a source-to-target sequence transduction problem, applying an encoder network (Cho et al., 2014) to encode a user query into a distributed vector representing its semantics, which then conditions a decoder network to generate each system response. These models typically require a large amount of data to train. They allow the creation of effective chatbot-type systems, but they lack any capability for supporting domain-specific tasks, for example, being able to interact with databases (Sukhbaatar et al., 2015; Yin et al., 2015) and aggregate useful information into their responses.
In this work, we propose a neural network-based model for task-oriented dialogue systems that balances the strengths and the weaknesses of the two research communities. The model is end-to-end trainable¹ but still modularly connected. It does not directly model the user goal, but it nevertheless learns to accomplish the required task by providing relevant and appropriate responses at each turn. It has an explicit representation of database (DB) attributes (slot-value pairs), which it uses to achieve a high task success rate, but a distributed representation of user intent (dialogue act) to allow ambiguous inputs. Finally, it uses delexicalisation² and a weight-tying strategy (Henderson et al., 2014) to reduce the data required to train the model, but still maintains a high degree of freedom should larger amounts of data become available. We show that the proposed model performs a given task very competitively across several metrics when trained on only a few hundred dialogues.

In order to train the model for the target application, we introduce a novel pipelined data collection mechanism inspired by the Wizard-of-Oz paradigm (Kelley, 1984) to collect human-human dialogue corpora via crowd-sourcing. We found that this process is simple and enables fast data collection online with very low development costs.

¹ We define end-to-end trainable to mean that each system module is trainable from data, except for the database operator.

Figure 1: The proposed end-to-end trainable dialogue system framework.

2 Model

We treat dialogue as a sequence-to-sequence mapping problem (modelled by a sequence-to-sequence architecture (Sutskever et al., 2014)) augmented with the dialogue history (modelled by a set of belief trackers (Henderson et al., 2014)) and the current database search outcome (modelled by a database operator), as shown in Figure 1.
At each turn, the system takes a sequence of tokens² from the user as input and converts it into two internal representations: a distributed representation generated by an intent network, and a probability distribution over slot-value pairs, called the belief state (Young et al., 2013), generated by a set of belief trackers. The database operator then selects the most probable values in the belief state to form a query to the DB, and the search result, along with the intent representation and belief state, is transformed and combined by a policy network to form a single vector representing the next system action. This system action vector is then used to condition a response generation network (Wen et al., 2015a; Wen et al., 2015b), which generates the required system output token by token in skeletal form. The final system response is then formed by substituting the actual values of the database entries into the skeletal sentence structure. A more detailed description of each component is given below.

² Delexicalisation: we replaced slots and values by generic tokens (e.g. keywords like Chinese or Indian are replaced by a generic food-value token in Figure 1) to allow weight sharing.

2.1 Intent Network

The intent network can be viewed as the encoder in the sequence-to-sequence learning framework (Sutskever et al., 2014), whose job is to encode a sequence of input tokens $w_0^t, w_1^t, \ldots, w_N^t$ into a distributed vector representation $z_t$ at every turn $t$. Typically, a Long Short-term Memory (LSTM) network (Hochreiter and Schmidhuber, 1997) is used, and the hidden layer at the last time step, $z_t^N$, is taken as the representation,

$$z_t = z_t^N = \mathrm{LSTM}(w_0^t, w_1^t, \ldots, w_N^t) \tag{1}$$

Alternatively, a convolutional neural network (CNN) can be used in place of the LSTM as the encoder (Kalchbrenner et al., 2014; Kim, 2014),

$$z_t = \mathrm{CNN}(w_0^t, w_1^t, \ldots, w_N^t) \tag{2}$$

and here we investigate both.
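The delexicalise-then-relexicalise cycle described above can be sketched in a few lines of Python. This is a minimal illustration under our own assumptions, not the authors' code: the toy ontology, the `<v.slot>` / `<s.slot>` token spelling, the surface-form lists and the helper names `delexicalise` and `lexicalise` are all hypothetical, and ontology values are assumed to be single whitespace-separated words.

```python
import random

# Hypothetical toy ontology: informable slot -> known single-word values.
ONTOLOGY = {
    "food": {"chinese", "indian", "italian"},
    "area": {"north", "south", "centre"},
}

# Hypothetical surface forms used when re-lexicalising a slot token.
SLOT_SURFACE_FORMS = {"food": ["food", "type of food"], "area": ["area", "part of town"]}


def delexicalise(utterance):
    """Replace every known ontology value with a generic value token."""
    out = []
    for word in utterance.lower().split():
        token = word
        for slot, values in ONTOLOGY.items():
            if word in values:
                token = f"<v.{slot}>"
                break
        out.append(token)
    return out


def lexicalise(template_tokens, entity, rng):
    """Fill a skeletal response: value tokens come from the DB entity chosen
    by the entity pointer; slot tokens get a randomly sampled surface form."""
    words = []
    for tok in template_tokens:
        if tok.startswith("<v.") and tok.endswith(">"):
            words.append(entity[tok[3:-1]])
        elif tok.startswith("<s.") and tok.endswith(">"):
            words.append(rng.choice(SLOT_SURFACE_FORMS[tok[3:-1]]))
        else:
            words.append(tok)
    return " ".join(words)


print(delexicalise("I want Chinese food in the north"))
# ['i', 'want', '<v.food>', 'food', 'in', 'the', '<v.area>']

entity = {"name": "Golden House", "food": "chinese", "area": "north"}  # hypothetical entity
template = ["<v.name>", "serves", "<v.food>", "<s.food>", "."]
print(lexicalise(template, entity, random.Random(0)))
```

Because every Chinese/Indian/Italian mention maps to the same token, the networks only ever see one symbol per slot, which is what makes weight sharing across values possible.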
Since all the slot-value specific information is delexicalised, the encoded vector can be viewed as a distributed intent representation which replaces the hand-coded dialogue act representation (Traum, 1999) in traditional task-oriented dialogue systems.

Figure 2: Tied Jordan-type RNN belief tracker with delexicalised CNN feature extractor. The output of the CNN feature extractor is a concatenation of the top-level sentence (green) embedding and several levels of intermediate n-gram-like embeddings (red and blue). However, if a value cannot be delexicalised in the input, its n-gram-like embeddings will all be padded with zeros. We pad zero vectors (in gray) before each convolution operation to make sure the representation at each layer has the same length. The output of each tracker $p_s^t$ is a distribution over values of a particular slot $s$.

2.2 Belief Trackers

Belief tracking (also called dialogue state tracking) provides the core of a task-oriented spoken dialogue system (SDS) (Henderson, 2015). Current state-of-the-art belief trackers use discriminative models such as recurrent neural networks (RNN) (Mikolov et al., 2010; Wen et al., 2013) to directly map ASR hypotheses to belief states (Henderson et al., 2014; Mrkšić et al., 2016). Although in this work we focus on text-based dialogue systems, we retain belief tracking at the core of our system because: (1) it enables a sequence of free-form natural language sentences to be mapped into a fixed set of slot-value pairs, which can then be used to query a DB, and this can be viewed as a simple version of a semantic parser (Berant et al., 2013); (2) by keeping track of the dialogue state, it avoids learning unnecessarily complicated long-term dependencies from raw inputs; (3) it uses a smart weight-tying strategy that can greatly reduce the data required to train the model; and (4) it provides an inherent robustness which simplifies future extension to spoken systems.
Using each user input as new evidence, the task of a belief tracker is to maintain a multinomial distribution $p$ over values $v \in V_s$ for each informable slot $s$, and a binary distribution for each requestable slot³. Each slot in the ontology $G$⁴ has its own specialised tracker, and each tracker is a Jordan-type (recurrence from output to hidden layer) (Jordan, 1989) RNN⁵ with a CNN feature extractor, as shown in Figure 2. Like Mrkšić et al. (2015), we tie the RNN weights together for each value $v$ but vary features $f_v^t$ when updating each pre-softmax activation $g_v^t$. The update equations for a given slot $s$ are

$$f_v^t = f_{v,\mathrm{cnn}}^t \oplus p_v^{t-1} \oplus p_\emptyset^{t-1} \tag{3}$$

$$g_v^t = w_s \cdot \mathrm{sigmoid}(W_s f_v^t + b_s) + b'_s \tag{4}$$

$$p_v^t = \frac{\exp(g_v^t)}{\exp(g_{\emptyset,s}) + \sum_{v' \in V_s} \exp(g_{v'}^t)} \tag{5}$$

where vector $w_s$, matrix $W_s$, bias terms $b_s$ and $b'_s$, and scalar $g_{\emptyset,s}$ are parameters. $p_\emptyset^t$ is the probability that the user has not mentioned that slot up to turn $t$, and can be calculated by substituting $g_{\emptyset,s}$ for $g_v^t$ in the numerator of Equation 5. In order to model the discourse context at each turn, the feature vector $f_{v,\mathrm{cnn}}^t$ is the concatenation of two CNN-derived features, one from processing the user input $u_t$ at turn $t$ and the other from processing the machine response $m_{t-1}$ at turn $t-1$,

$$f_{v,\mathrm{cnn}}^t = \mathrm{CNN}_{s,v}^{(u)}(u_t) \oplus \mathrm{CNN}_{s,v}^{(m)}(m_{t-1}) \tag{6}$$

where every token in $u_t$ and $m_{t-1}$ is represented by an embedding of size $N$ derived from a 1-hot input vector.

³ Informable slots are slots that users can use to constrain the search, such as food type or price range; requestable slots are slots that users can ask a value for, such as address.

⁴ A small knowledge graph defining the slot-value pairs the system can talk about for a particular task.

⁵ We don't use the recurrent connection for requestable slots since they don't need to be tracked.
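To make Equations 3 to 5 concrete, the following pure-Python sketch performs one turn of a single slot's tracker. It is an illustration under stated assumptions, not the authors' implementation: the CNN features $f_{v,\mathrm{cnn}}^t$ are taken as given inputs rather than computed, the parameter values in the usage lines are arbitrary placeholders, and the name `tracker_update` is ours. The point it shows is weight tying: the same $w_s$, $W_s$, $b_s$, $b'_s$ are reused for every value $v$, and only the features differ.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def tracker_update(cnn_feats, prev_p, prev_p_null, w_s, W_s, b_s, b2_s, g_null):
    """One turn of a tied Jordan-type tracker for one slot (Eqs. 3-5).

    cnn_feats:   value -> CNN feature list f_{v,cnn}^t (given, not computed here)
    prev_p:      value -> p_v^{t-1}
    prev_p_null: p_null^{t-1}, probability the slot is not mentioned yet
    w_s, W_s, b_s, b2_s (b'_s), g_null: parameters shared by all values
    Returns (p_t as a dict value -> prob, p_null_t).
    """
    g = {}
    for v, f_cnn in cnn_feats.items():
        f = f_cnn + [prev_p[v], prev_p_null]  # Eq. 3: concatenation
        hidden = [sigmoid(sum(wij * fj for wij, fj in zip(row, f)) + bi)
                  for row, bi in zip(W_s, b_s)]
        g[v] = sum(wi * hi for wi, hi in zip(w_s, hidden)) + b2_s  # Eq. 4
    # Eq. 5: softmax over all values plus the "not mentioned" score g_null
    denom = math.exp(g_null) + sum(math.exp(gv) for gv in g.values())
    p = {v: math.exp(gv) / denom for v, gv in g.items()}
    return p, math.exp(g_null) / denom


# Toy usage: 2-dim CNN features, hidden size 2, so W_s is 2 x 4.
feats = {"chinese": [1.0, 0.0], "indian": [0.0, 0.0]}
prev = {"chinese": 0.0, "indian": 0.0}
W = [[2.0, 0.0, 0.5, -0.5], [0.0, 1.0, 0.5, -0.5]]
p, p_null = tracker_update(feats, prev, 1.0, [3.0, 1.0], W, [0.0, 0.0], 0.0, 1.0)
```

Since the value probabilities and $p_\emptyset^t$ share one denominator, they always sum to one, which is what lets the tracker represent "nothing said yet" as a competing hypothesis.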
In order to make the tracker aware when delexicalisation is applied to a slot or value, the slot-value specialised CNN operator $\mathrm{CNN}_{s,v}^{(\cdot)}(\cdot)$ extracts not only the top-level sentence representation but also intermediate n-gram-like embeddings determined by the position of the delexicalised token in each utterance. If multiple matches are observed, the corresponding embeddings are summed. On the other hand, if there is no match for a particular slot or value, the empty n-gram embeddings are padded with zeros. In order to keep track of the position of delexicalised tokens, both sides of the sentence are padded with zeros before each convolution operation. The number of zero vectors is determined by the filter size at each layer. The overall process of extracting several layers of position-specific features is visualised in Figure 2.

The belief tracker described above is based on Henderson et al. (2014) with some modifications: (1) only probabilities over informable and requestable slots and values are output, (2) the recurrent memory block is removed, since it appears to offer no benefit in this task, and (3) the n-gram feature extractor is replaced by the CNN extractor described above. By introducing slot-based belief trackers, we essentially add a set of intermediate labels into the system, as compared to training a pure end-to-end system. Later in the paper we will show that these tracker components are critical for achieving task success. We will also show that the additional annotation requirement that they introduce can be successfully mitigated using a novel pipelined Wizard-of-Oz data collection framework.

2.3 Policy Network and Database Operator

Database Operator. Based on the output $p_s^t$ of the belief trackers, the DB query $q_t$ is formed by

$$q_t = \bigcup_{s' \in S_I} \left\{ \operatorname{argmax}_v \, p_{s'}^t \right\} \tag{7}$$

where $S_I$ is the set of informable slots.
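Equation 7 amounts to taking the top hypothesis of every informable tracker. The sketch below illustrates this on a toy in-memory DB given as a list of dicts, and also produces the binary truth value vector $x_t$ that the next paragraph describes. Two points are our own assumptions, not the paper's: the special value names `<none>` and `dontcare`, and the choice to treat a slot whose top hypothesis is one of them as imposing no constraint (Equation 7 itself does not say how these are handled).

```python
NOT_MENTIONED, DONT_CARE = "<none>", "dontcare"  # hypothetical special values


def form_query(beliefs, informable_slots):
    """Eq. 7: the most probable value of each informable slot's belief."""
    return {s: max(beliefs[s], key=beliefs[s].get) for s in informable_slots}


def truth_vector(query, db):
    """x_t: 1 for every DB entity consistent with the query, else 0.
    Slots whose top value is NOT_MENTIONED or DONT_CARE are ignored (assumption)."""
    constraints = {s: v for s, v in query.items() if v not in (NOT_MENTIONED, DONT_CARE)}
    return [int(all(entity.get(s) == v for s, v in constraints.items())) for entity in db]


beliefs = {
    "food": {"chinese": 0.7, "indian": 0.2, NOT_MENTIONED: 0.1},
    "area": {"north": 0.1, DONT_CARE: 0.6, NOT_MENTIONED: 0.3},
    "pricerange": {"cheap": 0.05, NOT_MENTIONED: 0.95},
}
db = [  # hypothetical toy DB
    {"name": "a", "food": "chinese", "area": "north", "pricerange": "cheap"},
    {"name": "b", "food": "indian", "area": "south", "pricerange": "cheap"},
    {"name": "c", "food": "chinese", "area": "centre", "pricerange": "expensive"},
]
q = form_query(beliefs, ["food", "pricerange", "area"])
print(q)                    # {'food': 'chinese', 'pricerange': '<none>', 'area': 'dontcare'}
print(truth_vector(q, db))  # [1, 0, 1]
```

Note that only the argmax is used: the DB operator is the one non-trainable module, so no gradient flows through this step.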
This query is then applied to the DB to create a binary truth value vector $x_t$ over DB entities, where a 1 indicates that the corresponding entity is consistent with the query (and hence it is consistent with the most likely belief state). In addition, if $x_t$ is not entirely null, an associated entity pointer is maintained which identifies one of the matching entities, selected at random. The entity pointer is updated if the current entity no longer matches the search criteria; otherwise it stays the same. The entity referenced by the entity pointer is used to form the final system response, as described in Section 2.4.

Policy network. The policy network can be viewed as the glue which binds the system modules together. Its output is a single vector $o_t$ representing the system action, and its inputs comprise $z_t$ from the intent network, the belief state $p_s^t$, and the DB truth value vector $x_t$. Since the generation network only generates appropriate sentence forms, the individual probabilities of the categorical values in the informable belief state are immaterial and are summed together to form a summary belief vector $\hat{p}_s^t$ for each slot, represented by three components: the summed value probabilities, the probability that the user said they "don't care" about this slot, and the probability that the slot has not been mentioned. Similarly, for the truth value vector $x_t$, the number of matching entities matters but not their identity. This vector is therefore compressed to a 6-bin 1-hot encoding $\hat{x}_t$, which represents different degrees of matching in the DB (no match, 1 match, ... or more than 5 matches). Finally, the policy network output is generated by a three-way matrix transformation,

$$o_t = \tanh(W_{zo} z_t + W_{po} \hat{p}_t + W_{xo} \hat{x}_t) \tag{8}$$

where matrices $W_{zo}$, $W_{po}$, and $W_{xo}$ are parameters and $\hat{p}_t = \bigoplus_{s \in G} \hat{p}_s^t$ is a concatenation of all summary belief vectors.
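The compression steps, the entity-pointer rule and Equation 8 can be sketched together in pure Python. Everything here is a hypothetical illustration: the function names, the toy dimensions and weights, and the `<none>` / `dontcare` value names are ours. We also assume the six bins are 0, 1, 2, 3, 4 and "5 or more" matches, which is one reading of the bin description above.

```python
import math
import random


def summary_belief(belief, not_mentioned="<none>", dontcare="dontcare"):
    """p_hat_s: [sum of categorical value probs, P(dontcare), P(not mentioned)]."""
    categorical = sum(p for v, p in belief.items() if v not in (not_mentioned, dontcare))
    return [categorical, belief.get(dontcare, 0.0), belief.get(not_mentioned, 0.0)]


def match_bins(x, n_bins=6):
    """x_hat: 1-hot count of matching entities; the last bin collects the rest."""
    one_hot = [0] * n_bins
    one_hot[min(sum(x), n_bins - 1)] = 1
    return one_hot


def update_pointer(pointer, x, rng):
    """Keep the current entity while it still matches, else pick a random match."""
    matches = [i for i, bit in enumerate(x) if bit]
    if not matches:
        return None
    if pointer in matches:
        return pointer
    return rng.choice(matches)


def matvec(W, v):
    return [sum(w * a for w, a in zip(row, v)) for row in W]


def policy(z, p_hat, x_hat, W_zo, W_po, W_xo):
    """Eq. 8: o_t = tanh(W_zo z_t + W_po p_hat_t + W_xo x_hat_t)."""
    parts = zip(matvec(W_zo, z), matvec(W_po, p_hat), matvec(W_xo, x_hat))
    return [math.tanh(a + b + c) for a, b, c in parts]


rng = random.Random(0)
x = [1, 0, 1, 1]                        # truth vector over a toy 4-entity DB
x_hat = match_bins(x)                   # 3 matches -> [0, 0, 0, 1, 0, 0]
pointer = update_pointer(None, x, rng)  # a random index among 0, 2, 3
# With a single slot, p_hat_t is just that slot's summary belief vector.
p_hat = summary_belief({"chinese": 0.6, "indian": 0.2, "dontcare": 0.1, "<none>": 0.1})
z = [0.3, -0.2]                         # stand-in intent vector z_t
W_zo = [[0.5, 0.1], [0.0, 0.4]]
W_po = [[0.2, -0.1, 0.0], [0.1, 0.1, 0.1]]
W_xo = [[0.1] * 6, [-0.1] * 6]
o = policy(z, p_hat, x_hat, W_zo, W_po, W_xo)
```

The compression is what keeps the policy input small and value-independent: whichever cuisine the user asked for, the policy sees only how confident the tracker is and how many entities match.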
2.4 Generation Network

The generation network uses the action vector $o_t$ to condition a language generator (Wen et al., 2015b). This generates template-like sentences token by token based on the language model probabilities,

$$P(w_{j+1}^t \mid w_j^t, h_{j-1}^t, o_t) = \mathrm{LSTM}_j(w_j^t, h_{j-1}^t, o_t) \tag{9}$$

where $\mathrm{LSTM}_j(\cdot)$ is a conditional LSTM operator for one output step $j$, $w_j^t$ is the last output token (i.e. a word, a delexicalised slot name or a delexicalised slot value), and $h_{j-1}^t$ is the hidden layer. Once the output token sequence has been generated, the generic tokens are replaced by their actual values: (1) delexicalised slot tokens are replaced by a surface form sampled at random from a list (e.g. the delexicalised food slot token may become food or type of food), and (2) delexicalised value tokens are replaced by the actual attribute values of the entity currently selected by the DB pointer. This is similar in spirit to the Latent Predictor Network (Ling et al., 2016), where the token generation process is augmented by a set of pointer networks (Vinyals et al., 2015) to transfer entity-specific information into the response.

Attentive Generation Network. Instead of decoding responses directly from a static action vector $o_t$, an attention-based mechanism (Bahdanau et al., 2014; Hermann et al., 2015) can be used to dynamically aggregate source embeddings at each output step $j$. In this work we explore the use of an attention mechanism to combine the tracker belief states, i.e. $o_t$ is computed at each output step $j$ by

$$o_t^{(j)} = \tanh(W_{zo} z_t + \hat{p}_t^{(j)} + W_{xo} \hat{x}_t) \tag{10}$$

where, for a given ontology $G$,

$$\hat{p}_t^{(j)} = \sum_{s \in G} \alpha_s^{(j)} \tanh(W_{po}^s \cdot \hat{p}_s^t) \tag{11}$$

and where the attention weights $\alpha_s^{(j)}$ are calculated by a scoring function,

$$\alpha_s^{(j)} = \mathrm{softmax}\left(r^\top \tanh(W_r \cdot u_t)\right) \tag{12}$$

where $u_t = z_t \oplus \hat{x}_t \oplus \hat{p}_s^t \oplus w_j^t \oplus h_{j-1}^t$, matrix $W_r$ and vector $r$ are parameters to learn, and $w_j^t$ is the embedding of token $w_j^t$.
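Equations 11 and 12 compute a per-slot attention distribution at every output step $j$. The sketch below renders one such step in pure Python under illustrative assumptions: the attention input $u_t$ for each slot is passed in already concatenated (it differs per slot because it contains $\hat{p}_s^t$), all weights are toy values, and the helper names are ours. It returns both the weights $\alpha_s^{(j)}$ and the aggregated vector $\hat{p}_t^{(j)}$ that enters Equation 10.

```python
import math


def matvec(W, v):
    return [sum(w * a for w, a in zip(row, v)) for row in W]


def softmax(scores):
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attend_beliefs(p_hat, u, W_po, W_r, r):
    """One output step j of the attentive generator (Eqs. 11-12).

    p_hat: slot -> summary belief vector p_hat_s^t
    u:     slot -> attention input, concat(z_t, x_hat_t, p_hat_s^t, w_j^t, h_{j-1}^t)
    W_po:  slot -> slot-specific projection matrix W_po^s
    W_r, r: scoring parameters shared across slots
    """
    slots = list(p_hat)
    # Eq. 12: score each slot with r^T tanh(W_r u), then normalise over slots
    scores = [sum(ri * math.tanh(a) for ri, a in zip(r, matvec(W_r, u[s]))) for s in slots]
    alpha = dict(zip(slots, softmax(scores)))
    # Eq. 11: attention-weighted sum of projected summary beliefs
    dim = len(W_po[slots[0]])
    mixed = [0.0] * dim
    for s in slots:
        proj = [math.tanh(a) for a in matvec(W_po[s], p_hat[s])]
        mixed = [m + alpha[s] * pj for m, pj in zip(mixed, proj)]
    return alpha, mixed


p_hat = {"food": [0.9, 0.05, 0.05], "area": [0.1, 0.1, 0.8]}
u = {"food": [1.0, 0.0, 0.9, 0.2], "area": [1.0, 0.0, 0.1, 0.2]}  # toy 4-dim inputs
W_po = {s: [[1.0, 0.0, -1.0], [0.5, 0.5, 0.5]] for s in p_hat}
W_r = [[0.0, 0.0, 2.0, 0.0], [0.0, 0.0, 0.0, 1.0]]
r = [1.0, 1.0]
alpha, mixed = attend_beliefs(p_hat, u, W_po, W_r, r)
```

Because $u_t$ includes the current token embedding and decoder state, the weights can shift from slot to slot as the sentence unfolds, e.g. attending to food while a food phrase is being generated.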
3 Wizard-of-Oz Data Collection

Arguably the greatest bottleneck for statistical approaches to dialogue system development is the collection of appropriate training data, and this is especially true for task-oriented dialogue systems. Serban et al. (2015a) have catalogued existing corpora for developing conversational agents. Such corpora may be useful for bootstrapping, but, for task-oriented dialogue systems, in-domain data is essential⁶. To mitigate this problem, we propose a novel crowdsourcing version of the Wizard-of-Oz (WOZ) paradigm (Kelley, 1984) for collecting domain-specific corpora.

⁶ E.g. technical support for Apple computers may differ completely from that for Windows, due to the many differences in software and hardware.

Based on the given ontology, we designed two webpages on Amazon Mechanical Turk, one for wizards and the other for users (see Figures 4 and 5 for the designs). The users are given a task specifying the characteristics of a particular entity that they must find (e.g. a Chinese restaurant in the north) and asked to type in natural language sentences to fulfil the task. The wizards are given a form to record the information conveyed in the last user turn (e.g. food=Chinese, area=north) and a search table showing all the available matching entities in the database. Note that these forms contain all the labels needed to train the slot-based belief trackers. The table is automatically updated every time the wizard submits new information. Based on the updated table, the wizard types an appropriate system response and the dialogue continues.

In order to enable large-scale parallel data collection and avoid the distracting latencies inherent in conventional WOZ scenarios (Bohus and Rudnicky, 2008), users and wizards are asked to contribute just a single turn to each dialogue.
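The single-turn-per-HIT protocol can be sketched as a small scheduler. This is a hypothetical illustration of the idea, not the authors' Mechanical Turk implementation: the `Dialogue` class, the `busy` flag that stops two workers from writing the same turn, and the function names are our assumptions, and the wizard's slot form (which yields the tracker labels) is omitted. A worker receives the full history of one dialogue, writes exactly one turn in their role, and the dialogue is then free for the other role, so many dialogues advance in parallel and nobody waits for a partner.

```python
from dataclasses import dataclass, field


@dataclass
class Dialogue:
    task: str                                   # the user's goal description
    turns: list = field(default_factory=list)  # (role, text) pairs, user first
    busy: bool = False                          # a HIT currently owns the next turn

    def next_role(self):
        return "user" if len(self.turns) % 2 == 0 else "wizard"


def assign_hit(dialogues, role):
    """Return (dialogue, history) for a free dialogue awaiting `role`, or None."""
    for d in dialogues:
        if not d.busy and d.next_role() == role:
            d.busy = True
            return d, list(d.turns)  # the worker reviews all previous turns
    return None


def submit_turn(dialogue, role, text):
    """Record exactly one turn and release the dialogue for the other role."""
    if not dialogue.busy or dialogue.next_role() != role:
        raise ValueError("turn submitted out of order")
    dialogue.turns.append((role, text))
    dialogue.busy = False


pool = [Dialogue("Chinese restaurant in the north"), Dialogue("cheap Indian restaurant")]
# Two different user workers open both dialogues without waiting for anyone.
for text in ["I want Chinese food in the north.", "Any cheap Indian places?"]:
    d, history = assign_hit(pool, "user")
    submit_turn(d, "user", text)
```

After this loop both dialogues are waiting for a wizard, so the next wizard HIT can pick up either one, while no user HIT is available until a wizard has replied.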
To ensure coherence and consistency, users and wizards must review all previous turns in that dialogue before they contribute their turns. Thus dialogues progress in a pipeline. Many dialogues can be active in parallel and no worker ever has to wait for a response from the other party in the dialogue. Despite the fact that multiple workers contribute to each dialogue, we observe that dialogues are generally coherent yet diverse. Furthermore, this turn-level data collection strategy seems to encourage workers to learn from and correct each other based on previous turns.

In this paper, the system was designed to assist users to find a restaurant in the Cambridge, UK area. There are three informable slots (food, pricerange, area) that users can use to constrain the search and six requestable slots (address, phone, postcode plus the three informable slots) that the user can ask a value for once a restaurant has been offered. There are 99 restaurants in the DB. Based on this domain, we ran 3000 HITs (Human Intelligence Tasks) in total for roughly 3 days and collected 1500 dialogue turns. After cleaning the data, we have approximately 680 dialogues in total (some of them are unfinished). The total cost for collecting the dataset was ∼400 USD.

4 Empirical Experiments

Table 1: Tracker performance in terms of Precision, Recall, and F-1 score.

| Tracker type | Informable Prec. | Informable Recall | Informable F-1 | Requestable Prec. | Requestable Recall | Requestable F-1 |
|---|---|---|---|---|---|---|
| cnn | 99.77% | 96.09% | 97.89% | 98.66% | 93.79% | 96.16% |
| ngram | 99.34% | 94.42% | 96.82% | 98.56% | 90.14% | 94.16% |

Training. Training is divided into two phases. Firstly, the belief tracker parameters $\theta_b$ are trained using the cross-entropy errors between tracker labels $y_s^t$ and predictions $p_s^t$, $L_1(\theta_b) = -\sum_t \sum_s (y_s^t)^\top \log p_s^t$. For the full model, we have three informable trackers (food, pricerange, area) and seven requestable trackers (address, phone, postcode, name, plus the three informable slots).
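The phase-one objective $L_1$ is an ordinary cross-entropy summed over turns and slots. A minimal pure-Python sketch, assuming labels are given as one-hot (or, for requestable slots, two-element binary) lists per slot and predictions as probability lists of the same length; the function name `tracker_loss` is ours. The phase-two objective $L_2$ below has the same form, with output steps $j$ in place of slots $s$.

```python
import math


def tracker_loss(labels, predictions):
    """L1 = -sum_t sum_s (y_s^t)^T log p_s^t.

    labels, predictions: one dict per turn, mapping slot -> list of floats
    (target distribution y_s^t and predicted distribution p_s^t).
    """
    total = 0.0
    for y_turn, p_turn in zip(labels, predictions):
        for slot, y in y_turn.items():
            p = p_turn[slot]
            # Zero-target entries contribute nothing; skipping them avoids log(0).
            total -= sum(yi * math.log(pi) for yi, pi in zip(y, p) if yi > 0)
    return total


labels = [{"food": [1, 0, 0]}, {"food": [0, 1, 0]}]
preds = [{"food": [0.5, 0.25, 0.25]}, {"food": [0.25, 0.5, 0.25]}]
print(tracker_loss(labels, preds))  # 2 * log 2, about 1.386
```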
Having fixed the tracker parameters, the remaining parts of the model $\theta_{\setminus b}$ are trained using the cross-entropy errors from the generation network language model, $L_2(\theta_{\setminus b}) = -\sum_t \sum_j (y_j^t)^\top \log p_j^t$, where $y_j^t$ and $p_j^t$ are the output token targets and predictions, respectively, at output step $j$ of turn $t$. We treated each dialogue as a batch and used stochastic gradient descent with a small $l_2$ regularisation term to train the model. The collected corpus was partitioned into training, validation, and testing sets in the ratio 3:1:1. Early stopping was implemented based on the validation set for regularisation, and gradient clipping was set to 1. All the hidden layer sizes were set to 50, and all the weights, including word embeddings, were randomly initialised between -0.3 and 0.3. The vocabulary size is around 500 for both input and output, in which rare words and words that can be delexicalised are removed. We used three convolutional layers for all the CNNs in this work, and all the filter sizes were set to 3. Pooling operations were only applied after the final convolution layer.

Decoding. In order to decode without length bias, we decoded each system response $m_t$ based on the average log probability of tokens,

$$m_t^* = \operatorname{argmax}_{m_t} \left\{ \log p(m_t \mid \theta, u_t) / J_t \right\} \tag{13}$$

where $\theta$ are the model parameters, $u_t$ is the user input, and $J_t$ is the length of the machine response.

As a contrast, we also investigated the MMI criterion (Li et al., 2016) to increase diversity, and put additional scores on delexicalised tokens to encourage task completion. This weighted decoding strategy has the following objective function,

$$m_t^* = \operatorname{argmax}_{m_t} \left\{ \log p(m_t \mid \theta, u_t) / J_t - \lambda \log p(m_t) / J_t + \gamma R_t \right\} \tag{14}$$

where $\lambda$ and $\gamma$ are weights selected on the validation set and $\log p(m_t)$ can be modelled by a standalone LSTM language model.
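The two decoding criteria differ only in how a list of complete candidate responses is scored. The sketch below reranks candidates with Equation 13 and with Equation 14. The `Candidate` fields and all numbers are hypothetical, $R_t$ is passed in precomputed (it comes from the heuristic described in the next paragraph and in Table 5), and the beam search that would produce the candidates is omitted.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    tokens: list         # generated (delexicalised) tokens, length J_t
    logprob: float       # log p(m_t | theta, u_t) from the conditional generator
    lm_logprob: float    # log p(m_t) from a standalone language model
    reward: float = 0.0  # R_t, the task-specific heuristic score


def average_score(c):
    """Eq. 13: length-normalised log probability."""
    return c.logprob / len(c.tokens)


def weighted_score(c, lam, gamma):
    """Eq. 14: MMI-style penalty on generic responses plus gamma * R_t."""
    J = len(c.tokens)
    return c.logprob / J - lam * c.lm_logprob / J + gamma * c.reward


def rerank(cands, score):
    return max(cands, key=score)


short = Candidate(["sorry", "<eos>"], logprob=-1.8, lm_logprob=-3.0)
longer = Candidate(["<v.name>", "serves", "<v.food>", "<eos>"], logprob=-2.0,
                   lm_logprob=-2.0, reward=0.2)
rerank([short, longer], lambda c: c.logprob)  # unnormalised: picks `short`
rerank([short, longer], average_score)        # -0.9 vs -0.5: picks `longer`
rerank([short, longer], lambda c: weighted_score(c, lam=0.5, gamma=1.0))  # `longer`
```

Without normalisation the shorter, less informative response wins simply because it has fewer log-probability terms; dividing by $J_t$ removes that bias, and the MMI and $R_t$ terms further favour informative responses.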
We used a simple heuristic for the scoring function $R_t$, designed to reward giving appropriate information and to penalise spuriously providing unsolicited information⁷. We applied beam search with a beam width of 10; the search stops when an end-of-sentence token is generated. In order to obtain language variability from the deployed model, we ran decoding until we obtained 5 candidates and randomly sampled one as the system response.

Tracker performance. Table 1 shows the evaluation of the trackers' performance. Due to delexicalisation, both CNN-type trackers and N-gram-type trackers (Henderson et al., 2014) achieve high precision, but the N-gram tracker has worse recall. This result suggests that, compared to simple N-grams, CNN-type trackers can better generalise to sentences with long-distance dependencies and more complex syntactic structures.

Corpus-based evaluation. We evaluated the end-to-end system by first performing a corpus-based evaluation in which the model is used to predict each system response in the held-out test set. Three evaluation metrics were used: BLEU score (on top-1 and top-5 candidates) (Papineni et al., 2002), entity matching rate, and objective task success rate (Su et al., 2015). We calculated the entity matching rate by determining whether the actual selected entity at the end of each dialogue matches the task that was specified to the user. The dialogue is then marked as successful if both (1) the offered entity matches, and (2) the system answered all the associated information requests (e.g. what is the address?) from the user. We computed the BLEU scores on the template-like output sentences before lexicalising with the entity value substitution.

⁷ We give an additional reward if a requestable slot (e.g. address) is requested and its corresponding delexicalised slot or value token is generated. We give an additional penalty if an informable slot is never mentioned (e.g. food=none) but its corresponding delexicalised value token is generated. For more details on scoring, please see Table 5.

Table 2: Performance comparison of different model architectures based on a corpus-based evaluation.

| Block | Encoder | Tracker | Decoder | Match (%) | Success (%) | T5-BLEU | T1-BLEU |
|---|---|---|---|---|---|---|---|
| Baseline | lstm | - | lstm | - | - | 0.1650 | 0.1718 |
| | lstm | turn recurrence | lstm | - | - | 0.1813 | 0.1861 |
| Variant | lstm | rnn-cnn, w/o req. | lstm | 89.70 | 30.60 | 0.1769 | 0.1799 |
| | cnn | rnn-cnn | lstm | 88.82 | 58.52 | 0.2354 | 0.2429 |
| Full model w/ different decoding strategy | lstm | rnn-cnn | lstm | 86.34 | 75.16 | 0.2184 | 0.2313 |
| | lstm | rnn-cnn | + weighted | 86.04 | 78.40 | 0.2222 | 0.2280 |
| | lstm | rnn-cnn | + att. | 90.88 | 80.02 | 0.2286 | 0.2388 |
| | lstm | rnn-cnn | + att. + weighted | 90.88 | 83.82 | 0.2304 | 0.2369 |

Table 2 shows the result of the corpus-based evaluation averaged over 5 randomly initialised networks. The Baseline block shows two baseline models: the first is a simple turn-level sequence-to-sequence model (Sutskever et al., 2014), while the second introduces an additional recurrence to model the dependency on the dialogue history, following Serban et al. (2015b). As can be seen, incorporating the recurrence improves the BLEU score. However, baseline task success and matching rates cannot be computed since these models make no provision for a database.

The Variant block of Table 2 shows two variants of the proposed end-to-end model. For the first one, no requestable trackers were used, only informable trackers. Hence, the burden of modelling user requests falls on the intent network alone. We found that without explicitly modelling user requests, the model performs very poorly on task completion (∼30%), even though it can offer the correct entity most of the time (∼90%). More data may help here; however, we found that the incorporation of an explicit internal semantic representation in the full model (shown below) is more efficient and extremely effective.
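The gap between the matching rate (∼90%) and the success rate (∼30%) of the first variant follows from how the two corpus-level metrics are defined: success additionally requires every user request to be answered. A minimal sketch of both metrics, assuming each test dialogue is summarised by its task constraints, the entity offered at the end, and the sets of requested and answered slots (these field and function names are hypothetical).

```python
def entity_match(offered, constraints):
    """True if the finally offered entity satisfies every task constraint."""
    return offered is not None and all(offered.get(s) == v for s, v in constraints.items())


def task_success(offered, constraints, requested, answered):
    """Success = matching entity AND every requested slot was answered."""
    return entity_match(offered, constraints) and set(requested) <= set(answered)


def corpus_rates(dialogues):
    """Return (match rate, success rate) in percent over a list of dialogue dicts."""
    n = len(dialogues)
    matches = sum(entity_match(d["offered"], d["constraints"]) for d in dialogues)
    successes = sum(task_success(d["offered"], d["constraints"], d["requested"], d["answered"])
                    for d in dialogues)
    return 100.0 * matches / n, 100.0 * successes / n


test_set = [
    {"constraints": {"food": "chinese", "area": "north"},
     "offered": {"name": "x", "food": "chinese", "area": "north"},
     "requested": {"address"}, "answered": {"address"}},
    {"constraints": {"food": "indian"},
     "offered": {"name": "y", "food": "indian", "area": "south"},
     "requested": {"phone", "postcode"}, "answered": {"phone"}},  # matched, not successful
]
print(corpus_rates(test_set))  # (100.0, 50.0)
```

A model that always offers the right entity but never produces the requested address or phone token therefore scores a high match rate and a low success rate, which is the pattern of the variant without requestable trackers.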
For the second variant, the LSTM intent network is replaced by a CNN. This achieves a very competitive BLEU score, but task success is still quite poor (∼58% success). We think this is because the CNN encodes the intent by capturing several local features but lacks a global view of the sentence, which may easily lead to unexpected overfitting.

The Full model block shows the performance of the proposed model with different decoding strategies. The first row shows the result of decoding using the average likelihood term (Equation 13), while the second row uses the weighted decoding strategy (Equation 14). As can be seen, the weighted decoding strategy does not provide a significant improvement in BLEU score, but it does improve the task success rate by ∼3 percentage points. The $R_t$ term contributes the most to this improvement because it injects additional task-specific information during decoding. Despite this, the most effective and elegant way to improve the performance is to use the attention-based mechanism (+ att.) to dynamically aggregate the tracker beliefs (Section 2.4). It gives a slight improvement in BLEU score (∼0.01) and a big gain in task success (∼5 percentage points). Finally, we can improve further by incorporating weighted decoding with the attention models (+ att. + weighted).

As an aside, we used t-SNE (van der Maaten and Hinton, 2008) to produce a reduced-dimension view of the action embeddings $o_t$, plotted and labelled by the first three generated output words (full model w/o attention). The result is shown in Figure 3. We can see clear clusters based on the system intent types, even though we did not explicitly model them using dialogue acts.

Human evaluation. In order to assess operational performance, we tested our model using paid subjects recruited via Amazon Mechanical Turk. Each judge was asked to follow a given task and to rate the model's performance.
We assessed the subjective success rate, and the perceived comprehension ability and naturalness of response on a scale of 1 to 5. The full model with attention and weighted decoding was used, and the system was tested on a total of 245 dialogues. As can be seen in Table 3, the average subjective success rate was 98%, which means the system was able to complete the vast majority of tasks. Moreover, the comprehension ability and naturalness scores both averaged more than 4 out of 5. (See Appendix for some sample dialogues in this trial.)

Figure 3: The action vector embedding $o_t$ generated by the NN model w/o attention. Each cluster is labelled with the first three words the embedding generated.

Table 3: Human assessment of the NN system. The ratings for comprehension/naturalness are both out of 5.

| Metric | NN |
|---|---|
| Success | 98% |
| Comprehension | 4.11 |
| Naturalness | 4.05 |
| # of dialogues | 245 |

We also ran comparisons between the NN model and a handcrafted, modular baseline system (HDC) consisting of a handcrafted semantic parser, rule-based policy and belief tracker, and a template-based generator. The result can be seen in Table 4. The HDC system achieved a ∼95% task success rate, which suggests that it is a strong baseline even though most of the components were hand-engineered. Over the 164 dialogues tested, the NN system (NN) was considered better than the handcrafted system (HDC) on all the metrics compared. Although both systems achieved similar success rates, the NN system was more efficient and provided a more engaging conversation (lower turn number and higher preference). Moreover, the comprehension ability and naturalness of the NN system were also rated higher, which suggests that the learned system was perceived as being more natural than the hand-designed system.

5 Conclusions and Future Work

This paper has presented a novel neural network-based framework for task-oriented dialogue systems.
The model is end-to-end trainable using two supervision signals and a modest corpus of training data. The paper has also presented a novel crowdsourced data collection framework inspired by the Wizard-of-Oz paradigm. We demonstrated that the pipelined parallel organisation of this collection framework enables good quality task-oriented dialogue data to be collected quickly at modest cost. The experimental assessment of the NN dialogue system showed that the learned model can interact efficiently and naturally with human subjects to complete an application-specific task. To the best of our knowledge, this is the first end-to-end NN-based model that can conduct meaningful dialogues in a task-oriented application.

However, there is still much work left to do. Our current model is a text-based dialogue system, which can neither directly handle noisy speech recognition inputs nor ask the user for confirmation when it is uncertain. Indeed, the extent to which this type of model can be scaled to much larger and wider domains remains an open question, which we hope to pursue in our further work.

Table 4: A comparison of the NN system with a rule-based modular system (HDC).

| Metric | NN | HDC | Tie |
|---|---|---|---|
| Subj. Success | 96.95% | 95.12% | - |
| Avg. # of Turns | 3.95 | 4.54 | - |
| Comparisons (%) | | | |
| Naturalness | 46.95* | 25.61 | 27.44 |
| Comprehension | 45.12* | 21.95 | 32.93 |
| Preference | 50.00* | 24.39 | 25.61 |
| Performance | 43.90* | 25.61 | 30.49 |

Entries marked * are significant at p < 0.005; # of comparisons: 164.

Wizard-of-Oz data collection websites

Figure 4: The user webpage. The worker who plays a user is given a task to follow. For each MTurk HIT, he/she needs to type in an appropriate sentence to carry on the dialogue by looking at both the task description and the dialogue history.

Figure 5: The wizard page.
The wizard’s job is slightly more complex: the worker needs to go through the dialogue history, fill in the form (top green) by interpreting the user input at this turn, and type in an appropriate response based on the history and the DB result (bottom green). The DB search result is updated when the form is submitted. The form can be divided into informable slots (top) and requestable slots (bottom), which contain all the labels we need to train the trackers.

Scoring Table

Table 5: Additional $R_t$ term for delexicalised tokens when using weighted decoding (Equation 14). "Not observed" means that the corresponding tracker assigns its highest probability to either the not mentioned or the dontcare value, while "observed" means that the highest probability is on one of the categorical values. A positive score encourages the generation of that token, while a negative score discourages it.

| Delexicalised token | Examples | $R_t$ (observed) | $R_t$ (not observed) |
|---|---|---|---|
| informable slot token | … | 0.0 | 0.0 |
| informable value token | … | +0.05 | -0.5 |
| requestable slot token | … | +0.2 | 0.0 |
| requestable value token | … | +0.2 | 0.0 |

Acknowledgements

Tsung-Hsien Wen and David Vandyke are supported by Toshiba Research Europe Ltd, Cambridge. The authors would like to thank Ryan Lowe and Lukáš Žilka for their valuable comments.

References

[Bahdanau et al.2014] Dzmitry Bahdanau, Kyunghyun Cho, and Yoshua Bengio. 2014. Neural machine translation by jointly learning to align and translate. arXiv preprint:1409.0473.
[Berant et al.2013] Jonathan Berant, Andrew Chou, Roy Frostig, and Percy Liang. 2013. Semantic parsing on Freebase from question-answer pairs. In EMNLP, pages 1533–1544, Seattle, Washington, USA. ACL.
[Bohus and Rudnicky2008] Dan Bohus and Alexander I. Rudnicky. 2008. Sorry, I Didn’t Catch That!, pages 123–154. Springer Netherlands, Dordrecht.
[Cho et al.2014] Kyunghyun Cho, Bart van Merrienboer, Caglar Gulcehre, Dzmitry Bahdanau, Fethi Bougares, Holger Schwenk, and Yoshua Bengio. 2014. Learning phrase representations using RNN encoder–decoder for statistical machine translation. In EMNLP, pages 1724–1734, Doha, Qatar, October. ACL.
[der Maaten and Hinton2008] Laurens Van der Maaten and Geoffrey Hinton. 2008. Visualizing Data using t-SNE. JMLR.
[Gašić et al.2013] Milica Gašić, Catherine Breslin, Matthew Henderson, Dongho Kim, Martin Szummer, Blaise Thomson, Pirros Tsiakoulis, and Steve Young. 2013. On-line policy optimisation of Bayesian spoken dialogue systems via human interaction. In ICASSP, pages 8367–8371, May.
[Henderson et al.2014] Matthew Henderson, Blaise Thomson, and Steve Young. 2014. Word-based dialog state tracking with recurrent neural networks. In SIGDIAL, pages 292–299, Philadelphia, PA, USA, June. ACL.
[Henderson2015] Matthew Henderson. 2015. Machine learning for dialog state tracking: A review. In Machine Learning in Spoken Language Processing Workshop.
[Hermann et al.2015] Karl Moritz Hermann, Tomáš Kočiský, Edward Grefenstette, Lasse Espeholt, Will Kay, Mustafa Suleyman, and Phil Blunsom. 2015. Teaching machines to read and comprehend. In NIPS, pages 1693–1701, Montreal, Canada. MIT Press.
[Hochreiter and Schmidhuber1997] Sepp Hochreiter and Jürgen Schmidhuber. 1997. Long short-term memory. Neural Computation, 9(8):1735–1780, November.
[Jordan1989] Michael I. Jordan. 1989. Serial order: A parallel, distributed processing approach. In Advances in Connectionist Theory: Speech. Lawrence Erlbaum Associates.
[Kalchbrenner et al.2014] Nal Kalchbrenner, Edward Grefenstette, and Phil Blunsom. 2014. A convolutional neural network for modelling sentences. In ACL, pages 655–665, Baltimore, Maryland, June. ACL.
[Kelley1984] John F. Kelley. 1984. An iterative design methodology for user-friendly natural language office information applications. ACM Transaction on Information Systems.
[Kim2014] Yoon Kim. 2014. Convolutional neural networks for sentence classification. In EMNLP, pages 1746–1751, Doha, Qatar, October. ACL.
[Li et al.2016] Jiwei Li, Michel Galley, Chris Brockett, Jianfeng Gao, and Bill Dolan. 2016. A diversity-promoting objective function for neural conversation models. In NAACL-HLT, pages 110–119, San Diego, California, June. ACL.
[Ling et al.2016] Wang Ling, Phil Blunsom, Edward Grefenstette, Karl Moritz Hermann, Tomáš Kočiský, Fumin Wang, and Andrew Senior. 2016. Latent predictor networks for code generation. In ACL, pages 599–609, Berlin, Germany, August. ACL.
[Mikolov et al.2010] Tomáš Mikolov, Martin Karafiat, Lukáš Burget, Jan Černocký, and Sanjeev Khudanpur. 2010. Recurrent neural network based language model. In Interspeech, pages 1045–1048, Makuhari, Japan. ISCA.
[Mrkšić et al.2015] Nikola Mrkšić, Diarmuid Ó Séaghdha, Blaise Thomson, Milica Gašić, Pei-Hao Su, David Vandyke, Tsung-Hsien Wen, and Steve Young. 2015. Multi-domain dialog state tracking using recurrent neural networks. In ACL, pages 794–799, Beijing, China, July. ACL.
[Mrkšić et al.2016] Nikola Mrkšić, Diarmuid Ó Séaghdha, Tsung-Hsien Wen, Blaise Thomson, and Steve Young. 2016. Neural belief tracker: Data-driven dialogue state tracking. arXiv preprint:1606.03777.
[Papineni et al.2002] Kishore Papineni, Salim Roukos, Todd Ward, and Wei-Jing Zhu. 2002. Bleu: A method for automatic evaluation of machine translation. In ACL, pages 311–318, Stroudsburg, PA, USA. ACL.
[Serban et al.2015a] Iulian Vlad Serban, Ryan Lowe, Laurent Charlin, and Joelle Pineau. 2015a. A survey of available corpora for building data-driven dialogue systems. arXiv preprint:1512.05742.
[Serban et al.2015b] Iulian Vlad Serban, Alessandro Sordoni, Yoshua Bengio, Aaron C. Courville, and Joelle Pineau. 2015b. Hierarchical neural network generative models for movie dialogues. arXiv preprint:1507.04808.
[Shang et al.2015] Lifeng Shang, Zhengdong Lu, and Hang Li. 2015. Neural responding machine for short-text conversation. In ACL, pages 1577–1586, Beijing, China, July. ACL.
[Su et al.2015] Pei-Hao Su, David Vandyke, Milica Gasic, Dongho Kim, Nikola Mrksic, Tsung-Hsien Wen, and Steve J. Young. 2015. Learning from real users: rating dialogue success with neural networks for reinforcement learning in spoken dialogue systems. In Interspeech, pages 2007–2011, Dresden, Germany. ISCA.
[Su et al.2016] Pei-Hao Su, Milica Gasic, Nikola Mrkšić, Lina M. Rojas Barahona, Stefan Ultes, David Vandyke, Tsung-Hsien Wen, and Steve Young. 2016. On-line active reward learning for policy optimisation in spoken dialogue systems. In ACL, pages 2431–2441, Berlin, Germany, August. ACL.
[Sukhbaatar et al.2015] Sainbayar Sukhbaatar, Arthur Szlam, Jason Weston, and Rob Fergus. 2015. End-to-end memory networks. In NIPS, pages 2440–2448. Curran Associates, Inc., Montreal, Canada.
[Sutskever et al.2014] Ilya Sutskever, Oriol Vinyals, and Quoc V. Le. 2014. Sequence to sequence learning with neural networks. In NIPS, pages 3104–3112, Montreal, Canada. MIT Press.
[Traum1999] David R. Traum. 1999. Foundations of Rational Agency, chapter Speech Acts for Dialogue Agents. Springer.
[Vinyals and Le2015] Oriol Vinyals and Quoc V. Le. 2015. A neural conversational model. In ICML Deep Learning Workshop, Lille, France.
[Vinyals et al.2015] Oriol Vinyals, Meire Fortunato, and Navdeep Jaitly. 2015. Pointer networks. In NIPS, pages 2692–2700, Montreal, Canada. Curran Associates, Inc.
[Wen et al.2013] Tsung-Hsien Wen, Aaron Heidel, Hung-yi Lee, Yu Tsao, and Lin-Shan Lee. 2013. Recurrent neural network based language model personalization by social network crowdsourcing. In Interspeech, pages 2007–2011, Lyon, France. ISCA.
[Wen et al.2015a] Tsung-Hsien Wen, Milica Gašić, Dongho Kim, Nikola Mrkšić, Pei-Hao Su, David Vandyke, and Steve Young. 2015a. Stochastic language generation in dialogue using recurrent neural networks with convolutional sentence reranking. In SIGdial, pages 275–284, Prague, Czech Republic, September. ACL.
[Wen et al.2015b] Tsung-Hsien Wen, Milica Gašić, Nikola Mrkšić, Pei-Hao Su, David Vandyke, and Steve Young. 2015b. Semantically conditioned LSTM-based natural language generation for spoken dialogue systems. In EMNLP, pages 1711–1721, Lisbon, Portugal, September. ACL.
[Wen et al.2016] Tsung-Hsien Wen, Milica Gašić, Nikola Mrkšić, Lina M. Rojas-Barahona, Pei-Hao Su, David Vandyke, and Steve Young. 2016. Multi-domain neural network language generation for spoken dialogue systems. In NAACL-HLT, pages 120–129, San Diego, California, June. ACL.
[Yao et al.2014] Kaisheng Yao, Baolin Peng, Yu Zhang, Dong Yu, Geoffrey Zweig, and Yangyang Shi. 2014. Spoken language understanding using long short-term memory neural networks. In IEEE SLT, pages 189–194, December.
[Yin et al.2015] Pengcheng Yin, Zhengdong Lu, Hang Li, and Ben Kao. 2015. Neural enquirer: Learning to query tables. arXiv preprint:1512.00965.
[Young et al.2010] Steve Young, Milica Gašić, Simon Keizer, François Mairesse, Jost Schatzmann, Blaise Thomson, and Kai Yu. 2010. The hidden information state model: A practical framework for POMDP-based spoken dialogue management. Computer, Speech and Language.
[Young et al.2013] Steve Young, Milica Gašić, Blaise Thomson, and Jason D. Williams. 2013. POMDP-based statistical spoken dialog systems: A review. Proceedings of the IEEE.

Appendix: Sample dialogues

Table 6: Some samples of real conversational logs between online judges and the end-to-end system.
Human: Restaurant in any area that serves Vietnamese food
Machine: thanh binh serves vietnamese food type , is there anything else i can help you with ?
Human: What is the phone number and post code
Machine: the number is 01223 362456 and the area code is c.b 3, 0 a.f . is there anything else i can do for you ?
Human: Good bye
Machine: thank you for using the cambridge restaurant system .

H: i am looking for moderately priced halal food
Machine: i am sorry , there are no halal restaurants in the moderate price range . would you like something else ?
Human: how about european food
Machine: galleria serves european food type and is in the moderate price range .
Human: address and postcode
Machine: the address is 33 bridge street and the area code is c.b 2, 1 u.w . is there anything else i can help you with today ?
Human: thanks
Machine: galleria is a european restaurant in the moderate price range . would you like their phone ?
Human: thank you
Machine: thank you for using the cambridge restaurant system . good bye

H: Hi, I’m hungry for some Indonesian food
Machine: i am sorry , there are no indonesian restaurants . would you like a different food ?
Human: Oh no. How about Indian?
Machine: there are 9 restaurants serving indian types of food . do you have a preference on side of town ?
Human: East side please
Machine: sitar tandoori is a indian restaurant in the east side of town . would you like their information ?
Human: Yes, the address and phone number please
Machine: sitar tandoori is located at 43 high street cherry hinton cherry hinton and their phone number is 01223 249955 .
Human: Okay, thank you
Machine: thank you , goodbye .

H: hello
Machine: what cuisine would you like ?
Human: afghan
Machine: i am sorry , there are no afghan restaurants . would you like something else ?
Human: korean
Machine: little seoul serves korean food , it is located at 108 regent street city centre .
Human: is it expensive?
Machine: little seoul serves korean food and is in the expensive price range .
Human: what area is that
Machine: little seoul is located in the centre area of town . would you like their number ?
Human: yes
Machine: the telephone number is 01223 308681 . is there anything else i can help you with today ?
Human: no goodbye
Machine: thank you for using the cambridge restaurant system , goodbye .

H: I want to find a restaurant in the east part of town that serves creative food
Machine: i am sorry , there are no creative restaurants in the east side of town . would you like a different restaurant ?
Human: how about indian food?
Machine: curry prince is a indian restaurant in the east of town . would you like their phone number ?
Human: yes, may i have the address and phone number?
Machine: curry prince is located at 451 newmarket road fen ditton and their phone is 01223 566388 . is there anything else i can help you with today ?
Human: no, thank you goodbye
Machine: thank you for using the cambridge restaurant system . good bye

Machine: what food are you interested ?
Human: english
Machine: i am sorry , there are no english restaurants . would you like to try a different food type ?
Human: chinese
Machine: there are 8 restaurants serving chinese type of cuisine . do you have a preference of area ?
Human: south part
Machine: the good luck chinese food takeaway is a chinese restaurant in the south of town . would you like the phone number ?
Human: no. just address and price range
Machine: the good luck chinese food takeaway is located at 82 cherry hinton road cherry hinton . is there anything else i can help you with ?
Human: what is their price range?
Machine: the good luck chinese food takeaway is expensively priced chinese restaurant in the south area . would you like more information ?
Human: no goodbye
Machine: thank you for using the cambridge restaurant system . good bye .

H: Is there a gastropub anywhere around here?
Machine: there are 3 gastropub restaurants . would you like more information ?
Human: Is there one on the west side?
Machine: i am sorry , there are no gastropub restaurants in the west side of town . would you like something else ?
Human: How about east?
Machine: royal standard is a gastropub restaurant in the east of town .
Human: What’s the address?
Machine: the address is 290 mill road city centre .
Human: Thanks, bye
Machine: thank you for using the cambridge restaurant system . good bye
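To make the weighted-decoding scores in Table 5 concrete, the following is a minimal Python sketch of how they could be applied at a single greedy decoding step. It is not the authors' implementation: it assumes that the $R_t$ term is simply added to each token's log-probability (Equation 14 is not reproduced in this section, so the exact combination may differ), and the token strings such as `[value:food]`, the dictionary layout of the tracker beliefs, and the names `is_observed`, `r_t` and `pick_next_token` are all hypothetical. The "observed" test follows the Table 5 caption: a slot counts as observed when its tracker's most probable value is neither not mentioned nor dontcare.

```python
import math

# R_t scores transcribed from Table 5: category -> (observed, not observed).
R_T_TABLE = {
    "informable_slot": (0.0, 0.0),
    "informable_value": (0.05, -0.5),
    "requestable_slot": (0.2, 0.0),
    "requestable_value": (0.2, 0.0),
}

# Tracker values that make a slot count as "not observed" (Table 5 caption).
NOT_OBSERVED = {"not mentioned", "dontcare"}


def is_observed(belief):
    """True if the tracker's most probable value is a categorical value."""
    top_value = max(belief, key=belief.get)
    return top_value not in NOT_OBSERVED


def r_t(category, belief):
    """Return the Table 5 score for a token category given its slot belief."""
    observed_score, not_observed_score = R_T_TABLE[category]
    return observed_score if is_observed(belief) else not_observed_score


def pick_next_token(log_probs, token_info, beliefs):
    """One greedy step choosing the argmax of log p(w) + R_t(w).

    Assumption: R_t is added to the log-probability; the paper's
    Equation 14 may combine the two differently.

    log_probs:  {token: decoder log-probability}
    token_info: {delexicalised token: (category, slot)}; plain words absent
    beliefs:    {slot: {value: probability}} produced by the trackers
    """
    def score(token):
        info = token_info.get(token)
        bonus = 0.0 if info is None else r_t(info[0], beliefs[info[1]])
        return log_probs[token] + bonus

    return max(log_probs, key=score)


# Hypothetical tokens and beliefs: the food tracker is confident, area is not.
beliefs = {
    "food": {"indian": 0.7, "not mentioned": 0.2, "dontcare": 0.1},
    "area": {"not mentioned": 0.8, "east": 0.2},
}
token_info = {
    "[value:food]": ("informable_value", "food"),
    "[value:area]": ("informable_value", "area"),
}
log_probs = {
    "[value:area]": math.log(0.40),
    "[value:food]": math.log(0.35),
    "restaurant": math.log(0.25),
}
print(pick_next_token(log_probs, token_info, beliefs))  # prints [value:food]
```

In this toy example the decoder alone would prefer `[value:area]`, but the area tracker's most probable value is not mentioned, so that token receives the -0.5 penalty, while `[value:food]` receives +0.05 and wins. In this sketch the bonus depends only on the token's category and the current tracker state, so it acts purely at decoding time.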
