A randomized primal distributed algorithm for partitioned and big-data non-convex optimization

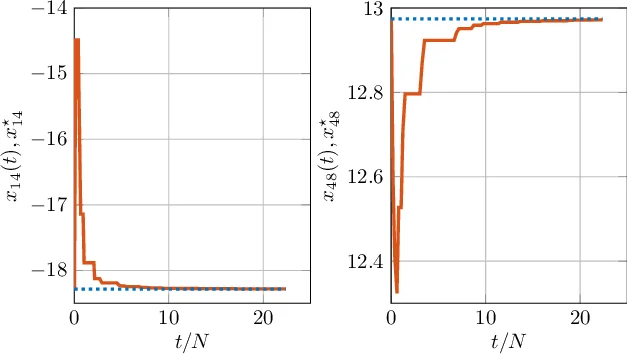

In this paper we consider a distributed optimization scenario in which the aggregate objective function to be minimized is partitioned, big-data and possibly non-convex. Specifically, we focus on a set-up in which the dimension of the decision variable depends on the network size as well as on the number of local functions, but each local function handled by a node depends only on a (small) portion of the entire optimization variable. This problem set-up has been shown to appear in many interesting network application scenarios. As the main contribution of the paper, we develop a simple, primal distributed algorithm to solve the optimization problem, based on a randomized descent approach, which works under asynchronous gossip communication. We prove that the proposed asynchronous algorithm is a proper, ad-hoc version of a coordinate descent method and thus converges to a stationary point. To show the effectiveness of the proposed algorithm, we also present numerical simulations on a non-convex quadratic program, which confirm the theoretical results.

💡 Research Summary

The paper addresses a distributed optimization problem where the global decision variable grows with the number of agents (big-data) and each agent’s local objective depends only on a small subset of the variables (partitioned structure). Formally, the variable \(x\in\mathbb{R}^n\) is split into blocks \(x_i\) (one per node). Each node \(i\) knows a smooth function \(f_i(x_{\mathcal N_i})\) that depends on its own block and those of its neighbors \(\mathcal N_i\) in a fixed undirected communication graph, and a possibly nonsmooth convex regularizer \(g_i(x_i)\). The overall problem is

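\[
\min_{x\in\mathbb{R}^n} \; \sum_{i} f_i(x_{\mathcal N_i}) + \sum_{i} g_i(x_i),
\]

where both sums run over all nodes of the network.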
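To make the partitioned structure concrete, the following is a minimal Python sketch of a randomized block proximal-gradient iteration on a small instance of such a problem. It is an illustration under stated assumptions, not the paper's algorithm or experiment: the ring graph, the scalar blocks, the indefinite quadratic choice of each \(f_i\), the box indicator used as \(g_i\), and all function names (`make_ring`, `make_problem`, `block_gradient`, `block_curvature`, `randomized_block_prox_gradient`) are hypothetical choices made here. At each iteration one randomly chosen node "wakes up" and updates only its own block, using only the local functions of its neighbors.

```python
import numpy as np

def make_ring(num_nodes):
    """Neighbor sets N_i (each including i itself) on an undirected ring (assumed topology)."""
    return [sorted({(i - 1) % num_nodes, i, (i + 1) % num_nodes})
            for i in range(num_nodes)]

def make_problem(num_nodes, rng):
    """Random local quadratics f_i(z) = 0.5 z'Q_i z + r_i'z with z = x_{N_i}."""
    nbrs = make_ring(num_nodes)
    Q, r = [], []
    for nb in nbrs:
        A = rng.standard_normal((len(nb), len(nb)))
        Q.append(0.5 * (A + A.T))            # symmetric, generally indefinite
        r.append(rng.standard_normal(len(nb)))
    return nbrs, Q, r

def objective(x, nbrs, Q, r):
    """Smooth part sum_i f_i(x_{N_i}); g_i is the indicator of the box [-1, 1]."""
    return sum(0.5 * x[nb] @ Qi @ x[nb] + ri @ x[nb]
               for nb, Qi, ri in zip(nbrs, Q, r))

def block_gradient(i, x, nbrs, Q, r):
    """Partial derivative of the smooth part w.r.t. x_i.

    Only the functions f_j with j in N_i depend on x_i, so node i needs
    information from its neighbors only.
    """
    grad = 0.0
    for j in nbrs[i]:
        nb = nbrs[j]
        k = nb.index(i)                      # position of x_i inside x_{N_j}
        grad += Q[j][k] @ x[nb] + r[j][k]
    return grad

def block_curvature(i, nbrs, Q):
    """Bound on the curvature along x_i (exact here, since the f_j are quadratic)."""
    c = sum(Q[j][nbrs[j].index(i), nbrs[j].index(i)] for j in nbrs[i])
    return max(abs(c), 1e-3)                 # floor keeps the step size finite

def randomized_block_prox_gradient(num_nodes=10, iters=2000, seed=0):
    rng = np.random.default_rng(seed)
    nbrs, Q, r = make_problem(num_nodes, rng)
    x = np.zeros(num_nodes)
    history = [objective(x, nbrs, Q, r)]
    for _ in range(iters):
        i = int(rng.integers(num_nodes))     # node that wakes up
        step = 1.0 / block_curvature(i, nbrs, Q)
        # Proximal step: for a box indicator the prox is a projection (clip).
        x[i] = np.clip(x[i] - step * block_gradient(i, x, nbrs, Q, r), -1.0, 1.0)
        history.append(objective(x, nbrs, Q, r))
    return x, np.array(history)

if __name__ == "__main__":
    x, history = randomized_block_prox_gradient()
    print("initial cost:", history[0], "final cost:", history[-1])
```

With the step size set to the inverse of the block curvature bound, each single-block update cannot increase the cost in this sketch, even though the quadratics are indefinite; the box constraint keeps the problem bounded below. A real asynchronous gossip implementation would replace the central loop with local timers and neighbor-to-neighbor messages.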
