Distributed Random-Fixed Projected Algorithm for Constrained Optimization Over Digraphs

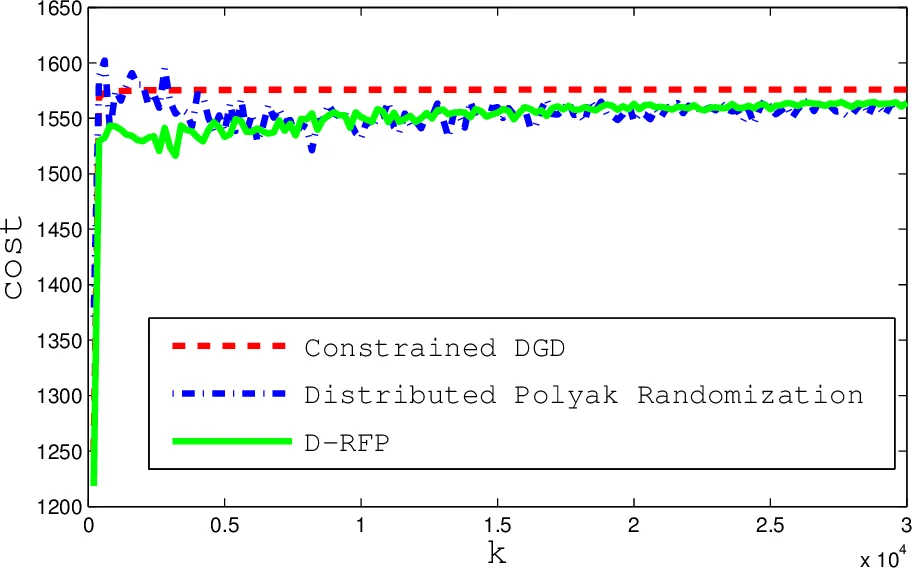

This paper is concerned with a constrained optimization problem over a directed graph (digraph) of nodes, in which the cost function is a sum of local objectives, and each node only knows its local objective and constraints. To collaboratively solve the optimization, most of the existing works require the interaction graph to be balanced or “doubly-stochastic”, which is quite restrictive and not necessary as shown in this paper. We focus on an epigraph form of the original optimization to resolve the “unbalanced” problem, and design a novel two-step recursive algorithm with a simple structure. Under strongly connected digraphs, we prove that each node asymptotically converges to some common optimal solution. Finally, simulations are performed to illustrate the effectiveness of the proposed algorithms.

💡 Research Summary

This paper addresses the problem of distributed constrained optimization over directed graphs (digraphs) where each node possesses only its local objective function and local constraints. Existing distributed algorithms typically require the communication graph to be balanced or doubly‑stochastic, which is a restrictive assumption for many real‑world networks. The authors overcome this limitation by reformulating the original problem into an epigraph form. By introducing an auxiliary vector t and the constraints f_i(x) ≤ t_i, the objective becomes a linear function (1/n) · 1ᵀt, which is identical for all agents. This transformation eliminates the influence of the Perron vector that otherwise skews the effective objective on unbalanced graphs.
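The reformulation described above can be written out as follows. This is a sketch of the standard epigraph construction, not a transcription of the paper's equations: X^i (the feasible set of node i) and π (the Perron vector of the weighting matrix) are notation assumed here for illustration.

```latex
\begin{aligned}
\text{original:}\quad
  & \min_{x}\ \sum_{i=1}^{n} f_i(x)
  && \text{s.t. } x \in X^i,\quad i = 1,\dots,n, \\
\text{epigraph form:}\quad
  & \min_{x,\,t}\ \frac{1}{n}\,\mathbf{1}^{\top} t
  && \text{s.t. } f_i(x) \le t_i,\ \ x \in X^i,\quad i = 1,\dots,n.
\end{aligned}
```

At an optimum each t_i equals f_i(x), so both problems share the same minimizers in x. The benefit on unbalanced graphs is that every node now holds the same linear cost c(x, t) = (1/n) · 1ᵀt. A consensus scheme that implicitly weights node i by π_i, with Σ_i π_i = 1, then minimizes Σ_i π_i c(x, t) = c(x, t), whereas applied to the original form it would minimize the skewed sum Σ_i π_i f_i(x).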

The proposed algorithm, named Distributed Random‑Fixed Projected (DRFP), proceeds in two recursive steps. In the first step, each node computes weighted averages of its neighbors’ current estimates using a row‑stochastic weighting matrix A, yielding intermediate variables p_k^j (for t) and y_k^j (for x). This is essentially a standard distributed sub‑gradient descent applied to the epigraph‑transformed problem; because the objective is now linear and common to all agents, the unbalanced nature of the graph does not affect convergence.
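To illustrate why row‑stochastic weights matter, the following minimal sketch runs plain averaging on a small unbalanced digraph. The 3‑node matrix `A`, the initial values, and the helper names `consensus_step` and `perron_vector` are hypothetical and chosen only for this example; the sketch shows the averaging part alone, not the full DRFP update.

```python
def consensus_step(A, values):
    """Node j replaces its value with sum_i A[j][i] * values[i]."""
    return [sum(a * v for a, v in zip(row, values)) for row in A]


def perron_vector(A, iters=500):
    """Approximate pi with pi^T A = pi^T and sum(pi) = 1 by power iteration."""
    n = len(A)
    pi = [1.0 / n] * n
    for _ in range(iters):
        pi = [sum(pi[i] * A[i][j] for i in range(n)) for j in range(n)]
    return pi


# Hypothetical weights: every row sums to 1, but the columns do not,
# so the digraph is strongly connected yet unbalanced.
A = [
    [0.50, 0.50, 0.00],
    [0.00, 0.50, 0.50],
    [0.25, 0.25, 0.50],
]

values = [1.0, 0.0, 0.0]  # initial local estimates
for _ in range(200):
    values = consensus_step(A, values)

pi = perron_vector(A)
print("consensus value:", values)
print("Perron vector:  ", pi)
print("plain average:  ", 1.0 / 3.0)
```

The nodes agree on the Perron‑weighted average of the initial values rather than the plain average. The same weighting would distort a sum of distinct local objectives, but it leaves a cost unchanged when that cost is identical at every node.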

In the second step, the algorithm enforces feasibility with respect to the local convex constraints. Each node randomly selects one of its local constraint sets X_ℓ^j and performs a Polyak‑type random projection:

z_k^j = y_k^j − β · ( [g_{ω_k^j}^j(y_k^j)]_+ / ‖u_k^j‖² ) · u_k^j,

where β∈(0,2) is a stepsize, g_{ω_k^j}^j is the chosen constraint function, and u_k^j is a sub‑gradient of its positive part. This operation guarantees a non‑increasing expected distance to the selected constraint set. A subsequent “fixed‑direction” correction updates both x and t to satisfy the epigraph constraint f_j(x) ≤ t_j:

x_{k+1}^j = Π_X
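The random projection step described above can be sketched in isolation as follows. This is a generic Polyak‑type step under stated assumptions, not the paper's implementation: the half‑space constraints, the starting point, and the helper names `halfspace` and `polyak_step` are hypothetical. In the full algorithm the step would be interleaved with the averaging step rather than repeated on its own.

```python
import random


def halfspace(a, b):
    """Constraint g(x) = a.x - b <= 0; a is a sub-gradient of its positive part
    wherever the constraint is violated."""
    def g(x):
        return sum(ai * xi for ai, xi in zip(a, x)) - b

    def subgrad(x):
        return list(a)

    return g, subgrad


def polyak_step(y, g, subgrad, beta=1.0):
    """Move y toward {x : g(x) <= 0}; beta is assumed to lie in (0, 2)."""
    violation = max(g(y), 0.0)
    if violation == 0.0:
        return list(y)  # already feasible for this constraint
    u = subgrad(y)
    norm_sq = sum(ui * ui for ui in u)  # nonzero for a violated convex constraint
    scale = beta * violation / norm_sq
    return [yi - scale * ui for yi, ui in zip(y, u)]


# Hypothetical local constraints of one node: x1 <= 1, x2 <= 1, x1 + x2 <= 1.5
constraints = [
    halfspace([1.0, 0.0], 1.0),
    halfspace([0.0, 1.0], 1.0),
    halfspace([1.0, 1.0], 1.5),
]

rng = random.Random(0)  # fixed seed so the run is reproducible
y = [3.0, 3.0]
for _ in range(200):
    g, subgrad = rng.choice(constraints)  # pick one constraint at random
    y = polyak_step(y, g, subgrad, beta=1.0)

print("final point:", y)
print("violations: ", [max(g(y), 0.0) for g, _ in constraints])
```

With β = 1 and a half‑space constraint, the step is the exact Euclidean projection onto that half‑space. Other values in (0, 2) under‑ or over‑relax the move along the same direction.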
