End-To-End Visual Speech Recognition With LSTMs

Traditional visual speech recognition systems consist of two stages, feature extraction and classification. Recently, several deep learning approaches have been presented which automatically extract features from the mouth images and aim to replace the feature extraction stage. However, research on joint learning of features and classification is very limited. In this work, we present an end-to-end visual speech recognition system based on Long Short-Term Memory (LSTM) networks. To the best of our knowledge, this is the first model which simultaneously learns to extract features directly from the pixels and perform classification and also achieves state-of-the-art performance in visual speech classification. The model consists of two streams which extract features directly from the mouth and difference images, respectively. The temporal dynamics in each stream are modelled by an LSTM and the fusion of the two streams takes place via a Bidirectional LSTM (BLSTM). An absolute improvement of 9.7% over the baseline is reported on the OuluVS2 database, and 1.5% on the CUAVE database when compared with other methods which use a similar visual front-end.

💡 Research Summary

The paper introduces a novel end‑to‑end visual speech recognition (lip‑reading) system that jointly learns feature extraction from raw mouth images and temporal classification using Long Short‑Term Memory (LSTM) networks. The architecture consists of two parallel streams. The first stream processes the raw mouth region of interest (ROI) to capture static appearance information, while the second stream processes frame‑difference (diff) images that emphasize local temporal dynamics. Both streams share an identical deep bottleneck encoder: three sigmoid hidden layers of sizes 2000, 1000 and 500 followed by a linear bottleneck layer. These encoding layers are pretrained in a greedy layer‑wise fashion using Restricted Boltzmann Machines (RBMs) – a Gaussian‑Bernoulli RBM for the pixel input, two Bernoulli‑Bernoulli RBMs, and a Bernoulli‑Gaussian RBM for the bottleneck. First‑order (Δ) and second‑order (ΔΔ) temporal derivatives are computed from the bottleneck activations and appended, encouraging the encoder to produce representations that are amenable to temporal modeling.
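The two preprocessing ideas in this paragraph, frame-difference images and appended Δ/ΔΔ features, can be illustrated with a short NumPy sketch. This is not the authors' code: the function names, the zero frame used to keep the sequence length, the use of `np.gradient` as the derivative estimator, and the 50-dimensional bottleneck size are all assumptions made for illustration.

```python
import numpy as np

def diff_images(frames: np.ndarray) -> np.ndarray:
    """Frame-to-frame differences for a (T, H, W) sequence of mouth ROIs."""
    d = np.diff(frames, axis=0)  # frame[t] - frame[t-1], shape (T-1, H, W)
    # Assumption: prepend a zero frame so the output keeps length T.
    return np.concatenate([np.zeros_like(frames[:1]), d], axis=0)

def append_deltas(feats: np.ndarray) -> np.ndarray:
    """Append first- and second-order temporal derivatives to (T, D) features."""
    delta = np.gradient(feats, axis=0)    # central differences, one-sided at the ends
    delta2 = np.gradient(delta, axis=0)   # derivative of the derivative
    return np.concatenate([feats, delta, delta2], axis=1)

rng = np.random.default_rng(0)
frames = rng.random((12, 26, 44))     # 12 frames at the OuluVS2 ROI size
bottleneck = rng.random((12, 50))     # hypothetical 50-dim bottleneck activations

print(diff_images(frames).shape)        # (12, 26, 44)
print(append_deltas(bottleneck).shape)  # (12, 150)
```

With this layout each stream's LSTM would receive three times the bottleneck dimension per frame: the activations, their Δ, and their ΔΔ.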

On top of each encoder, a unidirectional LSTM (256 units) models the sequential evolution of the features within its stream. The outputs of the two LSTMs are concatenated and fed into a bidirectional LSTM (BLSTM, 256 units) that fuses the static and dynamic cues and produces a frame‑wise posterior distribution over the target classes via a softmax layer. The whole network – from raw pixels to the softmax – is trained end‑to‑end using the AdaDelta optimizer with mini‑batches of 20 utterances, early stopping (patience = 5 epochs), and gradient clipping for the recurrent layers. The label of the final frame of each utterance is used as the utterance‑level class label.
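Two details of this training setup are easy to make concrete: reading the utterance label off the final frame's posterior, and early stopping with a patience of 5 epochs. The sketch below is illustrative only. The helper names are hypothetical, and monitoring a validation loss (rather than, say, validation accuracy) is an assumption, since the summary does not say which quantity is tracked.

```python
import numpy as np

def softmax(logits: np.ndarray) -> np.ndarray:
    """Numerically stable softmax over the last axis."""
    z = logits - logits.max(axis=-1, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=-1, keepdims=True)

def utterance_label(frame_logits: np.ndarray) -> int:
    """Given (T, C) frame-wise outputs, return the class of the final frame."""
    posteriors = softmax(frame_logits)
    return int(posteriors[-1].argmax())

def early_stop_epoch(val_losses, patience=5):
    """Return the epoch index at which training would stop, or None."""
    best = float("inf")
    since_best = 0
    for epoch, loss in enumerate(val_losses):
        if loss < best:
            best, since_best = loss, 0   # improvement: reset the counter
        else:
            since_best += 1
            if since_best >= patience:   # `patience` epochs without improvement
                return epoch
    return None

# Toy example: 4 frames, 3 classes; the last frame favours class 2.
logits = np.array([[2.0, 0.1, 0.1],
                   [1.5, 0.2, 0.3],
                   [0.4, 0.3, 1.0],
                   [0.1, 0.2, 3.0]])
print(utterance_label(logits))  # 2
print(early_stop_epoch([1.0, 0.8, 0.9, 0.9, 0.85, 0.95, 0.9, 0.7]))  # 6
```

In the second call the best loss (0.8) occurs at epoch 1, and epochs 2 to 6 bring no improvement, so training would stop at epoch 6 and never reach the later 0.7.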

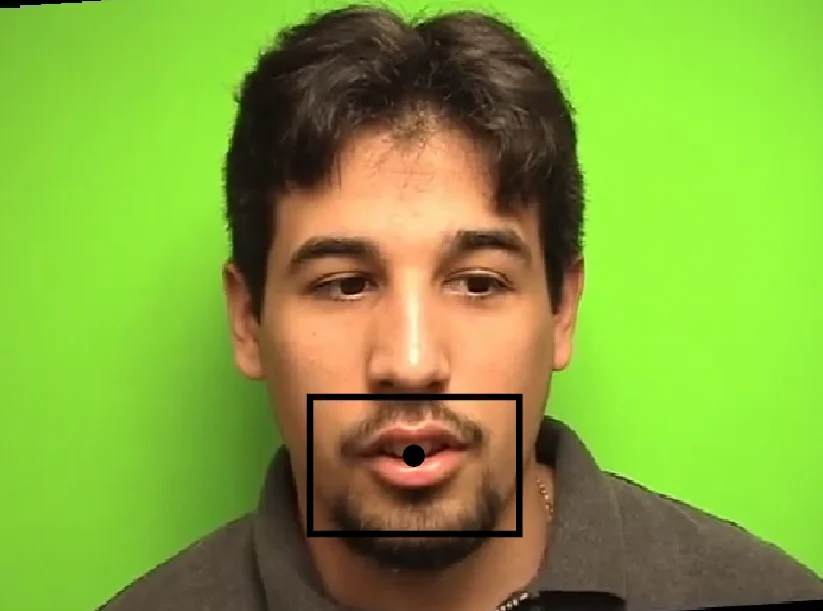

Experiments were conducted on two publicly available visual speech datasets. OuluVS2 contains 52 speakers uttering ten short phrases (e.g., “Hello”, “Thank you”) three times each; mouth ROIs are resized to 26 × 44 pixels. CUAVE consists of 36 speakers pronouncing digits 0‑9 five times each; ROIs are resized to 30 × 50 pixels. The authors follow the standard split: for OuluVS2, 40 subjects (1200 utterances) for training/validation and 12 subjects (360 utterances) for testing; for CUAVE, 18 subjects for training/validation and 18 for testing.
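The splits described here are speaker-independent: no subject appears in both partitions. A minimal sketch of that bookkeeping follows, assuming placeholder speaker IDs and an arbitrary choice of test speakers; it does not reproduce the official OuluVS2 test list.

```python
def speaker_split(speakers, test_speakers):
    """Partition speakers so that train/validation and test are disjoint."""
    test_set = set(test_speakers)
    train = [s for s in speakers if s not in test_set]
    test = [s for s in speakers if s in test_set]
    return train, test

def utterance_count(n_speakers, n_classes, n_repetitions):
    """Number of utterances when every speaker says every class n times."""
    return n_speakers * n_classes * n_repetitions

oulu_speakers = list(range(1, 53))   # 52 speakers; the IDs are placeholders
oulu_test = oulu_speakers[-12:]      # placeholder selection, NOT the official list
train, test = speaker_split(oulu_speakers, oulu_test)

print(len(train), len(test))                       # 40 12
print(utterance_count(len(test), 10, 3))           # 360
print(utterance_count(len(oulu_speakers), 10, 3))  # 1560
```

Splitting by speaker rather than by utterance means the test accuracy measures generalisation to unseen faces, not just to unseen repetitions.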

Results show that each stream alone already outperforms the traditional DCT + HMM baseline (74.8 % on OuluVS2). The raw‑image stream reaches 78.0 % and the diff‑image stream 75.8 % accuracy. When both streams are combined, the system achieves 84.5 % on OuluVS2, an absolute improvement of 9.7 percentage points over the baseline. On CUAVE, the raw‑image stream yields 71.4 % and the diff‑image stream 65.9 %; the fused two‑stream model attains 78.6 % accuracy, surpassing prior deep‑learning approaches such as Deep Autoencoder + SVM (68.7 %) and Deep Boltzmann Machines + SVM (69.0 %). Confusion matrix analysis reveals that most errors occur between semantically or visually similar classes (e.g., “Hello” vs. “Thank you”, digits 0 vs. 2, 6 vs. 9).
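The kind of confusion-matrix analysis mentioned above can be sketched in a few lines. The matrix below is toy data invented for illustration and the helper names are hypothetical; only the two OuluVS2 accuracies in the last line come from the text.

```python
import numpy as np

def accuracy(conf: np.ndarray) -> float:
    """Fraction of correct predictions; rows are true classes, columns predicted."""
    return float(np.trace(conf) / conf.sum())

def top_confusions(conf: np.ndarray, labels, k=3):
    """Return the k largest off-diagonal cells as (true, predicted, count)."""
    off = conf.astype(float).copy()
    np.fill_diagonal(off, 0)                      # ignore correct predictions
    order = np.argsort(off, axis=None)[::-1][:k]  # largest cells first
    pairs = []
    for idx in order:
        i, j = np.unravel_index(idx, off.shape)
        pairs.append((labels[i], labels[j], int(conf[i, j])))
    return pairs

# Toy 3-class confusion matrix (invented numbers, not results from the paper).
conf = np.array([[8, 2, 0],
                 [1, 9, 0],
                 [0, 3, 7]])
labels = ["a", "b", "c"]

print(accuracy(conf))                      # 0.8
print(top_confusions(conf, labels, k=2))   # [('c', 'b', 3), ('a', 'b', 2)]

# Absolute improvement in percentage points, using the OuluVS2 figures above.
print(round(84.5 - 74.8, 1))               # 9.7
```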

The authors also experimented with convolutional encoders and data augmentation, but these yielded inferior performance, likely due to the limited size of the training sets. This observation underscores the effectiveness of RBM‑based pretraining in small‑data regimes.

In conclusion, the paper demonstrates that a fully integrated LSTM‑based architecture can learn discriminative visual speech representations directly from pixels without handcrafted feature extraction, achieving state‑of‑the‑art performance on two benchmark datasets. The proposed framework is modular and can be readily extended to incorporate additional modalities (e.g., audio) for audiovisual speech recognition in future work.
