Continuous Authentication for Voice Assistants

Voice has become an increasingly popular User Interaction (UI) channel, mainly contributing to the ongoing trend of wearables, smart vehicles, and home automation systems. Voice assistants such as Siri, Google Now and Cortana, have become our everyday fixtures, especially in scenarios where touch interfaces are inconvenient or even dangerous to use, such as driving or exercising. Nevertheless, the open nature of the voice channel makes voice assistants difficult to secure and exposed to various attacks as demonstrated by security researchers. In this paper, we present VAuth, the first system that provides continuous and usable authentication for voice assistants. We design VAuth to fit in various widely-adopted wearable devices, such as eyeglasses, earphones/buds and necklaces, where it collects the body-surface vibrations of the user and matches it with the speech signal received by the voice assistant’s microphone. VAuth guarantees that the voice assistant executes only the commands that originate from the voice of the owner. We have evaluated VAuth with 18 users and 30 voice commands and find it to achieve an almost perfect matching accuracy with less than 0.1% false positive rate, regardless of VAuth’s position on the body and the user’s language, accent or mobility. VAuth successfully thwarts different practical attacks, such as replayed attacks, mangled voice attacks, or impersonation attacks. It also has low energy and latency overheads and is compatible with most existing voice assistants.

💡 Research Summary

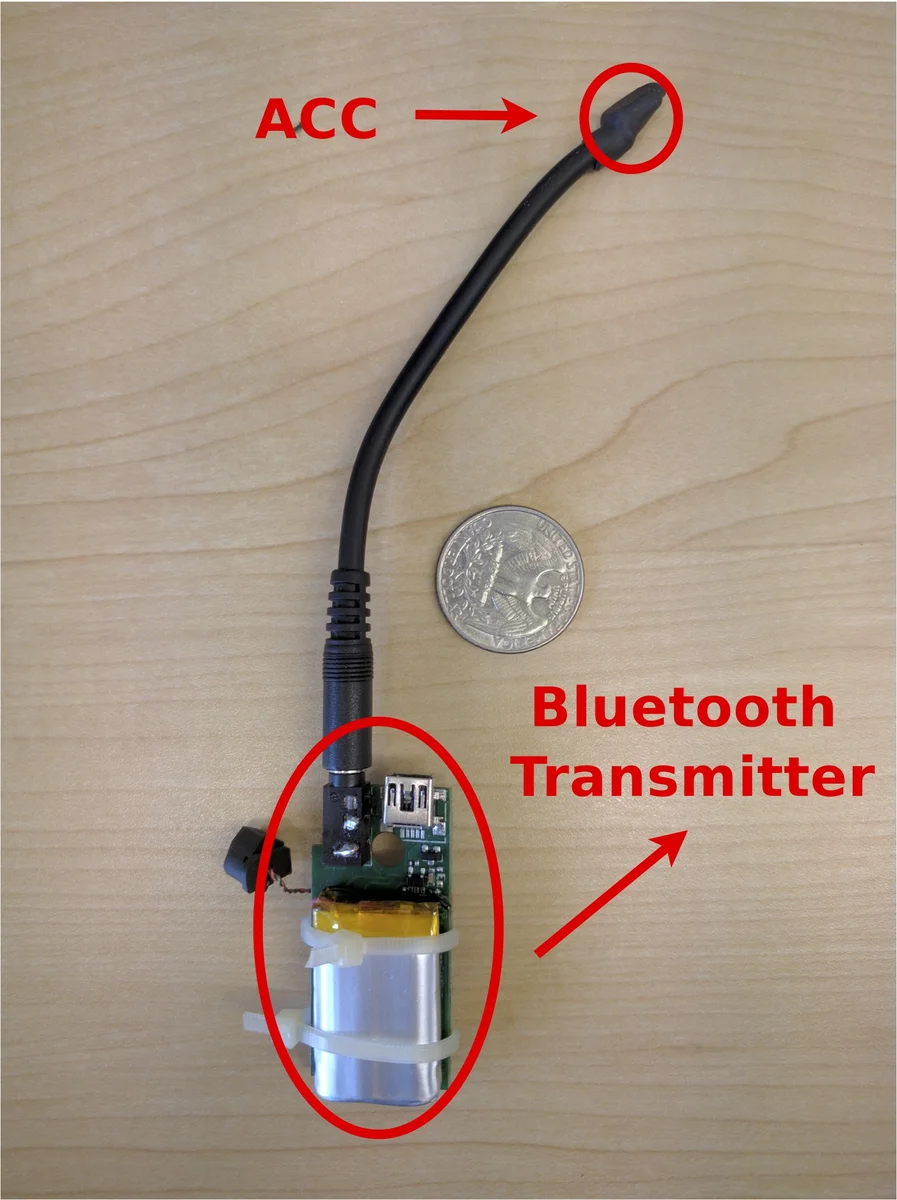

The paper introduces VAuth, a continuous authentication system for voice assistants that leverages body‑surface vibrations captured by a wearable accelerometer. Unlike traditional voice‑biometric methods that perform a one‑time check before a session, VAuth continuously verifies that every spoken command originates from the legitimate user by matching the accelerometer signal with the microphone audio in the time domain. The system consists of two components: (1) a wearable token (integrated into eyeglasses, earbuds, or a necklace) that records the user’s throat vibrations, and (2) an extension to the voice‑assistant platform that retrieves the accelerometer data via encrypted Bluetooth, aligns it with the microphone stream, and performs segment‑by‑segment cross‑correlation. Non‑speech segments are filtered out, and only segments whose correlation exceeds a predefined threshold are forwarded to the assistant for execution.

The threat model covers three attack categories: (A) stealthy attacks using inaudible or mangled audio, (B) biometric‑override attacks that replay or impersonate the victim’s voice, and (C) acoustic‑injection attacks that attempt to drive the accelerometer directly with high‑energy sound. VAuth defeats (A) and (B) because the attacker cannot generate the corresponding body vibration; (C) would require dangerously loud audio and is shown experimentally to be impractical. A theoretical analysis demonstrates that the probability of an attacker achieving a correlation above the threshold is asymptotically zero.

A prototype was built using a commodity MEMS accelerometer and a Bluetooth Classic transceiver, integrated with Google Now on Android. Eighteen participants each issued thirty distinct commands across three wearable form factors. Results show >97 % detection accuracy, <0.1 % false‑positive rate, and consistent performance across languages (English, Arabic, Chinese, Korean, Persian), accents, and mobility conditions (standing vs. jogging). The average authentication latency is about 300 ms, and power consumption is low enough for weekly recharging.

A usability survey of 952 respondents indicated strong willingness to adopt VAuth, especially when presented in familiar form factors and when security concerns are highlighted. VAuth requires no user‑specific training, is robust to voice changes due to illness or fatigue, and can be paired or unpaired like any possession‑based token.

Limitations include dependence on the wearable remaining in contact with the user’s skin, the need for network connectivity because matching is performed server‑side, and the inability to authenticate when the token is lost or removed. Future work suggests on‑device lightweight matching, multimodal sensor fusion (e.g., heart‑rate, skin conductance), and contact‑less vibration sensing to mitigate these constraints.

In summary, VAuth provides a practical, low‑overhead, and highly accurate continuous authentication layer for voice assistants, effectively closing the security gap left by existing one‑time voice‑biometric schemes while remaining compatible with current wearable ecosystems.

Comments & Academic Discussion

Loading comments...

Leave a Comment