Effective Degrees of Freedom: A Flawed Metaphor

To most applied statisticians, a fitting procedure's degrees of freedom is synonymous with its model complexity, or its capacity for overfitting to data. In particular, it is often used to parameterize the bias-variance tradeoff in model selection. W…

Authors: Lucas Janson, William Fithian, Trevor Hastie

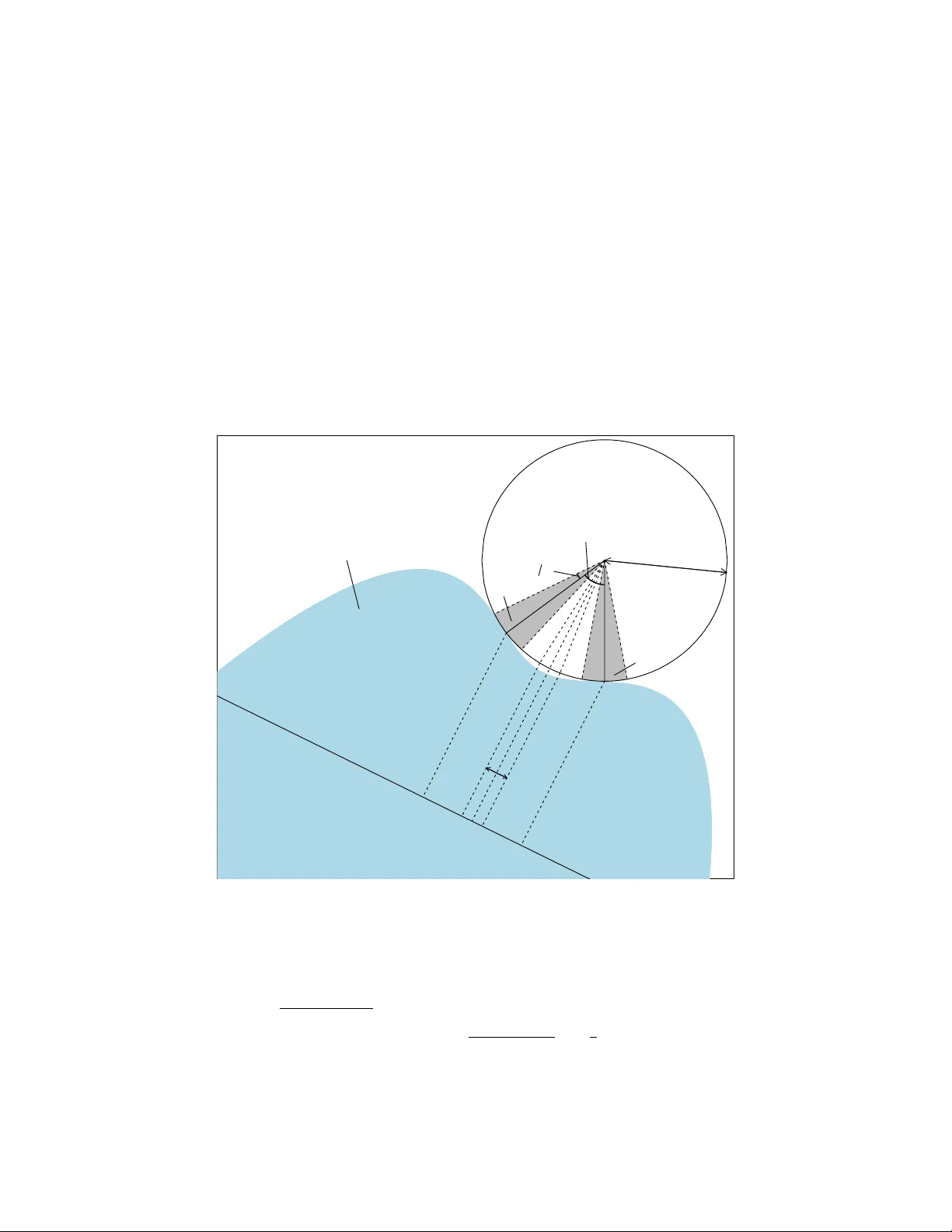

Effectiv e Degrees of F reedom: A Fla w ed Metaphor Lucas Janson, Will Fithian, T rev or Hastie Jan uary 6, 2017 Abstract T o most applied statisticians, a fitting procedure’s degrees of freedom is syn- on ymous with its mo del complexity , or its capacity for ov erfitting to data. In particular, it is often used to parameterize the bias-v ariance tradeoff in mo del selection. W e argue that, contrary to folk intuition, mo del complexity and de- grees of freedom are not synon ymous and ma y corresp ond v ery p oorly . W e ex- hibit and theoretically explore v arious examples of fitting procedures for which degrees of freedom is not monotonic in the mo del complexity parameter, and can exceed the total dimension of the response space. Even in v ery simple set- tings, the degrees of freedom can exceed the dimension of the ambien t space b y an arbitrarily large amoun t. W e sho w the degrees of freedom for an y non-conv ex pro jection method can b e un b ounded. 1 In tro duction Consider observing data y = µ + ε with fixed mean µ ∈ R n and mean-zero errors ε ∈ R n , and predicting y ∗ = µ + ε ∗ , where ε ∗ is an indep endent copy of ε . Assume for simplicity the en tries of ε ∗ and ε are indep enden t and iden tically distributed with v ariance σ 2 . Statisticians hav e prop osed an immense v ariet y of fitting pro cedures for pro duc- ing the prediction ˆ y ( y ), some of which are more complex than others. The effective degrees of freedom (DF) of Efron (1986), defined precisely as 1 σ 2 P n i =1 Co v y i , ˆ y i , has emerged as a p opular and c on v enient measuring stic k for comparing the com- plexit y of very differen t fitting pro cedures (we motiv ate this definition in Section 2). The name suggests that a metho d with p degrees of freedom is similarly complex as linear regression on p predictor v ariables. T o motiv ate our inquiry , consider estimating a no-intercept linear regression mo del with design matrix X = 1 0 0 1 and resp onse y ∼ N ( µ , I ), with µ ∈ R 2 . Supp ose further that, in order to obtain a more parsimonious mo del than the full biv ariate regression, w e instead estimate the b est fitting of the tw o univ ariate mo dels (in other words, b est subsets regression with mo del size k = 1). In effect we are 1 minimizing training error ov er the mo del µ ∈ M = R × { 0 } ∪ { 0 } × R . What are the effectiv e degrees of freedom of the fitted mo del in this seemingly inno cuous setting? A simple, in tuitive, and wrong argument predicts that the DF lies somewhere b et ween 1 and 2. W e exp ect it to b e greater than 1, since we use the data to select the b etter of tw o one-dimensional mo dels. How ev er, we ha ve only tw o free parameters at our disposal, with a rather severe constrain t on the v alues they are allo wed to take, so M is strictly less complex than the saturated mo del with t wo parameters that fits the data as hard as p ossible. Figure 1 sho ws the effective DF for this mo del, plotted as a function of µ ∈ R 2 , the exp ectation of the resp onse y . As expected, DF( µ ) ≥ 1, with near-equalit y when µ is close to one of the co ordinate axes but far from the other. Perhaps surprisingly , ho wev er, DF( µ ) can exceed 2, approac hing 7 in the corners of the plot. Indeed, the plot suggests (and we will later confirm) that DF( µ ) can grow arbitrarily large as µ mov es farther diagonally from the origin. 1 2 3 4 5 6 7 −10 −5 0 5 10 −10 −5 0 5 10 DF for Best of T wo Univariate Models µ 1 µ 2 Figure 1: Heatmap of the DF for 1-b est-subset fit with the mo del y ∼ N ( I 2 · β , I 2 · σ 2 ), as a function of the true mean v ector µ ∈ R 2 . Contrary to what one might naiv ely exp ect, the DF can significantly exceed 2, the DF for the full mo del. T o understand wh y our in tuition should lead us astra y here, we m ust first review ho w the DF is defined for a general fitting pro cedure, and what classical concept that definition is meant to generalize. 2 1.1 Degrees of F reedom in Classical Statistics The original meaning of degrees of freedom, the num b er of dimensions in which a random vector ma y v ary , plays a central role in classical statistics. In ordinary linear regression with full-rank n × p predictor matrix X , the fitted resp onse ˆ y = X ˆ β is the orthogonal pro jection of y on to the p -dimensional column space of X , and the residual r = y − ˆ y is the pro jection on to its orthogonal complement, whose dimension is n − p . W e say this linear mo del has p “model degrees of freedom” (or just “degrees of freedom”), with n − p “residual degrees of freedom.” If the error v ariance is σ 2 , then r is “pinned down” to ha ve zero pro jection in p directions, and is free to v ary , with v ariance σ 2 , in the remaining n − p orthogonal directions. In particular, if the mo del is correct ( E [ y ] = X β ), then the residual sum of squares (RSS) has distribution RSS = k r k 2 2 ∼ σ 2 · χ 2 n − p . (1) It follo ws that E k r k 2 2 = σ 2 ( n − p ), leading to the un biased v ariance estimate ˆ σ 2 = 1 n − p k r k 2 2 . t -tests and F -tests are based on comparing lengths of n -v ariate Gaussian random vectors after pro jecting on to appropriate linear subspaces. In linear regression, the mo del degrees of freedom (henceforth DF) serves to quan tify multiple related prop erties of the fitting pro cedure. The DF coincides with the num b er of non-redundant free parameters in the mo del, and th us constitutes a natural measure of mo del complexity or o verfitting. In addition, the total v ariance of the fitted response ˆ y is exactly σ 2 p , which depends only on the n umber of linearly indep enden t predictors and not on their size or correlation with each other. The DF also quantifies optimism of the residual sum of squares as an estimate of out-of-sample prediction error. In linear regression, one can easily show that the RSS understates mean squared prediction error by 2 σ 2 p on av erage. Mallo ws (1973) prop osed exploiting this identit y as a means of mo del selection, by comput- ing RSS + 2 σ 2 p , an unbiased estimate of prediction error, for sev eral mo dels, and selecting the mo del with the smallest estimated test error. Thus, the DF of each mo del con tributes a sort of p enalt y for ho w hard that mo del is fitted to the data. 1.2 “Effectiv e” or “Equiv alen t” Degrees of F reedom F or more general fitting pro cedures such as smo othing splines, generalized additive mo dels, or ridge regression, the num b er of free parameters is often either undefined or an inappropriate measure of mo del complexit y . Most such metho ds feature some tuning parameter mo dulating the fit’s complexit y , but it is not clear a priori how to compare e.g. a Lasso with Lagrange parameter λ = 3 to a lo cal regression with windo w width 0 . 5. When comparing differen t metho ds, or the same metho d with differen t tuning parameters, it can b e quite useful to ha ve some measure of complexit y with a consistent meaning across a diverse range of algorithms. T o this end, v arious authors hav e prop osed alternative, more general definitions of a 3 metho d’s “effective degrees of freedom,” or “equiv alen t degrees of freedom” (see Buja et al. (1989) and references therein). If the metho d is linear — that is, if ˆ y = H y for some “hat matrix” H that is not a function of y — then the trace of H serves as a natural generalization. F or linear regression H is a p -dimensional pro jection, so tr( H ) = p , coinciding with the original definition. Intuitiv ely , when H is not a pro jection, tr( H ) accumulates fractional degrees of freedom for directions of y that are shrunk, but not entirely eliminated, in computing ˆ y . F or nonlinear metho ds, further generalization is necessary . The most p opular definition, due to Efron (1986) and giv en in Equation (4), defines DF in terms of the optimism of RSS as an estimate of test error, and applies to any fitting metho d. Measuring or estimating optimism is a w orthy goal in and of itself. But to justify our in tuition that the DF offers a consisten t wa y to quantify mo del complexity , a bare requiremen t is that the DF b e monotone in model complexit y when considering a fixed metho d. The term “model complexity” is itself rather metaphorical when describing arbi- trary fitting algorithms, but has a concrete meaning for methods that minimize RSS sub j ect to the fit ˆ y b elonging to a closed constraint set M (a “mo del”). Commonly , some tuning parameter γ selects one of a nested set of mo dels: ˆ y ( γ ) = arg min z ∈M γ k y − z k 2 2 , with M γ 1 ⊆ M γ 2 ⊆ R n if γ 1 ≤ γ 2 (2) Examples include the Lasso (Tibshirani, 1996) and ridge regression (Ho erl, 1962) in their constraint formulation, as w ell as b est subsets regression (BSR). The mo del M k for BSR with k v ariables is a union of k -dimensional subspaces. Because larger mo dels “fit harder” to the data, one naturally exp ects DF to b e monotone with resp ect to mo del inclusion. F or example, one might imagine a plot of DF v ersus k for BSR to lo ok lik e Figure 2 when the full mo del has p = 10 predictors: monotone and sandwiched b etw een k and p . Ho wev er, as w e hav e already seen in Figure 1, monotonicity is far from guar- an teed even in v ery simple examples, and in particular the DF “ceiling” at p can b e brok en. Surprisingly , monotonicity can even break down for metho ds pro jecting on to conv ex sets, including ridge regression and the Lasso (although the DF cannot exceed the dimension of the conv ex set). The non-monotonicit y of DF for suc h con- v ex methods w as disco vered independently b y Kaufman and Rosset (2014), who give a thorough account. Among other results, they prov e that the degrees of freedom of pro jec tion onto an y conv ex set must alwa ys b e smaller than the dimension of that set. T o minimize o verlap with Kaufman and Rosset (2014), we fo cus our atten tion instead on non-conv ex fitting pro cedures suc h as BSR. In con trast to the results of Kaufman and Rosset (2014) for the conv ex case, w e sho w that pro jection on to any closed non-con vex set can ha ve arbitrarily large degrees of freedom, regardless of the dimensions of M and y . 4 ● ● ● ● ● ● ● ● ● ● ● 0 2 4 6 8 10 0 2 4 6 8 10 Number of Predictors Degrees of Freedom Figure 2: This plot follows the usual intuition for degrees of freedom for b est subset regression when the full mo del has 10 predictors. 2 Preliminaries W e consider fitting techniques with some tuning parameter (discrete or con tinuous) that can b e used to v ary the mo del from less to more constrained. In BSR, the tuning parameter k determines how many predictor v ariables are retained in the mo del, and we will denote BSR with tuning parameter k as BSR k . F or a general fitting tec hnique FIT, w e will use the notation FIT k for FIT with tuning parameter k and ˆ y ( F I T k ) for the fitted resp onse pro duced by FIT k . As men tioned in the in tro duction, a general form ula for DF can b e motiv ated b y the follo wing relationship b etw een exp ected prediction error (EPE) and RSS for ordinary least squares (OLS) (Mallows, 1973): EPE = E [RSS] + 2 σ 2 p. (3) Analogously , once a fitting technique (and tuning parameter) FIT k is chosen for fixed data y , DF is defined by the following iden tity: E " n X i =1 ( y ∗ i − ˆ y ( F I T k ) i ) 2 # = E " n X i =1 ( y i − ˆ y ( F I T k ) i ) 2 # + 2 σ 2 · DF( µ , σ 2 , FIT k ) , (4) where σ 2 is the v ariance of the ε i (whic h we assume exists), and y ∗ i is a new indep en- den t copy of y i with mean µ i . Th us DF is defined as a measure of the optimism of RSS. This definition in turn leads to a simple closed form expression for DF under v ery general conditions, as shown by the follo wing theorem. Theorem 1 (Efron (1986)) . F or i ∈ { 1 , . . . , n } , let y i = µ i + ε i , wher e the µ i ar e nonr andom and the ε i have me an zer o and finite varianc e. L et ˆ y i , i ∈ { 1 , . . . , n } 5 denote estimates of µ i fr om some fitting te chnique b ase d on a fixe d r e alization of the y i , and let y ∗ i , i ∈ { 1 , . . . , n } b e indep endent of and identic al ly distribute d as the y i . Then E " n X i =1 ( y ∗ i − ˆ y i ) 2 # − E " n X i =1 ( y i − ˆ y i ) 2 # = 2 n X i =1 Co v ( y i , ˆ y i ) (5) Pr o of. F or i ∈ { 1 , . . . , n } , E ( y ∗ i − ˆ y i ) 2 = E ( y ∗ i − µ i + µ i − ˆ y i ) 2 = E ( y ∗ i − µ i ) 2 + 2 E ( y ∗ i − µ i )( µ i − ˆ y i ) + E ( µ i − ˆ y i ) 2 = V ar( ε i ) + E ( µ i − ˆ y i ) 2 , (6) where the middle term in the second line equals zero b ecause y ∗ i is indep enden t of all the y j and thus also of ˆ y i , and b ecause E y ∗ i − µ i = 0. F urthermore, E ( y i − ˆ y i ) 2 = E ( y i − µ i + µ i − ˆ y i ) 2 = E ( y i − µ i ) 2 + 2 E ( y i − µ i )( µ i − ˆ y i ) + E ( µ i − ˆ y i ) 2 = V ar( ε i ) + E ( µ i − ˆ y i ) 2 − 2 Co v ( y i , ˆ y i ) . (7) Finally , subtracting Equation (7) from Equation (6) and summing o v er i ∈ { 1 , . . . , n } giv es the desired result. Remark 1. F or i.i.d. err ors with finite varianc e σ 2 , The or em 1 implies that, DF ( µ , σ 2 , FIT k ) = 1 σ 2 tr Co v ( y , ˆ y ( F I T k ) ) = 1 σ 2 n X i =1 Co v y i , ˆ y ( F I T k ) i . (8) Note also that when FIT k is a line ar fitting metho d with hat matrix H , Equation (8) r e duc es to DF ( µ , σ 2 , FIT k ) = tr( H ) . (9) 3 Additional Examples F or each of the following examples, a model-fitting tec hnique, mean v ector, and noise pro cess are c hosen. Then the DF is estimated by Mon te Carlo simulation. The details of this estimation pro cess, along with all R co de used, are provided in the app endix. 3.1 Best Subset Regression Example Our first motiv ating example is mean t to mimic a realistic application. This example is a linear mo del with n = 50 observ ations on p = 15 v ariables and a standard Gaussian noise process. The design matrix X is a n × p matrix of i.i.d. standard 6 Gaussian noise. The mean vector µ (or equiv alen tly , the co efficien t v ector β ) is generated by initially setting the co efficient vector to the v ector of ones, and then standardizing X β to hav e mean zero and standard deviation seven. W e generated a few ( X , β ) pairs b efore we disco vered one with substan tially non-monotonic DF, but then measured the DF for that ( X , β ) to high precision via Monte Carlo. ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● 0 5 10 15 0 5 10 15 20 25 30 35 Subset Size Degrees of Freedom ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● Best Subset Regression ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● 0 5 10 15 0 5 10 15 20 25 30 35 Subset Size Degrees of Freedom ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● Forward Stepwise Regression Figure 3: Mon te Carlo estimated degrees of freedom v ersus subset size in the b est subset regression (left) and forward stepwise regression (righ t) examples. Estimates are shown plus or minus tw o (Monte Carlo) standard errors. Dashed lines sho w the constan t ambien t dimension ( p ) and the increasing subset size, for reference ( k ). The left plot of Figure 3 sho ws the plot of Mon te Carlo estimated DF v ersus subset size. The right plot of Figure 3 sho ws the same plot for forw ard stepwise regression (FSR) applied to the same data. Although FSR isn’t quite a constrained least-squares fitting metho d, it is a p opular metho d whose complexit y is clearly increasing in k . Both plots show that the DF for a num b er of subset sizes is greater than 15, and w e kno w from standard OLS theory that the DF for subset size 15 is exactly 15 in b oth cases. 3.2 Un b ounded Degrees of F reedom Example Next we return to the motiv ating example from Section 1. The setup is again a linear mo del, but with n = p = 2. The design matrix X = A · I , where A is a scalar and I is the (2 × 2) identit y matrix. The coefficient vector is just (1 , 1) T making the mean v ector ( A, A ) T , and the noise is i.i.d. standard Gaussian. Considering BSR 1 , with high probability for large A (when y falls in the p ositiv e quadrant), it is clear that the b est univ ariate mo del just chooses the larger of the t wo resp onse v ariables for eac h realization. Sim ulating 10 5 times with A = 10 4 , we get a Mon te Carlo estimate of the DF of BSR 1 to b e ab out 5630, with a standard error of ab out 26. Pla ying 7 with the v alue of A rev eals that for large A , DF is approximately linearly increasing in A . This suggests that not only is the DF greater than n = 2, but can b e made un b ounded without changing n or the structure of X . Figure 4 shows what is going on for a few p oints (and a smaller v alue of A for visual clarity). The v ariance of the y i (the black p oin ts) is far smaller than that of the ˆ y i (the pro jections of the black dots onto the blue constrain t set). Therefore, since the correlation b et ween the y i and the ˆ y i is around 0.5, P n i =1 Co v ( y i , ˆ y i ) is also m uch larger than the v ariance of the y i , and the large DF can b e infered from Equation (8). y 1 y 2 ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● Figure 4: Sketc h of the unbounded DF example (not to scale with the A v alue used in the text). The true mean vector is in red, the constraint region in blue, and some data p oints are shown in black. The grey lines connect some of the data p oints to their pro jections on to the constraint set, in blue. Note that for i ∈ { 1 , 2 } , ˆ y i is either 0 or approximately A dep ending on small changes in y . In this to y example, we can actually pro ve that the DF div erges as A → ∞ using 8 Equation (4), 1 A DF(( A, A ) T , 1 , BSR 1 ) = 1 2 E " 1 A 2 X i =1 ( y ∗ i − ˆ y ( B S R 1 ) i ) 2 − ( y i − ˆ y ( B S R 1 ) i ) 2 # = 1 2 E " 1 A 2 X i =1 ( y ∗ i − ˆ y ( B S R 1 ) i ) 2 − ( y i − ˆ y ( B S R 1 ) i ) 2 I y ∈ Q 1 # + 1 2 E " 1 A 2 X i =1 ( y ∗ i − ˆ y ( B S R 1 ) i ) 2 − ( y i − ˆ y ( B S R 1 ) i ) 2 I y / ∈ Q 1 # = 1 2 E 1 A A 2 + 2 Aε ∗ 1 + ( ε ∗ 1 ) 2 + ε ∗ 2 − min( ε 1 , ε 2 ) 2 − A 2 − 2 A min( ε 1 , ε 2 ) − min( ε 1 , ε 2 ) 2 I y ∈ Q 1 i + o (1) A →∞ − → 1 2 E [2 ε ∗ 1 − 2 min( ε 1 , ε 2 )] = E [max( ε 1 , ε 2 )] , (10) where Q 1 is the first quadrant of R 2 , I S is the indicator function on the set S , and ε ∗ 1 , ε ∗ 2 are noise realizations indep enden t of one another and of y . The o (1) term comes from the the fact that 1 A P 2 i =1 ( y ∗ i − ˆ y ( B S R 1 ) i ) 2 − ( y i − ˆ y ( B S R 1 ) i ) 2 is O p ( A ) while P ( y / ∈ Q ) shrinks exp onentially fast in A (as it is a Gaussian tail probability). The conv ergence in the last line follows by the Dominated Con vergence Theorem applied to the first term in the preceding line. Note that E [max( ε 1 , ε 2 )] ≈ 0 . 5642, in go o d agreemen t with the sim ulated result. 4 Geometric Picture The explanation for the non-monotonicit y of DF can be b est understoo d b y thinking ab out regression problems as constrained optimizations. W e will see that the rev ersal in DF monotonicity is caused by the in terplay b et ween the shap e of the co efficien t constrain t set and that of the contours of the ob jectiv e function. BSR has a non-conv ex constrain t set for k < p , and Subsection 3.2 gives some in tuition for why the DF can b e muc h greater than the mo del dimension. This in tuition can b e generalized to any non-conv ex constraint set, as follo ws. Place the true mean at a p oint with non-unique pro jection on to the constrain t set, see Figure 5 for a generic sketc h. Suc h a p oint must exist b y the Motzkin-Bunt Theorem (Bunt, 1934; Motzkin, 1935; Kritikos, 1938). Note the constraint set for ˆ y = X ˆ β is just an affine transformation of the constrain t set for ˆ β , and thus a non-con vex ˆ β constraint is equiv alen t to a non-con vex ˆ y constraint. Then the fit dep ends sensitively on the noise pro cess, ev en when the noise is v ery small, since y is pro jected onto m ultiple 9 w ell-separated sections of the constraint set. Thus as the magnitude of the noise, σ , go es to zero, the v ariance of ˆ y remains roughly constant. Equation (8) then tells us that DF can be made arbitrarily large, as it will b e roughly proportional to σ − 1 . W e formalize this intuition in the following theorem. ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● Figure 5: Sk etch of geometric picture for a regression problem with n = 2 and non-con vex constraint sets. The blue area is the constrain t set, the red p oint the true mean v ector, and the black circles the con tours of the least squares ob jective function. Note how the spherical contour spans the gap of the divot where it meets the constraint set. Theorem 2. F or a fitting te chnique FIT that minimizes squar e d err or subje ct to a non-c onvex, close d c onstr aint ˆ µ ∈ M ⊂ R n , c onsider the mo del, y = µ + σ ε , ε i i.i.d. ∼ F for i ∈ { 1 , . . . , n } , wher e F is a me an-zer o distribution with finite varianc e supp orte d on an op en neigh- b orho o d of 0. Then ther e exists some µ ∗ such that DF ( µ ∗ , σ 2 , FIT k ) → ∞ as σ 2 → 0 . Pr o of. W e lea ve the pro of for the app endix. 5 Discussion The common in tuition that “effective” or “equiv alent” degrees of freedom serves a consistent and in terpretable measure of model complexit y merits some degree of skepticism. Our results and examples, com bined with those of Kaufman and Rosset (2014), demonstrate that for man y widely-used conv ex and non-conv ex fitting 10 tec hniques, the DF can b e non-monotone with respect to model nesting. In the non- con vex case, the DF can exceed the dimension of the mo del space by an arbitrarily large amount. This phenomenon is not restricted to pathological examples or edge cases, but can arise in run-of-the-mill datasets as well. Finally , w e presented a geometric in terpretation of this phenomenon in terms of irregularities in the mo del constrain ts and/or ob jective function con tours. In ligh t of the ab o ve, we find the term “(effective) degrees of freedom” to b e somewhat misleading, as it is suggestive of a quantit y corresponding to mo del “size”. It is also misleading to consider DF as a measure of ov erfitting, or ho w flexibly the mo del is conforming to the data, since a mo del alwa ys fits at least as strongly as a strict submo del to a given dataset. By definition, the DF of Efron (1983) do es measure optimism of in-sample error as an estimate of out-of-sample error, but w e should not b e to o quic k to assume that our intuition ab out linear mo dels carries o ver exactly . References Buja, A., Hastie, T., and Tibshirani, R. (1989). Linear smoothers and additive mo dels. The Annals of Statistics , 17(2):453–510. Bun t, L. N. H. (1934). Bijdr age tot de the orie der c onvexe puntverzamelingen . PhD thesis, Rijks-Universiteit, Groningen. Efron, B. (1983). Estimating the error rate of a prediction rule: impro vemen t on cross-v alidation. Journal of the Americ an Statistic al Asso ciation , 78(382):316– 331. Efron, B. (1986). Ho w Biased Is the Apparen t Error Rate of a Prediction Rule? Journal of the A meric an Statistic al Asso ciation , 81(394):461–470. Ho erl, A. E. (1962). Application of Ridge Analysis to Regression Problems. Chemic al Engine ering Pr o gr ess , 58:54–59. Kaufman, S. and Rosset, S. (2014). When Do es More Regularization Imply F ew er Degrees of F reedom? Sufficient Conditions and Coun ter Examples from Lasso and Ridge Regression. Biometrika (to app e ar) . Kritik os, M. N. (1938). Sur quelques propri´ et ´ es des ensembles con vexes. Bul letin Math ´ ematique de la So ci´ et ´ e R oumaine des Scienc es , 40:87–92. Mallo ws, C. L. (1973). Some Commen ts on CP. T e chnometrics , 15(4):661–675. Motzkin, T. (1935). Sur quelques propri ´ et´ es caract ´ eristiques des ensem bles con vexes. A tti A c c ad. Naz. Linc ei R end. Cl. Sci. Fis. Mat. Natur , 21:562–567. 11 Tibshirani, R. (1996). Regression shrink age and selection via the lasso. Journal of the R oyal Statistic al So ciety. Series B , 58(1):267–288. 6 App endix A Pr o of of The or em 2. The pro of relies heavily on the fact that for ev ery non-conv ex set M in Euclidean space there is at least one p oint whose pro jection on to M is not unique (e.g. the red dot in Figure 5). This fact was prov ed indep enden tly in Bun t (1934), Motzkin (1935), and Kritikos (1938). A schematic for this pro of in t wo dimensions is provided in Figure 6. Let µ b e a p oint with non-unique pro jection ● ● ● ● ● ● ● µ x 1 x 2 P B ( µ ) θ θ 5 D 1 D 2 d P B ( x 1 ) P B ( x 2 ) B M 2a Figure 6: Schematic for the proof of Theorem 2, in tw o dimensions. on to the non-conv ex set M and let x 1 and x 2 b e tw o distinct pro jections of µ onto M . Let d = || µ − x 1 || 2 = || µ − x 2 || 2 b e the Euclidean distance b etw een µ and M , and θ = cos − 1 ( ( x 1 − µ ) · ( x 2 − µ ) | x 1 − µ || x 2 − µ | ) b e the angle betw een x 1 and x 2 , taken as v ectors from µ . Define the set D 1 = { v ∈ R n | cos − 1 ( ( x 1 − µ ) · ( v − µ ) | x 1 − µ || v − µ | ) < θ 5 , || v − µ || 2 < d } , and D 2 analogously for x 2 . Let B b e a one-dimensional affine subspace that is b oth parallel 12 to the line connecting x 1 and x 2 , and contained in the hyperplane defined b y µ , x 1 , and x 2 . Denoting the pro jection op erator on to B b y P B , let z = || P B y − P B µ || 2 /σ , and ˜ y = || P B ˆ y − P B µ || 2 . Let a = d cos( π − θ/ 5 2 ). W e now hav e, tr(Co v ( y , ˆ y )) ≥ Co v ( P B y , P B ˆ y ) = E [ σ z ˜ y ] = E [ σ z ˜ y 1 y ∈D 1 ∪D 2 ] + E [ σ z ˜ y 1 y / ∈D 1 ∪D 2 ] ≥ σ E [ z ˜ y 1 y ∈D 1 ] + σ E [ z ˜ y 1 y ∈D 2 ] ≥ aσ E [ z 1 y ∈D 2 ] − E [ z 1 y ∈D 1 ] , = 2 aσ E [ z 1 y ∈D 2 ] . The first inequality follows from the translation and rotation inv ariance of the trace of a cov ariance matrix, and from the p ositivit y of the diagonal entries of the co v ari- ance matrix for the case of pro jection fitting metho ds. F or the second inequality , note that E [ σ z ˜ y 1 y / ∈D 1 ∪D 2 ] ≥ 0, again b ecause of the p ositivity of the DF of pro jec- tion metho ds (applied to the same mo del with a noise pro cess that has supp ort on D 1 and D 2 remo ved). The third inequalit y follows from considering the pro jections of D 1 and D 2 on to M and then on to B , and noting that the t wo (double) pro jections m ust b e separated b y at least a distance of 2 a . Defining F 1 = { v ∈ R n | cos − 1 ( ( x 1 − µ ) · ( v − µ ) | x 1 − µ || v − µ | ) < θ 5 } and F 2 analogously for x 2 , note that P ( y ∈ F 1 \ D 1 ) = P ( y ∈ F 2 \ D 2 ) → 0 as σ 2 → 0. Thus, tr(Co v ( y , ˆ y )) ≥ 2 aσ E [ z 1 y ∈F 2 ] + o ( σ ) . Neither z nor the even t y ∈ F 2 dep end on σ , so define the constan t b = 2 a E [ z 1 y ∈F 2 ] > 0 which is indep endent of σ . Th us we ha ve shown that, DF( µ ∗ , σ 2 , FIT k ) = 1 σ 2 tr(Co v ( y , ˆ y )) ≥ b + o ( σ ) σ → ∞ as σ 2 → 0. 7 App endix B In Subsections 3.1 and 3.2, DF is estimated by computing an ubiased estimator of DF for each sim ulated noise realization. This unbiased estimator for DF can b e obtained from Equation (8) b y exploiting the linearity of the exp ectation and trace 13 op erators, DF( µ , σ 2 , FIT k ) = 1 σ 2 tr(Co v ( y , ˆ y ( F I T k ) )) = 1 σ 2 E ( y − µ ) T ( ˆ y ( F I T k ) − E [ ˆ y ( F I T k ) ]) = 1 σ 2 E ε T ˆ y ( F I T k ) , (11) where the last inequalit y follo ws b ecause E [ ε ] = 0 . Thus the true DF is estimated b y ε T ˆ y ( F I T k ) a veraged ov er man y simulations. Since this estimate of DF is an av erage of i.i.d. random v ariables, its standard deviation can b e estimated by the emipirical standard deviation of the ε T ˆ y ( F I T k ) divided by the square ro ot of the n umber of sim ulations. The following is the code for the BSR simulation in Subsection 3.1. Note that although the seed is preset, this is only done to generate a suitable design matrix, not to cherry-pic k the simulated noise pro cesses. The simulation results remain the same if the seed is reset after assigning the v alue to x . l i b r a r y ( l e a p s ) l i b r a r y ( g p l o t s ) n = 5 0 ; p = 1 5 ; B = 5 0 0 0 s e t . s e e d ( 1 1 1 3 ) x = m a t r i x ( r n o r m ( n * p ) , n , p ) m u = d r o p ( s c a l e ( ( x % * % r e p ( 1 , 1 5 ) ) ) ) s n r = 7 m u = m u * s n r d f m a t = m a t r i x ( 0 , B , p ) f o r ( j i n 1 : B ) { y = r n o r m ( n ) + m u # t e m p = r e g s u b s e t s ( x , y , n b e s t = 1 , n v m a x = p ) t e m p = r e g s u b s e t s ( x , y , n b e s t = 1 , n v m a x = p , i n t e r c e p t = F A L S E ) f o r ( i i n 1 : p ) { j c o e f = c o e f ( t e m p , i d = i ) # x n a m e s = n a m e s ( j c o e f ) [ - 1 ] x n a m e s = n a m e s ( j c o e f ) w h i c h = m a t c h ( x n a m e s , l e t t e r s [ 1 : p ] ) # y h a t = c b i n d ( 1 , x [ , w h i c h ] ) % * % j c o e f i f ( i = = 1 ) { y h a t = m a t r i x ( x [ , w h i c h ] , n , 1 ) % * % j c o e f } e l s e { y h a t = x [ , w h i c h ] % * % j c o e f } d f m a t [ j , i ] = s u m ( ( y - m u ) * y h a t ) } } # d f = a p p l y ( d f m a t , 2 , m e a n ) - 1 d f = a p p l y ( d f m a t , 2 , m e a n ) e r r o r = s q r t ( a p p l y ( d f m a t , 2 , v a r ) / B ) s a v e ( d f , e r r o r , p , f i l e = " B S R . r d a t a " ) p l o t C I ( 1 : p , d f , 2 * e r r o r , y l i m = c ( 0 , 3 5 ) , x l i m = c ( 0 , p ) , g a p = 0 . 4 , x l a b = " S u b s e t S i z e " , y l a b = " D e g r e e s o f F r e e d o m " , c e x . l a b = 1 . 5 , t y p e = " o " ) a b l i n e ( h = 1 5 , l t y = 2 ) l i n e s ( c ( 0 , 1 5 ) , c ( 0 , 1 5 ) , l t y = 2 ) t i t l e ( " B e s t S u b s e t R e g r e s s i o n " , c e x . m a i n = 2 ) 14 The co de for the FSR sim ulation in Subsection 3.1 is almost identical. l i b r a r y ( l e a p s ) l i b r a r y ( g p l o t s ) n = 5 0 ; p = 1 5 ; B = 5 0 0 0 s e t . s e e d ( 1 1 1 3 ) x = m a t r i x ( r n o r m ( n * p ) , n , p ) m u = d r o p ( s c a l e ( ( x % * % r e p ( 1 , 1 5 ) ) ) ) s n r = 7 m u = m u * s n r d f m a t = m a t r i x ( 0 , B , p ) f o r ( j i n 1 : B ) { y = r n o r m ( n ) + m u # t e m p = r e g s u b s e t s ( x , y , n b e s t = 1 , n v m a x = p , m e t h o d = " f o r w a r d " ) t e m p = r e g s u b s e t s ( x , y , n b e s t = 1 , n v m a x = p , m e t h o d = " f o r w a r d " , i n t e r c e p t = F A L S E ) f o r ( i i n 1 : p ) { j c o e f = c o e f ( t e m p , i d = i ) # x n a m e s = n a m e s ( j c o e f ) [ - 1 ] x n a m e s = n a m e s ( j c o e f ) w h i c h = m a t c h ( x n a m e s , l e t t e r s [ 1 : p ] ) # y h a t = c b i n d ( 1 , x [ , w h i c h ] ) % * % j c o e f i f ( i = = 1 ) { y h a t = m a t r i x ( x [ , w h i c h ] , n , 1 ) % * % j c o e f } e l s e { y h a t = x [ , w h i c h ] % * % j c o e f } d f m a t [ j , i ] = s u m ( ( y - m u ) * y h a t ) } } # d f = a p p l y ( d f m a t , 2 , m e a n ) - 1 d f = a p p l y ( d f m a t , 2 , m e a n ) e r r o r = s q r t ( a p p l y ( d f m a t , 2 , v a r ) / B ) s a v e ( d f , e r r o r , p , f i l e = " F S R . r d a t a " ) p l o t C I ( 1 : p , d f , 2 * e r r o r , y l i m = c ( 0 , 3 5 ) , x l i m = c ( 0 , p ) , g a p = 0 . 4 , x l a b = " S u b s e t S i z e " , y l a b = " D e g r e e s o f F r e e d o m " , c e x . l a b = 1 . 5 , t y p e = " o " ) a b l i n e ( h = 1 5 , l t y = 2 ) l i n e s ( c ( 0 , 1 5 ) , c ( 0 , 1 5 ) , l t y = 2 ) t i t l e ( " F o r w a r d S t e p w i s e R e g r e s s i o n " , c e x . m a i n = 2 ) Finally , the co de for Subsection 3.2 is differen t but short. W e can imagine taking A → ∞ . # A i s s o m e b i g n u m b e r A = 1 0 0 0 0 X = A * d a t a . f r a m e ( d i a g ( 2 ) ) B = 1 E 5 y = A + m a t r i x ( r n o r m ( 2 * B ) , n c o l = 2 ) # b e s t u n i v a r i a t e ( n o - i n t e r c e p t ) m o d e l j u s t p i c k s t h e l a r g e r y _ i y h a t . b s = t ( a p p l y ( y , M A R G I N = 1 , F U N = f u n c t i o n ( y y ) y y * ( y y > m e a n ( y y ) ) ) ) d f v e c = d r o p ( ( ( y - A ) * y h a t . b s ) % * % c ( 1 , 1 ) ) d f = m e a n ( d f v e c ) e r r o r = s q r t ( v a r ( d f v e c ) / B ) c ( d f , e r r o r ) 15

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment