Particle-kernel estimation of the filter density in state-space models

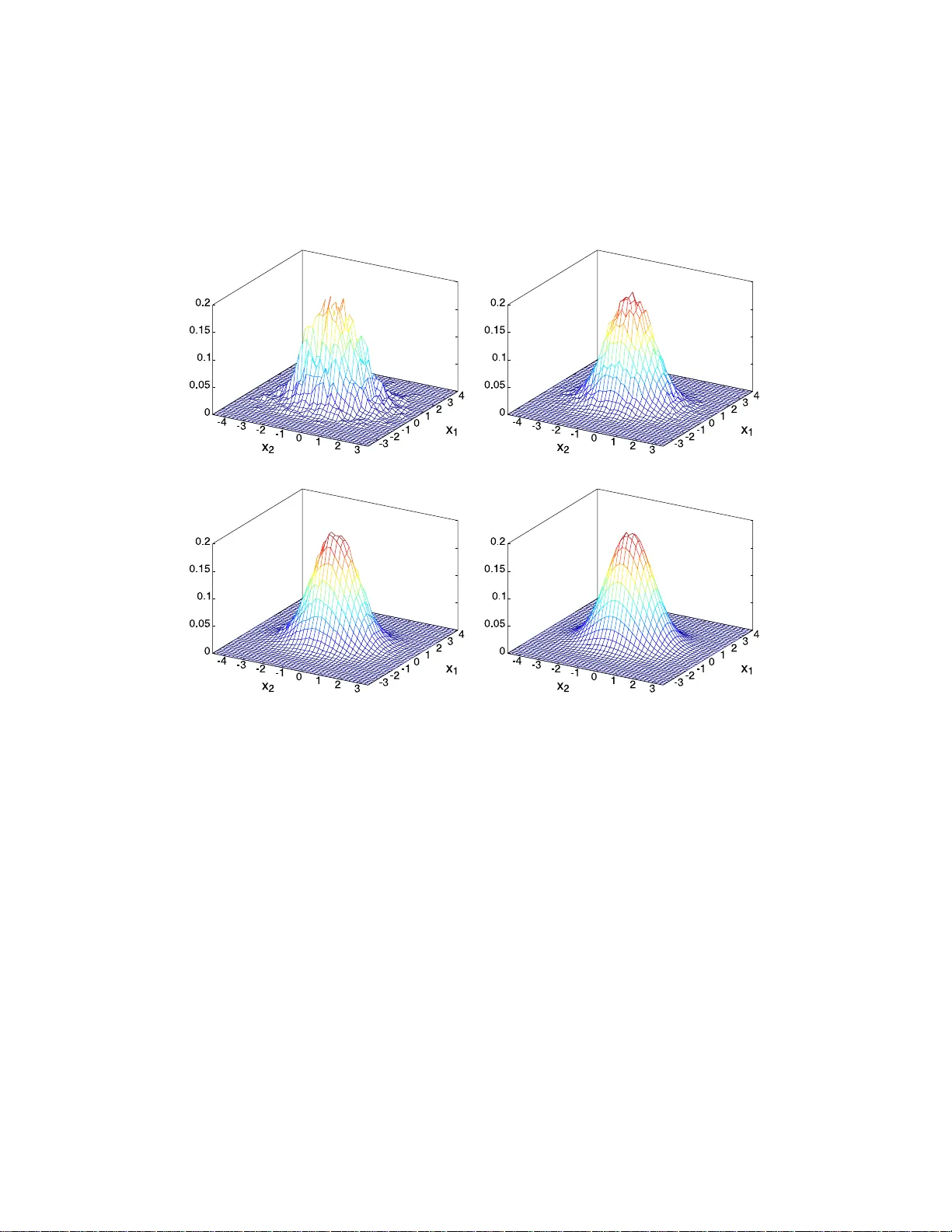

Sequential Monte Carlo (SMC) methods, also known as particle filters, are simulation-based recursive algorithms for the approximation of the a posteriori probability measures generated by state-space dynamical models. At any given time $t$, a SMC met…
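To make the idea in the title concrete, the following is a minimal sketch of a particle filter followed by a kernel estimate of the filter density. It is not the authors' algorithm. The linear-Gaussian toy model, the bootstrap (multinomial resampling) filter, the Gaussian kernel, the bandwidth, and the names `bootstrap_filter` and `kernel_density` are all illustrative assumptions.

```python
import numpy as np


def bootstrap_filter(ys, n_particles=2000, a=0.9, sigma_x=1.0, sigma_y=0.5, seed=0):
    """Bootstrap particle filter for an assumed toy model:
        x_t = a * x_{t-1} + u_t,  u_t ~ N(0, sigma_x^2)
        y_t = x_t + v_t,          v_t ~ N(0, sigma_y^2)
    Returns equally weighted particles approximating the filter at the last time step.
    """
    rng = np.random.default_rng(seed)
    # Initial particles drawn from an assumed N(0, 1) prior.
    x = rng.normal(0.0, 1.0, size=n_particles)
    for y in ys:
        # Propagate each particle through the state equation.
        x = a * x + rng.normal(0.0, sigma_x, size=n_particles)
        # Weight by the Gaussian likelihood, computed in the log domain for stability.
        logw = -0.5 * ((y - x) / sigma_y) ** 2
        w = np.exp(logw - logw.max())
        w /= w.sum()
        # Multinomial resampling returns an equally weighted particle set.
        x = x[rng.choice(n_particles, size=n_particles, p=w)]
    return x


def kernel_density(particles, grid, bandwidth):
    """Gaussian-kernel estimate of the density at the points in `grid`."""
    z = (grid[:, None] - particles[None, :]) / bandwidth
    norm = len(particles) * bandwidth * np.sqrt(2.0 * np.pi)
    return np.exp(-0.5 * z ** 2).sum(axis=1) / norm


# Usage: filter three made-up observations, then evaluate the density estimate.
particles = bootstrap_filter(np.array([0.5, 1.0, 0.8]))
grid = np.linspace(-10.0, 10.0, 2001)
density = kernel_density(particles, grid, bandwidth=0.2)
```

The bandwidth of 0.2 is fixed by hand here; in practice it would typically be chosen as a function of the number of particles.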

Authors: Dan Crisan, Joaquin Miguez