Adaptive Temporal Compressive Sensing for Video

This paper introduces the concept of adaptive temporal compressive sensing (CS) for video. We propose a CS algorithm to adapt the compression ratio based on the scene's temporal complexity, computed from the compressed data, without compromising the …

Authors: Xin Yuan, Jianbo Yang, Patrick Llull

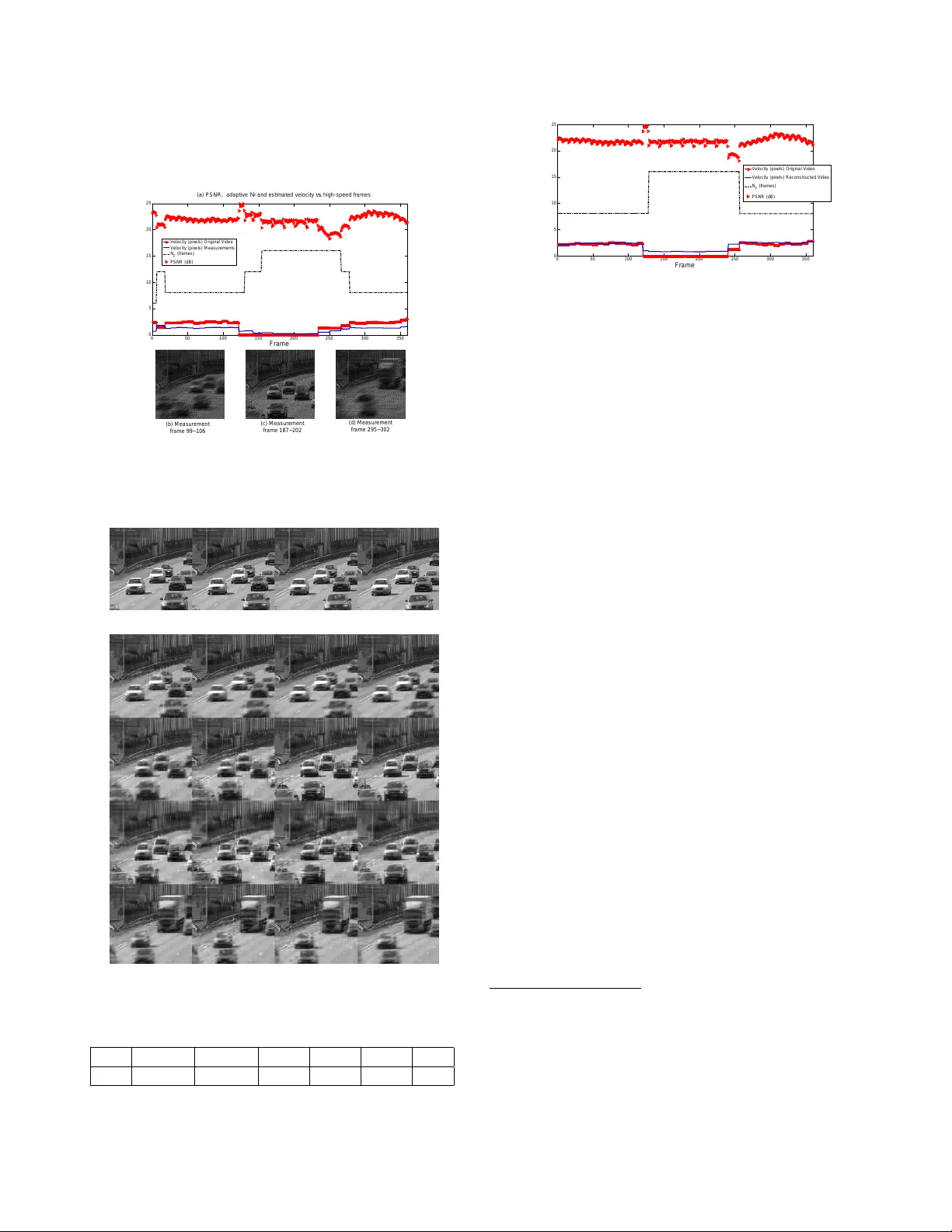

Xin Yuan, Jianbo Yang, Patrick Llull, Xuejun Liao, Guillermo Sapiro, David J. Brady and Lawrence Carin

Department of Electrical and Computer Engineering, Duke University, Durham, NC, 27708, USA

ABSTRACT

This paper introduces the concept of adaptive temporal compressive sensing (CS) for video. We propose a CS algorithm to adapt the compression ratio based on the scene's temporal complexity, computed from the compressed data, without compromising the quality of the reconstructed video. The temporal adaptivity is manifested by manipulating the integration time of the camera, opening the possibility to real-time implementation. The proposed algorithm is a generalized temporal CS approach that can be incorporated with a diverse set of existing hardware systems.

Index Terms: Video compressive sensing, temporal compressive sensing ratio design, temporal superresolution, adaptive temporal compressive sensing, real-time implementation.

1. INTRODUCTION

Video compressive sensing (CS), a new application of CS, has recently been investigated to capture high-speed videos at low frame rate by means of temporal compression [1, 2, 3] (see footnote 1). A commonality of these video CS systems is the use of per-pixel modulation during one integration time-period, to overcome the spatio-temporal resolution trade-off in video capture. As a consequence of active [1, 2] and passive pixel-level coding strategies [3, 9] (see Fig. 1), it is possible to uniquely modulate several temporal frames of a continuous video stream within the timescale of a single integration period of the video camera (using a conventional camera). This permits these novel imaging architectures to maintain high resolution in both the spatial and the temporal domains. Each low-speed exposure captured by such CS cameras is a linear combination of the underlying coded high-speed video frames.
After acquisition, high-speed videos are reconstructed by various CS inversion algorithms [10, 11, 12, 13].

These hardware systems were originally designed for fixed temporal compression ratios. The correlation in time between video frames can vary, depending on the detailed time dependence of the scene being imaged. For example, a scene monitored by a surveillance camera may have significant temporal variability during the day, but at night there may be extended time windows with no or limited changes. Therefore, adapting the temporal compression ratio based on the captured scene is important, not only to maintain a high quality reconstruction, but also to save power, memory, and related resources.

Footnote 1: Significant work in spatial compression has been demonstrated with a single-pixel camera [4, 5, 6, 7]. Unfortunately, this hardware cannot decrease the sampling frame rate, and therefore has not been applied in temporal CS. The work in [8] achieved compressive temporal superresolution for time-varying periodic scenes by exploiting their Fourier sparsity.

[Figure 1: original video frames 1 to $N_F$, each multiplied (Hadamard product) by a shifted mask to give modulated images; conventional capture compared with CACTI coded capture over one adaptive integration period.]

Fig. 1. Illustration of the coding mechanisms within the Coded Aperture Compressive Temporal Imaging (CACTI) system [3]. The first row shows $N_F$ high-speed temporal frames of the source datacube video; the second row depicts the mask with which each frame is multiplied (black is zero, white is one). In CACTI, the same code (mask) is shifted (from left to right) to constitute a series of frame-dependent codes. Finally, the CACTI measurement of these $N_F$ frames is the sum of the coded frames, as shown at right-bottom.
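The coding mechanism of Fig. 1 (multiply each high-speed frame by a shifted copy of one mask, then sum) can be sketched in a few lines. This is a minimal, dependency-free illustration and not the authors' implementation: the names `shift_mask` and `coded_measurement` are hypothetical, frames are plain nested lists, and the mask translation is modelled as a circular shift of one pixel per frame, which is an assumption made only to keep the example self-contained.

```python
def shift_mask(mask, shift):
    """Circularly shift every row of a binary mask `shift` pixels to the right.

    Assumption: a wrap-around shift stands in for the physical mask translation.
    """
    width = len(mask[0])
    k = shift % width
    return [row[width - k:] + row[:width - k] for row in mask]


def coded_measurement(frames, mask):
    """Collapse len(frames) high-speed frames into one coded exposure.

    Each frame is multiplied element-wise (Hadamard product) by the mask
    shifted by its frame index, and the coded frames are summed.
    """
    height, width = len(mask), len(mask[0])
    measurement = [[0.0] * width for _ in range(height)]
    for t, frame in enumerate(frames):
        code = shift_mask(mask, t)
        for r in range(height):
            for c in range(width):
                measurement[r][c] += code[r][c] * frame[r][c]
    return measurement
```

Here the number of frames passed in plays the role of $N_F$: passing more frames to `coded_measurement` corresponds to a longer integration period and a higher temporal compression ratio.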
We introduce the concept of adaptive temporal compressive sensing to manifest a CS video system that adapts to the complexity of the scene under test. Since each of the aforementioned cameras involves similar integration over a time window, in which $N_F$ high-speed video frames are modulated/coded, we propose to adapt this time window (the integration time $N_F$), to change the temporal compression ratio as a function of the complexity of the data. Specifically, we adaptively determine the number of frames $N_F$ collapsed to one measurement, using motion estimation in the compressed domain (see footnote 2).

The algorithm for adaptive temporal CS can be incorporated with a diverse range of existing video CS systems (not only the imaging architectures in [1, 2, 3] but also flutter shutter [23, 24] cameras), to implement real-time temporal adaptation. Furthermore, thanks to the availability of hardware for simple motion estimation [25], the proposed algorithm can be readily implemented in these cameras.

Footnote 2: Studies have shown that improved performance could be achieved when projection matrices are designed to adapt to the underlying signal of interest [14, 15, 16, 17, 18]. However, none of these methods was developed for video temporal CS. The adaptive CS ratio for video has been investigated in [19, 20, 21, 22]. Each frame in the video to be captured is partitioned into several blocks based on the estimated motion, and each block is set with a different CS ratio. Though a novel idea, it is difficult to employ it in real cameras since it is hard to sample at different framerates for different regions (blocks) of the scene with an off-the-shelf camera. In contrast, the method presented in this paper can be readily incorporated with various existing hardware systems.

2. PROPOSED METHOD

The underlying principle of the proposed method is to determine the temporal compression ratio $N_F$ based on the motion of the scene being sensed.
In the following, we propose to estimate the motion of the objects within the scene, to adapt the compression ratio for effective video capture.

[Figure 2: a $P \times P$ block in frame A and the search window in frame B.]

Fig. 2. Basic principle of block-matching. Search all the $P \times P$ blocks in the window of frame B to find the one best matched with the block in frame A, and use this to compute the block motion.

2.1. Block-Matching Motion Estimation

The block-matching method considered here has been employed in a variety of video codecs ranging from MPEG1 / H.261 to MPEG4 / H.263 [25, 26, 27]. Diverse algorithms [26] have investigated the block-matching concept shown in Fig. 2. The key steps of the block-matching method are reviewed as follows:

i) partition frame A (e.g., previous frame) into $P \times P$ (pixels) blocks;
ii) pre-define a window size $M \times M$ (pixels);
iii) search all the $P \times P$ blocks in the $M \times M$ windows in frame B (e.g., current frame) around the selected block in frame A;
iv) find the best-matching block in the window according to some metric (e.g., mean squared error), and use this to compute the block motion.

We demonstrate adaptive compression ratios based on this estimated motion from reconstructed video frames in Section 3.

Estimating motion in high-speed dynamic scenes via the block-matching method in the reconstructed video (after signal recovery) is computationally infeasible given current reconstruction times at even modest compression ratios. Hence, we aim to compute the adaptation of $N_F$ based directly on the raw (compressed) measurements without the intermediate step of reconstruction. The following section proposes a method to estimate motion solely from low-framerate, coded measurements from the camera.

2.2. Real-Time Block-Matching Motion Estimation

Estimating motion from the camera captured data requires the motion to be observable without reconstructing the video frames from the measurement. Fig. 3 presents the underlying principle of the real-time block-matching motion estimation approach. From this figure, it is apparent that the scene's motion is observable within the time-integrated coding structure. This property lets us employ the block-matching method directly on raw measurements (frames A and B in Fig. 3) to estimate the scene's motion. Adapting the compression ratio $N_F$ online is feasible due to the computational simplicity of this method.

[Figure 3: two raw measurements (frame A, frame B) with the matched window highlighted.]

Fig. 3. Real-time motion estimation by block-matching.

[Figure 4: original measurement, background blocks, and foreground blocks.]

Fig. 4. Segmentation of foreground and background by motion estimation from compressed measurements. Left is the original measurement; middle is the background blocks with foreground blocks shown in black; the right part presents the foreground blocks with background blocks shown in black. Note that the aim of this work is to estimate the motion, not segmentation. This primary segmentation helps us to localize the moving parts of the scene. A block size of 16 × 16 ($P = 16$) is used and the window size is defined as 40 × 40 ($M = 40$). The cross-diamond search algorithm [28] has been used to generate this figure and the subsequent results in Section 3.

We may roughly segment the video sequence into foreground and background regions by computing the number of pixels that each block traverses between frames. For each block, if this number is greater than a pre-specified threshold, the block is presumably moving and is hence classified as foreground. Other blocks are considered background (Fig. 4). Notably, we adapt $N_F$ solely based on the estimated motion velocity $V$ (pixels/frame) for the blocks determined by the block-matching algorithm to have moved the greatest number of pixels between frames.

Intuitively, the compression ratio required to faithfully reconstruct the scene's motion is inversely proportional to the detected velocity $V$, reaching a finite upper bound as $V \to 0$.
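The block-matching steps of Section 2.1 and the foreground/velocity rule described above can be sketched as follows. This is a hedged, dependency-free illustration: it uses an exhaustive search with a mean-squared-error metric rather than the cross-diamond search [28] used for the paper's figures, it assumes the $M \times M$ window is centred on the block (so the largest displacement is $(M - P)/2$ pixels), and all function names are hypothetical. The same routine applies whether the two inputs are reconstructed frames or raw coded measurements.

```python
import math


def block_mse(a, b, ra, ca, rb, cb, p):
    """Mean squared error between the p x p block of `a` at (ra, ca) and of `b` at (rb, cb)."""
    total = 0.0
    for i in range(p):
        for j in range(p):
            diff = a[ra + i][ca + j] - b[rb + i][cb + j]
            total += diff * diff
    return total / (p * p)


def block_motion(frame_a, frame_b, p, m):
    """Exhaustive block matching.

    For each p x p block of frame_a, search the m x m window of frame_b
    centred on it (assumption) and return {(row, col): (dy, dx)}.
    Ties are resolved in favour of zero displacement.
    """
    height, width = len(frame_a), len(frame_a[0])
    reach = (m - p) // 2  # largest displacement that stays inside the window
    motion = {}
    for r in range(0, height - p + 1, p):
        for c in range(0, width - p + 1, p):
            best = (block_mse(frame_a, frame_b, r, c, r, c, p), 0, 0)
            for dy in range(-reach, reach + 1):
                for dx in range(-reach, reach + 1):
                    rr, cc = r + dy, c + dx
                    if 0 <= rr <= height - p and 0 <= cc <= width - p:
                        err = block_mse(frame_a, frame_b, r, c, rr, cc, p)
                        if err < best[0]:
                            best = (err, dy, dx)
            motion[(r, c)] = (best[1], best[2])
    return motion


def max_velocity(motion):
    """Velocity V: the largest block displacement, in pixels per frame pair."""
    return max(math.hypot(dy, dx) for dy, dx in motion.values())


def foreground_blocks(motion, threshold):
    """Blocks whose displacement exceeds the threshold are labelled foreground."""
    return [pos for pos, (dy, dx) in motion.items() if math.hypot(dy, dx) > threshold]
```

With the paper's settings ($P = 16$, $M = 40$) the call would be `block_motion(frame_a, frame_b, 16, 40)`; the exhaustive search is chosen here for clarity, not speed.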
In practice, we simply apply a look-up table to adapt $N_F$ in discrete steps with few computations. See Table 1 for an example. Since good hardware exists for motion estimation [25], the proposed method can be implemented in real time. It is worth noting that the estimated motion (and hence the compression ratio determined by the look-up table) is used in the upcoming frames. We assume consistent motion across adjacent frames in the video. A sudden change in the motion therefore delays the $N_F$ adaptation by one integration time. Simulation results in Fig. 5 verify this point. We can of course place an upper bound on $N_F$.

[Figure 5: (a) PSNR (dB), adaptive $N_F$ (frames), and velocity (pixels) estimated from the original video and from the measurements, plotted against high-speed frame number; (b) measurement frame 99~106; (c) measurement frame 187~202; (d) measurement frame 295~302.]

Fig. 5. (a) Reconstruction PSNR (dB), adaptive $N_F$ (frames), and velocities (pixels/frame) estimated from the original video and measurements, all plotted against frame number. (b-d) Measurements with vehicles at different velocities.

[Figure 6: (a) ground truth, frames 1~4 shown as examples; (b) reconstructed video frames, 16 selected frames shown as examples (#1 to #4, #121 to #124, #201 to #204, #301 to #304).]

Fig. 6. Selected reconstructed frames (b) based on the adaptive $N_F$ presented in Fig. 5. Frames 1 to 4 in (a) are shown as examples of ground truth.

| $V$ (pixels/frame) | [0, 0.5) | [0.5, 1) | [1, 2) | [2, 3) | [3, 7) | ≥ 7 |
|---|---|---|---|---|---|---|
| $N_F$ (frames) | 16 | 12 | 8 | 6 | 4 | 2 |

Table 1. Relationships between the velocity $V$ (pixels/frame) of the foreground and the compression ratio $N_F$ (frames).
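The look-up step and the one-integration-period delay can be illustrated with a short sketch. The bin edges and $N_F$ values are taken from Table 1; everything else is an assumption for illustration: the names `lookup_nf` and `adaptive_schedule` are hypothetical, and the per-measurement velocity estimate is replaced by the maximum of a given list of per-frame velocities, standing in for block matching on the raw measurements.

```python
from bisect import bisect_right

# Table 1: upper edges of the first five velocity bins (pixels/frame)
# and the N_F assigned to each of the six bins.
VELOCITY_EDGES = [0.5, 1.0, 2.0, 3.0, 7.0]
FRAMES_PER_MEASUREMENT = [16, 12, 8, 6, 4, 2]


def lookup_nf(velocity):
    """Map an estimated velocity V to the compression ratio N_F of Table 1."""
    if velocity < 0:
        raise ValueError("velocity must be non-negative")
    return FRAMES_PER_MEASUREMENT[bisect_right(VELOCITY_EDGES, velocity)]


def adaptive_schedule(frame_velocities, initial_nf):
    """Return (start_frame, n_f) for every measurement.

    The velocity observed during one measurement only sets N_F for the
    NEXT measurement, which produces the one-period adaptation delay.
    """
    schedule = []
    start, nf = 0, initial_nf
    while start < len(frame_velocities):
        nf = min(nf, len(frame_velocities) - start)  # last measurement may be shorter
        schedule.append((start, nf))
        # Stand-in for block matching on the raw measurement (assumption).
        estimated_v = max(frame_velocities[start:start + nf])
        start += nf
        nf = lookup_nf(estimated_v)
    return schedule
```

For example, with 20 frames moving at 1.5 pixels/frame followed by 40 frozen frames and an initial $N_F$ of 6, the measurement that starts just after the freeze still integrates 8 frames, and only the following one switches to 16.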
[Figure 7: PSNR (dB), adaptive $N_F$ (frames), and velocity (pixels) estimated from the original video and from the reconstructed video, plotted against frame number.]

Fig. 7. Reconstruction PSNR (dB), adaptive $N_F$ (frames), and velocities (pixels/frame) estimated from the original and reconstructed video frames, all plotted against frame number.

3. EXPERIMENTAL RESULTS

From [3], we have found that (based on extensive simulations) shifting a fixed mask is as good as using the more sophisticated time evolving codes used in [1, 2]. For convenience (but not necessity), the subsequent results will use a shifted mask to modulate the high-speed video frames.

3.1. Example 1: Synthetic Traffic Video

We illustrate the adaptive compression ratio framework on a traffic video [29] that has 360 frames. We artificially vary the foreground velocity for this video to evaluate the proposed method's performance for motion estimation and $N_F$ adaptation. Frames 1-120 (Fig. 5(b)) and 241-336 (Fig. 5(d)) run at the originally-captured framerate; we freeze the scene between frames 121-240 (Fig. 5(c)). The generalized alternating projection (GAP) algorithm [12] is used for the reconstructions.

Table 1 provides the compression ratio $N_F$ corresponding to several scene velocities $V$. This look-up table (learned based on training data; see footnote 3) seeks to maintain a constant reconstruction peak signal-to-noise ratio (PSNR) of 22dB. Fig. 5 presents the real-time motion estimation results using simulated low-framerate coded exposures of the traffic video with an initial compression ratio $N_F = 6$. After a short fluctuation, the estimated velocity of the scene becomes constant; $N_F$ accordingly stabilizes at 8. When the vehicles freeze, the block-matching algorithm senses zero change in the pixel position and updates $N_F$ to 16. $N_F$ returns to 8 upon continuing video playback at normal speed.
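Since the look-up table is tuned against a target reconstruction PSNR, it may help to recall how that metric is computed. The sketch below uses the standard definition, $10 \log_{10}(\text{peak}^2 / \text{MSE})$; the function name `psnr` and the default peak value of 255 (8-bit images) are assumptions, as the paper does not state its pixel range.

```python
import math


def psnr(reference, reconstruction, peak=255.0):
    """Peak signal-to-noise ratio (dB) between two equally sized frames.

    `peak` is the maximum possible pixel value (255 assumed for 8-bit data).
    """
    squared_error = 0.0
    count = 0
    for ref_row, rec_row in zip(reference, reconstruction):
        for ref_px, rec_px in zip(ref_row, rec_row):
            squared_error += (ref_px - rec_px) ** 2
            count += 1
    mse = squared_error / count
    if mse == 0:
        return math.inf  # identical frames
    return 10.0 * math.log10(peak ** 2 / mse)
```

Averaging this value over the reconstructed frames of a sequence gives a figure comparable to the sequence-level PSNR values reported in this section.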
We can also observe the consistency of velocities estimated from the original video and from the compressed measurements in Fig. 5(a). Sudden changes in the video's framerate (and hence the motion velocity $V$) are reflected in short fluctuations of the PSNR (for one time-integration period) in Fig. 5(a). The average PSNR of the reconstructed frames in Fig. 5 is 21.8dB, very close to our expectation (22dB). Fig. 6 presents several reconstructed frames based on the adaptive $N_F$ in Fig. 5.

We additionally evaluate the block-matching algorithm's performance by applying it to reconstructed frames. Fig. 7 demonstrates that its performance is similar to that shown in Fig. 5. This confirms that it is unnecessary to reconstruct each measurement prior to updating $N_F$.

Footnote 3: We use other traffic videos playing at different velocities (different framerates) to learn this table. The main steps are as follows: i) generate videos with different motion velocities by changing the framerate; ii) estimate the motion velocities $V$ of the generated videos; iii) modulate the generated videos with shifting masks and constitute measurements with diverse $N_F$; iv) reconstruct the videos with GAP [12] from these compressed measurements and calculate the PSNR of the reconstructed video; v) build the relations between estimated velocities $V$ and $N_F$ maintaining a constant PSNR (around 22dB).

[Figure 8: (a) PSNR (dB), adaptive $N_F$ (frames), and velocity (pixels) from measurements vs. high-speed frames; (b) measurement frame 97~112, $N_F = 16$; (c) measurement frame 237~244, $N_F = 8$; (d) measurement frame 539~544, $N_F = 6$; (e) reconstructed frames 539~544 with adaptive $N_F$, average PSNR = 30.6466dB (per frame: 29.8204, 31.1062, 31.1244, 31.1413, 31.0626, 29.6246dB); (f) reconstructed frames 539~544 with nonadaptive (constant) $N_F = 10$, average PSNR = 27.5445dB (per frame: 27.8171, 26.7920, 27.0100, 27.9510, 27.7160, 27.9811dB).]

Fig. 8. Motion estimation and adaptive $N_F$ from the measurements. (a) Reconstruction PSNR (dB), adaptive $N_F$ (frames) (average adaptive $N_F$ = 10.12), and velocities (pixels/frame) estimated from the measurements, all plotted against frame number. (b-d) Measurements when there is nothing, one person, and a couple moving inside the scene, with adapted $N_F$ = 16, 8, 6, respectively. (e) Reconstructed frames 539-544 from the measurement in (d) with adaptive $N_F$. (f) Reconstructed frames 539-544 with nonadaptive (constant) $N_F = 10$.

3.2. Example 2: Realistic Surveillance Video

Fig. 8 implements adaptive $N_F$ on video data captured in front of a shop [30]. Table 1 is again used for this example. The first 189 frames of this video (Fig. 8(b)) are stationary; nothing is moving within the scene. As seen before, since $V = 0$, the compression ratio remains at $N_F = 16$. After the 189th frame, different people begin to walk in and out of the video area (Fig. 8(c-d)). The compression ratio $N_F$ is adapted between 6 and 16 according to the estimated velocity. When one person walks into the shop (Fig. 8(c)), the compression ratio drops ($N_F = 8$). This results in a better-posed reconstruction of the underlying video frames. When a couple walks in front of the shop (Fig. 8(d)), $N_F$ drops further to 6. The corresponding measurement and reconstructed frames are shown in Fig. 8(d,e).

This video takes a total of 67 adaptive measurements to capture and reconstruct 678 high-speed video frames, achieving a mean compression ratio $N_F \approx 10.12$.
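The mean compression ratio quoted above follows directly from the frame and measurement counts. As a quick check, writing $\bar{N}_F$ (a symbol introduced here only for illustration) for the average number of high-speed frames per measurement:

$$
\bar{N}_F = \frac{678\ \text{frames}}{67\ \text{measurements}} \approx 10.12
$$

A fixed ratio close to 10 is therefore a like-for-like nonadaptive baseline, since it spends roughly the same number of measurements on the sequence.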
To demonstrate the utility of adapting $N_F$ based on the sensed data, we compare adaptive reconstructions to those obtained when $N_F$ is fixed at or near its expected value. Fig. 8(f) shows reconstructed frames 539-544 when fixing $N_F = 10$. Comparing part (e) with part (f), we notice that adapting $N_F$ provides roughly 3dB higher reconstruction quality (average PSNR = 30.65dB) than fixing $N_F$ near its expected value (average PSNR = 27.54dB). These improvements are most noticeable whenever there is motion within the scene and demonstrate the potency of temporal compression ratio adaptation in realistic applications.

4. CONCLUSION

We have introduced the concept of adaptive temporal compressive sensing for video and demonstrated a real-time method to adjust the temporal compression ratio for video compressive sensing. By estimating the motion of objects within the scene, we determine how many measurements are necessary to ensure a reasonably well-conditioned estimation of high-speed motion from lower-framerate measurements. A block-matching algorithm estimates the scene's motion directly from the compressed measurements to obviate real-time reconstruction, thereby significantly reducing the expended real-time computational resources. Simulation results have verified the efficacy of the proposed adaptation algorithm. Future work will seek to embed this real-time framework into the hardware prototype.

5. REFERENCES

[1] Y. Hitomi, J. Gu, M. Gupta, T. Mitsunaga, and S. K. Nayar, “Video from a single coded exposure photograph using a learned over-complete dictionary,” IEEE International Conference on Computer Vision (ICCV), pp. 287–294, November 2011.
[2] D. Reddy, A. Veeraraghavan, and R. Chellappa, “P2C2: Programmable pixel compressive camera for high speed imaging,” IEEE International Conference on Computer Vision and Pattern Recognition (CVPR), pp. 329–336, June 2011.
[3] P. Llull, X. Liao, X. Yuan, J. Yang, D. Kittle, L. Carin, G. Sapiro, and D. J. Brady, “Coded aperture compressive temporal imaging,” Optics Express, vol. 21, no. 9, pp. 10526–10545.
[4] A. C. Sankaranarayanan, P. K. Turaga, R. G. Baraniuk, and R. Chellappa, “Compressive acquisition of dynamic scenes,” 11th European Conference on Computer Vision, Part I, pp. 129–142, September 2010.
[5] A. C. Sankaranarayanan, C. Studer, and R. G. Baraniuk, “CS-MUVI: Video compressive sensing for spatial-multiplexing cameras,” IEEE International Conference on Computational Photography, pp. 1–10, April 2012.
[6] M. B. Wakin, J. N. Laska, M. F. Duarte, D. Baron, S. Sarvotham, D. Takhar, K. F. Kelly, and R. G. Baraniuk, “Compressive imaging for video representation and coding,” Proceedings of the Picture Coding Symposium, pp. 1–6, April 2006.
[7] M. F. Duarte, M. A. Davenport, D. Takhar, J. N. Laska, T. Sun, K. F. Kelly, and R. G. Baraniuk, “Single-pixel imaging via compressive sampling,” IEEE Signal Processing Magazine, vol. 25, no. 2, pp. 83–91, March 2008.
[8] A. Veeraraghavan, D. Reddy, and R. Raskar, “Coded strobing photography: Compressive sensing of high speed periodic videos,” IEEE Transactions on Pattern Analysis and Machine Intelligence, vol. 33, no. 4, pp. 671–686, April 2011.
[9] P. Llull, X. Liao, X. Yuan, J. Yang, D. Kittle, L. Carin, G. Sapiro, and D. J. Brady, “Compressive sensing for video using a passive coding element,” Computational Optical Sensing and Imaging, 2013.
[10] J. A. Tropp and A. C. Gilbert, “Signal recovery from random measurements via orthogonal matching pursuit,” IEEE Transactions on Information Theory, vol. 53, no. 12, pp. 4655–4666, December 2007.
[11] J. M. Bioucas-Dias and M. A. T. Figueiredo, “A new TwIST: Two-step iterative shrinkage/thresholding algorithms for image restoration,” IEEE Transactions on Image Processing, vol. 16, no. 12, pp. 2992–3004, December 2007.
[12] X. Liao, H. Li, and L. Carin, “Generalized alternating projection for weighted-$\ell_{2,1}$ minimization with applications to model-based compressive sensing,” accepted to appear in SIAM Journal on Imaging Sciences, 2012.
[13] J. Yang, X. Yuan, X. Liao, P. Llull, G. Sapiro, D. J. Brady, and L. Carin, “Gaussian mixture model for video compressive sensing,” International Conference on Image Processing, 2013.
[14] M. Elad, “Optimized projections for compressed sensing,” IEEE Transactions on Signal Processing, vol. 55, no. 12, pp. 5695–5702, December 2007.
[15] S. Ji, Y. Xue, and L. Carin, “Bayesian compressive sensing,” IEEE Transactions on Signal Processing, vol. 56, no. 6, pp. 2346–2356, June 2008.
[16] W. Carson, M. Chen, M. Rodrigues, R. Calderbank, and L. Carin, “Communications inspired projection design with application to compressive sensing,” SIAM Journal on Imaging Sciences, vol. 5, no. 4, pp. 1185–1212, 2012.
[17] J. M. Duarte-Carvajalino, G. Yu, L. Carin, and G. Sapiro, “Task-driven adaptive statistical compressive sensing of gaussian mixture models,” IEEE Transactions on Signal Processing, vol. 61, no. 3, pp. 585–600, February 2013.
[18] L. Zelnik-Manor, K. Rosenblum, and Y. C. Eldar, “Sensing matrix optimization for block-sparse decoding,” IEEE Transactions on Signal Processing, vol. 59, no. 9, pp. 4300–4312, September 2011.
[19] Z. Liu, A. Y. Elezzabi, and H. V. Zhao, “Maximum frame rate video acquisition using adaptive compressed sensing,” IEEE Transactions on Circuits and Systems for Video Technology, vol. 21, no. 11, pp. 1704–1718, November 2011.
[20] J. Y. Park and M. B. Wakin, “A multiscale framework for compressive sensing of video,” Proceedings of the Picture Coding Symposium, pp. 1–4, May 2009.
[21] M. Azghani, A. Aghagolzadeh, and M. Aghagolzadeh, “Compressed video sensing using adaptive sampling rate,” International Symposium on Telecommunications, pp. 710–714, 2010.
[22] J. E. Fowler, S. Mun, and E. W. Tramel, “Block-based compressed sensing of images and video,” Foundations and Trends in Signal Processing, vol. 4, no. 4, pp. 297–416, 2012.
[23] R. Raskar, A. Agrawal, and J. Tumblin, “Coded exposure photography: motion deblurring using fluttered shutter,” ACM Transactions on Graphics, vol. 25, no. 3, pp. 795, 2006.
[24] Y. Tendero, J.-M. Morel, and B. Rougé, “The flutter shutter paradox,” to appear in SIAM Journal on Imaging Sciences, pp. 1–33, 2013.
[25] C.-H. Hsieh and T.-P. Lin, “VLSI architecture for block-matching motion estimation algorithm,” IEEE Transactions on Circuits and Systems for Video Technology, vol. 2, no. 2, pp. 169–175, June 1992.
[26] M. Ezhilarasan and P. Thambidurai, “Simplified block matching algorithm for fast motion estimation in video compression,” Journal of Computer Science, vol. 4, no. 4, pp. 282–289, 2008.
[27] D. J. Le Gall, “The MPEG video compression algorithm,” Signal Processing: Image Communication, vol. 4, no. 2, pp. 129–140, April 1992.
[28] C.-H. Cheung and L.-M. Po, “A novel cross-diamond search algorithm for fast block motion estimation,” IEEE Transactions on Circuits and Systems for Video Technology, vol. 12, no. 12, pp. 1168–1177, December 2002.
[29] http://projects.cwi.nl/dyntex/database pro.html
[30] http://i21www.ira.uka.de/image sequences/
