Structure and Interpretation of Computer Programs

Call graphs depict the static, caller-callee relation between "functions" in a program. With most source/target languages supporting functions as the primitive unit of composition, call graphs naturally form the fundamental control flow representatio…

Authors: Ganesh M. Narayan, K. Gopinath, V. Sridhar

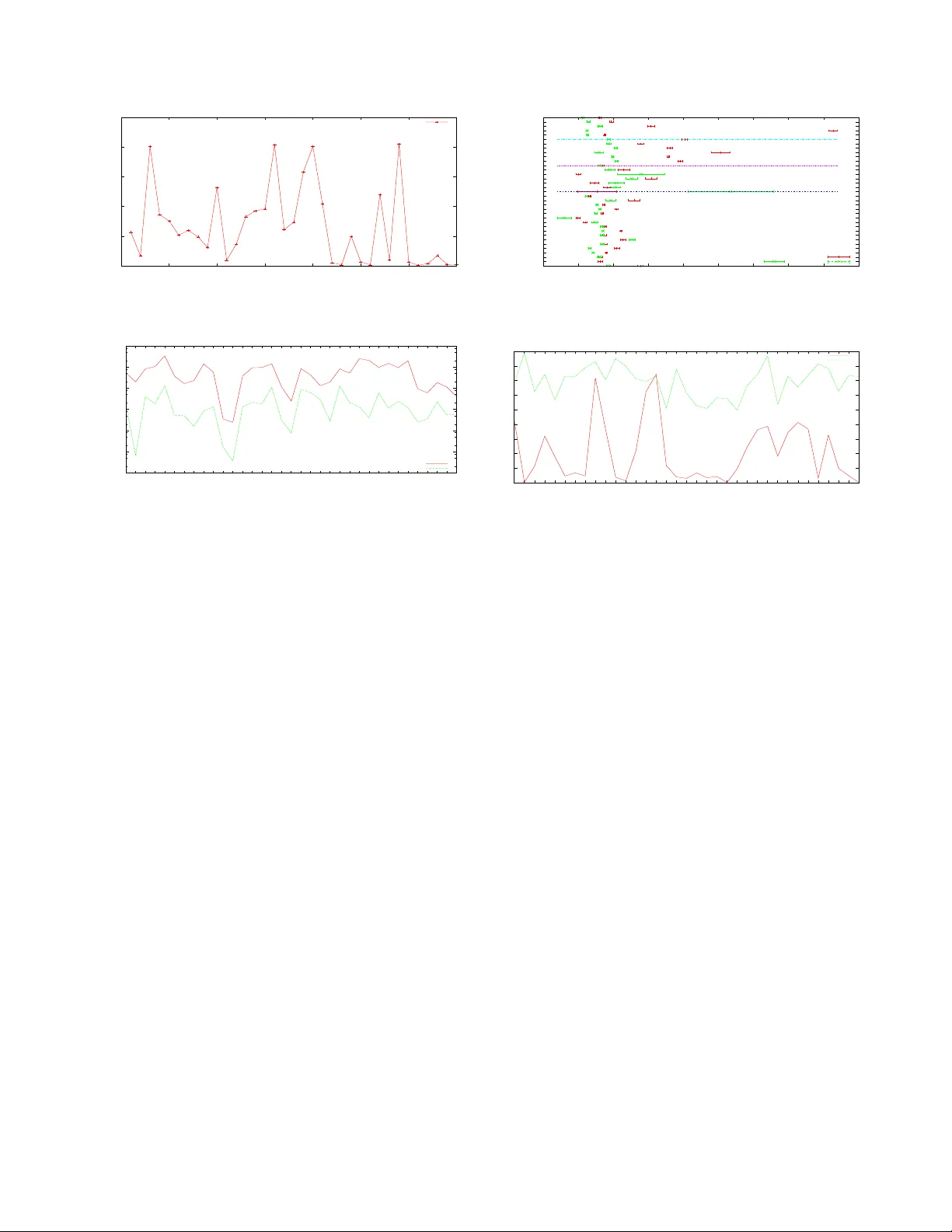

Structure and Interpretation of Computer Programs ∗

Ganesh Narayan, Gopinath K
Computer Science and Automation, Indian Institute of Science
{nganesh, gopi}@csa.iisc.ernet.in

Sridhar V
Applied Research Group, Satyam Computers
sridhar@satyam.com

Abstract

Call graphs depict the static, caller-callee relation between “functions” in a program. With most source/target languages supporting functions as the primitive unit of composition, call graphs naturally form the fundamental control flow representation available to understand/develop software. They are also the substrate on which various interprocedural analyses are performed and are an integral part of program comprehension/testing. Given their universality and usefulness, it is imperative to ask if call graphs exhibit any intrinsic graph theoretic features across versions, program domains and source languages. This work is an attempt to answer these questions: we present and investigate a set of meaningful graph measures that help us understand call graphs better; we establish how these measures correlate, if at all, across different languages and program domains; we also assess the overall, language independent software quality by suitably interpreting these measures.

1 Introduction

Complexity is one of the most pertinent characteristics of computer programs and, thanks to Moore’s law, computer programs are becoming ever larger and more complex; it is not atypical for a software product to contain hundreds of thousands, even millions, of lines of code where individual components interact in a myriad of ways. In order to tackle such complexity, a variety of code organizing motifs were proposed. Of these motifs, functions form the most fundamental unit of source code: software is organized as a set of functions of varying granularity and utility, with functions computing various results on their arguments.
A critical feature of this organizing principle is that functions themselves can call other functions. This naturally leads to the notion of a function call graph, where individual functions are nodes and edges represent caller-callee relations; indegree depicts the number of functions that could call the function and outdegree depicts the number of functions that this function can call. Since no further restrictions are employed, the caller-callee relation induces a generic graph structure, possibly with loops and cycles. In this work we study the topology of such (static) call graphs.

Our present understanding of call graphs is limited. We know that call graphs are directed and sparse; can have cycles and often do; are not strongly connected; evolve over time; and could exhibit preferential attachment of nodes and edges. Apart from this basic understanding, we do not know much about the topology of call graphs.

∗ In reverence to the Wizard Book.

2 Contributions

In this paper we answer questions pertaining to topological properties of call graphs by studying a representative set of open-source programs. In particular, we ask the following questions: What is the structure of call graphs? Are there any consistent properties? Are some properties inherent to certain programming languages or problem classes? In order to answer these questions, we investigate a set of meaningful metrics from the plethora of graph properties [9]. Our specific contributions are: 1) We motivate and provide insights as to why certain call graph properties are useful and how they could help us develop better and more robust software. 2) We compare graph structures induced by different language paradigms under an eventual but structurally immediate structure: call graphs. The authors are unaware of any study that systematically compares the call graphs of different languages; in particular, the “call graph” structure of functional languages.
3) Our corpus, being varied and large, is far more statistically representative compared to similar studies ([24], [4], [18]). 4) Apart from confirming previous results in a rigorous manner, we also compute new metrics to capture finer aspects of graph structure. 5) As a side effect, we provide a potential means to assess software quality, independent of the source language.

The rest of the paper is organized as follows. We begin by justifying the utility of our study and proceed to introduce relevant structural measures in Section 4. Section 5 discusses the corpus and methodology. We then present our measurements and interpretations (Section 6). We conclude with Sections 7 and 8.

3 Motivation

Call graphs define the set of permissible interactions and information flows and could influence software processes in nontrivial ways. In order to give the reader an intuitive understanding of how graph topology could influence software processes, we present the following four scenarios where it does.

Bug Propagation Dynamics: Consider how a bug in some function affects the rest of the software. Let foo call bar, and suppose bar could return an incorrect value because of a bug in bar. If foo incorporates this return value in its part of the computation, it is likely to compute a wrong answer as well; that is, bar has infected foo. Note that such an infection is contagious and, in principle, bar can infect any arbitrary function $f_n$ as long as $f_n$ is connected to bar. Thus connectedness as a graph property trivially translates to infectability. Indeed, with appropriate notions of infection propagation and immunization, one could understand bug expression as an epidemic process. It is well known that graph topology could influence the stationary distribution of this process.
In particular, the critical infection rate (the infection rate beyond which an infection is not containable) is highly network specific; in fact, certain networks are known to have zero critical thresholds [5]. It pays to know if call graphs are instances of such graphs.

Software Testing: Different functions contribute differently to software stability. Certain functions, when buggy, are likely to render the system unusable. Such functions, whose correctness is central to the statistical correctness of the software, are traditionally characterized by per-function attributes like indegree and size. Such simple measures, though useful, fail to capture the transitive dependencies that could render even a not-so-well connected function an Achilles heel. Having unambiguous metrics that measure a node’s importance helps make software testing more efficient. Centrality is such a measure: it gives a node’s importance in a graph. Once relevant centrality measures are assigned, one could expend relatively more time testing central functions. Or, equally, test central functions and their called contexts for prevalent error modes like interface nonconformity, context disparity and the like ([21], [7]). By considering node centralities, one could bias the testing effort to achieve similar confidence levels without a costlier uniform/random testing schedule. Though most developers intuitively know the importance of individual functions and devise elaborate test cases to stress these functions accordingly, we believe such an idiosyncratic methodology could be safely replaced by an informed and statistically tenable biasing based on centralities. Centrality is also readily helpful in software impact analysis.

Software Comprehension: Understanding call graph structure helps us to construct tools that assist developers in comprehending software better.
For instance, consider a tool that magically extracts higher-level structures from a program call graph by grouping related, lower-level functions. Such a tool, for example, when run on a kernel code base, would automatically decipher different logical subsystems, say, networking, filesystem, memory management or scheduling. Devising such a tool amounts to finding appropriate similarity metric(s) that partition the graph so that nodes within a partition are “more” similar compared to nodes outside. Understandably, different notions of similarity entail different groupings. Recent studies show how network structure controls such grouping [2] and how per-node graph metrics can be used to improve the developer-perceived clustering validity ([26], [17]).

Inter Procedural Analysis: Call graph topology could influence both the precision and the convergence of Inter Procedural Analysis (IPA). When specializing individual procedures in a program, procedures that have large indegree could end up being less optimal: dataflow facts for these functions tend to be too conservative as they are required to be consistent across a large number of call sites. By specifically cloning nodes with large indegree and by distributing the indegrees “appropriately” between these clones, one could specialize individual clones better. Also, the number of iterations an iterative IPA takes to compute a fixed point depends on max(longest path length, largest cycle).

4 Statistical Properties of Interest

As with most nascent sciences, graph topology literature is strewn with notions that are overlapping, correlated and misused gratuitously; for clarity, we restrict ourselves to the following structural notions.
A note on usage: we employ graphs and networks interchangeably; $G = (V, E)$, $|V| = n$ and $|E| = m$; $(i, j)$ implies $i$ calls $j$; $d_i$ denotes the degree of vertex $i$ and $d_{ij}$ denotes the geodesic distance between $i$ and $j$; $N(i)$ denotes the immediate neighbours of $i$; graphs are directed and simple: for every pair of distinct edges $(i_1, j_1)$ and $(i_2, j_2)$ present, either $i_1 \neq i_2$ or $j_1 \neq j_2$ is true.

Graphs, in general, could be modeled as random, small world, power-law, or scale rich, each permitting different dynamics.

Random graphs: The random graph model [11] is perhaps the simplest network model: undirected edges are added at random between a fixed number $n$ of vertices to create a network in which each of the $\frac{1}{2} n(n-1)$ possible edges is independently present with some probability $p$, and the vertex degree distribution follows a Poisson distribution in the limit of large $n$.

Small world graphs: These exhibit a high degree of clustering and have a mean geodesic distance $\ell$, defined by $\ell^{-1} = \frac{1}{n(n+1)} \sum_{i \neq j} d_{ij}^{-1}$, in the range of $\log n$; that is, the number of vertices within a distance $r$ of a typical central vertex grows exponentially with $r$ [19]. It should be noted that a large number of networks, including random networks, have $\ell$ in the range of $\log n$ or even $\log \log n$. In this work, we deem a network to be small world if $\ell$ grows sub-logarithmically and the network exhibits high clustering.

Power law networks: These are networks whose degree distribution follows the discrete CDF $P[X > x] \propto c x^{-\gamma}$, where $c$ is a fixed constant and $\gamma$ is the scaling exponent. When plotted on a double logarithmic plot, this CDF appears as a straight line of slope $-\gamma$. The sole response of power-law distributions to conditioning is a change in scale: for large values of $x$, $P[X > x \mid X > X_i]$ is identical to the (unconditional) distribution $P[X > x]$. This “scale invariance” of power-law distributions is referred to as scale-freeness.
Note that this notion of scale-freeness does not depict the fractal-like self-similarity at every scale.

Graphs with similar degree distributions differ widely in other structural aspects; the rest of the definitions introduce metrics that permit finer classifications.

Degree correlations: In many real-world graphs, the probability of attachment to the target vertex depends also on the degree of the source vertex: many networks show assortative mixing on their degrees, that is, a preference for high-degree nodes to attach to other high-degree nodes; others show disassortative mixing, where high-degree nodes consistently attach to low-degree ones. The following measure, a variant of the Pearson correlation coefficient [20], gives the degree correlation:

$$\rho = \frac{m^{-1} \sum_i j_i k_i - \left[ m^{-1} \sum_i \frac{1}{2}(j_i + k_i) \right]^2}{m^{-1} \sum_i \frac{1}{2}(j_i^2 + k_i^2) - \left[ m^{-1} \sum_i \frac{1}{2}(j_i + k_i) \right]^2},$$

where $j_i$, $k_i$ are the degrees of the vertices at the ends of the $i$th edge, with $i = 1 \cdots m$. $\rho$ takes values in the range $-1 \le \rho \le 1$, with $\rho > 0$ signifying assortativity and $\rho < 0$ signifying disassortativity. $\rho = 0$ when there is no discernible correlation between the degrees of nodes that share an edge.

Scale free metric: A useful measure capturing the fractal nature of graphs is the scale-free metric $s(g)$ [16], defined as $s(g) = \sum_{(i,j) \in E} d_i d_j$, along with its normalized variant $S(g) = \frac{s(g)}{s_{max}}$; $s_{max}$ is the maximal $s(g)$ and is dictated by the type of network under study (footnote 1: for unrestricted graphs, $s_{max} = \sum_{i=1}^{n} (d_i/2) \cdot d_i^2$). The rest of the paper will use the normalized variant. $s(g)$ is maximal when nodes with similar degree connect to each other [13]; thus, $S(g)$ is close to one for networks that are fractal-like, where the connectivity, at all degrees, stays similar. On the other hand, in networks where nodes repeatedly connect to dissimilar nodes, $S(g)$ is close to zero.
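The two edge-based measures above, the degree correlation $\rho$ and the unnormalized scale-free metric $s(g)$, can be computed directly from an edge list. The following is a minimal sketch, not the tooling used in this study: the function names are illustrative, and it assumes a symmetrized (undirected) simple graph in which each edge is listed once and $d_i$ is the total degree of vertex $i$.

```python
from collections import Counter


def vertex_degrees(edges):
    """Total degree of each vertex, treating every edge as undirected."""
    degree = Counter()
    for u, v in edges:
        degree[u] += 1
        degree[v] += 1
    return degree


def degree_correlation(edges):
    """Pearson-style degree correlation rho over the ends of each edge."""
    degree = vertex_degrees(edges)
    m = len(edges)
    # m^-1 * sum_i j_i * k_i
    mean_product = sum(degree[u] * degree[v] for u, v in edges) / m
    # m^-1 * sum_i (j_i + k_i) / 2
    mean_half_sum = sum(0.5 * (degree[u] + degree[v]) for u, v in edges) / m
    # m^-1 * sum_i (j_i^2 + k_i^2) / 2
    mean_half_sq = sum(0.5 * (degree[u] ** 2 + degree[v] ** 2) for u, v in edges) / m
    denominator = mean_half_sq - mean_half_sum ** 2
    if denominator == 0:
        # Every edge end has the same degree, so rho is undefined;
        # this sketch reports 0.0 in that case (an assumption, not a convention of the paper).
        return 0.0
    return (mean_product - mean_half_sum ** 2) / denominator


def scale_free_metric(edges):
    """Unnormalized s(g): sum of d_i * d_j over all edges (i, j)."""
    degree = vertex_degrees(edges)
    return sum(degree[u] * degree[v] for u, v in edges)


if __name__ == "__main__":
    star = [(0, 1), (0, 2), (0, 3)]   # one hub, three leaves
    path = [(0, 1), (1, 2), (2, 3)]   # four vertices in a line
    print(degree_correlation(star), scale_free_metric(star))
    print(degree_correlation(path), scale_free_metric(path))
```

A star, where the hub connects only to low-degree leaves, is the extreme disassortative case; normalizing $s(g)$ into $S(g)$ additionally requires the $s_{max}$ appropriate to the class of graphs considered, which this sketch leaves out.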
Networks that exhibit a power law but have a scale-free metric $S(g)$ close to zero are called scale rich; power-law networks whose $S(g)$ value is close to one are called scale-free. The measures $S(g)$ and $\rho$ are similar and are correlated, but they employ different normalizations and are useful in discerning different features [16].

Clustering coefficient: This is a measure of how clustered, or locally structured, a graph is: it depicts how, on average, interconnected each node’s neighbours are. Specifically, if node $v$ has $k_v$ immediate neighbours, then the clustering coefficient for that node, $C_v$, is the ratio of the number of edges present between its neighbours, $E_v$, to the total possible connections between $v$’s neighbours, that is, $k_v(k_v - 1)/2$. The whole-graph clustering coefficient, $C$, is the average of the $C_v$s: that is, $C = \langle C_v \rangle_v = \left\langle \frac{2 E_v}{k_v (k_v - 1)} \right\rangle_v$.

Clustering profile: $C$ has limited use when immediate connectivity is sparse. In order to understand the interconnection profile of transitively connected neighbours, we use the clustering profile [1]:

$$C^d_k = \frac{\sum_{\{i \mid d_i = k\}} C^d(i)}{|\{i \mid d_i = k\}|}, \quad \text{where} \quad C^d(i) = \frac{|\{(j,k);\ j,k \in N(i) \mid d_{jk} \in G(V \setminus i) = d\}|}{\binom{|N(i)|}{2}}.$$

Note that, by this definition, the clustering coefficient $C$ is simply $C^1_k$.

Centrality: The centrality of a node is a measure of the relative importance of the node within the graph; central nodes are both points of opportunity, in that they can reach/influence most nodes in the graph, and of constraint, in that any perturbation in them is likely to have greater impact on the graph. Many centrality measures exist and have been successfully used in many contexts ([6], [10]).
Here we focus on betweenness centrality $B_u$ (of node $u$), defined as the ratio of the number of geodesic paths that pass through the node $u$ to the total number of geodesic paths: that is, $B_u = \sum_{ij} \frac{\sigma(i,u,j)}{\sigma(i,j)}$; nodes that occur on many shortest paths between other vertices have higher betweenness than those that do not.

Connected components: The size and number of connected components give us the macroscopic connectivity of the graph. In particular, the number and size of strongly connected components give us the extent of mutual recursion present in the software. The number of weakly connected components gives us an upper bound on the amount of runtime indirection resolution possible.

Edge reciprocity: This measures whether the edges are reciprocal, that is, if $(i, j) \in E$, is $(j, i)$ also in $E$? A robust measure for reciprocity is defined as [12]: $\rho = \frac{\varrho - \bar{a}}{1 - \bar{a}}$, where $\varrho = \frac{\sum_{ij} a_{ij} a_{ji}}{m}$ and $\bar{a}$ is the mean of the values in the adjacency matrix. This measure is absolute: $\rho$ greater than zero implies larger reciprocity than random networks and $\rho$ less than zero implies smaller reciprocity than random networks.

Figure 1. Indegree Distribution (log-log plot of frequency against indegree for C.Linux, C++.Coin, OCaml.Coq and Haskell.Yarrow)

5 Corpora & Methodology

We studied 35 open source projects. The projects are written in four languages: C, C++, OCaml and Haskell. Appendix 8 lists these software projects, their source language, version, domain and size: the number of nodes N and the number of edges M. Most programs used are large, used by tens of thousands of users, written by hundreds of developers and developed over years. These programs are actively developed and supported.
Most of these programs, from proof assistant to media player, provide varied functionalities and have no apparent similarity or overlap in usage/philosophy/developers; if anything, they exhibit greater orthogonality: Emacs vs. Vim, OCaml vs. GCC, Postgres vs. Framerd, to name a few. Many are stand-alone programs while a few, like glibc and ffmpeg, are provided as libraries. Some programs, like Linux and glibc, have machine-dependent components while others, like yarrow and psilab, are entirely architecture independent.

In essence, our sample is unbiased towards applications, source languages, operating systems, program size, program features and developmental philosophy. The corpus versions and age vary widely: some are a few years old while others, like gcc, the Linux kernel and OCamlc, are more than a decade old. We believe that any invariant we find in such a varied collection is likely universal.

We used a modified version of CodeViz [27] to extract call graphs from C/C++ sources. For OCaml and Haskell, we compiled the sources to binary and used this modified CodeViz to extract call graphs from the binaries. OCaml programs were compiled using ocamlopt, while for Haskell we used GHC. A note of caution: to handle Haskell’s laziness, GHC uses indirect jumps. Our tool, presently, can handle such calls only marginally; we urge the reader to be mindful of measures that are easily perturbed by edge additions.

Figure 2. Outdegree Distribution (log-log plot of frequency against outdegree for C.Linux, C++.Coin, OCaml.Coq and Haskell.Yarrow)

We used custom-developed graph analysis tools to measure most of the properties; where possible we also used the graph-tool software [14]. We used the largest weakly connected components for our measurements. Component statistics were computed for the whole data set.
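Restricting a call graph to its largest weakly connected component can be sketched as follows. This is an illustrative sketch only, not the custom tooling or the graph-tool routines used in the study; the function names are hypothetical, and the call graph is assumed to be given as a list of (caller, callee) pairs, so functions that neither call nor are called do not appear.

```python
from collections import defaultdict, deque


def weakly_connected_components(edges):
    """Return the weakly connected components as a list of node sets."""
    # Ignore edge direction: a weak component is a component of the
    # underlying undirected graph.
    neighbours = defaultdict(set)
    for caller, callee in edges:
        neighbours[caller].add(callee)
        neighbours[callee].add(caller)

    seen = set()
    components = []
    for start in neighbours:
        if start in seen:
            continue
        # Breadth-first search from an unvisited node collects one component.
        seen.add(start)
        component = {start}
        queue = deque([start])
        while queue:
            node = queue.popleft()
            for nxt in neighbours[node]:
                if nxt not in seen:
                    seen.add(nxt)
                    component.add(nxt)
                    queue.append(nxt)
        components.append(component)
    return components


def largest_wcc_edges(edges):
    """Keep only the edges that lie inside the largest weak component."""
    largest = max(weakly_connected_components(edges), key=len)
    # Both ends of an edge are always in the same weak component,
    # so checking the caller is sufficient.
    return [(u, v) for u, v in edges if u in largest]


if __name__ == "__main__":
    calls = [("main", "parse"), ("parse", "lex"), ("lex", "parse"),
             ("helper", "util")]
    print(len(weakly_connected_components(calls)))
    print(largest_wcc_edges(calls))
```

The number of components returned by the first function corresponds to the #WCC statistic; the per-graph measures would then be computed on the output of the second.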
6 Interpretation

In the following section we walk through the results, discuss what these results mean and why they are of interest to the language and software communities. Note that most plots have the estimated sample variance as the confidence indicator. Also, most graphs run a horizontal line that separates data from different languages.

Degree Distribution: Fitting samples to a distribution is impossibly thorny: any sample is finite, but of the distributions there are infinitely many. Despite the hardness of this problem, many of the previous results were based either on visual inspection of data or on linear regression, and are likely to be inaccurate [8]. We use the cumulative distribution to fit the data, and we compute the likelihood measures for other distributions in order to improve the confidence, using [8]. Figures 1 and 2 depict how four programs written in four different language paradigms compare; the indegree distribution permits a power law ($2.3 \le \gamma \le 2.9$) while the outdegree distribution permits an exponential distribution (Haskell results are coarse, but are valid). This observation, that in and out degree distributions differ consistently across languages, is expected, as indegree and outdegree are conditioned very differently during the developmental process.

Outdegree has a strict budget; large, monolithic functions are difficult to read and reuse. Thus outdegree is minimized on a local, immediate scale. On the other hand, large indegree is implicitly encouraged, up to a point; indegree selection, however, happens on a non-local scale, over a much larger time period; usually backward compatibility permits lazy pruning/modifying of such nodes. Consequently one would expect the variability of outdegree, as depicted by the length of the error bar, to be far less than that of the indegree. This is consistent with the observation (Fig. 3).
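As a small illustration of the quantities discussed above, the sketch below tabulates indegree and outdegree frequencies and the cumulative form $P[X > x]$ from an edge list. It is a sketch under stated assumptions rather than the fitting procedure of [8]: the function names are hypothetical, the edge list is assumed to contain each (caller, callee) pair once, and no distribution is actually fitted.

```python
from collections import Counter


def degree_histograms(edges):
    """Return (indegree histogram, outdegree histogram) as Counters.

    Each histogram maps a degree value to the number of functions
    having that degree; functions with degree 0 are counted too.
    """
    indegree = Counter()
    outdegree = Counter()
    nodes = set()
    for caller, callee in edges:
        outdegree[caller] += 1
        indegree[callee] += 1
        nodes.update((caller, callee))
    in_hist = Counter(indegree[node] for node in nodes)
    out_hist = Counter(outdegree[node] for node in nodes)
    return in_hist, out_hist


def ccdf(histogram):
    """Return sorted (x, P[X > x]) pairs for a degree histogram."""
    total = sum(histogram.values())
    remaining = total
    points = []
    for x in sorted(histogram):
        remaining -= histogram[x]      # nodes with degree strictly above x
        points.append((x, remaining / total))
    return points


if __name__ == "__main__":
    calls = [("a", "c"), ("b", "c"), ("c", "d")]
    in_hist, out_hist = degree_histograms(calls)
    print(sorted(in_hist.items()), sorted(out_hist.items()))
    print(ccdf(in_hist))
```

Plotting the `ccdf` points on doubly logarithmic axes gives the kind of view in which a power law appears as a straight line, whereas an exponential distribution appears straight on semi-logarithmic axes; as noted above, visual inspection alone is not a reliable test.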
Note that the tail of the outdegree is prominent in OCaml and C++: languages that allow highly stylized call composition.

Figure 3. Average Degree (average indegree and outdegree for each program: HaXml, DrIFT, Frown, yarrow, ott, psilab, glsurf, fftw, coqtop, ocamlc, knotes, doxygen, coin, cgal, kcachegrind, cccc, gnuplot, openssl, sim, vim70, emacs, framerd, postgresql, gimp, fvwm, gcc, MPlayer, glibc, ffmpeg, sendmail, bind, httpd, linux, scheme)

Such observations are critical, as distributions portend the accuracy of sample estimates. In particular, distributions such as the power law, which permit non-finite mean and variance and consequently elude the central limit theorem, are very poor candidates for simple sampling-based analyses; understanding the degree distribution is of both empirical and theoretical importance.

Consider the bug propagation process delineated in Section 3. Assuming that the inter-node bug propagation is Markovian, we could construct an irreducible, aperiodic, finite state space Markov chain (not unlike [6]) with bug introduction rate $\beta$ and debugging (immunization) rate $\delta$ as parameters. Note that this Markov chain has two absorbing states: all-infected or all-cured. Equipped with these notions, we could ask what is the minimal critical infection rate $\beta_c$ beyond which no amount of immunization will help to save the software; below $\beta_c$ the system exponentially converges to the good, all-cured absorbing state. It is known that for a sufficiently large power-law network with exponent in the range $2 < \gamma \le 3$, $\beta_c$ is zero [5]. Thus one is tempted to conclude that, provided the Markovian assumption holds, it is statistically impossible to construct an all-reliable program. However, that would be inaccurate, as the distribution of the sum of indegree and outdegree (footnote 2) need not follow a power law.
However, a recent study [25] establishes that, for finite networks, $\beta_c$ is bounded by the spectral radius of the graph; in particular, $\beta_c = \frac{1}{\lambda_{1,A}}$, where $\lambda_{1,A}$ is the largest eigenvalue of the adjacency matrix. Figure 4 depicts the relation between $\lambda_{1,A}$ and the graph size, $n$. For a “robust” software, we require $\beta_c$ to be large or, equally, $\lambda_{1,A}$ to be small. However, it is evident from the plot that the larger the graph, the higher the $\lambda_{1,A}$. This trend is observed uniformly across languages. Thus, we are to conclude that large programs tend to be more fragile, confirming the established wisdom. Another equally important inference one can make from the indegree distribution is that uniform fault testing is bound to fail: should one wish to build statistically robust software, testing efforts ought to be heavily biased. These two inferences align closely with the common wisdom, except that these inferences are rigorously established (and partly explained) using the statistical nature of call graphs.

Footnote 2: Bug propagation is symmetric: foo and bar can pass/return bugs to one another.

Figure 4. Epidemic Threshold vs. N (largest eigenvalue of the adjacency matrix against graph/matrix size N, for C, C++, OCaml and Haskell)

Scale Free Metric: Fig. 5 shows how the scale-free metric for symmetrized call graphs varies across programs. Two observations are critical. First, $S(g)$ is close to zero. This implies call graphs are scale-rich and not scale-free. This is of importance because in truly scale-free networks, epidemics are even harder to handle; hubs are connected to hubs and the Markov chain rapidly converges to the all-infected absorbing state. In scale-rich networks, as hubs tend to connect to lesser nodes, the rate of convergence is less rapid. Second, $S(g)$ appears to be language independent (footnote 3).
Both near-zero and higher values of $S(g)$ appear in all languages. Thus call graphs, though they follow a power law for indegree, are not fractal-like in the self-similarity sense.

Footnote 3: Except Haskell; but this could be an artifact of the edge-limited sample.

Figure 5. Scale Free Metric (S(g) for each program)

Figure 6. Clustering Coefficient (log-scale clustering coefficient for each program, CC against RandCC)

Degree Correlation: Fig. 7 shows how input-input (i-i) and output-output (o-o) degrees correlate with each other. These sets are weakly assortative, signifying hierarchical organization. But a finer picture evolves as far as languages are concerned. C programs appear to have very similar i-i and o-o profiles, with o-o correlation being smaller than and comparable to i-i correlation. In addition, C’s correlation measure is consistently less than that of other languages and is close to zero; thus, C programs exhibit as much i-i/o-o correlation as a random graph of similar size. In other words, if foo calls bar, the number of calls bar makes is independent of the number of calls foo makes; this implies a less hierarchical program structure, as one would like the level $n$ functions to receive fewer calls compared to level $n-1$ functions. For instance, variance(list) is likely to receive fewer calls compared to sum(list); we would also like level $n$ functions to have higher outdegree compared to level $n-1$ functions. Thus, in a highly hierarchical design, i-i and o-o correlations would be mildly assortative, with i-i being more assortative. For C++, i-i and o-o differ and are not ordered consistently.
OCaml and Haskell exhibit a marked difference in correlations: as with C, the o-o correlation is close to zero; but the i-i correlation is orders of magnitude higher than the o-o correlation. That is, OCaml forces nodes with “proportional” indegree to pair up. If foo has an indegree X, bar is likely to receive, say, 2X indegree. One could interpret this result as a sign of stricter hierarchical organization in functional languages.

Clustering Coefficient: Fig. 6 depicts how the call graph clustering coefficients compare to clustering coefficients of random networks of the same size. The computed clustering coefficients are orders of magnitude higher than their random counterparts, signifying a higher degree of clustering. Also, observe that $\ell$, as depicted in Fig. 8, is in the order of $\log n$. Together these observations make call graphs decidedly small world, irrespective of the source language.

We also have observed that the average clustering coefficient for nodes of a particular degree, $C(d_i)$, follows a power law. That is, the plot of $d_i$ to $C(d_i)$ follows the power law with $C(d_i) \propto d_i^{-1}$: high degree nodes exhibit lesser clustering and lower degree nodes exhibit higher clustering. It is also observed that OCaml’s fit for this power law is the one that had the least misfit. Though we need further samples to confirm it, we believe functional languages exhibit cleaner, non-interacting hierarchy compared to both procedural and OO languages.

Figure 7. Assortativity Coefficient (in and out assortativity for each program)

Figure 8. Harmonic Geodesic Mean (mean geodesic distance and log(n) for each program)

Component Statistics: Fig. 9 gives us the component statistics for the data set. It depicts the number of weakly connected components (#WCC), the number of strongly connected components (#SCC), and the fraction of nodes in the largest strongly connected component (%SCC). #WCC is lower in C and OCaml. For C++ and Haskell, #WCC is higher compared to the rest of the sample. This is an indication of lazy call resolution, coinciding with the delayed/lazy bindings encouraged by both languages. The #SCC values are highest for OCaml. This observation, combined with the reciprocity of OCaml programs, makes OCaml a language that encourages recursion at varying granularity. At the other end, C++ rates lowest on #SCC values.

Another important aspect in Fig. 9 is the observed values for %SCC; this fraction varies, surprisingly, from 1% to 30% of the total number of nodes. C leads the way with some applications, notably Vim and Emacs, measuring as much as 20 to 30% for %SCC. OCaml follows C with a moderate 2 to 6%, while C++ measures 1% to 3%. We do not yet know why up to one third of an application clusters to form an SCC. Also, %SCC values say that certain languages, notably OCaml, and program domains (Editors: Vim and Emacs) exhibit significant mutual connectivity.

Edge Reciprocity: Fig. 11 shows the plot of edge reciprocity for various programs. Edge reciprocity is a measure of direct mutual recursion in the software. High reciprocity in a layered system implies layering inversion and we would, ideally, like a program to have negative reciprocity.
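The reciprocity measure $\rho$ defined in Section 4 can be sketched as below. This is a hedged illustration, not the authors' implementation: the function name is hypothetical, self-calls are dropped, and $\bar{a}$ is assumed to be the edge density $m / (n(n-1))$, that is, the mean over the off-diagonal entries of the adjacency matrix.

```python
def edge_reciprocity(edges):
    """Reciprocity rho = (r - a_bar) / (1 - a_bar) of a directed graph.

    r is the fraction of edges (i, j) whose reverse (j, i) is also present;
    a_bar is taken here to be the edge density m / (n * (n - 1)),
    which is an assumption of this sketch.
    """
    # Deduplicate and drop self-loops so the graph is simple.
    edge_set = {(u, v) for u, v in edges if u != v}
    nodes = {node for edge in edge_set for node in edge}
    n = len(nodes)
    m = len(edge_set)
    if n < 2 or m == 0:
        raise ValueError("need at least one edge between two distinct nodes")

    reciprocated = sum(1 for (u, v) in edge_set if (v, u) in edge_set)
    r = reciprocated / m
    a_bar = m / (n * (n - 1))
    if a_bar == 1:
        raise ValueError("reciprocity is undefined for a complete digraph")
    return (r - a_bar) / (1 - a_bar)


if __name__ == "__main__":
    # a and b call each other (direct mutual recursion); the rest is layered.
    mutual = [("a", "b"), ("b", "a"), ("b", "c"), ("c", "d")]
    layered = [("a", "b"), ("b", "c")]
    print(edge_reciprocity(mutual))
    print(edge_reciprocity(layered))
```

A strictly layered chain of calls yields a negative value, matching the ideal described above, while each pair of directly mutually recursive functions pushes the value upwards.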
Figure 9. Component Statistics (#WCC, #SCC and %SCC for each program, log scale)

Figure 10. Betweenness - C.Linux (betweenness centrality of the nodes of the Linux call graph)

Most programs exhibit close to zero reciprocity: most call graphs exhibit as much reciprocity as random graphs of comparable size. None exhibits negative reciprocity, implying no statistically significant preferential selection against violating strict layering.

The software that had the least reciprocity is the Linux kernel. Recursion of any kind is abhorred inside the kernel, as the kernel stack is a limited resource; besides, in an environment where multiple contexts/threads communicate using shared memory, mutual recursion could happen through continuation flow, not just as explicit control flow. Functional languages like OCaml naturally show higher reciprocity. Another curious observation is that compilers, both OCamlc and gcc, appear to have relatively higher reciprocity. This is the second instance where the application domain (Compilers: GCC and OCamlc) determines the graph property; this could be seen as a reflection of how compilers work: a great deal of the lexing, parsing and semantic algorithms that compilers are based on follow rich, mutually recursive mathematical definitions.

Clustering Profile: As we see in Fig. 13, the clustering profile indeed gives us better insight. The Y axis depicts the average clustering coefficient for nodes, say $i$ and $j$, that are connected by geodesic distance $d_{ij}$. In all the graphs observed, this average clustering increases up to $d_{ij} = 3$ and falls rapidly as $d_{ij}$ increases further.
We measured clustering profile for degrees one to ten and the clustering profile appears to be unimodal, reaching the maximum at d_ij = 3, irrespective of language/program domain. It suggests that maximal clustering occurs between nodes that are separated by exactly five hops: clustering profile for a node u is measured with u removed; so d_ij = 3 is 5 hops in the original graph. However exciting we find this result to be, we currently have no explanation for this phenomenon.

Figure 11. Edge Reciprocity (y-axis: reciprocity; x-axis: programs)

Figure 12. Betweenness - C++.Coin

Betweenness: Figs. 10, 12, 14 and 15 depict how betweenness centrality is distributed in different programs, written in different languages. Note that betweenness is not distributed uniformly: it follows a rapidly decaying exponential distribution. This confirms our observation that importance of functions is distributed non-uniformly. Thus, by concentrating test efforts on functions that have higher betweenness (functions that are central to most paths), we could test the software better, possibly with less effort. An interesting line of investigation is to measure the correlation between various centrality measures and actual per-function bug density in real-world software.

7 Related Work

Understanding graph structures originating from various fields is an active field of research with vast literature; there is a renewed enthusiasm in studying the graph structure of software, and many studies, alongside ours, report that software graphs exhibit small-world and power-law properties.
[18] studies the call graphs and reports that both indegree and outdegree distributions follow power-law distributions and the graph exhibits hierarchical clustering. But [23] suggests that indegree alone follows power-law while the outdegree admits exponential distribution. [23] also suggests that a growing network model with copying, as proposed in [15], would consistently explain the observations.

Figure 13. Clustering Profile for Neighbours reachable in k+2 hops (x-axis: programs; series: k = 1 to 10)

Figure 14. Betweenness - OCaml.Coq

More recently, [4] studies the degree distributions of various meaningful relationships in Java software. Many relationships admit power-law distributions in their indegree and exponential distribution in their out-degree. [22] studies the dynamic, points-to graph of objects in Java programs and found them to follow power-law. Note that most work, excepting [4], does not rigorously compare the likelihood of other distributions to explain the same data. Power-law is notoriously difficult to fit and even if power-law is a genuine fit, it might not be the best fit [8].

8 Conclusion & Future Work

We have studied the structural properties of large software systems written in different languages, serving different purposes. We measured various finer aspects of these large systems in sufficient detail and have argued why such measures could be useful; we also depicted situations where such measurements are practically beneficial.
We believe our study is a step towards understanding software as an evolving graph system with distinct characteristics, a viewpoint we think is of importance in developing and maintaining large software systems.

Figure 15. Betweenness - Haskell.Yarrow

There is a lot that needs to be done. First, we need to measure the correlation between these precise quantities and the qualitative, rule-of-thumb understanding that developers usually possess. This helps us make such qualitative, albeit useful, observations rigorous. Second, we need to verify our findings over a much larger set to improve the inference confidence. Finally, graphs are extremely useful objects that are analysed in a variety of ways, each exposing relevant features; of these variants, the authors find two fields very promising: topological and algebraic graph theories. In particular, studying call graphs using a variant of Atkin's A-Homotopy theory is likely to yield interesting results [3]. Also, spectral methods applied to call graphs is an area that we think is worth investigating.

References

[1] A. Abdo and A. de Moura. Clustering as a measure of the local topology of networks. In arXiv:physics/0605235, 2006.
[2] S. Asur, S. Parthasarathy, and D. Ucar. An ensemble approach for clustering scalefree graphs. In LinkKDD workshop, 2006.
[3] H. Barcelo, X. Kramer, R. Laubenbacher, and C. Weaver. Foundations of a connectivity theory for simplicial complexes. In Advances in Applied Mathematics, 2006.
[4] G. Baxter et al. Understanding the shape of Java software. In OOPSLA, 2006.
[5] M. Boguna, R. Pastor-Satorras, and A. Vespignani. Absence of epidemic threshold in scale-free networks with connectivity correlations. In arXiv:cond-mat/0208163, 2002.
[6] S. Brin and L. Page. The anatomy of a large-scale hypertextual Web search engine. Computer Networks and ISDN Systems, 30(1–7):107–117, 1998.
[7] J. A. Børretzen and R. Conradi. Results and experiences from an empirical study of fault reports in industrial projects. In PROFES, 2006.
[8] A. Clauset et al. Power-law distributions in empirical data. In arXiv.org:physics/0706.1062, 2007.
[9] L. da F. Costa, F. A. Rodrigues, G. Travieso, and P. R. V. Boas. Characterization of complex networks: A survey of measurements. Advances In Physics, 56:167, 2007.
[10] F. S. Diego Puppin. The social network of Java classes. In ACM Symposium on Applied Computing, 2006.
[11] P. Erdős and A. Rényi. On random graphs. In Publicationes Mathematicae 6, 1959.
[12] D. Garlaschelli and M. I. Loffredo. Patterns of link reciprocity in directed networks. Physical Review Letters, 2004.
[13] D. Hrimiuc. The rearrangement inequality - a tutorial: pims.math.ca/pi/issue2/page21-23.pdf, 2007.
[14] http://projects.forked.de/graph tool/.
[15] P. Krapivsky and S. Redner. Network growth by copying. Phys Rev E Stat Nonlin Soft Matter Phys, 2005.
[16] L. Li, D. Alderson, R. Tanaka, J. Doyle, and W. Willinger. Towards a theory of scale-free graphs. In arXiv:cond-mat/0501169, 2005.
[17] Y. Matsuo. Clustering using small world structure. In Knowledge-Based Intelligent Information and Engineering Systems, 2002.
[18] C. R. Myers. Software systems as complex networks. In Physical Review, E68, 2003.
[19] M. Newman. The structure and function of complex networks. In SIAM Review, 2003.
[20] M. E. J. Newman. Assortative mixing in networks. Physical Review Letters, 89:208701, 2002.
[21] D. E. Perry and W. M. Evangelist. An empirical study of software interface faults. In Symposium on New Directions in Computing, 1985.
[22] A. Potanin, J. Noble, M. Frean, and R. Biddle. Scale-free geometry in OO programs. Comm. ACM, May 2005.
[23] S. Valverde and R. V. Sole. Logarithmic growth dynamics in software networks. In arXiv:physics/0511064, Nov 2005.
[24] S. Valverde and R. V. Sole. Hierarchical small worlds in software architecture. In arXiv:cond-mat/0307278, 2006.
[25] Y. Wang, D. Chakrabarti, C. Wang, and C. Faloutsos. Epidemic spreading in real networks: An eigenvalue viewpoint. In Symposium on Reliable Distributed Computing, 2003.
[26] A. Y. Wu, M. Garland, and J. Han. Mining scale-free networks using geodesic clustering. In ACM SIGKDD, 2004.
[27] www.csn.ul.ie/~mel/projects/codeviz/.

Appendix I

| # | Lang | Name.Version | Appln. Domain | N | M |
|---|------|--------------|---------------|---|---|
| 1 | C | scheme.mit.7.7.1 | Interpreter | 2512 | 5610 |
| 2 | C | linux.2.6.12.rc2 | Kernel | 20165 | 70010 |
| 3 | C | httpd.2.2.4 | Web Server | 1396 | 4014 |
| 4 | C | bind.9.4.1 | Name Server | 4534 | 18874 |
| 5 | C | sendmail.8.12.8 | Mail Server | 783 | 4064 |
| 6 | C | ffmpeg.2007.05 | Media Codecs | 4207 | 11692 |
| 7 | C | glibc.2.3.6 | C Lib | 4401 | 13972 |
| 8 | C | MPlayer.1.0rc1 | Media Player | 7985 | 21744 |
| 9 | C | gcc.4.0.0 | C Compiler | 10848 | 48847 |
| 10 | C | fvwm.2.5.18 | Win Manager | 3312 | 12052 |
| 11 | C | gimp.2.3.9 | Image Editor | 16021 | 88473 |
| 12 | C | postgresql.8.2.3 | R-DBMS | 8517 | 41189 |
| 13 | C | framerd.2.6.1 | OO-DBMS | 3490 | 17048 |
| 14 | C | emacs.21.4 | "Editor" | 3872 | 13154 |
| 15 | C | vim70 | Editor | 4489 | 18368 |
| 16 | C | sim.outorder.3.0 | µarch/ISA Sim | 442 | 1089 |
| 17 | C | openssl.0.9.8e | Crypto Lib | 7078 | 21827 |
| 18 | C | gnuplot.4.2.2 | Graph Plotting | 2191 | 7045 |
| 19 | C++ | cccc.3.1.4 | Code Metrics | 1654 | 5627 |
| 20 | C++ | kcachegrind.0.4 | Cache Analyser | 2593 | 8054 |
| 21 | C++ | cgal.3.3 | CompGeom Lib | 3151 | 7690 |
| 22 | C++ | coin.2.4.6 | OpenGL 3D Lib | 12963 | 51877 |
| 23 | C++ | doxygen.1.5.3 | Doc Generator | 11723 | 31889 |
| 24 | C++ | knotes.3.3 | PIM | 2174 | 4942 |
| 25 | OCaml | ocamlc.opt.3.09 | OCaml Compiler | 4397 | 12732 |
| 26 | OCaml | coqtop.opt.8 | Theorem Prover | 16126 | 51092 |
| 27 | OCaml | fftw.3.2alpha2 | FFT Computing | 585 | 1011 |
| 28 | OCaml | glsurf.2.0 | OpenGL Surface | 4003 | 9173 |
| 29 | OCaml | psilab.2.0 | Numeric Envirn | 1888 | 4341 |
| 30 | OCaml | ott.0.10.11 | PL/Calculi Tool | 4300 | 11193 |
| 31 | Haskell | yarrow.1.2 | Theorem Prover | 9397 | 15199 |
| 32 | Haskell | Frown.0.6.1 | Parser Gen | 6796 | 10218 |
| 33 | Haskell | DrIFT.2.2.1 | Typed Preproc | 1428 | 3292 |
| 34 | Haskell | HaXml.1.Validate | XML Validate | 4117 | 7624 |
| 35 | Haskell | HaXml.1.Xtract | XML grep | 3909 | 5242 |
