A Neyman-Pearson Approach to Universal Erasure and List Decoding

When information is to be transmitted over an unknown, possibly unreliable channel, an erasure option at the decoder is desirable. Using constant-composition random codes, we propose a generalization of Csiszar and Korner's Maximum Mutual Information…

Authors: Pierre Moulin

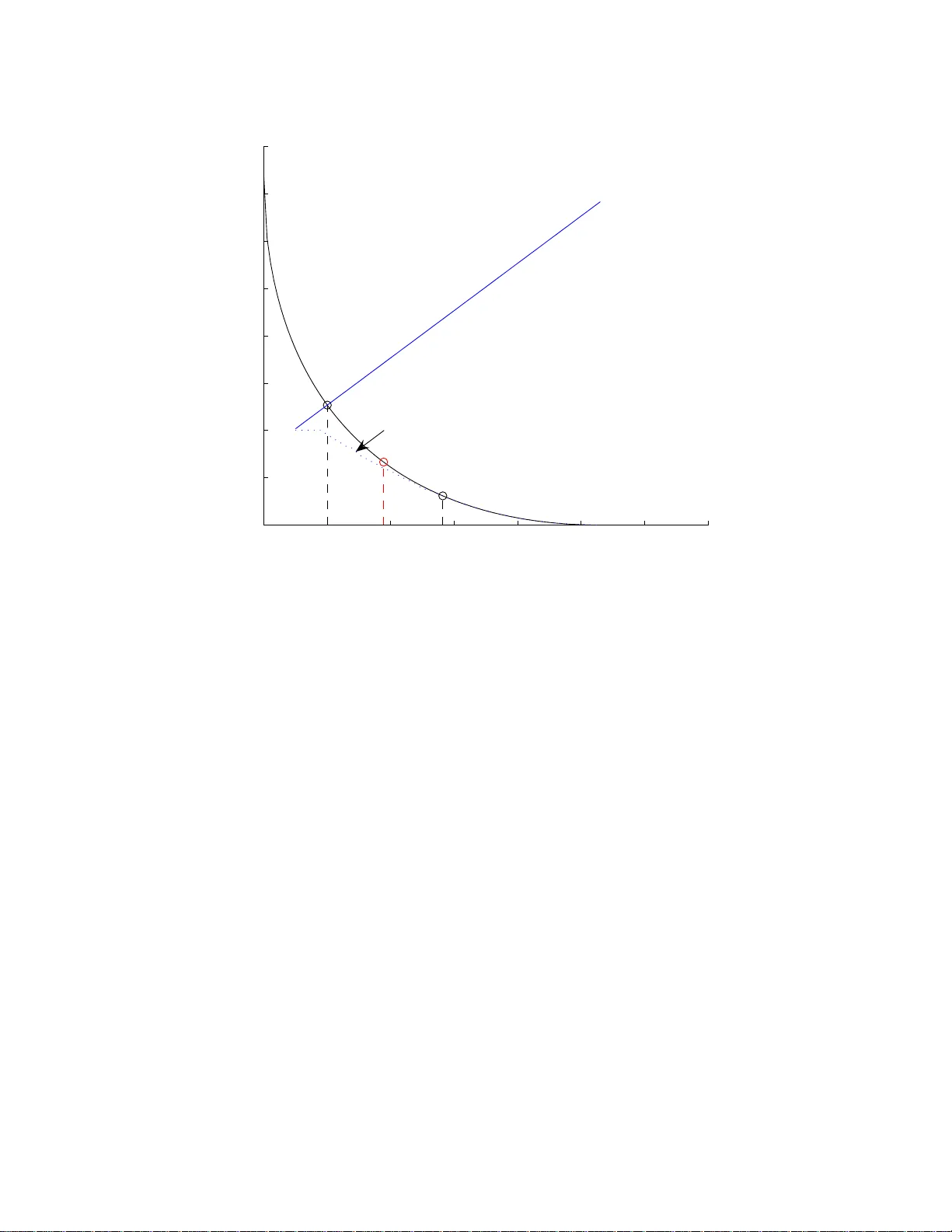

Pierre Moulin
ECE Department, Beckman Inst., and Coordinated Science Lab.
University of Illinois at Urbana-Champaign
Urbana, IL, 61801, USA
Email: moulin@ifp.uiuc.edu ∗

November 2, 2018

Abstract

When information is to be transmitted over an unknown, possibly unreliable channel, an erasure option at the decoder is desirable. Using constant-composition random codes, we propose a generalization of Csiszár and Körner's Maximum Mutual Information decoder with erasure option for discrete memoryless channels. The new decoder is parameterized by a weighting function that is designed to optimize the fundamental tradeoff between undetected-error and erasure exponents for a compound class of channels. The class of weighting functions may be further enlarged to optimize a similar tradeoff for list decoders; in that case, undetected-error probability is replaced with average number of incorrect messages in the list. Explicit solutions are identified.

The optimal exponents admit simple expressions in terms of the sphere-packing exponent, at all rates below capacity. For small erasure exponents, these expressions coincide with those derived by Forney (1968) for symmetric channels, using Maximum a Posteriori decoding. Thus for those channels at least, ignorance of the channel law is inconsequential. Conditions for optimality of the Csiszár-Körner rule and of the simpler empirical-mutual-information thresholding rule are identified. The error exponents are evaluated numerically for the binary symmetric channel.

Keywords: error exponents, constant-composition codes, random codes, method of types, sphere packing, maximum mutual information decoder, universal decoding, erasures, list decoding, Neyman-Pearson hypothesis testing.
∗ This work was supported by NSF under grant CCF 06-35137 and by DARPA under the ITMANET program. A 5-page version of this paper was submitted to ISIT'08.

1 Introduction

Universal decoders have been studied extensively in the information theory literature as they are applicable to a variety of communication problems where the channel is partly or even completely unknown [1, 2]. In particular, the Maximum Mutual Information (MMI) decoder provides universally attainable error exponents for random constant-composition codes over discrete memoryless channels (DMCs). In some cases, incomplete knowledge of the channel law is inconsequential as the resulting error exponents are the same as those for maximum-likelihood decoders which know the channel law in effect.

It is often desirable to provide the receiver with an erasure option that can be exercised when the received data are deemed unreliable. For fixed channels, Forney [3] derived the decision rule that provides the optimal tradeoff between the erasure and undetected-error probabilities, analogously to the Neyman-Pearson problem for binary hypothesis testing. Forney used the same framework to optimize the performance of list decoders; the probability of undetected errors is then replaced by the expected number of incorrect messages on the list. The size of the list is a random variable which equals 1 with high probability when communication is reliable.

For unknown channels, the problem of decoding with erasures was considered by Csiszár and Körner [1]. They derived attainable pairs of undetected-error and erasure exponents for any DMC. Their work was later extended by Telatar and Gallager [4]. However, neither [1] nor [4] indicated whether true universality is achievable, i.e., whether the exponents match Forney's exponents.
Also they did not indicate whether their error exponents might be optimal in some weaker sense. The problem was recently revisited by Merhav and Feder [5], using a competitive minimax approach. The analysis of [5] yields lower bounds on a certain fraction of the optimal exponents. It is suggested in [5] that true universality might generally not be attainable, which would represent a fundamental difference with ordinary decoding.

This paper considers decoding with erasures for the compound DMC, with two goals in mind. The first is to construct a broad class of decision rules that can be optimized in an asymptotic Neyman-Pearson sense, analogously to universal hypothesis testing [6–8]. The second is to investigate the universality properties of the receiver, in particular conditions under which the exponents coincide with Forney's exponents. We first solve the problem of variable-size list decoders because it is simpler, and the solution to the ordinary problem of size-1 lists follows directly. We establish conditions under which our error exponents match Forney's exponents.

Following background material in Sec. 2, the main results are given in Secs. 3-5. We also observe that in some problems the compound DMC approach is overly rigid and pessimistic. For such problems we present in Sec. 6 a simple and flexible extension of our method based on the relative minimax principle. In Sec. 7 we apply our results to a class of Binary Symmetric Channels (BSC), which yields easily computable and insightful formulas. The paper concludes with a brief discussion in Sec. 8. The proofs of the main results are given in the appendices.

1.1 Notation

We use uppercase letters for random variables, lowercase letters for individual values, and boldface fonts for sequences. The probability mass function (p.m.f.)
of a random variable $X \in \mathcal{X}$ is denoted by $p_X = \{p_X(x), x \in \mathcal{X}\}$, the probability of a set $\Omega$ under $p_X$ by $P_X(\Omega)$, and the expectation operator by $\mathbb{E}$. Entropy of a random variable $X$ is denoted by $H(X)$, and mutual information between two random variables $X$ and $Y$ is denoted by $I(X;Y) = H(X) - H(X|Y)$, or by $I(p_{XY})$ when the dependency on $p_{XY}$ should be explicit. The Kullback-Leibler divergence between two p.m.f.'s $p$ and $q$ is denoted by $D(p\|q)$. All logarithms are in base 2. We denote by $f'$ the derivative of a function $f$.

Denote by $p_{\mathbf{x}}$ the type of a sequence $\mathbf{x} \in \mathcal{X}^N$ ($p_{\mathbf{x}}$ is an empirical p.m.f. over $\mathcal{X}$) and by $T_{\mathbf{x}}$ the type class associated with $p_{\mathbf{x}}$, i.e., the set of all sequences of type $p_{\mathbf{x}}$. Likewise, denote by $p_{\mathbf{xy}}$ the joint type of a pair of sequences $(\mathbf{x}, \mathbf{y}) \in \mathcal{X}^N \times \mathcal{Y}^N$ (a p.m.f. over $\mathcal{X} \times \mathcal{Y}$) and by $T_{\mathbf{xy}}$ the type class associated with $p_{\mathbf{xy}}$, i.e., the set of all sequences of type $p_{\mathbf{xy}}$. The conditional type $p_{\mathbf{y}|\mathbf{x}}$ of a pair of sequences $(\mathbf{x}, \mathbf{y})$ is defined as $p_{\mathbf{xy}}(x,y)/p_{\mathbf{x}}(x)$ for all $x \in \mathcal{X}$ such that $p_{\mathbf{x}}(x) > 0$. The conditional type class $T_{\mathbf{y}|\mathbf{x}}$ is the set of all sequences $\tilde{\mathbf{y}}$ such that $(\mathbf{x}, \tilde{\mathbf{y}}) \in T_{\mathbf{xy}}$. We denote by $H(\mathbf{x})$ the entropy of the p.m.f. $p_{\mathbf{x}}$ and by $H(\mathbf{y}|\mathbf{x})$ and $I(\mathbf{x};\mathbf{y})$ the conditional entropy and the mutual information for the joint p.m.f. $p_{\mathbf{xy}}$, respectively. Recall that [1]

$$(N+1)^{-|\mathcal{X}|}\, 2^{N H(\mathbf{x})} \le |T_{\mathbf{x}}| \le 2^{N H(\mathbf{x})}, \tag{1.1}$$

$$(N+1)^{-|\mathcal{X}|\,|\mathcal{Y}|}\, 2^{N H(\mathbf{y}|\mathbf{x})} \le |T_{\mathbf{y}|\mathbf{x}}| \le 2^{N H(\mathbf{y}|\mathbf{x})}. \tag{1.2}$$

We let $\mathcal{P}_X$ and $\mathcal{P}_X^{[N]}$ represent the set of all p.m.f.'s and empirical p.m.f.'s, respectively, for a random variable $X$. Likewise, $\mathcal{P}_{Y|X}$ and $\mathcal{P}_{Y|X}^{[N]}$ denote the set of all conditional p.m.f.'s and all empirical conditional p.m.f.'s, respectively, for a random variable $Y$ given $X$. The notation $f(N) \sim g(N)$ denotes asymptotic equality: $\lim_{N\to\infty} \frac{f(N)}{g(N)} = 1$.
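The type-based quantities above can be made concrete with a short Python sketch. It is only an illustration under simple assumptions: sequences are Python tuples over small alphabets, and the helper names (`type_of`, `joint_type`, `entropy`, `empirical_mi`, `type_class_size`) are hypothetical names introduced here, not notation from the paper. The sketch computes the type, the joint type, the empirical mutual information $I(\mathbf{x};\mathbf{y})$ in bits, and the exact size of a type class, which can be compared against the bounds in (1.1).

```python
from collections import Counter
from math import factorial, log2


def type_of(x):
    """Empirical p.m.f. (type) of a sequence x, as a dict symbol -> frequency."""
    n = len(x)
    return {a: c / n for a, c in Counter(x).items()}


def joint_type(x, y):
    """Joint type of a pair of equal-length sequences (x, y)."""
    n = len(x)
    return {ab: c / n for ab, c in Counter(zip(x, y)).items()}


def entropy(p):
    """Entropy in bits of a p.m.f. given as a dict of probabilities."""
    return -sum(q * log2(q) for q in p.values() if q > 0)


def empirical_mi(x, y):
    """Empirical mutual information I(x;y) = H(x) + H(y) - H(x,y), in bits."""
    return entropy(type_of(x)) + entropy(type_of(y)) - entropy(joint_type(x, y))


def type_class_size(x):
    """|T_x|: number of sequences sharing the type of x (a multinomial coefficient)."""
    size = factorial(len(x))
    for c in Counter(x).values():
        size //= factorial(c)
    return size
```

For example, with `x = (0, 0, 1, 1)` the type is uniform, $H(\mathbf{x}) = 1$ bit, and `type_class_size(x)` can be checked against the two sides of (1.1) with $N = 4$ and $|\mathcal{X}| = 2$.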
The shorthands $f(N) \doteq g(N)$ and $f(N) \mathrel{\dot\le} g(N)$ denote equality and inequality on the exponential scale: $\lim_{N\to\infty} \frac{1}{N} \ln \frac{f(N)}{g(N)} = 0$ and $\lim_{N\to\infty} \frac{1}{N} \ln \frac{f(N)}{g(N)} \le 0$, respectively. We denote by $1\{x \in \Omega\}$ the indicator function of a set $\Omega$ and define $|t|^+ \triangleq \max(0,t)$ and $\exp_2(t) \triangleq 2^t$. We adopt the notational convention that the minimum of a function over an empty set is $+\infty$.

The function-ordering notation $F \preceq G$ indicates that $F(t) \le G(t)$ for all $t$. Similarly, $F \succeq G$ indicates that $F(t) \ge G(t)$ for all $t$.

2 Decoding with Erasure and List Options

2.1 Maximum-Likelihood Decoding

In his 1968 paper [3], Forney studied the following erasure/list decoding problem. A length-$N$, rate-$R$ code $\mathcal{C} = \{\mathbf{x}(m), m \in \mathcal{M}\}$ is selected, where $\mathcal{M} = \{1, 2, \cdots, 2^{NR}\}$ is the message set and each codeword $\mathbf{x}(m) \in \mathcal{X}^N$. Upon selection of a message $m$, the corresponding $\mathbf{x}(m)$ is transmitted over a DMC $p_{Y|X} : \mathcal{X} \to \mathcal{Y}$. A set of decoding regions $\mathcal{D}_m \subseteq \mathcal{Y}^N$, $m \in \mathcal{M}$, is defined, and the decoder returns $\hat{m} = g(\mathbf{y})$ if and only if $\mathbf{y} \in \mathcal{D}_{\hat{m}}$. For ordinary decoding, $\{\mathcal{D}_m, m \in \mathcal{M}\}$ form a partition of $\mathcal{Y}^N$. When an erasure option is introduced, the decision space is extended to $\mathcal{M} \cup \emptyset$, where $\emptyset$ denotes the erasure symbol. The erasure region $\mathcal{D}_\emptyset$ is the complement of $\cup_{m \in \mathcal{M}} \mathcal{D}_m$ in $\mathcal{Y}^N$. An undetected error arises if $m$ was transmitted but $\mathbf{y}$ lies in the decoding region of some other message $i \ne m$. This event is given by

$$\mathcal{E}_i = \Big\{ (m, \mathbf{y}) : \mathbf{y} \in \bigcup_{i \in \mathcal{M} \setminus \{m\}} \mathcal{D}_i \Big\}, \tag{2.1}$$

where the subscript $i$ stands for "incorrect message". Hence

$$\Pr[\mathcal{E}_i] = \frac{1}{|\mathcal{M}|} \sum_{m \in \mathcal{M}} \; \sum_{\mathbf{y} \in \bigcup_{i \in \mathcal{M} \setminus \{m\}} \mathcal{D}_i} p^N_{Y|X}(\mathbf{y}|\mathbf{x}(m)) = \frac{1}{|\mathcal{M}|} \sum_{m \in \mathcal{M}} \; \sum_{i \in \mathcal{M} \setminus \{m\}} \; \sum_{\mathbf{y} \in \mathcal{D}_i} p^N_{Y|X}(\mathbf{y}|\mathbf{x}(m)), \tag{2.2}$$

where the second equality holds because the decoding regions are disjoint.
The erasure event is given by $\mathcal{E}_\emptyset = \{(m, \mathbf{y}) : \mathbf{y} \in \mathcal{D}_\emptyset\}$ and has probability

$$\Pr[\mathcal{E}_\emptyset] = \frac{1}{|\mathcal{M}|} \sum_{m \in \mathcal{M}} \; \sum_{\mathbf{y} \in \mathcal{D}_\emptyset} p^N_{Y|X}(\mathbf{y}|\mathbf{x}(m)). \tag{2.3}$$

The total error event is given by $\mathcal{E}_{err} = \mathcal{E}_i \cup \mathcal{E}_\emptyset$. The decoder is generally designed so that $\Pr[\mathcal{E}_i] \ll \Pr[\mathcal{E}_\emptyset]$, so $\Pr[\mathcal{E}_{err}] \approx \Pr[\mathcal{E}_\emptyset]$.

Analogously to the Neyman-Pearson problem, one wishes to design the decoding regions to obtain an optimal tradeoff between $\Pr[\mathcal{E}_i]$ and $\Pr[\mathcal{E}_\emptyset]$. Forney proved that the following class of decision rules is optimal:

$$g_{ML}(\mathbf{y}) = \begin{cases} \hat{m} & : \text{ if } p^N_{Y|X}(\mathbf{y}|\mathbf{x}(\hat{m})) > e^{NT} \sum_{i \ne \hat{m}} p^N_{Y|X}(\mathbf{y}|\mathbf{x}(i)) \\ \emptyset & : \text{ else} \end{cases} \tag{2.4}$$

where $T \ge 0$ is a free parameter trading off $\Pr[\mathcal{E}_i]$ against $\Pr[\mathcal{E}_\emptyset]$. The nonnegativity constraint on $T$ ensures that $\hat{m}$ is uniquely defined for any given $\mathbf{y}$. There is no other decision rule that yields simultaneously a lower value for $\Pr[\mathcal{E}_i]$ and for $\Pr[\mathcal{E}_\emptyset]$.

A conceptually simple (but suboptimal) alternative to (2.4) is

$$g_{ML,2}(\mathbf{y}) = \begin{cases} \hat{m} & : \text{ if } p^N_{Y|X}(\mathbf{y}|\mathbf{x}(\hat{m})) > e^{NT} \max_{i \ne \hat{m}} p^N_{Y|X}(\mathbf{y}|\mathbf{x}(i)) \\ \emptyset & : \text{ else} \end{cases} \tag{2.5}$$

where the decision is made based on the two highest likelihood scores.

If one chooses $T < 0$, there is generally more than one value of $\hat{m}$ that satisfies (2.4), and $g_{ML}$ may be viewed as a list decoder that returns the list of all such $\hat{m}$. Denote by $N_i$ the number of incorrect messages on the list. Since the decoding regions $\{\mathcal{D}_m, m \in \mathcal{M}\}$ overlap, the average number of incorrect messages in the list,

$$\mathbb{E}[N_i] = \frac{1}{|\mathcal{M}|} \sum_{m \in \mathcal{M}} \; \sum_{i \in \mathcal{M} \setminus \{m\}} \; \sum_{\mathbf{y} \in \mathcal{D}_i} p^N_{Y|X}(\mathbf{y}|\mathbf{x}(m)), \tag{2.6}$$

no longer coincides with $\Pr[\mathcal{E}_i]$ in (2.2).
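As an illustration of how the test (2.4) behaves for different signs of $T$, the following Python sketch applies it to a toy code over a binary symmetric channel. The channel model, the codebook, and the function names `bsc_likelihood` and `forney_decode` are assumptions made for this example only. The function returns the list of all messages passing the test, so an empty list plays the role of the erasure symbol $\emptyset$, a single-element list is an ordinary decision, and longer lists can occur only when $T < 0$.

```python
from math import exp


def bsc_likelihood(x, y, rho):
    """p^N(y | x) for a BSC with crossover probability rho (assumed channel model)."""
    d = sum(a != b for a, b in zip(x, y))  # Hamming distance between x and y
    return (rho ** d) * ((1 - rho) ** (len(x) - d))


def forney_decode(codebook, y, rho, T):
    """Messages m with p(y|x(m)) > e^{NT} * sum_{i != m} p(y|x(i)), as in (2.4).

    Returns [] for an erasure. For T >= 0 at most one message can pass.
    """
    n = len(y)
    like = [bsc_likelihood(x, y, rho) for x in codebook]
    total = sum(like)
    threshold = exp(n * T)
    return [m for m, l in enumerate(like) if l > threshold * (total - l)]
```

With the two-codeword repetition code `[(0,0,0,0,0), (1,1,1,1,1)]`, `rho = 0.1`, and the ambiguous output `y = (0,0,1,1,1)`, the call with `T = 0` decides in favor of the second codeword, `T = 0.5` produces an erasure, and `T = -0.5` returns both messages as a list.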
For the rule (2.4) applied to symmetric channels, Forney showed that the following error exponents are achievable for all $\Delta$ such that $R_{conj} \le R + \Delta \le C$:

$$\begin{aligned} E_i(R, \Delta) &= E_{sp}(R) + \Delta \\ E_\emptyset(R, \Delta) &= E_{sp}(R + \Delta) \end{aligned} \tag{2.7}$$

where $E_{sp}(R)$ is the sphere-packing exponent, and $R_{conj}$ is the conjugate rate, defined as the rate for which the slope of $E_{sp}(\cdot)$ is the inverse of the slope at rate $R$:

$$E'_{sp}(R_{conj}) = \frac{1}{E'_{sp}(R)}.$$

The exponents of (2.7) are achieved using independent and identically distributed (i.i.d.) codes.

2.2 Universal Decoding

When the channel law $p_{Y|X}$ is unknown, maximum-likelihood decoding cannot be used. For constant-composition codes with type $p_X$, the MMI decoder takes the form

$$g_{MMI}(\mathbf{y}) = \arg\max_{i \in \mathcal{M}} I(\mathbf{x}(i); \mathbf{y}). \tag{2.8}$$

Csiszár and Körner [1, pp. 174-178] extended the MMI decoder to include an erasure option, using the following decision rule:

$$g_{\lambda,\Delta}(\mathbf{y}) = \begin{cases} \hat{m} & : \text{ if } I(\mathbf{x}(\hat{m}); \mathbf{y}) > R + \Delta + \lambda \max_{i \ne \hat{m}} |I(\mathbf{x}(i); \mathbf{y}) - R|^+ \\ \emptyset & : \text{ else} \end{cases} \tag{2.9}$$

where $\Delta \ge 0$ and $\lambda > 1$. They derived the following error exponents for the resulting undetected-error and erasure events:

$$\{ E_{r,\lambda}(R, p_X, p_{Y|X}) + \Delta, \; E_{r,1/\lambda}(R + \Delta, p_X, p_{Y|X}) \}, \quad \forall p_{Y|X}$$

where

$$E_{r,\lambda}(R, p_X, p_{Y|X}) = \min_{\tilde{p}_{Y|X}} \{ D(\tilde{p}_{Y|X} \| p_{Y|X} | p_X) + \lambda |I(p_X, \tilde{p}_{Y|X}) - R|^+ \}.$$

While $\Delta$ and $\lambda$ are tradeoff parameters, they did not mention whether the decision rule (2.9) satisfies any Neyman-Pearson type optimality criterion.

A different approach was recently proposed by Merhav and Feder [5]. They raised the possibility that the achievable pairs of undetected-error and erasure exponents might be smaller than in the known-channel case and proposed a decision rule based on the competitive minimax principle.
This rule is parameterized by a scalar parameter $0 \le \xi \le 1$ which represents a fraction of the optimal exponents (for the known-channel case) that their decoding procedure is guaranteed to achieve. Decoding involves explicit maximization of a cost function over the compound DMC family, analogously to a Generalized Likelihood Ratio Test (GLRT). The rule coincides with the GLRT when $\xi = 0$, but the choice of $\xi$ can be optimized. They conjectured that the highest achievable $\xi$ is lower than 1 in general, and derived a computable lower bound on that value.

3 F-MMI Class of Decoders

3.1 Decoding Rule

Assume that random constant-composition codes with type $p_X$ are used, and that the DMC $p_{Y|X}$ belongs to a connected subset $\mathcal{W}$ of $\mathcal{P}_{Y|X}$. The decoder knows $\mathcal{W}$ but not which $p_{Y|X}$ is in effect.

Analogously to (2.9), our proposed decoding rule is a test based on the empirical mutual informations for the two highest-scoring messages. Let $\mathcal{F}$ be the class of continuous, nondecreasing functions $F : [-R, H(p_X) - R] \to \mathbb{R}$. The decision rule indexed by $F \in \mathcal{F}$ takes the form

$$g_F(\mathbf{y}) = \begin{cases} \hat{m} & : \text{ if } I(\mathbf{x}(\hat{m}); \mathbf{y}) > R + \max_{i \ne \hat{m}} F(I(\mathbf{x}(i); \mathbf{y}) - R) \\ \emptyset & : \text{ else.} \end{cases} \tag{3.1}$$

Given a candidate message $\hat{m}$, the function $F$ weighs the score of the best competing codeword. Since $0 \le I(\mathbf{x}(i); \mathbf{y}) \le H(p_X)$, all values of $F(t)$ outside the range $[-R, H(p_X) - R]$ are equivalent in terms of the decision rule (3.1).

The choice $F(t) = t$ results in the MMI decoding rule (2.8), and

$$F(t) = \Delta + \lambda |t|^+ \tag{3.2}$$

(a two-parameter family of functions) results in the Csiszár-Körner rule (2.9).

One may further require that $F(t) \ge t$ to guarantee that $\hat{m} = \arg\max_i I(\mathbf{x}(i); \mathbf{y})$, as can be verified by direct substitution into (3.1).
In this case, the decision is whether the decoder should output the highest-scoring message or output an erasure decision.

When the restriction $F(t) \ge t$ is not imposed, the decision rule (3.1) is ambiguous because more than one $\hat{m}$ could satisfy the inequality in (3.1). Then (3.1) may be viewed as a list decoder that returns the list of all such $\hat{m}$, similarly to (2.4).

The Csiszár-Körner decision rule parameterized by $F$ in (3.2) is nonambiguous for $\lambda \ge 1$. Note there is an error in Theorem 5.11 and Corollary 5.11A of [1, p. 175], where the condition $\lambda > 0$ should be replaced with $\lambda \ge 1$ [9]. In the limit as $\lambda \downarrow 0$, (3.2) leads to the simple decoder that lists all messages whose empirical mutual information score exceeds $R + \Delta$. If a list decoder is not desired, a simple variation on (3.2) when $0 < \lambda < 1$ is

$$F(t) = \begin{cases} \Delta + \lambda |t|^+ & : \; t \le \frac{\Delta}{1 - \lambda} \\ t & : \text{ else.} \end{cases}$$

It is also worth noting that the function $F(t) = \Delta + t$ may be thought of as an empirical version of Forney's suboptimal decoding rule (2.5), with $T = \Delta$. Indeed, using the identity $I(\mathbf{x}; \mathbf{y}) = H(\mathbf{y}) - H(\mathbf{y}|\mathbf{x})$ and viewing the negative empirical equivocation

$$-H(\mathbf{y}|\mathbf{x}) = \sum_{x,y} p_{\mathbf{xy}}(x,y) \ln p_{\mathbf{y}|\mathbf{x}}(y|x)$$

as an empirical version of the normalized loglikelihood

$$\frac{1}{N} \ln p^N_{Y|X}(\mathbf{y}|\mathbf{x}) = \sum_{x,y} p_{\mathbf{xy}}(x,y) \ln p_{Y|X}(y|x),$$

we may rewrite (2.5) and (3.1) respectively as

$$g_{ML,2}(\mathbf{y}) = \begin{cases} \hat{m} & : \text{ if } \frac{1}{N} \ln p^N_{Y|X}(\mathbf{y}|\mathbf{x}(\hat{m})) > T + \max_{i \ne \hat{m}} \frac{1}{N} \ln p^N_{Y|X}(\mathbf{y}|\mathbf{x}(i)) \\ \emptyset & : \text{ else} \end{cases} \tag{3.3}$$

and

$$g_F(\mathbf{y}) = \begin{cases} \hat{m} & : \text{ if } -H(\mathbf{y}|\mathbf{x}(\hat{m})) > \Delta + \max_{i \ne \hat{m}} [-H(\mathbf{y}|\mathbf{x}(i))] \\ \emptyset & : \text{ else.} \end{cases} \tag{3.4}$$

While this observation does not imply $F(t) = \Delta + t$ is an optimal choice for $F$, one might intuitively expect optimality in some regime.
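The decision rule (3.1) is straightforward to prototype. The Python sketch below is a minimal illustration, not the paper's implementation: the function names (`empirical_mi`, `f_mmi_decode`, `csiszar_korner`) and the toy constant-composition codebook in the usage note are assumptions made here. The decoder returns the list of all messages satisfying the inequality in (3.1), so an empty list stands for an erasure, and lists longer than one can arise only when $F(t) \ge t$ is not enforced.

```python
from collections import Counter
from math import log2


def _entropy(counts, n):
    """Entropy in bits of the empirical p.m.f. given by a Counter over n samples."""
    return -sum((c / n) * log2(c / n) for c in counts.values())


def empirical_mi(x, y):
    """Empirical mutual information I(x;y) = H(x) + H(y) - H(x,y), in bits."""
    n = len(x)
    return (_entropy(Counter(x), n) + _entropy(Counter(y), n)
            - _entropy(Counter(zip(x, y)), n))


def f_mmi_decode(codebook, y, R, F):
    """All messages m with I(x(m);y) > R + max_{i != m} F(I(x(i);y) - R), as in (3.1).

    Returns [] for an erasure. Assumes the codebook has at least two codewords.
    """
    scores = [empirical_mi(x, y) for x in codebook]
    decided = []
    for m, s in enumerate(scores):
        rival = max(F(t - R) for i, t in enumerate(scores) if i != m)
        if s > R + rival:
            decided.append(m)
    return decided


def csiszar_korner(delta, lam):
    """Weighting function F(t) = delta + lam * |t|^+ of (3.2)."""
    return lambda t: delta + lam * max(0.0, t)
```

As a usage example, take the three codewords `(0,0,0,0,1,1,1,1)`, `(0,0,1,1,0,0,1,1)`, `(0,1,0,1,0,1,0,1)` (all of uniform type), `R = log2(3)/8`, and a received `y` equal to the first codeword with its last bit flipped. Passing `F = lambda t: t` recovers MMI decoding, `csiszar_korner(0.1, 1.0)` still decides for the first message, a larger `delta` such as `0.5` yields an erasure, and a constant negative `F` turns the rule into a list decoder.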
3.2 Error Exponents

For a random-coding strategy using constant-composition codes with type $p_X$, the expected number of incorrect messages on the list, $\mathbb{E}[N_i]$, and the erasure probability, $\Pr[\mathcal{E}_\emptyset]$, may be viewed as functions of $R$, $p_X$, $p_{Y|X}$, and $F$. A pair $\{E_i(R, p_X, p_{Y|X}, F), E_\emptyset(R, p_X, p_{Y|X}, F)\}$ of incorrect-message and erasure exponents is said to be universally attainable for such codes over $\mathcal{W}$ if the expected number of incorrect messages on the list and the erasure probability satisfy

$$\mathbb{E}[N_i] \le \exp_2\{-N[E_i(R, p_X, p_{Y|X}, F) - \epsilon]\}, \tag{3.5}$$

$$\Pr[\mathcal{E}_\emptyset] \le \exp_2\{-N[E_\emptyset(R, p_X, p_{Y|X}, F) - \epsilon]\}, \quad \forall p_{Y|X} \in \mathcal{W}, \tag{3.6}$$

for any $\epsilon > 0$ and $N$ greater than some $N_0(\epsilon)$. The worst-case exponents (over all $p_{Y|X} \in \mathcal{W}$) are denoted by

$$E_i(R, p_X, \mathcal{W}, F) \triangleq \min_{p_{Y|X} \in \mathcal{W}} E_i(R, p_X, p_{Y|X}, F), \tag{3.7}$$

$$E_\emptyset(R, p_X, \mathcal{W}, F) \triangleq \min_{p_{Y|X} \in \mathcal{W}} E_\emptyset(R, p_X, p_{Y|X}, F). \tag{3.8}$$

Our problem is to maximize the erasure exponent $E_\emptyset(R, p_X, \mathcal{W}, F)$ subject to the constraint that the incorrect-message exponent $E_i(R, p_X, \mathcal{W}, F)$ is at least equal to some prescribed value $\alpha$. This is an asymptotic Neyman-Pearson problem. We shall focus on the regime of practical interest where erasures are more acceptable than undetected errors:

$$E_\emptyset(R, p_X, \mathcal{W}, F) \le E_i(R, p_X, \mathcal{W}, F).$$

We emphasize that asymptotic Neyman-Pearson optimality of the decision rule holds only in a restricted sense, namely, with respect to the F-MMI class (3.1).
Specifically, given $R$ and $\mathcal{W}$, we seek the solution to the constrained optimization problem

$$E^*_\emptyset(R, \mathcal{W}, \alpha) \triangleq \max_{p_X} \; \max_{F \in \mathcal{F}(R, p_X, \mathcal{W}, \alpha)} \; \min_{p_{Y|X} \in \mathcal{W}} E_\emptyset(R, p_X, p_{Y|X}, F) \tag{3.9}$$

where $\mathcal{F}(R, p_X, \mathcal{W}, \alpha)$ is the set of functions $F$ that satisfy

$$\min_{p_{Y|X} \in \mathcal{W}} E_i(R, p_X, p_{Y|X}, F) \ge \alpha \tag{3.10}$$

as well as the continuity and monotonicity conditions mentioned above (3.1).

If we were able to choose $F$ as a function of $p_{Y|X}$, we would do at least as well as in (3.9) and achieve the erasure exponent

$$E^{**}_\emptyset(R, \mathcal{W}, \alpha) \triangleq \max_{p_X} \; \min_{p_{Y|X} \in \mathcal{W}} \; \max_{F \in \mathcal{F}(R, p_X, p_{Y|X}, \alpha)} E_\emptyset(R, p_X, p_{Y|X}, F) \ge E^*_\emptyset(R, \mathcal{W}, \alpha). \tag{3.11}$$

We shall be particularly interested in characterizing $(R, \mathcal{W}, \alpha)$ for which the decoder incurs no penalty for not knowing $p_{Y|X}$, i.e.,

• equality holds in (3.11), and
• the optimal exponents $E_i(R, p_X, p_{Y|X}, F)$ and $E_\emptyset(R, p_X, p_{Y|X}, F)$ in (3.10) and (3.9) coincide with Forney's exponents in (2.7) for all $p_{Y|X} \in \mathcal{W}$,

the second property being stronger than the first one.

3.3 Basic Properties of F

To simplify the derivations, it is convenient to slightly strengthen the requirement that $F$ be nondecreasing, and work with strictly increasing functions $F$ instead. Then the maxima over $F$ in (3.9) and (3.11) are replaced with suprema, but of course their value remains the same.

To each monotonically increasing function $F$ corresponds an inverse $F^{-1}$, such that

$$F(t) = u \;\Leftrightarrow\; F^{-1}(u) = t.$$

Elementary properties satisfied by the inverse function include:

(P1) $F^{-1}$ is continuous and increasing over its range.
(P2) If $F \preceq G$, then $F^{-1} \succeq G^{-1}$.
(P3) $G(t) = F(t) + \Delta \;\Leftrightarrow\; G^{-1}(t) = F^{-1}(t - \Delta)$.
(P4) $\frac{dF(t)}{dt} = 1 \Big/ \frac{dF^{-1}(t)}{dt}$.
(P5) If $F$ is convex, then $F^{-1}$ is concave.
(P6) The domain of $F^{-1}$ is the range of $F$, and vice-versa.
Now for any $F$ such that $F(H(p_X) - R) \ge 0$, define the scalar

$$t_F \triangleq \sup\{ t : F(t) = |F(t')| \;\; \forall\, t' \le t \le H(p_X) - R \} \tag{3.12}$$

which may depend on $R$ and $p_X$ via the difference $H(p_X) - R$. From this definition we have the following properties:

• $|F(t)|^+$ is constant for all $t \le t_F$;
• $F(t) \ge 0$ for $t \ge t_F$.

We have $t_F = 0$ if $F(t)$ is chosen as in (4.5), or if $F(t) = \Delta + \lambda |t|^+$. If $F$ has a zero-crossing, $t_F$ is that zero-crossing. For instance, $t_F = -\Delta/\lambda$ if $F(t) = \Delta + \lambda t$. Or $t_F = \min\{\Delta, H(p_X) - R\}$ if $F(t) = a|t - \Delta|^+$. The supremum in (3.12) always exists.

4 Random-Coding and Sphere-Packing Exponents

The sphere packing exponent for channel $p_{Y|X}$ is defined as

$$E_{sp}(R, p_X, p_{Y|X}) \triangleq \min_{\tilde{p}_{Y|X} \,:\, I(p_X, \tilde{p}_{Y|X}) \le R} D(\tilde{p}_{Y|X} \| p_{Y|X} | p_X) \tag{4.1}$$

and as $\infty$ if the minimization above is over an empty set. The function $E_{sp}(R, p_X, p_{Y|X})$ is convex, nonincreasing, and differentiable in $R$, and continuous in $p_{Y|X}$.

The sphere packing exponent for class $\mathcal{W}$ is defined as

$$E_{sp}(R, p_X, \mathcal{W}) \triangleq \min_{p_{Y|X} \in \mathcal{W}} E_{sp}(R, p_X, p_{Y|X}). \tag{4.2}$$

The function $E_{sp}(R, p_X, \mathcal{W})$ is differentiable in $R$ because $\mathcal{W}$ is a connected set. In some cases, $E_{sp}(R, p_X, \mathcal{W})$ is convex in $R$, e.g., when the same $p_{Y|X}$ achieves the minimum in (4.2) at all rates. Denote by $R_\infty(p_X, \mathcal{W})$ the infimum of the rates $R$ such that $E_{sp}(R, p_X, \mathcal{W}) < \infty$, and by $I(p_X, \mathcal{W}) = \min_{p_{Y|X} \in \mathcal{W}} I(p_X, p_{Y|X})$ the supremum of $R$ such that $E_{sp}(R, p_X, \mathcal{W}) > 0$.

The modified random coding exponent for channel $p_{Y|X}$ and for class $\mathcal{W}$ are respectively defined as

$$E_{r,F}(R, p_X, p_{Y|X}) \triangleq \min_{\tilde{p}_{Y|X}} [ D(\tilde{p}_{Y|X} \| p_{Y|X} | p_X) + F(I(p_X, \tilde{p}_{Y|X}) - R) ] \tag{4.3}$$

and

$E_{r,F}(R, p_X, \mathcal{W}) \triangleq \min_{p_{Y|X} \in \mathcal{W}} E_{r,F}(R, p_X, p_{Y|X}).$
(4.4)

When $F(t) = |t|^+$, (4.3) is just the usual random coding exponent. It can be verified that (4.3) is a continuous functional of $F$.

Define the function

$$F_{R,p_X,\mathcal{W}}(t) \triangleq E_{sp}(R, p_X, \mathcal{W}) - E_{sp}(R + t, p_X, \mathcal{W}) \tag{4.5}$$

which is depicted in Fig. 1 for a BSC example to be analyzed in Sec. 7. This function is increasing for $R_\infty(p_X, \mathcal{W}) - R \le t \le I(p_X, \mathcal{W}) - R$ and satisfies the following properties:

$$\begin{aligned} F_{R,p_X,\mathcal{W}}(0) &= 0 \\ F'_{R,p_X,\mathcal{W}}(t) &= -E'_{sp}(R + t, p_X, \mathcal{W}) \\ E_{sp}(R', p_X, \mathcal{W}) + F_{R,p_X,\mathcal{W}}(R' - R) &\equiv E_{sp}(R, p_X, \mathcal{W}). \end{aligned} \tag{4.6}$$

If $E_{sp}(R, p_X, \mathcal{W})$ is convex in $R$, then $F_{R,p_X,\mathcal{W}}(t)$ is concave in $t$.

Proposition 4.1 The modified random coding exponent $E_{r,F}(R, p_X, p_{Y|X})$ satisfies the following properties.

(i) $E_{r,F}(R, p_X, p_{Y|X})$ is nonincreasing in $R$.
(ii) If $F \preceq G$, then $E_{r,F}(R, p_X, p_{Y|X}) \le E_{r,G}(R, p_X, p_{Y|X})$.
(iii) $E_{r,F}(R, p_X, p_{Y|X})$ is related to the sphere packing exponent as follows:

$$E_{r,F}(R, p_X, p_{Y|X}) = \min_{R'} [ E_{sp}(R', p_X, p_{Y|X}) + F(R' - R) ]. \tag{4.7}$$

(iv) The above properties hold with $\mathcal{W}$ in place of $p_{Y|X}$ in the arguments of the functions $E_{r,F}$ and $E_{sp}$.

[Figure 1: Function $F_{R,p_X,\mathcal{W}}(\cdot - R)$ when $R = 0.1$, $\mathcal{W}$ is the family of BSC's with crossover probability $\rho \le 0.1$ (capacity $C(\rho) \ge 0.53$), and $p_X$ is the uniform p.m.f. over $\{0, 1\}$. Horizontal axis: rate $R'$; vertical axis: $E_{sp}(R')$. The plot shows the curves $E_{sp}(R')$ and $F_R(R' - R)$ and marks the rates $R_{cr}$, $R$, and $R_{conj}$.]

The proof of these properties is given in the appendix. Part (iii) is a variation on Lemma 5.4 and its corollary in [1, p. 168]. Also note that while $E_{r,F}(R, p_X, p_{Y|X})$ is convex in $R$ for some choices of $F$, including (3.2), that property does not extend to arbitrary $F$.
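To get a numerical feel for (4.1), (4.5), and (4.7), the following Python sketch evaluates them for a single BSC with crossover probability $\rho$ and uniform $p_X$. It relies on an assumption that is not derived in this section: for that channel the minimizer in (4.1) is itself a BSC, which gives the standard closed form $E_{sp}(R) = D(\delta \| \rho)$ with $\delta \le 1/2$ solving $1 - h(\delta) = R$, and $E_{sp}(R) = 0$ for $R \ge C = 1 - h(\rho)$. All function names (`h2`, `kl2`, `delta_of_rate`, `e_sp`, `f_R`, `e_rF`) are hypothetical, and the minimization over $R'$ in (4.7) is approximated on a finite grid over $[0, 1]$.

```python
from math import log2


def h2(d):
    """Binary entropy function in bits."""
    return 0.0 if d in (0.0, 1.0) else -d * log2(d) - (1 - d) * log2(1 - d)


def kl2(a, b):
    """Binary Kullback-Leibler divergence D(a || b) in bits, for 0 < b < 1."""
    out = 0.0
    if a > 0:
        out += a * log2(a / b)
    if a < 1:
        out += (1 - a) * log2((1 - a) / (1 - b))
    return out


def delta_of_rate(R, tol=1e-12):
    """Solve 1 - h2(d) = R for d in [0, 1/2] by bisection (1 - h2 is decreasing there)."""
    lo, hi = 0.0, 0.5
    while hi - lo > tol:
        mid = (lo + hi) / 2
        if 1 - h2(mid) > R:
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2


def e_sp(R, rho):
    """Sphere-packing exponent of BSC(rho), uniform input (assumed closed form)."""
    if R >= 1 - h2(rho):
        return 0.0
    if R < 0:
        return float("inf")  # empty constraint set in (4.1)
    return kl2(delta_of_rate(R), rho)


def f_R(t, R, rho):
    """The function of (4.5): E_sp(R) - E_sp(R + t)."""
    return e_sp(R, rho) - e_sp(R + t, rho)


def e_rF(R, rho, F, grid=2000):
    """Grid approximation of (4.7): min over R' in [0, 1] of E_sp(R') + F(R' - R)."""
    return min(e_sp(k / grid, rho) + F(k / grid - R) for k in range(grid + 1))
```

With `rho = 0.1` the capacity `1 - h2(rho)` is about 0.53, consistent with the caption of Fig. 1. Passing `F = lambda t: max(0.0, t)` to `e_rF` approximates the ordinary random coding exponent, and a constant `F` returns that constant, since $E_{sp}(R') = 0$ for $R'$ at or above capacity.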
Proposition 4.2 The incorrect-message and erasure exponents under the decision rule (3.1) are respectively given by

$$E_i(R, p_X, p_{Y|X}, F) = E_{r,F}(R, p_X, p_{Y|X}), \tag{4.8}$$

$$E_\emptyset(R, p_X, p_{Y|X}, F) = E_{r,|F^{-1}|^+}(R, p_X, p_{Y|X}). \tag{4.9}$$

Proof: see appendix.

If $F(t) = |t|^+$, then $|F^{-1}(t)|^+ = |t|^+$, and both (4.8) and (4.9) reduce to the ordinary random-coding exponent.

If the channel is not reliable enough in the sense that $I(p_X, p_{Y|X}) \le R + F(0)$, then $F^{-1}(I(p_X, p_{Y|X}) - R) \le F^{-1}(F(0)) = 0$, and from (4.9) we obtain $E_\emptyset(R, p_X, p_{Y|X}, F) = 0$ because the minimizing $\tilde{p}_{Y|X}$ in the expression for $E_{r,|F^{-1}|^+}$ is equal to $p_{Y|X}$. Checking (3.1), a heuristic explanation for the zero erasure exponent is that $I(\mathbf{x}(m); \mathbf{y}) \approx I(p_X, p_{Y|X})$ with high probability when $m$ is the transmitted message, and $\max_{i \ne m} I(\mathbf{x}(i); \mathbf{y}) \approx R$ (obtained using (B.4) with $\nu = R$ and the union bound).

5 W-Optimal Choice of F

In this section, we view the incorrect-message and erasure exponents as functionals of $|F|^+$ and examine optimal tradeoffs between them. It is instructive to first consider the one-parameter family

$$F(t) \equiv \Delta \ge 0, \tag{5.1}$$

which corresponds to a thresholding rule in (3.1). Using (4.7) it is easily verified that

$$E_i(R, p_X, p_{Y|X}, F) = E_{r,F}(R, p_X, p_{Y|X}) \equiv \Delta, \tag{5.2}$$

$$E_\emptyset(R, p_X, p_{Y|X}, F) \equiv E_{sp}(R + \Delta, p_X, p_{Y|X}). \tag{5.3}$$

Two extreme choices for $\Delta$ are 0 and $I(p_X, \mathcal{W}) - R$ because in each case one error exponent is zero and the other one is positive. One would expect that better tradeoffs can be achieved using a broader class of functions $F$, though.

Recalling (3.9) and (3.10) and using (4.8) and (4.9), we seek the solution to the following two asymptotic Neyman-Pearson optimization problems.
For list decoding, find

$$E^L_\emptyset(R, \mathcal{W}, \alpha) = \max_{p_X} E^L_\emptyset(R, p_X, \mathcal{W}, \alpha) \tag{5.4}$$

where the cost function

$$E^L_\emptyset(R, p_X, \mathcal{W}, \alpha) = \sup_{F \in \mathcal{F}^L(R, p_X, \mathcal{W}, \alpha)} E_{r,|F^{-1}|^+}(R, p_X, \mathcal{W}), \tag{5.5}$$

and where the feasible set $\mathcal{F}^L(R, p_X, \mathcal{W}, \alpha)$ is the set of continuous, increasing functions $F$ that yield an incorrect-message exponent at least equal to $\alpha$:

$$E_i(R, p_X, \mathcal{W}, F) = E_{r,F}(R, p_X, \mathcal{W}) \ge \alpha. \tag{5.6}$$

For classical decoding (list size $\le 1$), find

$$E_\emptyset(R, \mathcal{W}, \alpha) = \max_{p_X} E_\emptyset(R, p_X, \mathcal{W}, \alpha) \tag{5.7}$$

where

$$E_\emptyset(R, p_X, \mathcal{W}, \alpha) = \sup_{F \in \mathcal{F}(R, p_X, \mathcal{W}, \alpha)} E_{r,|F^{-1}|^+}(R, p_X, \mathcal{W}) \tag{5.8}$$

and $\mathcal{F}(R, p_X, \mathcal{W}, \alpha)$ is the subset of functions in $\mathcal{F}^L(R, p_X, \mathcal{W}, \alpha)$ that satisfy $F(t) \ge t$, i.e., the decoder outputs at most one message. Since $\mathcal{F}(R, p_X, \mathcal{W}, \alpha) \subset \mathcal{F}^L(R, p_X, \mathcal{W}, \alpha)$, we have

$$E_\emptyset(R, p_X, \mathcal{W}, \alpha) \le E^L_\emptyset(R, p_X, \mathcal{W}, \alpha).$$

The sequel of this paper focuses on the class of variable-size list decoders associated with $\mathcal{F}^L$ because the error exponent tradeoffs are at least as good as those associated with $\mathcal{F}$, and the corresponding error exponents take a more concise form.

Define the critical rate $R_{cr}(p_X, \mathcal{W})$ as the rate at which the derivative $E'_{sp}(\cdot, p_X, \mathcal{W}) = -1$, and

$$\Delta \triangleq \alpha - E_{sp}(R, p_X, \mathcal{W}). \tag{5.9}$$

Two rates $R_1$ and $R_2$ are said to be conjugate given $p_X$ and $\mathcal{W}$ if the corresponding slopes of $E_{sp}(\cdot, p_X, \mathcal{W})$ are inverse of each other:

$$E'_{sp}(R_1, p_X, \mathcal{W}) = \frac{1}{E'_{sp}(R_2, p_X, \mathcal{W})}. \tag{5.10}$$

The difference $d$ between two conjugate rates uniquely specifies them. We denote the smaller one by $R_1(d)$ and the larger one by $R_2(d)$, irrespective of the sign of $d$. Hence $R_1(d) \le R_{cr}(p_X, \mathcal{W}) \le R_2(d)$, with equality when $d = 0$. We also denote by $R_{conj}(p_X, \mathcal{W})$ the conjugate rate of $R$, as defined by (5.10). The conjugate rate always exists when $R$ is below the critical rate $R_{cr}(p_X, \mathcal{W})$.
If $R$ is above the critical rate and sufficiently large, $R_{conj}(p_X, \mathcal{W})$ may not exist. Instead of treating this case separately, we note that this case will be irrelevant because the conjugate rate always appears via the expression $\max\{R, R_{conj}(p_X, \mathcal{W})\}$, which is equal to $R$ if $R > R_{cr}(p_X, \mathcal{W})$, and therefore this expression is always well defined.

The proofs of Prop. 5.1 and 5.3 and Lemma 5.4 below may be found in the appendix; recall $F_{R,p_X,\mathcal{W}}(t)$ was defined in (4.5). The proof of Prop. 5.5 parallels that of Prop. 5.1(ii) and is therefore omitted.

Proposition 5.1 The suprema in (5.5) and (5.8) are respectively achieved by

$$F^{L*}(t) = F_{R,p_X,\mathcal{W}}(t) + \Delta \tag{5.11}$$

$$\phantom{F^{L*}(t)} = \alpha - E_{sp}(R + t, p_X, \mathcal{W}) \tag{5.12}$$

and

$$F^*(t) = \max(t, F^{L*}(t)). \tag{5.13}$$

The resulting incorrect-message exponent is given by $E_i(R, p_X, \mathcal{W}) = \alpha$. The optimal solution is nonunique. In particular, for $t \le 0$, one can replace $F^{L*}(t)$ by the constant $F^{L*}(0)$ without effect on the error exponents.

The proof of Prop. 5.1 is quite simple and can be separated from the calculation of the error exponents. The main idea is as follows. If $G \preceq F$, we have $E_{r,G}(R, p_X, \mathcal{W}) \le E_{r,F}(R, p_X, \mathcal{W})$. Since $G^{-1} \succeq F^{-1}$, we also have $E_{r,G^{-1}}(R, p_X, \mathcal{W}) \ge E_{r,F^{-1}}(R, p_X, \mathcal{W})$. Therefore we seek $F^* \in \mathcal{F}^L(R, p_X, \mathcal{W}, \alpha)$ such that $F^* \preceq F$ for all $F \in \mathcal{F}^L(R, p_X, \mathcal{W}, \alpha)$. Such $F^*$, assuming it exists, necessarily achieves $E^L_\emptyset(R, p_X, \mathcal{W}, \alpha)$. The same procedure applies to $\mathcal{F}(R, p_X, \mathcal{W}, \alpha)$.

Corollary 5.2 If $R \ge I(p_X, \mathcal{W})$, the thresholding rule $F^{L*}(t) \equiv \Delta$ of (5.1) is optimal, and the optimal error exponents are $E_i(R, p_X, \mathcal{W}, \alpha) = \Delta$ and $E^L_\emptyset(R, p_X, \mathcal{W}, \alpha) = 0$.

Proof. Since $R \ge I(p_X, \mathcal{W})$, we have $E_{sp}(R, p_X, \mathcal{W}) = 0$. Hence from (4.5), $F_{R,p_X,\mathcal{W}}(t) \equiv 0$ for all $t \ge 0$.
Substituting into (5.11) proves the optimality of the thresholding rule (5.1). The corresponding error exponents are obtained by minimizing (5.2) and (5.3) over $p_{Y|X} \in \mathcal{W}$. ✷

The case $R < I(p_X, \mathcal{W})$ is addressed next.

Proposition 5.3 If $E_{sp}(R, p_X, \mathcal{W})$ is convex in $R$, the optimal incorrect-message and erasure exponents are related as follows.

(i) For $|R_{conj}(p_X, \mathcal{W}) - R|^+ \le \Delta \le I(p_X, \mathcal{W}) - R$, we have

$$\begin{aligned} E_i(R, p_X, \mathcal{W}, \alpha) &= E_{sp}(R, p_X, \mathcal{W}) + \Delta = \alpha \\ E^L_\emptyset(R, p_X, \mathcal{W}, \alpha) &= E_{sp}(R + \Delta, p_X, \mathcal{W}). \end{aligned} \tag{5.14}$$

(ii) The above exponents are also achieved using the penalty function $F(t) = \Delta + \lambda |t|^+$ with

$$-E'_{sp}(R, p_X, \mathcal{W}) \le \lambda \le \frac{1}{-E'_{sp}(R + \Delta, p_X, \mathcal{W})}. \tag{5.15}$$

(iii) If $R \le R_{cr}(p_X, \mathcal{W})$ and $0 \le \Delta \le R_{conj}(p_X, \mathcal{W}) - R$, we have

$$\begin{aligned} E_i(R, p_X, \mathcal{W}, \alpha) &= E_{sp}(R, p_X, \mathcal{W}) + \Delta = \alpha \\ E^L_\emptyset(R, p_X, \mathcal{W}, \alpha) &= E_{sp}(R_2(\Delta), p_X, \mathcal{W}) + F^{-1}_{R,p_X,\mathcal{W}}(R_1(\Delta) - R). \end{aligned} \tag{5.16}$$

(iv) If $R \le R_{cr}(p_X, \mathcal{W})$ and $R_\infty(p_X, \mathcal{W}) - R \le \Delta \le 0$, we have

$$\begin{aligned} E_i(R, p_X, \mathcal{W}, \alpha) &= E_{sp}(R, p_X, \mathcal{W}) + \Delta = \alpha \\ E^L_\emptyset(R, p_X, \mathcal{W}, \alpha) &= E_{sp}(R_1(\Delta), p_X, \mathcal{W}) + F^{-1}_{R,p_X,\mathcal{W}}(R_2(\Delta) - R). \end{aligned} \tag{5.17}$$

Part (ii) of the proposition implies that not only is the optimal $F$ nonunique under the combinations of $(R, p_X, \mathcal{W}, \alpha)$ of Part (i), but also the Csiszár-Körner rule (2.9) is optimal for any $(\Delta, \lambda)$ in a certain range of values.

Also, while Prop. 5.3 provides simple expressions for the worst-case error exponents over $\mathcal{W}$, the exponents for any specific channel $p_{Y|X} \in \mathcal{W}$ are obtained by substituting the function (5.12) and its inverse, respectively, into the minimization problem of (4.7). This problem does not generally admit a simple expression.

This leads us back to the question asked at the end of Sec. 2, namely, when does the decoder pay no penalty for not knowing $p_{Y|X}$?
Defining

$$\underline{E}'_{sp}(R,p_X,\mathcal{W}) \triangleq \min_{p_{Y|X}\in\mathcal{W}} E'_{sp}(R,p_X,p_{Y|X}), \tag{5.18}$$

and

$$\overline{R}_{conj}(p_X,\mathcal{W}) \triangleq \max_{p_{Y|X}\in\mathcal{W}} R_{conj}(p_X,p_{Y|X}) \tag{5.19}$$

we have the following lemma, whose proof appears in the appendix.

Lemma 5.4

$$E'_{sp}(R,p_X,\mathcal{W}) \ge \underline{E}'_{sp}(R,p_X,\mathcal{W}), \tag{5.20}$$
$$\overline{R}_{conj}(p_X,\mathcal{W}) \ge R_{conj}(p_X,\mathcal{W}) \tag{5.21}$$

with equality if the same $p_{Y|X}$ minimizes $E_{sp}(R,p_X,p_{Y|X})$ at all rates.

Proposition 5.5 Assume that $R$, $p_X$, $\mathcal{W}$, $\Delta$ and $\lambda$ are such that

$$|\overline{R}_{conj}(p_X,\mathcal{W}) - R|^+ \le \Delta \le I(p_X,\mathcal{W}) - R, \tag{5.22}$$
$$-\underline{E}'_{sp}(R,p_X,\mathcal{W}) \le \lambda \le \frac{1}{-\underline{E}'_{sp}(R+\Delta,p_X,\mathcal{W})}. \tag{5.23}$$

Then the pair of incorrect-message and erasure exponents

$$\{E_{sp}(R,p_X,p_{Y|X}) + \Delta,\; E_{sp}(R+\Delta,p_X,p_{Y|X})\} \tag{5.24}$$

is universally attainable over $p_{Y|X} \in \mathcal{W}$ using the penalty function $F(t) = \Delta + \lambda|t|^+$, and equality holds in the erasure-exponent game of (3.11).

Proof. From (5.19) and (5.22), we have

$$|R_{conj}(p_X,p_{Y|X}) - R|^+ \le \Delta \le I(p_X,p_{Y|X}) - R, \quad \forall p_{Y|X} \in \mathcal{W}.$$

Similarly, from (5.18) and (5.23), we have

$$-E'_{sp}(R,p_X,p_{Y|X}) \le \lambda \le \frac{1}{-E'_{sp}(R+\Delta,p_X,p_{Y|X})}, \quad \forall p_{Y|X} \in \mathcal{W}.$$

Then applying Prop. 5.3(ii) with the singleton $\{p_{Y|X}\}$ in place of $\mathcal{W}$ proves the claim. $\square$

The set of $(\Delta,\lambda)$ defined by (5.22), (5.23) is smaller than that of Prop. 5.3(i) but is not empty because $E'_{sp}(R+\Delta,p_X,\mathcal{W})$ tends to zero as $\Delta$ approaches the upper limit $I(p_X,\mathcal{W}) - R$. Thus the universal exponents in (5.24) hold at least in the small erasure-exponent regime (where $E_{sp}(R+\Delta,p_X,p_{Y|X}) \to 0$) and coincide with those derived by Forney [3, Theorem 3(a)] for symmetric channels, using MAP decoding. For symmetric channels, the same input distribution $p_X$ is optimal at all rates.$^1$ Our rates are identical to his, i.e., the same optimal error exponents are achieved without knowledge of the channel.
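To illustrate how a penalty function of the form $F(t) = \Delta + \lambda|t|^+$ enters the decision, the following Python sketch applies the list condition stated in Appendix B (codeword $i$ is retained if $I(\mathbf{x}(i);\mathbf{y}) \ge R + \max_{j\ne i} F(I(\mathbf{x}(j);\mathbf{y}) - R)$) to a vector of empirical mutual information scores. The function names, the assumption that the scores are already computed, and the numerical values are illustrative only and do not appear in the paper.

```python
def penalty(t, delta, lam):
    """Penalty F(t) = delta + lam * |t|^+ (positive part of t)."""
    return delta + lam * max(t, 0.0)


def f_mmi_decide(scores, rate, delta, lam):
    """Return the indices of the codewords retained by the list condition.

    scores[i] is assumed to hold the empirical mutual information
    I(x(i); y) between codeword i and the received sequence
    (hypothetical precomputed input). An empty list plays the role
    of an erasure.
    """
    if len(scores) < 2:
        raise ValueError("need at least two codewords")
    retained = []
    for i, s_i in enumerate(scores):
        # Largest penalized score among the competing codewords j != i.
        worst = max(penalty(s_j - rate, delta, lam)
                    for j, s_j in enumerate(scores) if j != i)
        if s_i >= rate + worst:
            retained.append(i)
    return retained


if __name__ == "__main__":
    R, DELTA = 0.1, 0.05
    print(f_mmi_decide([0.50, 0.12, 0.08], R, DELTA, lam=1.0))  # clear winner
    print(f_mmi_decide([0.20, 0.18, 0.05], R, DELTA, lam=1.0))  # close scores
    print(f_mmi_decide([0.30, 0.28], R, 0.0, lam=0.2))          # weak penalty
```

In this toy setting, a penalty with $\Delta > 0$ and $\lambda \ge 1$ satisfies $F(t) > t$, so at most one codeword can be retained; a weaker penalty can return a list with several entries.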
$^1$ Forney also studied the case $E_\emptyset(R) > E_i(R)$, which is not covered by our analysis.

6 Relative Minimax

When the compound class $\mathcal{W}$ is so large that $I(p_X,\mathcal{W}) \le R$, we have seen from Corollary 5.2 that the simple thresholding rule $F(t) \equiv \Delta$ is optimal. Even if $I(p_X,\mathcal{W}) > R$, our minimax criterion (which seeks the worst-case error exponents over the class $\mathcal{W}$) for designing $F$ might be a pessimistic one. This drawback can be alleviated to some extent using a relative minimax principle; see [10] and references therein. Our proposed approach is to define two functionals $\alpha(p_{Y|X})$ and $\beta(p_{Y|X})$ and the relative error exponents

$$\begin{aligned} \Delta_\alpha E_i(R,p_X,p_{Y|X},F) &\triangleq E_i(R,p_X,p_{Y|X},F) - \alpha(p_{Y|X}), \\ \Delta_\beta E_\emptyset(R,p_X,p_{Y|X},F) &\triangleq E_\emptyset(R,p_X,p_{Y|X},F) - \beta(p_{Y|X}). \end{aligned}$$

Then solve the constrained optimization problem of (3.9) with the above functionals in place of $E_i(R,p_X,p_{Y|X},F) - \alpha$ and $E_\emptyset(R,p_X,p_{Y|X},F)$. It is reasonable to choose $\alpha(p_{Y|X})$ and $\beta(p_{Y|X})$ large for "good channels" and small for very noisy channels. While $\alpha(p_{Y|X})$ and $\beta(p_{Y|X})$ could be the error exponents associated with some reference test, this is not a requirement. A possible choice is

$$\begin{aligned} \alpha(p_{Y|X}) &= \Delta, \\ \beta(p_{Y|X}) &= E_{sp}(R+\Delta,p_X,p_{Y|X}), \end{aligned}$$

which are the error exponents (5.2) and (5.3) corresponding to the thresholding rule $F(t) \equiv \Delta$. Another choice is

$$\alpha(p_{Y|X}) = E_{sp}(R,p_X,p_{Y|X}) + \Delta \tag{6.1}$$
$$\beta(p_{Y|X}) = E_{sp}(R+\Delta,p_X,p_{Y|X}) \tag{6.2}$$

which are the "ideal" Forney exponents, achievable under the assumptions of Prop. 5.5(i).

The relative minimax problem is a simple extension of the minimax problem solved earlier.
Define the following functions:

$$\Delta_\alpha E_{r,F}(R,p_X,\mathcal{W}) = \min_{p_{Y|X}\in\mathcal{W}} [E_{r,F}(R,p_X,p_{Y|X}) - \alpha(p_{Y|X})], \tag{6.3}$$
$$\Delta_\alpha E_{sp}(R,p_X,\mathcal{W}) = \min_{p_{Y|X}\in\mathcal{W}} [E_{sp}(R,p_X,p_{Y|X}) - \alpha(p_{Y|X})], \tag{6.4}$$
$$F_{R,p_X,\mathcal{W},\alpha}(t) = \Delta_\alpha E_{sp}(R,p_X,\mathcal{W}) - \Delta_\alpha E_{sp}(R+t,p_X,\mathcal{W}). \tag{6.5}$$

The function $F_{R,p_X,\mathcal{W},\alpha}(t)$ of (6.5) is increasing and satisfies $F_{R,p_X,\mathcal{W},\alpha}(0) = 0$. The above functions $\Delta_\alpha E_{r,F}$ and $\Delta_\alpha E_{sp}$ satisfy the following relationship:

$$\begin{aligned} \Delta_\alpha E_{r,F}(R,p_X,\mathcal{W}) &\overset{(a)}{=} \min_{p_{Y|X}\in\mathcal{W}} \Big\{ \min_{R'} [E_{sp}(R',p_X,p_{Y|X}) + F(R'-R)] - \alpha(p_{Y|X}) \Big\} \\ &= \min_{R'} \Big\{ \min_{p_{Y|X}\in\mathcal{W}} [E_{sp}(R',p_X,p_{Y|X}) - \alpha(p_{Y|X})] + F(R'-R) \Big\} \\ &\overset{(b)}{=} \min_{R'} [\Delta_\alpha E_{sp}(R',p_X,\mathcal{W}) + F(R'-R)] \end{aligned} \tag{6.6}$$

where (a) is obtained from (4.7) and (6.3), and (b) from (6.4). Equation (6.6) is of the same form as (4.7), with $\Delta_\alpha E_{r,F}$ and $\Delta_\alpha E_{sp}$ in place of $E_{r,F} - \alpha$ and $E_{sp} - \alpha$, respectively.

Analogously to (5.4), (5.5), and (5.6), the relative minimax for variable-size decoders is given by

$$\Delta_\beta E^L_\emptyset(R,\mathcal{W},\alpha) = \max_{p_X} \sup_{F \in \mathcal{F}^L(R,p_X,\mathcal{W},\alpha)} \Delta_\beta E_{r,|F^{-1}|^+}(R,p_X,\mathcal{W}) \tag{6.7}$$

where the feasible set $\mathcal{F}^L(R,p_X,\mathcal{W},\alpha)$ is the set of functions $F$ that satisfy $\Delta_\alpha E_{r,F}(R,p_X,\mathcal{W}) \ge 0$ as well as the previous continuity and monotonicity conditions. The following proposition is analogous to Prop. 5.1.

Proposition 6.1 The supremum over $F$ in (6.7) is achieved by

$$F^{L*}(t) = F_{R,p_X,\mathcal{W},\alpha}(t) - \Delta_\alpha E_{sp}(R,p_X,\mathcal{W}) = -\Delta_\alpha E_{sp}(R+t,p_X,\mathcal{W}), \tag{6.8}$$

independently of the choice of $\beta$. The relative minimax is given by

$$\Delta_\beta E^L_\emptyset(R,\mathcal{W},\alpha) = \max_{p_X} \Delta_\beta E_{r,|(F^{L*})^{-1}|^+}(R,p_X,\mathcal{W}).$$

Proof: The proof exploits the same monotonicity property ($E_{r,F} \le E_{r,G}$ for $F \preceq G$) that was used to derive the optimal $F$ in (5.12). The supremum over $F$ is obtained by following the steps of the proof of Prop.
5.1, substituting $\Delta_\alpha E_{r,F}$, $\Delta_\alpha E_{sp}$, and $F_{R,p_X,\mathcal{W},\alpha}$ for $E_{r,F} - \alpha$, $E_{sp} - \alpha$, and $F_{R,p_X,\mathcal{W}}$, respectively. The relative minimax is obtained by substituting the optimal $F$ into (6.7). $\square$

We would like to know how much influence the reference function $\alpha$ has on the optimal $F$. For the "Forney reference exponent function" $\alpha$ of (6.1), we obtain the optimal $F$ from (6.8) and (6.4):

$$\begin{aligned} F^{L*}(t) &= -\min_{p_{Y|X}\in\mathcal{W}} [E_{sp}(R+t,p_X,p_{Y|X}) - \alpha(p_{Y|X})] \\ &= -\min_{p_{Y|X}\in\mathcal{W}} [E_{sp}(R+t,p_X,p_{Y|X}) - E_{sp}(R,p_X,p_{Y|X}) - \Delta] \\ &= \Delta + \max_{p_{Y|X}\in\mathcal{W}} [E_{sp}(R,p_X,p_{Y|X}) - E_{sp}(R+t,p_X,p_{Y|X})] \\ &= \Delta + \max_{p_{Y|X}\in\mathcal{W}} F_{R,p_X,p_{Y|X}}(t). \end{aligned} \tag{6.9}$$

Interestingly, the maximum above is often achieved by the cleanest channel in $\mathcal{W}$, for which $E_{sp}(R,p_X,p_{Y|X})$ is large and $E_{sp}(R+t,p_X,p_{Y|X})$ falls off rapidly as $t$ increases. This stands in contrast to (5.12), which may be written as

$$\begin{aligned} F^{L*}(t) &= \alpha - \min_{p_{Y|X}\in\mathcal{W}} E_{sp}(R+t,p_X,p_{Y|X}) \\ &= \Delta + \min_{p_{Y|X}\in\mathcal{W}} E_{sp}(R,p_X,p_{Y|X}) - \min_{p_{Y|X}\in\mathcal{W}} E_{sp}(R+t,p_X,p_{Y|X}). \end{aligned} \tag{6.10}$$

In (6.10), the minima are achieved by the noisiest channel at rates $R$ and $R+t$, respectively. Also note that $F^{L*}(t)$ from (6.9) is uniformly larger than $F^{L*}(t)$ from (6.10) and thus results in larger incorrect-message exponents.

For $R > I(p_X,\mathcal{W})$, Corollary 5.2 has shown that the minimax criterion is maximized by the thresholding rule $F(t) = \Delta$, which yields $E_i(R,p_X,p_{Y|X}) = \Delta$ and $E_\emptyset(R,p_X,p_{Y|X}) = E_{sp}(R+\Delta,p_X,p_{Y|X})$ for all $p_{Y|X}$. The relative minimax criterion based on $\alpha(p_{Y|X})$ of (6.1) yields a higher $E_i(R,p_X,p_{Y|X})$ for good channels, and this is counterbalanced by a lower $E_\emptyset(R,p_X,p_{Y|X})$.
Thus the primary advantage of the relative minimax approach is that $\alpha(p_{Y|X})$ can be chosen to more finely balance the error exponents across the range of channels of interest.

7 Compound Binary Symmetric Channel

We have evaluated the incorrect-message and erasure exponents of (5.24) for the compound BSC with crossover probability $\rho \in [\rho_{\min}, \rho_{\max}]$, where $0 < \rho_{\min} < \rho_{\max} \le \frac{1}{2}$. The class $\mathcal{W}$ may be identified with the interval $[\rho_{\min}, \rho_{\max}]$, where $\rho_{\min}$ and $\rho_{\max}$ correspond to the cleanest and noisiest channels in $\mathcal{W}$, respectively. Denote by $h_2(\rho) \triangleq -\rho\log\rho - (1-\rho)\log(1-\rho)$ the binary entropy function, by $h_2^{-1}(\cdot)$ the inverse of that function over the range $[0,\frac{1}{2}]$, and by $p_\rho$ the Bernoulli p.m.f. with parameter $\rho$.

Capacity of the BSC is given by $C(\rho) = 1 - h_2(\rho)$, and the sphere-packing exponent by [1, p. 195]

$$E_{sp}(R,\rho) = D(p_{\rho_R}\|p_\rho) = \rho_R \log\frac{\rho_R}{\rho} + (1-\rho_R)\log\frac{1-\rho_R}{1-\rho}, \quad 0 \le R \le C(\rho), \tag{7.1}$$

where $\rho_R = h_2^{-1}(1-R) \ge \rho$. The optimal input distribution $p_X$ is uniform at all rates and will be omitted from the list of arguments of the functions $E_{r,F}$, $E_{sp}$, and $F_R$ below. The critical rate is

$$R_{cr}(\rho) = 1 - h_2\left(\frac{1}{1+\sqrt{1/\rho - 1}}\right),$$

and $E_{sp}(0,\rho) = -\log\sqrt{4\rho(1-\rho)}$.

The capacity and sphere-packing exponent for the compound BSC are respectively given by $C(\mathcal{W}) = C(\rho_{\max})$ and

$$E_{sp}(R,\mathcal{W}) = \min_{\rho_{\min}\le\rho\le\rho_{\max}} E_{sp}(R,\rho) = E_{sp}(R,\rho_{\max}). \tag{7.2}$$

For $R \ge C(\mathcal{W})$, the optimal $F$ is the thresholding rule of (5.1), and (5.2) and (5.3) yield

$$E_i(R,\rho) = \Delta \quad \text{and} \quad E_\emptyset(R,\rho) = E_{sp}(R+\Delta,\rho).$$

In the remainder of this section we consider the case $R < C(\mathcal{W})$, in which case $E_{sp}(R,\mathcal{W}) > 0$.

Optimal $F$. For any $0 \le \Delta \le C(\rho) - R$, we have $\rho \le \rho_{R+\Delta} \le \rho_R \le \frac{1}{2}$.
From (7.1) we have

$$\begin{aligned} F_{R,\rho}(t) &= E_{sp}(R,\rho) - E_{sp}(R+t,\rho) \\ &= D(p_{\rho_R}\|p_\rho) - D(p_{\rho_{R+t}}\|p_\rho) \\ &= h_2(\rho_{R+t}) - h_2(\rho_R) + (\rho_R - \rho_{R+t})\left[\log\frac{1}{\rho} - \log\frac{1}{1-\rho}\right] \\ &= h_2(\rho_{R+t}) - h_2(\rho_R) + (\rho_R - \rho_{R+t})\log\left(\frac{1}{\rho} - 1\right), \quad t \ge 0, \end{aligned}$$

which is a decreasing function of $\rho$. Evaluating the optimal $F$ from (5.12), we have

$$\begin{aligned} F^{L*}(t) &= \Delta + F_{R,\mathcal{W}}(t) \\ &= \Delta + E_{sp}(R,\mathcal{W}) - E_{sp}(R+t,\mathcal{W}) \\ &= \Delta + E_{sp}(R,\rho_{\max}) - E_{sp}(R+t,\rho_{\max}) \\ &= \Delta + F_{R,\rho_{\max}}(t), \quad t \ge 0. \end{aligned}$$

Observe that the optimal $F$ is determined by the noisiest channel ($\rho_{\max}$) and does not depend at all on $\rho_{\min}$. This contrasts with the relative minimax criterion with $\alpha(p_{Y|X})$ of (6.1), where evaluation of the optimal $F$ from (6.9) yields

$$F^{L*}(t) = \Delta + \max_{\rho_{\min}\le\rho\le\rho_{\max}} F_{R,\rho}(t) = \Delta + F_{R,\rho_{\min}}(t), \quad t \ge 0,$$

which is determined by the cleanest channel ($\rho_{\min}$) and does not depend on $\rho_{\max}$.

Optimal Error Exponents. The derivations below are simplified if instead of working with the crossover probability $\rho$, we use the following reparameterization:

$$\mu \triangleq \rho^{-1} - 1, \quad \mu_{\max} \triangleq \rho_{\min}^{-1} - 1, \quad \mu_{\min} \triangleq \rho_{\max}^{-1} - 1$$

and

$$\mu_R \triangleq \rho_R^{-1} - 1 = \frac{1}{h_2^{-1}(1-R)} - 1 \quad \Leftrightarrow \quad R = 1 - h_2\left(\frac{1}{1+\mu_R}\right)$$

where $\mu_R$ increases monotonically from 1 to $\infty$ as $R$ increases from 0 to 1. With this notation, we have $\rho = \frac{1}{1+\mu}$ and $\mu_{\max} \ge \mu \ge \mu_{R+\Delta} \ge \mu_R \ge 1$. Also

$$\begin{aligned} \frac{dR}{d\rho_R} &= -\frac{dh_2(\rho_R)}{d\rho_R} = -\frac{\log\mu_R}{\ln 2}, \\ \frac{dE_{sp}(R,\rho)}{d\rho_R} &= \frac{\log\mu - \log\mu_R}{\ln 2}, \\ -E'_{sp}(R,\rho) &= \frac{dE_{sp}(R,\rho)/d\rho_R}{-dR/d\rho_R} = \frac{\log\mu}{\log\mu_R} - 1 = \frac{\log(\mu/\mu_R)}{\log\mu_R}. \end{aligned} \tag{7.3}$$

From (5.18) and (7.3), we obtain

$$-\underline{E}'_{sp}(R,\mathcal{W}) = -\min_{\rho_{\min}\le\rho\le\rho_{\max}} E'_{sp}(R,\rho) = \frac{\log(\mu_{\max}/\mu_R)}{\log\mu_R}. \tag{7.4}$$

Observe that the minimizing $\rho$ is $\rho_{\min}$, i.e., the cleanest channel in $\mathcal{W}$. In contrast, from (7.2), we have

$$-E'_{sp}(R,\mathcal{W}) = -E'_{sp}(R,\rho_{\max}) = \frac{\log(\mu_{\min}/\mu_R)}{\log\mu_R} < -\underline{E}'_{sp}(R,\mathcal{W}) \tag{7.5}$$

which is determined by the noisiest channel ($\rho_{\max}$).
Conditions for universality. Next we evaluate $\overline{R}_{conj}(\mathcal{W})$ from (5.19). For a given $\rho$, the conjugate rate of $R$ is obtained from (7.3):

$$\begin{aligned} -E'_{sp}(R,\rho) &= \frac{1}{-E'_{sp}(R_{conj},\rho)}, \\ \frac{\log(\mu/\mu_R)}{\log\mu_R} &= \frac{\log\mu_{R_{conj}}}{\log(\mu/\mu_{R_{conj}})}, \end{aligned}$$

hence

$$\begin{aligned} \mu_{R_{conj}} &= \frac{\mu}{\mu_R}, \\ R_{conj}(\mu) &= 1 - h_2\left(\frac{1}{1+\mu/\mu_R}\right), \\ \overline{R}_{conj}(\mathcal{W}) &= \max_{\mu_{\min}\le\mu\le\mu_{\max}} R_{conj}(\mu) = R_{conj}(\mu_{\max}). \end{aligned}$$

From (5.10) and (7.2), we have $R_{conj}(\mathcal{W}) = R_{conj}(\mu_{\min})$. Analogously to (7.5), observe that $\overline{R}_{conj}(\mathcal{W})$ and $R_{conj}(\mathcal{W})$ are determined by the cleanest and noisiest channels in $\mathcal{W}$, respectively.

We can now evaluate the conditions of Prop. 5.5, under which $F(t) = \Delta + \lambda|t|^+$ is universal (subject to conditions on $\Delta$ and $\lambda$). In (5.22), $\Delta$ must satisfy

$$\begin{aligned} |\overline{R}_{conj}(\mathcal{W}) - R|^+ &\le \Delta \le C(\mathcal{W}) - R, \\ |R_{conj}(\mu_{\max}) - R|^+ &\le \Delta \le C(\mu_{\min}) - R, \\ \left| h_2\left(\frac{1}{1+\mu_R}\right) - h_2\left(\frac{1}{1+\mu_{\max}/\mu_R}\right) \right|^+ &\le \Delta \le h_2\left(\frac{1}{1+\mu_R}\right) - h_2\left(\frac{1}{1+\mu_{\min}}\right). \end{aligned} \tag{7.6}$$

The left side is zero if $\mu_{\max} \le \mu_R^2$. If $\mu_{\max} > \mu_R^2$, the argument of $|\cdot|^+$ is positive, and we need $\mu_{\max}/\mu_R < \mu_{\min}$ to ensure that the left side is lower than the right side. Hence there exists a nonempty range of values of $\Delta$ satisfying (7.6) if and only if

$$\mu_R \ge \min\left\{\sqrt{\mu_{\max}}, \frac{\mu_{\max}}{\mu_{\min}}\right\}$$

which may also be written as

$$\mu_{\max} \le \max\{\mu_R^2, \mu_R\,\mu_{\min}\}.$$

Next, substituting (7.4) into (5.23), we obtain the following condition for $\lambda$:

$$\frac{\log(\mu_{\max}/\mu_R)}{\log\mu_R} \le \lambda \le \frac{\log\mu_{R+\Delta}}{\log(\mu_{\max}/\mu_{R+\Delta})}. \tag{7.7}$$

This inequality has a solution if and only if the left side does not exceed the right side, i.e.,

$$\mu_{\max} \le \mu_R\,\mu_{R+\Delta}, \tag{7.8}$$

or equivalently, $\rho_{\min} \ge (1 + \mu_R\,\mu_{R+\Delta})^{-1}$. Since $\mu_R$ is an increasing function of $R$, the larger the values of $R$ and $\Delta$, the lower the value of $\rho_{\min}$ for which the universality property still holds.

If equality holds in (7.8), the only feasible value of $\lambda$ is

$$\lambda = \frac{\log\mu_{R+\Delta}}{\log\mu_R} \ge 1. \tag{7.9}$$

This value of $\lambda$ remains feasible if (7.8) holds with strict inequality.
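The universality conditions (7.6) to (7.9) can be explored numerically. The Python sketch below computes the admissible intervals for $\Delta$ and $\lambda$ for a compound BSC, assuming $0 < R$, $R + \Delta \le C(\rho_{\max})$, and $\rho_{\min} < \rho_{\max}$; the function names and the sample values of $R$, $\Delta$, $\rho_{\min}$, $\rho_{\max}$ are illustrative assumptions and not results from the paper.

```python
import math


def h2(p):
    """Binary entropy in bits, with the convention 0 log 0 = 0."""
    if p <= 0.0 or p >= 1.0:
        return 0.0
    return -p * math.log2(p) - (1.0 - p) * math.log2(1.0 - p)


def h2_inv(v, iters=200):
    """Inverse of h2 on [0, 1/2] by bisection."""
    lo, hi = 0.0, 0.5
    for _ in range(iters):
        mid = 0.5 * (lo + hi)
        if h2(mid) < v:
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)


def mu_at(rate):
    """mu_R = 1 / h2^{-1}(1 - R) - 1."""
    return 1.0 / h2_inv(1.0 - rate) - 1.0


def delta_range(rate, rho_min, rho_max):
    """Interval (lo, hi) for Delta from (7.6); empty when lo > hi."""
    mu_max = 1.0 / rho_min - 1.0
    mu_r = mu_at(rate)
    r_conj = 1.0 - h2(1.0 / (1.0 + mu_max / mu_r))  # R_conj(mu_max)
    lo = max(r_conj - rate, 0.0)                    # |.|^+ on the left side
    hi = (1.0 - h2(rho_max)) - rate                 # C(W) - R
    return lo, hi


def lambda_range(rate, delta, rho_min):
    """Interval (lo, hi) for lambda from (7.7); empty when lo > hi."""
    mu_max = 1.0 / rho_min - 1.0
    mu_r = mu_at(rate)
    mu_rd = mu_at(rate + delta)
    lo = math.log(mu_max / mu_r) / math.log(mu_r)
    gap = math.log(mu_max / mu_rd)
    hi = math.log(mu_rd) / gap if gap > 0.0 else math.inf
    return lo, hi


if __name__ == "__main__":
    R, DELTA = 0.3, 0.1
    for rho_min in (0.05, 0.01):
        print(rho_min,
              delta_range(R, rho_min, 0.1),
              lambda_range(R, DELTA, rho_min))
```

In this sketch an interval is reported as a pair and is empty when its lower end exceeds its upper end; by (7.8), the $\lambda$ interval is nonempty exactly when $\mu_{\max} \le \mu_R\,\mu_{R+\Delta}$.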
Figure 2: Erasure and incorrect-message exponents $E_\emptyset(R)$ and $E_i(R)$ for BSC with crossover probability $\rho = 0.1$ (capacity $C(\rho) \approx 0.53$) and rate $R = 0.1$. (Plot of $E_{sp}(R')$ versus rate $R'$, with $R$, $R_{cr}$, $R_{conj}$, $E_i(R,\Delta)$, and $E_\emptyset(R,\Delta)$ marked.)

8 Discussion

The $F$-MMI decision rule of (3.1) is a generalization of Csiszár and Körner's MMI decoder with erasure option. The weighting function $F$ in (3.1) can be optimized in an asymptotic Neyman-Pearson sense given a compound class of channels $\mathcal{W}$. An explicit formula has been derived in terms of the sphere-packing exponent function for $F$ that maximizes the erasure exponent subject to a constraint on the incorrect-message exponent. The optimal $F$ is generally nonunique but agrees with existing designs in special cases of interest.

In particular, Corollary 5.2 shows that the simple thresholding rule $F(t) \equiv \Delta$ is optimal if $R \ge I(p_X,\mathcal{W})$, i.e., when the transmission rate cannot be reliably supported by the worst channel in $\mathcal{W}$. When $R < I(p_X,\mathcal{W})$, Prop. 5.5 shows that for small erasure exponents, our expressions for the optimal exponents coincide with those derived by Forney [3] for symmetric channels, where the same input distribution $p_X$ is optimal at all rates. In this regime, Csiszár and Körner's rule $F(t) = \Delta + \lambda|t|^+$ is also universal under some conditions on the parameter pair $(\Delta,\lambda)$. It is also worth noting that while suboptimal, the design $F(t) = \Delta + t$ yields an empirical version of Forney's simple decision rule (2.5).

Previous work [5] using a different universal decoder had shown that Forney's exponents can be matched in the special case where undetected-error and erasure exponents are equal (corresponding to $T = 0$ in Forney's rule (2.4)).
Our results show that this property extends beyond this special case, albeit not everywhere.

Another analogy between Forney's suboptimal decision rule (2.5) and ours (3.1) is that the former is based on the two highest likelihood scores, and the latter is based on the two highest empirical mutual information scores. Our results imply that (2.5) is optimal (in terms of error exponents) in the special regime identified above.

The relative minimax criterion of Sec. 6 is attractive when the compound class $\mathcal{W}$ is broad (or difficult to pick) as it allows finer tuning of the error exponents for different channels in $\mathcal{W}$. The class $\mathcal{W}$ could conceivably be as large as $\mathcal{P}_{Y|X}$, the set of all DMC's.

Finally, we have extended our framework to decoding for compound multiple access channels. Those results will be presented elsewhere.

Acknowledgements. The author thanks Shankar Sadasivam for numerical evaluation of the error exponent formulas in Sec. 7, and Prof. Lizhong Zheng for helpful comments.

A Proof of Proposition 4.1

(i) is immediate from (4.3), restated below:

$$E_{r,F}(R,p_X,p_{Y|X}) = \min_{\tilde{p}_{Y|X}} [D(\tilde{p}_{Y|X}\|p_{Y|X}|p_X) + F(I(p_X,\tilde{p}_{Y|X}) - R)], \tag{A.1}$$

and the fact that $F$ is nondecreasing.

(ii) is immediate for the same reason as above.

(iii) Since the function $E_{sp}(R,p_X,p_{Y|X})$ is decreasing in $R$ for $R \le I(p_X,p_{Y|X})$, we have

$$E_{sp}(R,p_X,p_{Y|X}) = \min_{\tilde{p}_{Y|X}:\, I(p_X,\tilde{p}_{Y|X}) = R} D(\tilde{p}_{Y|X}\|p_{Y|X}|p_X), \quad \forall R \le I(p_X,p_{Y|X}). \tag{A.2}$$

Since $F$ is nondecreasing, the $\tilde{p}_{Y|X}$ that achieves the minimum in (A.1) must satisfy $I(p_X,\tilde{p}_{Y|X}) \le I(p_X,p_{Y|X})$.
Hence

$$\begin{aligned} E_{r,F}(R,p_X,p_{Y|X}) &= \min_{\tilde{p}_{Y|X}:\, I(p_X,\tilde{p}_{Y|X}) \le I(p_X,p_{Y|X})} [D(\tilde{p}_{Y|X}\|p_{Y|X}|p_X) + F(I(p_X,\tilde{p}_{Y|X}) - R)] \\ &= \min_{R' \le I(p_X,p_{Y|X})} \; \min_{\tilde{p}_{Y|X}:\, I(p_X,\tilde{p}_{Y|X}) = R'} [D(\tilde{p}_{Y|X}\|p_{Y|X}|p_X) + F(R'-R)] \\ &\overset{(a)}{=} \min_{R' \le I(p_X,p_{Y|X})} [E_{sp}(R',p_X,p_{Y|X}) + F(R'-R)] \\ &\overset{(b)}{=} \min_{R'} [E_{sp}(R',p_X,p_{Y|X}) + F(R'-R)] \end{aligned}$$

where (a) is due to (A.2), and (b) holds because $E_{sp}(R',p_X,p_{Y|X}) = 0$ for $R' \ge I(p_X,p_{Y|X})$ and $F$ is nondecreasing.

(iv) The claim follows directly from the definitions (4.2) and (4.4), taking minima over $\mathcal{W}$. $\square$

B Proof of Proposition 4.2

Given the p.m.f. $p_X$, choose any type $p_{\mathbf{x}}$ such that $\max_{x\in\mathcal{X}} |p_{\mathbf{x}}(x) - p_X(x)| \le \frac{|\mathcal{X}|}{N}$.$^2$ Define

$$E_{r,F,N}(R,p_{\mathbf{x}},p_{Y|X}) = \min_{p_{\mathbf{y}|\mathbf{x}}} [D(p_{\mathbf{y}|\mathbf{x}}\|p_{Y|X}|p_{\mathbf{x}}) + F(I(\mathbf{x};\mathbf{y}) - R)] \tag{B.1}$$

and

$$E_{sp,N}(R,p_{\mathbf{x}},p_{Y|X}) = \min_{p_{\mathbf{y}|\mathbf{x}}:\, I(\mathbf{x};\mathbf{y}) \le R} D(p_{\mathbf{y}|\mathbf{x}}\|p_{Y|X}|p_{\mathbf{x}}) \tag{B.2}$$

which differ from (4.3) and (4.1) in that the minimization is performed over conditional types instead of general conditional p.m.f.'s. We have

$$\begin{aligned} \lim_{N\to\infty} E_{r,F,N}(R,p_{\mathbf{x}},p_{Y|X}) &= E_{r,F}(R,p_X,p_{Y|X}), \\ \lim_{N\to\infty} E_{sp,N}(R,p_{\mathbf{x}},p_{Y|X}) &= E_{sp}(R,p_X,p_{Y|X}) \end{aligned} \tag{B.3}$$

by continuity of the divergence functional and of $F$ in the region where the minimand $D + F$ is finite.

$^2$ For instance, truncate each $p_X(x)$ down to the nearest integer multiple of $1/N$ and add $a/N$ to the smallest resulting value to obtain $p_{\mathbf{x}}(x)$, $x \in \mathcal{X}$, summing to one. Here $a$ is an integer in the range $\{0, 1, \cdots, |\mathcal{X}| - 1\}$.

We will use the following two standard inequalities.

1) Given an arbitrary sequence $\mathbf{y}$, draw $\mathbf{x}'$ independently of $\mathbf{y}$ and uniformly over a fixed type class $T_{\mathbf{x}}$. Then [1]

$$Pr[T_{\mathbf{x}'|\mathbf{y}}] = \frac{|T_{\mathbf{x}'|\mathbf{y}}|}{|T_{\mathbf{x}}|} = \frac{|T_{\mathbf{x}'|\mathbf{y}}|}{|T_{\mathbf{x}'}|} \doteq 2^{-N I(\mathbf{x}';\mathbf{y})}.$$
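Hence for any $0 \le \nu \le H(p_{\mathbf{x}})$,

$$\begin{aligned} Pr[I(\mathbf{x}';\mathbf{y}) \ge \nu] &= \sum_{T_{\mathbf{x}'|\mathbf{y}}} Pr[T_{\mathbf{x}'|\mathbf{y}}]\, \mathbb{1}\{I(\mathbf{x}';\mathbf{y}) \ge \nu\} \\ &\doteq \sum_{T_{\mathbf{x}'|\mathbf{y}}} 2^{-N I(\mathbf{x}';\mathbf{y})}\, \mathbb{1}\{I(\mathbf{x}';\mathbf{y}) \ge \nu\} \\ &\overset{(a)}{\doteq} \max_{T_{\mathbf{x}'|\mathbf{y}}} 2^{-N I(\mathbf{x}';\mathbf{y})}\, \mathbb{1}\{I(\mathbf{x}';\mathbf{y}) \ge \nu\} \\ &\doteq 2^{-N\nu} \end{aligned} \tag{B.4}$$

where (a) holds because the number of types is polynomial in $N$. For $\nu > H(p_{\mathbf{x}})$ we have $Pr[I(\mathbf{x}';\mathbf{y}) \ge \nu] = 0$.

2) Given an arbitrary sequence $\mathbf{x}$, draw $\mathbf{y}$ from the conditional p.m.f. $p^N_{Y|X}(\cdot|\mathbf{x})$. We have [1]

$$Pr[T_{\mathbf{y}|\mathbf{x}}] \doteq 2^{-N D(p_{\mathbf{y}|\mathbf{x}}\|p_{Y|X}|p_{\mathbf{x}})}. \tag{B.5}$$

Then for any $\nu > 0$,

$$\begin{aligned} Pr[I(\mathbf{x};\mathbf{y}) \le \nu] &= \sum_{T_{\mathbf{y}|\mathbf{x}}} Pr[T_{\mathbf{y}|\mathbf{x}}]\, \mathbb{1}\{I(\mathbf{x};\mathbf{y}) \le \nu\} \\ &\doteq \sum_{p_{\mathbf{y}|\mathbf{x}}} 2^{-N D(p_{\mathbf{y}|\mathbf{x}}\|p_{Y|X}|p_{\mathbf{x}})}\, \mathbb{1}\{I(\mathbf{x};\mathbf{y}) \le \nu\} \\ &\doteq \max_{p_{\mathbf{y}|\mathbf{x}}} 2^{-N D(p_{\mathbf{y}|\mathbf{x}}\|p_{Y|X}|p_{\mathbf{x}})}\, \mathbb{1}\{I(\mathbf{x};\mathbf{y}) \le \nu\} \\ &= \max_{p_{\mathbf{y}|\mathbf{x}}:\, I(\mathbf{x};\mathbf{y}) \le \nu} 2^{-N D(p_{\mathbf{y}|\mathbf{x}}\|p_{Y|X}|p_{\mathbf{x}})} \\ &= 2^{-N E_{sp,N}(\nu,p_{\mathbf{x}},p_{Y|X})}. \end{aligned} \tag{B.6}$$

Incorrect Messages. The codewords are drawn independently and uniformly from type class $T_{\mathbf{x}}$. Since the conditional error probability is independent of the transmitted message, assume without loss of generality that message $m = 1$ was transmitted. An incorrect codeword $\mathbf{x}(i)$ appears on the decoder's list if $i > 1$ and

$$I(\mathbf{x}(i);\mathbf{y}) \ge R + \max_{j \ne i} F(I(\mathbf{x}(j);\mathbf{y}) - R).$$

Let $\mathbf{x} = \mathbf{x}(1)$. To evaluate the expected number of incorrect codewords on the list, we first fix $\mathbf{y}$.

Given $\mathbf{y}$, define the i.i.d. random variables $Z_i = I(\mathbf{x}(i);\mathbf{y}) - R$ for $2 \le i \le 2^{NR}$. Also let $z_1 = I(\mathbf{x};\mathbf{y}) - R$, which is a function of the joint type $p_{\mathbf{xy}}$.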
The expected number of incorrect codewords on the list depends on $(\mathbf{x},\mathbf{y})$ only via their joint type and is given by

$$\begin{aligned} \mathbb{E}[N_i | T_{\mathbf{xy}}] &= \sum_{i=2}^{2^{NR}} Pr\Big[I(\mathbf{x}(i);\mathbf{y}) \ge R + \max_{j \in \mathcal{M}\setminus\{i\}} F(I(\mathbf{x}(j);\mathbf{y}) - R)\Big] \\ &= \sum_{i=2}^{2^{NR}} Pr\Big[Z_i \ge \max_{j \in \mathcal{M}\setminus\{i\}} F(Z_j)\Big] \\ &\le \sum_{i=2}^{2^{NR}} Pr[Z_i \ge F(z_1)] \\ &= (2^{NR} - 1)\, Pr[Z_2 \ge F(z_1)] \\ &\overset{(a)}{=} (2^{NR} - 1)\, Pr[I(\mathbf{x}';\mathbf{y}) \ge R + F(I(\mathbf{x};\mathbf{y}) - R)] \\ &\overset{(b)}{\doteq} 2^{NR}\, 2^{-N[R + F(I(\mathbf{x};\mathbf{y}) - R)]} \\ &= 2^{-N F(I(\mathbf{x};\mathbf{y}) - R)} \end{aligned} \tag{B.7}$$

where in (a), $\mathbf{x}'$ is drawn independently of $\mathbf{y}$ and uniformly over the type class $T_{\mathbf{x}}$; and (b) is obtained by application of (B.4). Averaging over $\mathbf{y}$, we obtain

$$\begin{aligned} \mathbb{E}[N_i | T_{\mathbf{x}}] &= \sum_{T_{\mathbf{y}|\mathbf{x}}} Pr[T_{\mathbf{y}|\mathbf{x}}]\, \mathbb{E}[N_i | T_{\mathbf{xy}}] \\ &\doteq \max_{T_{\mathbf{y}|\mathbf{x}}} Pr[T_{\mathbf{y}|\mathbf{x}}]\, \mathbb{E}[N_i | T_{\mathbf{xy}}] \\ &\overset{(a)}{\doteq} \max_{p_{\mathbf{y}|\mathbf{x}}} \exp_2\{-N[D(p_{\mathbf{y}|\mathbf{x}}\|p_{Y|X}|p_{\mathbf{x}}) + F(I(\mathbf{x};\mathbf{y}) - R)]\} \\ &\overset{(b)}{=} \exp_2\{-N E_{r,F,N}(R,p_{\mathbf{x}},p_{Y|X})\} \\ &\overset{(c)}{\doteq} \exp_2\{-N E_{r,F}(R,p_X,p_{Y|X})\} \end{aligned}$$

where (a), (b), (c) follow from (B.5), (B.1), (B.3), respectively. This proves (4.8).

Erasure. The decoder fails to return the transmitted codeword $\mathbf{x} = \mathbf{x}(1)$ if

$$I(\mathbf{x};\mathbf{y}) \le R + \max_{2 \le i \le 2^{NR}} F(I(\mathbf{x}(i);\mathbf{y}) - R). \tag{B.8}$$

Denote by $p_\emptyset(p_{\mathbf{x}})$ the probability of this event. The event is the disjoint union of events $\mathcal{E}_1$ and $\mathcal{E}_2$ below. The first one is

$$\mathcal{E}_1: \quad I(\mathbf{x};\mathbf{y}) \le R + F(0). \tag{B.9}$$

Since $F^{-1}$ is increasing, $\mathcal{E}_1$ is equivalent to $F^{-1}(I(\mathbf{x};\mathbf{y}) - R) \le 0$. The second event is

$$\begin{aligned} \mathcal{E}_2: \quad R + F(0) < I(\mathbf{x};\mathbf{y}) &\le R + \max_{2 \le i \le 2^{NR}} F(I(\mathbf{x}(i);\mathbf{y}) - R) \\ &= R + F\Big(\max_{2 \le i \le 2^{NR}} I(\mathbf{x}(i);\mathbf{y}) - R\Big) \end{aligned}$$

where equality holds because $F$ is nondecreasing. Thus $\mathcal{E}_2$ is equivalent to

$$\max_{2 \le i \le 2^{NR}} I(\mathbf{x}(i);\mathbf{y}) \ge R + F^{-1}(I(\mathbf{x};\mathbf{y}) - R) > R. \tag{B.10}$$

Applying (B.6), we have

$$\begin{aligned} Pr[\mathcal{E}_1] = p^{(1)}_\emptyset(p_{\mathbf{x}}) &\triangleq Pr[I(\mathbf{x};\mathbf{y}) \le R + F(0)] \\ &\doteq \exp_2\{-N E_{sp,N}(R + F(0),p_{\mathbf{x}},p_{Y|X})\}. \end{aligned} \tag{B.11}$$

Clearly $p_\emptyset(p_{\mathbf{x}}) \sim p^{(1)}_\emptyset(p_{\mathbf{x}}) \sim 1$ if $R + F(0) \ge I(p_{\mathbf{x}},p_{Y|X})$.

Next we have

$$\begin{aligned} Pr[\mathcal{E}_2] = p^{(2)}_\emptyset(T_{\mathbf{y}|\mathbf{x}}) &= Pr\Big[\max_{2 \le i \le 2^{NR}} I(\mathbf{x}(i);\mathbf{y}) \ge R + F^{-1}(I(\mathbf{x};\mathbf{y}) - R)\Big] \\ &= Pr\Big[\max_{2 \le i \le 2^{NR}} Z_i \ge F^{-1}(z_1)\Big] \\ &\overset{(a)}{\doteq} 2^{-N F^{-1}(z_1)} \\ &= 2^{-N F^{-1}(I(\mathbf{x};\mathbf{y}) - R)} \end{aligned}$$

where (a) follows by application of the union bound and (B.4). Averaging over $\mathbf{y}$, we obtain

$$\begin{aligned} p^{(2)}_\emptyset(p_{\mathbf{x}}) &\triangleq \sum_{T_{\mathbf{y}|\mathbf{x}}:\, I(\mathbf{x};\mathbf{y}) > R + F(0)} Pr[T_{\mathbf{y}|\mathbf{x}}]\, p^{(2)}_\emptyset(T_{\mathbf{y}|\mathbf{x}}) \\ &\doteq \max_{T_{\mathbf{y}|\mathbf{x}}:\, I(\mathbf{x};\mathbf{y}) > R + F(0)} Pr[T_{\mathbf{y}|\mathbf{x}}]\, p^{(2)}_\emptyset(T_{\mathbf{y}|\mathbf{x}}) \\ &\doteq \max_{p_{\mathbf{y}|\mathbf{x}}:\, I(\mathbf{x};\mathbf{y}) > R + F(0)} \exp_2\{-N[D(p_{\mathbf{y}|\mathbf{x}}\|p_{Y|X}|p_{\mathbf{x}}) + F^{-1}(I(\mathbf{x};\mathbf{y}) - R)]\}. \end{aligned} \tag{B.12}$$

For $R + F(0) \le I(p_{\mathbf{x}},p_{Y|X})$, we have

$$\max_{p_{\mathbf{y}|\mathbf{x}}:\, I(\mathbf{x};\mathbf{y}) \le R + F(0)} \exp_2\{-N D(p_{\mathbf{y}|\mathbf{x}}\|p_{Y|X}|p_{\mathbf{x}})\} \doteq \max_{p_{\mathbf{y}|\mathbf{x}}:\, I(\mathbf{x};\mathbf{y}) = R + F(0)} \exp_2\{-N D(p_{\mathbf{y}|\mathbf{x}}\|p_{Y|X}|p_{\mathbf{x}})\},$$

hence (B.12) may be written more simply as

$$\begin{aligned} p^{(2)}_\emptyset(p_{\mathbf{x}}) &\doteq \max_{p_{\mathbf{y}|\mathbf{x}}} \exp_2\{-N[D(p_{\mathbf{y}|\mathbf{x}}\|p_{Y|X}|p_{\mathbf{x}}) + |F^{-1}(I(\mathbf{x};\mathbf{y}) - R)|^+]\} \\ &= \exp_2\{-N E_{r,|F^{-1}|^+,N}(R,p_{\mathbf{x}},p_{Y|X})\}. \end{aligned} \tag{B.13}$$

Since $\mathcal{E}_1$ and $\mathcal{E}_2$ are disjoint events, we obtain

$$\begin{aligned} p_\emptyset(p_{\mathbf{x}}) &= p^{(1)}_\emptyset(p_{\mathbf{x}}) + p^{(2)}_\emptyset(p_{\mathbf{x}}) \\ &\doteq \exp_2\{-N \min\{E_{r,|F^{-1}|^+,N}(R,p_{\mathbf{x}},p_{Y|X}),\, E_{sp,N}(R + F(0),p_{\mathbf{x}},p_{Y|X})\}\} \\ &\doteq \exp_2\{-N \min\{E_{r,|F^{-1}|^+}(R,p_X,p_{Y|X}),\, E_{sp}(R + F(0),p_X,p_{Y|X})\}\} \end{aligned}$$

where the last line is due to (B.3). The function $F^{-1}(t)$ has a zero-crossing at $t = F(0)$. Applying (4.7), we obtain

$$\begin{aligned} E_{r,|F^{-1}|^+}(R,p_X,p_{Y|X}) &= \min_{R'} [E_{sp}(R',p_X,p_{Y|X}) + |F^{-1}(R'-R)|^+] \\ &\le E_{sp}(R + F(0),p_X,p_{Y|X}) + 0 \end{aligned}$$

hence

$$p_\emptyset(p_{\mathbf{x}}) \doteq \exp_2\{-N E_{r,|F^{-1}|^+}(R,p_X,p_{Y|X})\}.$$

This proves (4.9). $\square$

C Proof of Proposition 5.1

We first prove (5.12).
Recall (4.7) and (4.6), restated here for convenience:

$$\begin{aligned} E_{r,F}(R,p_X,\mathcal{W}) &= \min_{R'} [E_{sp}(R',p_X,\mathcal{W}) + F(R'-R)], \\ E_{sp}(R,p_X,\mathcal{W}) &\equiv E_{sp}(R',p_X,\mathcal{W}) + F_{R,p_X,\mathcal{W}}(R'-R), \quad \forall R'. \end{aligned}$$

Hence

$$E_{r,F_{R,p_X,\mathcal{W}}}(R,p_X,\mathcal{W}) = E_{sp}(R,p_X,\mathcal{W}). \tag{C.1}$$

Case I: $E_{sp}(R,p_X,\mathcal{W}) = \alpha$, i.e., from (5.9), we have $\Delta = 0$. The feasible set $\mathcal{F}^L(R,p_X,\mathcal{W},\alpha)$ defined in (5.6) takes the form $\{F : E_{r,F}(R,p_X,\mathcal{W}) \ge E_{sp}(R,p_X,\mathcal{W})\}$. Owing to (C.1) and the monotonicity property of Prop. 4.1(ii), an equivalent representation of $\mathcal{F}^L(R,p_X,\mathcal{W},\alpha)$ is $\{F : F \succeq F_{R,p_X,\mathcal{W}}\}$. As indicated below the statement of Prop. 5.1, this implies $F_{R,p_X,\mathcal{W}}$ achieves the supremum in (5.5).

Case II: $E_{sp}(R,p_X,\mathcal{W}) \ne \alpha$. The derivation parallels that of Case I. An equivalent representation of the constrained set $\mathcal{F}^L(R,p_X,\mathcal{W},\alpha)$ in (5.6) is $\{F : F \succeq F^{L*}\}$, where

$$\begin{aligned} F^{L*}(t) &= F_{R,p_X,\mathcal{W}}(t) + \alpha - E_{sp}(R,p_X,\mathcal{W}) \\ &= F_{R,p_X,\mathcal{W}}(t) + \Delta \\ &= \alpha - E_{sp}(R+t,p_X,\mathcal{W}). \end{aligned}$$

Hence $F^{L*}$ achieves the maximum in (5.5).

To prove (5.13), we simply observe that if $F^{L*} \preceq F$ for all $F \in \mathcal{F}^L(R,p_X,\mathcal{W},\alpha)$, then $F^* \preceq F$ for all $F \in \mathcal{F}(R,p_X,\mathcal{W},\alpha)$, where $F^*(t) = \max(t, F^{L*}(t))$. $\square$

D Proof of Proposition 5.3

We have

$$\begin{aligned} E^L_\emptyset(R,p_X,\mathcal{W},\alpha) &\overset{(a)}{=} E_{r,|(F^{L*})^{-1}|^+}(R,p_X,\mathcal{W}) \\ &\overset{(b)}{=} \min_{R'} [E_{sp}(R',p_X,\mathcal{W}) + |(F^{L*})^{-1}|^+(R'-R)] \\ &\overset{(c)}{=} \min_{R' \ge R + t_{(F^{L*})^{-1}}} [E_{sp}(R',p_X,\mathcal{W}) + (F^{L*})^{-1}(R'-R)] \end{aligned} \tag{D.1}$$

where equality (a) results from Props. 4.2 and 5.1, (b) follows from (4.7), and (c) from the definition (3.12) and the fact that the function $E_{sp}(R',p_X,\mathcal{W})$ is nonincreasing in $R'$. From (5.12) and property (P3) in Sec. 3 we obtain the inverse function

$$(F^{L*})^{-1}(t) = F^{-1}_{R,p_X,\mathcal{W}}(t - \Delta) \tag{D.2}$$

where $F_{R,p_X,\mathcal{W}}(t)$ is given in (4.5).
Hence $t_{(F^{L*})^{-1}} = \Delta$ and

$$E^L_\emptyset(R,p_X,\mathcal{W},\alpha) = \min_{R' \ge R+\Delta} \underbrace{[E_{sp}(R',p_X,\mathcal{W}) + F^{-1}_{R,p_X,\mathcal{W}}(R'-R-\Delta)]}_{h(R')}. \tag{D.3}$$

By assumption $E_{sp}(R,p_X,\mathcal{W})$ is convex in $R$, and therefore $F_{R,p_X,\mathcal{W}}(t)$ is concave. By application of Property (P5) in Sec. 3, the function $F^{-1}_{R,p_X,\mathcal{W}}$ is convex, and thus so is $h(R')$ in (D.3). The derivatives of $F^{-1}_{R,p_X,\mathcal{W}}$ and $h$ are respectively given by

$$(F^{-1}_{R,p_X,\mathcal{W}})'(t) = \frac{1}{F'_{R,p_X,\mathcal{W}}(t)} = \frac{1}{-E'_{sp}(R+t,p_X,\mathcal{W})} \tag{D.4}$$

and

$$h'(R') = E'_{sp}(R',p_X,\mathcal{W}) + \frac{1}{-E'_{sp}(R'-\Delta,p_X,\mathcal{W})}.$$

By Prop. 5.1 and the definition of $\Delta$ in (5.9), we have $E_i(R,p_X,\mathcal{W}) = \alpha = E_{sp}(R,p_X,\mathcal{W}) + \Delta$. Next we prove the statements (i) to (iv).

(i) $\max(0, R_{conj}(p_X,\mathcal{W}) - R) \le \Delta \le I(p_X,\mathcal{W}) - R$. This case is illustrated in Fig. 3. We have

$$R + \Delta \ge \max(R, R_{conj}(p_X,\mathcal{W})) \ge R_{cr}(p_X,\mathcal{W}). \tag{D.5}$$

Hence

$$\begin{aligned} h'(R+\Delta) &= E'_{sp}(R+\Delta,p_X,\mathcal{W}) + \frac{1}{-E'_{sp}(R,p_X,\mathcal{W})} \\ &\ge \begin{cases} E'_{sp}(R,p_X,\mathcal{W}) + \dfrac{1}{-E'_{sp}(R,p_X,\mathcal{W})} \ge 0 & : \text{if } R \ge R_{cr}(p_X,\mathcal{W}) \\[2ex] E'_{sp}(R_{conj}(p_X,\mathcal{W}),p_X,\mathcal{W}) + \dfrac{1}{-E'_{sp}(R,p_X,\mathcal{W})} = 0 & : \text{if } R \le R_{cr}(p_X,\mathcal{W}) \end{cases} \\ &\ge 0. \end{aligned} \tag{D.6}$$

By convexity of $h(\cdot)$ this implies that $R + \Delta$ minimizes $h(R')$ over $R' \ge R + \Delta$, and so

$$E^L_\emptyset(R,p_X,\mathcal{W},\alpha) = h(R+\Delta) = E_{sp}(R+\Delta,p_X,\mathcal{W}).$$

(ii) Due to (D.5), we have either $R \ge R_{cr}(p_X,\mathcal{W})$ or $R + \Delta \ge R_{conj}(p_X,\mathcal{W}) \ge R$. In both cases,

$$-E'_{sp}(R,p_X,\mathcal{W}) \le \frac{1}{-E'_{sp}(R+\Delta,p_X,\mathcal{W})}.$$

Figure 3: Construction and minimization of $h(R')$ for Case (i). (Plot of $E_{sp}(R')$, $h(R')$, and $F_R(R'-R)$ versus rate $R'$, with $R$, $R+\Delta$, and $R_2(\Delta)$ marked.)

Let $F(t) = \Delta + \lambda|t|^+$ where $\lambda$ is sandwiched by the left and right sides of the above inequality. We have $t_F = 0$ and $F'(t) = \lambda\,\mathbb{1}\{t \ge 0\}$. The inverse function is $F^{-1}(t) = \frac{1}{\lambda}(t - \Delta)$ for $t \ge \Delta$.
Hence $(F^{-1})'(t) = \frac{1}{\lambda}\,\mathbb{1}\{t \ge \Delta\}$ and $t_{F^{-1}} = \Delta$. Substituting $F$ and $F^{-1}$ into (4.7), we obtain

$$\begin{aligned} E_{r,F}(R,p_X,\mathcal{W}) &= \min_{R' \ge R} [E_{sp}(R',p_X,\mathcal{W}) + \Delta + \lambda(R'-R)], \\ E_{r,|F^{-1}|^+}(R,p_X,\mathcal{W}) &= \min_{R' \ge R+\Delta} \Big[E_{sp}(R',p_X,\mathcal{W}) + \frac{1}{\lambda}(R'-R-\Delta)\Big]. \end{aligned}$$

Taking derivatives of the bracketed terms with respect to $R'$ and recalling that

$$\lambda \ge -E'_{sp}(R,p_X,\mathcal{W}), \qquad \frac{1}{\lambda} \ge -E'_{sp}(R+\Delta,p_X,\mathcal{W}),$$

we observe that these derivatives are nonnegative. Since $E_{sp}(\cdot,p_X,\mathcal{W})$ is convex, the minima are achieved at $R$ and $R + \Delta$ respectively. The resulting exponents are $E_{sp}(R,p_X,\mathcal{W}) + \Delta$ and $E_{sp}(R+\Delta,p_X,\mathcal{W})$, which coincide with the optimal exponents of (5.14).

(iii) $R \le R_{cr}(p_X,\mathcal{W})$ and $0 \le \Delta \le R_{conj}(p_X,\mathcal{W}) - R$. This case is illustrated in Fig. 4 in the case $\Delta = 0$. From (D.6), we have $h'(R') = 0$ if and only if $R'$ and $R' - \Delta$ are conjugate rates. In this case, using the above assumption on $\Delta$, we have

$$R \le R' - \Delta = R_1(\Delta) \le R_{cr}(p_X,\mathcal{W}) \le R' = R_2(\Delta) \le R_{conj}(p_X,\mathcal{W}). \tag{D.7}$$

Figure 4: Construction and minimization of $h(R')$ for Case (iii), with $\Delta = 0$. (Plot of $E_{sp}(R')$, $h(R')$, and $F_R(R'-R)$ versus rate $R'$, with $R$, $R_{conj}$, and $R_{cr}$ marked.)

Hence $R_2(\Delta) = R' \ge R + \Delta$ is feasible for (D.3) and minimizes $h(\cdot)$. Substituting $R'$ back into (D.3), we obtain

$$\begin{aligned} E^L_\emptyset(R,p_X,\mathcal{W},\alpha) &= h(R_2(\Delta)) \\ &= E_{sp}(R_2(\Delta),p_X,\mathcal{W}) + F^{-1}_{R,p_X,\mathcal{W}}(R_1(\Delta) - R) \end{aligned}$$

which establishes (5.16).

(iv) $R \le R_{cr}(p_X,\mathcal{W})$ and $R_\infty(p_X,\mathcal{W}) - R \le \Delta \le 0$. Again we have $h'(R') = 0$ if and only if $R'$ and $R' - \Delta$ are conjugate rates. Then, using the above assumption on $\Delta$, we have

$$R \le R' = R_1(\Delta) \le R_{cr}(p_X,\mathcal{W}) \le R' - \Delta = R_2(\Delta). \tag{D.8}$$

Hence $R_1(\Delta) = R' \ge R + \Delta$ is feasible for (D.3) and minimizes $h(\cdot)$.
Substituting $R'$ back into (D.3), we obtain

$$\begin{aligned} E^L_\emptyset(R,p_X,\mathcal{W},\alpha) &= h(R_1(\Delta)) \\ &= E_{sp}(R_1(\Delta),p_X,\mathcal{W}) + F^{-1}_{R,p_X,\mathcal{W}}(R_2(\Delta) - R) \end{aligned}$$

which establishes (5.17). $\square$

E Proof of Lemma 5.4

First we prove (5.20). For any $R_0 < R_1$, we have

$$\begin{aligned} \int_{R_0}^{R_1} \underline{E}'_{sp}(R,p_X,\mathcal{W})\,dR &\overset{(a)}{=} \int_{R_0}^{R_1} \min_{p_{Y|X}\in\mathcal{W}} E'_{sp}(R,p_X,p_{Y|X})\,dR \\ &\le \min_{p_{Y|X}\in\mathcal{W}} \int_{R_0}^{R_1} E'_{sp}(R,p_X,p_{Y|X})\,dR \\ &= \min_{p_{Y|X}\in\mathcal{W}} [E_{sp}(R_1,p_X,p_{Y|X}) - E_{sp}(R_0,p_X,p_{Y|X})] \\ &\overset{(b)}{\le} E_{sp}(R_1,p_X,p^*_{Y|X}) - E_{sp}(R_0,p_X,p^*_{Y|X}) \\ &= \min_{p_{Y|X}\in\mathcal{W}} E_{sp}(R_1,p_X,p_{Y|X}) - E_{sp}(R_0,p_X,p^*_{Y|X}) \\ &\le \min_{p_{Y|X}\in\mathcal{W}} E_{sp}(R_1,p_X,p_{Y|X}) - \min_{p_{Y|X}\in\mathcal{W}} E_{sp}(R_0,p_X,p_{Y|X}) \\ &= E_{sp}(R_1,p_X,\mathcal{W}) - E_{sp}(R_0,p_X,\mathcal{W}) \\ &= \int_{R_0}^{R_1} E'_{sp}(R,p_X,\mathcal{W})\,dR \end{aligned} \tag{E.1}$$

where (a) follows from the definition of $\underline{E}'_{sp}$ in (5.18), and we choose $p^*_{Y|X}$ in inequality (b) as the minimizer of $E_{sp}(R_1,p_X,\cdot)$ over $\mathcal{W}$. Since (E.1) holds for all $R_0 < R_1$, we must have inequality between the integrands in the left and right sides: $\underline{E}'_{sp}(R,p_X,\mathcal{W}) \le E'_{sp}(R,p_X,\mathcal{W})$. Moreover, the three inequalities used to derive (E.1) hold with equality if the same $p^{**}_{Y|X}$ minimizes $E'_{sp}(R,p_X,\cdot)$ at all rates, and the same $p^*_{Y|X}$ minimizes $E_{sp}(R,p_X,\cdot)$ at all rates. We need not (and generally do not) have $p^{**}_{Y|X} = p^*_{Y|X}$.$^3$

Next we prove (5.21). By definition of $R_{conj}(p_X,\mathcal{W})$, we have

$$\begin{aligned} -E'_{sp}(R_{conj}(p_X,\mathcal{W}),p_X,\mathcal{W}) &= \frac{1}{-E'_{sp}(R,p_X,\mathcal{W})} \\ &\overset{(a)}{\ge} \frac{1}{-\underline{E}'_{sp}(R,p_X,\mathcal{W})} \\ &= \min_{p_{Y|X}\in\mathcal{W}} \frac{1}{-E'_{sp}(R,p_X,p_{Y|X})} \\ &= \min_{p_{Y|X}\in\mathcal{W}} [-E'_{sp}(R_{conj}(p_X,p_{Y|X}),p_X,p_{Y|X})] \\ &\overset{(b)}{\ge} \min_{p_{Y|X}\in\mathcal{W}} [-E'_{sp}(\overline{R}_{conj}(p_X,\mathcal{W}),p_X,p_{Y|X})] \\ &\overset{(c)}{=} -\underline{E}'_{sp}(\overline{R}_{conj}(p_X,\mathcal{W}),p_X,\mathcal{W}) \\ &\overset{(d)}{\ge} -E'_{sp}(\overline{R}_{conj}(p_X,\mathcal{W}),p_X,\mathcal{W}). \end{aligned}$$
where (a) and (d) are due to (5.20), (b) to the definition of $\overline{R}^{conj}(p_X, \mathscr{W})$ in (5.19) and the fact that $-E'_{sp}(R, p_X, p_{Y|X})$ is a decreasing function of $R$, and (c) follows from (5.18). Since $-E'_{sp}(R, p_X, \mathscr{W})$ is also a decreasing function of $R$, we must have $R^{conj}(p_X, \mathscr{W}) \le \overline{R}^{conj}(p_X, \mathscr{W})$. Moreover, the conditions for equality are the same as those for equality in (5.20). □

³ While $p^*_{Y|X}$ is the noisiest channel in $\mathscr{W}$, $p^{**}_{Y|X}$ may be the cleanest channel in $\mathscr{W}$, as in the BSC example of Sec. 7.

References

[1] I. Csiszár and J. Körner, Information Theory: Coding Theory for Discrete Memoryless Systems, Academic Press, NY, 1981.

[2] A. Lapidoth and P. Narayan, "Reliable Communication Under Channel Uncertainty," IEEE Trans. Information Theory, Vol. 44, No. 6, pp. 2148–2177, Oct. 1998.

[3] G. D. Forney, Jr., "Exponential Error Bounds for Erasure, List, and Decision Feedback Schemes," IEEE Trans. Information Theory, Vol. 14, No. 2, pp. 206–220, 1968.

[4] I. E. Telatar and R. G. Gallager, "New Exponential Upper Bounds to Error and Erasure Probabilities," Proc. ISIT'94, p. 379, Trondheim, Norway, June 1994.

[5] N. Merhav and M. Feder, "Minimax Universal Decoding with Erasure Option," IEEE Trans. Information Theory, Vol. 53, No. 5, pp. 1664–1675, May 2007.

[6] W. Hoeffding, "Asymptotically Optimal Tests for Multinomial Distributions," Ann. Math. Stat., Vol. 36, No. 2, pp. 369–400, 1965.

[7] G. Tusnády, "On Asymptotically Optimal Tests," Annals of Statistics, Vol. 5, No. 2, pp. 385–393, 1977.

[8] O. Zeitouni and M. Gutman, "On Universal Hypotheses Testing via Large Deviations," IEEE Trans. Information Theory, Vol. 37, No. 2, pp. 285–290, 1991.

[9] I. Csiszár, personal communication, Aug. 2007.

[10] M. Feder and N. Merhav, "Universal Composite Hypothesis Testing: A Competitive Minimax Approach," IEEE Trans.
Information Theory, Vol. 48, No. 6, pp. 1504–1517, June 2002.
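
As a supplementary numerical illustration of Lemma 5.4 and of footnote 3, the sketch below checks inequality (5.20) for a small compound class of binary symmetric channels. It is a minimal sketch under stated assumptions, not the computation used in the paper: it assumes a uniform input distribution, rates in bits, and the standard closed form of the BSC sphere-packing exponent, namely the binary divergence $D(\delta \| \rho)$ with $h_2(\delta) = 1 - R$. The function names, the two crossover probabilities, and the rate are hypothetical choices made only for this example.

```python
import math


def h2(x):
    """Binary entropy in bits."""
    if x <= 0.0 or x >= 1.0:
        return 0.0
    return -x * math.log2(x) - (1.0 - x) * math.log2(1.0 - x)


def delta_of_rate(rate, tol=1e-12):
    """Solve h2(delta) = 1 - rate for delta in [0, 1/2] by bisection."""
    lo, hi = 0.0, 0.5
    target = 1.0 - rate
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        if h2(mid) < target:
            lo = mid  # h2 is increasing on [0, 1/2], so delta must be larger
        else:
            hi = mid
    return 0.5 * (lo + hi)


def esp(rate, rho):
    """Assumed closed form: E_sp = D(delta || rho) in bits, zero above capacity."""
    d = delta_of_rate(rate)
    if d <= rho:
        return 0.0
    return d * math.log2(d / rho) + (1.0 - d) * math.log2((1.0 - d) / (1.0 - rho))


def esp_slope(rate, rho):
    """Derivative of esp with respect to rate (chain rule through delta)."""
    d = delta_of_rate(rate)
    if d <= rho:
        return 0.0
    return 1.0 - math.log2((1.0 - rho) / rho) / math.log2((1.0 - d) / d)


def compound_esp(rate, rhos):
    """Compound exponent: minimum of esp over the class."""
    return min(esp(rate, r) for r in rhos)


def lower_slope(rate, rhos):
    """Minimum over the class of the per-channel slopes, as in (5.18)."""
    return min(esp_slope(rate, r) for r in rhos)


def compound_slope(rate, rhos, eps=1e-6):
    """Central-difference derivative of the compound exponent."""
    return (compound_esp(rate + eps, rhos) - compound_esp(rate - eps, rhos)) / (2.0 * eps)


if __name__ == "__main__":
    rhos = [0.05, 0.10]  # hypothetical compound class of crossover probabilities
    rate = 0.2           # hypothetical rate in bits, below both capacities
    print("min of slopes      :", lower_slope(rate, rhos))
    print("slope of min       :", compound_slope(rate, rhos))
    print("argmin of E_sp     :", min(rhos, key=lambda r: esp(rate, r)))
    print("argmin of E_sp'    :", min(rhos, key=lambda r: esp_slope(rate, r)))
```

In this example the minimizer of the exponent is the noisier channel while the minimizer of the slope is the cleaner one, which is the situation described in footnote 3, and the minimum of the slopes does not exceed the slope of the minimum, in line with (5.20).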
