Assisted Common Information: Further Results

We presented assisted common information as a generalization of Gács-Körner (GK) common information at ISIT 2010. The motivation for our formulation was to improve upperbounds on the efficiency of protocols for secure two-party sampling (which is…

Authors: Vinod M. Prabhakaran, Manoj M. Prabhakaran

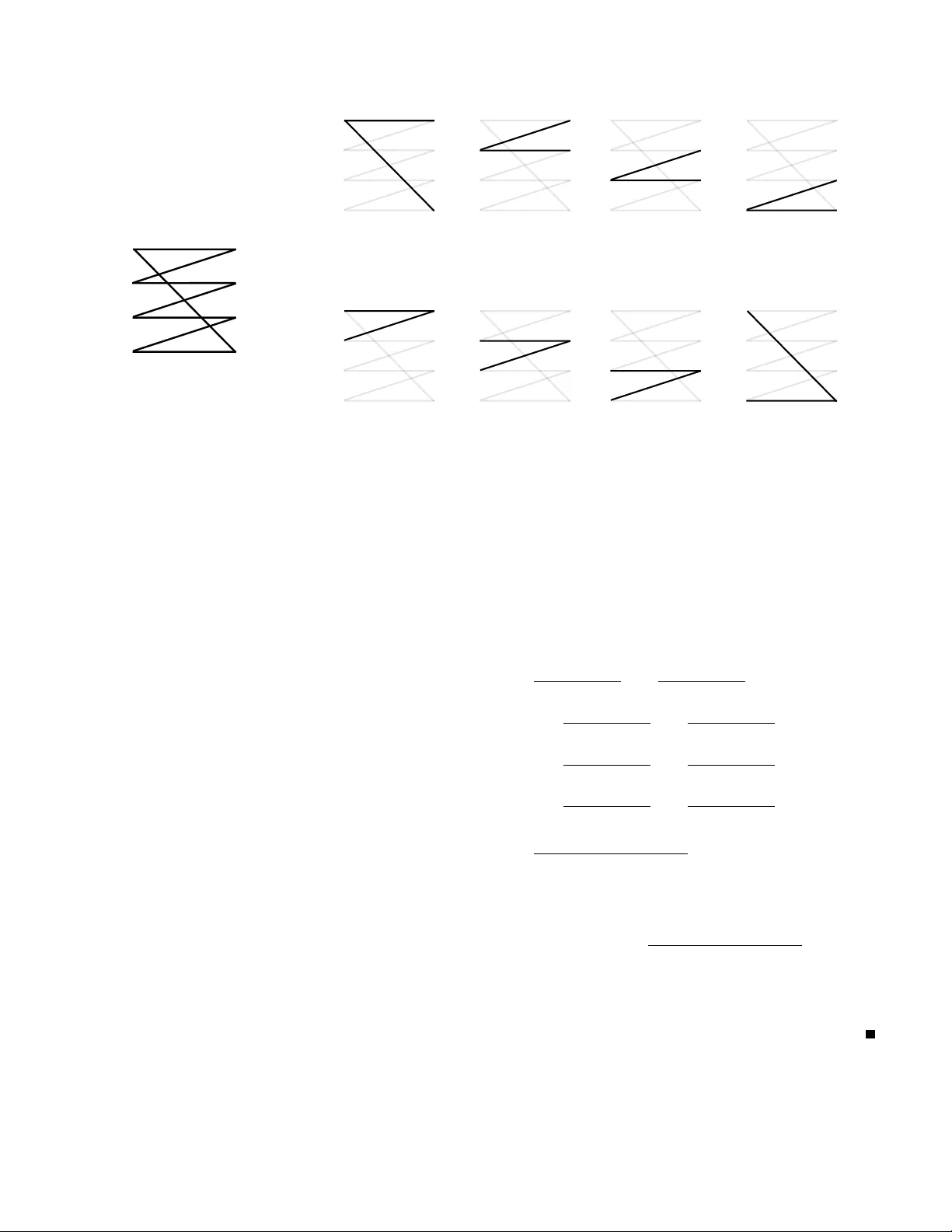

Vinod M. Prabhakaran, École Polytechnique Fédérale de Lausanne, Switzerland
Manoj M. Prabhakaran, University of Illinois, Urbana-Champaign, Urbana, IL 61801

Abstract: We presented assisted common information as a generalization of Gács-Körner (GK) common information at ISIT 2010. The motivation for our formulation was to improve upperbounds on the efficiency of protocols for secure two-party sampling (which is a form of secure multi-party computation). Our upperbound was based on a monotonicity property of a rate-region (called the assisted residual information region) associated with the assisted common information formulation. In this note we present further results. We explore the connection of assisted common information with the Gray-Wyner system. We show that the assisted residual information region and the Gray-Wyner region are connected by a simple relationship: the assisted residual information region is the increasing hull of the Gray-Wyner region under an affine map. Several known relationships between GK common information and the Gray-Wyner system fall out as consequences of this. Quantities which arise in other source coding contexts acquire new interpretations. In previous work we showed that assisted common information can be used to derive upperbounds on the rate at which a pair of parties can securely sample correlated random variables, given correlated random variables from another distribution. Here we present an example where the bound derived using assisted common information is much better than previously known bounds, and in fact is tight. This example considers correlated random variables defined in terms of standard variants of oblivious transfer, and is interesting on its own as it answers a natural question about these cryptographic primitives.

I. INTRODUCTION

If $U, V, W$ are independent random variables, a natural measure of "common information" of $X = (U, V)$ and $Y = (U, W)$ is $H(U)$. Observers of either $X$ or $Y$ may produce the common part $U$, and conditioned on this common part there is no residual information, i.e., $I(X; Y | U) = 0$. Gács-Körner (GK) common information [5], [16] is a generalization of this to arbitrary $X, Y$. Two observers see $X^n = (X_1, X_2, \ldots, X_n)$ and $Y^n = (Y_1, Y_2, \ldots, Y_n)$, resp., where $(X_i, Y_i)$ are independent draws of $(X, Y)$. The observers produce $W_1 = f_1(X^n)$ and $W_2 = f_2(Y^n)$, which have an asymptotically vanishing probability of not matching. GK common information is the largest entropy rate (normalized by $n$) of such a common random variable. It was, however, shown that this value is the largest $H(U)$ for which the random variables can be written as $X = (U, V)$ and $Y = (U, W)$ (where $U, V, W$ may be dependent), i.e., the definition captures only an explicit form of common information in a single instance of $X, Y$.

At ISIT 2010 we presented a generalization of GK common information [13]. In our setup (see Figure 1), an omniscient genie (who has access to the $X$ and $Y$ sequences) assists the users in generating the common random variables by sending them messages over rate-limited noiseless links. We derived a three-dimensional region which characterizes the trade-off between the rates of the two noiseless links and the resulting residual information (defined as the conditional mutual information between the source sequences conditioned on the common random variable, normalized by the length of the sequence). We call this the assisted residual information region. When the links have zero rates, we recover GK common information. Our motivation for this generalization was an application to cryptography.
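The single-letter characterization of GK common information quoted above (the largest $H(U)$ with $X = (U, V)$, $Y = (U, W)$) can be evaluated directly for a finite joint p.m.f. The following is a minimal sketch, assuming the standard fact that the maximal common part is the index of the connected component of the bipartite graph whose edges are the pairs $(x, y)$ with $p(x, y) > 0$. The function name `gk_common_information` and the dictionary input format are illustrative choices, not taken from the paper.

```python
import math
from collections import defaultdict


def gk_common_information(pxy):
    """Entropy (in bits) of the connected-component index of the support graph.

    pxy: dict mapping (x, y) -> probability. Symbols x and y are vertices of a
    bipartite graph; there is an edge x-y whenever pxy[(x, y)] > 0.
    """
    parent = {}

    def find(a):
        # Union-find lookup with path halving.
        while parent[a] != a:
            parent[a] = parent[parent[a]]
            a = parent[a]
        return a

    def union(a, b):
        ra, rb = find(a), find(b)
        if ra != rb:
            parent[ra] = rb

    for (x, y), p in pxy.items():
        if p > 0:
            nx, ny = ("x", x), ("y", y)  # tag the side so x and y symbols never collide
            parent.setdefault(nx, nx)
            parent.setdefault(ny, ny)
            union(nx, ny)

    # Probability mass of each connected component.
    mass = defaultdict(float)
    for (x, y), p in pxy.items():
        if p > 0:
            mass[find(("x", x))] += p

    return -sum(p * math.log2(p) for p in mass.values())


# X = (U, V), Y = (U, W) with U, V, W independent uniform bits: common part is U.
split = {((u, v), (u, w)): 1 / 8 for u in (0, 1) for v in (0, 1) for w in (0, 1)}
gk_split = gk_common_information(split)  # one bit, up to rounding

# Doubly symmetric binary source: full support, so a single component.
dsbs = {(0, 0): 0.45, (0, 1): 0.05, (1, 0): 0.05, (1, 1): 0.45}
gk_dsbs = gk_common_information(dsbs)  # zero, up to rounding
```

The second case illustrates the limitation noted above: the two bits are strongly correlated, yet no common part can be extracted, so GK common information vanishes.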
Distributed dependent random variables are an important resource in the cryptographic task of secure multi-party computation. A fundamental problem here is for two parties to securely generate a certain pair of random variables, given another pair of random variables, by means of a protocol. Our main result there was that the assisted residual information region of the views of two parties engaged in such a protocol can only monotonically expand and not shrink, which immediately leads to upperbounds on the efficiency with which a target pair of random variables can be generated from another pair. This work generalized previous work on monotones [17]. These works are in the same vein as [1], [4], [15], [10], [9], [7], [2], [14], which employ information theory to derive bounds on efficiency in cryptography.

In the first part of this paper we explore connections between the assisted common information system and the Gray-Wyner source coding system of [6]. In the Gray-Wyner system, a pair of sources is decomposed into three components: one public and two private. Using the public and one of the private components, one of the pair of sources must be recoverable, while the other source must be recoverable using the other private component and the public component. The Gray-Wyner region is a three-dimensional region which characterizes the trade-offs between the rates at which the three components can be encoded. We show that the assisted residual information region and the Gray-Wyner region are connected by a simple relationship: the assisted residual information region is the increasing hull of the Gray-Wyner region under an affine map (the increasing hull $i(S)$ of a set $S \subseteq \mathbb{R}^d$ is the set of all $s \in \mathbb{R}^d$ such that there is an $s' \in S$ with $s \geq s'$, where the inequality is component-wise). Several known relationships between GK common information and the Gray-Wyner system fall out as consequences of this. This also leads
to alternative interpretations (in terms of the assisted common information system) of quantities which arise naturally in certain other source coding contexts. However, it must be noted that the Gray-Wyner region itself does not possess the monotonicity property, which makes it less suited for the cryptographic application which motivated [13].

Fig. 1: Setup for assisted common information system. The users generate $W_1$ and $W_2$, which are required to agree with high probability. A genie assists the users by sending separate messages to them over rate-limited noiseless links. When the genie is absent the setup reduces to the one for Gács-Körner common information. (Figure labels: User 1, User 2, genie; $X_1, X_2, \ldots, X_n$; $Y_1, Y_2, \ldots, Y_n$; $W_1$, $W_2$; $R_1$, $R_2$.)

Fig. 2: Setup for Gray-Wyner (GW) system. (Figure labels: Encoder, Decoder 1, Decoder 2; $X_1, X_2, \ldots, X_n$; $Y_1, Y_2, \ldots, Y_n$; $\hat{X}_1, \hat{X}_2, \ldots, \hat{X}_n$; $\hat{Y}_1, \hat{Y}_2, \ldots, \hat{Y}_n$.)

The second half of the paper is a sequel to the cryptographic application in [13]. There we showed an example where our upperbound (on the efficiency with which a pair of random variables can be securely generated from another pair) strictly improved upon bounds from previous results. That example was contrived to highlight the shortcomings of prior work. Here we give yet another example where the upperbound from our result strictly improves on the prior work, but is further interesting for two reasons: firstly, the new example is based on natural correlated random variables that are widely studied (namely, variants of oblivious transfer), and secondly, the new upperbound we can prove actually matches an easy lowerbound and is therefore tight.

II. PRELIMINARIES

A. Assisted Common Information System

We presented the following generalization of GK common information at ISIT 2010 [13]. We call it the assisted common information system. Consider Figure 1. For a pair of random variables $(X, Y)$, we say that a rate pair $(R_1, R_2)$ enables a residual information rate $R_{RD}$ if for every $\epsilon > 0$, there is a large enough integer $n$ and (deterministic) functions $f_k : \mathcal{X}^n \times \mathcal{Y}^n \to \{1, \ldots, 2^{n(R_k + \epsilon)}\}$, $(k = 1, 2)$, $g_1 : \mathcal{X}^n \times \{1, \ldots, 2^{n(R_1 + \epsilon)}\} \to \mathbb{Z}$, and $g_2 : \mathcal{Y}^n \times \{1, \ldots, 2^{n(R_2 + \epsilon)}\} \to \mathbb{Z}$ (where $\mathbb{Z}$ is the set of integers) such that

$$\Pr\big(g_1(X^n, f_1(X^n, Y^n)) \neq g_2(Y^n, f_2(X^n, Y^n))\big) \leq \epsilon, \tag{1}$$

$$\frac{1}{n} I\big(X^n; Y^n \mid g_1(X^n, f_1(X^n, Y^n))\big) \leq R_{RD} + \epsilon. \tag{2}$$

Definition 2.1: We define the assisted residual information region² $\mathcal{R}_{ACI}(X, Y)$ of a pair of random variables $(X, Y)$ with joint distribution $p_{X,Y}$ as the set of all $(r_1, r_2, r_{RD}) \in \mathbb{R}_+^3$ for which there is a $(R_1, R_2, R_{RD})$ such that $r_1 \geq R_1$, $r_2 \geq R_2$, $r_{RD} \geq R_{RD}$, and $(R_1, R_2)$ enables the residual information rate $R_{RD}$. In other words,

$$\mathcal{R}_{ACI}(X, Y) \triangleq i\big(\{(R_1, R_2, R_{RD}) : (R_1, R_2) \text{ enables } R_{RD}\}\big),$$

where $i(S)$ denotes the increasing hull of $S \subseteq \mathbb{R}_+^3$: $i(S) = \{s \in \mathbb{R}_+^3 : s \geq s' \text{ component-wise for some } s' \in S\}$. We will write $\mathcal{R}_{ACI}$ when the random variables involved are obvious from the context.

When the two rates from the genie are zero, we recover Gács-Körner common information, $C_{GK}$ [5], [16]. Let

$$R_{RD\text{-}0} \triangleq \inf\{R_{RD} : (0, 0, R_{RD}) \in \mathcal{R}_{ACI}(X, Y)\}.$$

Then we have the following proposition.

Proposition 2.1:

$$C_{GK}(X, Y) = I(X; Y) - R_{RD\text{-}0}. \tag{3}$$

Further,

$$R_{RD\text{-}0} = \inf_{p_{U|X,Y} :\, I(X;U|Y) = I(Y;U|X) = 0} I(X; Y | U), \tag{4}$$

which gives

$$C_{GK}(X, Y) = \sup_{p_{U|X,Y} :\, I(X;U|Y) = I(Y;U|X) = 0} H(U). \tag{5}$$

Moreover, $C_{GK}(X, Y) = 0$ unless there are $X', Y', U'$ such that $X = (X', U')$, $Y = (Y', U')$, in which case

$$C_{GK} = \max_{U' :\, X = (X', U'),\, Y = (Y', U')} H(U').$$

The proofs of this proposition and all other results are available in the appendix. The proof of (4) relies on the following characterization of $\mathcal{R}_{ACI}$, which was proved in [13]. Let $\mathcal{P}$ be the set of all conditional p.m.f.'s $p_{U|X,Y}$ such that the cardinality of the alphabet $\mathcal{U}$ of $U$ is $|\mathcal{X}||\mathcal{Y}| + 2$.

Proposition 2.2:

$$\mathcal{R}_{ACI}(X, Y) = i\Big(\bigcup_{p_{U|X,Y} \in \mathcal{P}} \big\{\big(I(Y; U | X),\, I(X; U | Y),\, I(X; Y | U)\big)\big\}\Big)$$

² We may also define an analogous assisted common information region by replacing the definition in (2) by $\frac{1}{n} I\big(X^n, Y^n; g_1(X^n, f_1(X^n, Y^n))\big) \geq R_{CI} - \epsilon$. See [13] for this and its connection to the above definition.
In effect, the definitions are equivalent, as we discuss there. We work with the assisted residual information region since it has a simple monotonicity property (Theorem 4.1) which makes it appealing for deriving bounds for secure two-party sampling.

B. Gray-Wyner system

The Gray-Wyner system is shown in Figure 2. It is a source coding problem formulated as follows: We say that a rate 3-tuple $(R_A, R_B, R_C)$ is achievable if for every $\epsilon > 0$, there is a large enough integer $n$ and (deterministic) encoder functions $f_A : \mathcal{X}^n \times \mathcal{Y}^n \to \{1, \ldots, 2^{n(R_A + \epsilon)}\}$, $f_B : \mathcal{X}^n \times \mathcal{Y}^n \to \{1, \ldots, 2^{n(R_B + \epsilon)}\}$, $f_C : \mathcal{X}^n \times \mathcal{Y}^n \to \{1, \ldots, 2^{n(R_C + \epsilon)}\}$, and (deterministic) decoder functions $g_{AC} : \{1, \ldots, 2^{n(R_A + \epsilon)}\} \times \{1, \ldots, 2^{n(R_C + \epsilon)}\} \to \mathcal{X}^n$, and $g_{BC} : \{1, \ldots, 2^{n(R_B + \epsilon)}\} \times \{1, \ldots, 2^{n(R_C + \epsilon)}\} \to \mathcal{Y}^n$ such that

$$\Pr\big(g_{AC}(f_A(X^n, Y^n), f_C(X^n, Y^n)) \neq X^n\big) \leq \epsilon, \tag{6}$$

$$\Pr\big(g_{BC}(f_B(X^n, Y^n), f_C(X^n, Y^n)) \neq Y^n\big) \leq \epsilon. \tag{7}$$

Definition 2.2: The Gray-Wyner region $\mathcal{R}_{GW}(X, Y)$ is the set of all achievable rate 3-tuples. We write $\mathcal{R}_{GW}$ when the random variables are clear from the context.

A simple lower bound to $\mathcal{R}_{GW}(X, Y)$ is

$$\mathcal{L}_{GW}(X, Y) = \{(R_A, R_B, R_C) : R_A + R_C \geq H(X),\; R_B + R_C \geq H(Y),\; R_A + R_B + R_C \geq H(X, Y)\}. \tag{8}$$

The Gray-Wyner region was characterized in [6].

Proposition 2.3 ([6]):

$$\mathcal{R}_{GW}(X, Y) = i\Big(\bigcup_{p_{U|X,Y} \in \mathcal{P}} \big\{\big(H(X | U),\, H(Y | U),\, I(X, Y; U)\big)\big\}\Big)$$

The Gray-Wyner system generalizes the setup for Wyner's common information [19], which is defined as the smallest $R_C$ such that the outputs of the encoder taken together are an asymptotically efficient representation of $(X, Y)$, i.e., when $R_A + R_B + R_C = H(X, Y)$. Using the above proposition we have

Proposition 2.4:

$$C_{Wyner}(X, Y) = \inf\{R_C : (R_A, R_B, R_C) \in \mathcal{R}_{GW}(X, Y),\; R_A + R_B + R_C = H(X, Y)\} = \inf_{p_{U|X,Y} \in \mathcal{P} :\, X - U - Y} I(X, Y; U)$$

C. Known connections

The following connections between the two systems are known:

• Gács-Körner common information can be obtained from the Gray-Wyner region [3, Problem 4.28, pg. 404].

$$C_{GK}(X, Y) = \sup\{R_C : R_A + R_C = H(X),\; R_B + R_C = H(Y),\; (R_A, R_B, R_C) \in \mathcal{R}_{GW}\} \tag{9}$$

Alternatively [11],

$$C_{GK}(X, Y) = \sup\{R : R \leq I(X; Y),\; \{R_C = R\} \cap \mathcal{L}_{GW} \subseteq \mathcal{R}_{GW}\} \tag{10}$$

• Wyner's common information can be obtained from the Gács-Körner system [13, Corollary 2.3].

$$C_{Wyner}(X, Y) = I(X; Y) + \inf_{(R_1, R_2, 0) \in \mathcal{R}_{ACI}} (R_1 + R_2). \tag{11}$$

III. RELATIONSHIP BETWEEN ASSISTED COMMON INFORMATION AND GRAY-WYNER SYSTEMS

Theorem 3.1: Let $\mathcal{R}'_{GW}(X, Y)$ be the image of $\mathcal{R}_{GW}(X, Y)$ under the affine map $f_{X,Y}$ defined below.

$$f_{X,Y}\begin{pmatrix} R_A \\ R_B \\ R_C \end{pmatrix} \triangleq \begin{pmatrix} R_A + R_C - H(X) \\ R_B + R_C - H(Y) \\ R_A + R_B + R_C - H(X, Y) \end{pmatrix}.$$

Then $\mathcal{R}_{ACI}(X, Y) = i(\mathcal{R}'_{GW}(X, Y))$.

Thus, the assisted residual information region $\mathcal{R}_{ACI}(X, Y)$ is the increasing hull of the Gray-Wyner region $\mathcal{R}_{GW}(X, Y)$ under an affine map $f_{X,Y}$. The map, in fact, computes the gap of $\mathcal{R}_{GW}(X, Y)$ to the simple lower bound $\mathcal{L}_{GW}(X, Y)$ of (8) under a coordinate transformation. The first coordinate of $\mathcal{R}'_{GW}$ is the gap between the (sum) rate at which the first decoder in the Gray-Wyner system receives data and the minimum possible rate at which it may receive data so that it can losslessly reproduce $X^n$. The second coordinate has a similar interpretation with respect to the second decoder. The third coordinate is the gap between the rate at which the encoder sends data and the minimum possible rate at which it may transmit to allow both decoders to losslessly reproduce their respective sources.
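The relationship in Theorem 3.1 can be spot-checked numerically at the level of individual points: for any fixed $p_{U|X,Y}$, applying $f_{X,Y}$ to the Gray-Wyner point $(H(X|U), H(Y|U), I(X,Y;U))$ of Proposition 2.3 should give the point $(I(Y;U|X), I(X;U|Y), I(X;Y|U))$ of Proposition 2.2. The sketch below checks this for one randomly drawn distribution. It is only an illustration of the pointwise identity, not a proof of the theorem, and all function names, alphabet sizes, and the random seed are assumptions made for the example.

```python
import math
import random


def entropy(dist):
    """Shannon entropy in bits of a dict {outcome: probability}."""
    return -sum(p * math.log2(p) for p in dist.values() if p > 0)


def marginal(joint, keep):
    """Marginalize a dict keyed by (x, y, u) onto the coordinate indices in `keep`."""
    out = {}
    for key, p in joint.items():
        sub = tuple(key[i] for i in keep)
        out[sub] = out.get(sub, 0.0) + p
    return out


def mapped_gw_point_and_aci_point(pxy, pu_given_xy):
    """Return (f_{X,Y} applied to the Gray-Wyner point, the assisted-region point)."""
    joint = {(x, y, u): p * q
             for (x, y), p in pxy.items()
             for u, q in pu_given_xy[(x, y)].items()}
    h = lambda *keep: entropy(marginal(joint, keep))
    hx, hy, hu = h(0), h(1), h(2)
    hxy, hxu, hyu, hxyu = h(0, 1), h(0, 2), h(1, 2), h(0, 1, 2)

    # Gray-Wyner point (H(X|U), H(Y|U), I(X,Y;U)).
    ra, rb, rc = hxu - hu, hyu - hu, hxy + hu - hxyu
    # Affine map of Theorem 3.1.
    mapped = (ra + rc - hx, rb + rc - hy, ra + rb + rc - hxy)
    # Assisted-region point (I(Y;U|X), I(X;U|Y), I(X;Y|U)), computed independently.
    aci = ((hxy - hx) - (hxyu - hxu),
           (hxy - hy) - (hxyu - hyu),
           hxu + hyu - hu - hxyu)
    return mapped, aci


def random_pmf(keys, rng):
    """A random p.m.f. on `keys` (normalized uniform weights)."""
    weights = [rng.random() for _ in keys]
    total = sum(weights)
    return {k: w / total for k, w in zip(keys, weights)}


rng = random.Random(0)
pairs = [(x, y) for x in range(2) for y in range(3)]
pxy = random_pmf(pairs, rng)
pu_given_xy = {xy: random_pmf(range(3), rng) for xy in pairs}
mapped, aci = mapped_gw_point_and_aci_point(pxy, pu_given_xy)
max_gap = max(abs(a - b) for a, b in zip(mapped, aci))  # expected to be rounding error only
```

The two triples are computed from different entropy combinations, so their agreement reflects the identities $H(X|U) + I(X,Y;U) - H(X) = I(Y;U|X)$ (and its symmetric counterpart) and $H(X|U) + H(Y|U) - H(X,Y|U) = I(X;Y|U)$.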
It must, however, be noted that the Gray-Wyner region itself does not possess the monotonicity property of $\mathcal{R}_{ACI}$ which leads to Theorem 4.1, and it is therefore less suited for the cryptographic application which motivated [13].

The two points we noted in Section II-C fall out of Theorem 3.1.

Corollary 3.2:

$$C_{GK}(X,Y) = \sup\{R_C : R_A + R_C = H(X),\ R_B + R_C = H(Y),\ (R_A,R_B,R_C) \in \mathcal{R}_{GW}(X,Y)\} \tag{9}$$
$$= \sup\{R : R \le I(X;Y),\ \{R_C = R\} \cap \mathcal{L}_{GW}(X,Y) \subseteq \mathcal{R}_{GW}(X,Y)\}. \tag{10}$$

Corollary 3.3:

$$C_{\mathrm{Wyner}}(X,Y) = I(X;Y) + \inf_{(R_1,R_2,0) \in \mathcal{R}_{ACI}(X,Y)} (R_1 + R_2). \tag{11}$$

Analogous to the definition of $R_{\mathrm{RD\text{-}0}}$, we define the intercepts on the other two axes:

$$R_{1\text{-}0} \triangleq \inf\{R_1 : (R_1, 0, 0) \in \mathcal{R}_{ACI}\},$$
$$R_{2\text{-}0} \triangleq \inf\{R_2 : (0, R_2, 0) \in \mathcal{R}_{ACI}\}.$$

$R_{1\text{-}0}$ (resp., $R_{2\text{-}0}$) is the rate at which the genie must communicate when it has a link to only the user who receives the $X$ (resp., $Y$) source, so that the users can produce a common random variable conditioned on which the sources are independent (see footnote 3). Using Proposition 2.2 we can show that

$$R_{1\text{-}0} = \inf_{p_{U|X,Y} \in \mathcal{P} :\, I(X;U|Y) = I(X;Y|U) = 0} I(Y;U|X), \tag{12}$$
$$R_{2\text{-}0} = \inf_{p_{U|X,Y} \in \mathcal{P} :\, I(Y;U|X) = I(X;Y|U) = 0} I(X;U|Y). \tag{13}$$

These quantities were identified in [17] and shown to possess a monotonicity property in the context of secure two-party sampling (a result which [13] generalized). As we will show below, this pair of quantities is closely related to a pair which has been identified elsewhere in the context of lossless coding with side-information [12] and the Gray-Wyner system [11]. Let (following the notation of [11])

$$G(Y \to X) = \inf\{R_C : (H(X|Y),\ H(Y) - R_C,\ R_C) \in \mathcal{R}_{GW}(X,Y)\},$$
$$G(X \to Y) = \inf\{R_C : (H(X) - R_C,\ H(Y|X),\ R_C) \in \mathcal{R}_{GW}(X,Y)\}.$$
It has been shown [12], [11] that $G(Y \to X)$ is the smallest rate at which side-information $Y$ may be coded and sent to a decoder which is interested in recovering $X$ with asymptotically vanishing probability of error, if the decoder receives $X$ coded and sent at a rate of only $H(X|Y)$ (which is the minimum possible rate which will allow such recovery). Further, [11] arrives at the maximum of $G(Y \to X)$ and $G(X \to Y)$ as a dual to the alternative definition of $C_{GK}$ in (10) from the Gray-Wyner system. We have the following relationship between the two pairs of quantities.

Corollary 3.4:

$$G(Y \to X) = I(X;Y) + R_{1\text{-}0}, \tag{14}$$
$$G(X \to Y) = I(X;Y) + R_{2\text{-}0}. \tag{15}$$

Further,

$$\inf\{R : R \ge I(X;Y),\ \{R_C = R\} \cap \mathcal{L}_{GW}(X,Y) \subseteq \mathcal{R}_{GW}(X,Y)\} = \max(G(Y \to X),\, G(X \to Y)) \tag{16}$$
$$= I(X;Y) + \max(R_{1\text{-}0},\, R_{2\text{-}0}). \tag{17}$$

Footnote 3: Though the definition allows for zero-rate communication to the other user and a zero-rate (but non-zero) residual conditional mutual information, it can be shown from the expressions for these rates in (12)-(13) that there is a scheme which achieves exact conditional independence and requires no communication to the other user.

IV. CRYPTOGRAPHIC APPLICATION

The cryptographic problem we consider is 2-party secure sampling: Alice and Bob should sample correlated random variables $(U,V)$ (Alice getting $U$ and Bob getting $V$), such that Alice's view during the sampling protocol reveals nothing more to her about Bob's outcome $V$ than what her own outcome $U$ reveals to her, and similarly Bob's view reveals nothing more about Alice's outcome than is revealed by his own outcome. This is an important special case of secure multi-party computation, a central problem in modern cryptography.
However, it is well known (see for instance [18] and references therein) that very few distributions can be sampled from in this way, unless the computation is aided by a set up: some correlated random variables that are given to the parties at the beginning of the protocol. The set up itself will be from some distribution $(X,Y)$ (Alice gets $X$ and Bob gets $Y$) which is different from the desired distribution $(U,V)$. The fundamental question then is which set ups $(X,Y)$ can be used to securely sample which distributions $(U,V)$, and how efficiently.

We restrict ourselves to the setting of honest-but-curious players. In this case, the requirements on a protocol $\Pi$ for securely sampling $(U,V)$ given a set up $(X,Y)$ can be stated as follows, in terms of the outputs and the views of the parties from the protocol (see footnote 4):

$$(\Pi^{\mathrm{out}}_{\mathrm{Alice}}(X,Y),\ \Pi^{\mathrm{out}}_{\mathrm{Bob}}(X,Y)) = (U,V),$$
$$\Pi^{\mathrm{view}}_{\mathrm{Alice}}(X,Y) \leftrightarrow \Pi^{\mathrm{out}}_{\mathrm{Alice}}(X,Y) \leftrightarrow \Pi^{\mathrm{out}}_{\mathrm{Bob}}(X,Y),$$
$$\Pi^{\mathrm{out}}_{\mathrm{Alice}}(X,Y) \leftrightarrow \Pi^{\mathrm{out}}_{\mathrm{Bob}}(X,Y) \leftrightarrow \Pi^{\mathrm{view}}_{\mathrm{Bob}}(X,Y).$$

These three conditions correspond to correctness, security against a curious Alice, and security against a curious Bob, respectively.

In [13], we showed that the region $\mathcal{R}_{ACI}$ can be used as a measure of cryptographic complexity of correlated random variables (a smaller region $\mathcal{R}_{ACI}$ corresponding to a higher complexity), in that the rate at which a pair $(U,V)$ can be securely sampled given a set up $(X,Y)$ can be upperbounded by the ratio of their complexity measures. More formally, there we presented the following result. (For completeness, a proof is provided in the appendix.)

Theorem 4.1 ([13]): If $n_1$ independent copies of a pair of correlated random variables $(U,V)$ can be securely realized from $n_2$ independent copies of a pair of correlated random variables $(X,Y)$, then $n_2 \mathcal{R}_{ACI}(X,Y) \subseteq n_1 \mathcal{R}_{ACI}(U,V)$ (where multiplication by $n$ refers to $n$-times repeated Minkowski sum).
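As a small worked illustration of how Theorem 4.1 turns into a rate bound, consider any quantity that is additive over independent copies and can only decrease when the region grows, such as an axis intercept of $\mathcal{R}_{ACI}$ or the smallest sum $R_1 + R_2$ at $R_{\mathrm{RD}} = 0$. Under the reading that the region of the set-up copies is contained in the region of the generated copies, such a quantity bounds the number of target copies per set-up copy by the ratio of its two values. The sketch below is only arithmetic under that assumption; the function names are hypothetical, and the numbers plugged in are the ones quoted for Example 4.1 in this note.

```python
from fractions import Fraction

def efficiency_upper_bound(setup_value, target_value):
    """Bound on (target copies) / (set-up copies) from one additive quantity.

    setup_value:  value (or an upper bound on it) for one copy of the set up (X, Y)
    target_value: value of the same quantity for one copy of the target (U, V)
    """
    if target_value <= 0:
        raise ValueError("the target value must be positive to give a bound")
    return Fraction(setup_value) / Fraction(target_value)

def example_bounds(L):
    """Bounds for the string-OT example, using values quoted in Example 4.1."""
    # Axis intercept: 1 + L for the string-OT pair, 2 for the bit-OT pair (L = 1).
    intercept_bound = efficiency_upper_bound(1 + L, 2)
    # Sum-rate at R_RD = 0: at most 2 for the set up, exactly 2 for the target.
    sum_rate_bound = efficiency_upper_bound(2, 2)
    return intercept_bound, sum_rate_bound

for L in (1, 4, 16):
    print(L, example_bounds(L))
```

The intercept-based bound grows with $L$ while the sum-rate bound stays at 1, which is the gap between the earlier bound and the one obtained from the full region.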
In [13] we gave an instance of pairs $(U,V)$ and $(X,Y)$ such that the upperbound on the rate at which instances of $(U,V)$ can be securely sampled from instances of $(X,Y)$ that is implied by the above result strictly improved on the upperbounds that could be derived from previous results. These pairs were contrived to highlight the shortcomings of prior work. Here we give another example where the upperbound from our result strictly improves on prior work, and which is further interesting for two reasons: first, the new example is based on natural correlated random variables that are widely studied (namely, variants of oblivious transfer), and second, the new upperbound we can prove matches an easy lowerbound and is therefore tight.

Footnote 4: Here we state the conditions for "perfect security," but our definitions and results generalize to the setting of "statistical security," where a small statistical error is allowed.

A. A New Example

We now discuss the new example where our upperbound is not only strictly better than the previously best available upperbound, but is also tight.

Example 4.1: Let $S_{A,1}, S_{A,2}, S_{B,1}, S_{B,2} \in \{0,1\}^L$ and $C_A, C_B \in \{1,2\}$ be six independent random variables, all of which are uniformly distributed over their alphabets. Consider a pair of random variables $X, Y$ defined as $X = (C_A, S_{A,1}, S_{A,2}, S_{B,C_A})$ and $Y = (C_B, S_{B,1}, S_{B,2}, S_{A,C_B})$. Notice that these are in fact a pair of independent string-oblivious transfers (string-OTs) of string length $L$ in opposite directions. Let $U, V$ be a pair of random variables whose joint distribution is the same as that of $X, Y$, but with $L = 1$. In other words, $U, V$ are a pair of independent bit-OTs in opposite directions. The goal is to characterize the efficiency with which we may securely generate independent instances of $U, V$ from independent instances of $X, Y$ for $L > 1$.
Here efficiency is the supremum of $n_2/n_1$ over secure sampling schemes which produce $n_2$ independent copies of $(U,V)$ from $n_1$ independent copies of $(X,Y)$.

It is easy to see that $\mathcal{R}_{ACI}(X,Y)$ intersects the coordinate axes at $(1+L, 0, 0)$, $(0, 1+L, 0)$, and $(0, 0, 2L)$. From these we can immediately obtain the upperbound of [17] on the efficiency, namely $(1+L)/2$. Notice that this is dependent on $L$ and would suggest that (several) long string-OT pairs can be turned into several (more) bit-OT pairs. However, as we show below, the efficiency of conversion is just 1, i.e., the best one can do is to turn each pair of string-OTs into a pair of bit-OTs.

We will show that $\inf\{R_1 + R_2 : (R_1, R_2, 0) \in \mathcal{R}_{ACI}(U,V)\} = 2$. But $(1,1,0) \in \mathcal{R}_{ACI}(X,Y)$. This can be seen by setting $Q = (C_A, C_B, S_{A,C_B}, S_{B,C_A})$, for which $(R_1, R_2, R_{\mathrm{RD}}) = (1,1,0)$. Thus, $\inf\{R_1 + R_2 : (R_1, R_2, 0) \in \mathcal{R}_{ACI}(X,Y)\} \le 2$. Hence, from Theorem 4.1, we may conclude that the efficiency of conversion we are after is 1. It only remains to characterize $\inf\{R_1 + R_2 : (R_1, R_2, 0) \in \mathcal{R}_{ACI}(U,V)\}$. The following lemma, which is proved in the appendix, provides the required characterization.

Lemma 4.2: $\inf\{R_1 + R_2 : (R_1, R_2, 0) \in \mathcal{R}_{ACI}(U,V)\} = 2$.

ACKNOWLEDGEMENTS

The authors would like to gratefully acknowledge discussions with Venkat Anantharam, Péter Gács, and Young-Han Kim. The example in Section IV-A is based on a suggestion by Jürg Wullschleger.

REFERENCES

[1] D. Beaver, "Correlated pseudorandomness and the complexity of private computations," in Proc. 28th STOC, pp. 479–488. ACM, 1996.
[2] I. Csiszár and R. Ahlswede, "On oblivious transfer capacity," in Proc. International Symposium on Information Theory (ISIT), pp. 2061–2064, 2007.
[3] I. Csiszár and J. Körner, Information Theory: Coding Theorems for Discrete Memoryless Systems, Akadémiai Kiadó, Budapest, 1981.
[4] Y. Dodis and S. Micali, "Lower bounds for oblivious transfer reductions," in Jacques Stern, editor, EUROCRYPT, vol. 1592 of Lecture Notes in Computer Science, pp. 42–55. Springer, 1999.
[5] P. Gács and J. Körner, "Common information is far less than mutual information," Problems of Control and Information Theory, 2(2):119–162, 1973.
[6] R. M. Gray and A. D. Wyner, "Source coding for a simple network," Bell System Technical Journal, vol. 53, pp. 1681–1721, 1974.
[7] H. Imai, K. Morozov, and A. C. A. Nascimento, "On the oblivious transfer capacity of the erasure channel," in Proc. International Symposium on Information Theory (ISIT), pp. 1428–1431, 2006.
[8] H. Imai, K. Morozov, and A. C. A. Nascimento, "Efficient oblivious transfer protocols achieving a non-zero rate from any non-trivial noisy correlation," in International Conference on Information Theoretic Security (ICITS), 2007.
[9] H. Imai, K. Morozov, A. C. A. Nascimento, and A. Winter, "Efficient protocols achieving the commitment capacity of noisy correlations," in International Symposium on Information Theory (ISIT), pp. 1432–1436, 2006.
[10] H. Imai, J. Müller-Quade, A. C. A. Nascimento, and A. Winter, "Rates for bit commitment and coin tossing from noisy correlation," in International Symposium on Information Theory (ISIT), pp. 45–, 2004.
[11] S. Kamath and V. Anantharam, "A new dual to the Gács-Körner common information defined via the Gray-Wyner system," in Proc. 48th Allerton Conf. on Communication, Control, and Computing, pp. 1340–1346, 2010.
[12] D. Marco and M. Effros, "On lossless coding with coded side information," IEEE Transactions on Information Theory, vol. 55, no. 7, pp. 3284–3296, 2009.
[13] V. M. Prabhakaran and M. Prabhakaran, "Assisted common information," in International Symposium on Information Theory (ISIT), pp. 2602–2606, 2010. Extended draft available at http://arxiv.org/abs/1002.1916.
[14] S. Winkler and J. Wullschleger, "Statistical impossibility results for oblivious transfer reductions," Cryptology ePrint Archive, Report 2009/508, 2009. http://eprint.iacr.org/.
[15] A. Winter, A. C. A. Nascimento, and H. Imai, "Commitment capacity of discrete memoryless channels," in Kenneth G. Paterson, editor, IMA Int. Conf., vol. 2898 of Lecture Notes in Computer Science, pp. 35–51. Springer, 2003.
[16] H. S. Witsenhausen, "On sequences of pairs of dependent random variables," SIAM Journal on Applied Mathematics, 28:100–113, 1975.
[17] S. Wolf and J. Wullschleger, "New monotones and lower bounds in unconditional two-party computation," IEEE Transactions on Information Theory, 54(6):2792–2797, 2008.
[18] J. Wullschleger, Oblivious-Transfer Amplification. Ph.D. thesis, Swiss Federal Institute of Technology, Zürich. http://arxiv.org/abs/cs/0608076.
[19] A. D. Wyner, "The common information of two dependent random variables," IEEE Transactions on Information Theory, 21(2):163–179, 1975.

APPENDIX

Proof of Proposition 2.1: GK common information $C_{GK}$ is defined as the supremum of the set of $R$ such that for every $\epsilon > 0$ there are maps $g_1 : \mathcal{X}^n \to \mathcal{Z}$ and $g_2 : \mathcal{Y}^n \to \mathcal{Z}$ for a sufficiently large $n$ which satisfy

$$\Pr(g_1(X^n) \ne g_2(Y^n)) \le \epsilon, \tag{18}$$
$$\frac{1}{n} H(g_1(X^n)) \ge R - \epsilon. \tag{19}$$

An alternative definition which allows for a genie with zero-rate links to the users is given below. It is easy to see that this can only lead to a larger value. But, as we will show, the definitions are in fact equivalent. Let $C'_{GK}$ be the supremum of the set of $R$ such that for every $\epsilon > 0$ there are maps $f_k : \mathcal{X}^n \times \mathcal{Y}^n \to \{1,\ldots,2^{n\epsilon}\}$ ($k = 1, 2$), $g_1 : \mathcal{X}^n \times \{1,\ldots,2^{n\epsilon}\} \to \mathcal{Z}$, and $g_2 : \mathcal{Y}^n \times \{1,\ldots,2^{n\epsilon}\} \to \mathcal{Z}$ for a sufficiently large $n$ which satisfy (1) and

$$\frac{1}{n} H(g_1(X^n, f_1(X^n, Y^n))) \ge R - \epsilon.$$

Clearly, $C'_{GK} \ge C_{GK}$. We first show

$$C'_{GK} = I(X;Y) - R_{\mathrm{RD\text{-}0}}. \tag{20}$$

Let $U = g_1(X^n, f_1(X^n, Y^n))$. Then

$$I(X^n;Y^n|U) + H(U) = I(X^n;Y^n|U) + I(X^n,Y^n;U)$$
$$= H(X^n,Y^n) - H(X^n|Y^n,U) - H(Y^n|X^n,U)$$
$$= I(X^n;Y^n) + I(X^n;U|Y^n) + I(Y^n;U|X^n)$$
$$\ge n I(X;Y).$$

Therefore, if the maps satisfy (2), then

$$H(U) \ge n I(X;Y) - I(X^n;Y^n|U) \ge n I(X;Y) - n(R_{\mathrm{RD}} + \epsilon) = n(I(X;Y) - R_{\mathrm{RD}} - \epsilon),$$

which implies (20). With $C_{GK}$ replaced by $C'_{GK}$, we can prove (4)-(5) as follows: (4) follows from Proposition 2.2; (4) and (3) imply (5). See [13, Section II.B] for a proof from (5) of the explicit characterization stated at the end of the proposition. Since this explicit form can be achieved without any communication from the genie, it follows that $C'_{GK} = C_{GK}$.

The following mutual information identities, numbered (20)-(22) in the original layout, are used below:

$$I(Y;U|X) = I(X,Y;U) - I(X;U) = H(X|U) + I(X,Y;U) - H(X), \tag{20}$$
$$I(X;U|Y) = I(X,Y;U) - I(Y;U) = H(Y|U) + I(X,Y;U) - H(Y), \tag{21}$$
$$I(X;Y|U) = H(X|U) + H(Y|U) - H(X,Y|U) = H(X|U) + H(Y|U) + I(X,Y;U) - H(X,Y). \tag{22}$$

Proof of Theorem 3.1: It is easy to prove the theorem from the single-letter expressions for the regions in Propositions 2.2 and 2.3 by making use of the mutual information identities (20)-(22) displayed above.

Proof of Corollary 3.2:

$$\sup\{R_C : R_A + R_C = H(X),\ R_B + R_C = H(Y),\ (R_A,R_B,R_C) \in \mathcal{R}_{GW}\}$$
$$\overset{(a)}{=} \sup\{R : (0, 0, I(X;Y) - R) \in \mathcal{R}'_{GW}\}$$
$$\overset{(b)}{=} \sup\{R : (0, 0, I(X;Y) - R) \in \mathcal{R}_{ACI}\}$$
$$\overset{(c)}{=} C_{GK}(X,Y),$$

where (a) follows from the definition $\mathcal{R}'_{GW} = f(\mathcal{R}_{GW})$. The $\le$ direction of (b) follows directly from Theorem 3.1. Strict inequality cannot hold, since if $(0, 0, I(X;Y) - R) \in \mathcal{R}_{ACI}$, then there is an $R' \ge R$ such that $(0, 0, I(X;Y) - R') \in \mathcal{R}'_{GW}$.
Finally, (c) follows from Proposition 2.1. To arrive at the alternative form, we verify the equivalence of the two forms:

$$\{R : R \le I(X;Y),\ \{R_C = R\} \cap \mathcal{L}_{GW} \subseteq \mathcal{R}_{GW}\} = \{R_C : R_A + R_C = H(X),\ R_B + R_C = H(Y),\ (R_A,R_B,R_C) \in \mathcal{R}_{GW}\}.$$

$\subseteq$: If $R \le I(X;Y)$, then $(H(X) - R,\ H(Y) - R,\ R) \in \{R_C = R\} \cap \mathcal{L}_{GW}$.

$\supseteq$: Let $s = (H(X) - R_C,\ H(Y) - R_C,\ R_C) \in \mathcal{R}_{GW}$. Then (a) $R_C \le I(X;Y)$ since $s \in \mathcal{L}_{GW}$, and (b) if $s' = (r_A, r_B, R_C) \in \mathcal{L}_{GW}$, then since $r_A \ge H(X) - R_C$ and $r_B \ge H(Y) - R_C$, we have $s' \ge s$ (component-wise), which implies that $s' \in \mathcal{R}_{GW}$ from the definition of the GW system.

Proof of Corollary 3.3:

$$C_{\mathrm{Wyner}} = \inf\{R_C : (R_A,R_B,R_C) \in \mathcal{R}_{GW},\ R_A + R_B + R_C = H(X,Y)\}$$
$$\overset{(a)}{=} \inf\{R_1 + R_2 + I(X;Y) : (R_1, R_2, 0) \in \mathcal{R}'_{GW}\}$$
$$\overset{(b)}{=} \inf\{R_1 + R_2 + I(X;Y) : (R_1, R_2, 0) \in \mathcal{R}_{ACI}\},$$

where (a) follows from the definition $\mathcal{R}'_{GW} = f(\mathcal{R}_{GW})$, and (b) follows from Theorem 3.1: the $\ge$ direction follows directly from the theorem, and strict inequality cannot hold since, by the theorem, if $(R_1, R_2, 0) \in \mathcal{R}_{ACI}$ then there exists $(R'_1, R'_2, 0) \in \mathcal{R}'_{GW}$ such that $R'_1 \le R_1$ and $R'_2 \le R_2$.

Proof of Corollary 3.4:

$$G(Y \to X) = \inf\{R_C : (H(X|Y),\ H(Y) - R_C,\ R_C) \in \mathcal{R}_{GW}\}$$
$$\overset{(a)}{=} \inf\{R : (R - I(X;Y), 0, 0) \in \mathcal{R}'_{GW}\}$$
$$\overset{(b)}{=} \inf\{R : (R - I(X;Y), 0, 0) \in \mathcal{R}_{ACI}\}$$
$$\overset{(c)}{=} I(X;Y) + R_{1\text{-}0},$$

where (a) follows from $\mathcal{R}'_{GW} = f(\mathcal{R}_{GW})$, (b) is a consequence of Theorem 3.1, and (c) follows from the definition of $R_{1\text{-}0}$. Similarly we get (15). The equality (16) is proved in [11], which along with (14)-(15) implies (17).

Proof of Theorem 4.1: The theorem is in fact Corollary 3.2 of [13], which follows immediately from Theorem 3.1 of [13] and the following lemma.

Lemma A.1: Let the pair of random variables $(X_1, Y_1)$ be independent of the pair $(X_2, Y_2)$.
If $X = (X_1, X_2)$ and $Y = (Y_1, Y_2)$, then $\mathcal{R}_{ACI}(X,Y) = \mathcal{R}_{ACI}(X_1,Y_1) + \mathcal{R}_{ACI}(X_2,Y_2)$.

For completeness, we give a proof of Theorem 3.1 of [13] below, since the proof was not provided there. This also contains a proof of Lemma A.1 (see (d) below). Please refer to [13] for notation and a statement of the theorem being proved below.

We show that under each step of a secure protocol, $\mathcal{R}_{ACI}$ can only grow.

(a) Local computation cannot shrink it: For all random variables with $X - Y - Z$, we have $\mathcal{R}_{ACI}(X, YZ) \supseteq \mathcal{R}_{ACI}(X,Y)$ and $\mathcal{R}_{ACI}(XY, Z) \supseteq \mathcal{R}_{ACI}(X,Y)$. The first set inclusion follows from the fact that for the joint p.m.f. $p_{X,Y,Z,Q} = p_{X,Y}\, p_{Z|Y}\, p_{Q|X,Y}$,

$$I(X;Y,Z|Q) = I(X;Y|Q),$$
$$I(Q;Y,Z|X) = I(Q;Y|X),$$
$$I(X;Q|Y,Z) = I(X;Q|Y).$$

(b) Communication cannot shrink it: For all random variables $(X,Y)$ and functions $f$ over the support of $X$ (resp., $Y$), we have $\mathcal{R}_{ACI}(X, (Y, f(X))) \supseteq \mathcal{R}_{ACI}(X,Y)$ (resp., $\mathcal{R}_{ACI}((X, f(Y)), Y) \supseteq \mathcal{R}_{ACI}(X,Y)$). The first set inclusion follows from the following facts for the joint p.m.f. $p_{X,Y,Z,Q} = p_{X,Y}\, p_{Z|Y}\, p_{Q|X,Y}$:

$$I(X; Y, f(X) \,|\, Q, f(X)) = I(X;Y|Q,f(X)) \le I(X;Y|Q),$$
$$I(X; Q, f(X) \,|\, Y, f(X)) = I(X;Q|Y,f(X)) \le I(X;Q|Y),$$
$$I(Y; Q, f(X) \,|\, X) = I(Y;Q|X).$$

(c) Securely derived outputs do not have a smaller region: For all random variables $X, U, V, Y$ such that $X - U - V$ and $U - V - Y$, we have $\mathcal{R}_{ACI}(U,V) \supseteq \mathcal{R}_{ACI}((X,U),(Y,V))$.
This follows from the following facts for (dependent) random variables $X, Y, U, V, Q$ which satisfy the Markov chains $X - U - V$ and $U - V - Y$:

$$I(X,U;Y,V|Q) \ge I(U;V|Q),$$
$$I(X,U;Q|Y,V) = I(X,U;Q,Y|V) - I(X,U;Y|V)$$
$$\overset{(a)}{=} \big(I(U;Q,Y|V) + I(X;Q,Y|U,V)\big) - I(X;Y|U,V)$$
$$\ge I(U;Q|V),$$

and similarly $I(Y,V;Q|X,U) \ge I(V;Q|U)$, where we used $U - V - Y$ to obtain equality (a).

(d) Regions of independent pairs add up: If $(X,Y)$ is independent of $(U,V)$, we have $\mathcal{R}_{ACI}((X,U),(Y,V)) = \mathcal{R}_{ACI}(X,Y) + \mathcal{R}_{ACI}(U,V)$. This follows easily from the following facts. For the joint p.m.f. $p_{X,Y}\, p_{U,V}\, p_{Q_1|X,Y}\, p_{Q_2|U,V}$, we have

$$I(X,U;Y,V|Q_1,Q_2) = I(X;Y|Q_1) + I(U;V|Q_2),$$
$$I(X,U;Q_1,Q_2|Y,V) = I(X;Q_1|Y) + I(U;Q_2|V),$$
$$I(Y,V;Q_1,Q_2|X,U) = I(Y;Q_1|X) + I(V;Q_2|U).$$

And, for the joint p.m.f. $p_{X,Y}\, p_{U,V}\, p_{Q|X,Y,U,V}$, we have

$$I(X,U;Y,V|Q) \ge I(X;Y|Q) + I(U;V|Q),$$
$$I(X,U;Q|Y,V) \ge I(X;Q|Y) + I(U;Q|V),$$
$$I(Y,V;Q|X,U) \ge I(Y;Q|X) + I(V;Q|U).$$

Proof of Lemma 4.2: By Lemma A.1, we need only characterize $\inf\{R_1 + R_2 : (R_1, R_2, 0) \in \mathcal{R}_{ACI}\}$ for one of the pair of independent bit-OTs. Let us denote one bit-OT by $A, B$, where $A = (S_1, S_2) \in \{0,1\}^2$ is uniformly distributed over its alphabet and $B = (C, S_C)$, where $C \in \{1,2\}$ is independent of $A$ and uniformly distributed over its alphabet. By Proposition 2.2,

$$\inf\{R_1 + R_2 : (R_1, R_2, 0) \in \mathcal{R}_{ACI}(A,B)\} = \inf_{p_{Q|A,B} \in \mathcal{P} :\, I(A;B|Q) = 0} \big(I(B;Q|A) + I(A;Q|B)\big)$$
$$= H(A|B) + H(B|A) - \sup_{p_{Q|A,B} \in \mathcal{P} :\, I(A;B|Q) = 0} \big(H(A|Q,B) + H(B|Q,A)\big).$$

We show below that the sup term is 1. Since $H(A|B) + H(B|A) = 2$, this will allow us to conclude that the smallest sum-rate $R_1 + R_2$ at $R_{\mathrm{RD}} = 0$ for $A, B$ is 1.
Invoking Lemma A.1, the corresponding smallest sum-rate for $U, V$ is then 2, as required.

To show that the sup term is 1, notice that the only valid choices of $p_{Q|A,B}$ are such that $I(A;B|Q) = 0$. This means that the resulting $p_{A,B|Q}(\cdot,\cdot|q)$ must belong to one of the eight possible classes shown in Figure 3b (for any $q$ with non-zero probability $p_Q(q)$; we may assume that all $q$'s have non-zero probability without loss of generality). Recall that there is a cardinality bound on $Q$; let us denote the alphabet of $Q$ by $\{q_1, q_2, \ldots, q_N\}$, where $N$ is the cardinality bound. We will first show that there is no loss of generality in assuming that each class contains the conditional p.m.f. $p_{A,B|Q}(\cdot,\cdot|q_i)$ of at most one $q_i$ (and hence we may take $N = 8$). Suppose $q_1$ and $q_2$ belong to the same class, say class 1, with parameters $p_1$ and $p_2$ respectively. Then, if we denote the binary entropy function by $H_2(\cdot)$, we have

$$H(A|Q,B) + H(B|Q,A) = \sum_{k=1}^{N} p_Q(q_k)\big(H(A|B,Q=q_k) + H(B|A,Q=q_k)\big)$$
$$= p_Q(q_1) H_2(p_1) + p_Q(q_2) H_2(p_2) + \sum_{k=3}^{N} p_Q(q_k)\big(H(A|B,Q=q_k) + H(B|A,Q=q_k)\big)$$
$$\le \big(p_Q(q_1) + p_Q(q_2)\big)\, H_2\!\left(\frac{p_Q(q_1) p_1 + p_Q(q_2) p_2}{p_Q(q_1) + p_Q(q_2)}\right) + \sum_{k=3}^{N} p_Q(q_k)\big(H(A|B,Q=q_k) + H(B|A,Q=q_k)\big),$$

where the inequality (Jensen's) follows from the concavity of the binary entropy function. Thus, we can define a $Q'$ of alphabet size $N - 1$ where the letters $q_1, q_2$ are replaced by $q'$ such that $p_{Q'}(q') = p_Q(q_1) + p_Q(q_2)$, and $p_{A,B|Q'=q'}$ is in class 1 with parameter $\frac{p_Q(q_1) p_1 + p_Q(q_2) p_2}{p_Q(q_1) + p_Q(q_2)}$, while maintaining, for $i = 3, \ldots, N$, $p_{Q'}(q_i) = p_Q(q_i)$ and $p_{A,B|Q'}(a,b|q_i) = p_{A,B|Q}(a,b|q_i)$.
(It is easy to verify (a) that this gives a valid joint p.m.f. for $p_{A,B,Q'}$, (b) that the induced $p_{A,B}$ is the same as the original, and (c) that the induced $p_{Q'|A,B}$ satisfies the condition $I(A;B|Q') = 0$.) Then, the above inequality states that

$$H(A|Q,B) + H(B|Q,A) \le H(A|Q',B) + H(B|Q',A),$$

proving our claim. Thus, without loss of generality, we may assume that $N = 8$ and $p_{A,B|Q}(\cdot,\cdot|q_i)$ belongs to class $i$.

[Fig. 3: (a) Joint p.m.f. of $A, B$, with $A \in \{00, 01, 11, 10\}$ and $B \in \{10, 21, 11, 20\}$. Each solid line represents a probability mass of 1/8. (b) Eight possible classes, with parameters $p_1, \ldots, p_8$, that $p_{A,B|Q}(\cdot,\cdot|q)$ may belong to for a $p_{Q|A,B}$ which satisfies $I(A;B|Q) = 0$.]

Notice that

$$p_{Q|A,B}(q_1|00,10) + p_{Q|A,B}(q_5|00,10) = 1,$$
$$p_{Q|A,B}(q_2|01,10) + p_{Q|A,B}(q_5|01,10) = 1,$$
$$p_{Q|A,B}(q_2|01,21) + p_{Q|A,B}(q_6|01,21) = 1,$$
$$p_{Q|A,B}(q_3|11,21) + p_{Q|A,B}(q_6|11,21) = 1,$$
$$p_{Q|A,B}(q_3|11,11) + p_{Q|A,B}(q_7|11,11) = 1,$$
$$p_{Q|A,B}(q_4|10,11) + p_{Q|A,B}(q_7|10,11) = 1,$$
$$p_{Q|A,B}(q_4|10,20) + p_{Q|A,B}(q_8|10,20) = 1,$$
$$p_{Q|A,B}(q_1|00,20) + p_{Q|A,B}(q_8|00,20) = 1.$$

Let us define

$$\tilde{p}_1 \triangleq p_{Q|A,B}(q_1|00,10), \quad \tilde{p}_5 \triangleq p_{Q|A,B}(q_5|01,10),$$
$$\tilde{p}_2 \triangleq p_{Q|A,B}(q_2|01,21), \quad \tilde{p}_6 \triangleq p_{Q|A,B}(q_6|11,21),$$
$$\tilde{p}_3 \triangleq p_{Q|A,B}(q_3|11,11), \quad \tilde{p}_7 \triangleq p_{Q|A,B}(q_7|10,11),$$
$$\tilde{p}_4 \triangleq p_{Q|A,B}(q_4|10,20), \quad \tilde{p}_8 \triangleq p_{Q|A,B}(q_8|00,20).$$

Let us evaluate $H(B|Q,A)$ in terms of the above parameters. Notice that $H(B|Q=q_i,A) = 0$ for $i = 5, \ldots, 8$.
Hence

$$H(B|Q,A) = \sum_{(q,a) \in \{(1,00),\,(2,01),\,(3,11),\,(4,10)\}} p_{Q,A}(q,a)\, H(B|Q=q,A=a)$$
$$= \frac{\tilde{p}_1 + (1-\tilde{p}_8)}{8} H_2\!\left(\frac{\tilde{p}_1}{\tilde{p}_1 + (1-\tilde{p}_8)}\right) + \frac{\tilde{p}_2 + (1-\tilde{p}_5)}{8} H_2\!\left(\frac{\tilde{p}_2}{\tilde{p}_2 + (1-\tilde{p}_5)}\right)$$
$$\quad + \frac{\tilde{p}_3 + (1-\tilde{p}_6)}{8} H_2\!\left(\frac{\tilde{p}_3}{\tilde{p}_3 + (1-\tilde{p}_6)}\right) + \frac{\tilde{p}_4 + (1-\tilde{p}_7)}{8} H_2\!\left(\frac{\tilde{p}_4}{\tilde{p}_4 + (1-\tilde{p}_7)}\right)$$
$$\le \frac{4 + \sum_{i=1}^{4} \tilde{p}_i - \sum_{j=5}^{8} \tilde{p}_j}{8},$$

where the inequality follows from the fact that the binary entropy function is upperbounded by 1. Similarly, we can get

$$H(A|Q,B) \le \frac{4 + \sum_{j=5}^{8} \tilde{p}_j - \sum_{i=1}^{4} \tilde{p}_i}{8}.$$

Combining, we obtain the desired $H(B|Q,A) + H(A|Q,B) \le 1$.
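The quantities in this proof and in Example 4.1 are small enough to check by brute force. The sketch below is an illustration under stated assumptions, not part of the original argument. It takes one bit-OT $(A,B)$ with the particular choice $Q = B$ (our choice, which satisfies $I(A;B|Q) = 0$) and evaluates $(I(B;Q|A), I(A;Q|B), I(A;B|Q))$, and it evaluates the same triple for the string-OT pair with $Q = (C_A, C_B, S_{A,C_B}, S_{B,C_A})$ from Example 4.1. The helper names are hypothetical.

```python
import itertools
import math

def H(joint, idx):
    """Entropy in bits of the coordinates `idx` of a joint pmf over tuples."""
    marg = {}
    for outcome, p in joint.items():
        key = tuple(outcome[i] for i in idx)
        marg[key] = marg.get(key, 0.0) + p
    return -sum(p * math.log2(p) for p in marg.values() if p > 0)

def cond_I(joint, a, b, c):
    """Conditional mutual information I(A;B|C) from joint entropies."""
    return H(joint, a + c) + H(joint, b + c) - H(joint, a + b + c) - H(joint, c)

def rate_triple(joint):
    """(I(Y;Q|X), I(X;Q|Y), I(X;Y|Q)) for a pmf over (X, Y, Q) tuples."""
    X, Y, Q = [0], [1], [2]
    return (cond_I(joint, Y, Q, X), cond_I(joint, X, Q, Y), cond_I(joint, X, Y, Q))

def bit_ot_with_q_equal_b():
    """Joint pmf of (A, B, Q) for one bit-OT, with the assumed choice Q = B."""
    joint = {}
    for s1, s2, c in itertools.product((0, 1), (0, 1), (1, 2)):
        a = (s1, s2)
        b = (c, s1 if c == 1 else s2)
        joint[(a, b, b)] = joint.get((a, b, b), 0.0) + 1 / 8
    return joint

def ot_pair_with_q(L):
    """Joint pmf of (X, Y, Q) for the string-OT pair of Example 4.1."""
    strings = list(itertools.product((0, 1), repeat=L))
    n_seeds = 4 * len(strings) ** 4  # equally likely (C_A, C_B, four strings)
    joint = {}
    for ca, cb in itertools.product((1, 2), repeat=2):
        for sa1, sa2, sb1, sb2 in itertools.product(strings, repeat=4):
            sa, sb = (sa1, sa2), (sb1, sb2)
            x = (ca, sa1, sa2, sb[ca - 1])
            y = (cb, sb1, sb2, sa[cb - 1])
            q = (ca, cb, sa[cb - 1], sb[ca - 1])
            joint[(x, y, q)] = joint.get((x, y, q), 0.0) + 1 / n_seeds
    return joint

bit_ot = bit_ot_with_q_equal_b()
# H(A|B) + H(B|A) for one bit-OT, followed by the rate triple for Q = B.
print(2 * H(bit_ot, [0, 1]) - H(bit_ot, [0]) - H(bit_ot, [1]))
print(rate_triple(bit_ot))
# Rate triple for the string-OT pair with the Q of Example 4.1, for L = 2.
print(rate_triple(ot_pair_with_q(2)))
```

With $Q = B$ the bit-OT sum-rate $I(B;Q|A) + I(A;Q|B)$ evaluates to 1, so the bound $H(B|Q,A) + H(A|Q,B) \le 1$ is met with equality by this choice. A brute-force check of specific $Q$'s does not replace the proof that no admissible $Q$ does better.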
