Information Spectrum Approach to Second-Order Coding Rate in Channel Coding

Second-order coding rate of channel coding is discussed for general sequence of channels. The optimum second-order transmission rate with a constant error constraint $\epsilon$ is obtained by using the information spectrum method. We apply this resul…

Authors: Masahito Hayashi

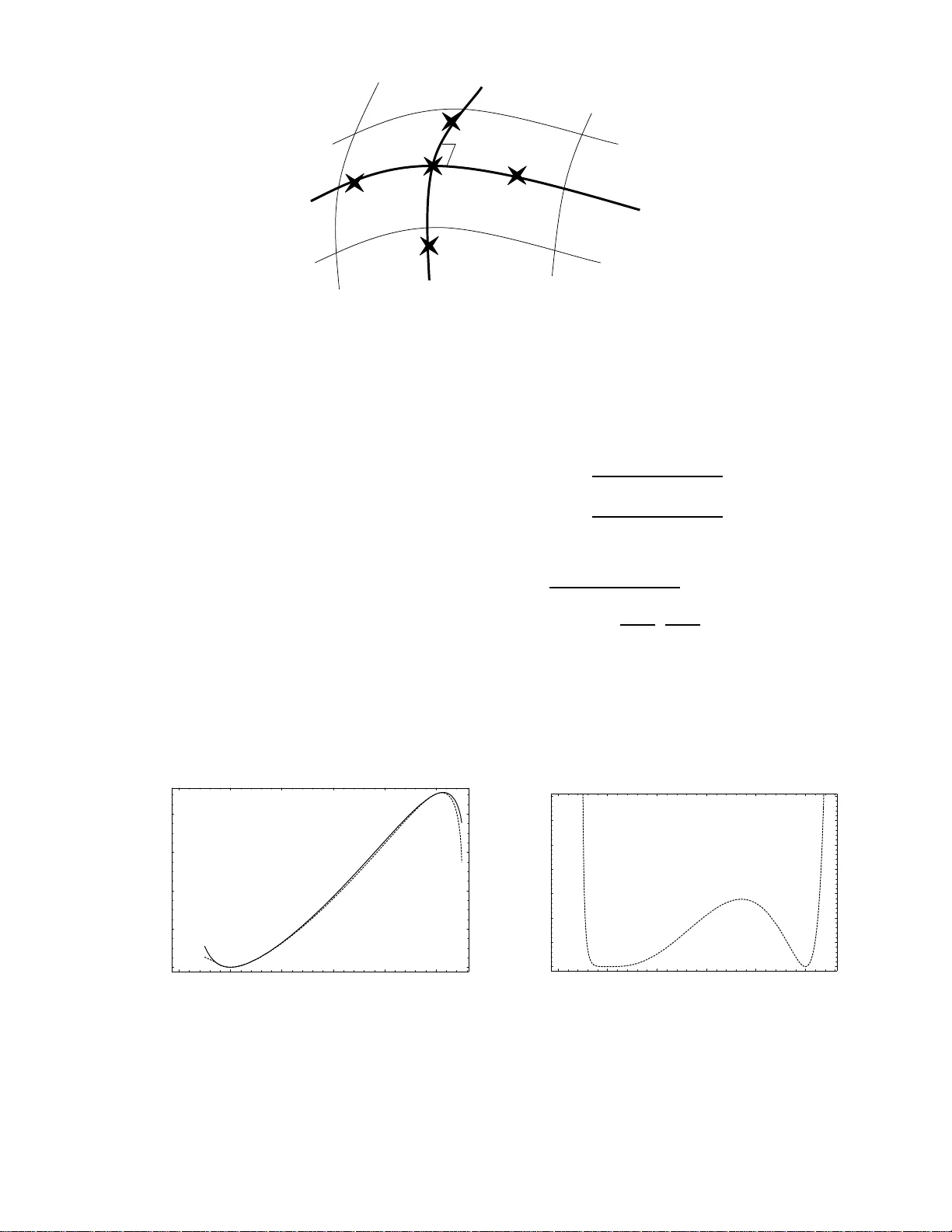

Abstract

Second-order coding rate of channel coding is discussed for a general sequence of channels. The optimum second-order transmission rate with a constant error constraint $\epsilon$ is obtained by using the information spectrum method. We apply this result to the discrete memoryless case, the discrete memoryless case with a cost constraint, the additive Markovian case, and the Gaussian channel case with an energy constraint. We also clarify that the Gallager bound does not give the optimum evaluation in the second-order coding rate.

Index Terms

Second-order coding rate, channel coding, information spectrum, central limit theorem, Gallager bound, additive Markovian channel

I. INTRODUCTION

Based on the channel coding theorem, there exists a sequence of codes for the given channel $W$ such that the average error probability goes to 0 when the transmission rate $R$ is less than the capacity $C^{\mathrm{DM}}_W$. That is, if the number $n$ of applications of the channel $W$ is sufficiently large, the average error probability of a good code goes to 0. In order to evaluate the average error probability with finite $n$, we often use the exponential rate of decrease, which depends on the transmission rate $R$. However, such an exponential evaluation ignores the constant factor. Therefore, it is not clear whether the exponential evaluation provides a good evaluation of the average error probability when the transmission rate $R$ is close to the capacity. In fact, many researchers believe that, out of the known evaluations, the Gallager bound [1] gives the best upper bound on the average error probability in channel coding when the transmission rate is greater than the critical rate. This is because the Gallager bound provides the optimal exponential rate of decrease.
In order to clarify this point, we focus on the second-order coding rate in channel coding, in which we describe the transmission length by $C^{\mathrm{DM}}_W n + R_2 \sqrt{n}$. From a practical viewpoint, when the coding length is close to $C^{\mathrm{DM}}_W n$, the second-order coding rate gives a better evaluation of the average error probability than the first-order coding rate. In fact, the second-order coding rate has been applied to evaluate the average error probability of random coding concerning the phase basis, which is essential to the security of quantum key distribution [2]. Therefore, it is appropriate to treat the second-order coding rate from the applied viewpoint as well as the theoretical viewpoint.

In the discrete memoryless case, Strassen [3] derived the optimum rate $R_2$ for an arbitrary average error probability $0 < \epsilon < 1$ using the Gaussian distribution. In the present paper, we extend his result to more general cases, i.e., the discrete memoryless case with a cost constraint, the Gaussian additive noise case with an energy constraint, and the additive Markovian case. Further, our proof for the discrete memoryless case is much simpler than the original one. Indeed, since his proof is not so simple and his paper is written in German, it is quite difficult to follow his proof.

In the present paper, in order to treat this problem from a unified viewpoint, we employ the method of information spectrum, which was initiated by Han-Verdú [4] and was mainly formulated by Han [5]. The second-order coding rate is closely related to the method of information spectrum because Hayashi [6] treated the second-order problem of fixed-length source coding and intrinsic randomness using the method of information spectrum.
Hayashi [6] discussed the error probability when the compressed size is $H(P) n + a \sqrt{n}$, where $n$ is the size of the input system and $H(P)$ is the entropy of the distribution $P$ of the input system. In the method of information spectrum, we treat the general asymptotic formula, which gives the relationship between the asymptotic optimal performance and the normalized logarithm of the likelihood of the probability distribution. In order to treat a special case, we apply the general asymptotic formula to the respective information source and calculate the asymptotic stochastic behavior of the normalized logarithm of the likelihood. That is, the information spectrum method has two steps: deriving the general asymptotic formula and applying the general asymptotic formula. With respect to fixed-length source coding and intrinsic randomness, the same relation holds concerning the general asymptotic formula in the second-order coding rate. However, there is a difference concerning the application of the general asymptotic formula to the independent and identical distributions. That is, while the normalized logarithm of the likelihood approaches the entropy $H(P)$ in probability in the first-order coding rate, its stochastic behavior is asymptotically described by the Gaussian distribution in the second-order coding rate. In other words, in the second step, the first-order coding rate corresponds to the law of large numbers, and the second-order coding rate corresponds to the central limit theorem.

M. Hayashi is with the Graduate School of Information Sciences, Tohoku University, Aoba-ku, Sendai, 980-8579, Japan (e-mail: hayashi@math.is.tohoku.ac.jp).

In the present paper, we treat channel coding in the second-order coding rate, i.e., the case in which the transmission length is $C^{\mathrm{DM}}_W n + a \sqrt{n}$. Similar to the above-mentioned case, we employ the method of information spectrum.
That is, we treat the general channel, which is the general sequence $\{W^n(y|x)\}$ of probability distributions without structure. As shown by Verdú-Han [14], this method enables us to characterize the asymptotic performance with only the random variable $\frac{1}{n} \log \frac{W^n(y|x)}{W^n_{P^n}(y)}$ (the normalized logarithm of the likelihood ratio between the conditional distribution and the non-conditional distribution) without any further assumption, where $W^n_{P^n}(y) := \sum_x P^n(x) W^n(y|x)$. Concerning this general asymptotic formula, if we can suitably formulate theorems in the second-order coding rate and establish an appropriate relationship between the first-order coding rate and the second-order coding rate, we can easily extend proofs concerning the first-order coding rate to those of the second-order coding rate. Therefore, there is no serious difficulty in establishing the general asymptotic formula in the second-order coding rate. In order to clarify this point, we present proofs of some relevant theorems in the first-order coding rate, even though they are known.

In order to treat the special cases, it is sufficient to apply the general asymptotic formula, i.e., to calculate the asymptotic behavior of the random variable $\frac{1}{n} \log \frac{W^n(y|x)}{W^n_{P^n}(y)}$. The additive Markovian case can be treated in the same way as fixed-length source coding and intrinsic randomness. However, the other special cases have other difficulties, which do not appear in fixed-length source coding or intrinsic randomness. The first difficulty is the optimization concerning the input distribution in the converse part of channel coding. This problem appears in all three cases, i.e., the discrete memoryless case, the discrete memoryless case with a cost constraint, and the Gaussian additive noise case with an energy constraint.
In the discrete memoryless case, the second-order coding rate corresponds to a simple application of the central limit theorem, while the first-order coding rate corresponds to the law of large numbers. Hence, the performance in the second-order coding rate is characterized by the variance of the logarithmic likelihood ratio, and the direct part can be easily obtained in this case. This relationship is summarized in Fig. 1.

[Fig. 1. Relationship between the present result and fixed-length source coding/intrinsic randomness. The → arrow describes the direct part, and the ← arrow describes the converse part. For both source coding/intrinsic randomness and channel coding, the diagram links the general formula to the optimum rate in the i.i.d./stationary discrete memoryless case: the first order through the law of large numbers ($H(p)$, $C_W$) and the second order through the central limit theorem (variance, $V_W$). The converse for channel coding is marked as the most difficult part (Section VIII).]

However, there is another difficulty in the direct part for the discrete memoryless case with a cost constraint and the Gaussian additive noise case with an energy constraint. In these cases, all of the encoded signals have to satisfy the cost constraint. This kind of difficulty does not appear in the first-order coding rate for either of these two cases. This is because, in the first-order coding rate, it is sufficient to construct a code whose average error probability goes to zero, while in the second-order coding rate it is required to construct a code whose average error probability goes to a given threshold $\epsilon$. Suppose that we find a code whose average error probability goes to zero and whose average cost is less than the constraint.
Then, there exists a subcode whose average error probability goes to zero and in which the costs of all encoded signals are less than the constraint. However, the same method cannot be applied when we find a code whose average error probability goes to $\epsilon$ and whose average cost is less than the constraint. In the present paper, we directly construct a code in which the costs of all encoded signals are less than the constraint.

Here, we describe the meaning of the second-order coding rate. When the transmission length is described by $n C^{\mathrm{DM}}_W + \sqrt{n} R_2$, as shown in Subsection IX-A, the optimal error can be approximately attained by random coding. Since it seems that random coding cannot be realized, our evaluation seems to be related only to the theoretical best performance. However, in quantum key distribution, it can be realized concerning the phase bases [7], [2]. In such a setting, the coding length is on the order of 10,000 or 100,000 [8]. In quantum key distribution, Hayashi [2] has applied the second-order coding rate to evaluate the phase error probability, which is directly linked to the security of the final key.

The remainder of the present paper is organized as follows. In Section II, we revisit the second-order coding rate in the stationary discrete memoryless case, and discuss the second-order coding rate in the stationary discrete memoryless case with a cost constraint. In Section III, the Markovian additive channel is treated. In Section IV, the Gaussian additive noise case with an energy constraint is considered. These results are shown in Section X by employing the method of information spectrum. In the present result, the performance of information transmission is discussed in terms of the second-order coding rate using two important quantities $V^+_W$ and $V^-_W$ instead of the capacity in the discrete memoryless case.
In other cases, similar quantities play the same role. In Section V, we compare our evaluation with the Gallager bound [1] in the second-order setting. In Section VI, the properties of $V^+_W$ and $V^-_W$ are discussed. In Subsection VI-A, we discuss a typical example in which $V^+_W$ is different from $V^-_W$. In Subsection VI-B, the additivity of $V^+_W$ and $V^-_W$ is proved. In Section VII, the notations of the information spectrum are explained. In Section VIII, the performance of information transmission is discussed in terms of the second-order coding rate using the information spectrum in the general case. That is, we present general formulas for the second-order coding rate. In Section IX, the theorem presented in the previous section is proved. In Section X, using the general formulas for the second-order coding rate, we prove the second-order coding rate in the stationary discrete memoryless case. In this proof, the direct part is immediate. The converse part is the most difficult part considered herein because we must treat the information spectrum for general input distributions in the sense of the second-order coding rate.

II. SECOND ORDER CODING RATE IN STATIONARY DISCRETE MEMORYLESS CHANNELS

As the most typical case, we revisit the second-order coding rate of stationary discrete memoryless channels, in which we use an $n$-multiple application of the discrete channel $W(y|x)$, which transmits information from the input system $\mathcal{X}$ to the output system $\mathcal{Y}$. That is, the channel considered here is given as the stationary discrete memoryless channel

$$W^{\times n}(y|x) := \prod_{i=1}^{n} W(y_i|x_i).$$
Note that, in the present paper, $P \times P'$ ($W \times W'$) denotes the product of two distributions $P$ and $P'$ (two channels $W$ and $W'$), and $P^{\times n}$ ($W^{\times n}$) denotes the product of $n$ uses of the distribution $P$ (the channel $W$), i.e., the $n$-th independent and identical distribution (i.i.d.) of $P$ (the $n$-th stationary memoryless channel of $W$). In this case, when the transmission rate is less than the capacity $C^{\mathrm{DM}}_W$, the average error probability goes to 0 exponentially if we use a suitable encoder and the maximum likelihood decoder. Let $N$ be the size of the transmitted information (written $N_n$ when the dependence on $n$ is made explicit). The encoder is a map $\phi$ from $\{1, \ldots, N\}$ to $\mathcal{X}^n$, and the decoder is given by the set of subsets $\{\mathcal{D}_i\}_{i=1}^{N}$ of $\mathcal{Y}^n$, where $\mathcal{D}_i$ corresponds to the decoding region of $i \in \{1, \ldots, N\}$. Then, the code is given by the triple $(N, \phi, \{\mathcal{D}_i\}_{i=1}^{N})$ and is denoted by $\Phi$. The average error probability $P_{e,W^{\times n}}(\Phi)$ is described as

$$P_{e,W^{\times n}}(\Phi) := \frac{1}{N_n} \sum_{i=1}^{N_n} \left(1 - W^{\times n}_{\phi(i)}(\mathcal{D}_i)\right),$$

where $W_x(y) := W(y|x)$. For simplicity, the size $N_n$ is denoted by $|\Phi|$. The performance of the code $\Phi$ is given by the pair of $P_e(\Phi)$ and $|\Phi|$. As stated by the channel coding theorem [9], the capacity is given by

$$C^{\mathrm{DM}}_W = \max_P I(P, W) = \min_Q \max_x D(W_x \| Q),$$

where $Q$ is the output distribution, and

$$W_P(y) := \sum_x P(x) W(y|x), \qquad I(P, W) := \sum_x P(x) D(W_x \| W_P), \qquad D(P \| P') := \sum_x P(x) \log \frac{P(x)}{P'(x)}.$$

Thus, $Q_M := \operatorname{argmin}_Q \max_x D(W_x \| Q)$ satisfies

$$D(W_x \| Q_M) \le C^{\mathrm{DM}}_W. \tag{1}$$

Throughout the present paper, we choose the base of the logarithm to be $e$. Although the above channel coding theorem concerns only the first-order coding rate of the transmission length $\log N_n$, our main focus is the analysis of the second-order coding rate.
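As a small numerical illustration of the capacity formula and of inequality (1), the following sketch evaluates $I(P, W)$ and $\max_x D(W_x \| Q)$ for a binary symmetric channel. The channel, its crossover probability `delta = 0.1`, and all function names are illustrative assumptions and do not come from the paper; natural logarithms are used, as in the text.

```python
import math

def kl(p, q):
    """Relative entropy D(p||q) in nats, with the convention 0 log 0 = 0."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)

def output_dist(P, W):
    """Output distribution W_P(y) = sum_x P(x) W(y|x); W[x] is the row W_x."""
    return [sum(P[x] * W[x][y] for x in range(len(P))) for y in range(len(W[0]))]

def mutual_information(P, W):
    """I(P, W) = sum_x P(x) D(W_x || W_P)."""
    WP = output_dist(P, W)
    return sum(P[x] * kl(W[x], WP) for x in range(len(P)) if P[x] > 0)

# Hypothetical example channel: binary symmetric channel, crossover 0.1.
delta = 0.1
W = [[1 - delta, delta], [delta, 1 - delta]]
P_uniform = [0.5, 0.5]

# For the BSC the uniform input attains max_P I(P, W).
capacity = mutual_information(P_uniform, W)
# The induced output distribution plays the role of Q_M in (1).
Q_M = output_dist(P_uniform, W)
worst_divergence = max(kl(W[x], Q_M) for x in range(2))

print(f"C = {capacity:.6f} nats, max_x D(W_x||Q_M) = {worst_divergence:.6f} nats")
```

For this symmetric example both quantities coincide, which is the equality case of (1); for a non-symmetric channel the maximizing input would have to be found numerically.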
When the transmission length $\log N_n$ asymptotically behaves as $n C^{\mathrm{DM}}_W + a \sqrt{n}$, the optimal average error is given as follows:

$$C^{\mathrm{DM}}_p(a, C^{\mathrm{DM}}_W | W) := \inf_{\{\Phi_n\}_{n=1}^{\infty}} \left\{ \limsup_{n \to \infty} P_{e,W^{\times n}}(\Phi_n) \,\middle|\, \liminf_{n \to \infty} \frac{1}{\sqrt{n}} \left(\log |\Phi_n| - n C^{\mathrm{DM}}_W\right) \ge a \right\}. \tag{2}$$

Fixing the average error probability, we obtain the following quantity:

$$C^{\mathrm{DM}}(\epsilon, C^{\mathrm{DM}}_W | W) := \sup_{\{\Phi_n\}_{n=1}^{\infty}} \left\{ \liminf_{n \to \infty} \frac{1}{\sqrt{n}} \left(\log |\Phi_n| - n C^{\mathrm{DM}}_W\right) \,\middle|\, \limsup_{n \to \infty} P_{e,W^{\times n}}(\Phi_n) \le \epsilon \right\}. \tag{3}$$

We refer to this value as the optimum second-order transmission rate with the error probability $\epsilon$. In order to treat the second-order coding rate, we need the distribution function $G$ of the standard Gaussian distribution (with expectation 0 and variance 1), which is defined by

$$G(x) := \int_{-\infty}^{x} \frac{1}{\sqrt{2\pi}} e^{-t^2/2} \, dt.$$

In this problem, the quantity

$$V_{P,W} := \sum_x P(x) \sum_y W_x(y) \left( \log \frac{W_x(y)}{W_P(y)} - D(W_x \| W_P) \right)^2$$

plays an important role. By using these quantities, $C^{\mathrm{DM}}_p(a, C^{\mathrm{DM}}_W | W)$ and $C^{\mathrm{DM}}(\epsilon, C^{\mathrm{DM}}_W | W)$ are calculated in the stationary discrete memoryless case as follows.

Theorem 1: (Strassen [3]) When the cardinality $|\mathcal{X}|$ is finite and $P_M := \operatorname{argmax}_P I(P, W)$ exists uniquely,

$$C^{\mathrm{DM}}_p(a, C^{\mathrm{DM}}_W | W) = G\left(a / \sqrt{V_{P_M,W}}\right) \tag{4}$$

$$C^{\mathrm{DM}}(\epsilon, C^{\mathrm{DM}}_W | W) = \sqrt{V_{P_M,W}} \, G^{-1}(\epsilon). \tag{5}$$

When $\{W_x\}$ is linearly independent, regarding distributions as positive vectors, the map $P \mapsto W_P$ is one-to-one. Then, $P_M := \operatorname{argmax}_P I(P, W)$ exists uniquely. However, when $\{W_x\}$ is not linearly independent, $\operatorname{argmax}_P I(P, W)$ is not necessarily unique. In order to treat such a case, we introduce two quantities $V^+_W$ and $V^-_W$ and two distributions $P_{M+}$ and $P_{M-}$:

$$V^+_W := \max_{P \in \mathcal{V}} V_{P,W}, \qquad V^-_W := \min_{P \in \mathcal{V}} V_{P,W}, \qquad P_{M+} := \operatorname{argmax}_{P \in \mathcal{V}} V_{P,W}, \qquad P_{M-} := \operatorname{argmin}_{P \in \mathcal{V}} V_{P,W},$$

where $\mathcal{V} := \{P \mid I(P, W) = C^{\mathrm{DM}}_W\}$.
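To make the roles of $V_{P,W}$, (4), and (5) concrete, the sketch below computes $I(P, W)$ and $V_{P,W}$ for a binary symmetric channel with a unique capacity-achieving input, and then evaluates $G(a/\sqrt{V})$ and $\sqrt{V} G^{-1}(\epsilon)$. The channel, the values `n = 1000` and `eps = 0.01`, and the function names are assumptions made for illustration. The quantity `approx_log_size` simply drops the $o(\sqrt{n})$ terms from the asymptotic statement, so it is a heuristic reading of Theorem 1 rather than a finite-$n$ guarantee.

```python
import math
from statistics import NormalDist

def information_and_dispersion(P, W):
    """Return (I(P, W), V_{P,W}) in nats; W[x] is the output distribution W_x."""
    num_outputs = len(W[0])
    WP = [sum(P[x] * W[x][y] for x in range(len(P))) for y in range(num_outputs)]
    info, disp = 0.0, 0.0
    for x, px in enumerate(P):
        if px == 0:
            continue
        # Pairs (W_x(y), log W_x(y)/W_P(y)) on the support of W_x.
        terms = [(W[x][y], math.log(W[x][y] / WP[y]))
                 for y in range(num_outputs) if W[x][y] > 0]
        D = sum(w * llr for w, llr in terms)               # D(W_x || W_P)
        var = sum(w * (llr - D) ** 2 for w, llr in terms)  # variance under W_x
        info += px * D
        disp += px * var
    return info, disp

STD_NORMAL = NormalDist()  # G is its cdf, G^{-1} its inv_cdf

def error_limit(a, V):
    """Right-hand side of (4): G(a / sqrt(V))."""
    return STD_NORMAL.cdf(a / math.sqrt(V))

def second_order_rate(eps, V):
    """Right-hand side of (5): sqrt(V) * G^{-1}(eps)."""
    return math.sqrt(V) * STD_NORMAL.inv_cdf(eps)

# Hypothetical example: binary symmetric channel, crossover 0.1, uniform input.
delta = 0.1
W = [[1 - delta, delta], [delta, 1 - delta]]
C, V = information_and_dispersion([0.5, 0.5], W)

n, eps = 1000, 0.01
approx_log_size = n * C + math.sqrt(n) * second_order_rate(eps, V)
print(f"C = {C:.4f}, V = {V:.4f}, log|Phi_n| ~ {approx_log_size:.1f} nats")
```

Because $G^{-1}(\epsilon) < 0$ for $\epsilon < 1/2$, the second-order term reduces the transmission length below $nC$, and the reduction scales as $\sqrt{n}$.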
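Using these quantities, Theorem 1 is generalized as follows.

Theorem 2: (Strassen [3]) When the cardinality $|\mathcal{X}|$ is finite and the set $\mathcal{V}$ has multiple elements, (4) and (5) are generalized as

$$C^{\mathrm{DM}}_p(a, C^{\mathrm{DM}}_W | W) = \begin{cases} G\left(a / \sqrt{V^+_W}\right) & a \ge 0 \\ G\left(a / \sqrt{V^-_W}\right) & a < 0 \end{cases}$$

$$C^{\mathrm{DM}}(\epsilon, C^{\mathrm{DM}}_W | W) = \begin{cases} \sqrt{V^+_W} \, G^{-1}(\epsilon) & \epsilon \ge 1/2 \\ \sqrt{V^-_W} \, G^{-1}(\epsilon) & \epsilon < 1/2. \end{cases}$$

More precisely, the direct part

$$C^{\mathrm{DM}}_p(a, C^{\mathrm{DM}}_W | W) \le \begin{cases} G\left(a / \sqrt{V^+_W}\right) & a \ge 0 \\ G\left(a / \sqrt{V^-_W}\right) & a < 0 \end{cases} \tag{6}$$

$$C^{\mathrm{DM}}(\epsilon, C^{\mathrm{DM}}_W | W) \ge \begin{cases} \sqrt{V^+_W} \, G^{-1}(\epsilon) & \epsilon \ge 1/2 \\ \sqrt{V^-_W} \, G^{-1}(\epsilon) & \epsilon < 1/2 \end{cases} \tag{7}$$

holds without any assumption, and the converse part

$$C^{\mathrm{DM}}_p(a, C^{\mathrm{DM}}_W | W) \ge \begin{cases} G\left(a / \sqrt{V^+_W}\right) & a \ge 0 \\ G\left(a / \sqrt{V^-_W}\right) & a < 0 \end{cases}$$

$$C^{\mathrm{DM}}(\epsilon, C^{\mathrm{DM}}_W | W) \le \begin{cases} \sqrt{V^+_W} \, G^{-1}(\epsilon) & \epsilon \ge 1/2 \\ \sqrt{V^-_W} \, G^{-1}(\epsilon) & \epsilon < 1/2 \end{cases}$$

holds with the assumption $|\mathcal{X}| < \infty$.

Next, consider the cost function $c : \mathcal{X} \to \mathbb{R}$. In this case, we often assume that all encoded signals $\phi(i)$ of the code $\Phi_n$ belong to the set

$$\mathcal{X}^n_{c,K} := \left\{ x \in \mathcal{X}^n \,\middle|\, \sum_{i=1}^{n} c(x_i) \le K \right\}.$$

The maximum coding rate under the above condition is called the capacity with the cost constraint, and is given by [10]

$$C^{\mathrm{DM}}_{W,c,K} = \max_{P : \mathrm{E}_P c(x) \le K} I(P, W) = \min_Q \max_{P : \mathrm{E}_P c(x) \le K} J(P, Q, W),$$

where

$$J(P, Q, W) := \sum_{x \in \mathcal{X}} P(x) D(W_x \| Q).$$

In the same way as (2) and (3), we define the following values with the cost constraint:

$$C^{\mathrm{DM}}_p(a, C^{\mathrm{DM}}_W | W, c, K) := \inf_{\{\Phi_n\}_{n=1}^{\infty}} \left\{ \limsup_{n \to \infty} P_{e,W^{\times n}}(\Phi_n) \,\middle|\, \liminf_{n \to \infty} \frac{1}{\sqrt{n}} \left(\log |\Phi_n| - n C^{\mathrm{DM}}_W\right) \ge a, \ \operatorname{supp}(\Phi_n) \subset \mathcal{X}^n_{c,K} \right\} \tag{8}$$

$$C^{\mathrm{DM}}(\epsilon, C^{\mathrm{DM}}_W | W, c, K) := \sup_{\{\Phi_n\}_{n=1}^{\infty}} \left\{ \liminf_{n \to \infty} \frac{1}{\sqrt{n}} \left(\log |\Phi_n| - n C^{\mathrm{DM}}_W\right) \,\middle|\, \limsup_{n \to \infty} P_{e,W^{\times n}}(\Phi_n) \le \epsilon, \ \operatorname{supp}(\Phi_n) \subset \mathcal{X}^n_{c,K} \right\}, \tag{9}$$

where $\operatorname{supp}(\Phi_n)$ expresses the set $\{\phi(1), \ldots, \phi(N)\}$ for a code $\Phi = (N, \phi, \{\mathcal{D}_i\}_{i=1}^{N})$.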
We introduce two quantities $V^+_{W,c,K}$ and $V^-_{W,c,K}$ and two distributions $P_{M+,c,K}$ and $P_{M-,c,K}$:

$$V^+_{W,c,K} := \max_{P \in \mathcal{V}_{c,K}} V_{P,W}, \qquad V^-_{W,c,K} := \min_{P \in \mathcal{V}_{c,K}} V_{P,W}, \qquad P_{M+,c,K} := \operatorname{argmax}_{P \in \mathcal{V}_{c,K}} V_{P,W}, \qquad P_{M-,c,K} := \operatorname{argmin}_{P \in \mathcal{V}_{c,K}} V_{P,W},$$

where $\mathcal{V}_{c,K} := \{P \mid I(P, W) = C^{\mathrm{DM}}_{W,c,K}, \ \mathrm{E}_P c(x) \le K\}$.

Theorem 3: When the cardinality $|\mathcal{X}|$ is finite,

$$C^{\mathrm{DM}}_p(a, C^{\mathrm{DM}}_{W,c,K} | W, c, K) = \begin{cases} G\left(a / \sqrt{V^+_{W,c,K}}\right) & a \ge 0 \\ G\left(a / \sqrt{V^-_{W,c,K}}\right) & a < 0 \end{cases}$$

$$C^{\mathrm{DM}}(\epsilon, C^{\mathrm{DM}}_{W,c,K} | W, c, K) = \begin{cases} \sqrt{V^+_{W,c,K}} \, G^{-1}(\epsilon) & \epsilon \ge 1/2 \\ \sqrt{V^-_{W,c,K}} \, G^{-1}(\epsilon) & \epsilon < 1/2. \end{cases}$$

More precisely, the direct part

$$C^{\mathrm{DM}}_p(a, C^{\mathrm{DM}}_{W,c,K} | W, c, K) \le \begin{cases} G\left(a / \sqrt{V^+_{W,c,K}}\right) & a \ge 0 \\ G\left(a / \sqrt{V^-_{W,c,K}}\right) & a < 0 \end{cases} \tag{10}$$

$$C^{\mathrm{DM}}(\epsilon, C^{\mathrm{DM}}_{W,c,K} | W, c, K) \ge \begin{cases} \sqrt{V^+_{W,c,K}} \, G^{-1}(\epsilon) & \epsilon \ge 1/2 \\ \sqrt{V^-_{W,c,K}} \, G^{-1}(\epsilon) & \epsilon < 1/2 \end{cases} \tag{11}$$

holds without any assumption, and the converse part

$$C^{\mathrm{DM}}_p(a, C^{\mathrm{DM}}_{W,c,K} | W, c, K) \ge \begin{cases} G\left(a / \sqrt{V^+_{W,c,K}}\right) & a \ge 0 \\ G\left(a / \sqrt{V^-_{W,c,K}}\right) & a < 0 \end{cases}$$

$$C^{\mathrm{DM}}(\epsilon, C^{\mathrm{DM}}_{W,c,K} | W, c, K) \le \begin{cases} \sqrt{V^+_{W,c,K}} \, G^{-1}(\epsilon) & \epsilon \ge 1/2 \\ \sqrt{V^-_{W,c,K}} \, G^{-1}(\epsilon) & \epsilon < 1/2 \end{cases}$$

holds with the assumption $|\mathcal{X}| < \infty$.

Remark 1: When the sets $\mathcal{X}$ and $\mathcal{Y}$ are given as general probability spaces with general $\sigma$-fields $\sigma(\mathcal{X})$ and $\sigma(\mathcal{Y})$, the above formulation can be extended with the following definition. The channel $W$ is given by a real-valued function on $\mathcal{X} \times \sigma(\mathcal{Y})$ satisfying the following conditions: (i) for any $x \in \mathcal{X}$, $W_x$ is a probability measure on $\mathcal{Y}$; (ii) for any $F \in \sigma(\mathcal{Y})$, $W_{\cdot}(F)$ is a measurable function on $\mathcal{X}$. $P$ takes values in the probability measures on $\mathcal{X}$. Then, the sums $\sum_{x \in \mathcal{X}} P(x)$ and $\sum_{y \in \mathcal{Y}} W_x(y)$ are replaced by $\int_{\mathcal{X}} P(dx)$ and $\int_{\mathcal{Y}} W_x(dy)$, respectively. For any distribution $Q$ on $\mathcal{Y}$, the function $\frac{W_x(y)}{W_P(y)}$ is replaced by the inverse of the Radon-Nikodym derivative $\frac{dW_P}{dW_x}(y)$ of $W_P$ with respect to $W_x$. In this extension, the direct parts (6), (7), (10), and (11) are valid.

III. SECOND ORDER CODING RATE IN ADDITIVE MARKOVIAN CHANNEL

Next, we focus on the additive Markovian channel, in which we assume that the additive noise obeys the transition matrix $Q(y|x)$ on the set $\mathcal{X} = \{1, \ldots, d\}$. Then, the channel $W(Q)^n(y|x)$ has the form $\prod_{i=1}^{n} Q(y_i - x_i \mid y_{i-1} - x_{i-1})$, where $y_0 - x_0$ is the initial state $s_0$ and the arithmetic is modulo $d$. For simplicity, we assume that the transition matrix $Q(y|x)$ is irreducible. Then, the $n$-th marginal distribution $Q^n(x_n) := \sum_{x_1, \ldots, x_{n-1}} \prod_{i=1}^{n} Q(x_i | x_{i-1})$ approaches the stationary distribution $P_Q(x)$, which is given as the eigenvector of $Q(y|x)$ associated with the eigenvalue 1 [12]. When the conditional distribution $Q(y|x)$ is denoted by $Q_x(y)$, the normalized entropy of the distribution $Q^n(\vec{x}_n) := \prod_{i=1}^{n} Q(x_i | x_{i-1})$ goes to $H(Q) := \sum_x P_Q(x) H(Q_x)$. Then, by defining the capacity $C^{\mathrm{AM}}_Q$ in the same way as $C^{\mathrm{DM}}_W$, the channel capacity is calculated as

$$C^{\mathrm{AM}}_Q = \log d - H(Q). \tag{12}$$

Similar to $C^{\mathrm{DM}}_p(a, C^{\mathrm{DM}}_W | W)$ and $C^{\mathrm{DM}}(\epsilon, C^{\mathrm{DM}}_W | W)$, the second-order quantities $C^{\mathrm{AM}}_p(a, C^{\mathrm{AM}}_Q | W)$ and $C^{\mathrm{AM}}(\epsilon, C^{\mathrm{AM}}_Q | W)$ are defined for the additive Markovian case. In this problem, the variance

$$V(Q) := \sum_{y,x} Q(y|x) P_Q(x) \left(-\log Q(y|x) - H(Q)\right)^2 + 2 \sum_{z,y,x} Q(z|y) Q(y|x) P_Q(x) \left(-\log Q(z|y) - H(Q)\right)\left(-\log Q(y|x) - H(Q)\right)$$

plays an important role. By using these quantities, $C^{\mathrm{AM}}_p(a, C^{\mathrm{AM}}_Q | W)$ and $C^{\mathrm{AM}}(\epsilon, C^{\mathrm{AM}}_Q | W)$ are calculated in the additive Markovian case as follows.

Theorem 4: The relations

$$C^{\mathrm{AM}}_p(a, C^{\mathrm{AM}}_Q | W) = G\left(a / \sqrt{V(Q)}\right), \qquad C^{\mathrm{AM}}(\epsilon, C^{\mathrm{AM}}_Q | W) = \sqrt{V(Q)} \, G^{-1}(\epsilon)$$

hold.

IV. SECOND ORDER CODING RATE IN GAUSSIAN CHANNEL

In this section, we consider the case of additive Gaussian noise. In this case, both the input system and the output system are given by $\mathbb{R}$, and the output distribution $W_x(y)$ is given by $\frac{1}{\sqrt{2\pi N}} e^{-\frac{(y-x)^2}{2N}}$ for a given noise level $N$. If there is no restriction on the input signal, the capacity diverges. Hence, it is natural to consider the cost constraint. Consider the cost function $c(x) := x^2$ and the maximum cost $S$. Then, the maximum mutual information $\max_{P : \mathrm{E}_P x^2 \le S} I(P, W)$ is attained when $P$ is equal to $P_M(x) := \frac{1}{\sqrt{2\pi S}} e^{-\frac{x^2}{2S}}$. In this case,

$$D(W_x \| W_{P_M}) = \frac{1}{2} \log\left(1 + \frac{S}{N}\right) + \frac{\frac{x^2}{N} - \frac{S}{N}}{2\left(1 + \frac{S}{N}\right)}. \tag{13}$$

Then, the capacity is known to be [9], [11]

$$C^{\mathrm{G}}_{N,S} = \max_{P : \mathrm{E}_P x^2 \le S} I(P, W) = \frac{1}{2} \log\left(1 + \frac{S}{N}\right).$$

Since

$$\int_{-\infty}^{\infty} \left( \log \frac{W_x(y)}{W_{P_M}(y)} - D(W_x \| W_{P_M}) \right)^2 W_x(y) \, dy = \frac{\frac{S^2}{N^2} + \frac{2x^2}{N}}{2\left(1 + \frac{S}{N}\right)^2},$$

$V_{P_M,W}$ is calculated as

$$V_{P_M,W} = \frac{\frac{S^2}{N^2} + \frac{2S}{N}}{2\left(1 + \frac{S}{N}\right)^2}.$$

Since the cardinality of $\mathbb{R}$ is infinite, the assumption of Section II does not hold. That is, we cannot apply Theorem 3. However, the following theorem holds.

Theorem 5: Define the quantities $C^{\mathrm{G}}_p(a, C^{\mathrm{G}}_{N,S} | N, S)$ and $C^{\mathrm{G}}(\epsilon, C^{\mathrm{G}}_{N,S} | N, S)$ in the same way as (8) and (9). Then,

$$C^{\mathrm{G}}_p(a, C^{\mathrm{G}}_{N,S} | N, S) = G\left(a / \sqrt{V_{P_M,W}}\right), \qquad C^{\mathrm{G}}(\epsilon, C^{\mathrm{G}}_{N,S} | N, S) = \sqrt{V_{P_M,W}} \, G^{-1}(\epsilon).$$

V. COMPARISON WITH THE GALLAGER BOUND

At first glance, the Gallager bound [1] seems to work well for evaluating the average error probability, even when the transmission length is close to $n C^{\mathrm{DM}}_W$. This is because this bound gives the optimal exponential rate when the coding rate is greater than the critical rate. In this section, we clarify whether the present evaluation or the Gallager bound [1] provides a better evaluation when the transmission length is close to $n C^{\mathrm{DM}}_W$.
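As a numerical cross-check of the Gaussian-channel quantities of Section IV, the following sketch evaluates the closed forms for $C^{\mathrm{G}}_{N,S}$ and $V_{P_M,W}$ and compares the per-input variance integral with a direct midpoint-rule quadrature. The parameter values (`S = 2`, `N = 1`, `x = 1.3`), the integration window, and the function names are illustrative assumptions rather than anything prescribed by the paper.

```python
import math

def gaussian_capacity(S, N):
    """C^G_{N,S} = (1/2) log(1 + S/N), in nats."""
    return 0.5 * math.log(1 + S / N)

def gaussian_dispersion(S, N):
    """V_{P_M,W} = (S^2/N^2 + 2S/N) / (2 (1 + S/N)^2)."""
    snr = S / N
    return (snr ** 2 + 2 * snr) / (2 * (1 + snr) ** 2)

def conditional_variance_closed(x, S, N):
    """Closed form of the variance integral for a fixed input x."""
    return (S ** 2 / N ** 2 + 2 * x ** 2 / N) / (2 * (1 + S / N) ** 2)

def conditional_variance_numeric(x, S, N, half_width=12.0, steps=20000):
    """Midpoint-rule quadrature of the same integral over x +/- half_width*sqrt(N)."""
    sigma = math.sqrt(N)
    lo = x - half_width * sigma
    dy = 2 * half_width * sigma / steps
    # D(W_x || W_{P_M}) from (13).
    D = gaussian_capacity(S, N) + (x ** 2 / N - S / N) / (2 * (1 + S / N))
    total = 0.0
    for k in range(steps):
        y = lo + (k + 0.5) * dy
        # log W_x(y) / W_{P_M}(y) for W_x = N(x, N) and W_{P_M} = N(0, S + N).
        llr = 0.5 * math.log(1 + S / N) - (y - x) ** 2 / (2 * N) + y ** 2 / (2 * (S + N))
        density = math.exp(-(y - x) ** 2 / (2 * N)) / math.sqrt(2 * math.pi * N)
        total += (llr - D) ** 2 * density * dy
    return total

S, N, x = 2.0, 1.0, 1.3  # hypothetical signal power, noise level, and input
print(gaussian_capacity(S, N), gaussian_dispersion(S, N))
print(conditional_variance_closed(x, S, N), conditional_variance_numeric(x, S, N))
```

Averaging the closed-form conditional variance over inputs with $\mathrm{E}\,x^2 = S$ replaces $x^2$ by $S$ and reproduces the expression for $V_{P_M,W}$.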
For this analysis, we describe the transmission length by $n C^{\mathrm{DM}}_W + \sqrt{n} R_2$. Let us compare the present evaluation with the Gallager bound, which is given by

$$\min_{\Phi : |\Phi| \le e^{nR}} P_{e,W^{\times n}}(\Phi) \le \min_P \min_{0 \le s \le 1} e^{n(Rs + \psi_P(s))}, \tag{14}$$

where

$$\psi_P(s) := \log \sum_y \left( \sum_x P(x) W_x(y)^{\frac{1}{1+s}} \right)^{1+s}.$$

Since the present evaluation is essentially based on Verdú-Han's method [14], this comparison can be regarded as a comparison between Verdú-Han's evaluation and the Gallager bound. Next, we substitute $n C^{\mathrm{DM}}_W + \sqrt{n} R_2$ into $nR$. Then,

$$\min_{0 \le s \le 1} e^{n(Rs + \psi_P(s))} = e^{n \min_{0 \le s \le 1} \left( C^{\mathrm{DM}}_W s + \frac{R_2}{\sqrt{n}} s + \psi_P(s) \right)}.$$

Taking the derivatives of $\psi_P(s)$, we obtain

$$\left. \frac{d\psi_P(s)}{ds} \right|_{s=0} = -I(P, W), \qquad \left. \frac{d^2\psi_P(s)}{ds^2} \right|_{s=0} = V_{P,W}.$$

When $C^{\mathrm{DM}}_W = I(P, W)$,

$$C^{\mathrm{DM}}_W s + \frac{R_2}{\sqrt{n}} s + \psi_P(s) \cong C^{\mathrm{DM}}_W s + \frac{R_2}{\sqrt{n}} s - I(P, W) s + \frac{V_{P,W}}{2} s^2 = \frac{R_2}{\sqrt{n}} s + \frac{V_{P,W}}{2} s^2 = \frac{V_{P,W}}{2} \left( s + \frac{R_2}{\sqrt{n} V_{P,W}} \right)^2 - \frac{R_2^2}{2 n V_{P,W}}.$$

Therefore, as is rigorously shown in the Appendix, when $R_2 < 0$,

$$\lim_{n \to \infty} n \min_{0 \le s \le 1} \left( C^{\mathrm{DM}}_W s + \frac{R_2}{\sqrt{n}} s + \psi_P(s) \right) = -\frac{R_2^2}{2 V_{P,W}}. \tag{15}$$

Next, we set $P$ as $P_{M-}$. Then, the Gallager bound yields

$$C^{\mathrm{DM}}_p(R_2, C^{\mathrm{DM}}_W | W) \le e^{-\frac{R_2^2}{2 V^-_W}} \tag{16}$$

for any $R_2 < 0$. That is, the gap between our evaluation and the Gallager bound is equal to the difference between $G\left(\frac{R_2}{\sqrt{V^-_W}}\right) = \int_{-\infty}^{R_2 / \sqrt{V^-_W}} \frac{1}{\sqrt{2\pi}} e^{-x^2/2} \, dx$ and $e^{-\frac{R_2^2}{2 V^-_W}}$. Although the former is smaller than the latter, both exponential rates coincide in the limit $R_2 \to -\infty$. Since we can consider that the Gallager bound gives the trivial bound for $R_2 > 0$, both evaluations are illustrated in Fig. 2.

[Fig. 2. Comparison between the present evaluation and the Gallager bound. The solid line indicates the Gallager bound, and the dotted line indicates the present evaluation. Horizontal axis: $R_2 / \sqrt{V^-_W}$ (with $V^-_W = V^+_W$), from $-4$ to $4$; vertical axis: probability, from 0 to 1.]

Next, we consider the same comparison for the additive Markovian case. The Gallager bound is given by

$$\min_{\Phi : |\Phi| \le e^{nR}} P_{e,W(Q)^n}(\Phi) \le \min_P \min_{0 \le s \le 1} e^{n(Rs + \psi_{Q,n}(s))},$$

where

$$\psi_{Q,n}(s) := -s \log d + \frac{1+s}{n} \log \left( \sum_{\vec{x}_n} Q^n(\vec{x}_n)^{\frac{1}{1+s}} \right).$$

Since the asymptotic first and second cumulants of the random variable $\log Q^n(\vec{x}_n)$ are $-H(Q)$ and $V(Q)$, we have

$$\log \left( \sum_{\vec{x}_n} Q^n(\vec{x}_n)^{1 + \frac{t}{\sqrt{n}}} \right) = -H(Q) t \sqrt{n} + \frac{V(Q)}{2} t^2 + o(t^2)$$

as $t \to 0$. Thus,

$$n \psi_{Q,n}\left(\frac{t}{\sqrt{n}}\right) = \left(-\log d + H(Q)\right) t \sqrt{n} + \frac{V(Q)}{2} t^2 + o(t^2).$$

Substituting $n C^{\mathrm{AM}}_Q + \sqrt{n} R_2$ and $\frac{t}{\sqrt{n}}$ into $nR$ and $s$, we have

$$n \left( C^{\mathrm{AM}}_Q s + \frac{R_2}{\sqrt{n}} s + \psi_{Q,n}(s) \right) = n \left( C^{\mathrm{AM}}_Q \frac{t}{\sqrt{n}} + \frac{R_2}{\sqrt{n}} \frac{t}{\sqrt{n}} + \psi_{Q,n}\left(\frac{t}{\sqrt{n}}\right) \right) = R_2 t + \frac{V(Q)}{2} t^2 + o(t^2) = \frac{V(Q)}{2} \left( t + \frac{R_2}{V(Q)} \right)^2 - \frac{R_2^2}{2 V(Q)} + o(t^2).$$

Therefore, when $R_2 < 0$, choosing $s = -\frac{R_2}{V(Q) \sqrt{n}}$, we obtain

$$\min_{\Phi : |\Phi| \le e^{n C^{\mathrm{AM}}_Q + \sqrt{n} R_2}} P_{e,W(Q)^n}(\Phi) \le e^{-\frac{R_2^2}{2 V(Q)}},$$

which has the same form as (16).

In both cases, when $-3 \le R_2 \le 2$, the difference is not so small. In such a case, it is better to use the present evaluation. That is, the Gallager bound does not give the best evaluation in this case. This conclusion is opposite to that for the exponential evaluation when the rate is greater than the critical rate. Han [5] calculated the exponential rate of the present bound, and found that it is worse than that of the Gallager bound (see footnote 1). Moreover, a similar conclusion was obtained in the LDPC case. Kabashima and Saad [13] compared the Gallager upper bound of the average error probability and the approximation of the average error probability by the replica method. That is, they compared both thresholds of the rate, i.e., both maximum transmission rates at which the respective error probability goes to zero.
In their study (Table 1 of [13]), they pointed out that there exists a non-negligible difference between these two thresholds in the LDPC case. This information may be helpful for discussing the performance of the Gallager bound.

Footnote 1: This description was provided in the original Japanese version, but not in the English translation.

VI. PROPERTIES OF $V^+_W$ AND $V^-_W$

A. Example

In this section, we consider a typical example in which $V^+_W$ is different from $V^-_W$. For this purpose, we choose two parameters $q_1, q_2 \in [0, 1]$ satisfying

$$0 \le 2 q_1 - q_2 \le 1, \qquad h(q_1) - \frac{h(q_2) + h(2 q_1 - q_2)}{2} \le -\log \max\{q_1, 1 - q_1\}, \tag{17}$$

where $h(x) := -x \log x - (1 - x) \log (1 - x)$. According to the following three conditions (i), (ii), and (iii), we define the five joint distributions $W_1$, $W_2$, $W_3$, $W_4$, and $W_5$ on two random variables $A = 0, 1$ and $B = 0, 1$. In the following, $Q^A$ ($Q^B$) denotes the marginal distribution of $Q$ concerning $A$ ($B$).

(i) Uniformity on $A$: All distributions are assumed to satisfy $W^A_i(0) = 1/2$.

(ii) Same marginal distribution on $B$ for $i = 1, 2$: The two random variables $A$ and $B$ are not independent in $W_1$ and $W_2$, but $W_1$ and $W_2$ have the same marginal distribution on $B$. That is,

$$W^B_1(0 | A = 0) = W^B_2(0 | A = 1) = q_2, \qquad W^B_1(0 | A = 1) = W^B_2(0 | A = 0) = 2 q_1 - q_2.$$

Thus, $W_1$ and $W_2$ satisfy $W^B_1(0) = W^B_2(0) = q_1$.

(iii) Independence between $A$ and $B$ for $i = 3, 4, 5$: Due to the condition (17), there exist two solutions for $x$ in the following equation because $d(x \| q_1)$ is monotone increasing in $(q_1, 1)$ and monotone decreasing in $(0, q_1)$:

$$h(q_1) - \frac{h(q_2) + h(2 q_1 - q_2)}{2} = d(x \| q_1),$$

where $d(x \| y) := x \log \frac{x}{y} + (1 - x) \log \frac{1 - x}{1 - y}$.
Letting $p_1$ and $p_2$ be these two solutions, we define three distributions $W_3$, $W_4$, and $W_5$, in which the two random variables $A$ and $B$ are independent, by

$$W^B_3(0) = p_1, \qquad W^B_4(0) = p_2, \qquad W^B_5(0) = q_1.$$

From the construction, we can check that

$$D(W_i \| W_5) = h(q_1) - \frac{h(q_2) + h(2 q_1 - q_2)}{2} \tag{18}$$

for $i = 1, 2, 3, 4$. Consider the subsets

$$\mathcal{Z}_0 := \{Q \mid Q^A(0) = 1/2\}, \qquad \mathcal{Z}_1 := \{Q \in \mathcal{Z}_0 \mid Q^B(0) = q_1\}, \qquad \mathcal{Z}_2 := \{Q \in \mathcal{Z}_0 \mid Q^B(0 | A = 0) = Q^B(0 | A = 1)\}.$$

Then, $\mathcal{Z}_1 \cap \mathcal{Z}_2 = \{W_5\}$. Hence, the relationship among $\mathcal{Z}_0$, $\mathcal{Z}_1$, $\mathcal{Z}_2$, $W_1$, $W_2$, $W_3$, $W_4$, and $W_5$ is shown in Fig. 3. Then, the following lemma holds.

Lemma 1:

$$\operatorname{argmin}_Q \max_{x=1,2} D(W_x \| Q) = \operatorname{argmin}_{Q \in \mathcal{Z}_1} \max_{x=1,2} D(W_x \| Q) \tag{19}$$

$$\operatorname{argmin}_Q \max_{x=3,4} D(W_x \| Q) = \operatorname{argmin}_{Q \in \mathcal{Z}_2} \max_{x=3,4} D(W_x \| Q). \tag{20}$$

[Fig. 3. $\mathcal{Z}_0$, $\mathcal{Z}_1$, $\mathcal{Z}_2$, $W_1$, $W_2$, $W_3$, $W_4$, and $W_5$.]

Therefore, (18) implies that

$$\operatorname{argmin}_Q \max_{x=1,2,3,4} D(W_x \| Q) = W_5$$

and

$$\min_Q \max_{x=1,2} D(W_x \| Q) = \min_{Q \in \mathcal{Z}_1} \max_{x=1,2} D(W_x \| Q) = h(q_1) - \frac{h(q_2) + h(2 q_1 - q_2)}{2},$$

$$\min_Q \max_{x=3,4} D(W_x \| Q) = \min_{Q \in \mathcal{Z}_2} \max_{x=3,4} D(W_x \| Q) = h(q_1) - \frac{h(q_2) + h(2 q_1 - q_2)}{2}.$$

That is, consistently with the formula $C^{\mathrm{DM}}_W = \min_Q \max_x D(W_x \| Q)$ of Section II, the capacity of the channel $x = 1, 2, 3, 4 \mapsto W_x$ is calculated as

$$C^{\mathrm{DM}}_W = \min_Q \max_{x=1,2,3,4} D(W_x \| Q) = h(q_1) - \frac{h(q_2) + h(2 q_1 - q_2)}{2}.$$

Then, the set $\mathcal{V}$ is given by the convex hull of $P = (1/2, 1/2, 0, 0)$ and $P' = \left(0, 0, \frac{q_1 - p_2}{p_1 - p_2}, \frac{q_1 - p_1}{p_2 - p_1}\right)$. Thus, $V_{\lambda P + (1 - \lambda) P', W} = \lambda V_{P,W} + (1 - \lambda) V_{P',W}$. When $V_{P,W} \le V_{P',W}$, we have $V^+_W = V_{P',W}$ and $V^-_W = V_{P,W}$. Otherwise, $V^+_W = V_{P,W}$ and $V^-_W = V_{P',W}$. Our numerical analysis (Fig. 4) suggests the relation $V_{P,W} \le V_{P',W}$.
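The construction of this example can be reproduced numerically. The sketch below builds $W_1, \ldots, W_5$ for one hypothetical parameter pair (`q1 = 0.3`, `q2 = 0.1`, which satisfies (17)), finds $p_1$ and $p_2$ by bisection, checks (18), and evaluates $V_{P,W}$ and $V_{P',W}$. The bisection routine, the parameter values, and all names are assumptions introduced for illustration; this is not the procedure used to produce the paper's figures.

```python
import math

def h(x):
    """Binary entropy in nats."""
    return -sum(t * math.log(t) for t in (x, 1 - x) if t > 0)

def d(x, y):
    """Binary relative entropy d(x||y) in nats, for 0 < y < 1."""
    out = 0.0
    if x > 0:
        out += x * math.log(x / y)
    if x < 1:
        out += (1 - x) * math.log((1 - x) / (1 - y))
    return out

def solve(target, q1, lo, hi):
    """Bisection for d(x||q1) = target on [lo, hi], where d(.||q1) is monotone."""
    increasing = d(hi, q1) > d(lo, q1)
    for _ in range(200):
        mid = (lo + hi) / 2
        if (d(mid, q1) < target) == increasing:
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2

def joint(b0_given_a0, b0_given_a1):
    """Joint law of (A, B) with uniform A, listed as (0,0), (0,1), (1,0), (1,1)."""
    return [0.5 * b0_given_a0, 0.5 * (1 - b0_given_a0),
            0.5 * b0_given_a1, 0.5 * (1 - b0_given_a1)]

def divergence_and_variance(w, q):
    """D(w||q) and the variance of log(w/q) under w."""
    terms = [(wi, math.log(wi / qi)) for wi, qi in zip(w, q) if wi > 0]
    D = sum(wi * llr for wi, llr in terms)
    return D, sum(wi * (llr - D) ** 2 for wi, llr in terms)

q1, q2 = 0.3, 0.1  # hypothetical parameters satisfying condition (17)
c = h(q1) - (h(q2) + h(2 * q1 - q2)) / 2   # right-hand side of (18)
p1 = solve(c, q1, q1, 1.0)                 # solution in (q1, 1)
p2 = solve(c, q1, 0.0, q1)                 # solution in (0, q1)

W5 = joint(q1, q1)
channel = [joint(q2, 2 * q1 - q2), joint(2 * q1 - q2, q2), joint(p1, p1), joint(p2, p2)]
stats = [divergence_and_variance(w, W5) for w in channel]

w3 = (q1 - p2) / (p1 - p2)   # weight of x = 3 in P'
w4 = (q1 - p1) / (p2 - p1)   # weight of x = 4 in P'
V_P = 0.5 * stats[0][1] + 0.5 * stats[1][1]
V_Pp = w3 * stats[2][1] + w4 * stats[3][1]
print(f"p1 = {p1:.4f}, p2 = {p2:.4f}, V_P = {V_P:.4f}, V_P' = {V_Pp:.4f}")
```

Both $P$ and $P'$ induce the output distribution $W_5$, so the variances are taken with respect to $W_5$ in both cases; sweeping `q1` with `q2 = 0.1` fixed gives the kind of comparison that Fig. 4 reports.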
[Fig. 4. Comparison between $V_1 = V_{P,W}$ (dotted line) and $V_2 = V_{P',W}$ (solid line), plotted against $q_1$ for $q_2 = 0.1$. The panels show $V_1, V_2$ and the difference $V_2 - V_1$.]

Proof of Lemma 1: For this proof, we define the maps $\mathcal{E}_A$ and $\mathcal{E}_B$ as

$$(\mathcal{E}_A Q)(A=a, B=b) := P^A(a)\, Q(B=b \mid A=a), \qquad (\mathcal{E}_B Q)(A=a, B=b) := P^B(b)\, Q(A=a \mid B=b),$$

where $P^A(0) = 1/2$ and $P^B(0) = q_1$. When the distribution $Q'$ satisfies $Q'^A = P^A$, the following Pythagorean-type equality holds:

$$D(Q'\|Q) = D(Q'\|\mathcal{E}_A(Q)) + D(\mathcal{E}_A(Q)\|Q). \tag{21}$$

Similarly, when the distribution $Q'$ satisfies $Q'^B = P^B$, the following Pythagorean-type equality holds:

$$D(Q'\|Q) = D(Q'\|\mathcal{E}_B(Q)) + D(\mathcal{E}_B Q\|Q). \tag{22}$$

Define

$$Q_{2k} := \underbrace{\mathcal{E}_B \circ \mathcal{E}_A \circ \cdots \circ \mathcal{E}_B \circ \mathcal{E}_A}_{2k}\, Q, \qquad Q_{2k+1} := \underbrace{\mathcal{E}_A \circ \mathcal{E}_B \circ \mathcal{E}_A \circ \cdots \circ \mathcal{E}_B \circ \mathcal{E}_A}_{2k+1}\, Q.$$

Then,

$$D(Q_{2k+1}\|Q_{2k}) = D(\mathcal{E}_A Q_{2k}\|\mathcal{E}_A Q_{2k-1}) \le D(Q_{2k}\|Q_{2k-1}),$$

and $D(Q_{2k}\|Q_{2k-1}) \le D(Q_{2k-1}\|Q_{2k-2})$. For any $Q' \in \mathcal{Z}_1$, we have

$$D(Q'\|Q) = D(Q'\|Q_n) + \sum_{k=1}^{n} D(Q_k\|Q_{k-1}).$$

Thus, $D(Q_k\|Q_{k-1})$ converges to zero. Therefore, there exists a distribution $Q_\infty$ such that $Q_k \to Q_\infty$. Hence,

$$D(Q'\|Q) = D(Q'\|Q_\infty) + \sum_{k=1}^{\infty} D(Q_k\|Q_{k-1}),$$

which implies (19). Further, for any $P_2 \in \mathcal{Z}_2$, we assume that $Q$ satisfies $Q^A = P^A$.
Since the concavity of $\log$ implies the inequality $\log \sum_a P^A(a) Q(B=b \mid A=a) \ge \sum_a P^A(a) \log Q(B=b \mid A=a)$, the following Pythagorean-type inequality holds:

$$\begin{aligned}
D(P_2\|Q) &= -H(P_2) - \sum_a \sum_b P_2^A(a) P_2^B(b) \log Q(a,b) \\
&= -H(P_2) - \sum_a P_2^A(a) \log Q^A(a) - \sum_a \sum_b P_2^A(a) P_2^B(b) \log Q(B=b \mid A=a) \\
&= -H(P_2) - \sum_a P_2^A(a) \log Q^A(a) - \sum_b P_2^B(b) \log Q^B(b) \\
&\qquad + \sum_b P_2^B(b) \log Q^B(b) - \sum_a \sum_b P_2^A(a) P_2^B(b) \log Q(B=b \mid A=a) \\
&= D(P_2\|Q^A \times Q^B) + \sum_b P_2^B(b) \log Q^B(b) - \sum_b P_2^B(b) \sum_a P_2^A(a) \log Q(B=b \mid A=a) \\
&= D(P_2\|Q^A \times Q^B) + \sum_b P_2^B(b) \left( \log \sum_a P^A(a) Q(B=b \mid A=a) - \sum_a P^A(a) \log Q(B=b \mid A=a) \right) \\
&\ge D(P_2\|Q^A \times Q^B).
\end{aligned} \tag{23}$$

Here we used $P_2 = P_2^A \times P_2^B$ and $P_2^A = P^A = Q^A$. Combination of (22) and (23) yields (20).

B. Additivity

The capacity satisfies the additivity condition. That is, for any two channels $\{W_x(y)\}$ and $\{W'_{x'}(y')\}$, the combined channel $\{(W \times W')_{x,x'}(y,y') = W_x(y) W'_{x'}(y')\}$ satisfies the following:

$$C^{\mathrm{DM}}_{W \times W'} = C^{\mathrm{DM}}_W + C^{\mathrm{DM}}_{W'}.$$

Similarly, as stated in the following lemma, $V^+_W$ and $V^-_W$ satisfy the additivity condition.

Lemma 2: The equations

$$V^+_{W \times W'} = V^+_W + V^+_{W'} \tag{24}$$

$$V^-_{W \times W'} = V^-_W + V^-_{W'} \tag{25}$$

hold.

Proof of Lemma 2: We choose the distributions $Q$ and $Q'$ as

$$Q \stackrel{\mathrm{def}}{=} \mathop{\mathrm{argmin}}_{Q} \max_x D(W_x\|Q), \qquad Q' \stackrel{\mathrm{def}}{=} \mathop{\mathrm{argmin}}_{Q'} \max_{x'} D(W'_{x'}\|Q').$$

Then,

$$Q \times Q' = \mathop{\mathrm{argmin}}_{Q''} \max_{x,x'} D(W_x \times W'_{x'}\|Q'').$$

Assume that a distribution $P$ with the random variables $x$ and $x'$ satisfies the following:

$$\sum_{x,x'} P(x,x')\, W_x \times W'_{x'} = Q \times Q', \tag{26}$$

$$I(P, W \times W') = C^{\mathrm{DM}}_W + C^{\mathrm{DM}}_{W'}. \tag{27}$$

Then, the marginal distributions $P_1$ and $P_2$ of $P$ concerning $x$ and $x'$ satisfy

$$I(P_1, W) = C^{\mathrm{DM}}_W, \qquad I(P_2, W') = C^{\mathrm{DM}}_{W'},$$

which implies

$$D(W_x\|Q) = C^{\mathrm{DM}}_W, \qquad D(W'_{x'}\|Q') = C^{\mathrm{DM}}_{W'}$$

for $x \in \mathrm{supp}(P_1)$ and $x' \in \mathrm{supp}(P_2)$, where $\mathrm{supp}(P)$ denotes the support of the distribution $P$. Hence,

$$\begin{aligned}
V_{P, W \times W'} &= \sum_{x,x'} P(x,x') \Bigg[ \sum_{y,y'} W_x(y) W'_{x'}(y') \left( \log\frac{W_x(y)}{Q(y)} + \log\frac{W'_{x'}(y')}{Q'(y')} \right)^2 - \big( D(W_x\|Q) + D(W'_{x'}\|Q') \big)^2 \Bigg] \\
&= \sum_{x,x'} P(x,x') \Bigg[ \sum_{y,y'} W_x(y) W'_{x'}(y') \Bigg( \left(\log\frac{W_x(y)}{Q(y)}\right)^2 + \left(\log\frac{W'_{x'}(y')}{Q'(y')}\right)^2 + 2 \log\frac{W_x(y)}{Q(y)} \log\frac{W'_{x'}(y')}{Q'(y')} \Bigg) \\
&\qquad\qquad - \big( D(W_x\|Q)^2 + D(W'_{x'}\|Q')^2 + 2 D(W_x\|Q) D(W'_{x'}\|Q') \big) \Bigg] \\
&= \sum_{x,x'} P(x,x') \Bigg[ \sum_{y,y'} W_x(y) W'_{x'}(y') \Bigg( \left(\log\frac{W_x(y)}{Q(y)}\right)^2 + \left(\log\frac{W'_{x'}(y')}{Q'(y')}\right)^2 \Bigg) - D(W_x\|Q)^2 - D(W'_{x'}\|Q')^2 \Bigg] \\
&= V_{P_1, W} + V_{P_2, W'}.
\end{aligned}$$

Therefore, when the conditions (26) and (27) are satisfied, the maximum of $V_{P, W \times W'}$ is equal to $V^+_W + V^+_{W'}$, which implies (24). Similarly, we obtain (25).

The same fact holds with the cost constraint. The capacity with the cost constraint satisfies the additivity condition. That is, for any two cost functions $c$ and $c'$ for the channels $\{W_x(y)\}$ and $\{W'_{x'}(y')\}$, the combined cost $(c + c')(x, x') \stackrel{\mathrm{def}}{=} c(x) + c'(x')$ satisfies the following:

$$C^{\mathrm{DM}}_{W \times W', c + c', K + K'} = C^{\mathrm{DM}}_{W, c, K} + C^{\mathrm{DM}}_{W', c', K'}.$$

The quantities $V^+_{W,c,K}$ and $V^-_{W,c,K}$ satisfy the additivity condition.

Lemma 3: The equations

$$V^+_{W \times W', c + c', K + K'} = V^+_{W,c,K} + V^+_{W',c',K'} \tag{28}$$

$$V^-_{W \times W', c + c', K + K'} = V^-_{W,c,K} + V^-_{W',c',K'} \tag{29}$$

hold.
This lemma can be proven in the same way as Lemma 2 by replacing the definitions of $Q$ and $Q'$ by

$$Q \stackrel{\mathrm{def}}{=} \mathop{\mathrm{argmin}}_{Q} \max_{P : \mathrm{E}_P c(x) \le K} \sum_x P(x) D(W_x\|Q), \qquad Q' \stackrel{\mathrm{def}}{=} \mathop{\mathrm{argmin}}_{Q'} \max_{P' : \mathrm{E}_{P'} c'(x') \le K'} \sum_{x'} P'(x') D(W'_{x'}\|Q').$$

VII. NOTATIONS OF THE INFORMATION SPECTRUM

A. Information Spectrum

In the present paper, we treat general channels. First, we focus on two sequences of probability spaces, $\{\mathcal{X}_n\}_{n=1}^\infty$ for the input signal and $\{\mathcal{Y}_n\}_{n=1}^\infty$ for the output signal, and a sequence of probability transition matrices $\mathbf{W} \stackrel{\mathrm{def}}{=} \{W^n(y|x)\}_{n=1}^\infty$. We also focus on a sequence of distributions on the input systems $\mathbf{P} \stackrel{\mathrm{def}}{=} \{P^n\}_{n=1}^\infty$. The asymptotic behavior of the logarithmic likelihood ratio between $W^n_x(y) \stackrel{\mathrm{def}}{=} W^n(y|x)$ and $W^n_{P^n}(y) \stackrel{\mathrm{def}}{=} \sum_{x \in \mathcal{X}_n} P^n(x) W^n(y|x)$ can be characterized by the following quantities:

$$I_p(R|\mathbf{P}, \mathbf{W}) \stackrel{\mathrm{def}}{=} \limsup_{n\to\infty} \sum_{x \in \mathcal{X}_n} P^n(x)\, W^n_x\left\{ \frac{1}{n} \log\frac{W^n_x(y)}{W^n_{P^n}(y)} < R \right\}$$

$$I(\epsilon|\mathbf{P}, \mathbf{W}) \stackrel{\mathrm{def}}{=} \sup\{R \mid I_p(R|\mathbf{P}, \mathbf{W}) \le \epsilon\} = \inf\{R \mid I_p(R|\mathbf{P}, \mathbf{W}) \ge \epsilon\}$$

for $0 \le \epsilon < 1$. Focusing on a sequence of distributions on the output systems $\mathbf{Q} \stackrel{\mathrm{def}}{=} \{Q^n\}_{n=1}^\infty$, we can define

$$J_p(R|\mathbf{P}, \mathbf{Q}, \mathbf{W}) \stackrel{\mathrm{def}}{=} \limsup_{n\to\infty} \sum_{x \in \mathcal{X}_n} P^n(x)\, W^n_x\left\{ \frac{1}{n} \log\frac{W^n_x(y)}{Q^n(y)} < R \right\}$$

$$J(\epsilon|\mathbf{P}, \mathbf{Q}, \mathbf{W}) \stackrel{\mathrm{def}}{=} \sup\{R \mid J_p(R|\mathbf{P}, \mathbf{Q}, \mathbf{W}) \le \epsilon\} = \inf\{R \mid J_p(R|\mathbf{P}, \mathbf{Q}, \mathbf{W}) \ge \epsilon\}$$

for $0 \le \epsilon < 1$.

When the channel $W^n$ is the $n$-th stationary discrete memoryless channel $W^{\times n}$ of $W(y|x)$ and the probability distribution $\mathbf{P} = \{P^n\}$ is the $n$-th independent and identical distribution $P^{\times n}$ of $P$, the law of large numbers guarantees that $I(\epsilon|\mathbf{P}, \mathbf{W})$ coincides with the mutual information $I(P, W) = \sum_{x,y} P(x) W_x(y) \log\frac{W_x(y)}{W_P(y)}$. For a more detailed description of the asymptotic behavior, we focus on the second order of the coding length $n^\beta$ for $\beta < 1$.
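The law-of-large-numbers statement above can be illustrated with a short simulation. The following sketch, written with only the Python standard library, computes $I(P, W)$ for a discrete channel given as a row-stochastic matrix and draws one sample of the normalized log-likelihood ratio $\frac{1}{n}\log\frac{W^{\times n}_x(y)}{W^{\times n}_{P}(y)}$ under i.i.d. inputs. The function names and the binary symmetric channel used as an example are assumptions for illustration only.

```python
import math
import random


def output_distribution(P, W):
    """Output law W_P(y) = sum_x P(x) W_x(y) for a row-stochastic matrix W."""
    return [sum(P[x] * W[x][y] for x in range(len(P)))
            for y in range(len(W[0]))]


def mutual_information(P, W):
    """I(P, W) in nats."""
    WP = output_distribution(P, W)
    return sum(P[x] * W[x][y] * math.log(W[x][y] / WP[y])
               for x in range(len(P)) for y in range(len(WP))
               if P[x] > 0 and W[x][y] > 0)


def normalized_log_likelihood_ratio(P, W, n, rng):
    """One sample of (1/n) log(W^n_x(y) / W^n_P(y)) for i.i.d. inputs."""
    WP = output_distribution(P, W)
    inputs = list(range(len(P)))
    outputs = list(range(len(WP)))
    total = 0.0
    for _ in range(n):
        x = rng.choices(inputs, weights=P)[0]
        y = rng.choices(outputs, weights=W[x])[0]
        total += math.log(W[x][y] / WP[y])
    return total / n


if __name__ == "__main__":
    p = 0.11  # crossover probability of an illustrative binary symmetric channel
    W = [[1 - p, p], [p, 1 - p]]
    P = [0.5, 0.5]
    rng = random.Random(0)
    print(mutual_information(P, W),
          normalized_log_likelihood_ratio(P, W, 20000, rng))
```

For large `n` the sampled value should lie close to `mutual_information(P, W)`; the size of the remaining fluctuation, of order $n^{-1/2}$ in this memoryless case, is what the second-order analysis quantifies.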
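In order to characterize the coefficient of the second order $n^\beta$, we introduce the following quantities:

$$I_p(R_2, R_1|\mathbf{P}, \mathbf{W}) \stackrel{\mathrm{def}}{=} \limsup_{n\to\infty} \sum_{x \in \mathcal{X}_n} P^n(x)\, W^n_x\left\{ \frac{1}{n^\beta} \left( \log\frac{W^n_x(y)}{W^n_{P^n}(y)} - nR_1 \right) < R_2 \right\}$$

$$I(\epsilon, R_1|\mathbf{P}, \mathbf{W}) \stackrel{\mathrm{def}}{=} \sup\{R_2 \mid I_p(R_2, R_1|\mathbf{P}, \mathbf{W}) \le \epsilon\} = \inf\{R_2 \mid I_p(R_2, R_1|\mathbf{P}, \mathbf{W}) \ge \epsilon\}$$

for $0 \le \epsilon < 1$. Similarly, $J_p(R_2, R_1|\mathbf{P}, \mathbf{Q}, \mathbf{W})$ and $J(\epsilon, R_1|\mathbf{P}, \mathbf{Q}, \mathbf{W})$ are defined for $0 \le \epsilon < 1$. When $\mathbf{W}$ is $\mathbf{W}^\times = \{W^{\times n}\}$ and $\mathbf{P}$ is $\mathbf{P}^\times = \{P^{\times n}\}$, the second order of the coding length is $n^{1/2}$, and the central limit theorem guarantees that $\frac{1}{n^{1/2}} \left( \log\frac{W^n_x(y)}{W^n_{P^n}(y)} - nI(P, W) \right)$ asymptotically obeys the Gaussian distribution with expectation 0 and variance

$$V_{P,W} \stackrel{\mathrm{def}}{=} \sum_x P(x) \sum_y W_x(y) \left( \log\frac{W_x(y)}{W_P(y)} - I(P, W) \right)^2.$$

Therefore, using the distribution function $G$ of the standard Gaussian distribution, we can express the above quantities as follows:

$$I(\epsilon, I(P, W)|\mathbf{P}^\times, \mathbf{W}^\times) = \sqrt{V_{P,W}}\, G^{-1}(\epsilon). \tag{30}$$

In the case of additive channels, we focus on the limiting behavior of the entropy rate of the distributions $\mathbf{Q} = \{Q^n\}_{n=1}^\infty$ describing the additive noise. Similar to the above, we define the following:

$$H_p(R|\mathbf{Q}) \stackrel{\mathrm{def}}{=} \liminf_{n\to\infty} Q^n\left\{ -\frac{1}{n} \log Q^n(x) < R \right\}$$

$$H(\epsilon|\mathbf{Q}) \stackrel{\mathrm{def}}{=} \sup\{R \mid H_p(R|\mathbf{Q}) \le \epsilon\} = \inf\{R \mid H_p(R|\mathbf{Q}) \ge \epsilon\}$$

$$H_p(R_2, R_1|\mathbf{Q}) \stackrel{\mathrm{def}}{=} \liminf_{n\to\infty} Q^n\left\{ \frac{1}{n^\beta} \left( -\log Q^n(x) - nR_1 \right) < R_2 \right\}$$

$$H(\epsilon, R_1|\mathbf{Q}) \stackrel{\mathrm{def}}{=} \sup\{R_2 \mid H_p(R_2, R_1|\mathbf{Q}) \le \epsilon\} = \inf\{R_2 \mid H_p(R_2, R_1|\mathbf{Q}) \ge \epsilon\}$$

for $0 \le \epsilon < 1$. As is discussed in Section VII of [6], when $\mathbf{Q}$ is given by a Markovian process $Q(y|x)$, the relationships

$$H(\epsilon|\mathbf{Q}) = H(Q) \tag{31}$$

$$H(\epsilon, H(Q)|\mathbf{Q}) = \sqrt{V(Q)}\, G^{-1}(\epsilon) \tag{32}$$

$$H_p(R_2, H(Q)|\mathbf{Q}) = G\left( R_2 / \sqrt{V(Q)} \right) \tag{33}$$

hold with $\beta = 1/2$.

B. Stochastic limits

In order to treat the relationship between the above quantities, we consider the limit superior in probability $\text{p-}\limsup_{n\to\infty}$ and the limit inferior in probability $\text{p-}\liminf_{n\to\infty}$, which are defined by

$$\text{p-}\limsup_{n\to\infty} Z_n \big| P_n \stackrel{\mathrm{def}}{=} \inf\left\{ a \,\Big|\, \lim_{n\to\infty} P_n\{Z_n > a\} = 0 \right\}$$

$$\text{p-}\liminf_{n\to\infty} Z_n \big| P_n \stackrel{\mathrm{def}}{=} \sup\left\{ a \,\Big|\, \lim_{n\to\infty} P_n\{Z_n < a\} = 0 \right\}.$$

In particular, when $\text{p-}\limsup_{n\to\infty} Z_n | P_n = \text{p-}\liminf_{n\to\infty} Z_n | P_n = a$, we write

$$\text{p-}\lim_{n\to\infty} Z_n \big| P_n = a.$$

The concept $\text{p-}\liminf_{n\to\infty}$ can be generalized as

$$\epsilon\text{-p-}\liminf_{n\to\infty} Z_n \big| P_n \stackrel{\mathrm{def}}{=} \sup\left\{ a \,\Big|\, \limsup_{n\to\infty} P_n\{Z_n < a\} \le \epsilon \right\}.$$

From the definitions, we can check the following properties:

$$\epsilon\text{-p-}\liminf_{n\to\infty} (Z_n + Y_n) \big| P_n \ge \epsilon\text{-p-}\liminf_{n\to\infty} Z_n \big| P_n + \text{p-}\liminf_{n\to\infty} Y_n \big| P_n \tag{34}$$

$$\epsilon\text{-p-}\liminf_{n\to\infty} (Z_n + Y_n) \big| P_n \le \epsilon\text{-p-}\liminf_{n\to\infty} Z_n \big| P_n + \text{p-}\limsup_{n\to\infty} Y_n \big| P_n. \tag{35}$$

As shown by Han [5], the relation

$$\text{p-}\liminf_{n\to\infty} \frac{1}{n^\alpha} \log\frac{P_n(x)}{P'_n(x)} \Big| P_n \ge 0 \tag{36}$$

holds for $\alpha > 0$ and any two sequences $\mathbf{P} = \{P_n\}$ and $\mathbf{P}' = \{P'_n\}$ of distributions with the variable $x$. By using this concept, $I(\epsilon|\mathbf{P}, \mathbf{W})$, $J(\epsilon|\mathbf{P}, \mathbf{Q}, \mathbf{W})$, $I(\epsilon, R_1|\mathbf{P}, \mathbf{W})$, and $J(\epsilon, R_1|\mathbf{P}, \mathbf{Q}, \mathbf{W})$ are characterized by

$$I(\epsilon|\mathbf{P}, \mathbf{W}) = \epsilon\text{-p-}\liminf_{n\to\infty} \frac{1}{n} \log\frac{W^n_x(y)}{W^n_{P^n}(y)} \Big| \mathrm{P}_{P^n, W^n}$$

$$J(\epsilon|\mathbf{P}, \mathbf{Q}, \mathbf{W}) = \epsilon\text{-p-}\liminf_{n\to\infty} \frac{1}{n} \log\frac{W^n_x(y)}{Q^n(y)} \Big| \mathrm{P}_{P^n, W^n}$$

$$I(\epsilon, R_1|\mathbf{P}, \mathbf{W}) = \epsilon\text{-p-}\liminf_{n\to\infty} \frac{1}{n^\beta} \left( \log\frac{W^n_x(y)}{W^n_{P^n}(y)} - nR_1 \right) \Big| \mathrm{P}_{P^n, W^n}$$

$$J(\epsilon, R_1|\mathbf{P}, \mathbf{Q}, \mathbf{W}) = \epsilon\text{-p-}\liminf_{n\to\infty} \frac{1}{n^\beta} \left( \log\frac{W^n_x(y)}{Q^n(y)} - nR_1 \right) \Big| \mathrm{P}_{P^n, W^n}.$$

Substituting $W^n_{P^n}$ and $Q^n$ into $P_n$ and $P'_n$ in (36), and using (34), we obtain

$$I(\epsilon|\mathbf{P}, \mathbf{W}) \le J(\epsilon|\mathbf{P}, \mathbf{Q}, \mathbf{W}), \qquad I(\epsilon, R_1|\mathbf{P}, \mathbf{W}) \le J(\epsilon, R_1|\mathbf{P}, \mathbf{Q}, \mathbf{W}).$$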
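Informally, the $\epsilon$-p-$\liminf$ of a sequence $Z_n$ is the asymptotic $\epsilon$-quantile of $Z_n$, and in the i.i.d. memoryless case the central limit theorem turns this quantile into $\sqrt{V_{P,W}}\, G^{-1}(\epsilon)$ as in (30). The sketch below is a rough Monte Carlo illustration under assumptions not made in the paper: a binary symmetric channel with crossover probability `p`, the uniform input distribution, natural logarithms, and finite `n` and `trials` standing in for the limit.

```python
import math
import random
from statistics import NormalDist


def bsc_information(p):
    """I(P, W) in nats for a binary symmetric channel with uniform input."""
    return math.log(2) + p * math.log(p) + (1 - p) * math.log(1 - p)


def bsc_variance(p):
    """V_{P,W} for the same channel: variance of log(W_x(y) / W_P(y))."""
    return p * (1 - p) * math.log((1 - p) / p) ** 2


def gaussian_second_order(variance, eps):
    """sqrt(V) * G^{-1}(eps), with G the standard Gaussian distribution function."""
    return math.sqrt(variance) * NormalDist().inv_cdf(eps)


def empirical_eps_quantile(p, n, eps, trials, rng):
    """Empirical eps-quantile of Z_n = (log-likelihood ratio - n I) / sqrt(n)."""
    info = bsc_information(p)
    samples = []
    for _ in range(trials):
        # For a BSC with uniform input, the ratio depends only on the flip count.
        flips = sum(1 for _ in range(n) if rng.random() < p)
        log_ratio = ((n - flips) * math.log(2 * (1 - p))
                     + flips * math.log(2 * p))
        samples.append((log_ratio - n * info) / math.sqrt(n))
    samples.sort()
    return samples[int(eps * trials)]


if __name__ == "__main__":
    rng = random.Random(0)
    print(empirical_eps_quantile(0.11, 400, 0.1, 2000, rng),
          gaussian_second_order(bsc_variance(0.11), 0.1))
```

The two printed numbers should agree only roughly, since the simulated variable is lattice-valued and `n` is finite; the sketch is meant to convey the meaning of the definition, not to estimate the limit precisely.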
Since $1 - H_p(R|\mathbf{Q}) = \liminf_{n\to\infty} Q^n\left\{ \frac{1}{n} \log Q^n(x) < -R \right\}$, $H(\epsilon|\mathbf{Q})$ is characterized as

$$-H(\epsilon|\mathbf{Q}) = -\inf\{R \mid H_p(R|\mathbf{Q}) \ge \epsilon\} = \sup\{-R \mid 1 - H_p(R|\mathbf{Q}) \le 1 - \epsilon\} = (1-\epsilon)\text{-p-}\liminf_{n\to\infty} \frac{1}{n} \log Q^n(x) \Big| Q^n. \tag{37}$$

Similarly,

$$-H(\epsilon, R_1|\mathbf{Q}) = (1-\epsilon)\text{-p-}\liminf_{n\to\infty} \frac{1}{n^\beta} \left( \log Q^n(x) + nR_1 \right) \Big| Q^n. \tag{38}$$

In the following, we discuss the relationship between the above-mentioned quantities and channel capacities.

VIII. GENERAL ASYMPTOTIC FORMULAS

A. General case

Next, we consider the $\epsilon$ capacity and its related quantity, which are defined by

$$C_p(R|\mathbf{W}) \stackrel{\mathrm{def}}{=} \inf_{\{\Phi_n\}_{n=1}^\infty} \left\{ \limsup_{n\to\infty} P_{e,W^n}(\Phi_n) \,\Big|\, \liminf_{n\to\infty} \frac{1}{n} \log|\Phi_n| \ge R \right\}$$

$$C(\epsilon|\mathbf{W}) \stackrel{\mathrm{def}}{=} \sup_{\{\Phi_n\}_{n=1}^\infty} \left\{ \liminf_{n\to\infty} \frac{1}{n} \log|\Phi_n| \,\Big|\, \limsup_{n\to\infty} P_{e,W^n}(\Phi_n) \le \epsilon \right\}.$$

Concerning these quantities, the following general asymptotic formulas hold.

Theorem 6: (Verdú & Han [14], Hayashi & Nagaoka [15]) The relations

$$C_p(R|\mathbf{W}) = \inf_{\mathbf{P}} \lim_{\gamma \downarrow 0} I_p(R - \gamma|\mathbf{P}, \mathbf{W}) = \inf_{\mathbf{P}} \sup_{\mathbf{Q}} \lim_{\gamma \downarrow 0} J_p(R - \gamma|\mathbf{P}, \mathbf{Q}, \mathbf{W}) \tag{39}$$

$$C(\epsilon|\mathbf{W}) = \sup_{\mathbf{P}} I(\epsilon|\mathbf{P}, \mathbf{W}) = \sup_{\mathbf{P}} \inf_{\mathbf{Q}} J(\epsilon|\mathbf{P}, \mathbf{Q}, \mathbf{W}) \tag{40}$$

hold for $0 \le \epsilon < 1$.

Remark 2: Historically, Verdú & Han [14] proved the first equation in (40). Hayashi & Nagaoka [15] established the second equation in (40) with $\epsilon = 0$ for the first time, even for the classical case, although their main topic was the quantum case. The relation (39) is proven for the first time in this paper.

Next, we proceed to the second-order coding rate. As a generalization of (2) and (3), we define the following:

$$C_p(R_2, R_1|\mathbf{W}) \stackrel{\mathrm{def}}{=} \inf_{\{\Phi_n\}_{n=1}^\infty} \left\{ \limsup_{n\to\infty} P_{e,W^n}(\Phi_n) \,\Big|\, \liminf_{n\to\infty} \frac{1}{n^\beta} \left( \log|\Phi_n| - nR_1 \right) \ge R_2 \right\} \tag{41}$$

$$C(\epsilon, R_1|\mathbf{W}) \stackrel{\mathrm{def}}{=} \sup_{\{\Phi_n\}_{n=1}^\infty} \left\{ \liminf_{n\to\infty} \frac{1}{n^\beta} \left( \log|\Phi_n| - nR_1 \right) \,\Big|\, \limsup_{n\to\infty} P_{e,W^n}(\Phi_n) \le \epsilon \right\}. \tag{42}$$

Similar to Theorem 6, the following general formulas for the second-order coding rate hold.
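Theorem 7: The relations

$$C_p(R_2, R_1|\mathbf{W}) = \inf_{\mathbf{P}} \lim_{\gamma \downarrow 0} I_p(R_2 - \gamma, R_1|\mathbf{P}, \mathbf{W}) = \inf_{\mathbf{P}} \sup_{\mathbf{Q}} \lim_{\gamma \downarrow 0} J_p(R_2 - \gamma, R_1|\mathbf{P}, \mathbf{Q}, \mathbf{W}) \tag{43}$$

$$C(\epsilon, R_1|\mathbf{W}) = \sup_{\mathbf{P}} I(\epsilon, R_1|\mathbf{P}, \mathbf{W}) = \sup_{\mathbf{P}} \inf_{\mathbf{Q}} J(\epsilon, R_1|\mathbf{P}, \mathbf{Q}, \mathbf{W}) \tag{44}$$

hold for $0 \le \epsilon < 1$.

Indeed, Theorem 7 has greater significance than a mere generalization. This theorem provides a unified viewpoint concerning the second-order asymptotic rate in channel coding and has the following merits. First, it shortens the proof of Theorem 3. Second, it enables us to extend Theorem 3 to the case of a cost constraint. Third, it yields the extension to the Gaussian noise case, which has continuous input signals. Fourth, it allows us to extend the same treatment to the Markovian case with additive noise.

B. Cost constraint

We focus on a sequence of cost functions $\mathbf{c} = \{c_n\}_{n=1}^\infty$, where $c_n$ is a function from $\mathcal{X}_n$ to $\mathbb{R}$. In this case, all alphabets are assumed to belong to the set

$$\mathcal{X}_{n,c,K} \stackrel{\mathrm{def}}{=} \{ x \in \mathcal{X}_n \mid c_n(x) \le nK \}.$$

That is, our code $\{\Phi_n\}$ is assumed to satisfy $\mathrm{supp}(\Phi_n) \subset \mathcal{X}_{n,c,K}$. Then, the capacities with the cost constraint are given by

$$C_p(R|\mathbf{W}, \mathbf{c}, K) \stackrel{\mathrm{def}}{=} \inf_{\{\Phi_n\}_{n=1}^\infty} \left\{ \limsup_{n\to\infty} P_{e,W^n}(\Phi_n) \,\Big|\, \liminf_{n\to\infty} \frac{1}{n} \log|\Phi_n| \ge R,\ \mathrm{supp}(\Phi_n) \subset \mathcal{X}_{n,c,K} \right\}$$

$$C(\epsilon|\mathbf{W}, \mathbf{c}, K) \stackrel{\mathrm{def}}{=} \sup_{\{\Phi_n\}_{n=1}^\infty} \left\{ \liminf_{n\to\infty} \frac{1}{n} \log|\Phi_n| \,\Big|\, \limsup_{n\to\infty} P_{e,W^n}(\Phi_n) \le \epsilon,\ \mathrm{supp}(\Phi_n) \subset \mathcal{X}_{n,c,K} \right\}$$

$$C_p(R_2, R_1|\mathbf{W}, \mathbf{c}, K) \stackrel{\mathrm{def}}{=} \inf_{\{\Phi_n\}_{n=1}^\infty} \left\{ \limsup_{n\to\infty} P_{e,W^n}(\Phi_n) \,\Big|\, \liminf_{n\to\infty} \frac{1}{n^\beta} \left( \log|\Phi_n| - nR_1 \right) \ge R_2,\ \mathrm{supp}(\Phi_n) \subset \mathcal{X}_{n,c,K} \right\} \tag{45}$$

$$C(\epsilon, R_1|\mathbf{W}, \mathbf{c}, K) \stackrel{\mathrm{def}}{=} \sup_{\{\Phi_n\}_{n=1}^\infty} \left\{ \liminf_{n\to\infty} \frac{1}{n^\beta} \left( \log|\Phi_n| - nR_1 \right) \,\Big|\, \limsup_{n\to\infty} P_{e,W^n}(\Phi_n) \le \epsilon,\ \mathrm{supp}(\Phi_n) \subset \mathcal{X}_{n,c,K} \right\}. \tag{46}$$

Concerning these quantities, the following general asymptotic formulas hold.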
Theorem 8: (Han [5], Hayashi & Nagaoka [15]) The relations

$$C_p(R|\mathbf{W}, \mathbf{c}, K) = \inf_{\mathbf{P} : \mathrm{supp}(P^n) \subset \mathcal{X}_{n,c,K}} \lim_{\gamma \downarrow 0} I_p(R - \gamma|\mathbf{P}, \mathbf{W}) = \inf_{\mathbf{P}} \sup_{\mathbf{Q}} \lim_{\gamma \downarrow 0} J_p(R - \gamma|\mathbf{P}, \mathbf{Q}, \mathbf{W}) \tag{47}$$

$$C(\epsilon|\mathbf{W}, \mathbf{c}, K) = \sup_{\mathbf{P} : \mathrm{supp}(P^n) \subset \mathcal{X}_{n,c,K}} I(\epsilon|\mathbf{P}, \mathbf{W}) = \sup_{\mathbf{P} : \mathrm{supp}(P^n) \subset \mathcal{X}_{n,c,K}} \inf_{\mathbf{Q}} J(\epsilon|\mathbf{P}, \mathbf{Q}, \mathbf{W}) \tag{48}$$

hold for $0 \le \epsilon < 1$.

Remark 3: Historically, Han [5] proved the first equation in (48). Hayashi & Nagaoka [15] established the second equation in (48) with $\epsilon = 0$ for the first time, even for the classical case, although their main topic was the quantum case. The relation (47) is proven for the first time in this paper.

Similar to Theorem 7, the following general formulas for the second-order coding rate hold.

Theorem 9: The relations

$$C_p(R_2, R_1|\mathbf{W}, \mathbf{c}, K) = \inf_{\mathbf{P} : \mathrm{supp}(P^n) \subset \mathcal{X}_{n,c,K}} \lim_{\gamma \downarrow 0} I_p(R_2 - \gamma, R_1|\mathbf{P}, \mathbf{W}) = \inf_{\mathbf{P} : \mathrm{supp}(P^n) \subset \mathcal{X}_{n,c,K}} \sup_{\mathbf{Q}} \lim_{\gamma \downarrow 0} J_p(R_2 - \gamma, R_1|\mathbf{P}, \mathbf{Q}, \mathbf{W}) \tag{49}$$

$$C(\epsilon, R_1|\mathbf{W}, \mathbf{c}, K) = \sup_{\mathbf{P} : \mathrm{supp}(P^n) \subset \mathcal{X}_{n,c,K}} I(\epsilon, R_1|\mathbf{P}, \mathbf{W}) = \sup_{\mathbf{P} : \mathrm{supp}(P^n) \subset \mathcal{X}_{n,c,K}} \inf_{\mathbf{Q}} J(\epsilon, R_1|\mathbf{P}, \mathbf{Q}, \mathbf{W}) \tag{50}$$

hold for $0 \le \epsilon < 1$.

The above theorems can be regarded as special cases of Theorems 6 and 7 by substituting the set $\mathcal{X}_{n,c,K}$ into the set $\mathcal{X}_n$. Hence, it is sufficient to show Theorems 6 and 7.

C. Additive case

Next, we consider the case where the channel is given as a sequence of additive channels $\mathbf{W}(\mathbf{Q}) = \{W^n(Q^n)(y|x) = Q^n(y - x)\}$ on the set $\mathcal{X}_n$ with the cardinality $d$. Verdú & Han proved the following theorem.

Theorem 10: (Verdú & Han [14]) The relations

$$C_p(R|\mathbf{W}(\mathbf{Q})) = 1 - \lim_{\gamma \downarrow 0} H_p(\log d - R + \gamma|\mathbf{Q}) \tag{51}$$

$$C(\epsilon|\mathbf{W}(\mathbf{Q})) = \log d - H(1 - \epsilon|\mathbf{Q}) \tag{52}$$

hold for $0 \le \epsilon < 1$. This theorem and (55) imply (54).

Remark 4: Verdú & Han proved (52) in the case of $\epsilon = 0$ at (7.2) in [14]. The other cases are proven for the first time in this paper.
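As a small worked instance of (52), consider memoryless additive noise on a single alphabet of size $d$ with addition modulo $d$. Taking i.i.d. noise as a special case of the Markovian setting in (31), so that $H(\epsilon|\mathbf{Q}) = H(Q)$, formula (52) reduces to $\log d - H(Q)$ per channel use. The sketch below checks this against a direct mutual-information computation with the uniform input; the noise distribution, the modular addition, and the function names are illustrative assumptions.

```python
import math


def entropy(Q):
    """Shannon entropy H(Q) in nats."""
    return -sum(q * math.log(q) for q in Q if q > 0)


def additive_capacity(Q):
    """log d - H(Q) for an additive-noise channel on Z_d with i.i.d. noise Q."""
    return math.log(len(Q)) - entropy(Q)


def mutual_information_uniform_input(Q):
    """I(P, W) for W_x(y) = Q[(y - x) mod d] and the uniform input P."""
    size = len(Q)
    uniform = 1.0 / size  # the output law is also uniform in this case
    total = 0.0
    for x in range(size):
        for y in range(size):
            w = Q[(y - x) % size]
            if w > 0:
                total += uniform * w * math.log(w / uniform)
    return total


if __name__ == "__main__":
    noise = [0.7, 0.2, 0.1]  # hypothetical noise distribution on Z_3
    print(additive_capacity(noise), mutual_information_uniform_input(noise))
```

The agreement of the two printed values reflects the remark in the proof that equality is attained by the uniform input distribution.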
Similar to Theorem 10, the following formulas for the second-order coding rate hold for general additive channels.

Theorem 11: The relations

$$C_p(R_2, R_1|\mathbf{W}) = 1 - \lim_{\gamma \downarrow 0} H_p(-R_2 + \gamma, \log d - R_1|\mathbf{Q}) \tag{53}$$

$$C(\epsilon, R_1|\mathbf{W}) = -H(1 - \epsilon, \log d - R_1|\mathbf{Q}) \tag{54}$$

hold for $0 \le \epsilon < 1$.

Hence, we obtain Theorem 4 from (32) and (33). Now, using Theorems 6 and 7, we prove Theorems 10 and 11. Since $W^n_x(y) = Q^n(y - x)$, we have

$$\begin{aligned}
I(\epsilon|\mathbf{P}, \mathbf{W}) &= \epsilon\text{-p-}\liminf_{n\to\infty} \frac{1}{n} \log\frac{W^n_x(y)}{W^n_{P^n}(y)} \Big| \mathrm{P}_{P^n, W^n} \\
&\le \epsilon\text{-p-}\liminf_{n\to\infty} \frac{1}{n} \log W^n_x(y) \Big| \mathrm{P}_{P^n, W^n} + \text{p-}\limsup_{n\to\infty} \left( -\frac{1}{n} \log W^n_{P^n}(y) \right) \Big| \mathrm{P}_{P^n, W^n} && (55) \\
&\le \epsilon\text{-p-}\liminf_{n\to\infty} \frac{1}{n} \log Q^n(x) \Big| Q^n + \log d = \log d - H(1 - \epsilon|\mathbf{Q}), && (56)
\end{aligned}$$

where (55) and (56) follow from (35) and (37), respectively. Since the equality holds when $P^n$ is the uniform distribution, we obtain

$$\sup_{\mathbf{P}} I(\epsilon|\mathbf{P}, \mathbf{W}) = \log d - H(1 - \epsilon|\mathbf{Q}),$$

which implies (52). Similarly, we can show (54). Since $\text{p-}\limsup_{n\to\infty} \left( -\frac{1}{n} \log W^n_{P^n}(y) \right) \big| W^n_{P^n} \le \log d$, we have

$$\begin{aligned}
\limsup_{n\to\infty} \sum_{x \in \mathcal{X}_n} P^n(x)\, W^n_x\left\{ \frac{1}{n} \log\frac{W^n_x(y)}{W^n_{P^n}(y)} < R \right\}
&\ge \limsup_{n\to\infty} \sum_{x \in \mathcal{X}_n} P^n(x)\, W^n_x\left\{ \frac{1}{n} \log W^n_x(y) + \log d < R \right\} \\
&= \limsup_{n\to\infty} Q^n\left\{ \frac{1}{n} \log Q^n(x) < R - \log d \right\} \\
&= 1 - \liminf_{n\to\infty} Q^n\left\{ -\frac{1}{n} \log Q^n(x) < \log d - R \right\},
\end{aligned}$$

which implies that $I_p(R|\mathbf{P}, \mathbf{W}) \ge 1 - H_p(\log d - R|\mathbf{Q})$. Thus, we obtain (51). Similarly, we obtain (53).

Remark 5: When the sets $\mathcal{X}_n$ and $\mathcal{Y}_n$ are given as general probability spaces with general $\sigma$-fields $\sigma(\mathcal{X}_n)$ and $\sigma(\mathcal{Y}_n)$, the above formulation can be extended with the following definition. The $n$-th channel $W^n$ is given by a real-valued function on $\mathcal{X}_n$ and $\sigma(\mathcal{Y}_n)$ satisfying the following conditions: (i) for any $x \in \mathcal{X}_n$, $W^n_x$ is a probability measure on $\mathcal{Y}_n$; (ii) for any $F \in \sigma(\mathcal{Y}_n)$, $W^n_\cdot(F)$ is a measurable function on $\mathcal{X}_n$. $\mathbf{P}$ and $\mathbf{Q}$ take values in sequences of probability measures on $\mathcal{X}_n$ and $\mathcal{Y}_n$, respectively.
Then, the summations $\sum_{x \in \mathcal{X}_n} P^n(x)$ and $\sum_{y \in \mathcal{Y}_n} W^n_x(y)$ are replaced by $\int_{\mathcal{X}_n} P^n(dx)$ and $\int_{\mathcal{Y}_n} W^n_x(dy)$, respectively. For any distribution $Q$ on $\mathcal{Y}_n$, the function $\frac{W^n_x(y)}{Q(y)}$ is replaced by the inverse of the Radon-Nikodym derivative $\frac{dQ}{dW^n_x}(y)$ of $Q$ with respect to $W^n_x$. In the above definitions, $\inf_{\mathbf{P}}$, $\sup_{\mathbf{P}}$, $\inf_{\mathbf{Q}}$, and $\sup_{\mathbf{Q}}$ are given as the infimum and supremum among all sequences of probability measures on $\{\mathcal{X}_n\}_{n=1}^\infty$ and $\{\mathcal{Y}_n\}_{n=1}^\infty$. The following proof is also valid in this extension.

IX. PROOF OF THE GENERAL FORMULAS FOR THE SECOND-ORDER CODING RATE

In this section, we prove Theorems 6 and 7. That is, for the reader's convenience, we present a proof for the first-order coding rate, as well as that for the second-order coding rate.

A. Direct Part

We prove the direct part, i.e., the inequalities

$$C_p(R|\mathbf{W}) \le \inf_{\mathbf{P}} \lim_{\gamma \downarrow 0} I_p(R - \gamma|\mathbf{P}, \mathbf{W}) \tag{57}$$

$$C(\epsilon|\mathbf{W}) \ge \sup_{\mathbf{P}} I(\epsilon|\mathbf{P}, \mathbf{W}) \tag{58}$$

$$C_p(R_2, R_1|\mathbf{W}) \le \inf_{\mathbf{P}} \lim_{\gamma \downarrow 0} I_p(R_2 - \gamma, R_1|\mathbf{P}, \mathbf{W}) \tag{59}$$

$$C(\epsilon, R_1|\mathbf{W}) \ge \sup_{\mathbf{P}} I(\epsilon, R_1|\mathbf{P}, \mathbf{W}). \tag{60}$$

For arbitrary $R$, using the random coding method, we show that there exists a sequence of codes $\{\Phi_n\}$ such that $\frac{1}{n} \log|\Phi_n| \to R$ and $\limsup_{n\to\infty} P_{e,W^n}(\Phi_n) \le I_p(R|\mathbf{P}, \mathbf{W})$. This method is essentially the same as Verdú & Han's method [14]. First, we set the size of $\Phi_{n,Z,R}$ to be $N_n = e^{nR - n^{\beta/2}}$, with the random variable $Z$. We generate the encoder $\phi_Z$, in which $x \in \mathcal{X}_n$ is chosen as $\phi_Z(i)$ with the probability $P^n(x)$. Here, the choice of $\phi_Z(i)$ is independent of the choice of the other $\phi_Z(j)$. The decoder $\{\mathcal{D}_{i,Z}\}_{i=1}^{N_n}$ is chosen by the following inductive method:

$$\mathcal{D}_{i,Z,R} \stackrel{\mathrm{def}}{=} \left\{ \frac{1}{n} \log\frac{W^n_{\phi_Z(i)}(y)}{W^n_{P^n}(y)} > R \right\} \setminus \bigcup_{j=1}^{i-1} \mathcal{D}_{j,Z}.$$
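Thus, the average error probability is evaluated as

$$\begin{aligned}
\mathrm{E}_Z P_{e,W^n}(\Phi_{n,Z,R}) &\le \mathrm{E}_Z \frac{1}{N_n} \sum_{i=1}^{N_n} W^n_{\phi_Z(i)}\left( \left\{ \frac{1}{n} \log\frac{W^n_{\phi_Z(i)}(y)}{W^n_{P^n}(y)} > R \right\}^c \cup \bigcup_{j=1}^{i-1} \left\{ \frac{1}{n} \log\frac{W^n_{\phi_Z(j)}(y)}{W^n_{P^n}(y)} > R \right\} \right) \\
&\le \mathrm{E}_Z \frac{1}{N_n} \sum_{i=1}^{N_n} W^n_{\phi_Z(i)}\left\{ \frac{1}{n} \log\frac{W^n_{\phi_Z(i)}(y)}{W^n_{P^n}(y)} \le R \right\} + \mathrm{E}_Z \frac{1}{N_n} \sum_{i=1}^{N_n} \sum_{j=1}^{i-1} W^n_{\phi_Z(i)}\left\{ \frac{1}{n} \log\frac{W^n_{\phi_Z(j)}(y)}{W^n_{P^n}(y)} \ge R \right\} \\
&= \sum_x P^n(x)\, W^n_x\left\{ \frac{1}{n} \log\frac{W^n_x(y)}{W^n_{P^n}(y)} \le R \right\} + \frac{1}{N_n} \sum_{i=1}^{N_n} \sum_{j=1}^{i-1} \mathrm{E}_Z \left( \mathrm{E}_Z W^n_{\phi_Z(i)} \right)\left\{ \frac{1}{n} \log\frac{W^n_{\phi_Z(j)}(y)}{W^n_{P^n}(y)} \ge R \right\}.
\end{aligned}$$

The second term is evaluated as

$$\begin{aligned}
\frac{1}{N_n} \sum_{i=1}^{N_n} \sum_{j=1}^{i-1} \mathrm{E}_Z \left( \mathrm{E}_Z W^n_{\phi_Z(i)} \right)\left\{ \frac{1}{n} \log\frac{W^n_{\phi_Z(j)}(y)}{W^n_{P^n}(y)} \ge R \right\}
&= \frac{1}{N_n} \frac{N_n(N_n - 1)}{2} \sum_x P^n(x)\, W^n_{P^n}\left\{ \frac{1}{n} \log\frac{W^n_x(y)}{W^n_{P^n}(y)} \ge R \right\} \\
&= \frac{N_n - 1}{2} \sum_x P^n(x)\, W^n_{P^n}\left\{ W^n_x(y) e^{-nR} \ge W^n_{P^n}(y) \right\} \\
&\le \frac{N_n}{2} e^{-nR} \le \frac{e^{-n^{\beta/2}}}{2} \to 0.
\end{aligned}$$

Since $\liminf_{n\to\infty} \sum_x P^n(x)\, W^n_x\left\{ \frac{1}{n} \log\frac{W^n_x(y)}{W^n_{P^n}(y)} \le R \right\} = I_p(R|\mathbf{P}, \mathbf{W})$, (35) implies that $\liminf_{n\to\infty} \mathrm{E}_Z P_{e,W^n}(\Phi_{n,Z}) \le I_p(R|\mathbf{P}, \mathbf{W})$. Thus, the convergence $\frac{1}{n} \log N_n \to R$ implies the inequality $C_p(R|\mathbf{W}) \le \inf_{\mathbf{P}} I_p(R|\mathbf{P}, \mathbf{W})$.

Next, in order to prove (57), for any sequence $\mathbf{P}$, we construct a code $\Phi_n$ such that $\limsup_{n\to\infty} P_{e,W^n}(\Phi_n) \le \lim_{\gamma \downarrow 0} I_p(R_0 - \gamma|\mathbf{P}, \mathbf{W})$. For any $k$, we choose the integer $N_k$ such that $\mathrm{E}_Z P_{e,W^n}(\Phi_{n,Z,R_0 - 1/k}) \le I_p(R_0 - 1/k|\mathbf{P}, \mathbf{W}) + 1/k$ for all $n \ge N_k$. Then, for any $n$, we choose $k(n)$ to be the maximum $k$ satisfying $n \ge N_k$. Then, $k(n) \to \infty$ as $n \to \infty$. Thus, $\mathrm{E}_Z P_{e,W^n}(\Phi_{n,Z,R_0 - 1/k(n)})$ goes to $\lim_{\gamma \downarrow 0} I_p(R_0 - \gamma|\mathbf{P}, \mathbf{W})$, and $\frac{1}{n} \log|\Phi_{n,Z,R_0 - 1/k(n)}|$ goes to $R_0$. Hence, we obtain the inequality $C_p(R_0|\mathbf{W}) \le \inf_{\mathbf{P}} \lim_{\gamma \downarrow 0} I_p(R_0 - \gamma|\mathbf{P}, \mathbf{W})$, i.e., (57).

For proving (59), we choose $N_n = e^{nR_1 + n^\beta R_2 - n^{\beta/2}}$.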
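Substituting $nR_1 + n^\beta R_2$ into $nR$ in the above discussion, we denote the code $\Phi_{n,Z,R}$ by $\Phi_{n,Z,R_1,R_2}$. Then,

$$\mathrm{E}_Z P_{e,W^n}(\Phi_{n,Z,R_1,R_2}) \le \sum_x P^n(x)\, W^n_x\left\{ \frac{1}{n^\beta} \left( \log\frac{W^n_x(y)}{W^n_{P^n}(y)} - nR_1 \right) < R_2 \right\} + \frac{N_n}{2} e^{-(nR_1 + n^\beta R_2)}.$$

Since $\frac{N_n}{2} e^{-(nR_1 + n^\beta R_2)} \le \frac{e^{-n^{\beta/2}}}{2} \to 0$ and $\frac{1}{n^\beta} \log\frac{N_n}{e^{nR_1}} \to R_2$, we obtain the inequality $C_p(R_2, R_1|\mathbf{W}) \le \inf_{\mathbf{P}} I_p(R_2, R_1|\mathbf{P}, \mathbf{W})$. For any $k$, we choose the integer $N_k$ such that $\mathrm{E}_Z P_{e,W^n}(\Phi_{n,Z,R_1,R_2 - 1/k}) \le I_p(R_2 - 1/k, R_1|\mathbf{P}, \mathbf{W}) + 1/k$ for all $n \ge N_k$. Then, defining $k(n)$ similarly, we obtain $\mathrm{E}_Z P_{e,W^n}(\Phi_{n,Z,R_1,R_2 - 1/k(n)}) \to \lim_{\gamma \downarrow 0} I_p(R_2 - \gamma, R_1|\mathbf{P}, \mathbf{W})$ and $\frac{1}{n^\beta} \log\frac{|\Phi_{n,Z,R_1,R_2 - 1/k(n)}|}{e^{nR_1}} \to R_2$. Hence, we obtain the inequality $C_p(R_2, R_1|\mathbf{W}) \le \inf_{\mathbf{P}} \lim_{\gamma \downarrow 0} I_p(R_2 - \gamma, R_1|\mathbf{P}, \mathbf{W})$, i.e., (59).

For an arbitrary number $R < \sup_{\mathbf{P}} I(\epsilon|\mathbf{P}, \mathbf{W})$, there exists a sequence of input distributions $\mathbf{P}$ such that $I_p(R|\mathbf{P}, \mathbf{W}) \le \epsilon$. Therefore, the inequality (58) holds. Similarly, we can show the inequality (60).

B. Converse part

Next, we prove the converse part, i.e.,

$$C_p(R|\mathbf{W}) \ge \inf_{\mathbf{P}} \sup_{\mathbf{Q}} \lim_{\gamma \downarrow 0} J_p(R - \gamma|\mathbf{P}, \mathbf{Q}, \mathbf{W}) \tag{61}$$

$$C(\epsilon|\mathbf{W}) \le \sup_{\mathbf{P}} \inf_{\mathbf{Q}} J(\epsilon|\mathbf{P}, \mathbf{Q}, \mathbf{W}) \tag{62}$$

$$C_p(R_2, R_1|\mathbf{W}) \ge \inf_{\mathbf{P}} \sup_{\mathbf{Q}} \lim_{\gamma \downarrow 0} J_p(R_2 - \gamma, R_1|\mathbf{P}, \mathbf{Q}, \mathbf{W}) \tag{63}$$

$$C(\epsilon, R_1|\mathbf{W}) \le \sup_{\mathbf{P}} \inf_{\mathbf{Q}} J(\epsilon, R_1|\mathbf{P}, \mathbf{Q}, \mathbf{W}), \tag{64}$$

which complete our proof, because the other inequalities

$$\inf_{\mathbf{P}} \lim_{\gamma \downarrow 0} I_p(R - \gamma|\mathbf{P}, \mathbf{W}) \le \inf_{\mathbf{P}} \sup_{\mathbf{Q}} \lim_{\gamma \downarrow 0} J_p(R - \gamma|\mathbf{P}, \mathbf{Q}, \mathbf{W})$$

$$\sup_{\mathbf{P}} I(\epsilon|\mathbf{P}, \mathbf{W}) \ge \sup_{\mathbf{P}} \inf_{\mathbf{Q}} J(\epsilon|\mathbf{P}, \mathbf{Q}, \mathbf{W})$$

$$\inf_{\mathbf{P}} \lim_{\gamma \downarrow 0} I_p(R_2 - \gamma, R_1|\mathbf{P}, \mathbf{W}) \le \inf_{\mathbf{P}} \sup_{\mathbf{Q}} \lim_{\gamma \downarrow 0} J_p(R_2 - \gamma, R_1|\mathbf{P}, \mathbf{Q}, \mathbf{W})$$

$$\sup_{\mathbf{P}} I(\epsilon, R_1|\mathbf{P}, \mathbf{W}) \ge \sup_{\mathbf{P}} \inf_{\mathbf{Q}} J(\epsilon, R_1|\mathbf{P}, \mathbf{Q}, \mathbf{W})$$

are trivial based on their definitions. In the converse part, we essentially employ Hayashi-Nagaoka's [15] method. We choose an arbitrary sequence of codes $\{\Phi_n\}_{n=1}^\infty$.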
Let $R$ be $\liminf_{n\to\infty} \frac{1}{n} \log|\Phi_n|$. Assume that the code $\Phi_n$ consists of the triplet $(N_n, \phi, \{\mathcal{D}_i\}_{i=1}^{N_n})$. Then, for any sequence of output distributions $\mathbf{Q} = \{Q^n\}_{n=1}^\infty$ and any real $\gamma > 0$, the inequality

$$P_{e,W^n}(\Phi_n) \ge \sum_{x \in \mathcal{X}_n} P_{\Phi_n}(x)\, W^n_x\left\{ \frac{1}{n} \log\frac{W^n_x(y)}{Q^n(y)} < R - \gamma \right\} - \frac{e^{n(R - \gamma)}}{N_n} \tag{65}$$

holds, where $P_{\Phi_n}$ is the empirical distribution for the $|\Phi_n|$ points $(\phi(1), \ldots, \phi(N_n))$. Since $\frac{e^{n(R - \gamma)}}{N_n} \to 0$, the relation $\liminf_{n\to\infty} P_{e,W^n}(\Phi_n) \ge J_p(R - \gamma|\mathbf{P}', \mathbf{Q}, \mathbf{W})$ holds for any $\mathbf{Q}$, where $\mathbf{P}' = \{P_{\Phi_n}\}$. Thus, $\liminf_{n\to\infty} P_{e,W^n}(\Phi_n) \ge \sup_{\mathbf{Q}} \lim_{\gamma \downarrow 0} J_p(R - \gamma|\mathbf{P}', \mathbf{Q}, \mathbf{W})$. Therefore, $\liminf_{n\to\infty} P_{e,W^n}(\Phi_n) \ge \inf_{\mathbf{P}} \sup_{\mathbf{Q}} \lim_{\gamma \downarrow 0} J_p(R - \gamma|\mathbf{P}, \mathbf{Q}, \mathbf{W})$, which implies (61).

Now, assume that $\limsup_{n\to\infty} P_{e,W^n}(\Phi_n) = \epsilon$. Since $\frac{e^{n(R - \gamma)}}{N_n} \to 0$, (65) implies that $R - \gamma \le J(\epsilon|\mathbf{P}', \mathbf{Q}, \mathbf{W})$ for any $\mathbf{Q}$. Thus, $R - \gamma \le \sup_{\mathbf{P}} \inf_{\mathbf{Q}} J(\epsilon|\mathbf{P}, \mathbf{Q}, \mathbf{W})$. Since $\gamma$ is an arbitrary positive real number, $R \le \sup_{\mathbf{P}} \inf_{\mathbf{Q}} J(\epsilon|\mathbf{P}, \mathbf{Q}, \mathbf{W})$, which implies (62).

Next, consider the case in which $\liminf_{n\to\infty} \frac{1}{n^\beta} \log\frac{|\Phi_n|}{e^{nR_1}} = R_2$. Replacing $R - \gamma$ by $R_1 + n^{\beta - 1}(R_2 - \gamma)$ in (65), we obtain $\frac{e^{nR_1 + n^\beta (R_2 - \gamma)}}{N_n} \to 0$. Thus, $\liminf_{n\to\infty} P_{e,W^n}(\Phi_n) \ge \inf_{\mathbf{P}} \sup_{\mathbf{Q}} \lim_{\gamma \downarrow 0} J_p(R_2 - \gamma, R_1|\mathbf{P}, \mathbf{Q}, \mathbf{W})$, which implies (63). With the same replacement of $R - \gamma$ by $R_1 + n^{\beta - 1}(R_2 - \gamma)$ in (65), we can show (64) in the same way as (62).

The inequality (65) is shown as follows.
We focus on the inequalities

$$\begin{aligned}
W^n_{\phi(i)}(\mathcal{D}_i) - e^{nR'} Q^n(\mathcal{D}_i) &\le W^n_{\phi(i)}\left( \{ W^n_{\phi(i)}(y) - e^{nR'} Q^n(y) \ge 0 \} \right) - e^{nR'} Q^n\left( \{ W^n_{\phi(i)}(y) - e^{nR'} Q^n(y) \ge 0 \} \right) \\
&\le W^n_{\phi(i)}\left( \{ W^n_{\phi(i)}(y) - e^{nR'} Q^n(y) \ge 0 \} \right) = W^n_{\phi(i)}\left\{ \frac{1}{n} \log\frac{W^n_{\phi(i)}(y)}{Q^n(y)} \ge R' \right\},
\end{aligned}$$

where the first inequality follows from the fact that any two distributions $P$ and $Q$ and any positive constant $a$ satisfy

$$\max_{\mathcal{D}} \left[ P(\mathcal{D}) - aQ(\mathcal{D}) \right] = P\{ P(\omega) - aQ(\omega) \ge 0 \} - aQ\{ P(\omega) - aQ(\omega) \ge 0 \}.$$

Thus,

$$\begin{aligned}
1 - P_{e,W^n}(\Phi_n) &= \frac{1}{N_n} \sum_{i=1}^{N_n} W^n_{\phi(i)}(\mathcal{D}_i) \le \frac{1}{N_n} \sum_{i=1}^{N_n} \left[ e^{nR'} Q^n(\mathcal{D}_i) + W^n_{\phi(i)}\left\{ \frac{1}{n} \log\frac{W^n_{\phi(i)}(y)}{Q^n(y)} \ge R' \right\} \right] \\
&= \frac{e^{nR'}}{N_n} + 1 - \sum_{x \in \mathcal{X}_n} P_{\Phi_n}(x)\, W^n_x\left\{ \frac{1}{n} \log\frac{W^n_x(y)}{Q^n(y)} < R' \right\},
\end{aligned}$$

which implies (65).

X. PROOF OF THE STATIONARY MEMORYLESS CASE

A. Proof of Theorem 2

In this subsection, using Theorem 7, we prove Theorem 2 when the cardinality $|\mathcal{X}|$ is finite. For this purpose, we show the following relations in the stationary discrete memoryless case, i.e., the case in which $W^n_x(y) = W^{\times n}_x(y) \stackrel{\mathrm{def}}{=} \prod_{i=1}^n W_{x_i}(y_i)$ for $x = (x_1, \ldots, x_n)$ and $y = (y_1, \ldots, y_n)$. In this section, abbreviating $C^{\mathrm{DM}}_W$ as $C$, we will prove that

$$\inf_{\mathbf{P}} \lim_{\gamma \downarrow 0} I_p(R_2 - \gamma, C|\mathbf{P}, \mathbf{W}) \le \begin{cases} G\left( R_2 / \sqrt{V^+_W} \right) & R_2 \ge 0 \\ G\left( R_2 / \sqrt{V^-_W} \right) & R_2 < 0 \end{cases} \tag{66}$$

and

$$\inf_{\mathbf{P}} \sup_{\mathbf{Q}} \lim_{\gamma \downarrow 0} J_p(R_2 - \gamma, C|\mathbf{P}, \mathbf{Q}, \mathbf{W}) \ge \begin{cases} G\left( R_2 / \sqrt{V^+_W} \right) & R_2 \ge 0 \\ G\left( R_2 / \sqrt{V^-_W} \right) & R_2 < 0. \end{cases} \tag{67}$$

Showing both inequalities and using Theorem 7, we obtain

$$C_p(R_2, R_1|\mathbf{W}) = \begin{cases} G\left( R_2 / \sqrt{V^+_W} \right) & R_2 \ge 0 \\ G\left( R_2 / \sqrt{V^-_W} \right) & R_2 < 0. \end{cases} \tag{68}$$

Since the r.h.s. of (68) is continuous with respect to $\epsilon$, (68) implies that

$$C(\epsilon, R_1|\mathbf{W}) = \begin{cases} \sqrt{V^+_W}\, G^{-1}(\epsilon) & \epsilon \ge 1/2 \\ \sqrt{V^-_W}\, G^{-1}(\epsilon) & \epsilon < 1/2. \end{cases}$$

That is, we can show Theorem 2. In fact, when $\mathbf{P}$ is the i.i.d. of $P_{M+}$ or $P_{M-}$, $I(\epsilon, C|\mathbf{P}, \mathbf{W})$ is equal to $\sqrt{V^+_W}\, G^{-1}(\epsilon)$ or $\sqrt{V^-_W}\, G^{-1}(\epsilon)$.
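Thus, (66) holds. Therefore, the achievability part (the direct part) of Theorem 2 holds, and it is sufficient to prove the converse part (67). We focus on the set $T_n$ of empirical distributions with $n$ outcomes. Its cardinality $|T_n|$ is evaluated as $|T_n| \le (n+1)^{|\mathcal{X}|}$. In this proof, we use the distribution

$$Q^n_U \stackrel{\mathrm{def}}{=} \sum_{P \in T_n} \frac{1}{|T_n| + 1} (W_P)^{\times n} + \frac{1}{|T_n| + 1} Q_M^{\times n}$$

and the sets

$$\mathcal{V}_\epsilon \stackrel{\mathrm{def}}{=} \{ P \mid I(P, W) \ge C - \epsilon \}, \qquad \Omega_n \stackrel{\mathrm{def}}{=} \{ x \in \mathcal{X}^n \mid \mathrm{ep}(x) \in \mathcal{V}_\epsilon \},$$

where $\mathrm{ep}(x)$ is the empirical distribution of $x \in \mathcal{X}^n$.

[Fig. 5. Limiting behavior of $\frac{1}{\sqrt{n}} \left( \log\frac{W^{\times n}_x(y)}{W^{\times n}_{P^n}(y)} - nC \right)$ and the Gaussian distribution with the variance $V^-_W$]

Since $Q^n_U(y) \ge \frac{1}{|T_n| + 1} (W_{\mathrm{ep}(x)})^{\times n}(y)$ and $Q^n_U(y) \ge \frac{1}{|T_n| + 1} Q_M^{\times n}(y)$,

$$\begin{aligned}
&\mathrm{P}_{P^n, W^{\times n}}\left\{ \frac{1}{\sqrt{n}} \left( \log\frac{W^{\times n}_x(y)}{Q^n_U(y)} - nC \right) \le R \right\} \\
&= \sum_{x \in \Omega_n} P^n(x)\, \mathrm{P}_{W^{\times n}_x}\left\{ \frac{1}{\sqrt{n}} \left( \log\frac{W^{\times n}_x(y)}{Q^n_U(y)} - nC \right) \le R \right\} + \sum_{x \in \Omega_n^c} P^n(x)\, \mathrm{P}_{W^{\times n}_x}\left\{ \frac{1}{\sqrt{n}} \left( \log\frac{W^{\times n}_x(y)}{Q^n_U(y)} - nC \right) \le R \right\} \\
&\ge \sum_{x \in \Omega_n} P^n(x)\, \mathrm{P}_{W^{\times n}_x}\left\{ \frac{1}{\sqrt{n}} \left( \log\frac{W^{\times n}_x(y)}{(Q_M)^{\times n}(y)} + \log(|T_n| + 1) - nC \right) \le R \right\} \\
&\quad + \sum_{x \in \Omega_n^c} P^n(x)\, \mathrm{P}_{W^{\times n}_x}\left\{ \frac{1}{\sqrt{n}} \left( \log\frac{W^{\times n}_x(y)}{(W_{\mathrm{ep}(x)})^{\times n}(y)} + \log(|T_n| + 1) - nC \right) \le R \right\}.
\end{aligned}$$

When $x \in \Omega_n^c$, the variance and the expectation under $W^{\times n}_x$ satisfy

$$\mathrm{V}_{W^{\times n}_x}\left[ \frac{1}{\sqrt{n}} \left( \log\frac{W^{\times n}_x(y)}{(W_{\mathrm{ep}(x)})^{\times n}(y)} - nC \right) \right] = V_{\mathrm{ep}(x), W} < \max_P V_{P,W},$$

$$\mathrm{E}_{W^{\times n}_x}\left[ \frac{1}{\sqrt{n}} \left( \log\frac{W^{\times n}_x(y)}{(W_{\mathrm{ep}(x)})^{\times n}(y)} + \log(|T_n| + 1) - nC \right) \right] = \frac{1}{\sqrt{n}} \left( nI(\mathrm{ep}(x), W) + \log(|T_n| + 1) - nC \right) \le \frac{\log(|T_n| + 1)}{\sqrt{n}} - \epsilon\sqrt{n}.$$

Thus, the Chebyshev inequality implies

$$\mathrm{P}_{W^{\times n}_x}\left\{ \frac{1}{\sqrt{n}} \left( \log\frac{W^{\times n}_x(y)}{(W_{\mathrm{ep}(x)})^{\times n}(y)} + \log(|T_n| + 1) - nC \right) \le R \right\} \ge 1 - \frac{\max_P V_{P,W}}{\left( R + \epsilon\sqrt{n} - \frac{\log(|T_n| + 1)}{\sqrt{n}} \right)^2}.$$

Define the quantity $V'_{P,W} \stackrel{\mathrm{def}}{=} \mathrm{E}_P \mathrm{E}_{W_x} \left( \log\frac{W_x(y)}{Q_M(y)} - D(W_x\|Q_M) \right)^2$.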
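When $x \in \Omega_n$, since the random variable $\log\frac{W^{\times n}_x(y)}{(Q_M)^{\times n}(y)} = \sum_{i=1}^n \log\frac{W_{x_i}(y_i)}{Q_M(y_i)}$ has the variance $nV'_{\mathrm{ep}(x), W}$,

$$\begin{aligned}
&\mathrm{P}_{W^{\times n}_x}\left\{ \frac{1}{\sqrt{n}} \left( \log\frac{W^{\times n}_x(y)}{(Q_M)^{\times n}(y)} + \log(|T_n| + 1) - nC \right) \le R \right\} \\
&\ge \mathrm{P}_{W^{\times n}_x}\left\{ \frac{1}{\sqrt{n}} \left( \log\frac{W^{\times n}_x(y)}{(Q_M)^{\times n}(y)} + \log(|T_n| + 1) - nI(\mathrm{ep}(x), W) \right) \le R \right\} \\
&\cong G\left( \frac{R}{\sqrt{V'_{\mathrm{ep}(x), W}}} \right) \ge \min_{P \in \mathcal{V}_\epsilon} G\left( \frac{R}{\sqrt{V'_{P,W}}} \right).
\end{aligned}$$

Since the random variable $\log\frac{W^{\times n}_x(y)}{(Q_M)^{\times n}(y)} = \sum_{i=1}^n \log\frac{W_{x_i}(y_i)}{Q_M(y_i)}$ is written as a combination of a finite number of random variables $\left\{ \log\frac{W_x(y)}{Q_M(y)} \right\}_{x \in \mathcal{X}}$, the above convergence is uniform. That is, for any $\delta > 0$, there exists $N > 0$ such that, for $n \ge N$,

$$\mathrm{P}_{W^{\times n}_x}\left\{ \frac{1}{\sqrt{n}} \left( \log\frac{W^{\times n}_x(y)}{(Q_M)^{\times n}(y)} + \log(|T_n| + 1) - nC \right) \le R \right\} \ge \min_{P \in \mathcal{V}_\epsilon} G\left( \frac{R}{\sqrt{V'_{P,W}}} \right) - \delta.$$

Therefore,

$$\begin{aligned}
\mathrm{P}_{P^n, W^{\times n}}\left\{ \frac{1}{\sqrt{n}} \left( \log\frac{W^{\times n}_x(y)}{Q^n_U(y)} - nC \right) \le R \right\}
&\ge P^n(\Omega_n^c) \left( 1 - \frac{\max_P V_{P,W}}{\left( R + \epsilon\sqrt{n} - \frac{\log(|T_n| + 1)}{\sqrt{n}} \right)^2} \right) + P^n(\Omega_n) \left( \min_{P \in \mathcal{V}_\epsilon} G\left( \frac{R}{\sqrt{V'_{P,W}}} \right) - \delta \right) \\
&\ge \min_{P \in \mathcal{V}_\epsilon} G\left( \frac{R}{\sqrt{V'_{P,W}}} \right) - \delta
\end{aligned}$$

for sufficiently large $n$, where $\Omega_n^c$ is the complement of $\Omega_n$. Thus,

$$\limsup_{n\to\infty} \mathrm{P}_{P^n, W^{\times n}}\left\{ \frac{1}{\sqrt{n}} \left( \log\frac{W^{\times n}_x(y)}{Q^n_U(y)} - nC \right) \le R \right\} \ge \min_{P \in \mathcal{V}_\epsilon} G\left( \frac{R}{\sqrt{V_{P,W}}} \right) - \delta.$$

Since $\delta > 0$ and $\epsilon > 0$ are arbitrary, when $\mathbf{Q} = \{Q^n_U\}$,

$$J_p(R, C|\mathbf{P}, \mathbf{Q}, \mathbf{W}) = \limsup_{n\to\infty} \mathrm{P}_{P^n, W^{\times n}}\left\{ \frac{1}{\sqrt{n}} \left( \log\frac{W^{\times n}_x(y)}{Q^n_U(y)} - nC \right) \le R \right\} \ge \min_{P \in \mathcal{V}} G\left( \frac{R}{\sqrt{V_{P,W}}} \right) = \begin{cases} G\left( R / \sqrt{V^+_W} \right) & R \ge 0 \\ G\left( R / \sqrt{V^-_W} \right) & R < 0, \end{cases}$$

which implies (67) because of the continuity of the r.h.s.

B. Proof of Theorem 3

In this subsection, using Theorem 9, we prove Theorem 3 when the cardinality $|\mathcal{X}|$ is finite. For this purpose, we show the following relations in the stationary discrete memoryless case, i.e., the case in which $W^n_x(y) = W^{\times n}_x(y) \stackrel{\mathrm{def}}{=} \prod_{i=1}^n W_{x_i}(y_i)$ for $x = (x_1, \ldots, x_n)$ and $y = (y_1, \ldots, y_n)$, and $c_n(x) = \sum_{i=1}^n c(x_i)$.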
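In this section, abbreviating $C^{\mathrm{DM}}_W$ as $C$, we will prove that

$$\inf_{\mathbf{P} : \mathrm{supp}(P^n) \subset \mathcal{X}_{n,c,K}} \lim_{\gamma \downarrow 0} I_p(R_2 - \gamma, R_1|\mathbf{P}, \mathbf{W}) \le \begin{cases} G\left( R_2 / \sqrt{V^+_{W,c,K}} \right) & R_2 \ge 0 \\ G\left( R_2 / \sqrt{V^-_{W,c,K}} \right) & R_2 < 0 \end{cases} \tag{69}$$

and

$$\inf_{\mathbf{P} : \mathrm{supp}(P^n) \subset \mathcal{X}_{n,c,K}} \sup_{\mathbf{Q}} \lim_{\gamma \downarrow 0} J_p(R_2 - \gamma, R_1|\mathbf{P}, \mathbf{Q}, \mathbf{W}) \ge \begin{cases} G\left( R_2 / \sqrt{V^+_{W,c,K}} \right) & R_2 \ge 0 \\ G\left( R_2 / \sqrt{V^-_{W,c,K}} \right) & R_2 < 0. \end{cases} \tag{70}$$

Showing both inequalities and using Theorem 9, we obtain

$$C_p(R_2, R_1|\mathbf{W}, \mathbf{c}, K) = \begin{cases} G\left( R_2 / \sqrt{V^+_{W,c,K}} \right) & R_2 \ge 0 \\ G\left( R_2 / \sqrt{V^-_{W,c,K}} \right) & R_2 < 0. \end{cases} \tag{71}$$

Since the r.h.s. of (71) is continuous with respect to $\epsilon$, (71) implies that

$$C(\epsilon, R_1|\mathbf{W}, \mathbf{c}, K) = \begin{cases} \sqrt{V^+_{W,c,K}}\, G^{-1}(\epsilon) & \epsilon \ge 1/2 \\ \sqrt{V^-_{W,c,K}}\, G^{-1}(\epsilon) & \epsilon < 1/2. \end{cases}$$

That is, we can show Theorem 3. The inequality (70) can be proven in the same way as (67) by replacing $T_n$ and $Q_M$ by the set of empirical distributions $T_{n,c,K} \stackrel{\mathrm{def}}{=} \{ P \in T_n \mid \mathrm{E}_P c(x) \le K \}$ and $Q_{M,c,K}$. Therefore, the converse part of Theorem 3 holds, and it is sufficient to prove the direct part (69).

For any distribution $P$ satisfying $\mathrm{E}_P c(x) \le K$, we choose the closest empirical distribution $P_n \in T_{n,c,K}$. Let $\mathbf{P} = \{P^n\}$ be the sequence of uniform distributions on the sets $T_{P_n} \stackrel{\mathrm{def}}{=} \{ x \in \mathcal{X}^n \mid \mathrm{ep}(x) = P_n \}$. It is sufficient to show that

$$I_p(R, C|\mathbf{P}, \mathbf{W}) \le G\left( R / \sqrt{V_{P,W}} \right). \tag{72}$$

Since

$$P^n(x) \le |T_n| (P_n)^{\times n}(x), \tag{73}$$

we have

$$\begin{aligned}
I_p(R, C|\mathbf{P}, \mathbf{W}) &= \limsup_{n\to\infty} \mathrm{P}_{P^n, W^{\times n}}\left\{ \frac{1}{\sqrt{n}} \left( \log\frac{W^{\times n}_x(y)}{W^{\times n}_{P^n}(y)} - nC \right) \le R \right\} \\
&\le \limsup_{n\to\infty} \mathrm{P}_{P^n, W^{\times n}}\left\{ \frac{1}{\sqrt{n}} \left( \log\frac{W^{\times n}_x(y)}{(W_{P_n})^{\times n}(y)} - \log|T_n| - nC \right) \le R \right\} \\
&\le G\left( \frac{R}{\sqrt{V_{P,W}}} \right),
\end{aligned} \tag{74}$$

which implies (72).

In order to prove (72) without the condition $|\mathcal{X}| < \infty$, we choose a sequence of input distributions $\{P(k)_\pm\}_{k=1}^\infty$ with finite supports such that

$$P(k) \in T_{n,c,K}, \qquad I(P(k)_\pm, W) \to \max_{P : \mathrm{E}_P c(x) \le K} I(P, W), \qquad V_{P(k)_\pm, W} \to V^\pm_{W,c,K}.$$

Choose the distribution $P^n$ as the uniform distribution on the set $T_{P(n^{1/4})}$.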
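Then, instead of (73), the relation

$$P^n(x) \le (n+1)^{n^{1/4}} \, (P^{(n^{1/4})})^{\times n}(x)$$

holds. Since $\frac{1}{\sqrt{n}} \log (n+1)^{n^{1/4}}$ goes to zero, the same discussion as (74) yields (72).

C. Proof of Theorem 5

As is shown in Subsection X-B, we obtain the direct part, i.e.,

$$C^G_p(a, C^G_{N,S} | N, S) \le G(a / \sqrt{V_{P_M,W}}).$$

Hence, when $c_n(x) = \sum_{i=1}^n x_i^2$, it is sufficient to prove

$$\inf_{\mathbf{P} : \mathrm{supp}(P^n) \subset \mathcal{X}_{n,c,S}} \sup_{\mathbf{Q}} \lim_{\gamma \downarrow 0} J_p(R_2 - \gamma, R_1 | \mathbf{P}, \mathbf{Q}, \mathbf{W}) \ge G(a / \sqrt{V_{P_M,W}}). \tag{75}$$

In the following discussion, we use the distribution

$$Q^n_U \stackrel{\rm def}{=} \frac{1}{2} (W_{P_M})^{\times n} + \frac{1}{2} (W_{P_{M,\epsilon}})^{\times n}, \qquad P_{M,\epsilon}(x) \stackrel{\rm def}{=} \frac{1}{\sqrt{2\pi(S-\epsilon)}} e^{-\frac{x^2}{2(S-\epsilon)}}$$

and the sets

$$\mathcal{V}_\epsilon \stackrel{\rm def}{=} \{ P \mid \mathrm{E}_P x^2 \ge S - \epsilon \}, \qquad \Omega_n \stackrel{\rm def}{=} \{ x \in \mathcal{X}^n \mid \mathrm{ep}(x) \in \mathcal{V}_\epsilon \}.$$

Since $Q^n_U \ge \frac{1}{2} (W_{P_M})^{\times n}$ and $Q^n_U \ge \frac{1}{2} (W_{P_{M,\epsilon}})^{\times n}$, we obtain

$$\begin{aligned}
&\mathrm{P}_{P^n, W^{\times n}}\left\{ \frac{1}{\sqrt{n}} \left( \log \frac{W^{\times n}_x(y)}{Q^n_U(y)} - nC \right) \le R \right\} \\
&= \sum_{x \in \Omega_n} P^n(x) \, \mathrm{P}_{W^{\times n}_x}\left\{ \frac{1}{\sqrt{n}} \left( \log \frac{W^{\times n}_x(y)}{Q^n_U(y)} - nC \right) \le R \right\} + \sum_{x \in \Omega_n^c} P^n(x) \, \mathrm{P}_{W^{\times n}_x}\left\{ \frac{1}{\sqrt{n}} \left( \log \frac{W^{\times n}_x(y)}{Q^n_U(y)} - nC \right) \le R \right\} \\
&\ge \sum_{x \in \Omega_n} P^n(x) \, \mathrm{P}_{W^{\times n}_x}\left\{ \frac{1}{\sqrt{n}} \left( \log \frac{W^{\times n}_x(y)}{(W_{P_M})^{\times n}(y)} + \log 2 - nC \right) \le R \right\} \\
&\quad + \sum_{x \in \Omega_n^c} P^n(x) \, \mathrm{P}_{W^{\times n}_x}\left\{ \frac{1}{\sqrt{n}} \left( \log \frac{W^{\times n}_x(y)}{(W_{P_{M,\epsilon}})^{\times n}(y)} + \log 2 - nC \right) \le R \right\}.
\end{aligned}$$

When $\mathrm{ep}(x) \in \mathcal{V}_\epsilon^c$, i.e., $\|x\|^2 / n < S - \epsilon$, the random variable $\frac{1}{\sqrt{n}} \left( \log \frac{W^{\times n}_x(y)}{(W_{P_{M,\epsilon}})^{\times n}(y)} + \log 2 - nC \right)$ has the expectation

$$\frac{1}{\sqrt{n}} \left( \frac{n}{2} \log\left(1 + \frac{S-\epsilon}{N}\right) + \frac{\frac{\|x\|^2}{N} - n \frac{S-\epsilon}{N}}{2\left(1 + \frac{S-\epsilon}{N}\right)} - \frac{n}{2} \log\left(1 + \frac{S}{N}\right) + \log 2 \right) \le \frac{\log 2}{\sqrt{n}} - \frac{\sqrt{n}}{2} \log \frac{1 + \frac{S}{N}}{1 + \frac{S-\epsilon}{N}}$$

and the variance

$$\frac{\frac{(S-\epsilon)^2}{N^2} + 2 \frac{\|x\|^2}{nN}}{2\left(1 + \frac{S-\epsilon}{N}\right)^2} \le \frac{\frac{(S-\epsilon)^2}{N^2} + 2 \frac{S-\epsilon}{N}}{2\left(1 + \frac{S-\epsilon}{N}\right)^2}.$$

Thus, Chebyshev's inequality implies

$$\mathrm{P}_{W^{\times n}_x}\left\{ \frac{1}{\sqrt{n}} \left( \log \frac{W^{\times n}_x(y)}{(W_{P_{M,\epsilon}})^{\times n}(y)} + \log 2 - nC \right) \le R \right\} \ge 1 - \frac{\frac{(S-\epsilon)^2}{N^2} + 2 \frac{S-\epsilon}{N}}{2\left(1 + \frac{S-\epsilon}{N}\right)^2 \left( R + \frac{\sqrt{n}}{2} \log \frac{1 + \frac{S}{N}}{1 + \frac{S-\epsilon}{N}} - \frac{\log 2}{\sqrt{n}} \right)^2} \to 1.$$

When $\mathrm{ep}(x) \in \mathcal{V}_\epsilon$, under the $n$-variable Gaussian distribution $W^{\times n}_x$, with $y$ now denoting the noise vector, the random variable $\log \frac{W^{\times n}_x(y+x)}{(W_{P_M})^{\times n}(y+x)}$ is calculated to be

$$\frac{1}{2\left(1 + \frac{S}{N}\right)} \left( - \frac{S \|y\|^2}{N^2} + \frac{2 x \cdot y}{N} + \frac{\|x\|^2}{N} \right) + \frac{n}{2} \log\left(1 + \frac{S}{N}\right).$$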
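The expectation is

$$\frac{\frac{\|x\|^2}{N} - n \frac{S}{N}}{2\left(1 + \frac{S}{N}\right)} + \frac{n}{2} \log\left(1 + \frac{S}{N}\right),$$

and the variance is

$$\frac{2n \frac{S^2}{N^2} + 4 \frac{\|x\|^2}{N}}{4\left(1 + \frac{S}{N}\right)^2}.$$

The random variable

$$\frac{1}{\sqrt{n}} \left( \log \frac{W^{\times n}_x(y+x)}{(W_{P_M})^{\times n}(y+x)} - \frac{\frac{\|x\|^2}{N} - n \frac{S}{N}}{2\left(1 + \frac{S}{N}\right)} - \frac{n}{2} \log\left(1 + \frac{S}{N}\right) \right)$$

converges to the normal distribution when $n$ goes to infinity. Due to the property of the Gaussian distribution, this convergence is uniform when $\|x\|^2 / n$ is bounded. Hence, since $\|x\|^2 \le nS$ and $nC = \frac{n}{2} \log(1 + \frac{S}{N})$,

$$\begin{aligned}
&\mathrm{P}_{W^{\times n}_x}\left\{ \frac{1}{\sqrt{n}} \left( \log \frac{W^{\times n}_x(y)}{(W_{P_M})^{\times n}(y)} + \log 2 - nC \right) \le R \right\} \\
&\ge \mathrm{P}_{W^{\times n}_x}\left\{ \frac{1}{\sqrt{n}} \left( \log \frac{W^{\times n}_x(y)}{(W_{P_M})^{\times n}(y)} + \log 2 - \frac{\frac{\|x\|^2}{N} - n \frac{S}{N}}{2\left(1 + \frac{S}{N}\right)} - \frac{n}{2} \log\left(1 + \frac{S}{N}\right) \right) \le R \right\} \\
&\cong G\left( R \Big/ \sqrt{\frac{2 \frac{S^2}{N^2} + 4 \frac{\|x\|^2}{nN}}{4\left(1 + \frac{S}{N}\right)^2}} \right)
\ge \begin{cases}
G\left( R \Big/ \sqrt{\frac{2 \frac{S^2}{N^2} + 4 \frac{S-\epsilon}{N}}{4\left(1 + \frac{S}{N}\right)^2}} \right) & R \le 0 \\
G\left( R \Big/ \sqrt{\frac{2 \frac{S^2}{N^2} + 4 \frac{S}{N}}{4\left(1 + \frac{S}{N}\right)^2}} \right) & R > 0.
\end{cases}
\end{aligned}$$

Therefore,

$$\limsup_{n \to \infty} \mathrm{P}_{P^n, W^{\times n}}\left\{ \frac{1}{\sqrt{n}} \left( \log \frac{W^{\times n}_x(y)}{Q^n_U(y)} - nC \right) \le R \right\}
\ge \begin{cases}
G\left( R \Big/ \sqrt{\frac{2 \frac{S^2}{N^2} + 4 \frac{S-\epsilon}{N}}{4\left(1 + \frac{S}{N}\right)^2}} \right) & R \le 0 \\
G\left( R \Big/ \sqrt{\frac{2 \frac{S^2}{N^2} + 4 \frac{S}{N}}{4\left(1 + \frac{S}{N}\right)^2}} \right) & R > 0.
\end{cases}$$

Since $\epsilon > 0$ is arbitrary, when $\mathbf{Q} = \{Q^n_U\}$,

$$J_p(R, C | \mathbf{P}, \mathbf{Q}, \mathbf{W}) = \limsup_{n \to \infty} \mathrm{P}_{P^n, W^{\times n}}\left\{ \frac{1}{\sqrt{n}} \left( \log \frac{W^{\times n}_x(y)}{Q^n_U(y)} - nC \right) \le R \right\} \ge G\left( R \Big/ \sqrt{\frac{2 \frac{S^2}{N^2} + 4 \frac{S}{N}}{4\left(1 + \frac{S}{N}\right)^2}} \right),$$

which implies (75).

XI. CONCLUDING REMARKS AND FUTURE STUDY

We have obtained a general asymptotic formula for channel coding in the sense of the second-order coding rate. That is, it has been shown that the optimum second-order transmission rate with the error probability $\epsilon$ is characterized by the second-order asymptotic behavior of the logarithmic likelihood ratio between the conditional output distribution and the non-conditional output distribution. Using this result, we have derived this type of optimal transmission rate for the discrete memoryless case, the discrete memoryless case with a cost constraint, the additive Markovian case, and the Gaussian channel case with an energy constraint.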
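As a numerical companion to the Gaussian case, the following Python sketch evaluates the limiting variance $\frac{2 S^2/N^2 + 4 S/N}{4(1 + S/N)^2}$ from the proof of Theorem 5 and checks the stated expectation and variance of the logarithmic likelihood ratio by simulation. The function names, the use of natural logarithms, and the reading of the Gaussian second-order rate as $\sqrt{V} G^{-1}(\epsilon)$ (by analogy with the discrete memoryless formula) are assumptions made for illustration, not part of the original text.

```python
import math
import random
from statistics import NormalDist, fmean, pvariance


def gaussian_variance(S, N):
    """Limiting variance (2 S^2/N^2 + 4 S/N) / (4 (1 + S/N)^2)."""
    snr = S / N
    return (2 * snr**2 + 4 * snr) / (4 * (1 + snr) ** 2)


def second_order_rate(S, N, eps):
    """Assumed form sqrt(V) * G^{-1}(eps), G being the standard normal CDF."""
    return math.sqrt(gaussian_variance(S, N)) * NormalDist().inv_cdf(eps)


def llr_moments(x, S, N):
    """Closed-form mean and variance of log W_x^n / (W_{P_M})^n for input x."""
    n = len(x)
    snr = S / N
    x_sq = sum(v * v for v in x)
    mean = (x_sq / N - n * snr) / (2 * (1 + snr)) + 0.5 * n * math.log(1 + snr)
    var = (2 * n * snr**2 + 4 * x_sq / N) / (4 * (1 + snr) ** 2)
    return mean, var


def sample_llr(x, S, N, trials, seed=0):
    """Draw the log-likelihood ratio with noise y ~ N(0, N) in each coordinate."""
    rng = random.Random(seed)
    n = len(x)
    snr = S / N
    x_sq = sum(v * v for v in x)
    sigma = math.sqrt(N)
    samples = []
    for _ in range(trials):
        y = [rng.gauss(0.0, sigma) for _ in range(n)]
        y_sq = sum(v * v for v in y)
        xy = sum(a * b for a, b in zip(x, y))
        quad = -S * y_sq / N**2 + 2 * xy / N + x_sq / N
        samples.append(quad / (2 * (1 + snr)) + 0.5 * n * math.log(1 + snr))
    return samples


if __name__ == "__main__":
    S, N, n = 1.0, 1.0, 200
    x = [math.sqrt(S)] * n  # an input that meets the energy constraint with equality
    mean, var = llr_moments(x, S, N)
    samples = sample_llr(x, S, N, trials=2000)
    print("mean: closed form", mean, "simulated", fmean(samples))
    print("variance per symbol: closed form", var / n, "simulated", pvariance(samples) / n)
    print("limiting variance:", gaussian_variance(S, N))
    print("second-order rate at eps = 0.01:", second_order_rate(S, N, 0.01))
```

When $\|x\|^2 = nS$, the per-symbol variance returned by `llr_moments` coincides with `gaussian_variance`, which is the quantity appearing on the right-hand side of (75).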
The performance in the second-order coding rate is characterized by the average of the variance of the logarithmic likelihood ratio with the single-letterized expression. When the input distribution producing the capacity is not unique, it is characterized by its minimum and its maximum. We have given a typical example in which the minimum is different from the maximum. Furthermore, both quantities have been verified to satisfy the additivity.

The main results of the present study are as follows. While the application of the information spectrum method to the second-order coding rate was initiated by Hayashi [6], his research indicated that there is no difficulty in extending general formulas to the second-order coding rate. Therefore, in the i.i.d. case, the second-order coding rates of source coding and intrinsic randomness are solved by the central limit theorem. However, channel coding cannot be treated using the method of Hayashi [6] except for the additive noise case with no cost constraint because the present problem contains the optimization concerning the input distribution in the non-additive noise case. In the converse part, we have to treat the general sequence of input distributions. In order to resolve this difficulty, we have treated the logarithmic likelihood ratio between the conditional output distribution and the distribution $Q^n_U$, which is introduced in Subsection X-A.

Furthermore, we can consider the quantum extension of our results. There is considerable difficulty concerning non-commutativity in this direction. In addition, the third-order coding rate is expected but appears difficult. The second order is the order $\sqrt{n}$, and it is not clear whether the third order is a constant order or the order $\log n$. This is an interesting problem for future study.
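The single-letter characterization summarized in this section can be made concrete with a short Python sketch. It assumes the usual definition $V_{P,W} = \sum_x P(x) \mathrm{Var}_{W_x}[\log \frac{W_x(y)}{W_P(y)}]$ (the definition itself lies outside this part of the text), natural logarithms, and a code size parametrized as $e^{nR_1 + \sqrt{n} R_2}$; the function names and the binary symmetric channel example are hypothetical choices for illustration.

```python
import math
from statistics import NormalDist


def information_and_variance(P, W):
    """Return (I(P, W), V_{P,W}) in nats for input distribution P and channel
    matrix W, where W[x][y] is the probability of output y given input x."""
    n_out = len(W[0])
    Q = [sum(P[x] * W[x][y] for x in range(len(P))) for y in range(n_out)]
    info, var = 0.0, 0.0
    for x, px in enumerate(P):
        if px == 0.0:
            continue
        # Pairs (probability, log-likelihood ratio) over outputs with W_x(y) > 0.
        terms = [(W[x][y], math.log(W[x][y] / Q[y]))
                 for y in range(n_out) if W[x][y] > 0.0]
        d = sum(w * llr for w, llr in terms)  # divergence D(W_x || W_P)
        info += px * d
        var += px * sum(w * (llr - d) ** 2 for w, llr in terms)
    return info, var


def normal_approximation(n, C, V, eps):
    """Approximate log code size n C + sqrt(n V) G^{-1}(eps) for error eps."""
    return n * C + math.sqrt(n * V) * NormalDist().inv_cdf(eps)


if __name__ == "__main__":
    p = 0.11  # crossover probability of a binary symmetric channel
    W = [[1 - p, p], [p, 1 - p]]
    C, V = information_and_variance([0.5, 0.5], W)
    print("capacity (nats):", C)
    print("variance (nats^2):", V)
    for n in (100, 1000, 10000):
        print(n, normal_approximation(n, C, V, eps=0.001) / n)
```

For the binary symmetric channel the capacity-achieving input is unique, so $V^+_W = V^-_W$ and a single variance suffices; a channel with several capacity-achieving inputs would require evaluating `information_and_variance` over all of them and taking the minimum or maximum according to the sign of $G^{-1}(\epsilon)$.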
ACKNOWLEDGMENTS

This study was supported by MEXT through a Grant-in-Aid for Scientific Research on Priority Area "Deepening and Expansion of Statistical Mechanical Informatics (DEX-SMI)", No. 18079014. The author thanks Professor Tomohiko Uyematsu for bringing reference [3] to his attention.

APPENDIX

For a given $R_2 < 0$, we prove (15). Since $\frac{d^2 \psi_P}{ds^2}(s) > 0$, the function $\psi_P$ is convex. Choosing $s_n$ such that

$$C^{DM}_W + \frac{R_2}{\sqrt{n}} = - \frac{d\psi_P}{ds}(s_n) = - \frac{d\psi_P}{ds}(0) - \int_0^{s_n} \frac{d^2 \psi_P}{ds^2}(t) \, dt,$$

we have the relation

$$\frac{R_2}{\sqrt{n}} = - \int_0^{s_n} \frac{d^2 \psi_P}{ds^2}(t) \, dt. \tag{76}$$

Then, the minimum of $C^{DM}_W s + \frac{R_2}{\sqrt{n}} s + \psi_P(s)$ is attained when $s = s_n$. Since $\frac{d^2 \psi_P}{ds^2}(s)$ is continuous and bounded, $s_n$ approaches zero as $n$ goes to infinity. More precisely, (76) implies

$$R_2 = - \lim_{n \to \infty} \sqrt{n} \int_0^{s_n} \frac{d^2 \psi_P}{ds^2}(t) \, dt = - \lim_{n \to \infty} (\sqrt{n} s_n) \frac{d^2 \psi_P}{ds^2}(0).$$

That is, $\lim_{n \to \infty} (\sqrt{n} s_n) = - R_2 \big/ \frac{d^2 \psi_P}{ds^2}(0)$. When the function $\epsilon(u)$ is chosen to be $\frac{d^2 \psi_P}{ds^2}(u) - \frac{d^2 \psi_P}{ds^2}(0)$, $\epsilon(u)$ approaches zero as $u$ goes to zero. Thus, we have

$$\begin{aligned}
n \min_{0 \le s \le 1} \left( C^{DM}_W s + \frac{R_2}{\sqrt{n}} s + \psi_P(s) \right)
&= n \left( C^{DM}_W s_n + \frac{R_2}{\sqrt{n}} s_n + \psi_P(s_n) \right) \\
&= n \left( \frac{R_2}{\sqrt{n}} s_n + \int_0^{s_n} \int_0^t \frac{d^2 \psi_P}{ds^2}(u) \, du \, dt \right) \\
&= \sqrt{n} R_2 s_n + n \frac{s_n^2}{2} \frac{d^2 \psi_P}{ds^2}(0) + n \int_0^{s_n} \int_0^t \epsilon(u) \, du \, dt \\
&\to - \frac{R_2^2}{2 \frac{d^2 \psi_P}{ds^2}(0)},
\end{aligned}$$

which implies (15).

REFERENCES

[1] R. G. Gallager, Information Theory and Reliable Communication, John Wiley & Sons, 1968.
[2] M. Hayashi, "Practical Evaluation of Security for Quantum Key Distribution," Phys. Rev. A, 74, 022307 (2006).
[3] V. Strassen, "Asymptotische Abschätzungen in Shannon's Informationstheorie," in Transactions of the Third Prague Conference on Information Theory etc, 1962. Czechoslovak Academy of Sciences, Prague, pp. 689-723.
[4] T. S. Han and S. Verdú, "Approximation theory of output statistics," IEEE Trans. Inform. Theory, vol. 39, no. 3, 752-772, 1993.
[5] T.-S. Han, Information-Spectrum Methods in Information Theory, (Springer, Berlin, 2003). (Originally published by Baifukan 1998 in Japanese)
[6] M. Hayashi, "Second order asymptotics in fixed-length source coding and intrinsic randomness," to appear in IEEE Trans. Inform. Theory; arXiv:cs/0503089.
[7] S. Watanabe, R. Matsumoto, and T. Uyematsu, "Noise Tolerance of the BB84 Protocol with Random Privacy Amplification," Int. J. Quant. Info., 4, pp. 935-946 (2006).
[8] J. Hasegawa, M. Hayashi, T. Hiroshima, A. Tanaka, and A. Tomita, "Experimental Decoy State Quantum Key Distribution with Unconditional Security Incorporating Finite Statistics," arXiv:0705.3081.
[9] C. E. Shannon, "A mathematical theory of communication," Bell System Technical Journal, 27, 379-423, 623-656 (1948).
[10] I. Csiszár and J. Körner, Information Theory: Coding Theorems for Discrete Memoryless Systems, (Academic Press, New York, 1981).
[11] C. E. Shannon, "Probability of error for optimal codes in a Gaussian channel," Bell System Technical Journal, 38, 611-656 (1959).
[12] A. Dembo and O. Zeitouni, Large Deviation Techniques and Applications, (Springer, Berlin Heidelberg New York, 1997).
[13] Y. Kabashima and D. Saad, "Statistical mechanics of low-density parity-check codes," J. Phys. A: Math. Gen., 37, R1-R43 (2004).
[14] S. Verdú and T. S. Han, "A general formula for channel capacity," IEEE Trans. Inform. Theory, vol. 40, 1147-1157, 1994.
[15] M. Hayashi and H. Nagaoka, "General formulas for capacity of classical-quantum channels," IEEE Trans. Inform. Theory, 49, 1753-1768 (2003).
