Optimal Watermark Embedding and Detection Strategies Under Limited Detection Resources

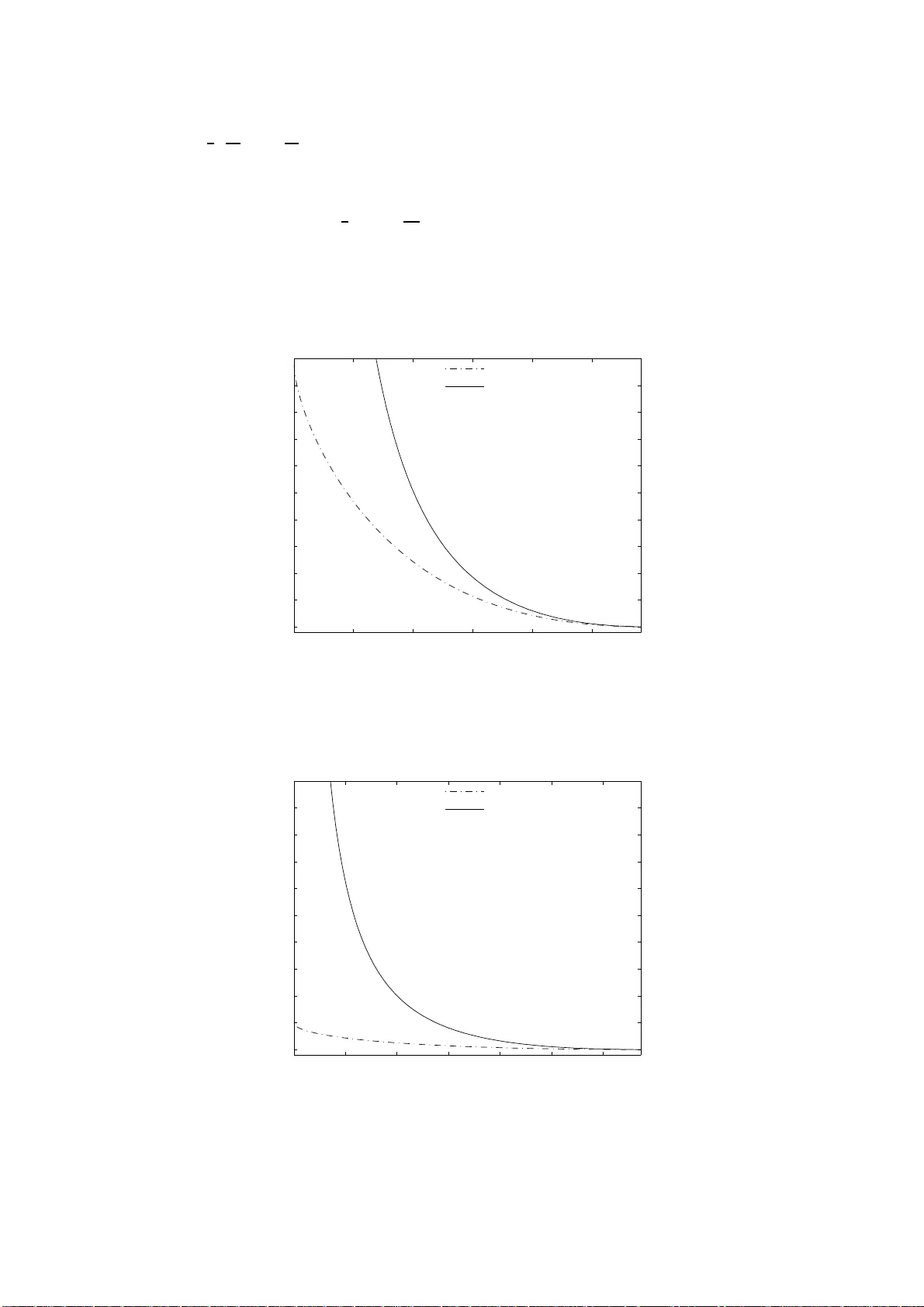

An information-theoretic approach to watermark embedding and detection under limited detector resources is proposed. First, we consider the attack-free scenario, under which asymptotically optimal decision regions in the Neyman-Pearson sense are propo…

Authors: Neri Merhav, Erez Sabbag