Safe and Efficient Off-Policy Reinforcement Learning

In this work, we take a fresh look at some old and new algorithms for off-policy, return-based reinforcement learning. Expressing these in a common form, we derive a novel algorithm, Retrace($\lambda$), with three desired properties: (1) it has low v…

Authors: Remi Munos, Tom Stepleton, Anna Harutyunyan

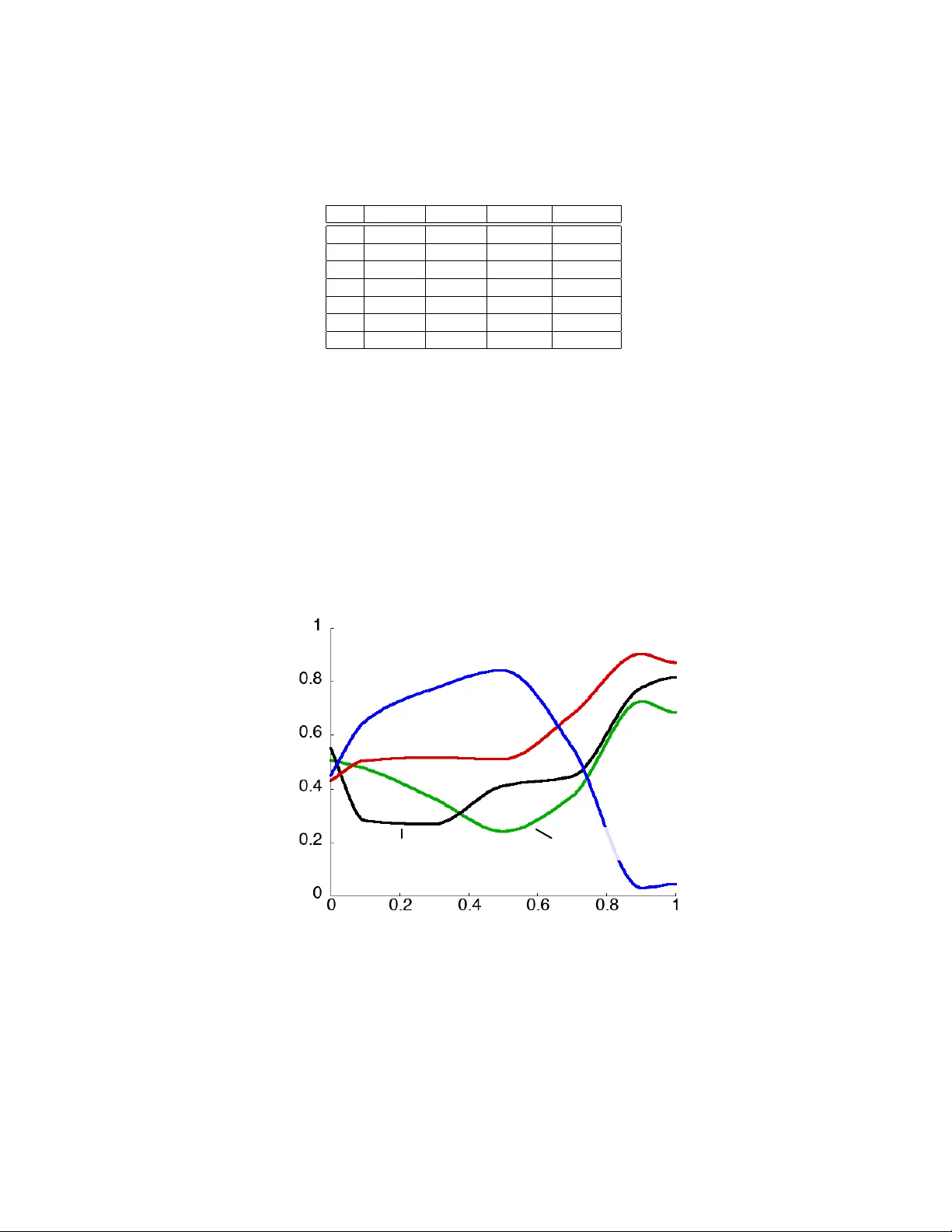

Rémi Munos (munos@google.com), Google DeepMind
Thomas Stepleton (stepleton@google.com), Google DeepMind
Anna Harutyunyan (anna.harutyunyan@vub.ac.be), Vrije Universiteit Brussel
Marc G. Bellemare (bellemare@google.com), Google DeepMind

Abstract

In this work, we take a fresh look at some old and new algorithms for off-policy, return-based reinforcement learning. Expressing these in a common form, we derive a novel algorithm, Retrace($\lambda$), with three desired properties: (1) it has low variance; (2) it safely uses samples collected from any behaviour policy, whatever its degree of “off-policyness”; and (3) it is efficient as it makes the best use of samples collected from near on-policy behaviour policies. We analyze the contractive nature of the related operator under both off-policy policy evaluation and control settings and derive online sample-based algorithms. We believe this is the first return-based off-policy control algorithm converging a.s. to $Q^*$ without the GLIE assumption (Greedy in the Limit with Infinite Exploration). As a corollary, we prove the convergence of Watkins' Q($\lambda$), which was an open problem since 1989. We illustrate the benefits of Retrace($\lambda$) on a standard suite of Atari 2600 games.

One fundamental trade-off in reinforcement learning lies in the definition of the update target: should one estimate Monte Carlo returns or bootstrap from an existing Q-function? Return-based methods (where return refers to the sum of discounted rewards $\sum_t \gamma^t r_t$) offer some advantages over value bootstrap methods: they are better behaved when combined with function approximation, and quickly propagate the fruits of exploration (Sutton, 1996). On the other hand, value bootstrap methods are more readily applied to off-policy data, a common use case. In this paper we show that learning from returns need not be at cross-purposes with off-policy learning.
We start from the recent work of Harutyunyan et al. (2016), who show that naive off-policy policy evaluation, without correcting for the “off-policyness” of a trajectory, still converges to the desired $Q^\pi$ value function provided the behavior $\mu$ and target $\pi$ policies are not too far apart (the maximum allowed distance depends on the $\lambda$ parameter). Their $Q^\pi(\lambda)$ algorithm learns from trajectories generated by $\mu$ simply by summing discounted off-policy corrected rewards at each time step. Unfortunately, the assumption that $\mu$ and $\pi$ are close is restrictive, as well as difficult to uphold in the control case, where the target policy is greedy with respect to the current Q-function. In that sense this algorithm is not safe: it does not handle the case of arbitrary “off-policyness”.

Alternatively, the Tree-backup (TB($\lambda$)) algorithm (Precup et al., 2000) tolerates arbitrary target/behavior discrepancies by scaling information (here called traces) from future temporal differences by the product of target policy probabilities. TB($\lambda$) is not efficient in the “near on-policy” case (similar $\mu$ and $\pi$), though, as traces may be cut prematurely, blocking learning from full returns.

In this work, we express several off-policy, return-based algorithms in a common form. From this we derive an improved algorithm, Retrace($\lambda$), which is both safe and efficient, enjoying convergence guarantees for off-policy policy evaluation and, more importantly, for the control setting.

30th Conference on Neural Information Processing Systems (NIPS 2016), Barcelona, Spain.

Retrace($\lambda$) can learn from full returns retrieved from past policy data, as in the context of experience replay (Lin, 1993), which has returned to favour with advances in deep reinforcement learning (Mnih et al., 2015; Schaul et al., 2016). Off-policy learning is also desirable for exploration, since it allows the agent to deviate from the target policy currently under evaluation.
To the best of our knowledge, this is the first online return-based off-policy control algorithm which does not require the GLIE (Greedy in the Limit with Infinite Exploration) assumption (Singh et al., 2000). In addition, we provide as a corollary the first proof of convergence of Watkins' Q($\lambda$) (see, e.g., Watkins, 1989; Sutton and Barto, 1998). Finally, we illustrate the significance of Retrace($\lambda$) in a deep learning setting by applying it to the suite of Atari 2600 games provided by the Arcade Learning Environment (Bellemare et al., 2013).

1 Notation

We consider an agent interacting with a Markov Decision Process $(\mathcal{X}, \mathcal{A}, \gamma, P, r)$. $\mathcal{X}$ is a finite state space, $\mathcal{A}$ the action space, $\gamma \in [0, 1)$ the discount factor, $P$ the transition function mapping state-action pairs $(x, a) \in \mathcal{X} \times \mathcal{A}$ to distributions over $\mathcal{X}$, and $r : \mathcal{X} \times \mathcal{A} \to [-R_{MAX}, R_{MAX}]$ is the reward function. For notational simplicity we will consider a finite action space, but the case of an infinite (possibly continuous) action space can be handled by the Retrace($\lambda$) algorithm as well. A policy $\pi$ is a mapping from $\mathcal{X}$ to a distribution over $\mathcal{A}$. A Q-function $Q$ maps each state-action pair $(x, a)$ to a value in $\mathbb{R}$; in particular, the reward $r$ is a Q-function. For a policy $\pi$ we define the operator $P^\pi$:

$$(P^\pi Q)(x, a) := \sum_{x' \in \mathcal{X}} \sum_{a' \in \mathcal{A}} P(x' \mid x, a)\, \pi(a' \mid x')\, Q(x', a').$$

The value function for a policy $\pi$, $Q^\pi$, describes the expected discounted sum of rewards associated with following $\pi$ from a given state-action pair. Using operator notation, we write this as

$$Q^\pi := \sum_{t \ge 0} \gamma^t (P^\pi)^t r. \tag{1}$$

The Bellman operator $\mathcal{T}^\pi$ for a policy $\pi$ is defined as $\mathcal{T}^\pi Q := r + \gamma P^\pi Q$ and its fixed point is $Q^\pi$, i.e. $\mathcal{T}^\pi Q^\pi = Q^\pi = (I - \gamma P^\pi)^{-1} r$. The Bellman optimality operator introduces a maximization over the set of policies:

$$\mathcal{T} Q := r + \gamma \max_\pi P^\pi Q. \tag{2}$$

Its fixed point is $Q^*$, the unique optimal value function (Puterman, 1994).
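To make these operators concrete, here is a minimal sketch on a hypothetical two-state, two-action MDP (the tables `P`, `R`, `PI` and the value $\gamma = 0.9$ are illustrative assumptions, not taken from the paper). It computes $Q^\pi$ both by iterating $\mathcal{T}^\pi$ and by truncating the series (1), and computes $Q^*$ by iterating $\mathcal{T}$; since both operators are $\gamma$-contractions in sup norm, plain iteration from $Q = 0$ converges.

```python
# Hypothetical toy MDP (2 states, 2 actions), used only to illustrate the operators.
GAMMA = 0.9
STATES, ACTIONS = range(2), range(2)
P = [[[0.8, 0.2], [0.1, 0.9]],   # P[x][a][x'] = P(x' | x, a)
     [[0.5, 0.5], [0.0, 1.0]]]
R = [[1.0, 0.0], [0.0, 2.0]]     # r(x, a)
PI = [[0.5, 0.5], [0.2, 0.8]]    # PI[x][a] = pi(a | x)


def apply_p_pi(Q, pi):
    """(P^pi Q)(x, a) = sum_{x'} sum_{a'} P(x'|x,a) pi(a'|x') Q(x', a')."""
    return [[sum(P[x][a][y] * sum(pi[y][b] * Q[y][b] for b in ACTIONS)
                 for y in STATES)
             for a in ACTIONS] for x in STATES]


def bellman(Q, pi):
    """T^pi Q = r + gamma * P^pi Q."""
    pq = apply_p_pi(Q, pi)
    return [[R[x][a] + GAMMA * pq[x][a] for a in ACTIONS] for x in STATES]


def bellman_opt(Q):
    """T Q = r + gamma * max_pi P^pi Q; the max decomposes into a max over next actions."""
    return [[R[x][a] + GAMMA * sum(P[x][a][y] * max(Q[y]) for y in STATES)
             for a in ACTIONS] for x in STATES]


def iterate(op, n_iter=500):
    """Repeatedly apply a gamma-contraction, starting from Q = 0."""
    Q = [[0.0 for _ in ACTIONS] for _ in STATES]
    for _ in range(n_iter):
        Q = op(Q)
    return Q


def q_pi_series(pi, n_terms=500):
    """Truncation of eq. (1): Q^pi = sum_t gamma^t (P^pi)^t r."""
    total = [[0.0 for _ in ACTIONS] for _ in STATES]
    term = [row[:] for row in R]  # t = 0 term is r itself
    for _ in range(n_terms):
        total = [[total[x][a] + term[x][a] for a in ACTIONS] for x in STATES]
        term = [[GAMMA * v for v in row] for row in apply_p_pi(term, pi)]
    return total


q_pi = iterate(lambda Q: bellman(Q, PI))
q_star = iterate(bellman_opt)
print("Q^pi:", q_pi)
print("Q*:  ", q_star)
```

With 500 iterations the remaining error is of order $\gamma^{500} R_{MAX}/(1-\gamma)$, negligible here; for a larger finite MDP one would more likely solve $(I - \gamma P^\pi) Q = r$ directly.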
$Q^*$ is the quantity that we will seek to obtain when we talk about the “control setting”.

Return-based Operators: The $\lambda$-return extension (Sutton, 1988) of the Bellman operators considers exponentially weighted sums of $n$-step returns:

$$\mathcal{T}^\pi_\lambda Q := (1 - \lambda) \sum_{n \ge 0} \lambda^n \big[(\mathcal{T}^\pi)^{n+1} Q\big] = Q + (I - \lambda \gamma P^\pi)^{-1} (\mathcal{T}^\pi Q - Q),$$

where $\mathcal{T}^\pi Q - Q$ is the Bellman residual of $Q$ for policy $\pi$. Examination of the above shows that $Q^\pi$ is also the fixed point of $\mathcal{T}^\pi_\lambda$. At one extreme ($\lambda = 0$) we have the Bellman operator $\mathcal{T}^\pi_{\lambda=0} Q = \mathcal{T}^\pi Q$, while at the other ($\lambda = 1$) we have the policy evaluation operator $\mathcal{T}^\pi_{\lambda=1} Q = Q^\pi$, which can be estimated using Monte Carlo methods (Sutton and Barto, 1998). Intermediate values of $\lambda$ trade off estimation bias with sample variance (Kearns and Singh, 2000).

We seek to evaluate a target policy $\pi$ using trajectories drawn from a behaviour policy $\mu$. If $\pi = \mu$, we are on-policy; otherwise, we are off-policy. We will consider trajectories of the form

$$x_0 = x,\ a_0 = a,\ r_0,\ x_1,\ a_1,\ r_1,\ x_2,\ a_2,\ r_2,\ \ldots$$

with $a_t \sim \mu(\cdot \mid x_t)$, $r_t = r(x_t, a_t)$ and $x_{t+1} \sim P(\cdot \mid x_t, a_t)$. We denote by $\mathcal{F}_t$ this sequence up to time $t$, and write $\mathbb{E}_\mu$ for the expectation with respect to both $\mu$ and the MDP transition probabilities. Throughout, we write $\|\cdot\|$ for the supremum norm.

2 Off-Policy Algorithms

We are interested in two related off-policy learning problems. In the policy evaluation setting, we are given a fixed policy $\pi$ whose value $Q^\pi$ we wish to estimate from sample trajectories drawn from a behaviour policy $\mu$. In the control setting, we consider a sequence of policies that depend on our own sequence of Q-functions (such as $\varepsilon$-greedy policies), and seek to approximate $Q^*$.
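The trajectory model of Section 1 can be simulated directly. The sketch below uses a hypothetical toy MDP (`P`, `R`, `PI` and $\gamma$ are illustrative assumptions) to sample $x_0 = x$, $a_0 = a$, $a_t \sim \mu(\cdot \mid x_t)$, $x_{t+1} \sim P(\cdot \mid x_t, a_t)$, and, in the on-policy special case $\mu = \pi$ only, averages truncated discounted returns into a Monte Carlo estimate of $Q^\pi(x, a)$. It performs no off-policy correction; handling $\mu \ne \pi$ is exactly what the operators discussed next are for.

```python
import random

# Hypothetical toy MDP, chosen only for illustration.
GAMMA = 0.9
P = [[[0.8, 0.2], [0.1, 0.9]],   # P[x][a][x'] = P(x' | x, a)
     [[0.5, 0.5], [0.0, 1.0]]]
R = [[1.0, 0.0], [0.0, 2.0]]     # r(x, a)
PI = [[0.5, 0.5], [0.2, 0.8]]    # PI[x][a] = pi(a | x)


def sample_trajectory(x, a, mu, horizon, rng):
    """Sample x_0 = x, a_0 = a, r_0, x_1, a_1, r_1, ... for `horizon` steps, with
    a_t ~ mu(.|x_t), r_t = r(x_t, a_t) and x_{t+1} ~ P(.|x_t, a_t).
    Returns (states, actions, rewards); states has one more entry than the others."""
    states, actions, rewards = [x], [a], []
    for t in range(horizon):
        x_t, a_t = states[-1], actions[-1]
        rewards.append(R[x_t][a_t])
        x_next = rng.choices(range(len(P)), weights=P[x_t][a_t])[0]
        states.append(x_next)
        if t + 1 < horizon:  # no action is drawn in the final state
            actions.append(rng.choices(range(len(mu[x_next])), weights=mu[x_next])[0])
    return states, actions, rewards


def mc_estimate(x, a, pi, n_traj=2000, horizon=60, seed=0):
    """On-policy (mu = pi) Monte Carlo estimate of Q^pi(x, a) from truncated returns."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(n_traj):
        _, _, rewards = sample_trajectory(x, a, pi, horizon, rng)
        total += sum(GAMMA ** t * r for t, r in enumerate(rewards))
    return total / n_traj


print(mc_estimate(0, 0, PI))
```

Truncating at 60 steps biases each return by at most $\gamma^{60} R_{MAX}/(1-\gamma)$, about 0.04 for these numbers; the remaining error is Monte Carlo noise that shrinks with `n_traj`.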
The general operator that we consider for comparing several return-based off-policy algorithms is:

$$\mathcal{R} Q(x, a) := Q(x, a) + \mathbb{E}_\mu\Big[\sum_{t \ge 0} \gamma^t \Big(\prod_{s=1}^{t} c_s\Big) \big(r_t + \gamma\, \mathbb{E}_\pi Q(x_{t+1}, \cdot) - Q(x_t, a_t)\big)\Big], \tag{3}$$

for some non-negative coefficients $(c_s)$, where we write $\mathbb{E}_\pi Q(x, \cdot) := \sum_a \pi(a \mid x) Q(x, a)$ and define $\prod_{s=1}^{t} c_s = 1$ when $t = 0$. By extension of the idea of eligibility traces (Sutton and Barto, 1998), we informally call the coefficients $(c_s)$ the traces of the operator.

Importance sampling (IS): $c_s = \frac{\pi(a_s \mid x_s)}{\mu(a_s \mid x_s)}$. Importance sampling is the simplest way to correct for the discrepancy between $\mu$ and $\pi$ when learning from off-policy returns (Precup et al., 2000, 2001; Geist and Scherrer, 2014). The off-policy correction uses the product of the likelihood ratios between $\pi$ and $\mu$. Notice that $\mathcal{R} Q$ defined in (3) with this choice of $(c_s)$ yields $Q^\pi$ for any $Q$. For $Q = 0$ we recover the basic IS estimate $\sum_{t \ge 0} \gamma^t \big(\prod_{s=1}^{t} c_s\big) r_t$, thus (3) can be seen as a variance reduction technique (with a baseline $Q$). It is well known that IS estimates can suffer from large, even possibly infinite, variance (mainly due to the variance of the product $\frac{\pi(a_1 \mid x_1)}{\mu(a_1 \mid x_1)} \cdots \frac{\pi(a_t \mid x_t)}{\mu(a_t \mid x_t)}$), which has motivated further variance reduction techniques such as in (Mahmood and Sutton, 2015; Mahmood et al., 2015; Hallak et al., 2015).

Off-policy $Q^\pi(\lambda)$ and $Q^*(\lambda)$: $c_s = \lambda$. A recent alternative proposed by Harutyunyan et al. (2016) introduces an off-policy correction based on a $Q$-baseline (instead of correcting the probability of the sample path as in IS). This approach, called $Q^\pi(\lambda)$ and $Q^*(\lambda)$ for policy evaluation and control, respectively, corresponds to the choice $c_s = \lambda$. It offers the advantage of avoiding the blow-up of the variance of the product of ratios encountered with IS.
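As an illustration of (3), the function below computes a truncated, single-trajectory sample of the quantity inside the expectation, for arbitrary traces. The names `operator3_sample`, `trace` and the list-based trajectory layout are our own assumptions. Two sanity checks follow from the text: with $Q = 0$ and IS traces it reduces to the basic IS return $\sum_t \gamma^t \big(\prod_{s \le t} c_s\big) r_t$, and with $c \equiv 0$ it reduces to the one-step target $r_0 + \gamma\, \mathbb{E}_\pi Q(x_1, \cdot)$.

```python
from typing import Callable, List, Sequence

Table = Sequence[Sequence[float]]  # indexed as table[x][a]


def expected_q(Q: Table, pi: Table, x: int) -> float:
    """E_pi Q(x, .) = sum_a pi(a|x) Q(x, a)."""
    return sum(p * q for p, q in zip(pi[x], Q[x]))


def operator3_sample(states: List[int], actions: List[int], rewards: List[float],
                     Q: Table, pi: Table, trace: Callable[[int, int], float],
                     gamma: float) -> float:
    """Truncated single-trajectory sample of R Q(x_0, a_0) in eq. (3).

    `states` holds x_0..x_T; `actions` and `rewards` hold a_0..a_{T-1} and r_0..r_{T-1}.
    `trace(x, a)` returns c_s for (x_s, a_s); it is only called for s >= 1 because
    the empty product at t = 0 equals 1.
    """
    x0, a0 = states[0], actions[0]
    total = Q[x0][a0]
    prod_c = 1.0    # prod_{s=1}^{t} c_s
    discount = 1.0  # gamma^t
    for t, r_t in enumerate(rewards):
        if t >= 1:
            prod_c *= trace(states[t], actions[t])
        td = r_t + gamma * expected_q(Q, pi, states[t + 1]) - Q[states[t]][actions[t]]
        total += discount * prod_c * td
        discount *= gamma
    return total


# Usage on a short hypothetical trajectory with IS traces and Q = 0.
pi_tab = [[0.5, 0.5], [0.2, 0.8]]
mu_tab = [[0.9, 0.1], [0.5, 0.5]]
is_trace = lambda x, a: pi_tab[x][a] / mu_tab[x][a]
print(operator3_sample([0, 1, 0, 1], [0, 1, 1], [1.0, 0.0, 2.0],
                       [[0.0, 0.0], [0.0, 0.0]], pi_tab, is_trace, 0.9))
```

Swapping `trace` for any of the coefficient choices discussed in this section changes only how quickly the product of traces shrinks along the trajectory.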
Interestingly, the $Q^\pi(\lambda)$ operator (that is, (3) with $c_s = \lambda$) contracts around $Q^\pi$ provided that $\mu$ and $\pi$ are sufficiently close to each other. Defining $\varepsilon := \max_x \|\pi(\cdot \mid x) - \mu(\cdot \mid x)\|_1$ as the level of “off-policyness”, the authors prove that this operator is a contraction mapping around $Q^\pi$ for $\lambda < \frac{1 - \gamma}{\gamma \varepsilon}$, and around $Q^*$ for the worst case of $\lambda < \frac{1 - \gamma}{2\gamma}$. Unfortunately, $Q^\pi(\lambda)$ requires knowledge of $\varepsilon$, and the condition for $Q^*(\lambda)$ is very conservative. Neither $Q^\pi(\lambda)$ nor $Q^*(\lambda)$ is safe, as neither guarantees convergence for arbitrary $\pi$ and $\mu$.

Tree-backup, TB($\lambda$): $c_s = \lambda \pi(a_s \mid x_s)$. The TB($\lambda$) algorithm of Precup et al. (2000) corrects for the target/behaviour discrepancy by multiplying each term of the sum by the product of target policy probabilities. The corresponding operator defines a contraction mapping for any policies $\pi$ and $\mu$, which makes it a safe algorithm. However, this algorithm is not efficient in the near on-policy case (where $\mu$ and $\pi$ are similar) as it unnecessarily cuts the traces, preventing it from making use of full returns: indeed, we need not discount stochastic on-policy transitions (as shown by Harutyunyan et al.'s results about $Q^\pi$).

Retrace($\lambda$): $c_s = \lambda \min\big(1, \frac{\pi(a_s \mid x_s)}{\mu(a_s \mid x_s)}\big)$. Our contribution is an algorithm, Retrace($\lambda$), that takes the best of the three previous algorithms. Retrace($\lambda$) uses an importance sampling ratio truncated at 1. Compared to IS, it does not suffer from the variance explosion of the product of IS ratios. Now, similarly to $Q^\pi(\lambda)$ and unlike TB($\lambda$), it does not cut the traces in the on-policy case, making it possible to benefit from the full returns. In the off-policy case, the traces are safely cut, similarly to TB($\lambda$). In particular, $\min\big(1, \frac{\pi(a_s \mid x_s)}{\mu(a_s \mid x_s)}\big) \ge \pi(a_s \mid x_s)$ (since $\mu(a_s \mid x_s) \le 1$): Retrace($\lambda$) does not cut the traces as much as TB($\lambda$).
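The four trace choices differ only in how $c_s$ depends on $\pi(a_s \mid x_s)$, $\mu(a_s \mid x_s)$ and $\lambda$. A minimal sketch (the function names are hypothetical) makes the comparison explicit. It also shows a consequence of the definitions: when the target policy is greedy, so that $\pi(a \mid x) \in \{0, 1\}$, the Retrace trace becomes $\lambda\, \mathbb{I}\{a \text{ is greedy}\}$ because $\min(1, 1/\mu) = 1$, which is the trace cutting used by Watkins' Q($\lambda$).

```python
def trace_is(pi_a: float, mu_a: float, lam: float) -> float:
    """Importance sampling: c = pi(a|x) / mu(a|x); lam is not used."""
    return pi_a / mu_a


def trace_q_lambda(pi_a: float, mu_a: float, lam: float) -> float:
    """Q^pi(lambda) / Q*(lambda): c = lam, regardless of the policies."""
    return lam


def trace_tree_backup(pi_a: float, mu_a: float, lam: float) -> float:
    """TB(lambda): c = lam * pi(a|x)."""
    return lam * pi_a


def trace_retrace(pi_a: float, mu_a: float, lam: float) -> float:
    """Retrace(lambda): c = lam * min(1, pi(a|x) / mu(a|x)), a truncated IS ratio."""
    return lam * min(1.0, pi_a / mu_a)


TRACES = {
    "IS": trace_is,
    "Q(lambda)": trace_q_lambda,
    "TB(lambda)": trace_tree_backup,
    "Retrace(lambda)": trace_retrace,
}

# Near on-policy example: the sampled action has pi(a|x) = mu(a|x) = 0.3, lam = 1.
for name, trace in TRACES.items():
    print(f"{name:16s} c = {trace(0.3, 0.3, 1.0):.2f}")

# Greedy target policy: pi(a|x) is 1 for the greedy action and 0 otherwise, so the
# Retrace trace is lam after a greedy action and 0 after a non-greedy one.
print(trace_retrace(1.0, 0.4, 0.7), trace_retrace(0.0, 0.6, 0.7))
```

In the on-policy example IS, $Q^\pi(\lambda)$ and Retrace($\lambda$) all keep a trace of 1, while TB($\lambda$) cuts it to 0.3 even though no correction is needed.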
In the subsequent sections, we will show the following:

• For any traces $0 \le c_s \le \pi(a_s \mid x_s)/\mu(a_s \mid x_s)$ (thus including the Retrace($\lambda$) operator), the return-based operator (3) is a $\gamma$-contraction around $Q^\pi$, for arbitrary policies $\mu$ and $\pi$.
• In the control case (where $\pi$ is replaced by a sequence of increasingly greedy policies), the online Retrace($\lambda$) algorithm converges a.s. to $Q^*$, without requiring the GLIE assumption.
• As a corollary, Watkins's Q($\lambda$) converges a.s. to $Q^*$.

| | Definition of $c_s$ | Estimation variance | Guaranteed convergence† | Use full returns (near on-policy) |
|---|---|---|---|---|
| Importance sampling | $\frac{\pi(a_s \mid x_s)}{\mu(a_s \mid x_s)}$ | High | for any $\pi$, $\mu$ | yes |
| $Q^\pi(\lambda)$ | $\lambda$ | Low | for $\pi$ close to $\mu$ | yes |
| TB($\lambda$) | $\lambda \pi(a_s \mid x_s)$ | Low | for any $\pi$, $\mu$ | no |
| Retrace($\lambda$) | $\lambda \min\big(1, \frac{\pi(a_s \mid x_s)}{\mu(a_s \mid x_s)}\big)$ | Low | for any $\pi$, $\mu$ | yes |

Table 1: Properties of several algorithms defined in terms of the general operator given in (3). †Guaranteed convergence of the expected operator $\mathcal{R}$.

3 Analysis of Retrace($\lambda$)

We will in turn analyze both off-policy policy evaluation and control settings. We will show that $\mathcal{R}$ is a contraction mapping in both settings (under a mild additional assumption for the control case).

3.1 Policy Evaluation

Consider a fixed target policy $\pi$. For ease of exposition we consider a fixed behaviour policy $\mu$, noting that our result extends to the setting of sequences of behaviour policies $(\mu_k : k \in \mathbb{N})$.

Our first result states the $\gamma$-contraction of the operator (3) defined by any set of non-negative coefficients $c_s = c_s(a_s, \mathcal{F}_s)$ (in order to emphasize that $c_s$ can be a function of the whole history $\mathcal{F}_s$) under the assumption that $0 \le c_s \le \frac{\pi(a_s \mid x_s)}{\mu(a_s \mid x_s)}$.

Theorem 1. The operator $\mathcal{R}$ defined by (3) has a unique fixed point $Q^\pi$. Furthermore, if for each $a_s \in \mathcal{A}$ and each history $\mathcal{F}_s$ we have $c_s = c_s(a_s, \mathcal{F}_s) \in \big[0, \frac{\pi(a_s \mid x_s)}{\mu(a_s \mid x_s)}\big]$, then for any Q-function $Q$

$$\|\mathcal{R} Q - Q^\pi\| \le \gamma \|Q - Q^\pi\|.$$
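Theorem 1 can be sanity-checked numerically. For a Markovian trace $c(a, x)$, the expectation in (3) equals $Q + \sum_{t \ge 0} \gamma^t (P^{c\mu})^t (\mathcal{T}^\pi Q - Q)$, where $P^{c\mu}$ weights each next pair $(x', a')$ by $P(x' \mid x, a)\, \mu(a' \mid x')\, c(a', x')$ (this operator is introduced formally in Section 3.2). The sketch below assumes a hypothetical toy MDP with deliberately mismatched $\pi$ and $\mu$, the Retrace trace with $\lambda = 1$, and random Q-functions, and reports the largest observed ratio $\|\mathcal{R} Q - Q^\pi\| / \|Q - Q^\pi\|$. A passing check on one example is evidence, not a proof.

```python
import random

# Hypothetical toy MDP and policies, chosen only to illustrate Theorem 1.
GAMMA, LAM = 0.9, 1.0
S, A = range(2), range(2)
P = [[[0.8, 0.2], [0.1, 0.9]], [[0.5, 0.5], [0.0, 1.0]]]  # P[x][a][x']
R = [[1.0, 0.0], [0.0, 2.0]]                              # r(x, a)
PI = [[0.9, 0.1], [0.1, 0.9]]  # target policy, PI[x][a]
MU = [[0.3, 0.7], [0.6, 0.4]]  # behaviour policy, far from PI
# Markovian Retrace trace c(a, x) = lam * min(1, pi/mu), which satisfies c <= pi/mu.
C = [[LAM * min(1.0, PI[x][a] / MU[x][a]) for a in A] for x in S]


def next_avg(Q, weight):
    """For each (x, a): sum_{x'} sum_{a'} P(x'|x,a) * weight(x', a') * Q(x', a')."""
    return [[sum(P[x][a][y] * sum(weight(y, b) * Q[y][b] for b in A) for y in S)
             for a in A] for x in S]


def t_pi(Q):
    """T^pi Q = r + gamma * P^pi Q."""
    pq = next_avg(Q, lambda y, b: PI[y][b])
    return [[R[x][a] + GAMMA * pq[x][a] for a in A] for x in S]


def retrace_op(Q, n_terms=300):
    """Expected operator (3) for Markovian traces, via the truncated series
    Q + sum_t gamma^t (P^{c mu})^t (T^pi Q - Q)."""
    tq = t_pi(Q)
    term = [[tq[x][a] - Q[x][a] for a in A] for x in S]  # Bellman residual
    out = [row[:] for row in Q]
    for _ in range(n_terms):
        out = [[out[x][a] + term[x][a] for a in A] for x in S]
        nxt = next_avg(term, lambda y, b: MU[y][b] * C[y][b])
        term = [[GAMMA * v for v in row] for row in nxt]
    return out


def sup_dist(Q1, Q2):
    return max(abs(Q1[x][a] - Q2[x][a]) for x in S for a in A)


# Q^pi by iterating T^pi (a gamma-contraction).
q_pi = [[0.0, 0.0], [0.0, 0.0]]
for _ in range(600):
    q_pi = t_pi(q_pi)

rng = random.Random(0)
worst_ratio = 0.0
for _ in range(50):
    Q = [[rng.uniform(-20.0, 20.0) for _ in A] for _ in S]
    worst_ratio = max(worst_ratio, sup_dist(retrace_op(Q), q_pi) / sup_dist(Q, q_pi))
print(f"largest ||RQ - Q^pi|| / ||Q - Q^pi|| observed: {worst_ratio:.3f} (bound: {GAMMA})")
```

Because $\mu c \le \pi$, each row of $P^{c\mu}$ sums to at most 1, so the truncated series converges at least geometrically with rate $\gamma$.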
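The following lemma will be useful in proving Theorem 1 (proof in the appendix).

Lemma 1. The difference between $\mathcal{R} Q$ and its fixed point $Q^\pi$ is

$$\mathcal{R} Q(x, a) - Q^\pi(x, a) = \mathbb{E}_\mu\Big[\sum_{t \ge 1} \gamma^t \Big(\prod_{i=1}^{t-1} c_i\Big) \Big(\mathbb{E}_\pi\big[(Q - Q^\pi)(x_t, \cdot)\big] - c_t (Q - Q^\pi)(x_t, a_t)\Big)\Big].$$

Proof (Theorem 1). The fact that $Q^\pi$ is the fixed point of the operator $\mathcal{R}$ is obvious from (3) since

$$\mathbb{E}_{x_{t+1} \sim P(\cdot \mid x_t, a_t)}\big[r_t + \gamma\, \mathbb{E}_\pi Q^\pi(x_{t+1}, \cdot) - Q^\pi(x_t, a_t)\big] = (\mathcal{T}^\pi Q^\pi - Q^\pi)(x_t, a_t) = 0,$$

since $Q^\pi$ is the fixed point of $\mathcal{T}^\pi$. Now, from Lemma 1, and defining $\Delta Q := Q - Q^\pi$, we have

$$\begin{aligned}
\mathcal{R} Q(x, a) - Q^\pi(x, a)
&= \sum_{t \ge 1} \gamma^t\, \mathbb{E}_{x_{1:t},\, a_{1:t}}\Big[\Big(\prod_{i=1}^{t-1} c_i\Big)\big(\mathbb{E}_\pi \Delta Q(x_t, \cdot) - c_t \Delta Q(x_t, a_t)\big)\Big] \\
&= \sum_{t \ge 1} \gamma^t\, \mathbb{E}_{x_{1:t},\, a_{1:t-1}}\Big[\Big(\prod_{i=1}^{t-1} c_i\Big)\big(\mathbb{E}_\pi \Delta Q(x_t, \cdot) - \mathbb{E}_{a_t}[c_t(a_t, \mathcal{F}_t)\, \Delta Q(x_t, a_t) \mid \mathcal{F}_t]\big)\Big] \\
&= \sum_{t \ge 1} \gamma^t\, \mathbb{E}_{x_{1:t},\, a_{1:t-1}}\Big[\Big(\prod_{i=1}^{t-1} c_i\Big) \sum_b \big(\pi(b \mid x_t) - \mu(b \mid x_t)\, c_t(b, \mathcal{F}_t)\big) \Delta Q(x_t, b)\Big].
\end{aligned}$$

Now since $\pi(b \mid x_t) - \mu(b \mid x_t)\, c_t(b, \mathcal{F}_t) \ge 0$, we have that $\mathcal{R} Q(x, a) - Q^\pi(x, a) = \sum_{y, b} w_{y, b}\, \Delta Q(y, b)$, i.e. a linear combination of $\Delta Q(y, b)$ weighted by non-negative coefficients:

$$w_{y, b} := \sum_{t \ge 1} \gamma^t\, \mathbb{E}_{x_{1:t},\, a_{1:t-1}}\Big[\Big(\prod_{i=1}^{t-1} c_i\Big)\big(\pi(b \mid x_t) - \mu(b \mid x_t)\, c_t(b, \mathcal{F}_t)\big)\, \mathbb{I}\{x_t = y\}\Big].$$

The sum of those coefficients is:

$$\begin{aligned}
\sum_{y, b} w_{y, b}
&= \sum_{t \ge 1} \gamma^t\, \mathbb{E}_{x_{1:t},\, a_{1:t-1}}\Big[\Big(\prod_{i=1}^{t-1} c_i\Big) \sum_b \big(\pi(b \mid x_t) - \mu(b \mid x_t)\, c_t(b, \mathcal{F}_t)\big)\Big] \\
&= \sum_{t \ge 1} \gamma^t\, \mathbb{E}_{x_{1:t},\, a_{1:t-1}}\Big[\Big(\prod_{i=1}^{t-1} c_i\Big)\, \mathbb{E}_{a_t}[1 - c_t(a_t, \mathcal{F}_t) \mid \mathcal{F}_t]\Big] \\
&= \sum_{t \ge 1} \gamma^t\, \mathbb{E}_{x_{1:t},\, a_{1:t}}\Big[\Big(\prod_{i=1}^{t-1} c_i\Big)(1 - c_t)\Big] \\
&= \mathbb{E}_\mu\Big[\sum_{t \ge 1} \gamma^t \prod_{i=1}^{t-1} c_i - \sum_{t \ge 1} \gamma^t \prod_{i=1}^{t} c_i\Big] \\
&= \gamma C - (C - 1),
\end{aligned}$$

where $C := \mathbb{E}_\mu\big[\sum_{t \ge 0} \gamma^t \prod_{i=1}^{t} c_i\big]$. Since $C \ge 1$, we have that $\sum_{y, b} w_{y, b} = 1 - (1 - \gamma) C \le \gamma$. Thus $\mathcal{R} Q(x, a) - Q^\pi(x, a)$ is a sub-convex combination of $\Delta Q(y, b)$ weighted by non-negative coefficients $w_{y, b}$ which sum to (at most) $\gamma$, thus $\mathcal{R}$ is a $\gamma$-contraction mapping around $Q^\pi$.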
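The weight sum at the end of the proof has a simple closed form when the trace is a constant $c \in [0, 1]$ (an illustrative assumption; real traces vary with the sampled actions and histories). Then $C = \sum_t (\gamma c)^t = 1/(1 - \gamma c)$ and $\gamma C - (C - 1) = \gamma(1 - c)/(1 - \gamma c)$. The snippet compares a truncated series with this closed form; for $\gamma = 0.9$ the sum drops from $0.9$ at $c = 0$ (a pure one-step backup) to about $0.82$ at $c = 0.5$ and to $0$ at $c = 1$.

```python
def weight_sum(gamma: float, c: float, n_terms: int = 10_000) -> float:
    """gamma*C - (C - 1) with C = sum_t gamma^t c^t for a constant trace c (series truncated)."""
    big_c = sum((gamma * c) ** t for t in range(n_terms))
    return gamma * big_c - (big_c - 1.0)


def weight_sum_closed(gamma: float, c: float) -> float:
    """Closed form, valid for gamma < 1 and 0 <= c <= 1:
    C = 1 / (1 - gamma c), so gamma*C - (C - 1) = gamma (1 - c) / (1 - gamma c)."""
    return gamma * (1.0 - c) / (1.0 - gamma * c)


for c in (0.0, 0.5, 0.9, 1.0):
    print(f"c = {c:.1f}: series {weight_sum(0.9, c):.4f}, "
          f"closed form {weight_sum_closed(0.9, c):.4f}")
```

Larger traces shrink this weight sum, which is the per-pair contraction coefficient discussed in Remark 1.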
Remark 1. Notice that the coefficient $C$ in the proof of Theorem 1 depends on $(x, a)$. If we write $\eta(x, a) := 1 - (1 - \gamma)\, \mathbb{E}_\mu\big[\sum_{t \ge 0} \gamma^t \prod_{s=1}^{t} c_s\big]$, then we have shown that $|\mathcal{R} Q(x, a) - Q^\pi(x, a)| \le \eta(x, a) \|Q - Q^\pi\|$. Thus $\eta(x, a) \in [0, \gamma]$ is an $(x, a)$-specific contraction coefficient, which is $\gamma$ when $c_1 = 0$ (the trace is cut immediately) and can be close to zero when learning from full returns ($c_t \approx 1$ for all $t$).

3.2 Control

In the control setting, the single target policy $\pi$ is replaced by a sequence of policies $(\pi_k)$ which depend on $(Q_k)$. While most prior work has focused on strictly greedy policies, here we consider the larger class of increasingly greedy sequences. We now make this notion precise.

Definition 1. We say that a sequence of policies $(\pi_k : k \in \mathbb{N})$ is increasingly greedy w.r.t. a sequence $(Q_k : k \in \mathbb{N})$ of Q-functions if the following property holds for all $k$:

$$P^{\pi_{k+1}} Q_{k+1} \ge P^{\pi_k} Q_{k+1}.$$

Intuitively, this means that each $\pi_{k+1}$ is at least as greedy as the previous policy $\pi_k$ for $Q_{k+1}$. Many natural sequences of policies are increasingly greedy, including $\varepsilon_k$-greedy policies (with non-increasing $\varepsilon_k$) and softmax policies (with non-increasing temperature). See proofs in the appendix.

We will assume that $c_s = c_s(a_s, \mathcal{F}_s) = c(a_s, x_s)$ is Markovian, in the sense that it depends only on $x_s, a_s$ (as well as the policies $\pi$ and $\mu$) but not on the full past history. This allows us to define the (sub)-probability transition operator

$$(P^{c\mu} Q)(x, a) := \sum_{x'} \sum_{a'} P(x' \mid x, a)\, \mu(a' \mid x')\, c(a', x')\, Q(x', a').$$

Finally, as an additional requirement for convergence in the control case, we assume that $Q_0$ satisfies $\mathcal{T}^{\pi_0} Q_0 \ge Q_0$ (this can be achieved by a pessimistic initialization $Q_0 = -R_{MAX}/(1 - \gamma)$, since then $\mathcal{T}^{\pi_0} Q_0 = r + \gamma Q_0 \ge -R_{MAX} + \gamma Q_0 = Q_0$).

Theorem 2.
Consider an arbitrary sequence of behaviour policies $(\mu_k)$ (which may depend on $(Q_k)$) and a sequence of target policies $(\pi_k)$ that are increasingly greedy w.r.t. the sequence $(Q_k)$:

$$Q_{k+1} = \mathcal{R}_k Q_k,$$

where the return operator $\mathcal{R}_k$ is defined by (3) for $\pi_k$ and $\mu_k$ and a Markovian $c_s = c(a_s, x_s) \in \big[0, \frac{\pi_k(a_s \mid x_s)}{\mu_k(a_s \mid x_s)}\big]$. Assume the target policies $\pi_k$ are $\varepsilon_k$-away from the greedy policies w.r.t. $Q_k$, in the sense that $\mathcal{T}^{\pi_k} Q_k \ge \mathcal{T} Q_k - \varepsilon_k \|Q_k\| e$, where $e$ is the vector with 1-components. Further suppose that $\mathcal{T}^{\pi_0} Q_0 \ge Q_0$. Then for any $k \ge 0$,

$$\|Q_{k+1} - Q^*\| \le \gamma \|Q_k - Q^*\| + \varepsilon_k \|Q_k\|.$$

In consequence, if $\varepsilon_k \to 0$ then $Q_k \to Q^*$.

Sketch of Proof (the full proof is in the appendix). Using $P^{c\mu_k}$, the Retrace($\lambda$) operator rewrites

$$\mathcal{R}_k Q = Q + \sum_{t \ge 0} \gamma^t (P^{c\mu_k})^t (\mathcal{T}^{\pi_k} Q - Q) = Q + (I - \gamma P^{c\mu_k})^{-1} (\mathcal{T}^{\pi_k} Q - Q).$$

We now lower- and upper-bound the term $Q_{k+1} - Q^*$.

Upper bound on $Q_{k+1} - Q^*$. We prove that $Q_{k+1} - Q^* \le A_k (Q_k - Q^*)$ with $A_k := \gamma (I - \gamma P^{c\mu_k})^{-1} \big[P^{\pi_k} - P^{c\mu_k}\big]$. Since $c_t \in \big[0, \frac{\pi(a_t \mid x_t)}{\mu(a_t \mid x_t)}\big]$ we deduce that $A_k$ has non-negative elements, whose sum over each row is at most $\gamma$. Thus

$$Q_{k+1} - Q^* \le \gamma \|Q_k - Q^*\| e. \tag{4}$$

Lower bound on $Q_{k+1} - Q^*$. Using the fact that $\mathcal{T}^{\pi_k} Q_k \ge \mathcal{T}^{\pi^*} Q_k - \varepsilon_k \|Q_k\| e$ we have

$$\begin{aligned}
Q_{k+1} - Q^* &\ge Q_{k+1} - \mathcal{T}^{\pi_k} Q_k + \gamma P^{\pi^*} (Q_k - Q^*) - \varepsilon_k \|Q_k\| e \\
&= \gamma P^{c\mu_k} (I - \gamma P^{c\mu_k})^{-1} (\mathcal{T}^{\pi_k} Q_k - Q_k) + \gamma P^{\pi^*} (Q_k - Q^*) - \varepsilon_k \|Q_k\| e.
\end{aligned} \tag{5}$$

Lower bound on $\mathcal{T}^{\pi_k} Q_k - Q_k$. Since the sequence $(\pi_k)$ is increasingly greedy w.r.t. $(Q_k)$, we have

$$\mathcal{T}^{\pi_{k+1}} Q_{k+1} - Q_{k+1} \ge \mathcal{T}^{\pi_k} Q_{k+1} - Q_{k+1} = r + (\gamma P^{\pi_k} - I)\, \mathcal{R}_k Q_k = B_k (\mathcal{T}^{\pi_k} Q_k - Q_k), \tag{6}$$

where $B_k := \gamma \big[P^{\pi_k} - P^{c\mu_k}\big] (I - \gamma P^{c\mu_k})^{-1}$. Since $P^{\pi_k} - P^{c\mu_k}$ and $(I - \gamma P^{c\mu_k})^{-1}$ are non-negative matrices, so is $B_k$. Thus

$$\mathcal{T}^{\pi_k} Q_k - Q_k \ge B_{k-1} B_{k-2} \cdots B_0 (\mathcal{T}^{\pi_0} Q_0 - Q_0) \ge 0,$$

since we assumed $\mathcal{T}^{\pi_0} Q_0 - Q_0 \ge 0$. Thus, (5) implies that

$$Q_{k+1} - Q^* \ge \gamma P^{\pi^*} (Q_k - Q^*) - \varepsilon_k \|Q_k\| e.$$

Combining the above with (4) we deduce $\|Q_{k+1} - Q^*\| \le \gamma \|Q_k - Q^*\| + \varepsilon_k \|Q_k\|$. When $\varepsilon_k \to 0$, we further deduce that the $Q_k$ are bounded, thus $Q_k \to Q^*$.

3.3 Online algorithms

So far we have analyzed the contraction properties of the expected $\mathcal{R}$ operators. We now describe online algorithms which can learn from sample trajectories. We analyze the algorithms in the every-visit form (Sutton and Barto, 1998), which is the more practical generalization of the first-visit form. In this section, we will only consider the Retrace($\lambda$) algorithm defined with the coefficient $c = \lambda \min(1, \pi/\mu)$. For that $c$, let us rewrite the operator $P^{c\mu}$ as $\lambda P^{\pi \wedge \mu}$, where $P^{\pi \wedge \mu} Q(x, a) := \sum_y \sum_b P(y \mid x, a) \min\big(\pi(b \mid y), \mu(b \mid y)\big) Q(y, b)$, and write the Retrace operator $\mathcal{R} Q = Q + (I - \lambda \gamma P^{\pi \wedge \mu})^{-1} (\mathcal{T}^\pi Q - Q)$. We focus on the control case, noting that a similar (and simpler) result can be derived for policy evaluation.

Theorem 3. Consider a sequence of sample trajectories, with the $k$th trajectory $x_0, a_0, r_0, x_1, a_1, r_1, \ldots$ generated by following $\mu_k$: $a_t \sim \mu_k(\cdot \mid x_t)$. For each $(x, a)$ along this trajectory, with $s$ being the time of first occurrence of $(x, a)$, update

$$Q_{k+1}(x, a) \leftarrow Q_k(x, a) + \alpha_k \sum_{t \ge s} \delta^{\pi_k}_t \sum_{j=s}^{t} \gamma^{t-j} \Big(\prod_{i=j+1}^{t} c_i\Big)\, \mathbb{I}\{(x_j, a_j) = (x, a)\}, \tag{7}$$

where $\delta^{\pi_k}_t := r_t + \gamma\, \mathbb{E}_{\pi_k} Q_k(x_{t+1}, \cdot) - Q_k(x_t, a_t)$ and $\alpha_k = \alpha_k(x_s, a_s)$. We consider the Retrace($\lambda$) algorithm where $c_i = \lambda \min\big(1, \frac{\pi(a_i \mid x_i)}{\mu(a_i \mid x_i)}\big)$. Assume that $(\pi_k)$ are increasingly greedy w.r.t. $(Q_k)$ and are each $\varepsilon_k$-away from the greedy policies $(\pi_{Q_k})$, i.e. $\max_x \|\pi_k(\cdot \mid x) - \pi_{Q_k}(\cdot \mid x)\|_1 \le \varepsilon_k$, with $\varepsilon_k \to 0$. Assume that $P^{\pi_k}$ and $P^{\pi_k \wedge \mu_k}$ asymptotically commute: $\lim_k \|P^{\pi_k} P^{\pi_k \wedge \mu_k} - P^{\pi_k \wedge \mu_k} P^{\pi_k}\| = 0$.
Assume further that (1) all states and actions are visited infinitely often: $\sum_{t \ge 0} \mathbb{P}\{(x_t, a_t) = (x, a)\} \ge D > 0$, (2) the sample trajectories are finite in terms of the second moment of their lengths $T_k$: $\mathbb{E}_{\mu_k} T_k^2 < \infty$, (3) the stepsizes obey the usual Robbins-Monro conditions. Then $Q_k \to Q^*$ a.s.

The proof extends similar convergence proofs of TD($\lambda$) by Bertsekas and Tsitsiklis (1996) and of optimistic policy iteration by Tsitsiklis (2003), and is provided in the appendix. Notice that, compared to Theorem 2, we do not assume that $\mathcal{T}^{\pi_0} Q_0 - Q_0 \ge 0$ here. However, we make the additional (rather technical) assumption that $P^{\pi_k}$ and $P^{\pi_k \wedge \mu_k}$ commute at the limit. This is satisfied for example when the probability assigned by the behavior policy $\mu_k(\cdot \mid x)$ to the greedy action $\pi_{Q_k}(x)$ is independent of $x$. Examples include $\varepsilon$-greedy policies, or more generally mixtures between the greedy policy $\pi_{Q_k}$ and an arbitrary distribution $\mu$ (see Lemma 5 in the appendix for the proof):

$$\mu_k(a \mid x) = \varepsilon\, \frac{\mu(a \mid x)}{1 - \mu(\pi_{Q_k}(x) \mid x)}\, \mathbb{I}\{a \ne \pi_{Q_k}(x)\} + (1 - \varepsilon)\, \mathbb{I}\{a = \pi_{Q_k}(x)\}. \tag{8}$$

Notice that the mixture coefficient $\varepsilon$ need not go to 0.

4 Discussion of the results

4.1 Choice of the trace coefficients $c_s$

Theorems 1 and 2 ensure convergence to $Q^\pi$ and $Q^*$ for any trace coefficient $c_s \in \big[0, \frac{\pi(a_s \mid x_s)}{\mu(a_s \mid x_s)}\big]$. However, to make the best choice of $c_s$, we need to consider the speed of convergence, which depends on both (1) the variance of the online estimate, which indicates how many online updates are required in a single iteration of $\mathcal{R}$, and (2) the contraction coefficient of $\mathcal{R}$.

Variance: The variance of the estimate strongly depends on the variance of the product trace $(c_1 \cdots c_t)$, which is not an easy quantity to control in general, as the $(c_s)$ are usually not independent. However, assuming independence and stationarity of $(c_s)$, we have that $\mathbb{V}\big(\sum_t \gamma^t c_1 \cdots c_t\big)$ is at least $\sum_t \gamma^{2t}\, \mathbb{V}(c)^t$, which is finite only if $\mathbb{V}(c) < 1/\gamma^2$. Thus, an important requirement for a numerically stable algorithm is for $\mathbb{V}(c)$ to be as small as possible, and certainly no more than $1/\gamma^2$. This rules out importance sampling (for which $c = \frac{\pi(a \mid x)}{\mu(a \mid x)}$, and $\mathbb{V}(c \mid x) = \sum_a \mu(a \mid x) \big(\frac{\pi(a \mid x)}{\mu(a \mid x)} - 1\big)^2$, which may be larger than $1/\gamma^2$ for some $\pi$ and $\mu$), and is the reason we choose $c \le 1$.

Contraction speed: The contraction coefficient $\eta \in [0, \gamma]$ of $\mathcal{R}$ (see Remark 1) depends on how much the traces have been cut, and should be as small as possible (since it takes $\log(1/\varepsilon) / \log(1/\eta)$ iterations of $\mathcal{R}$ to obtain an $\varepsilon$-approximation). It is smallest when the traces are not cut at all (i.e. if $c_s = 1$ for all $s$, $\mathcal{R}$ is the policy evaluation operator which produces $Q^\pi$ in a single iteration). Indeed, when the traces are cut, we do not benefit from learning from full returns (in the extreme, $c_1 = 0$ and $\mathcal{R}$ reduces to the (one-step) Bellman operator with $\eta = \gamma$).

A reasonable trade-off between low variance (when the $c_s$ are small) and high contraction speed (when the $c_s$ are large) is given by Retrace($\lambda$), for which we provide the convergence of the online algorithm.

If we relax the assumption that the trace is Markovian (in which case only the result for policy evaluation has been proven so far), we could trade off a low trace at some time for a possibly larger-than-1 trace at another time, as long as their product is less than 1. A possible choice could be

$$c_s = \lambda \min\Big(\frac{1}{c_1 \cdots c_{s-1}}, \frac{\pi(a_s \mid x_s)}{\mu(a_s \mid x_s)}\Big). \tag{9}$$

4.2 Other topics of discussion

No GLIE assumption. The crucial point of Theorem 2 is that convergence to $Q^*$ occurs for arbitrary behaviour policies. Thus the online result in Theorem 3 does not require the behaviour policies to become greedy in the limit with infinite exploration (i.e. GLIE assumption, Singh et al., 2000).
We believe Theorem 3 provides the first convergence result to $Q^*$ for a $\lambda$-return (with $\lambda > 0$) algorithm that does not require this (hard to satisfy) assumption.

Proof of Watkins' Q($\lambda$). As a corollary of Theorem 3 when selecting our target policies $\pi_k$ to be greedy w.r.t. $Q_k$ (i.e. $\varepsilon_k = 0$), we deduce that Watkins' Q($\lambda$) (e.g., Watkins, 1989; Sutton and Barto, 1998) converges a.s. to $Q^*$ (under the assumption that $\mu_k$ commutes asymptotically with the greedy policies, which is satisfied for e.g. $\mu_k$ defined by (8)). We believe this is the first such proof.

Increasingly greedy policies. The assumption that the sequence of target policies $(\pi_k)$ is increasingly greedy w.r.t. the sequence of $(Q_k)$ is more general than just considering greedy policies w.r.t. $(Q_k)$ (which is Watkins's Q($\lambda$)), and leads to more efficient algorithms. Indeed, using non-greedy target policies $\pi_k$ may speed up convergence as the traces are not cut as frequently. Of course, in order to converge to $Q^*$, we eventually need the target policies (and not the behaviour policies, as mentioned above) to become greedy in the limit (i.e. $\varepsilon_k \to 0$ as defined in Theorem 2).

Comparison to $Q^\pi(\lambda)$. Unlike Retrace($\lambda$), $Q^\pi(\lambda)$ does not need to know the behaviour policy $\mu$. However, it fails to converge when $\mu$ is far from $\pi$. Retrace($\lambda$) uses its knowledge of $\mu$ (for the chosen actions) to cut the traces and safely handle arbitrary policies $\pi$ and $\mu$.

Comparison to TB($\lambda$). Similarly to $Q^\pi(\lambda)$, TB($\lambda$) does not need knowledge of the behaviour policy $\mu$. But as a consequence, TB($\lambda$) is not able to benefit from possible near on-policy situations, cutting traces unnecessarily when $\pi$ and $\mu$ are close.

[Figure 1 panels: fraction of games plotted against inter-algorithm score; left, 40M training frames (Q*, Retrace, Tree-backup, Q-Learning); right, 200M training frames (Retrace, Tree-backup, Q-Learning).]

Figure 1: Inter-algorithm score distribution for $\lambda$-return ($\lambda = 1$) variants and Q-Learning ($\lambda = 0$).

Estimating the behavior policy. In the case $\mu$ is unknown, it is reasonable to build an estimate $\hat\mu$ from observed samples and use $\hat\mu$ instead of $\mu$ in the definition of the trace coefficients $c_s$. This may actually even lead to a better estimate, as analyzed by ?.

Continuous action space. Let us mention that Theorems 1 and 2 extend to the case of (measurable) continuous or infinite action spaces. The trace coefficients will make use of the densities $\min(1, d\pi/d\mu)$ instead of the probabilities $\min(1, \pi/\mu)$. This is not possible with TB($\lambda$).

Open questions include: (1) removing the technical assumption that $P^{\pi_k}$ and $P^{\pi_k \wedge \mu_k}$ asymptotically commute, and (2) relaxing the Markov assumption in the control case in order to allow trace coefficients $c_s$ of the form (9).

5 Experimental Results

To validate our theoretical results, we employ Retrace($\lambda$) in an experience replay (Lin, 1993) setting, where sample transitions are stored within a large but bounded replay memory and subsequently replayed as if they were new experience. Naturally, older data in the memory is usually drawn from a policy which differs from the current policy, offering an excellent point of comparison for the algorithms presented in Section 2.

Our agent adapts the DQN architecture of Mnih et al. (2015) to replay short sequences from the memory (details in the appendix) instead of single transitions. The Q-function target update for a sample sequence $x_t, a_t, r_t, \ldots, x_{t+k}$ is

$$\Delta Q(x_t, a_t) = \sum_{s=t}^{t+k-1} \gamma^{s-t} \Big(\prod_{i=t+1}^{s} c_i\Big) \big[r(x_s, a_s) + \gamma\, \mathbb{E}_\pi Q(x_{s+1}, \cdot) - Q(x_s, a_s)\big].$$
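The target above can be computed for a replayed sequence in linear time with the backward recursion $G_s = \delta_s + \gamma c_{s+1} G_{s+1}$, where $\delta_s$ is the bracketed TD error and $G_t = \Delta Q(x_t, a_t)$. The sketch below assumes tabular `Q`, `pi` and `mu` indexed as `[x][a]` and Retrace traces $c_i = \lambda \min(1, \pi/\mu)$; it is not the paper's DQN implementation. As a convenience (our assumption, not a detail from the paper) it also returns the same quantity restarted at every later position of the sequence.

```python
from typing import List, Sequence

Table = Sequence[Sequence[float]]  # indexed as table[x][a]


def retrace_targets(states: List[int], actions: List[int], rewards: List[float],
                    Q: Table, pi: Table, mu: Table,
                    lam: float, gamma: float) -> List[float]:
    """Delta Q for each start position of a replayed sequence x_t, a_t, r_t, ..., x_{t+k}.

    `states` has k + 1 entries; `actions` and `rewards` have k. Entry 0 of the result is
    Delta Q(x_t, a_t) from the paper's target formula; entry s restarts the sum at s.
    """
    k = len(rewards)
    # TD errors delta_s = r_s + gamma * E_pi Q(x_{s+1}, .) - Q(x_s, a_s)
    deltas = [rewards[s]
              + gamma * sum(p * q for p, q in zip(pi[states[s + 1]], Q[states[s + 1]]))
              - Q[states[s]][actions[s]]
              for s in range(k)]
    # Retrace traces c_s = lam * min(1, pi(a_s|x_s) / mu(a_s|x_s)); c_0 is never used.
    traces = [lam * min(1.0, pi[x][a] / mu[x][a]) for x, a in zip(states, actions)]
    targets = [0.0] * k
    acc = 0.0
    for s in reversed(range(k)):
        # G_s = delta_s + gamma * c_{s+1} * G_{s+1}; the last position has no successor.
        tail = gamma * traces[s + 1] * acc if s + 1 < k else 0.0
        acc = deltas[s] + tail
        targets[s] = acc
    return targets


# Usage on a hypothetical sequence of length k = 4.
pi = [[0.9, 0.1], [0.3, 0.7]]
mu = [[0.5, 0.5], [0.5, 0.5]]
Q = [[1.0, 0.5], [0.2, 2.0]]
print(retrace_targets([0, 1, 1, 0, 1], [0, 1, 0, 1], [1.0, 0.0, -1.0, 2.0],
                      Q, pi, mu, lam=1.0, gamma=0.99))
```

With `lam = 0` every trace vanishes and each target reduces to the one-step TD error, which is the Q-Learning ($\lambda = 0$) baseline in Figure 1 when `pi` is greedy.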
We compare our algorithms' performance on 60 different Atari 2600 games in the Arcade Learning Environment (Bellemare et al., 2013) using Bellemare et al.'s inter-algorithm score distribution. Inter-algorithm scores are normalized so that 0 and 1 respectively correspond to the worst and best score for a particular game, within the set of algorithms under comparison. If $g \in \{1, \ldots, 60\}$ is a game and $z_{g,a}$ the inter-algorithm score on $g$ for algorithm $a$, then the score distribution function is $f(x) := |\{g : z_{g,a} \ge x\}| / 60$. Roughly, a strictly higher curve corresponds to a better algorithm.

Across values of $\lambda$, $\lambda = 1$ performs best, save for $Q^*(\lambda)$, where $\lambda = 0.5$ obtains slightly superior performance. However, $Q^*(\lambda)$ is highly sensitive to the choice of $\lambda$ (see Figure 1, left, and Table 2 in the appendix). Both Retrace($\lambda$) and TB($\lambda$) achieve dramatically higher performance than Q-Learning early on and maintain their advantage throughout. Compared to TB($\lambda$), Retrace($\lambda$) offers a narrower but still marked advantage, being the best performer on 30 games; TB($\lambda$) claims 15 of the remainder. Per-game details are given in the appendix.

Conclusion. Retrace($\lambda$) can be seen as an algorithm that automatically adjusts, efficiently and safely, the length of the return to the degree of “off-policyness” of any available data.

Acknowledgments. The authors thank Daan Wierstra, Nicolas Heess, Hado van Hasselt, Ziyu Wang, David Silver, Audrunas Grūslys, Georg Ostrovski, Hubert Soyer, and others at Google DeepMind for their very useful feedback on this work.

References

Bellemare, M. G., Naddaf, Y., Veness, J., and Bowling, M. (2013). The Arcade Learning Environment: An evaluation platform for general agents. Journal of Artificial Intelligence Research, 47:253–279.

Bertsekas, D. P. and Tsitsiklis, J. N. (1996). Neuro-Dynamic Programming. Athena Scientific.

Geist, M. and Scherrer, B. (2014). Off-policy learning with eligibility traces: A survey. The Journal of Machine Learning Research, 15(1):289–333.

Hallak, A., Tamar, A., Munos, R., and Mannor, S. (2015). Generalized emphatic temporal difference learning: Bias-variance analysis.

Harutyunyan, A., Bellemare, M. G., Stepleton, T., and Munos, R. (2016). Q($\lambda$) with off-policy corrections.

Kearns, M. J. and Singh, S. P. (2000). Bias-variance error bounds for temporal difference updates. In Conference on Computational Learning Theory, pages 142–147.

Lin, L. (1993). Scaling up reinforcement learning for robot control. In Machine Learning: Proceedings of the Tenth International Conference, pages 182–189.

Mahmood, A. R. and Sutton, R. S. (2015). Off-policy learning based on weighted importance sampling with linear computational complexity. In Conference on Uncertainty in Artificial Intelligence.

Mahmood, A. R., Yu, H., White, M., and Sutton, R. S. (2015). Emphatic temporal-difference learning.

Mnih, V., Badia, A. P., Mirza, M., Graves, A., Lillicrap, T. P., Harley, T., Silver, D., and Kavukcuoglu, K. (2016). Asynchronous methods for deep reinforcement learning. arXiv:1602.01783.

Mnih, V., Kavukcuoglu, K., Silver, D., Rusu, A. A., Veness, J., Bellemare, M. G., Graves, A., Riedmiller, M., Fidjeland, A. K., Ostrovski, G., et al. (2015). Human-level control through deep reinforcement learning. Nature, 518(7540):529–533.

Precup, D., Sutton, R. S., and Dasgupta, S. (2001). Off-policy temporal-difference learning with function approximation. In International Conference on Machine Learning, pages 417–424.

Precup, D., Sutton, R. S., and Singh, S. (2000). Eligibility traces for off-policy policy evaluation. In Proceedings of the Seventeenth International Conference on Machine Learning.

Puterman, M. L. (1994). Markov Decision Processes: Discrete Stochastic Dynamic Programming. John Wiley & Sons, Inc., New York, NY, USA, 1st edition.
Schaul, T., Quan, J., Antonoglou, I., and Silver, D. (2016). Prioritized experience replay. In International Conference on Learning Representations.

Singh, S., Jaakkola, T., Littman, M. L., and Szepesvári, C. (2000). Convergence results for single-step on-policy reinforcement-learning algorithms. Machine Learning, 38(3):287–308.

Sutton, R. and Barto, A. (1998). Reinforcement Learning: An Introduction. MIT Press.

Sutton, R. S. (1988). Learning to predict by the methods of temporal differences. Machine Learning, 3(1):9–44.

Sutton, R. S. (1996). Generalization in reinforcement learning: Successful examples using sparse coarse coding. In Advances in Neural Information Processing Systems 8.

Tsitsiklis, J. N. (2003). On the convergence of optimistic policy iteration. Journal of Machine Learning Research, 3:59–72.

Watkins, C. J. C. H. (1989). Learning from Delayed Rewards. PhD thesis, King's College, Cambridge, UK.

A Proof of Lemma 1

Proof (Lemma 1). Let $\Delta Q := Q - Q^\pi$. We begin by rewriting (3):
$$\mathcal{R}Q(x,a) = \sum_{t \geq 0} \gamma^t\, \mathbb{E}_\mu\Big[\Big(\prod_{s=1}^t c_s\Big)\Big(r_t + \gamma\big[\mathbb{E}_\pi Q(x_{t+1},\cdot) - c_{t+1} Q(x_{t+1},a_{t+1})\big]\Big)\Big].$$
Since $Q^\pi$ is the fixed point of $\mathcal{R}$, we have
$$Q^\pi(x,a) = \mathcal{R}Q^\pi(x,a) = \sum_{t \geq 0} \gamma^t\, \mathbb{E}_\mu\Big[\Big(\prod_{s=1}^t c_s\Big)\Big(r_t + \gamma\big[\mathbb{E}_\pi Q^\pi(x_{t+1},\cdot) - c_{t+1} Q^\pi(x_{t+1},a_{t+1})\big]\Big)\Big],$$
from which we deduce that
$$\mathcal{R}Q(x,a) - Q^\pi(x,a) = \sum_{t \geq 0} \gamma^t\, \mathbb{E}_\mu\Big[\Big(\prod_{s=1}^t c_s\Big)\gamma\big[\mathbb{E}_\pi \Delta Q(x_{t+1},\cdot) - c_{t+1}\Delta Q(x_{t+1},a_{t+1})\big]\Big] = \sum_{t \geq 1} \gamma^t\, \mathbb{E}_\mu\Big[\Big(\prod_{s=1}^{t-1} c_s\Big)\big[\mathbb{E}_\pi \Delta Q(x_t,\cdot) - c_t \Delta Q(x_t,a_t)\big]\Big].$$

B Increasingly greedy policies

Recall the definition of an increasingly greedy sequence of policies.

Definition 2. We say that a sequence of policies $(\pi_k)$ is increasingly greedy w.r.t. a sequence of functions $(Q_k)$ if the following property holds for all $k$:
$$P^{\pi_{k+1}} Q_{k+1} \geq P^{\pi_k} Q_{k+1}.$$

It is easy to see that this property holds if all policies $\pi_k$ are greedy w.r.t. $Q_k$.
Indeed, in that case $\mathcal{T}^{\pi_{k+1}}Q_{k+1} = \mathcal{T}Q_{k+1} \geq \mathcal{T}^{\pi}Q_{k+1}$ for any $\pi$. We now prove that this property holds for $\varepsilon_k$-greedy policies (with non-increasing $(\varepsilon_k)$) as well as for soft-max policies (with non-decreasing $(\beta_k)$), as stated in the two lemmas below. Of course, not all policies satisfy this property (a counter-example being $\pi_k(a|x) := \arg\min_{a'} Q_k(x,a')$).

Lemma 2. Let $(\varepsilon_k)$ be a non-increasing sequence. Then the sequence of policies $(\pi_k)$ which are $\varepsilon_k$-greedy w.r.t. the sequence of functions $(Q_k)$ is increasingly greedy w.r.t. that sequence.

Proof. From the definition of an $\varepsilon$-greedy policy we have, writing $A$ for the number of actions,
$$
\begin{aligned}
P^{\pi_{k+1}}Q_{k+1}(x,a) &= \sum_y p(y|x,a)\Big[(1-\varepsilon_{k+1})\max_b Q_{k+1}(y,b) + \frac{\varepsilon_{k+1}}{A}\sum_b Q_{k+1}(y,b)\Big]\\
&\geq \sum_y p(y|x,a)\Big[(1-\varepsilon_k)\max_b Q_{k+1}(y,b) + \frac{\varepsilon_k}{A}\sum_b Q_{k+1}(y,b)\Big]\\
&\geq \sum_y p(y|x,a)\Big[(1-\varepsilon_k)\,Q_{k+1}\big(y, \arg\max_b Q_k(y,b)\big) + \frac{\varepsilon_k}{A}\sum_b Q_{k+1}(y,b)\Big]\\
&= P^{\pi_k}Q_{k+1}(x,a),
\end{aligned}
$$
where the first inequality uses $\varepsilon_{k+1} \leq \varepsilon_k$ together with $\max_b Q_{k+1}(y,b) \geq \frac{1}{A}\sum_b Q_{k+1}(y,b)$.

Lemma 3. Let $(\beta_k)$ be a non-decreasing sequence of soft-max parameters. Then the sequence of policies $(\pi_k)$ which are soft-max (with parameter $\beta_k$) w.r.t. the sequence of functions $(Q_k)$ is increasingly greedy w.r.t. that sequence.

Proof. For any $Q$ and $y$, define $\pi_\beta(b) = \frac{e^{\beta Q(y,b)}}{\sum_{b'} e^{\beta Q(y,b')}}$ and $f(\beta) = \sum_b \pi_\beta(b) Q(y,b)$. Then we have
$$f'(\beta) = \sum_b \Big[\pi_\beta(b)Q(y,b) - \pi_\beta(b)\sum_{b'}\pi_\beta(b')Q(y,b')\Big]Q(y,b) = \sum_b \pi_\beta(b)Q(y,b)^2 - \Big(\sum_b \pi_\beta(b)Q(y,b)\Big)^2 = \mathbb{V}_{b\sim\pi_\beta}\big[Q(y,b)\big] \geq 0.$$
Thus $\beta \mapsto f(\beta)$ is a non-decreasing function, and since $\beta_{k+1} \geq \beta_k$, we have
$$
\begin{aligned}
P^{\pi_{k+1}}Q_{k+1}(x,a) &= \sum_y p(y|x,a)\sum_b \frac{e^{\beta_{k+1}Q_{k+1}(y,b)}}{\sum_{b'} e^{\beta_{k+1}Q_{k+1}(y,b')}}\,Q_{k+1}(y,b)\\
&\geq \sum_y p(y|x,a)\sum_b \frac{e^{\beta_k Q_{k+1}(y,b)}}{\sum_{b'} e^{\beta_k Q_{k+1}(y,b')}}\,Q_{k+1}(y,b) = P^{\pi_k}Q_{k+1}(x,a).
\end{aligned}
$$
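The two facts used in Lemmas 2 and 3 can be checked numerically at a single next state: the map $f(\beta)$ is non-decreasing with $f'(\beta) = \mathbb{V}_{b\sim\pi_\beta}[Q(y,b)]$, and an $\varepsilon_{k+1}$-greedy policy w.r.t. $Q_{k+1}$ scores $Q_{k+1}$ at least as well as an $\varepsilon_k$-greedy policy w.r.t. $Q_k$ when $\varepsilon_{k+1} \leq \varepsilon_k$. The following is a minimal NumPy sketch, assuming randomly drawn action values for one hypothetical next state $y$; the helper names, the seed, and the values $\varepsilon_{k+1} = 0.05$, $\varepsilon_k = 0.10$ are illustrative choices, not taken from the paper.

```python
import numpy as np


def softmax(q, beta):
    """Soft-max distribution over actions with parameter beta."""
    z = beta * (q - q.max())  # shifting by a constant leaves the distribution unchanged
    w = np.exp(z)
    return w / w.sum()


def eps_greedy(q, eps):
    """(1 - eps) on the greedy action plus eps / A on every action (A = len(q))."""
    n_actions = len(q)
    pi = np.full(n_actions, eps / n_actions)
    pi[np.argmax(q)] += 1.0 - eps
    return pi


def f(beta, q):
    """f(beta) = sum_b pi_beta(b) Q(y, b) for a single next state y."""
    return softmax(q, beta) @ q


def variance_under_softmax(beta, q):
    """V_{b ~ pi_beta}[Q(y, b)], which the proof of Lemma 3 identifies with f'(beta)."""
    pi = softmax(q, beta)
    return pi @ q**2 - (pi @ q) ** 2


rng = np.random.default_rng(0)
q = rng.normal(size=5)  # hypothetical action values Q(y, .) at one next state y

# Lemma 3, key step: f is non-decreasing in beta and f'(beta) equals a variance.
betas = np.linspace(0.0, 5.0, 51)
fs = np.array([f(b, q) for b in betas])
monotone = bool(np.all(np.diff(fs) >= -1e-12))
h = 1e-5  # step of the central finite difference used to estimate f'(beta)
max_gap = max(
    abs((f(b + h, q) - f(b - h, q)) / (2 * h) - variance_under_softmax(b, q)) for b in betas
)

# Lemma 2 at one next state, with eps_{k+1} = 0.05 <= eps_k = 0.10.
q_k, q_k1 = rng.normal(size=5), rng.normal(size=5)
lhs = eps_greedy(q_k1, 0.05) @ q_k1  # E_{pi_{k+1}} Q_{k+1}(y, .)
rhs = eps_greedy(q_k, 0.10) @ q_k1   # E_{pi_k} Q_{k+1}(y, .)
print(monotone, max_gap < 1e-6, lhs >= rhs)
```

Because $p(y|x,a) \geq 0$, a per-state inequality of the second kind is enough to give the matrix inequality $P^{\pi_{k+1}}Q_{k+1} \geq P^{\pi_k}Q_{k+1}$ of Lemma 2.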
C Proof of Theorem 2

As mentioned in the main text, since $c_s$ is Markovian, we can define the (sub-)probability transition operator
$$(P^{c\mu}Q)(x,a) := \sum_{x'}\sum_{a'} p(x'|x,a)\,\mu(a'|x')\,c(a',x')\,Q(x',a').$$
The Retrace($\lambda$) operator can then be written as
$$\mathcal{R}_k Q = Q + \sum_{t\geq0}\gamma^t (P^{c\mu_k})^t(\mathcal{T}^{\pi_k}Q - Q) = Q + (I-\gamma P^{c\mu_k})^{-1}(\mathcal{T}^{\pi_k}Q - Q).$$

Proof. We now lower- and upper-bound the term $Q_{k+1} - Q^*$.

Upper bound on $Q_{k+1} - Q^*$. Since $Q_{k+1} = \mathcal{R}_k Q_k$, we have
$$
\begin{aligned}
Q_{k+1} - Q^* &= Q_k - Q^* + (I-\gamma P^{c\mu_k})^{-1}(\mathcal{T}^{\pi_k}Q_k - Q_k)\\
&= (I-\gamma P^{c\mu_k})^{-1}\big[\mathcal{T}^{\pi_k}Q_k - Q_k + (I-\gamma P^{c\mu_k})(Q_k - Q^*)\big]\\
&= (I-\gamma P^{c\mu_k})^{-1}\big[\mathcal{T}^{\pi_k}Q_k - Q^* - \gamma P^{c\mu_k}(Q_k - Q^*)\big]\\
&= (I-\gamma P^{c\mu_k})^{-1}\big[\mathcal{T}^{\pi_k}Q_k - \mathcal{T}Q^* - \gamma P^{c\mu_k}(Q_k - Q^*)\big]\\
&\leq (I-\gamma P^{c\mu_k})^{-1}\big[\gamma P^{\pi_k}(Q_k - Q^*) - \gamma P^{c\mu_k}(Q_k - Q^*)\big]\\
&= \gamma(I-\gamma P^{c\mu_k})^{-1}\big(P^{\pi_k} - P^{c\mu_k}\big)(Q_k - Q^*)\\
&= A_k(Q_k - Q^*), \qquad (10)
\end{aligned}
$$
where the inequality uses $\mathcal{T}Q^* \geq \mathcal{T}^{\pi_k}Q^*$ and the fact that $(I-\gamma P^{c\mu_k})^{-1} = \sum_{t\geq0}\gamma^t(P^{c\mu_k})^t$ has non-negative elements, and where $A_k := \gamma(I-\gamma P^{c\mu_k})^{-1}(P^{\pi_k} - P^{c\mu_k})$.

Now let us prove that $A_k$ has non-negative elements, whose sum over each row is at most $\gamma$. Let $e$ be the vector whose components all equal 1. By rewriting $A_k$ as $\gamma\sum_{t\geq0}\gamma^t(P^{c\mu_k})^t(P^{\pi_k} - P^{c\mu_k})$ and noticing that
$$(P^{\pi_k} - P^{c\mu_k})e(x,a) = \sum_{x'}\sum_{a'} p(x'|x,a)\big[\pi_k(a'|x') - c(a',x')\mu_k(a'|x')\big] \geq 0, \qquad (11)$$
it is clear that all elements of $A_k$ are non-negative. We have
$$A_k e = \gamma\sum_{t\geq0}\gamma^t(P^{c\mu_k})^t\big(P^{\pi_k} - P^{c\mu_k}\big)e = \gamma\sum_{t\geq0}\gamma^t(P^{c\mu_k})^t e - \sum_{t\geq0}\gamma^{t+1}(P^{c\mu_k})^{t+1}e = e - (1-\gamma)\sum_{t\geq0}\gamma^t(P^{c\mu_k})^t e \leq \gamma e, \qquad (12)$$
since $\sum_{t\geq0}\gamma^t(P^{c\mu_k})^t e \geq e$. Thus $A_k$ has non-negative elements whose sum over each row is at most $\gamma$. We deduce from (10) that $Q_{k+1} - Q^*$ is upper-bounded by a sub-convex combination of components of $Q_k - Q^*$, the sum of whose coefficients is at most $\gamma$. Thus
$$Q_{k+1} - Q^* \leq \gamma\|Q_k - Q^*\|e. \qquad (13)$$

Lower bound on $Q_{k+1} - Q^*$.
We have
$$
\begin{aligned}
Q_{k+1} &= Q_k + (I-\gamma P^{c\mu_k})^{-1}(\mathcal{T}^{\pi_k}Q_k - Q_k)\\
&= Q_k + \sum_{i\geq0}\gamma^i(P^{c\mu_k})^i(\mathcal{T}^{\pi_k}Q_k - Q_k)\\
&= \mathcal{T}^{\pi_k}Q_k + \sum_{i\geq1}\gamma^i(P^{c\mu_k})^i(\mathcal{T}^{\pi_k}Q_k - Q_k)\\
&= \mathcal{T}^{\pi_k}Q_k + \gamma P^{c\mu_k}(I-\gamma P^{c\mu_k})^{-1}(\mathcal{T}^{\pi_k}Q_k - Q_k). \qquad (14)
\end{aligned}
$$
Now, from the definition of $\varepsilon_k$ we have $\mathcal{T}^{\pi_k}Q_k \geq \mathcal{T}Q_k - \varepsilon_k\|Q_k\| \geq \mathcal{T}^{\pi^*}Q_k - \varepsilon_k\|Q_k\|$, thus
$$
\begin{aligned}
Q_{k+1} - Q^* &= Q_{k+1} - \mathcal{T}^{\pi_k}Q_k + \mathcal{T}^{\pi_k}Q_k - \mathcal{T}^{\pi^*}Q_k + \mathcal{T}^{\pi^*}Q_k - \mathcal{T}^{\pi^*}Q^*\\
&\geq Q_{k+1} - \mathcal{T}^{\pi_k}Q_k + \gamma P^{\pi^*}(Q_k - Q^*) - \varepsilon_k\|Q_k\|e.
\end{aligned}
$$
Using (14) we derive the lower bound
$$Q_{k+1} - Q^* \geq \gamma P^{c\mu_k}(I-\gamma P^{c\mu_k})^{-1}(\mathcal{T}^{\pi_k}Q_k - Q_k) + \gamma P^{\pi^*}(Q_k - Q^*) - \varepsilon_k\|Q_k\|. \qquad (15)$$

Lower bound on $\mathcal{T}^{\pi_k}Q_k - Q_k$. By hypothesis, $(\pi_k)$ is increasingly greedy w.r.t. $(Q_k)$, thus
$$
\begin{aligned}
\mathcal{T}^{\pi_{k+1}}Q_{k+1} - Q_{k+1} &\geq \mathcal{T}^{\pi_k}Q_{k+1} - Q_{k+1} = \mathcal{T}^{\pi_k}\mathcal{R}_kQ_k - \mathcal{R}_kQ_k = r + (\gamma P^{\pi_k} - I)\mathcal{R}_kQ_k\\
&= r + (\gamma P^{\pi_k} - I)\big[Q_k + (I-\gamma P^{c\mu_k})^{-1}(\mathcal{T}^{\pi_k}Q_k - Q_k)\big]\\
&= \mathcal{T}^{\pi_k}Q_k - Q_k + (\gamma P^{\pi_k} - I)(I-\gamma P^{c\mu_k})^{-1}(\mathcal{T}^{\pi_k}Q_k - Q_k)\\
&= \gamma\big[P^{\pi_k} - P^{c\mu_k}\big](I-\gamma P^{c\mu_k})^{-1}(\mathcal{T}^{\pi_k}Q_k - Q_k)\\
&= B_k(\mathcal{T}^{\pi_k}Q_k - Q_k), \qquad (16)
\end{aligned}
$$
where $B_k := \gamma[P^{\pi_k} - P^{c\mu_k}](I-\gamma P^{c\mu_k})^{-1}$. Since $P^{\pi_k} - P^{c\mu_k}$ has non-negative elements (as proven in (11)), as does $(I-\gamma P^{c\mu_k})^{-1}$, $B_k$ has non-negative elements as well. Thus
$$\mathcal{T}^{\pi_k}Q_k - Q_k \geq B_{k-1}B_{k-2}\cdots B_0(\mathcal{T}^{\pi_0}Q_0 - Q_0) \geq 0,$$
since we assumed $\mathcal{T}^{\pi_0}Q_0 - Q_0 \geq 0$. Thus (15) implies that
$$Q_{k+1} - Q^* \geq \gamma P^{\pi^*}(Q_k - Q^*) - \varepsilon_k\|Q_k\|,$$
and combining the above with (13) we deduce
$$\|Q_{k+1} - Q^*\| \leq \gamma\|Q_k - Q^*\| + \varepsilon_k\|Q_k\|.$$
Now assume that $\varepsilon_k \to 0$. We first deduce that $Q_k$ is bounded. Indeed, as soon as $\varepsilon_k < (1-\gamma)/2$, we have
$$\|Q_{k+1}\| \leq \|Q^*\| + \gamma\|Q_k - Q^*\| + \frac{1-\gamma}{2}\|Q_k\| \leq (1+\gamma)\|Q^*\| + \frac{1+\gamma}{2}\|Q_k\|.$$
Thus $\limsup_k \|Q_k\| \leq \frac{1+\gamma}{1-(1+\gamma)/2}\|Q^*\|$. Since $Q_k$ is bounded, $\varepsilon_k\|Q_k\| \to 0$, and the inequality $\|Q_{k+1} - Q^*\| \leq \gamma\|Q_k - Q^*\| + \varepsilon_k\|Q_k\|$ then gives $\lim_{k\to\infty} Q_k = Q^*$.

D Proof of Theorem 3

We first prove convergence of the general online algorithm.
Theorem 4. Consider the algorithm
$$Q_{k+1}(x,a) = (1-\alpha_k(x,a))\,Q_k(x,a) + \alpha_k(x,a)\big(\mathcal{R}_kQ_k(x,a) + \omega_k(x,a) + \upsilon_k(x,a)\big), \qquad (17)$$
and assume that (1) $\omega_k$ is a centered, $\mathcal{F}_k$-measurable noise term of bounded variance, and (2) $\upsilon_k$ is bounded from above by $\theta_k(\|Q_k\| + 1)$, where $(\theta_k)$ is a random sequence that converges to 0 a.s. Then, under the same assumptions as in Theorem 3, we have that $Q_k \to Q^*$ almost surely.

Proof. We write $\mathcal{R}$ for $\mathcal{R}_k$. Let us prove the result in three steps.

Upper bound on $\mathcal{R}Q_k - Q^*$. The first part of the proof is similar to the proof of (13), so we have
$$\mathcal{R}Q_k - Q^* \leq \gamma\|Q_k - Q^*\|e. \qquad (18)$$

Lower bound on $\mathcal{R}Q_k - Q^*$. Again, similarly to (15), we have
$$\mathcal{R}Q_k - Q^* \geq \gamma\lambda P^{\pi_k\wedge\mu_k}(I-\gamma\lambda P^{\pi_k\wedge\mu_k})^{-1}(\mathcal{T}^{\pi_k}Q_k - Q_k) + \gamma P^{\pi^*}(Q_k - Q^*) - \varepsilon_k\|Q_k\|. \qquad (19)$$

Lower bound on $\mathcal{T}^{\pi_k}Q_k - Q_k$. Since the sequence of policies $(\pi_k)$ is increasingly greedy w.r.t. $(Q_k)$, we have
$$
\begin{aligned}
\mathcal{T}^{\pi_{k+1}}Q_{k+1} - Q_{k+1} &\geq \mathcal{T}^{\pi_k}Q_{k+1} - Q_{k+1}\\
&= (1-\alpha_k)\mathcal{T}^{\pi_k}Q_k + \alpha_k\mathcal{T}^{\pi_k}(\mathcal{R}Q_k + \omega_k + \upsilon_k) - Q_{k+1}\\
&= (1-\alpha_k)(\mathcal{T}^{\pi_k}Q_k - Q_k) + \alpha_k\big(\mathcal{T}^{\pi_k}\mathcal{R}Q_k - \mathcal{R}Q_k + \omega'_k + \upsilon'_k\big), \qquad (20)
\end{aligned}
$$
where $\omega'_k := (\gamma P^{\pi_k} - I)\omega_k$ and $\upsilon'_k := (\gamma P^{\pi_k} - I)\upsilon_k$. It is easy to see that both $\omega'_k$ and $\upsilon'_k$ continue to satisfy the assumptions on $\omega_k$ and $\upsilon_k$. Now, from the definition of the $\mathcal{R}$ operator, we have
$$
\begin{aligned}
\mathcal{T}^{\pi_k}\mathcal{R}Q_k - \mathcal{R}Q_k &= r + (\gamma P^{\pi_k} - I)\mathcal{R}Q_k\\
&= r + (\gamma P^{\pi_k} - I)\big[Q_k + (I-\gamma\lambda P^{\pi_k\wedge\mu_k})^{-1}(\mathcal{T}^{\pi_k}Q_k - Q_k)\big]\\
&= \mathcal{T}^{\pi_k}Q_k - Q_k + (\gamma P^{\pi_k} - I)(I-\gamma\lambda P^{\pi_k\wedge\mu_k})^{-1}(\mathcal{T}^{\pi_k}Q_k - Q_k)\\
&= \gamma(P^{\pi_k} - \lambda P^{\pi_k\wedge\mu_k})(I-\gamma\lambda P^{\pi_k\wedge\mu_k})^{-1}(\mathcal{T}^{\pi_k}Q_k - Q_k).
\end{aligned}
$$
Substituting this equality into (20) and writing $\xi_k := \mathcal{T}^{\pi_k}Q_k - Q_k$, we have
$$\xi_{k+1} \geq (1-\alpha_k)\xi_k + \alpha_k(B_k\xi_k + \omega'_k + \upsilon'_k), \qquad (21)$$
where $B_k := \gamma(P^{\pi_k} - \lambda P^{\pi_k\wedge\mu_k})(I-\gamma\lambda P^{\pi_k\wedge\mu_k})^{-1}$.
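The contrast between $B_k$ and $A_k$ drawn in the next step can be explored numerically. The sketch below is a hedged illustration, assuming a randomly generated MDP and random policies (the sizes, seed, $\gamma = 0.9$, $\lambda = 1$ and the helper names are hypothetical). It takes $c = \lambda\min(1, \pi/\mu)$, so that $P^{c\mu_k} = \lambda P^{\pi_k\wedge\mu_k}$, forms both matrices, and reports their smallest entries, largest row sums, their distance, and the commutator norm $\eta_k$ that controls that distance.

```python
import numpy as np


def state_action_matrix(p, pol):
    """(P^pol)[(x, a), (y, b)] = p(y | x, a) * pol(b | y); pol may be a sub-policy."""
    S, A, _ = p.shape
    return np.einsum("xay,yb->xayb", p, pol).reshape(S * A, S * A)


def inf_norm(M):
    """Induced infinity norm: maximum absolute row sum."""
    return np.abs(M).sum(axis=1).max()


rng = np.random.default_rng(2)
S, A, gamma, lam = 5, 3, 0.9, 1.0
p = rng.random((S, A, S))
p /= p.sum(axis=2, keepdims=True)     # hypothetical transition kernel p(y | x, a)
pi = rng.random((S, A))
pi /= pi.sum(axis=1, keepdims=True)   # target policy pi_k
mu = rng.random((S, A))
mu /= mu.sum(axis=1, keepdims=True)   # behaviour policy mu_k

P_pi = state_action_matrix(p, pi)
P_min = state_action_matrix(p, np.minimum(pi, mu))  # P^{pi_k ^ mu_k}, sub-stochastic
resolvent = np.linalg.inv(np.eye(S * A) - gamma * lam * P_min)

A_k = gamma * resolvent @ (P_pi - lam * P_min)
B_k = gamma * (P_pi - lam * P_min) @ resolvent
eta = inf_norm(P_pi @ P_min - P_min @ P_pi)  # eta_k, the commutator norm

print(f"A_k: min entry {A_k.min():.2e}, max row sum {A_k.sum(axis=1).max():.3f}")
print(f"B_k: min entry {B_k.min():.2e}, max row sum {B_k.sum(axis=1).max():.3f}")
print(f"||B_k - A_k|| = {inf_norm(B_k - A_k):.3f}, "
      f"bound gamma * eta / (1 - lam * gamma)^2 = {gamma * eta / (1 - lam * gamma) ** 2:.3f}")
```

On any such instance the largest row sum of $A_k$ stays at or below $\gamma$, as in (12), while nothing forces the same for $B_k$; for generic policies $\eta_k$ is not small, which is why the proof needs the asymptotic commutativity assumption.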
The matrix $B_k$ is non-negative but may not be a contraction mapping (the sum of its components per row may be larger than 1). Thus we cannot directly apply Proposition 4.5 of Bertsekas and Tsitsiklis (1996). However, as we have seen in the proof of Theorem 2, the matrix $A_k := \gamma(I-\gamma\lambda P^{\pi_k\wedge\mu_k})^{-1}(P^{\pi_k} - \lambda P^{\pi_k\wedge\mu_k})$ is a $\gamma$-contraction mapping. So we now relate $B_k$ to $A_k$ using our assumption that $P^{\pi_k}$ and $P^{\pi_k\wedge\mu_k}$ commute asymptotically, i.e. $\|P^{\pi_k}P^{\pi_k\wedge\mu_k} - P^{\pi_k\wedge\mu_k}P^{\pi_k}\| = \eta_k$ with $\eta_k \to 0$. For any (sub-)transition matrices $U$ and $V$, we have
$$
\begin{aligned}
U(I-\lambda\gamma V)^{-1} &= \sum_{t\geq0}(\lambda\gamma)^t\,UV^t\\
&= \sum_{t\geq0}(\lambda\gamma)^t\Big[\sum_{s=0}^{t-1}V^s(UV - VU)V^{t-s-1} + V^tU\Big]\\
&= (I-\lambda\gamma V)^{-1}U + \sum_{t\geq0}(\lambda\gamma)^t\sum_{s=0}^{t-1}V^s(UV - VU)V^{t-s-1}.
\end{aligned}
$$
Replacing $U$ by $P^{\pi_k}$ and $V$ by $P^{\pi_k\wedge\mu_k}$, we deduce
$$\|B_k - A_k\| \leq \gamma\sum_{t\geq0}t(\lambda\gamma)^t\eta_k \leq \frac{\gamma}{(1-\lambda\gamma)^2}\eta_k.$$
Thus, from (21),
$$\xi_{k+1} \geq (1-\alpha_k)\xi_k + \alpha_k(A_k\xi_k + \omega'_k + \upsilon''_k), \qquad (22)$$
where $\upsilon''_k := \upsilon'_k - \gamma\sum_{t\geq0}t(\lambda\gamma)^t\eta_k\|\xi_k\|e$ continues to satisfy the assumptions on $\upsilon_k$ (since $\eta_k \to 0$).

Now, let us define another sequence $(\xi'_k)$ as follows: $\xi'_0 = \xi_0$ and
$$\xi'_{k+1} = (1-\alpha_k)\xi'_k + \alpha_k(A_k\xi'_k + \omega'_k + \upsilon''_k).$$
We can now apply Proposition 4.5 of Bertsekas and Tsitsiklis (1996) to the sequence $(\xi'_k)$. The matrices $A_k$ are non-negative, and the sum of their coefficients per row is bounded by $\gamma$ (see (12)); thus the $A_k$ are $\gamma$-contraction mappings and have the same fixed point, which is 0. The noise $\omega'_k$ is centered, $\mathcal{F}_k$-measurable, and satisfies the bounded variance assumption, and $\upsilon''_k$ is bounded above by $(1+\gamma)\theta'_k(\|Q_k\| + 1)$ for some $\theta'_k \to 0$. Thus $\lim_k \xi'_k = 0$ almost surely.

Now, it is straightforward to see that $\xi_k \geq \xi'_k$ for all $k \geq 0$. Indeed, by induction, let us assume that $\xi_k \geq \xi'_k$.
Then
$$\xi_{k+1} \geq (1-\alpha_k)\xi_k + \alpha_k(A_k\xi_k + \omega'_k + \upsilon''_k) \geq (1-\alpha_k)\xi'_k + \alpha_k(A_k\xi'_k + \omega'_k + \upsilon''_k) = \xi'_{k+1},$$
since all elements of the matrix $A_k$ are non-negative. Thus we deduce that
$$\liminf_{k\to\infty}\xi_k \geq \lim_{k\to\infty}\xi'_k = 0. \qquad (23)$$

Conclusion. Using (23) in (19), we deduce the lower bound
$$\liminf_{k\to\infty}\big(\mathcal{R}Q_k - Q^*\big) \geq \liminf_{k\to\infty}\gamma P^{\pi^*}(Q_k - Q^*), \qquad (24)$$
almost surely. Combining this with the upper bound (18), we deduce that
$$\|\mathcal{R}Q_k - Q^*\| \leq \gamma\|Q_k - Q^*\| + O(\varepsilon_k\|Q_k\|) + O(\xi_k).$$
The last two terms can be incorporated into the $\upsilon_k(x,a)$ and $\omega_k(x,a)$ terms, respectively; we thus again apply Proposition 4.5 of Bertsekas and Tsitsiklis (1996) to the sequence $(Q_k)$ defined by (17) and deduce that $Q_k \to Q^*$ almost surely.

It remains to rewrite the update (7) in the form of (17) in order to apply Theorem 4. Let $z^k_{s,t}$ denote the accumulating trace (Sutton and Barto, 1998):
$$z^k_{s,t} := \sum_{j=s}^t \gamma^{t-j}\Big(\prod_{i=j+1}^t c_i\Big)\mathbb{I}\{(x_j,a_j) = (x_s,a_s)\}.$$
Let us write $Q^o_{k+1}(x_s,a_s)$ to emphasize the online setting. Then (7) can be written as
$$Q^o_{k+1}(x_s,a_s) \leftarrow Q^o_k(x_s,a_s) + \alpha_k(x_s,a_s)\sum_{t\geq s}\delta^{\pi_k}_t z^k_{s,t}, \qquad (25)$$
$$\delta^{\pi_k}_t := r_t + \gamma\,\mathbb{E}_{\pi_k}Q^o_k(x_{t+1},\cdot) - Q^o_k(x_t,a_t).$$
Using our assumptions on finite trajectories, and $c_i \leq 1$, we can show that
$$\mathbb{E}\Big[\sum_{t\geq s} z^k_{s,t}\,\Big|\,\mathcal{F}_k\Big] < \mathbb{E}\big[T_k^2\,\big|\,\mathcal{F}_k\big] < \infty, \qquad (26)$$
where $T_k$ denotes the trajectory length. Now, let $D_k := D_k(x_s,a_s) := \sum_{t\geq s}\mathbb{P}\{(x_t,a_t) = (x_s,a_s)\}$. Then, using (26), we can show that the total update is bounded, and rewrite
$$\mathbb{E}_{\mu_k}\Big[\sum_{t\geq s}\delta^{\pi_k}_t z^k_{s,t}\Big] = D_k(x_s,a_s)\big(\mathcal{R}_kQ_k(x_s,a_s) - Q_k(x_s,a_s)\big).$$
Finally, using the above, and writing $\alpha_k = \alpha_k(x_s,a_s)$, (25) can be rewritten in the desired form:
$$Q^o_{k+1}(x_s,a_s) \leftarrow (1-\tilde\alpha_k)\,Q^o_k(x_s,a_s) + \tilde\alpha_k\big(\mathcal{R}_kQ^o_k(x_s,a_s) + \omega_k(x_s,a_s) + \upsilon_k(x_s,a_s)\big), \qquad (27)$$
$$\omega_k(x_s,a_s) := (D_k)^{-1}\Big(\sum_{t\geq s}\delta^{\pi_k}_t z^k_{s,t} - \mathbb{E}_{\mu_k}\Big[\sum_{t\geq s}\delta^{\pi_k}_t z^k_{s,t}\Big]\Big),$$
$$\upsilon_k(x_s,a_s) := (\tilde\alpha_k)^{-1}\big(Q^o_{k+1}(x_s,a_s) - Q_{k+1}(x_s,a_s)\big),$$
$$\tilde\alpha_k := \alpha_k D_k.$$
It can be shown that the variance of the noise term $\omega_k$ is bounded, using (26) and the fact that the reward function is bounded. It follows from Assumptions 1–3 that the modified stepsize sequence $(\tilde\alpha_k)$ satisfies the conditions of Assumption 1. The second noise term $\upsilon_k(x_s,a_s)$ measures the difference between the online iterates and the corresponding offline values, and can be shown to satisfy the required assumption analogously to the argument in the proof of Prop. 5.2 in Bertsekas and Tsitsiklis (1996). The proof relies on the eligibility coefficients (26) and rewards being bounded, the trajectories being finite, and the conditions on the stepsizes being satisfied. We can thus apply Theorem 4 to (27) and conclude that the iterates $Q^o_k \to Q^*$ as $k \to \infty$, w.p. 1.

E Asymptotic commutativity of $P^{\pi_k}$ and $P^{\pi_k\wedge\mu_k}$

Lemma 4. Let $(\pi_k)$ and $(\mu_k)$ be two sequences of policies. If there exists $\alpha$ such that for all $x, a$,
$$\min\big(\pi_k(a|x), \mu_k(a|x)\big) = \alpha\,\pi_k(a|x) + o(1), \qquad (28)$$
then the transition matrices $P^{\pi_k}$ and $P^{\pi_k\wedge\mu_k}$ asymptotically commute: $\|P^{\pi_k}P^{\pi_k\wedge\mu_k} - P^{\pi_k\wedge\mu_k}P^{\pi_k}\| = o(1)$.

Proof.
For any $Q$, we have
$$
\begin{aligned}
(P^{\pi_k}P^{\pi_k\wedge\mu_k})Q(x,a) &= \sum_y p(y|x,a)\sum_b \pi_k(b|y)\sum_z p(z|y,b)\sum_c (\pi_k\wedge\mu_k)(c|z)\,Q(z,c)\\
&= \alpha\sum_y p(y|x,a)\sum_b \pi_k(b|y)\sum_z p(z|y,b)\sum_c \pi_k(c|z)\,Q(z,c) + \|Q\|\,o(1)\\
&= \sum_y p(y|x,a)\sum_b (\pi_k\wedge\mu_k)(b|y)\sum_z p(z|y,b)\sum_c \pi_k(c|z)\,Q(z,c) + \|Q\|\,o(1)\\
&= (P^{\pi_k\wedge\mu_k}P^{\pi_k})Q(x,a) + \|Q\|\,o(1).
\end{aligned}
$$

Lemma 5. Let $(\pi_{Q_k})$ be a sequence of (deterministic) greedy policies w.r.t. a sequence $(Q_k)$. Let $(\pi_k)$ be a sequence of policies that are $\varepsilon_k$ away from $(\pi_{Q_k})$, in the sense that, for all $x$,
$$\|\pi_k(\cdot|x) - \pi_{Q_k}(x)\|_1 := 1 - \pi_k(\pi_{Q_k}(x)|x) + \sum_{a\neq\pi_{Q_k}(x)}\pi_k(a|x) \leq \varepsilon_k.$$
Let $(\mu_k)$ be a sequence of policies defined by
$$\mu_k(a|x) = \frac{\alpha\,\mu(a|x)}{1-\mu(\pi_{Q_k}(x)|x)}\,\mathbb{I}\{a\neq\pi_{Q_k}(x)\} + (1-\alpha)\,\mathbb{I}\{a = \pi_{Q_k}(x)\}, \qquad (29)$$
for some arbitrary policy $\mu$ and $\alpha\in[0,1]$. Assume $\varepsilon_k \to 0$. Then the transition matrices $P^{\pi_k}$ and $P^{\pi_k\wedge\mu_k}$ asymptotically commute.

Proof. The intuition is that asymptotically $\pi_k$ gets very close to the deterministic policy $\pi_{Q_k}$. In that case, the minimum distribution $(\pi_k\wedge\mu_k)(\cdot|x)$ puts a mass close to $1-\alpha$ on the greedy action $\pi_{Q_k}(x)$ and almost no mass on the other actions; thus $(\pi_k\wedge\mu_k)$ gets very close to $(1-\alpha)\pi_k$, and Lemma 4 applies (with multiplicative constant $1-\alpha$). Indeed, from our assumption that $\pi_k$ is $\varepsilon_k$-away from $\pi_{Q_k}$, we have
$$\pi_k(\pi_{Q_k}(x)|x) \geq 1-\varepsilon_k \quad\text{and}\quad \pi_k(a\neq\pi_{Q_k}(x)|x) \leq \varepsilon_k.$$
We deduce that
$$(\pi_k\wedge\mu_k)(\pi_{Q_k}(x)|x) = \min\big(\pi_k(\pi_{Q_k}(x)|x),\,1-\alpha\big) = 1-\alpha + O(\varepsilon_k) = (1-\alpha)\,\pi_k(\pi_{Q_k}(x)|x) + O(\varepsilon_k),$$
and
$$(\pi_k\wedge\mu_k)(a\neq\pi_{Q_k}(x)|x) = O(\varepsilon_k) = (1-\alpha)\,\pi_k(a|x) + O(\varepsilon_k).$$
Thus Lemma 4 applies (with multiplicative constant $1-\alpha$), and $P^{\pi_k}$ and $P^{\pi_k\wedge\mu_k}$ asymptotically commute.
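Lemma 5 can be illustrated on a small example. The sketch below is a minimal NumPy illustration, assuming a random MDP, an arbitrary random policy $\mu$, and $\alpha = 0.3$ (the sizes, seed, and helper names are hypothetical). For simplicity it holds $Q_k$, and therefore the greedy policy $\pi_{Q_k}$, fixed, builds a $\pi_k$ that is exactly $\varepsilon_k$ away from it in the sense of Lemma 5, builds $\mu_k$ from (29), and measures the commutator of $P^{\pi_k}$ and $P^{\pi_k\wedge\mu_k}$ as $\varepsilon_k$ shrinks.

```python
import numpy as np


def state_action_matrix(p, pol):
    """(P^pol)[(x, a), (y, b)] = p(y | x, a) * pol(b | y); pol may be a sub-policy."""
    S, A, _ = p.shape
    return np.einsum("xay,yb->xayb", p, pol).reshape(S * A, S * A)


def near_greedy(greedy, n_actions, eps):
    """pi_k: mass 1 - eps/2 on the greedy action and eps/2 spread over the others,
    so that ||pi_k(.|x) - pi_{Q_k}(x)||_1 = eps, matching the definition in Lemma 5."""
    n_states = len(greedy)
    pi = np.full((n_states, n_actions), eps / (2 * (n_actions - 1)))
    pi[np.arange(n_states), greedy] = 1.0 - eps / 2
    return pi


def behaviour_mu_k(mu, greedy, alpha):
    """mu_k of Eq. (29): 1 - alpha on the greedy action, the remaining mass alpha
    spread over the other actions in proportion to mu."""
    rows = np.arange(mu.shape[0])
    out = alpha * mu / (1.0 - mu[rows, greedy])[:, None]
    out[rows, greedy] = 1.0 - alpha
    return out


def commutator_norm(p, pi, mu):
    """Max absolute row sum of P^pi P^{pi ^ mu} - P^{pi ^ mu} P^pi."""
    P_pi = state_action_matrix(p, pi)
    P_min = state_action_matrix(p, np.minimum(pi, mu))  # sub-stochastic
    C = P_pi @ P_min - P_min @ P_pi
    return np.abs(C).sum(axis=1).max()


rng = np.random.default_rng(1)
S, A, alpha = 4, 3, 0.3
p = rng.random((S, A, S))
p /= p.sum(axis=2, keepdims=True)                # hypothetical p(y | x, a)
greedy = rng.normal(size=(S, A)).argmax(axis=1)  # pi_{Q_k}, held fixed across k here
mu = rng.random((S, A))
mu /= mu.sum(axis=1, keepdims=True)              # arbitrary policy mu
behaviour = behaviour_mu_k(mu, greedy, alpha)

eps_values = [0.4, 0.1, 0.01, 0.001, 0.0]
norms = [commutator_norm(p, near_greedy(greedy, A, e), behaviour) for e in eps_values]
for e, n in zip(eps_values, norms):
    print(f"eps_k = {e:<6g} commutator norm = {n:.2e}")
```

At $\varepsilon_k = 0$ the policy $\pi_k$ is deterministic and $\pi_k\wedge\mu_k = (1-\alpha)\pi_k$ exactly, so the commutator vanishes up to rounding. In this particular construction (with $\varepsilon_k \leq 2\alpha$), one can check that the commutator norm stays below $2\varepsilon_k$.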
F Experimental Methods

Although our experiments' learning problem closely matches the DQN setting used by Mnih et al. (2015) (i.e. single-thread off-policy learning with a large replay memory), we conducted our trials in the multi-threaded, CPU-based framework of Mnih et al. (2016), obtaining ample result data from affordable CPU resources. Key differences from DQN are as follows. Sixteen threads with private environment instances train simultaneously; each runs inference with, and computes gradients w.r.t., a local copy of the network parameters; gradients then update a "master" parameter set, and local copies are refreshed. Target network parameters are simply shared globally. Each thread has a private replay memory holding 62,500 transitions (1/16th of DQN's total replay capacity). The optimizer is unchanged from Mnih et al. (2016): "Shared RMSprop" with the step size annealed to 0 over $3 \times 10^8$ environment frames (summed over threads). Exploration parameter ($\varepsilon$) behaviour differs slightly: every 50,000 frames, threads switch randomly (with probabilities 0.3, 0.4, and 0.3, respectively) between three schedules (anneal $\varepsilon$ from 1 to 0.5, 0.1, or 0.01 over 250,000 frames), starting each new schedule at the intermediate position where the old one was left.¹

Our experiments comprise 60 Atari 2600 games in ALE (Bellemare et al., 2013), with "life" loss treated as episode termination. The control, minibatched (64 transitions/minibatch) one-step Q-learning as in Mnih et al. (2015), shows performance comparable to DQN in our multi-threaded setup. Retrace, TB, and $Q^*$ runs use minibatches of four 16-step sequences (again 64 transitions/minibatch) and the current exploration policy as the target policy $\pi$. All trials clamp rewards into $[-1, 1]$. In the control, Q-function targets are clamped into $[-1, 1]$ prior to gradient calculation; analogous quantities in the multi-step algorithms are clamped into $[-1, 1]$, then scaled by (i.e., divided by) the sequence length.
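The multi-step runs train on 16-step sequences. As a hedged illustration of how per-step Retrace($\lambda$) targets could be formed from one such sequence, the sketch below computes $Q(x_t,a_t) + \sum_{s\geq t}\gamma^{s-t}\big(\prod_{i=t+1}^{s}c_i\big)\delta_s$ with $\delta_s = r_s + \gamma\,\mathbb{E}_\pi Q(x_{s+1},\cdot) - Q(x_s,a_s)$ and $c_s = \lambda\min(1, \pi(a_s|x_s)/\mu(a_s|x_s))$, cutting the trace at the end of the sequence. The helper name, its argument layout, and the defaults $\gamma = 0.99$, $\lambda = 1$ are assumptions; the sketch clips rewards as described above but leaves out the clamping and length-scaling of targets, the network, and the optimizer, so it is not the authors' implementation.

```python
import numpy as np


def retrace_targets(q_taken, exp_q_next, rewards, pi_taken, mu_taken, gamma=0.99, lam=1.0):
    """Truncated Retrace(lambda) targets for one sequence of n transitions (hypothetical helper).

    q_taken[t]    : Q(x_t, a_t)
    exp_q_next[t] : E_pi Q(x_{t+1}, .), used to bootstrap at every step
    rewards[t]    : r_t, clipped to [-1, 1] as in the experiments
    pi_taken[t]   : pi(a_t | x_t);  mu_taken[t] : mu(a_t | x_t)
    """
    rewards = np.clip(rewards, -1.0, 1.0)
    c = lam * np.minimum(1.0, pi_taken / mu_taken)   # truncated importance weights c_t
    delta = rewards + gamma * exp_q_next - q_taken   # expected TD errors delta_t
    n = len(delta)
    targets = np.empty(n)
    acc = 0.0  # on entry to step t: the correction term already computed for step t + 1
    for t in reversed(range(n)):
        next_c = c[t + 1] if t + 1 < n else 0.0      # the trace is cut at the end of the sequence
        acc = delta[t] + gamma * next_c * acc
        targets[t] = q_taken[t] + acc
    return targets


# Usage on a random 16-step sequence (all inputs are placeholders).
rng = np.random.default_rng(0)
n = 16
targets = retrace_targets(
    q_taken=rng.normal(size=n),
    exp_q_next=rng.normal(size=n),
    rewards=rng.normal(size=n),
    pi_taken=rng.uniform(0.05, 1.0, size=n),
    mu_taken=rng.uniform(0.05, 1.0, size=n),
)
print(targets.shape)
```

With $\lambda = 0$ every $c_s$ vanishes and each target reduces to the one-step target $r_t + \gamma\,\mathbb{E}_\pi Q(x_{t+1},\cdot)$; with $\pi = \mu$ and $\lambda = 1$ the traces are never cut inside the sequence.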
Coarse, then fine logarithmic parameter sweeps on the games Asterix, Breakout, Enduro, Freeway, H.E.R.O., Pong, Q*bert, and Seaquest yielded step sizes of 0.0000439 and 0.0000912, and RMSprop regularization parameters of 0.001 and 0.0000368, for the control and multi-step algorithms, respectively. Reported performance averages over four trials with different random seeds for each experimental configuration.

F.1 Algorithmic Performance as a Function of $\lambda$

We compared our algorithms for different values of $\lambda$, using the DQN score as a baseline. As before, for each $\lambda$ we compute the inter-algorithm scores on a per-game basis. We then averaged the inter-algorithm scores across games to produce Table 2 (see also Figure 2 for a visual depiction). We first remark that Retrace achieves a higher score than TB for every $\lambda > 0$, demonstrating that it is efficient in the sense of Section 2. Next, we note that $Q^*$ performs best for small values of $\lambda$, but begins to fail for values above $\lambda = 0.5$. In this sense, it is also not safe. This is particularly problematic, as the safe threshold of $\lambda$ is likely to be problem-dependent. Finally, there is no setting of $\lambda$ for which Retrace performs particularly poorly; for high values of $\lambda$, it achieves close to the top score in most games.

For Retrace($\lambda$) it makes sense to use the value $\lambda = 1$ (at least in deterministic environments), as the trace cutting required in off-policy learning is taken care of by the $\min(1, \pi/\mu)$ coefficient. By contrast, $Q^*(\lambda)$ relies only on a value $\lambda < 1$ to cut traces for off-policy data.

¹ We evaluated a DQN-style single schedule for $\varepsilon$, but our multi-schedule method, similar to the one used by Mnih et al., yielded improved performance in our multi-threaded setting.
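The inter-algorithm normalization, the score distribution $f(x)$, and the per-algorithm averages used for Table 2 can be sketched in a few lines. This is a hedged illustration, not the evaluation code used for the paper: it takes the final $\lambda = 1$ scores of only four games copied from Table 3 (Alien, Boxing, Pong, Seaquest), so the denominator of $f$ is 4 rather than 60 and the averages will not reproduce Table 2, which averages over all games and compares separate runs per $\lambda$. The variable and function names are hypothetical.

```python
import numpy as np

ALGOS = ["TB", "Retrace", "DQN", "Q*"]
GAMES = ["Alien", "Boxing", "Pong", "Seaquest"]
# Final scores (lambda = 1) for four games, copied from Table 3; columns follow ALGOS.
RAW = np.array([
    [2508.62, 3109.21, 2088.81, 154.35],
    [91.00, 93.54, 95.17, -29.25],
    [20.25, 20.20, 19.31, -20.99],
    [3557.09, 2914.00, 3869.30, 175.29],
])


def inter_algorithm_scores(raw):
    """Per game (row), map the worst score to 0 and the best score to 1."""
    lo = raw.min(axis=1, keepdims=True)
    hi = raw.max(axis=1, keepdims=True)
    return (raw - lo) / (hi - lo)  # assumes the algorithms do not all tie on a game


def score_distribution(z_col, x):
    """f(x) = |{g : z_{g,a} >= x}| / (number of games), for one algorithm's column."""
    return float(np.mean(z_col >= x))


z = inter_algorithm_scores(RAW)
for game, row in zip(GAMES, z):
    print(f"{game:9s}", np.round(row, 3))

mean_scores = z.mean(axis=0)  # average over games, the statistic reported in Table 2
for name, col, avg in zip(ALGOS, z.T, mean_scores):
    curve = [score_distribution(col, x) for x in (0.0, 0.5, 0.9, 1.0)]
    print(f"{name:8s} mean={avg:.3f} f(0, 0.5, 0.9, 1) = {curve}")
```

Because the normalization is recomputed within each set of compared algorithms, the same raw DQN score maps to different inter-algorithm scores for different $\lambda$, which is why the averages are not comparable across rows of Table 2.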
| $\lambda$ | DQN | TB | Retrace | $Q^*$ |
|---|---|---|---|---|
| 0.0 | 0.5071 | 0.5512 | 0.4288 | 0.4487 |
| 0.1 | 0.4752 | 0.2798 | 0.5046 | 0.651 |
| 0.3 | 0.3634 | 0.268 | 0.5159 | 0.7734 |
| 0.5 | 0.2409 | 0.4105 | 0.5098 | 0.8419 |
| 0.7 | 0.3712 | 0.4453 | 0.6762 | 0.5551 |
| 0.9 | 0.7256 | 0.7753 | 0.9034 | 0.02926 |
| 1.0 | 0.6839 | 0.8158 | 0.8698 | 0.04317 |

Table 2: Average inter-algorithm scores for each value of $\lambda$. The DQN scores are fixed across different $\lambda$, but the corresponding inter-algorithm scores vary depending on the worst and best performer within each $\lambda$.

[Figure 2: average normalized score versus $\lambda$ for Retrace, Tree-backup, Q-Learning, and $Q^*$.]

Figure 2: Average inter-algorithm scores for each value of $\lambda$. The DQN scores are fixed across different $\lambda$, but the corresponding inter-algorithm scores vary depending on the worst and best performer within each $\lambda$. Note that average scores are not directly comparable across different values of $\lambda$.

| Game | Tree-backup($\lambda$) | Retrace($\lambda$) | DQN | $Q^*(\lambda)$ |
|---|---|---|---|---|
| Alien | 2508.62 | 3109.21 | 2088.81 | 154.35 |
| Amidar | 1221.00 | 1247.84 | 772.30 | 16.04 |
| Assault | 7248.08 | 8214.76 | 1647.25 | 260.95 |
| Asterix | 29294.76 | 28116.39 | 10675.57 | 285.44 |
| Asteroids | 1499.82 | 1538.25 | 1403.19 | 308.70 |
| Atlantis | 2115949.75 | 2110401.90 | 1712671.88 | 3667.18 |
| Bank Heist | 808.31 | 797.36 | 549.35 | 1.70 |
| Battle Zone | 22197.96 | 23544.08 | 21700.01 | 3278.93 |
| Beam Rider | 15931.60 | 17281.24 | 8053.26 | 621.40 |
| Berzerk | 967.29 | 972.67 | 627.53 | 247.80 |
| Bowling | 40.96 | 47.92 | 37.82 | 15.16 |
| Boxing | 91.00 | 93.54 | 95.17 | -29.25 |
| Breakout | 288.71 | 298.75 | 332.67 | 1.21 |
| Carnival | 4691.73 | 4633.77 | 4637.86 | 353.10 |
| Centipede | 1199.46 | 1715.95 | 1037.95 | 3783.60 |
| Chopper Command | 6193.28 | 6358.81 | 5007.32 | 534.83 |
| Crazy Climber | 115345.95 | 114991.29 | 111918.64 | 1136.21 |
| Defender | 32411.77 | 33146.83 | 13349.26 | 1838.76 |
| Demon Attack | 68148.22 | 79954.88 | 8585.17 | 310.45 |
| Double Dunk | -1.32 | -6.78 | -5.74 | -23.63 |
| Elevator Action | 1544.91 | 2396.05 | 14607.10 | 930.38 |
| Enduro | 1115.00 | 1216.47 | 938.36 | 12.54 |
| Fishing Derby | 22.22 | 27.69 | 15.14 | -98.58 |
| Freeway | 32.13 | 32.13 | 31.07 | 9.86 |
| Frostbite | 960.30 | 935.42 | 1124.60 | 45.07 |
| Gopher | 13666.33 | 14110.94 | 11542.46 | 50.59 |
| Gravitar | 30.18 | 29.04 | 271.40 | 13.14 |
| H.E.R.O. | 25048.33 | 21989.46 | 17626.90 | 12.48 |
| Ice Hockey | -3.84 | -5.08 | -4.36 | -15.68 |
| James Bond | 560.88 | 641.51 | 705.55 | 21.71 |
| Kangaroo | 11755.01 | 11896.25 | 4101.92 | 178.23 |
| Krull | 9509.83 | 9485.39 | 7728.66 | 429.26 |
| Kung-Fu Master | 25338.05 | 26695.19 | 17751.73 | 39.99 |
| Montezuma's Revenge | 0.79 | 0.18 | 0.10 | 0.00 |
| Ms. Pac-Man | 2461.10 | 3208.03 | 2654.97 | 298.58 |
| Name This Game | 11358.81 | 11160.15 | 10098.85 | 1311.73 |
| Phoenix | 13834.27 | 15637.88 | 9249.38 | 107.41 |
| Pitfall | -37.74 | -43.85 | -392.63 | -121.99 |
| Pooyan | 5283.69 | 5661.92 | 3301.69 | 98.65 |
| Pong | 20.25 | 20.20 | 19.31 | -20.99 |
| Private Eye | 73.44 | 87.36 | 44.73 | -147.49 |
| Q*bert | 13617.24 | 13700.25 | 12412.85 | 114.84 |
| River Raid | 14457.29 | 15365.61 | 10329.58 | 922.13 |
| Road Runner | 34396.52 | 32843.09 | 50523.75 | 418.62 |
| Robotank | 36.07 | 41.18 | 49.20 | 5.77 |
| Seaquest | 3557.09 | 2914.00 | 3869.30 | 175.29 |
| Skiing | -25055.94 | -25235.75 | -25254.43 | -24179.71 |
| Solaris | 1178.05 | 1135.51 | 1258.02 | 674.58 |
| Space Invaders | 6096.21 | 5623.34 | 2115.80 | 227.39 |
| Star Gunner | 66369.18 | 74016.10 | 42179.52 | 266.15 |
| Surround | -5.48 | -6.04 | -8.17 | -9.98 |
| Tennis | -1.73 | -0.30 | 13.67 | -7.37 |
| Time Pilot | 8266.79 | 8719.19 | 8228.89 | 657.59 |
| Tutankham | 164.54 | 199.25 | 167.22 | 2.68 |
| Up and Down | 14976.51 | 18747.40 | 9404.95 | 530.59 |
| Venture | 10.75 | 22.84 | 30.93 | 0.09 |
| Video Pinball | 103486.09 | 228283.79 | 76691.75 | 6837.86 |
| Wizard of Wor | 7402.99 | 8048.72 | 612.52 | 189.43 |
| Yar's Revenge | 14581.65 | 26860.57 | 15484.03 | 1913.19 |
| Zaxxon | 12529.22 | 15383.11 | 8422.49 | 0.40 |
| Times Best | 16 | 30 | 12 | 2 |

Table 3: Final scores achieved by the different $\lambda$-return variants ($\lambda = 1$). Highlights indicate high scores.
