Robust Hypothesis Testing with $\alpha$-Divergence

A robust minimax test for two composite hypotheses, which are determined by the neighborhoods of two nominal distributions with respect to a set of distances called $\alpha$-divergence distances, is proposed. Sion's minimax theorem is adopted to ch…

Authors: Gökhan Gül, Abdelhak M. Zoubir

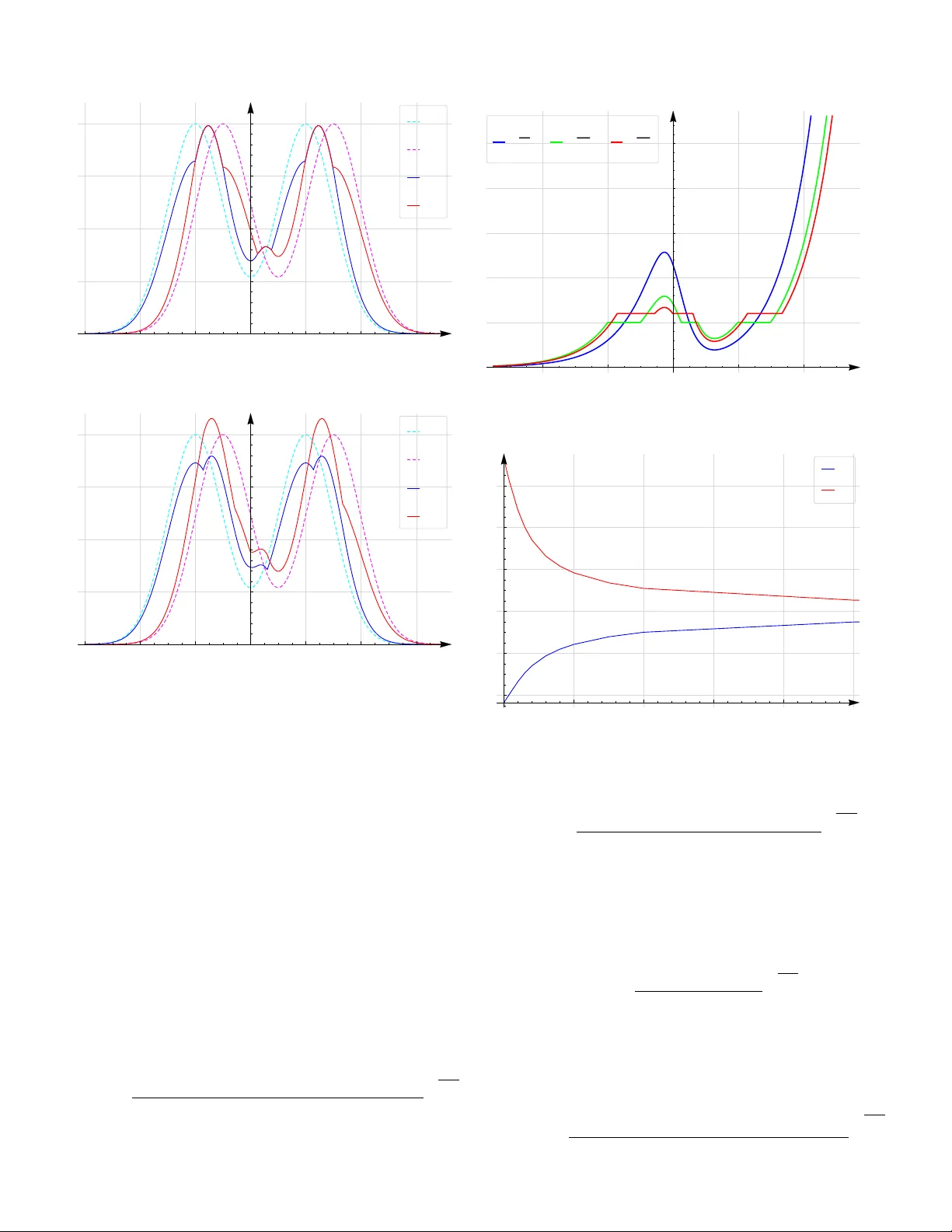

Gökhan Gül, Student Member, IEEE, Abdelhak M. Zoubir, Fellow, IEEE

Abstract: A robust minimax test for two composite hypotheses, which are determined by the neighborhoods of two nominal distributions with respect to a set of distances called $\alpha$-divergence distances, is proposed. Sion's minimax theorem is adopted to characterize the saddle value condition. Least favorable distributions, the robust decision rule and the robust likelihood ratio test are derived. If the nominal probability distributions satisfy a symmetry condition, the design procedure is shown to simplify considerably. The parameters controlling the degree of robustness are bounded from above, and the bounds are shown to result from the solution of a set of equations. The simulations performed evaluate and exemplify the theoretical derivations.

Index Terms: Detection, hypothesis testing, robustness, least favorable distributions, minimax optimization, likelihood ratio test.

I. INTRODUCTION

Decision theory has been an active field of research, benefiting from contributions from several disciplines such as economics, engineering, mathematics and statistics. A decision maker (or a detector) chooses a course of action from several possibilities. A detector is said to be optimal, or to give the best decision for a particular problem, if its decision rule minimizes (or maximizes) a well defined cost function, e.g., the error probability (or the probability of detection) [1]. Decision theory is a truly interdisciplinary subject of research and finds applications in many areas of engineering, e.g., radar, sonar, seismology, communications and biomedicine. For some applications, such as image and speech classification or pattern recognition, interest is in a statistical test that performs well on average.
However, for safety oriented applications such as seismology or forest fire detection, as well as for biomedical applications such as early cancer detection from magnetic resonance or X-ray images, interest is in maximizing the worst case performance, because the consequences of an incorrect decision can be severe [1].

In general, any practical application of decision theory can be formulated as a hypothesis testing problem. For binary hypothesis testing, it is assumed that under each hypothesis $H_i$, the received data $y = (y_1, \ldots, y_n) \in \Omega$ follows a particular distribution $F_i$ corresponding to a density function $f_i$, $i \in \{0,1\}$. A decision rule $\delta$ partitions the whole observation space $\Omega$ into non-overlapping regions corresponding to each hypothesis. The optimality of the decision rule $\delta$ depends on the correctness of the assumption that the data $y$ follows $F_i$. However, in many practical applications $F_0$ and/or $F_1$ are only partially known or are affected by secondary physical effects that go unmodeled [2].

G. Gül and A. M. Zoubir are with the Signal Processing Group, Institute of Telecommunications, Technische Universität Darmstadt, 64283, Darmstadt, Germany (e-mail: ggul@spg.tu-darmstadt.de; zoubir@spg.tu-darmstadt.de). Manuscript received April 19, 2005; revised January 11, 2007.

Imprecise knowledge of $F_0$ or $F_1$ leads, in general, to performance degradation. A useful approach is to extend the known model by accepting a set of distributions $\mathcal{F}_i$ under each hypothesis $H_i$, populated by probability distributions $G_i$ that lie in the neighborhood of the nominal distribution $F_i$ with respect to some distance $D$ [1]. Under some mild conditions on $D$, it can be shown that the best (error minimizing) decision rule $\hat{\delta}$ for the worst case (error maximizing) pair of probability distributions $(\hat{G}_0, \hat{G}_1) \in \mathcal{F}_0 \times \mathcal{F}_1$ admits a saddle value.
Therefore, such a test design guarantees a certain level of detection at all times. This type of optimization is known as minimax optimization, and the corresponding worst case distributions $(\hat{G}_0, \hat{G}_1)$ are called least favorable distributions (LFDs) [3].

The literature in this field is unfortunately not rich. One of the earliest and probably most important works goes back to Huber, who proposed a robust version of the probability ratio test for the $\varepsilon$-contamination and total variation classes of distributions [4]. He proved the existence of least favorable distributions and showed that the corresponding robust test was a censored version of the nominal likelihood ratio for both uncertainty classes. In a later work, Huber and Strassen extended the $\varepsilon$-contamination neighborhood to a larger class, which includes five different distances as special cases [5]. It was also shown that the robust test resulting from this new neighborhood was still a censored likelihood ratio test. Although it was found to be less engineering oriented by Levy [1], the largest class for which similar conclusions have been drawn is that of the 2-alternating capacities proposed by Huber and Strassen [6].

Another approach to robust hypothesis testing was proposed by Dabak and Johnson, based on the observation that the choice of measures defining the contamination neighborhoods was arbitrary [7]. They chose the relative entropy (KL-divergence) because it is a natural distance between probability measures and therefore a natural way to define the contamination neighborhoods. Somewhat surprisingly, the robust test which minimizes the KL-divergence between the LFDs obtained from the closed balls with respect to the relative entropy distance was not a clipped likelihood ratio test, but a nominal likelihood ratio test with a modified threshold.
It was noted that their approach was not robust for all sample sizes, but only when Kullback's theorem is valid, that is, for a large number of observations [7]. The difference between the robust tests for the $\varepsilon$-contamination and relative entropy neighborhoods lies in the fact that all densities in the class of distributions based on the relative entropy are absolutely continuous with respect to the nominal distributions, which is not the case for the $\varepsilon$-contamination class.

A question left open by Dabak and Johnson was the design of a robust test for a finite number of samples. Levy answered this question under two assumptions: a monotone increasing nominal likelihood ratio and symmetric nominal density functions, $f_0(y) = f_1(-y)$, where $y \in \mathbb{R}$. He implied that a robust test based on the relative entropy would be more suitable for modeling errors than for outliers, due to the smoothness property (absolute continuity). He also showed that the resulting robust test was equivalent neither to Huber's nor to Dabak's robust test; it was a completely different test [2].

Although the KL-divergence is a smooth and natural distance between probability measures, it is not clear why it should be preferred for building uncertainty sets, especially since there are many other divergences which are also smooth and have nice theoretical properties, e.g., the symmetry property, which the KL-divergence does not have. Besides, theoretically nice properties do not always lead to preferable engineering applications, see for example [8, p.7]. In this respect, the KL-divergence can be replaced by the $\alpha$-divergence, because the $\alpha$-divergence includes uncountably many distances as special cases, e.g., the $\chi^2$ distance for $\alpha = 2$ [9], reduces to the KL-divergence as $\alpha \to 1$, and shares similar theoretical properties with the KL-divergence, such as smoothness, convexity and satisfiability of the (generalized) Pythagorean inequality [10].
Moreover, the flexibility provided by the choice of $\alpha$ results in performance improvements in various signal processing applications and implies the sub-optimality of the KL-divergence. For example, in the design of distributed detection networks with power constraints, the $\alpha$-divergence is considered as the distance between the probability measures, and error exponents of both kinds are maximized over all $\alpha \in (0,1)$ [11]. In non-negative matrix factorization [12], and in indexing and retrieval [13], the optimal value of $\alpha$ (with respect to some objective function) is found to be $1/2$, corresponding to the squared Hellinger distance. In medical applications, e.g., in medical image segmentation [14], restoration [15] and registration [16], the $\alpha$-divergence is considered and the optimal value of $\alpha$ is found to be a non-standard value, i.e., a value which does not correspond to any known distance.

There are also theoretical works which take advantage of the $\alpha$-divergence in the design of statistical tests. It is reported for parametric models [17], [18] as well as for non-parametric models [19] that the use of the $\alpha$-divergence as the distance between probability measures, again with some non-standard values of $\alpha$, e.g., $\alpha = 1.6$ in [18] and $\alpha = 1.3$ or $\alpha = 1.5$ in [19], leads to promising results. However, none of these works has the property of minimax robustness. Furthermore, in none of them is it possible to adjust the tradeoff between robustness and detection performance. Additionally, the parametric models rely on the possibly invalid assumption that the actual probability distributions can be represented by a parametric model. This motivates the work in this paper: a minimax robust design of hypothesis testing with the $\alpha$-divergence distance, where the robustness is adjustable with respect to the detection performance through the choice of two robustness parameters, $\varepsilon_0$ and $\varepsilon_1$.
The related literature can be summarized as follows. In [3], the symmetry constraint that was imposed in [2] was removed, considering the squared Hellinger distance. In [20], the number of non-linear equations that need to be solved to design the robust test was reduced, and a formula from which the maximum robustness parameters could be obtained was derived. In [21], robust approaches were extended to distributed detection problems where communication from the sensors to the fusion center is constrained. In a recent work [22], based on the KL-divergence, the monotone increasing likelihood ratio constraint was removed.

In this paper, a minimax robust test for two composite hypotheses, which are formed by the neighborhoods of two nominal distributions with respect to the $\alpha$-divergence, is designed. It is shown that for any $\alpha$, the corresponding robust test is the same and unique. There is no constraint on the choice of nominal distributions. Therefore, our design generalizes [2]. Since the $\alpha$-divergence includes the KL-divergence and the squared Hellinger distance as special cases, cf. [9], our work also generalizes the works in [3], [20] and [22]. The advantage of considering the $\alpha$-divergence for modeling errors is that it allows the designer to choose a single parameter that accounts for the distance, without carrying out tedious derivations for the design of a robust test. Additionally, the a priori probabilities in our work are not required to be equal, which was assumed in all previous works on model mismatch. An example is cognitive radio, where the primary user may be idle for most of the time, i.e., $P(H_0) \gg P(H_1)$ [23]. Last but not least, the work in this paper allows vector valued observations.

The organization of this paper is as follows.
In the following section, some background on the minimax optimization problem is given and the characterization of the saddle value condition is detailed, before the problem definition is stated. Section III is divided into three parts. In the first part, the minimax optimization problem is solved, and the least favorable distributions, the robust decision rule and the robust likelihood ratio, which are later shown to be determined by solving two non-linear equations, are obtained. The second part shows how the problem is simplified if the nominal probability density functions satisfy the symmetry condition. In the third part, the maximum values of the robustness parameters, above which a minimax robust test cannot be designed, are derived. In Section IV, simulation results that illustrate the validity of the theoretical derivations are detailed. Finally, the paper is concluded in Section V.

II. PROBLEM FORMULATION

A. Background

Let $(\Omega, \mathcal{A})$ be a measurable space with the probability measures $F_0$, $F_1$, $G_0$, $G_1$ and $G$ on it, having the density functions $f_0$, $f_1$, $g_0$, $g_1$ and $g$, respectively, with respect to some dominating measure $\mu$, i.e., $F_i, G_i, G \ll \mu$, $i \in \{0,1\}$. It is assumed that the nominal measures are distinct, i.e., the condition $F_0 = F_1$ $\mu$-almost everywhere is not true. Consider the binary composite hypothesis testing problem

$$
\begin{aligned}
H_0^c &: G = G_0 \\
H_1^c &: G = G_1
\end{aligned}
\tag{1}
$$

where the measures $G_i$ are defined whenever their corresponding density functions $g_i$ belong to the closed ball

$$
\mathcal{G}_i = \{ g_i : D(g_i, f_i) \le \varepsilon_i \}, \quad i \in \{0,1\}, \tag{2}
$$

where $D$ is a distance between the density functions. In other words, every density function $g_i$ which lies within distance $\varepsilon_i$ of the nominal density $f_i$ is a member of the uncertainty class $\mathcal{G}_i$ and defines $G_i$, $i \in \{0,1\}$.
We choose $D$ to be the $\alpha$-divergence, i.e.,

$$
D(g, f; \alpha) := \frac{1}{\alpha(1-\alpha)} \left( 1 - \int_\Omega g^{\alpha} f^{1-\alpha} \,\mathrm{d}\mu \right), \quad \alpha \in \mathbb{R} \setminus \{0,1\}, \tag{3}
$$

since it is a convex distance for every $\alpha$ and it includes various distances as special cases [9, p.1536].¹

Given that $y \in \Omega$ has been observed, a randomized decision rule $\delta : \Omega \mapsto [0,1]$ maps each $y$ to a real number in the unit interval. Let $\Delta$ be the set of all decision rules (functions). Then, for any possible choice of $\delta \in \Delta$, the following error types are well defined: first, the false alarm probability

$$
P_F(\delta, f_0) = \int_\Omega \delta f_0 \,\mathrm{d}\mu, \tag{4}
$$

second, the miss detection probability

$$
P_M(\delta, f_1) = \int_\Omega (1-\delta) f_1 \,\mathrm{d}\mu, \tag{5}
$$

and third, the overall error probability

$$
P_E(\delta, f_0, f_1) = P(H_0) P_F(\delta, f_0) + P(H_1) P_M(\delta, f_1). \tag{6}
$$

It is well known that $P_E$ is minimized if the decision rule is chosen to be the likelihood ratio test

$$
\delta(y) =
\begin{cases}
0, & l(y) < \rho \\
\kappa(y), & l(y) = \rho \\
1, & l(y) > \rho
\end{cases}
\tag{7}
$$

where $\rho = P(H_0)/P(H_1)$ is the threshold, $l(y) := (f_1/f_0)(y)$ is the likelihood ratio at observation $y$ and $\kappa : \Omega \to [0,1]$.

B. Saddle value specification

In this section, the existence of a saddle value, which follows from the functional topology of the minimax optimization problem, is shown. The minimax theorem, which is attributed to John von Neumann, gives conditions under which the existence of a saddle value is guaranteed [24]. However, it is applicable only if both sets over which the maximization and minimization are performed are compact. Note that the closed balls ($\mathcal{G}_0$ and $\mathcal{G}_1$) with respect to the $\alpha$-divergence distance are not compact; therefore, von Neumann's minimax theorem is not applicable in our case.
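As a small numerical illustration of the quantities defined in (3)-(7), the following sketch evaluates them on a finite sample space, where the integrals become sums. The function names, the four-point probability mass functions and the tie-breaking value `kappa` are assumptions made only for this example and do not come from the paper.

```python
import numpy as np

def alpha_divergence(g, f, alpha):
    """Discrete analogue of (3): the integral over Omega becomes a sum."""
    if alpha in (0.0, 1.0):
        raise ValueError("alpha must differ from 0 and 1")
    g, f = np.asarray(g, dtype=float), np.asarray(f, dtype=float)
    return (1.0 - np.sum(g**alpha * f**(1.0 - alpha))) / (alpha * (1.0 - alpha))

def likelihood_ratio_test(f0, f1, p0=0.5, kappa=0.5):
    """Decision rule (7) with rho = P(H0)/P(H1); kappa is used where l == rho."""
    l = np.asarray(f1, dtype=float) / np.asarray(f0, dtype=float)
    rho = p0 / (1.0 - p0)
    return np.where(l > rho, 1.0, np.where(l < rho, 0.0, kappa))

def error_probabilities(delta, f0, f1, p0=0.5):
    """False alarm (4), miss detection (5) and overall error probability (6)."""
    delta = np.asarray(delta, dtype=float)
    p_f = float(np.sum(delta * f0))
    p_m = float(np.sum((1.0 - delta) * f1))
    return p_f, p_m, p0 * p_f + (1.0 - p0) * p_m

# Hypothetical nominal pmfs on a four-point sample space (illustration only).
f0 = np.array([0.4, 0.3, 0.2, 0.1])
f1 = f0[::-1]
delta = likelihood_ratio_test(f0, f1)
p_f, p_m, p_e = error_probabilities(delta, f0, f1)
```

For $\alpha = 1/2$ the function returns four times one minus the Bhattacharyya sum, and for $\alpha = 2$ it returns half of the $\chi^2$ distance, in line with the special cases mentioned in the text.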
Here, we adopt Sion's minimax theorem [25],

$$
\sup_{(g_0,g_1) \in \mathcal{G}_0 \times \mathcal{G}_1} \min_{\delta \in \Delta} P_E(\delta, g_0, g_1) = \min_{\delta \in \Delta} \sup_{(g_0,g_1) \in \mathcal{G}_0 \times \mathcal{G}_1} P_E(\delta, g_0, g_1), \tag{8}
$$

which removes the compactness constraint on the set over which the maximization is performed.

¹ Notice that the $\alpha$-divergence is preferred over Rényi's $\alpha$-divergence because Rényi's $\alpha$-divergence is convex only for $\alpha \in [0,1]$ [9, p.1540].

In order for (8) to be valid, the following conditions must hold:
• The objective function $P_E(\delta, \cdot)$ is real valued, upper semi-continuous and quasi-concave on $\mathcal{G}_0 \times \mathcal{G}_1$ for all $\delta \in \Delta$
• The objective function $P_E(\cdot, (g_0, g_1))$ is lower semi-continuous and quasi-convex on $\Delta$ for all $(g_0, g_1) \in \mathcal{G}_0 \times \mathcal{G}_1$
• $\Delta$ is a compact convex subset of a linear topological space
• $\mathcal{G}_0 \times \mathcal{G}_1$ is a convex subset of a linear topological space

The first two conditions hold because $P_E$ is a real valued continuous function which is linear in each of its three arguments $\delta$, $g_0$ and $g_1$, and is therefore both convex and concave. Note that any continuous function is both upper and lower semi-continuous, and any convex function is also quasi-convex. The last condition is also true because all convex combinations of $g_i^0 \in \mathcal{G}_i$ and $g_i^1 \in \mathcal{G}_i$ are in $\mathcal{G}_i$, since $D$ is a convex distance, and the Cartesian product of convex sets is again a convex set. Similarly, $\Delta$ is a convex set because for any $t \in [0,1]$ and for all $\delta_0, \delta_1 \in \Delta$, $t\delta_0 + (1-t)\delta_1 \in \Delta$. Lastly, $\Delta$, which is equivalent to $[0,1]^\Omega$ in an infinite dimensional vector space, is the product of uncountably many compact sets $[0,1]$. According to Tychonoff's theorem, $\Delta$ is compact with respect to the product topology [26], [27]. Note that any finitely supported discretization of $g_0$ and $g_1$ makes both $\mathcal{G}_0 \times \mathcal{G}_1$ and $\Delta$ compact with respect to the standard topology. This is a straightforward result of the Heine-Borel theorem [28, Theorem 2.41].
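The convexity of $D$ in its first argument, which is what makes the balls $\mathcal{G}_i$ convex, can be probed numerically on a finite sample space. The sketch below is only a sanity check under assumed inputs (random five-point probability mass functions, three arbitrary values of $\alpha$); it is not a proof, and the helper name `convexity_gap` is hypothetical.

```python
import numpy as np

def alpha_divergence(g, f, alpha):
    """Discrete analogue of (3)."""
    return (1.0 - np.sum(g**alpha * f**(1.0 - alpha))) / (alpha * (1.0 - alpha))

def convexity_gap(g_a, g_b, f, alpha, t):
    """t*D(g_a,f) + (1-t)*D(g_b,f) - D(t*g_a + (1-t)*g_b, f).

    The gap is nonnegative whenever D(., f) is convex."""
    mix = t * g_a + (1.0 - t) * g_b
    return (t * alpha_divergence(g_a, f, alpha)
            + (1.0 - t) * alpha_divergence(g_b, f, alpha)
            - alpha_divergence(mix, f, alpha))

rng = np.random.default_rng(0)
gaps = []
for alpha in (-1.0, 0.5, 2.0):
    for _ in range(200):
        # Three random pmfs with entries kept away from zero (assumed setup).
        f, g_a, g_b = rng.dirichlet(np.full(5, 5.0), size=3)
        gaps.append(convexity_gap(g_a, g_b, f, alpha, rng.uniform()))
min_gap = min(gaps)  # expected to be nonnegative up to rounding
```

A nonnegative gap for every draw means that a mixture of two densities is never farther from $f$ than the weighted average of their distances, so if both densities lie in the ball (2), so does the mixture.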
Accordingly, based on Sion's minimax theorem, there exists a saddle value for the objective function $P_E$, i.e.,

$$
P_E(\delta, \hat{g}_0, \hat{g}_1) \ge P_E(\hat{\delta}, \hat{g}_0, \hat{g}_1) \ge P_E(\hat{\delta}, g_0, g_1). \tag{9}
$$

Since $P_E$ depends on $g_0$ and $g_1$ through separate terms, we also have

$$
\begin{aligned}
P_F(\hat{\delta}, g_0) &\le P_F(\hat{\delta}, \hat{g}_0), \\
P_M(\hat{\delta}, g_1) &\le P_M(\hat{\delta}, \hat{g}_1).
\end{aligned}
\tag{10}
$$

C. Problem definition

Based on (10), the minimax optimization problem (8) can be solved using the Karush-Kuhn-Tucker (KKT) multipliers. Hence, the problem formulation can be restated as

$$
\begin{aligned}
\text{Maximization:}\quad & \hat{g}_0 = \arg\sup_{g_0 \in \mathcal{G}_0} P_F(\delta, g_0) \quad \text{s.t. } g_0 > 0,\ \Upsilon(g_0) = \int_{\mathbb{R}} g_0 \,\mathrm{d}\mu = 1 \\
& \hat{g}_1 = \arg\sup_{g_1 \in \mathcal{G}_1} P_M(\delta, g_1) \quad \text{s.t. } g_1 > 0,\ \Upsilon(g_1) = \int_{\mathbb{R}} g_1 \,\mathrm{d}\mu = 1 \\
\text{Minimization:}\quad & \hat{\delta} = \arg\min_{\delta \in \Delta} P_E(\delta, \hat{g}_0, \hat{g}_1).
\end{aligned}
\tag{11}
$$

In (11), there are two separate maximization problems, which are coupled with the minimization problem through the decision rule $\delta$.²

III. ROBUST DETECTION WITH $\alpha$-DIVERGENCE

The following theorem provides a solution to (11), which is composed of the least favorable densities $\hat{g}_0$ and $\hat{g}_1$, the robust decision rule $\hat{\delta}$ and the robust likelihood ratio function $\hat{l} = \hat{g}_1/\hat{g}_0$ in parametric forms, as well as two non-linear equations from which the parameters can be obtained. Before the statement of the theorem, let $l_l$ and $l_u$ be two real numbers with $0 < l_l \le 1 \le l_u < \infty$.
Furthermore, let

$$
k(l_l, l_u) = \frac{\int_{I_1} (l - l_l) f_0 \,\mathrm{d}\mu}{\int_{I_3} (l_u - l) f_0 \,\mathrm{d}\mu}, \tag{12}
$$

$$
z(l_l, l_u; \alpha, \rho) = \int_{I_1} f_1 \,\mathrm{d}\mu + k(l_l, l_u) \int_{I_3} f_1 \,\mathrm{d}\mu + \int_{I_2} \left( \frac{k(l_l, l_u)^{\alpha-1} \left( l_l^{\alpha-1} - l_u^{\alpha-1} \right)}{l_l^{\alpha-1} - (k(l_l, l_u) l_u)^{\alpha-1} + \left( k(l_l, l_u)^{\alpha-1} - 1 \right) (l/\rho)^{\alpha-1}} \right)^{\frac{1}{\alpha-1}} f_1 \,\mathrm{d}\mu, \tag{13}
$$

where

$$
\begin{aligned}
I_1 &:= \{ y : l(y) < \rho l_l \} \equiv \{ y : \hat{l}(y) < \rho \} \\
I_2 &:= \{ y : \rho l_l \le l(y) \le \rho l_u \} \equiv \{ y : \hat{l}(y) = \rho \} \\
I_3 &:= \{ y : l(y) > \rho l_u \} \equiv \{ y : \hat{l}(y) > \rho \}
\end{aligned}
\tag{14}
$$

and

$$
\Phi_1(l, l_l, l_u; \alpha, \rho) = \frac{1}{z(l_l, l_u; \alpha, \rho)} \left( \frac{k(l_l, l_u)^{\alpha-1} \left( l_l^{\alpha-1} - l_u^{\alpha-1} \right)}{l_l^{\alpha-1} - (k(l_l, l_u) l_u)^{\alpha-1} + \left( k(l_l, l_u)^{\alpha-1} - 1 \right) (l/\rho)^{\alpha-1}} \right)^{\frac{1}{\alpha-1}}
$$

with $\Phi_0 = \Phi_1 l \rho^{-1}$.

Theorem III.1. The least favorable densities

$$
\hat{g}_0 =
\begin{cases}
\dfrac{l_l}{z(l_l, l_u; \alpha, \rho)} f_0, & l < \rho l_l \\
\Phi_0(l, l_l, l_u; \alpha, \rho) f_0, & \rho l_l \le l \le \rho l_u \\
\dfrac{k(l_l, l_u)\, l_u}{z(l_l, l_u; \alpha, \rho)} f_0, & l > \rho l_u
\end{cases}
\tag{15}
$$

$$
\hat{g}_1 =
\begin{cases}
\dfrac{1}{z(l_l, l_u; \alpha, \rho)} f_1, & l < \rho l_l \\
\Phi_1(l, l_l, l_u; \alpha, \rho) f_1, & \rho l_l \le l \le \rho l_u \\
\dfrac{k(l_l, l_u)}{z(l_l, l_u; \alpha, \rho)} f_1, & l > \rho l_u
\end{cases}
\tag{16}
$$

and the robust decision rule

$$
\hat{\delta} =
\begin{cases}
0, & l < \rho l_l \\
\dfrac{l_l^{\alpha-1} (l/\rho)^{1-\alpha} - 1}{\left( l_l^{\alpha-1} - (k(l_l, l_u) l_u)^{\alpha-1} \right) (l/\rho)^{1-\alpha} + k(l_l, l_u)^{\alpha-1} - 1}, & \rho l_l \le l \le \rho l_u \\
1, & l > \rho l_u
\end{cases}
\tag{17}
$$

implying the robust likelihood ratio function

$$
\hat{l} = \frac{\hat{g}_1}{\hat{g}_0} =
\begin{cases}
l_l^{-1} l, & l < \rho l_l \\
\rho, & \rho l_l \le l \le \rho l_u \\
l_u^{-1} l, & l > \rho l_u
\end{cases}
\tag{18}
$$

provide a unique solution to (11). Furthermore, the parameters $l_l$ and $l_u$ can be determined by solving

$$
\frac{1}{z(l_l, l_u; \alpha, \rho)^{\alpha}} \left( l_l^{\alpha} \int_{I_1} f_0 \,\mathrm{d}\mu + \int_{I_2} \Phi'_0(l_l, l_u; \alpha, \rho)^{\alpha} f_0 \,\mathrm{d}\mu + (k(l_l, l_u) l_u)^{\alpha} \int_{I_3} f_0 \,\mathrm{d}\mu \right) = x(\alpha, \varepsilon_0) \tag{19}
$$

and

$$
\frac{1}{z(l_l, l_u; \alpha, \rho)^{\alpha}} \left( \int_{I_1} f_1 \,\mathrm{d}\mu + \int_{I_2} \Phi'_1(l_l, l_u; \alpha, \rho)^{\alpha} f_1 \,\mathrm{d}\mu + k(l_l, l_u)^{\alpha} \int_{I_3} f_1 \,\mathrm{d}\mu \right) = x(\alpha, \varepsilon_1) \tag{20}
$$

where $\Phi'_j(l_l, l_u; \alpha, \rho) = z(l_l, l_u; \alpha, \rho) \Phi_j$, and $x(\alpha, \varepsilon) = 1 - \alpha(1-\alpha)\varepsilon$.

² In general, the $\arg\sup$ may not always be achieved, since $\mathcal{G}_0$ and $\mathcal{G}_1$ are non-compact sets in the topologies induced by the $\alpha$-divergence distance. In this paper, the existence of $\hat{g}_0$ and $\hat{g}_1$ is due to the KKT solution of the minimax optimization problem, which is introduced in Section III.

A proof of Theorem III.1 is given in three stages. In the maximization stage, the Karush-Kuhn-Tucker (KKT) multipliers are used to determine the parametric forms of the LFDs, $\hat{g}_0$ and $\hat{g}_1$, and the robust likelihood ratio function $\hat{l}$. In the minimization stage, the LFDs and the robust decision rule $\hat{\delta}$ are made explicit. Finally, in the optimization stage, the four parameters that are needed to design the test are reduced to two parameters without loss of generality.

Proof:

A. Derivation of LFDs and the robust decision rule

1) Maximization step: Consider the Lagrangian function

$$
L(g_0, \lambda_0, \mu_0) = P_F(\delta, g_0) + \lambda_0 \left( \varepsilon_0 - D(g_0, f_0; \alpha) \right) + \mu_0 \left( 1 - \Upsilon(g_0) \right), \tag{21}
$$

where $\mu_0$ and $\lambda_0 \ge 0$ are the KKT multipliers. It can be seen that $L$ is a strictly concave functional of $g_0$, as $\partial^2 L / \partial g_0^2 < 0$ for every $\lambda_0 > 0$. Therefore, there exists a unique solution to (21), provided that all KKT conditions are met [29, Chapter 5]. More explicitly, the Lagrangian can be stated as

$$
L(g_0, \lambda_0, \mu_0) = \int_{\mathbb{R}} \left[ \delta g_0 - \mu_0 g_0 - \frac{\lambda_0}{\alpha(1-\alpha)} \left( (1-\alpha) f_0 + \alpha g_0 - \left( \frac{g_0}{f_0} \right)^{\alpha} f_0 \right) \right] \mathrm{d}\mu + \lambda_0 \varepsilon_0 + \mu_0. \tag{22}
$$

Note that, similar to [2], the positivity constraint $g_0 \ge 0$ (or $g_1 \ge 0$) is not imposed, because for some $\alpha$ this constraint is satisfied automatically, while for others each solution of the Lagrangian optimization must be checked for positivity. To find the maximum of (22), the directional (Gâteaux) derivative of the Lagrangian $L$ with respect to $g_0$ in the direction of a function $\psi$ is taken:

$$
\int_\Omega \left[ \delta - \mu_0 + \frac{\lambda_0}{1-\alpha} \left( \left( \frac{g_0}{f_0} \right)^{\alpha-1} - 1 \right) \right] \psi \,\mathrm{d}\mu. \tag{23}
$$

Since $\psi$ is arbitrary, $L$ is maximized whenever

$$
\delta - \mu_0 + \frac{\lambda_0}{1-\alpha} \left( \left( \frac{g_0}{f_0} \right)^{\alpha-1} - 1 \right) = 0. \tag{24}
$$

Solving (24), the density function of the LFD $\hat{G}_0$,

$$
\hat{g}_0 = \left( \frac{1-\alpha}{\lambda_0} (\mu_0 - \delta) + 1 \right)^{\frac{1}{\alpha-1}} f_0, \tag{25}
$$

is obtained. Writing the Lagrangian for $P_M$ in a similar way, with the KKT multipliers $\mu_1$ and $\lambda_1$ in place of $\mu_0$ and $\lambda_0$, it follows that

$$
\hat{g}_1 = \left( \frac{1-\alpha}{\lambda_1} (\mu_1 - 1 + \delta) + 1 \right)^{\frac{1}{\alpha-1}} f_1. \tag{26}
$$

Accordingly, the robust likelihood ratio function can be obtained as

$$
\hat{l} = \frac{\hat{g}_1}{\hat{g}_0} = \left[ \frac{\frac{1-\alpha}{\lambda_1} (\mu_1 - 1 + \delta) + 1}{\frac{1-\alpha}{\lambda_0} (\mu_0 - \delta) + 1} \right]^{\frac{1}{\alpha-1}} l. \tag{27}
$$

2) Minimization step: The minimizing decision function is known to be of type (7), with $l$ replaced by $\hat{l}$ and $\kappa$ determined from (27) by solving $\hat{l} = \rho$ for $\delta := \hat{\delta}$. For every $\rho$, this results in

$$
\hat{\delta} =
\begin{cases}
0, & \hat{l} < \rho \\
\dfrac{\lambda_0 (-1 + \alpha + \lambda_1 + \mu_1 - \alpha\mu_1)}{(-1+\alpha) \left( \lambda_0 + \lambda_1 (l/\rho)^{1-\alpha} \right)} - \dfrac{\lambda_1 (\lambda_0 + \mu_0 - \alpha\mu_0) (l/\rho)^{1-\alpha}}{(-1+\alpha) \left( \lambda_0 + \lambda_1 (l/\rho)^{1-\alpha} \right)}, & \hat{l} = \rho \\
1, & \hat{l} > \rho
\end{cases}
\tag{28}
$$

Inserting (28) into (25) and (26), the least favorable density functions can be obtained as

$$
\hat{g}_0 =
\begin{cases}
c_1 f_0, & \hat{l} < \rho \\
\Phi_0 f_0, & \hat{l} = \rho \\
c_2 f_0, & \hat{l} > \rho
\end{cases}
\qquad
\hat{g}_1 =
\begin{cases}
c_3 f_1, & \hat{l} < \rho \\
\Phi_1 f_1, & \hat{l} = \rho \\
c_4 f_1, & \hat{l} > \rho
\end{cases}
\tag{29}
$$

where

$$
c_1 = \left( \frac{(1-\alpha)\mu_0 + \lambda_0}{\lambda_0} \right)^{\frac{1}{\alpha-1}}, \quad
c_2 = \left( \frac{(1-\alpha)(\mu_0 - 1) + \lambda_0}{\lambda_0} \right)^{\frac{1}{\alpha-1}},
$$

$$
c_3 = \left( \frac{(1-\alpha)(\mu_1 - 1) + \lambda_1}{\lambda_1} \right)^{\frac{1}{\alpha-1}}, \quad
c_4 = \left( \frac{(1-\alpha)\mu_1 + \lambda_1}{\lambda_1} \right)^{\frac{1}{\alpha-1}}
$$

and

$$
\Phi_0 = \left( \frac{-1 + \lambda_0 + \lambda_1 + \mu_0 + \mu_1 - \alpha(-1 + \mu_0 + \mu_1)}{\lambda_0 + \lambda_1 (l/\rho)^{1-\alpha}} \right)^{\frac{1}{\alpha-1}}, \tag{30}
$$

$$
\Phi_1 = \left( \frac{-1 + \lambda_0 + \lambda_1 + \mu_0 + \mu_1 - \alpha(-1 + \mu_0 + \mu_1)}{\lambda_1 + \lambda_0 (l/\rho)^{\alpha-1}} \right)^{\frac{1}{\alpha-1}}. \tag{31}
$$

In order to determine the unknown parameters, the constraints in the Lagrangian definition, i.e., $D(\hat{g}_i, f_i; \alpha) = \varepsilon_i$ and $\Upsilon(\hat{g}_i) = 1$, $i \in \{0,1\}$, are imposed.
This leads to four non-linear equations:

$$
\begin{aligned}
c_1 \int_{\hat{l}<\rho} f_0 \,\mathrm{d}\mu + \int_{\hat{l}=\rho} \Phi_0 f_0 \,\mathrm{d}\mu + c_2 \int_{\hat{l}>\rho} f_0 \,\mathrm{d}\mu &= 1, \\
c_3 \int_{\hat{l}<\rho} f_1 \,\mathrm{d}\mu + \int_{\hat{l}=\rho} \Phi_1 f_1 \,\mathrm{d}\mu + c_4 \int_{\hat{l}>\rho} f_1 \,\mathrm{d}\mu &= 1, \\
c_1^{\alpha} \int_{\hat{l}<\rho} f_0 \,\mathrm{d}\mu + \int_{\hat{l}=\rho} \Phi_0^{\alpha} f_0 \,\mathrm{d}\mu + c_2^{\alpha} \int_{\hat{l}>\rho} f_0 \,\mathrm{d}\mu &= x(\alpha, \varepsilon_0), \\
c_3^{\alpha} \int_{\hat{l}<\rho} f_1 \,\mathrm{d}\mu + \int_{\hat{l}=\rho} \Phi_1^{\alpha} f_1 \,\mathrm{d}\mu + c_4^{\alpha} \int_{\hat{l}>\rho} f_1 \,\mathrm{d}\mu &= x(\alpha, \varepsilon_1),
\end{aligned}
\tag{32}
$$

in four parameters, where $x(\alpha, \varepsilon) = 1 - \alpha(1-\alpha)\varepsilon$.

3) Optimization step: In this section, the number of equations as well as the number of parameters are reduced. This allows the re-definition of $\hat{l}$, $\hat{\delta}$, $\hat{g}_0$ and $\hat{g}_1$ in a more compact form. Let $l_l = c_1/c_3$ and $l_u = c_2/c_4$; then $\hat{l} = \hat{g}_1/\hat{g}_0$ from (29) indicates the equivalence of the integration domains $I_1$, $I_2$ and $I_3$ as defined by (14). Applying the following steps to (32):
• Consider the new domains $I_1$, $I_2$, $I_3$
• Use the substitutions $c_1 := c_3 l_l$ and $c_2 := c_4 l_u$
• Divide both sides of the first two equations by $c_3$
• Equate the resulting equations to each other via $1/c_3$

leads to $c_4 = k(l_l, l_u) c_3$, where $k(l_l, l_u)$ is as defined by (12). Next, the goal is to find a functional $f$ such that $\Phi_1 = c_3 f(l, l_l, l_u, \alpha)$. Since $\Phi_0 f_0 \rho = \Phi_1 f_1$, it follows that $\Phi_0 = c_3 f(l, l_l, l_u, \alpha)\, l \rho^{-1}$; therefore, it suffices to evaluate only $\Phi_1$. A step by step derivation of the functional $f$ is given in Appendix A. Accordingly, $\Phi_0$ is also fully specified in terms of the desired parameters and functions. Inserting $\Phi_1$ (which is now a functional of $c_3$, $l$, $l_l$, $l_u$, $\alpha$), cf. (51), into the second equation in (32) and noticing that $c_4 = k(l_l, l_u) c_3$ leads to $c_3 = 1/z(l_l, l_u; \alpha, \rho)$, where $z(l_l, l_u; \alpha, \rho)$ is as defined by (13). Applying a similar procedure, which can be found in Appendix B, to $\hat{\delta}$, cf. (28), for the case $\hat{l} = \rho$ leads to the robust decision rule $\hat{\delta}$ as given by Theorem III.1.
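The parametric forms (12)-(18) can be evaluated directly once $l_l$ and $l_u$ are fixed. The sketch below does this on a finite sample space for hand-picked values of $l_l$ and $l_u$; it does not solve (19) and (20). The function name `robust_design`, the four-point probability mass functions and the chosen parameter values are assumptions for illustration, the check is carried out for $\rho = 1$ only, and both $I_1$ and $I_3$ are assumed to be non-empty.

```python
import numpy as np

def robust_design(f0, f1, l_l, l_u, alpha, rho=1.0):
    """Evaluate k (12), z (13), the LFDs (15)-(16) and the rule (17) with sums."""
    f0 = np.asarray(f0, dtype=float)
    f1 = np.asarray(f1, dtype=float)
    l = f1 / f0                                  # nominal likelihood ratio
    I1, I3 = l < rho * l_l, l > rho * l_u        # outer regions of (14)
    k = np.sum((l[I1] - l_l) * f0[I1]) / np.sum((l_u - l[I3]) * f0[I3])  # (12)
    a1 = alpha - 1.0
    # Clipping l to [rho*l_l, rho*l_u] makes the bracket of Phi_1 equal to 1
    # on I1 and to k on I3, so a single expression covers all three regions.
    lc = np.clip(l, rho * l_l, rho * l_u)
    bracket = (k**a1 * (l_l**a1 - l_u**a1)
               / (l_l**a1 - (k * l_u)**a1 + (k**a1 - 1.0) * (lc / rho)**a1)
               ) ** (1.0 / a1)
    z = np.sum(bracket * f1)                     # (13)
    g1 = bracket / z * f1                        # (16)
    g0 = bracket * (lc / rho) / z * f0           # (15), via Phi_0 = Phi_1 * l / rho
    s = (lc / rho) ** (-a1)                      # (l / rho)^(1 - alpha)
    delta = (l_l**a1 * s - 1.0) / ((l_l**a1 - (k * l_u)**a1) * s + k**a1 - 1.0)
    return g0, g1, np.clip(delta, 0.0, 1.0), k, z   # delta as in (17)

# Hypothetical inputs: four-point nominal pmfs and hand-picked l_l, l_u.
f0 = np.array([0.4, 0.3, 0.2, 0.1])
f1 = f0[::-1]
g0, g1, delta, k, z = robust_design(f0, f1, l_l=0.5, l_u=2.0, alpha=0.5)
l_hat = g1 / g0                                  # robust likelihood ratio (18)
```

In this example both `g0` and `g1` sum to one, and `l_hat` equals $l/l_l$, $\rho$ and $l/l_u$ on the three regions; repeating the call with another value of `alpha` changes the densities but not `l_hat`.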
The least favorable densities, $\hat{g}_0$ and $\hat{g}_1$, and the robust likelihood ratio function $\hat{l}$ are obtained similarly, by exploiting the connection between the parameters $c_1$, $c_2$, $c_3$, $c_4$ and $l_l$, $l_u$. The same simplifications eventually allow the four equations given by (32) to be rewritten as the two equations stated in Theorem III.1. As mentioned earlier, both $\hat{g}_0$ and $\hat{g}_1$ are obtained uniquely from the Lagrangian $L$. Hence, $\hat{l} = \hat{g}_1/\hat{g}_0$ and, as a result, $\hat{\delta}$ are also unique. It follows that the solution found for (11) by the KKT multipliers approach is unique, as claimed.

Theorem III.1 can be summarized as illustrated in Figure 1. In other words, for any choice of the pair of nominal density functions $f_0$ and $f_1$, the robustness parameters $\varepsilon_0$ and $\varepsilon_1$, the Bayesian threshold $\rho$ and the distance parameter $\alpha$, the robust design outputs the least favorable density functions $\hat{g}_0$ and $\hat{g}_1$ and the robust decision rule $\hat{\delta}$. Notice that $\hat{g}_0$ and $\hat{g}_1$ are scaled versions (with different scaling factors) of the nominal distributions on $l < \rho l_l$ and $l > \rho l_u$, and in between they are a composition of both nominals, since $\Phi_0$ and $\Phi_1$ are both functionals of $f_0$ and $f_1$. The interpretation of the decision rule $\hat{\delta}$ is similar, i.e., in the same two regions the robust decision rule is almost surely zero or one, and in between it is a randomized decision rule. The robust version of the nominal likelihood ratio test is a non-linearly transformed version of the nominal likelihood ratios, as illustrated by Figure 2.

[Fig. 1. Summary of the robust hypothesis testing scheme given by Theorem III.1. Block "Minimax Robust Test Design" with inputs $f_0, f_1$, $\varepsilon_0, \varepsilon_1$, $\rho$, $\alpha$ and outputs $\hat{\delta}$, $\hat{g}_0$, $\hat{g}_1$.]

[Fig. 2. Nonlinearity relating the nominal likelihood ratios to the robust likelihood ratios. Axes $l$ and $\hat{l}$, with breakpoints at $\rho l_l$ and $\rho l_u$.]

It is somewhat surprising that the resulting robust likelihood ratio test is the same for the whole family of distances that are parameterized by $\alpha$.
In other words, the robust version of the likelihood ratio test, which is given by (18), is not explicitly a function of $\alpha$. Theorem III.1 is a generalization of [22] in the sense that for $\alpha \to 1$ and $\rho = 1$, the least favorable densities $\hat{g}_0$ and $\hat{g}_1$ as well as the robust decision rule $\hat{\delta}$ reduce to the ones found in [22]. The flexibility afforded by considering a set of distances, the $\alpha$-divergence, over [22] is twofold. First, the designer does not need to search for a suitable distance for modeling errors and test each candidate for its applicability to the engineering problem at hand, following tedious derivations. Instead, only the parameter $\alpha$ needs to be determined, which can be done over a training data set using a suitable search algorithm. Second, the a priori probabilities need not be chosen equal. The proposed design with the $\alpha$-divergence covers both cases, in addition to the fact that the choice of the nominal probability distributions does not require any assumption either. Additional constraints on the choice of nominal distributions as well as on the robustness parameters simplify the design, as introduced in the next section.

B. Simplified model with additional constraints

In some cases, evidence that the following assumption holds may be available:

Assumption III.2. The nominal likelihood ratio $l$ is monotone and the nominal density functions are symmetric, i.e., $f_1(y) = f_0(-y)$ $\forall y$.

If, additionally, the robustness parameters are set to be equal, $\varepsilon = \varepsilon_0 = \varepsilon_1$, or in other words $x(\alpha, \varepsilon) = x(\alpha, \varepsilon_0) = x(\alpha, \varepsilon_1)$, it follows that

$$
\begin{array}{c}
\delta(y) = 1 - \delta(-y) \\
\Uparrow \\
\left( \begin{array}{c} l_u = 1/l_l \\ y_u = -y_l \end{array} \right)
\iff
\left( \begin{array}{c} c_2 = c_3 \\ c_1 = c_4 \end{array} \right)
\iff
\left( \begin{array}{c} \lambda_0 = \lambda_1 \\ \mu_0 = \mu_1 \end{array} \right) \\
\Updownarrow \\
g_1(y) = g_0(-y)
\end{array}
\tag{33}
$$

where $y_l = l^{-1}(l_l)$ and $y_u = l^{-1}(l_u)$. These relationships are straightforward and therefore the proofs are omitted.
Notice that, due to the monotonicity of $l$, the limits of the integrals $I_1$, $I_2$ and $I_3$ should be rearranged, e.g., $I_1 := \{y : l(y) < \rho l_l\} \equiv \{y : y < l^{-1}(\rho\, l(y_l))\} \equiv \{y : y < l^{-1}(\rho\, l(-y_u))\}$. The symmetry assumption implies

$$
\begin{aligned}
x(\alpha,\epsilon) &= \int_{\mathbb R} \left(\frac{g_1(y)}{f_1(y)}\right)^{\alpha} f_1(y)\,dy
= \int_{\mathbb R} \left(\frac{g_1(y)}{f_0(-y)}\right)^{\alpha} f_0(-y)\,dy \\
&= \int_{\mathbb R} \left(\frac{g_0(y)}{f_0(y)}\right)^{\alpha} f_0(y)\,dy
= \int_{\mathbb R} \left(\frac{g_0(-y)}{f_0(-y)}\right)^{\alpha} f_0(-y)\,dy
= \int_{\mathbb R} \left(\frac{g_0(-y)}{f_1(y)}\right)^{\alpha} f_1(y)\,dy
\end{aligned}
\tag{34}
$$

for all $\alpha$ and $\epsilon$. It also implies $l(y) = 1/l(-y)$ and, as a result, $\hat l(y) = 1/\hat l(-y)$ for all $y$. Hence, $g_1(y) = g_0(-y)\ \forall y$ is a solution and all the simplifications in (33) follow. This reduces the four equations given by (32) to two:

$$
c_4 = \left( l(y_u) \int_{-\infty}^{y_l^*} f_1(y)\,dy + \int_{y_l^*}^{y_u^*} \left(\frac{1 + l(y_u)^{\alpha-1}}{1 + (l(y)/\rho)^{\alpha-1}}\right)^{\frac{1}{\alpha-1}} f_1(y)\,dy + \int_{y_u^*}^{\infty} f_1(y)\,dy \right)^{-1}
\tag{35}
$$

and

$$
c_4^{\alpha} \left( l(y_u)^{\alpha} \int_{-\infty}^{y_l^*} f_1(y)\,dy + \int_{y_l^*}^{y_u^*} \left(\frac{1 + l(y_u)^{\alpha-1}}{1 + (l(y)/\rho)^{\alpha-1}}\right)^{\frac{\alpha}{\alpha-1}} f_1(y)\,dy + \int_{y_u^*}^{\infty} f_1(y)\,dy \right) = x(\alpha,\epsilon),
\tag{36}
$$

where $y_l^*(y_u) = l^{-1}(\rho\, l(-y_u))$ and $y_u^*(y_u) = l^{-1}(\rho\, l(y_u))$. These two equations can then be combined into a single equation

$$
\begin{aligned}
& l(y_u)^{\alpha} \int_{-\infty}^{y_l^*} f_1(y)\,dy + \int_{y_l^*}^{y_u^*} \left(\frac{1 + l(y_u)^{\alpha-1}}{1 + (l(y)/\rho)^{\alpha-1}}\right)^{\frac{\alpha}{\alpha-1}} f_1(y)\,dy + \int_{y_u^*}^{\infty} f_1(y)\,dy \\
& \quad - x(\alpha,\epsilon) \left( l(y_u) \int_{-\infty}^{y_l^*} f_1(y)\,dy + \int_{y_l^*}^{y_u^*} \left(\frac{1 + l(y_u)^{\alpha-1}}{1 + (l(y)/\rho)^{\alpha-1}}\right)^{\frac{1}{\alpha-1}} f_1(y)\,dy + \int_{y_u^*}^{\infty} f_1(y)\,dy \right)^{\alpha} = 0,
\end{aligned}
\tag{37}
$$

from which the parameter $y_u$ can easily be determined. The computational complexity is reduced considerably under the aforementioned assumptions, i.e., when (37) is compared to (19) and (20). Note that when $\rho = 1$, we have $y_l^* = -y_u$ and $y_u^* = y_u$, and if additionally $\alpha \to 1$, (37) reduces to [2], cf. [9].

C. Limiting Robustness Parameters

The existence of a minimax robust test strictly depends on the precondition that the uncertainty sets $\mathcal{G}_i$ are distinct. To satisfy this condition, Huber suggested that $\epsilon_i$ be chosen small, see [4, p. 3]. Dabak [7] does not mention how to choose the parameters, whereas Levy gives an implicit bound as the relative entropy between the halfway density $f_{1/2} = f_0^{1/2} f_1^{1/2}/z$ and the nominal density $f_0$, i.e., $\epsilon < D(f_{1/2}, f_0)$, where $z$ is a normalizing constant. In the sequel, we show explicitly which pairs of parameters $(\epsilon_0, \epsilon_1)$ are valid to design a minimax robust test for the $\alpha$-divergence distance.

The limiting condition for the uncertainty sets to be disjoint is $\hat G_0 = \hat G_1$ $\mu$-a.e. It is clear from the saddle value condition (30) that for any possible choice of $(\epsilon_0, \epsilon_1)$ which results in $\hat G_0 = \hat G_1$, it is true that $P_E \le 1/2$ for all $(g_0, g_1) \in \mathcal{G}_0 \times \mathcal{G}_1$. Since infinitesimally smaller parameters guarantee the strict inequality $P_E < 1/2$, it is sufficient to determine all possible pairs which result in $\hat G_0 = \hat G_1$. A careful inspection suggests that the LFDs are identical whenever $l_l \to \inf l$ and $l_u \to \sup l$. For this choice $I_1$ and $I_3$ are empty sets and the density functions under each hypothesis are defined only on $I_2$. Without loss of generality, assume that $\alpha < 1$, $\inf l = 0$ and $\sup l = \infty$. For this choice $l_l \to 0$ implies $\mu_1 = \lambda_1/(\alpha - 1) + 1$ and $l_u \to \infty$ implies $\mu_0 = \lambda_0/(\alpha - 1) + 1$. Inserting these into one of the first two equations in (32) gives

$$
\int_{\Omega} \left( \lambda_0 f_0(y)^{1-\alpha} + \lambda_1 \rho^{\alpha-1} f_1(y)^{1-\alpha} \right)^{\frac{1}{1-\alpha}} dy = (1-\alpha)^{\frac{1}{1-\alpha}}.
\tag{38}
$$

Similarly, from the third and fourth equations it follows that

$$
\int_{\mathbb R} \left( \lambda_0 f_0(y)^{\frac{1-\alpha}{\alpha}} + \lambda_1 \rho^{\alpha-1} f_1(y)^{1-\alpha} f_0(y)^{\frac{(\alpha-1)^2}{\alpha}} \right)^{\frac{\alpha}{1-\alpha}} dy = (1-\alpha)^{\frac{\alpha}{1-\alpha}}\, x(\alpha, \epsilon_0)
\tag{39}
$$

and

$$
\int_{\mathbb R} \left( \lambda_1 f_1(y)^{\frac{1-\alpha}{\alpha}} + \lambda_0 \rho^{1-\alpha} f_0(y)^{1-\alpha} f_1(y)^{\frac{(\alpha-1)^2}{\alpha}} \right)^{\frac{\alpha}{1-\alpha}} dy = (1-\alpha)^{\frac{\alpha}{1-\alpha}}\, x(\alpha, \epsilon_1).
\tag{40}
$$

Given $\rho$ and $\alpha$, (38), (39), and (40) can jointly be solved to determine the space of maximum robustness parameters. As an example, consider $\Omega = \mathbb{R}$, $\rho = 1$ and $\alpha = 1/2$. This choice of $\alpha$ corresponds to the squared Hellinger distance with an additional scaling factor of $1/(\alpha(1-\alpha)) = 4$. Let $a = \int_{-\infty}^{\infty} \sqrt{f_0(y) f_1(y)}\,dy$. Then, Equations (38)-(40) reduce to the polynomials in the Lagrangian multipliers $\lambda_0$ and $\lambda_1$,

$$
\lambda_0^2 + \lambda_1^2 + 2\lambda_0\lambda_1 a - \tfrac{1}{4} = 0,
\tag{41}
$$

$$
4 - 8\lambda_0 - 8\lambda_1 a - \epsilon_0 = 0,
\tag{42}
$$

$$
4 - 8\lambda_1 - 8\lambda_0 a - \epsilon_1 = 0,
\tag{43}
$$

respectively. Solving (42) and (43) for $\lambda_0$ and $\lambda_1$, respectively, and inserting the results into Equation (41) we get

$$
2\epsilon_1\left(a(\epsilon_0 - 4) + 4\right) - (4a + \epsilon_0 - 4)^2 - \epsilon_1^2 = 0.
\tag{44}
$$

Equation (44) is quadratic in $a$ and has two roots. One of the roots results in $a = 1$ for all $\epsilon_0 = \epsilon_1$, which is not plausible. Therefore, the correct root is

$$
a = \frac{1}{16}\left( 16 - 4\epsilon_1 + \epsilon_0(\epsilon_1 - 4) - \sqrt{(\epsilon_0 - 8)\,\epsilon_0\,(\epsilon_1 - 8)\,\epsilon_1} \right).
\tag{45}
$$

Notice that (45) is symmetric in $\epsilon_0$ and $\epsilon_1$, i.e., $a(\epsilon_0, \epsilon_1) = a(\epsilon_1, \epsilon_0)$ for all $(\epsilon_0, \epsilon_1)$, as expected. Since $0 \le a \le 1$ is known a priori, given a choice of $\epsilon_i$, the corresponding $\epsilon_{1-i}$ can be determined from (45) easily, cf. Section IV. A special case occurs whenever $\epsilon = \epsilon_0 = \epsilon_1$, which simplifies (45) to

$$
\epsilon_{\max} = 4 - 2\sqrt{2(1 + a)}.
\tag{46}
$$

Maximum robustness parameters given by (45) and (46) are in agreement with the ones found in [20]. The case $\alpha > 1$, which implies $\mu_0 = \lambda_0/(\alpha - 1)$ and $\mu_1 = \lambda_1/(\alpha - 1)$, can be examined similarly.

IV. SIMULATIONS

In this section, some simulations are performed to illustrate the theoretical derivations.
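As a numerical companion to (45) and (46), which are used later in this section for the boundary surface at $\alpha = 0.5$, the following minimal sketch evaluates both expressions. The function names are hypothetical, and the closed form used for $a$ (the standard expression for $\int \sqrt{f_0 f_1}$ of two equal-variance Gaussians) is an assumption introduced for illustration only.

```python
import math

def affinity_from_eps(eps0, eps1):
    """Right-hand side of (45): the value of a on the boundary surface."""
    root = math.sqrt((eps0 - 8.0) * eps0 * (eps1 - 8.0) * eps1)
    return (16.0 - 4.0 * eps1 + eps0 * (eps1 - 4.0) - root) / 16.0

def eps_max(a):
    """Equation (46): common maximum robustness parameter for eps0 == eps1."""
    return 4.0 - 2.0 * math.sqrt(2.0 * (1.0 + a))

def gaussian_affinity(mean0, mean1, sigma):
    """Assumed closed form of a = integral of sqrt(f0*f1) for N(mean0, sigma^2), N(mean1, sigma^2)."""
    return math.exp(-((mean1 - mean0) ** 2) / (8.0 * sigma ** 2))

# Illustrative nominal pair: means -1 and +1, unit variance.
a = gaussian_affinity(-1.0, 1.0, 1.0)
e = eps_max(a)
# Consistency check: inserting eps0 = eps1 = eps_max(a) into (45) should return a.
print(a, e, affinity_from_eps(e, e))
```

For identical nominal densities ($a = 1$) the sketch returns $\epsilon_{\max} = 0$, i.e., no robustness margin is available, which matches the requirement that the uncertainty sets do not overlap.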
Consider a simple hypothesis testing problem

$$
\begin{aligned}
\mathcal{H}_0^s &: Y = W \\
\mathcal{H}_1^s &: Y = W + A
\end{aligned}
\tag{47}
$$

where $A > 0$ is a known DC signal and $W$ is a random variable which follows a symmetric Gaussian mixture distribution

$$
W \sim \tfrac{1}{2}\left( \mathcal{N}(-\mu, \sigma^2) + \mathcal{N}(\mu, \sigma^2) \right),
\tag{48}
$$

where $\mathcal{N}(\mu, \sigma^2)$ is a Gaussian distribution with mean $\mu$ and variance $\sigma^2$, and $Y$ is a random variable on $\Omega = \mathbb{R}$, which is consistent with the data sample $y$. To account for uncertainties on $Y$ under both hypotheses, let

$$
\begin{aligned}
F_0(y) &:= P(Y < y \mid \mathcal{H}_0^s) \quad \forall y \\
F_1(y) &:= P(Y < y \mid \mathcal{H}_1^s) \quad \forall y
\end{aligned}
\tag{49}
$$

be the nominal distributions, having the density functions $f_0$ and $f_1$ for the binary composite hypothesis testing problem given by (1) and (2). Note that the symmetry condition, $f_1(y) = f_0(-y)$ for all $y$, does not hold, and $l = f_1/f_0$ is not monotone. Assume $\mu = 2$, $\sigma = 1$ and $A = 1$ and let the robustness parameters be $\epsilon_0 = 0.02$ and $\epsilon_1 = 0.03$ for the $(\alpha = 4)$-divergence distance. This example demonstrates an extreme case, for which no straightforward simplification of the equations (19) and (20) exists, both in terms of reducing the number of equations and in terms of the domain of the integrals.

Figure 3 illustrates the nominal density functions $f_0$ and $f_1$ along with the corresponding least favorable densities (LFDs) $\hat g_0$ and $\hat g_1$, for equal a priori probabilities, $\rho = 1$. It can be observed that the LFDs intersect in three distinct intervals, each in the neighborhood of $y = -1.5 + j$ for $j \in \{0, 2, 4\}$. In Fig. 4, the same simulation is repeated for $\rho = 1.2$. In Fig. 5 the nominal and least favorable likelihood ratios for the same example are shown. As given by (18), robustification of the simple hypothesis test corresponds to a non-linear transformation of the nominal likelihood ratios.

In the next simulation, all the parameters are fixed as before, except for $\alpha$. We are especially interested in the change in the lower and upper thresholds, $l_l$ and $l_u$, for varying $\alpha$.
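The nominal model (47)-(49) with $\mu = 2$, $\sigma = 1$ and $A = 1$ can be reproduced with a few lines of code. The sketch below is illustrative only: the function names and the grid are assumptions, and it inspects only the nominal densities, not the least favorable ones. It confirms on a grid that the symmetry condition fails, that $l = f_1/f_0$ is not monotone, and it locates the points where the nominal likelihood ratio crosses one.

```python
import numpy as np

MU, SIGMA, A = 2.0, 1.0, 1.0  # parameters of the example in the text

def gauss_pdf(y, mean, sigma):
    """Gaussian density N(mean, sigma^2) evaluated at y."""
    return np.exp(-0.5 * ((y - mean) / sigma) ** 2) / (sigma * np.sqrt(2.0 * np.pi))

def f0(y):
    """Nominal density under H0: symmetric two-component Gaussian mixture (48)."""
    return 0.5 * (gauss_pdf(y, -MU, SIGMA) + gauss_pdf(y, MU, SIGMA))

def f1(y):
    """Nominal density under H1: the same mixture shifted by the DC signal A."""
    return f0(y - A)

grid = np.linspace(-6.0, 6.0, 2400)
l = f1(grid) / f0(grid)

# Symmetry condition f1(y) = f0(-y) of Assumption III.2, checked on the grid.
symmetric = bool(np.allclose(f1(grid), f0(-grid)))

# Monotonicity of the nominal likelihood ratio on the grid.
steps = np.diff(l)
monotone = bool(np.all(steps > 0) or np.all(steps < 0))

# Grid cells in which l crosses 1, reported by their midpoints.
idx = np.nonzero(np.diff((l > 1.0).astype(int)))[0]
crossings = 0.5 * (grid[idx] + grid[idx + 1])
print(symmetric, monotone, crossings)
```

Under these parameters the nominal densities cross close to $y = -1.5$, $0.5$ and $2.5$, the same neighborhoods in which the text reports the intersections of the LFDs.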
Figure 6 illustrates the outcome of this simulation for $\rho = 1$. We can see that $l_l$ and $l_u$ tend to 1 for $\alpha \to \infty$. It is not straightforward to derive this from (19) and (20) for any $f_0$ and $f_1$. However, if there exists a solution, which is true and unique by the KKT multipliers approach, it should satisfy $D(f, g; \alpha) = \epsilon_i$ for any $\alpha > 0$ and for all allowable $\epsilon_i$, cf. Section III-C. Assume that $g$ is fixed and does not depend on $\alpha$. Then, the integral $\int_{\mathbb R} g^{\alpha} f^{1-\alpha}\,d\mu$ is 1 at $\alpha = 0$ and $\alpha = 1$, convex in $\alpha$, and positive for all $\alpha > 0$, $f$ and $g$. Hence, for $g \ne f$, $\lim_{\alpha\to\infty} \int_{\mathbb R} g^{\alpha} f^{1-\alpha}\,d\mu = \infty$ and $\lim_{\alpha\to\infty} D(f, g; \alpha)$ is indeterminate. Using L'Hospital's rule twice we obtain

$$
K = \lim_{\alpha\to\infty} D(g, f; \alpha) = \lim_{\alpha\to\infty} \frac{\int_{\mathbb R} \log^2(g/f)\,(g/f)^{\alpha} f\,d\mu}{2}.
\tag{50}
$$

The integral $\int_{\mathbb R} \log^2(g/f)\,(g/f)^{\alpha} f\,d\mu$ is also positive and convex in $\alpha$. This implies $K \to \infty$ for $\alpha \to \infty$. Now, assume that $g$ depends on $\alpha$ and tends to a limiting distribution $g^*$ with $\|g^* - f\| > 0$ when $\alpha \to \infty$. Then, our conclusion does not change, i.e., $K \to \infty$ for $\alpha \to \infty$. Since $D(f, g; \alpha)$ is finite, we require that $\alpha \to \infty \implies g^* \to f$. Consequently, from (15) and (16), $\hat g_i \to f_i$ whenever $l_l \to 1$ and $l_u \to 1$, which explains the asymptotic behavior in Figure 6 for any pair $(f_0, f_1)$.

Based on simulation results the following are conjectured:

• For fixed $\epsilon_0$ and $\epsilon_1$, increasing $\alpha$ leads to a monotone decrease in $l_u$ and a monotone increase in $l_l$ on $\mathbb{R}^+ \setminus \{0, 1\}$.
• For a fixed $\alpha$, increasing $\epsilon_0$, $\epsilon_1$ or both introduces a non-decrease in $l_u$, a non-increase in $l_l$, or both, given that $\epsilon_0$ and $\epsilon_1$ are less than their allowable maximum, cf. Section III-C.

The proof of these conjectures is an open problem. From (19) and (20), it is clear that given a pair $(\epsilon_0, \epsilon_1)$, a slight change in $\alpha$ changes the equations completely and in general $l_l$ and $l_u$ are functions of $\alpha$.
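The properties of the integral $\int g^{\alpha} f^{1-\alpha}\,d\mu$ used in the argument leading to (50) can be illustrated numerically for a fixed pair of densities. The sketch below is a hedged illustration: the Gaussian pair, the grid, and the helper names are assumptions, and `alpha_divergence` assumes the normalized form $(1 - \int g^{\alpha} f^{1-\alpha}\,d\mu)/(\alpha(1-\alpha))$, chosen to be consistent with the scaling factor $1/(\alpha(1-\alpha))$ quoted in Section III-C rather than taken from a definition in this section.

```python
import numpy as np

def log_gauss_pdf(y, mean, sigma=1.0):
    """Log-density of N(mean, sigma^2); the log domain avoids overflow for large alpha."""
    return -0.5 * ((y - mean) / sigma) ** 2 - np.log(sigma * np.sqrt(2.0 * np.pi))

def power_integral(alpha, log_g, log_f, grid):
    """Trapezoidal approximation of the integral of g^alpha * f^(1 - alpha)."""
    integrand = np.exp(alpha * log_g(grid) + (1.0 - alpha) * log_f(grid))
    return float(np.sum(0.5 * (integrand[1:] + integrand[:-1]) * np.diff(grid)))

def alpha_divergence(alpha, log_g, log_f, grid):
    """Assumed normalized alpha-divergence, valid for alpha not in {0, 1}."""
    return (1.0 - power_integral(alpha, log_g, log_f, grid)) / (alpha * (1.0 - alpha))

# Illustrative fixed pair: f = N(-1, 1), g = N(1, 1).
grid = np.linspace(-20.0, 20.0, 40001)
log_f = lambda y: log_gauss_pdf(y, -1.0)
log_g = lambda y: log_gauss_pdf(y, 1.0)

alphas = (0.0, 0.5, 1.0, 2.0, 4.0)
values = {a: power_integral(a, log_g, log_f, grid) for a in alphas}
for a in alphas:
    print(a, values[a])
```

For this Gaussian pair the integral has the closed form $\exp(2\alpha(\alpha - 1))$, so it equals 1 at $\alpha = 0$ and $\alpha = 1$, dips below 1 in between, and grows without bound as $\alpha$ increases, in line with the convexity argument above.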
In Figure 7, the robust decision rule $\hat\delta$ is plotted for various values of $\alpha$, without considering the dependency of $l_l$ and $l_u$ on $\alpha$. To do this, $l_l \approx 0.605$ and $l_u \approx 1.618$, which are found for $\rho = 1$, $\alpha = 4$, $\epsilon_0 = 0.02$ and $\epsilon_1 = 0.03$, are used as fixed constants in (17). Then, (17) is plotted for $\alpha \in \{0.01, 10, 100\}$. The decision rule $\hat\delta$ tends to a step-like function for increasing $\alpha$, whereas for a smaller $\alpha$, e.g., $\alpha = 0.01$, the decision rule is almost linear on the domain of the likelihood ratio for which $\hat l = 1$. This result is also in agreement with the previous findings: $\hat\delta$ tends to a non-randomized likelihood ratio test for $\alpha \to \infty$, for which we obtained $\hat g_i \to f_i$, and for $(f_0, f_1)$ the optimum decision rule is known to be a non-randomized likelihood ratio test.

In the following simulation, the simplified model ($f_0(y) = f_1(-y)$) is tested for mean-shifted Gaussian distributions, $F_0 \sim \mathcal{N}(\mu_0, \sigma^2)$ and $F_1 \sim \mathcal{N}(\mu_1, \sigma^2)$, with means $\mu_0 = -1$, $\mu_1 = 1$ and variance $\sigma^2 = 1$. The parameters of the composite test are chosen to be $\rho = 1$, $\epsilon_0 = 0.1$ and $\epsilon_1 = 0.1$. Here, our main interest is to observe the change in the overlapping regions of the least favorable density pairs for various $\alpha$. Figure 8 illustrates the outcome of this simulation. It can be seen that the overlapping region is convex for a negative $\alpha$ ($\alpha = -10$), almost constant for $\alpha = 0.01$ and concave for a positive $\alpha$ ($\alpha = 10$). For the sake of clarity only three examples of $\alpha$ are plotted.

In Figure 9, the false alarm and miss detection probabilities of the likelihood ratio test $\delta$ for $(f_0, f_1)$ are graphed and compared with the robust test $\hat\delta$ for $(\hat g_0, \hat g_1)$. Two different pairs of robustness parameters and various signal-to-noise ratios (SNRs), i.e., $\mathrm{SNR} = 20\log(A/\sigma)$, are considered. It can be seen that increasing the robustness parameters increases the false alarm and miss detection probabilities for all SNRs, as expected.
The difference between the false alarm and miss detection probabilities for the same robust test is small and it is more pronounced for low SNRs. For high SNRs the performances of the two robust tests are close to each other. The reason is that for high SNRs the maximum allowable robustness parameters become relatively high compared to the parameters of both robust settings. Although the nominal test has the lowest error rates, its performance can degrade considerably under uncertainties in the nominal model. The robust tests, on the other hand, have slightly higher error rates but a guaranteed power of the test, which indicates the trade-off between performance and robustness.

Finally, in the last simulation, the 3D boundary surface of the maximum robustness parameters is determined for $\alpha = 0.5$ from (45) and is shown in Figure 10. This surface has a cropped, rotated, cone-like shape, which is symmetric about its main diagonal, i.e., with respect to the plane $\epsilon_0 = \epsilon_1$ in the space $(\epsilon_0, \epsilon_1, a)$. Notice that except for the points on the cone-like shape that intersect with the $(\epsilon_0, \epsilon_1, a = 0)$ plane, all other points on $(\epsilon_0, \epsilon_1, a = 0)$ that are plotted in blue are undefined (rather than being valid points with $a = 0$), implying that for those points no minimax robust test exists.

V. CONCLUSION

A robust version of the likelihood ratio test considering the $\alpha$-divergence as the distance to characterize the uncertainty sets has been proposed. The existence of a saddle value to the minimax optimization problem was shown by adopting Sion's minimax theorem. The least favorable distributions, the robust decision rule as well as the robust version of the likelihood ratio test were derived in two parameters and in three distinct regions on the co-domain of the nominal likelihood ratio. Two equations from which the parameters can be determined were also derived. It was found that the robust likelihood ratio does not depend explicitly on the parameter $\alpha$ that characterizes the distance between the probability measures. When the nominal density functions satisfy a symmetry constraint, the two non-linear equations were combined into a single equation. Finally, the upper bounds on the parameters that control the degree of robustness were derived. Open problems include proving the monotonicity of the parameters $l_l$ and $l_u$ for increasing $(\epsilon_0, \epsilon_1)$ or $\alpha$. It was shown that the simulation results illustrate the theoretical results.

Fig. 3. Nominal densities and the corresponding least favorable densities for $\rho = 1$, $\alpha = 4$, $\epsilon_0 = 0.02$ and $\epsilon_1 = 0.03$.

Fig. 4. Nominal densities and the corresponding least favorable densities for $\rho = 1.2$, $\alpha = 4$, $\epsilon_0 = 0.02$ and $\epsilon_1 = 0.03$.

Fig. 5. Nominal and least favorable likelihood ratios ($\hat g_1/\hat g_0$ for $\rho = 1$ and $\hat g_1^*/\hat g_0^*$ for $\rho = 1.2$) for $\alpha = 4$, $\epsilon_0 = 0.02$ and $\epsilon_1 = 0.03$.

Fig. 6. Lower and upper thresholds, $l_l$ and $l_u$, for a variable $\alpha$, $\rho = 1$, $\epsilon_0 = 0.02$ and $\epsilon_1 = 0.03$.

APPENDIX A
SIMPLIFICATION OF $\Phi_1$

From (31) consider the following steps for

$$
\Phi_1 = \left( \frac{-1 + \lambda_0 + \lambda_1 + \mu_0 + \mu_1 - \alpha(-1 + \mu_0 + \mu_1)}{\lambda_1 + \lambda_0 (l/\rho)^{\alpha-1}} \right)^{\frac{1}{\alpha-1}}
$$

• Dividing the numerator and the denominator by $\lambda_0$ and replacing the term $1 + \mu_0/\lambda_0 - \alpha\mu_0/\lambda_0$ by $c_1^{\alpha-1}$ results in

$$
\Phi_1 = \left( \frac{c_1^{\alpha-1} + (\lambda_1 - 1 + \mu_1 + \alpha - \alpha\mu_1)/\lambda_0}{(\lambda_1/\lambda_0) + (l/\rho)^{\alpha-1}} \right)^{\frac{1}{\alpha-1}}.
$$

• Multiplying the numerator and the denominator of the result of the previous step by $\lambda_0/\lambda_1$, replacing the term $1 - 1/\lambda_1 + \mu_1/\lambda_1 + \alpha/\lambda_1 - \alpha\mu_1/\lambda_1$ by $c_3^{\alpha-1}$ and again multiplying both the numerator and the denominator by $\lambda_1$ gives

$$
\Phi_1 = \left( \frac{\lambda_0 c_1^{\alpha-1} + \lambda_1 c_3^{\alpha-1}}{\lambda_1 + \lambda_0 (l/\rho)^{\alpha-1}} \right)^{\frac{1}{\alpha-1}}.
$$

• The result of the previous step is free of the parameters $\mu_0$ and $\mu_1$, but still parameterized by $\lambda_0$ and $\lambda_1$. To eliminate them, using the identities $\lambda_0 = (1-\alpha)/(c_1^{\alpha-1} - c_2^{\alpha-1})$ and $\lambda_1 = (1-\alpha)/(c_4^{\alpha-1} - c_3^{\alpha-1})$ leads to

$$
\Phi_1 = \left( \frac{(c_1 c_4)^{\alpha-1} - (c_2 c_3)^{\alpha-1}}{c_1^{\alpha-1} - c_2^{\alpha-1} + (c_4^{\alpha-1} - c_3^{\alpha-1})(l/\rho)^{\alpha-1}} \right)^{\frac{1}{\alpha-1}}.
$$

• The result from the previous step depends only on $c_1$, $c_2$, $c_3$, $c_4$ and $\alpha$. Using the substitutions $c_1 = c_3 l_l$, $c_2 = c_4 l_u$ and $c_4 = k(l_l, l_u)\, c_3$ yields

$$
\Phi_1(l, l_l, l_u, c_3; \alpha, \rho) = c_3 \left( \frac{k(l_l, l_u)^{\alpha-1} \left( l_l^{\alpha-1} - l_u^{\alpha-1} \right)}{l_l^{\alpha-1} - (k(l_l, l_u)\, l_u)^{\alpha-1} + \left( k(l_l, l_u)^{\alpha-1} - 1 \right)(l/\rho)^{\alpha-1}} \right)^{\frac{1}{\alpha-1}}.
\tag{51}
$$

Fig. 7. The decision rule $\hat\delta$ for $\alpha = \{0.01, 10, 100\}$, $\rho = 1$, $\epsilon_0 = 0.02$ and $\epsilon_1 = 0.03$.

Fig. 8. Nominal densities and the corresponding least favorable densities for $\rho = 1$, $\epsilon_0 = 0.1$ and $\epsilon_1 = 0.1$ (curves shown for $\alpha = 10$, $\alpha = 0.01$ and $\alpha = -10$).

Fig. 9. False alarm and miss detection probabilities of $\delta$, (2), ($\rho = 1$) for $(f_0, f_1)$ compared to that of the robust decision rule $\hat\delta$ for $(\hat g_0, \hat g_1)$ when SNR is varied (robust tests shown for $(\epsilon_0, \epsilon_1) = (0.02, 0.03)$ and $(0.3, 0.2)$).

Fig. 10. All allowable pairs of maximum robustness parameters, $(\epsilon_0, \epsilon_1)$, w.r.t. all distances $a \in [0, 1]$ for $\alpha = 0.5$.

APPENDIX B
SIMPLIFICATION OF $\hat\delta$

Since the equivalence of the integration domains is given by (14), only

$$
\hat\delta = \frac{\lambda_0(-1 + \alpha + \lambda_1 + \mu_1 - \alpha\mu_1)}{(-1 + \alpha)\left(\lambda_0 + \lambda_1 (l/\rho)^{1-\alpha}\right)} - \frac{\lambda_1(\lambda_0 + \mu_0 - \alpha\mu_0)(l/\rho)^{1-\alpha}}{(-1 + \alpha)\left(\lambda_0 + \lambda_1 (l/\rho)^{1-\alpha}\right)}, \quad \hat l = \rho
$$

is required to be simplified. In the following, the simplification is performed in three steps and the domain term $\hat l = \rho$ is omitted for the sake of simplicity:

• Dividing the numerator and the denominator of the first term by $\lambda_1$ and of the second term by $\lambda_0$, and replacing the related terms by $c_1^{\alpha-1}$ and $c_3^{\alpha-1}$ results in

$$
\hat\delta = \frac{\lambda_0}{-1 + \alpha} \cdot \frac{c_3^{\alpha-1}}{\frac{\lambda_0}{\lambda_1} + (l/\rho)^{1-\alpha}} - \frac{\lambda_1}{-1 + \alpha} \cdot \frac{c_1^{\alpha-1} (l/\rho)^{1-\alpha}}{1 + \frac{\lambda_1}{\lambda_0}(l/\rho)^{1-\alpha}} = \frac{c_3^{\alpha-1} - c_1^{\alpha-1}(l/\rho)^{1-\alpha}}{(-1 + \alpha)\left( \frac{1}{\lambda_1} + \frac{1}{\lambda_0}(l/\rho)^{1-\alpha} \right)}.
$$

• The result of the previous step is free of the parameters $\mu_0$ and $\mu_1$, but still parameterized by $\lambda_0$ and $\lambda_1$. To eliminate them, using the identities $\lambda_0 = (1-\alpha)/(c_1^{\alpha-1} - c_2^{\alpha-1})$ and $\lambda_1 = (1-\alpha)/(c_4^{\alpha-1} - c_3^{\alpha-1})$ leads to

$$
\hat\delta = \frac{(l/\rho)^{1-\alpha} c_1^{\alpha-1} - c_3^{\alpha-1}}{c_4^{\alpha-1} - c_3^{\alpha-1} + (c_1^{\alpha-1} - c_2^{\alpha-1})(l/\rho)^{1-\alpha}}.
$$

• The result from the previous step depends only on $c_1$, $c_2$, $c_3$, $c_4$ and $\alpha$. Using the substitutions $c_1 = c_3 l_l$, $c_2 = c_4 l_u$ and $c_4 = k(l_l, l_u)\, c_3$ yields

$$
\hat\delta = \frac{l_l^{\alpha-1}(l/\rho)^{1-\alpha} - 1}{\left( l_l^{\alpha-1} - (k(l_l, l_u)\, l_u)^{\alpha-1} \right)(l/\rho)^{1-\alpha} + k(l_l, l_u)^{\alpha-1} - 1},
$$

as wanted.

ACKNOWLEDGMENT

The authors would like to sincerely thank the anonymous reviewers for their valuable comments and suggestions to improve the quality of the paper. This work was supported by the LOEWE Priority Program Cocoon (http://www.cocoon.tu-darmstadt.de).

REFERENCES

[1] B. C. Levy, Principles of Signal Detection and Parameter Estimation, 1st ed. Springer Publishing Company, Incorporated, 2008.
[2] B. C. Levy, "Robust hypothesis testing with a relative entropy tolerance," IEEE Transactions on Information Theory, vol. 55, no. 1, pp. 413–421, 2009.
[3] G. Gül and A. M. Zoubir, "Robust hypothesis testing for modeling errors," in IEEE Int. Conf. on Acoustics, Speech, and Signal Processing (ICASSP), Vancouver, Canada, May 2013, pp. 5514–5518.
[4] P. J. Huber, "A robust version of the probability ratio test," Ann. Math. Statist., vol. 36, pp. 1753–1758, 1965.
[5] P. J. Huber and V. Strassen, "Robust confidence limits," Z. Wahrscheinlichkeitstheorie verw. Gebiete, vol. 10, pp. 269–278, 1968.
[6] P. J. Huber and V. Strassen, "Minimax tests and the Neyman-Pearson lemma for capacities," Ann. Statistics, vol. 1, pp. 251–263, 1973.
[7] A. G. Dabak and D. H. Johnson, "Geometrically based robust detection," in Proceedings of the Conference on Information Sciences and Systems, Johns Hopkins University, Baltimore, MD, May 1994, pp. 73–77.
[8] A. B. Chan, N. Vasconcelos, and P. J. Moreno, "A family of probabilistic kernels based on information divergence," Tech. Rep., 2004.
[9] A. Cichocki and S.-i. Amari, "Families of alpha- beta- and gamma-divergences: Flexible and robust measures of similarities," Entropy, no. 6, pp. 1532–1568.
[10] T. van Erven and P. Harremoës, "Rényi divergence and Kullback-Leibler divergence," IEEE Transactions on Information Theory, vol. 60, no. 7, pp. 3797–3820, 2014.
[11] E. Tuncel, "On error exponents in hypothesis testing," IEEE Transactions on Information Theory, no. 8, pp. 2945–2950.
[12] A. Cichocki, H. Lee, Y.-D. Kim, and S. Choi, "Non-negative matrix factorization with alpha-divergence," Pattern Recognition Letters, no. 9, pp. 1433–1440.
[13] A. O. Hero, B. Ma, O. Michel, and J. Gorman, "Alpha-divergence for classification, indexing and retrieval," University of Michigan, Tech. Rep., 2001.
[14] L. Meziou, A. Histace, and F. Precioso, "Alpha-divergence maximization for statistical region-based active contour segmentation with non-parametric pdf estimations," in Acoustics, Speech and Signal Processing (ICASSP), 2012 IEEE International Conference on, March 2012, pp. 861–864.
[15] Z. Bian, J. Ma, L. Tian, J. Huang, H. Zhang, Y. Zhang, W. Chen, and Z. Liang, "Penalized weighted alpha-divergence approach to sinogram restoration for low-dose x-ray computed tomography," in Nuclear Science Symposium and Medical Imaging Conference (NSS/MIC), 2012 IEEE, Oct 2012, pp. 3675–3678.
[16] J. Pluim, J. Maintz, and M. Viergever, "f-information measures in medical image registration," Medical Imaging, IEEE Transactions on, vol. 23, no. 12, pp. 1508–1516, Dec 2004.
[17] D. Morales, L. Pardo, and I. Vajda, "Some new statistics for testing hypotheses in parametric models," Journal of Multivariate Analysis, vol. 62, no. 1, pp. 137–168, 1997.
[18] T. Hobza, I. Molina, and D. Morales, "Multi-sample Rényi test statistics," Braz. J. Probab. Stat., vol. 23, no. 2, pp. 196–215, Dec. 2009.
[19] M. Pardo and J. Pardo, "Use of Rényi's divergence to test for the equality of the coefficients of variation," Journal of Computational and Applied Mathematics, vol. 116, no. 1, pp. 93–104, 2000.
[20] G. Gül and A. M. Zoubir, "Robust hypothesis testing with squared Hellinger distance," in Proceedings of the 22nd European Signal Processing Conference (EUSIPCO), Lisbon, Portugal, Sep. 2014.
[21] G. Gül and A. M. Zoubir, "Robust detection under communication constraints," in Proceedings of the IEEE 14th International Workshop on Advances in Wireless Communications (SPAWC), Darmstadt, Germany, Jun. 2013, pp. 109–112.
[22] G. Gül and A. M. Zoubir, "Minimax robust hypothesis testing," 2015, submitted for publication at IEEE Transactions on Information Theory.
[23] S. Zheng, P.-Y. Kam, Y.-C. Liang, and Y. Zeng, "Spectrum sensing for digital primary signals in cognitive radio: A Bayesian approach for maximizing spectrum utilization," Wireless Communications, IEEE Transactions on, vol. 12, no. 4, pp. 1774–1782, April 2013.
[24] J.-P. Aubin and I. Ekeland, Applied Nonlinear Analysis. New York: J. Wiley, 1984.
[25] M. Sion, "On general minimax theorems," Pacific Journal of Mathematics, vol. 8, no. 1, pp. 171–176, 1958.
[26] "Tychonoff's theorem without the axiom of choice," Fundamenta Mathematicae, vol. 113, no. 1, pp. 21–35, 1981.
[27] A. Tychonoff, "Über die topologische Erweiterung von Räumen," Mathematische Annalen, vol. 102, no. 1, pp. 544–561, 1930.
[28] W. Rudin, Principles of Mathematical Analysis, ser. International series in pure and applied mathematics. Paris: McGraw-Hill, 1976.
[29] D. Bertsekas, A. Nedić, and A. Ozdaglar, Convex Analysis and Optimization, ser. Athena Scientific optimization and computation series. Athena Scientific, 2003.
