Deep Reconstruction-Classification Networks for Unsupervised Domain Adaptation

In this paper, we propose a novel unsupervised domain adaptation algorithm based on deep learning for visual object recognition. Specifically, we design a new model called Deep Reconstruction-Classification Network (DRCN), which jointly learns a shar…

Authors: Muhammad Ghifary, W. Bastiaan Kleijn, Mengjie Zhang

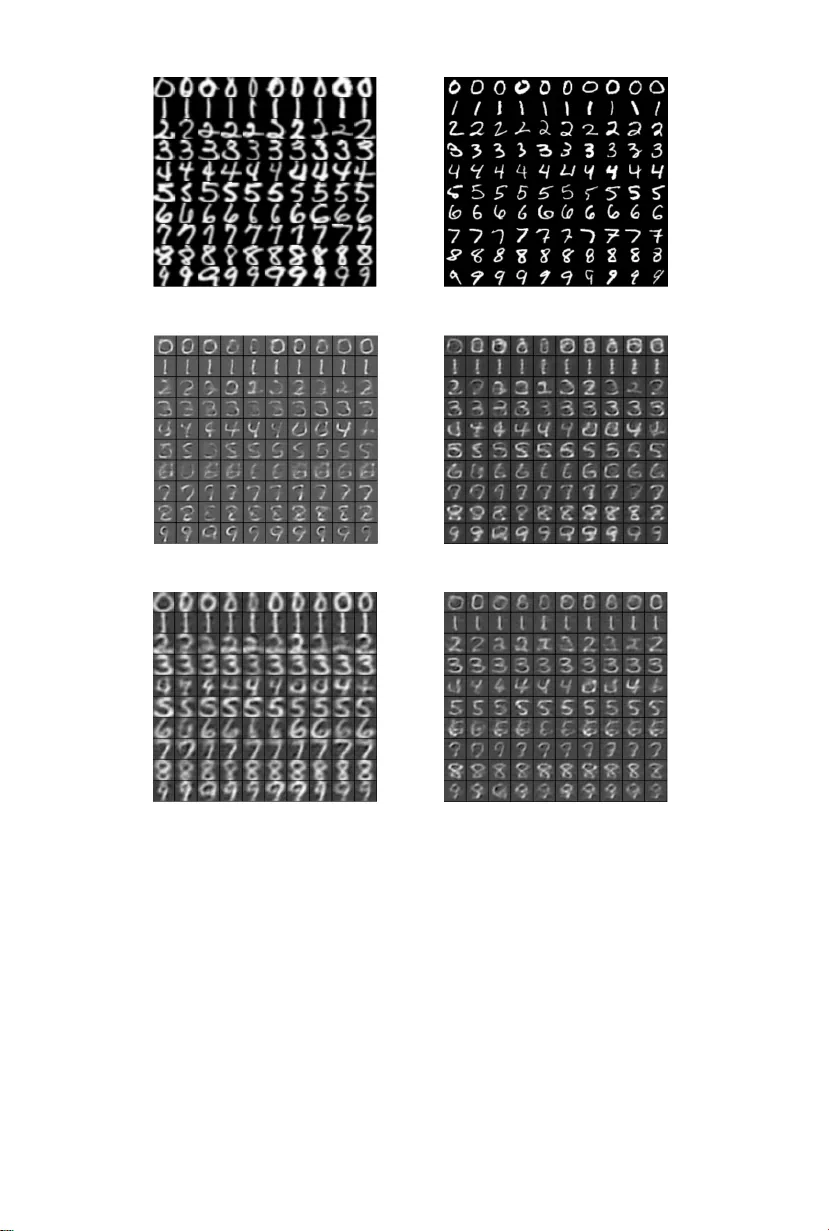

Muhammad Ghifary¹∗, W. Bastiaan Kleijn¹, Mengjie Zhang¹, David Balduzzi¹, Wen Li²

¹ Victoria University of Wellington, ² ETH Zürich

Abstract. In this paper, we propose a novel unsupervised domain adaptation algorithm based on deep learning for visual object recognition. Specifically, we design a new model called Deep Reconstruction-Classification Network (DRCN), which jointly learns a shared encoding representation for two tasks: i) supervised classification of labeled source data, and ii) unsupervised reconstruction of unlabeled target data. In this way, the learnt representation not only preserves discriminability, but also encodes useful information from the target domain. Our new DRCN model can be optimized by using backpropagation similarly as the standard neural networks.

We evaluate the performance of DRCN on a series of cross-domain object recognition tasks, where DRCN provides a considerable improvement (up to ∼8% in accuracy) over the prior state-of-the-art algorithms. Interestingly, we also observe that the reconstruction pipeline of DRCN transforms images from the source domain into images whose appearance resembles the target dataset. This suggests that DRCN's performance is due to constructing a single composite representation that encodes information about both the structure of target images and the classification of source images. Finally, we provide a formal analysis to justify the algorithm's objective in domain adaptation context.

Keywords: domain adaptation, object recognition, deep learning, convolutional networks, transfer learning

1 Introduction

An important task in visual object recognition is to design algorithms that are robust to dataset bias [1].
Dataset bias arises when labeled training instances are available from a source domain and test instances are sampled from a related, but different, target domain. For example, consider a person identification application in unmanned aerial vehicles (UAVs), which is essential for a variety of tasks, such as surveillance, people search, and remote monitoring [2]. One of the critical tasks is to identify people from a bird's-eye view; however, collecting labeled data from that viewpoint can be very challenging. It is more desirable that a UAV can be trained on some already available on-the-ground labeled images (source), e.g., photographs of people from social media, and then successfully applied to the actual UAV view (target). Traditional supervised learning algorithms typically perform poorly in this setting, since they assume that the training and test data are drawn from the same domain.

∗ Current affiliation: Weta Digital

Domain adaptation attempts to deal with dataset bias using unlabeled data from the target domain so that the task of manually labeling the target data can be reduced. Unlabeled target data provides auxiliary training information that should help algorithms generalize better on the target domain than using source data only. Successful domain adaptation algorithms have large practical value, since acquiring a huge amount of labels from the target domain is often expensive or impossible. Although domain adaptation has gained increasing attention in object recognition (see [3] for a recent overview), the problem remains essentially unsolved since model accuracy has yet to reach a level that is satisfactory for real-world applications. Another issue is that many existing algorithms require optimization procedures that do not scale well as the size of datasets increases [4,5,6,7,8,9,10].
Earlier algorithms were typically designed for relatively small datasets, e.g., the Office dataset [11].

We consider a solution based on learning representations or features from raw data. Ideally, the learned feature should model the label distribution as well as reduce the discrepancy between the source and target domains. We hypothesize that a possible way to approximate such a feature is by (supervised) learning of the source label distribution and (unsupervised) learning of the target data distribution. This is in the same spirit as multi-task learning in that learning auxiliary tasks can help the main task be learned better [12,13]. The goal of this paper is to develop an accurate, scalable multi-task feature learning algorithm in the context of domain adaptation.

Contribution: To achieve the goal stated above, we propose a new deep learning model for unsupervised domain adaptation. Deep learning algorithms are highly scalable since they run in linear time, can handle streaming data, and can be parallelized on GPUs. Indeed, deep learning has come to dominate object recognition in recent years [14,15].

We propose Deep Reconstruction-Classification Network (DRCN), a convolutional network that jointly learns two tasks: i) supervised source label prediction and ii) unsupervised target data reconstruction. The encoding parameters of the DRCN are shared across both tasks, while the decoding parameters are separated. The aim is that the learned label prediction function can perform well on classifying images in the target domain; the data reconstruction can thus be viewed as an auxiliary task to support the adaptation of the label prediction. Learning in DRCN alternates between unsupervised and supervised training, which is different from the standard pretraining-finetuning strategy [16,17].
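The parameter sharing described in the previous paragraph can be illustrated with a small sketch. It is not the authors' convolutional model: a single dense ReLU layer stands in for the shared encoder, and the sizes `d`, `n_feat`, `m` and all function names are hypothetical choices made only for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)
d, n_feat, m = 64, 16, 10  # hypothetical input, feature, and class sizes

theta_enc = rng.normal(0.0, 0.1, (d, n_feat))  # shared by both pipelines
theta_lab = rng.normal(0.0, 0.1, (n_feat, m))  # used by label prediction only
theta_dec = rng.normal(0.0, 0.1, (n_feat, d))  # used by reconstruction only

def encode(x):
    """Shared encoder: one dense ReLU layer (stand-in for the conv stack)."""
    return np.maximum(x @ theta_enc, 0.0)

def predict_labels(x):
    """Label-prediction pipeline: softmax over the encoded features."""
    logits = encode(x) @ theta_lab
    e = np.exp(logits - logits.max(axis=1, keepdims=True))
    return e / e.sum(axis=1, keepdims=True)

def reconstruct(x):
    """Reconstruction pipeline: linear decoder over the same encoded features."""
    return encode(x) @ theta_dec

x = rng.random((5, d))
probs, recon = predict_labels(x), reconstruct(x)
print(probs.shape, recon.shape)
```

Both pipelines call the same `encode`, so any update to `theta_enc` made for one task changes the features seen by the other, which is the coupling the model relies on.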
From experiments over a variety of cross-domain object recognition tasks, DRCN performs better than the state-of-the-art domain adaptation algorithm [18], with up to ∼8% accuracy gap. The DRCN learning strategy also provides a considerable improvement over the pretraining-finetuning strategy, indicating that it is more suitable for the unsupervised domain adaptation setting. We furthermore perform a visual analysis by reconstructing source images through the learned reconstruction function. It is found that the reconstructed outputs resemble the appearances of the target images, suggesting that the encoding representations are successfully adapted. Finally, we present a probabilistic analysis to show the relationship between the DRCN's learning objective and a semi-supervised learning framework [19], and also the soundness of considering only data from a target domain for the data reconstruction training.

2 Related Work

Domain adaptation is a large field of research, with related work under several names such as class imbalance [20], covariate shift [21], and sample selection bias [22]. In [23], it is considered as a special case of transfer learning. Earlier work on domain adaptation focused on text document analysis and NLP [24,25]. In recent years, it has gained a lot of attention in the computer vision community, mainly for object recognition applications; see [3] and references therein. The domain adaptation problem is often referred to as dataset bias in computer vision [1].

This paper is concerned with unsupervised domain adaptation in which labeled data from the target domain is not available [26]. A range of approaches along this line of research in object recognition have been proposed [4,5,27,28,29,30,9]; most were designed specifically for small datasets such as the Office dataset [11].
Furthermore, they usually operated on the SURF-based features [31] extracted from the raw pixels. In essence, the unsupervised domain adaptation problem remains open and needs more powerful solutions that are useful for practical situations.

Deep learning now plays a major role in the advancement of domain adaptation. An early attempt addressed large-scale sentiment classification [32], where the concatenated features from fully connected layers of stacked denoising autoencoders have been found to be domain-adaptive [33]. In visual recognition, a fully connected, shallow network pretrained by denoising autoencoders has shown a certain level of effectiveness [34]. It is widely known that deep convolutional networks (ConvNets) [35] are a more natural choice for visual recognition tasks and have achieved significant successes [36,14,15]. More recently, ConvNets pretrained on a large-scale dataset, ImageNet, have been shown to be reasonably effective for domain adaptation [14]. They provide significantly better performances than the SURF-based features on the Office dataset [37,38]. An earlier approach on using a convolutional architecture without pretraining on ImageNet, DLID, has also been explored [39] and performs better than the SURF-based features.

To further improve the domain adaptation performance, the pretrained ConvNets can be fine-tuned under a particular constraint related to minimizing a domain discrepancy measure [18,40,41,42]. Deep Domain Confusion (DDC) [41] utilizes the maximum mean discrepancy (MMD) measure [43] as an additional loss function for the fine-tuning to adapt the last fully connected layer. Deep Adaptation Network (DAN) [40] fine-tunes not only the last fully connected layer, but also some convolutional and fully connected layers underneath, and outperforms DDC.
Recently, the deep model proposed in [42] extends the idea of DDC by adding a criterion to guarantee the class alignment between different domains. However, it is limited only to the semi-supervised adaptation setting, where a small number of target labels can be acquired.

The algorithm proposed in [18], which we refer to as ReverseGrad, handles the domain invariance as a binary classification problem. It thus optimizes two contradictory objectives: i) minimizing label prediction loss and ii) maximizing domain classification loss via a simple gradient reversal strategy. ReverseGrad can be effectively applied both in the pretrained and randomly initialized deep networks. The randomly initialized model is also shown to perform well on cross-domain recognition tasks other than the Office benchmark, i.e., large-scale handwritten digit recognition tasks.

Our work in this paper is in a similar spirit to ReverseGrad in that it does not necessarily require pretrained deep networks to perform well on some tasks. However, our proposed method undertakes a fundamentally different learning algorithm: finding a good label classifier while simultaneously learning the structure of the target images.

3 Deep Reconstruction-Classification Networks

This section describes our proposed deep learning algorithm for unsupervised domain adaptation, which we refer to as Deep Reconstruction-Classification Networks (DRCN). We first briefly discuss the unsupervised domain adaptation problem. We then present the DRCN architecture, learning algorithm, and other useful aspects.

Let us define a domain as a probability distribution $D_{XY}$ (or just $D$) on $\mathcal{X} \times \mathcal{Y}$, where $\mathcal{X}$ is the input space and $\mathcal{Y}$ is the output space. Denote the source domain by $P$ and the target domain by $Q$, where $P \neq Q$. The aim in unsupervised domain adaptation is as follows: given a labeled i.i.d.
sample from a source domain $S^s = \{(x^s_i, y^s_i)\}_{i=1}^{n_s} \sim P$ and an unlabeled sample from a target domain $S^t_u = \{x^t_i\}_{i=1}^{n_t} \sim Q_X$, find a good labeling function $f: \mathcal{X} \to \mathcal{Y}$ on $S^t_u$. We consider a feature learning approach: finding a function $g: \mathcal{X} \to \mathcal{F}$ such that the discrepancy between distributions $P$ and $Q$ is minimized in $\mathcal{F}$.

Ideally, a discriminative representation should model both the label and the structure of the data. Based on that intuition, we hypothesize that a domain-adaptive representation should satisfy two criteria: i) classify well the source domain labeled data and ii) reconstruct well the target domain unlabeled data, which can be viewed as an approximation of the ideal discriminative representation. Our model is based on a convolutional architecture that has two pipelines with a shared encoding representation. The first pipeline is a standard convolutional network for source label prediction [35], while the second one is a convolutional autoencoder for target data reconstruction [44,45]. Convolutional architectures are a natural choice for object recognition to capture spatial correlation of images. The model is optimized through multitask learning [12], that is, it jointly learns the (supervised) source label prediction and the (unsupervised) target data reconstruction tasks.¹ The aim is that the shared encoding representation should learn the commonality between those tasks that provides useful information for cross-domain object recognition. Figure 1 illustrates the architecture of DRCN.

Fig. 1. Illustration of the DRCN's architecture. It consists of two pipelines: i) label prediction and ii) data reconstruction pipelines. The shared parameters between those two pipelines are indicated by the red color.

We now describe DRCN more formally.
Let $f_c: \mathcal{X} \to \mathcal{Y}$ be the (supervised) label prediction pipeline and $f_r: \mathcal{X} \to \mathcal{X}$ be the (unsupervised) data reconstruction pipeline of DRCN. Define three additional functions: 1) an encoder / feature mapping $g_{enc}: \mathcal{X} \to \mathcal{F}$, 2) a decoder $g_{dec}: \mathcal{F} \to \mathcal{X}$, and 3) a feature labeling $g_{lab}: \mathcal{F} \to \mathcal{Y}$. For $m$-class classification problems, the output of $g_{lab}$ usually forms an $m$-dimensional vector of real values in the range $[0, 1]$ that add up to 1, i.e., softmax output. Given an input $x \in \mathcal{X}$, one can decompose $f_c$ and $f_r$ such that

$$f_c(x) = (g_{lab} \circ g_{enc})(x), \tag{1}$$

$$f_r(x) = (g_{dec} \circ g_{enc})(x). \tag{2}$$

Let $\Theta_c = \{\Theta_{enc}, \Theta_{lab}\}$ and $\Theta_r = \{\Theta_{enc}, \Theta_{dec}\}$ denote the parameters of the supervised and unsupervised model. $\Theta_{enc}$ are shared parameters for the feature mapping $g_{enc}$. Note that $\Theta_{enc}$, $\Theta_{dec}$, $\Theta_{lab}$ may encode parameters of multiple layers. The goal is to seek a single feature mapping $g_{enc}$ model that supports both $f_c$ and $f_r$.

¹ The unsupervised convolutional autoencoder is not trained via the greedy layer-wise fashion, but only with the standard back-propagation over the whole pipeline.

Learning algorithm: The learning objective is as follows. Suppose the inputs lie in $\mathcal{X} \subseteq \mathbb{R}^d$ and their labels lie in $\mathcal{Y} \subseteq \mathbb{R}^m$. Let $\ell_c: \mathcal{Y} \times \mathcal{Y} \to \mathbb{R}$ and $\ell_r: \mathcal{X} \times \mathcal{X} \to \mathbb{R}$ be the classification and reconstruction loss respectively. Given labeled source sample $S^s = \{(x^s_i, y^s_i)\}_{i=1}^{n_s} \sim P$, where $y^s_i \in \{0, 1\}^m$ is a one-hot vector, and unlabeled target sample $S^t_u = \{x^t_j\}_{j=1}^{n_t} \sim Q_X$, we define the empirical losses as:

$$\mathcal{L}^{n_s}_c(\{\Theta_{enc}, \Theta_{lab}\}) := \sum_{i=1}^{n_s} \ell_c\big(f_c(x^s_i; \{\Theta_{enc}, \Theta_{lab}\}),\, y^s_i\big), \tag{3}$$

$$\mathcal{L}^{n_t}_r(\{\Theta_{enc}, \Theta_{dec}\}) := \sum_{j=1}^{n_t} \ell_r\big(f_r(x^t_j; \{\Theta_{enc}, \Theta_{dec}\}),\, x^t_j\big). \tag{4}$$

Typically, $\ell_c$ is of the form cross-entropy loss $-\sum_{k=1}^{m} y_k \log [f_c(x)]_k$ (recall that $f_c(x)$ is the softmax output) and $\ell_r$ is of the form squared loss $\|x - f_r(x)\|_2^2$.

Our aim is to solve the following objective:

$$\min \; \lambda \mathcal{L}^{n_s}_c(\{\Theta_{enc}, \Theta_{lab}\}) + (1 - \lambda) \mathcal{L}^{n_t}_r(\{\Theta_{enc}, \Theta_{dec}\}), \tag{5}$$

where $0 \le \lambda \le 1$ is a hyper-parameter controlling the trade-off between classification and reconstruction. The objective is a convex combination of supervised and unsupervised loss functions. We justify the approach in Section 5.

Objective (5) can be achieved by alternately minimizing $\mathcal{L}^{n_s}_c$ and $\mathcal{L}^{n_t}_r$ using stochastic gradient descent (SGD). In the implementation, we used RMSprop [46], the variant of SGD with a gradient normalization: the current gradient is divided by the root of a moving average of the previous squared gradients. We utilize dropout regularization [47] during $\mathcal{L}^{n_s}_c$ minimization, which is effective to reduce overfitting. Note that dropout regularization is applied in the fully-connected/dense layers only, see Figure 1.

The stopping criterion for the algorithm is determined by monitoring the average reconstruction loss of the unsupervised model during training: the process is stopped when the average reconstruction loss stabilizes. Once the training is completed, the optimal parameters $\hat{\Theta}_{enc}$ and $\hat{\Theta}_{lab}$ are used to form a classification model $f_c(x^t; \{\hat{\Theta}_{enc}, \hat{\Theta}_{lab}\})$ that is expected to perform well on the target domain. The DRCN learning algorithm is summarized in Algorithm 1 and implemented using Theano [48].

Data augmentation and denoising: We use two well-known strategies to improve DRCN's performance: data augmentation and denoising. Data augmentation generates additional training data during the supervised training with respect to some plausible transformations over the original data, which improves generalization, see e.g. [49].
Denoising involves reconstructing clean inputs given their noisy counterparts. It is used to improve the feature invariance of denoising autoencoders (DAE) [33]. Generalization and feature invariance are two properties needed to improve domain adaptation. Since DRCN has both classification and reconstruction aspects, we can naturally apply these two tricks simultaneously in the training stage.

Let $Q_{\tilde{X}|X}$ denote the noise distribution, given the original data, from which the noisy data are sampled. The classification pipeline of DRCN $f_c$ thus actually observes additional pairs $\{(\tilde{x}^s_i, y^s_i)\}_{i=1}^{n_s}$ and the reconstruction pipeline $f_r$ observes $\{(\tilde{x}^t_i, x^t_i)\}_{i=1}^{n_t}$. The noise distribution $Q_{\tilde{X}|X}$ typically consists of geometric transformations (translation, rotation, skewing, and scaling) in data augmentation, while either zero-masked noise or Gaussian noise is used in the denoising strategy. In this work, we combine all the aforementioned types of noise for denoising and use only the geometric transformations for data augmentation.

Algorithm 1. The Deep Reconstruction-Classification Network (DRCN) learning algorithm.

Input:
• Labeled source data: $S^s = \{(x^s_i, y^s_i)\}_{i=1}^{n_s}$;
• Unlabeled target data: $S^t_u = \{x^t_j\}_{j=1}^{n_t}$;
• Learning rates: $\alpha_c$ and $\alpha_r$;

1: Initialize parameters $\Theta_{enc}$, $\Theta_{dec}$, $\Theta_{lab}$
2: while not stop do
3:   for each source batch of size $m_s$ do
4:     Do a forward pass according to (1);
5:     Let $\Theta_c = \{\Theta_{enc}, \Theta_{lab}\}$. Update $\Theta_c$: $\Theta_c \leftarrow \Theta_c - \alpha_c \lambda \nabla_{\Theta_c} \mathcal{L}^{m_s}_c(\Theta_c)$;
6:   end for
7:   for each target batch of size $m_t$ do
8:     Do a forward pass according to (2);
9:     Let $\Theta_r = \{\Theta_{enc}, \Theta_{dec}\}$. Update $\Theta_r$: $\Theta_r \leftarrow \Theta_r - \alpha_r (1 - \lambda) \nabla_{\Theta_r} \mathcal{L}^{m_t}_r(\Theta_r)$.
10:   end for
11: end while

Output:
• DRCN learnt parameters: $\hat{\Theta} = \{\hat{\Theta}_{enc}, \hat{\Theta}_{dec}, \hat{\Theta}_{lab}\}$;
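The alternating updates of Algorithm 1 can be sketched on toy data as follows. This is a minimal illustration under several assumptions that differ from the setup described above: one dense ReLU layer replaces the convolutional encoder, plain SGD replaces RMSprop, dropout and geometric augmentation are omitted, a fixed number of epochs replaces the monitored stopping criterion, and the data, noise levels (`std`, `mask_prob`), sizes, and function names are all hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)

def relu(z):
    return np.maximum(z, 0.0)

def softmax(z):
    e = np.exp(z - z.max(axis=1, keepdims=True))
    return e / e.sum(axis=1, keepdims=True)

def corrupt(x, std=0.1, mask_prob=0.2):
    """Gaussian noise followed by zero-masking (a stand-in for the noise distribution)."""
    noisy = x + rng.normal(0.0, std, x.shape)
    return noisy * (rng.random(x.shape) >= mask_prob)

def classification_step(p, x, y, lr, lam):
    """Step 5 of Algorithm 1: update {enc, lab} on one labeled source batch."""
    a = x @ p["enc"]
    h = relu(a)
    probs = softmax(h @ p["lab"])
    loss = -np.mean(np.sum(y * np.log(probs + 1e-12), axis=1))
    d_logits = (probs - y) / len(x)            # gradient of mean cross-entropy
    g_lab = h.T @ d_logits
    g_enc = x.T @ ((d_logits @ p["lab"].T) * (a > 0))
    p["lab"] -= lr * lam * g_lab
    p["enc"] -= lr * lam * g_enc
    return loss

def reconstruction_step(p, x, lr, lam):
    """Step 9 of Algorithm 1: update {enc, dec} on one unlabeled target batch."""
    x_noisy = corrupt(x)
    a = x_noisy @ p["enc"]
    h = relu(a)
    diff = h @ p["dec"] - x                    # target is the clean input
    loss = np.mean(np.sum(diff ** 2, axis=1))
    d_out = 2.0 * diff / len(x)                # gradient of mean squared loss
    g_dec = h.T @ d_out
    g_enc = x_noisy.T @ ((d_out @ p["dec"].T) * (a > 0))
    p["dec"] -= lr * (1.0 - lam) * g_dec
    p["enc"] -= lr * (1.0 - lam) * g_enc
    return loss

def batches(n, size):
    idx = rng.permutation(n)
    return [idx[i:i + size] for i in range(0, n, size)]

# Toy domains: two Gaussian classes; the target is a shifted copy of the source.
d, n_hidden, n_classes, n = 8, 16, 2, 200
centers = np.array([np.ones(d), -np.ones(d)])
labels = rng.integers(0, n_classes, n)
x_src = centers[labels] + 0.3 * rng.normal(size=(n, d))
y_src = np.eye(n_classes)[labels]
x_tgt = centers[rng.integers(0, n_classes, n)] + 0.3 * rng.normal(size=(n, d)) + 0.5

params = {"enc": rng.normal(0.0, 0.1, (d, n_hidden)),
          "lab": rng.normal(0.0, 0.1, (n_hidden, n_classes)),
          "dec": rng.normal(0.0, 0.1, (n_hidden, d))}
lr, lam, history = 0.02, 0.5, []
for epoch in range(50):                        # fixed budget instead of a stopping rule
    c = [classification_step(params, x_src[b], y_src[b], lr, lam) for b in batches(n, 20)]
    r = [reconstruction_step(params, x_tgt[b], lr, lam) for b in batches(n, 20)]
    history.append((np.mean(c), np.mean(r)))

src_pred = softmax(relu(x_src @ params["enc"]) @ params["lab"]).argmax(axis=1)
src_acc = np.mean(src_pred == labels)
print(history[0], history[-1], src_acc)
```

Each step scales its gradient by $\lambda$ or $1 - \lambda$ as in the update rules of Algorithm 1, and only `params["enc"]` is touched by both steps, so it is the only place where the two tasks interact.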
4 Experiments and Results

This section reports the evaluation results of DRCN. It is divided into two parts. The first part focuses on the evaluation on large-scale datasets popular with deep learning methods, while the second part summarizes the results on the Office dataset [11].

4.1 Experiment I: SVHN, MNIST, USPS, CIFAR, and STL

The first set of experiments investigates the empirical performance of DRCN on five widely used benchmarks: MNIST [35], USPS [50], Street View House Numbers (SVHN) [51], CIFAR [52], and STL [53]; see the corresponding references for more detailed configurations. The task is to perform cross-domain recognition: taking the training set from one dataset as the source domain and the test set from another dataset as the target domain. We evaluate our algorithm's recognition accuracy over three cross-domain pairs: 1) MNIST vs USPS, 2) SVHN vs MNIST, and 3) CIFAR vs STL.

MNIST (mn) vs USPS (us) contains 2D grayscale handwritten digit images of 10 classes. We preprocessed them as follows. USPS images were rescaled into 28 × 28 and pixels were normalized to [0, 1] values. From this pair, two cross-domain recognition tasks were performed: mn → us and us → mn.

In SVHN (sv) vs MNIST (mn) pair, MNIST images were rescaled to 32 × 32 and SVHN images were grayscaled. The [0, 1] normalization was then applied to all images. Note that we did not preprocess SVHN images using local contrast normalization as in [54]. We evaluated our algorithm on sv → mn and mn → sv cross-domain recognition tasks.

STL (st) vs CIFAR (ci) consists of RGB images that share eight object classes: airplane, bird, cat, deer, dog, horse, ship, and truck, which forms 4,000 (train) and 6,400 (test) images for STL, and 40,000 (train) and 8,000 (test) images for CIFAR.
STL images were rescaled to 32 × 32 and pixels were standardized into zero-mean and unit-variance. Our algorithm was evaluated on two cross-domain tasks, that is, st → ci and ci → st.

The architecture and learning setup: The DRCN architecture used in the experiments is adopted from [44]. The label prediction pipeline has three convolutional layers: 100 5x5 filters (conv1), 150 5x5 filters (conv2), and 200 3x3 filters (conv3) respectively, two max-pooling layers of size 2x2 after the first and the second convolutional layers (pool1 and pool2), and three fully-connected layers (fc4, fc5, and fc_out), where fc_out is the output layer. The number of neurons in fc4 or fc5 was treated as a tunable hyper-parameter in the range of [300, 350, ..., 1000], chosen according to the best performance on the validation set. The shared encoder $g_{enc}$ has thus a configuration of conv1-pool1-conv2-pool2-conv3-fc4-fc5. Furthermore, the configuration of the decoder $g_{dec}$ is the inverse of that of $g_{enc}$. Note that the unpooling operation in $g_{dec}$ performs by upsampling-by-duplication: inserting the pooled values in the appropriate locations in the feature maps, with the remaining elements being the same as the pooled values. We employ ReLU activations [55] in all hidden layers and linear activations in the output layer of the reconstruction pipeline. Updates in both classification and reconstruction tasks were computed via RMSprop with learning rate of $10^{-4}$ and moving average decay of 0.9. The control penalty $\lambda$ was selected according to accuracy on the source validation data; typically, the optimal value was in the range [0.4, 0.7].

Benchmark algorithms: We compare DRCN with the following methods.
1) ConvNet_src: a supervised convolutional network trained on the labeled source domain only, with the same network configuration as that of DRCN's label prediction pipeline,
2) SCAE: ConvNet preceded by the layer-wise pretraining of stacked convolutional autoencoders on all unlabeled data [44],
3) SCAE_t: similar to SCAE, but only unlabeled data from the target domain are used during pretraining,
4) SDA_sh [32]: the deep network with three fully connected layers, which is a successful domain adaptation model for sentiment classification,
5) Subspace Alignment (SA) [27],² and
6) ReverseGrad [18]: a recently published domain adaptation model based on deep convolutional networks that provides the state-of-the-art performance.

All deep learning based models above have the same architecture as DRCN for the label predictor. For ReverseGrad, we also evaluated the "original architecture" devised in [18] and chose whichever performed better of the original architecture or our architecture. Finally, we applied the data augmentation to all models similarly to DRCN. The ground-truth model is also evaluated, that is, a convolutional network trained from and tested on images from the target domain only (ConvNet_tgt), to measure the difference between the cross-domain performance and the ideal performance.

Classification accuracy: Table 1 summarizes the cross-domain recognition accuracy (mean ± std) of all algorithms over ten independent runs. DRCN performs best in all but one cross-domain tasks, better than the prior state-of-the-art ReverseGrad. Notably on the sv → mn task, DRCN outperforms ReverseGrad with ∼8% accuracy gap. DRCN also provides a considerable improvement over ReverseGrad (∼5%) on the reverse task, mn → sv, but the gap to the groundtruth is still large; this case was also mentioned in previous work as a failed case [18].
In the case of ci → st, the performance of DRCN almost matches the performance of the target baseline.

DRCN also convincingly outperforms the greedy-layer pretraining-based algorithms (SDA_sh, SCAE, and SCAE_t). This indicates the effectiveness of the simultaneous reconstruction-classification training strategy over the standard pretraining-finetuning in the context of domain adaptation.

Table 1. Accuracy (mean ± std %) on six cross-domain recognition tasks over ten independent runs. Bold and underline indicate the best and second best domain adaptation performance. ConvNet_tgt denotes the ground-truth model: training and testing on the target domain only.

| Methods | mn → us | us → mn | sv → mn | mn → sv | st → ci | ci → st |
|---|---|---|---|---|---|---|
| ConvNet_src | 85.55 ± 0.12 | 65.77 ± 0.06 | 62.33 ± 0.09 | 25.95 ± 0.04 | 54.17 ± 0.21 | 63.61 ± 0.17 |
| SDA_sh [32] | 43.14 ± 0.16 | 37.30 ± 0.12 | 55.15 ± 0.08 | 8.23 ± 0.11 | 35.82 ± 0.07 | 42.27 ± 0.12 |
| SA [27] | 85.89 ± 0.13 | 51.54 ± 0.06 | 63.17 ± 0.07 | 28.52 ± 0.10 | 54.04 ± 0.19 | 62.88 ± 0.15 |
| SCAE [44] | 85.78 ± 0.08 | 63.11 ± 0.04 | 60.02 ± 0.16 | 27.12 ± 0.08 | 54.25 ± 0.13 | 62.18 ± 0.04 |
| SCAE_t [44] | 86.24 ± 0.11 | 65.37 ± 0.03 | 65.57 ± 0.09 | 27.57 ± 0.13 | 54.68 ± 0.08 | 61.94 ± 0.06 |
| ReverseGrad [18] | <u>91.11 ± 0.07</u> | **74.01 ± 0.05** | <u>73.91 ± 0.07</u> | <u>35.67 ± 0.04</u> | <u>56.91 ± 0.05</u> | <u>66.12 ± 0.08</u> |
| DRCN | **91.80 ± 0.09** | <u>73.67 ± 0.04</u> | **81.97 ± 0.16** | **40.05 ± 0.07** | **58.86 ± 0.07** | **66.37 ± 0.10** |
| ConvNet_tgt | 96.12 ± 0.07 | 98.67 ± 0.04 | 98.67 ± 0.04 | 91.52 ± 0.05 | 78.81 ± 0.11 | 66.50 ± 0.07 |

² The setup follows one in [18]: the inputs to SA are the last hidden layer activation values of ConvNet_src.

Comparison of different DRCN flavors: Recall that DRCN uses only the unlabeled target images for the unsupervised reconstruction training. To verify the importance of this strategy, we further compare different flavors of DRCN: DRCN_s and DRCN_st.
Those algorithms are conceptually the same and differ only in how they use the unlabeled images during the unsupervised training. DRCN_s uses only unlabeled source images, whereas DRCN_st combines both unlabeled source and target images.

The experimental results in Table 2 confirm that DRCN always performs better than DRCN_s and DRCN_st. While DRCN_st occasionally outperforms ReverseGrad, its overall performance does not compete with that of DRCN. The only case where DRCN_s and DRCN_st flavors can closely match DRCN is on mn → us. This suggests that the use of unlabeled source data during the reconstruction training does not contribute much to the cross-domain generalization, which verifies the DRCN strategy in using the unlabeled target data only.

Table 2. Accuracy (%) of DRCN_s and DRCN_st.

| Methods | mn → us | us → mn | sv → mn | mn → sv | st → ci | ci → st |
|---|---|---|---|---|---|---|
| DRCN_s | 89.92 ± 0.12 | 65.96 ± 0.07 | 73.66 ± 0.04 | 34.29 ± 0.09 | 55.12 ± 0.12 | 63.02 ± 0.06 |
| DRCN_st | 91.15 ± 0.05 | 68.64 ± 0.05 | 75.88 ± 0.09 | 37.77 ± 0.06 | 55.26 ± 0.06 | 64.55 ± 0.13 |
| DRCN | 91.80 ± 0.09 | 73.67 ± 0.04 | 81.97 ± 0.16 | 40.05 ± 0.07 | 58.86 ± 0.07 | 66.37 ± 0.10 |

Data reconstruction: A useful insight was found when reconstructing source images through the reconstruction pipeline of DRCN. Specifically, we observe the visual appearance of $f_r(x^s_1), \ldots, f_r(x^s_m)$, where $x^s_1, \ldots, x^s_m$ are some images from the source domain. Note that $x^s_1, \ldots, x^s_m$ are unseen during the unsupervised reconstruction training in DRCN. We visualize such a reconstruction in the case of sv → mn training in Figure 2. Figure 2(a) and 2(b) display the original source (SVHN) and target (MNIST) images.

The main finding of this observation is depicted in Figure 2(c): the reconstructed images produced by DRCN given some SVHN images as the source inputs.
We found that the reconstructed SVHN images resemble MNIST-like digit appearances, with white stroke and black background, see Figure 2(b). Remarkably, DRCN still can produce "correct" reconstructions of some noisy SVHN images. For example, all SVHN digits 3 displayed in Figure 2(a) are clearly reconstructed by DRCN, see the fourth row of Figure 2(c). DRCN tends to pick only the digit in the middle and ignore the remaining digits. This may explain the superior cross-domain recognition performance of DRCN on this task. However, such a cross-reconstruction appearance does not happen in the reverse task, mn → sv, which may be an indicator for the low accuracy relative to the groundtruth performance.

We also conduct such a diagnostic reconstruction on other algorithms that have the reconstruction pipeline. Figure 2(d) depicts the reconstructions of the SVHN images produced by ConvAE trained on the MNIST images only. They do not appear to be digits, suggesting that ConvAE recognizes the SVHN images as noise. Figure 2(e) shows the reconstructed SVHN images produced by DRCN_st. We can see that they look almost identical to the source images shown in Figure 2(a), which is not surprising since the source images are included during the reconstruction training.

Fig. 2. Data reconstruction after training from SVHN → MNIST. Panels: (a) Source (SVHN), (b) Target (MNIST), (c) DRCN, (d) ConvAE, (e) DRCN_st, (f) ConvAE+ConvNet. Fig. (a)-(b) show the original input pixels, and (c)-(f) depict the reconstructed source images (SVHN). The reconstruction of DRCN appears to be MNIST-like digits, see the main text for a detailed explanation.

Finally, we evaluated the reconstruction induced by ConvNet_src to observe the difference with the reconstruction of DRCN. Specifically, we trained ConvAE on the MNIST images in which the encoding parameters were initialized from those of ConvNet_src and not updated during training. We refer to the model as ConvAE+ConvNet_src. The reconstructed images are visualized in Figure 2(f). Although they resemble the style of MNIST images as in the DRCN's case, only a few source images are correctly reconstructed.

To summarize, the results from this diagnostic data reconstruction correlate with the cross-domain recognition performance. More visualization on other cross-domain cases can be found in the Supplemental materials.

4.2 Experiments II: Office dataset

In the second experiment, we evaluated DRCN on the standard domain adaptation benchmark for visual object recognition, Office [11], which consists of three different domains: amazon (a), dslr (d), and webcam (w). Office has 2817 labeled images in total distributed across 31 object categories. The number of images is thus relatively small compared to the previously used datasets.

We applied the DRCN algorithm to finetune AlexNet [14], as was done with different methods in previous work [18,40,41].³ The fine-tuning was performed only on the fully connected layers of AlexNet, fc6 and fc7, and the last convolutional layer, conv5. Specifically, the label prediction pipeline of DRCN contains conv4-conv5-fc6-fc7-label and the data reconstruction pipeline has conv4-conv5-fc6-fc7-fc6′-conv5′-conv4′ (the ′ denotes the inverse layer); it thus does not reconstruct the original input pixels. The learning rate was selected following the strategy devised in [40]: cross-validating the base learning rate between $10^{-5}$ and $10^{-2}$ with a multiplicative step-size $10^{1/2}$.

We followed the standard unsupervised domain adaptation training protocol used in previous work [39,7,40], that is, using all labeled source data and unlabeled target data.
Table 3 summarizes the performance accuracy of DRCN based on that protocol in comparison to the state-of-the-art algorithms. We found that DRCN is competitive against DAN and ReverseGrad: the performance is either the best or the second best except for one case. In particular, DRCN performs best with a convincing gap in situations when the target domain has relatively many data, i.e., amazon as the target dataset.

Table 3. Accuracy (mean ± std %) on the Office dataset with the standard unsupervised domain adaptation protocol used in [7,39].

| Method | a → w | w → a | a → d | d → a | w → d | d → w |
|---|---|---|---|---|---|---|
| DDC [41] | 61.8 ± 0.4 | 52.2 ± 0.4 | 64.4 ± 0.3 | 52.1 ± 0.8 | 98.5 ± 0.4 | 95.0 ± 0.5 |
| DAN [40] | 68.5 ± 0.4 | 53.1 ± 0.3 | 67.0 ± 0.4 | 54.0 ± 0.4 | 99.0 ± 0.2 | 96.0 ± 0.3 |
| ReverseGrad [18] | 72.6 ± 0.3 | 52.7 ± 0.2 | 67.1 ± 0.3 | 54.5 ± 0.4 | 99.2 ± 0.3 | 96.4 ± 0.1 |
| DRCN | 68.7 ± 0.3 | 54.9 ± 0.5 | 66.8 ± 0.5 | 56.0 ± 0.5 | 99.0 ± 0.2 | 96.4 ± 0.3 |

³ Recall that AlexNet consists of five convolutional layers, conv1, ..., conv5, and three fully connected layers: fc6, fc7, and fc8/output.

5 Analysis

This section provides a first step towards a formal analysis of the DRCN algorithm. We demonstrate that optimizing (5) in DRCN relates to solving a semi-supervised learning problem on the target domain according to a framework proposed in [19]. The analysis suggests that unsupervised training using only unlabeled target data is sufficient. That is, adding unlabeled source data might not further improve domain adaptation.

Denote the labeled and unlabeled distributions as $D_{XY} =: D$ and $D_X$, respectively. Let $P^{\theta}(\cdot)$ refer to a family of models, parameterized by $\theta \in \Theta$, that is used to learn a maximum likelihood estimator. The DRCN learning algorithm for domain adaptation tasks can be interpreted probabilistically by assuming that $P^{\theta}(x)$ is Gaussian and $P^{\theta}(y \mid x)$ is a multinomial distribution, fit by logistic regression.
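To make these modelling assumptions concrete, the two log-likelihoods can be written out explicitly. This is a sketch under assumed notation rather than part of the original analysis: $f_r(\tilde{x})$ stands for the autoencoder's reconstruction from a corrupted input $\tilde{x}$, $f_c(x)$ for the softmax output of the classifier, $\sigma^2$ for a fixed isotropic variance, $d$ for the input dimension, and $K$ for the number of classes.

$$
\log P^{\theta}_{X \mid \tilde{X}}(x \mid \tilde{x}) = -\frac{1}{2\sigma^{2}} \left\lVert x - f_r(\tilde{x}) \right\rVert_2^{2} - \frac{d}{2} \log\left(2\pi\sigma^{2}\right),
\qquad
\log P^{\theta}_{Y \mid X}(y \mid x) = \sum_{k=1}^{K} \mathbb{1}[y = k] \, \log \left[ f_c(x) \right]_k .
$$

Under these assumptions, maximizing the Gaussian term amounts to minimizing a squared reconstruction error, and maximizing the multinomial term amounts to minimizing the cross-entropy loss, which is why a weighted sum of the two losses can be read as a weighted log-likelihood.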
The objective in Eq. (5) is equivalent to the following maximum likelihood estimate:

$$
\hat{\theta} = \operatorname*{argmax}_{\theta} \; \lambda \sum_{i=1}^{n_s} \log P^{\theta}_{Y \mid X}(y^{s}_{i} \mid x^{s}_{i}) + (1 - \lambda) \sum_{j=1}^{n_t} \log P^{\theta}_{X \mid \tilde{X}}(x^{t}_{j} \mid \tilde{x}^{t}_{j}), \tag{6}
$$

where $\tilde{x}$ is the noisy input generated from $Q_{\tilde{X} \mid X}$. The first term represents the model learned by the supervised convolutional network and the second term represents the model learned by the unsupervised convolutional autoencoder. Note that the discriminative model only observes labeled data from the source distribution $P_X$ in objectives (5) and (6).

We now recall a semi-supervised learning problem formulated in [19]. Suppose that labeled and unlabeled samples are taken from the target domain $Q$ with probabilities $\lambda$ and $(1 - \lambda)$, respectively. By Theorem 5.1 in [19], the maximum likelihood estimate $\zeta$ is

$$
\zeta = \operatorname*{argmax}_{\zeta} \; \lambda \, \mathbb{E}_{Q}\left[\log P^{\zeta}(x, y)\right] + (1 - \lambda) \, \mathbb{E}_{Q_X}\left[\log P^{\zeta}_{X}(x)\right]. \tag{7}
$$

The theorem holds under the following assumptions: consistency, i.e., the model contains the true distribution, so the MLE is consistent; and smoothness and measurability [56]. Given target data $(x^{t}_{1}, y^{t}_{1}), \ldots, (x^{t}_{n_t}, y^{t}_{n_t}) \sim Q$, the parameter $\zeta$ can be estimated as follows:

$$
\hat{\zeta} = \operatorname*{argmax}_{\zeta} \; \lambda \sum_{i=1}^{n_t} \left[\log P^{\zeta}(x^{t}_{i}, y^{t}_{i})\right] + (1 - \lambda) \sum_{i=1}^{n_t} \left[\log P^{\zeta}_{X}(x^{t}_{i})\right]. \tag{8}
$$

Unfortunately, $\hat{\zeta}$ cannot be computed in the unsupervised domain adaptation setting since we do not have access to target labels.

Next we inspect a certain condition where $\hat{\theta}$ and $\hat{\zeta}$ are closely related. Firstly, by the covariate shift assumption [21], $P \neq Q$ and $P_{Y \mid X} = Q_{Y \mid X}$, the first term in (7) can be switched from an expectation over target samples to an expectation over source samples:

$$
\mathbb{E}_{Q}\left[\log P^{\zeta}(x, y)\right] = \mathbb{E}_{P}\left[\frac{Q_X(x)}{P_X(x)} \cdot \log P^{\zeta}(x, y)\right]. \tag{9}
$$

Secondly, it was shown in [57] that $P^{\theta}_{X \mid \tilde{X}}(x \mid \tilde{x})$, see the second term in (6), defines an ergodic Markov chain whose asymptotic marginal distribution of $X$ converges to the data-generating distribution $P_X$. Hence, Eq. (8) can be rewritten as

$$
\hat{\zeta} \approx \operatorname*{argmax}_{\zeta} \; \lambda \sum_{i=1}^{n_s} \frac{Q_X(x^{s}_{i})}{P_X(x^{s}_{i})} \log P^{\zeta}(x^{s}_{i}, y^{s}_{i}) + (1 - \lambda) \sum_{j=1}^{n_t} \left[\log P^{\zeta}_{X \mid \tilde{X}}(x^{t}_{j} \mid \tilde{x}^{t}_{j})\right]. \tag{10}
$$

The above objective differs from objective (6) only in the first term. Notice that $\hat{\zeta}$ would be approximately equal to $\hat{\theta}$ if the ratio $\frac{Q_X(x^{s}_{i})}{P_X(x^{s}_{i})}$ is constant for all $x^{s}$. In fact, it becomes the objective of DRCN$_{st}$. Although the constant ratio assumption is too strong to hold in practice, comparing (6) and (10) suggests that $\hat{\zeta}$ can be a reasonable approximation to $\hat{\theta}$.

Finally, we argue that using unlabeled source samples during the unsupervised training may not further contribute to domain adaptation. To see this, we expand the first term of (10) as follows:

$$
\lambda \sum_{i=1}^{n_s} \frac{Q_X(x^{s}_{i})}{P_X(x^{s}_{i})} \log P^{\zeta}_{Y \mid X}(y^{s}_{i} \mid x^{s}_{i}) + \lambda \sum_{i=1}^{n_s} \frac{Q_X(x^{s}_{i})}{P_X(x^{s}_{i})} \log P^{\zeta}_{X}(x^{s}_{i}).
$$

Observe the second term above. As $n_s \to \infty$, $P^{\theta}_{X}$ will converge to $P_X$. Hence, since $\int_{x \sim P_X} \frac{Q_X(x)}{P_X(x)} \log P_X(x) \leq \int_{x \sim P_X} P^{t}_{X}(x)$, adding more unlabeled source data will only result in a constant. This implies an optimization procedure equivalent to (6), which may explain the uselessness of unlabeled source data in the context of domain adaptation.

Note that the latter analysis does not necessarily imply that incorporating unlabeled source data degrades the performance. The fact that DRCN$_{st}$ performs worse than DRCN could be due to, e.g., the model capacity, which depends on the choice of the architecture.

6 Conclusions

We have proposed Deep Reconstruction-Classification Network (DRCN), a novel model for unsupervised domain adaptation in object recognition.
The model performs multitask learning, i.e., alternately learning (source) label prediction and (target) data reconstruction using a shared encoding representation. We have shown that DRCN provides a considerable improvement for some cross-domain recognition tasks over the state-of-the-art model. It also performs better than deep models trained using the standard pretraining-finetuning approach. A useful insight into the effectiveness of the learned DRCN can be obtained from its data reconstruction. The appearance of DRCN's reconstructed source images resembles that of the target images, which indicates that DRCN learns the domain correspondence. We also provided a theoretical analysis relating the DRCN algorithm to semi-supervised learning. The analysis was used to support the strategy of involving only the unlabeled target data when learning the reconstruction task.

References

1. Torralba, A., Efros, A.A.: Unbiased Look at Dataset Bias. In: CVPR. (2011) 1521–1528
2. Hsu, H.J., Chen, K.T.: Face recognition on drones: Issues and limitations. In: Proceedings of ACM DroNet 2015. (2015)
3. Patel, V.M., Gopalan, R., Li, R., Chellappa, R.: Visual domain adaptation: A survey of recent advances. IEEE Signal Processing Magazine 32(3) (2015) 53–69
4. Aljundi, R., Emonet, R., Muselet, D., Sebban, M.: Landmarks-Based Kernelized Subspace Alignment for Unsupervised Domain Adaptation. In: CVPR. (2015)
5. Baktashmotlagh, M., Harandi, M.T., Lovell, B.C., Salzmann, M.: Unsupervised Domain Adaptation by Domain Invariant Projection. In: ICCV. (2013) 769–776
6. Bruzzone, L., Marconcini, M.: Domain Adaptation Problems: A DASVM Classification Technique and a Circular Validation Strategy. IEEE TPAMI 32(5) (2010) 770–787
7. Gong, B., Grauman, K., Sha, F.: Connecting the Dots with Landmarks: Discriminatively Learning Domain-Invariant Features for Unsupervised Domain Adaptation. In: ICML. (2013)
8. Long, M., Ding, G., Wang, J., Sun, J., Guo, Y., Yu, P.S.: Transfer Sparse Coding for Robust Image Representation. In: CVPR. (2013) 404–414
9. Long, M., Wang, J., Ding, G., Sun, J., Yu, P.S.: Transfer Joint Matching for Unsupervised Domain Adaptation. In: CVPR. (2014) 1410–1417
10. Pan, S.J., Tsang, I.W.H., Kwok, J.T., Yang, Q.: Domain adaptation via transfer component analysis. IEEE Trans. Neural Networks 22(2) (2011) 199–210
11. Saenko, K., Kulis, B., Fritz, M., Darrell, T.: Adapting Visual Category Models to New Domains. In: ECCV. (2010) 213–226
12. Caruana, R.: Multitask Learning. Machine Learning 28 (1997) 41–75
13. Argyriou, A., Evgeniou, T., Pontil, M.: Multi-task Feature Learning. In: Advances in Neural Information Processing Systems 19. (2006) 41–48
14. Krizhevsky, A., Sutskever, I., Hinton, G.E.: ImageNet Classification with Deep Convolutional Neural Networks. In: NIPS. Volume 25. (2012) 1106–1114
15. Simonyan, K., Zisserman, A.: Very deep convolutional networks for large-scale image recognition. In: ICLR. (2015)
16. Hinton, G.E., Osindero, S.: A fast learning algorithm for deep belief nets. Neural Computation 18(7) (2006) 1527–1554
17. Bengio, Y., Lamblin, P., Popovici, D., Larochelle, H.: Greedy Layer-Wise Training of Deep Networks. In: NIPS. Volume 19. (2007) 153–160
18. Ganin, Y., Lempitsky, V.S.: Unsupervised domain adaptation by backpropagation. In: ICML. (2015) 1180–1189
19. Cohen, I., Cozman, F.G.: Risks of semi-supervised learning: how unlabeled data can degrade performance of generative classifiers. In: Semi-Supervised Learning. MIT Press (2006)
20. Japkowicz, N., Stephen, S.: The class imbalance problem: A systematic study. Intelligent Data Analysis 6(5) (2002) 429–450
21. Shimodaira, H.: Improving predictive inference under covariate shift by weighting the log-likelihood function. Journal of Statistical Planning and Inference 90(2) (2000) 227–244
22. Zadrozny, B.: Learning and evaluating classifiers under sample selection bias. In: Proceedings of the 21st Annual International Conference on Machine Learning. (2004) 114–121
23. Pan, S.J., Yang, Q.: A Survey on Transfer Learning. IEEE Transactions on Knowledge and Data Engineering 22(10) (2010) 1345–1359
24. Blitzer, J., McDonald, R., Pereira, F.: Domain Adaptation with Structural Correspondence Learning. In: Proceedings of the 2006 Conference on Empirical Methods in Natural Language Processing. (2006) 120–128
25. Daumé III, H.: Frustratingly Easy Domain Adaptation. In: Proceedings of ACL. (2007)
26. Margolis, A.: A literature review of domain adaptation with unlabeled data. Technical report, University of Washington (2011)
27. Fernando, B., Habrard, A., Sebban, M., Tuytelaars, T.: Unsupervised Visual Domain Adaptation Using Subspace Alignment. In: ICCV. (2013) 2960–2967
28. Ghifary, M., Balduzzi, D., Kleijn, W.B., Zhang, M.: Scatter component analysis: A unified framework for domain adaptation and domain generalization. CoRR abs/1510.04373 (2015)
29. Gopalan, R., Li, R., Chellappa, R.: Domain Adaptation for Object Recognition: An Unsupervised Approach. In: ICCV. (2011) 999–1006
30. Gong, B., Shi, Y., Sha, F., Grauman, K.: Geodesic Flow Kernel for Unsupervised Domain Adaptation. In: CVPR. (2012) 2066–2073
31. Bay, H., Tuytelaars, T., Gool, L.V.: SURF: Speeded Up Robust Features. CVIU 110(3) (2008) 346–359
32. Glorot, X., Bordes, A., Bengio, Y.: Domain Adaptation for Large-Scale Sentiment Classification: A Deep Learning Approach. In: ICML. (2011) 513–520
33. Vincent, P., Larochelle, H., Lajoie, I., Bengio, Y., Manzagol, P.A.: Stacked Denoising Autoencoders: Learning Useful Representations in a Deep Network with a Local Denoising Criterion. Journal of Machine Learning Research 11 (2010) 3371–3408
34. Ghifary, M., Kleijn, W.B., Zhang, M.: Domain adaptive neural networks for object recognition. In: PRICAI: Trends in AI. Volume 8862. (2014) 898–904
35. LeCun, Y., Bottou, L., Bengio, Y., Haffner, P.: Gradient-based learning applied to document recognition. In: Proceedings of the IEEE. Volume 86. (1998) 2278–2324
36. Girshick, R., Donahue, J., Darrell, T., Malik, J.: Rich feature hierarchies for accurate object detection and semantic segmentation. In: CVPR. (2014)
37. Donahue, J., Jia, Y., Vinyals, O., Hoffman, J., Zhang, N., Tzeng, E., Darrell, T.: DeCAF: A Deep Convolutional Activation Feature for Generic Visual Recognition. In: ICML. (2014)
38. Hoffman, J., Tzeng, E., Donahue, J., Jia, Y., Saenko, K., Darrell, T.: One-Shot Adaptation of Supervised Deep Convolutional Models. CoRR abs/1312.6204 (2013)
39. Chopra, S., Balakrishnan, S., Gopalan, R.: DLID: Deep Learning for Domain Adaptation by Interpolating between Domains. In: ICML Workshop on Challenges in Representation Learning. (2013)
40. Long, M., Cao, Y., Wang, J., Jordan, M.I.: Learning transferable features with deep adaptation networks. In: ICML. (2015)
41. Tzeng, E., Hoffman, J., Zhang, N., Saenko, K., Darrell, T.: Deep domain confusion: Maximizing for domain invariance. CoRR abs/1412.3474 (2014)
42. Tzeng, E., Hoffman, J., Darrell, T., Saenko, K.: Simultaneous deep transfer across domains and tasks. In: ICCV. (2015)
43. Borgwardt, K.M., Gretton, A., Rasch, M.J., Kriegel, H.P., Schölkopf, B., Smola, A.J.: Integrating structured biological data by Kernel Maximum Mean Discrepancy. Bioinformatics 22(14) (2006) e49–e57
44. Masci, J., Meier, U., Ciresan, D., Schmidhuber, J.: Stacked Convolutional Auto-Encoders for Hierarchical Feature Extraction. In: ICANN. (2011) 52–59
45. Zeiler, M.D., Krishnan, D., Taylor, G.W., Fergus, R.: Deconvolutional networks. In: CVPR. (2010) 2528–2535
46. Tieleman, T., Hinton, G.: Lecture 6.5 - RmsProp: Divide the gradient by a running average of its recent magnitude. COURSERA: Neural Networks for Machine Learning (2012)
47. Srivastava, N., Hinton, G., Krizhevsky, A., Sutskever, I., Salakhutdinov, R.: Dropout: A Simple Way to Prevent Neural Networks from Overfitting. JMLR (2014)
48. Bastien, F., Lamblin, P., Pascanu, R., Bergstra, J., Goodfellow, I.J., Bergeron, A., Bouchard, N., Bengio, Y.: Theano: new features and speed improvements. In: Deep Learning and Unsupervised Feature Learning NIPS 2012 Workshop. (2012)
49. Simard, P.Y., Steinkraus, D., Platt, J.C.: Best practices for convolutional neural networks applied to visual document analysis. In: ICDAR. Volume 2. (2003) 958–962
50. Hull, J.J.: A database for handwritten text recognition research. IEEE TPAMI 16(5) (1994) 550–554
51. Netzer, Y., Wang, T., Coates, A., Bissacco, A., Wu, B., Ng, A.Y.: Reading Digits in Natural Images with Unsupervised Feature Learning. In: NIPS Workshop on Deep Learning and Unsupervised Feature Learning. (2011)
52. Krizhevsky, A.: Learning Multiple Layers of Features from Tiny Images. Master's thesis, Department of Computer Science, University of Toronto (April 2009)
53. Coates, A., Lee, H., Ng, A.Y.: An Analysis of Single-Layer Networks in Unsupervised Feature Learning. In: AISTATS. (2011) 215–223
54. Sermanet, P., Chintala, S., LeCun, Y.: Convolutional neural networks applied to house number digit classification. In: ICPR. (2012) 3288–3291
55. Nair, V., Hinton, G.E.: Rectified Linear Units Improve Restricted Boltzmann Machines. In: ICML. (2010)
56. White, H.: Maximum likelihood estimation of misspecified models. Econometrica 50(1) (1982) 1–25
57. Bengio, Y., Yao, L., Alain, G., Vincent, P.: Generalized denoising auto-encoders as generative models. In: NIPS. (2013) 899–907
58. van der Maaten, L., Hinton, G.: Visualizing High-Dimensional Data Using t-SNE. Journal of Machine Learning Research 9 (2008) 2579–2605

Supplemental Material

This document is the supplemental material for the paper Deep Reconstruction-Classification for Unsupervised Domain Adaptation. It contains some more experimental results that cannot be included in the main manuscript due to a lack of space.

Fig. 3. Data reconstruction after training from MNIST → USPS. Panels: (a) Source (MNIST), (b) Target (USPS), (c) DRCN, (d) ConvAE, (e) DRCN$_{st}$, (f) ConvAE+ConvNet$_{src}$. Panels (a)-(b) show the original input pixels, and (c)-(f) depict the reconstructed source images (MNIST).

Fig. 4. Data reconstruction after training from USPS → MNIST. Panels: (a) Source (USPS), (b) Target (MNIST), (c) DRCN, (d) ConvAE, (e) DRCN$_{st}$, (f) ConvAE+ConvNet$_{src}$. Panels (a)-(b) show the original input pixels, and (c)-(f) depict the reconstructed source images (USPS).

Data Reconstruction

Figures 3 and 4 depict the reconstruction of the source images in the cases of MNIST → USPS and USPS → MNIST, respectively. The trend of the outcome is similar to that of SVHN → MNIST; see Figure 2 in the main manuscript. That is, the reconstructed images produced by DRCN resemble the style of the target images.

Training Progress

Recall that DRCN has two pipelines with a shared encoding representation, corresponding to the classification and reconstruction tasks, respectively. One can consider that the unsupervised reconstruction learning acts as a regularization for the supervised classification to reduce overfitting onto the source domain. Figure 5 compares the source and target accuracy of DRCN with that of the standard ConvNet during training.
The most prominent results indicating the overfitting reduction can be seen in the SVHN → MNIST case, i.e., DRCN produces higher target accuracy, but lower source accuracy, than ConvNet.

Fig. 5. The source accuracy (blue lines) and target accuracy (red lines) comparison between ConvNet and DRCN during the training stage on the SVHN → MNIST cross-domain task. Panels: (a) SVHN → MNIST training, (b) MNIST → USPS training. DRCN induces lower source accuracy, but higher target accuracy than ConvNet.

t-SNE visualization. For completeness, we also visualize the 2D point cloud of the last hidden layer of DRCN using t-SNE [58] and compare it with that of the standard ConvNet. Figure 6 depicts the feature-point clouds extracted from the target images in the case of MNIST → USPS and SVHN → MNIST. Red points indicate the source feature-point cloud, while gray points indicate the target feature-point cloud. Domain invariance should be indicated by the degree of overlap between the source and target feature clouds. We can see that the overlap is more prominent in the case of DRCN than ConvNet.

Fig. 6. The t-SNE visualizations of the last layer's activations. Panels: (a) ConvNet (MNIST → USPS), (b) DRCN (MNIST → USPS), (c) ConvNet (SVHN → MNIST), (d) DRCN (SVHN → MNIST). Red and gray points indicate the source and target domain examples, respectively.
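The "degree of overlap" between the two feature clouds is judged visually above. One hypothetical way to put a number on it, which is not part of the paper's evaluation, is a nearest-neighbour mixing score: for every embedded point, the fraction of its k nearest neighbours that belong to the other domain. The function name `mixing_score`, the choice k = 5, and the synthetic 2D clouds below are all assumptions made for illustration.

```python
import math
import random


def mixing_score(source, target, k=5):
    """Mean fraction of each point's k nearest neighbours from the other domain.

    Close to 0 when the clouds are disjoint; roughly the other domain's
    share of the data (about 0.5 for equal sizes) when they are well mixed.
    """
    points = [(p, 0) for p in source] + [(p, 1) for p in target]
    total = 0.0
    for i, (p, dom) in enumerate(points):
        # Distances from point i to every other point, tagged with domain.
        dists = sorted(
            (math.dist(p, q), other_dom)
            for j, (q, other_dom) in enumerate(points)
            if j != i
        )
        total += sum(1 for _, d in dists[:k] if d != dom) / k
    return total / len(points)


# Synthetic 2D clouds standing in for t-SNE embeddings (illustrative only).
rng = random.Random(0)


def cloud(cx, cy, n=30):
    return [(rng.gauss(cx, 1.0), rng.gauss(cy, 1.0)) for _ in range(n)]


separated = mixing_score(cloud(0, 0), cloud(100, 100))
overlapping = mixing_score(cloud(0, 0), cloud(0, 0))
print(separated, overlapping)
```

Because t-SNE distorts global distances, such a score would be more meaningful on the original last-layer activations than on the 2D embedding; the sketch only illustrates the idea of measuring overlap.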
