Man is to Computer Programmer as Woman is to Homemaker? Debiasing Word Embeddings

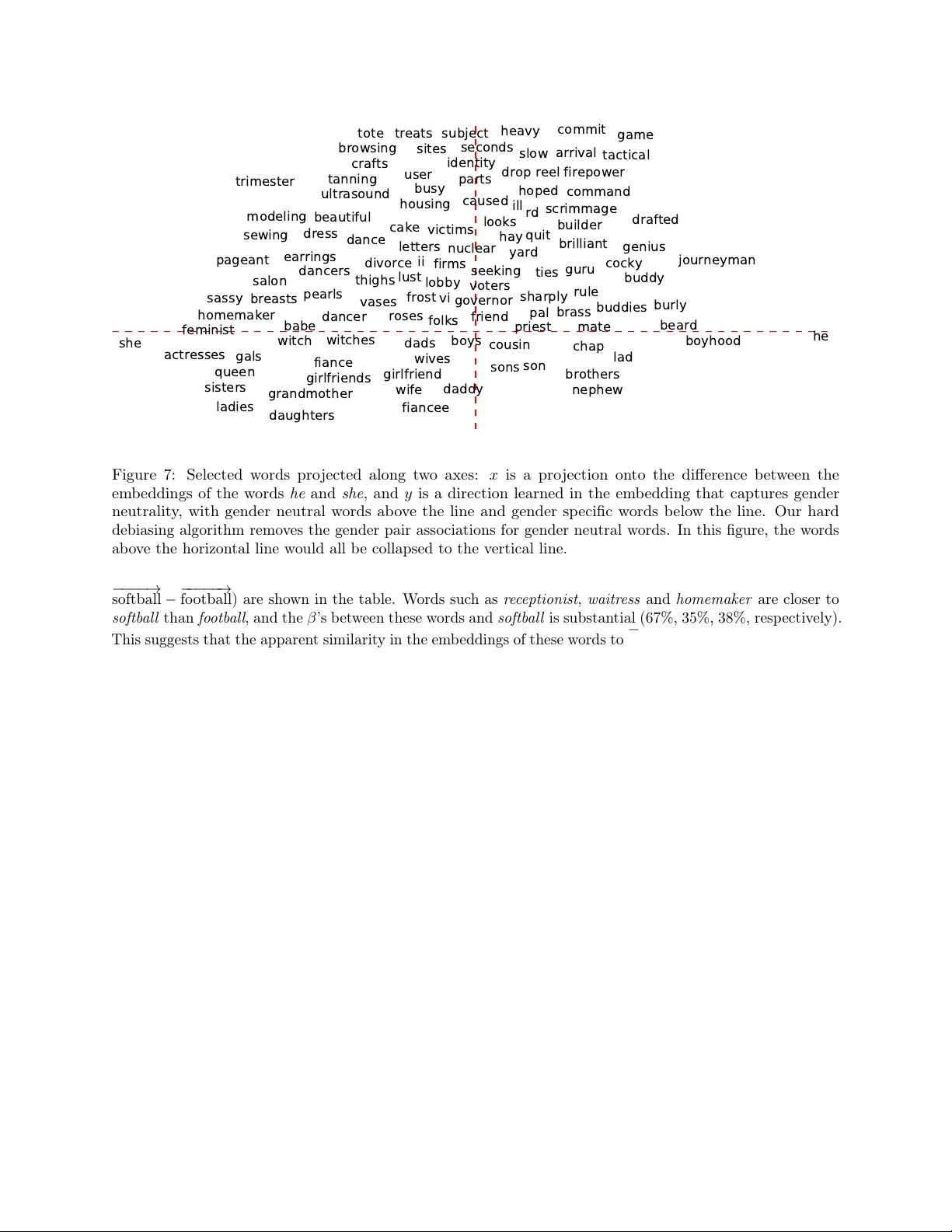

The blind application of machine learning risks amplifying biases present in data. We face this danger with word embeddings, a popular framework for representing text data as vectors that has been used in many machine learning and natur…

Authors: Tolga Bolukbasi, Kai-Wei Chang, James Zou